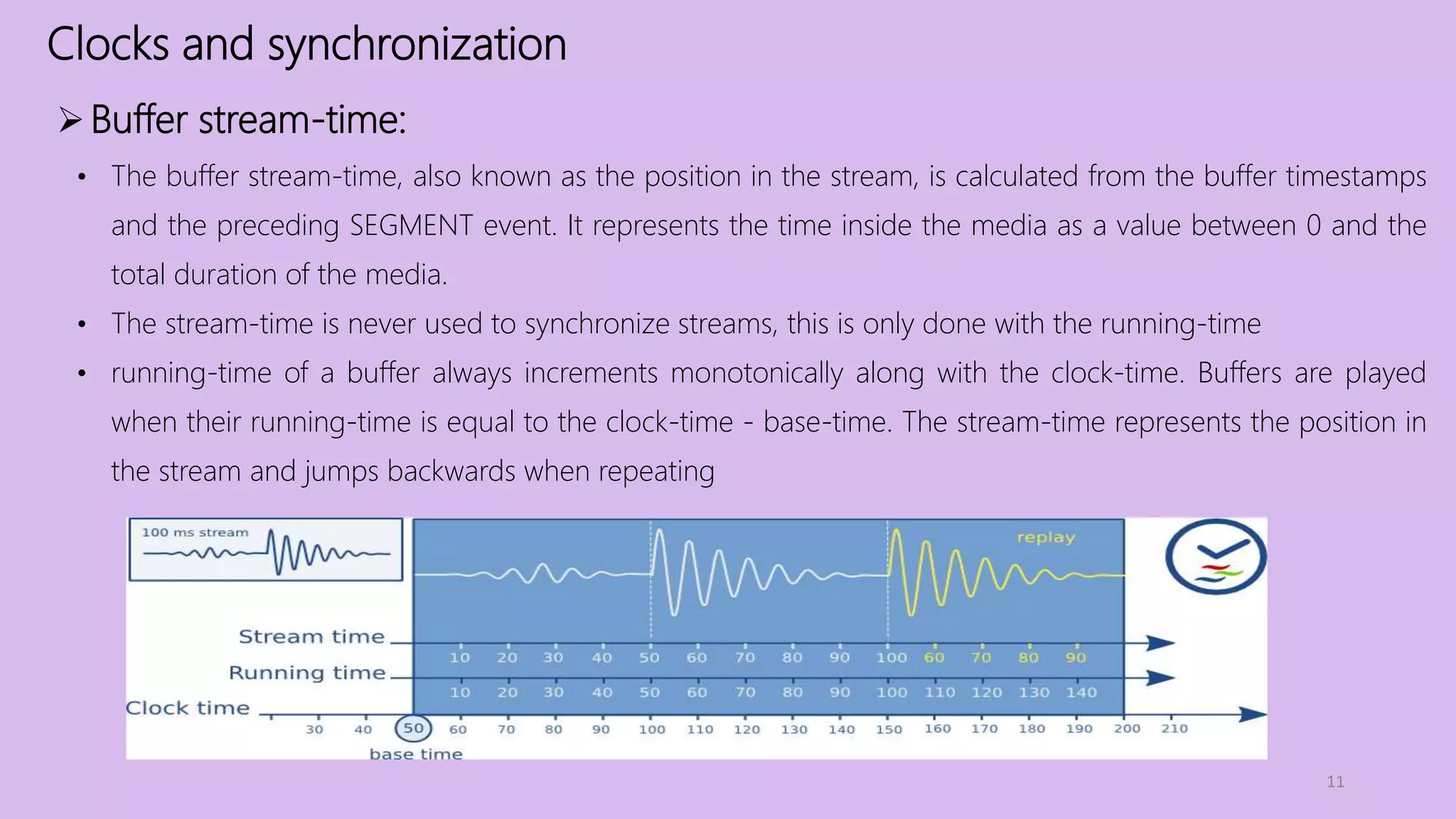

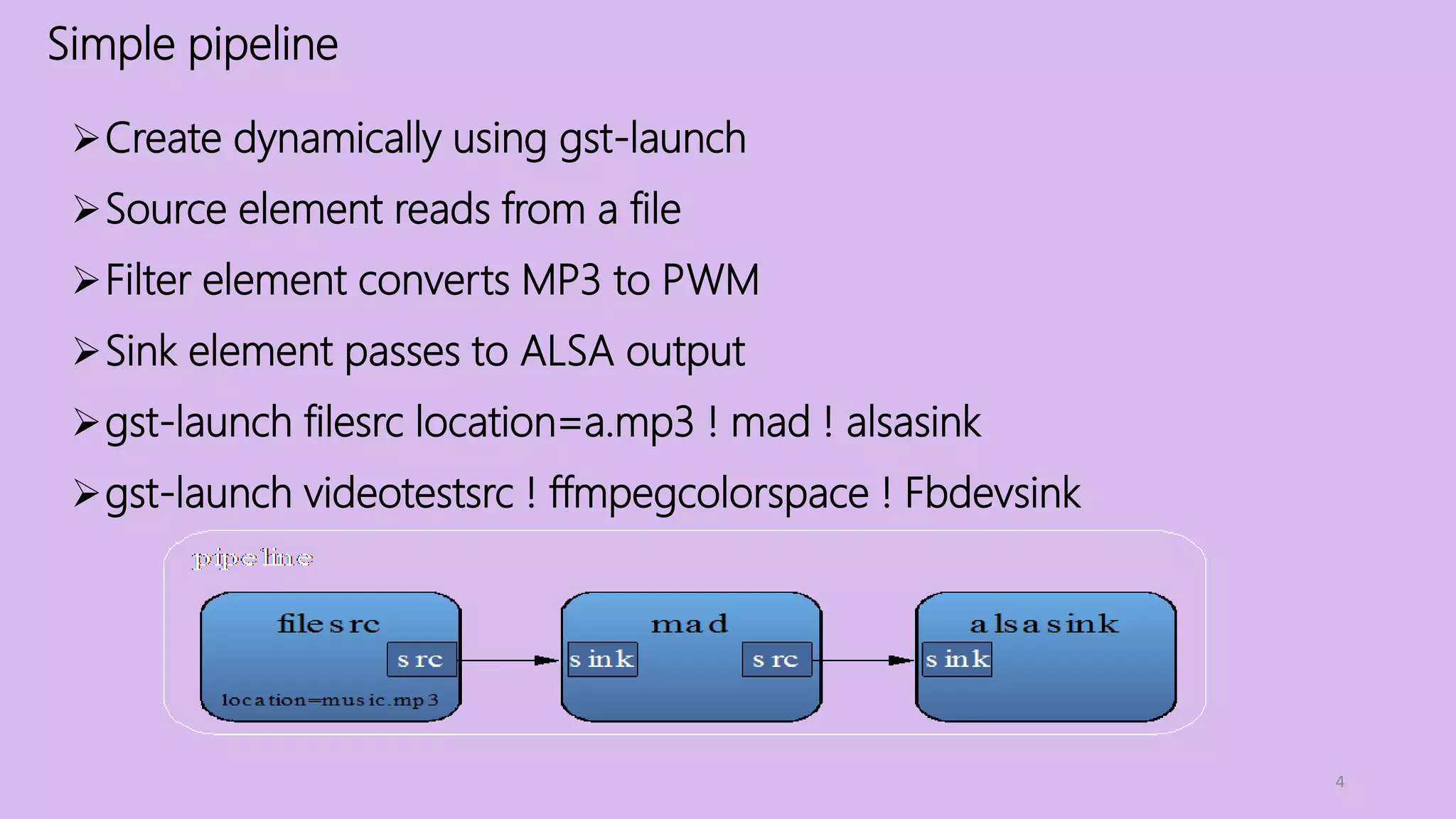

GStreamer is a multimedia framework that provides elements and pipelines for building multimedia applications. It includes components for audio, video, images, streaming, and more. Elements are connected in pipelines to process and deliver multimedia data. The framework handles buffer passing and event notification between elements. Precise synchronization is achieved through a unified clock and timestamped buffers.

![Memory

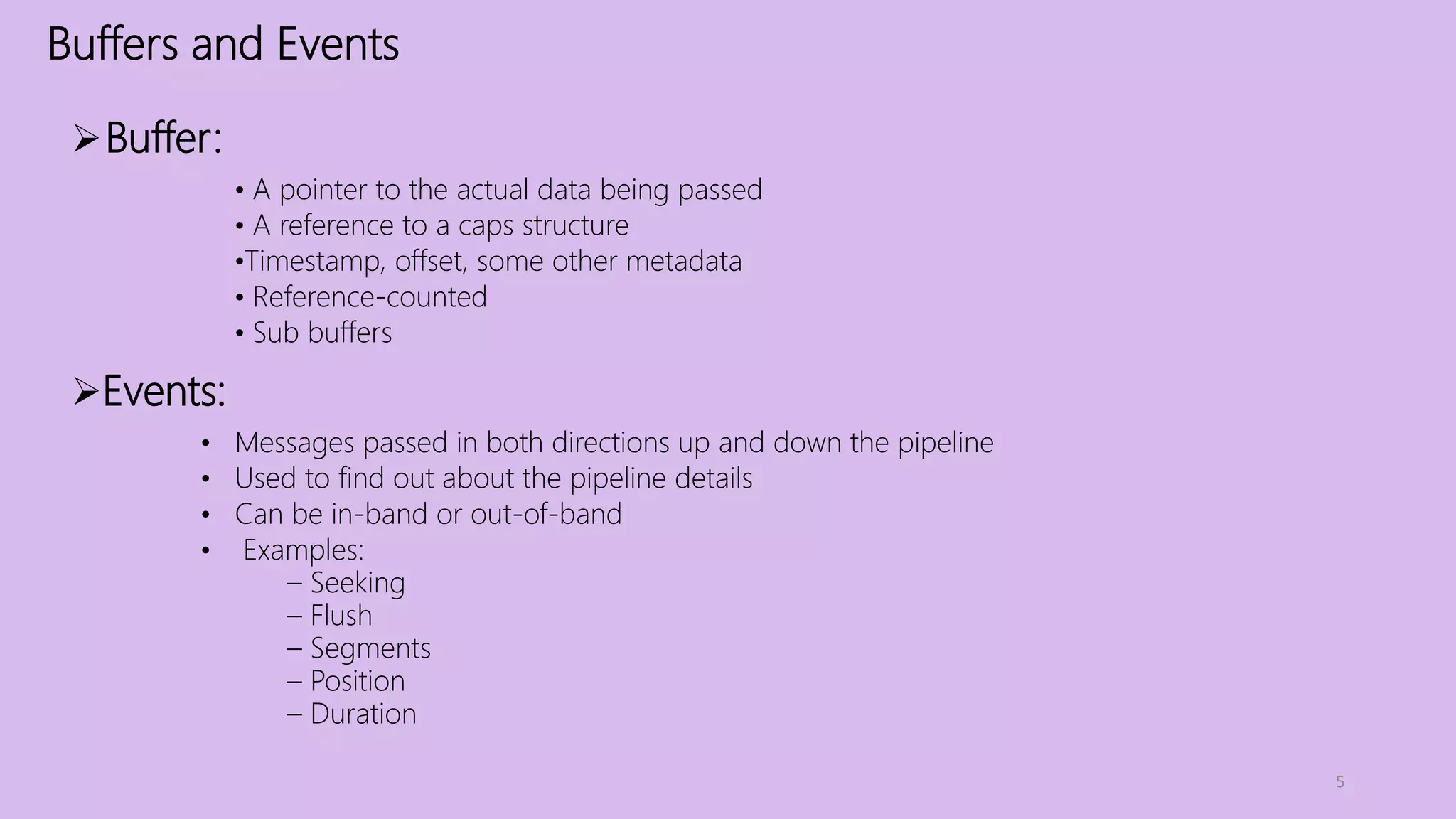

• Most objects you might deal with are refcounted.

• GstMemory is an object that manages a region of memory

• GstMemory objects are created by a GstAllocator object

• Different allocators exist for, for example, system memory, shared memory and memory backed by a DMAbuf file descriptor

• The buffer contains one or more GstMemory objects that represent the data in the buffer

/* allocate 100 bytes */

mem = gst_allocator_alloc (NULL, 100, NULL);

/* get access to the memory in write mode */

gst_memory_map (mem, &info, GST_MAP_WRITE);

/* fill with pattern */

for (i = 0; i < info.size; i++) info.data[i] = i;

/* release the mapping */

gst_memory_unmap (mem, &info);](https://image.slidesharecdn.com/gstreamer-internals-180620024618/75/Gstreamer-internals-8-2048.jpg)