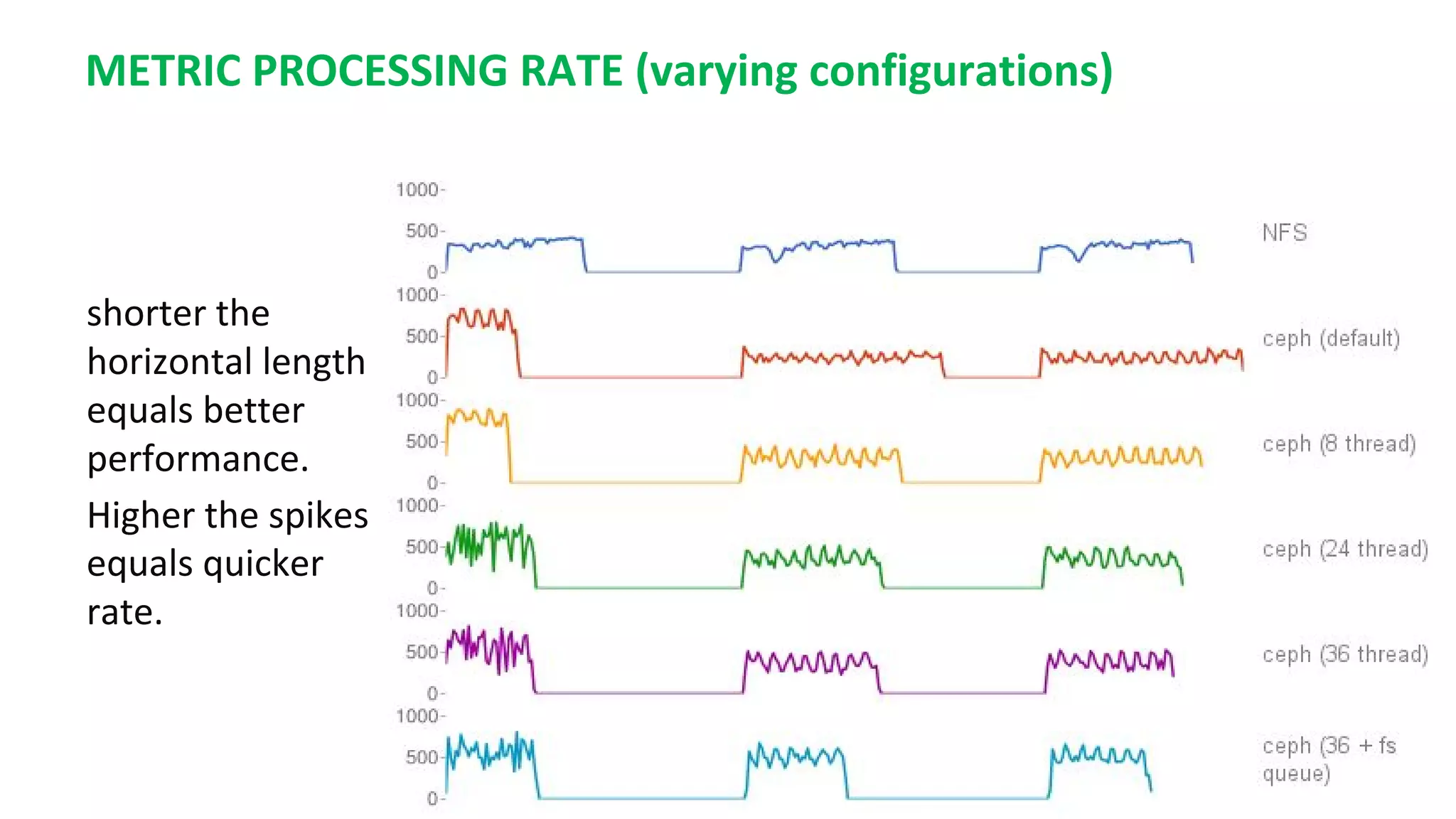

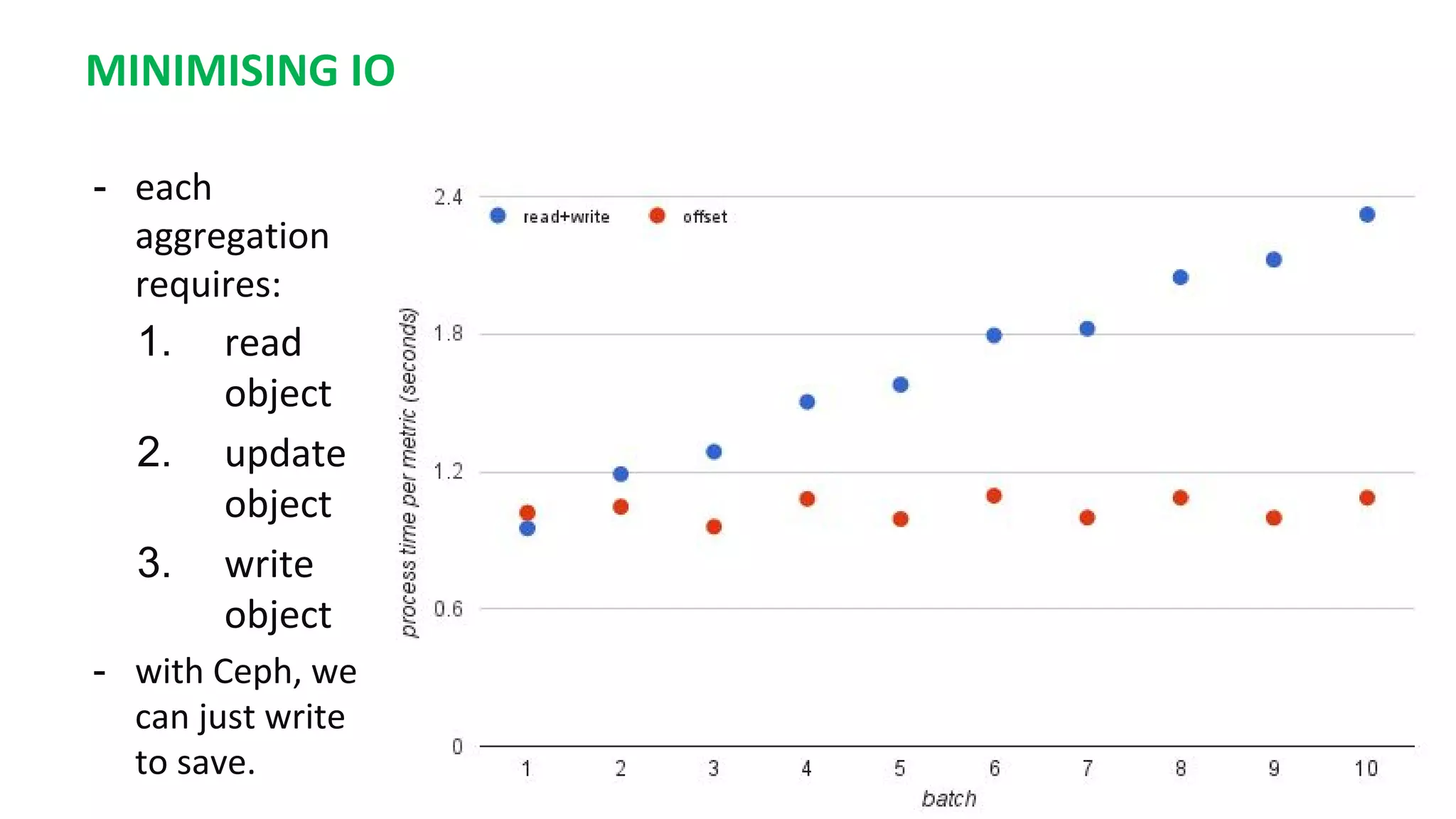

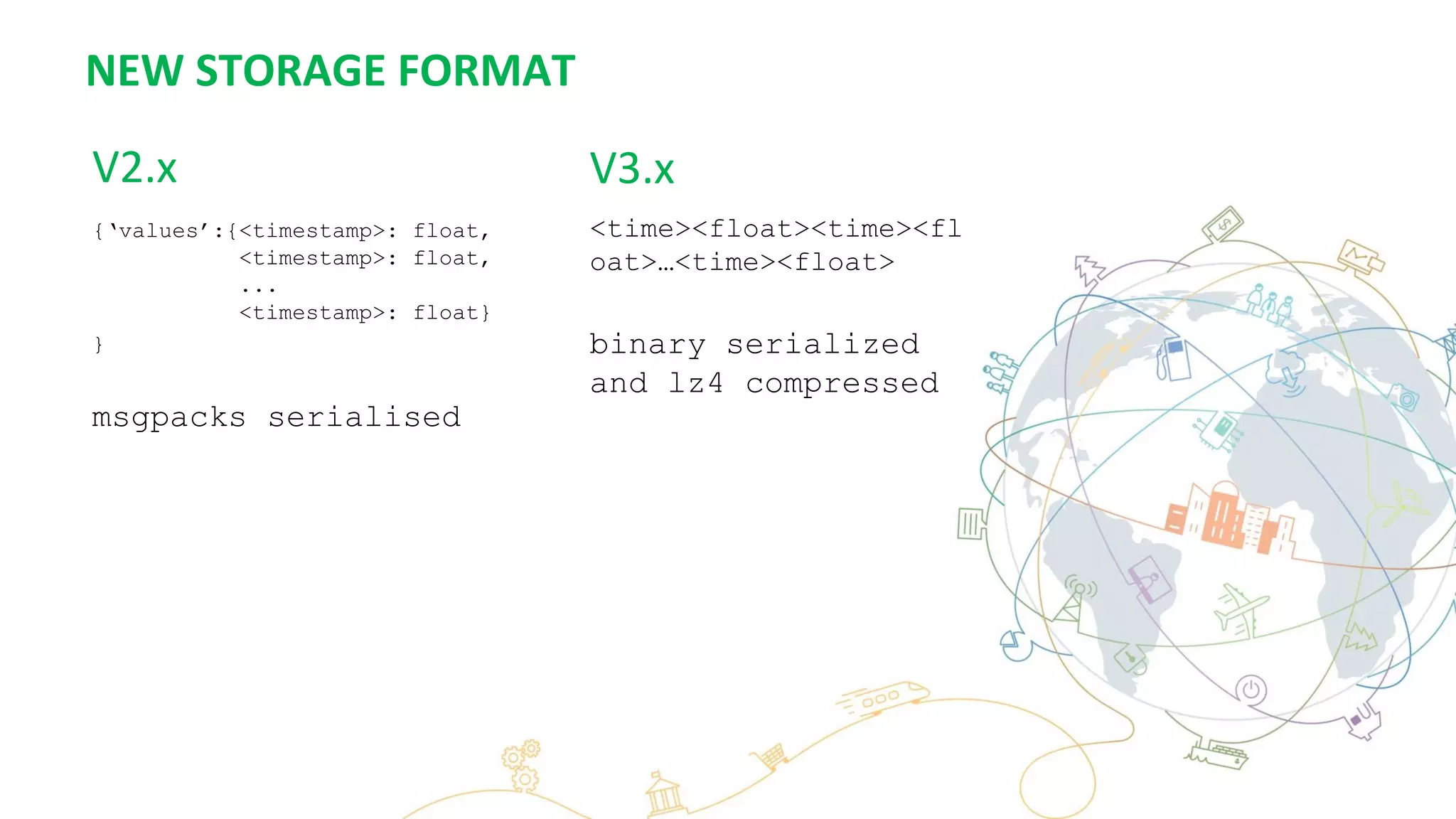

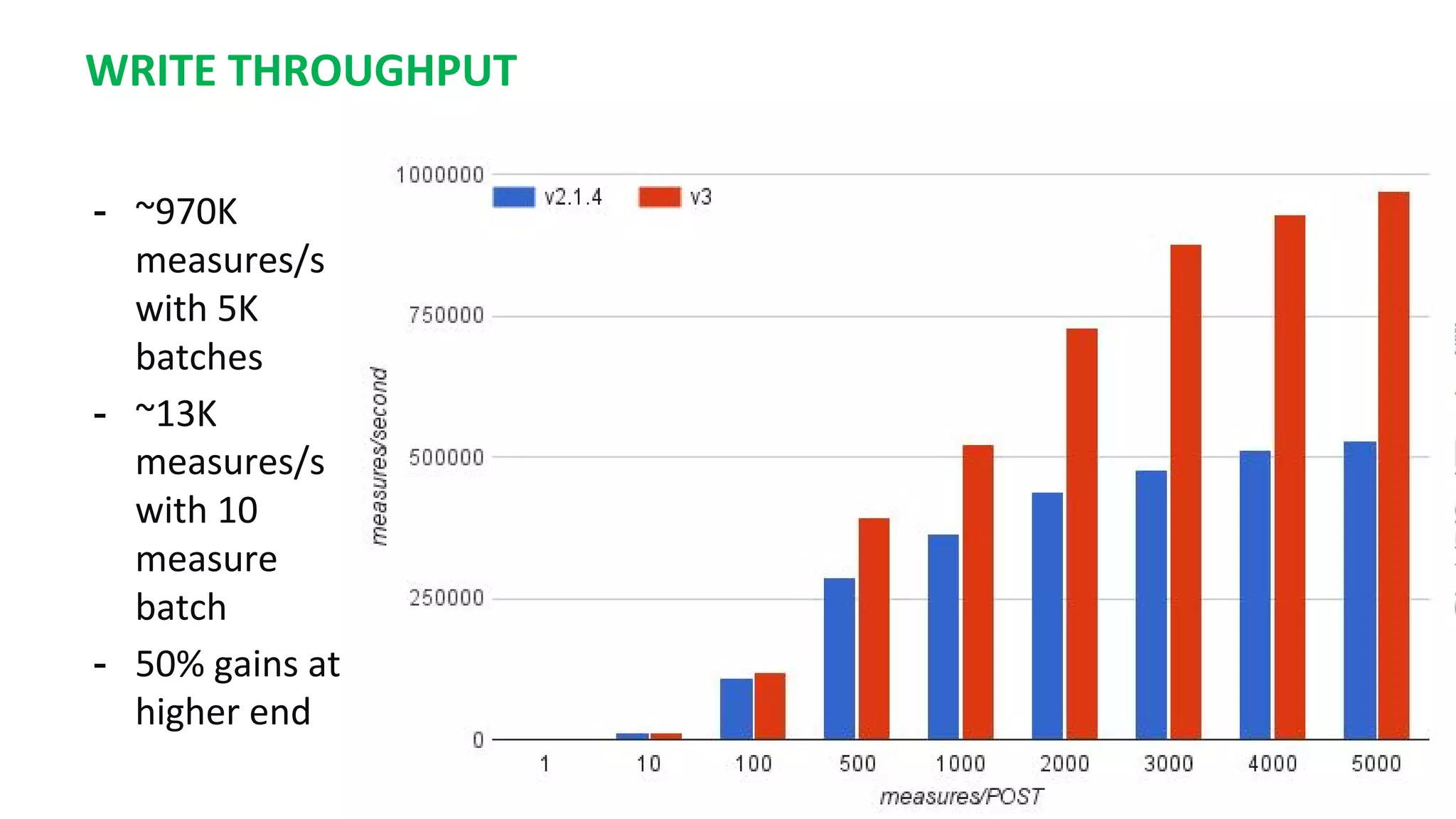

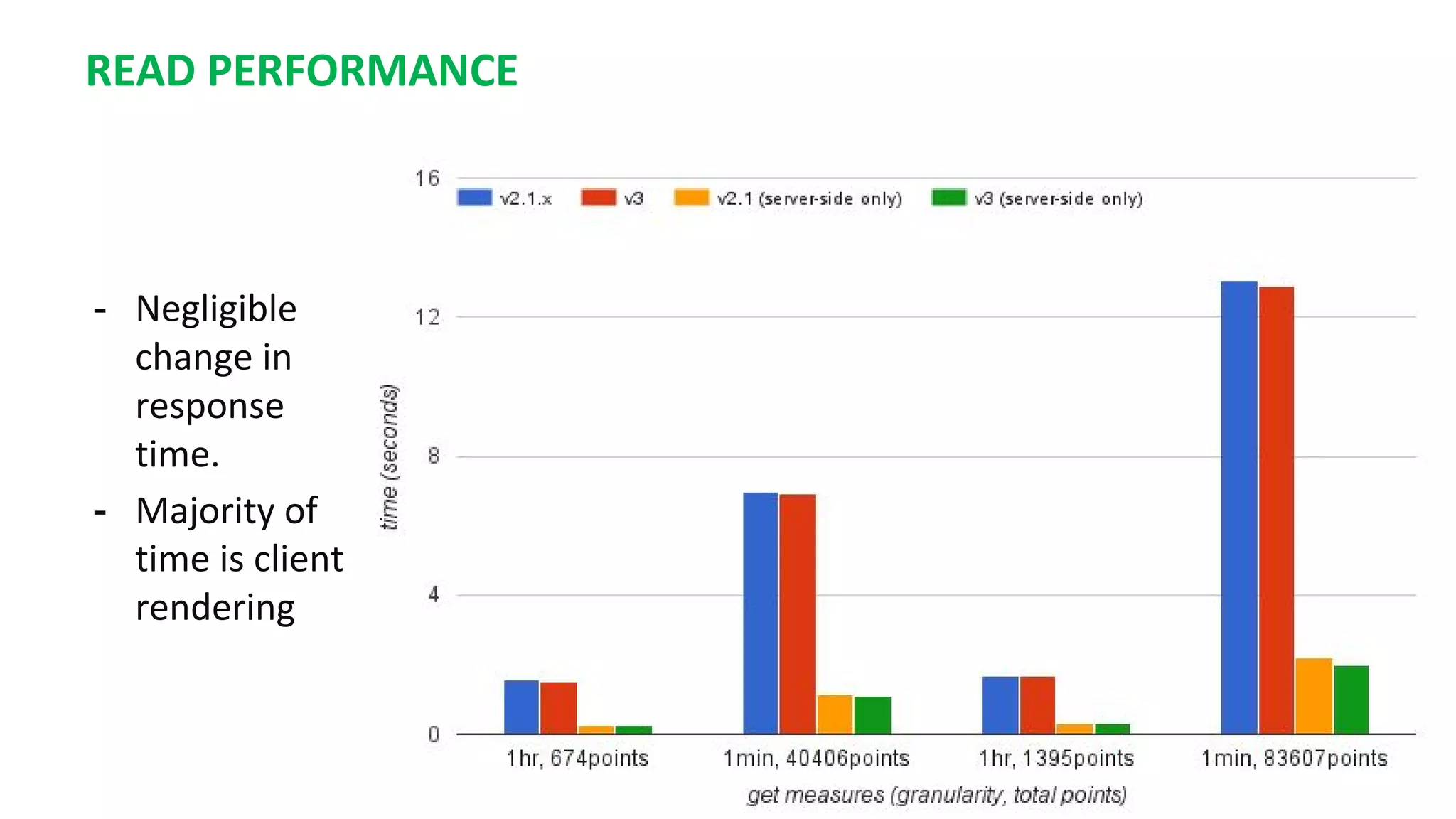

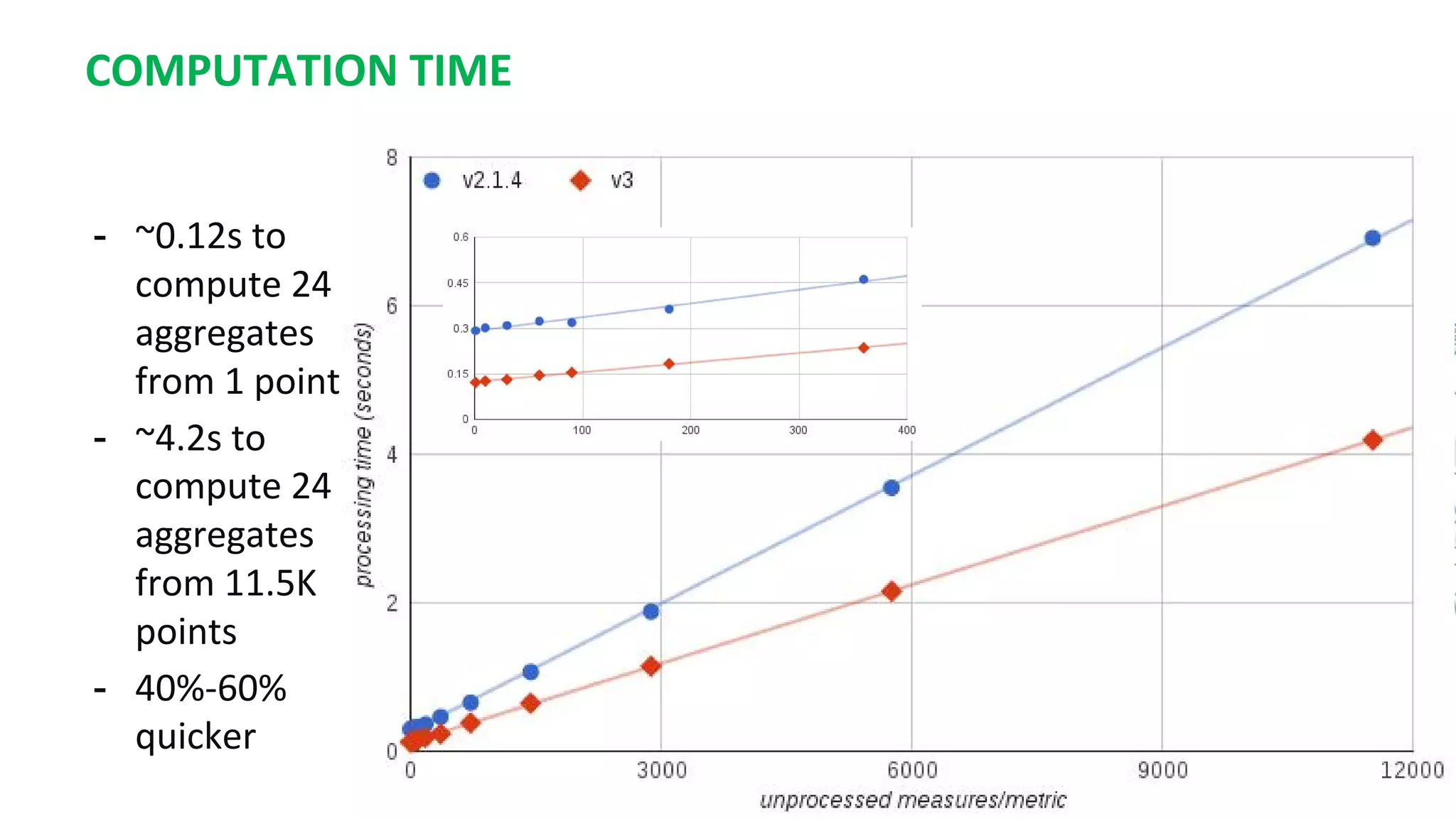

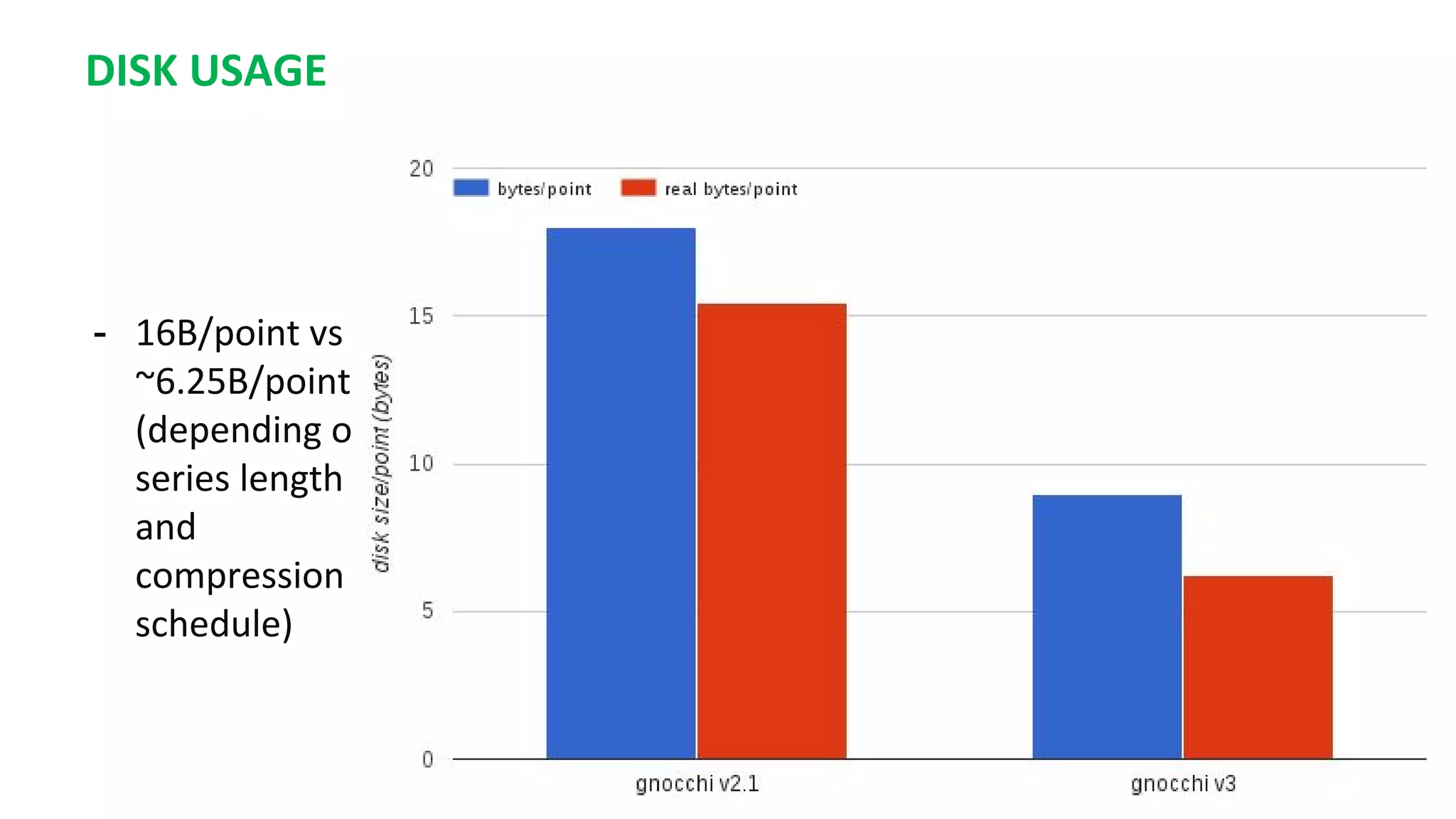

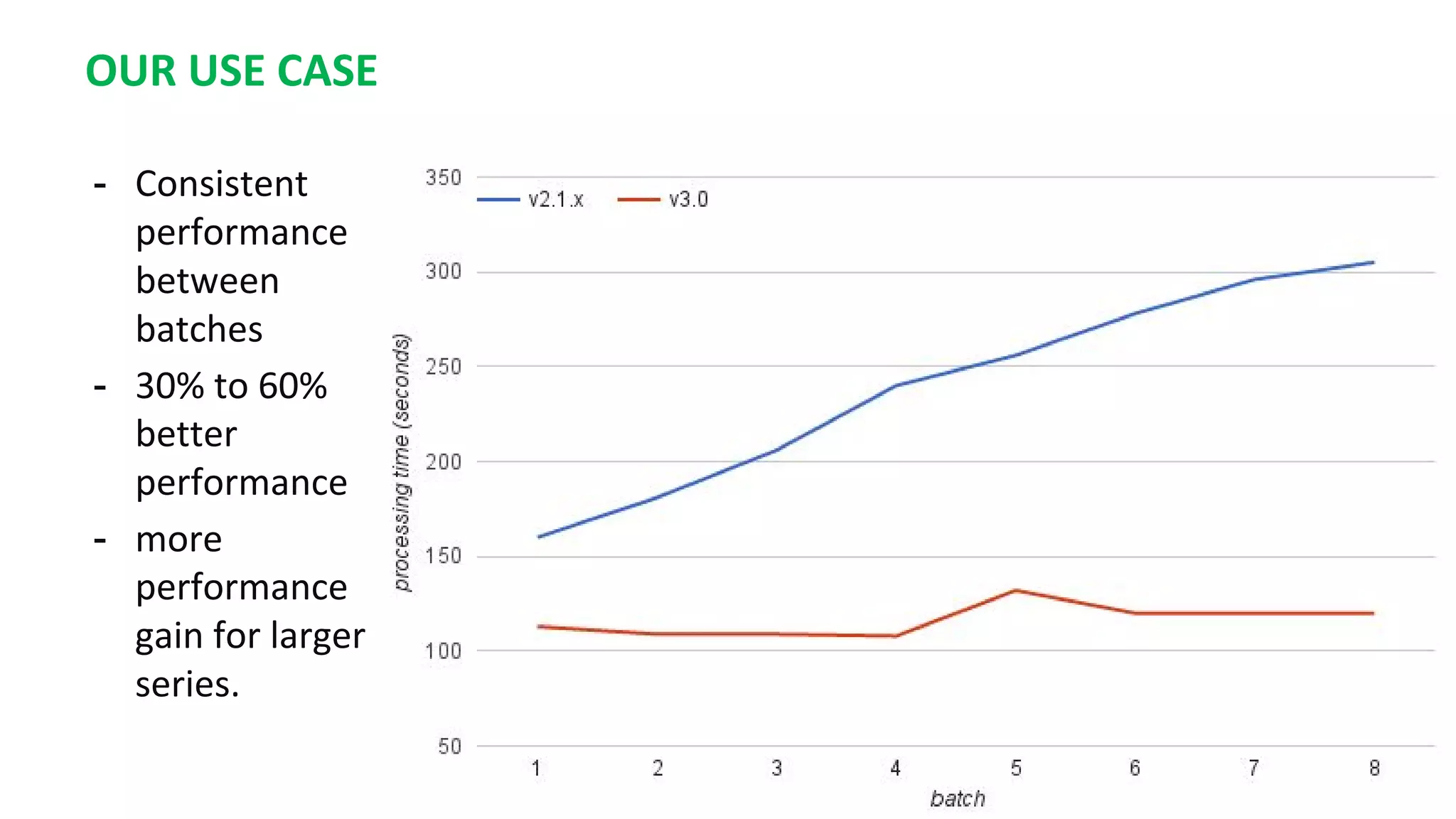

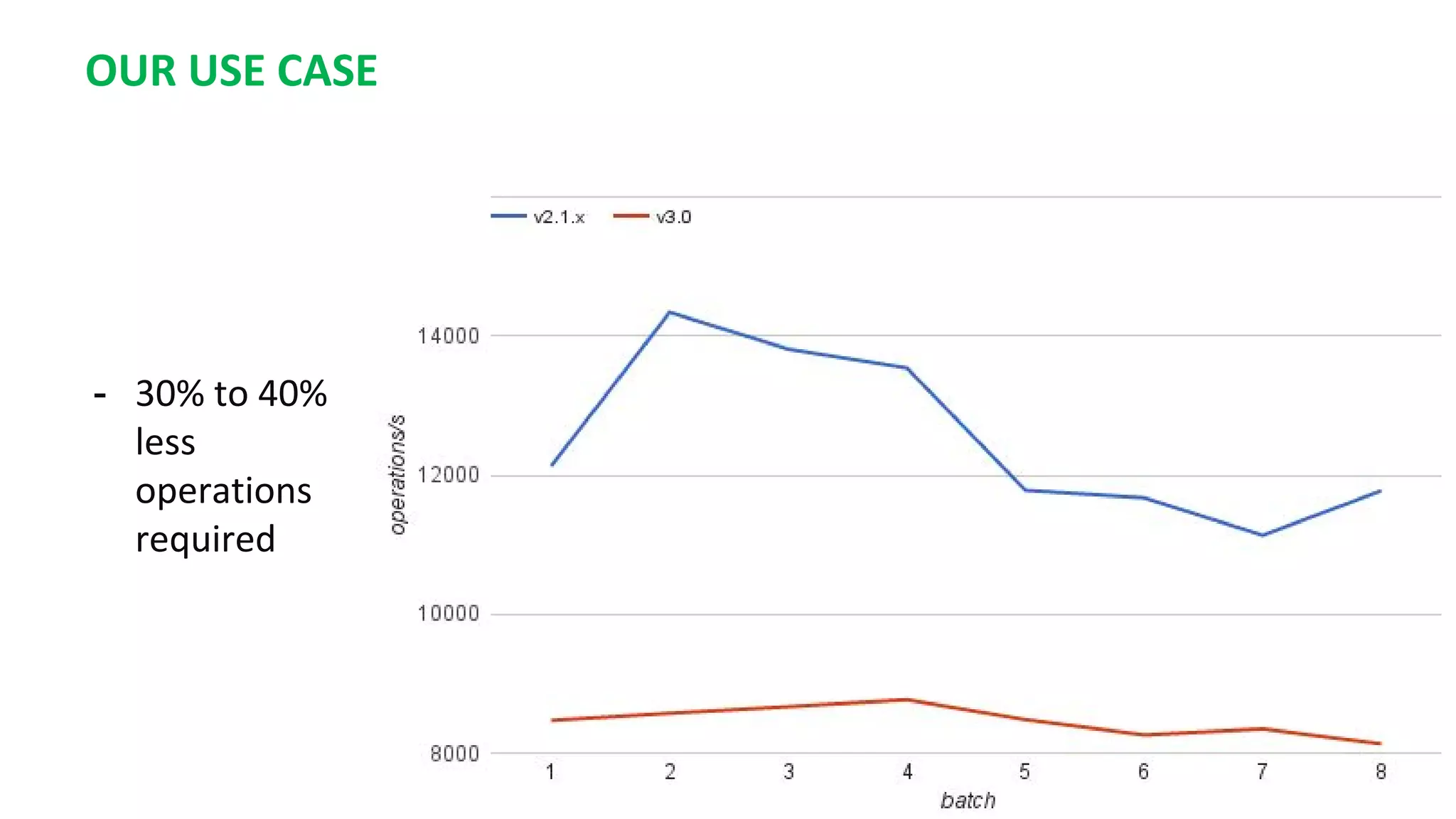

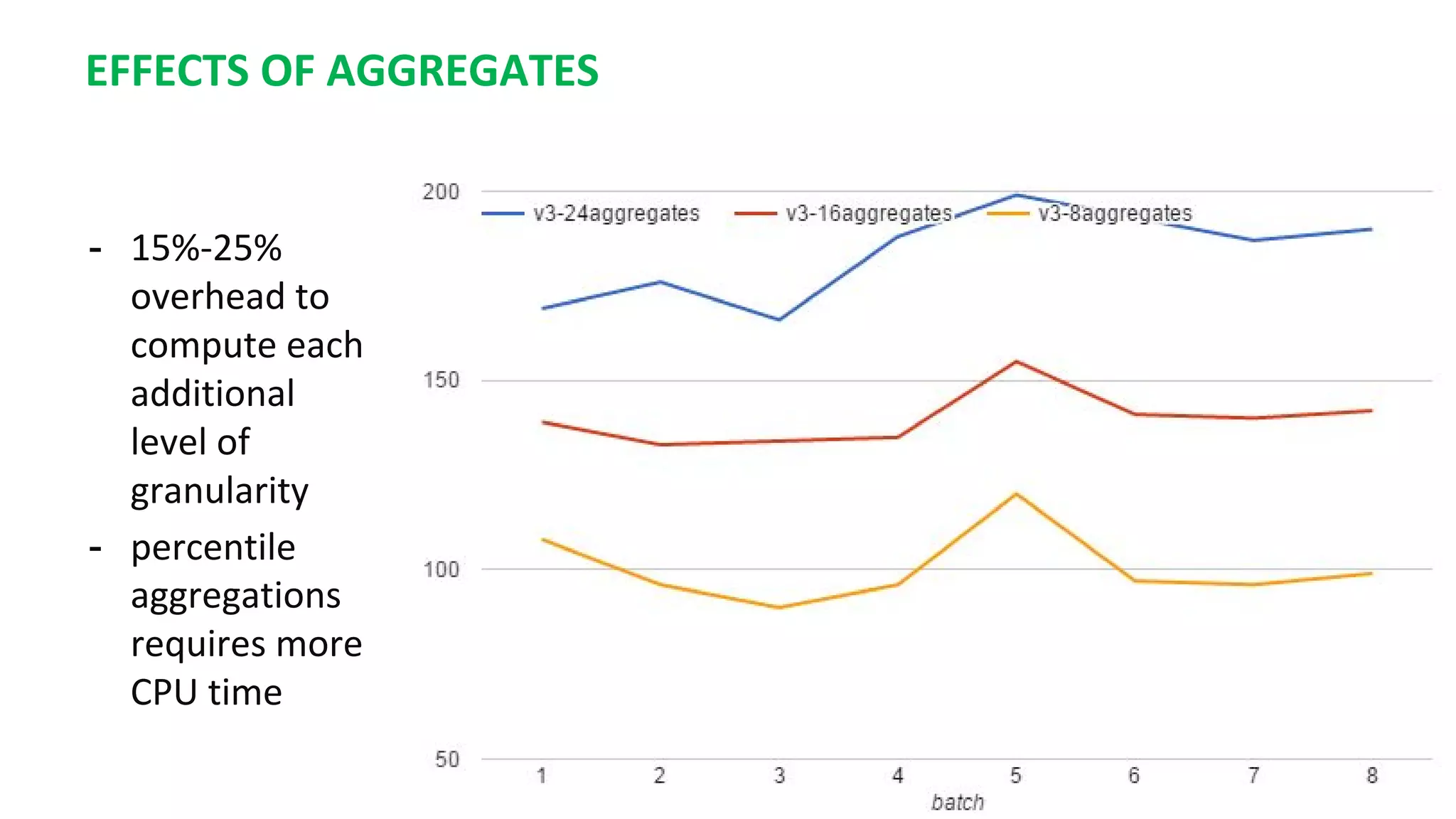

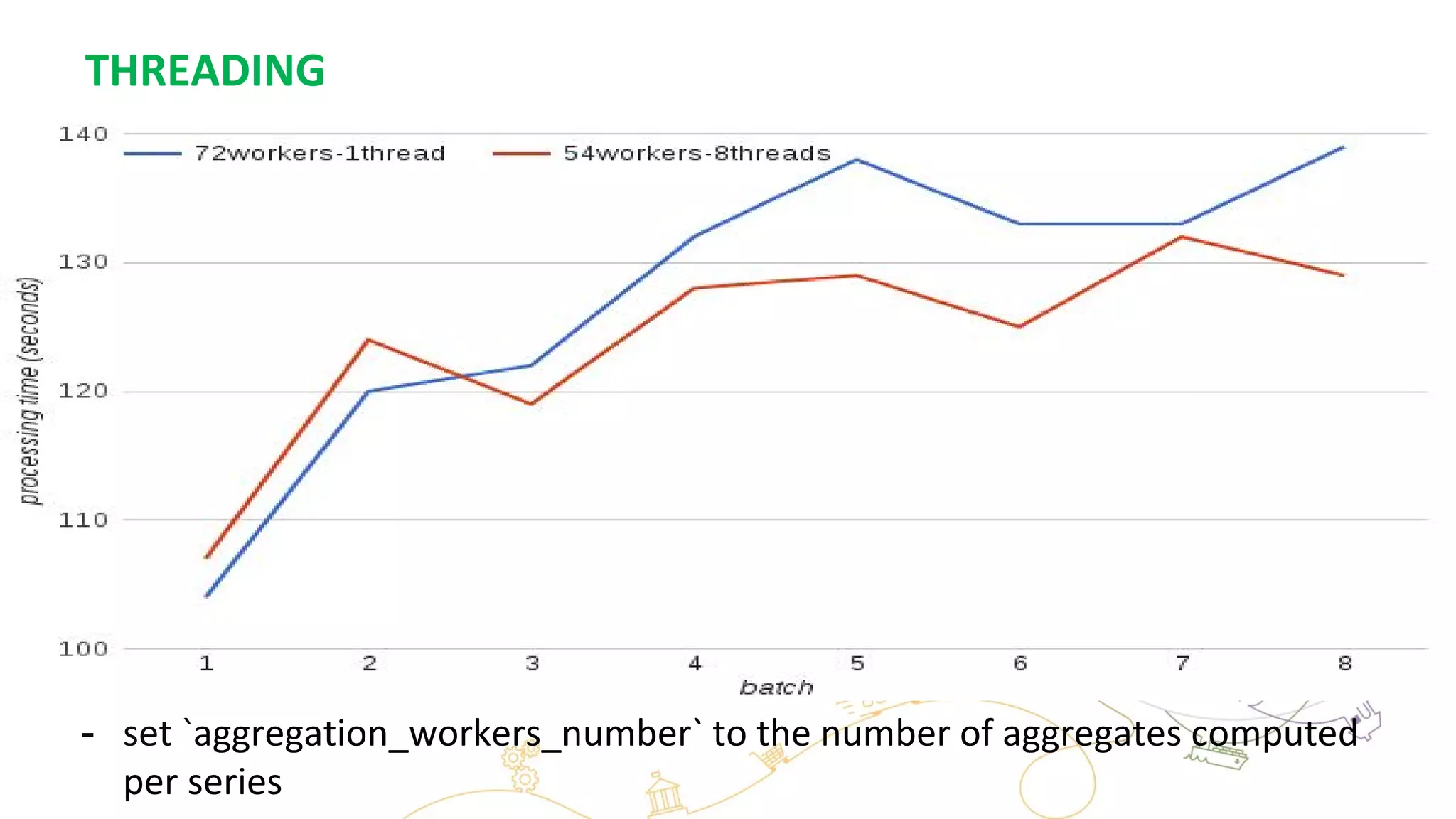

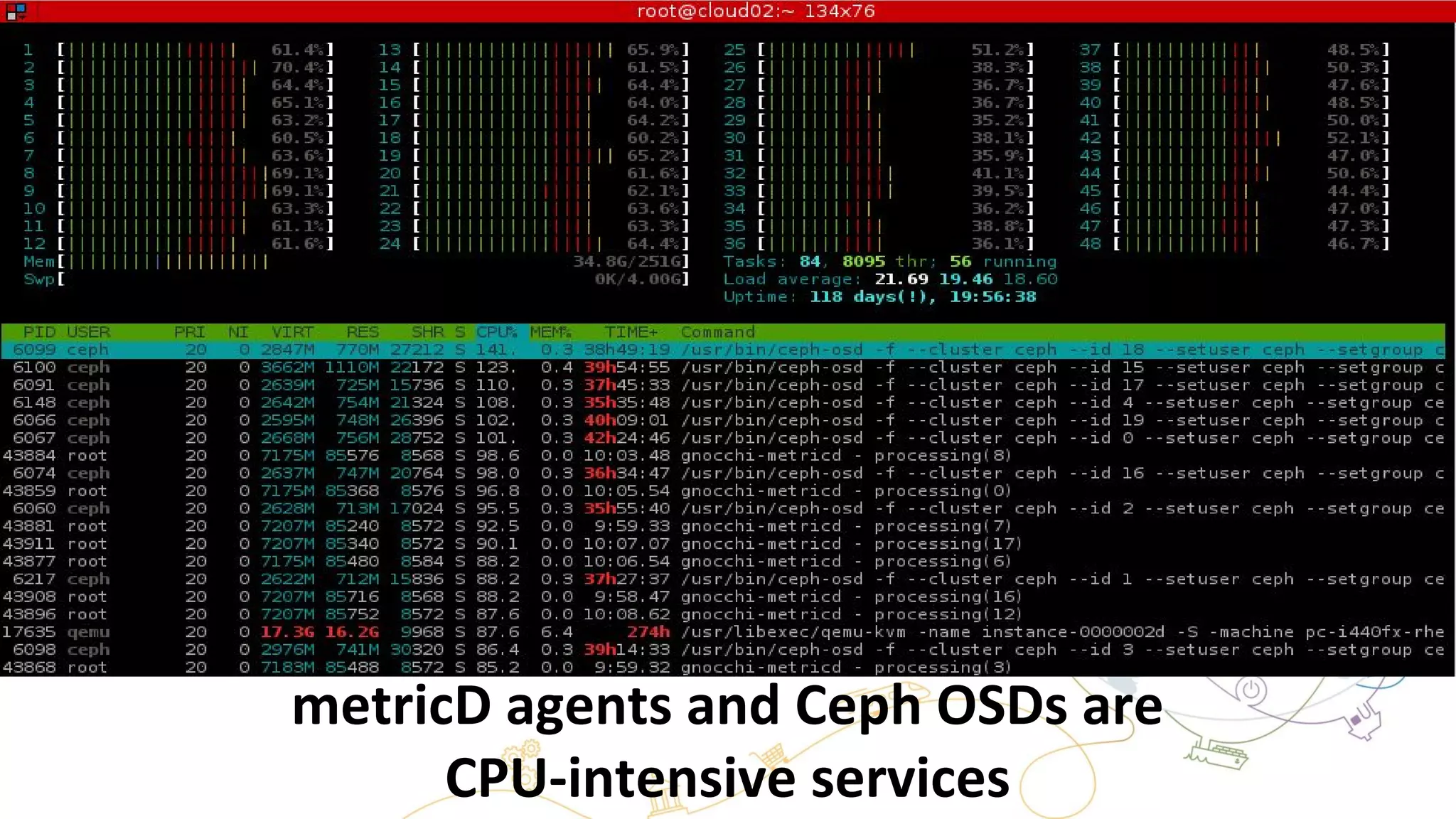

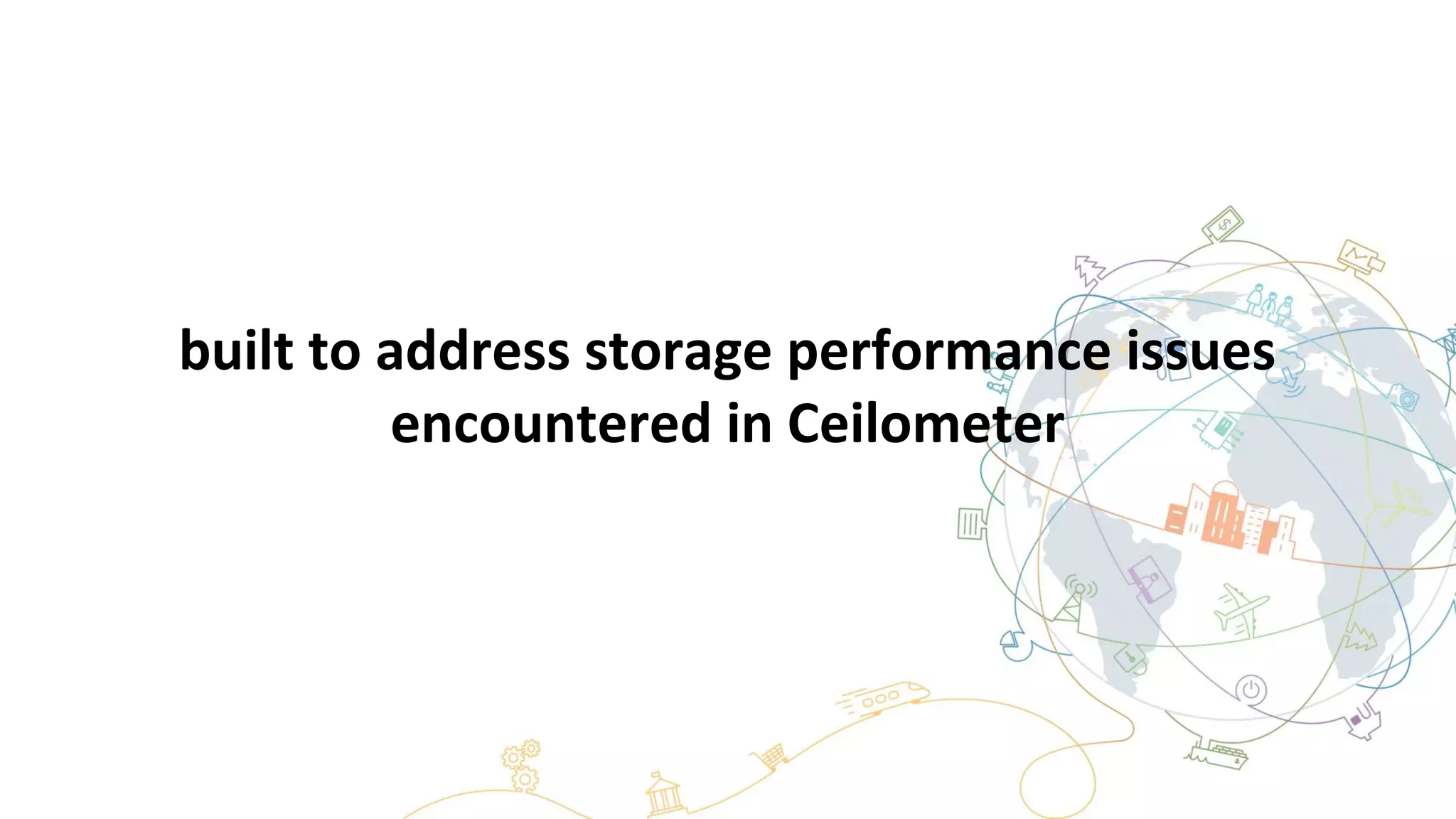

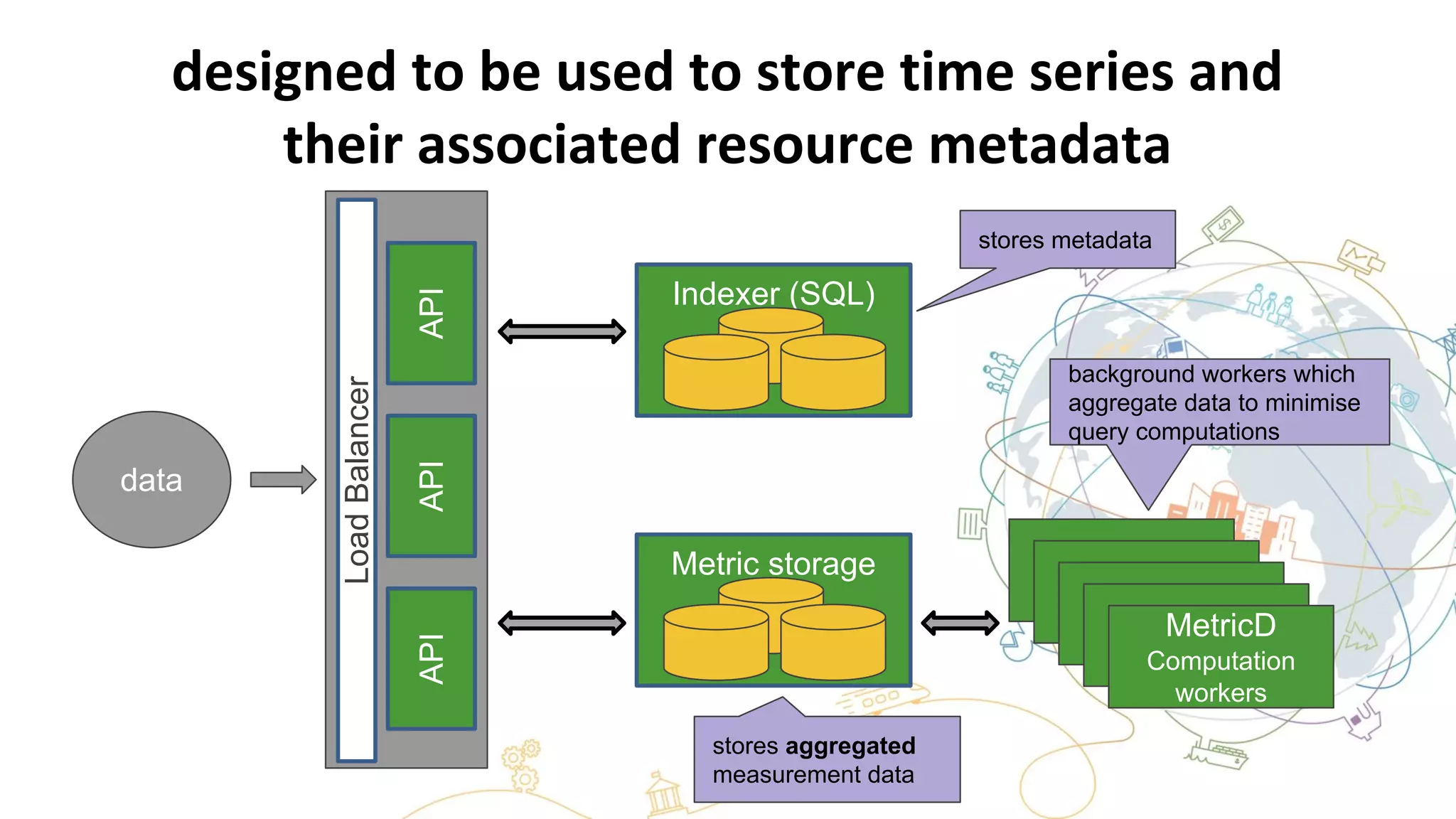

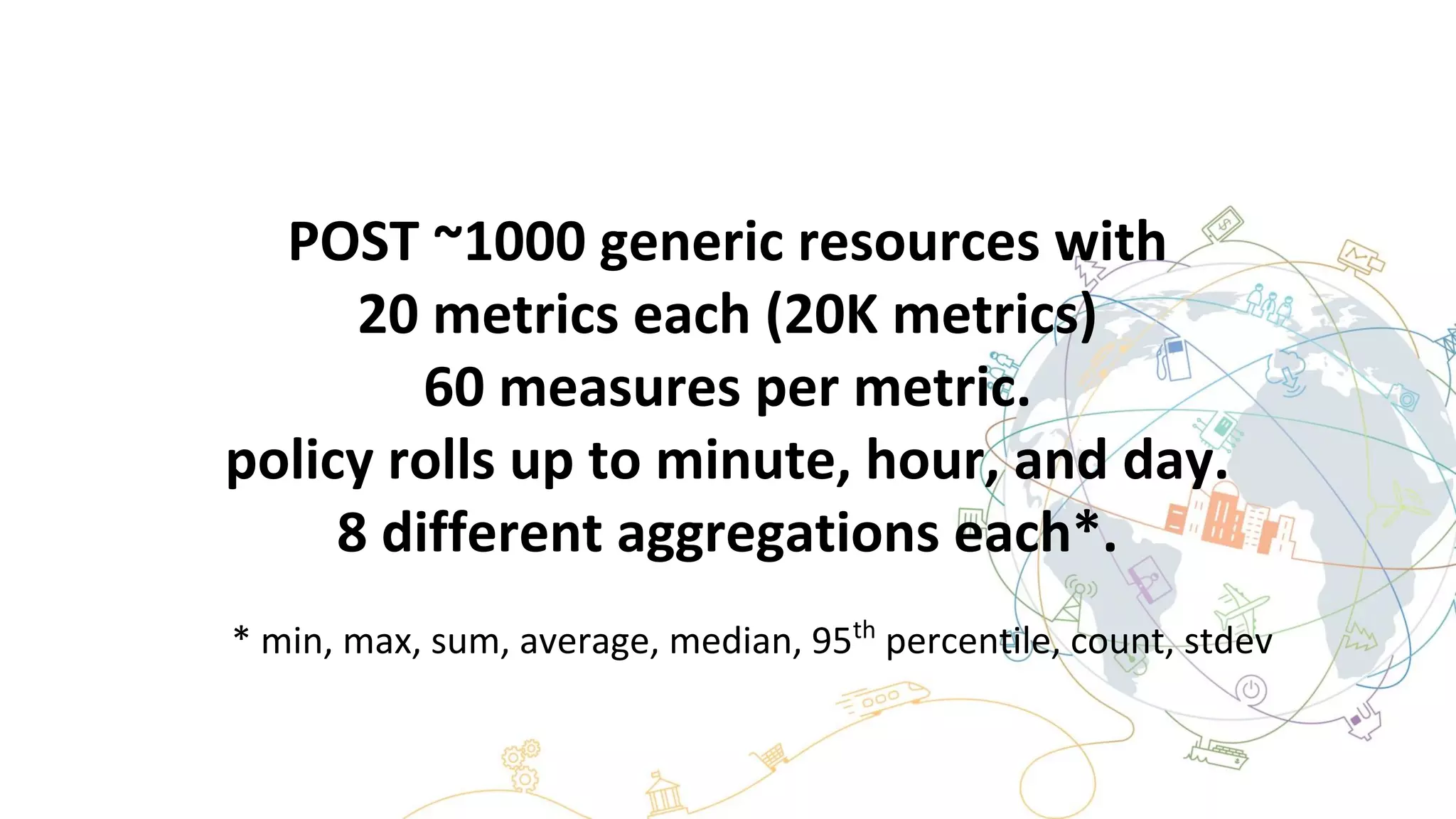

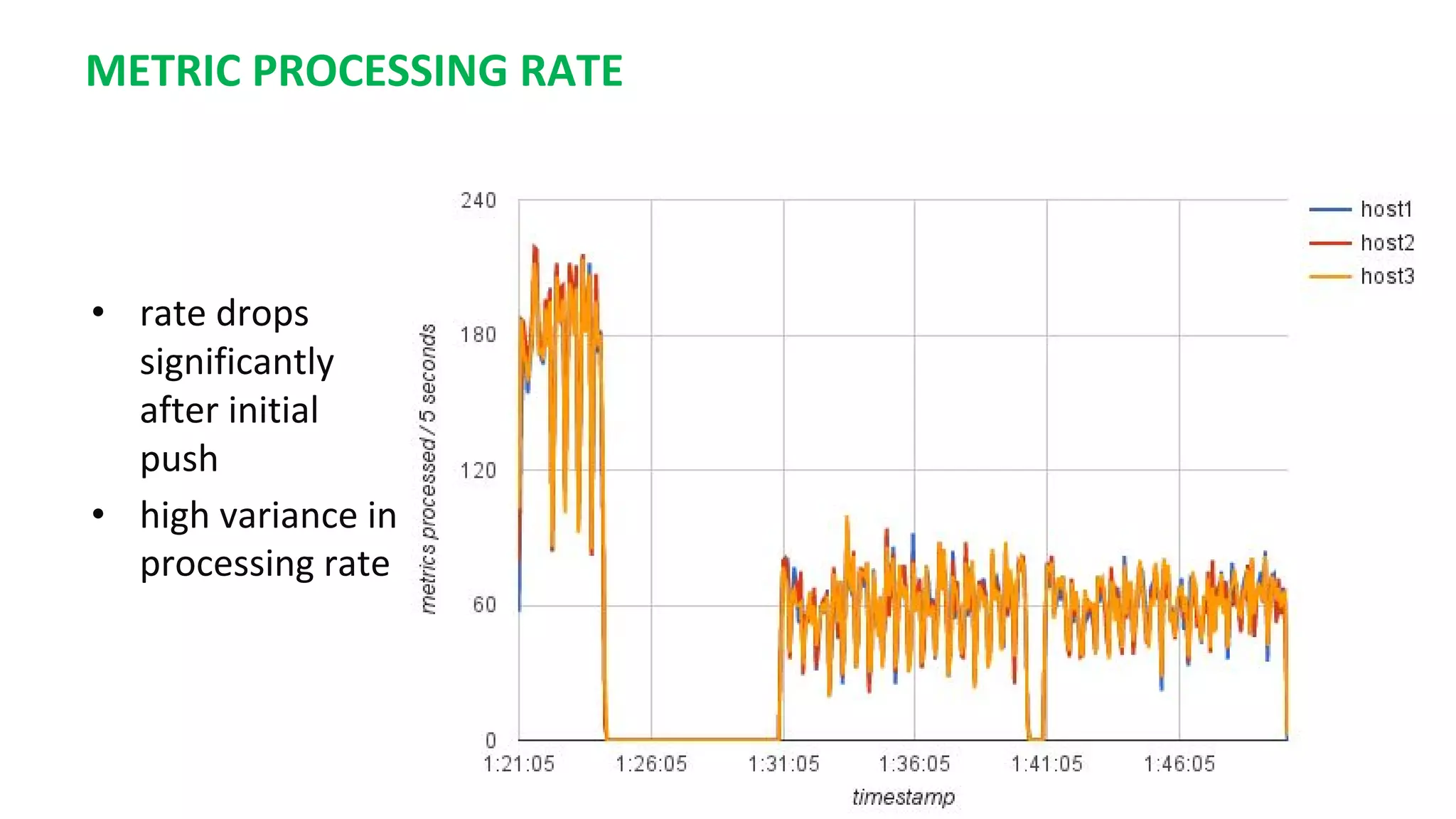

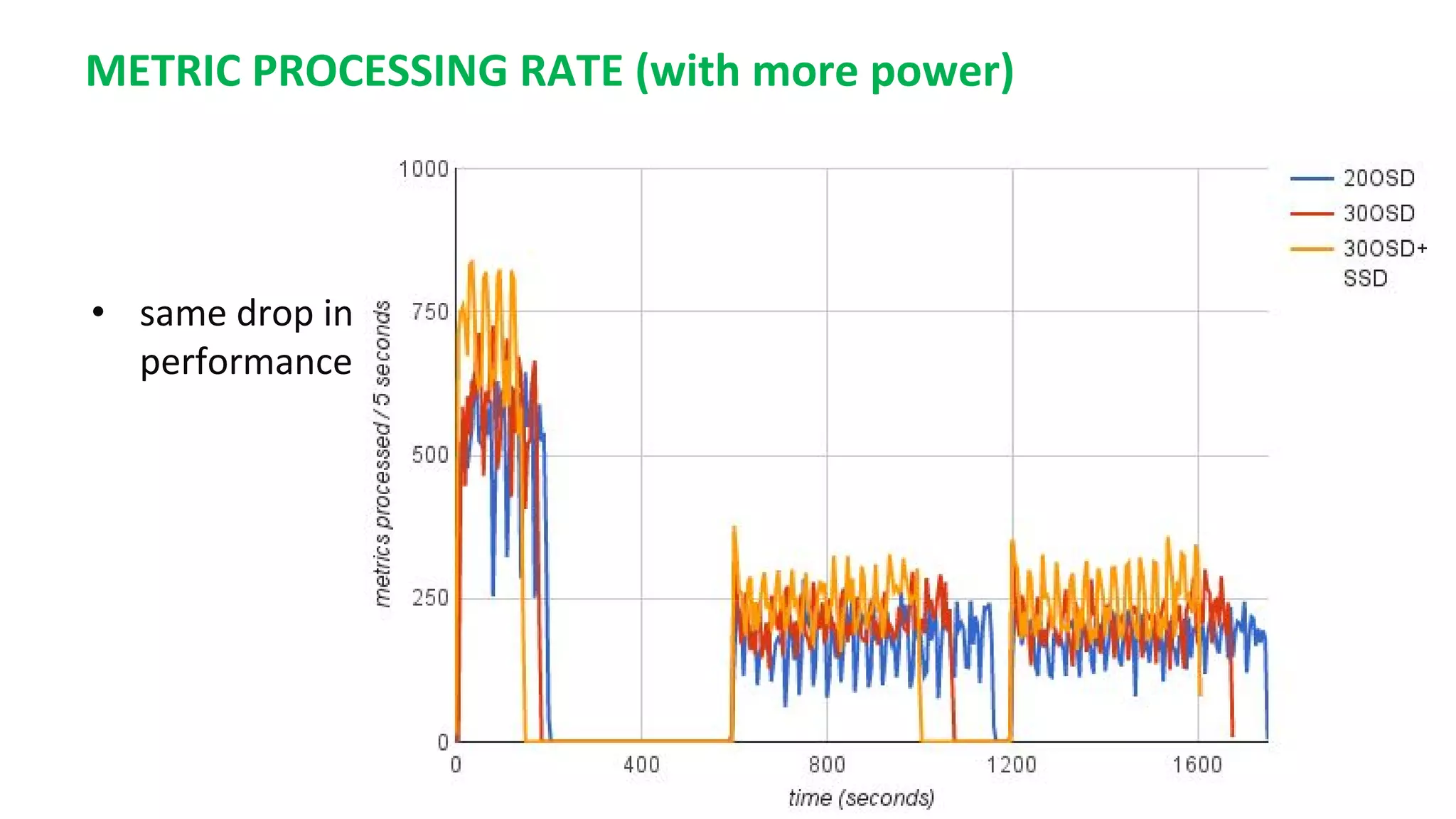

This document summarizes the author's experience optimizing Gnocchi, an open-source time-series database, to store metrics for hundreds of thousands of resources over many months. The author improved performance by adding Ceph storage nodes, tuning the Ceph configuration, minimizing I/O operations, and improving the storage format. Benchmark results show that the new version achieves 50% higher write throughput, 40-60% faster computation times, 30-60% better overall performance, and 30-40% fewer operations. Usage hints are also provided to help optimize for different use cases.

![Ceph configurations: original conf and good enough conf](https://image.slidesharecdn.com/gnocchiv3brownbag-161101165626/75/Gnocchi-v3-brownbag-19-2048.jpg)

CEPH CONFIGURATIONS

original conf:

    [osd]
    osd journal size = 10000
    osd pool default size = 3
    osd pool default min size = 2
    osd crush chooseleaf type = 1

good enough conf:

    [osd]
    osd journal size = 10000
    osd pool default size = 3
    osd pool default min size = 2
    osd crush chooseleaf type = 1
    osd op threads = 36
    filestore op threads = 36
    filestore queue max ops = 50000
    filestore queue committing max ops = 50000
    journal max write entries = 50000
    journal queue max ops = 50000

Use http://ceph.com/pgcalc/ to calculate the required number of placement groups.
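The slide defers placement-group sizing to the pgcalc tool. As a rough illustration of the kind of arithmetic involved, here is a minimal sketch of the commonly cited rule of thumb: (number of OSDs × target PGs per OSD × share of data) / replica size, rounded up to a power of two. This is a simplification, not pgcalc's exact logic. The function name, the default of 100 PGs per OSD, and the OSD counts in the usage note are assumptions for illustration; only the replica size of 3 comes from the configuration on the slide.

```python
import math


def suggested_pg_count(num_osds, replica_size, target_pgs_per_osd=100, data_fraction=1.0):
    """Rule-of-thumb PG count for one pool (hypothetical helper, not pgcalc itself).

    num_osds           -- number of OSDs in the cluster (assumed input)
    replica_size       -- pool replica count ("osd pool default size" on the slide)
    target_pgs_per_osd -- assumed target; 100 is a commonly used starting point
    data_fraction      -- share of cluster data expected in this pool (0 < f <= 1)
    """
    if num_osds < 1 or replica_size < 1:
        raise ValueError("num_osds and replica_size must be positive")
    if not 0 < data_fraction <= 1:
        raise ValueError("data_fraction must be in (0, 1]")

    raw = num_osds * target_pgs_per_osd * data_fraction / replica_size

    # Round up to the next power of two using integers to avoid float edge cases.
    needed = max(1, math.ceil(raw))
    power = 1
    while power < needed:
        power *= 2
    return power


if __name__ == "__main__":
    # Hypothetical cluster sizes, with the slide's replica size of 3.
    for osds in (10, 20, 40):
        print(osds, "OSDs ->", suggested_pg_count(osds, replica_size=3), "PGs")
```

For a real deployment, the value from pgcalc itself should take precedence, since the tool applies additional per-pool adjustments that this sketch leaves out.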