This document discusses frameworks in the context of big data solutions. It makes several key points:

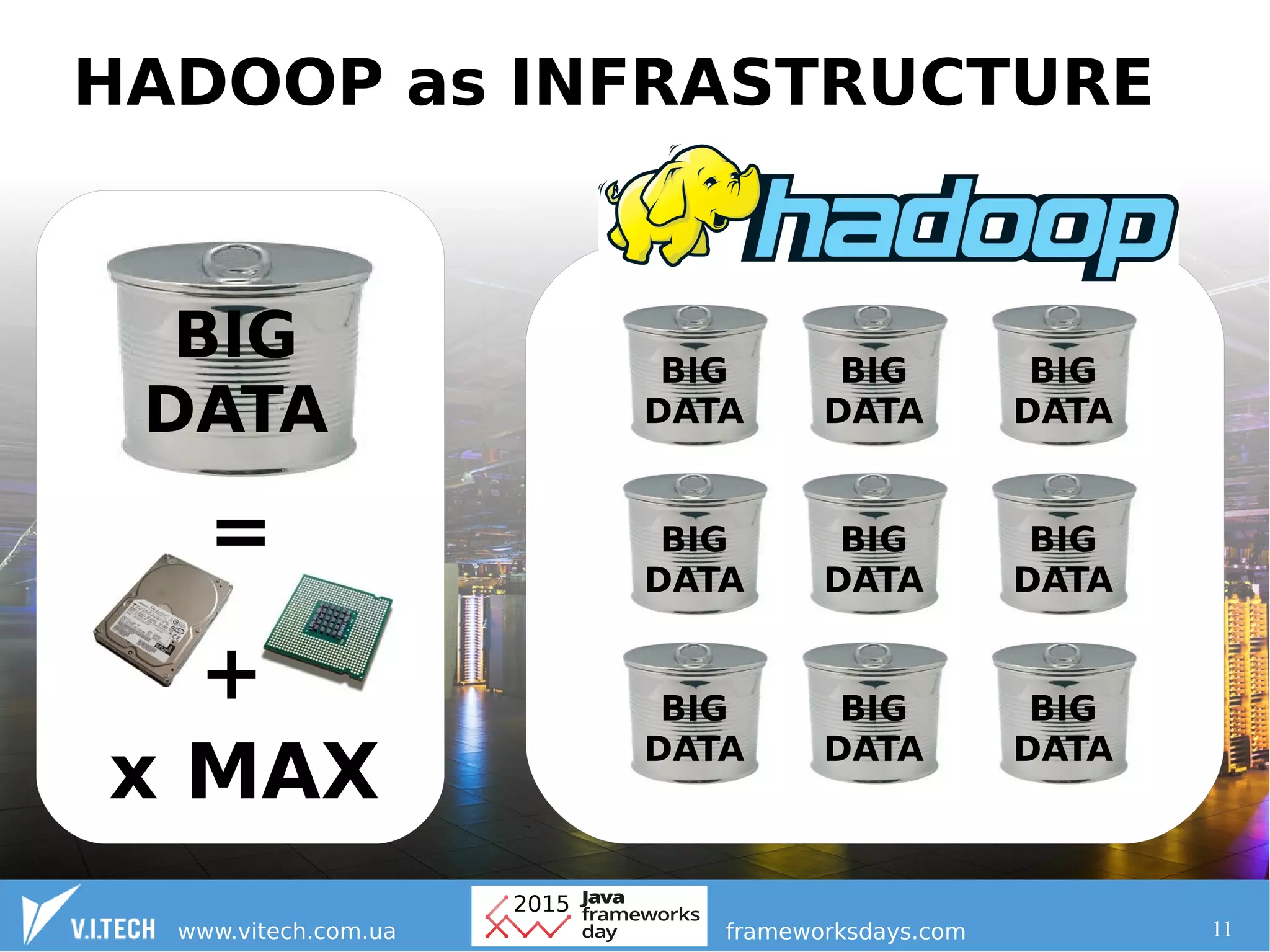

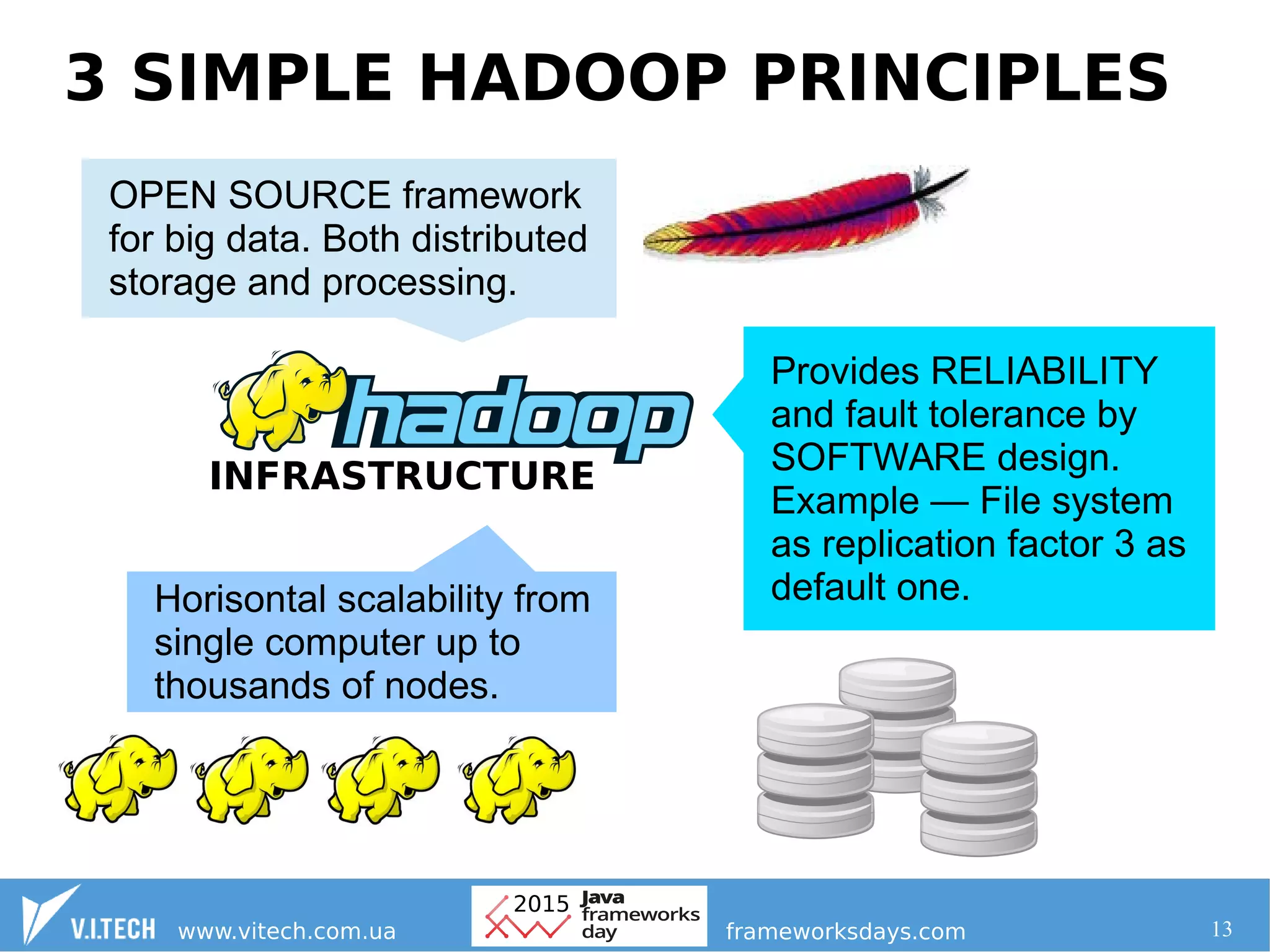

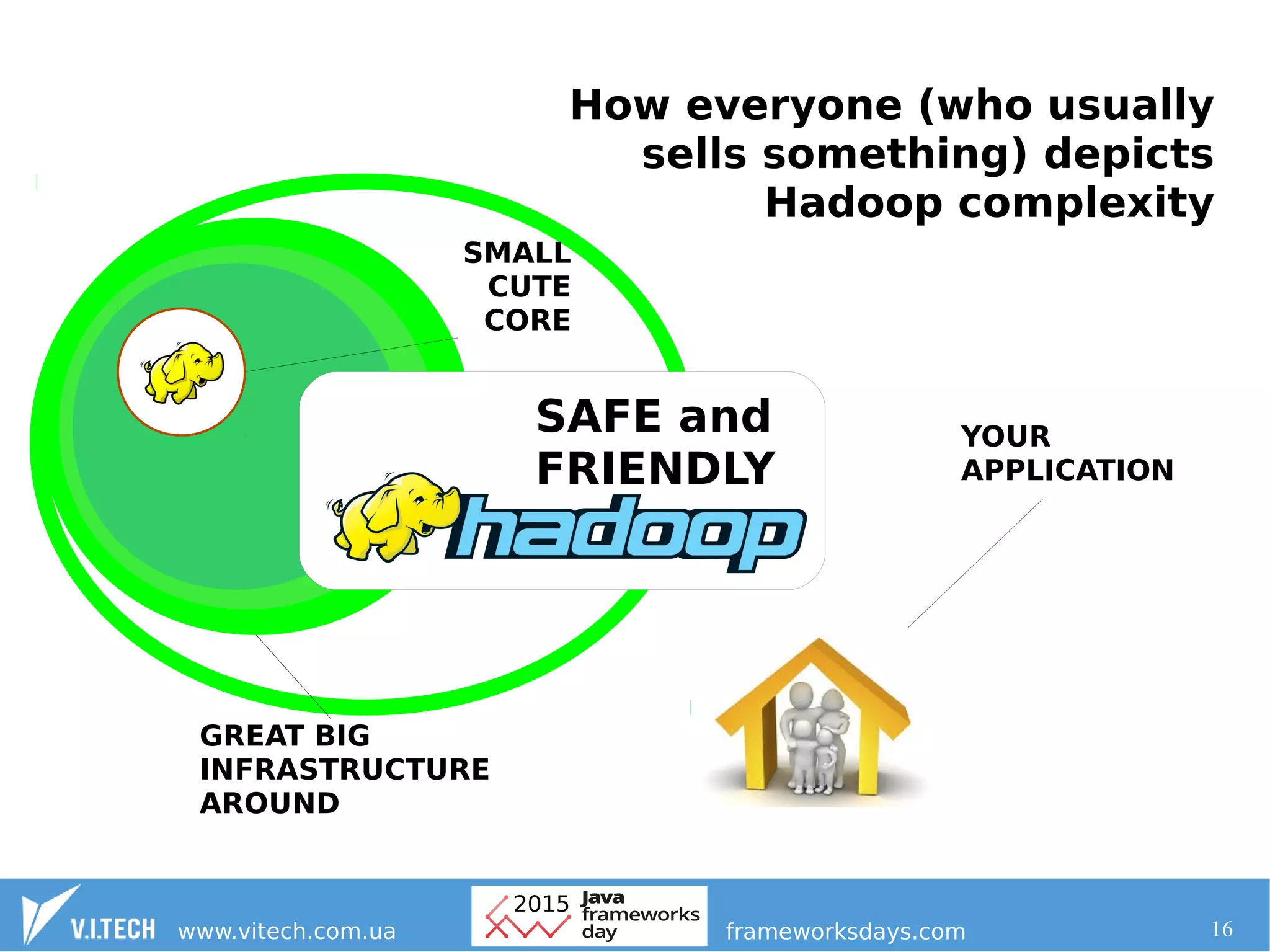

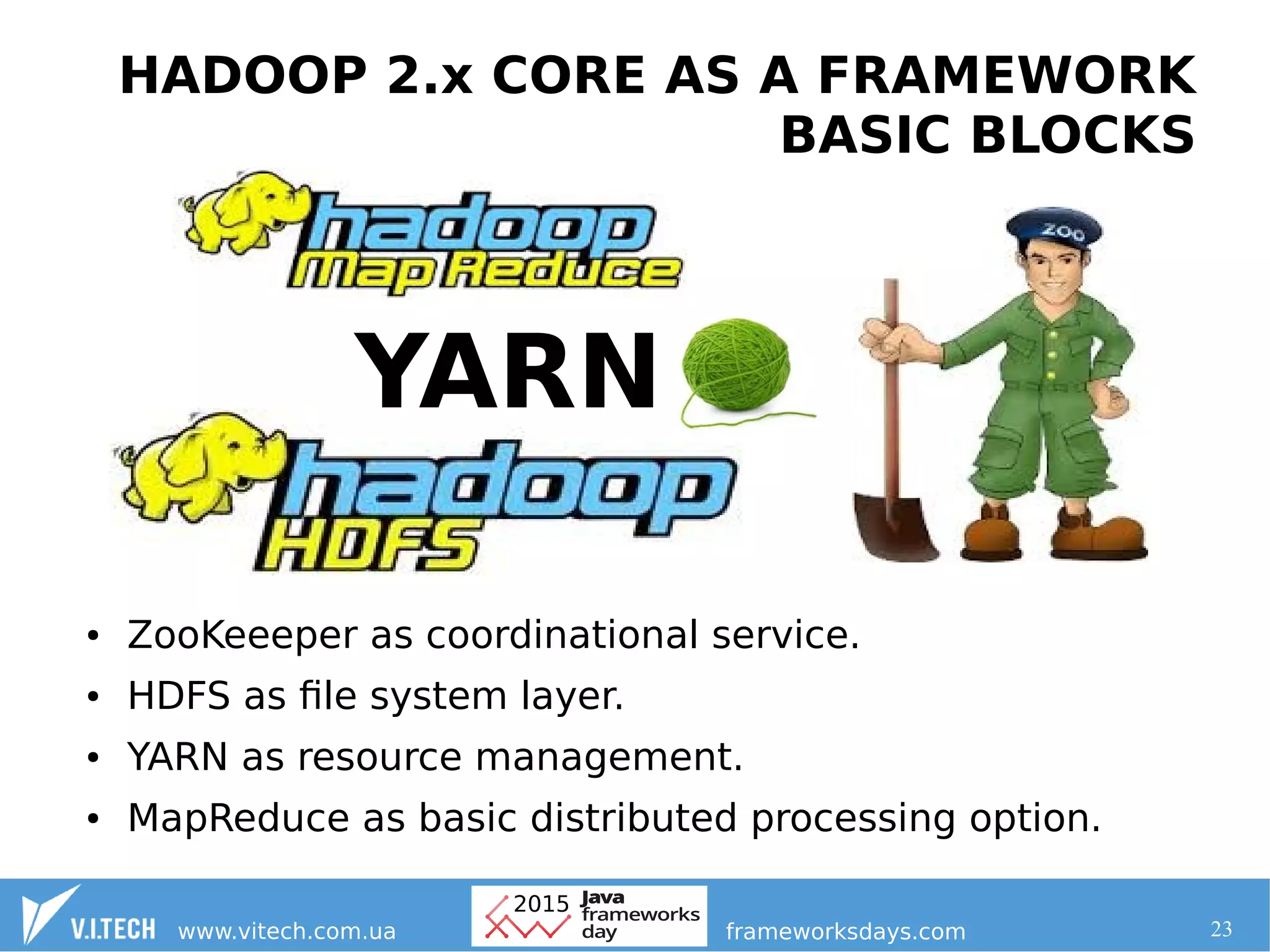

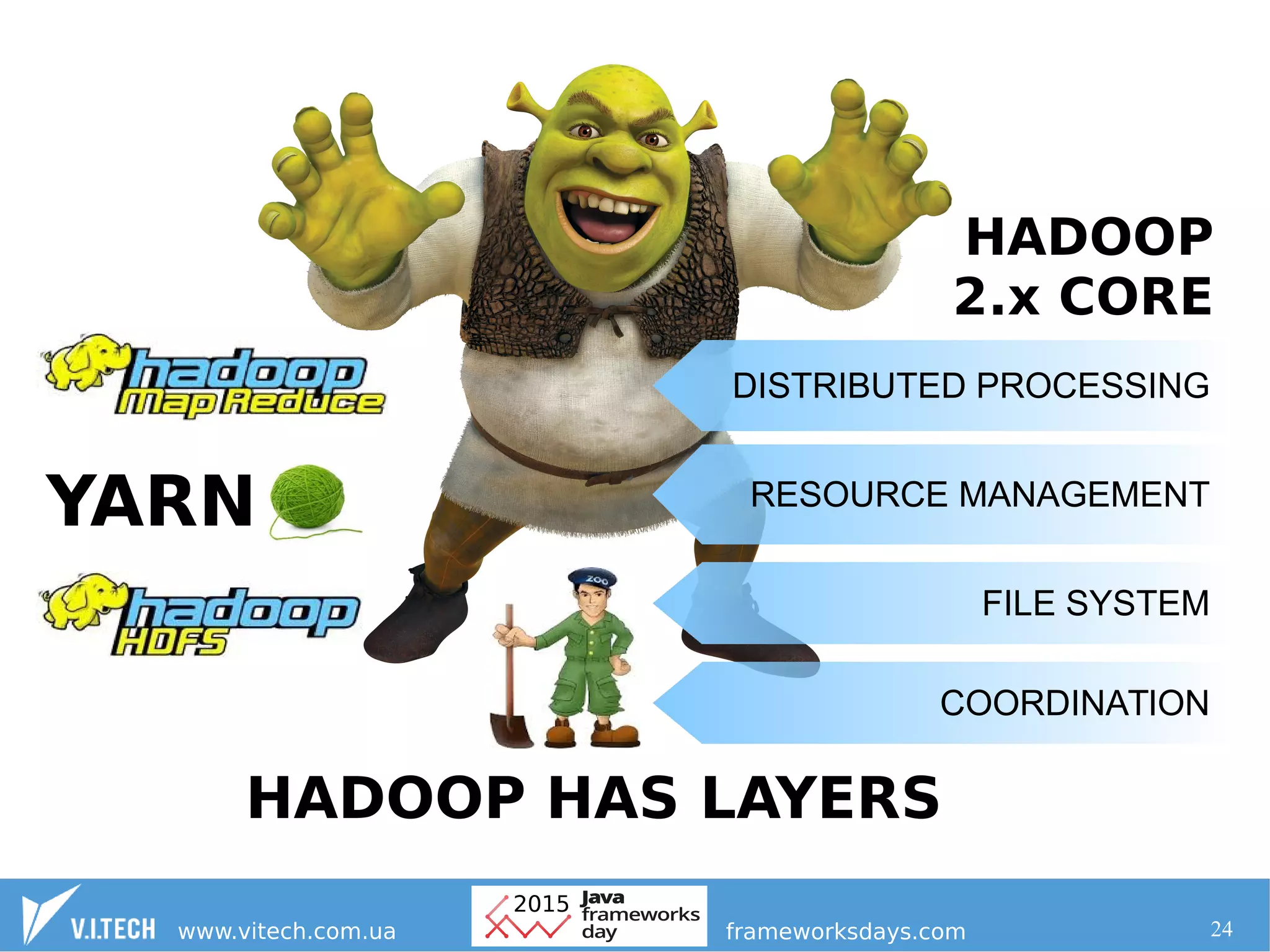

1. Hadoop provides a stable core infrastructure for building big data solutions. It has layers for resource management (YARN), distributed processing (MapReduce), the distributed file system (HDFS), and coordination (ZooKeeper).

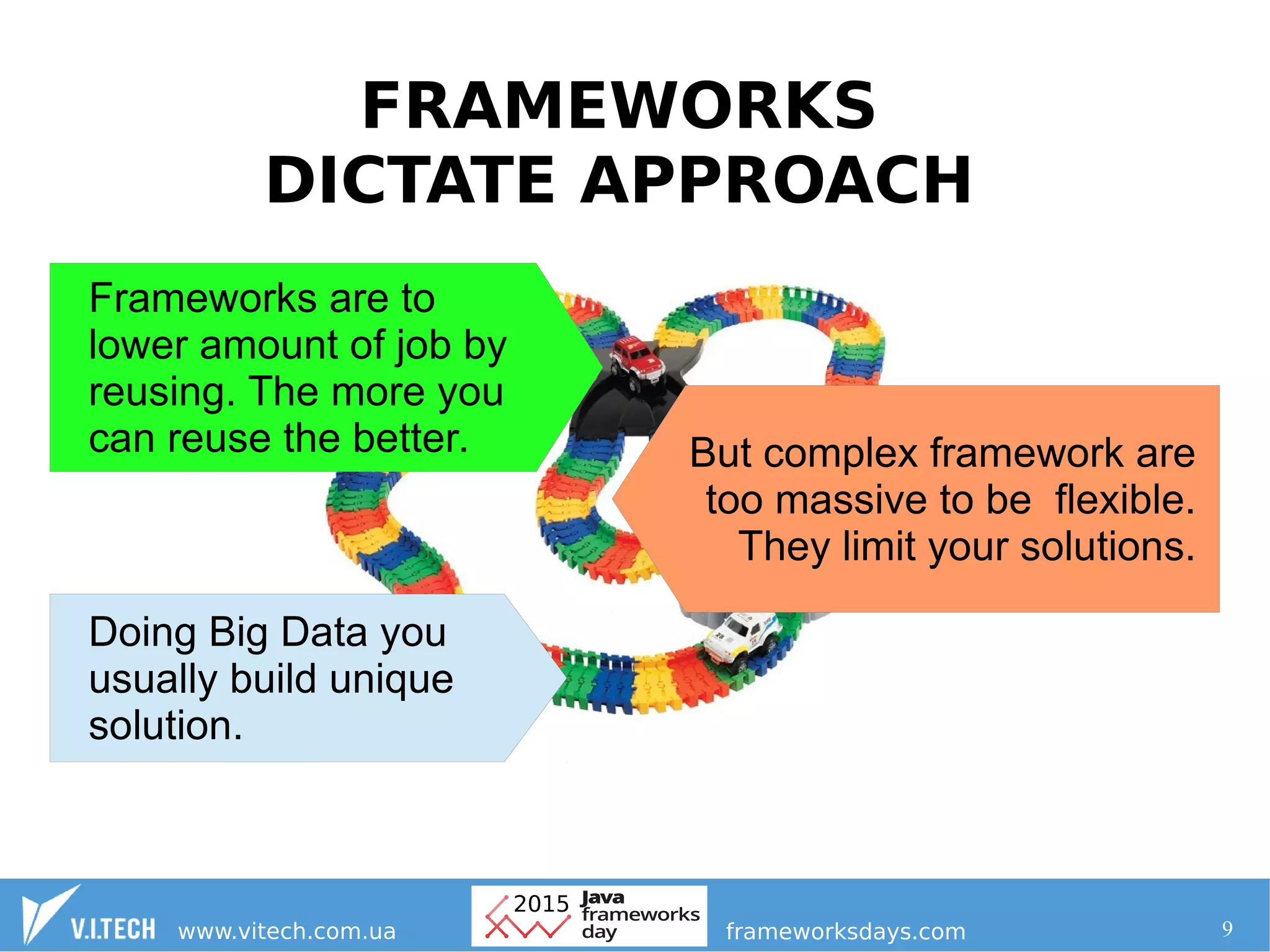

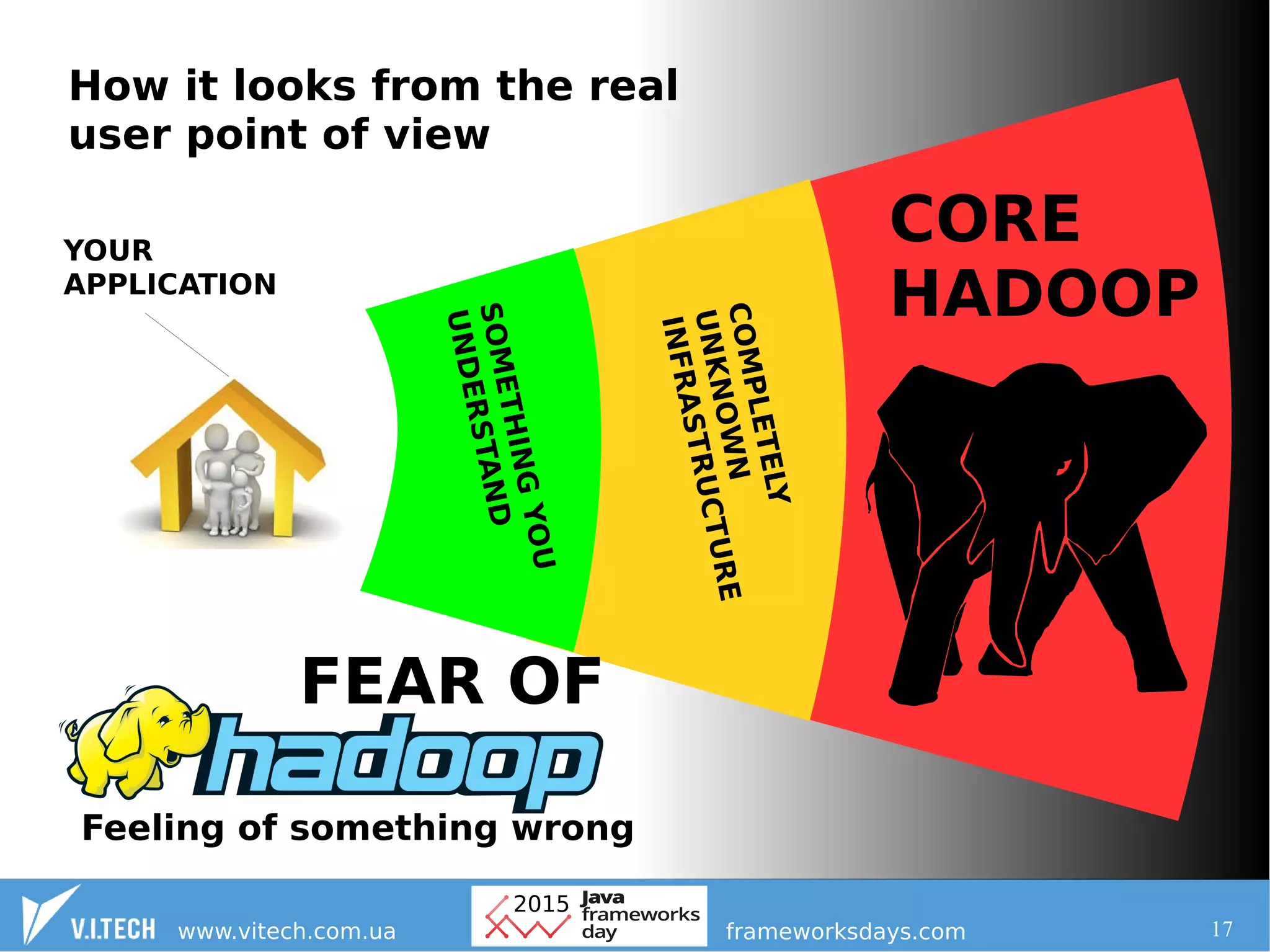

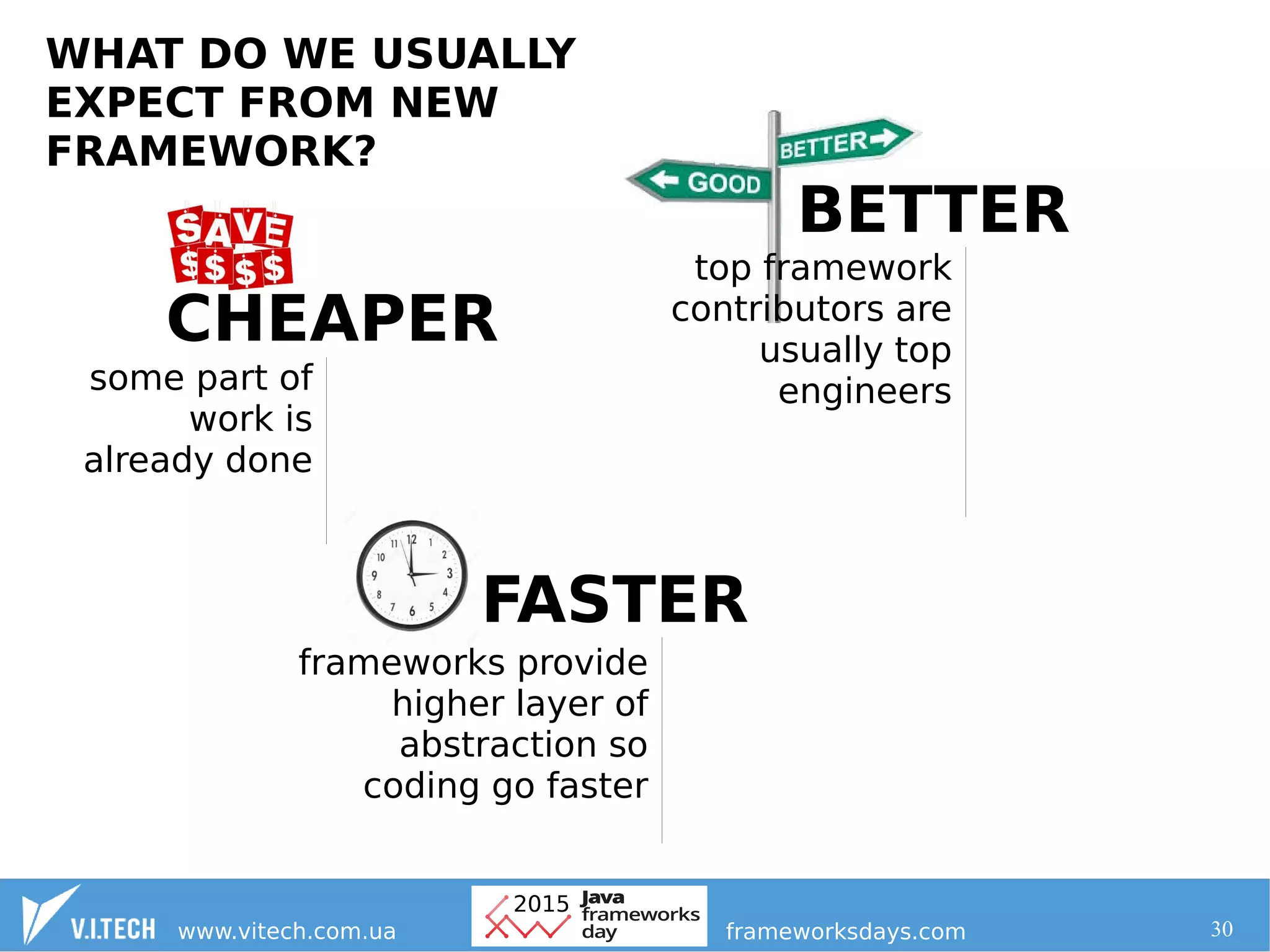

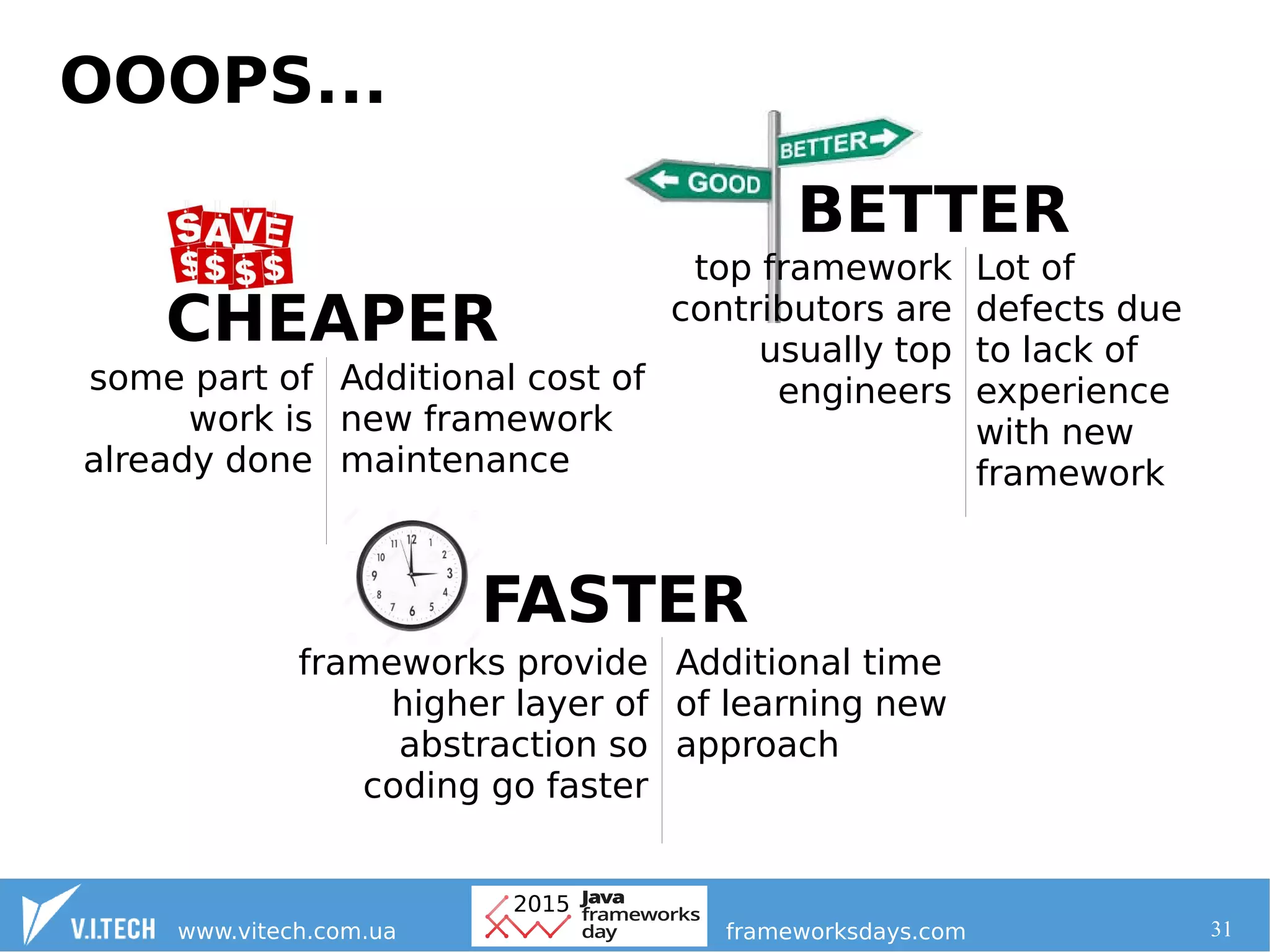

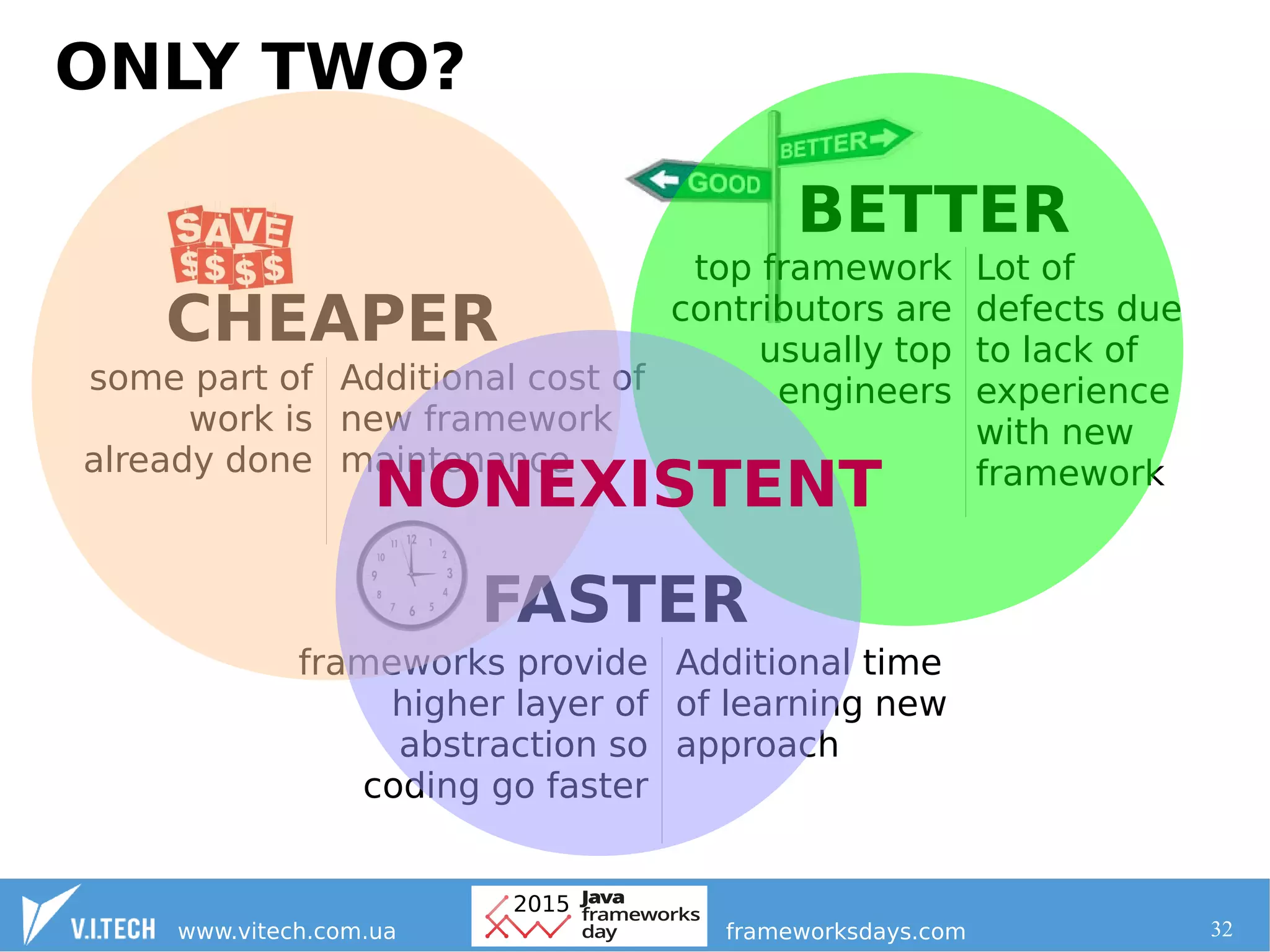

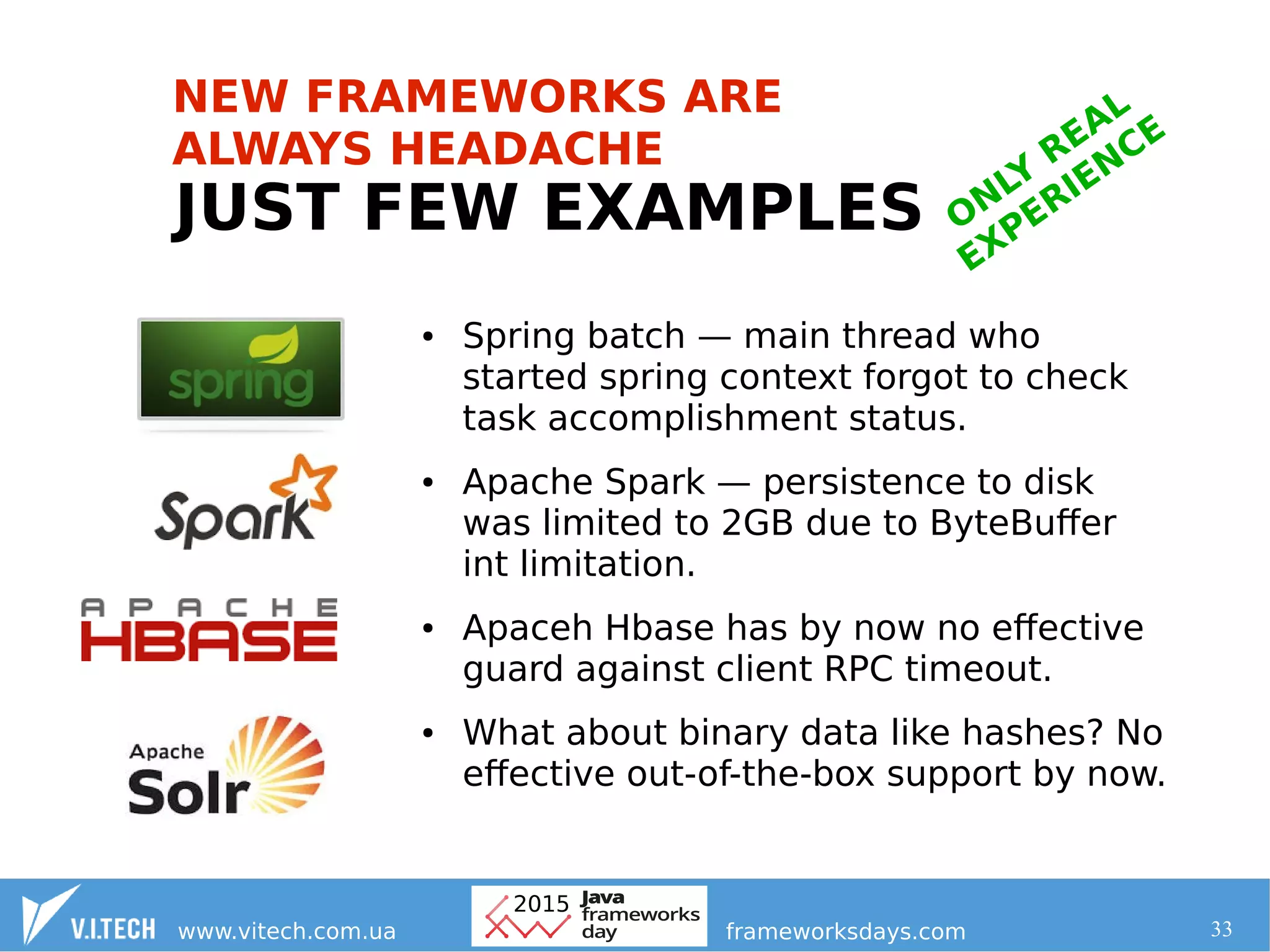

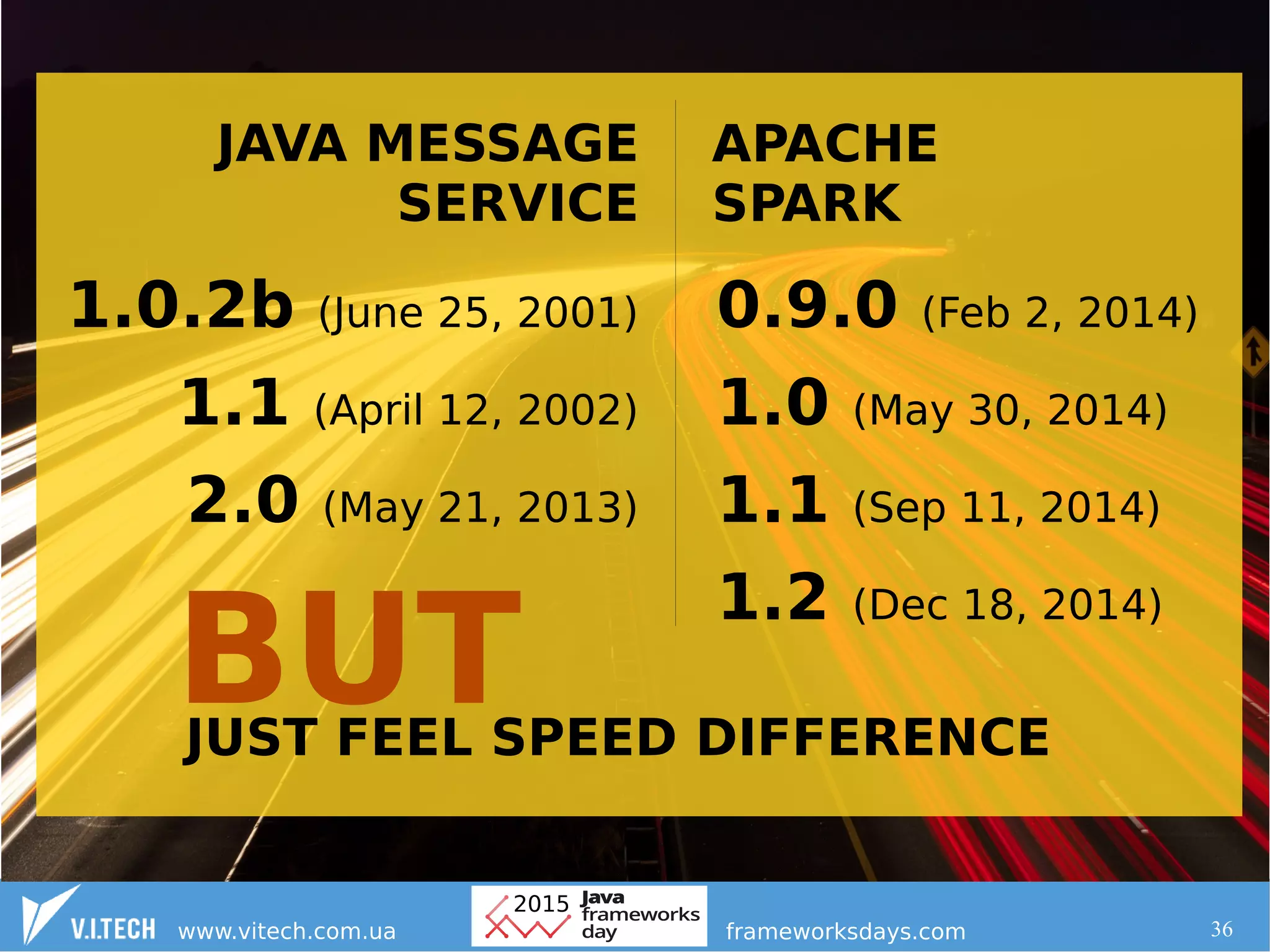

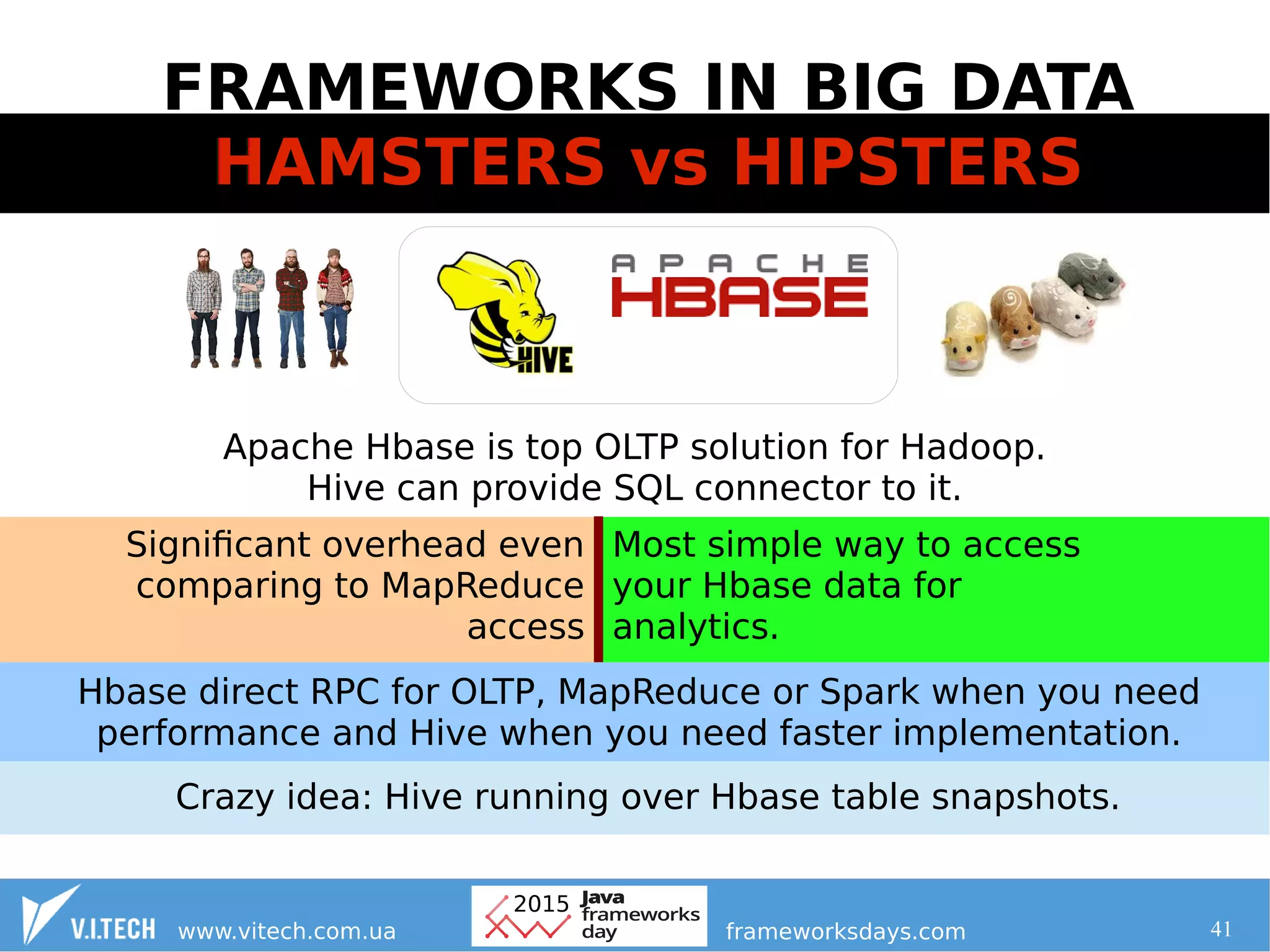

2. When going beyond the Hadoop core, choose frameworks that take a stable approach, offer flexible functionality, and have an active community. Contributing to an existing solution is generally preferable to creating a new one.

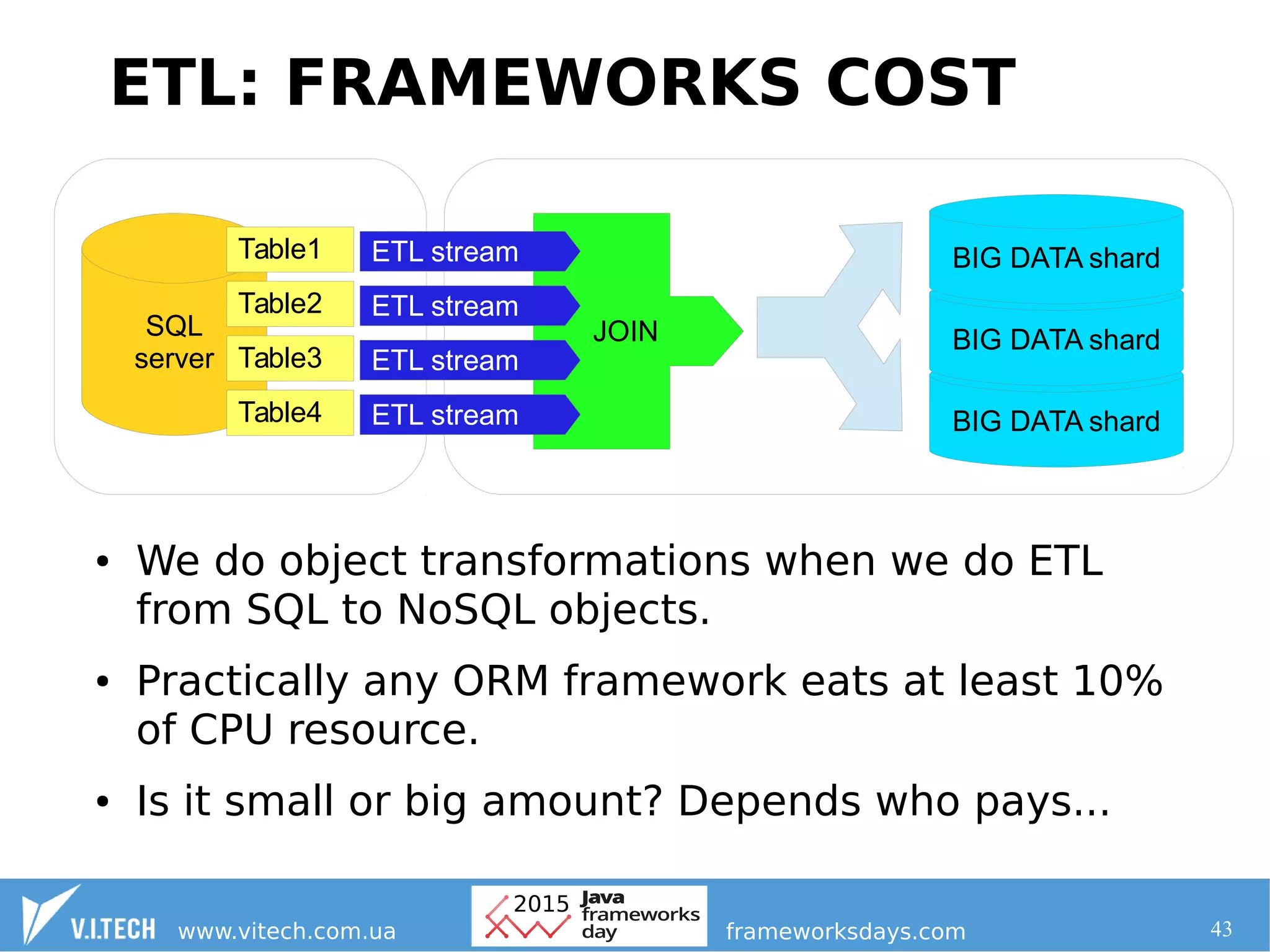

3. In large clusters, a framework's performance overhead is paid for directly with additional computing resources, so frameworks should be chosen carefully with their overhead in mind. Creating a new framework also limits future flexibility: the more users it acquires, the harder it becomes to change.
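To make point 3 concrete, here is a minimal sketch that converts a framework's relative overhead into extra machines. It assumes that work scales linearly with node count, which real clusters only approximate. The function names `nodes_required` and `extra_nodes` are hypothetical, and the cluster sizes and overhead percentages are illustrative rather than measured values.

```python
"""Illustrative sketch: how a framework's performance overhead turns into
extra hardware in a large cluster. All numbers are hypothetical."""


def nodes_required(baseline_nodes: int, overhead_percent: int) -> int:
    """Nodes needed to keep the same throughput when a framework adds
    `overhead_percent` extra compute to every unit of work.

    Assumes work scales linearly with node count (an approximation).
    Uses integer arithmetic to avoid floating-point rounding errors.
    """
    if baseline_nodes < 0 or overhead_percent < 0:
        raise ValueError("inputs must be non-negative")
    total = baseline_nodes * (100 + overhead_percent)
    # Round up: a fractional node still means provisioning a whole machine.
    return (total + 99) // 100


def extra_nodes(baseline_nodes: int, overhead_percent: int) -> int:
    """Additional machines needed purely because of framework overhead."""
    return nodes_required(baseline_nodes, overhead_percent) - baseline_nodes


if __name__ == "__main__":
    # Hypothetical cluster sizes and overhead levels.
    for nodes in (10, 1_000, 10_000):
        for pct in (2, 10):
            print(f"{nodes:>6} nodes, {pct:>2}% overhead -> "
                  f"{extra_nodes(nodes, pct):>5} extra nodes")
```

The same percentage has very different consequences at different scales. A 10% overhead costs one extra machine on a 10-node cluster but 1,000 extra machines on a 10,000-node cluster. This is why overhead that looks negligible in a small test deserves scrutiny before a framework is rolled out widely.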