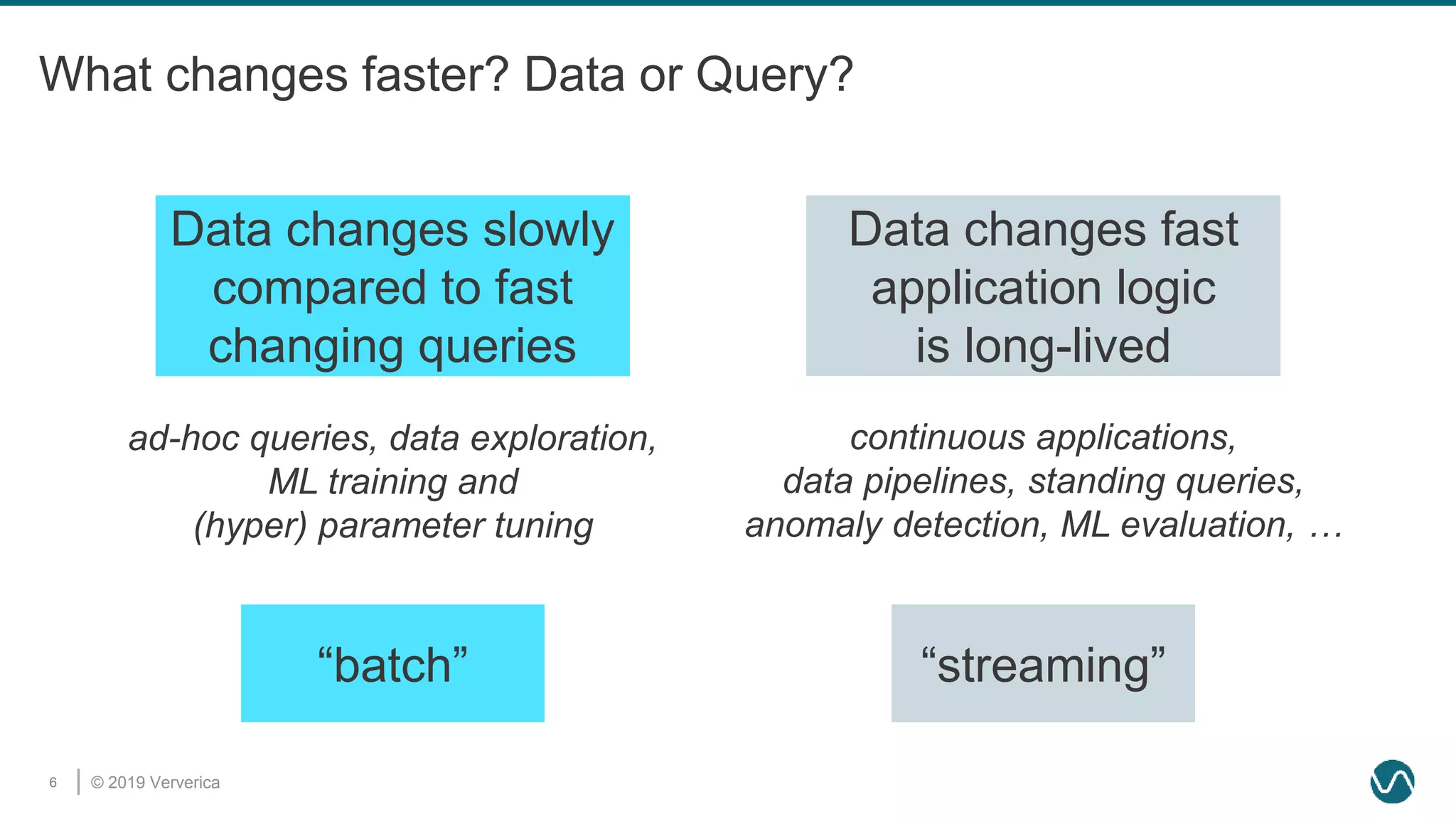

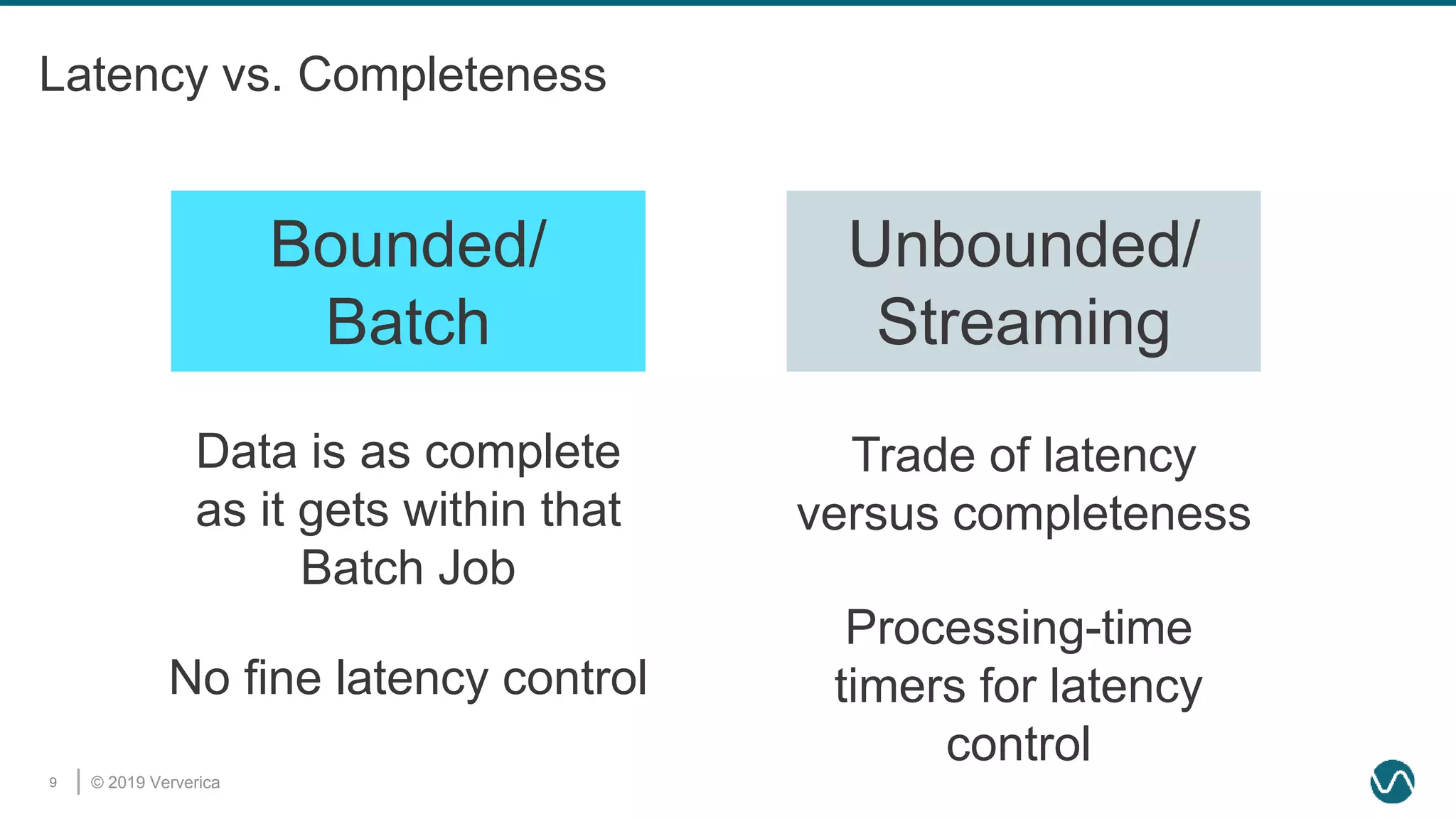

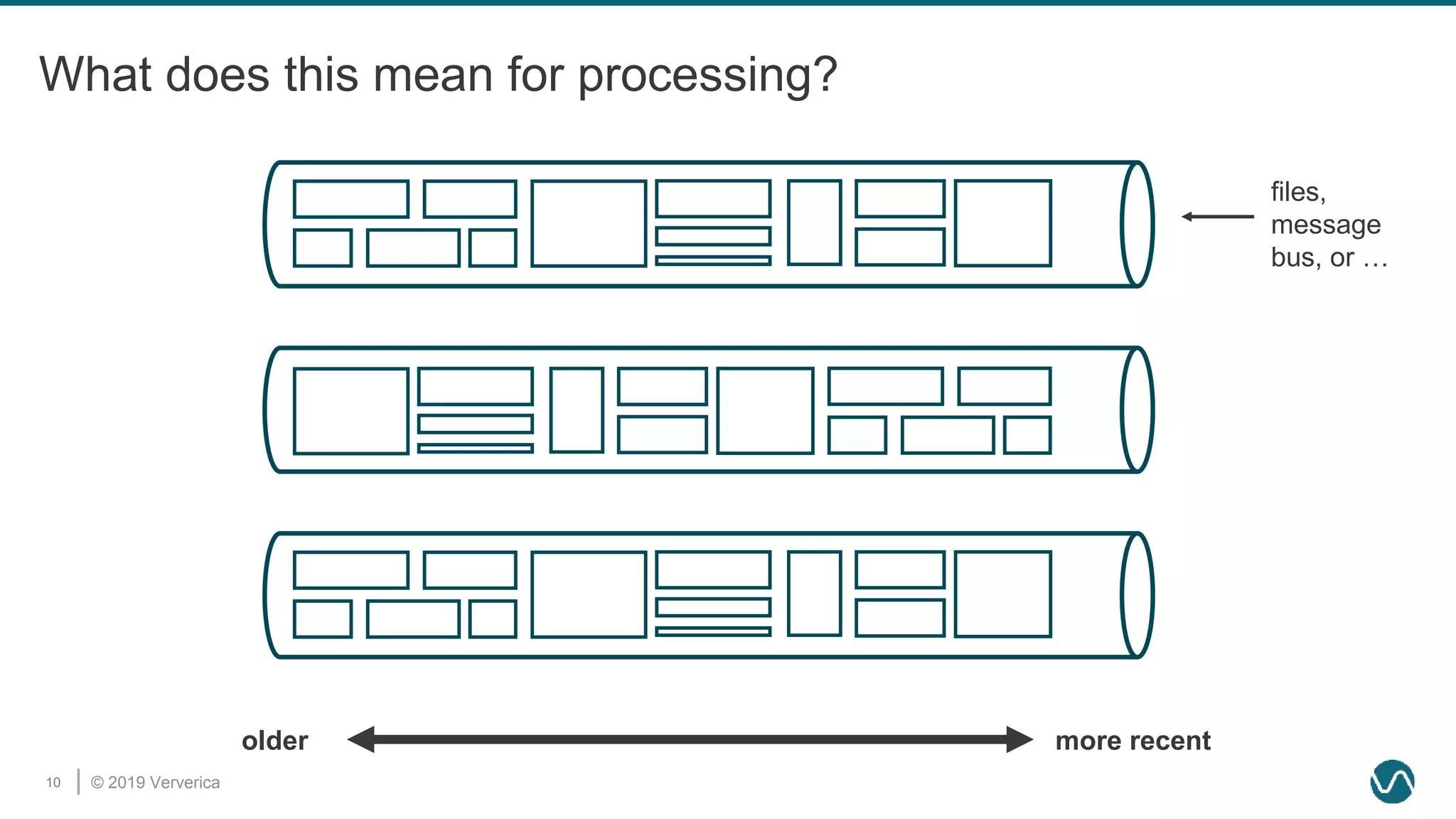

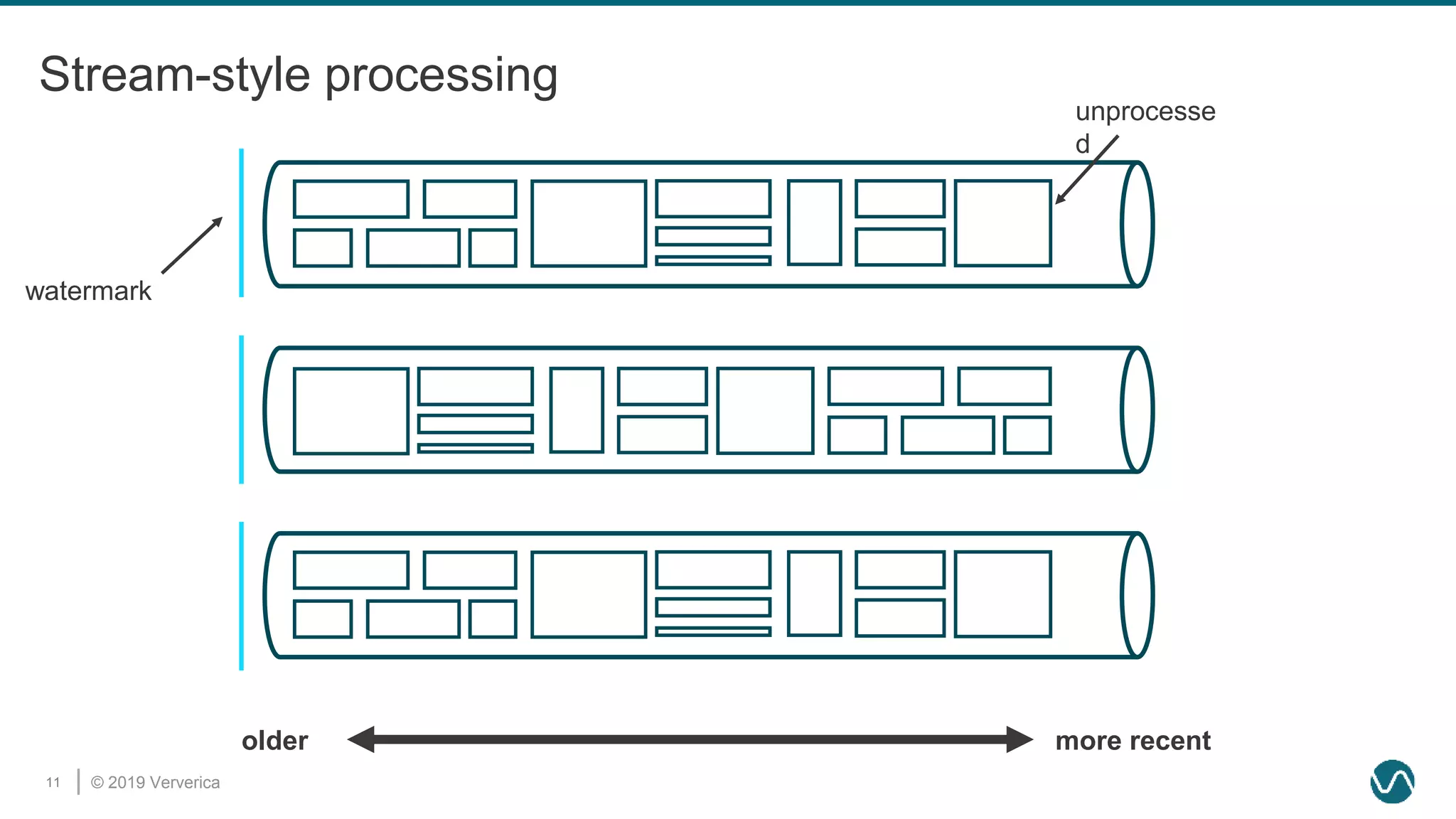

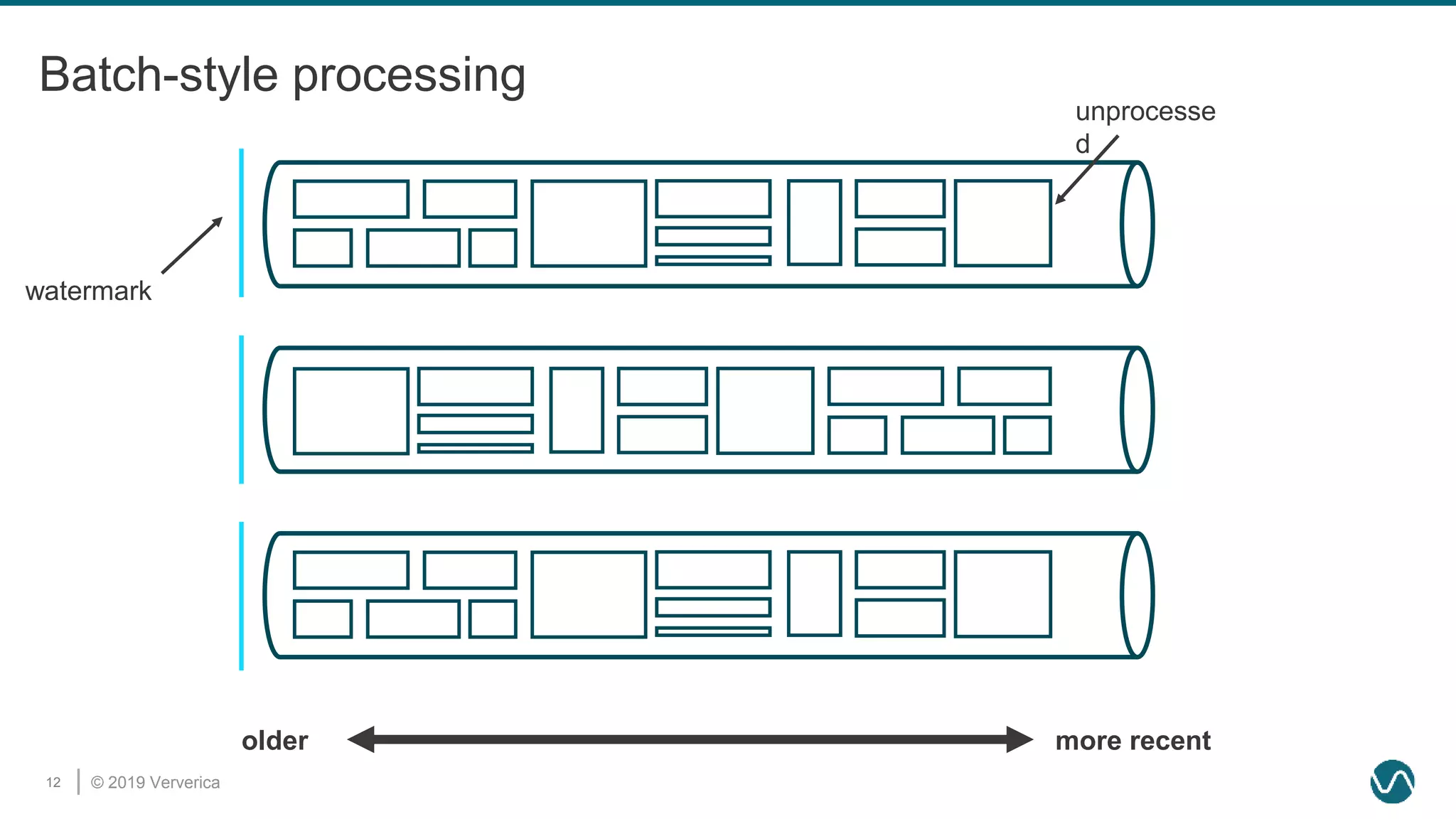

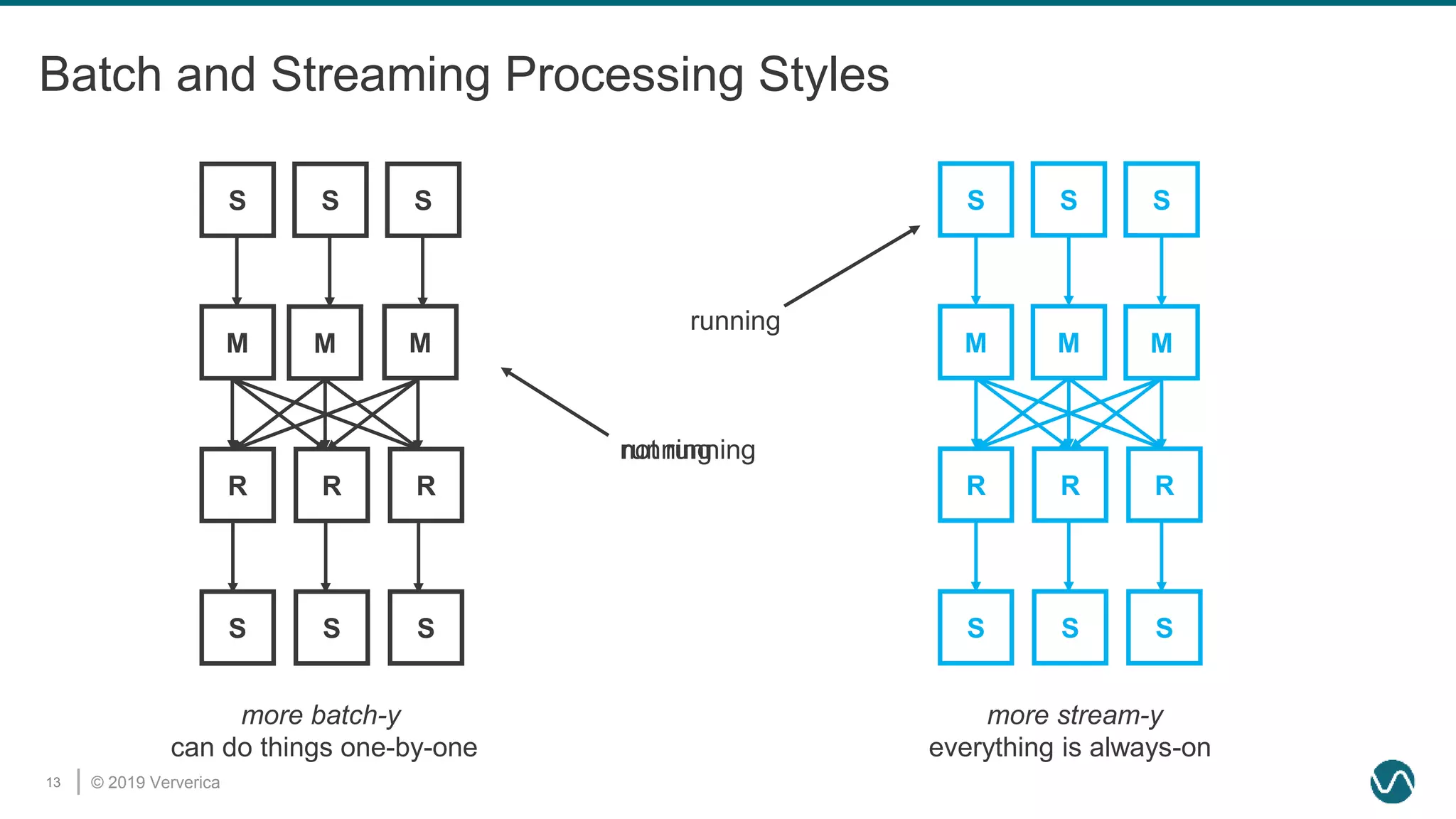

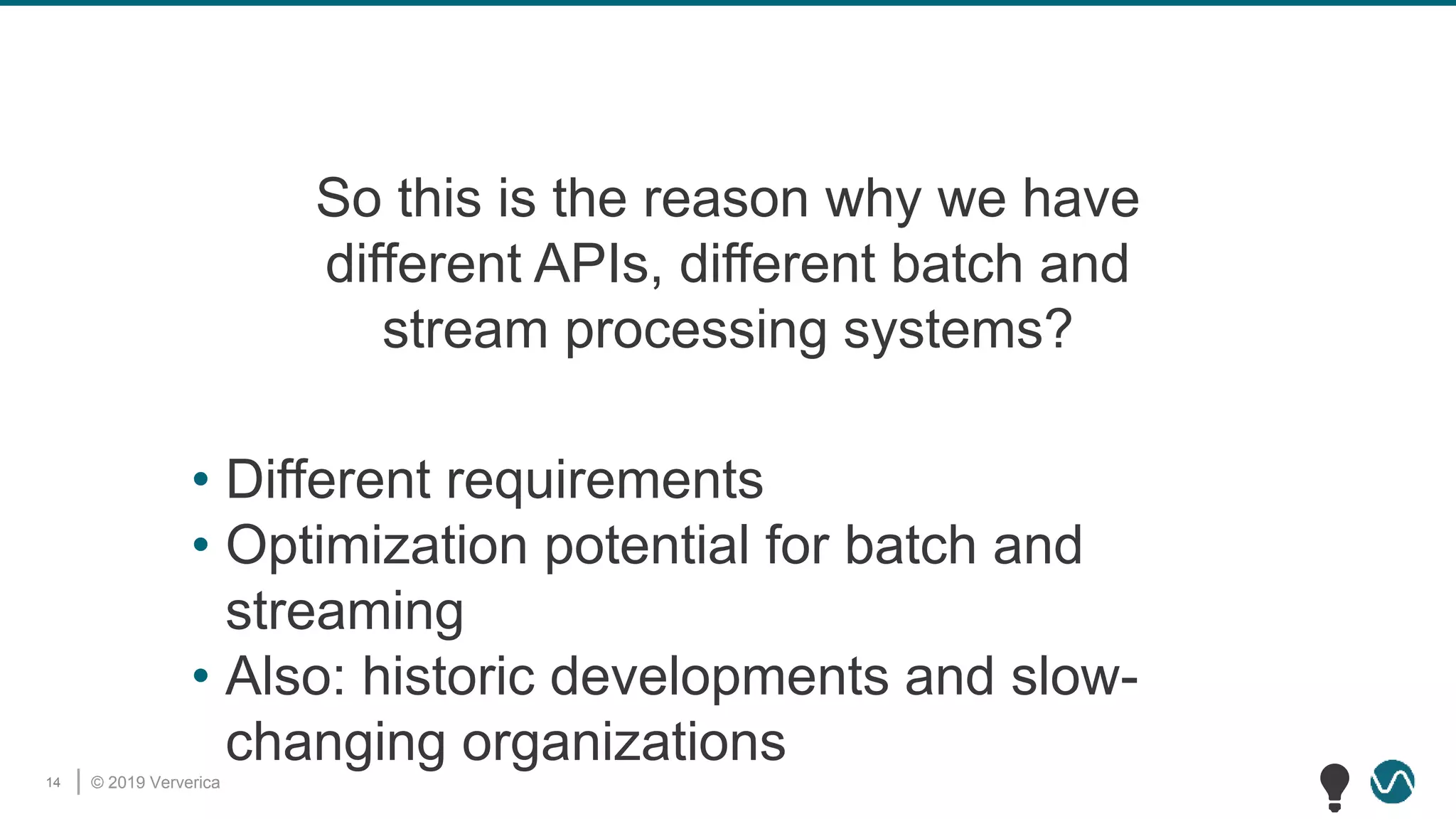

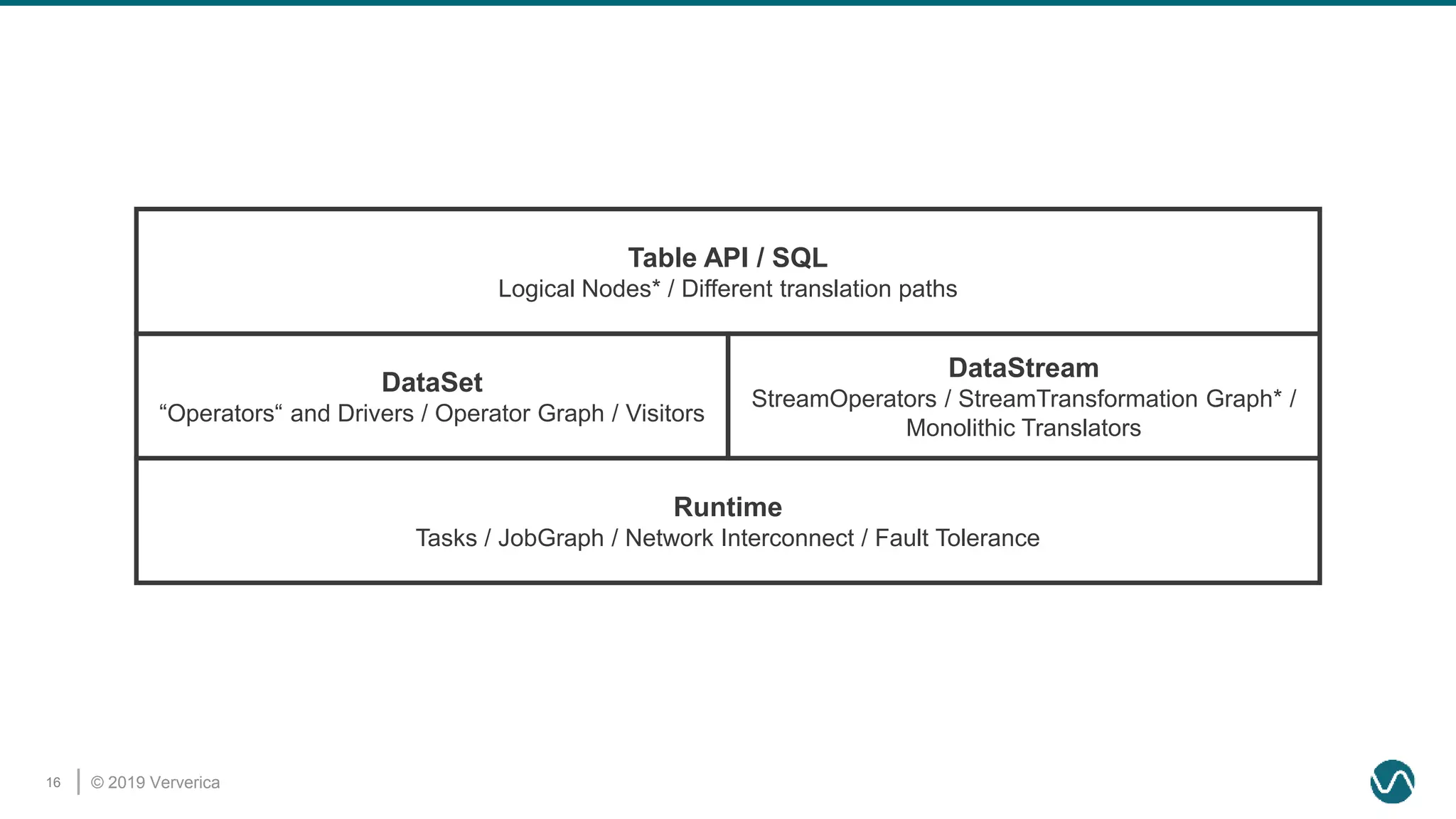

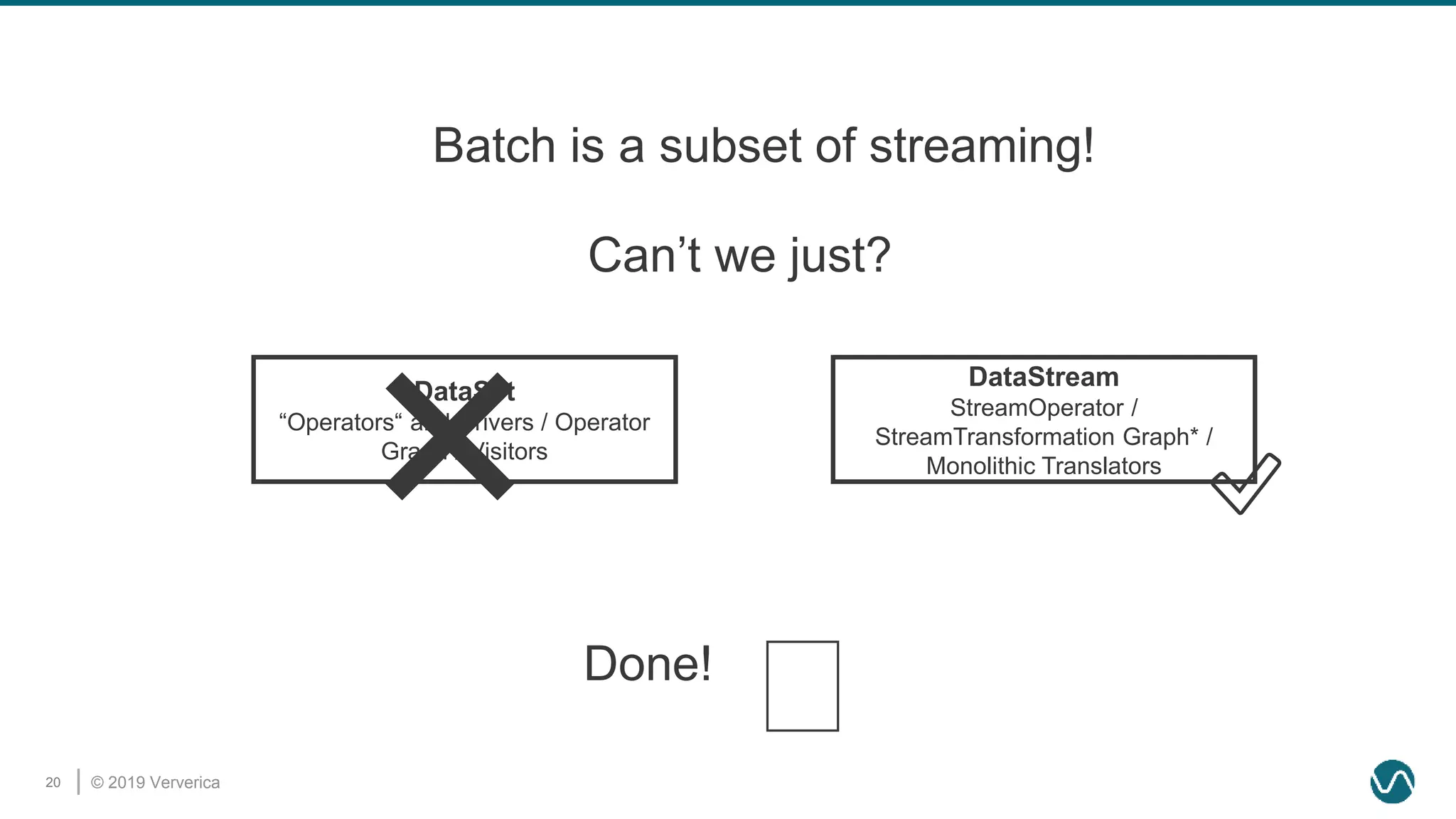

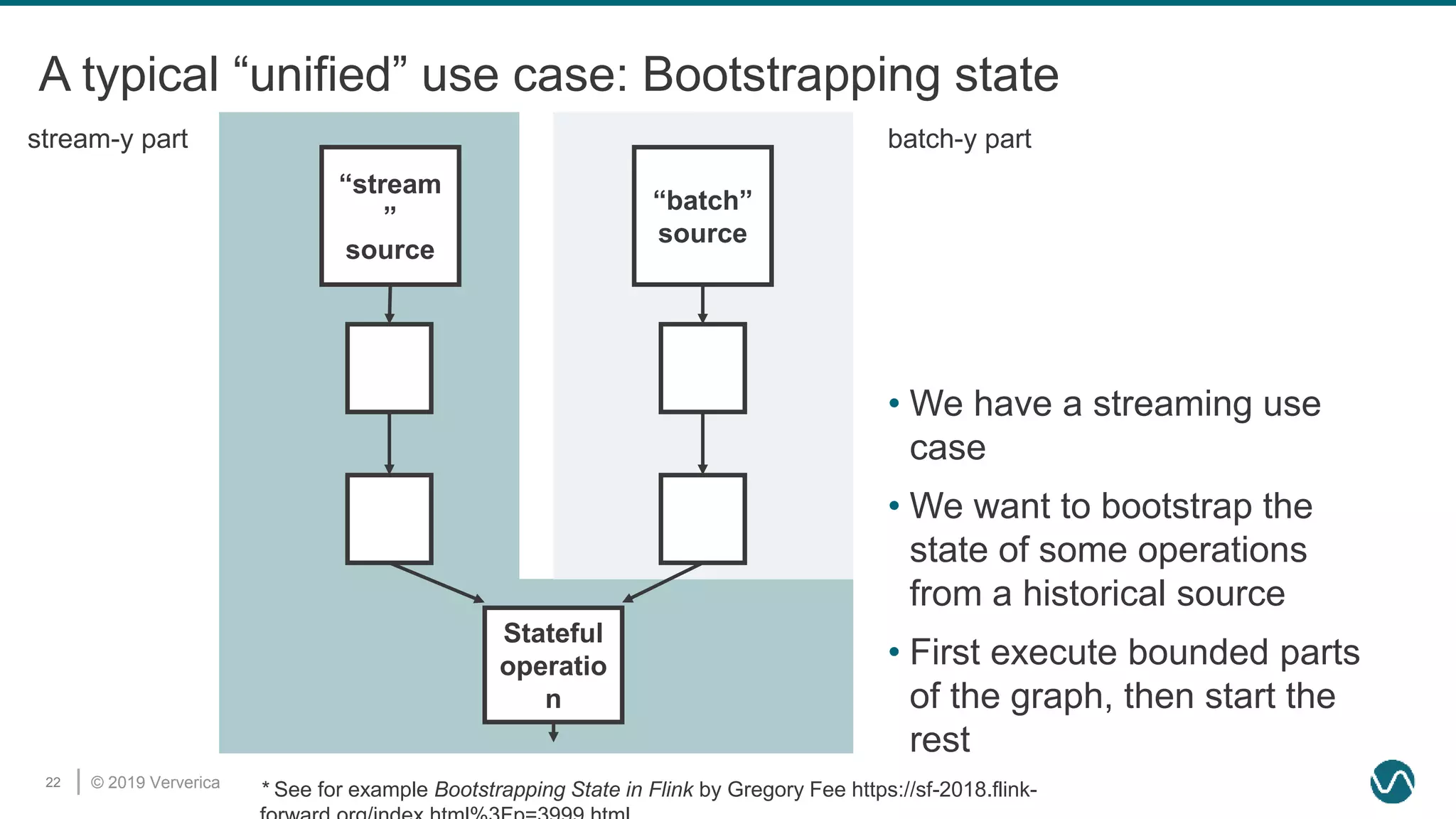

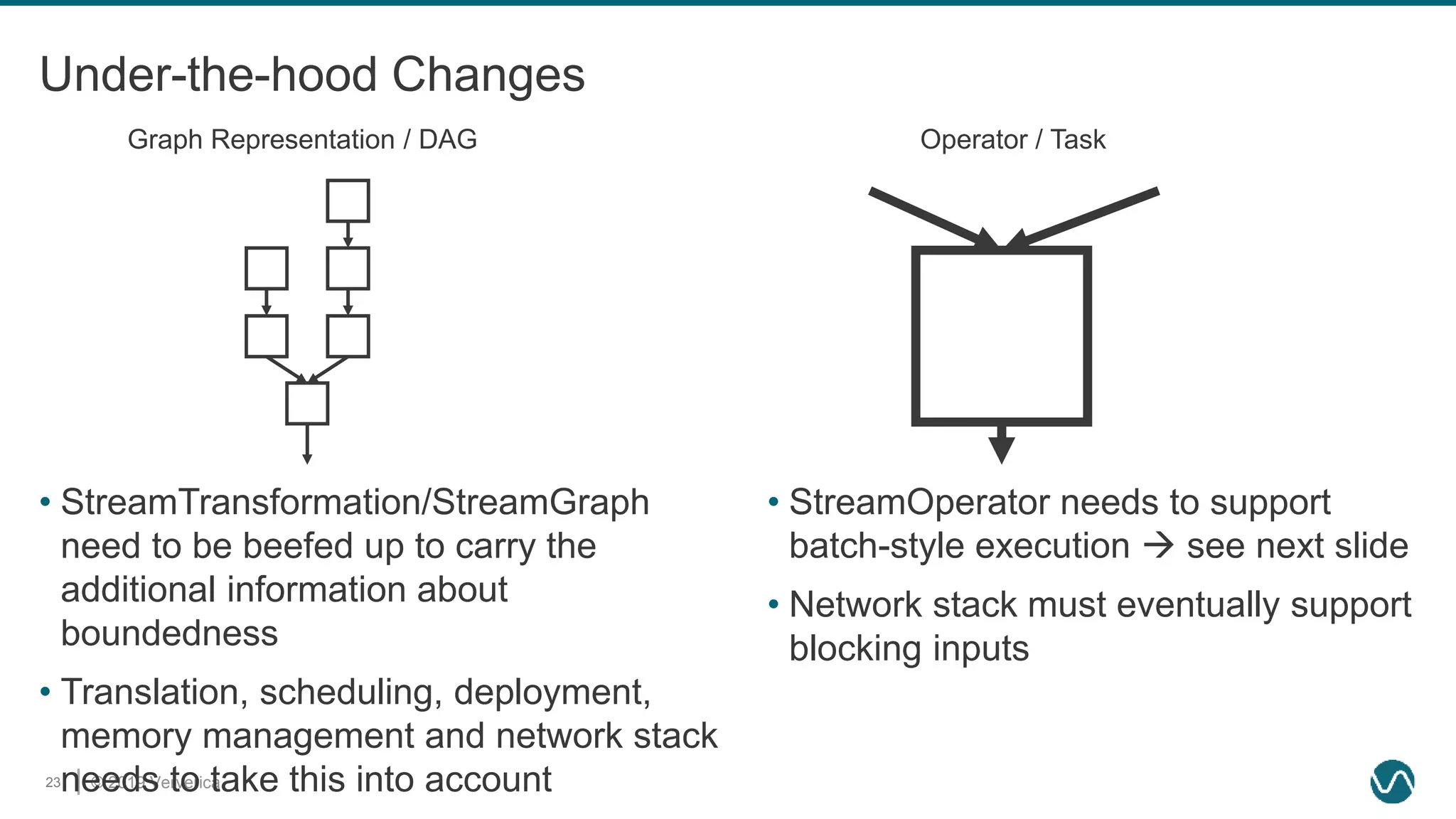

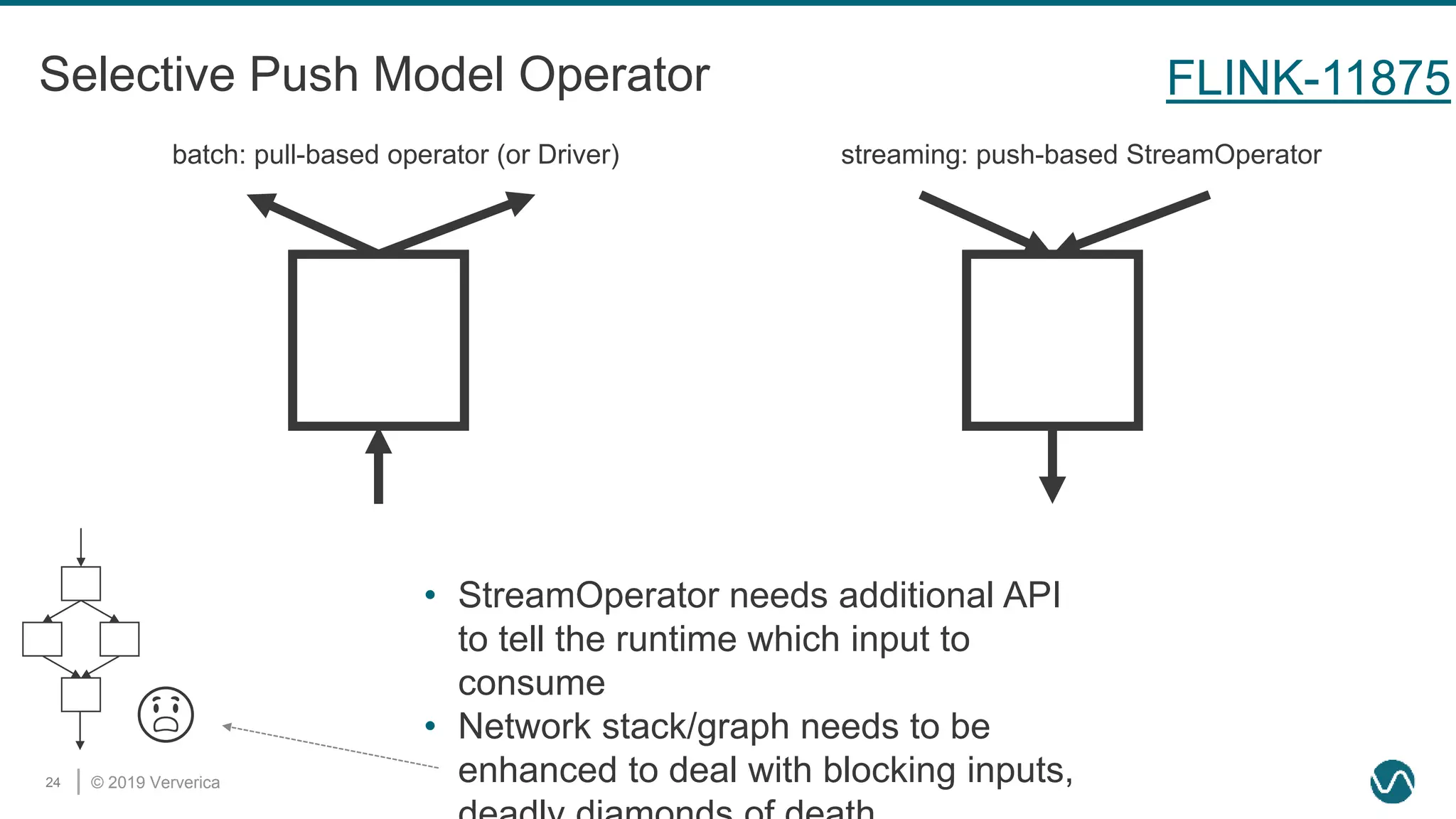

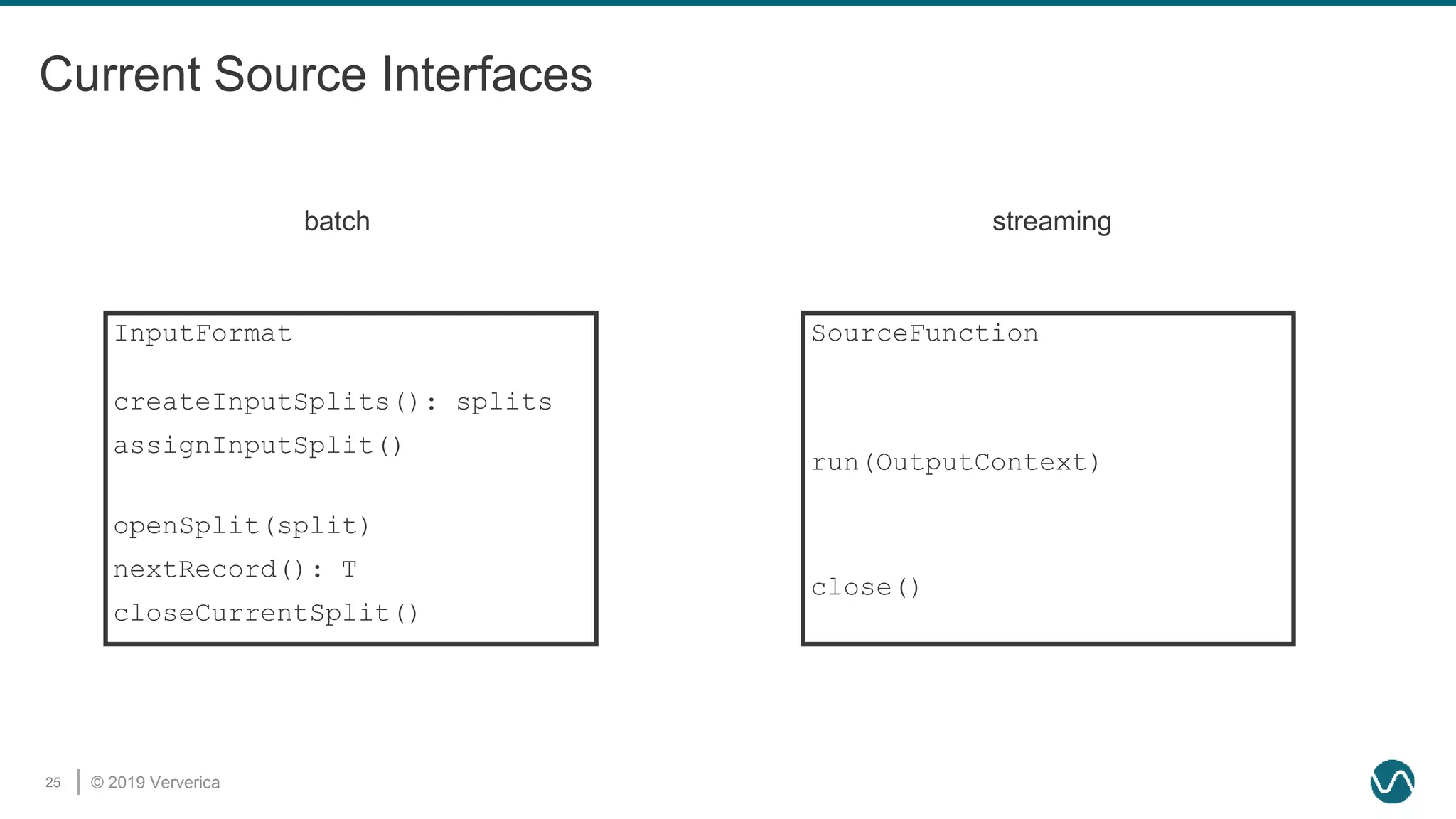

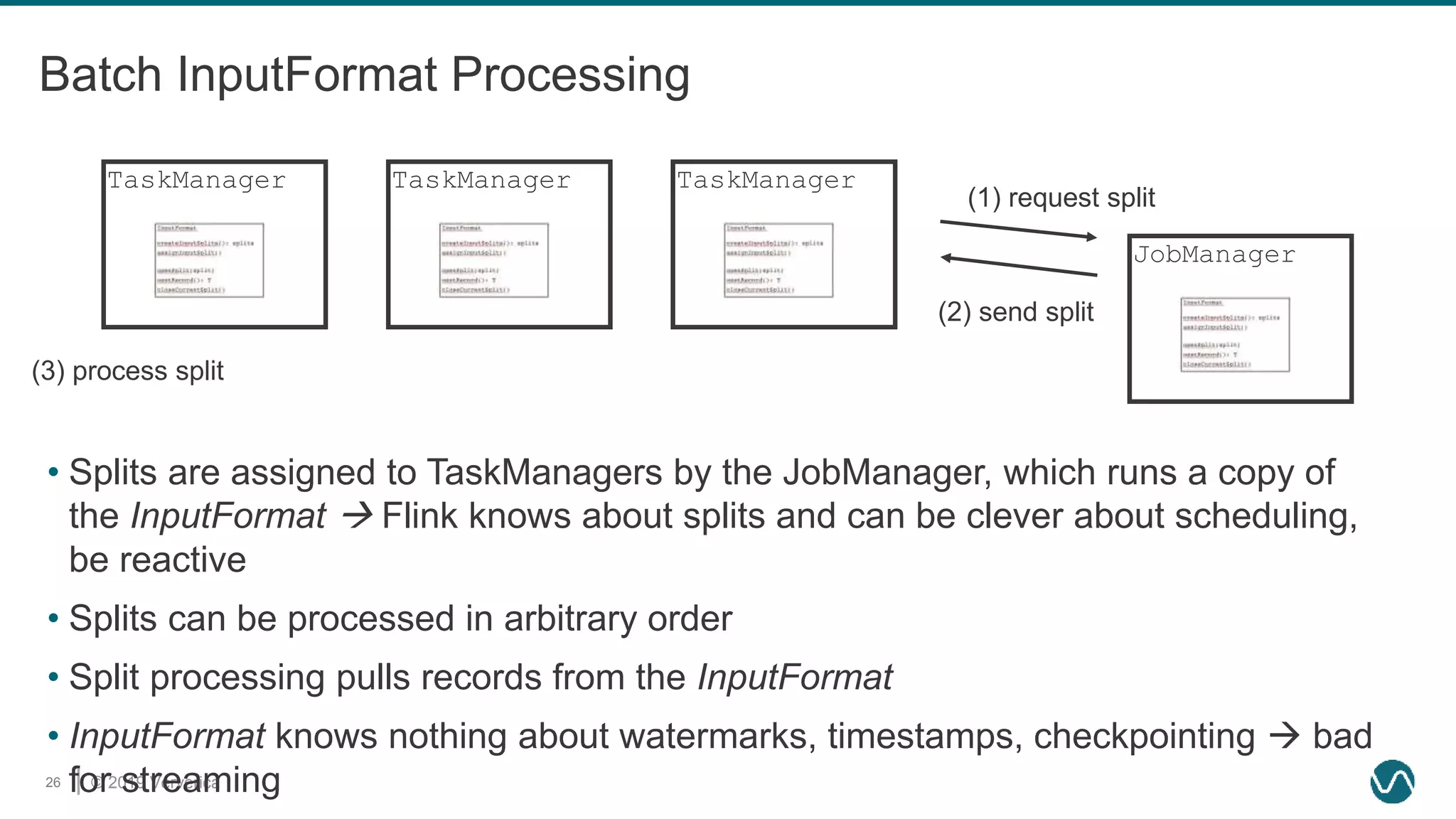

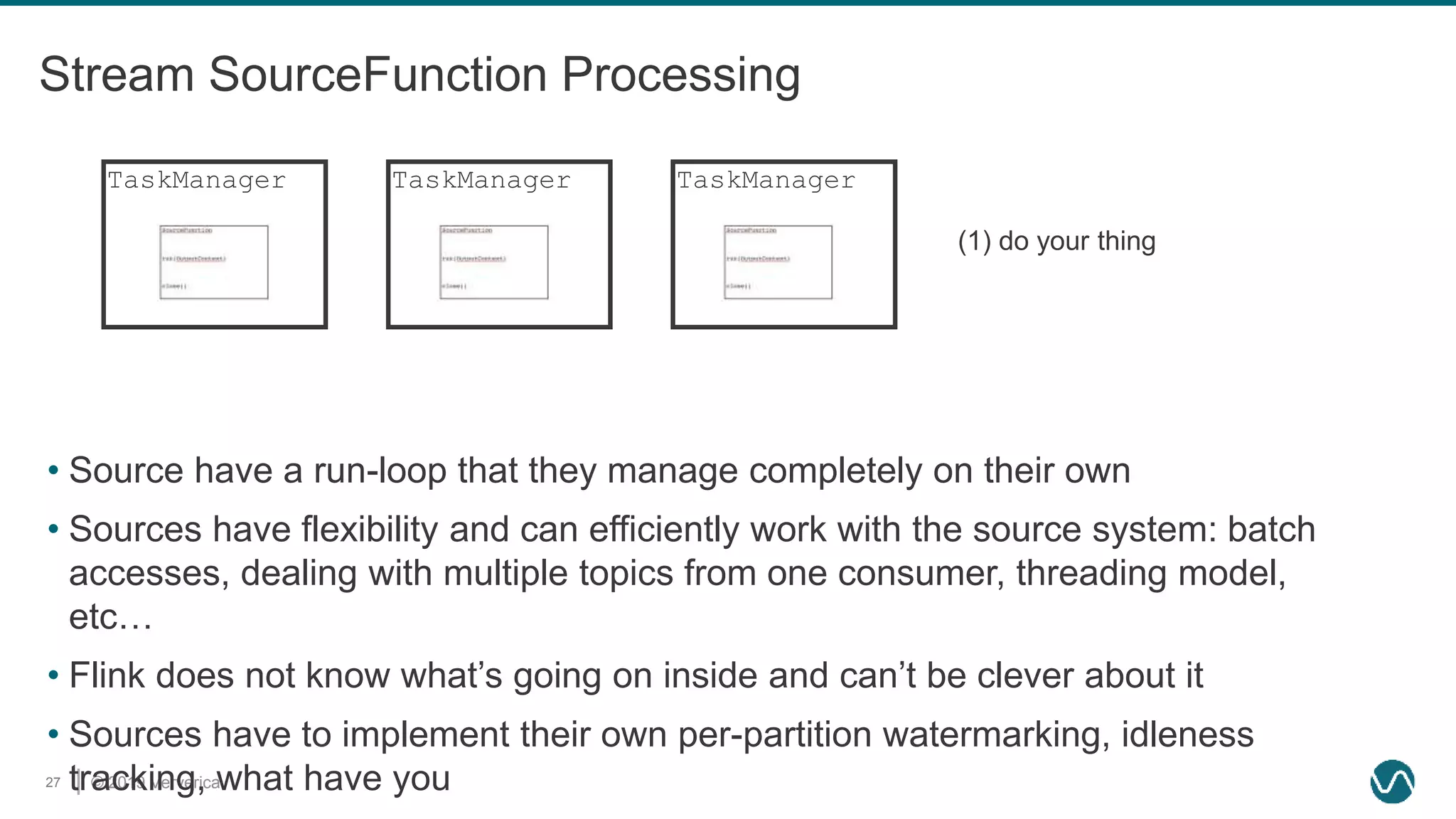

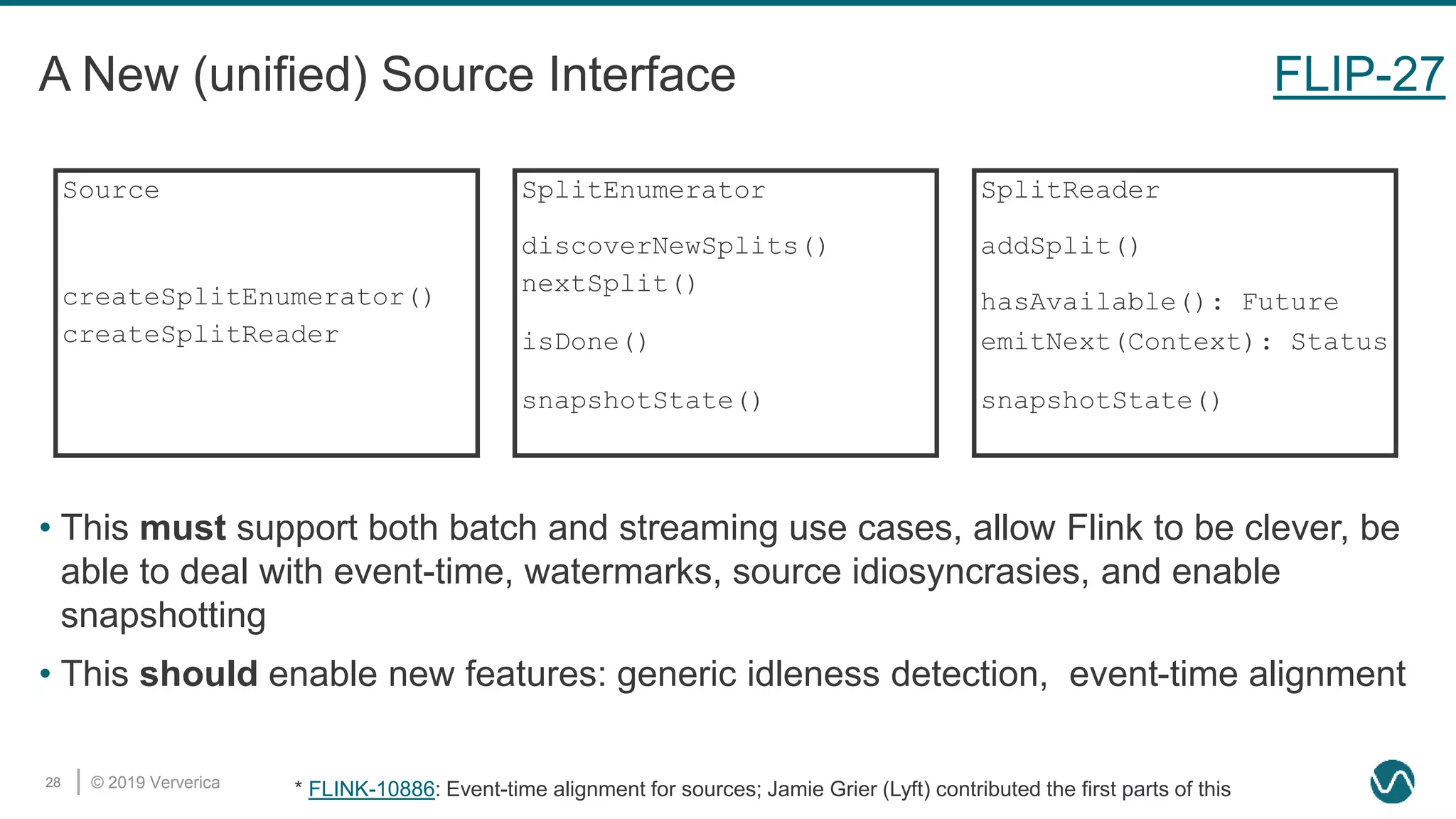

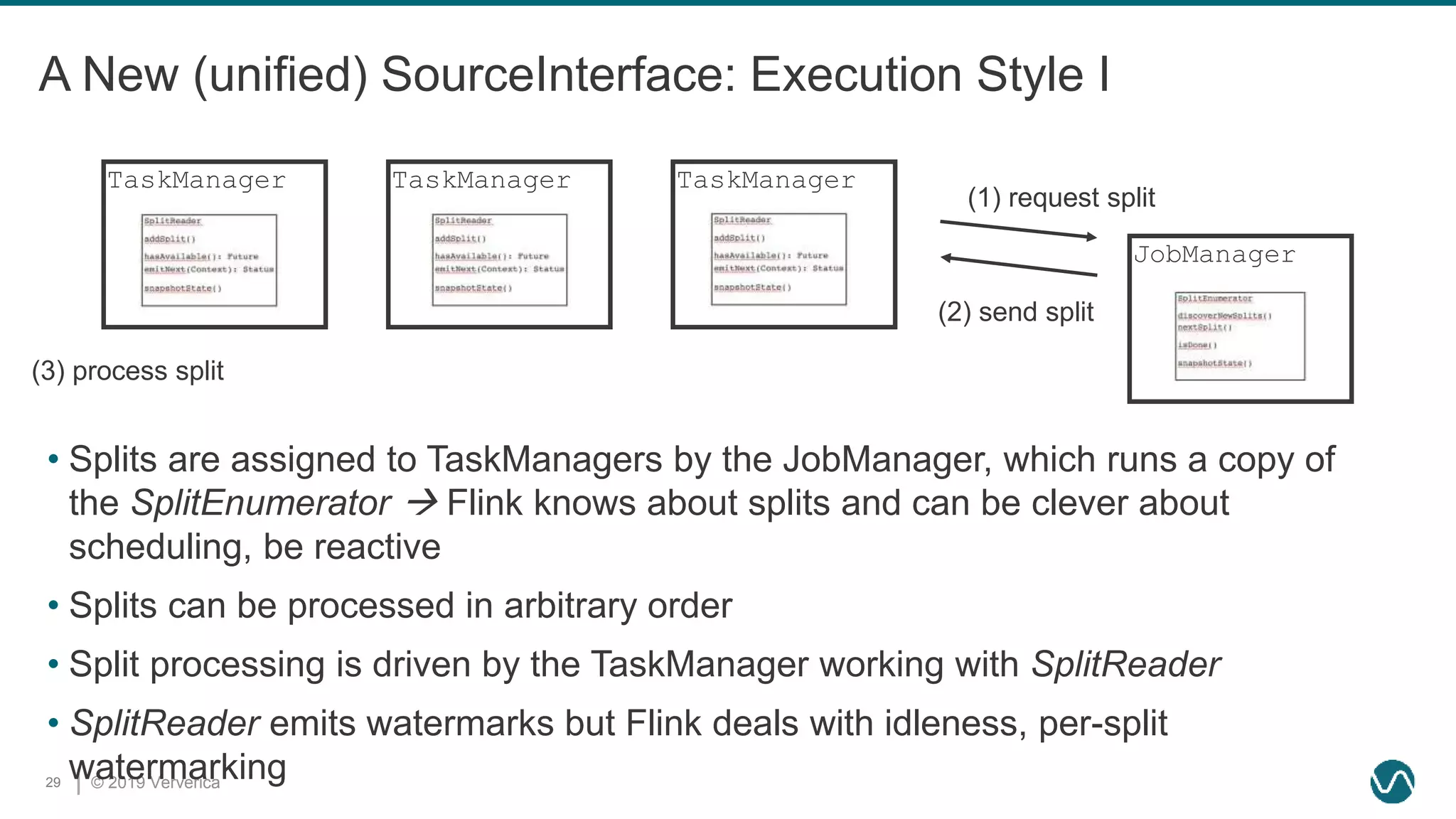

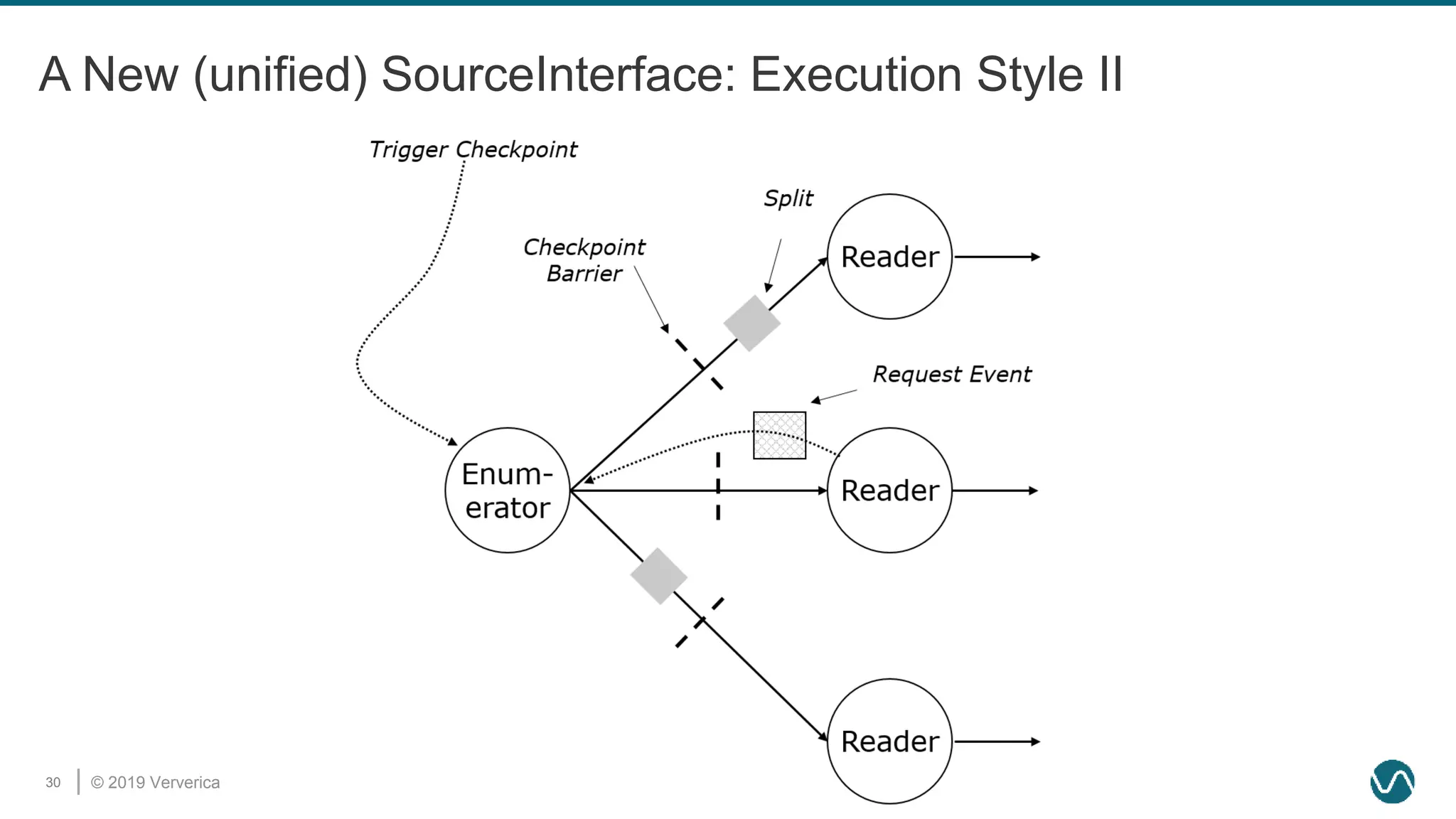

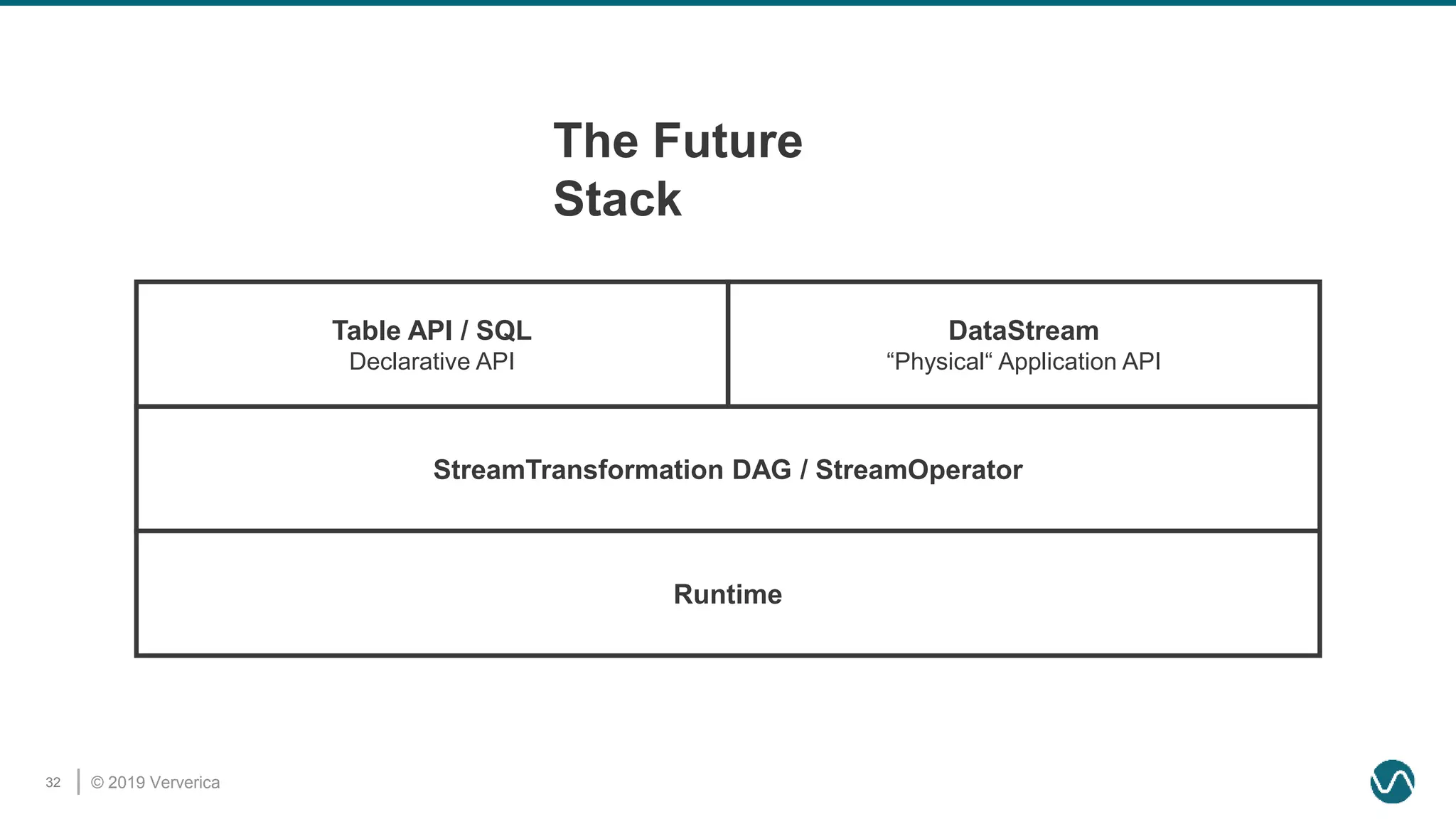

The document discusses rethinking the Apache Flink stack and APIs to unify batch and stream processing. It highlights the challenges of maintaining separate APIs and processing styles, and the benefits of a unified system for efficiency and ease of use. Planned improvements include a new unified source interface and enhancements to existing components so that they better support both processing paradigms.
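
The idea behind unification can be illustrated with a minimal sketch in plain Python. It does not use Flink's API: `bounded_source`, `unbounded_source`, `running_counts`, and `batch_result` are hypothetical names chosen for illustration. The assumption is that a batch input can be treated as a stream that happens to end, so one operator serves both styles.

```python
from collections import Counter
from itertools import count, islice
from typing import Dict, Iterable, Iterator


def bounded_source() -> Iterator[str]:
    """Hypothetical bounded input: a finite collection, as in a batch job."""
    yield from ["a", "b", "a", "c", "a"]


def unbounded_source() -> Iterator[str]:
    """Hypothetical unbounded input: never ends, as in a streaming job."""
    for i in count():
        yield "abc"[i % 3]


def running_counts(records: Iterable[str]) -> Iterator[Dict[str, int]]:
    """One operator for both cases: emit updated counts after each record."""
    counts: Counter = Counter()
    for record in records:
        counts[record] += 1
        yield dict(counts)


def batch_result(records: Iterable[str]) -> Dict[str, int]:
    """Batch view: only the final state matters once the input has ended."""
    final: Dict[str, int] = {}
    for final in running_counts(records):
        pass
    return final


# Batch style: consume the whole bounded input, keep the final counts.
batch_counts = batch_result(bounded_source())

# Streaming style: the input never ends, so observe incremental results.
stream_prefix = list(islice(running_counts(unbounded_source()), 4))
```

In this sketch the operator logic is written once. Only the way results are consumed differs: a final answer for bounded input, incremental updates for unbounded input.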