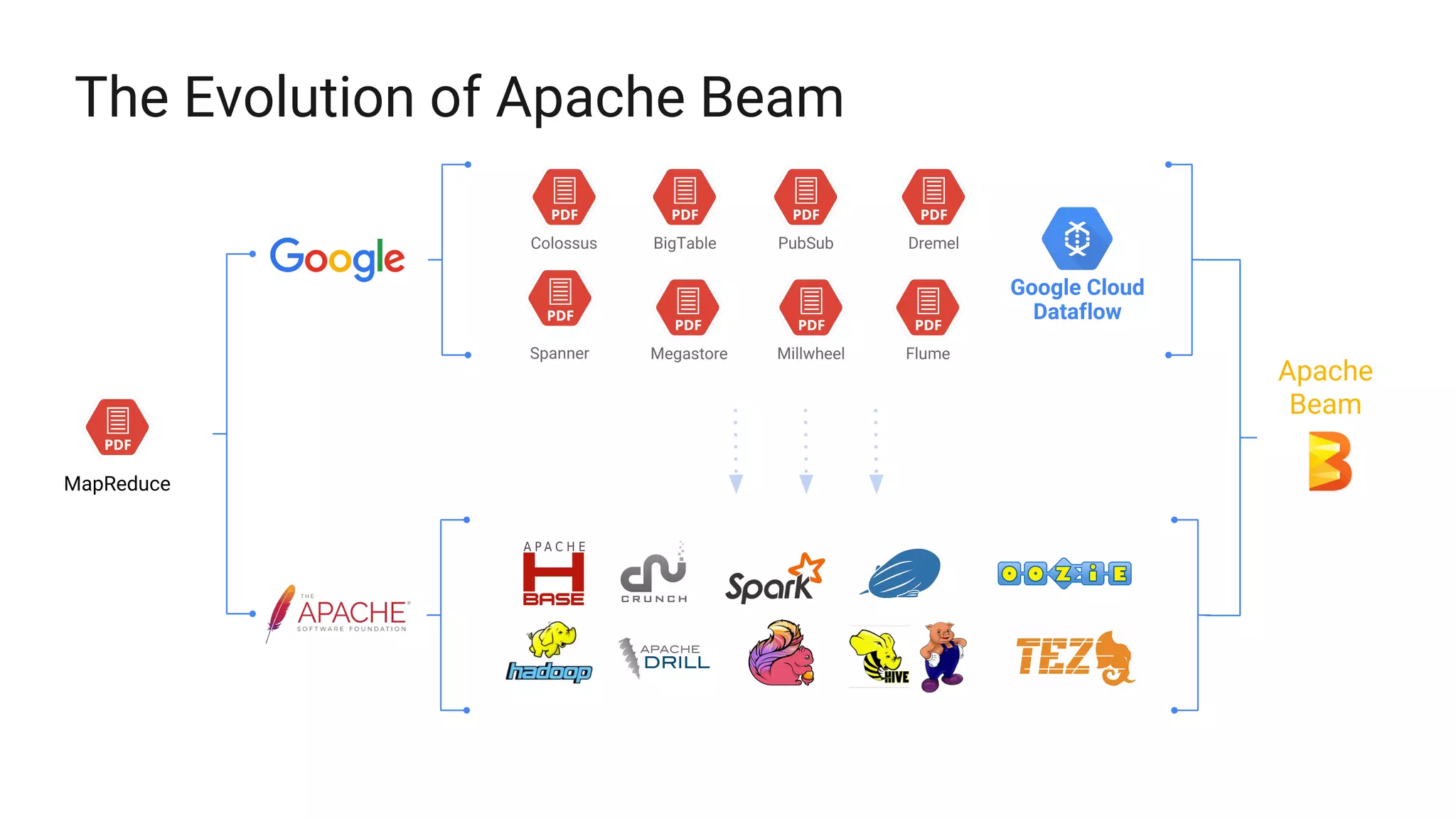

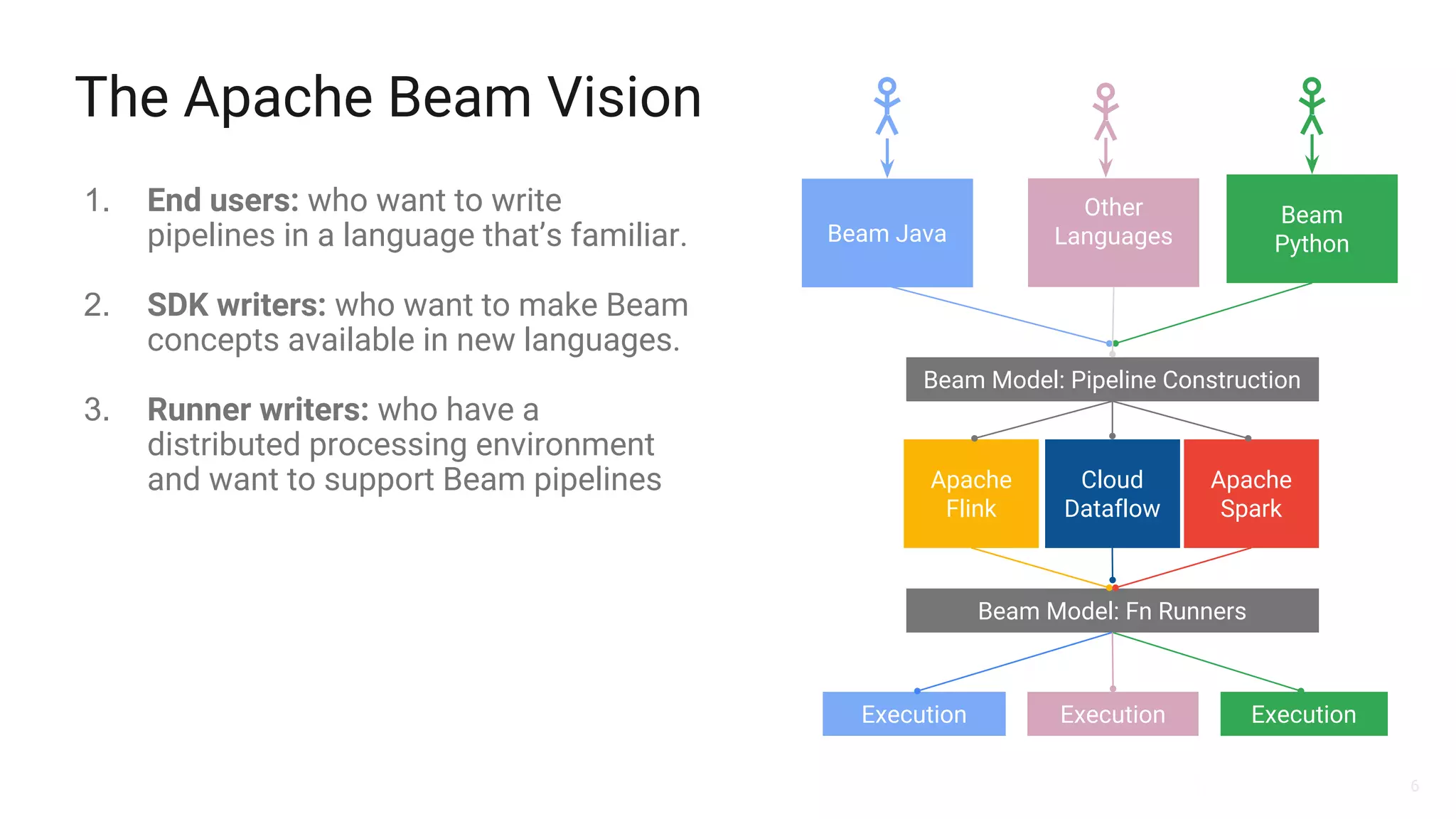

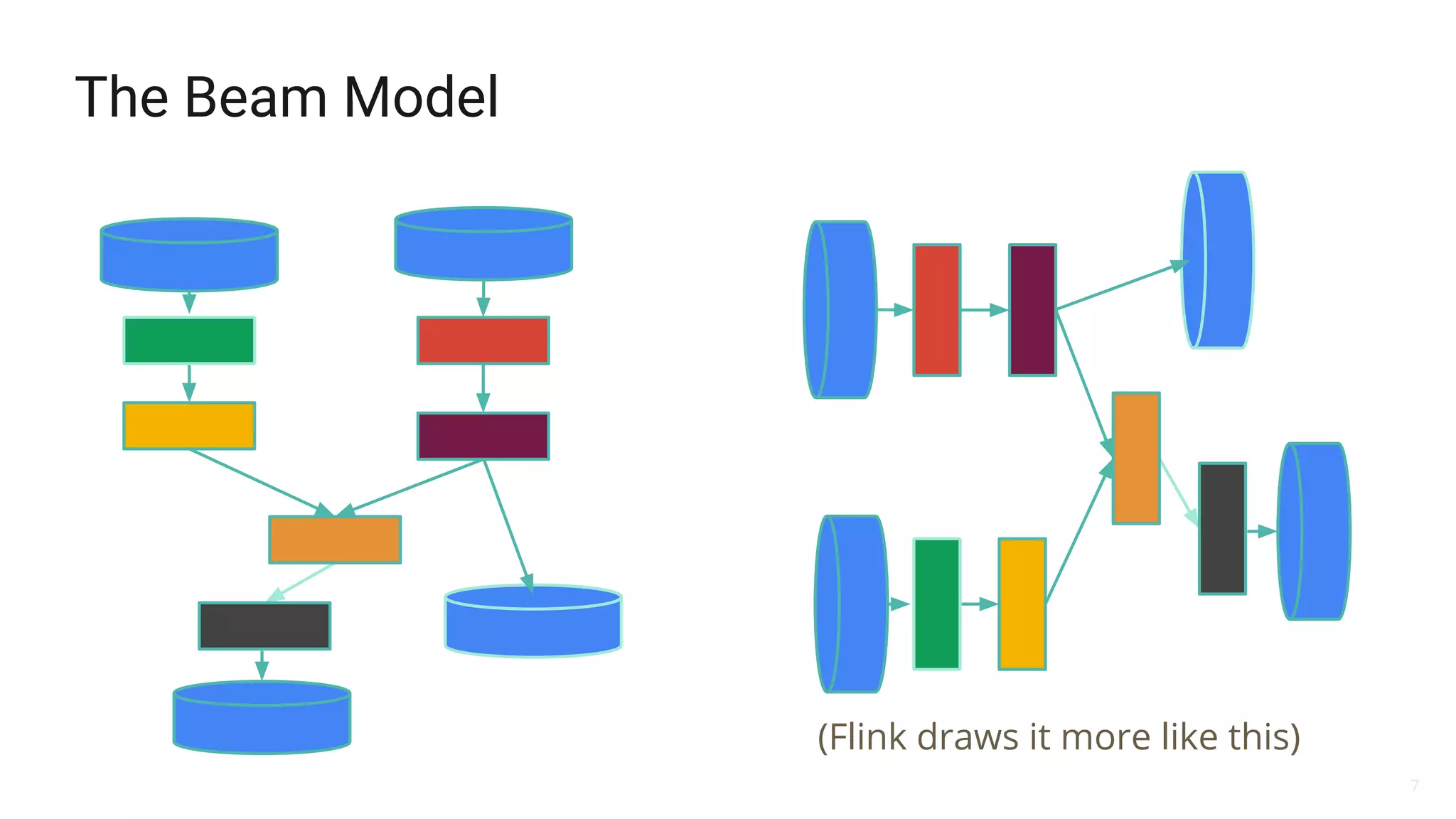

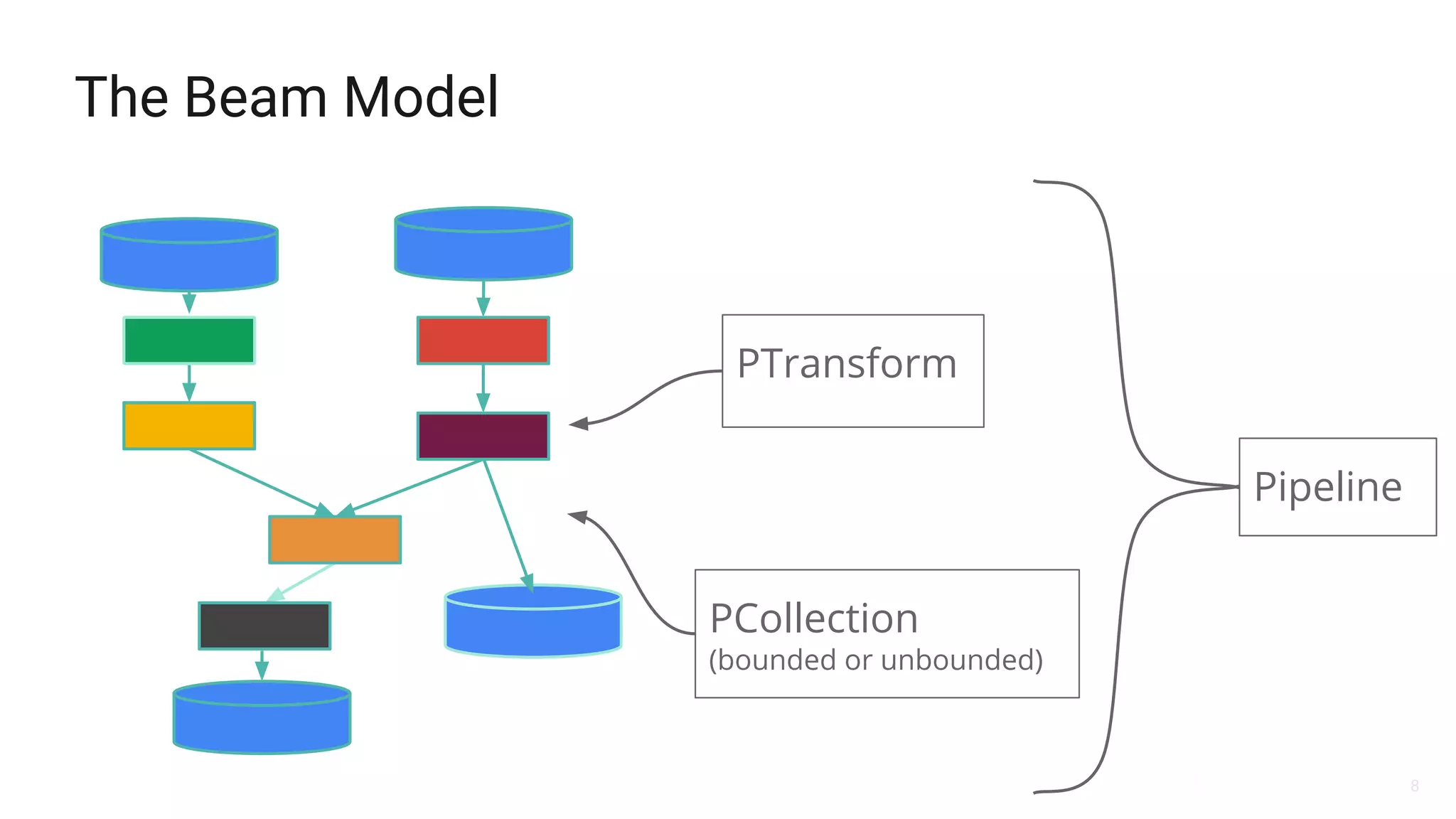

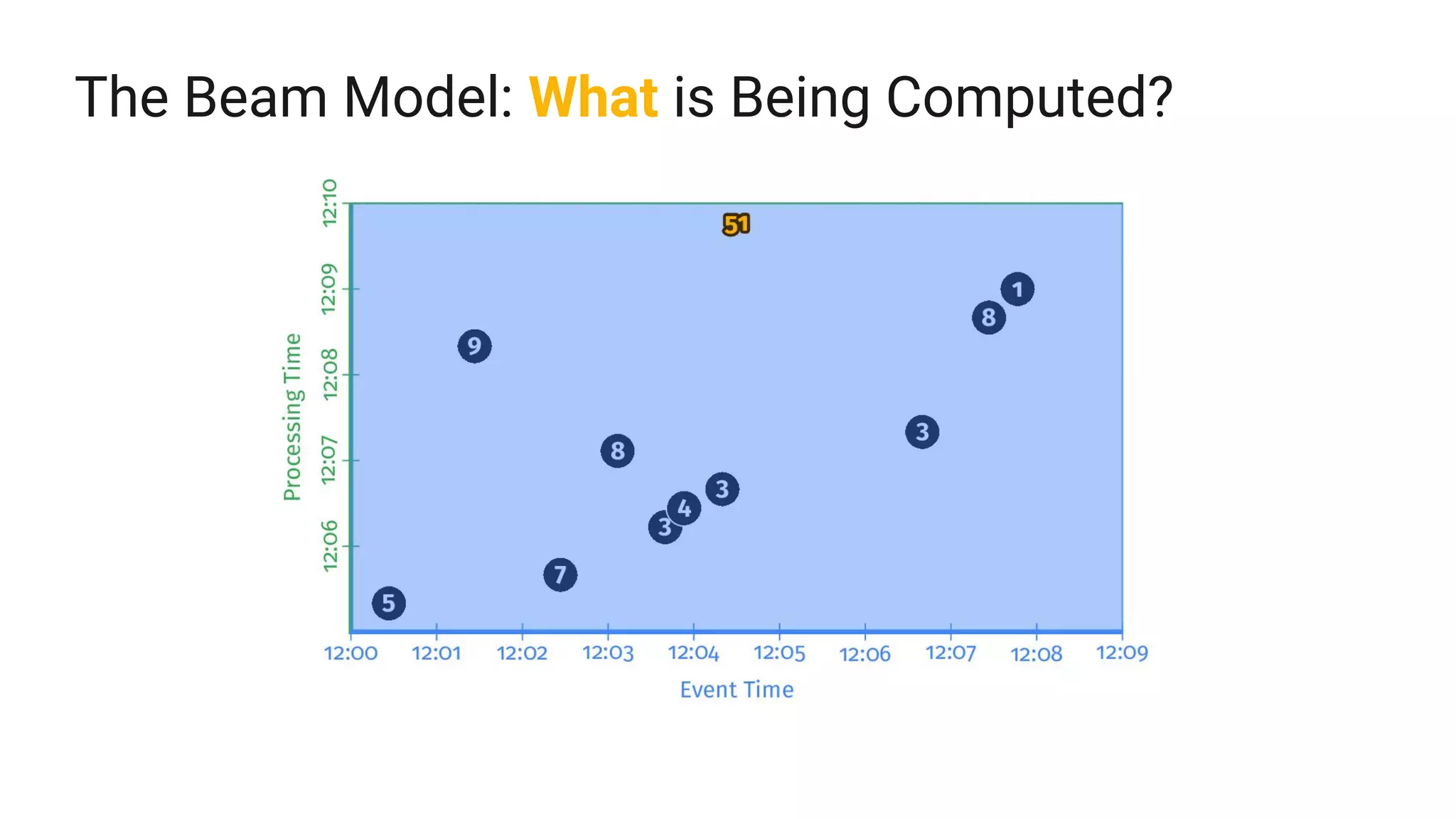

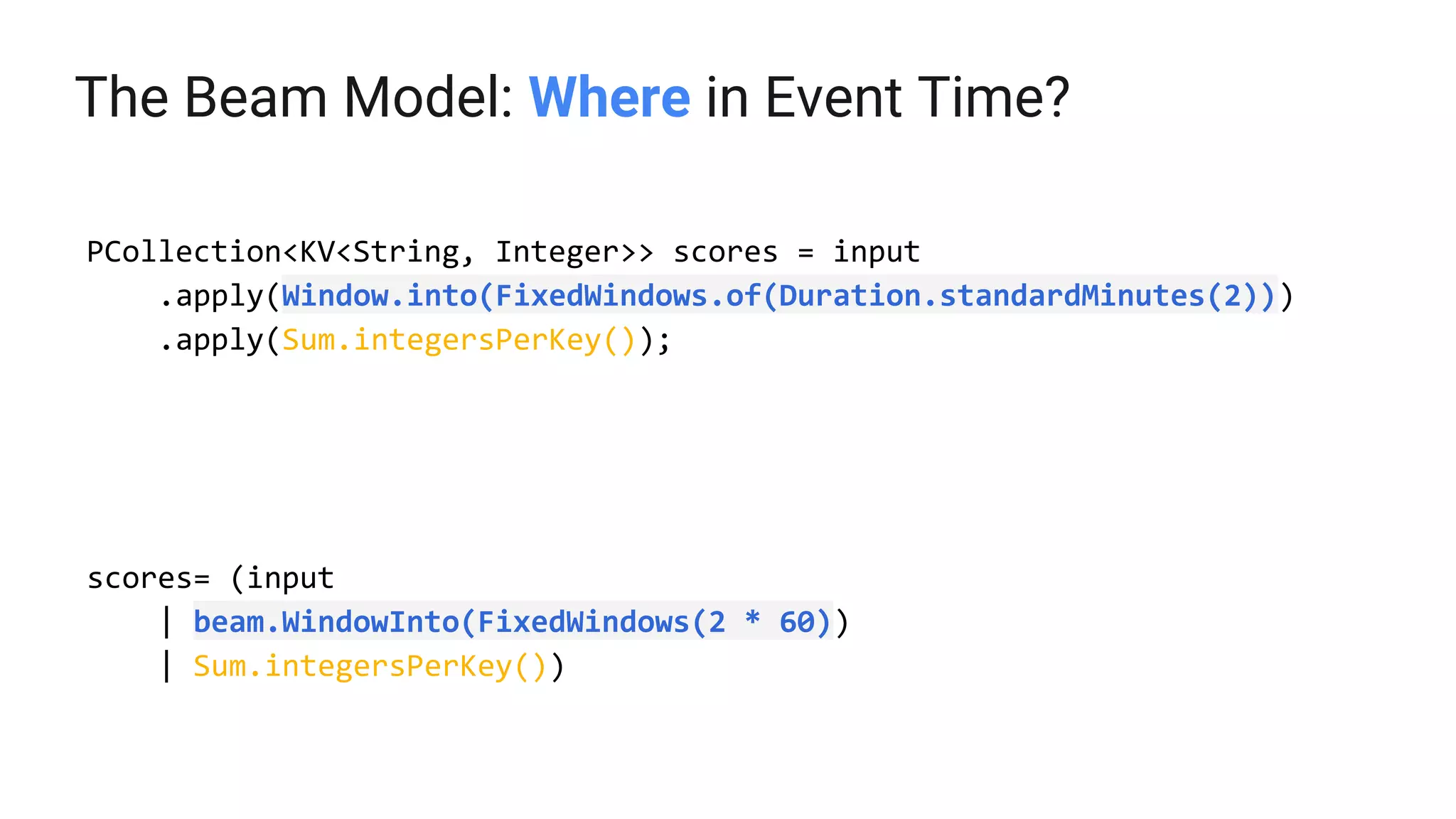

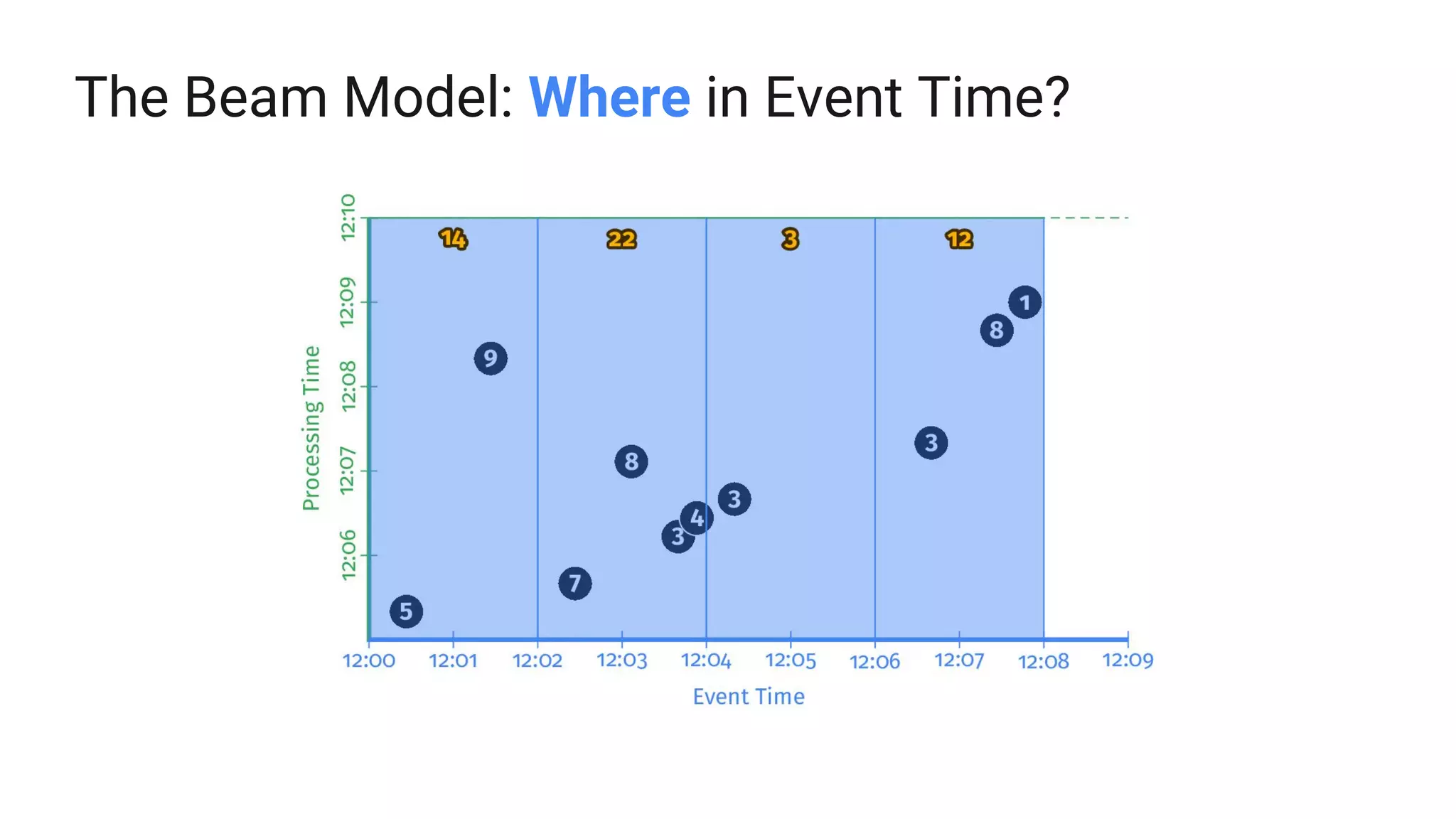

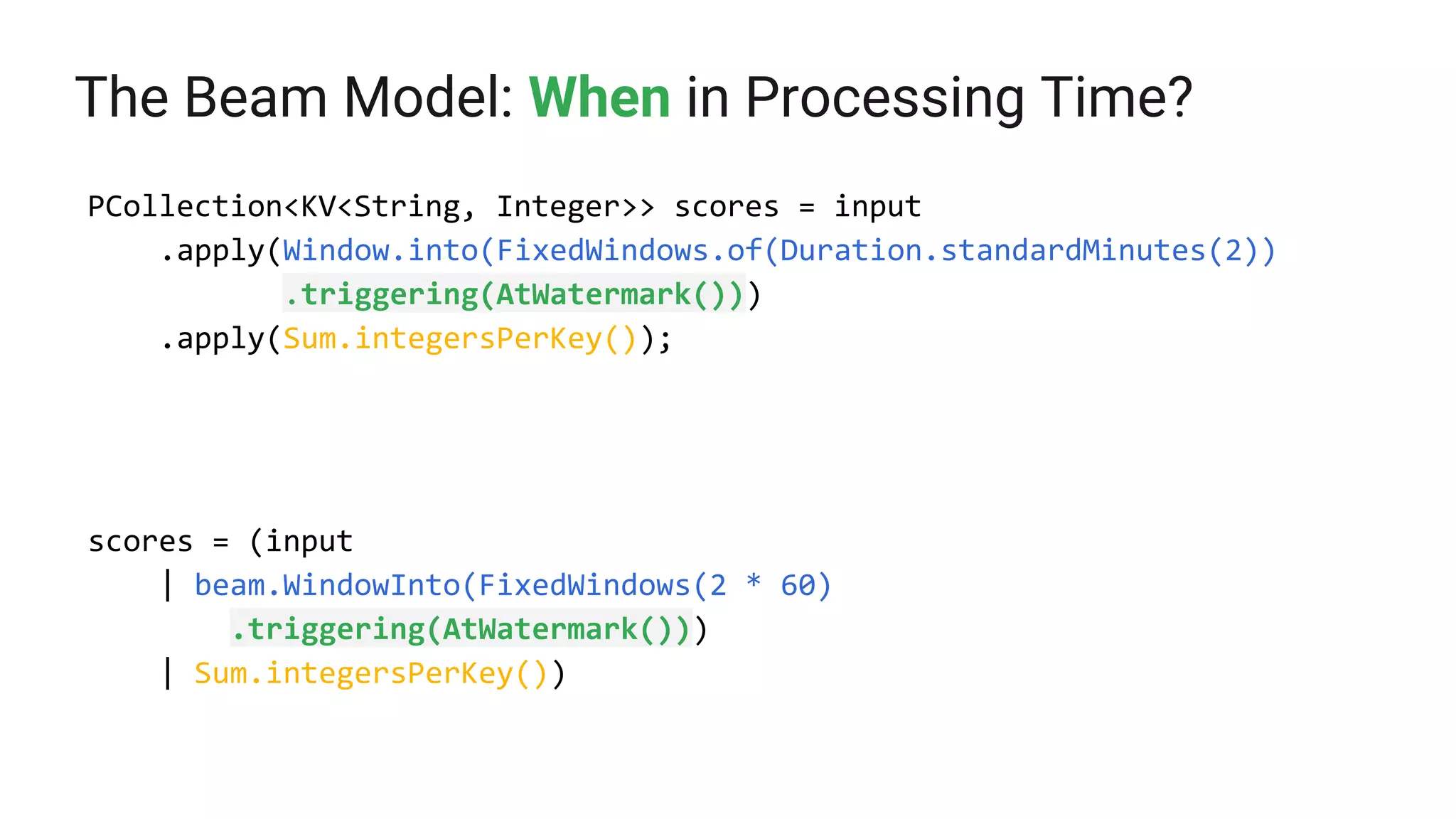

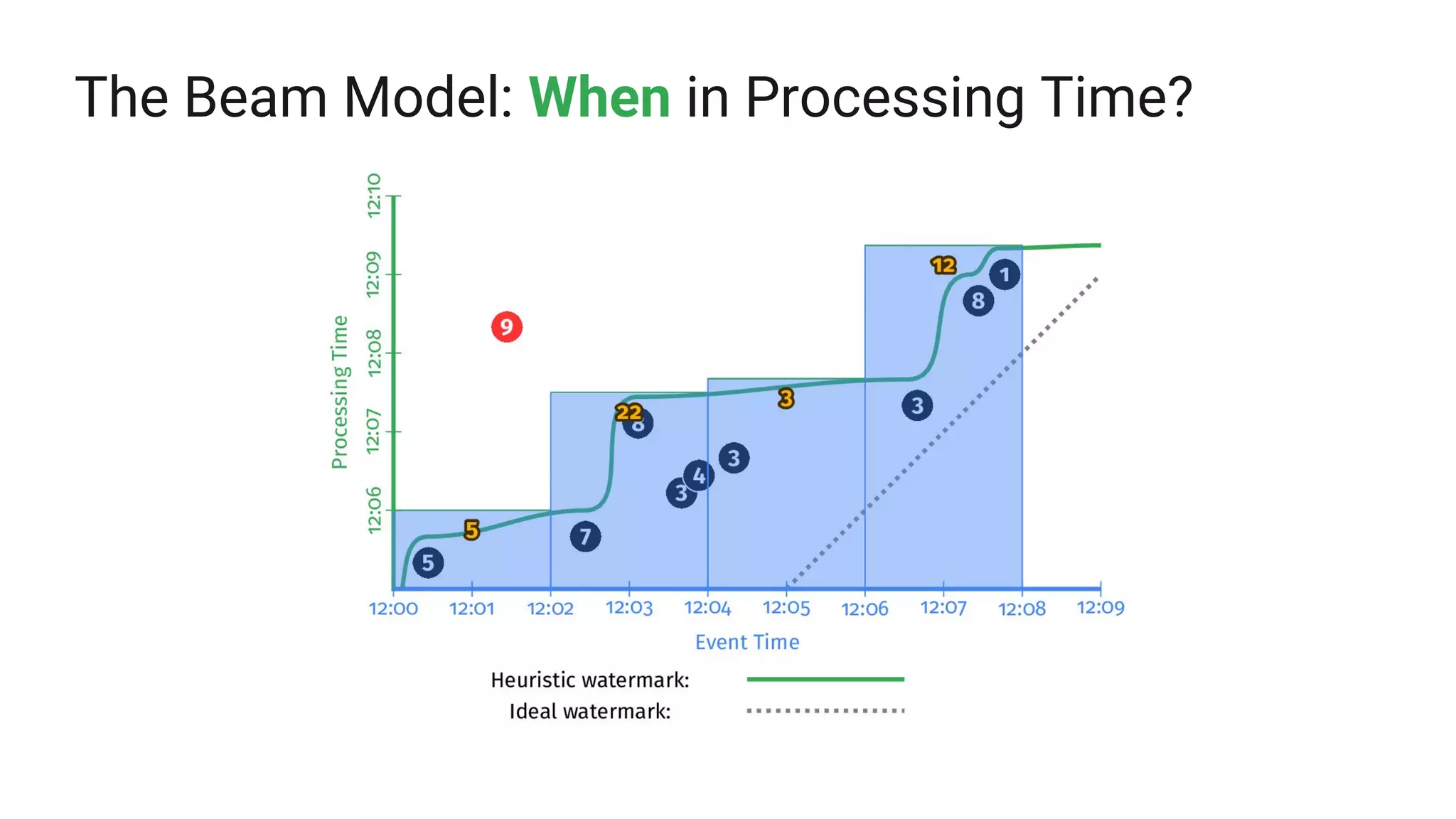

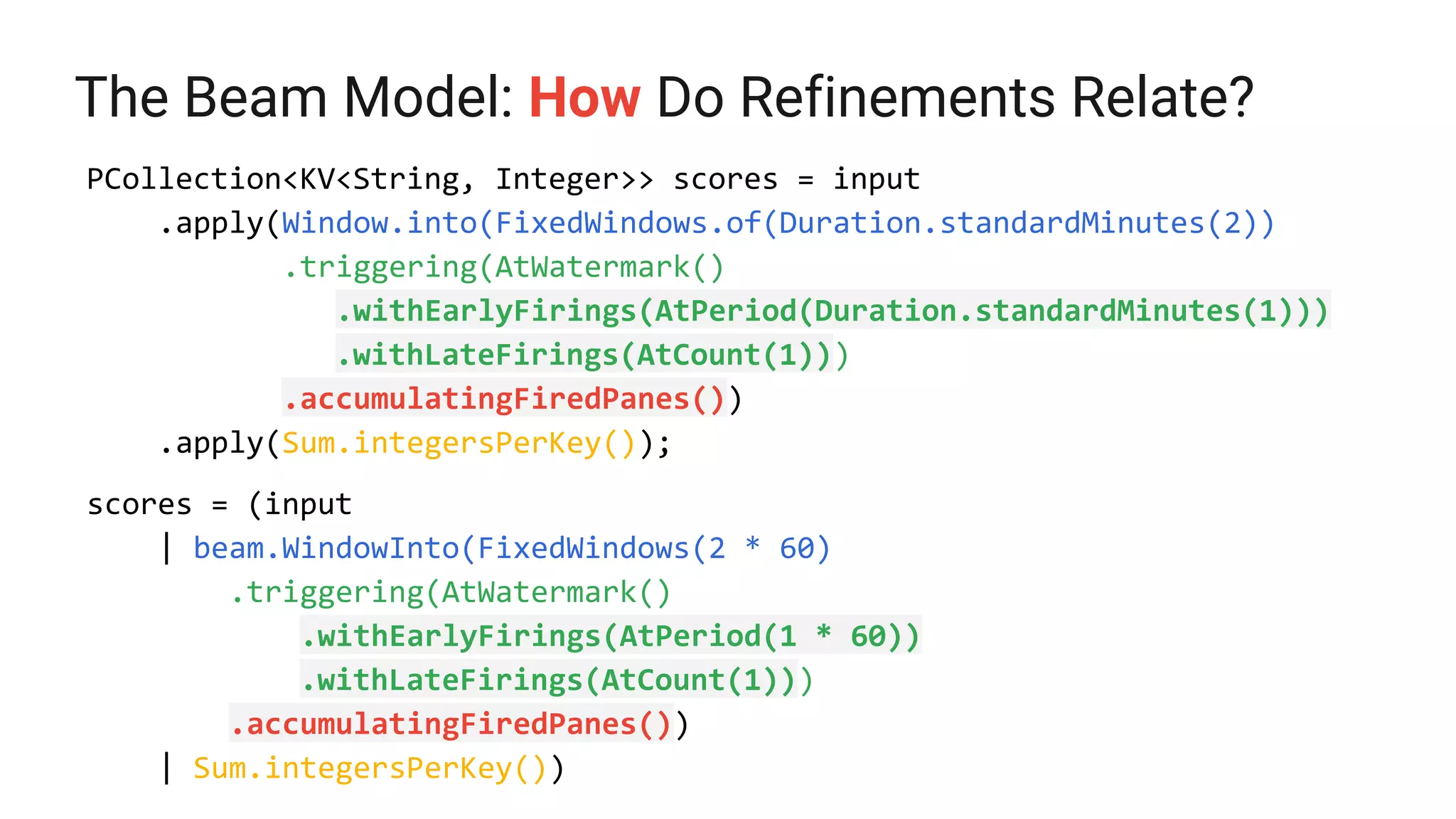

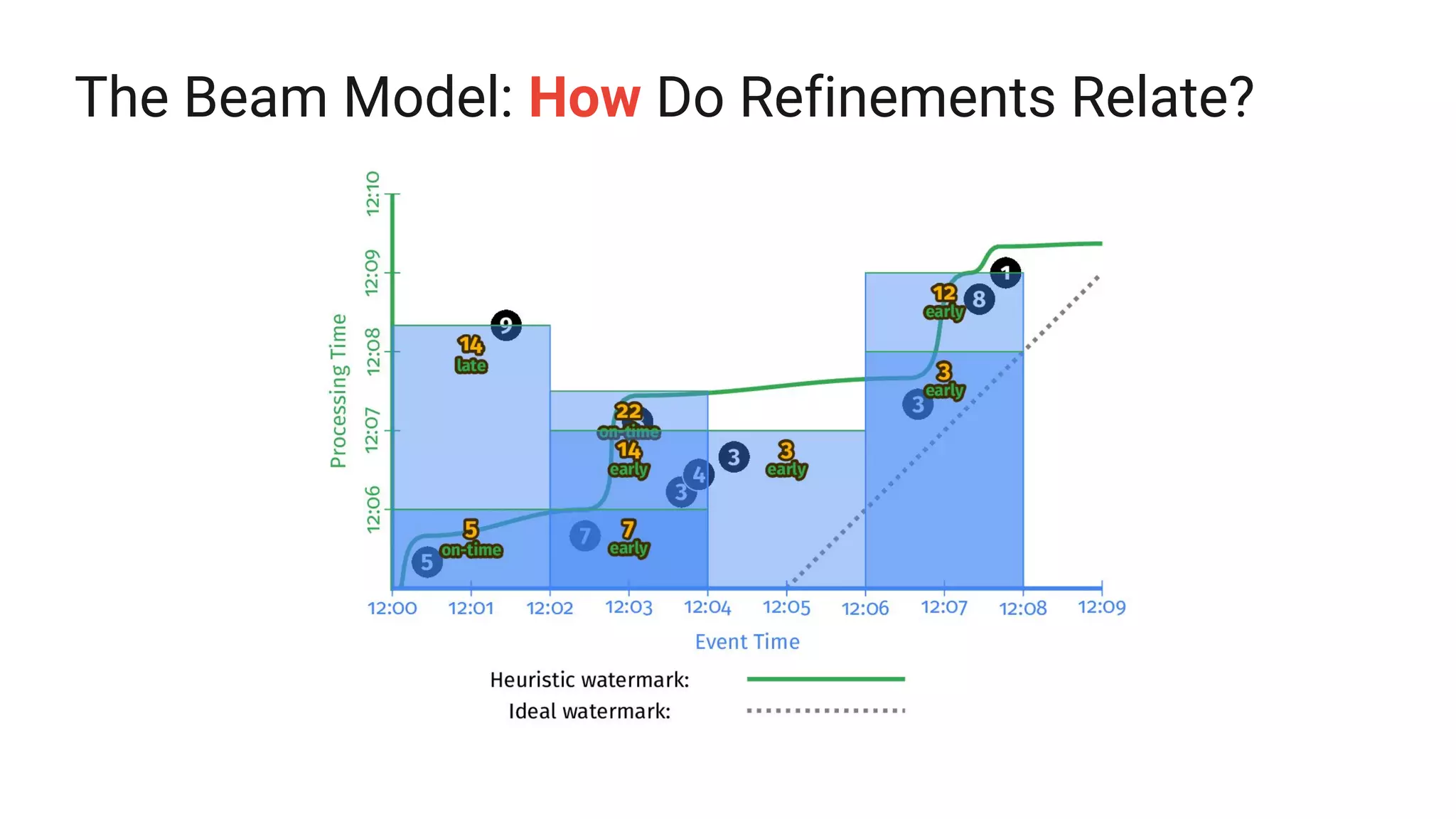

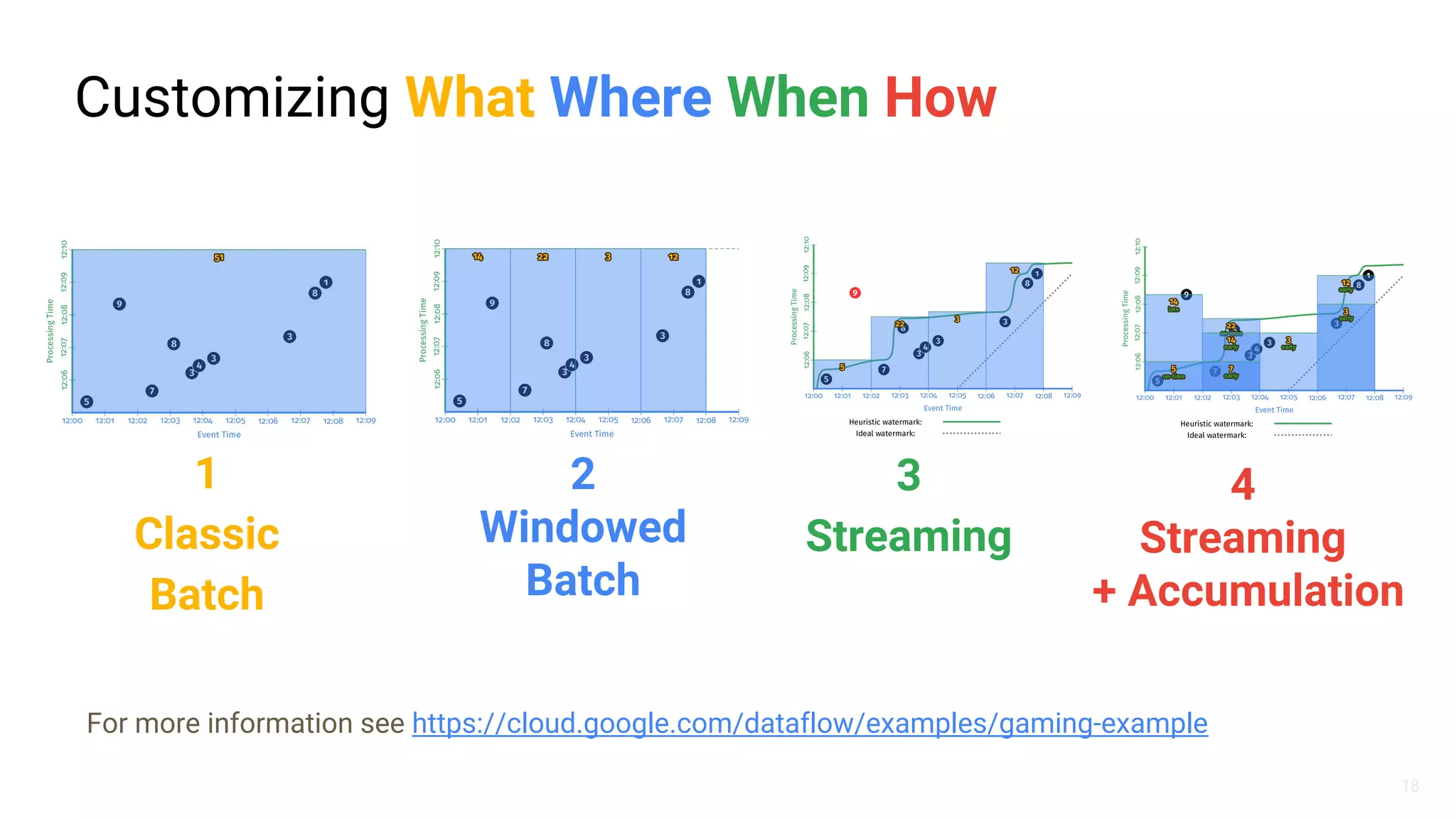

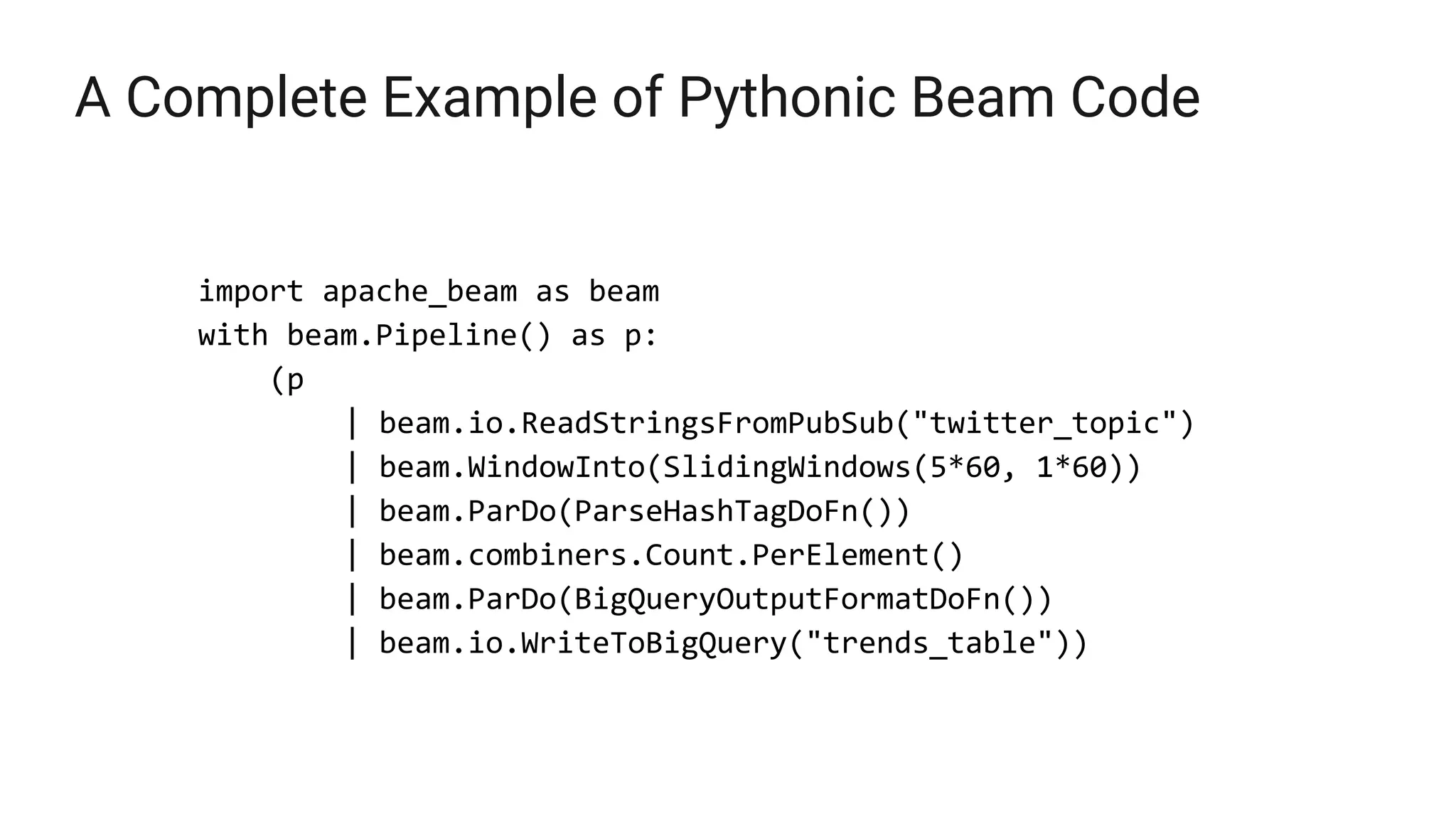

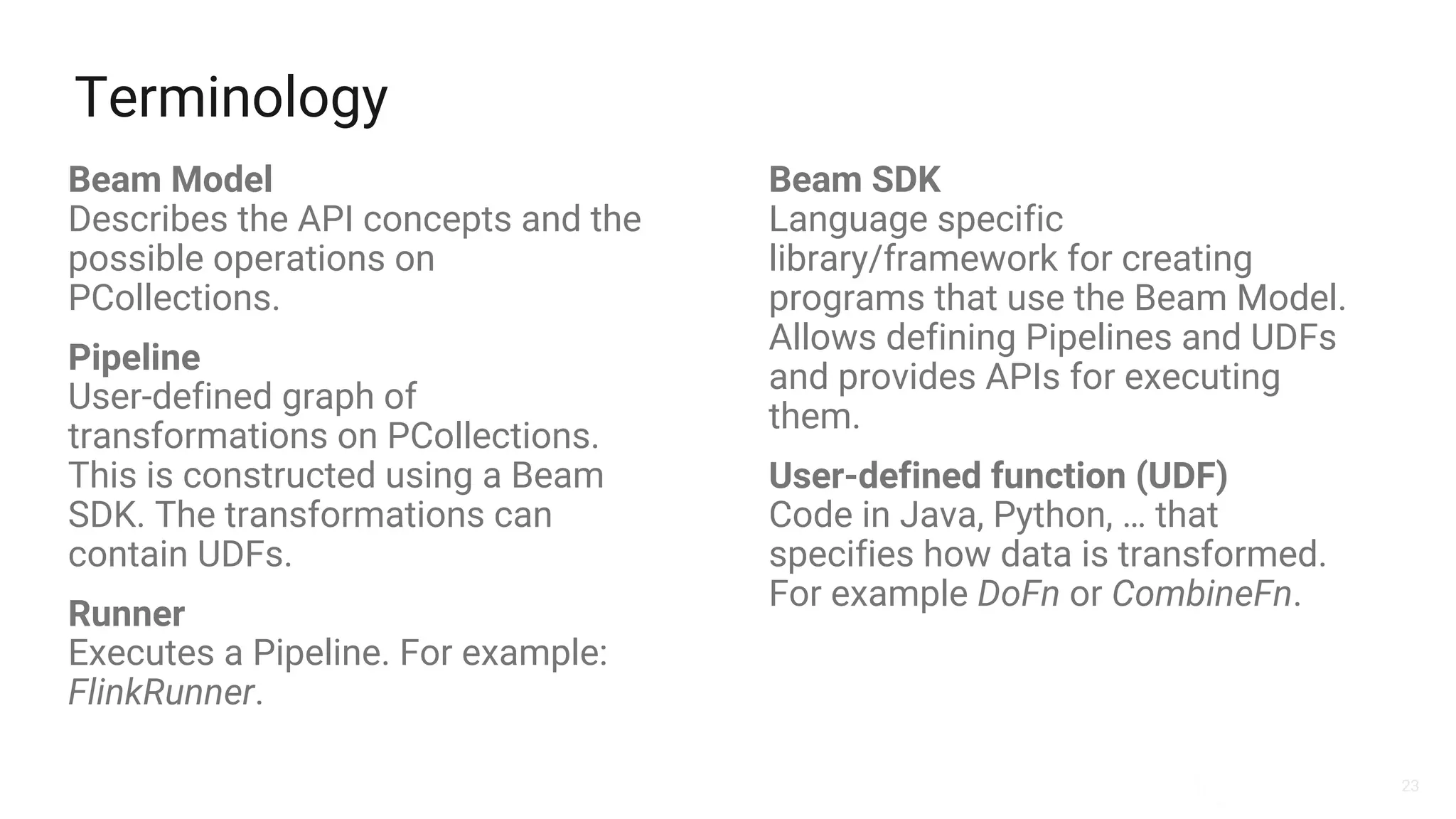

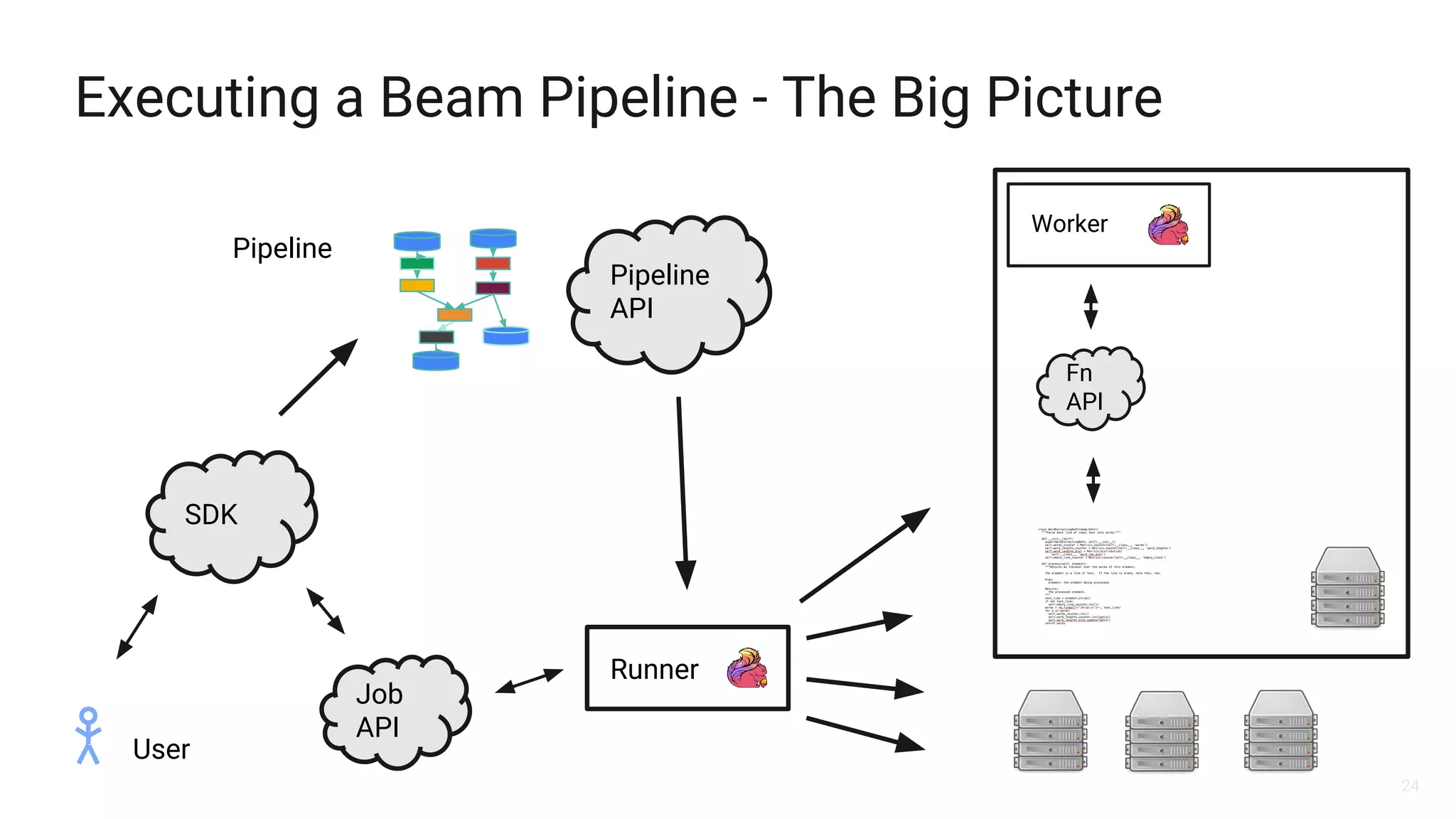

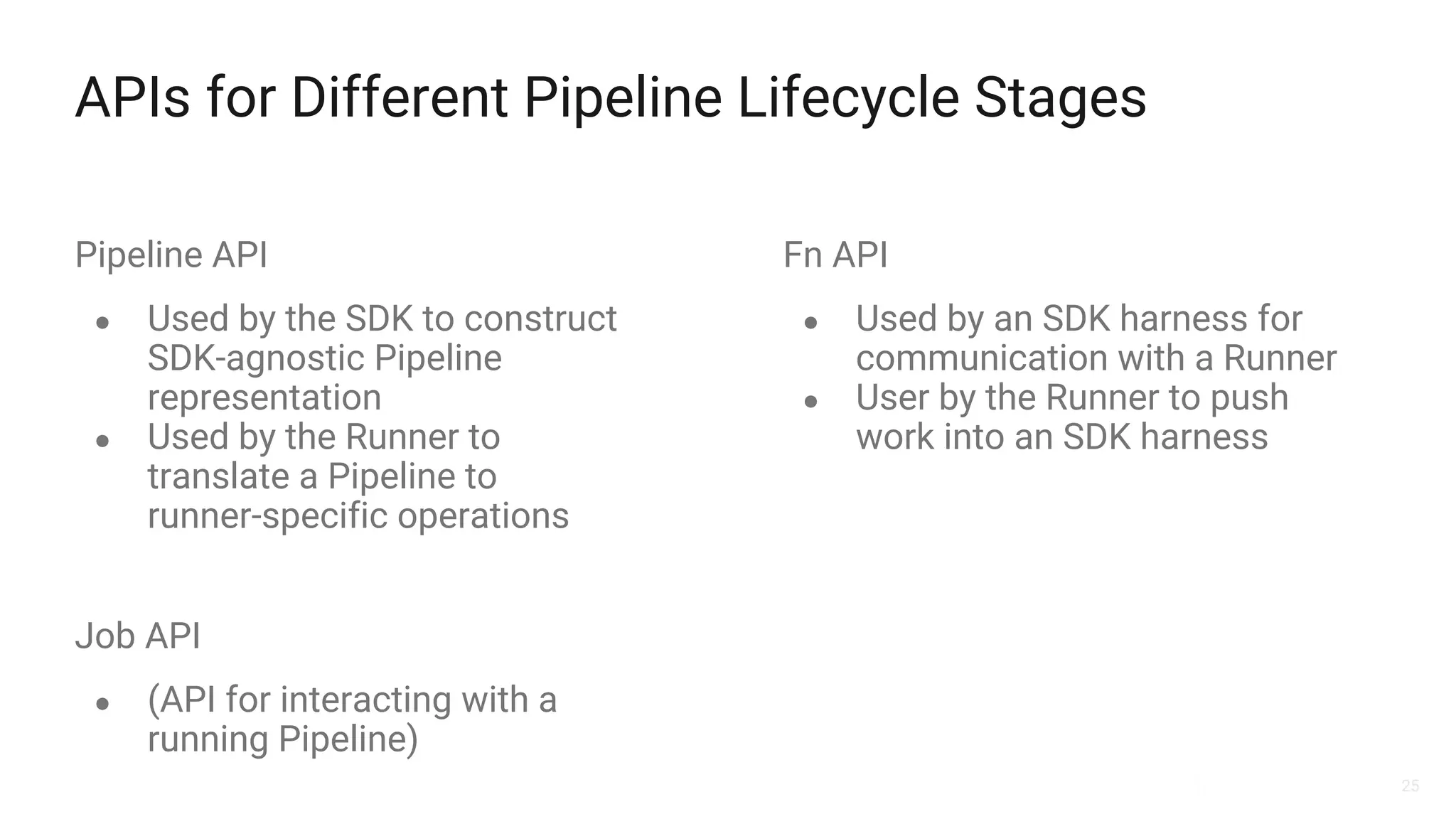

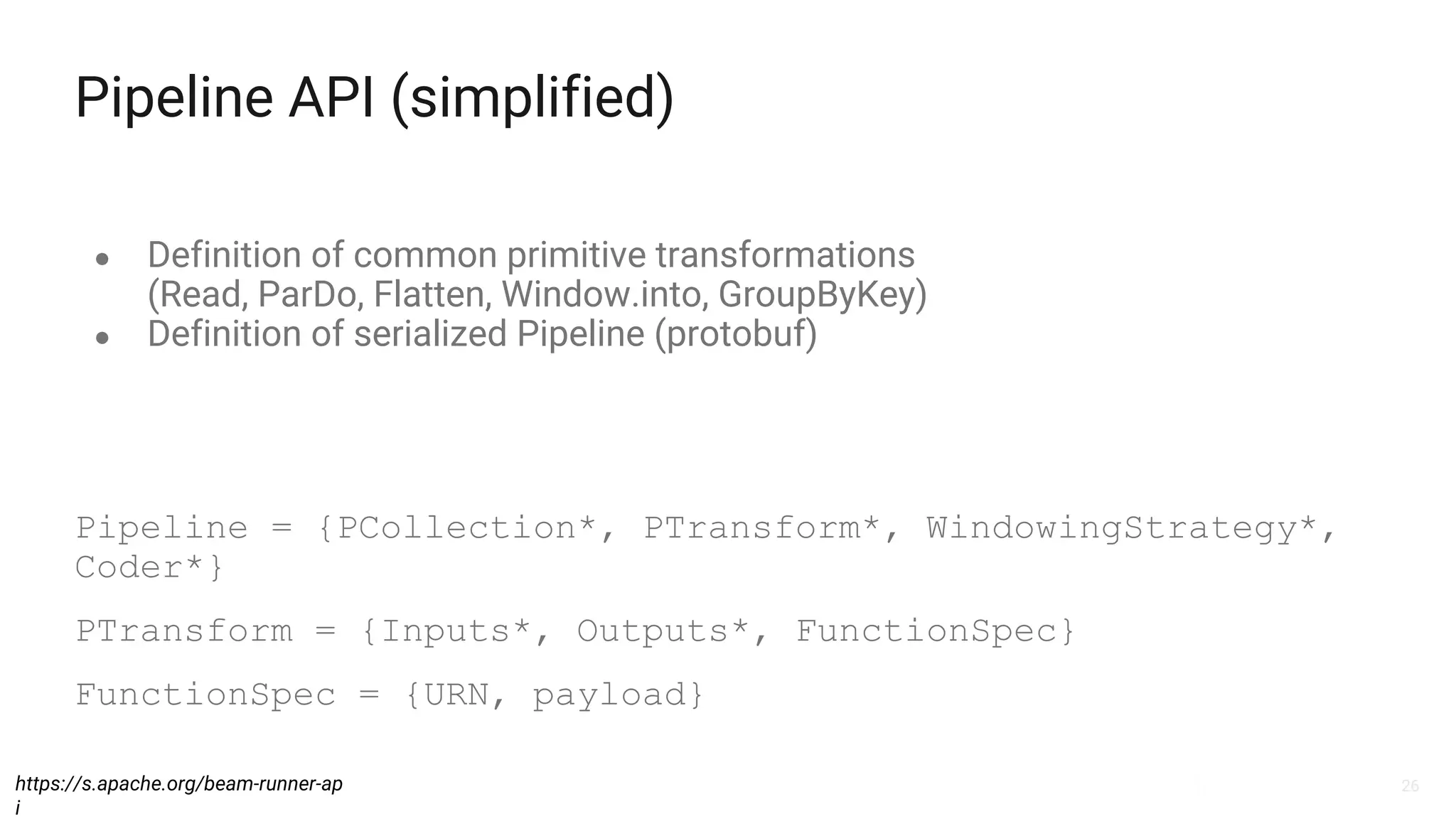

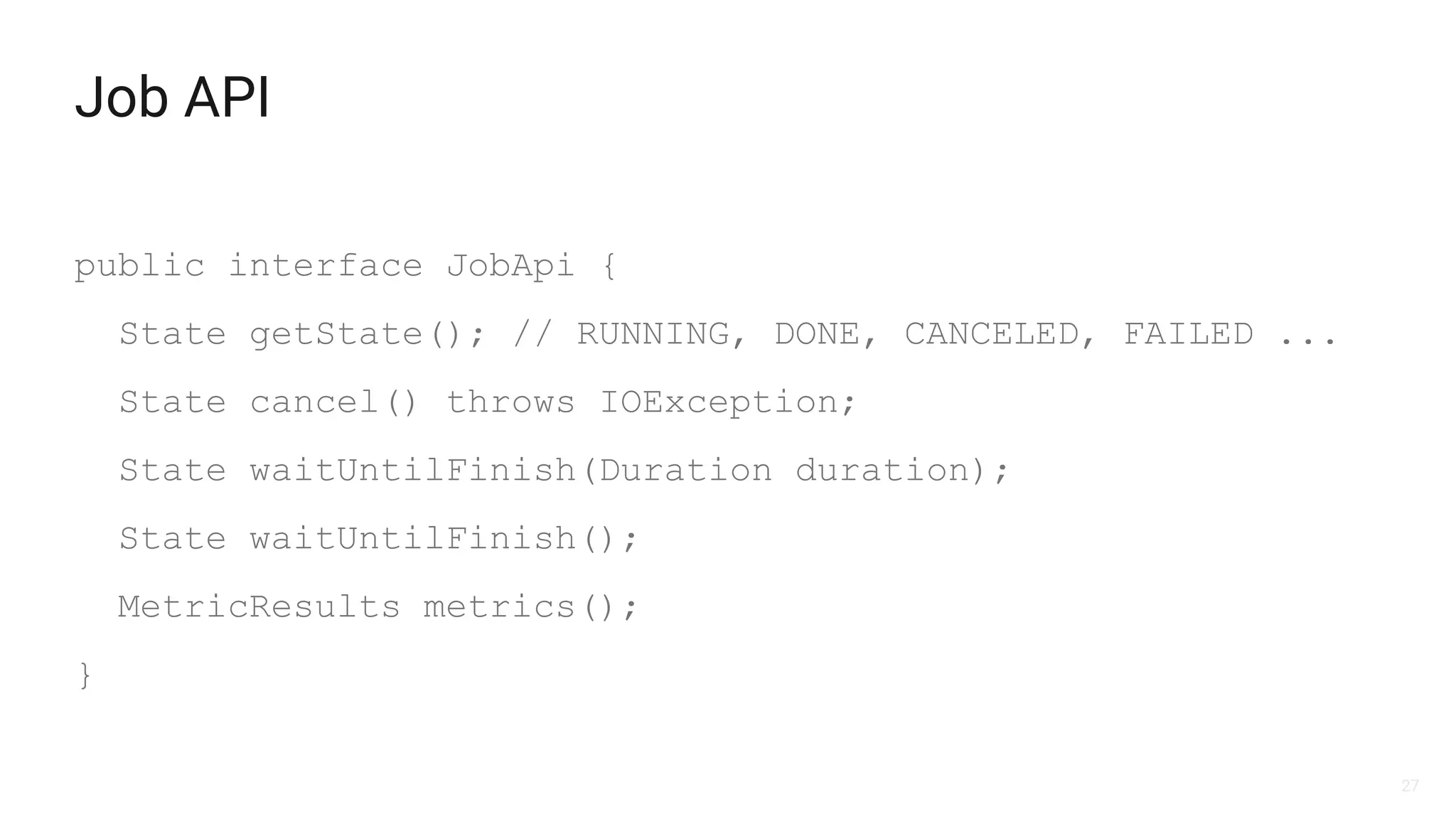

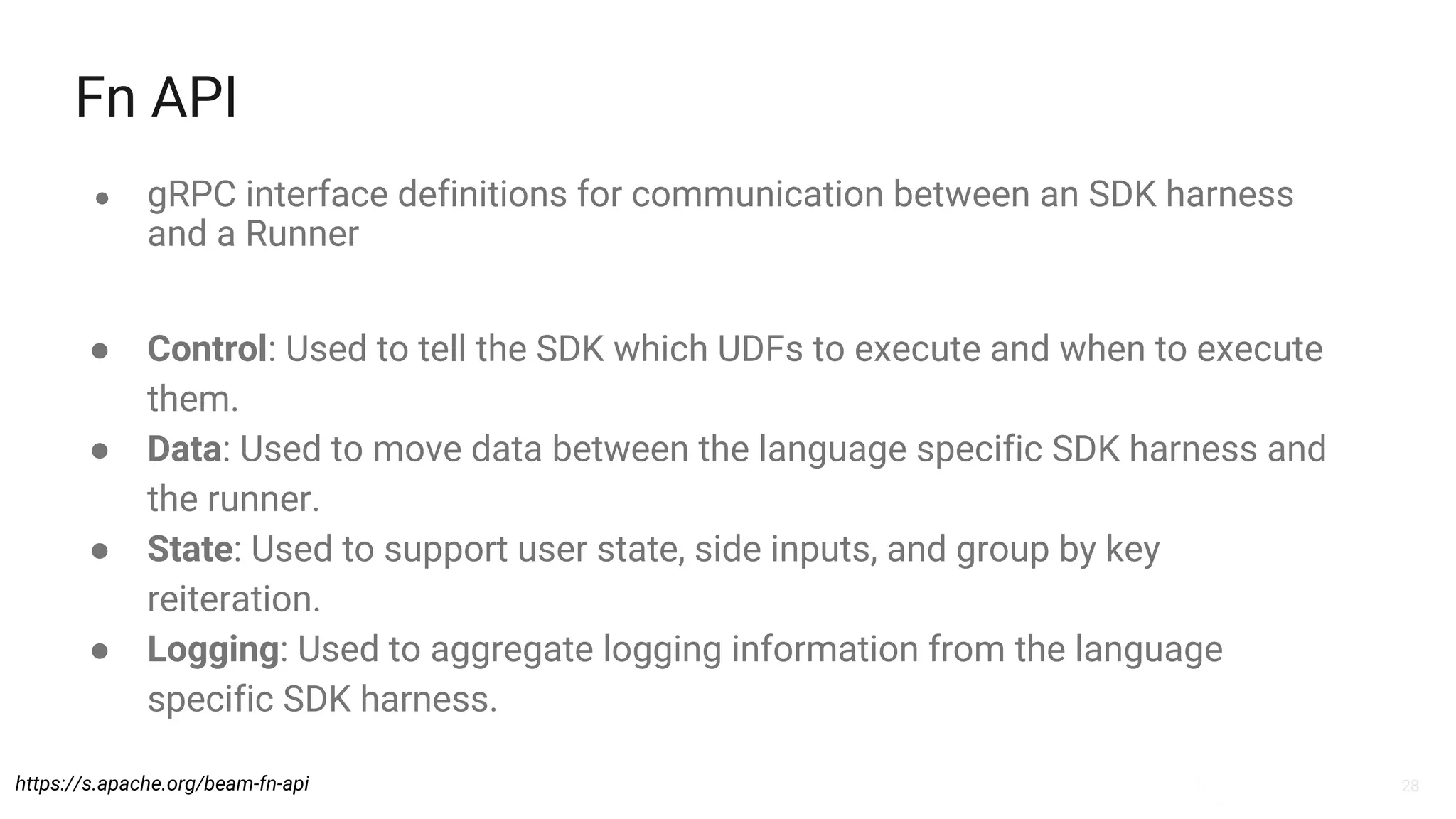

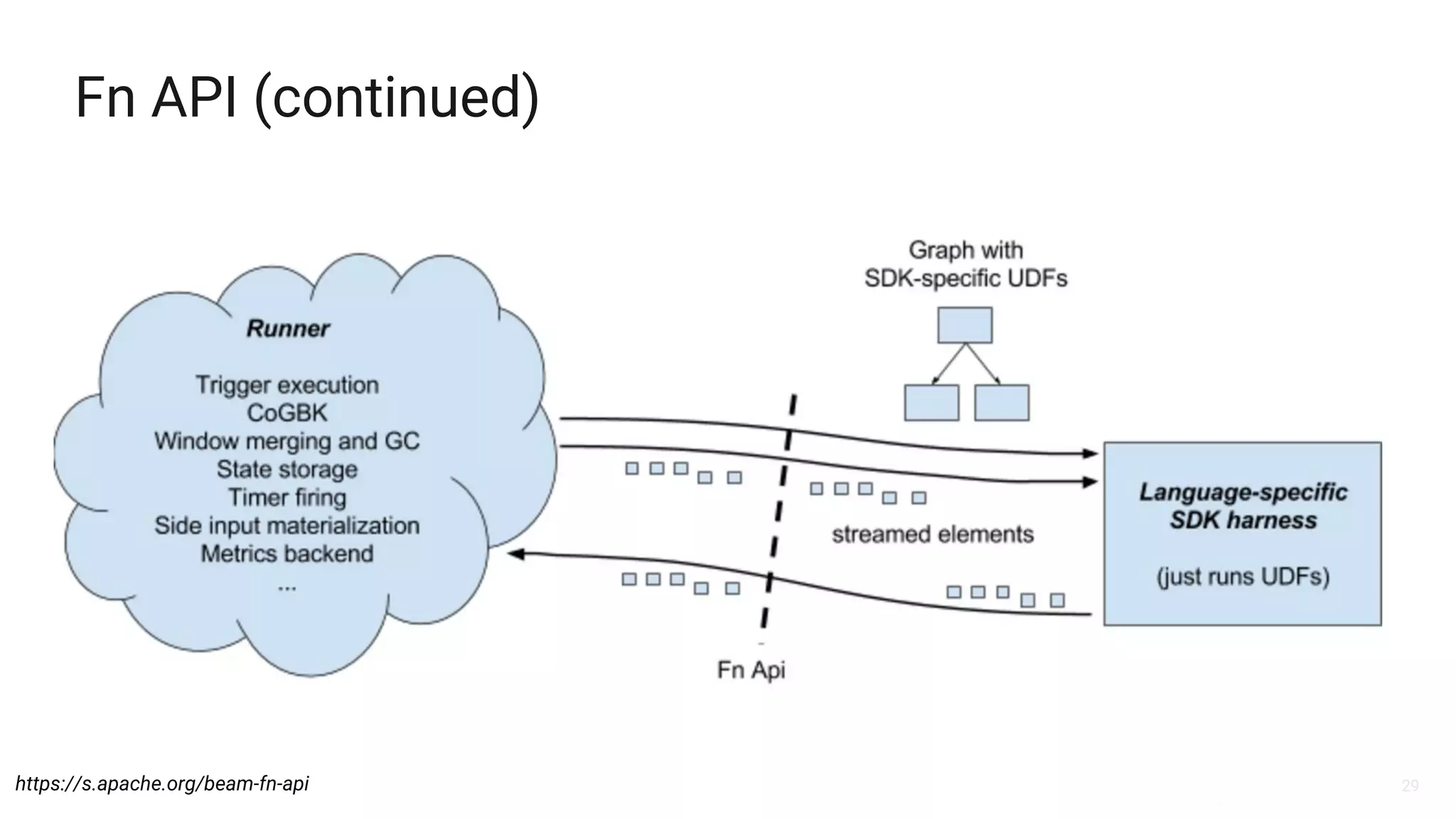

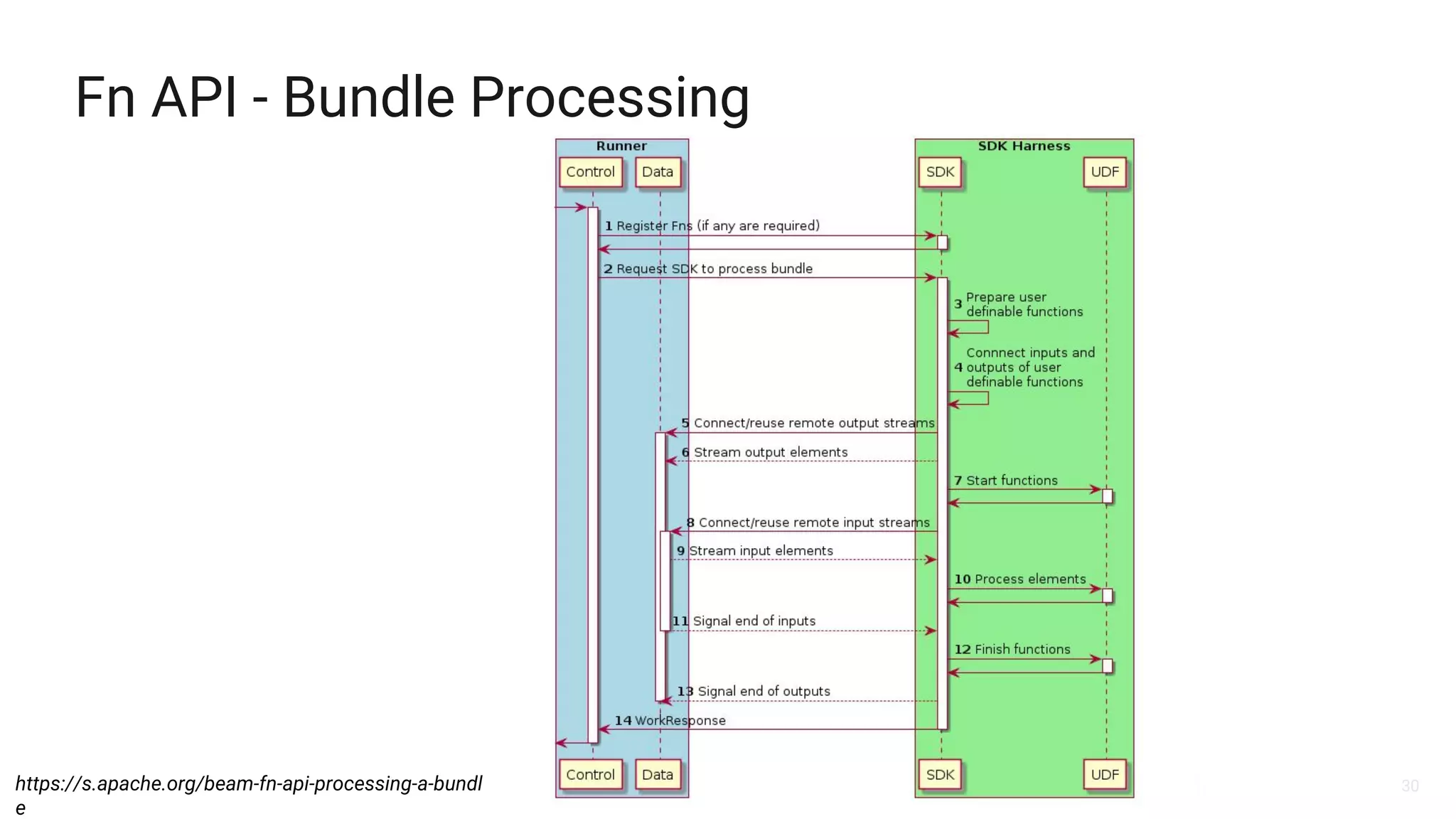

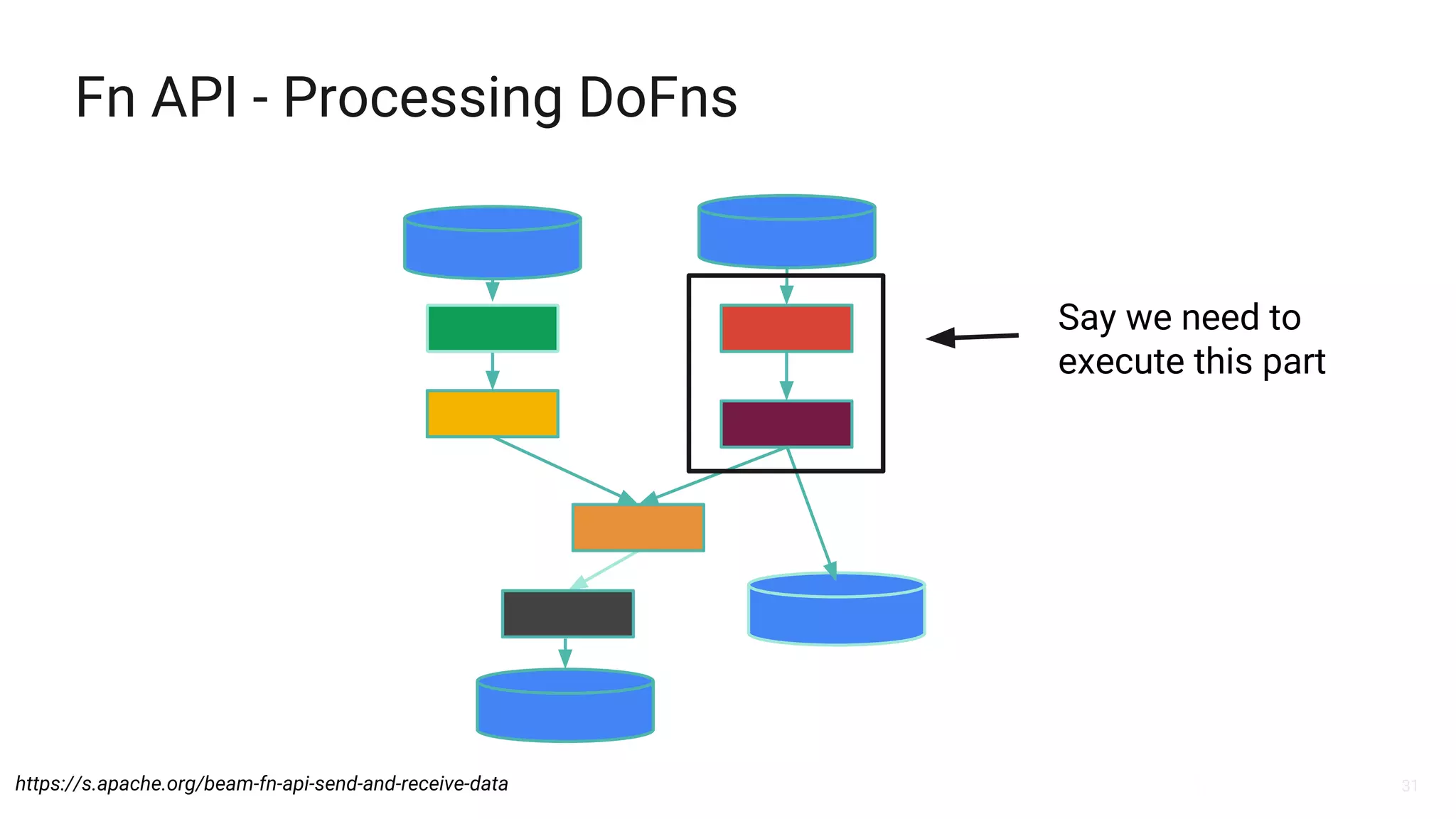

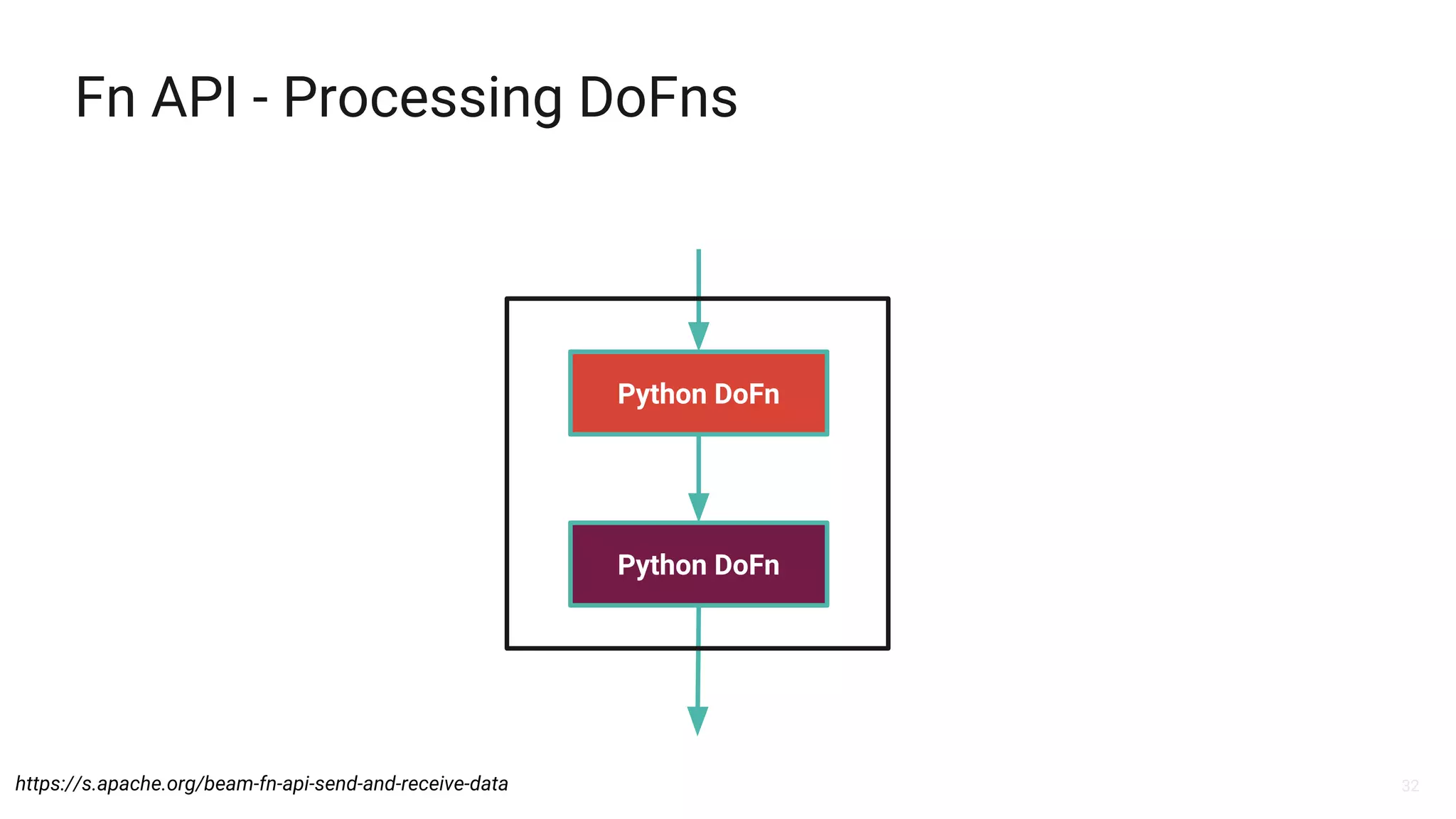

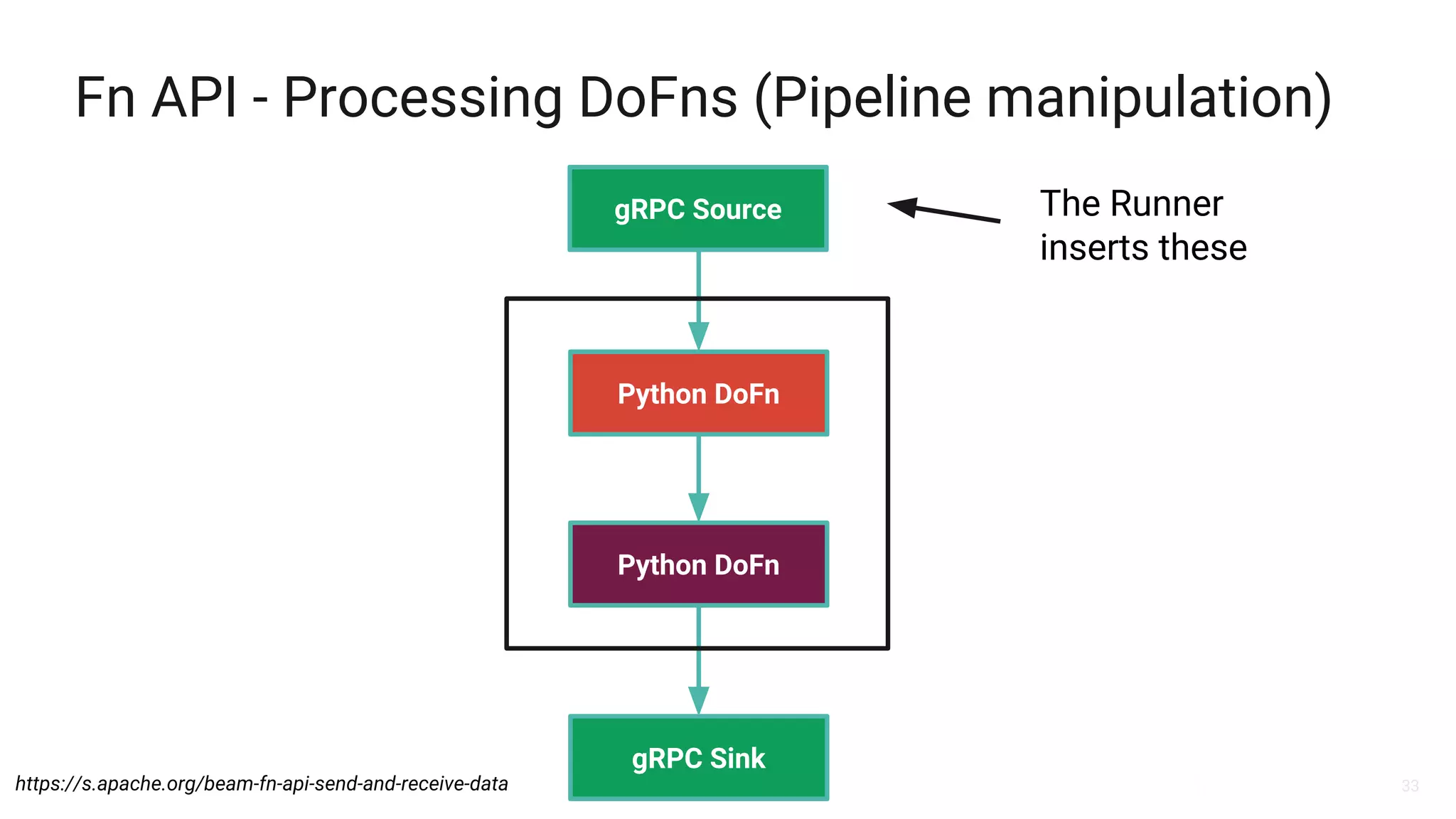

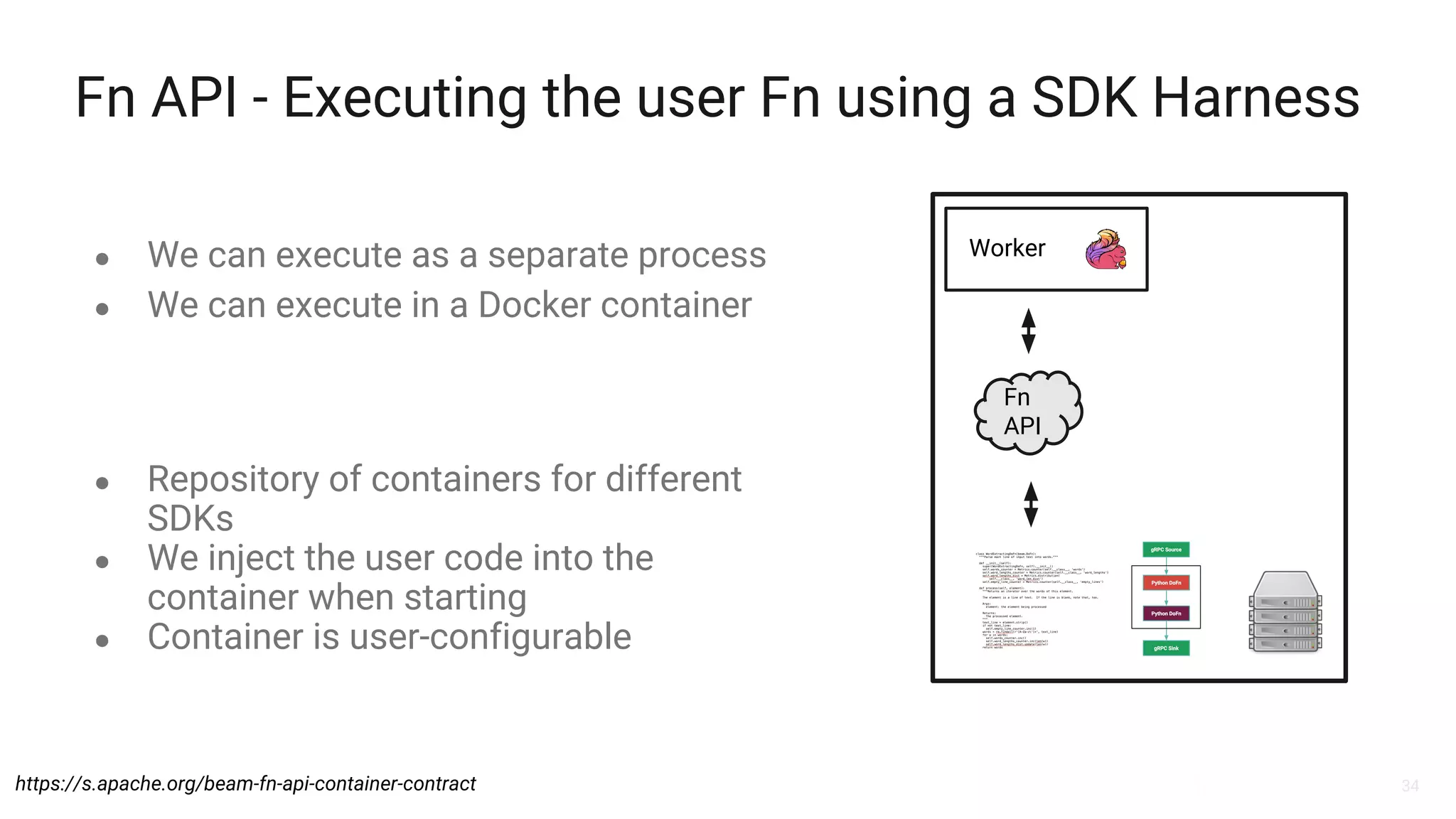

The document discusses Apache Beam and its integration with Apache Flink for stream processing. It traces the evolution of Beam, describes the Beam model, and introduces the portability APIs that allow Beam jobs written in Python to be executed. It covers the architecture of the Beam model, the roles of SDKs and runners, and how to construct and execute pipelines in various programming languages. It also addresses the advantages and challenges of using Beam with Flink, and closes with future developments and resources for further learning.
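The split between SDKs (which construct a pipeline) and runners (which execute it) can be illustrated with a toy sketch in plain Python. This is not Beam's API: `ToyPipeline`, `ToyLocalRunner`, `split_words`, and `count_per_element` are hypothetical names invented for illustration, and the model is far simpler than Beam's (no windowing, no parallelism, no portability layer). In the real Python SDK, a pipeline is built with `apache_beam.Pipeline` and the runner is chosen through pipeline options.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable, Iterable, List


@dataclass
class ToyPipeline:
    """Hypothetical stand-in for an SDK-built pipeline: an ordered list of steps."""
    steps: List[Callable[[Iterable], Iterable]] = field(default_factory=list)

    def apply(self, step: Callable[[Iterable], Iterable]) -> "ToyPipeline":
        # Construction only records the step; nothing is executed here.
        self.steps.append(step)
        return self


class ToyLocalRunner:
    """Hypothetical runner that executes the recorded steps in-process."""

    def run(self, pipeline: ToyPipeline, source: Iterable) -> list:
        data = source
        for step in pipeline.steps:
            data = step(data)
        return list(data)


def split_words(lines):
    # Emit every whitespace-separated word from every input line.
    for line in lines:
        yield from line.split()


def count_per_element(words):
    # Count occurrences of each word; sort for a deterministic result.
    return sorted(Counter(words).items())


# The pipeline definition is independent of the runner that executes it.
pipeline = ToyPipeline().apply(split_words).apply(count_per_element)
result = ToyLocalRunner().run(pipeline, ["beam on flink", "beam model"])
print(result)
```

Because the pipeline object only describes the work, a different runner class could execute the same definition elsewhere. That is the idea behind running one Beam pipeline on Flink or on another engine.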