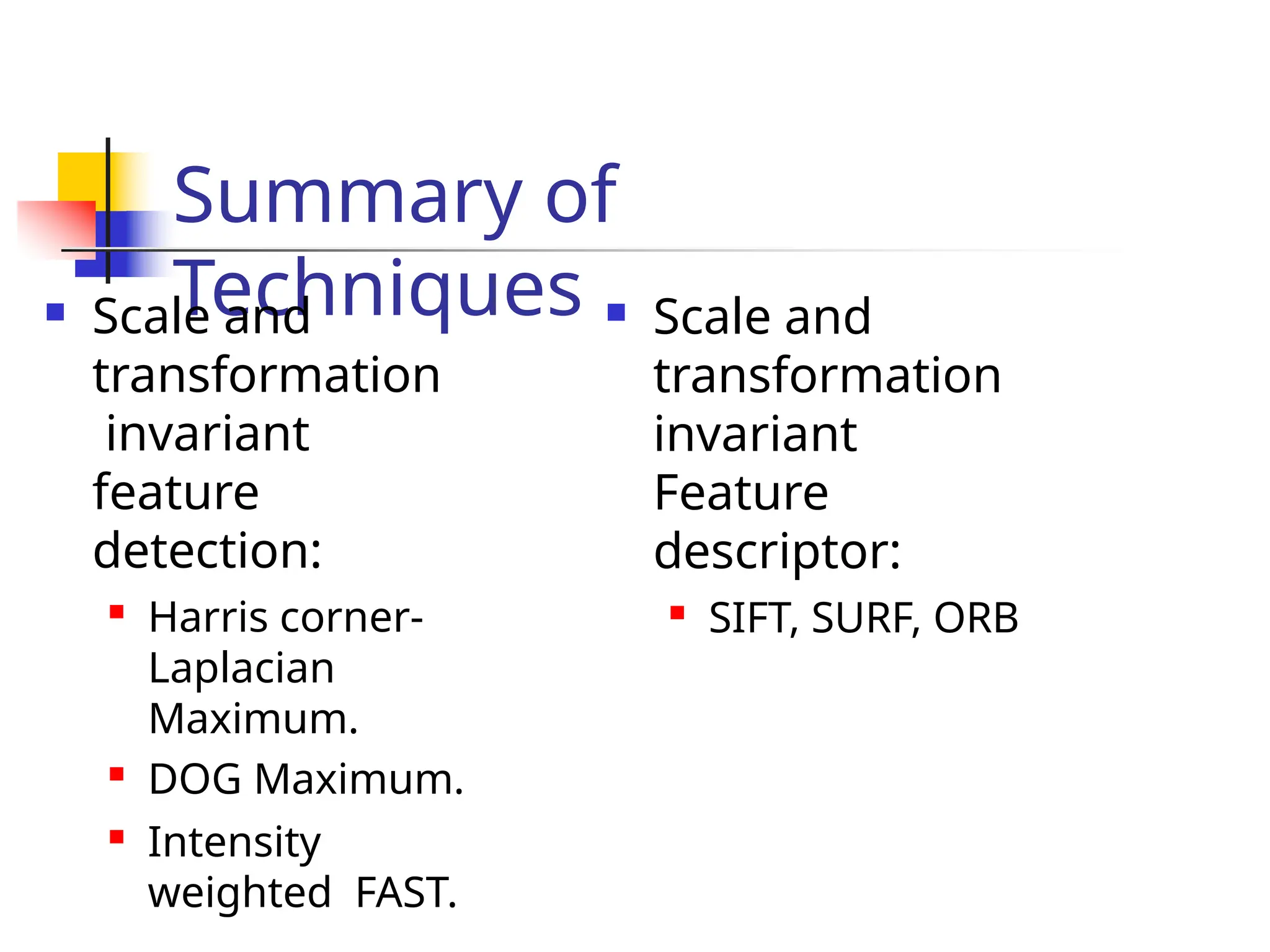

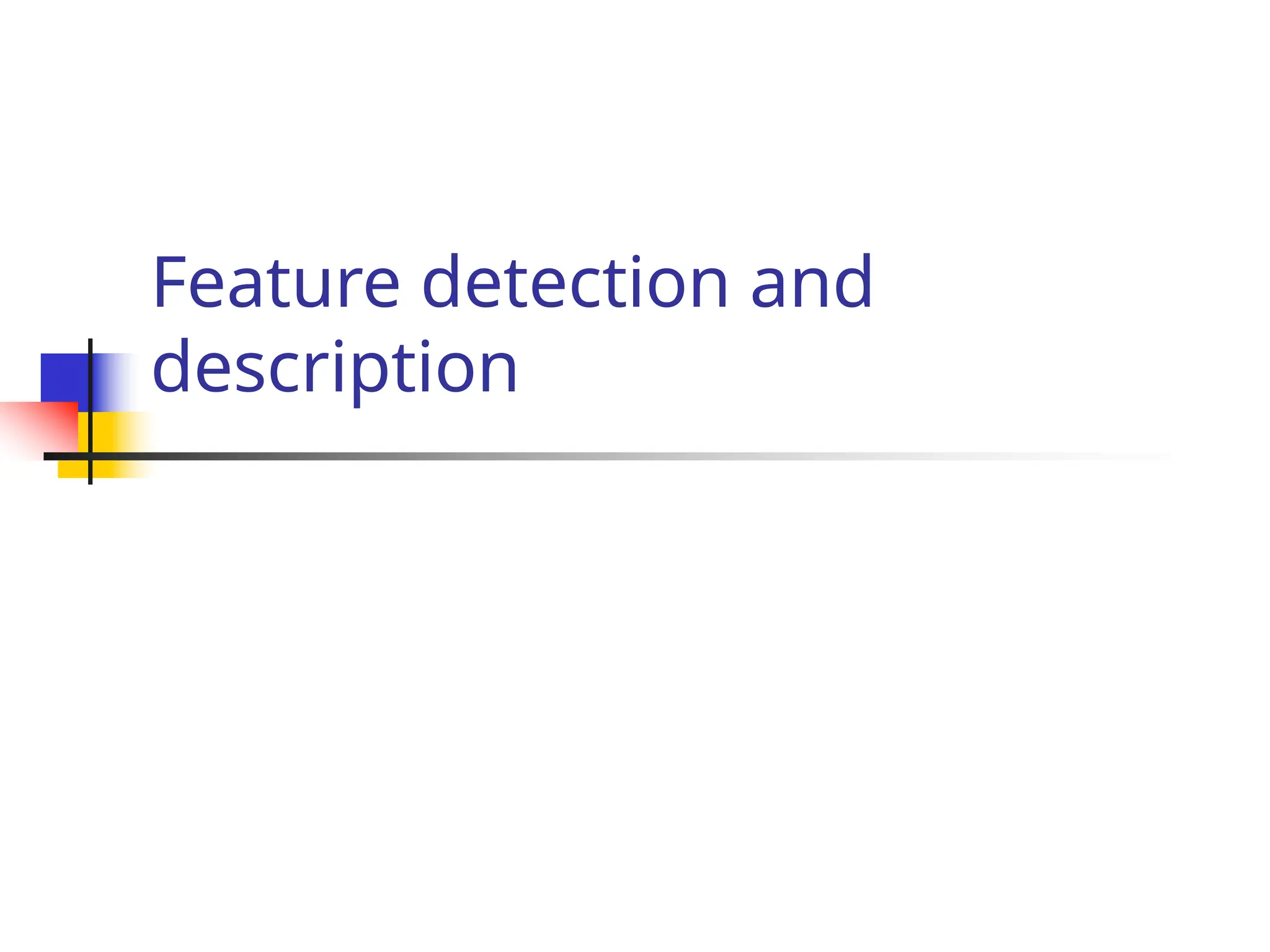

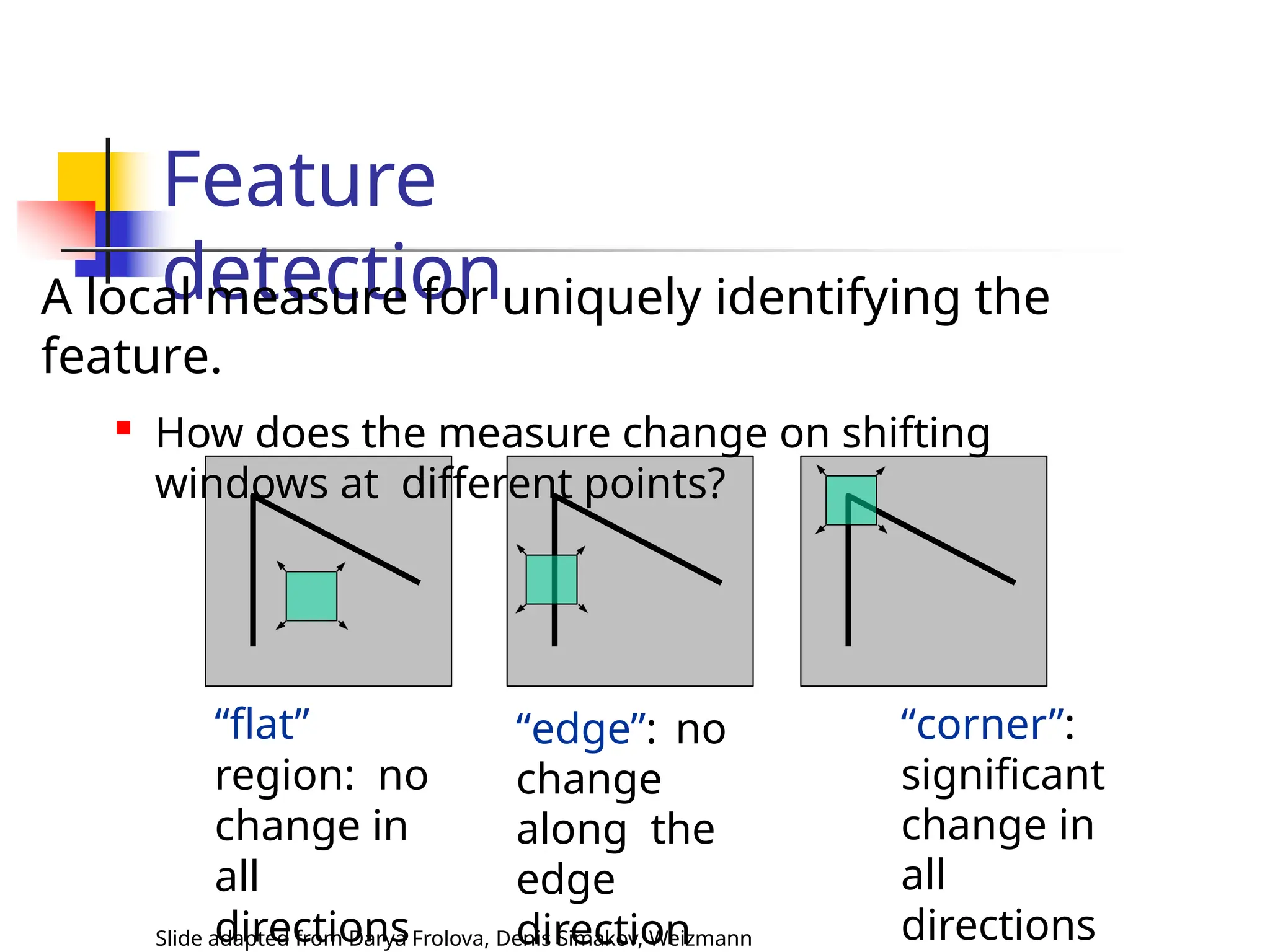

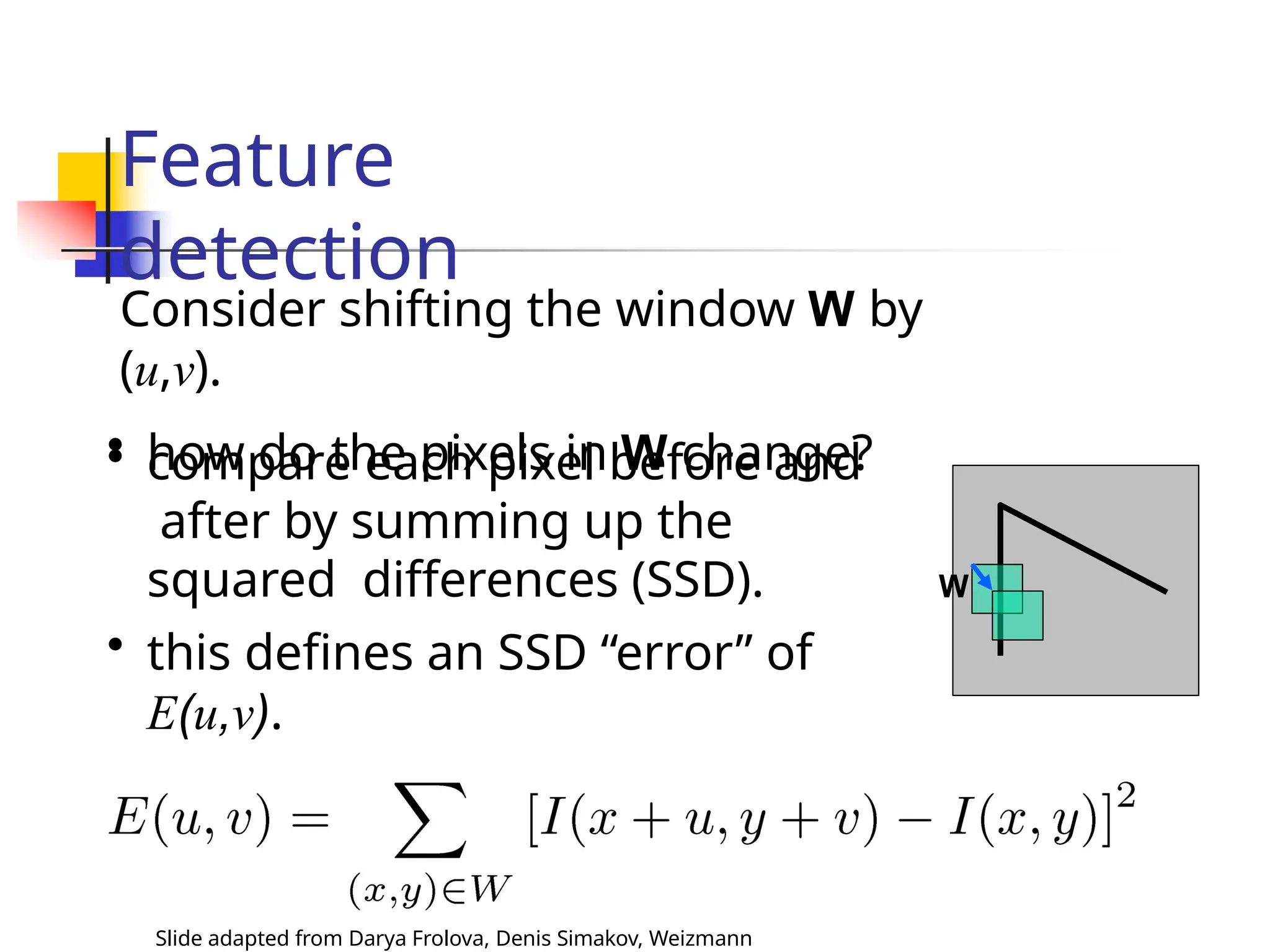

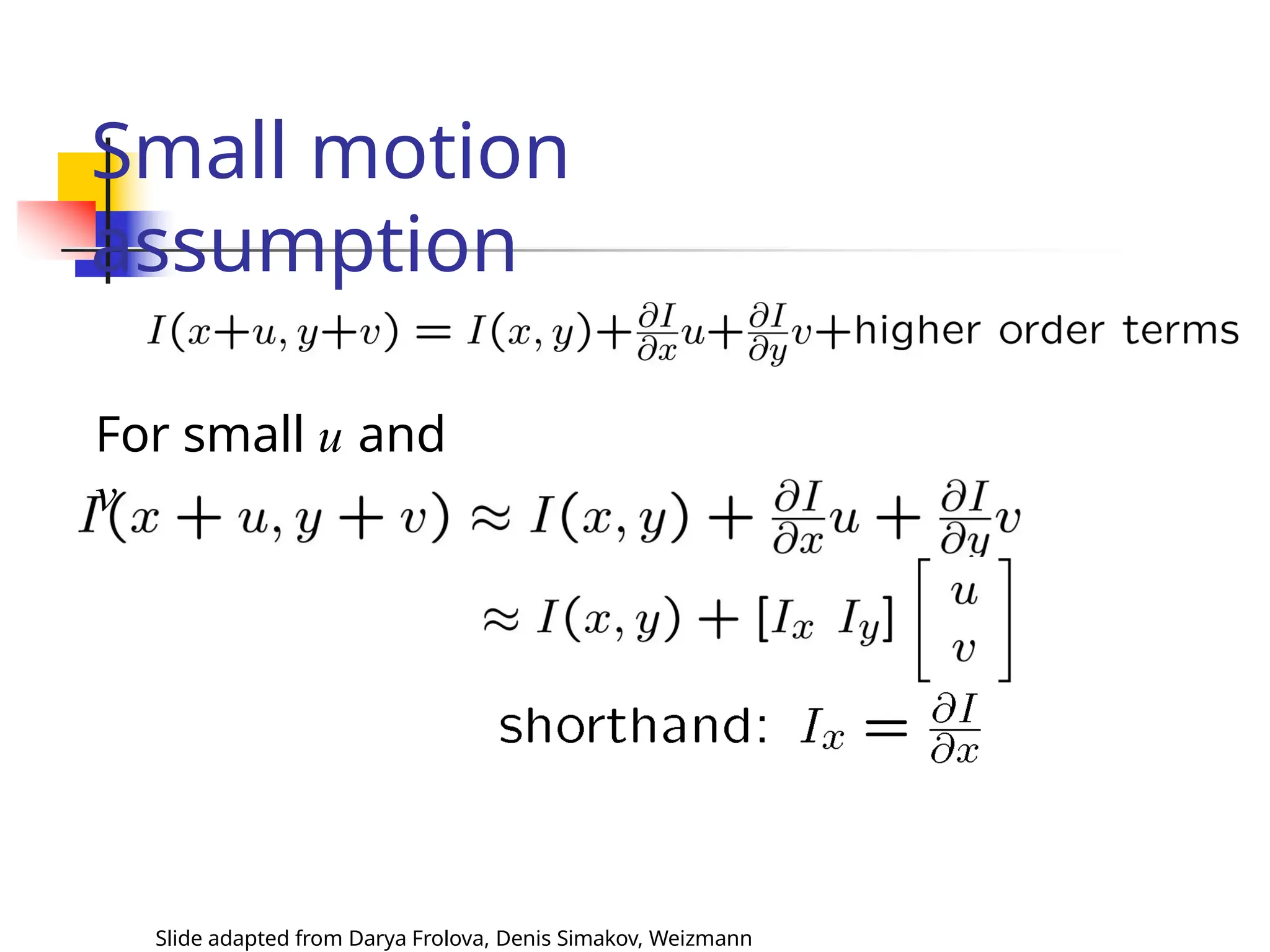

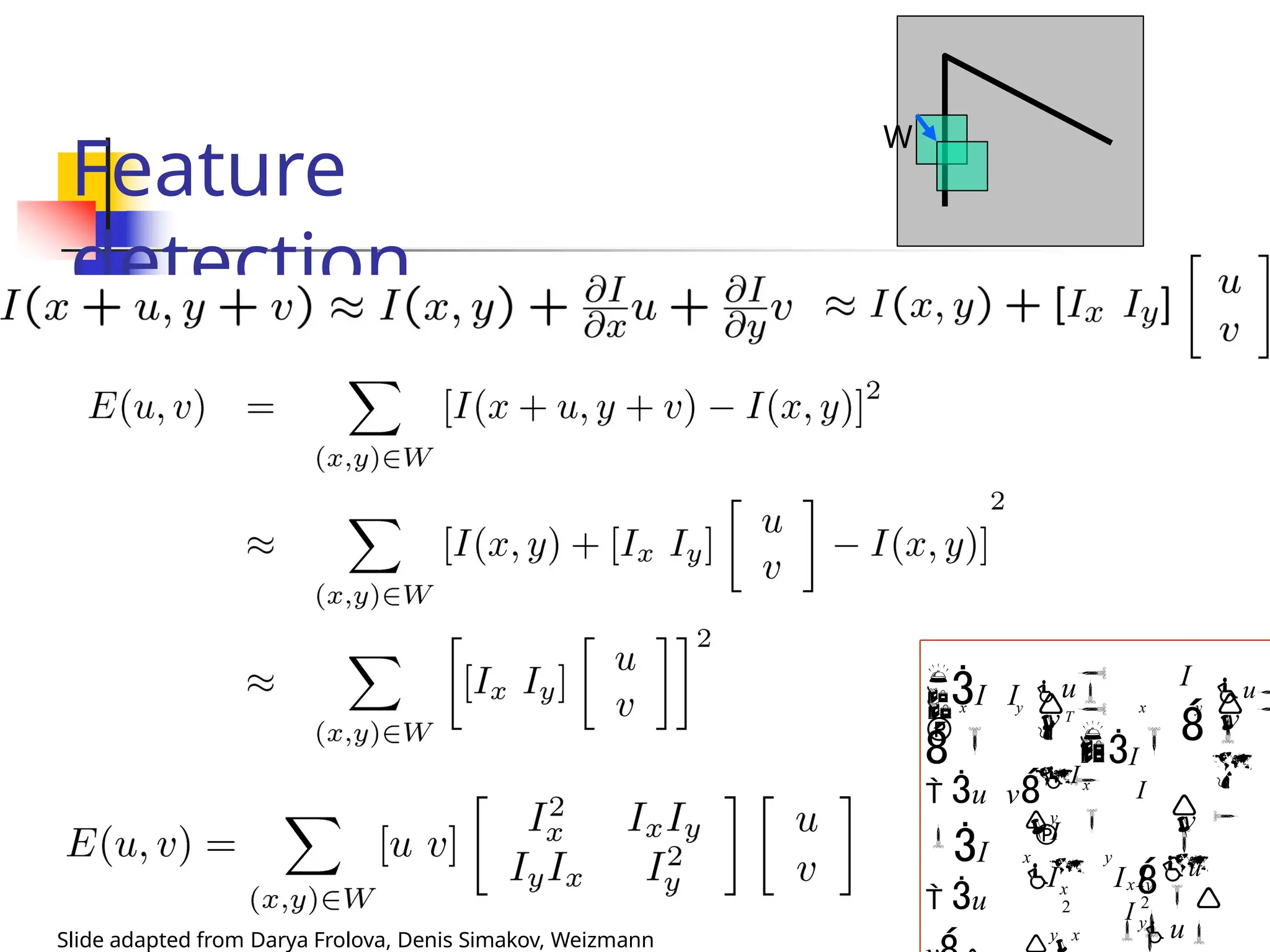

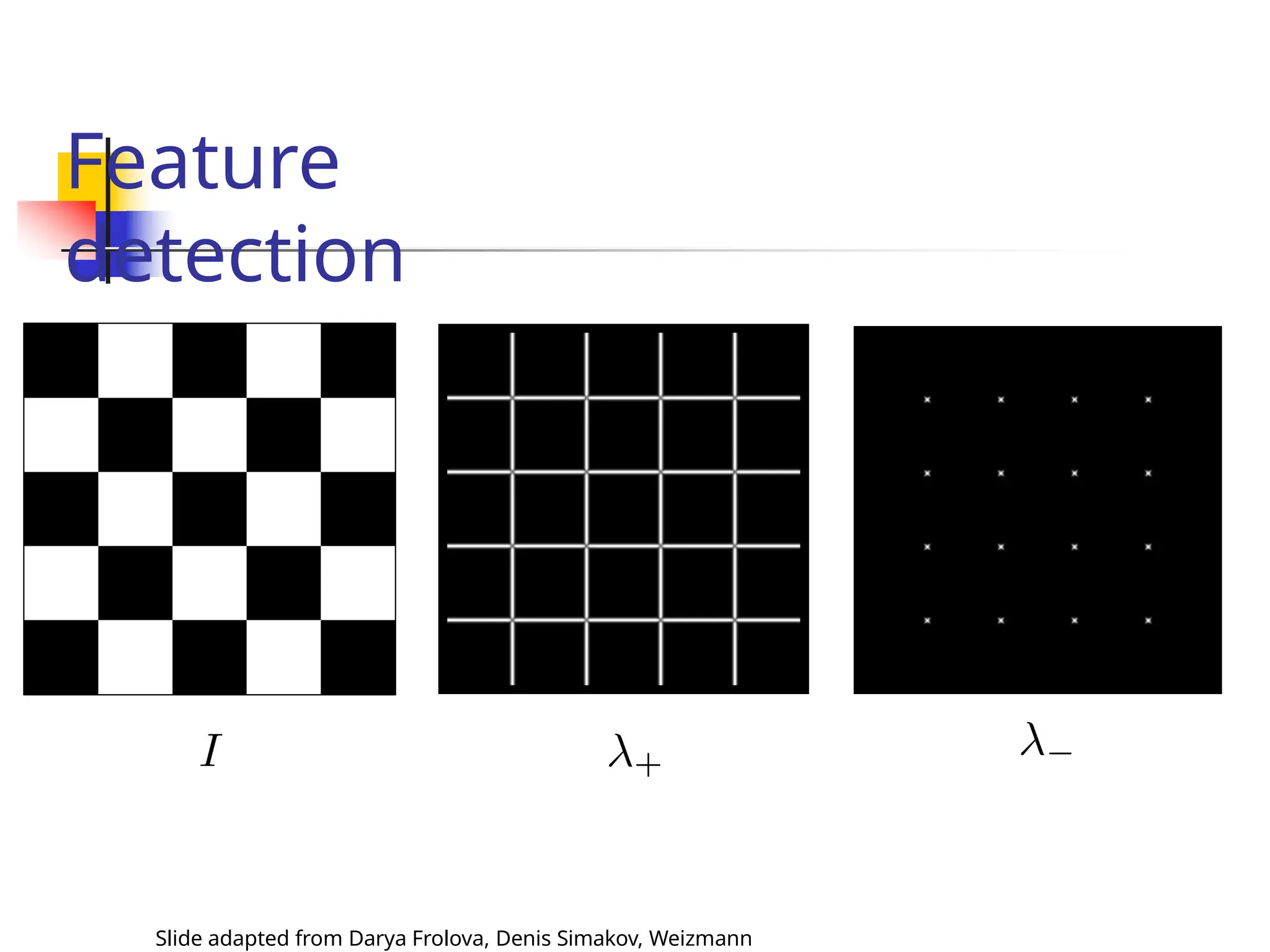

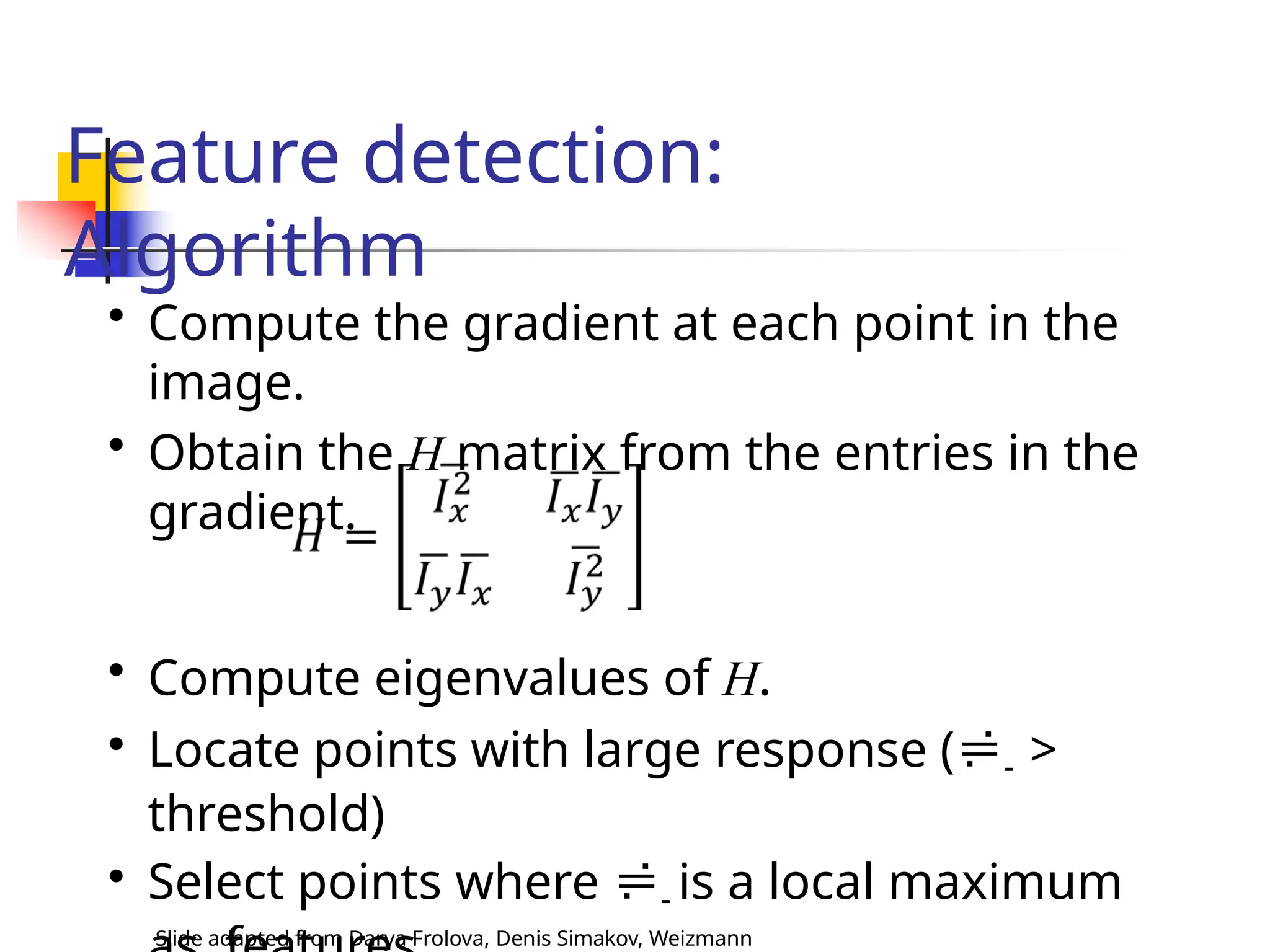

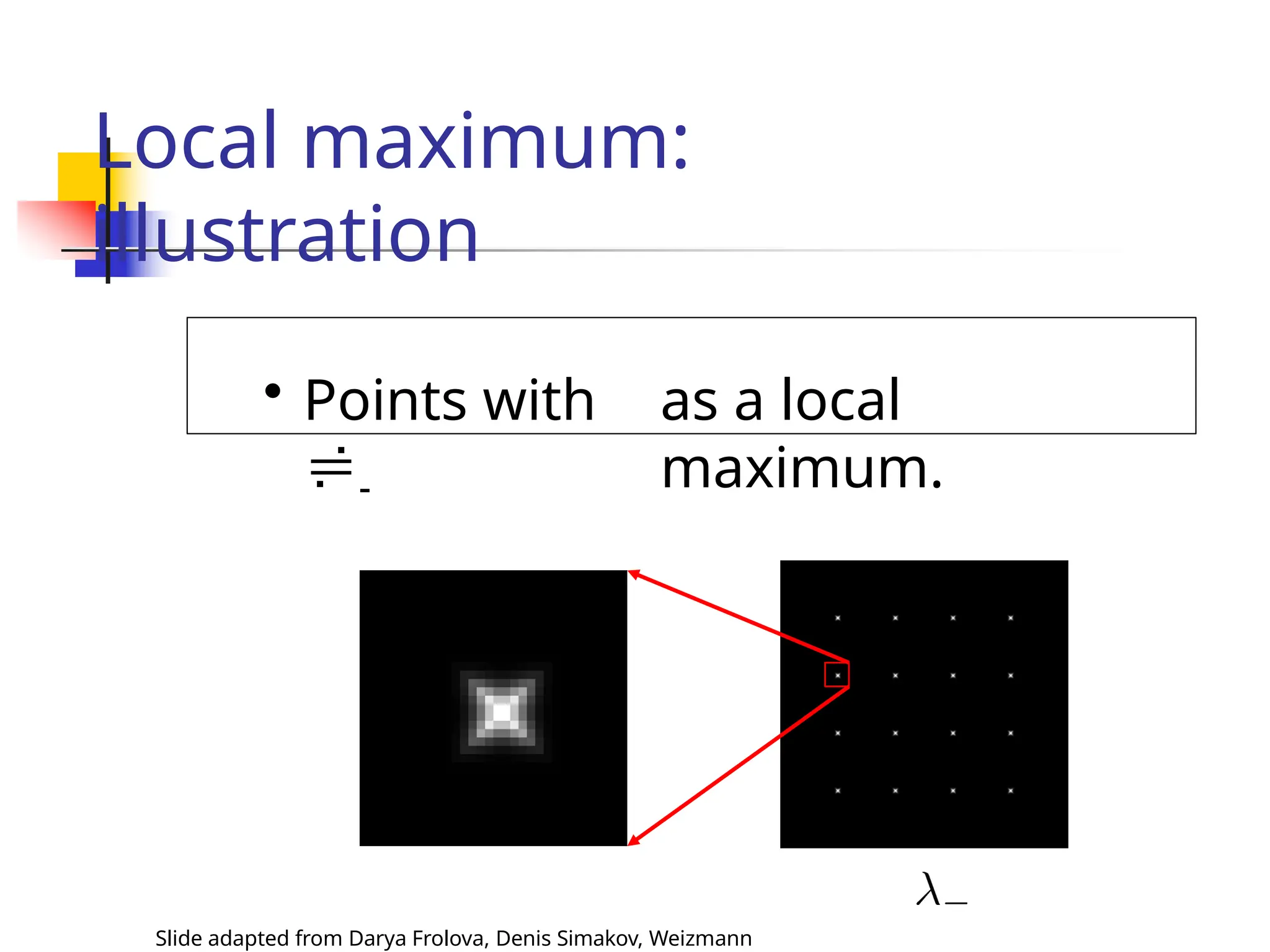

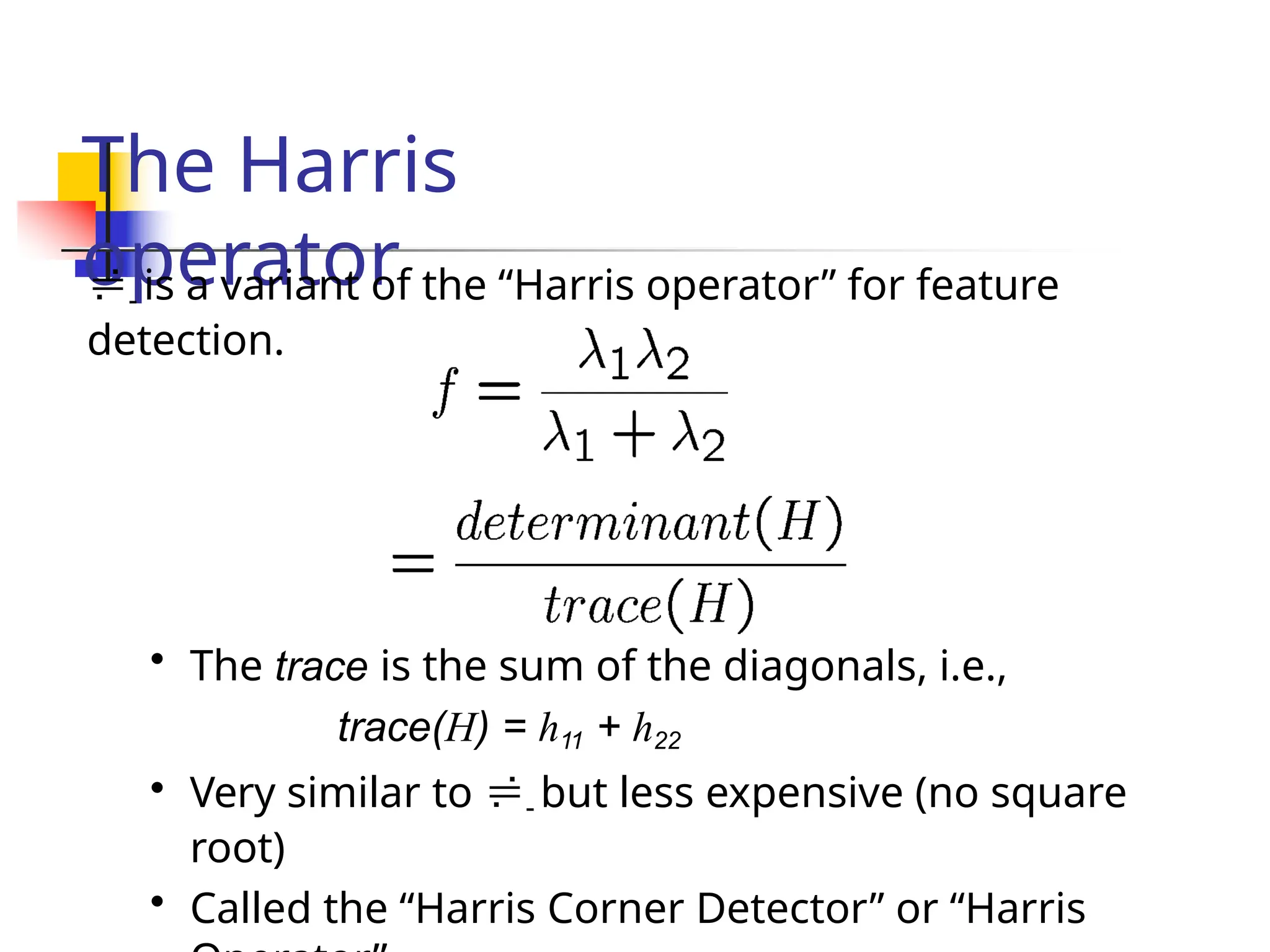

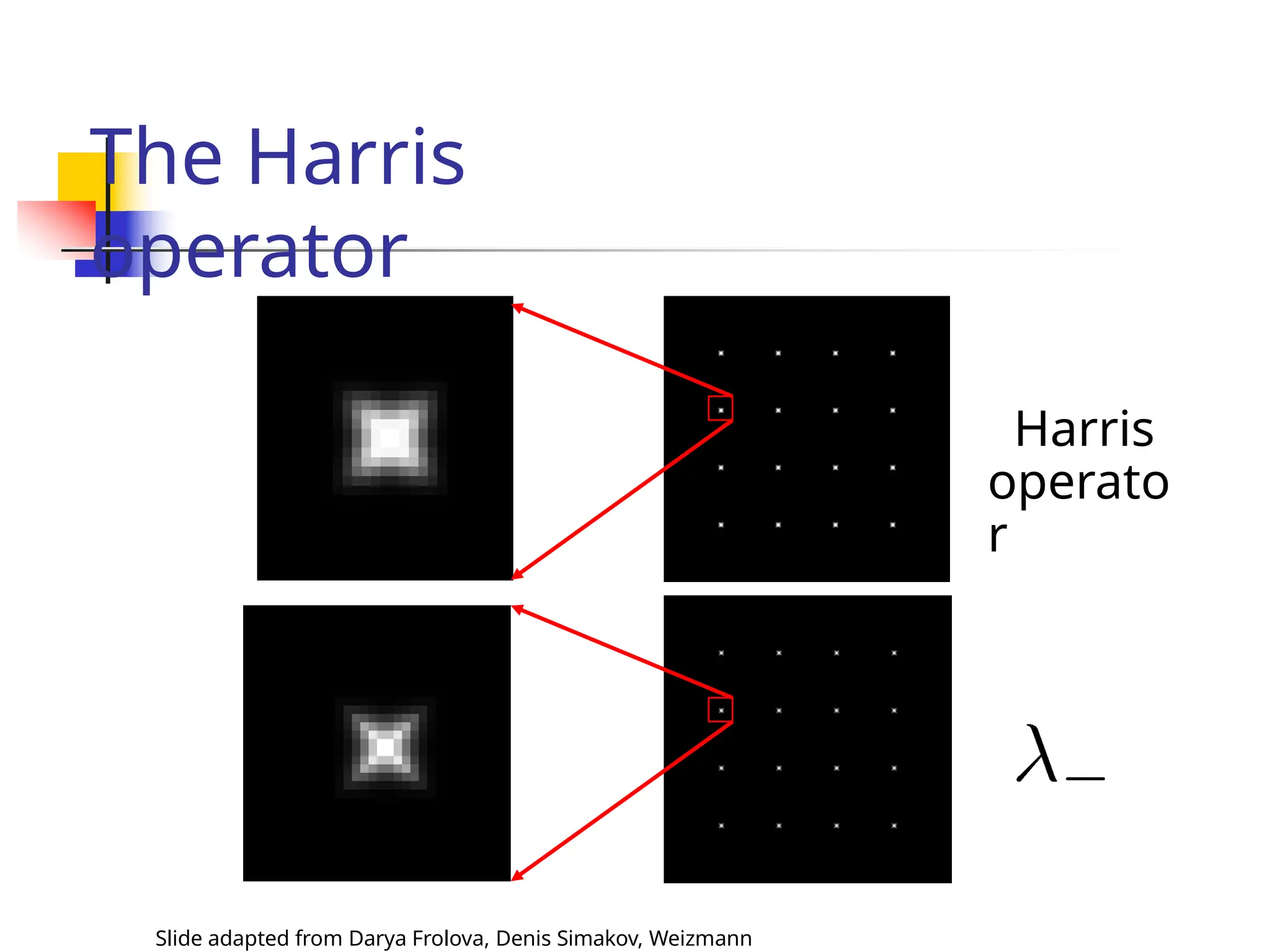

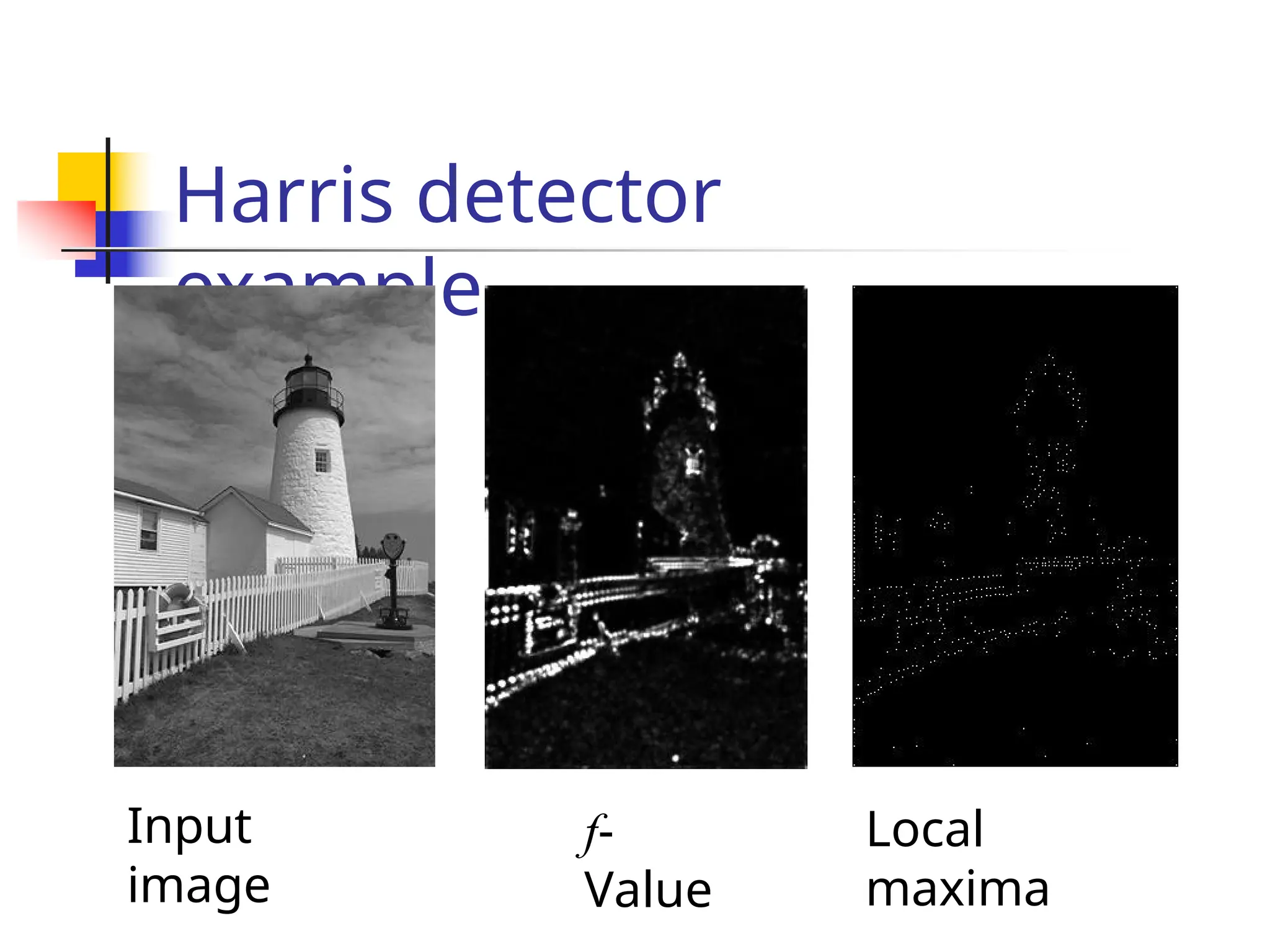

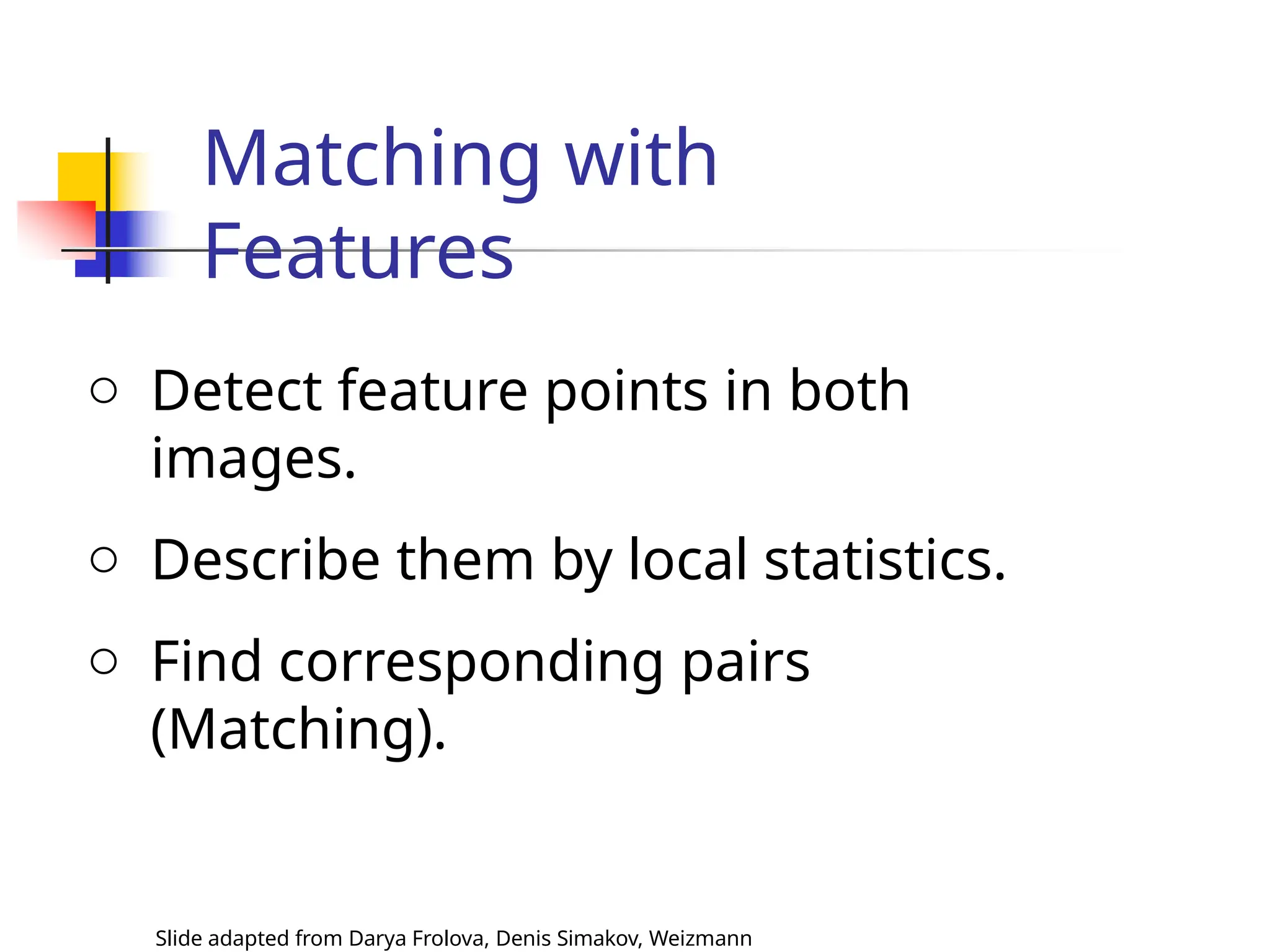

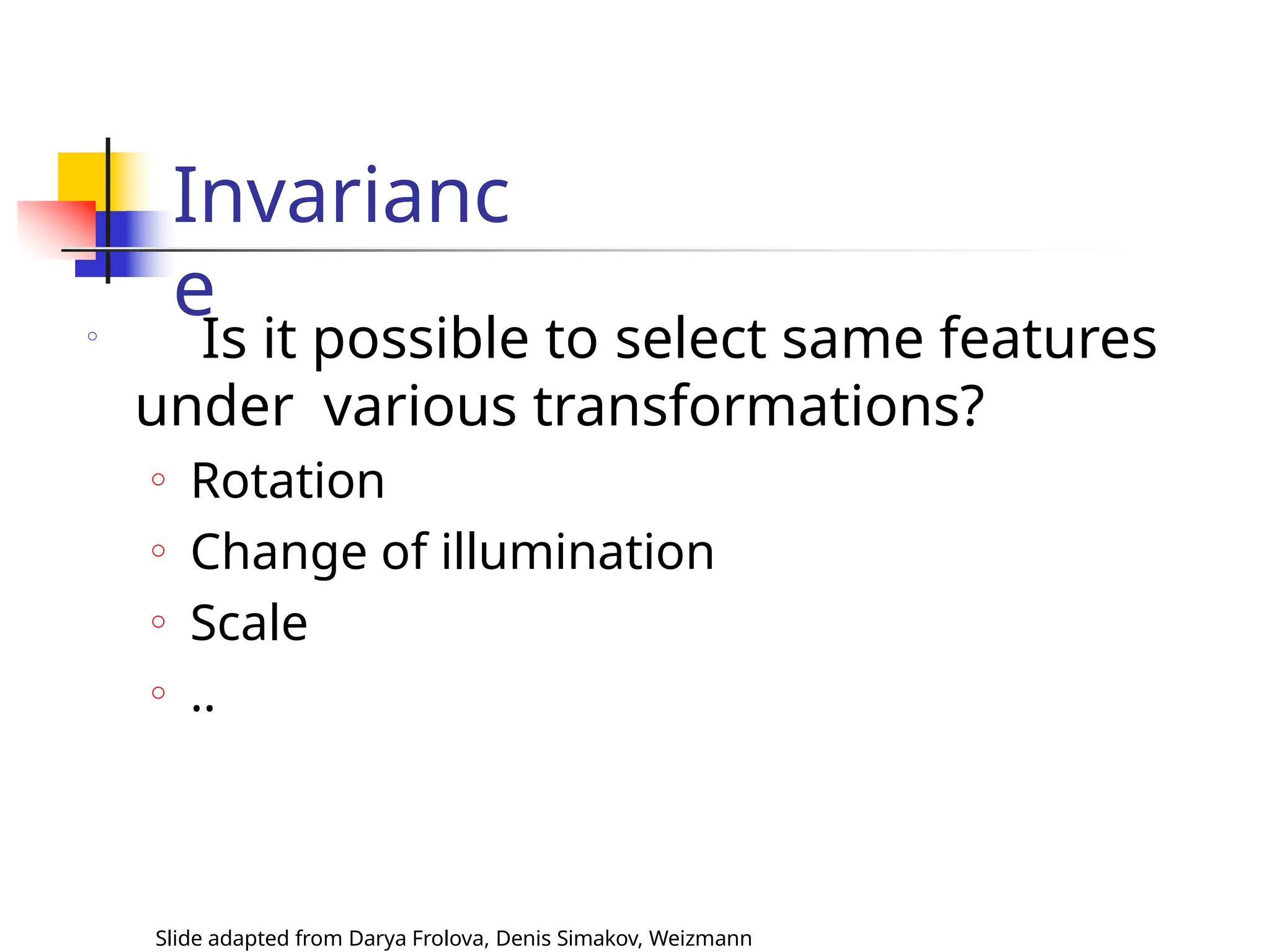

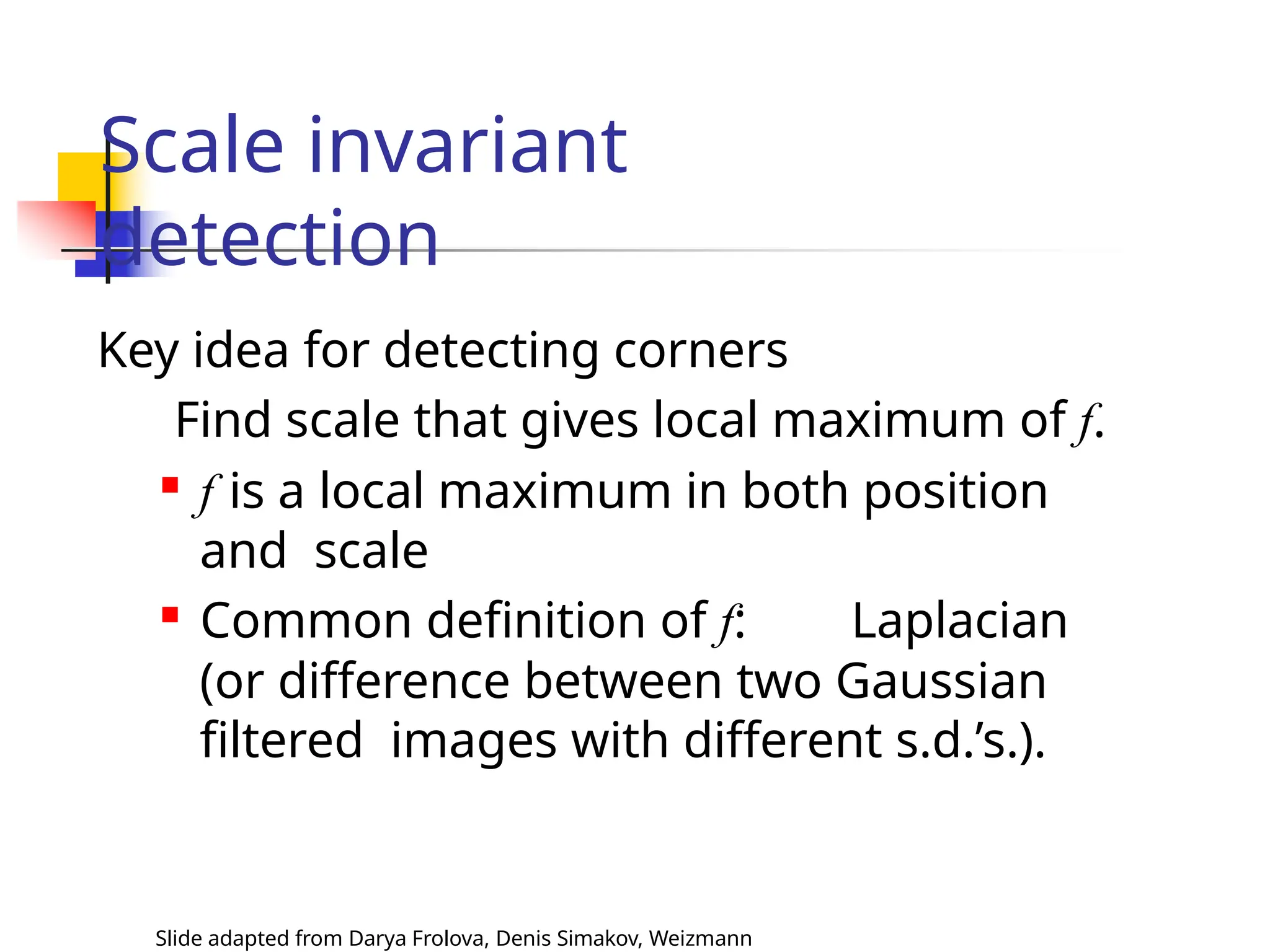

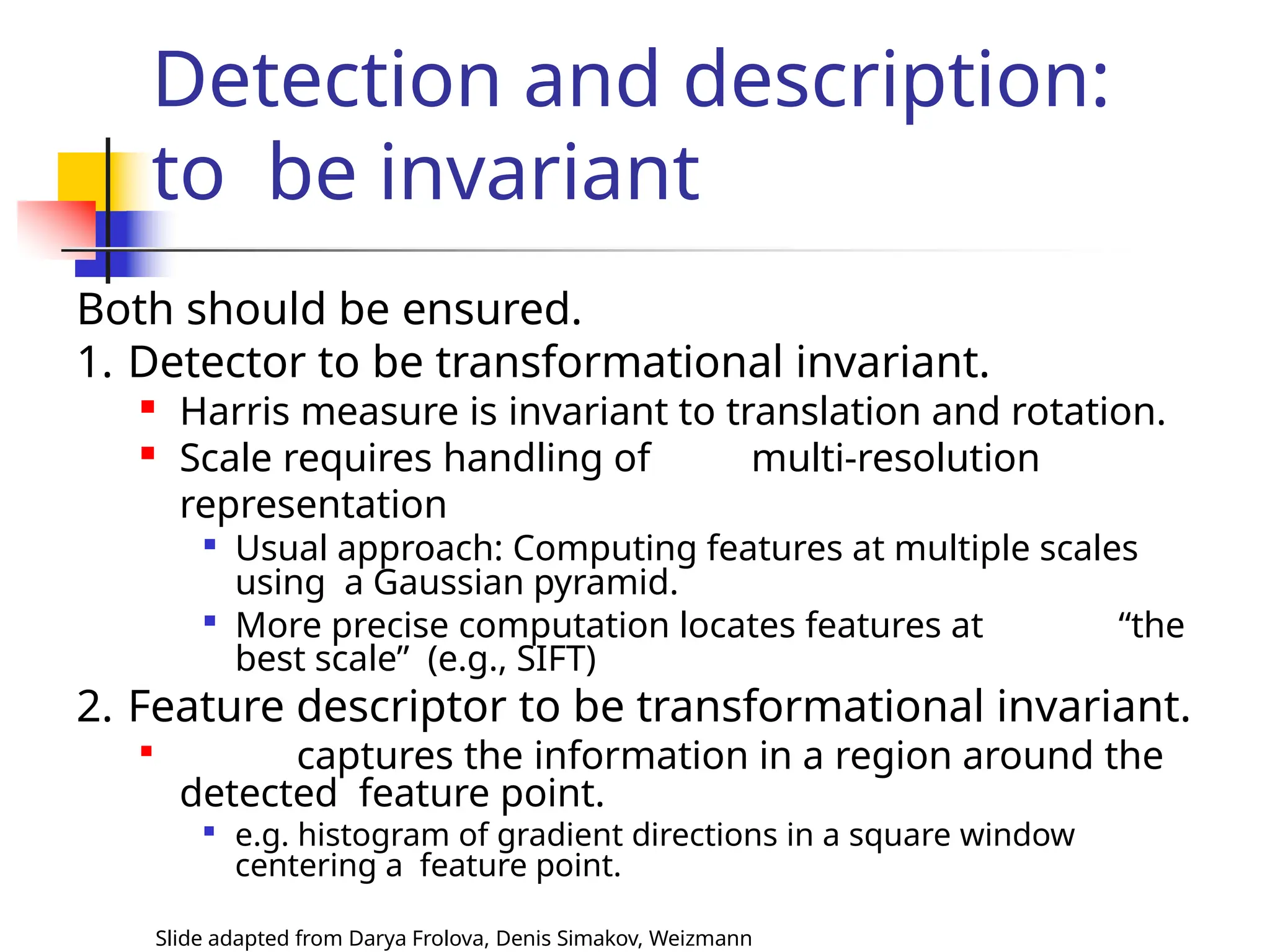

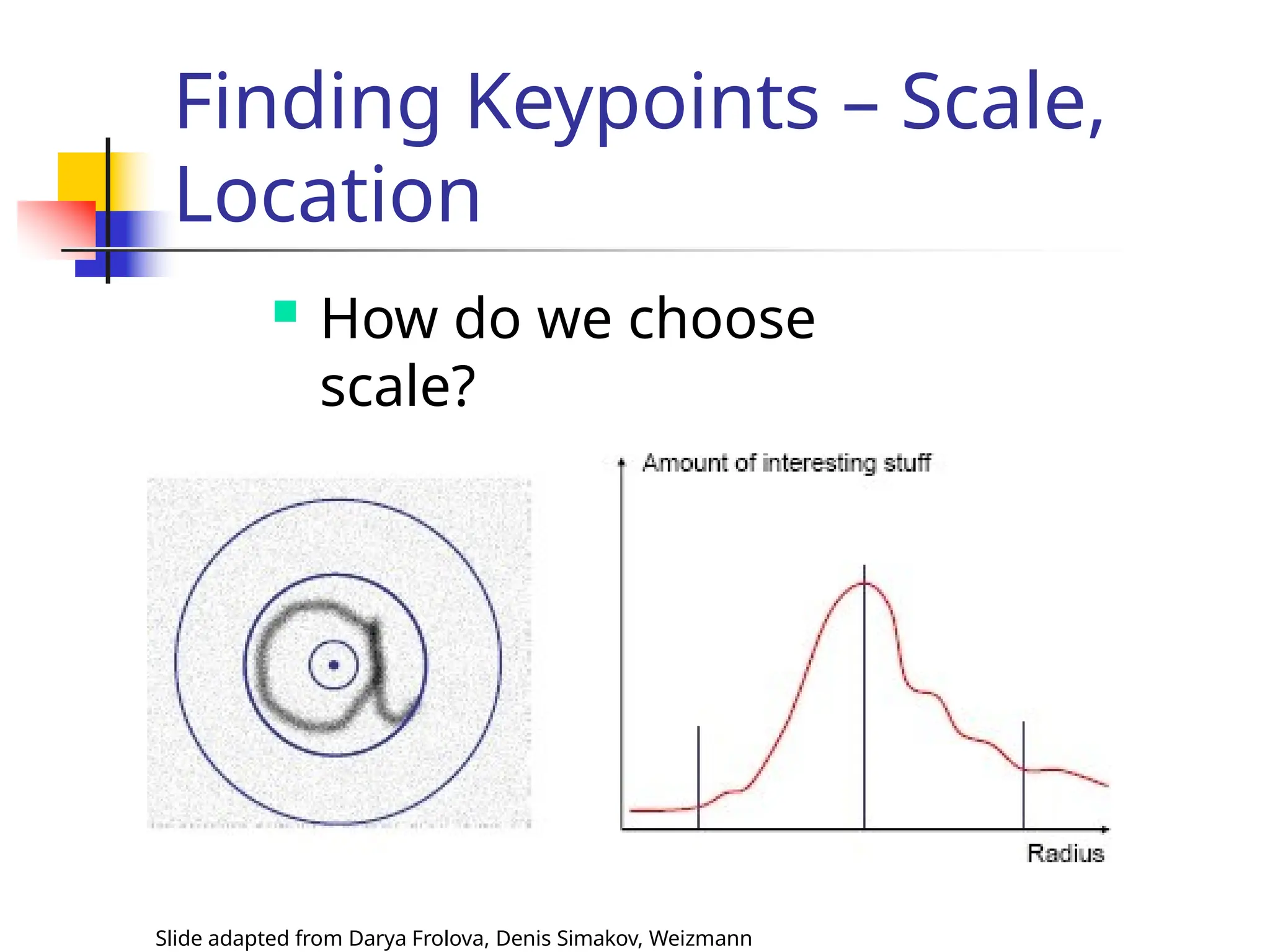

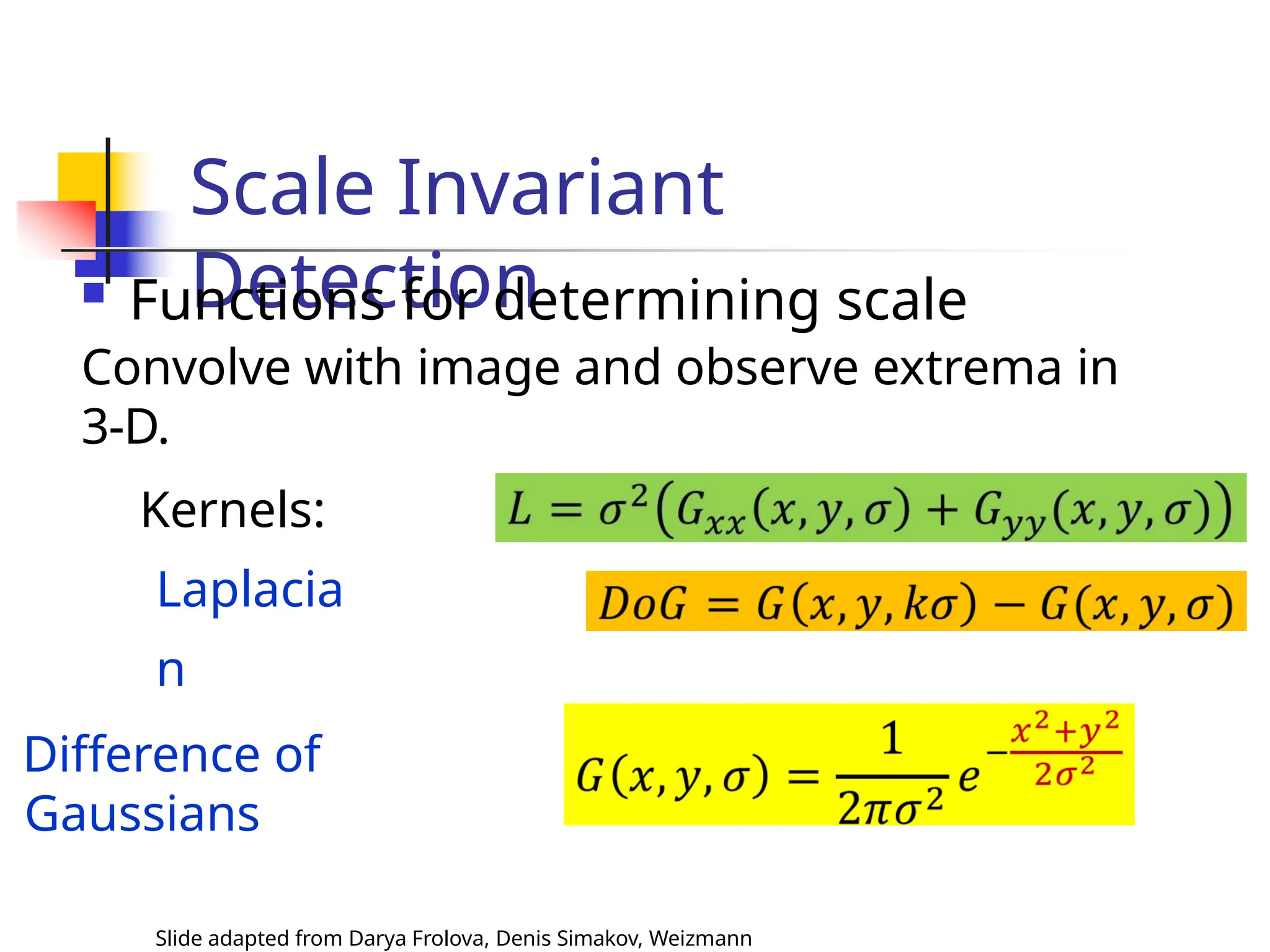

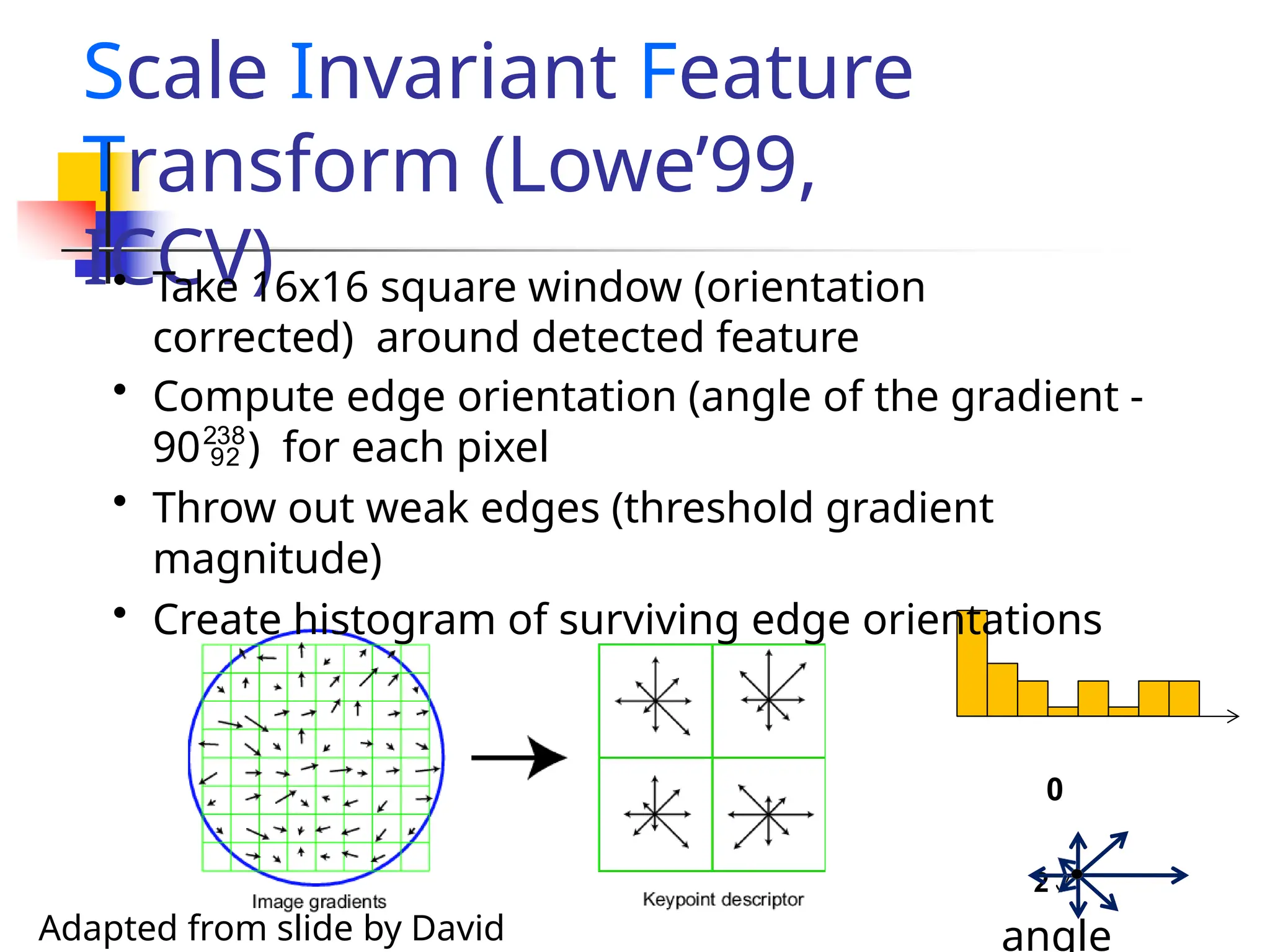

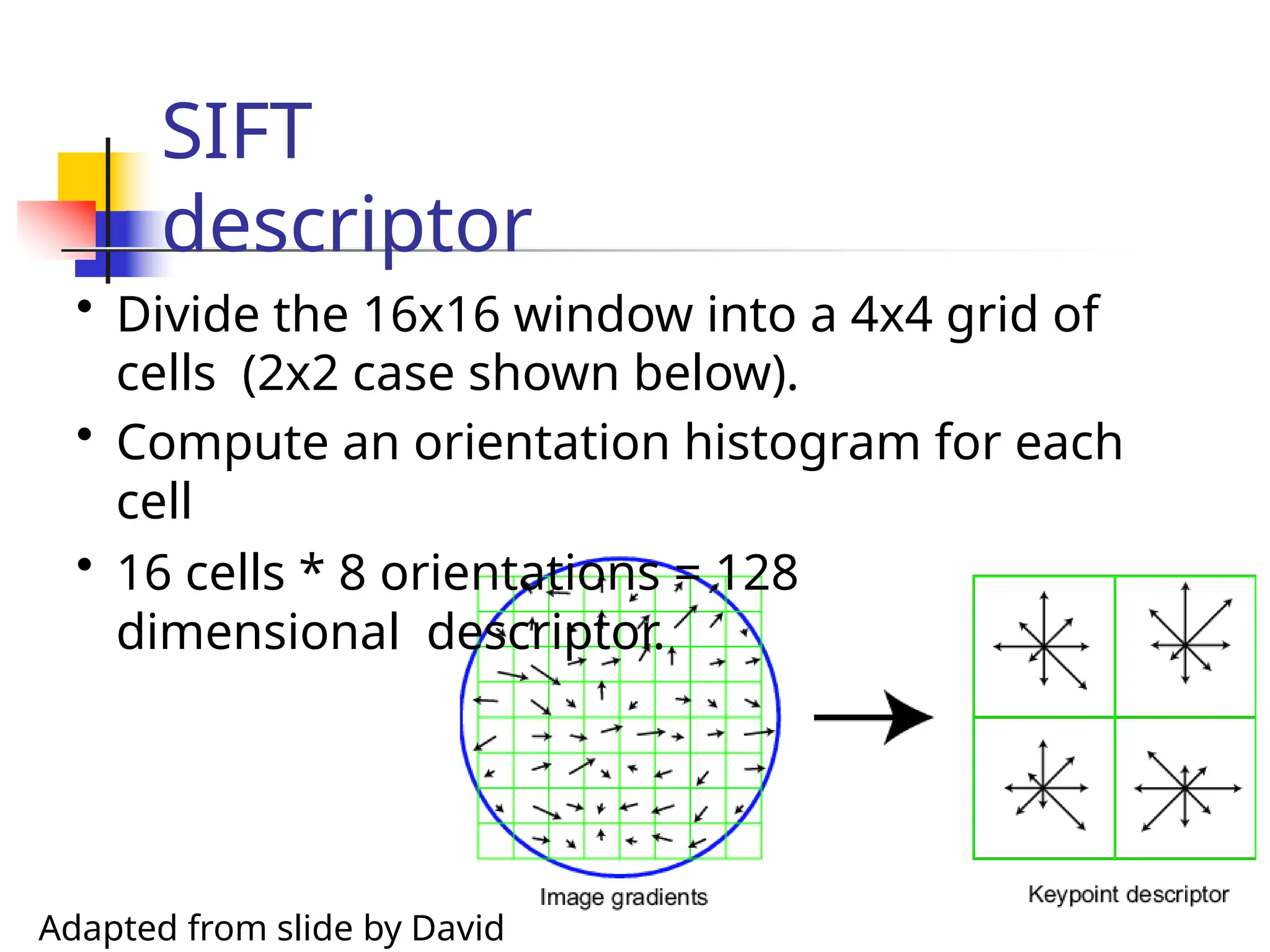

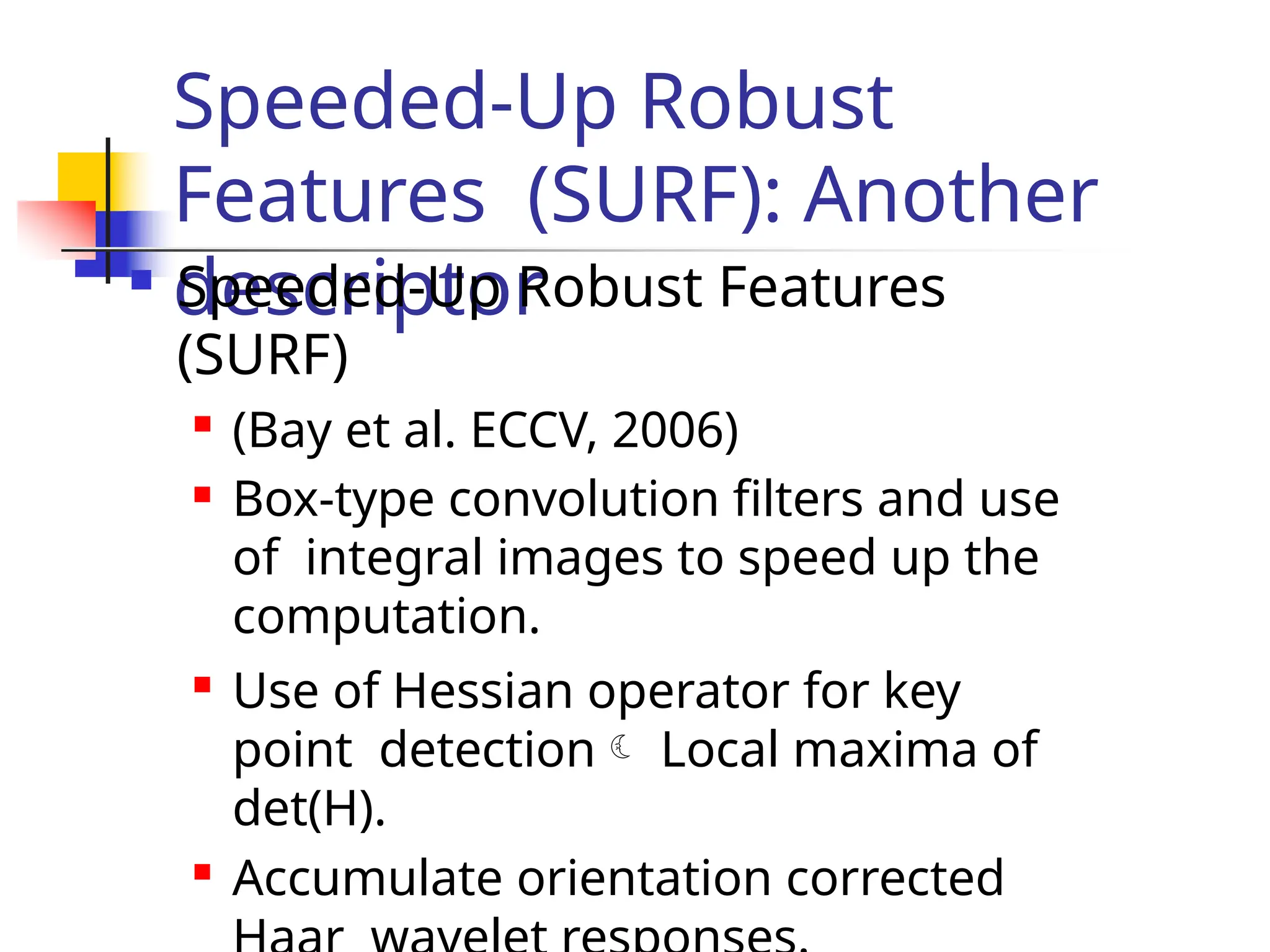

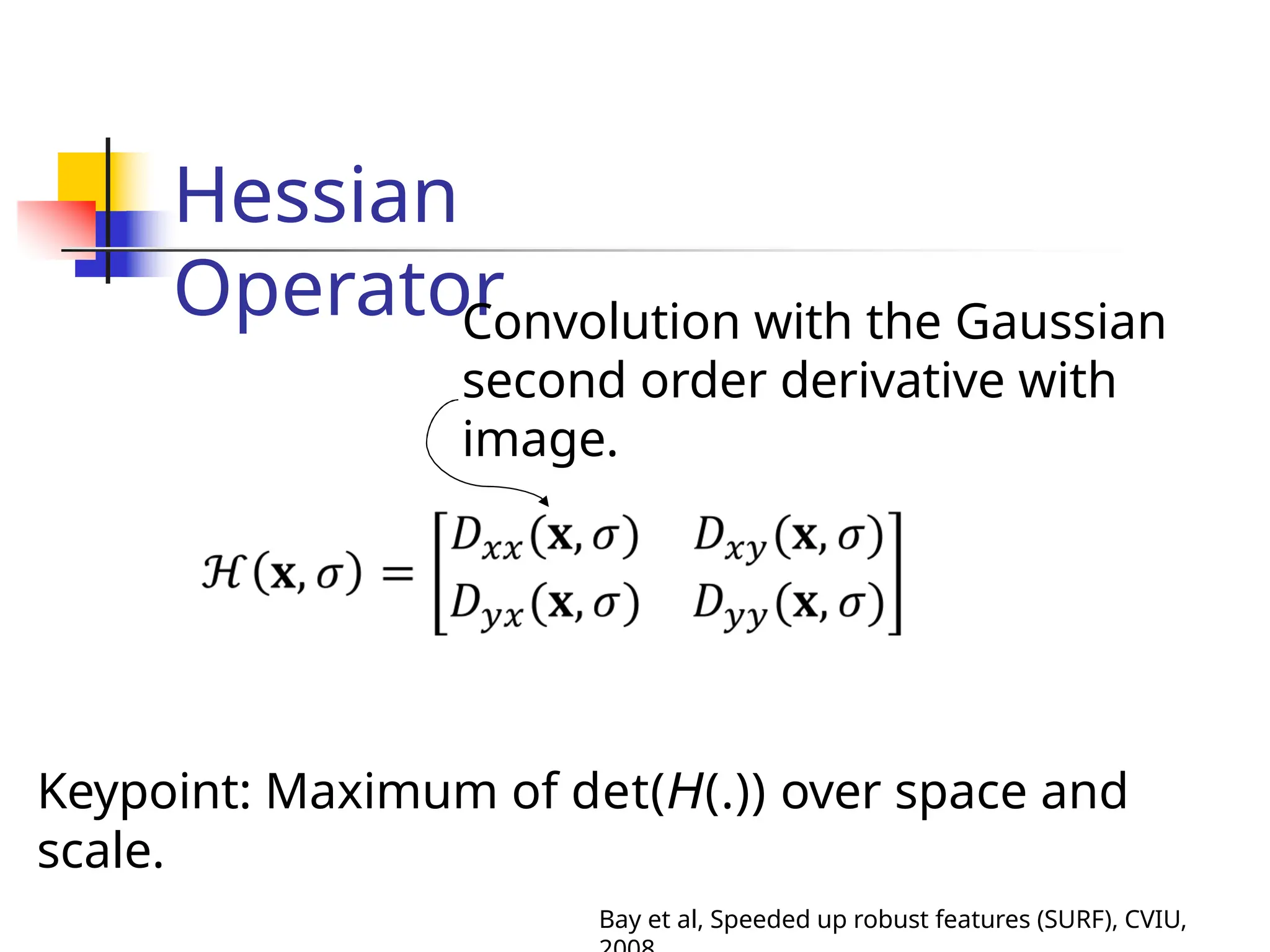

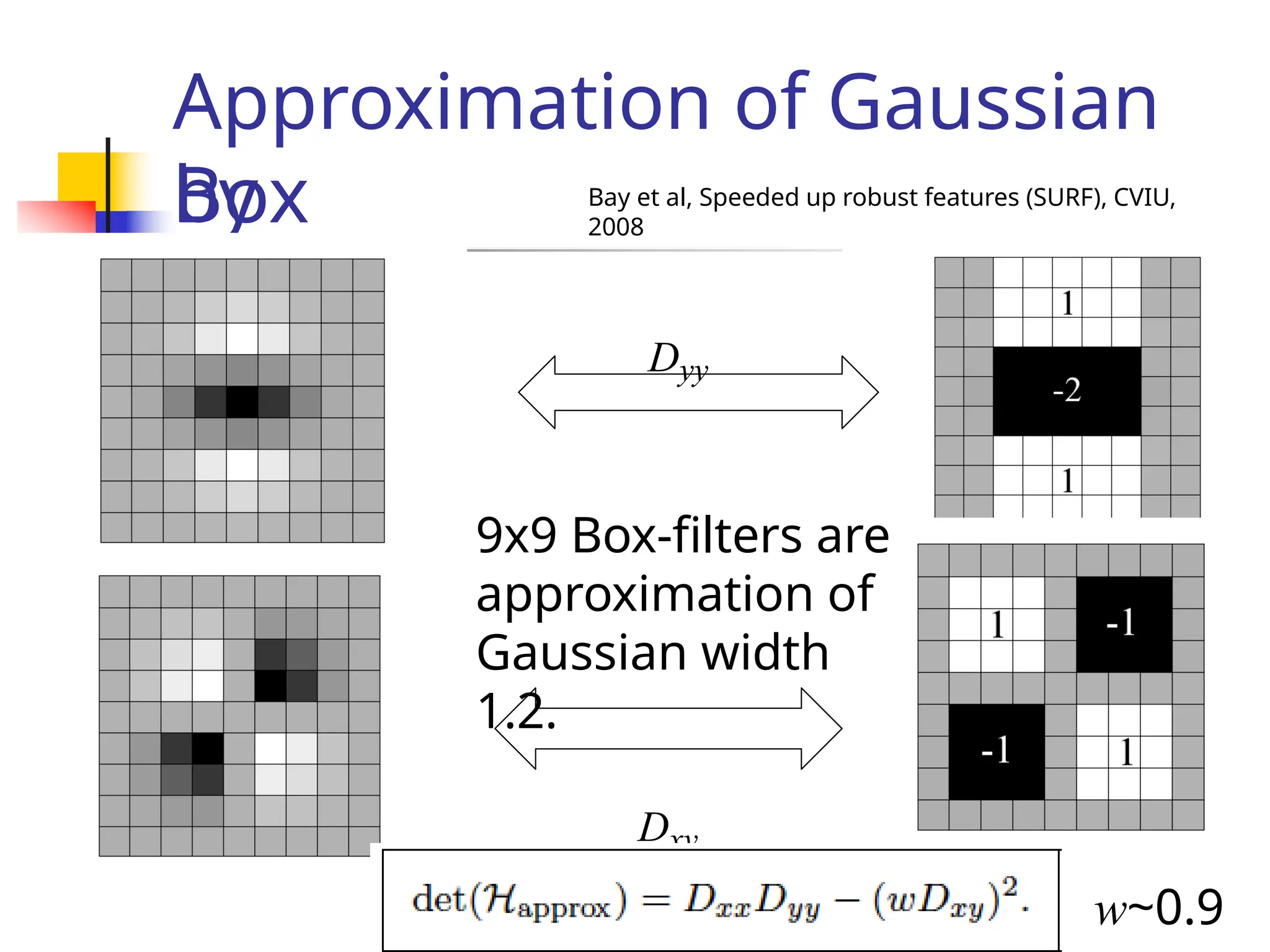

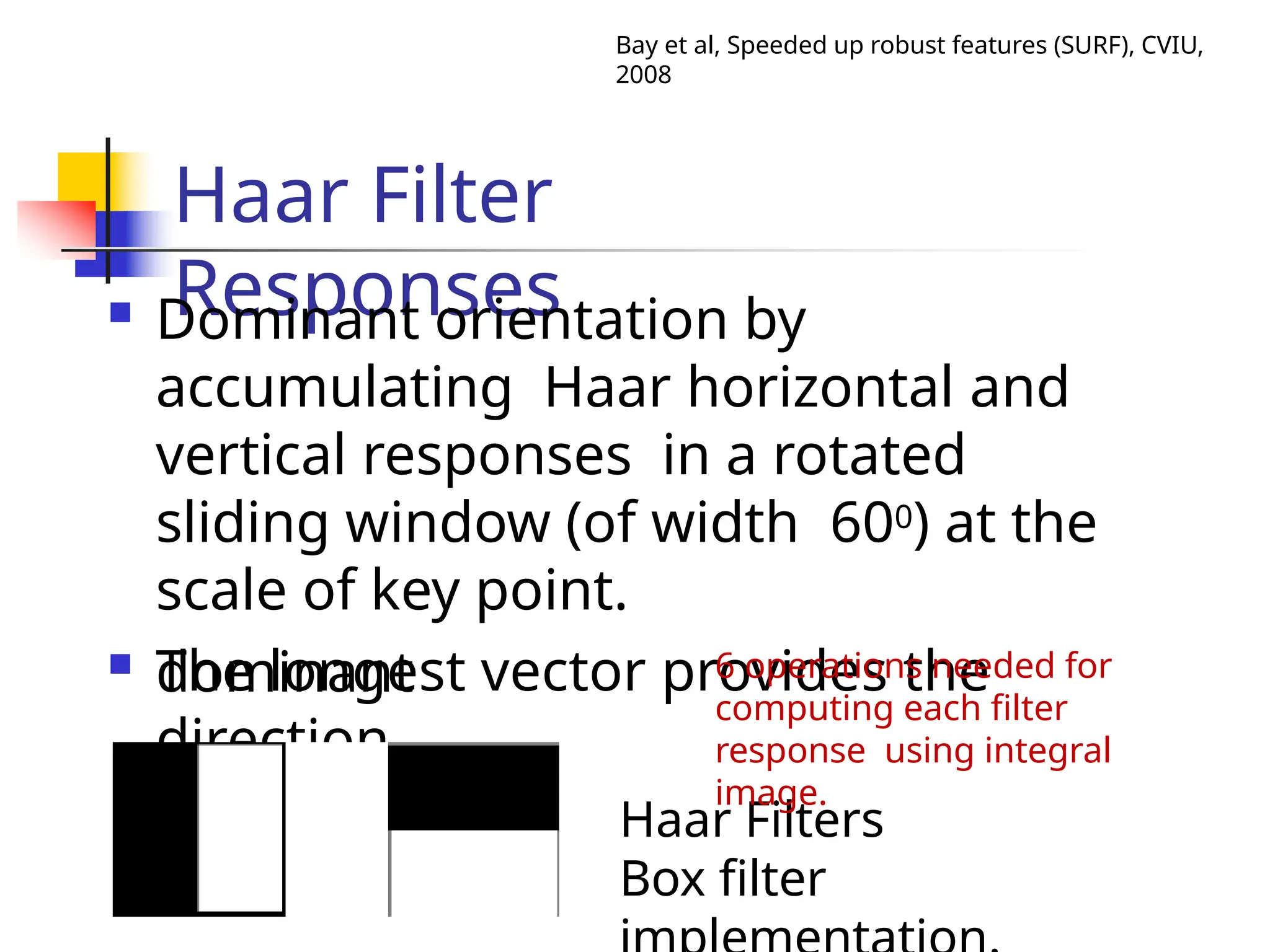

The document discusses feature detection and description in computer vision, focusing on various types of features such as corners, edges, and flat regions. It outlines methods for identifying and matching features between images, including the Harris operator for corner detection and scale-invariant features like SIFT and SURF. Additionally, it covers the importance of invariance to transformations such as translation, rotation, and scale for effective feature matching.

![Quick eigenvalue/eigenvector review

The eigenvectors of a matrix A are the vectors x that satisfy: $A x = \lambda x$

The scalar $\lambda$ is the eigenvalue corresponding to x.

The eigenvalues are found by solving: $\det(A - \lambda I) = 0$

For the eigenvectors, solve: $(A - \lambda I)\,x = 0$

Once you know $\lambda$, you find the eigenvector $[x\ y]^T$ by solving the equation above.

Slide adapted from Darya Frolova, Denis Simakov, Weizmann](https://image.slidesharecdn.com/unit4part12-241129140109-ad0c6a4d/75/feature-matching-and-model-description-pptx-8-2048.jpg)
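As a minimal sketch of the review above, assuming A is a symmetric 2x2 matrix (as the matrix H on the following slides is), the characteristic equation $\det(A - \lambda I) = 0$ becomes a quadratic with a closed-form solution. The function name `eig2x2_symmetric` is hypothetical and used only for illustration.

```python
import numpy as np

def eig2x2_symmetric(H):
    """Eigenvalues (smaller first) and unit eigenvectors (as columns)
    of a symmetric 2x2 matrix H = [[a, b], [b, d]]."""
    a, b, d = float(H[0, 0]), float(H[0, 1]), float(H[1, 1])
    # det(H - lam*I) = lam^2 - (a + d)*lam + (a*d - b^2) = 0
    mean = 0.5 * (a + d)
    root = np.hypot(0.5 * (a - d), b)
    lams = (mean - root, mean + root)
    vecs = []
    for lam in lams:
        if abs(b) > 1e-12:
            # First row of (H - lam*I)[x y]^T = 0 gives (a - lam)*x + b*y = 0
            v = np.array([b, lam - a])
        else:
            # Diagonal matrix: the eigenvectors are the coordinate axes.
            # (If a == d as well, every vector is an eigenvector.)
            v = np.array([1.0, 0.0]) if abs(lam - a) <= abs(lam - d) else np.array([0.0, 1.0])
        vecs.append(v / np.linalg.norm(v))
    return np.array(lams), np.column_stack(vecs)

H = np.array([[2.0, 1.0], [1.0, 2.0]])
lams, vecs = eig2x2_symmetric(H)
print(lams)   # eigenvalues of H, smaller first
print(vecs)   # matching unit eigenvectors, one per column
```

In practice `np.linalg.eigh` does the same job for symmetric matrices of any size; the closed form is shown only because it mirrors the steps on the slide.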

![Feature scoring function

We want E(u,v) to be large for small shifts in all directions:

• the minimum of E(u,v) should be large, over all unit vectors [u v].

• this minimum is given by the smaller eigenvalue ($\lambda_-$) of H.

Slide adapted from Darya Frolova, Denis Simakov, Weizmann](https://image.slidesharecdn.com/unit4part12-241129140109-ad0c6a4d/75/feature-matching-and-model-description-pptx-10-2048.jpg)
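The scoring rule above can be sketched as follows. This is an illustration under stated assumptions, not the slides' exact implementation: H is taken to be the unweighted sum of gradient outer products over the whole patch, gradients come from `np.gradient`, and the name `min_eigen_score` is hypothetical.

```python
import numpy as np

def min_eigen_score(patch):
    """Smaller eigenvalue of H = sum over the patch of
    [[Ix*Ix, Ix*Iy], [Ix*Iy, Iy*Iy]] (no Gaussian weighting)."""
    patch = np.asarray(patch, dtype=float)
    Iy, Ix = np.gradient(patch)          # derivatives along rows (y) and columns (x)
    H = np.array([[np.sum(Ix * Ix), np.sum(Ix * Iy)],
                  [np.sum(Ix * Iy), np.sum(Iy * Iy)]])
    return float(np.linalg.eigvalsh(H)[0])   # eigvalsh returns ascending order

flat = np.ones((7, 7))
edge = np.zeros((7, 7)); edge[:, 4:] = 1.0      # vertical step edge
corner = np.zeros((7, 7)); corner[4:, 4:] = 1.0  # one bright quadrant

for name, p in [("flat", flat), ("edge", edge), ("corner", corner)]:
    print(name, min_eigen_score(p))
```

Only the corner patch has gradients in two independent directions, so only it should get a clearly positive score; the flat and edge patches have at least one direction in which E(u,v) does not grow.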

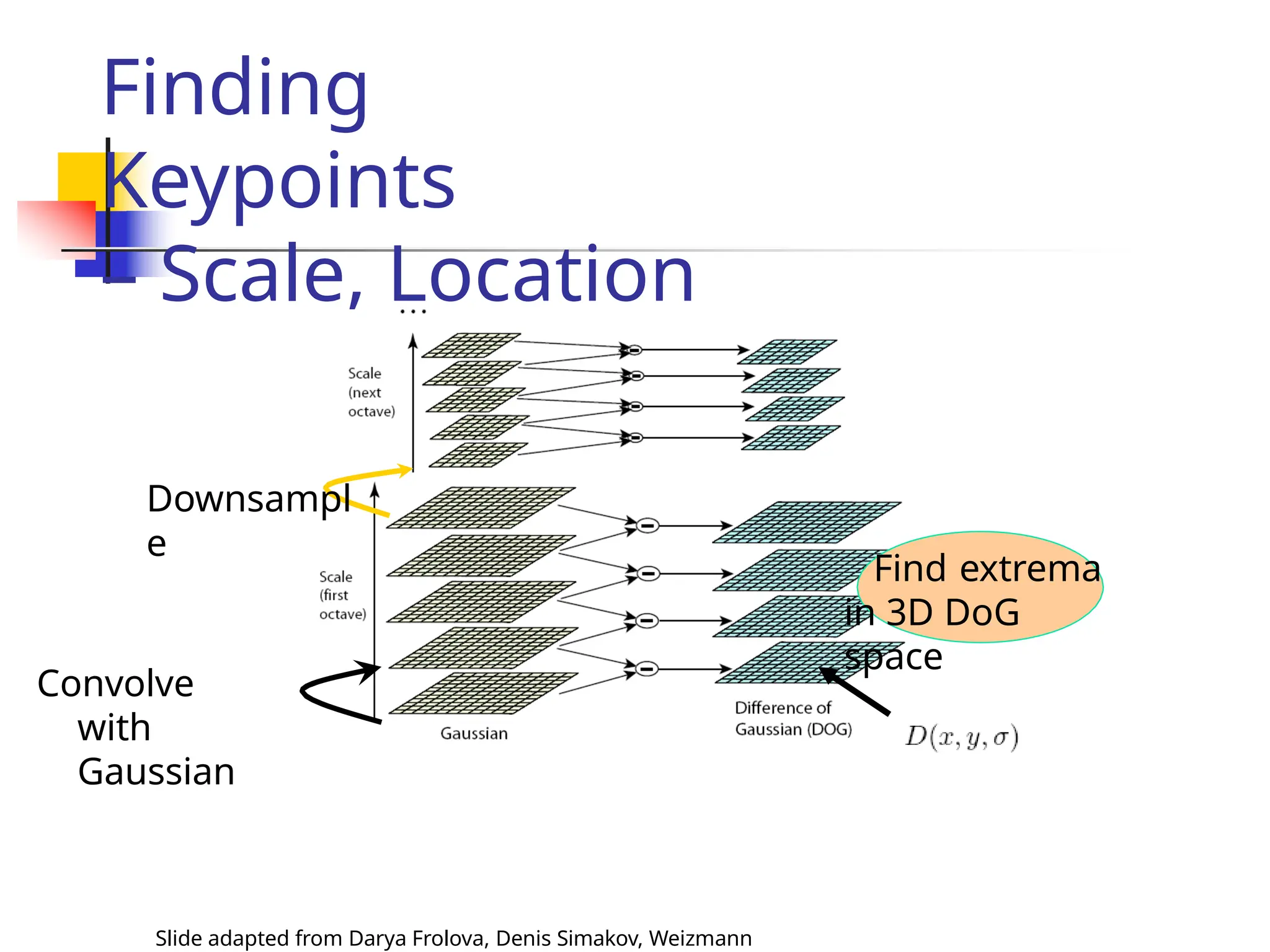

![Scale Invariant Detection: Summary

Given: two images of the same scene with a large scale difference between them.

Goal: find the same interest points independently in each image.

Solution: search for maxima of suitable functions in scale and in space (over the image).

Methods:

1. Harris-Laplacian [Mikolajczyk, Schmid]: maximize the Laplacian over scale and Harris’ measure of corner response over the image.

Slide adapted from Darya Frolova, Denis Simakov, Weizmann](https://image.slidesharecdn.com/unit4part12-241129140109-ad0c6a4d/75/feature-matching-and-model-description-pptx-29-2048.jpg)
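The "maximize over scale" half of the summary can be illustrated with a small sketch. It covers only scale selection at one known location (not the spatial Harris step), and it assumes the usual scale-normalized Laplacian of Gaussian, $\sigma^2 \nabla^2 G_\sigma$, as the "suitable function". The helper names `gaussian_blob`, `normalized_log_kernel` and `characteristic_scale` are hypothetical.

```python
import numpy as np

def gaussian_blob(size, s0):
    """Synthetic test image: a Gaussian blob of standard deviation s0 at the center."""
    ax = np.arange(size, dtype=float) - size // 2
    xx, yy = np.meshgrid(ax, ax)
    return np.exp(-(xx**2 + yy**2) / (2 * s0**2))

def normalized_log_kernel(sigma, radius):
    """Scale-normalized Laplacian of Gaussian, sigma^2 * LoG, sampled on a grid."""
    ax = np.arange(-radius, radius + 1, dtype=float)
    xx, yy = np.meshgrid(ax, ax)
    r2 = xx**2 + yy**2
    gauss = np.exp(-r2 / (2 * sigma**2)) / (2 * np.pi * sigma**2)
    log = (r2 - 2 * sigma**2) / sigma**4 * gauss
    return sigma**2 * log

def characteristic_scale(image, row, col, sigmas, radius):
    """Return the candidate sigma with the largest |normalized LoG response|
    at pixel (row, col), together with all responses."""
    patch = image[row - radius: row + radius + 1, col - radius: col + radius + 1]
    responses = [abs(np.sum(patch * normalized_log_kernel(s, radius))) for s in sigmas]
    return sigmas[int(np.argmax(responses))], responses

sigmas = [2.0, 3.0, 4.0, 5.0, 6.0, 8.0]
small = gaussian_blob(65, 4.0)
large = gaussian_blob(65, 6.0)
print(characteristic_scale(small, 32, 32, sigmas, 32)[0])
print(characteristic_scale(large, 32, 32, sigmas, 32)[0])
```

For a Gaussian blob the normalized response peaks when sigma equals the blob's own standard deviation, so the two images of "the same" blob at different sizes yield different characteristic scales, which is what lets each image pick its interest point scale independently.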

![Matching

Representation of a key-point by a feature vector, e.g. [f0 f1 … fn]^T.

Use distance functions / similarity measures.

L1 norm

L2 norm

L](https://image.slidesharecdn.com/unit4part12-241129140109-ad0c6a4d/75/feature-matching-and-model-description-pptx-49-2048.jpg)
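A minimal sketch of matching key-points by feature-vector distance, using the L1 and L2 norms named above. The brute-force nearest-neighbour strategy and the function names `pairwise_distances` and `match_nearest` are assumptions made for illustration; the slide does not prescribe a matching strategy.

```python
import numpy as np

def pairwise_distances(A, B, norm="l2"):
    """Distance between every row of A (n x d) and every row of B (m x d)."""
    diff = A[:, None, :] - B[None, :, :]      # shape (n, m, d)
    if norm == "l1":
        return np.abs(diff).sum(axis=-1)
    if norm == "l2":
        return np.sqrt((diff ** 2).sum(axis=-1))
    raise ValueError("norm must be 'l1' or 'l2'")

def match_nearest(A, B, norm="l2"):
    """For each descriptor in A, the index of its closest descriptor in B."""
    return pairwise_distances(A, B, norm).argmin(axis=1)

desc_a = np.array([[0.0, 0.0], [3.0, 4.0]])
desc_b = np.array([[3.0, 3.0], [0.0, 1.0]])
print(match_nearest(desc_a, desc_b, "l2"))
print(match_nearest(desc_a, desc_b, "l1"))
```

The two norms agree on this toy input, but they can rank candidates differently in general, which is why the choice of distance function is part of the descriptor design.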

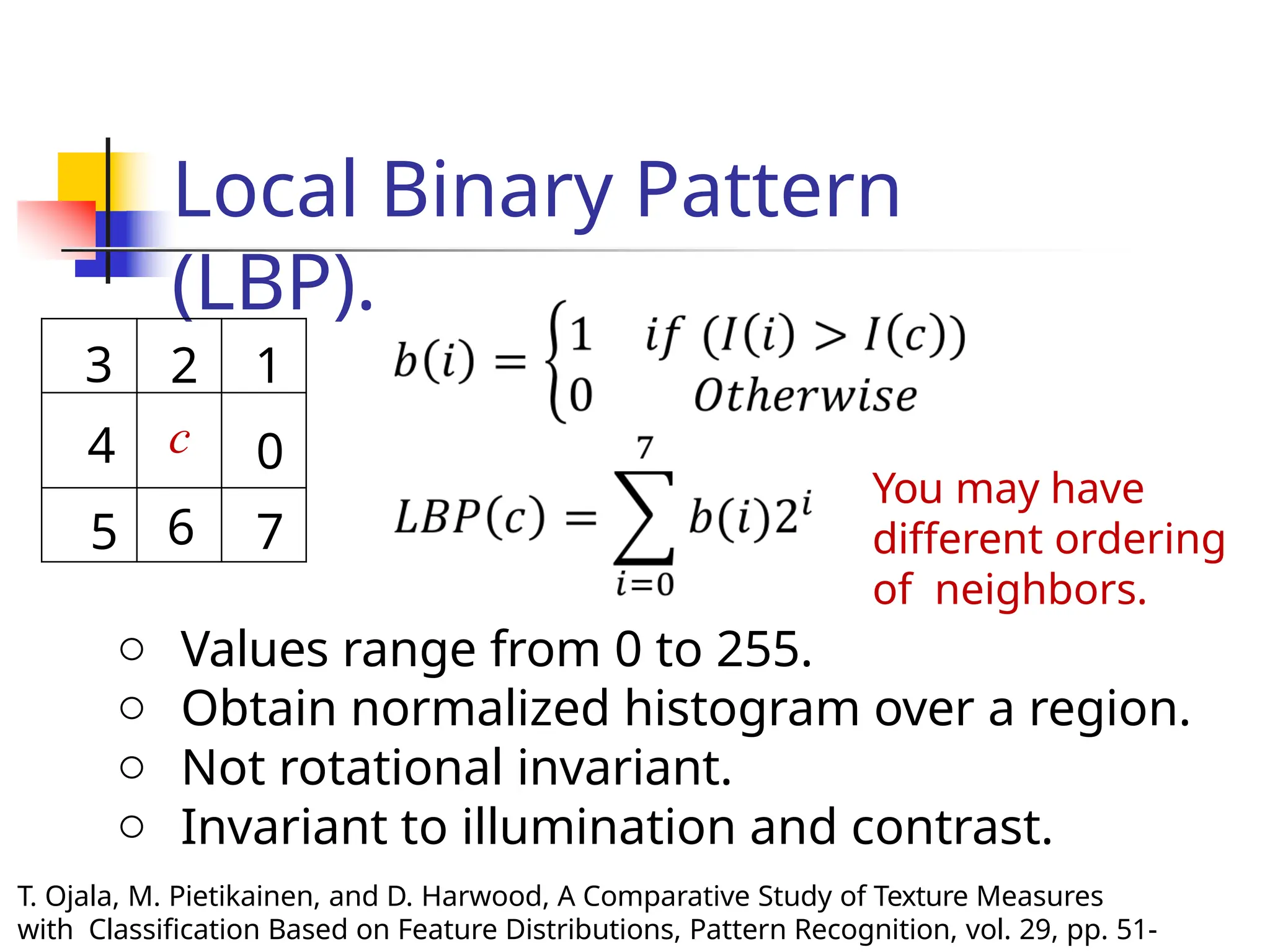

![Laws’ texture energy features

A set of nine 5x5 masks used to compute texture energy.

L5 (Level): [1 4 6 4 1]

E5 (Edge): [-1 -2 0 2 1]

S5 (Spot): [-1 0 2 0 -1]

R5 (Ripple): [1 -4 6 -4 1]

A mask: outer product of any pair of vectors, e.g. E5L5 = E5 · L5^T.

Computation with a mask: convolution.

K. Laws, “Rapid Texture Identification”, in SPIE Vol. 238: Image Processing for](https://image.slidesharecdn.com/unit4part12-241129140109-ad0c6a4d/75/feature-matching-and-model-description-pptx-60-2048.jpg)
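The outer-product construction above can be written directly with NumPy. The vector values are those listed on the slide; the helper name `laws_mask` is hypothetical.

```python
import numpy as np

LAWS_VECTORS = {
    "L5": np.array([1, 4, 6, 4, 1]),     # Level
    "E5": np.array([-1, -2, 0, 2, 1]),   # Edge
    "S5": np.array([-1, 0, 2, 0, -1]),   # Spot
    "R5": np.array([1, -4, 6, -4, 1]),   # Ripple
}

def laws_mask(first, second):
    """5x5 mask: column vector `first` times row vector `second`,
    e.g. laws_mask('E5', 'L5') is E5 . L5^T."""
    return np.outer(LAWS_VECTORS[first], LAWS_VECTORS[second])

E5L5 = laws_mask("E5", "L5")
print(E5L5)
```

Because E5, S5 and R5 each sum to zero, every mask that uses at least one of them also sums to zero, so it does not respond to a constant-intensity region.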

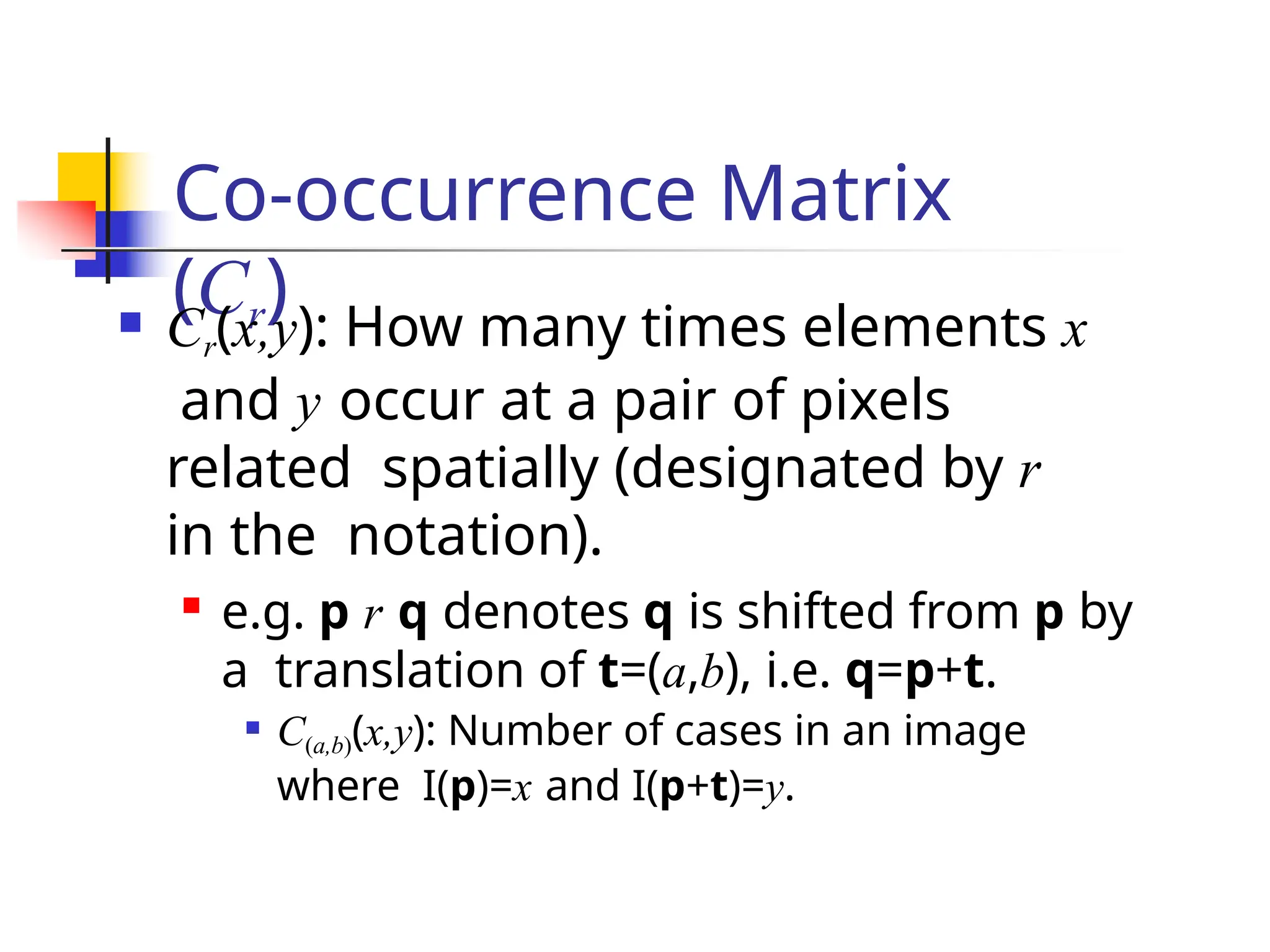

![Laws’ texture energy features

A set of nine 5x5 masks used to compute texture energy.

L5 (Level): [1 4 6 4 1]

E5 (Edge): [-1 -2 0 2 1]

S5 (Spot): [-1 0 2 0 -1]

R5 (Ripple): [1 -4 6 -4 1]

16 such masks are possible. Combine a few pairs to make 9 masks; for each pair, take the average of the responses of the two masks.

L5E5 and E5L5

L5S5 and S5L5

L5R5 and R5L5

E5S5 and S5E5

E5R5 and R5E5

S5R5 and R5S5

E5E5

S5S5

R5R5

K. Laws, “Rapid Texture Identification”, in SPIE Vol. 238: Image Processing for](https://image.slidesharecdn.com/unit4part12-241129140109-ad0c6a4d/75/feature-matching-and-model-description-pptx-61-2048.jpg)
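A rough sketch of how the 16 mask responses could be reduced to the 9 features listed above. Several details here are simplifying assumptions rather than part of the slide: energy is taken as the mean absolute response over the whole image (giving one 9-value vector per image instead of per-pixel energy maps), no illumination pre-processing is applied, and the names `convolve_valid` and `laws_energy_features` are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

VECTORS = {
    "L5": np.array([1, 4, 6, 4, 1], dtype=float),
    "E5": np.array([-1, -2, 0, 2, 1], dtype=float),
    "S5": np.array([-1, 0, 2, 0, -1], dtype=float),
    "R5": np.array([1, -4, 6, -4, 1], dtype=float),
}

def convolve_valid(image, mask):
    """2D convolution restricted to positions where the mask fits entirely."""
    windows = sliding_window_view(image, mask.shape)       # (H-4, W-4, 5, 5)
    return np.einsum("ijkl,kl->ij", windows, mask[::-1, ::-1])

def laws_energy_features(image):
    """Nine texture energy values: six averaged symmetric pairs plus E5E5, S5S5, R5R5."""
    image = np.asarray(image, dtype=float)
    names = list(VECTORS)
    # Energy of each of the 16 masks (assumption: mean absolute response).
    energy = {a + b: np.abs(convolve_valid(image, np.outer(VECTORS[a], VECTORS[b]))).mean()
              for a in names for b in names}
    features = {}
    for i, a in enumerate(names):
        for b in names[i:]:
            if a == b:
                if a != "L5":                     # L5L5 is not among the nine
                    features[a + b] = energy[a + b]
            else:                                 # average the two symmetric masks
                features[a + b + "/" + b + a] = 0.5 * (energy[a + b] + energy[b + a])
    return features

rng = np.random.default_rng(0)
texture = rng.random((32, 32))
for name, value in laws_energy_features(texture).items():
    print(name, value)
```

Averaging each symmetric pair (for example L5E5 with E5L5) makes the feature less sensitive to whether a structure is oriented horizontally or vertically.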

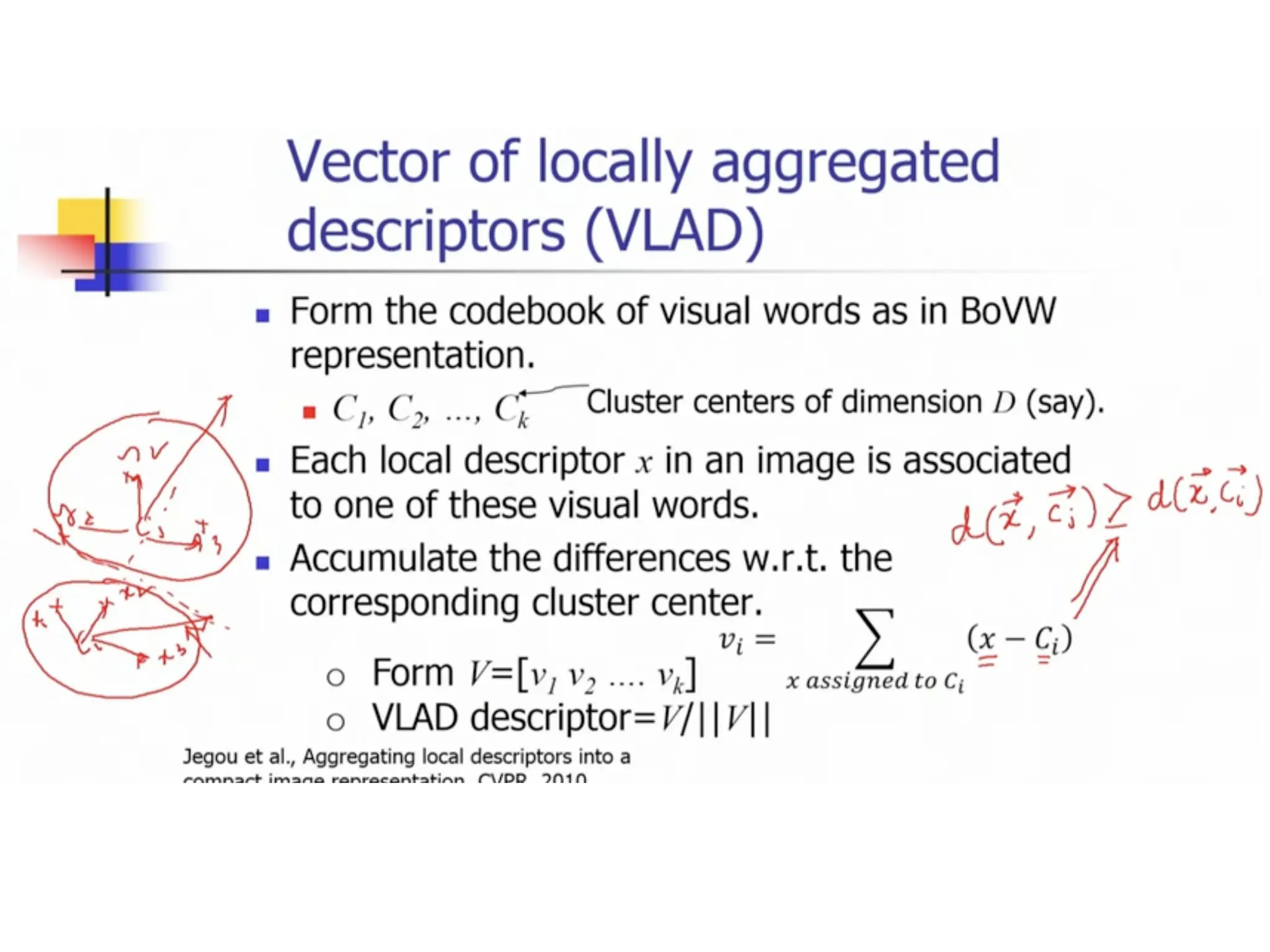

![Vector of locally aggregated descriptors (VLAD)

Form the codebook of visual words as in the BoVW representation: C1, C2, …, Ck, cluster centers of dimension D (say).

Each local descriptor x in an image is associated with one of these visual words (its nearest cluster center).

Accumulate the differences w.r.t. the corresponding cluster center: $v_i = \sum_{x:\,NN(x) = C_i} (x - C_i)$

• Form V = [v1 v2 … vk]

• VLAD descriptor = V / ||V||

Dimension: k·D

Jegou et al., Aggregating local descriptors into a compact image representation, CVPR,](https://image.slidesharecdn.com/unit4part12-241129140109-ad0c6a4d/75/feature-matching-and-model-description-pptx-66-2048.jpg)
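The VLAD steps above can be sketched in a few lines, assuming the codebook (cluster centers) has already been computed, for example by k-means as in BoVW. Nearest-center assignment by Euclidean distance and the function name `vlad` are assumptions made for this illustration.

```python
import numpy as np

def vlad(descriptors, centers):
    """VLAD vector of length k*D from n local descriptors (n x D)
    and k cluster centers (k x D)."""
    descriptors = np.asarray(descriptors, dtype=float)
    centers = np.asarray(centers, dtype=float)
    k, D = centers.shape
    # Associate each descriptor with its nearest visual word.
    d2 = ((descriptors[:, None, :] - centers[None, :, :]) ** 2).sum(axis=-1)
    nearest = d2.argmin(axis=1)
    # Accumulate the differences x - C_i per cluster.
    V = np.zeros((k, D))
    for i in range(k):
        members = descriptors[nearest == i]
        if len(members):
            V[i] = (members - centers[i]).sum(axis=0)
    # Concatenate [v1 v2 ... vk] and L2-normalize.
    V = V.ravel()
    norm = np.linalg.norm(V)
    return V / norm if norm > 0 else V

centers = np.array([[0.0, 0.0], [10.0, 10.0]])
descs = np.array([[1.0, 0.0], [0.0, 1.0], [11.0, 10.0]])
print(vlad(descs, centers))   # length k*D = 4
```

Unlike a BoVW histogram, which only counts how many descriptors fall into each cluster, the accumulated residuals also record where the descriptors lie relative to each center.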