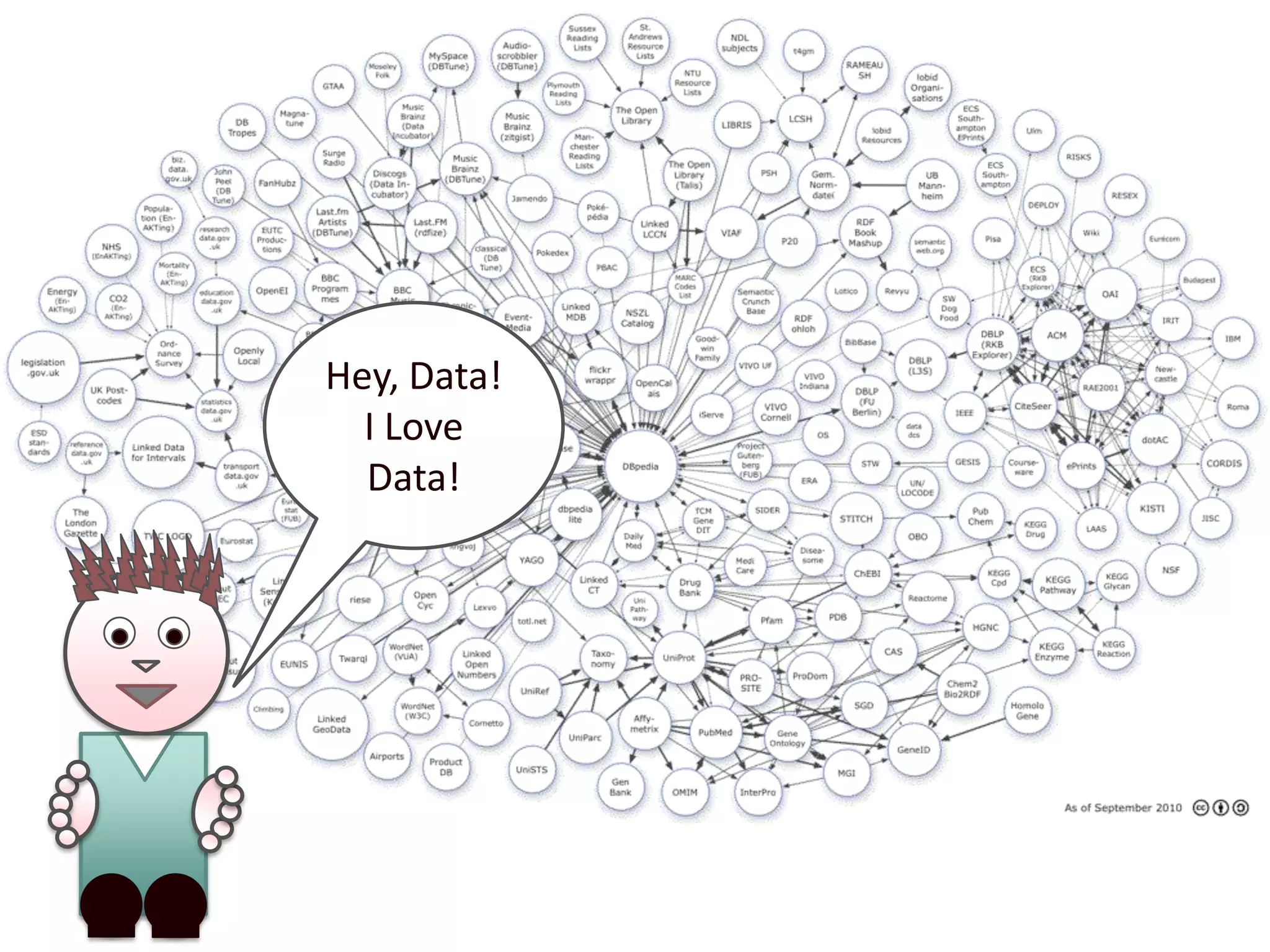

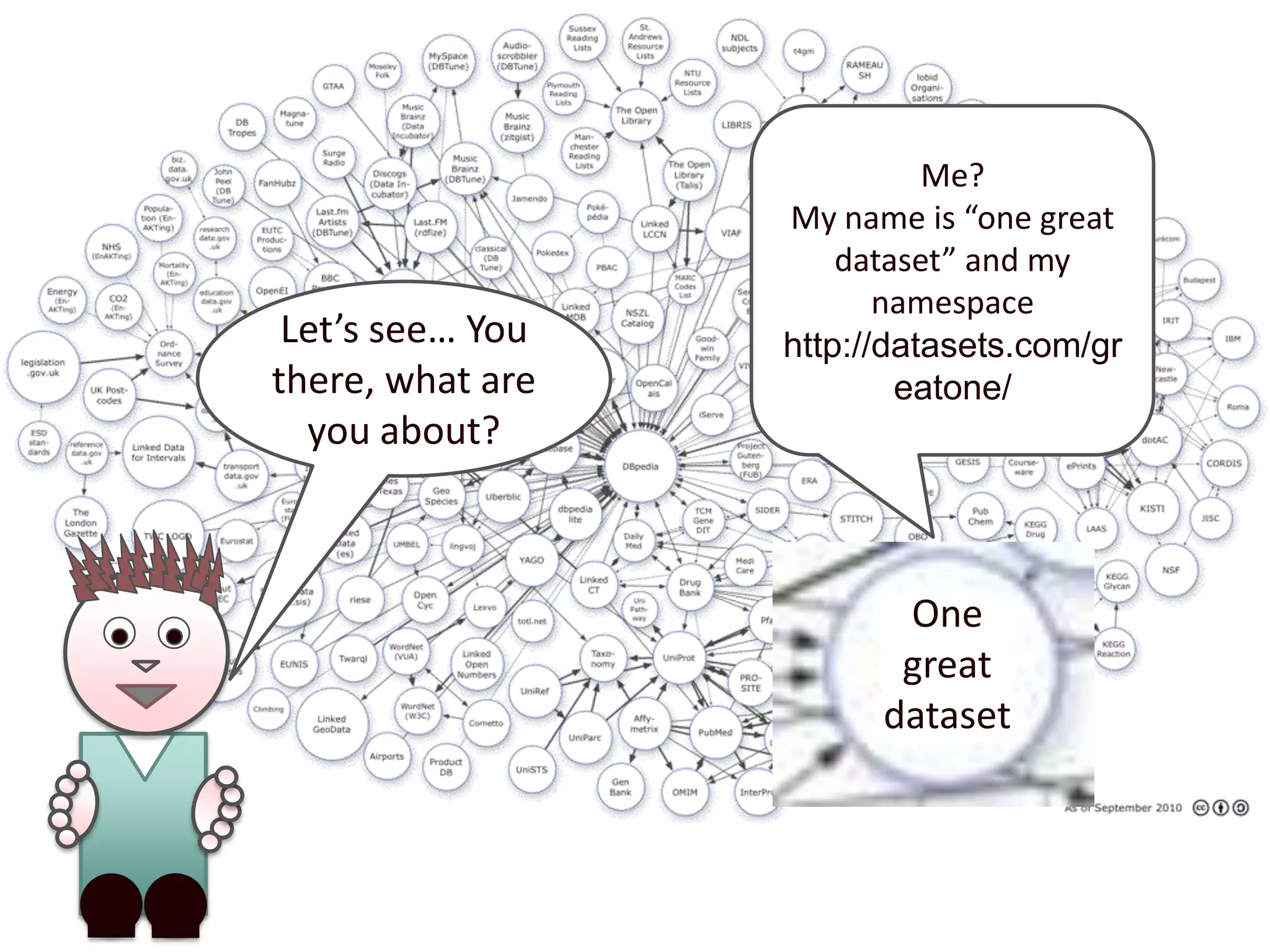

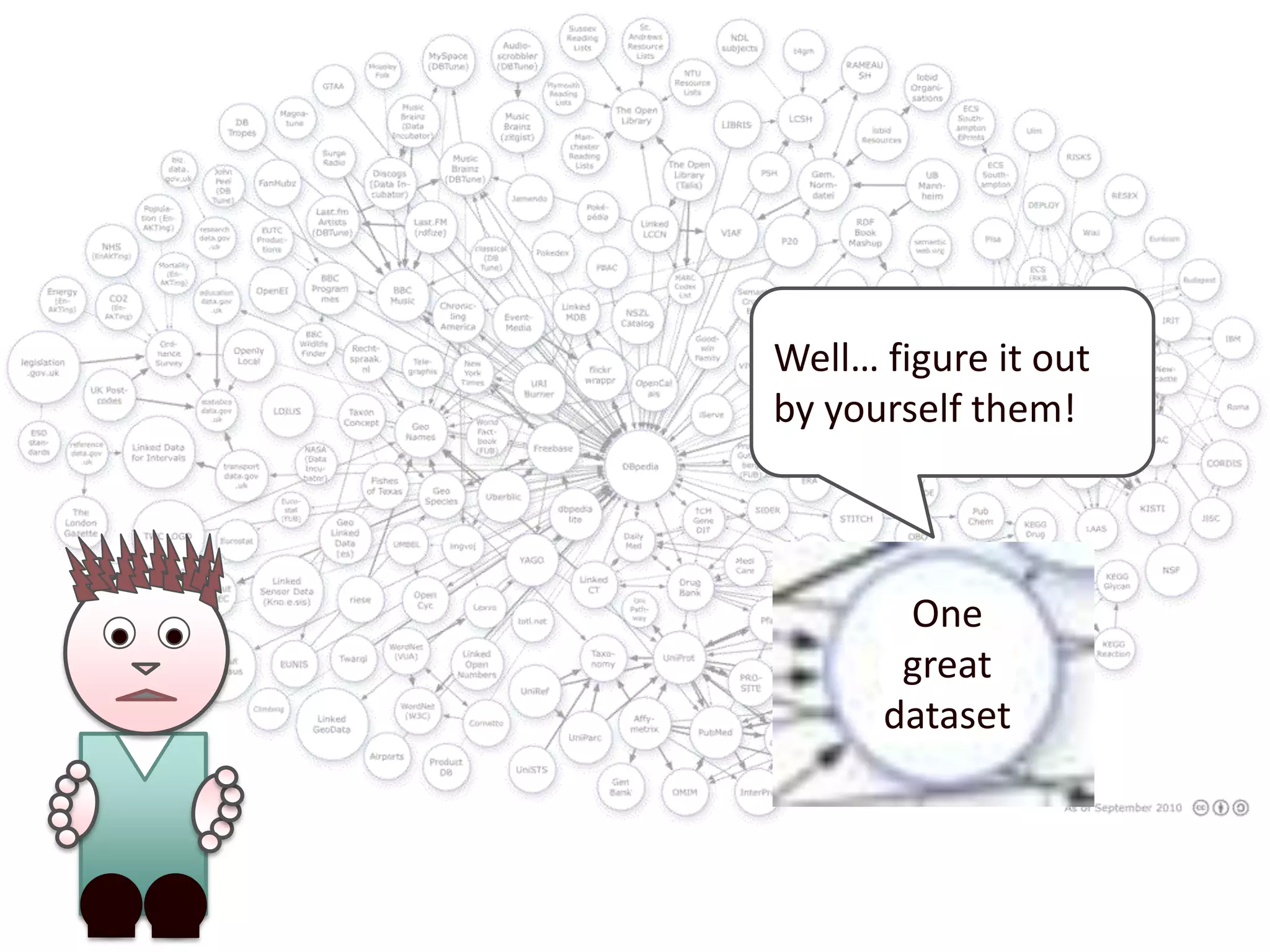

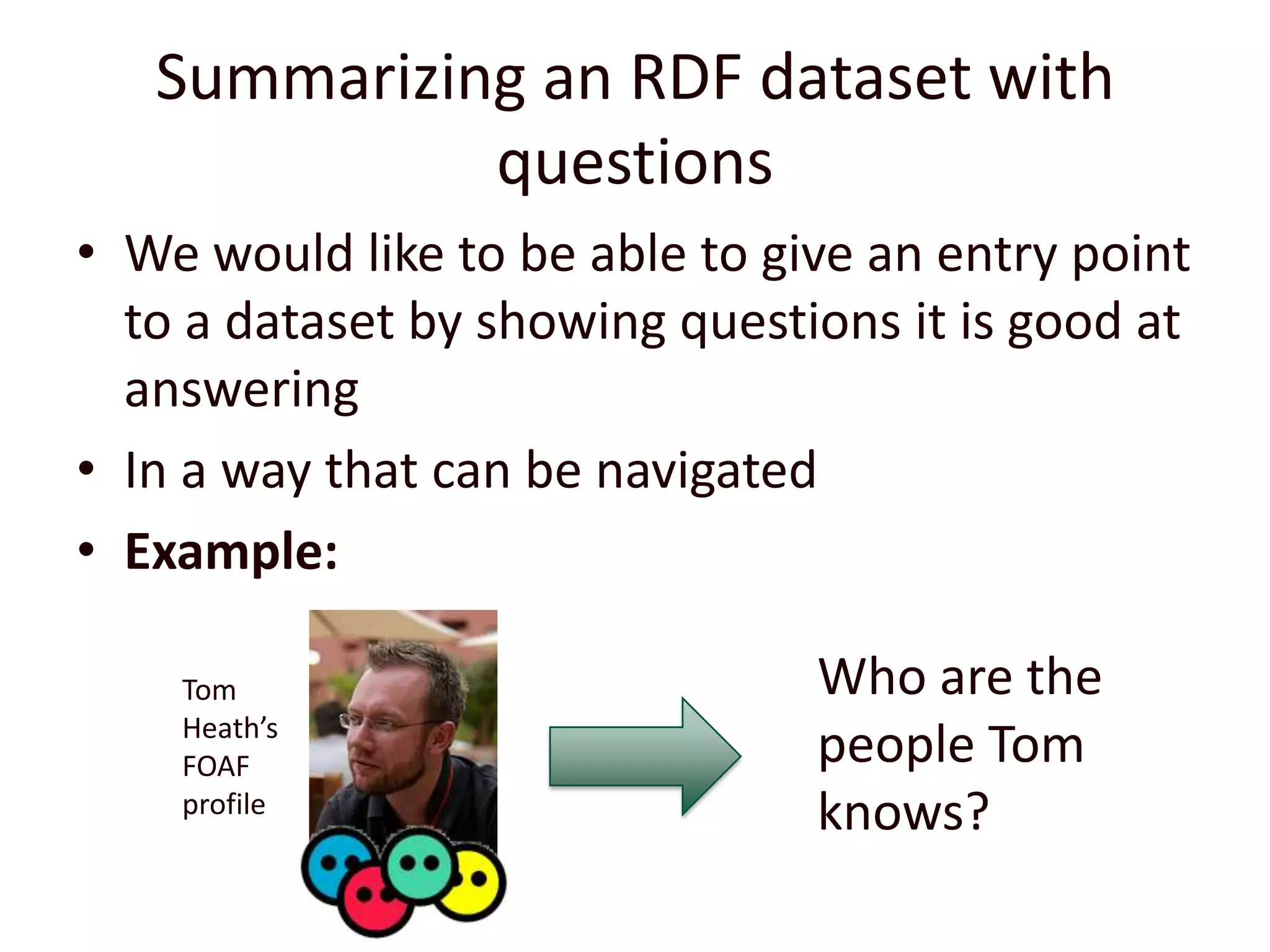

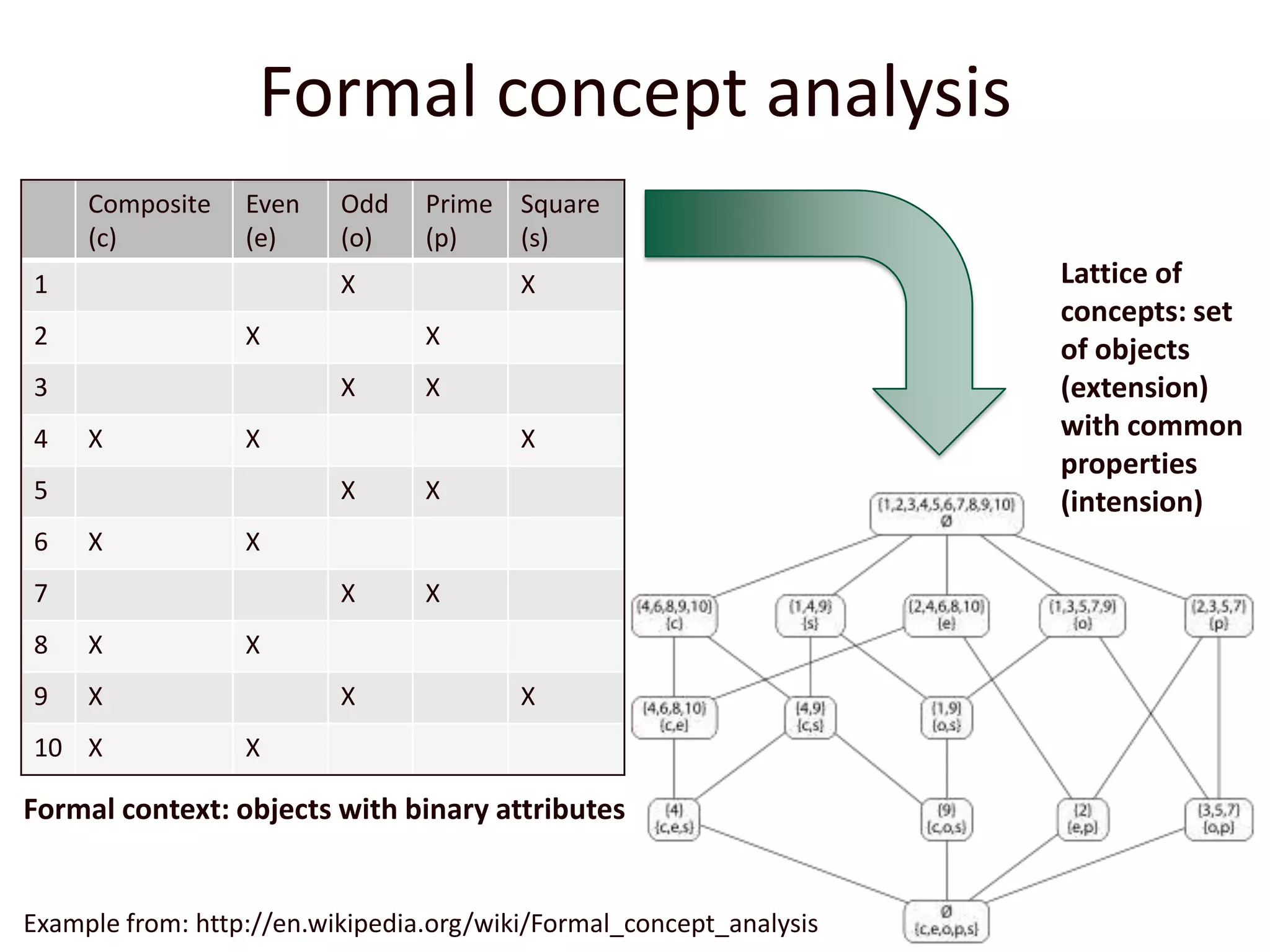

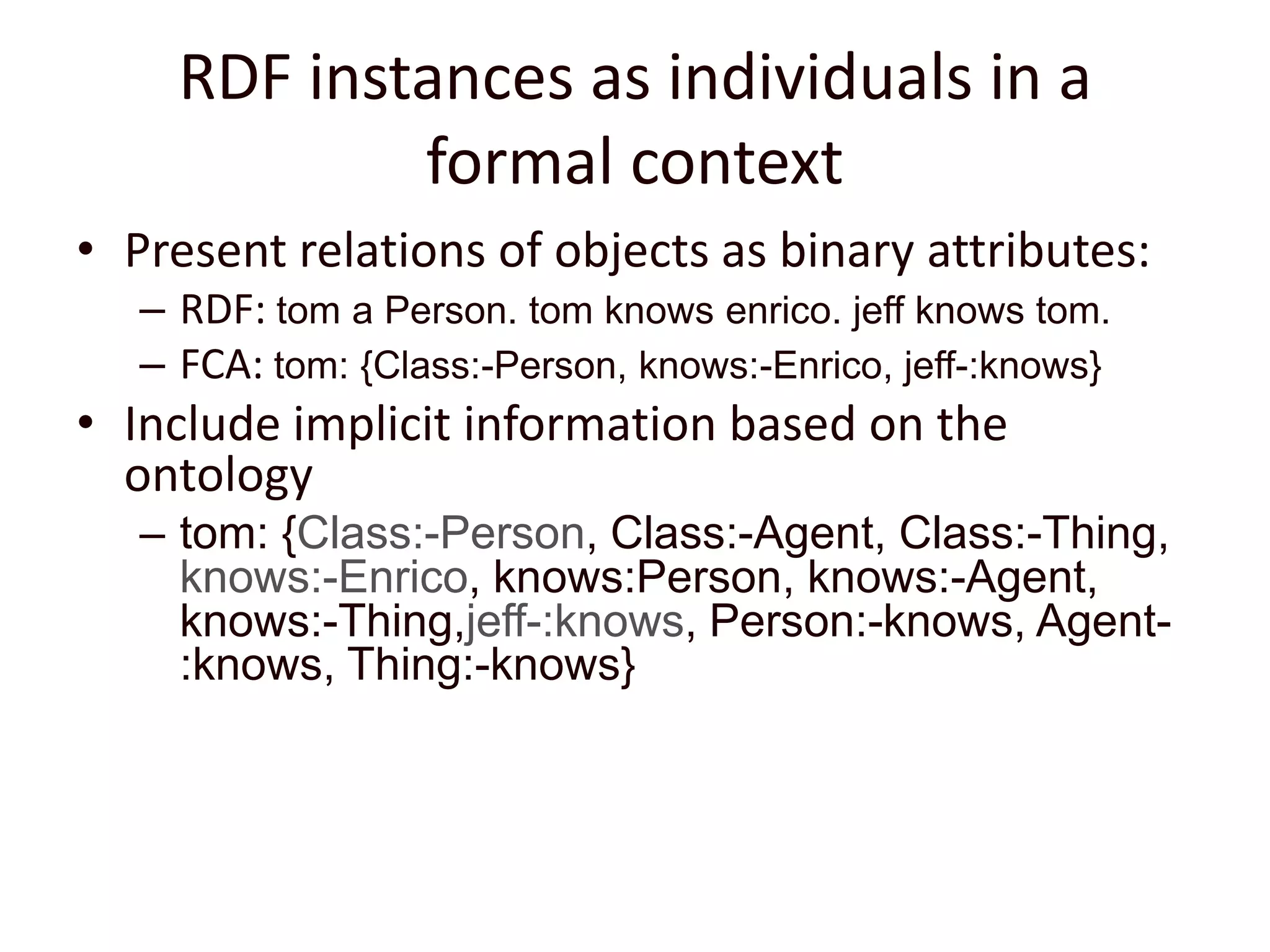

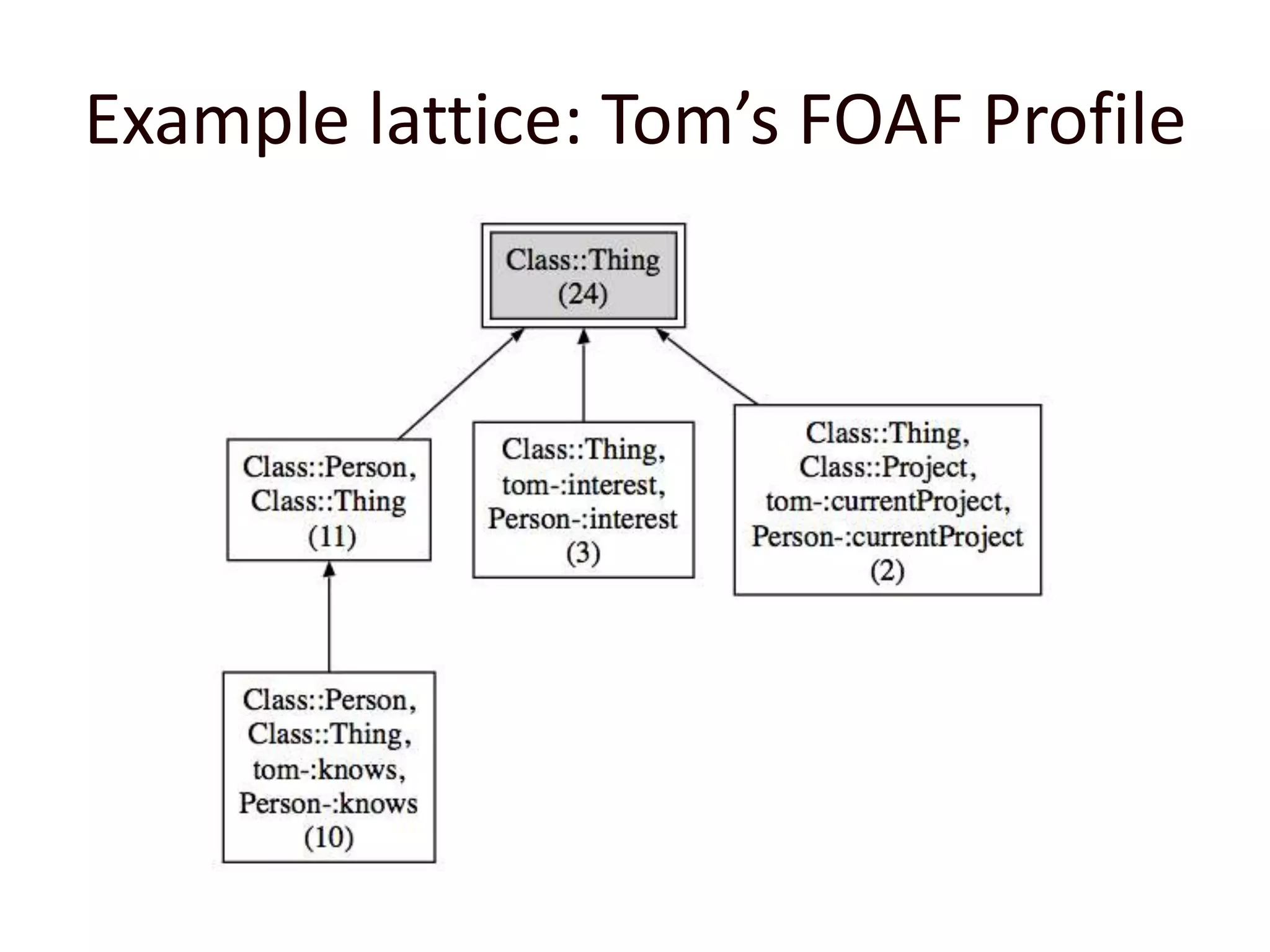

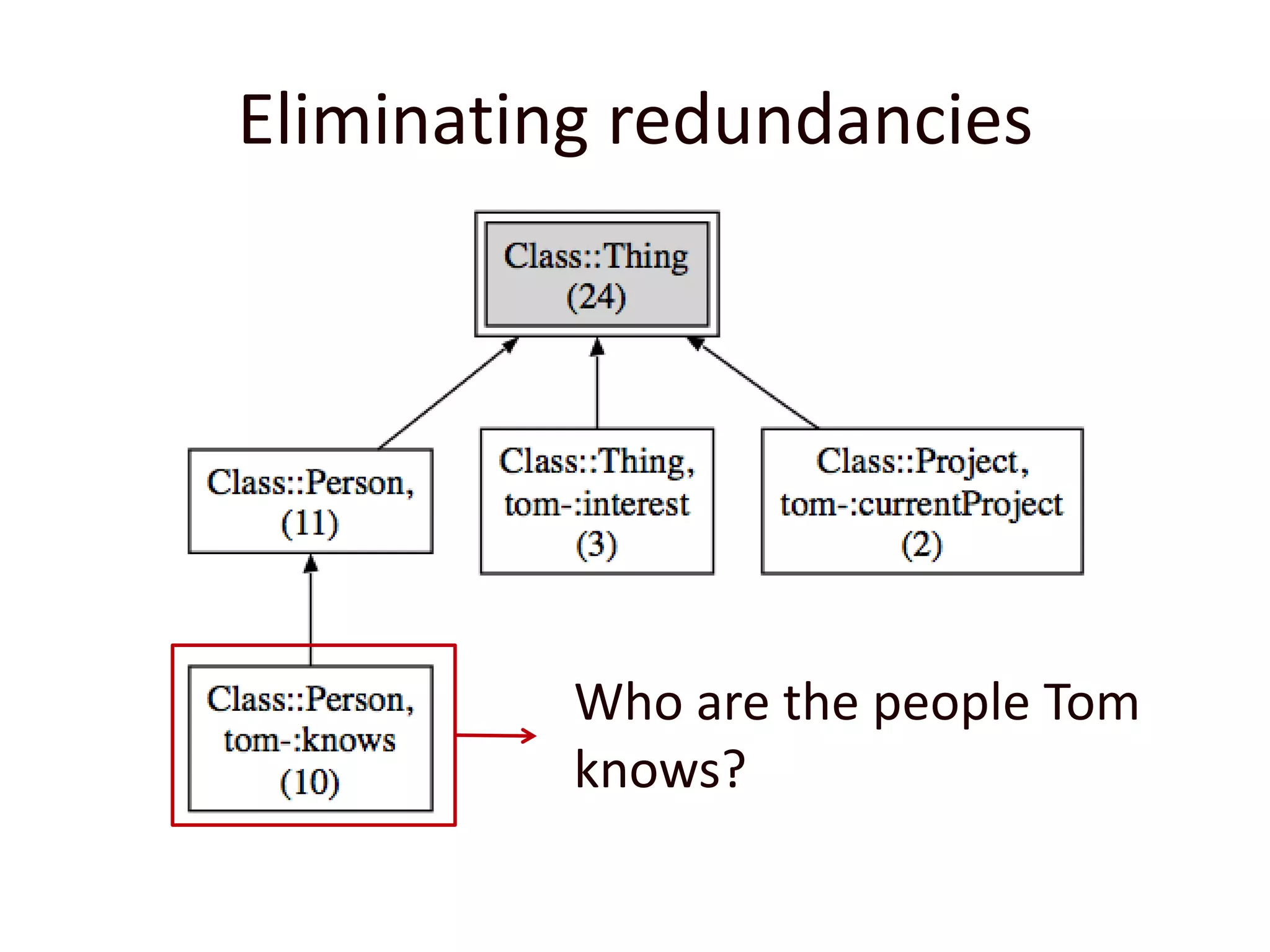

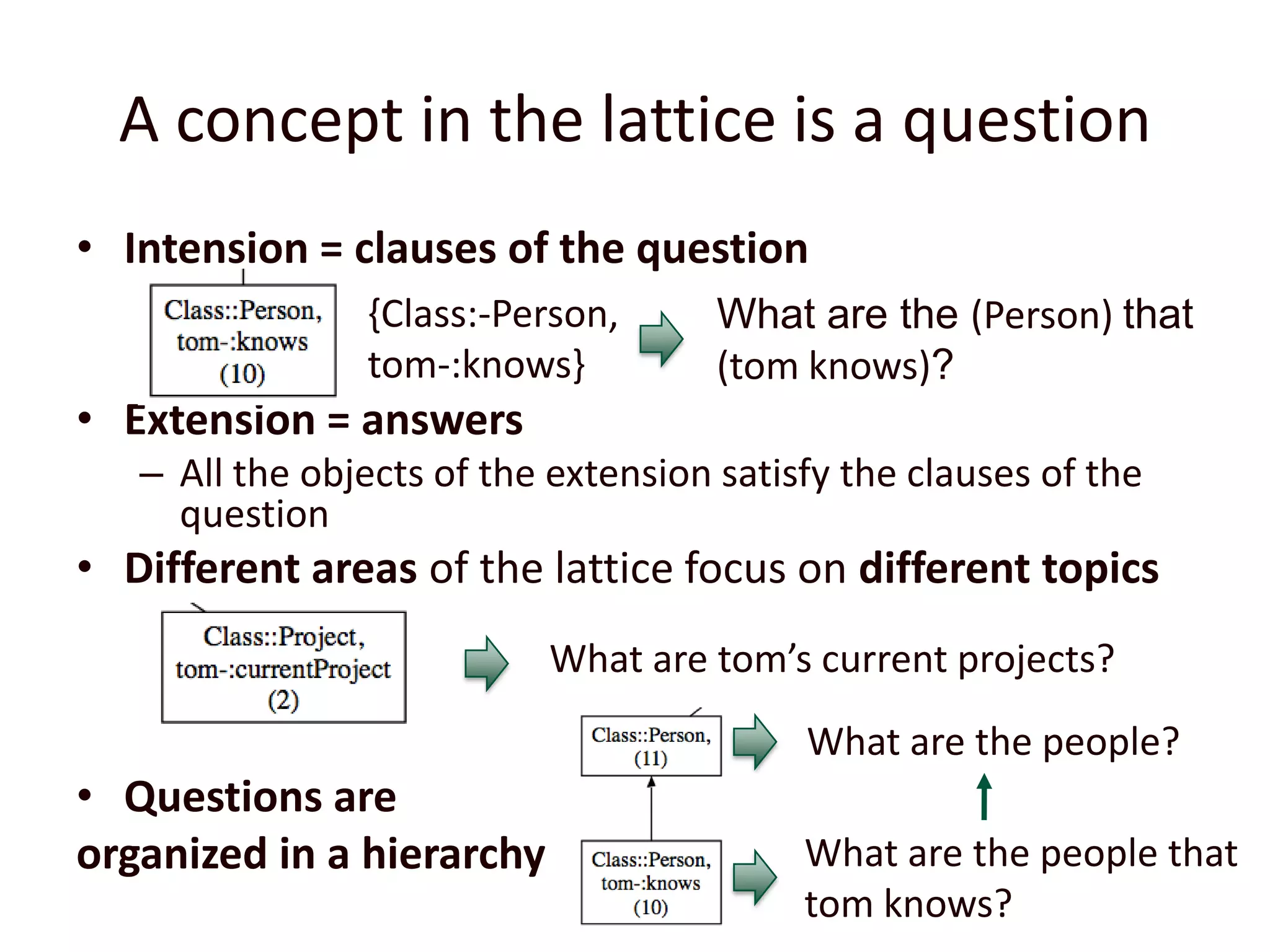

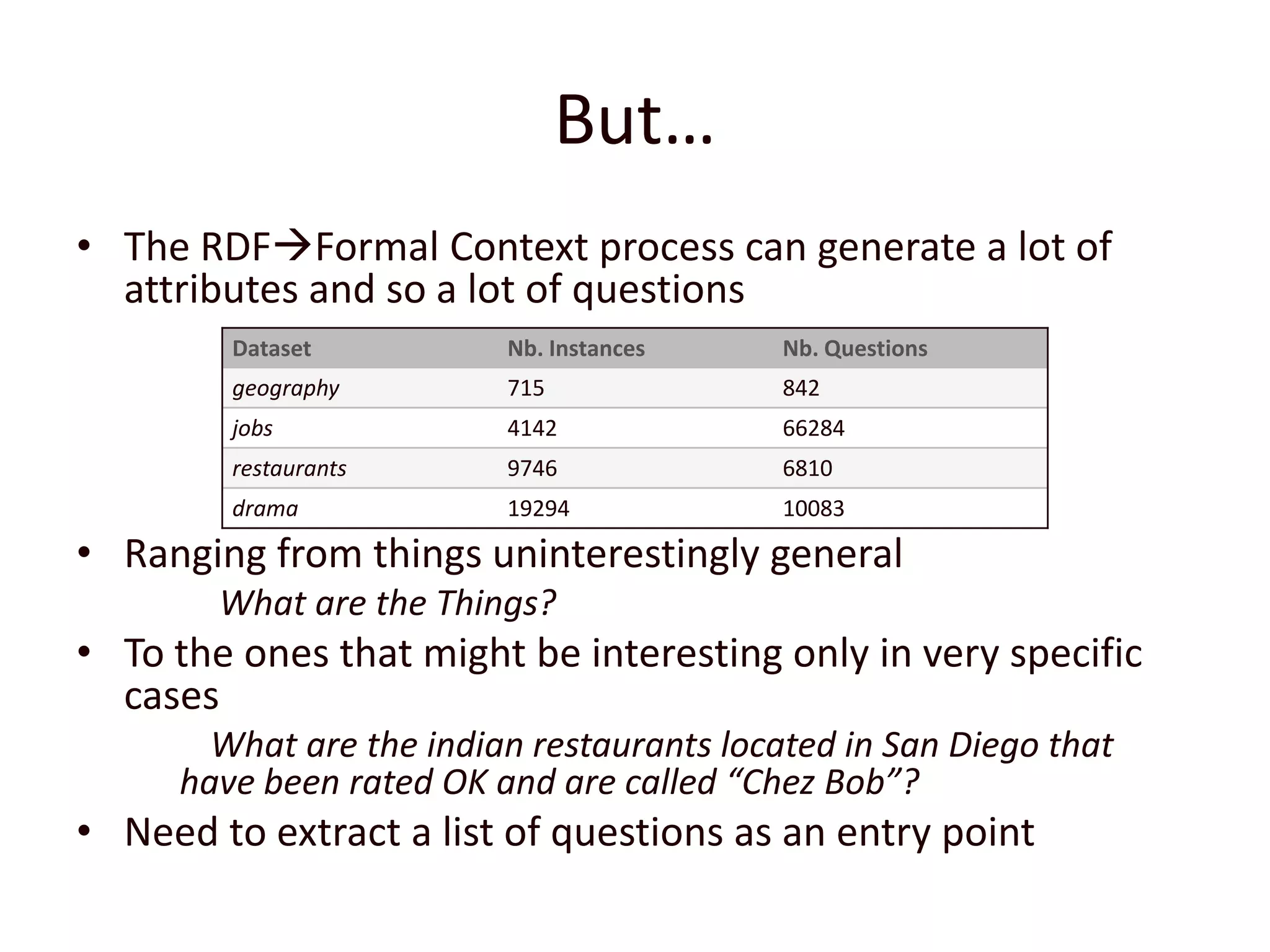

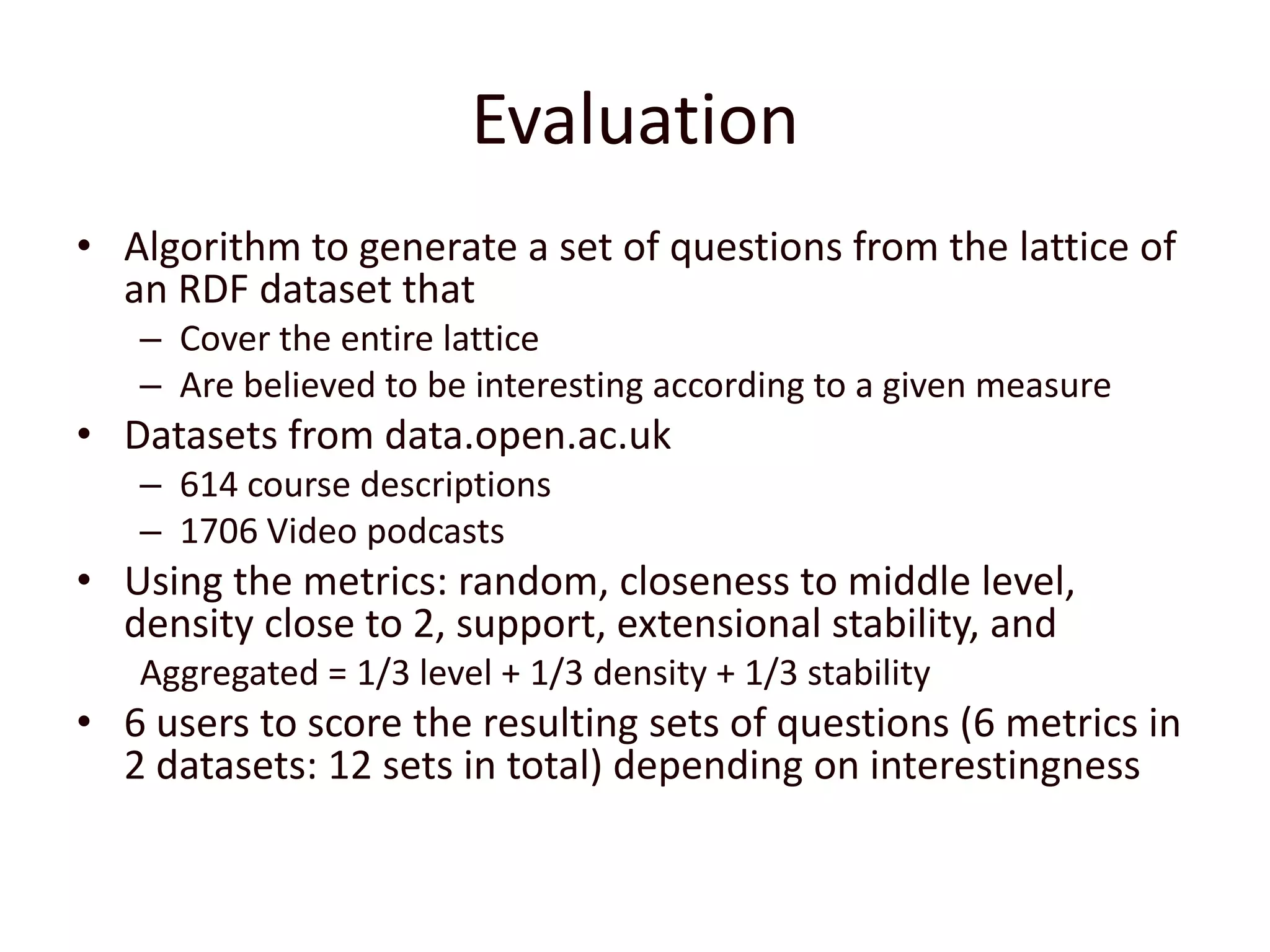

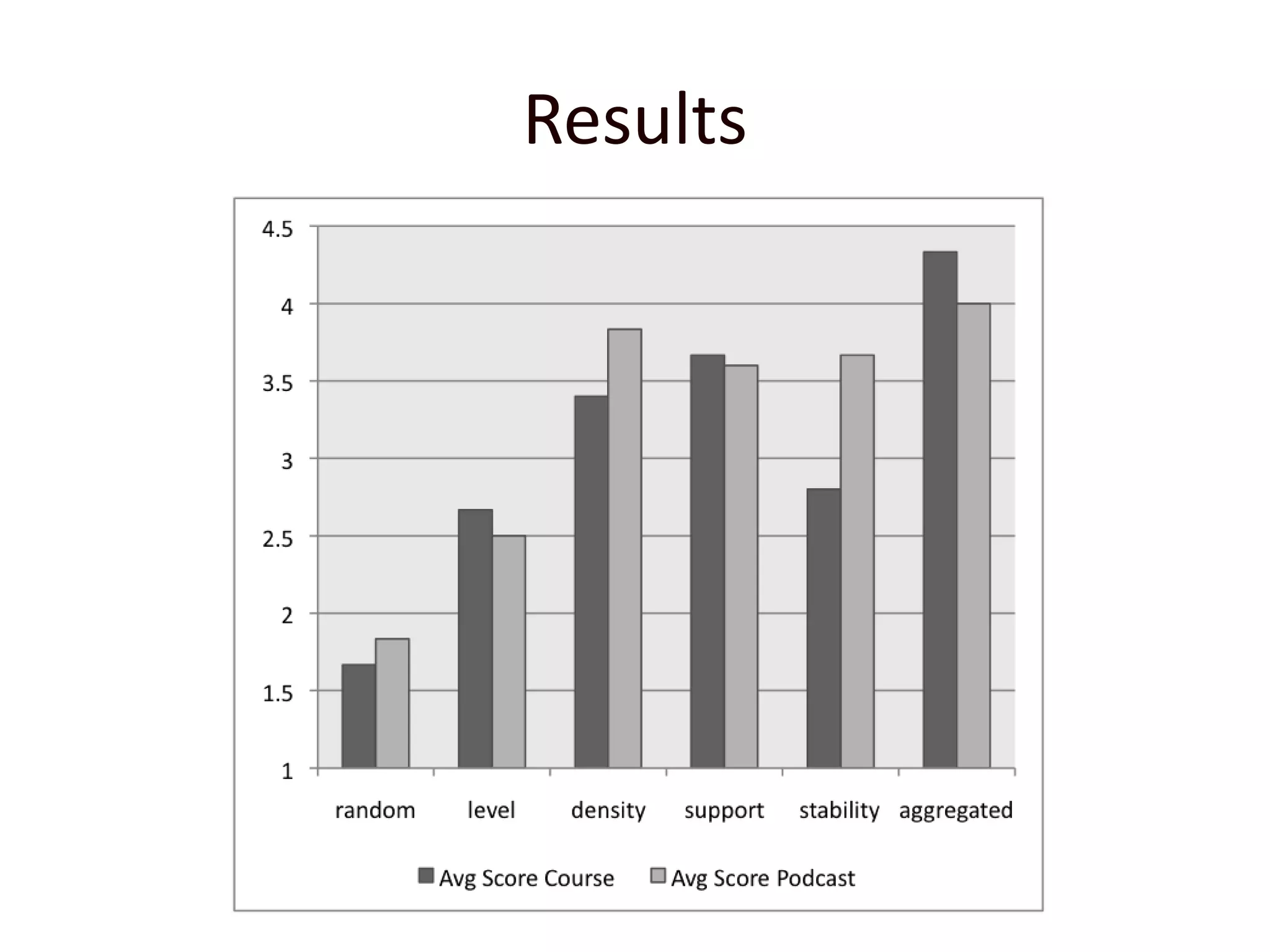

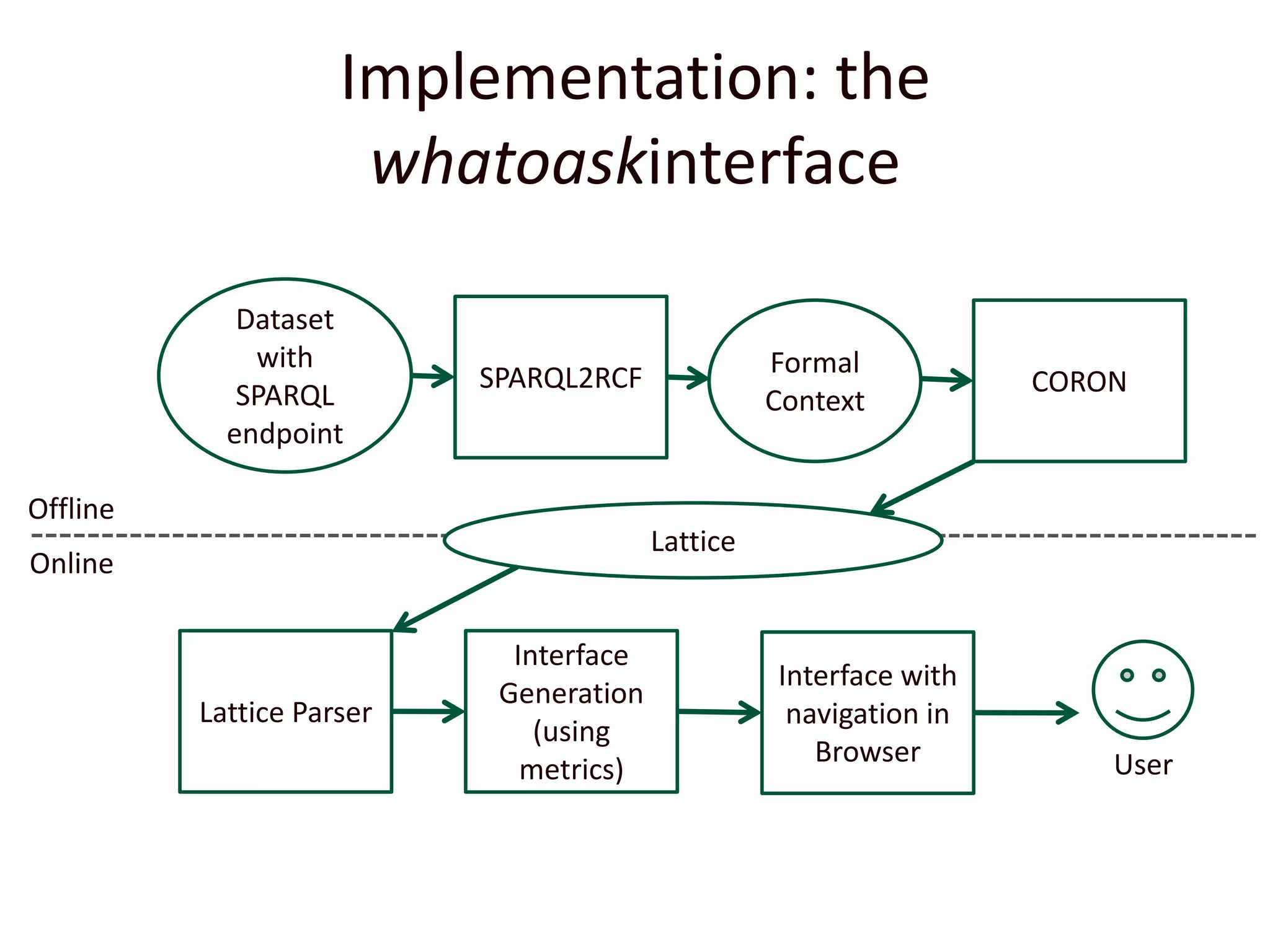

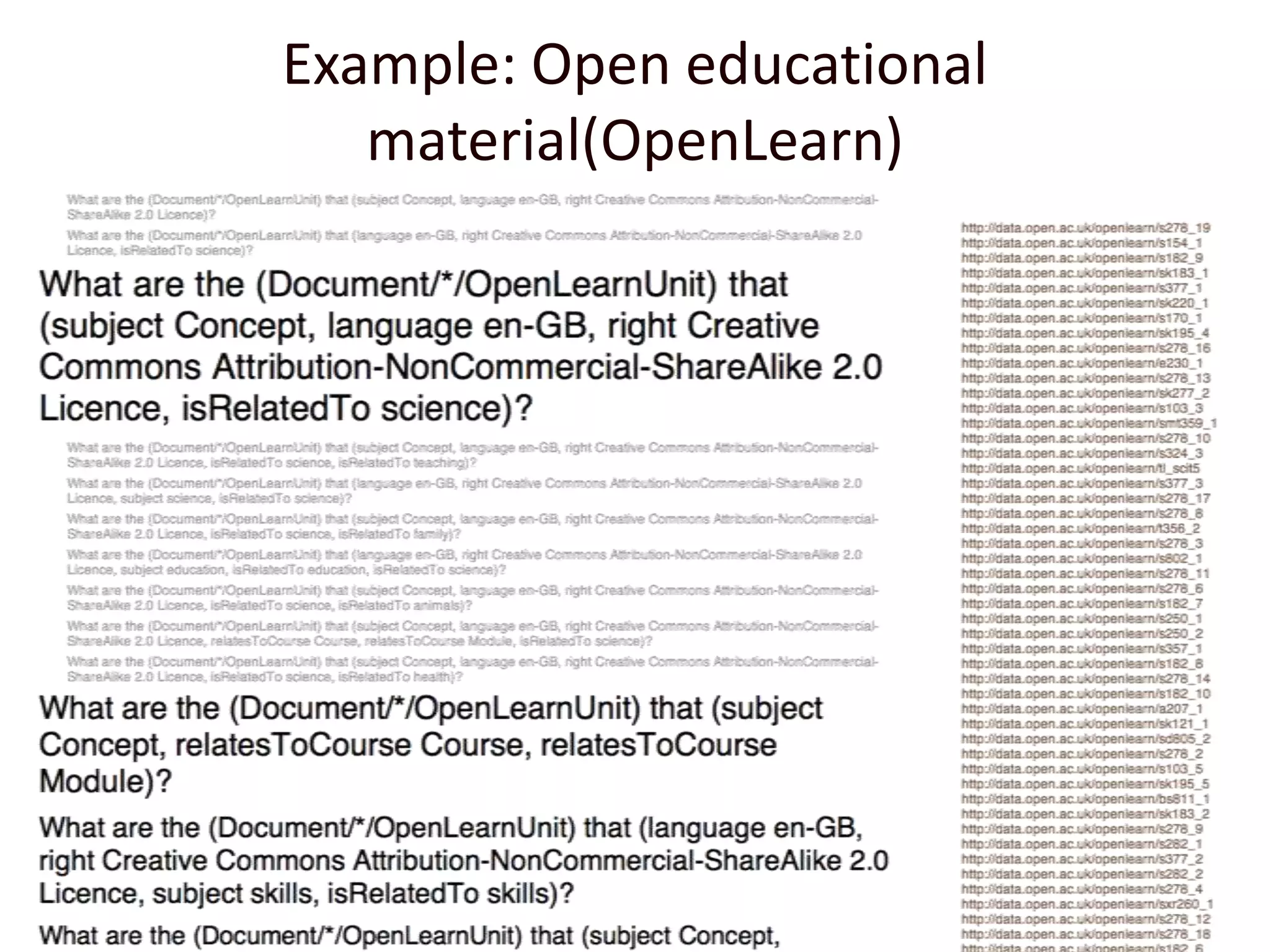

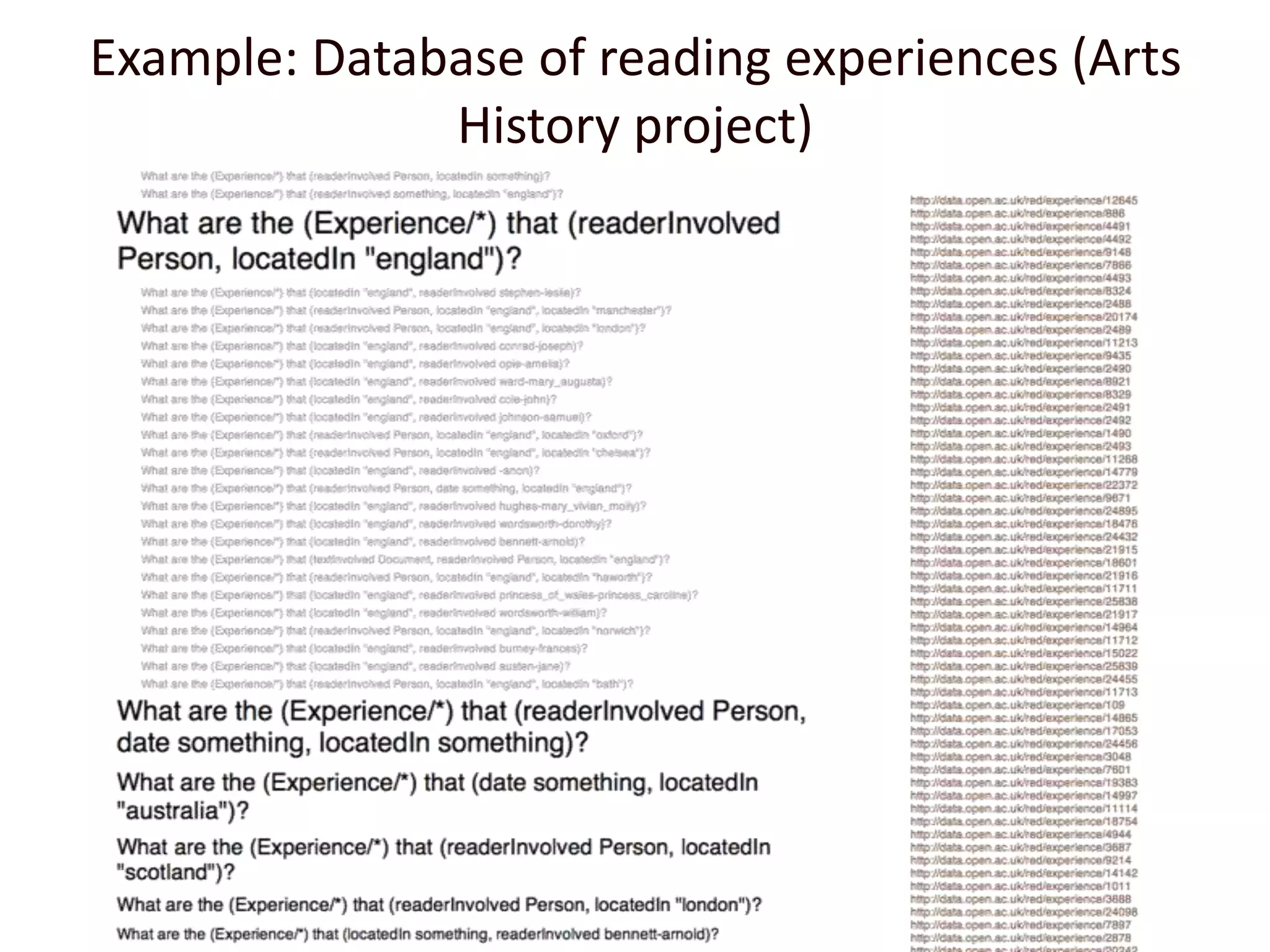

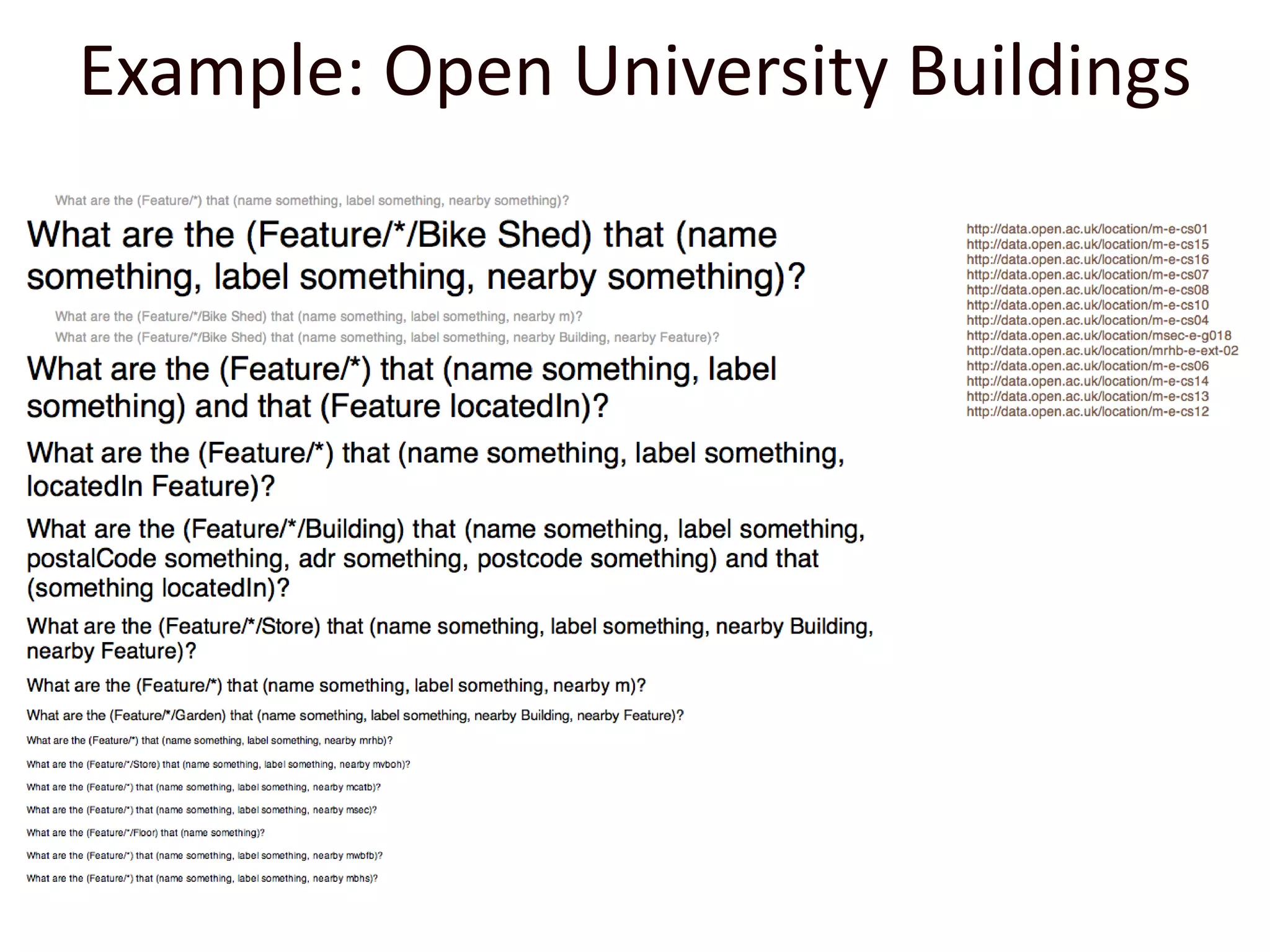

The document discusses using formal concept analysis (FCA) to extract relevant questions from RDF datasets. The aim is to offer well-structured queries as entry points for exploring the data. The authors analyze the relationships between objects and organize the resulting questions hierarchically. From this, their method generates a list of questions that covers different aspects of the dataset while staying clear and easy to navigate. The study also defines metrics for evaluating how interesting a question is. It demonstrates the technique in practice through a beta interface.
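The following is a minimal sketch of the general FCA idea. It is not the authors' implementation. It makes three assumptions:

* Each RDF subject is treated as an FCA object.
* Each (predicate, object) pair is treated as an attribute.
* Every formal concept's intent can be read as a question of the form "Which resources have these properties?"

The triples, the `ex:` names, and the question template are hypothetical. The paper's own attribute encoding, question phrasing, and interestingness metrics are not reproduced here.

```python
from itertools import combinations

# Hypothetical toy RDF triples (subject, predicate, object); not taken from the paper.
TRIPLES = [
    ("ex:alice", "rdf:type", "ex:Person"),
    ("ex:alice", "ex:worksFor", "ex:acme"),
    ("ex:bob", "rdf:type", "ex:Person"),
    ("ex:bob", "ex:worksFor", "ex:acme"),
    ("ex:carol", "rdf:type", "ex:Person"),
    ("ex:acme", "rdf:type", "ex:Company"),
]


def formal_context(triples):
    """Map each subject to its set of (predicate, object) attributes (assumed encoding)."""
    context = {}
    for s, p, o in triples:
        context.setdefault(s, set()).add((p, o))
    return context


def extent(context, attrs):
    """Derivation operator: all subjects that have every attribute in attrs."""
    return frozenset(g for g, g_attrs in context.items() if attrs <= g_attrs)


def intent(context, objs):
    """Derivation operator: all attributes shared by every subject in objs."""
    shared = set().union(*context.values())  # start from the full attribute set
    for g in objs:
        shared &= context[g]
    return frozenset(shared)


def concepts(context):
    """Enumerate all (extent, intent) pairs by closing every subset of subjects.

    Brute force and exponential in the number of subjects; only for toy data.
    """
    subjects = list(context)
    found = set()
    for r in range(len(subjects) + 1):
        for subset in combinations(subjects, r):
            b = intent(context, subset)
            found.add((extent(context, b), b))
    return found


def as_question(attrs):
    """Render a concept intent as a question (hypothetical template)."""
    if not attrs:
        return "What resources are in the dataset?"
    conditions = " and ".join(f"{p} {o}" for p, o in sorted(attrs))
    return f"Which resources have {conditions}?"


if __name__ == "__main__":
    ctx = formal_context(TRIPLES)
    # Larger extents are more general concepts; listing them first gives a top-down order.
    for ext, itt in sorted(concepts(ctx), key=lambda c: (-len(c[0]), sorted(c[1]))):
        if ext:  # skip the bottom concept, whose question has no answers
            print(f"{as_question(itt)} -> {len(ext)} answer(s)")
```

Ordering concepts by extent inclusion yields the concept lattice, which provides the kind of general-to-specific question hierarchy the summary describes. The exhaustive enumeration here is only for readability. On real datasets, a dedicated FCA algorithm such as NextClosure or Close-by-One would be the usual choice.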