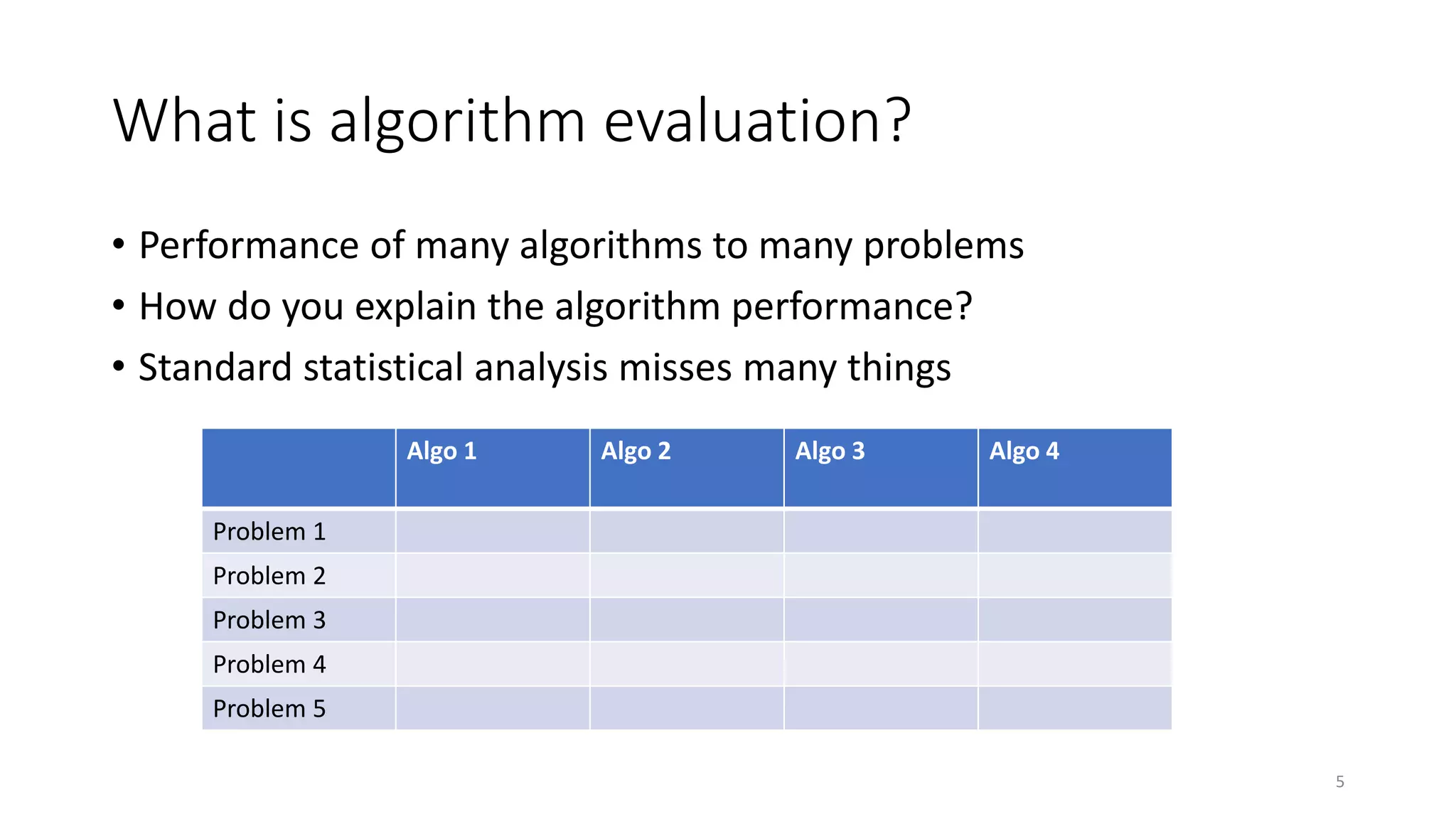

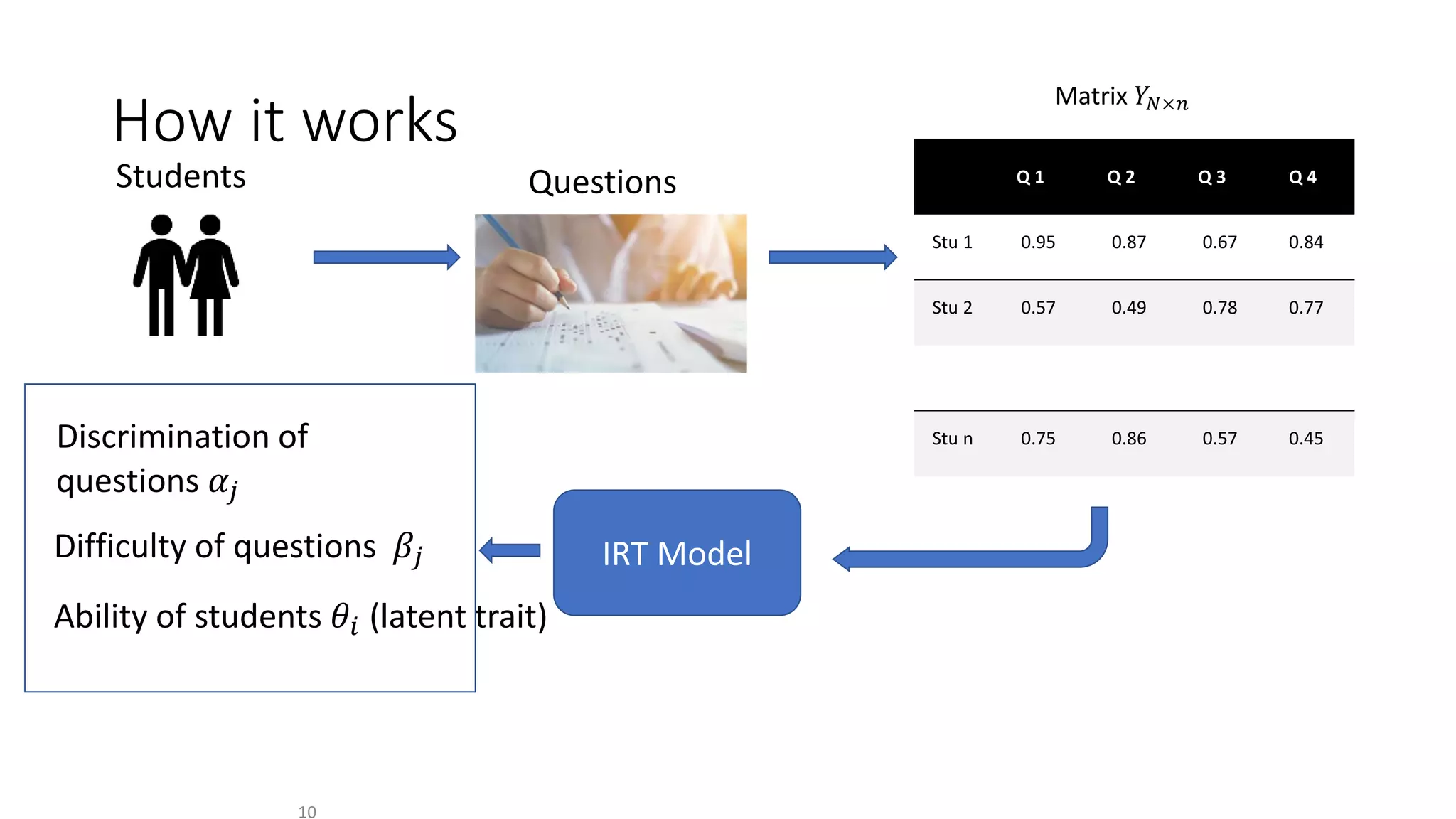

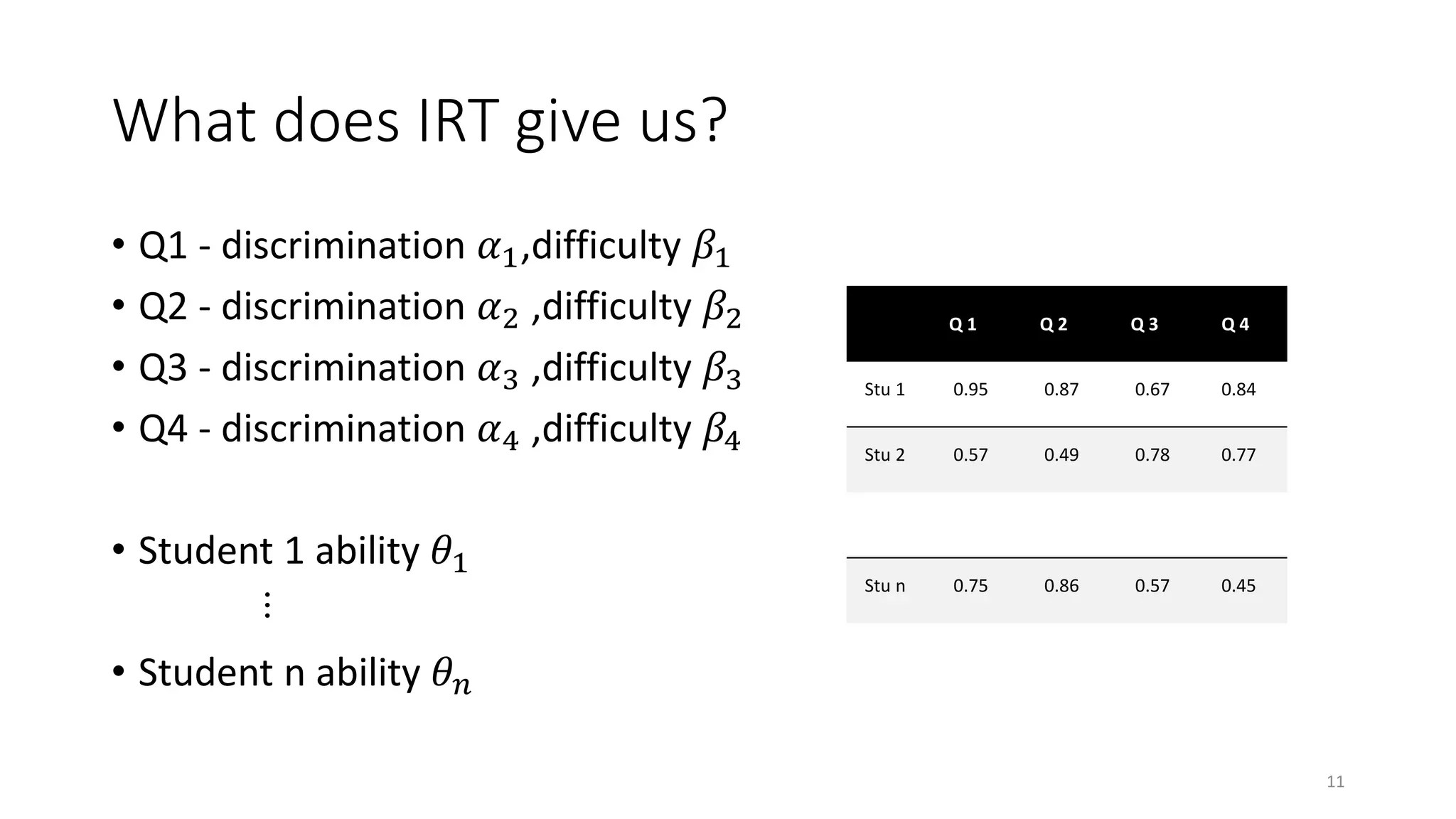

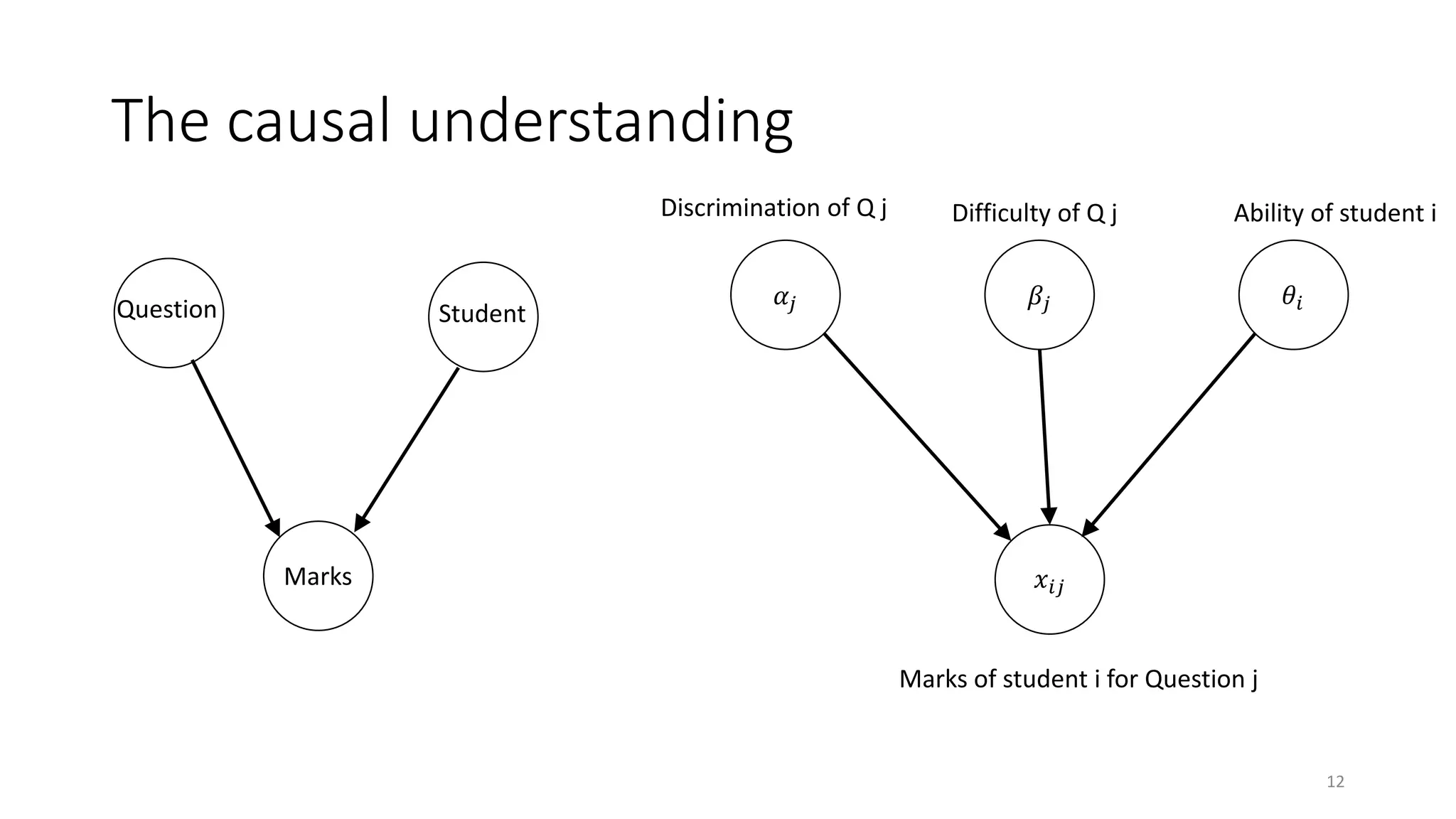

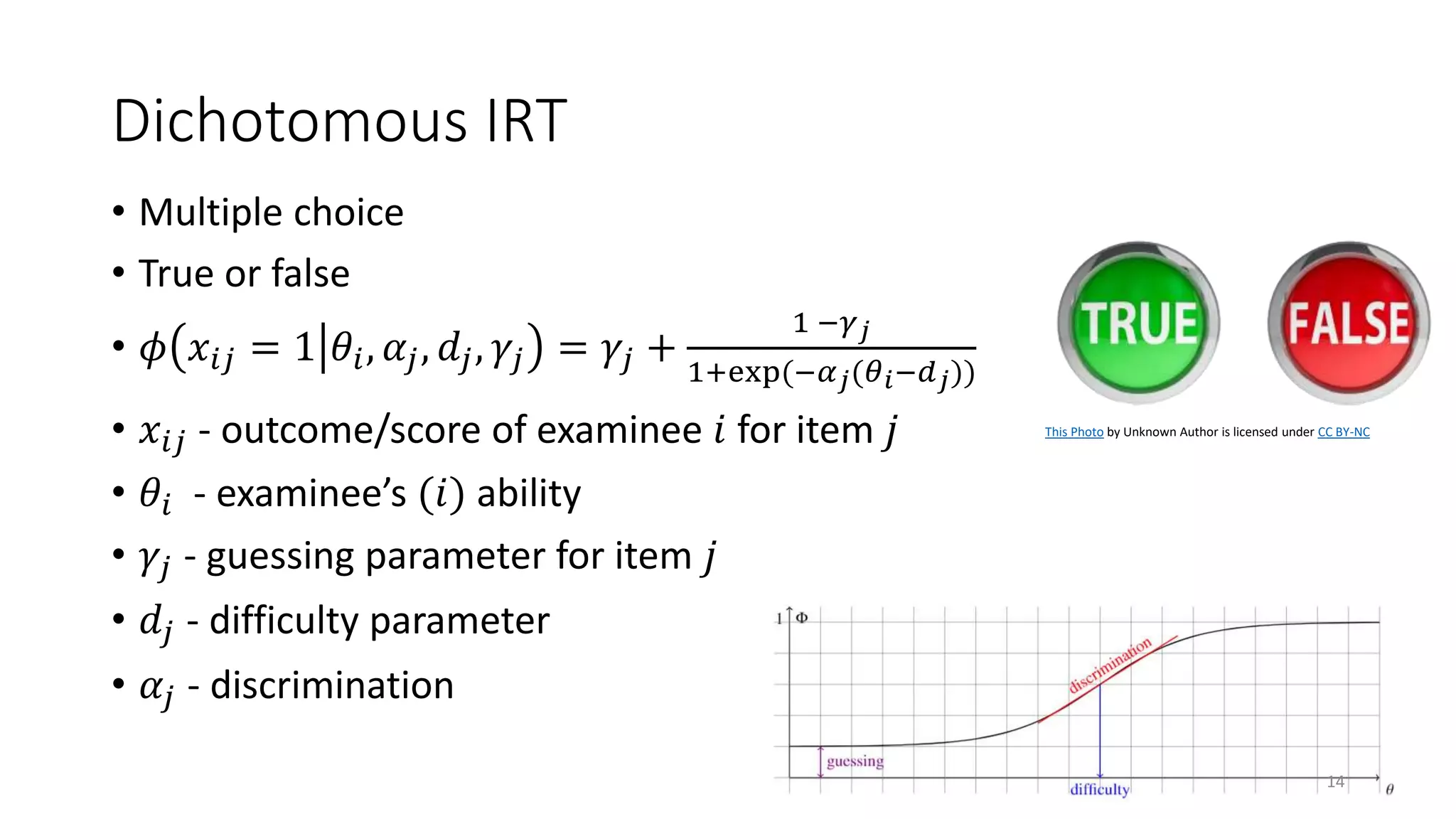

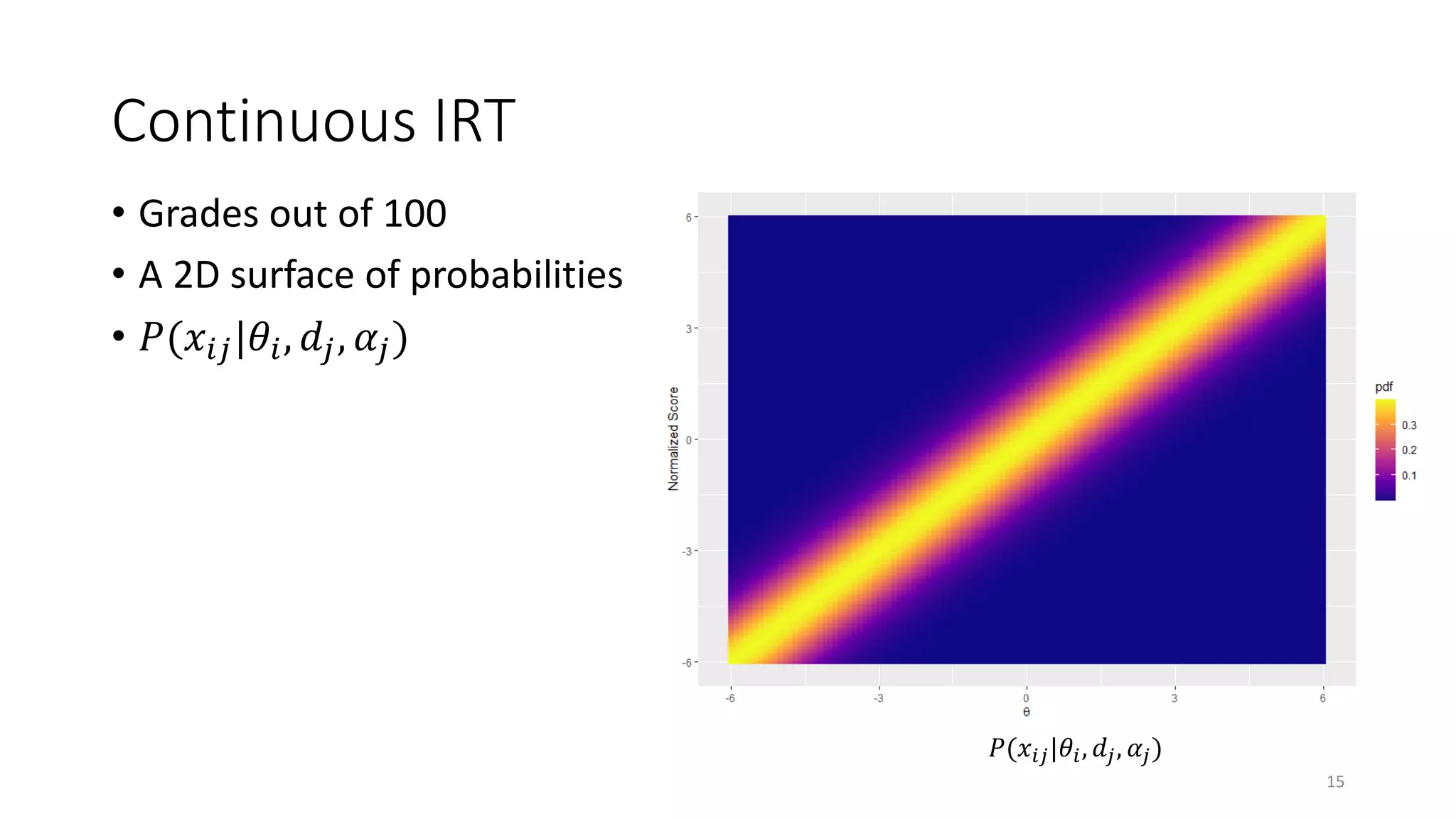

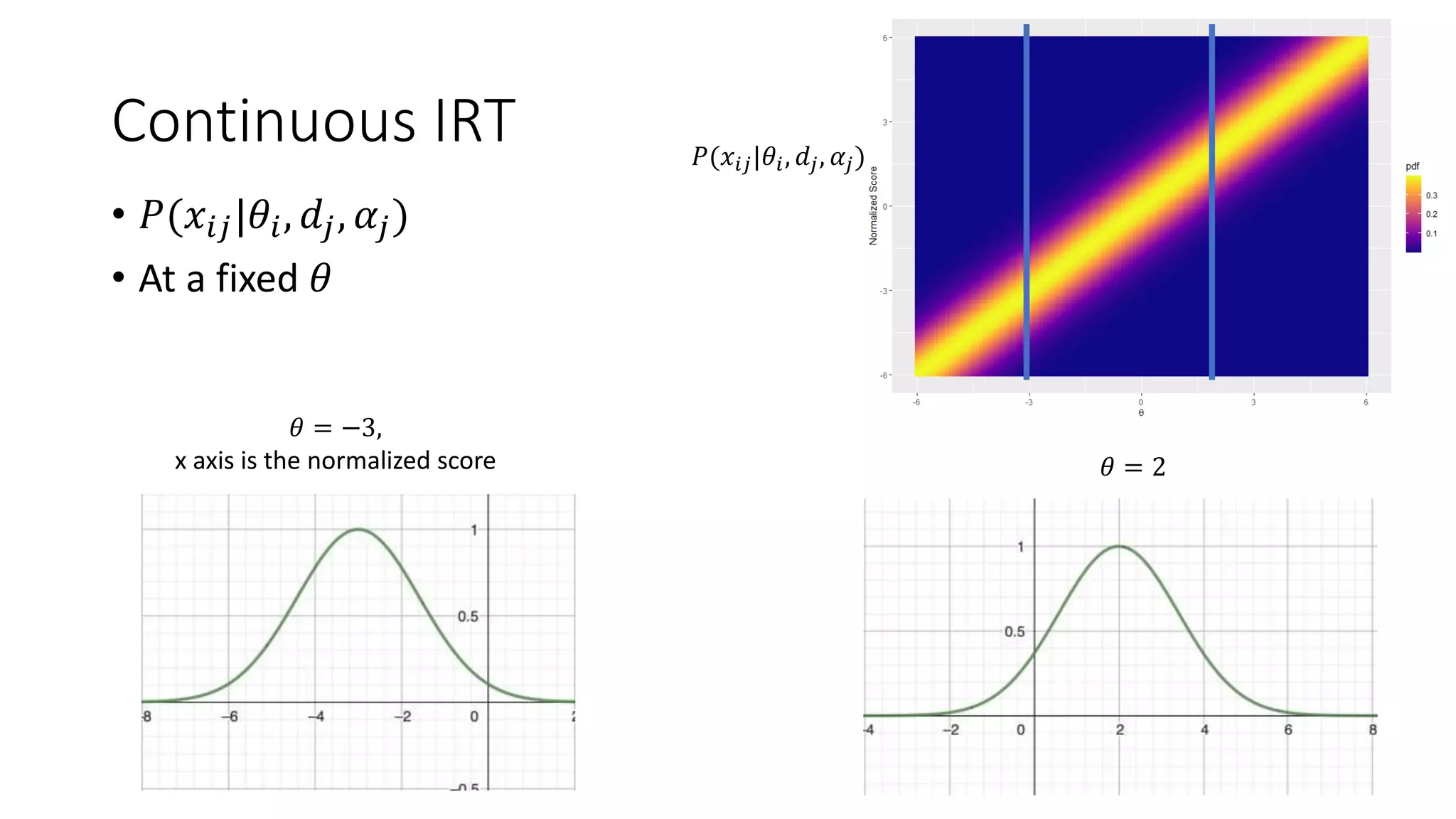

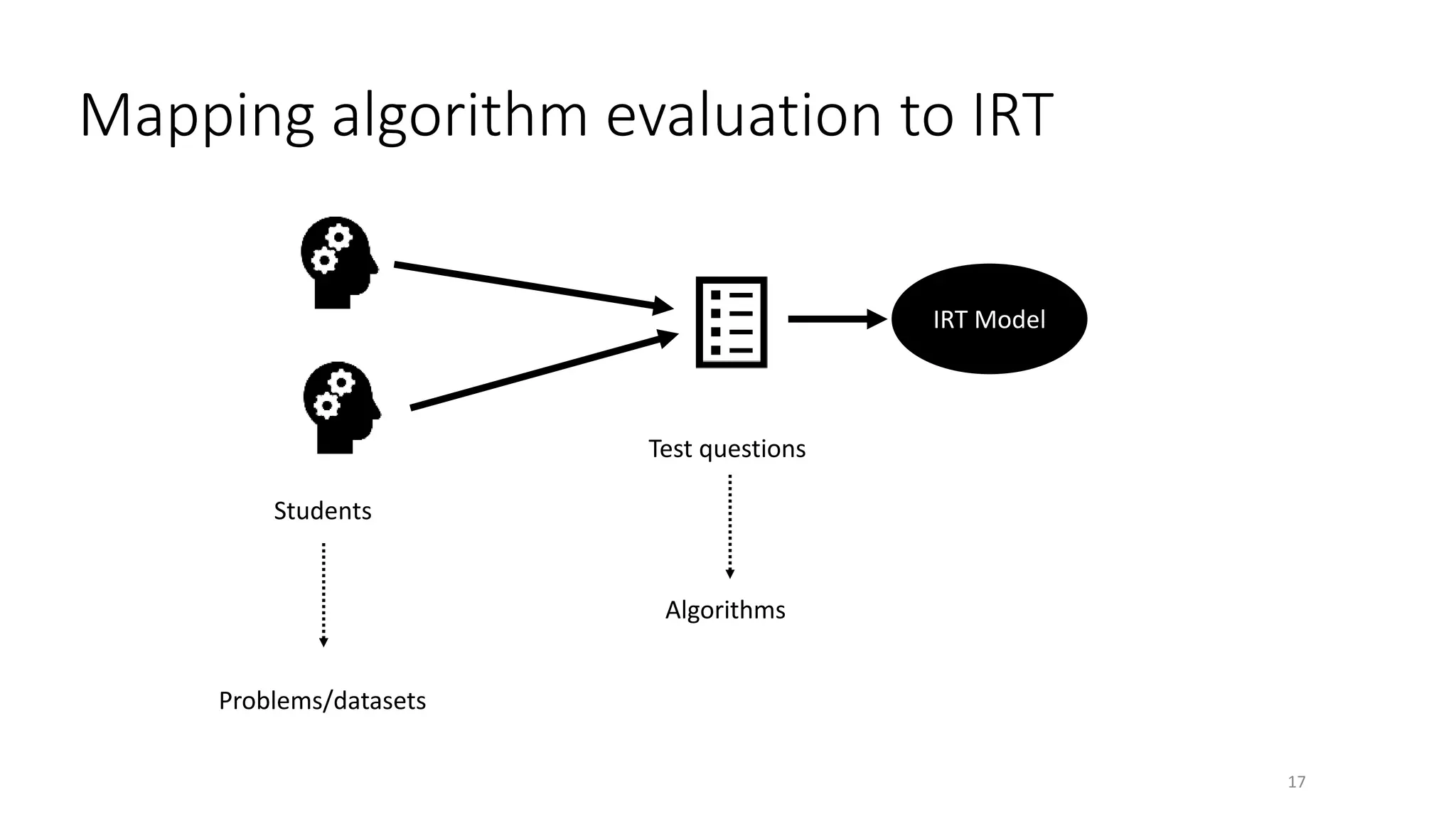

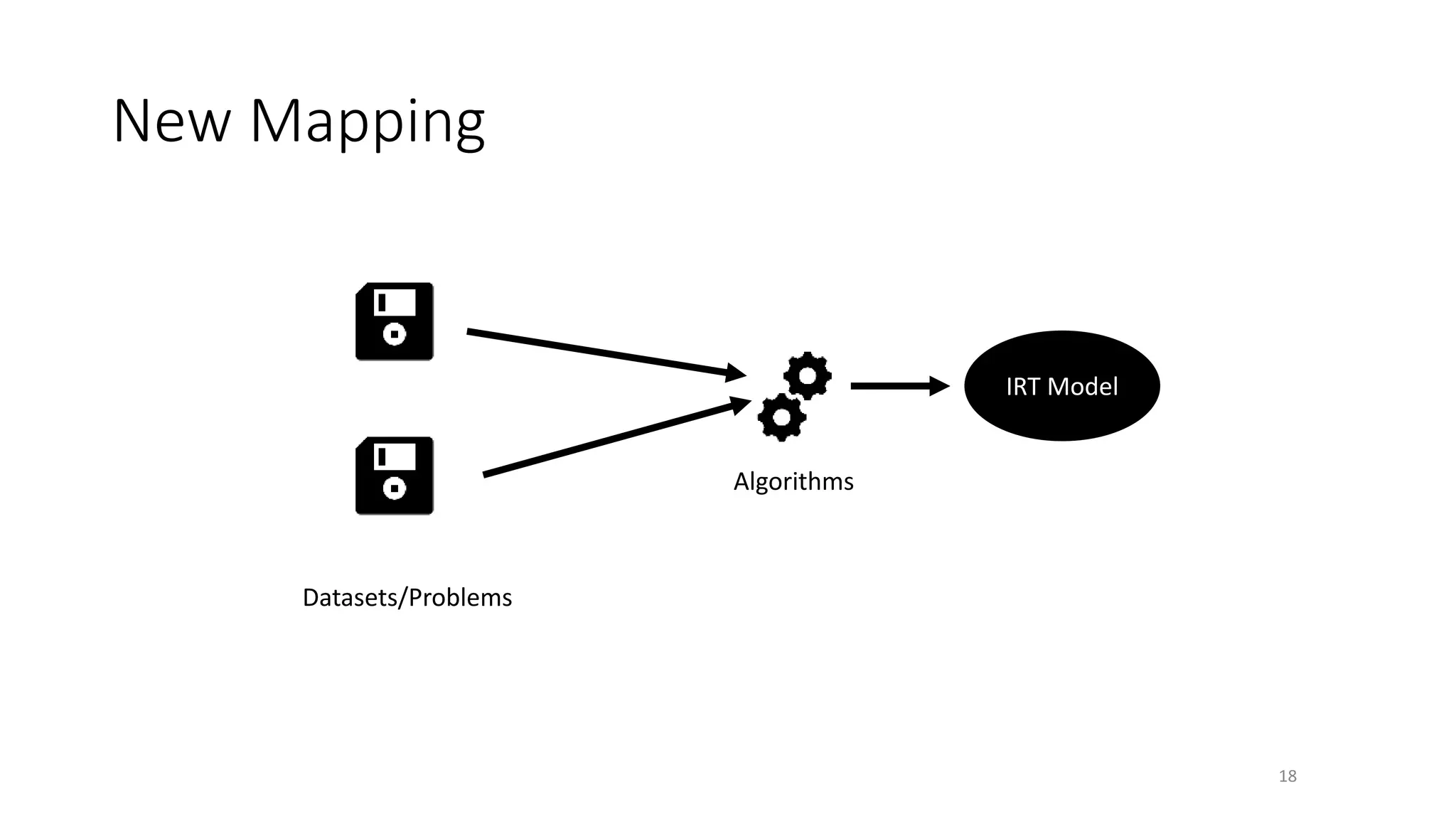

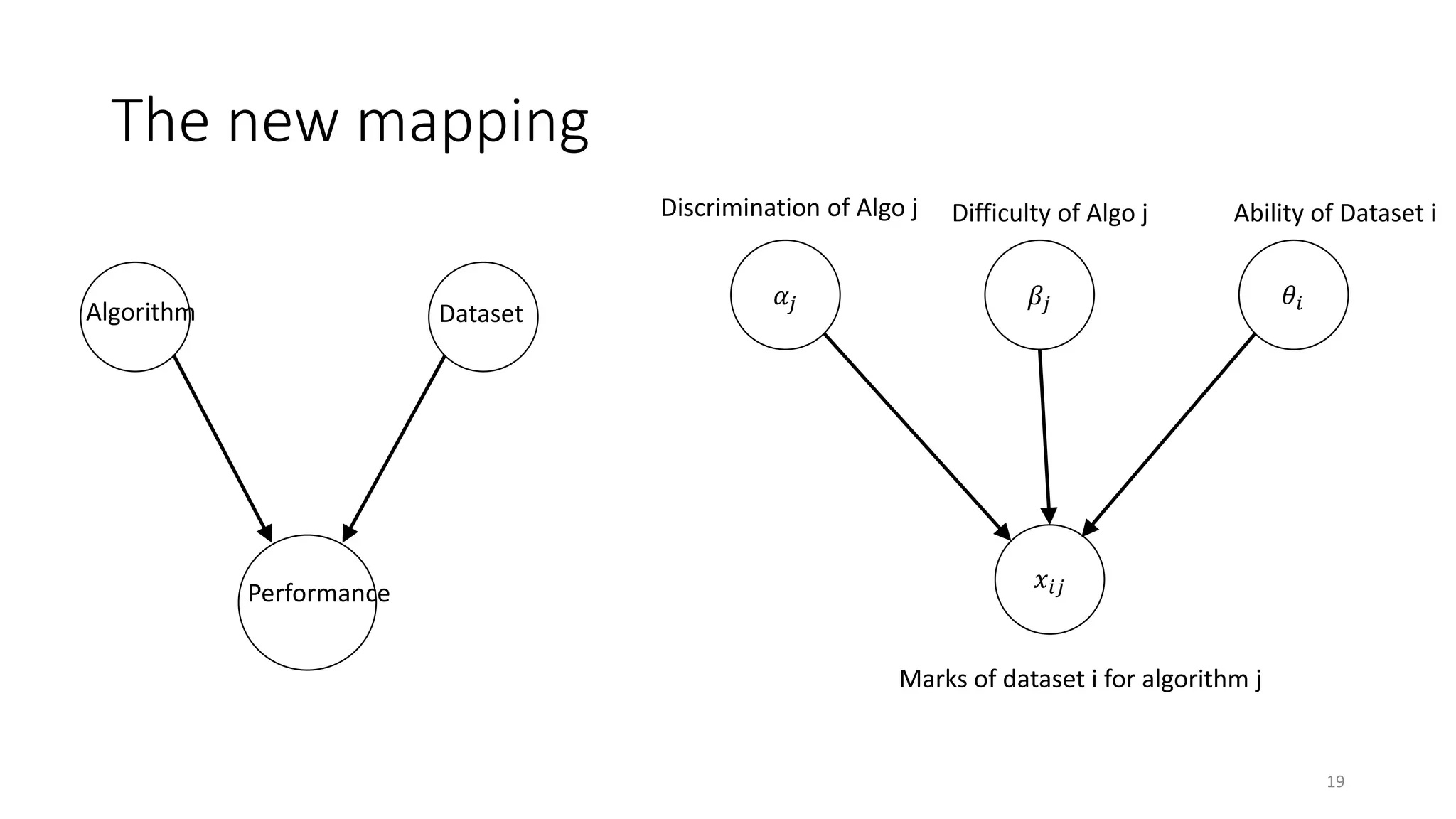

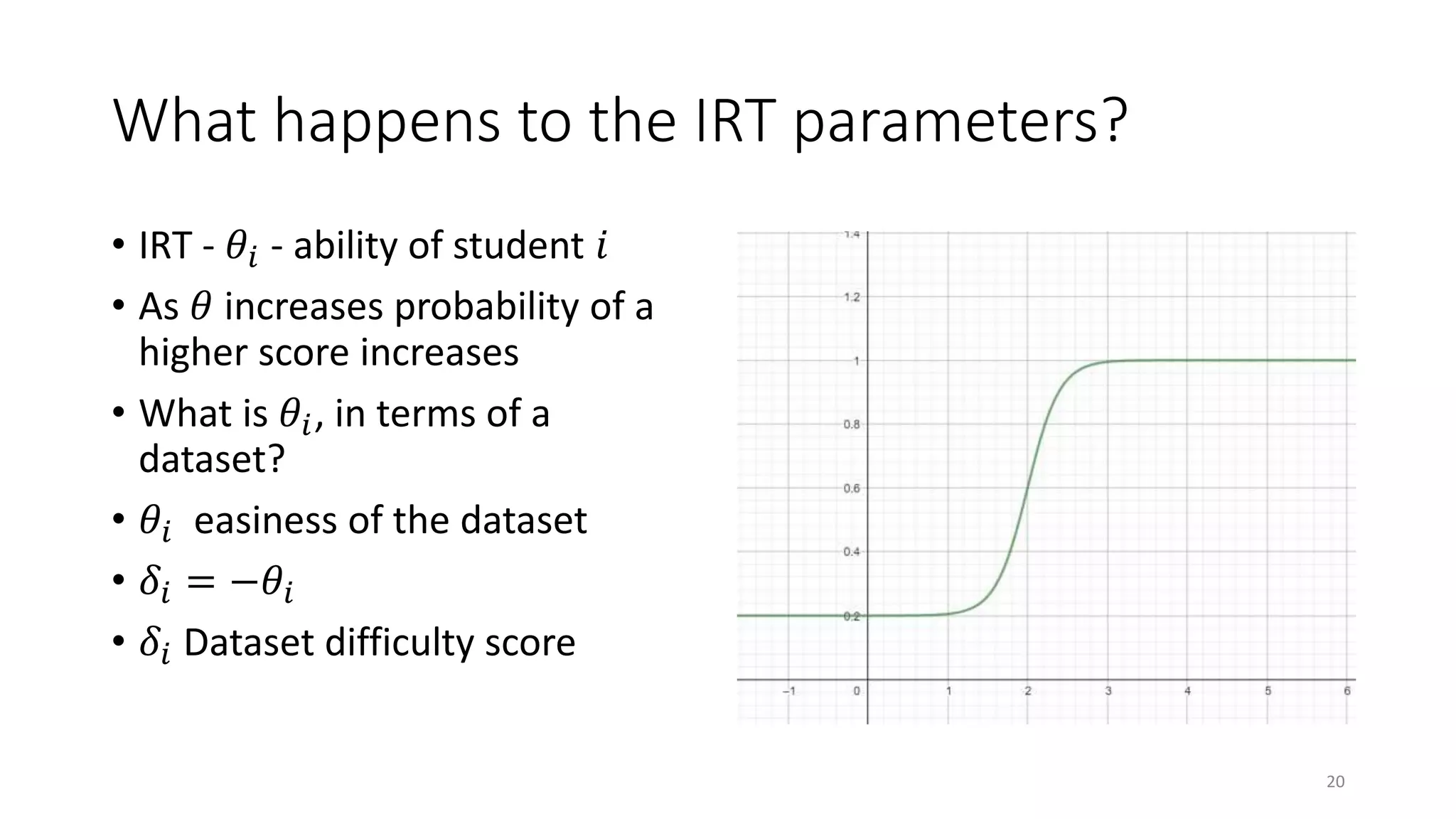

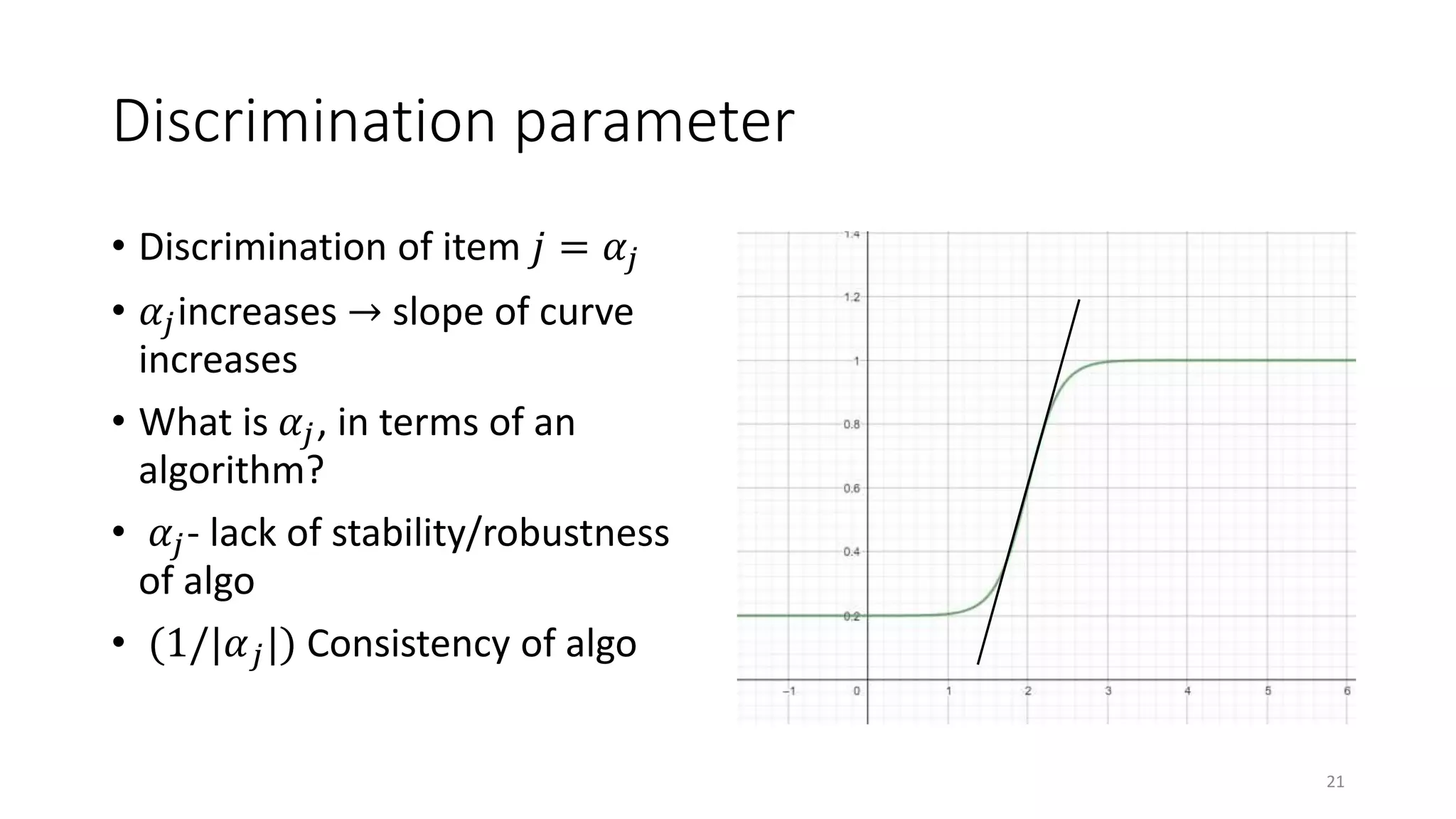

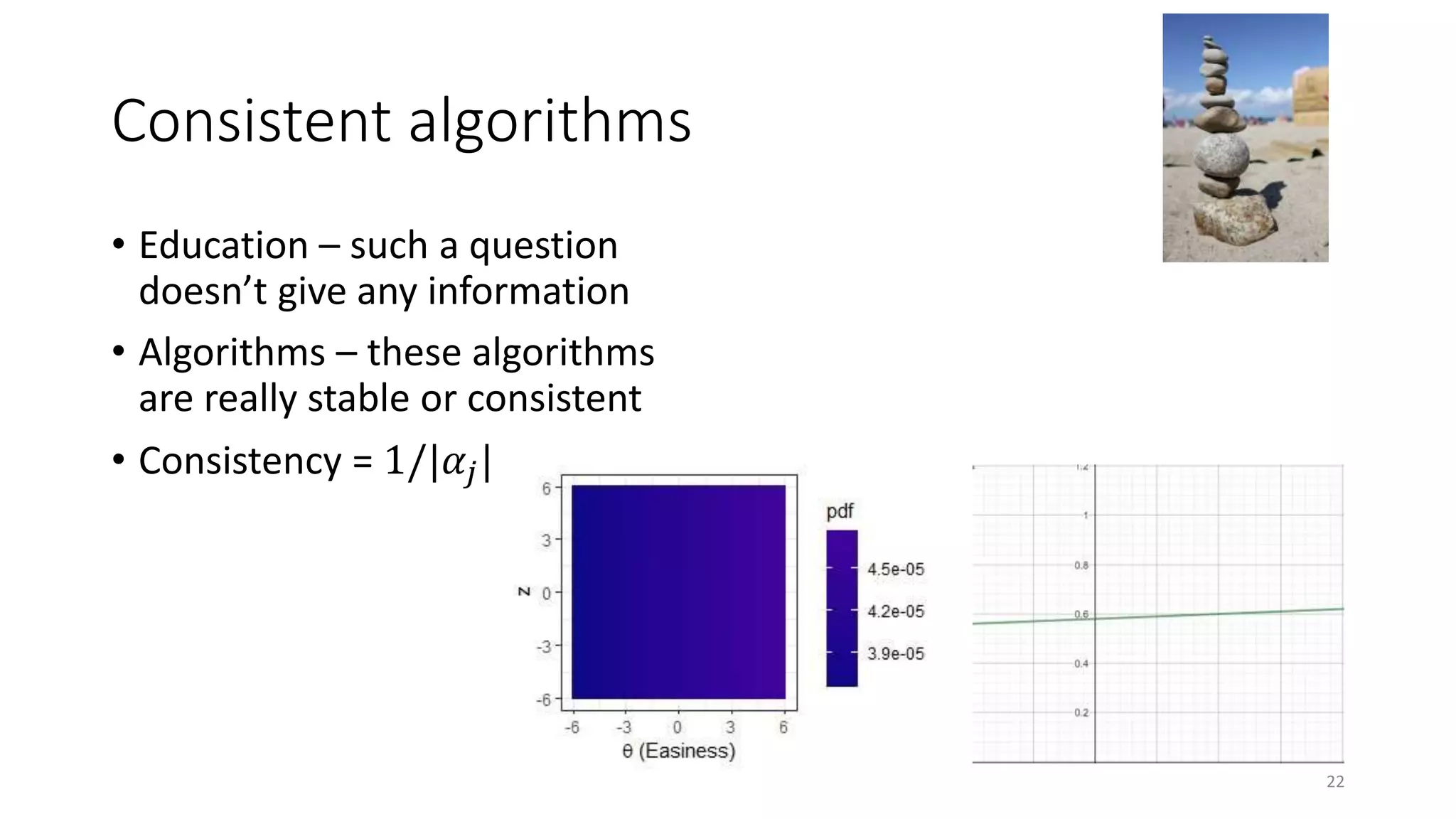

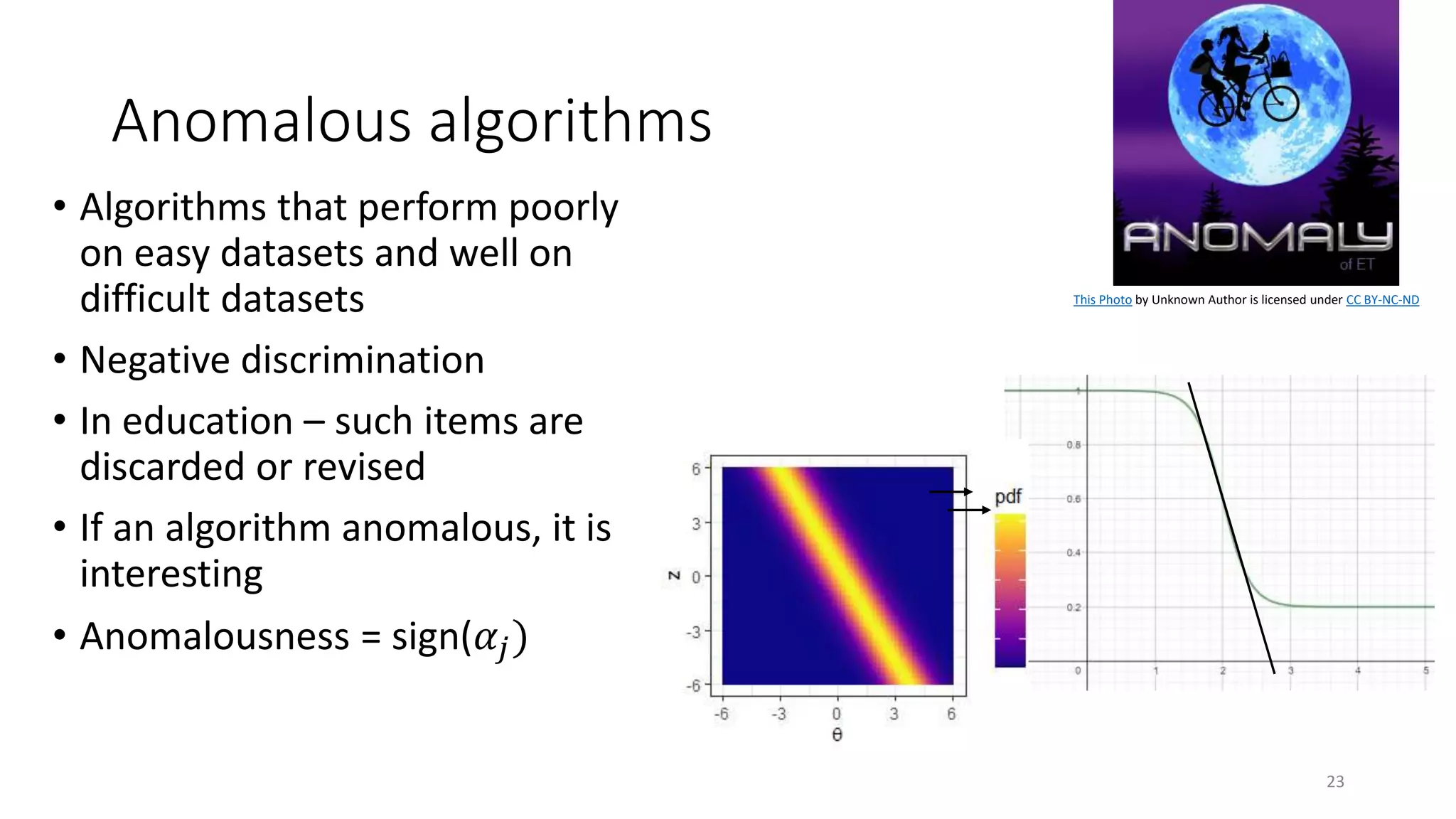

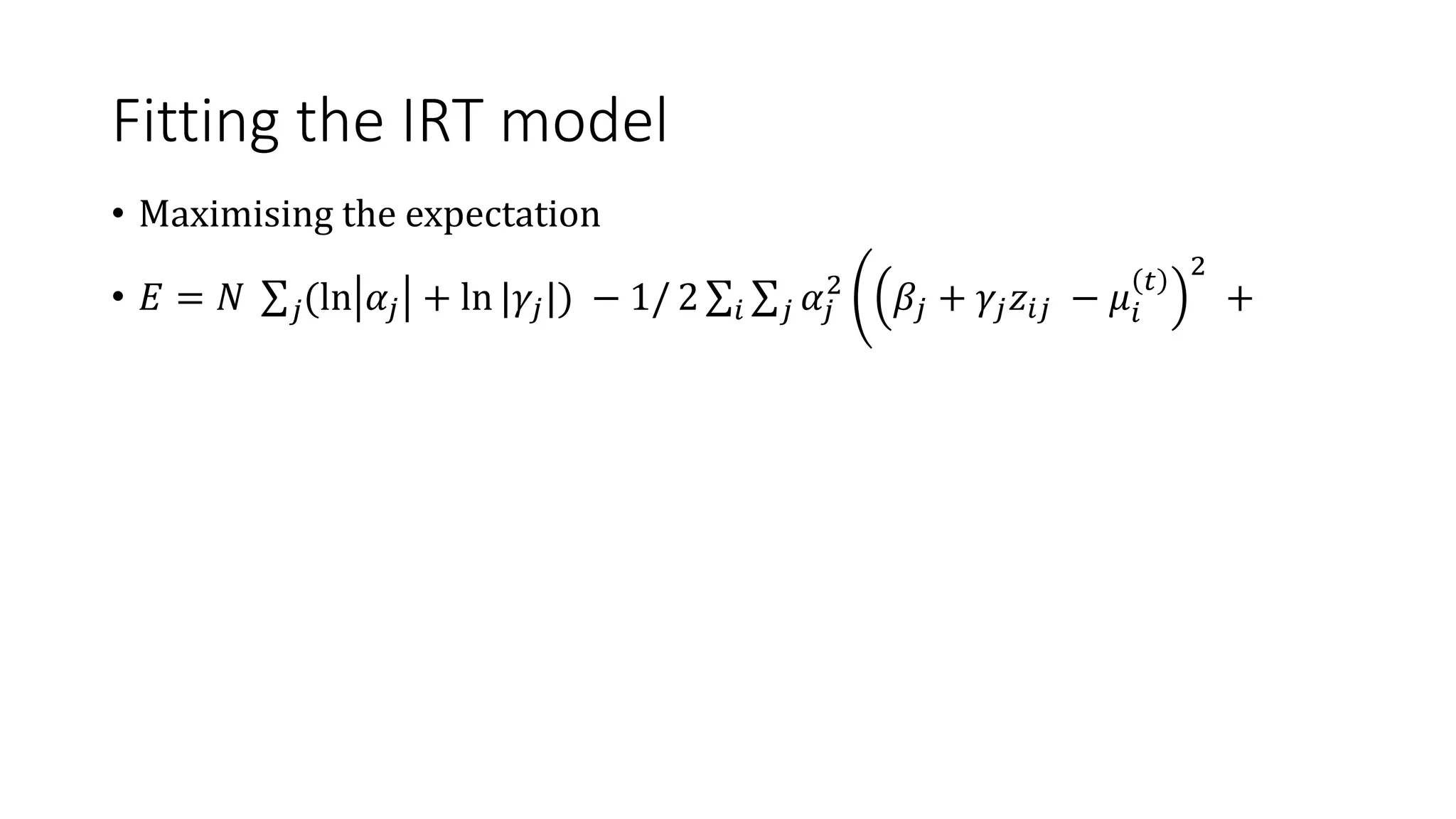

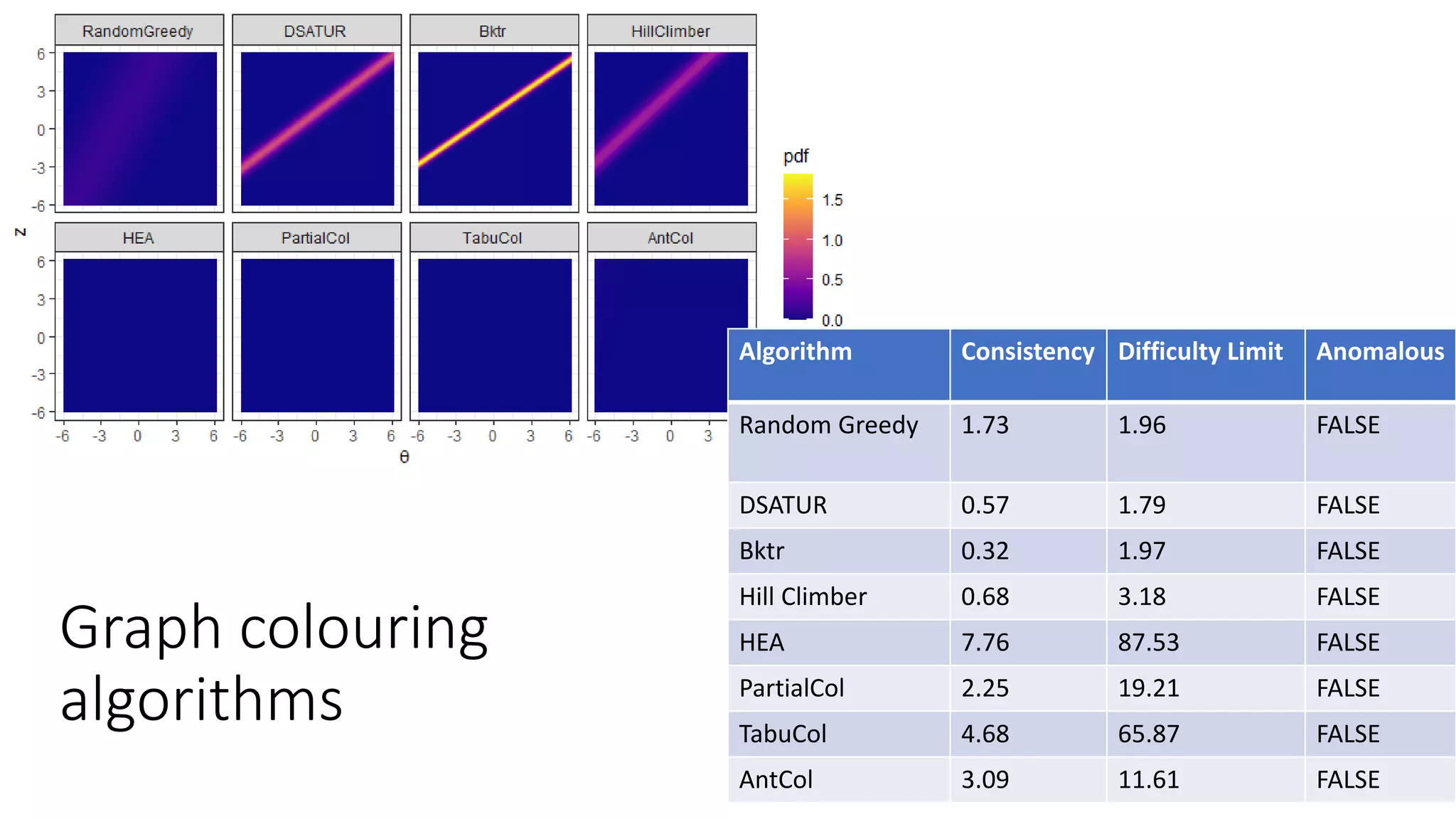

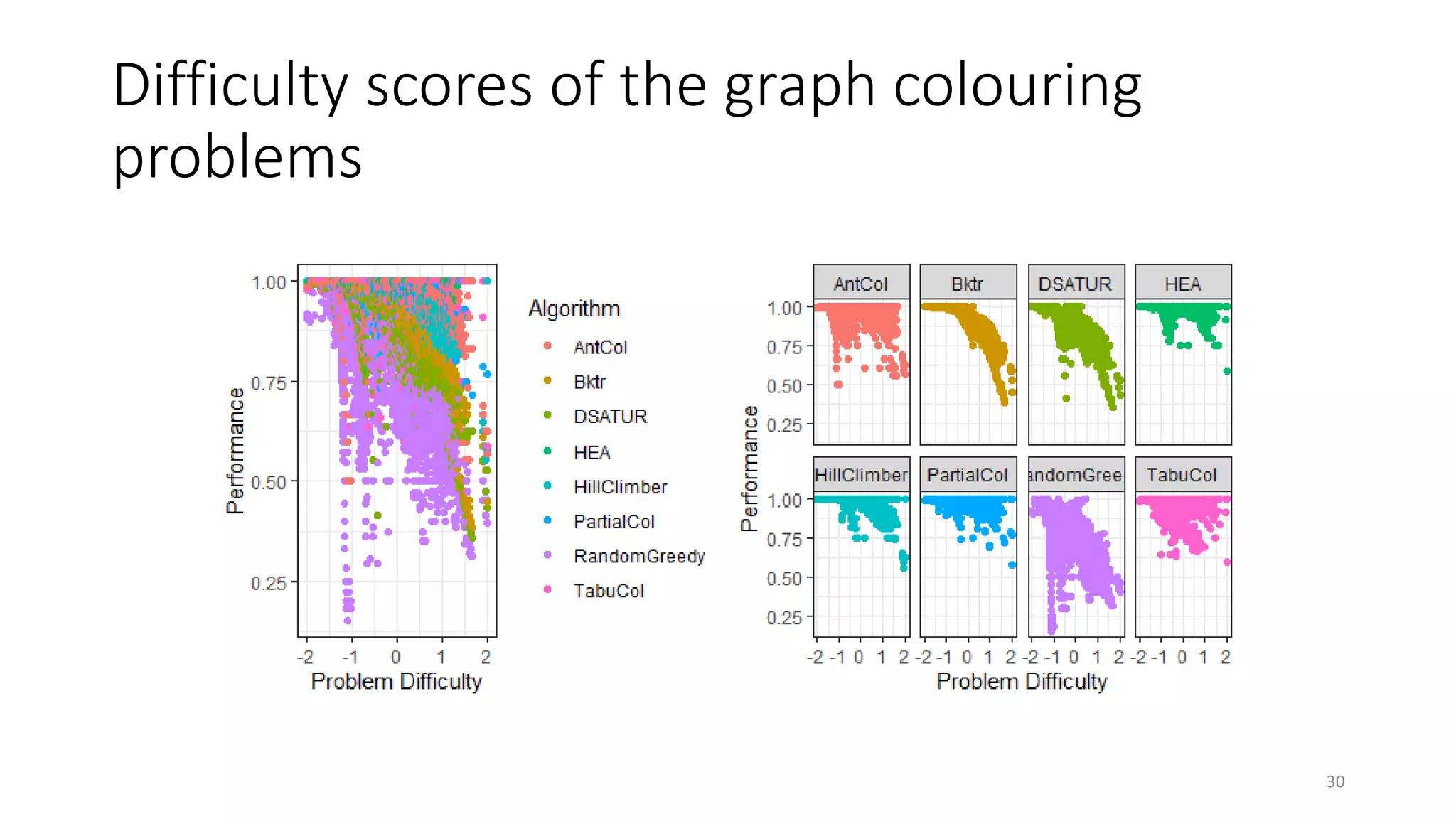

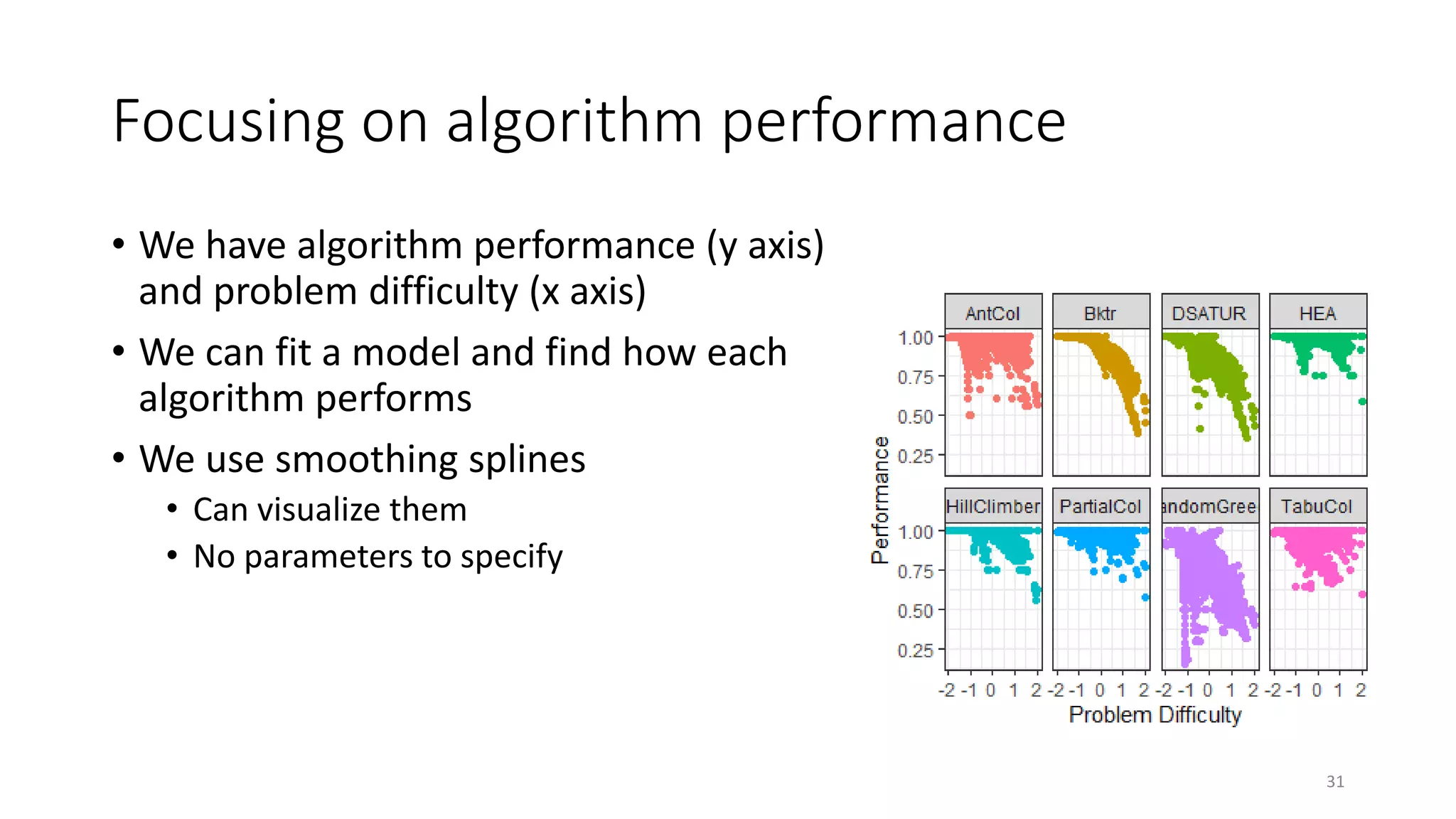

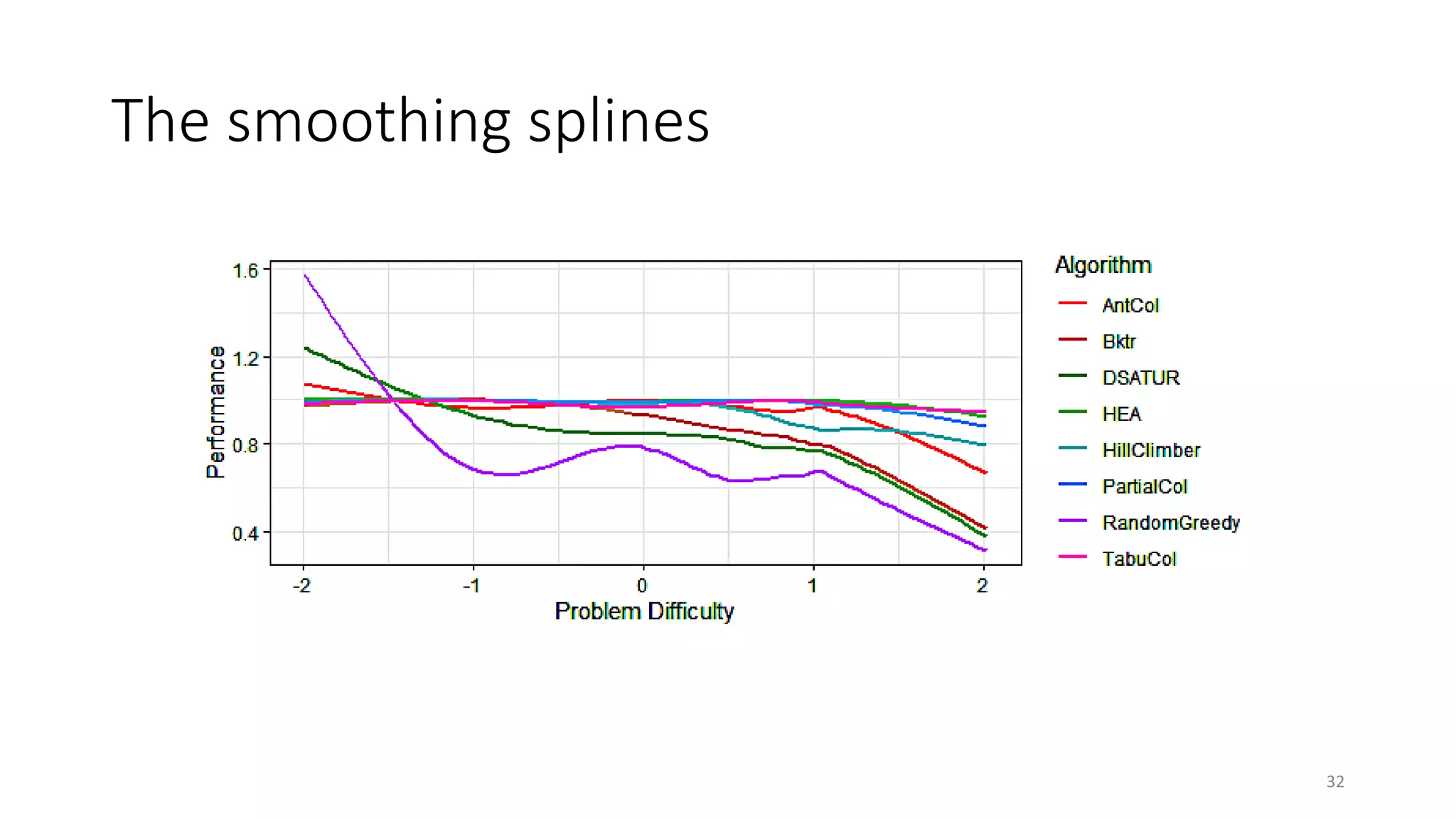

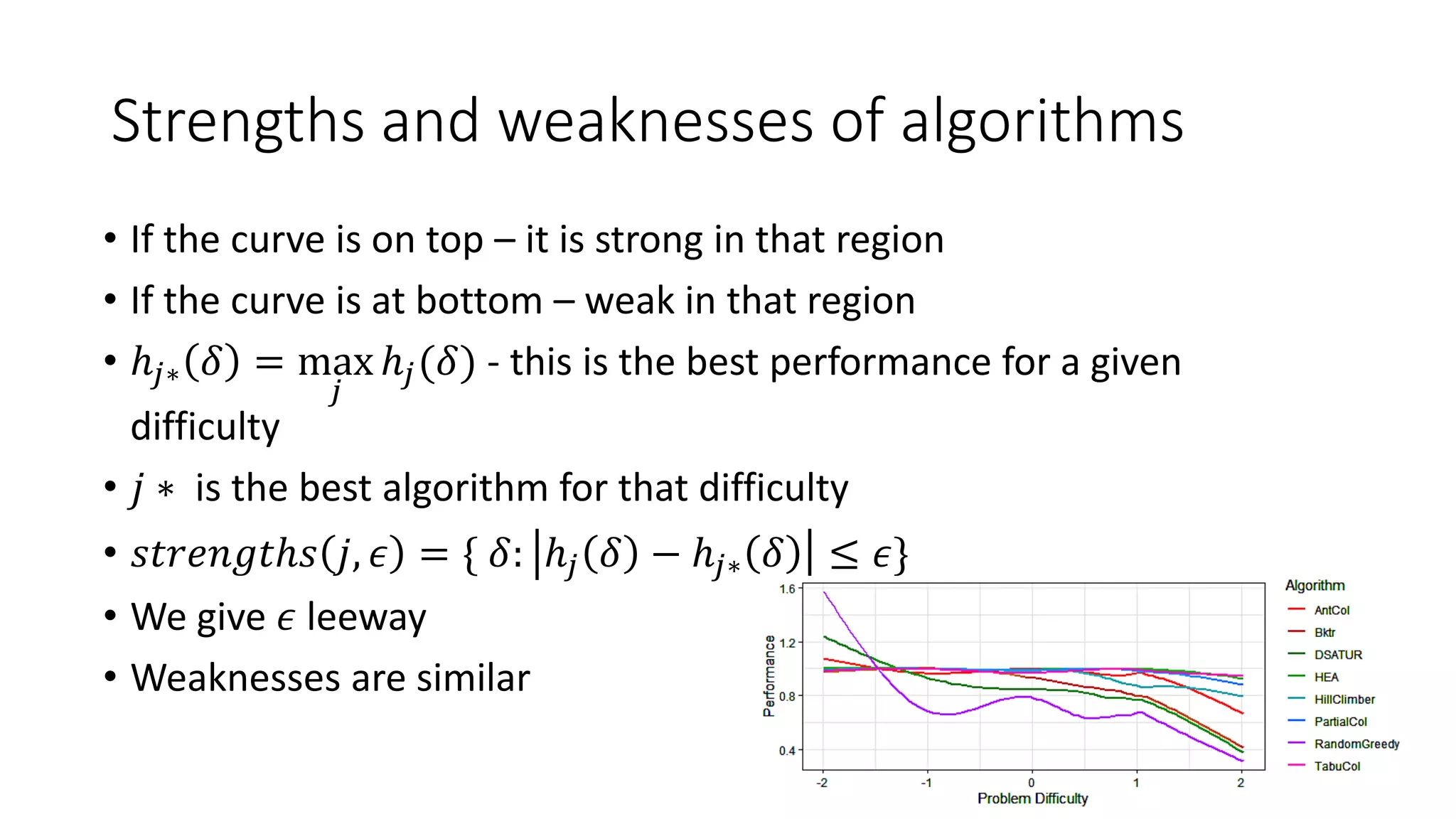

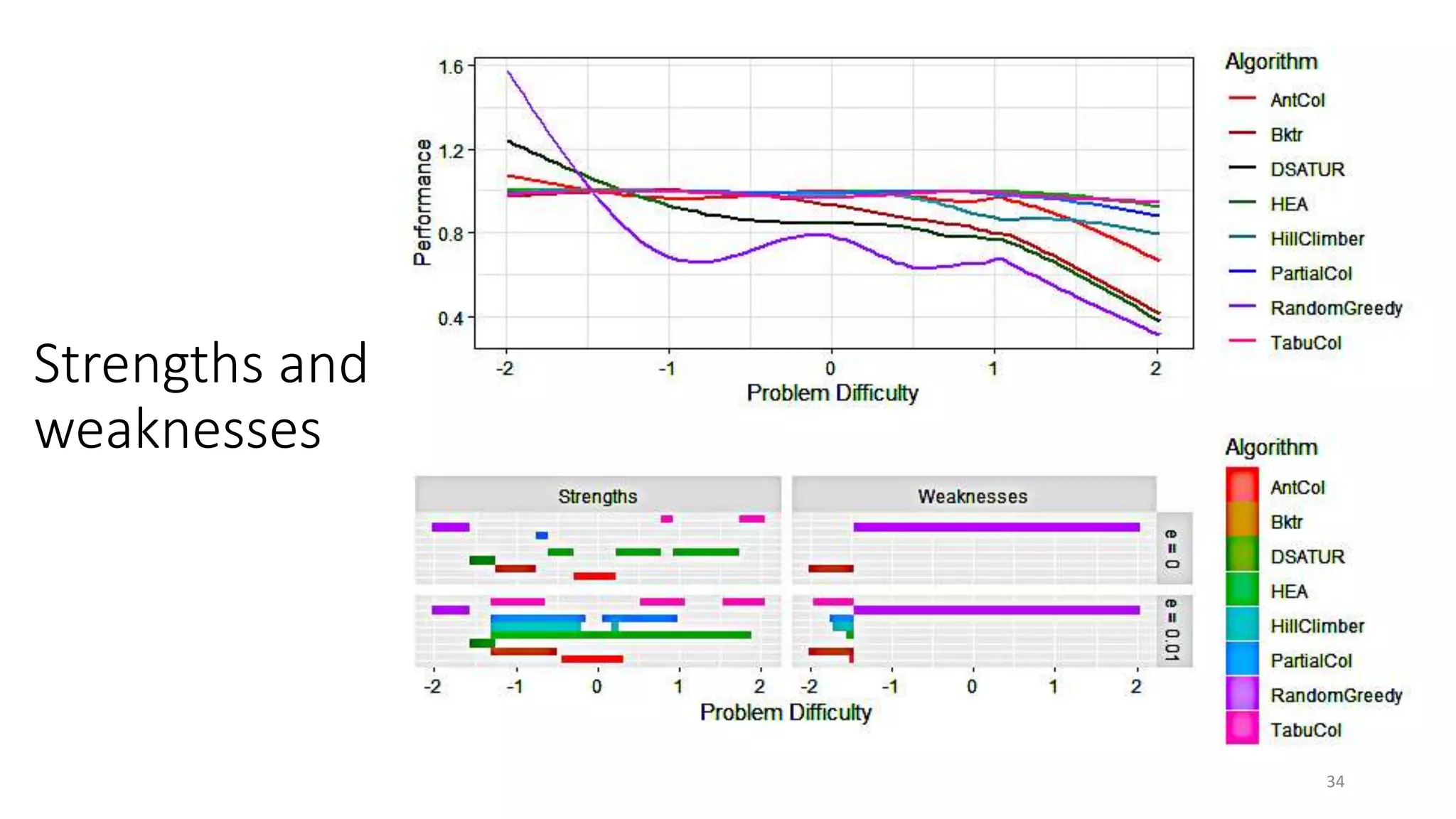

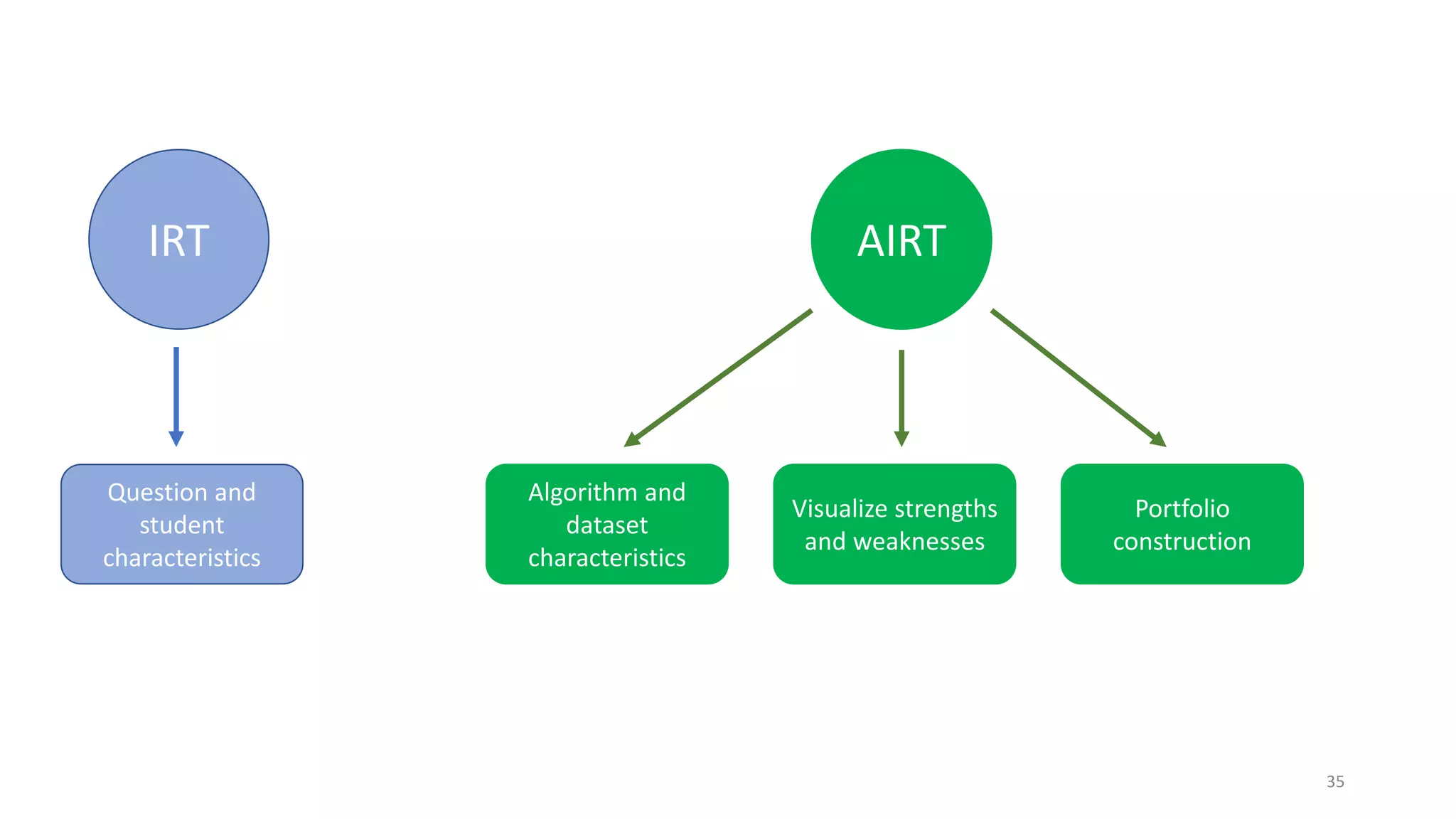

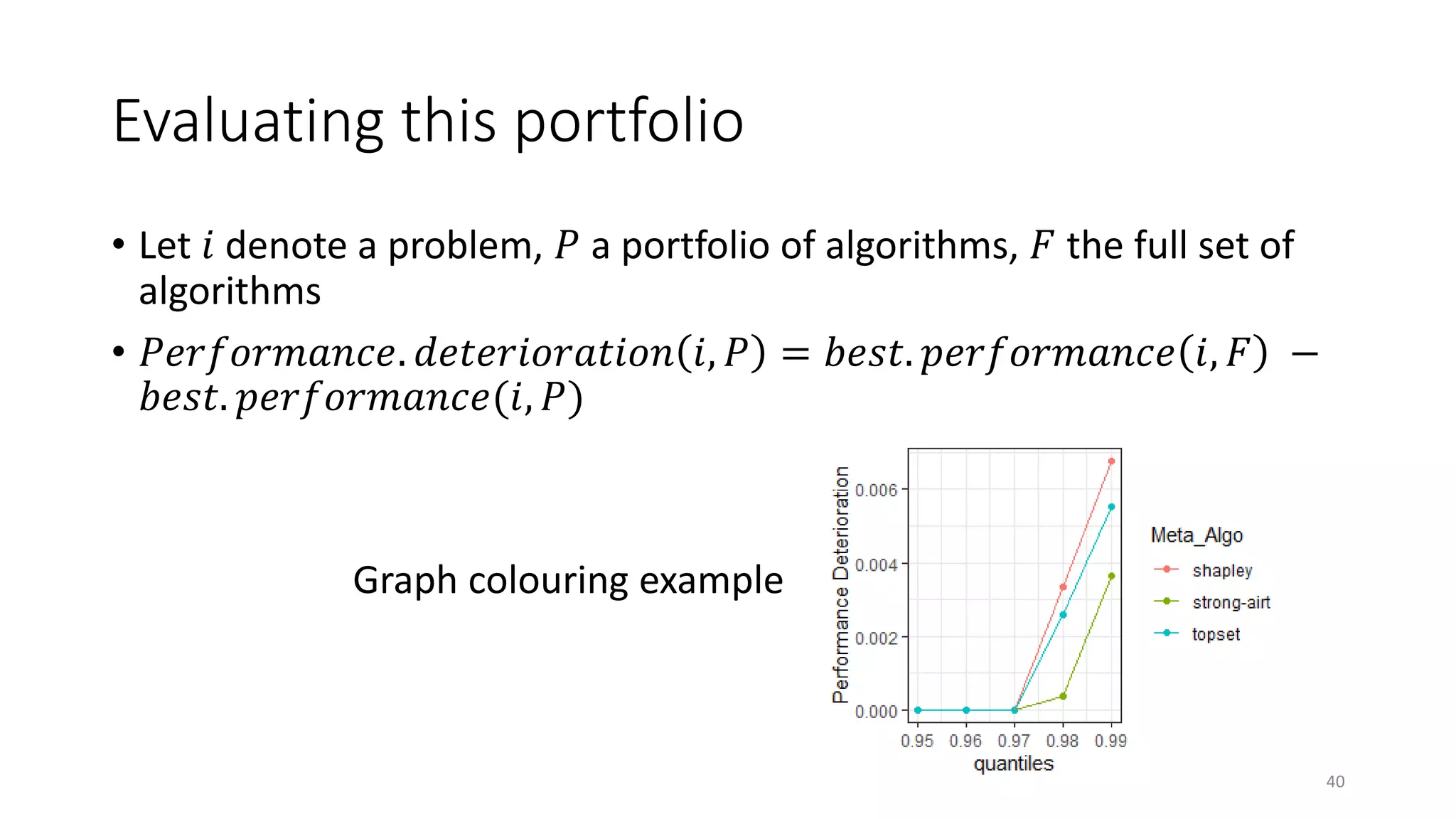

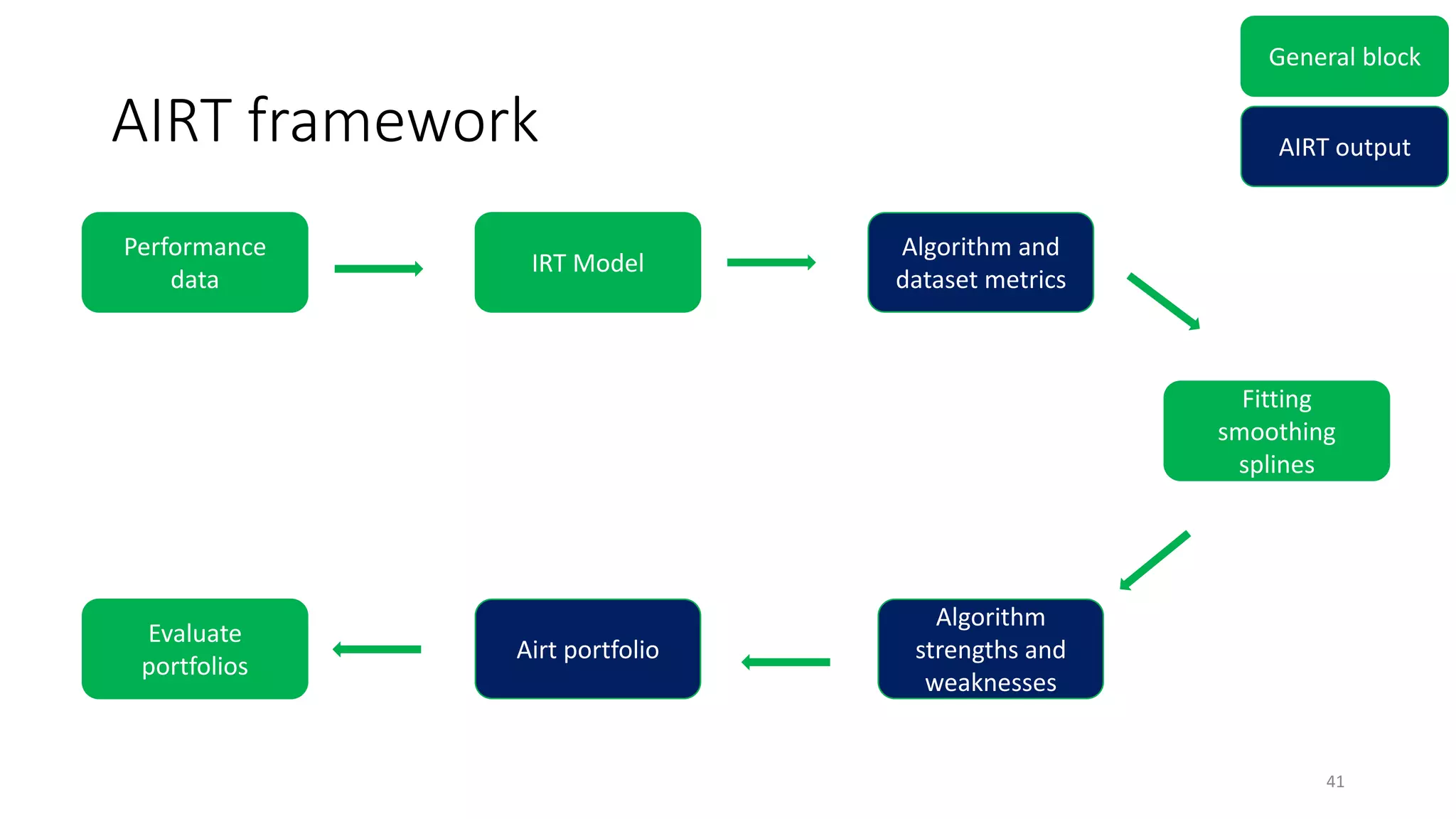

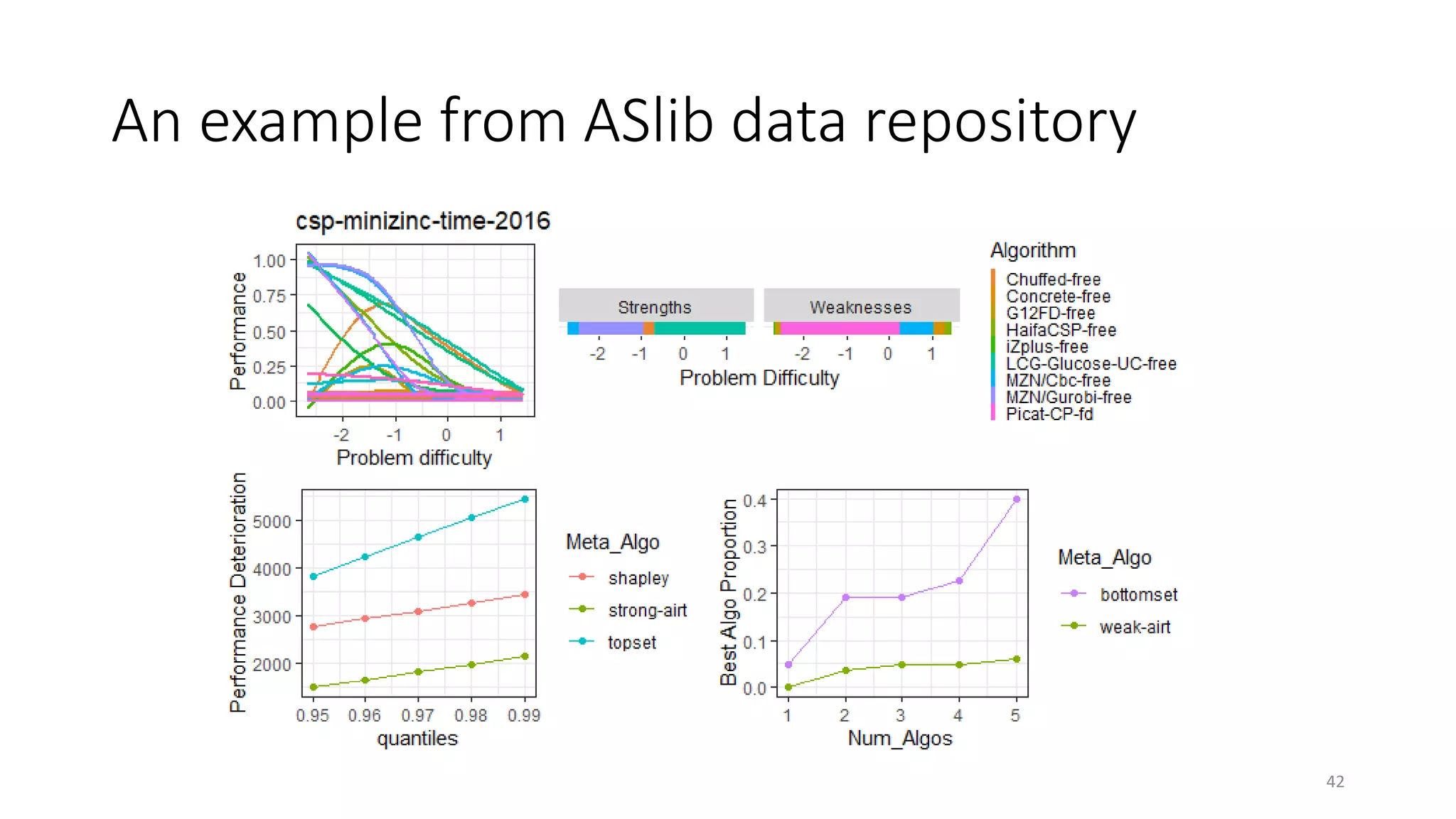

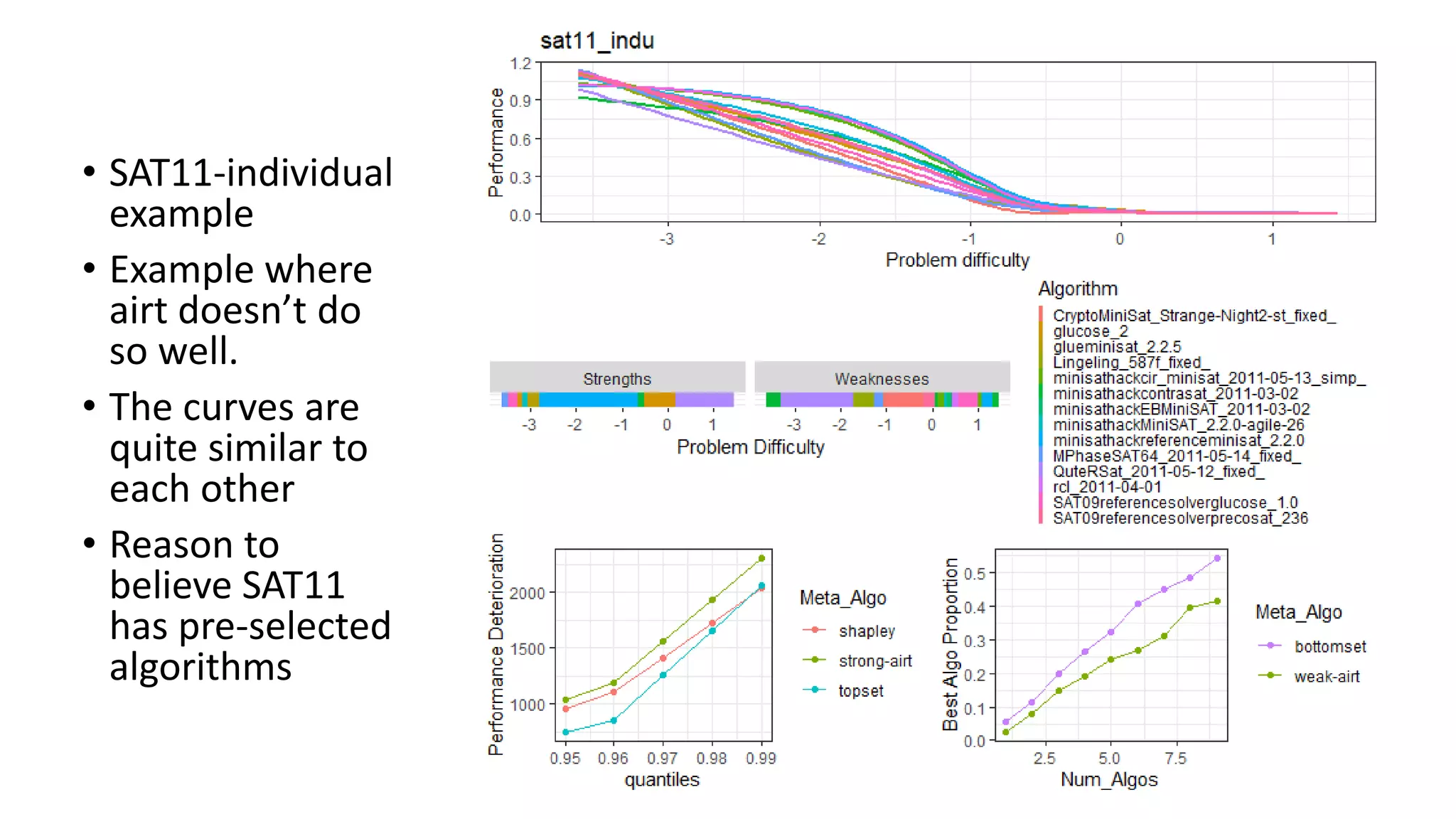

The document discusses integrating models from educational measurement, specifically Item Response Theory (IRT), into the evaluation of algorithms, in the context of explainable artificial intelligence (XAI). It outlines how IRT can characterize an algorithm by measuring how anomalous it is, how consistent it is, and where its difficulty limit lies, giving a clearer picture of its strengths and weaknesses. Finally, it presents the 'airt' framework for selecting diverse algorithm portfolios that cover a range of datasets and problems.
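
To make the IRT idea in the summary above more concrete, here is a minimal sketch of the textbook two-parameter logistic (2PL) item characteristic curve. It is not taken from the slides and is not the 'airt' implementation; the function name `icc_2pl` and all parameter values are hypothetical and chosen only for illustration. Linking a negative discrimination to "anomalous" behaviour and a near-zero discrimination to "consistent" behaviour is an assumption about how the summary's terms relate to standard IRT parameters.

```python
import math


def icc_2pl(theta, discrimination, difficulty):
    """Textbook 2PL curve: probability of success at latent trait value theta."""
    return 1.0 / (1.0 + math.exp(-discrimination * (theta - difficulty)))


# Hypothetical latent trait values (low, medium, high).
traits = [-2.0, 0.0, 2.0]

# Positive discrimination: success probability rises with the trait.
typical = [icc_2pl(t, 1.5, 0.0) for t in traits]

# Near-zero discrimination: the curve is almost flat, so behaviour
# barely changes across the trait range (assumed reading of "consistent").
flat = [icc_2pl(t, 0.1, 0.0) for t in traits]

# Negative discrimination: the curve falls as the trait rises
# (assumed reading of "anomalous").
inverted = [icc_2pl(t, -1.5, 0.0) for t in traits]

for name, curve in [("typical", typical), ("flat", flat), ("inverted", inverted)]:
    print(name, [round(p, 3) for p in curve])
```

Comparing the three printed curves shows how the sign and magnitude of a single discrimination parameter change the shape of the response, which is the kind of information an IRT-based evaluation can read off for each algorithm.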