The document discusses the importance of data quality in big data environments, highlighting the need for correct, complete, timely, and consistent data to support analytics and decision-making. It outlines various strategies for ensuring data quality, including testing methodologies, monitoring tools, and the integration of robust data pipelines. Additionally, it emphasizes the cultural shift required for continuous improvement in data quality practices, drawing parallels with historical trends in code quality.

![www.scling.com

class CleanUserTest extends FlatSpec {
  def validateInvariants(
      input: Seq[User],
      output: Seq[User],
      counters: Map[String, Int]): Unit = {
    // Record invariants
    output.foreach(recordInvariant)
    // Dataset invariants
    assert(input.size === output.size)
    assert(input.size >= counters("upper-cased"))
  }
  def recordInvariant(u: User): Unit =
    assert(u.country.size === 2)
  def runJob(input: Seq[User]): (Seq[User], Map[String, Int]) = {
    // Same as before
    ...
    validateInvariants(input, output, counters)
    (output, counters)
  }
  // Test case is the same
}

Invariants

● Some things are true

○ For every record

○ For every job invocation

● Not necessarily in production

○ Reuse invariant predicates as quality probes](https://image.slidesharecdn.com/engineeringdataquality-191108212359/75/Engineering-data-quality-24-2048.jpg)
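The invariant idea can be sketched in Python. This is a minimal sketch, assuming a hypothetical `User` record with an `id` and a two-letter `country` and a hypothetical `"upper-cased"` counter, as in the Scala test above. The same predicates back a test helper that asserts and a production probe that counts violations instead of failing; all function names here are illustrative and not from the original.

```python
from dataclasses import dataclass
from typing import Dict, List, Sequence


@dataclass(frozen=True)
class User:
    # Hypothetical record type mirroring the slide's User(id, country)
    id: int
    country: str


def record_invariant(u: User) -> bool:
    # Must hold for every record: country is a two-letter code
    return len(u.country) == 2


def dataset_invariant_failures(input_users: Sequence[User],
                               output_users: Sequence[User],
                               counters: Dict[str, int]) -> List[str]:
    # Must hold for every job invocation; returns names of broken invariants
    failures = []
    if len(input_users) != len(output_users):
        failures.append("size-preserved")
    if len(input_users) < counters.get("upper-cased", 0):
        failures.append("upper-cased-bounded")
    return failures


def validate_in_test(input_users: Sequence[User],
                     output_users: Sequence[User],
                     counters: Dict[str, int]) -> None:
    # In tests: any broken invariant fails the test immediately
    assert all(record_invariant(u) for u in output_users)
    assert dataset_invariant_failures(input_users, output_users, counters) == []


def quality_probe(input_users: Sequence[User],
                  output_users: Sequence[User],
                  counters: Dict[str, int]) -> Dict[str, int]:
    # In production: reuse the same predicates, but count violations
    # instead of failing, so they can be reported as quality metrics
    probe = {"record-invariant-violations":
             sum(1 for u in output_users if not record_invariant(u))}
    for name in dataset_invariant_failures(input_users, output_users, counters):
        probe["dataset-invariant-" + name] = 1
    return probe
```

For example, `quality_probe` on an output containing `User(2, "GBR")` reports one record-invariant violation while the dataset invariants still hold. Counting rather than asserting is what makes the predicates safe to run against production data.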

![Measuring correctness: counters

● User-defined

● Technical from framework

○ Execution time

○ Memory consumption

○ Data volumes

○ ...

case class Order(item: ItemId, userId: UserId)
case class User(id: UserId, country: String)
val orders = read(orderPath)
val users = read(userPath)
val orderNoUserCounter = longAccumulator("order-no-user")
val joined: C[(Order, Option[User])] = orders
  .groupBy(_.userId)
  .leftJoin(users.groupBy(_.id))
  .values
val orderWithUser: C[(Order, User)] = joined
  .flatMap {
    case (order, Some(user)) => Some((order, user))
    case (order, None) =>
      // Count orders without a matching user instead of dropping them silently
      orderNoUserCounter.add(1)
      None
  }

SQL: Nope](https://image.slidesharecdn.com/engineeringdataquality-191108212359/75/Engineering-data-quality-25-2048.jpg)
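The counter pattern above translates to plain Python roughly as follows. This is a sketch, assuming in-memory lists instead of a distributed collection, unique user ids, and a `collections.Counter` standing in for the Spark `longAccumulator`; `join_orders_with_users` and the record fields are hypothetical names.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class Order:
    item: str
    user_id: int


@dataclass(frozen=True)
class User:
    id: int
    country: str


def join_orders_with_users(orders: Iterable[Order], users: Iterable[User],
                           counters: Counter) -> List[Tuple[Order, User]]:
    # Index users by id (assumes ids are unique)
    users_by_id = {u.id: u for u in users}
    joined: List[Tuple[Order, User]] = []
    for order in orders:
        # Left join: the user side may be missing
        user: Optional[User] = users_by_id.get(order.user_id)
        if user is None:
            # Count the dropped order so the loss is visible as a metric
            counters["order-no-user"] += 1
        else:
            joined.append((order, user))
    return joined
```

Orders without a matching user are still dropped, but the `order-no-user` count can be compared with the total number of orders to judge whether the loss is acceptable.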

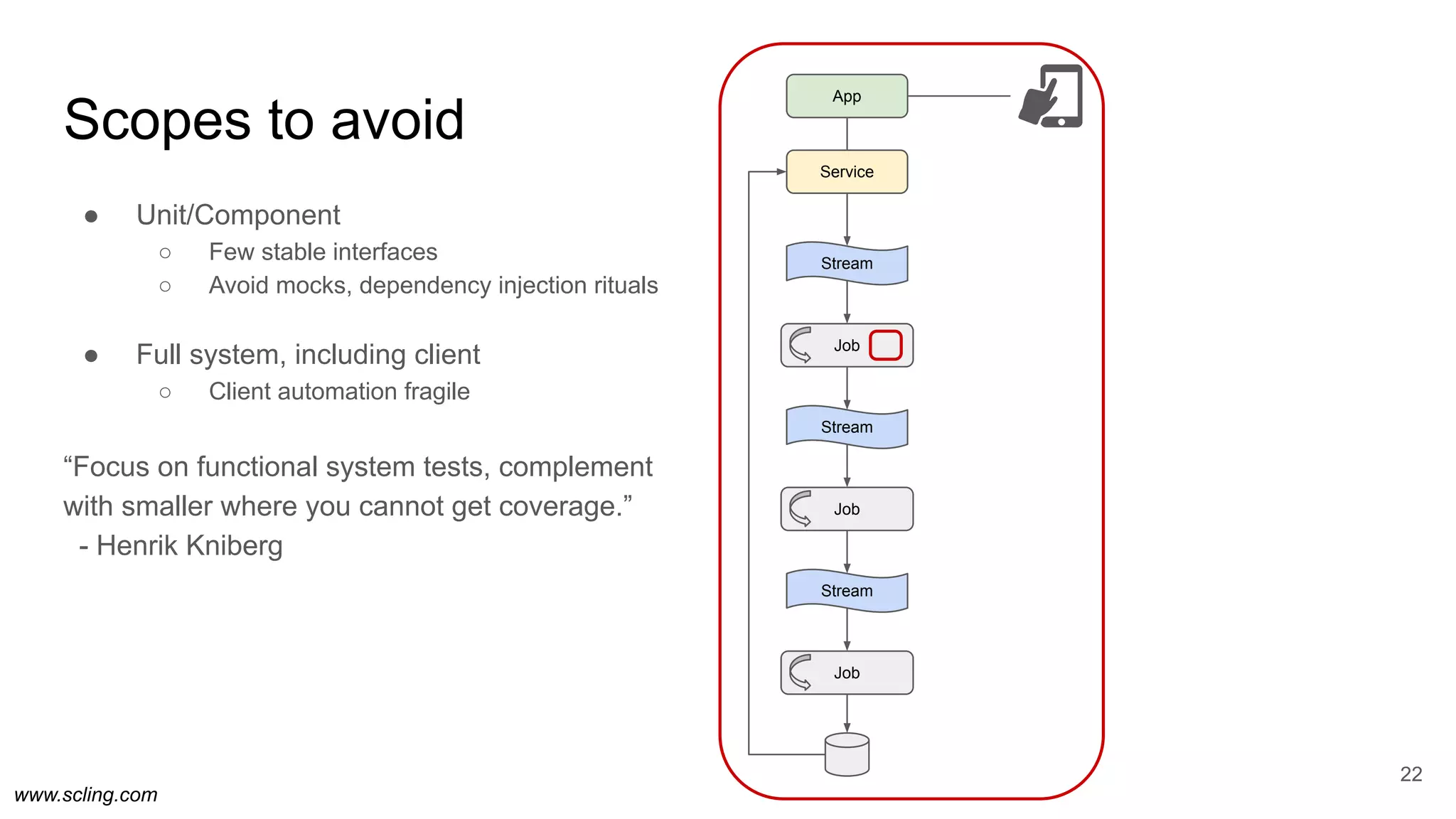

![

val orderLateCounter = longAccumulator("order-event-late")
val hourPaths = conf.order.split(",")
val order = hourPaths
  .map(spark.read.json(_))
  .reduce((a, b) => a.union(b))
val orderThisHour = order
  .map({ cl =>
    // Count the events that came after the delay window
    if (cl.eventTime.hour + config.delayHours < config.hour) {
      orderLateCounter.add(1)
    }
    cl
  })
  .filter(cl => cl.eventTime.hour == config.hour)

from datetime import timedelta
from luigi import DateHourParameter, IntParameter
from luigi.contrib.hdfs import HdfsTarget
from luigi.contrib.spark import SparkSubmitTask
class OrderShuffle(SparkSubmitTask):
    hour = DateHourParameter()
    delay_hours = IntParameter()
    app = 'orderpipeline.jar'
    entry_class = 'com.example.shop.OrderJob'
    def requires(self):
        # Note: waiting for the following delay_hours hourly partitions
        # delays processing by delay_hours hours.
        return [Order(hour=hour) for hour in
                [self.hour + timedelta(hours=h)
                 for h in range(self.delay_hours + 1)]]
    def output(self):
        return HdfsTarget("/prod/red/order/v1/"
                          f"delay={self.delay_hours}/"
                          f"{self.hour:%Y/%m/%d/%H}/")
    def app_options(self):
        return ["--hour", str(self.hour),
                "--delay-hours", str(self.delay_hours),
                "--order",
                ",".join([i.path for i in self.input()]),
                "--output", self.output().path]

Incompleteness recovery

SQL: Separate job for measuring window leakage.](https://image.slidesharecdn.com/engineeringdataquality-191108212359/75/Engineering-data-quality-36-2048.jpg)
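Window leakage can be measured separately from the pipeline itself. The sketch below assumes that each event records both its event time and the hourly arrival partition it landed in, and that the job for hour H with delay D reads arrival partitions H through H + D; `OrderEvent`, `input_hours`, `is_late`, and `window_leakage` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, List


@dataclass(frozen=True)
class OrderEvent:
    event_time: datetime    # when the order happened
    arrival_hour: datetime  # hourly partition the event landed in (assumed field)


def hour_of(t: datetime) -> datetime:
    # Truncate a timestamp to the start of its hour
    return t.replace(minute=0, second=0, microsecond=0)


def input_hours(hour: datetime, delay_hours: int) -> List[datetime]:
    # Arrival partitions read by the job for `hour`: the hour itself
    # plus the following delay_hours hours
    return [hour + timedelta(hours=h) for h in range(delay_hours + 1)]


def is_late(event: OrderEvent, delay_hours: int) -> bool:
    # An event leaks out of its window if the job for its own event hour
    # never reads the partition it arrived in
    return event.arrival_hour not in input_hours(hour_of(event.event_time), delay_hours)


def window_leakage(events: Iterable[OrderEvent], delay_hours: int) -> float:
    # Fraction of events that a pipeline waiting delay_hours hours misses
    materialized = list(events)
    if not materialized:
        return 0.0
    late = sum(1 for e in materialized if is_late(e, delay_hours))
    return late / len(materialized)
```

Running `window_leakage` for several candidate delays on historical data gives an empirical basis for choosing `delay_hours`.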

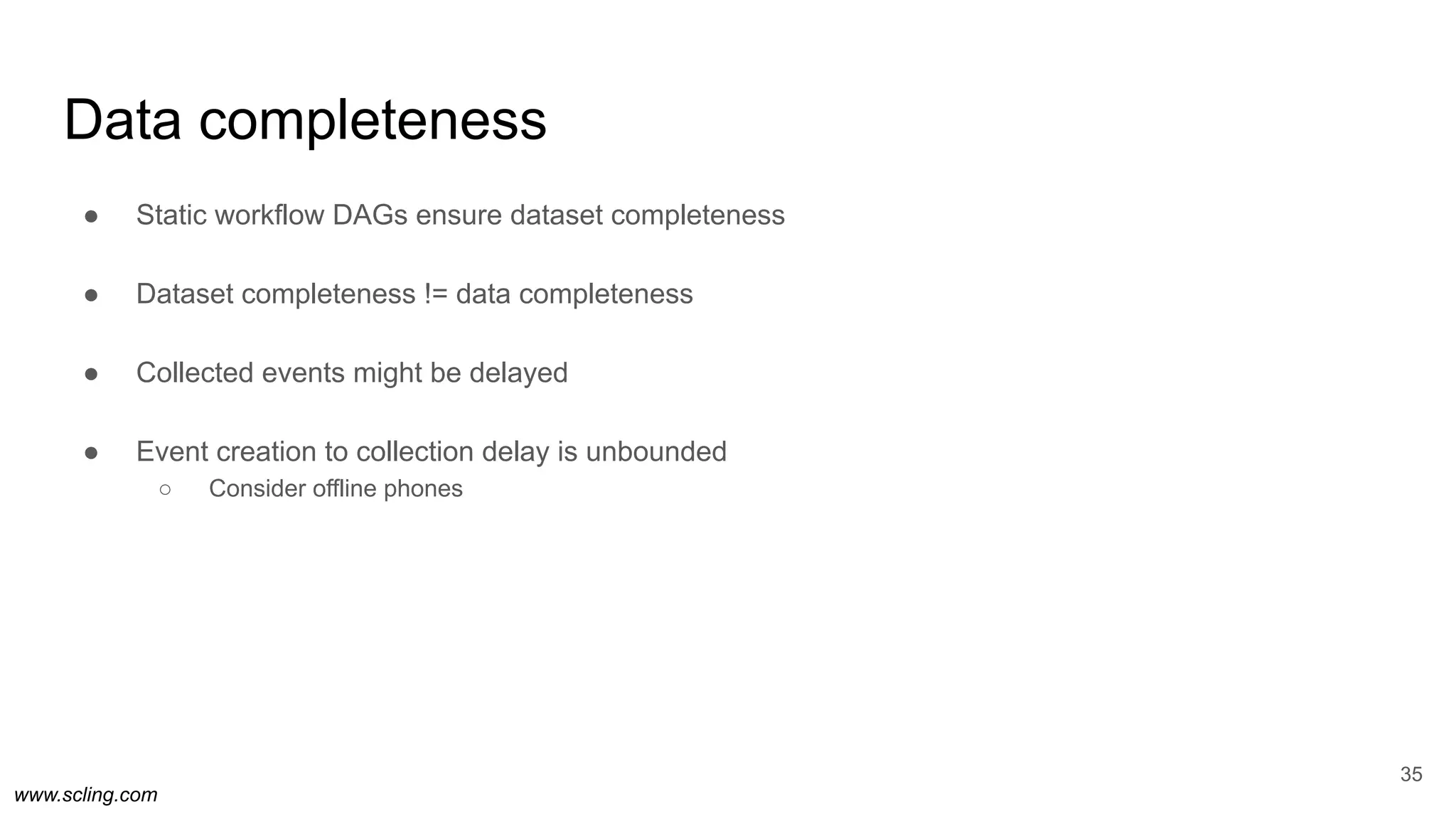

![

from datetime import datetime, time
from luigi import DateHourParameter, DateParameter, WrapperTask
from luigi.contrib import mysqldb as mysql
from luigi.contrib.spark import SparkSubmitTask
class OrderShuffleAll(WrapperTask):
    hour = DateHourParameter()
    def requires(self):
        return [OrderShuffle(hour=self.hour, delay_hours=d)
                for d in [0, 4, 12]]
class OrderDashboard(mysql.CopyToTable):
    hour = DateHourParameter()
    def requires(self):
        return OrderShuffle(hour=self.hour, delay_hours=0)
class FinancialReport(SparkSubmitTask):
    date = DateParameter()
    def requires(self):
        return [OrderShuffle(
                    hour=datetime.combine(self.date, time(hour=h)),
                    delay_hours=12)
                for h in range(24)]

Fast data, complete data

Delay: 0

Delay: 4

Delay: 12](https://image.slidesharecdn.com/engineeringdataquality-191108212359/75/Engineering-data-quality-37-2048.jpg)
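The trade-off between the three delay variants can be made concrete by computing when each output can first be produced. This sketch assumes that an hourly partition is complete at the end of its hour and that a delayed shuffle for hour H reads partitions H through H + D, so it is ready at H + D + 1; `available_at`, `variants_ready`, `dashboard_ready`, and `financial_report_ready` are hypothetical names.

```python
from datetime import date, datetime, time, timedelta
from typing import Dict, List

# Delay variants built by OrderShuffleAll on the slide
DELAYS: List[int] = [0, 4, 12]


def available_at(hour: datetime, delay_hours: int) -> datetime:
    # The last input partition starts at hour + delay_hours and is
    # assumed complete one hour later
    return hour + timedelta(hours=delay_hours + 1)


def variants_ready(hour: datetime) -> Dict[int, datetime]:
    # Earliest availability of each delay variant for one hour
    return {d: available_at(hour, d) for d in DELAYS}


def dashboard_ready(hour: datetime) -> datetime:
    # OrderDashboard uses the delay 0 variant: fast, possibly incomplete
    return available_at(hour, 0)


def financial_report_ready(day: date) -> datetime:
    # FinancialReport needs all 24 hours of the day at delay 12:
    # slower, but it misses fewer late events
    hours = [datetime.combine(day, time(hour=h)) for h in range(24)]
    return max(available_at(h, 12) for h in hours)
```

Under these assumptions, the dashboard can show orders from the 10:00 hour at 11:00, whereas the financial report for the whole day waits until 12:00 the following day.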