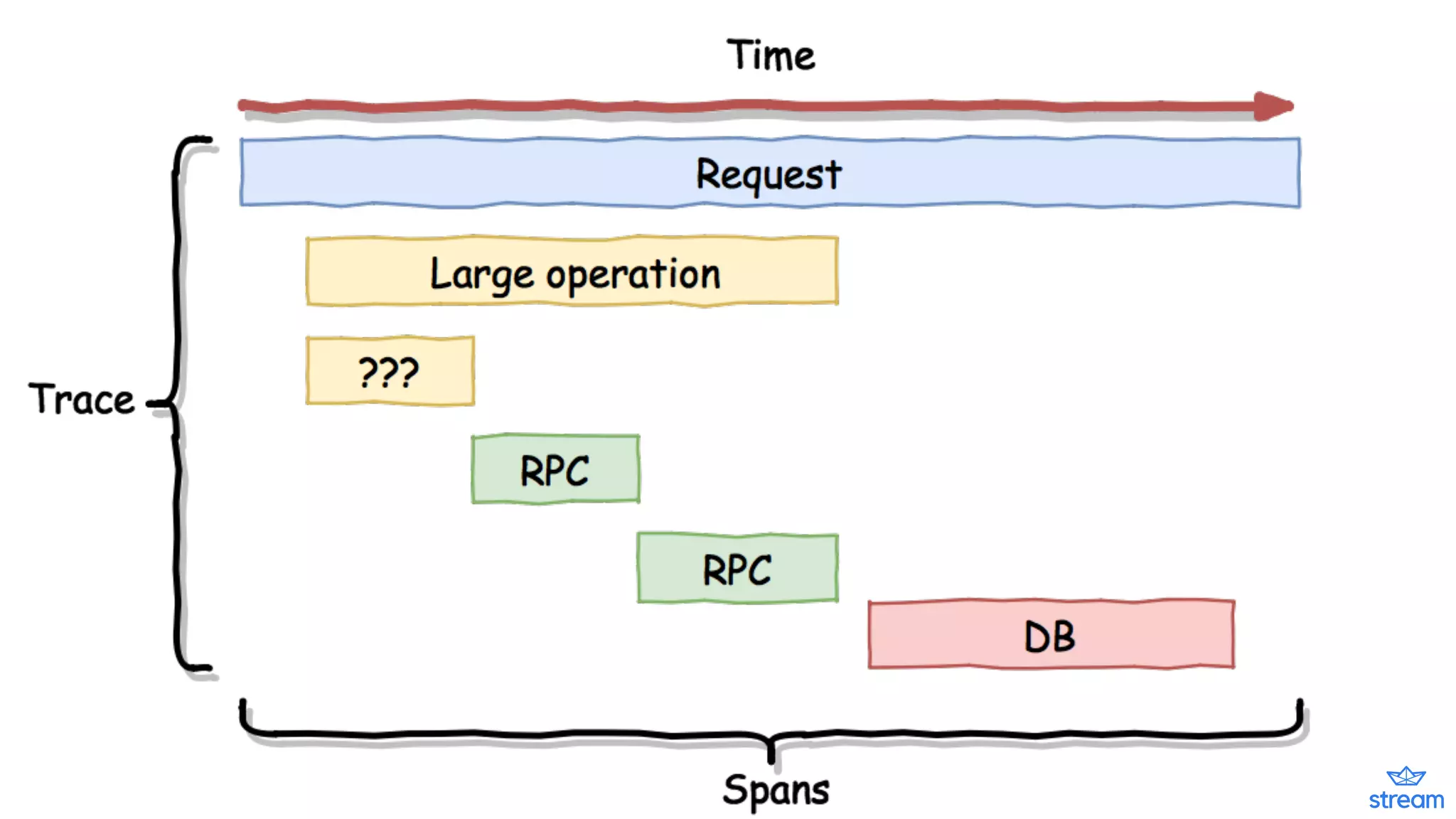

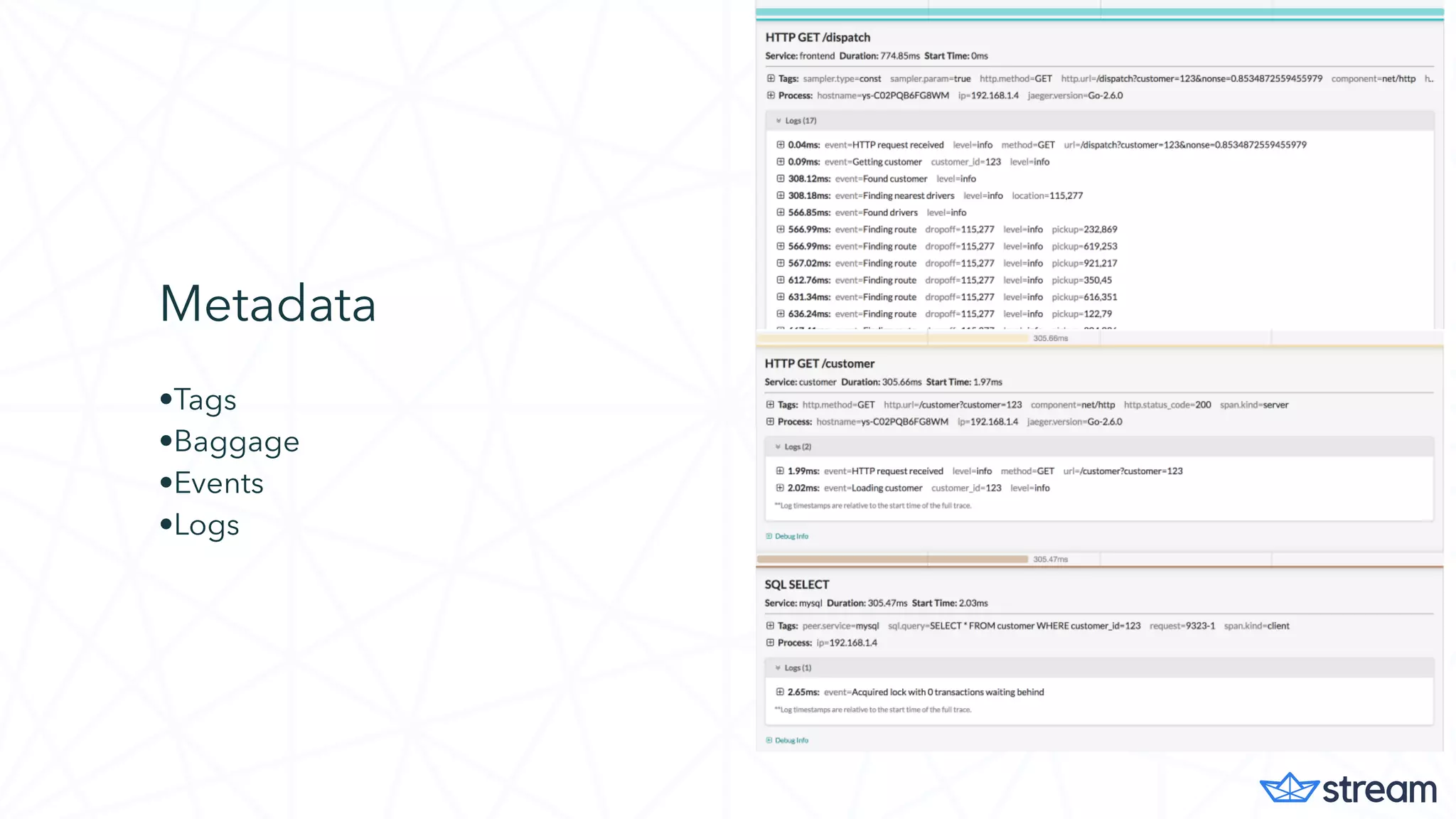

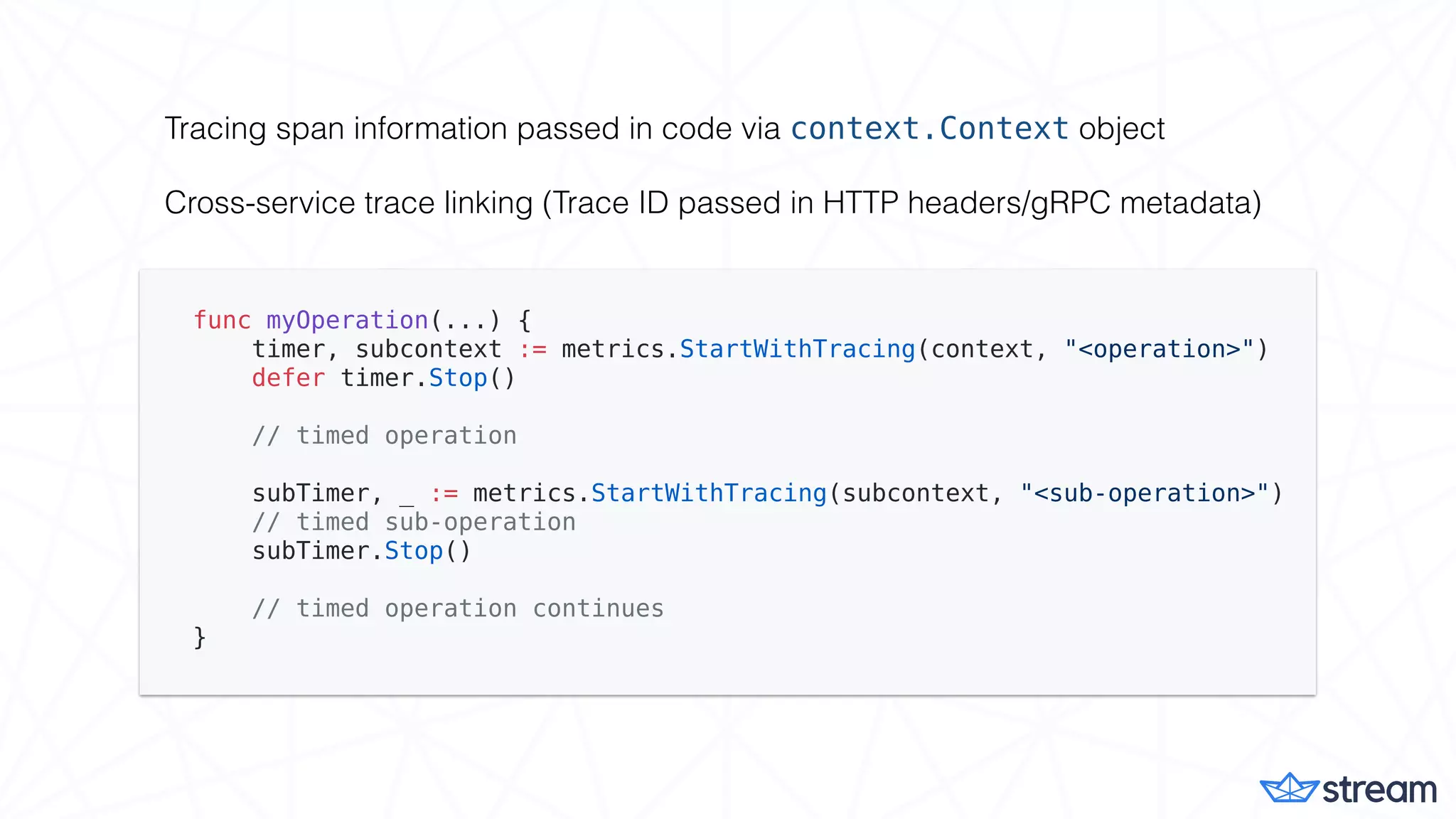

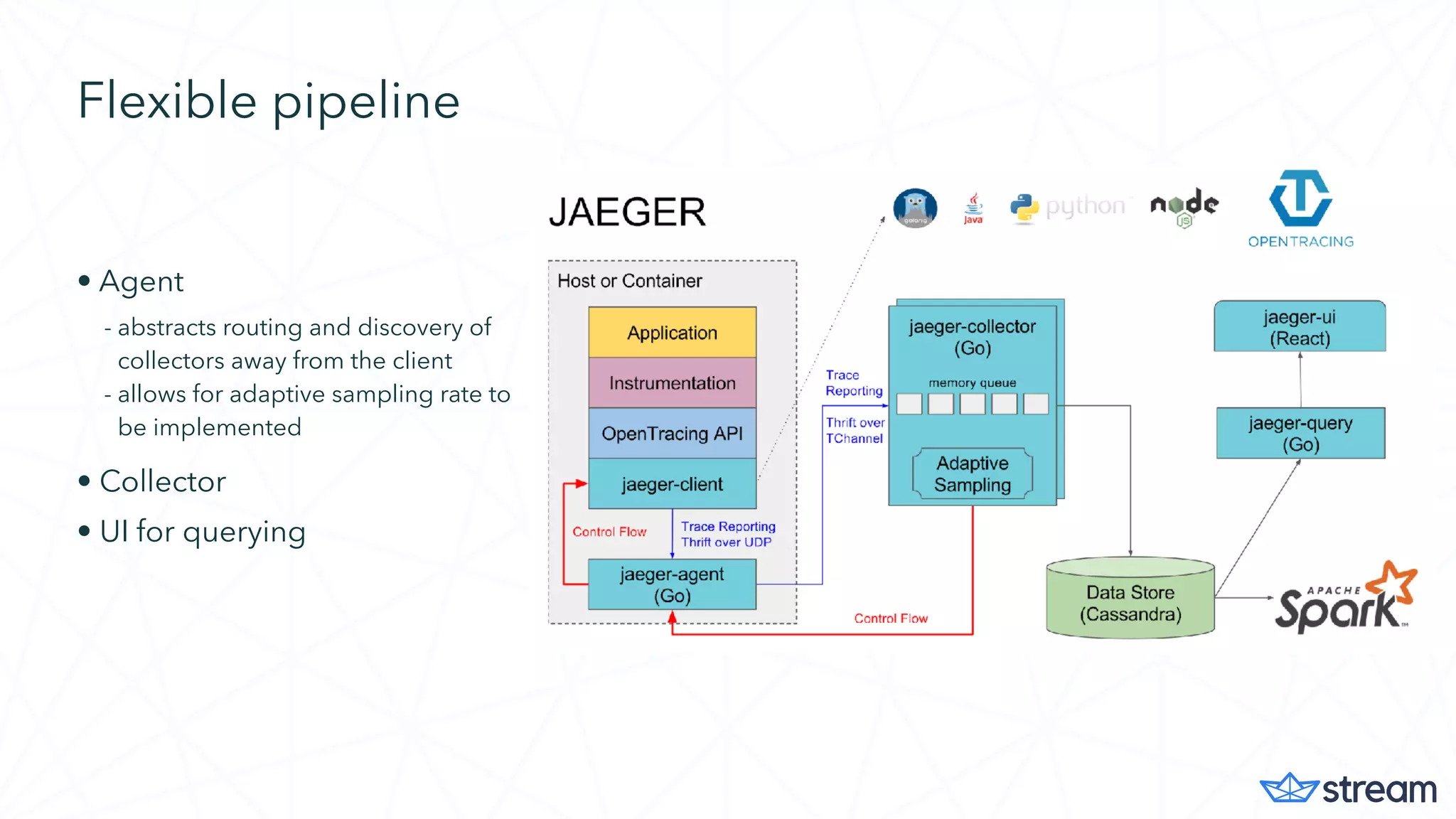

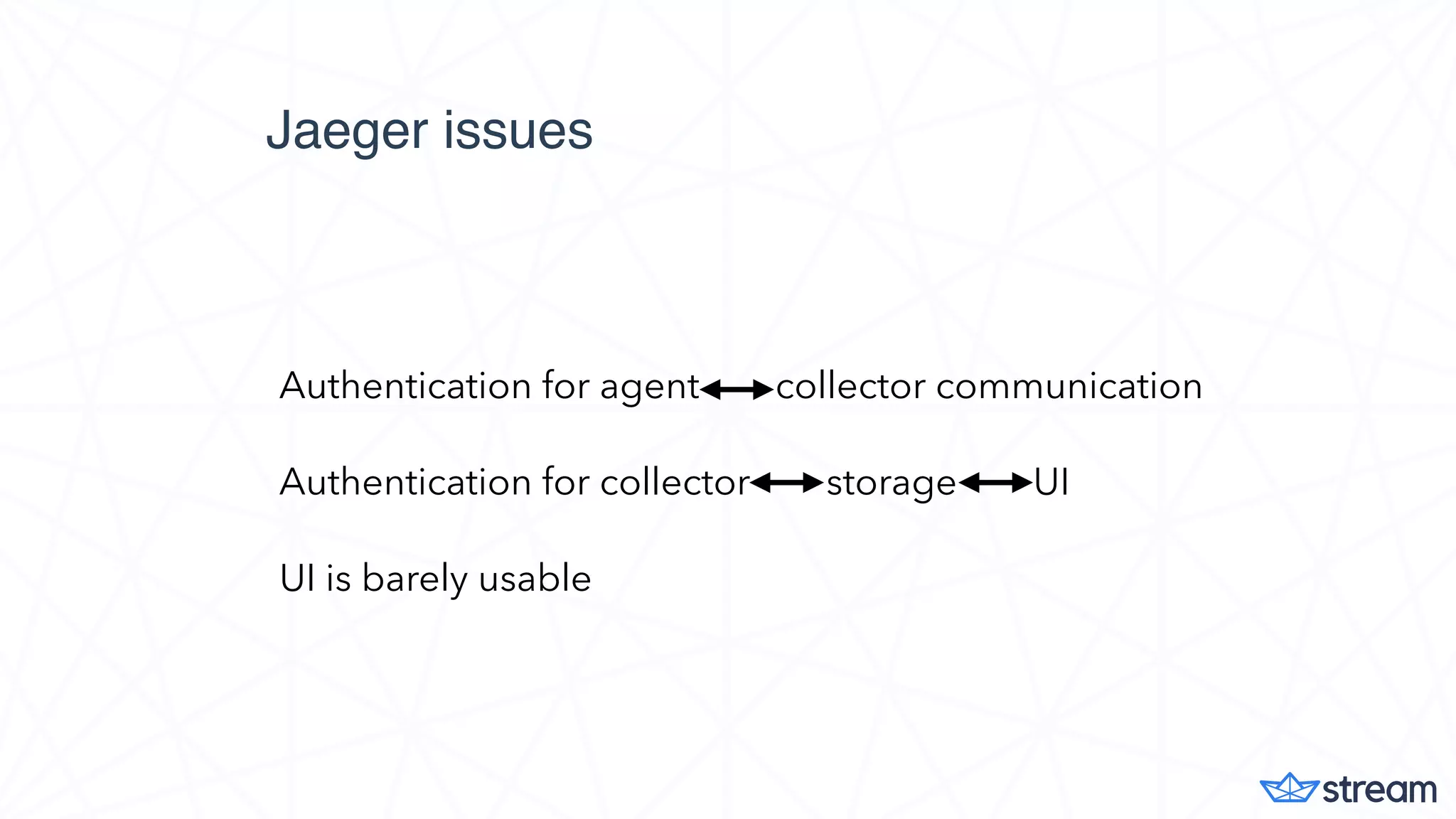

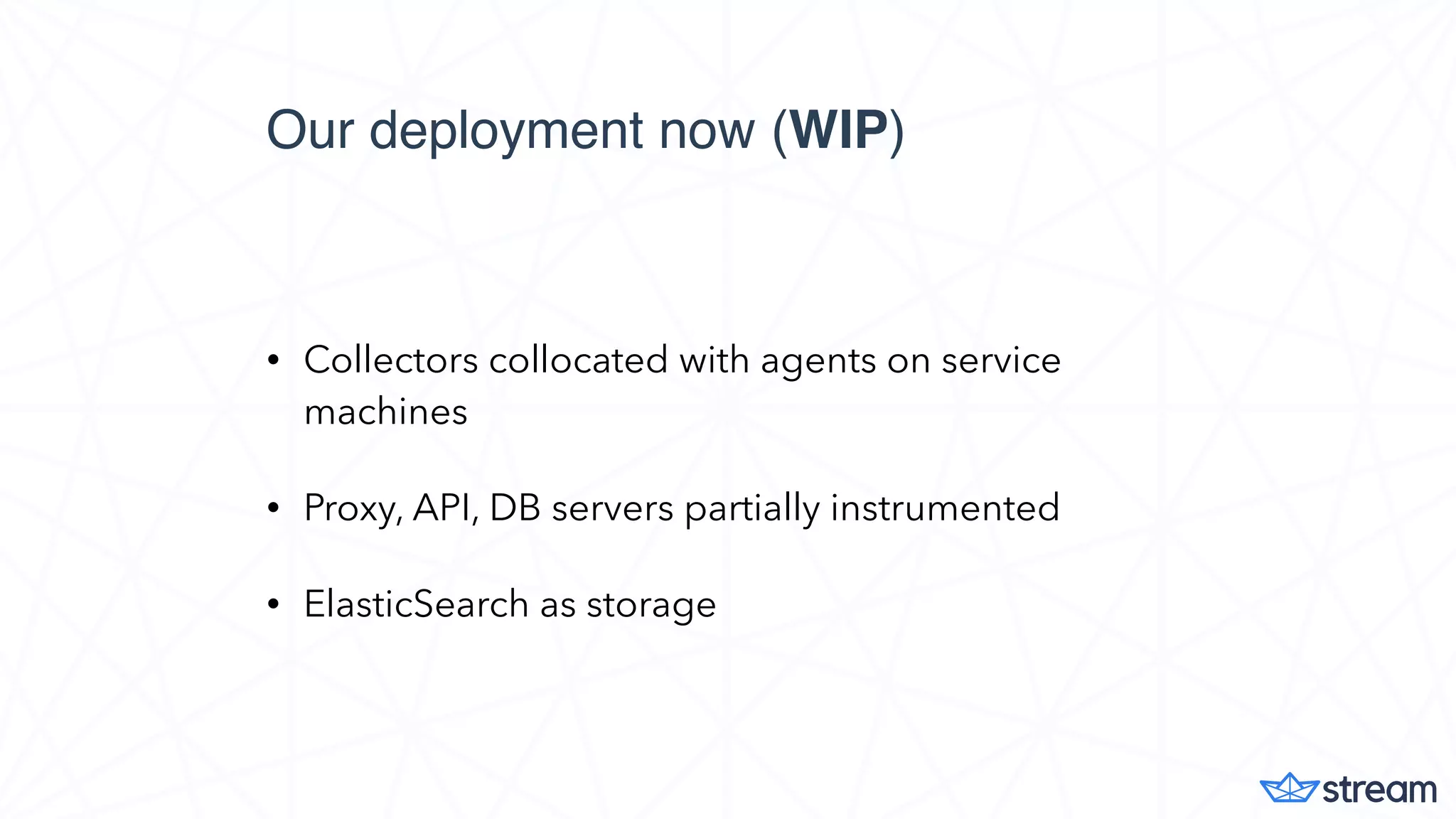

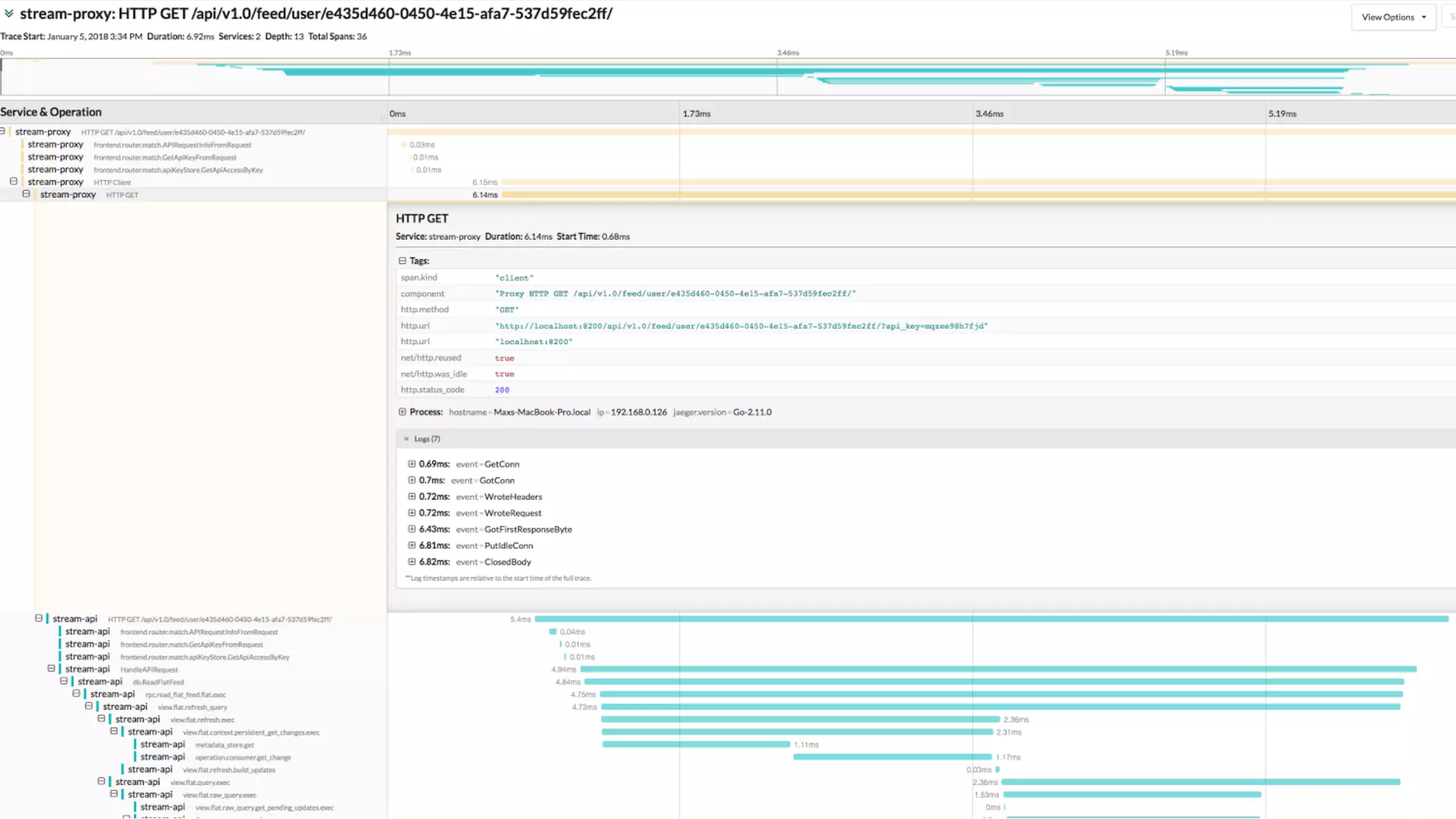

The document covers distributed tracing with Jaeger and OpenTracing as a way to analyze slow requests and locate performance bottlenecks in microservices. It stresses that every request should carry a unique trace ID across service boundaries, so that the metadata each service records can be tied back to the same request, and it addresses the challenges of aggregating that data and keeping it visible across integrated services. Jaeger, released by Uber Technologies, supports this tracing with features such as a flexible pipeline and a user interface, though the document notes usability concerns.
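The idea of passing a trace ID from service to service can be sketched in a few lines of plain Python. This is only an illustration and does not use the Jaeger client or the OpenTracing API: the names `SpanContext`, `start_root_span`, `start_child_span`, `inject`, and `extract` are hypothetical helpers invented for this example. The one detail taken from Jaeger is the `uber-trace-id` header, whose value has the form `{trace-id}:{span-id}:{parent-span-id}:{flags}`.

```python
import secrets
from dataclasses import dataclass
from typing import Dict, Optional

# Header name used by Jaeger's native propagation format.
TRACE_HEADER = "uber-trace-id"


@dataclass(frozen=True)
class SpanContext:
    """Hypothetical container for the identifiers that travel with a request."""
    trace_id: str   # shared by every span in the same request
    span_id: str    # unique to this unit of work
    parent_id: str  # span that caused this one ("0" for the root)
    flags: int = 1  # 1 = sampled (assumption: always sample in this sketch)


def new_id() -> str:
    """Return a random 64-bit identifier as 16 hex characters."""
    return secrets.token_hex(8)


def start_root_span() -> SpanContext:
    """Start a new trace at the edge of the system."""
    return SpanContext(trace_id=new_id(), span_id=new_id(), parent_id="0")


def start_child_span(parent: SpanContext) -> SpanContext:
    """Start a span in a downstream service, keeping the same trace ID."""
    return SpanContext(parent.trace_id, new_id(), parent.span_id, parent.flags)


def inject(ctx: SpanContext, headers: Dict[str, str]) -> None:
    """Write the context into outgoing request headers."""
    headers[TRACE_HEADER] = (
        f"{ctx.trace_id}:{ctx.span_id}:{ctx.parent_id}:{ctx.flags:x}"
    )


def extract(headers: Dict[str, str]) -> Optional[SpanContext]:
    """Read the context from incoming request headers, if present."""
    raw = headers.get(TRACE_HEADER)
    if raw is None:
        return None
    trace_id, span_id, parent_id, flags = raw.split(":")
    return SpanContext(trace_id, span_id, parent_id, int(flags, 16))


if __name__ == "__main__":
    # Service A starts the trace and calls service B.
    root = start_root_span()
    outgoing: Dict[str, str] = {}
    inject(root, outgoing)

    # Service B receives the headers and continues the same trace.
    incoming = extract(outgoing)
    child = start_child_span(incoming)
    print(child.trace_id == root.trace_id)
```

Because every downstream span reuses the trace ID and records its parent's span ID, a backend can group spans by trace and rebuild the call tree for a single slow request. In practice a tracing library would handle injection and extraction; the sketch only shows what travels on the wire.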