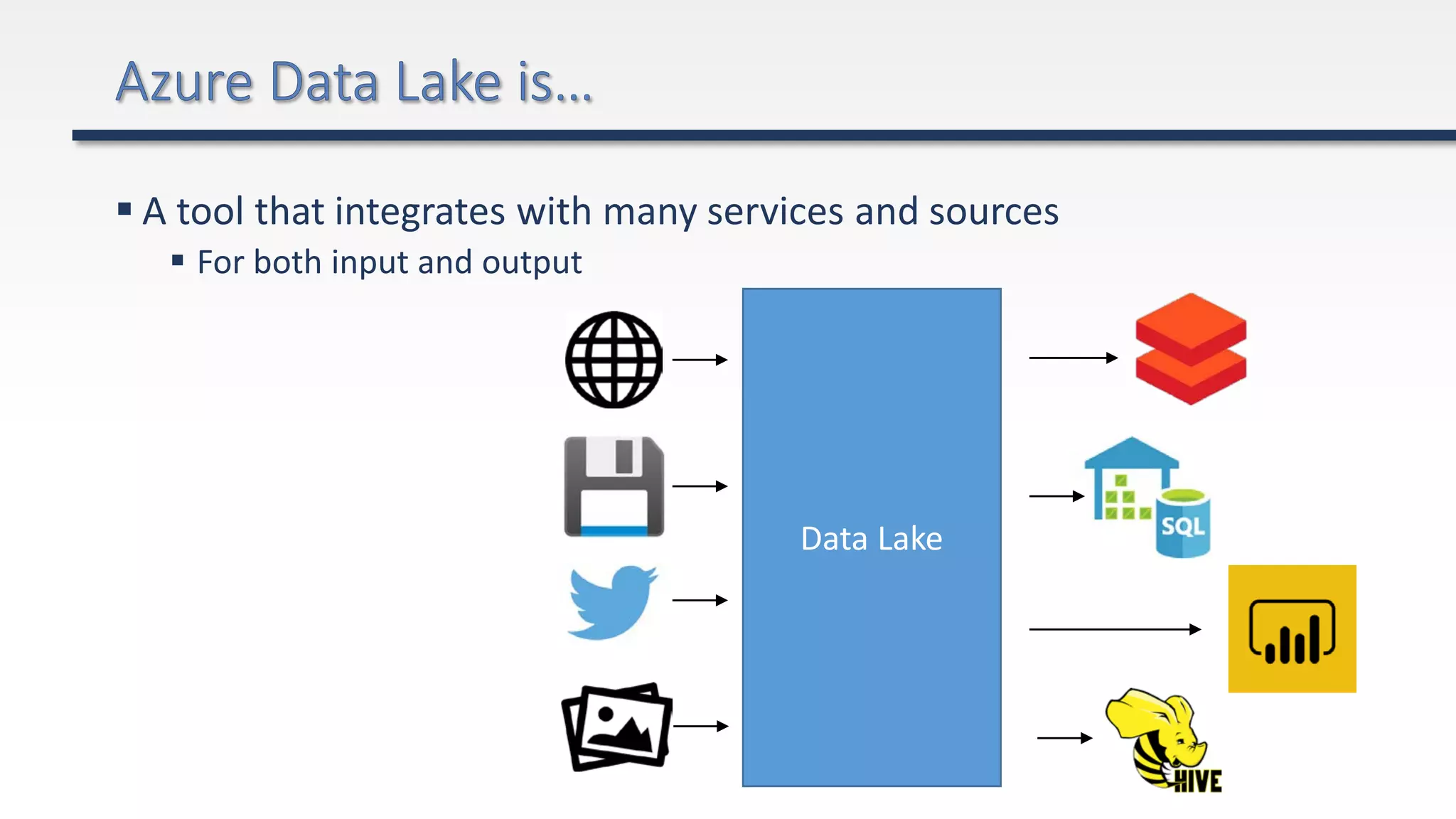

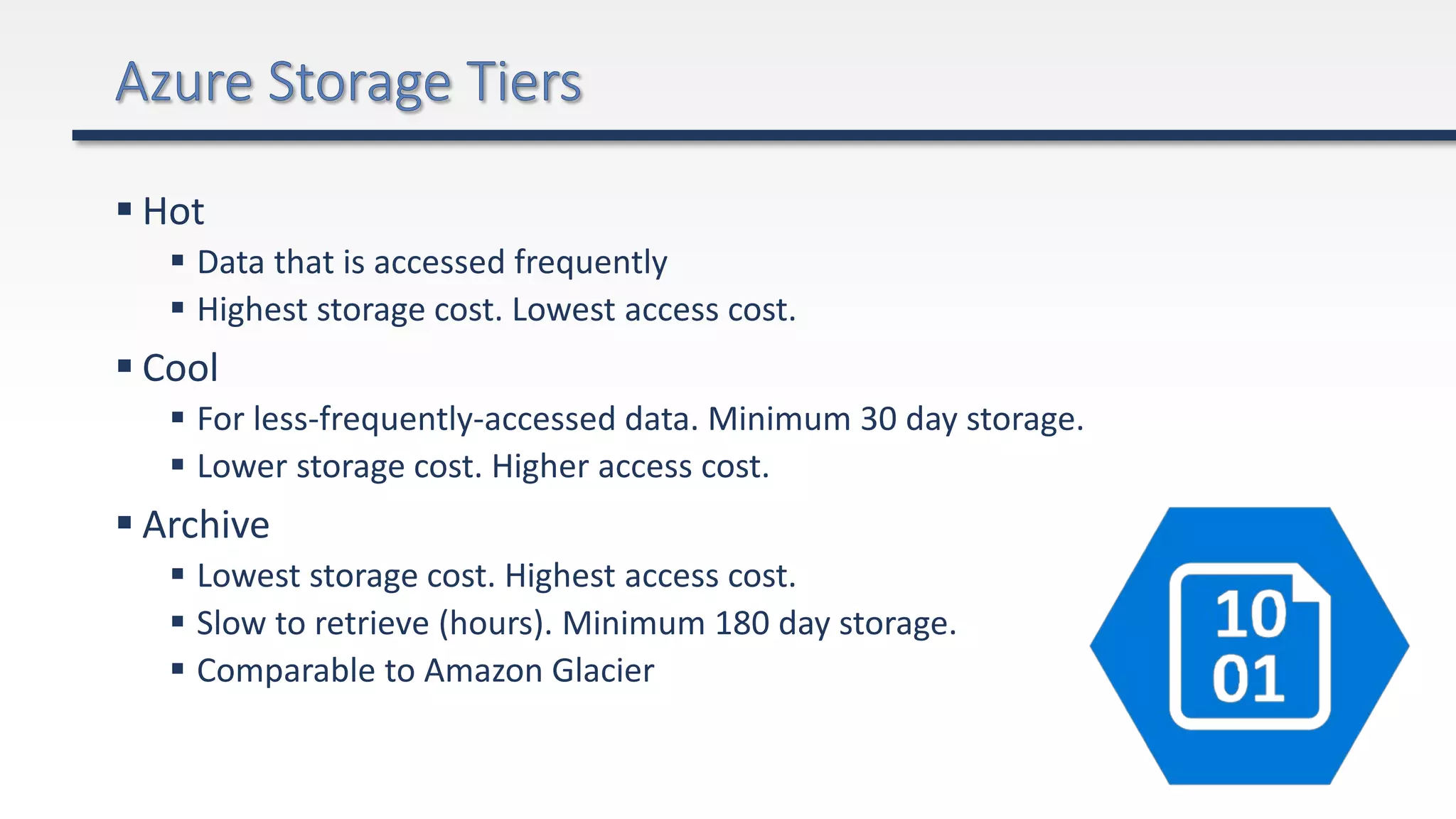

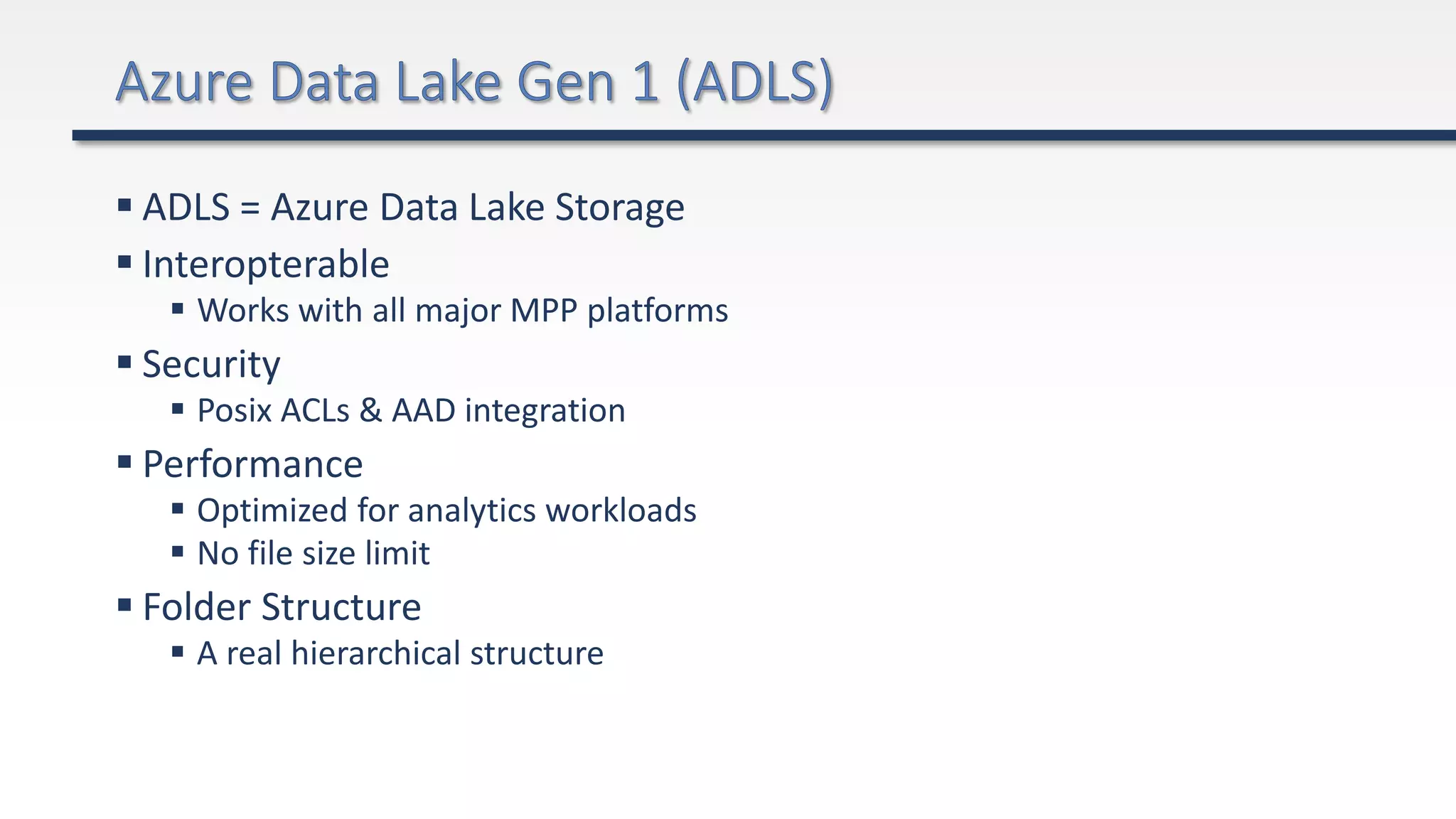

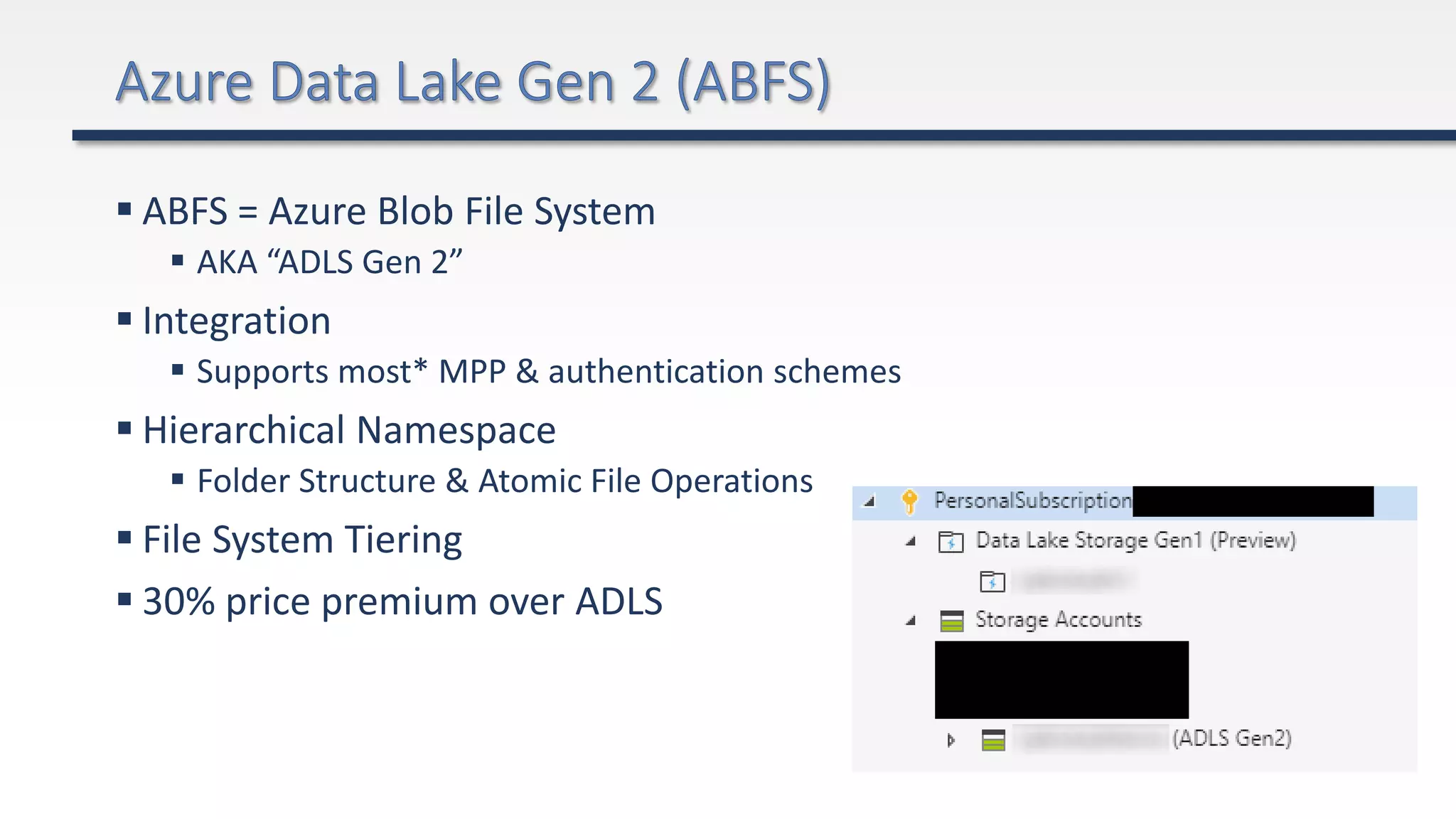

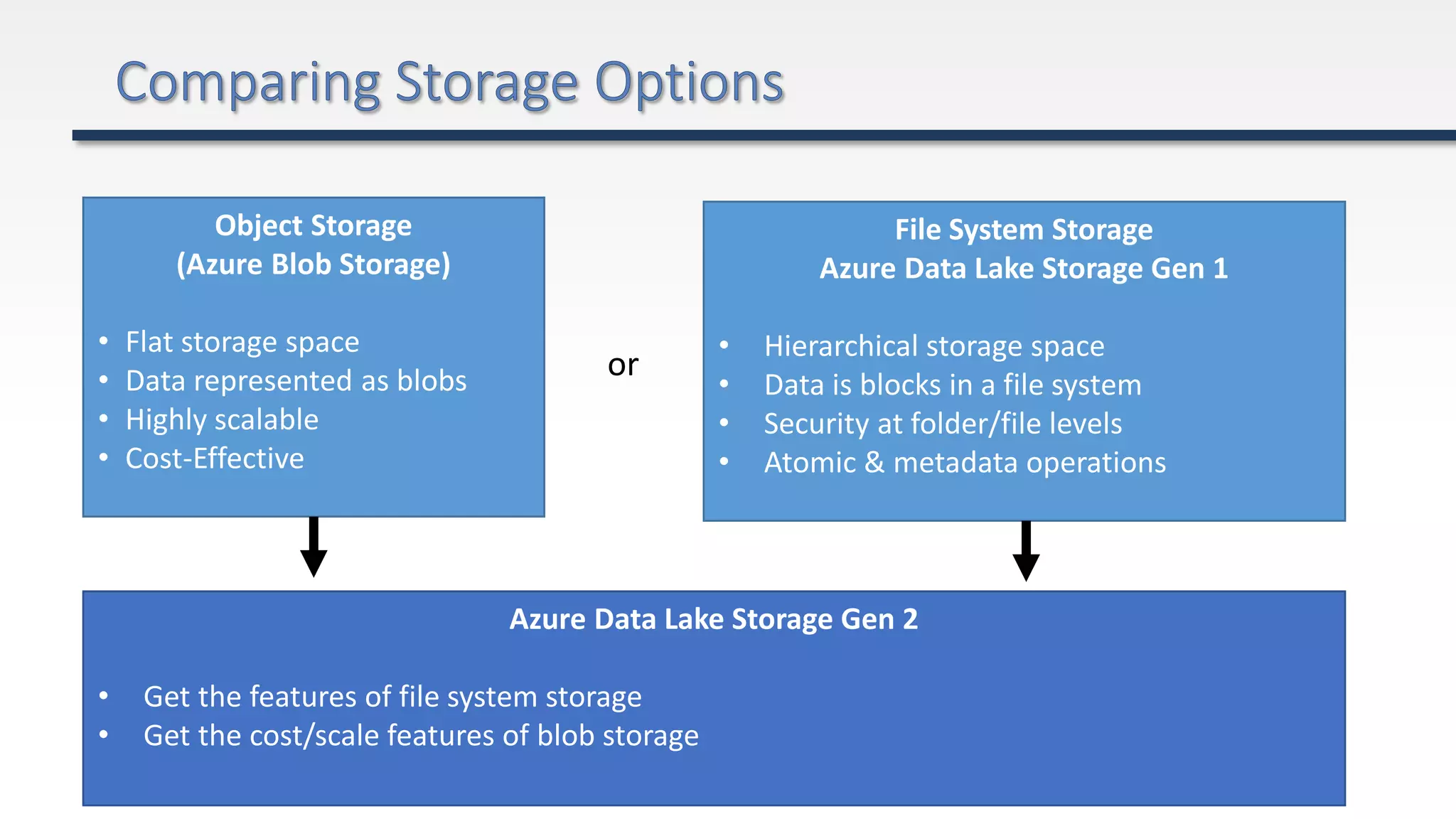

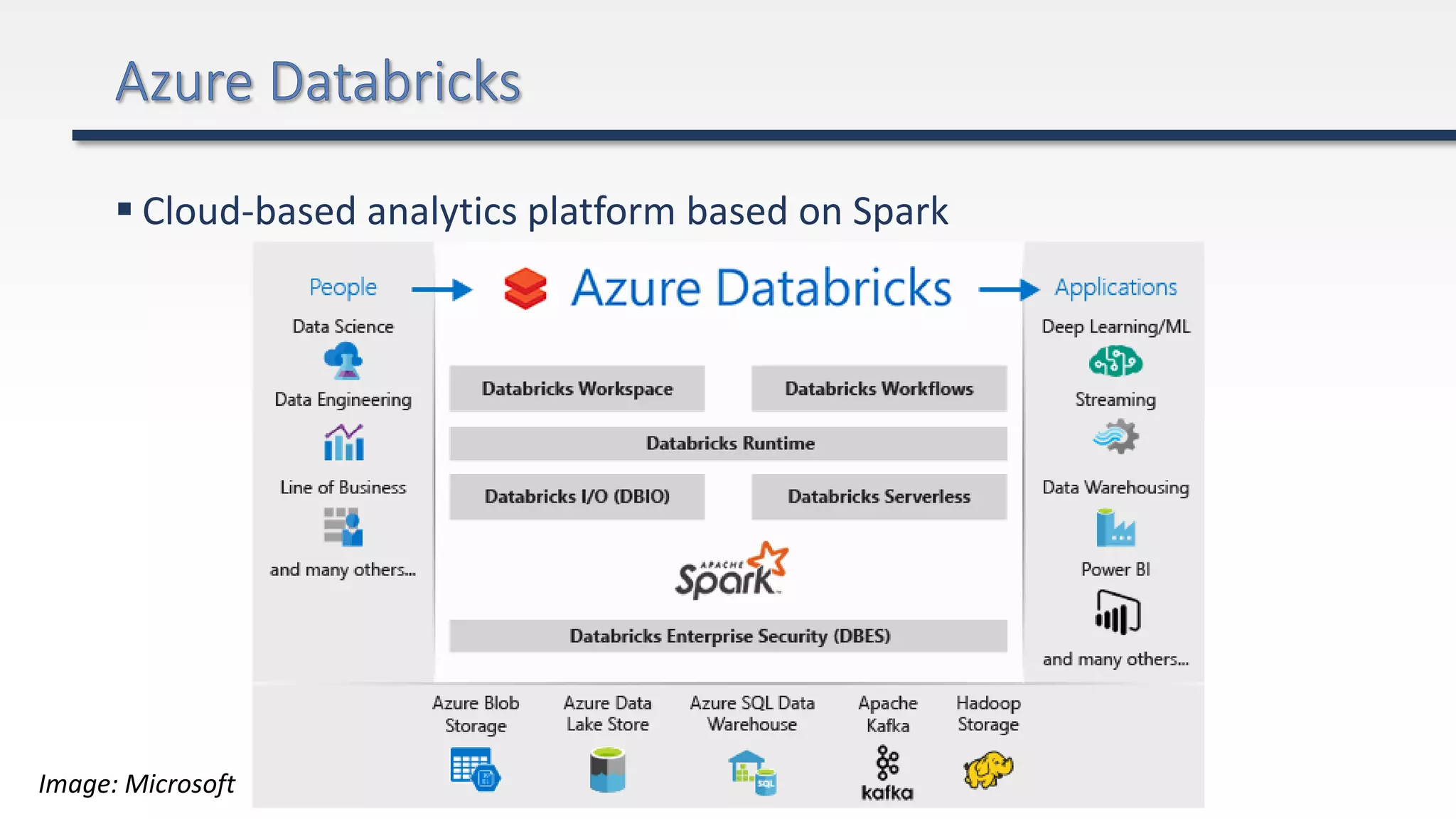

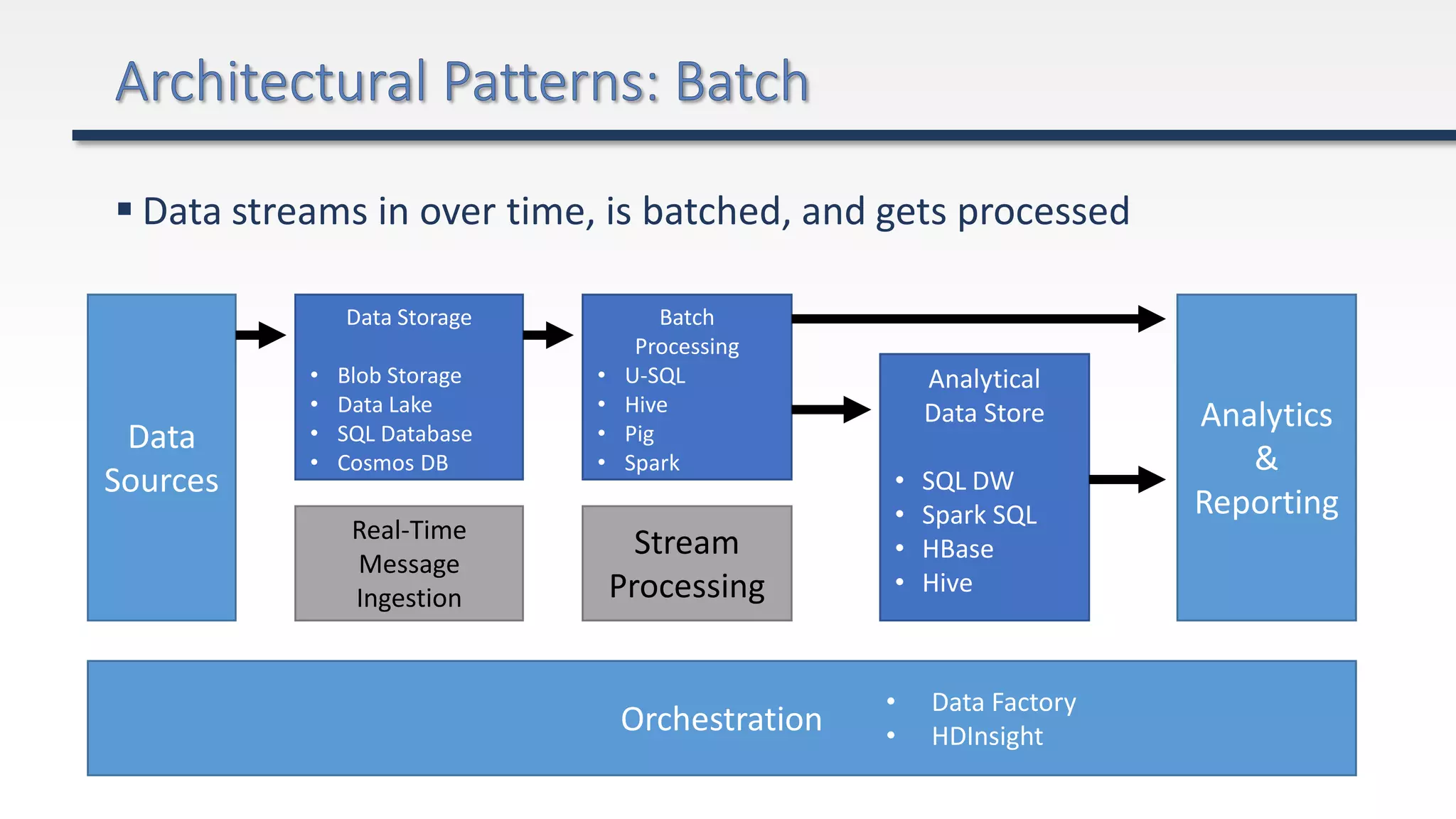

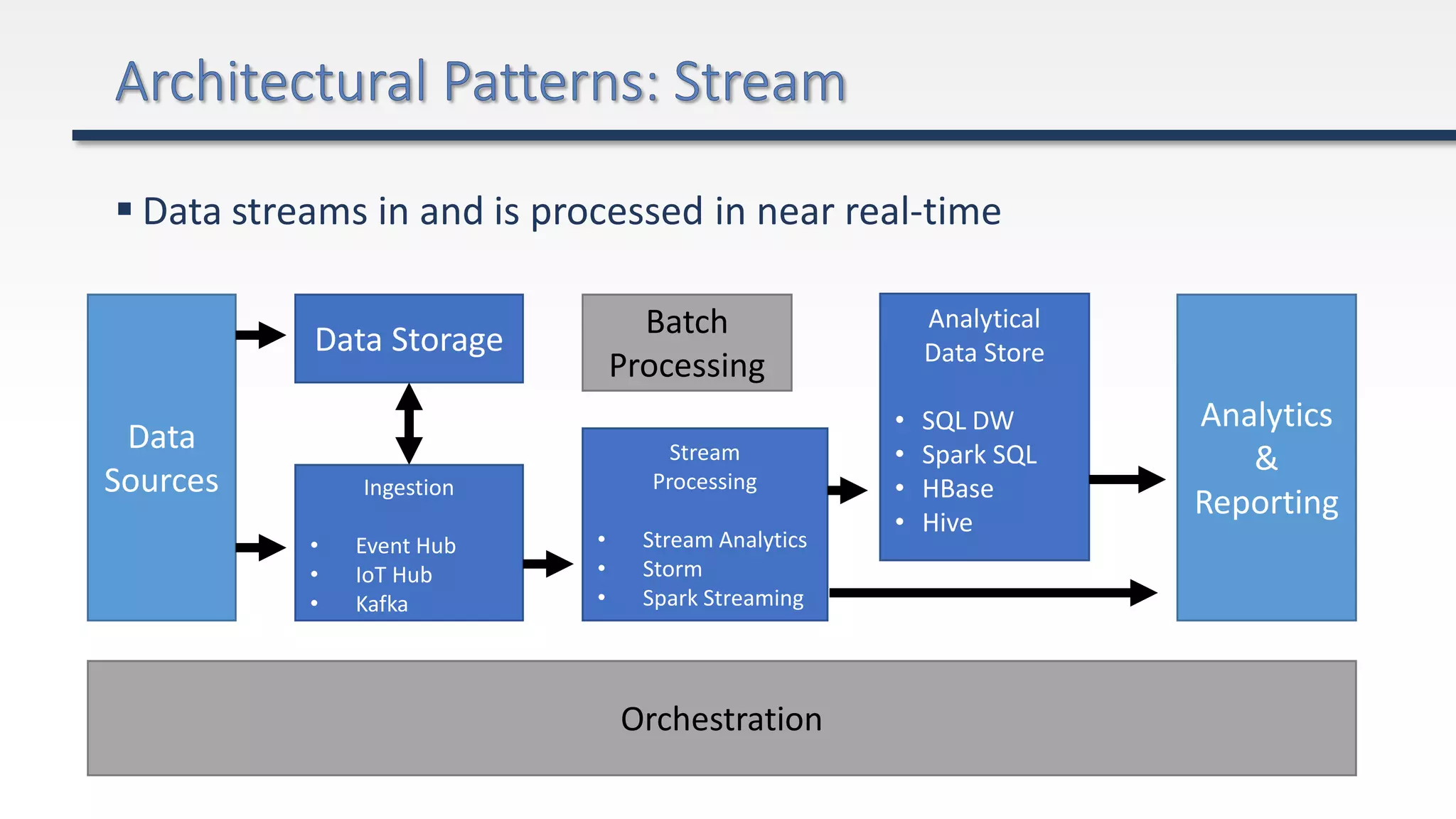

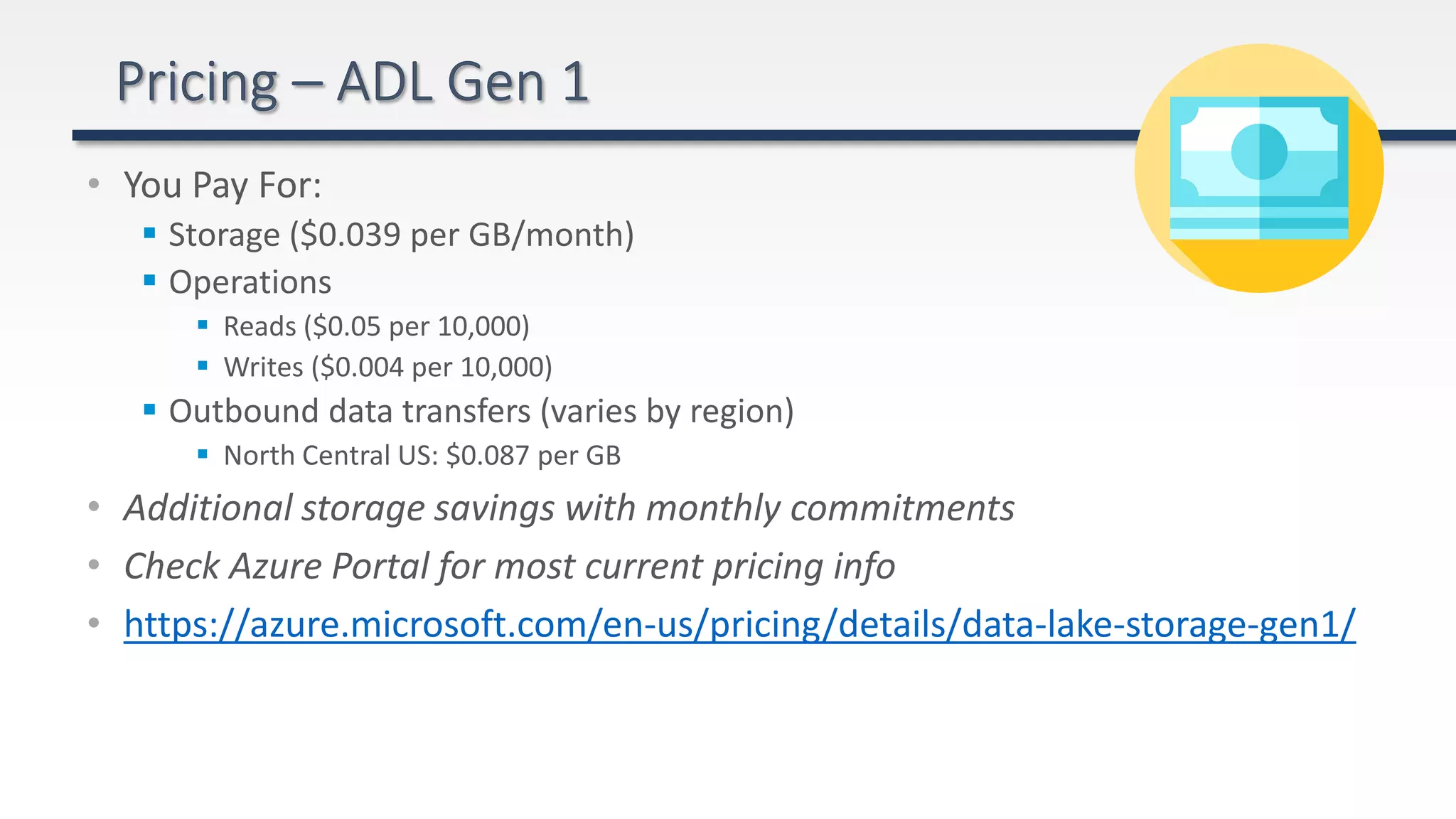

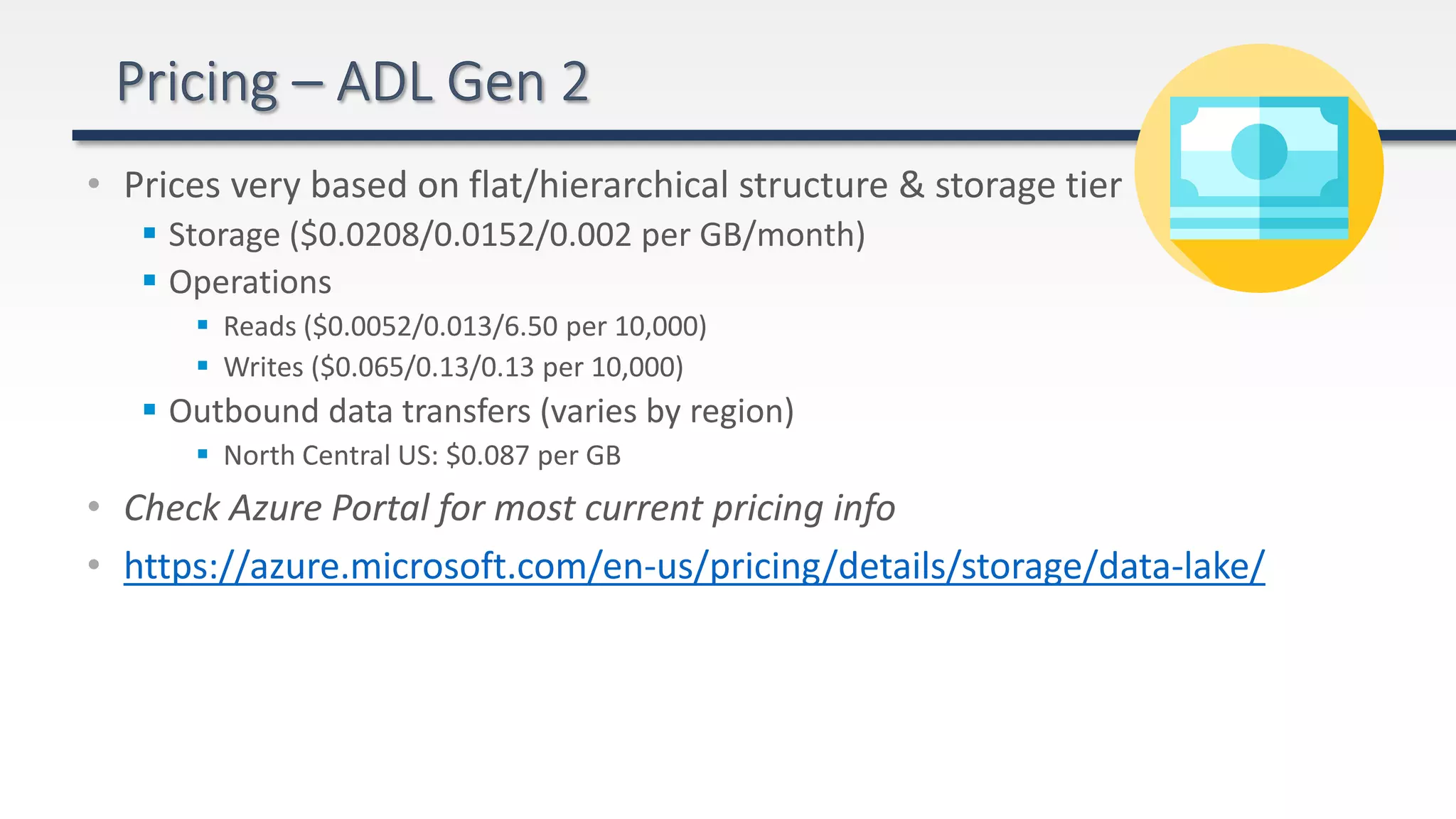

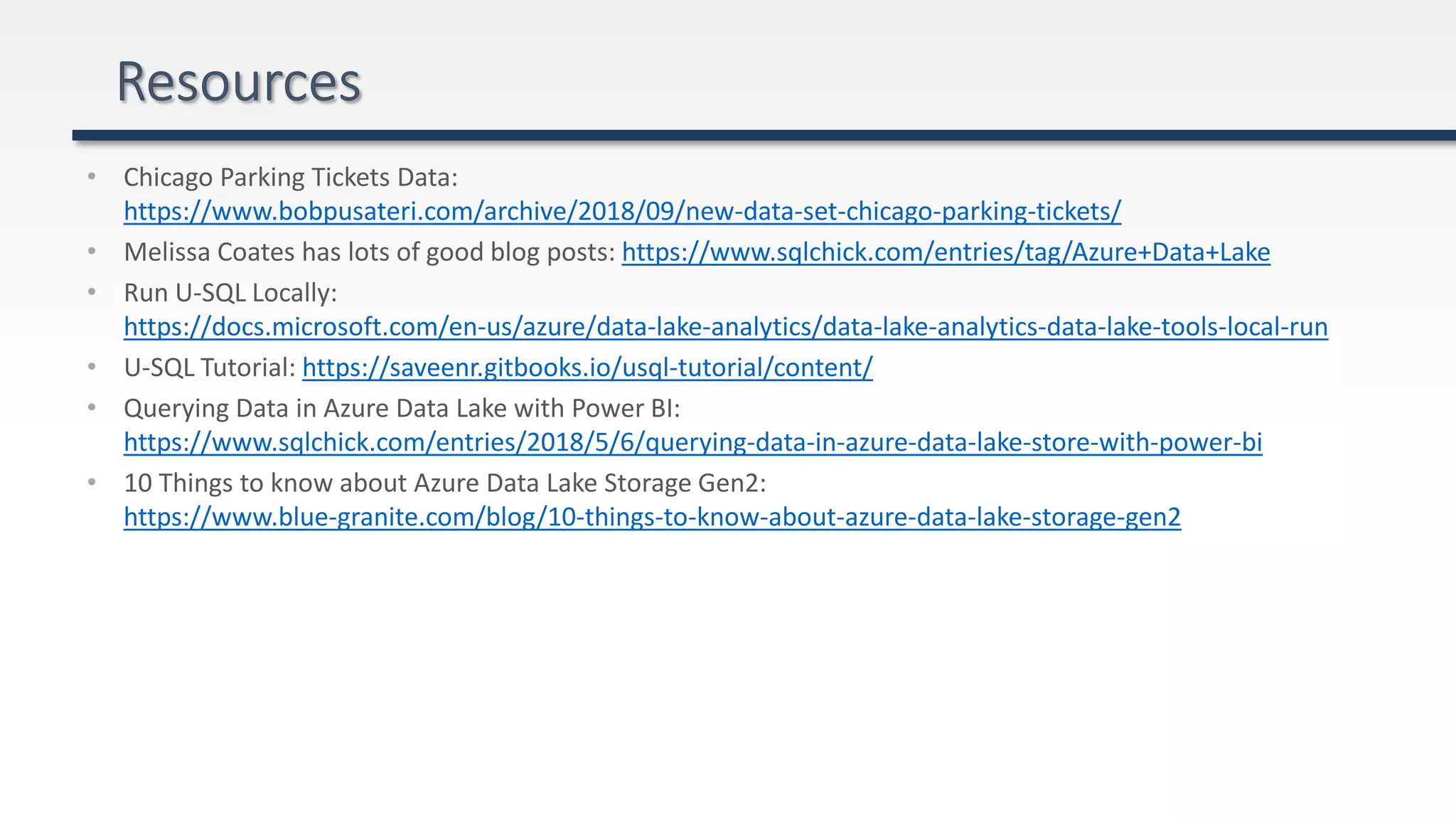

The document gives an overview of Azure Data Lake and related storage solutions, covering their characteristics, functionality, and performance considerations for large data volumes. It discusses types of data storage, strategies for organizing data, and integration with different compute engines, with emphasis on data management and governance. It also outlines the cost structure of these services within the Azure ecosystem.