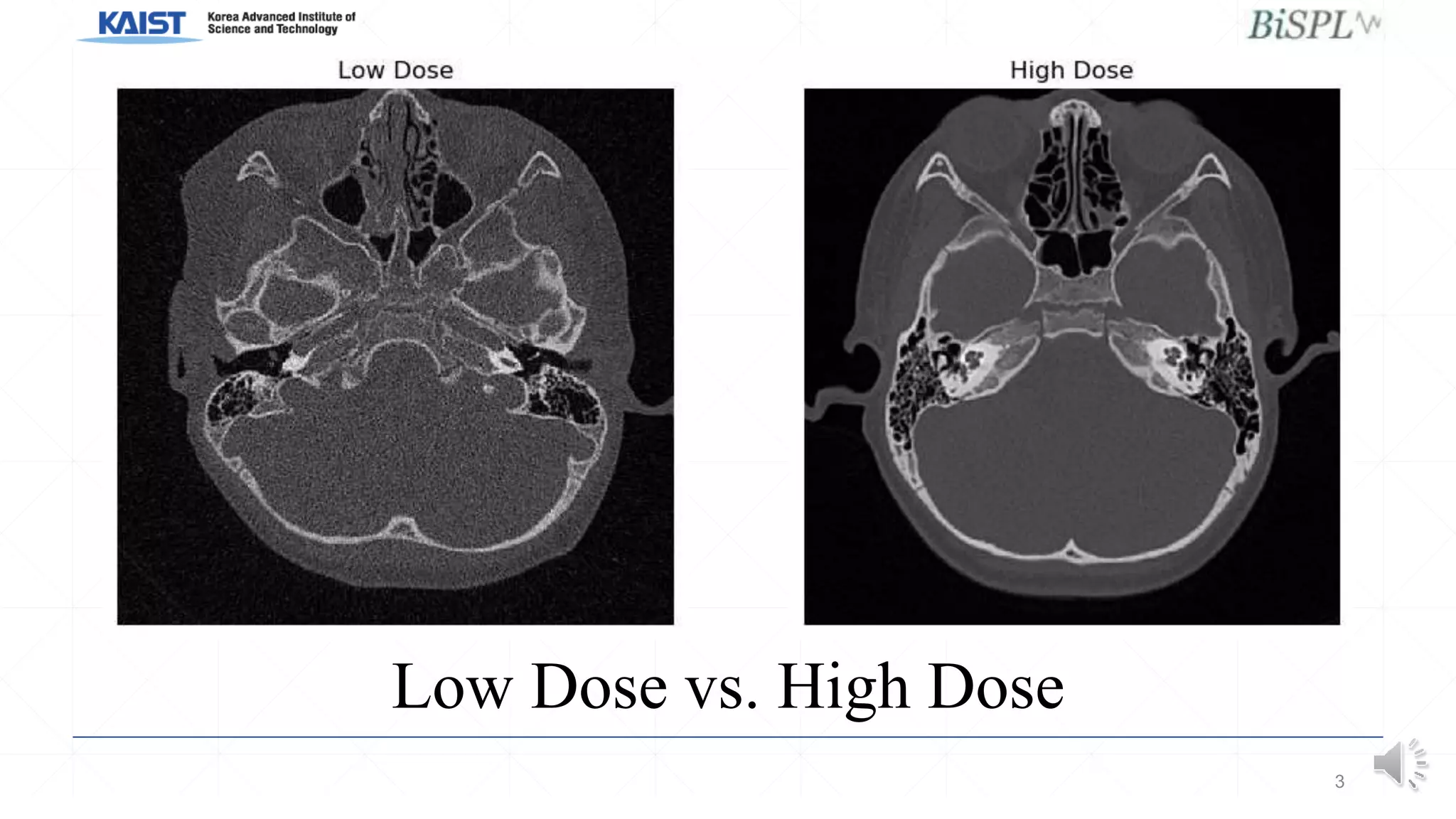

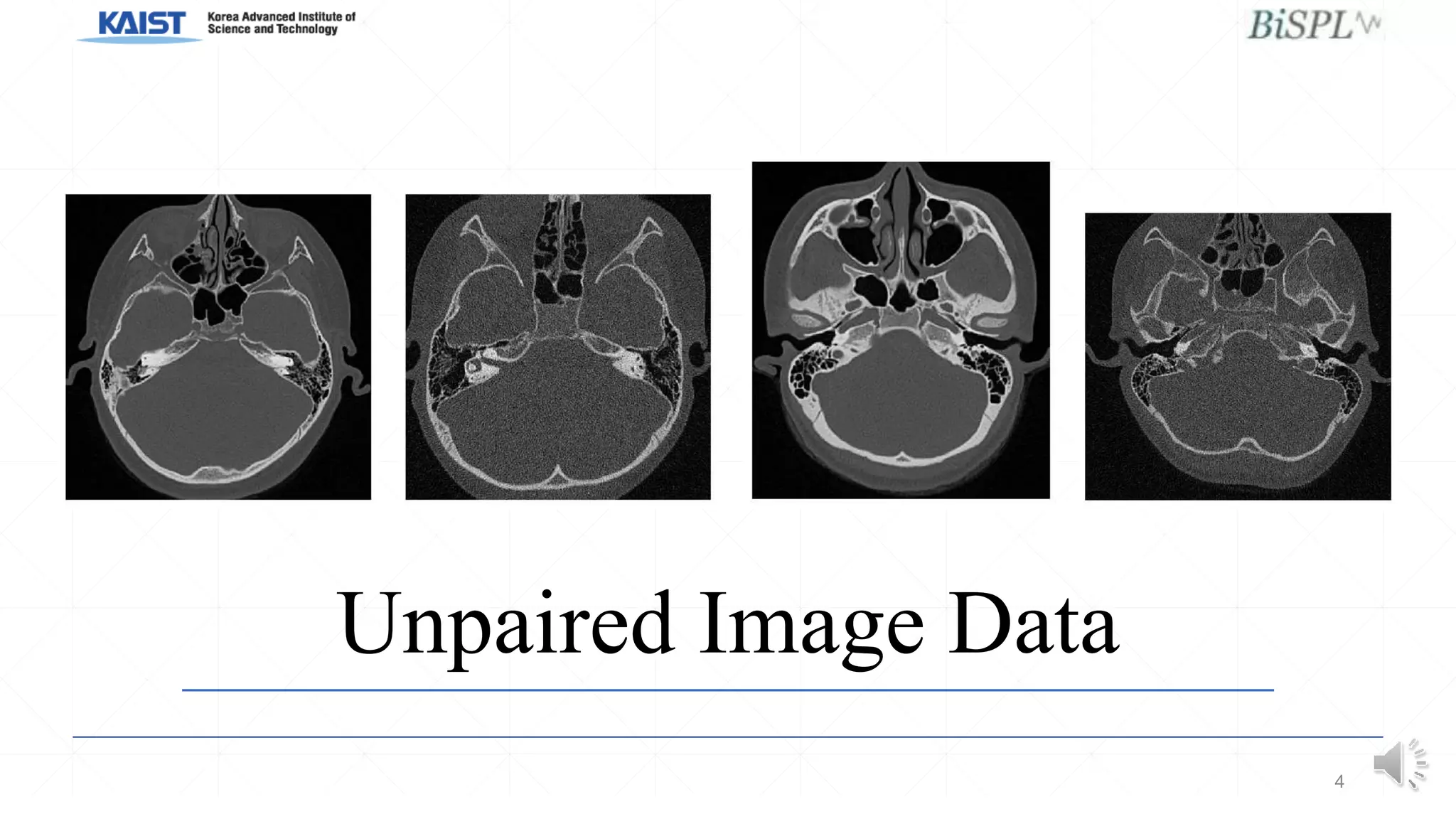

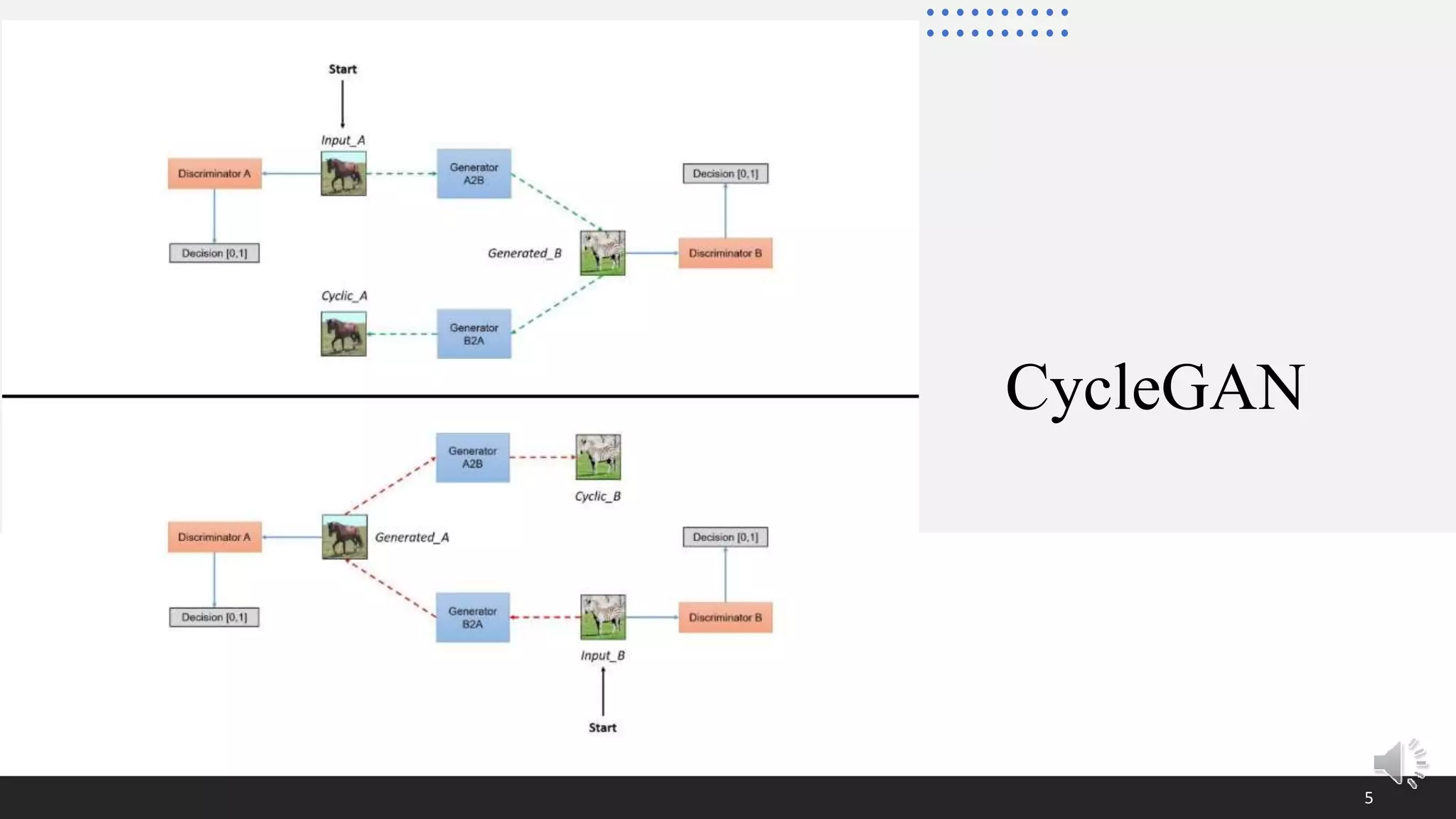

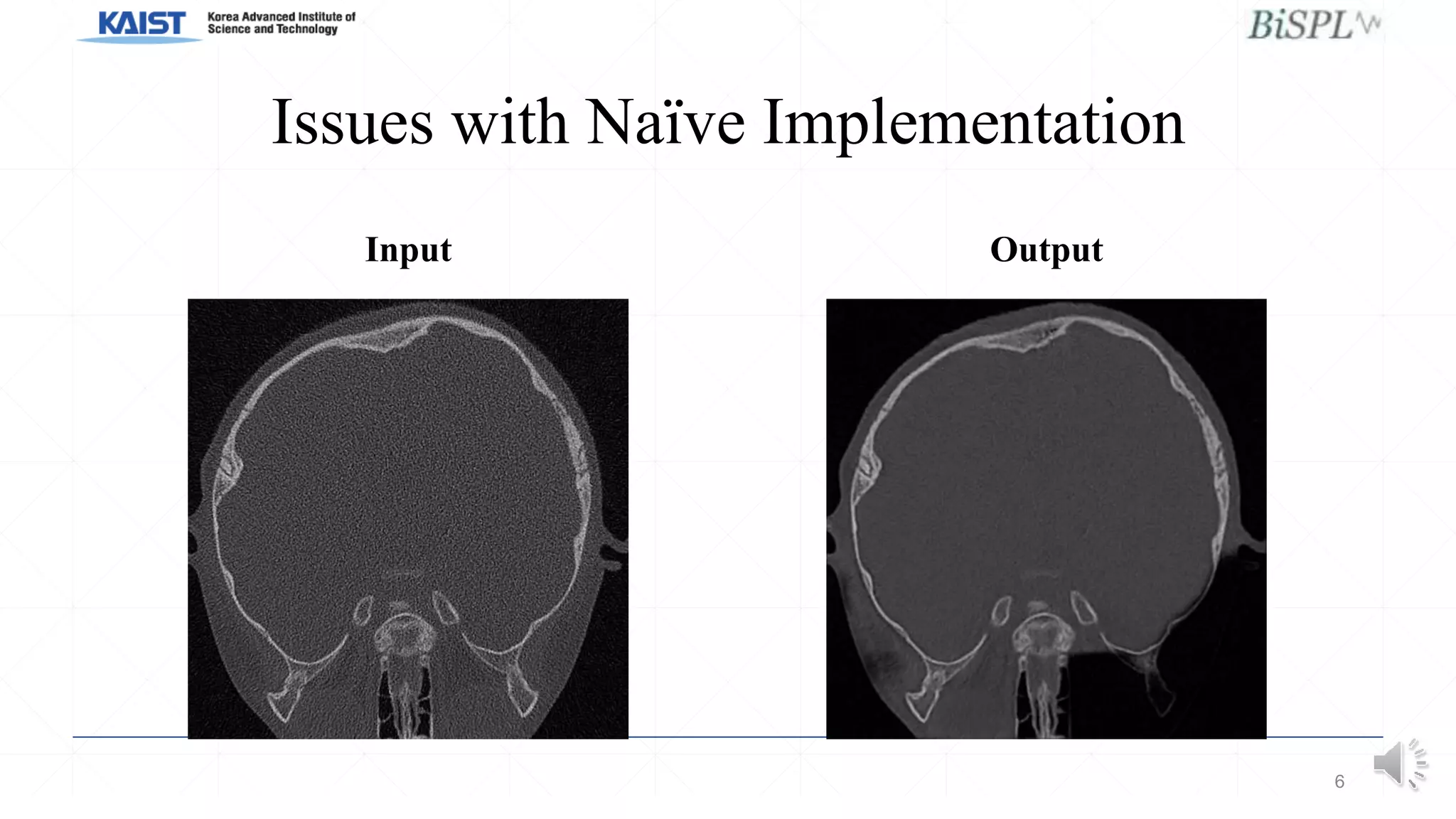

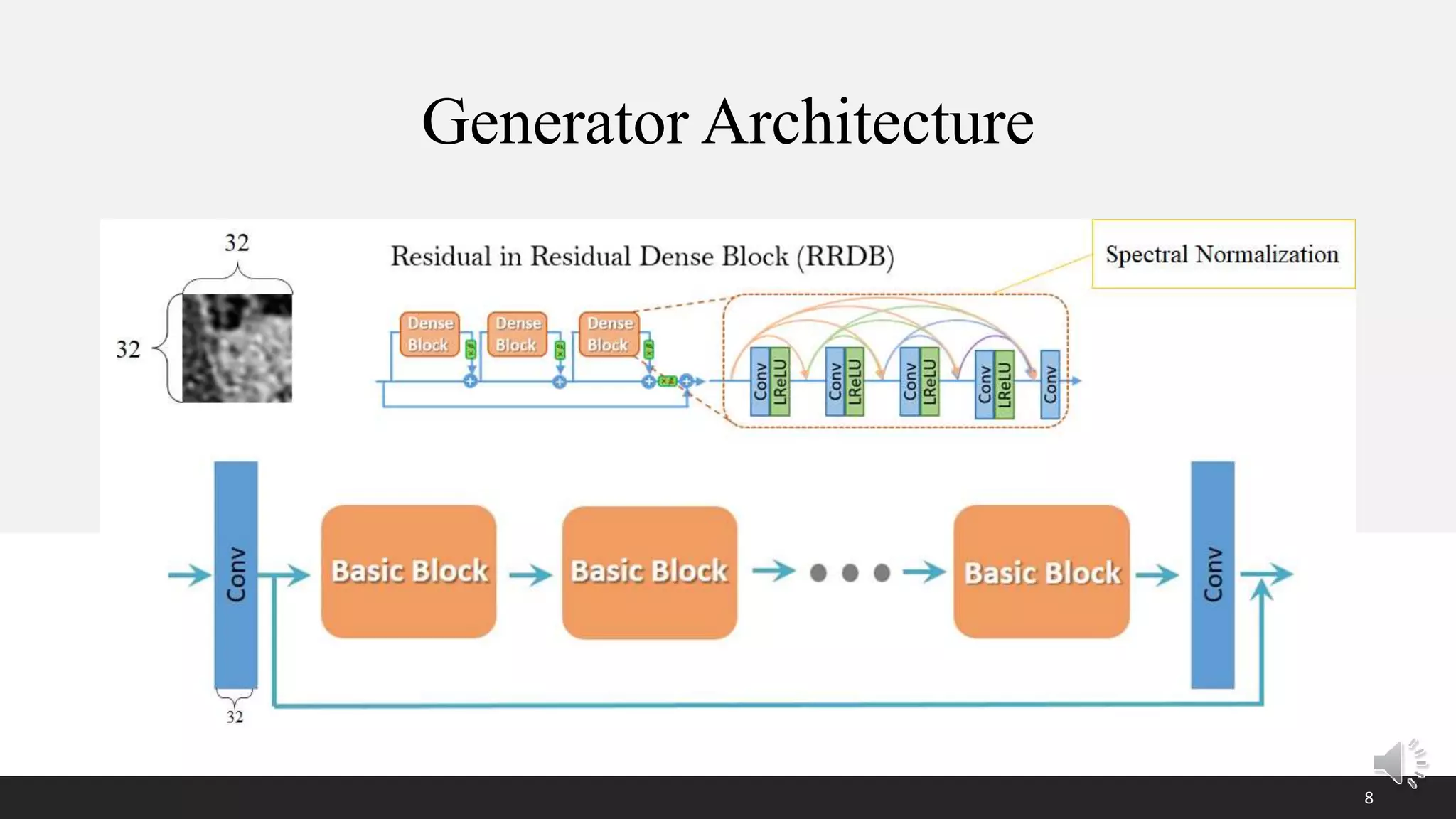

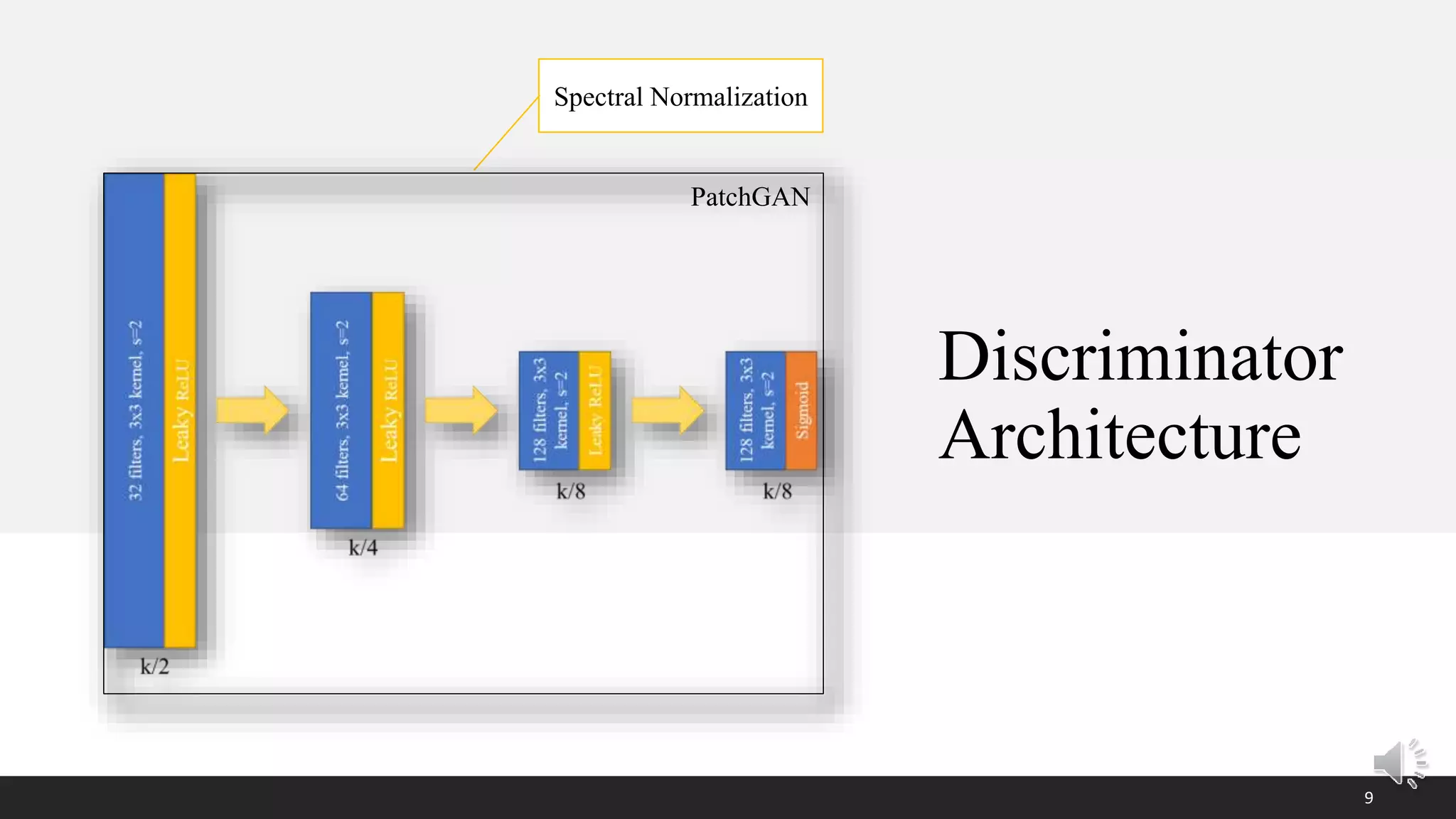

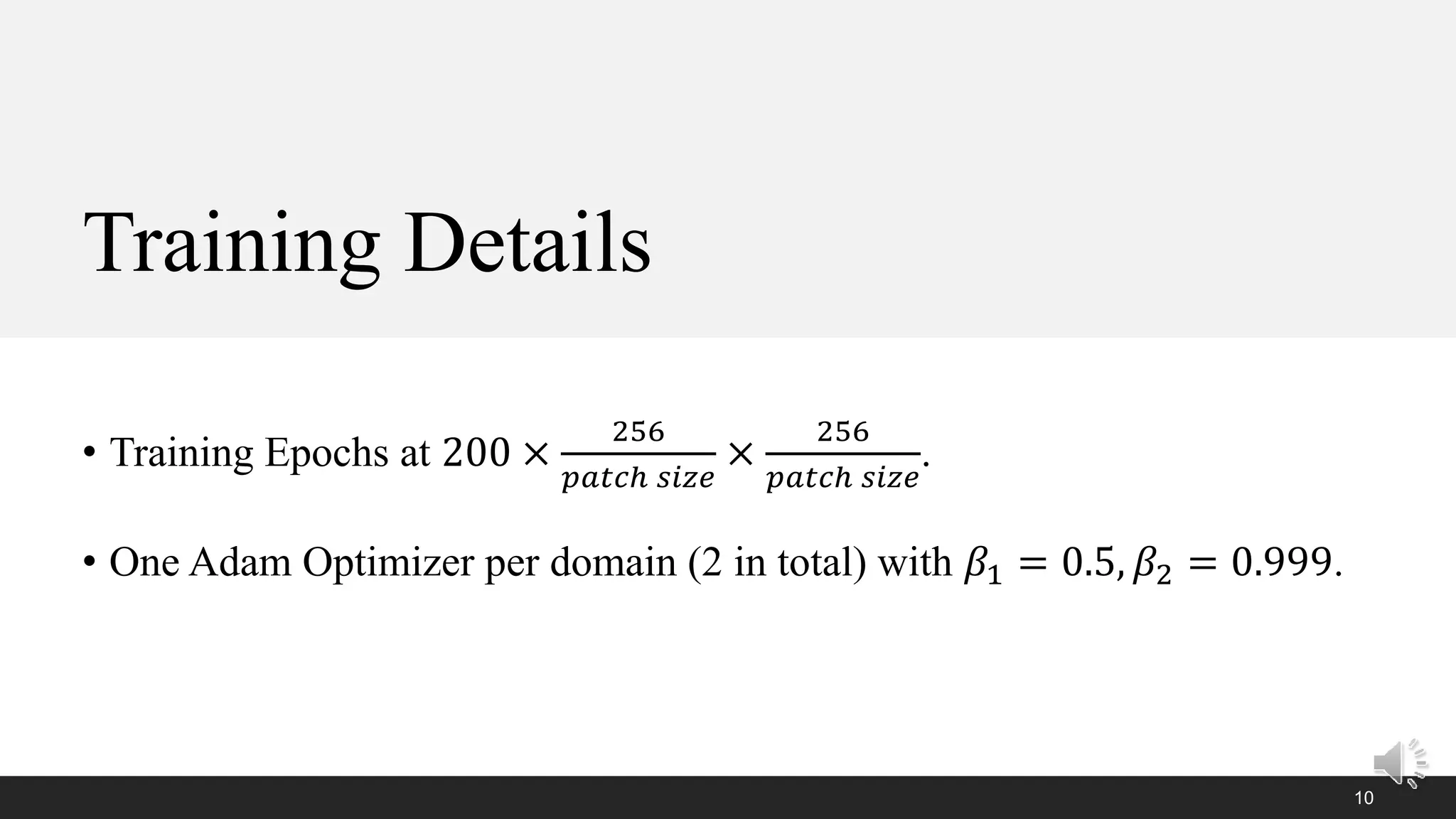

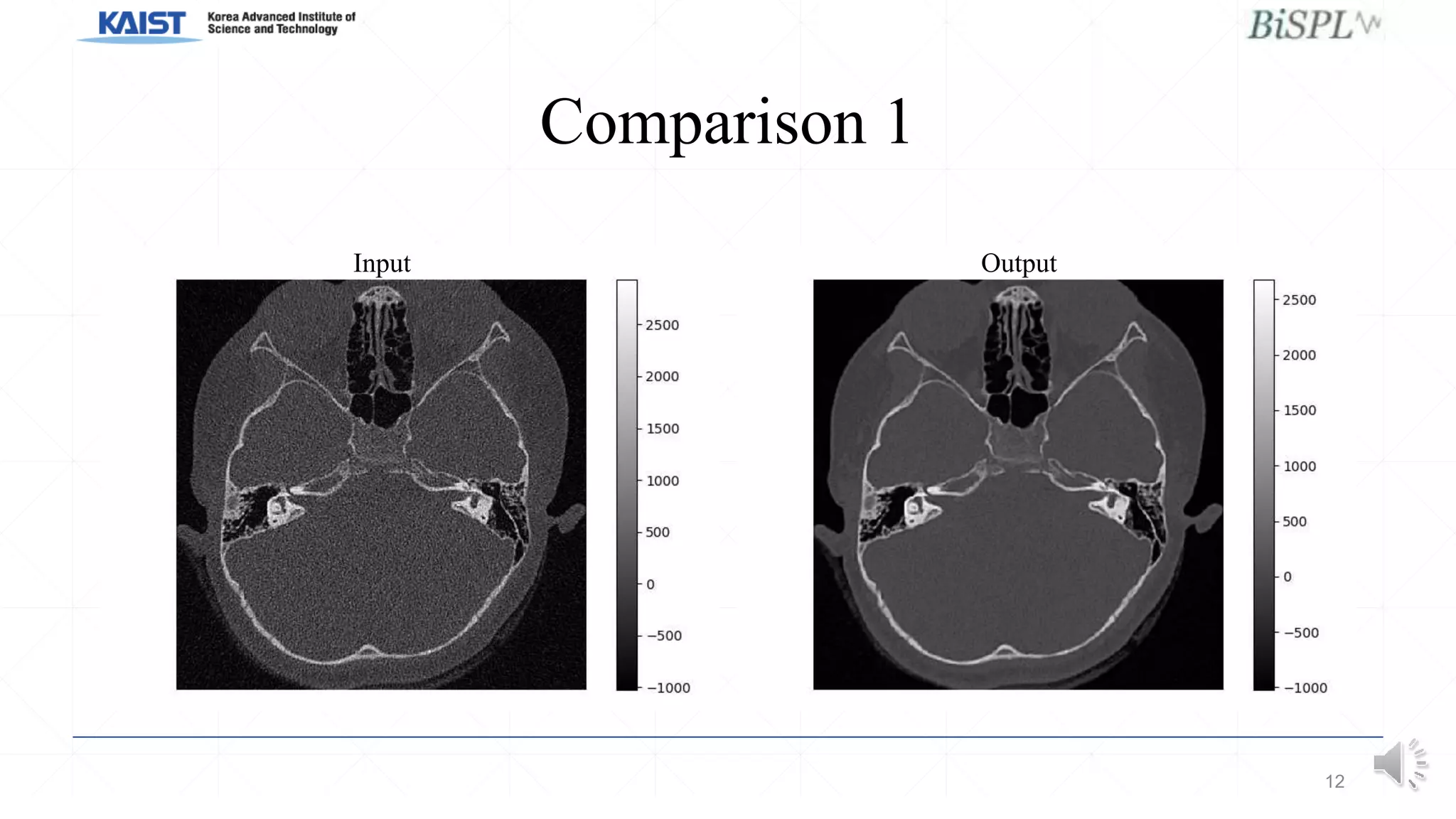

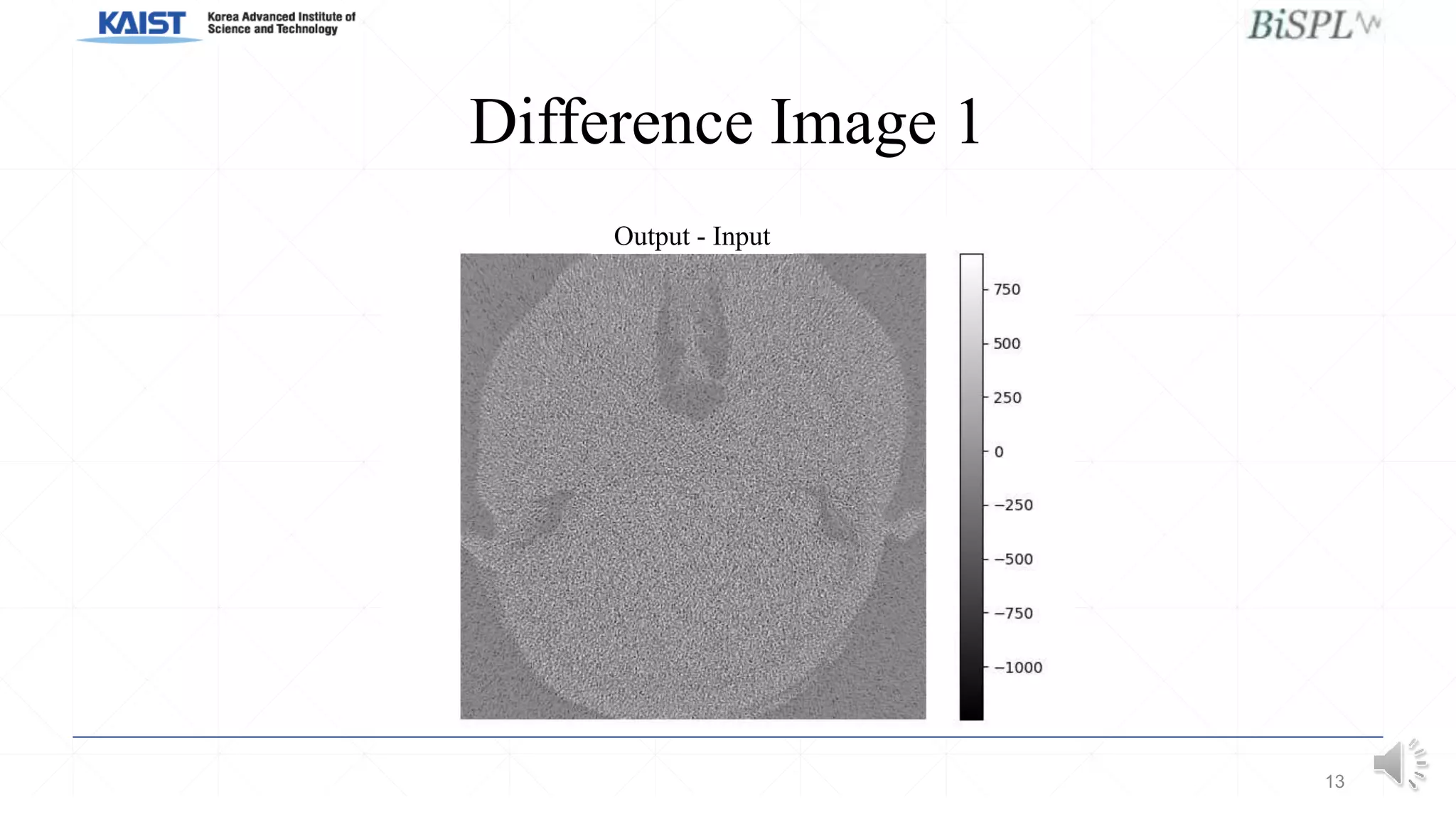

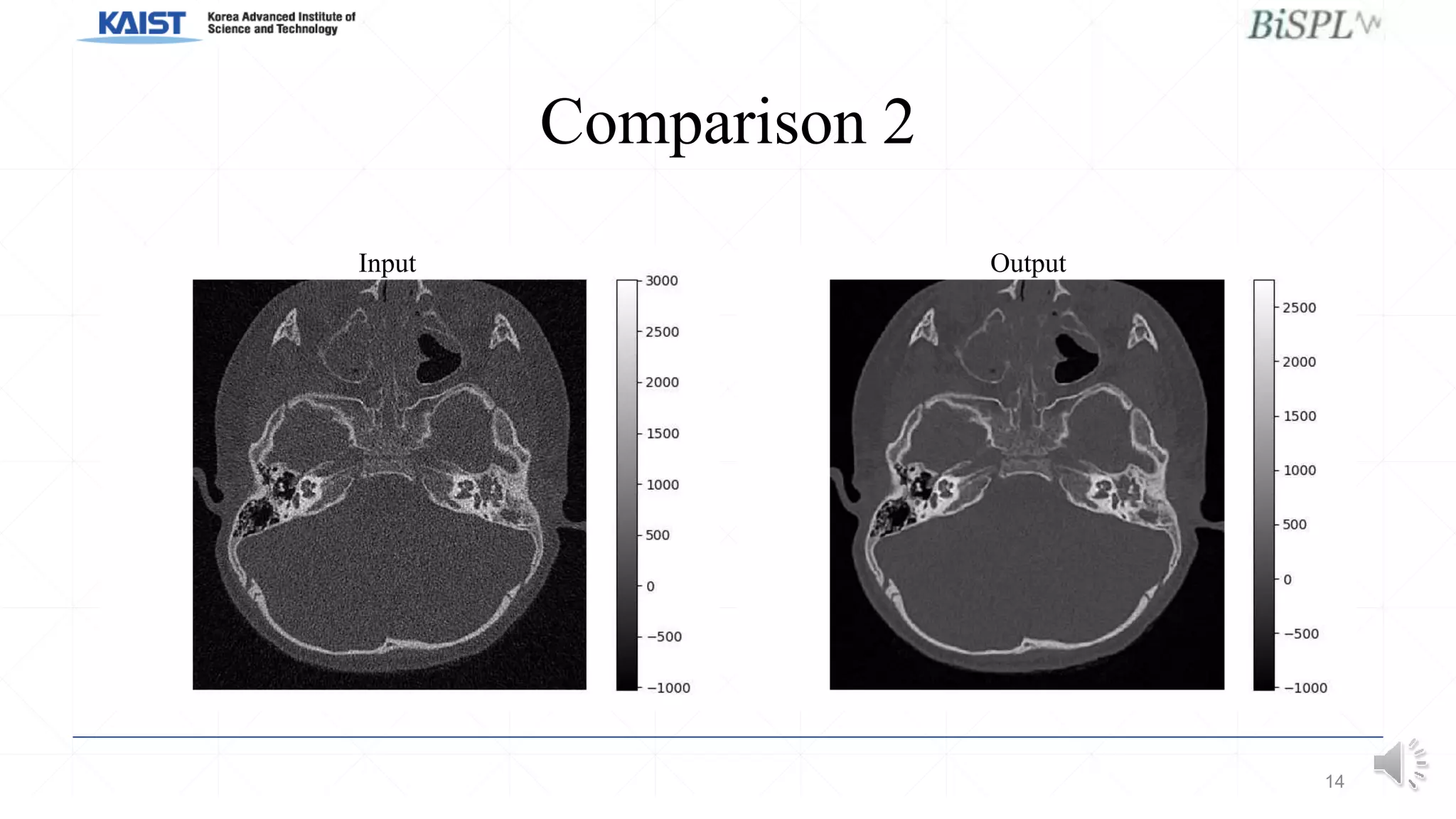

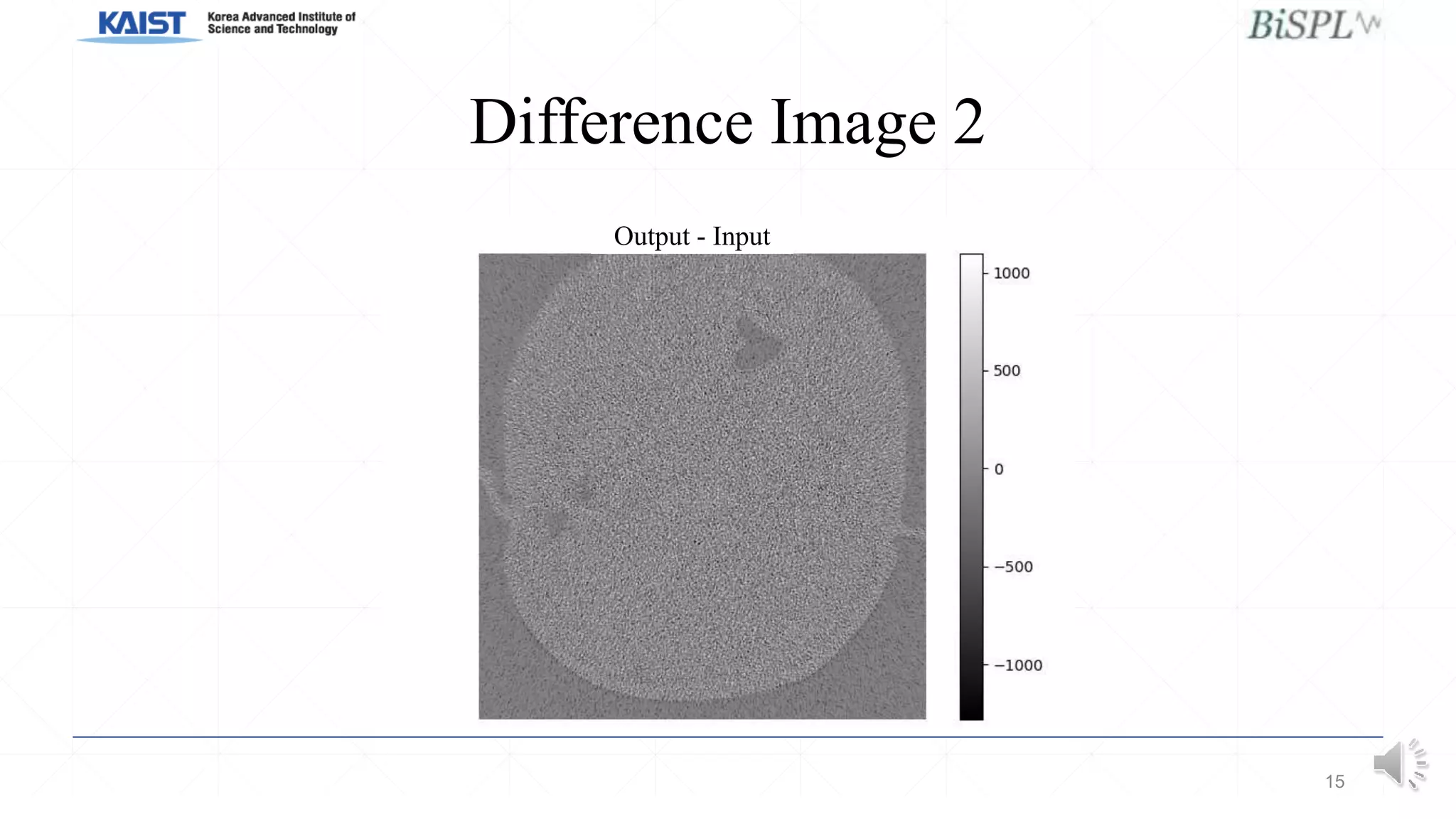

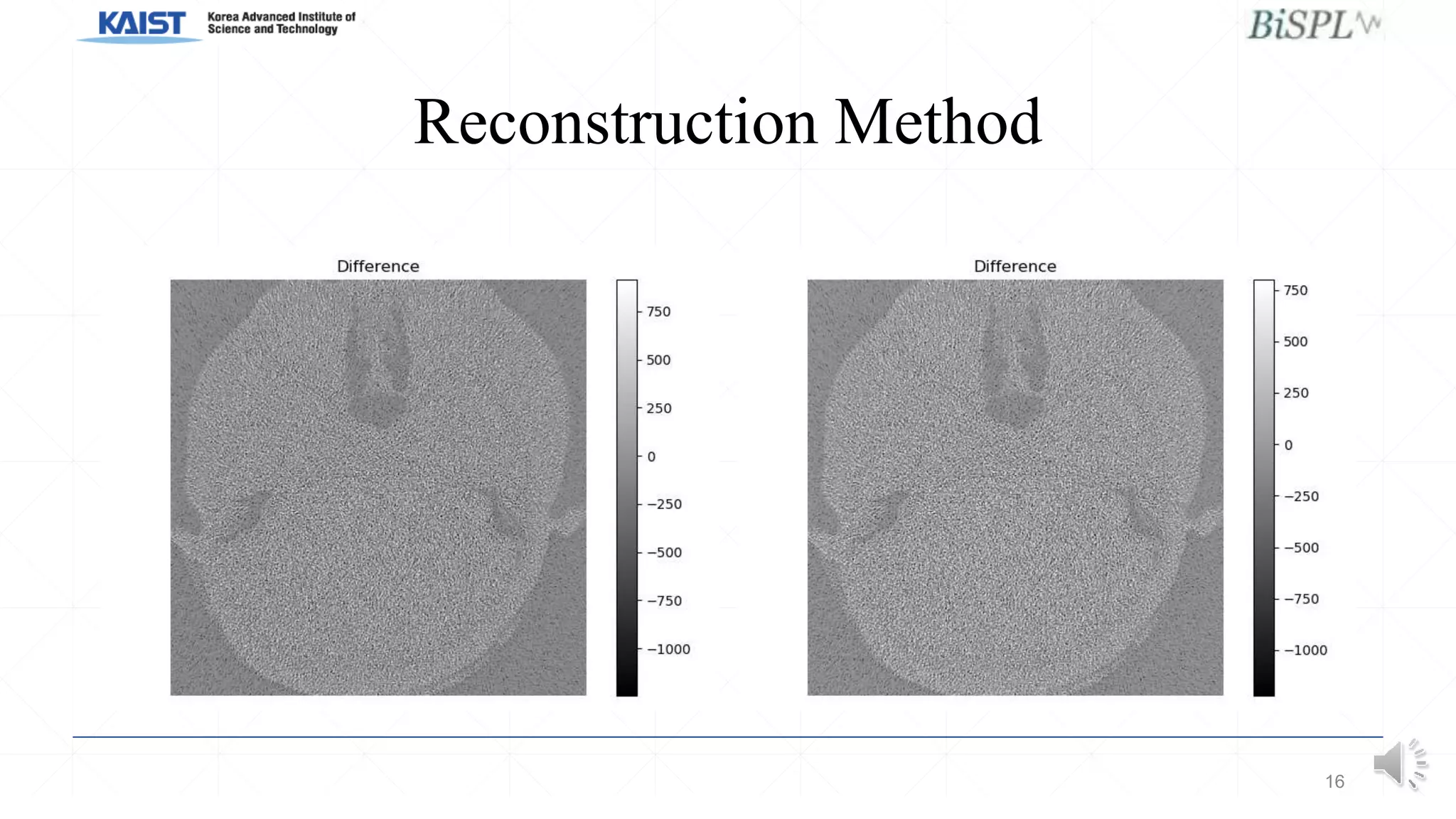

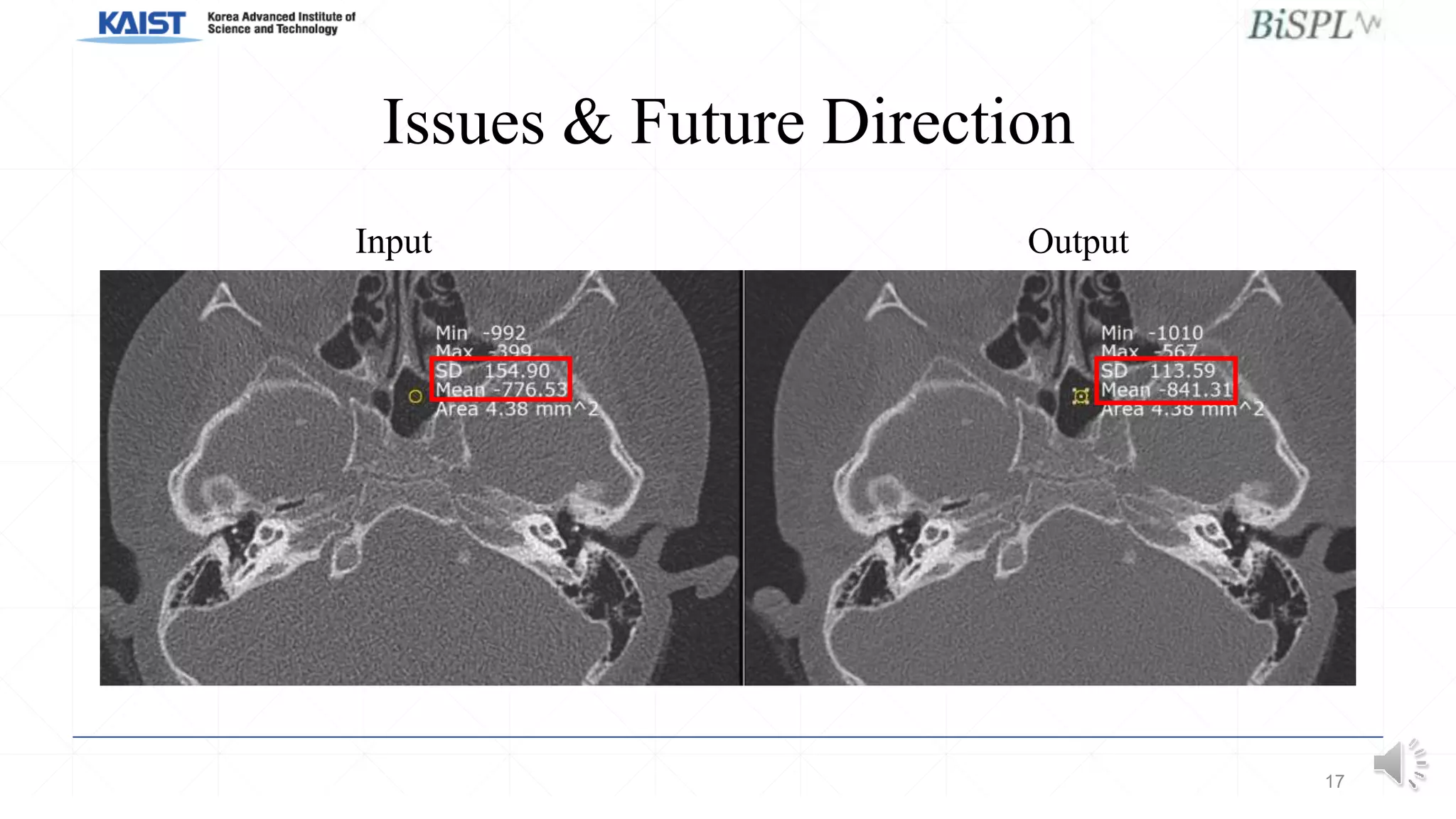

This poster presents a method for denoising low-dose CT images using a self-ensembled CycleGAN trained on unpaired data. It compares low-dose and high-dose images, describes the architecture and training details, and addresses problems that arise in naive implementations. Results are shown as input-output comparisons. It closes with future directions and an invitation for questions from the audience.