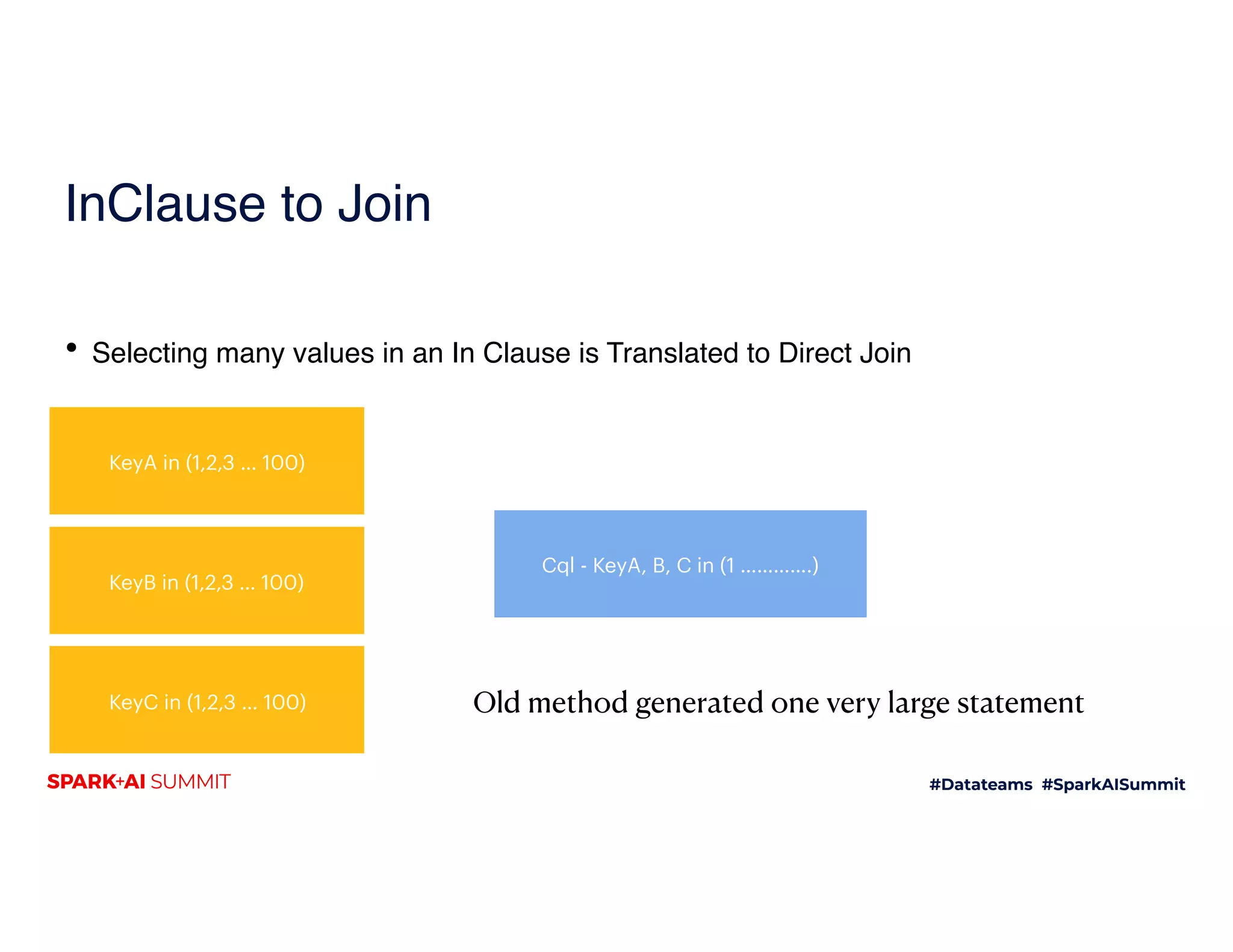

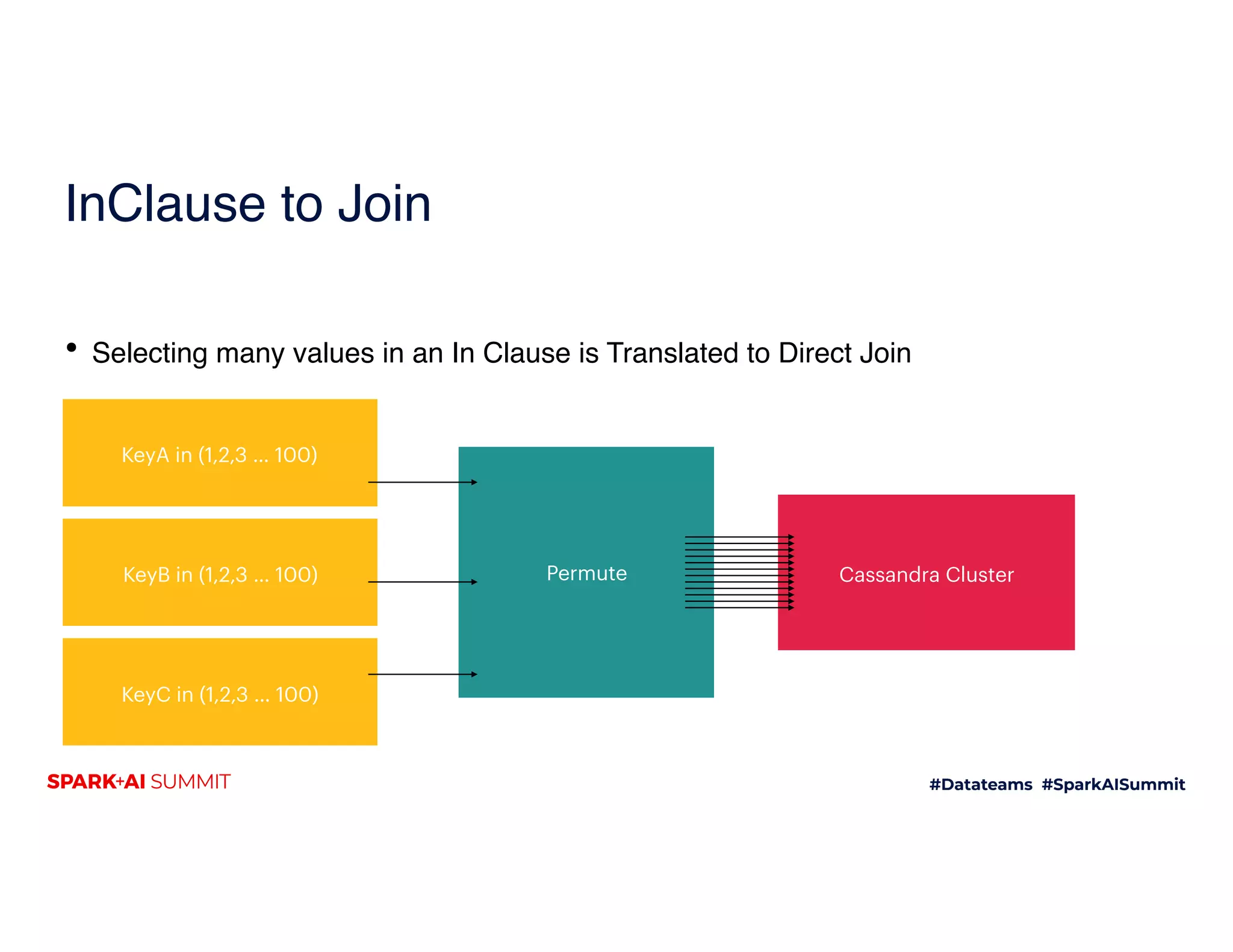

The document discusses the introduction of DatasourceV2 and its integration with Cassandra, emphasizing improvements in user interaction and catalog management, enabling real-time updates and easier table management. It details the setup and use of catalogs, examples of creating and managing tables, and enhancements in data source performance, including support for direct joins and improved query capabilities. Future plans include updates to the Spark Cassandra Connector to support new features and bug fixes for better performance and compatibility.

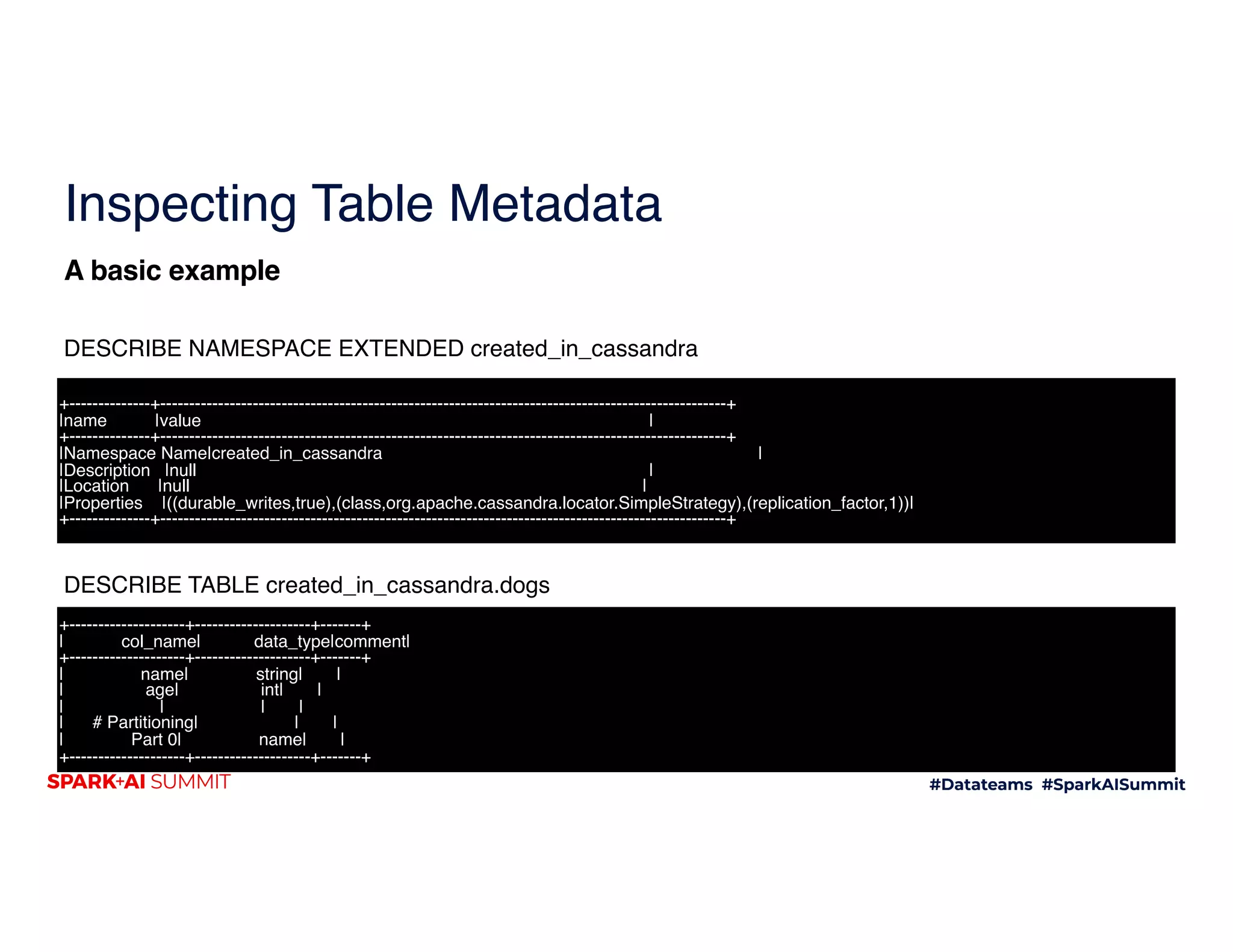

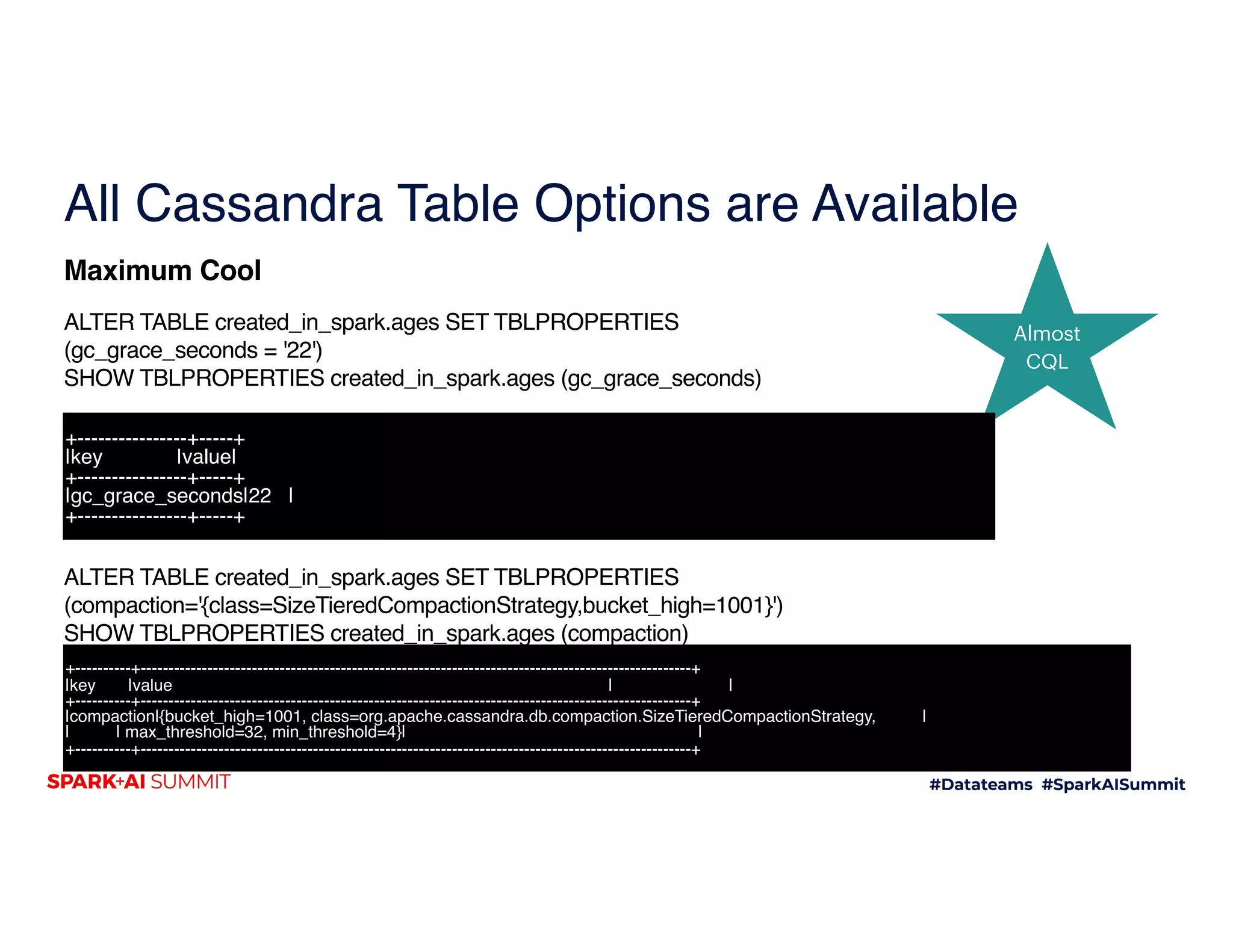

![Inspecting Table Metadata

SHOW TBLPROPERTIES created_in_cassandra.dogs

A basic example

+--------------------+--------------------+

| key| value|

+--------------------+--------------------+

| crc_check_chance| 1.0|

| compression|{chunk_length_in_...|

| clustering_key| []|

| max_index_interval| 2048|

| compaction|{class=org.apache...|

| gc_grace_seconds| 864000|

| extensions| {}|

|bloom_filter_fp_c...| 0.01|

| caching|{keys=ALL, rows_p...|

|dclocal_read_repa...| 0.1|

| min_index_interval| 128|

| speculative_retry| 99PERCENTILE|

| comment| |

|default_time_to_live| 0|

| read_repair_chance| 0.0|

|memtable_flush_pe...| 0|

+--------------------+--------------------+](https://image.slidesharecdn.com/169russellspitzer-200709194436/75/DataSource-V2-and-Cassandra-A-Whole-New-World-14-2048.jpg)

![Partitioning

• SELECT DISTINCT age

1

Created in Spark Ages Table

2

3

4

Part 1

Part 2

*(1) HashAggregate(keys=[age#1174], functions=[])

+- *(1) HashAggregate(keys=[age#1174], functions=[])

+- *(1) Project [age#1174]

+- BatchScan[age#1174] Cassandra Scan created_in_spark.ages](https://image.slidesharecdn.com/169russellspitzer-200709194436/75/DataSource-V2-and-Cassandra-A-Whole-New-World-22-2048.jpg)

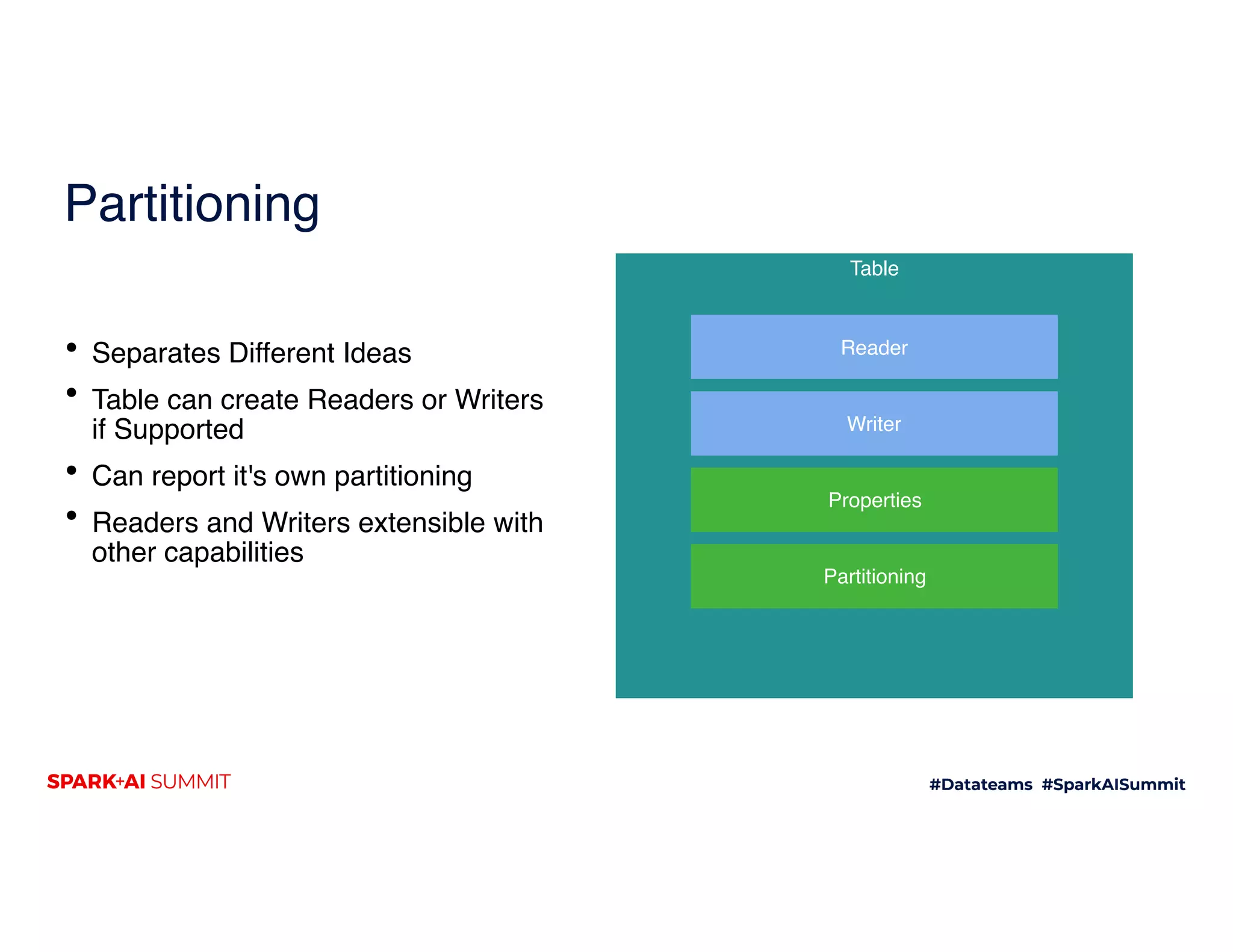

![Partitioning

• SELECT DISTINCT age

• SELECT DISTINCT name

*(1) HashAggregate(keys=[age#1174], functions=[])

+- *(1) HashAggregate(keys=[age#1174], functions=[])

+- *(1) Project [age#1174]

+- BatchScan[age#1174] Cassandra Scan created_in_spark.ages

1 bob suewenjack

Created in Spark Ages Table

2

mag

bob calli sara

3 len sue

fumi

stan

4 dougbob priyaavra

Part 1

Part 2](https://image.slidesharecdn.com/169russellspitzer-200709194436/75/DataSource-V2-and-Cassandra-A-Whole-New-World-23-2048.jpg)

![Partitioning

• SELECT DISTINCT age

• SELECT DISTINCT name

bob suewenjack

Created in Spark Ages Table

mag

bob calli sara

len sue

fumi

stan

dougbob priyaavra

Part 1

Part 2

*(1) HashAggregate(keys=[age#1174], functions=[])

+- *(1) HashAggregate(keys=[age#1174], functions=[])

+- *(1) Project [age#1174]

+- BatchScan[age#1174] Cassandra Scan created_in_spark.ages](https://image.slidesharecdn.com/169russellspitzer-200709194436/75/DataSource-V2-and-Cassandra-A-Whole-New-World-24-2048.jpg)

![Partitioning

• SELECT DISTINCT age

• SELECT DISTINCT name

1 bob suewenjack

Created in Spark Ages Table

2

mag

bob calli sara

3 len sue

fumi

stan

4 dougbob priyaavra

Part 1

Part 2

*(2) HashAggregate(keys=[name#1171], functions=[])

+- Exchange hashpartitioning(name#1171, 200), true, [id=#256]

+- *(1) HashAggregate(keys=[name#1171], functions=[])

+- *(1) Project [name#1171]

+- BatchScan[name#1171] Cassandra Scan created_in_spark.ages

*(1) HashAggregate(keys=[age#1174], functions=[])

+- *(1) HashAggregate(keys=[age#1174], functions=[])

+- *(1) Project [age#1174]

+- BatchScan[age#1174] Cassandra Scan created_in_spark.ages](https://image.slidesharecdn.com/169russellspitzer-200709194436/75/DataSource-V2-and-Cassandra-A-Whole-New-World-25-2048.jpg)

![DirectJoin in Datasources

• JoinWithCassandraTable Support in

Catalyst

• Avoids Full Scans

Partition Keys Cassandra Cluster

== Physical Plan ==

*(2) Project [name#215, age#216, age#110, name#111]

+- Cassandra Direct Join [age = age#216] created_in_spark.ages - Reading (age, name) Pushed {}

+- *(1) Project [name#215, age#216]

+- *(1) Filter isnotnull(age#216)

+- BatchScan[name#215, age#216] Cassandra Scan created_in_cassandra.dogs|

Server Side Filters []|

Columns [name,age]](https://image.slidesharecdn.com/169russellspitzer-200709194436/75/DataSource-V2-and-Cassandra-A-Whole-New-World-29-2048.jpg)