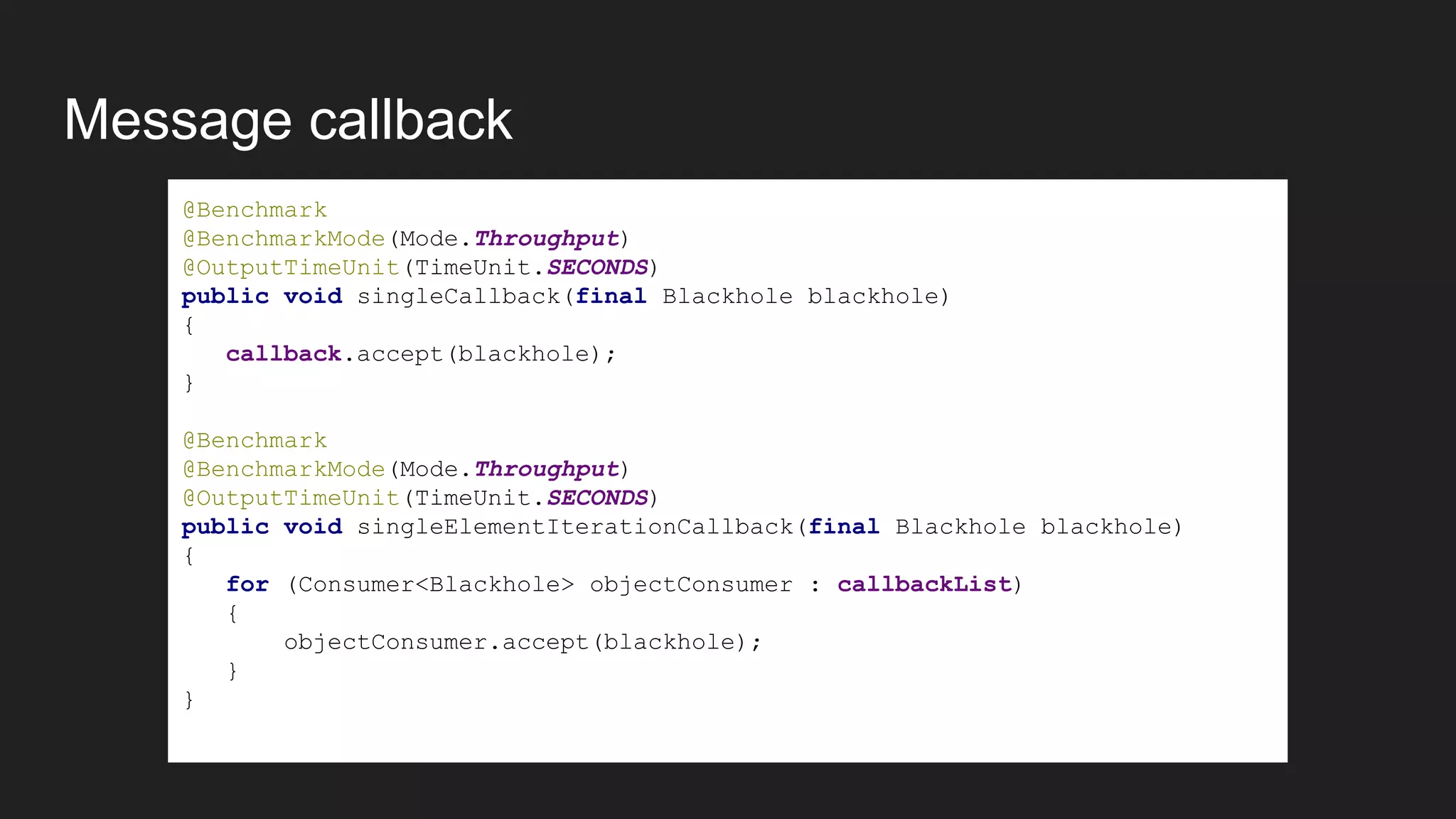

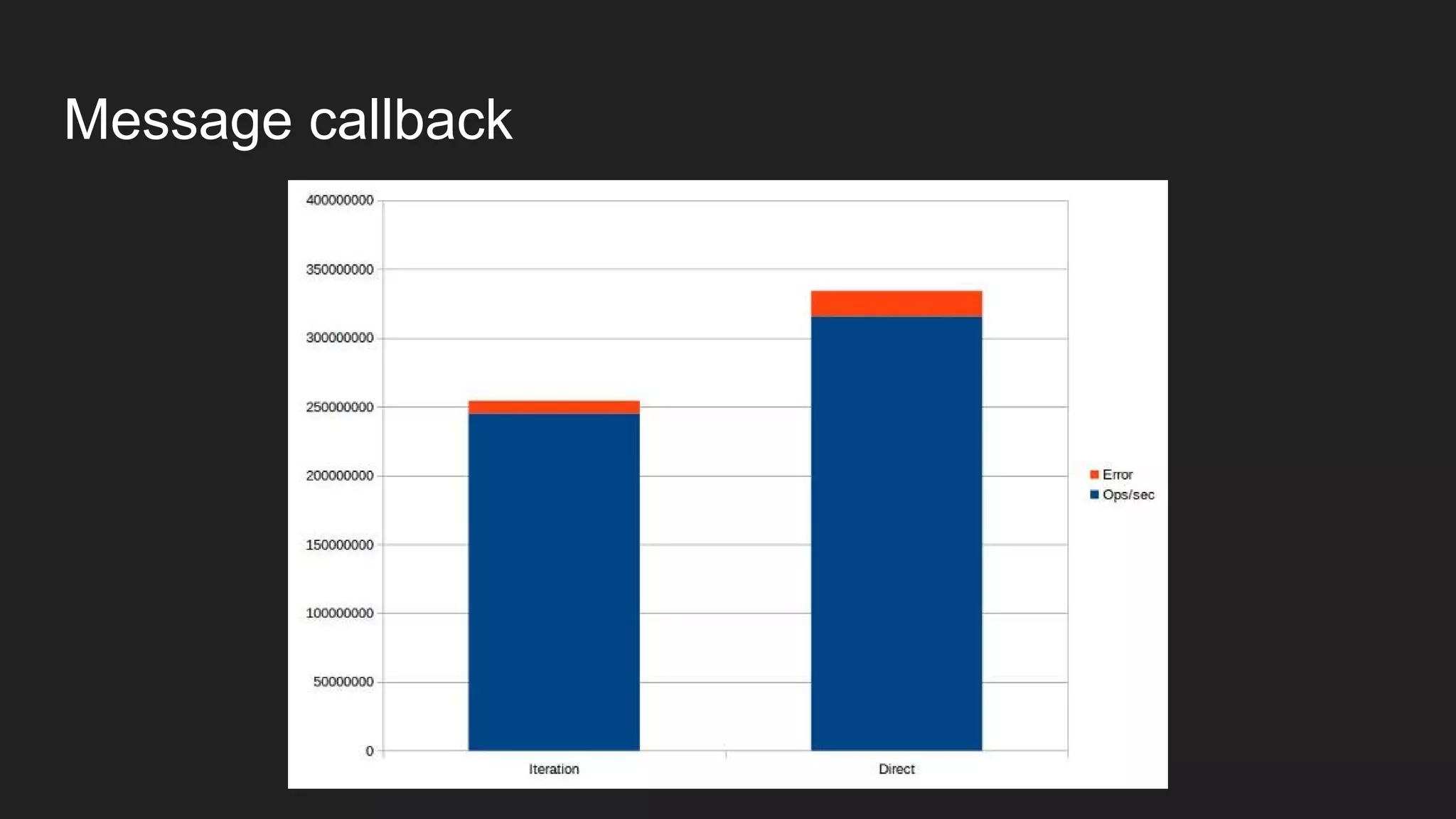

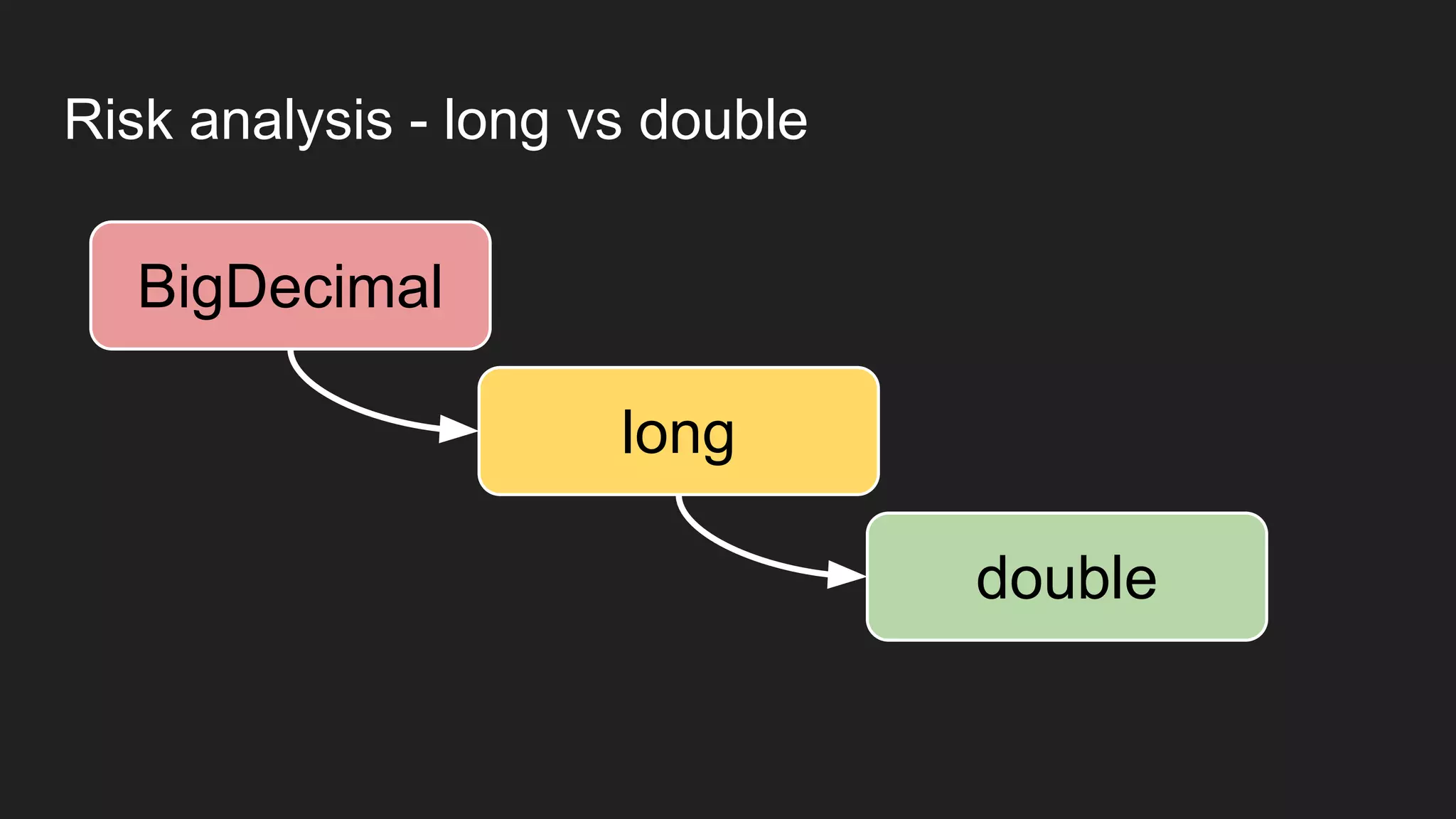

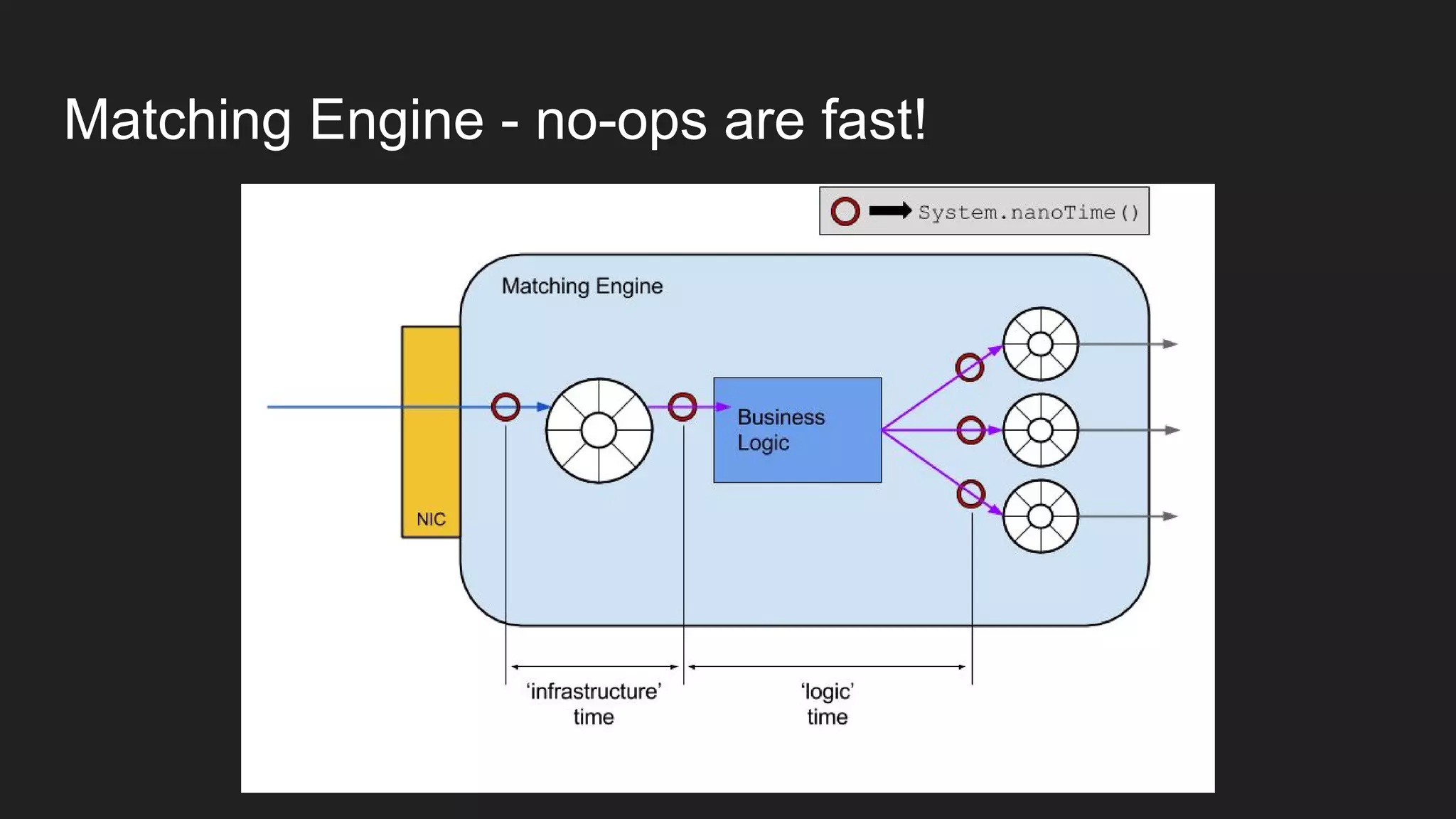

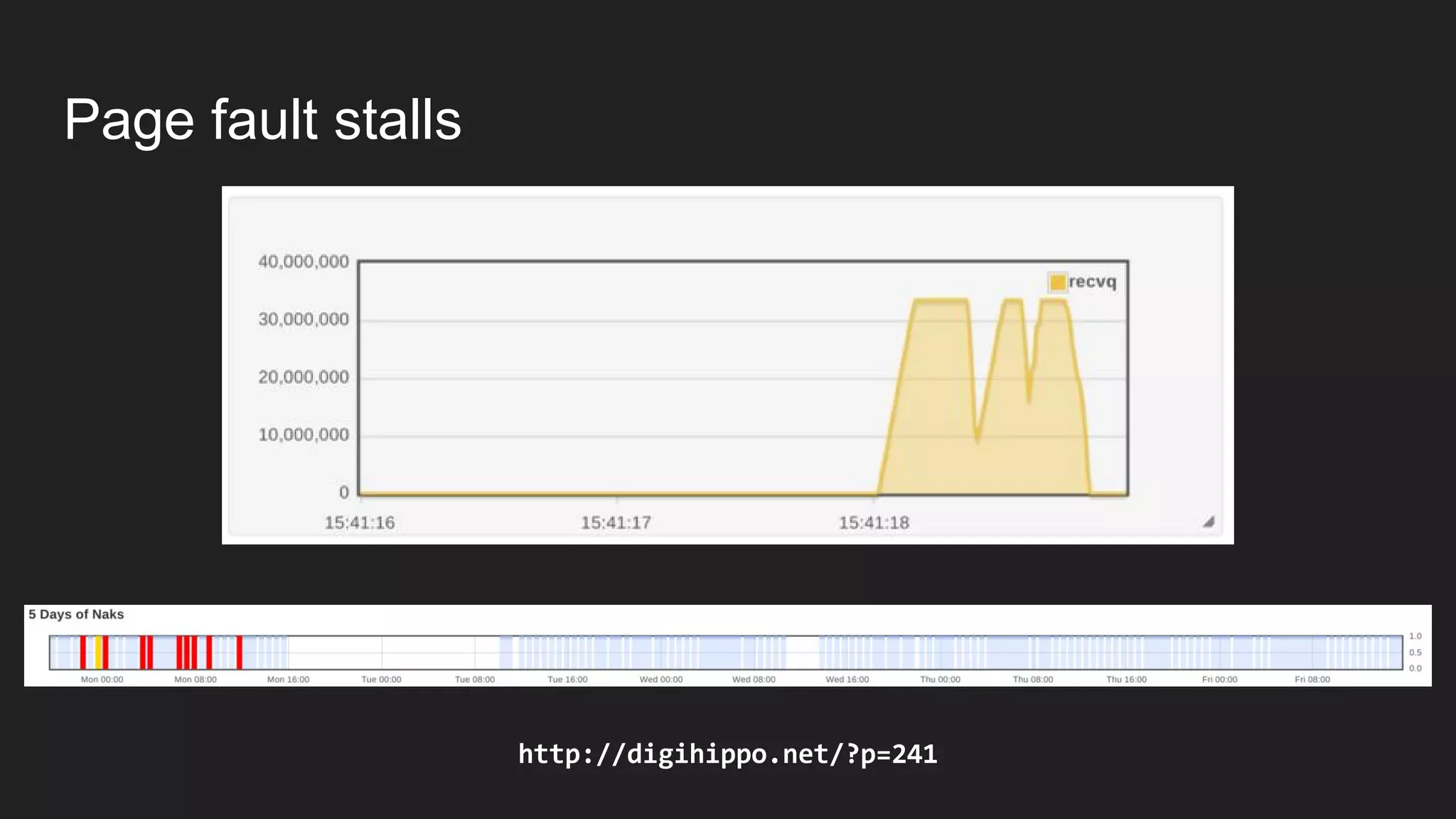

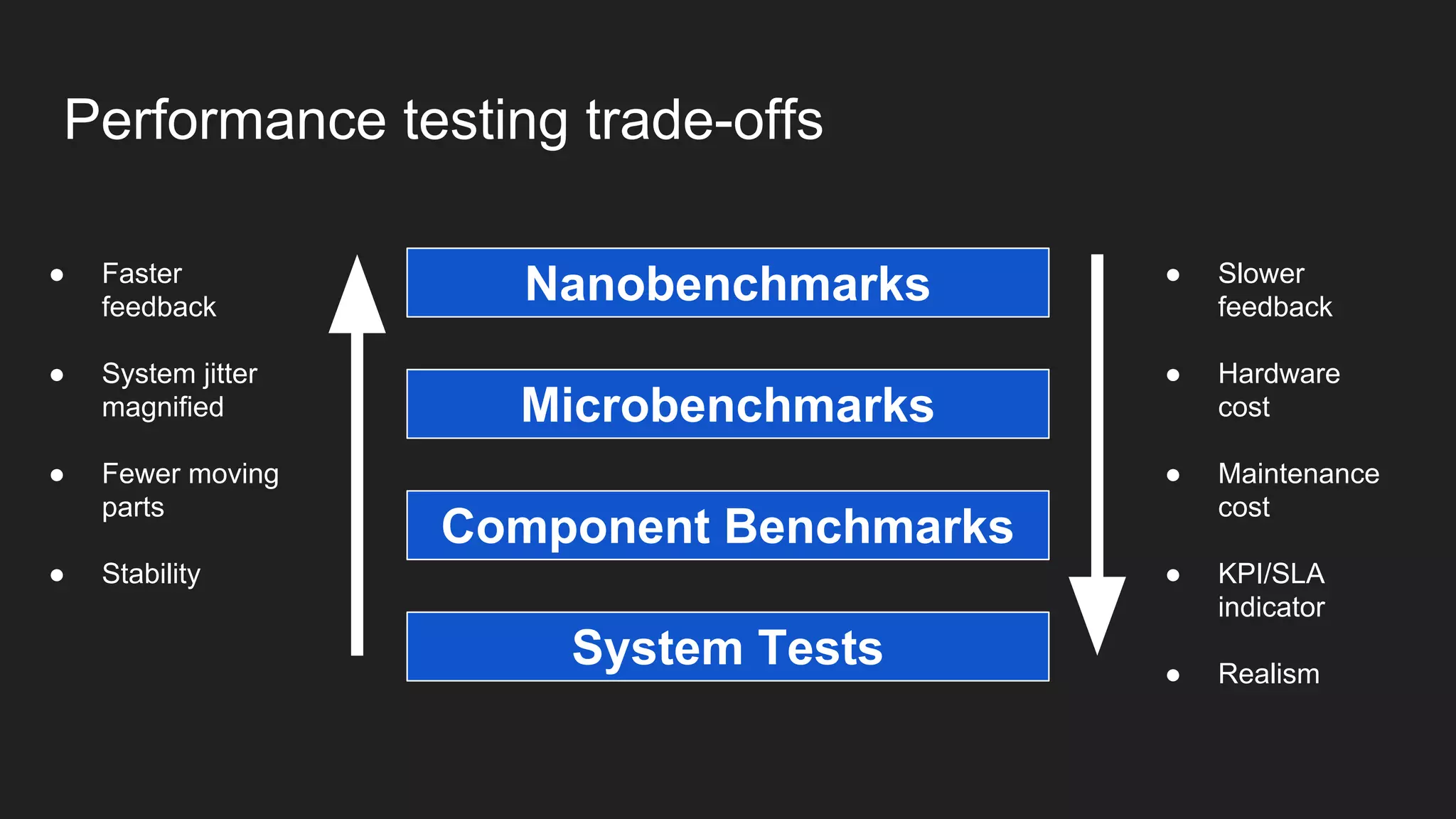

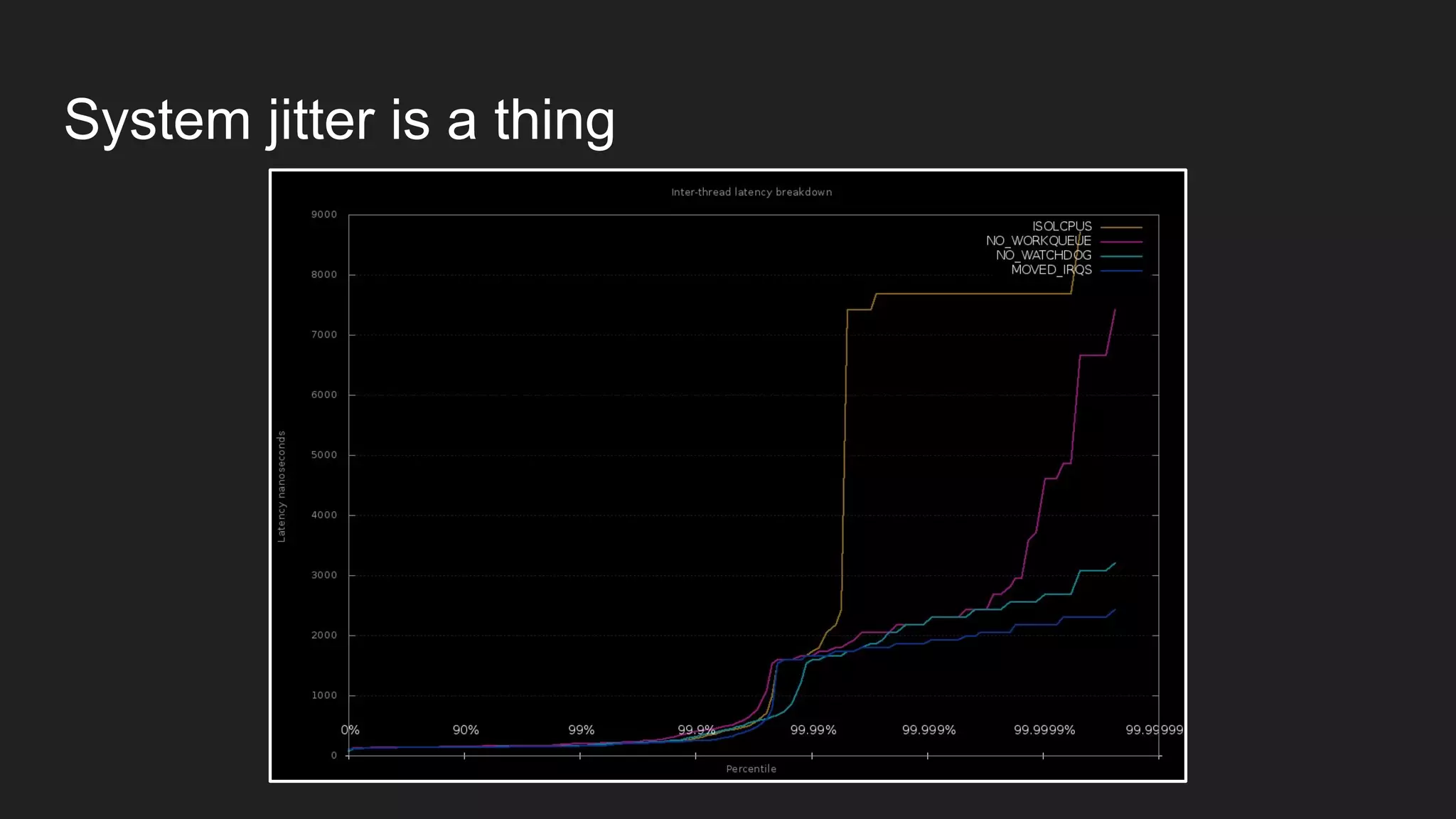

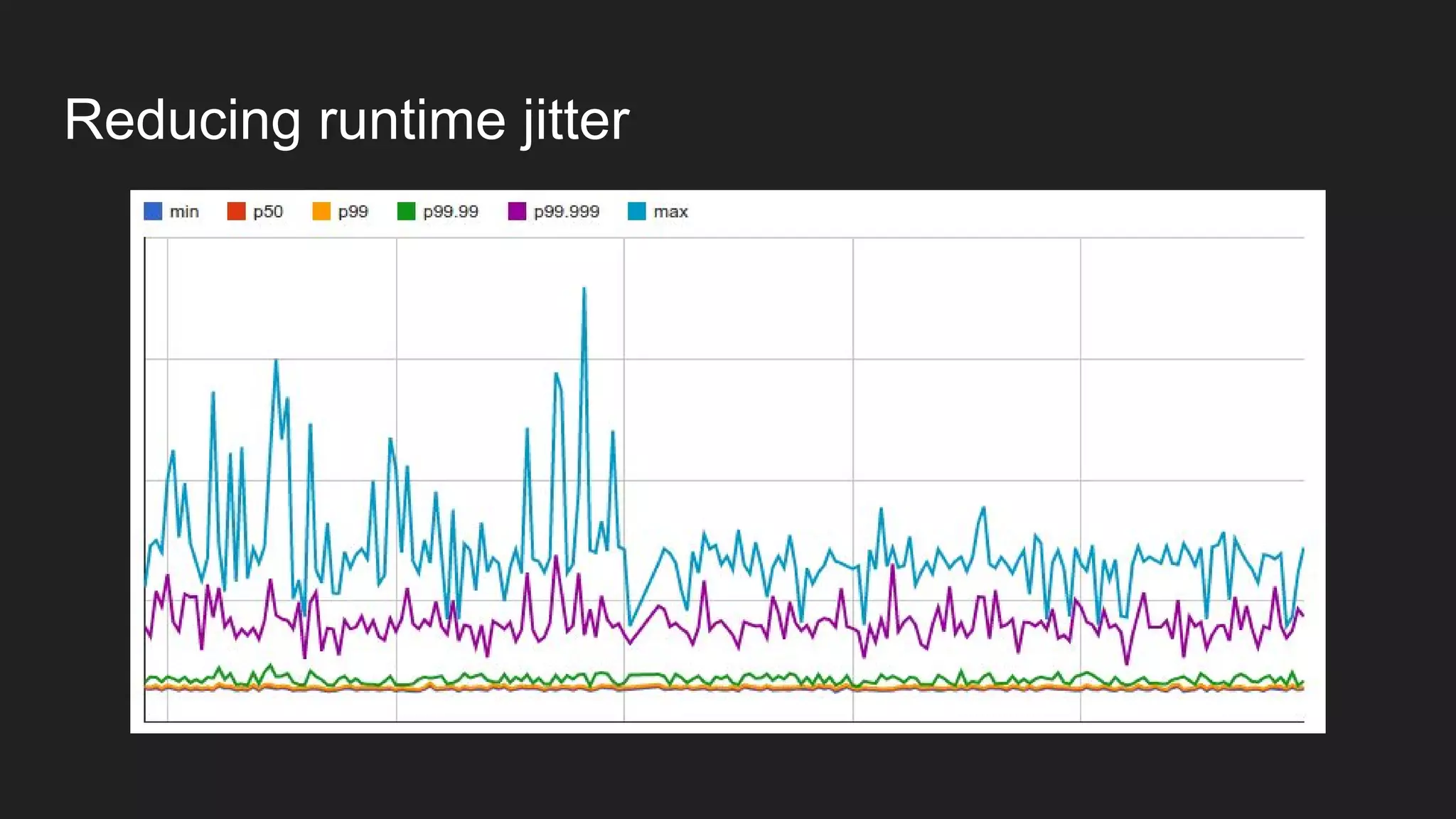

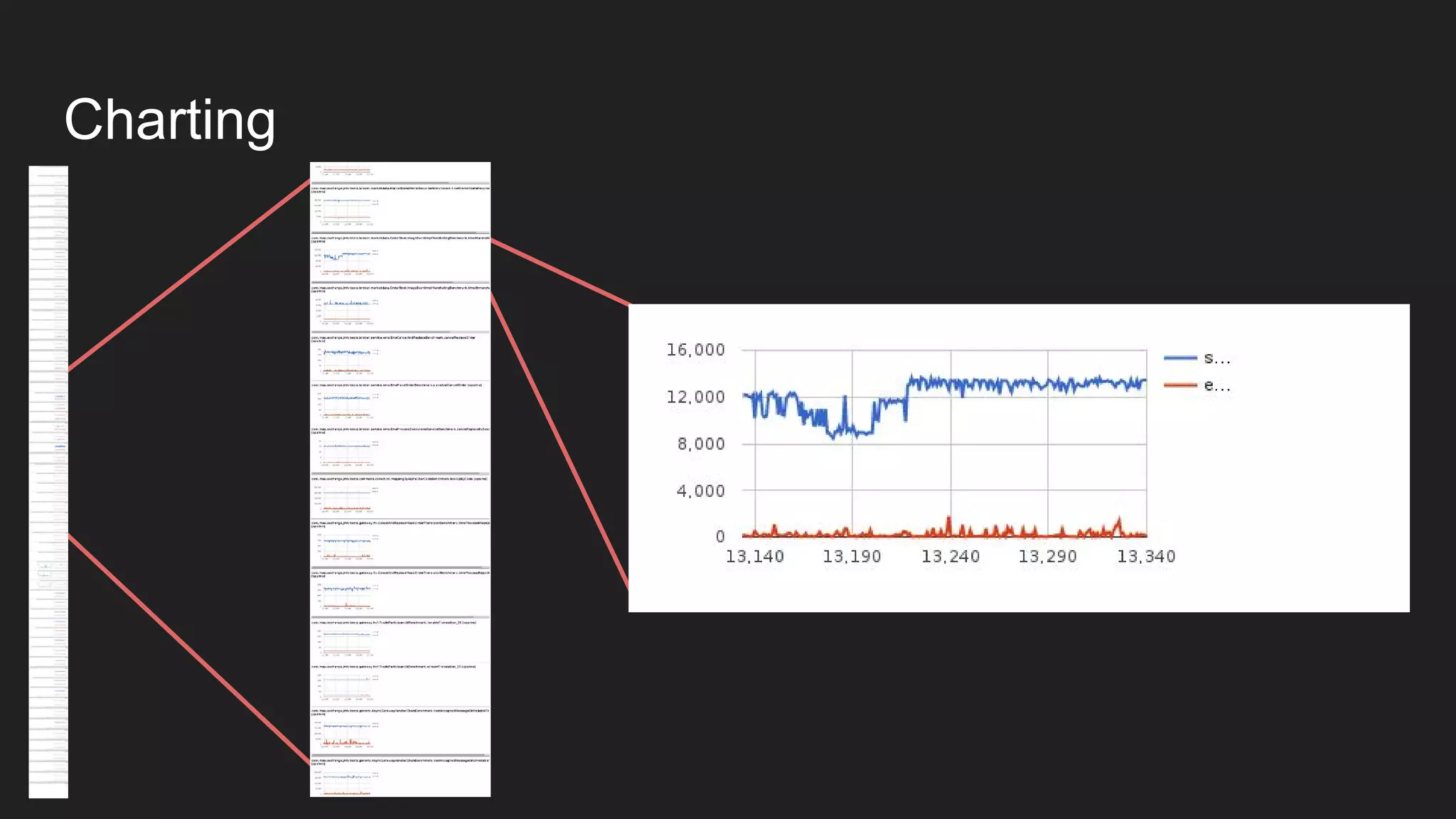

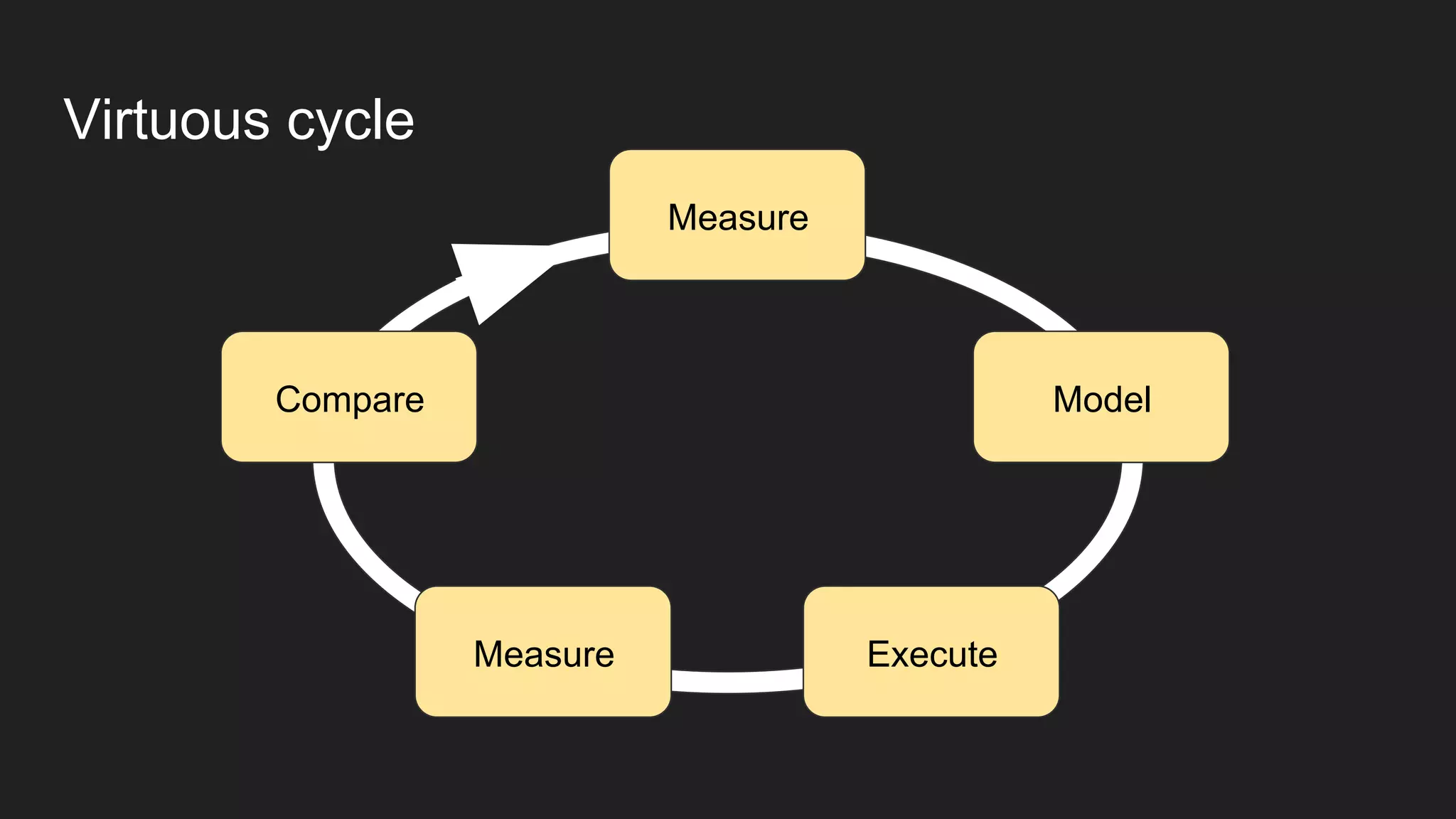

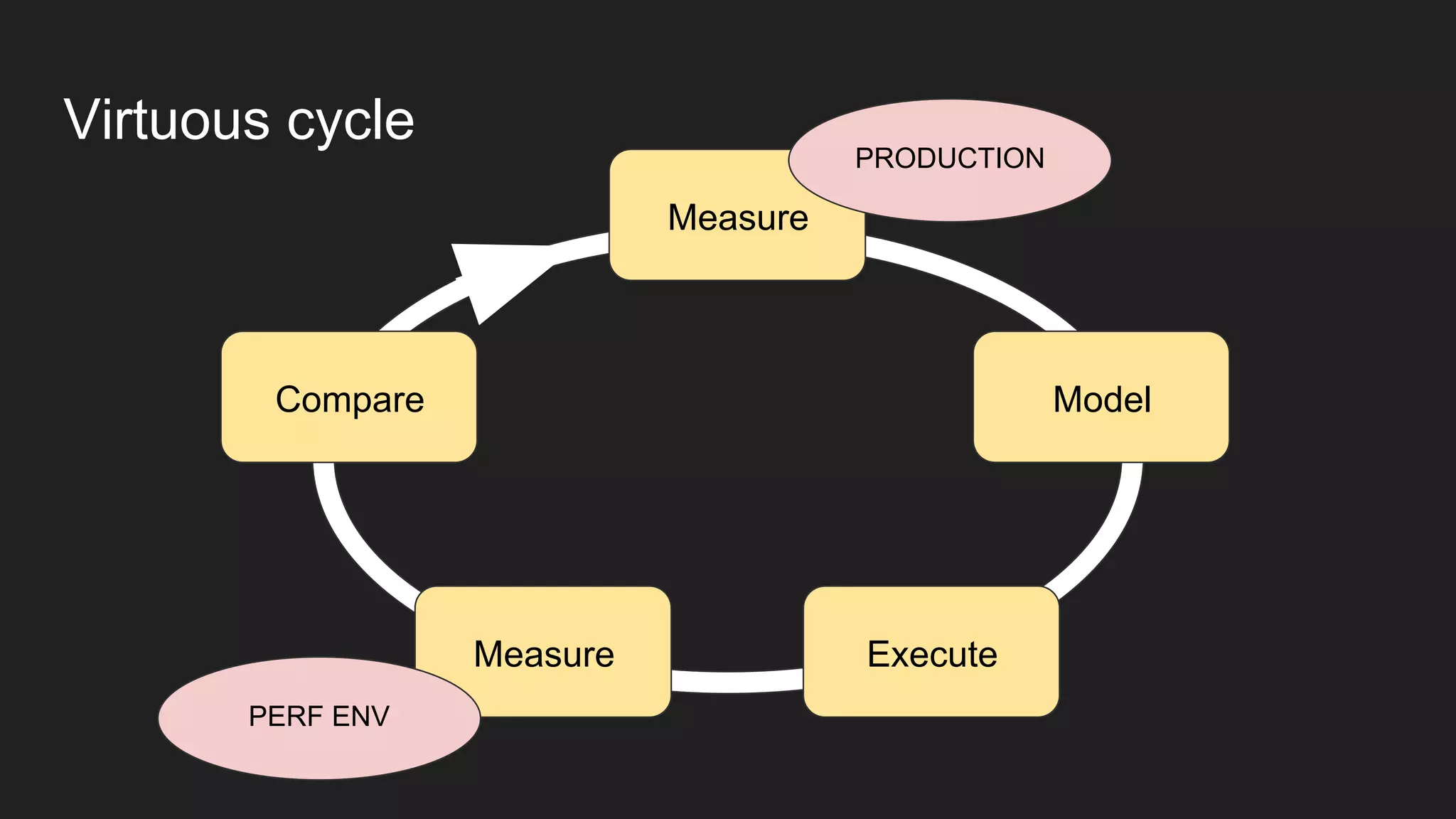

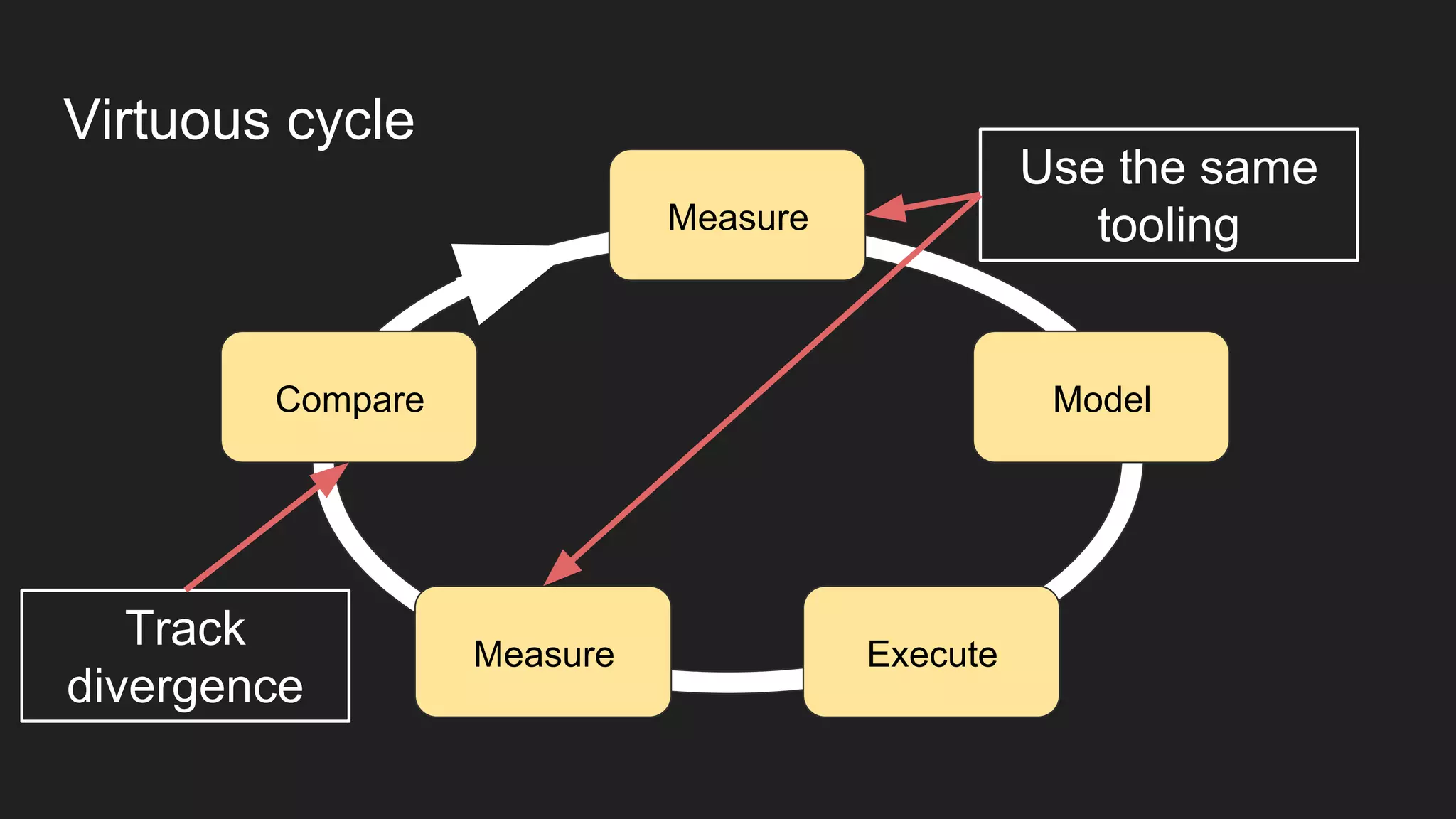

The document discusses the importance of continuous performance testing in software development and describes the benchmark types used to assess system performance: nanobenchmarks, microbenchmarks, component benchmarks, and system performance tests. It notes that as the performance testing process matures, both confidence in the results and maintenance costs increase, and it outlines strategies for effective monitoring and regression testing. It also advocates using production-grade tools and methodologies to obtain accurate performance assessments and rapid feedback cycles.
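
As a rough illustration of the microbenchmark and regression-testing ideas summarized above, the following minimal sketch times a small function and compares the result against a baseline. It is not taken from the slides: the names `measure`, `is_regression`, and `workload`, the 10% tolerance, and the use of the median are all assumptions chosen for illustration. A production setup would rely on a dedicated benchmarking harness and a stored baseline.

```python
import statistics
import time


def measure(func, repeats=5, iterations=1000):
    """Return the median wall-clock seconds per call across several repeats."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        for _ in range(iterations):
            func()
        samples.append((time.perf_counter() - start) / iterations)
    # The median is less sensitive to one-off noise than the mean.
    return statistics.median(samples)


def is_regression(baseline_s, current_s, tolerance=0.10):
    """Flag a regression when the current time exceeds the baseline by more than `tolerance`."""
    return current_s > baseline_s * (1.0 + tolerance)


def workload():
    # Hypothetical code under test; stands in for a real function.
    sorted(range(200, 0, -1))


if __name__ == "__main__":
    current = measure(workload)
    baseline = current  # placeholder: a real pipeline would load a stored baseline
    print("regression" if is_regression(baseline, current) else "ok")
```

In a continuous setup, a check like this would run on every change, with the baseline refreshed only when a slowdown is deliberately accepted. The tolerance trades false alarms against missed regressions and would need tuning to the noise level of the test environment.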