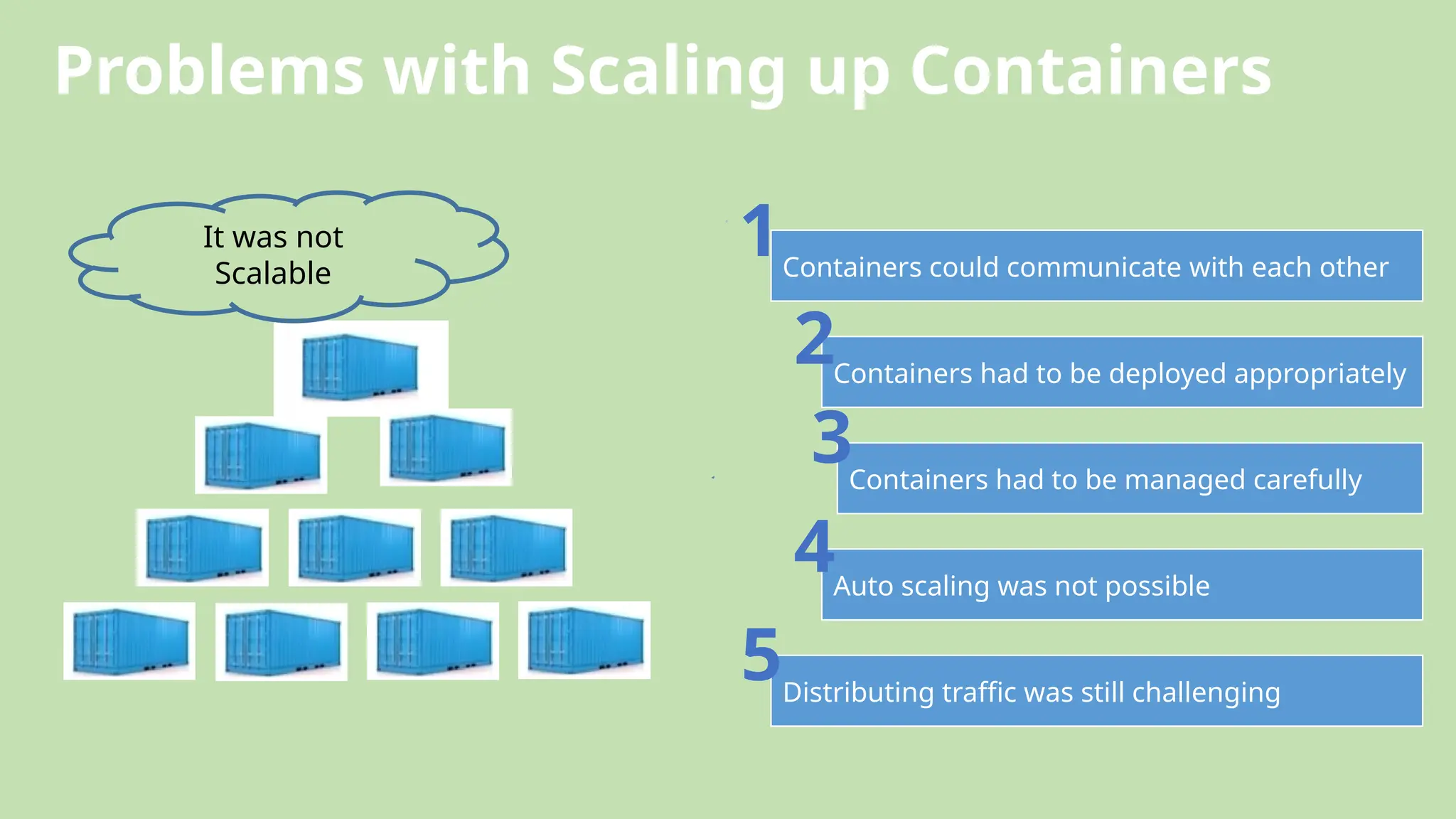

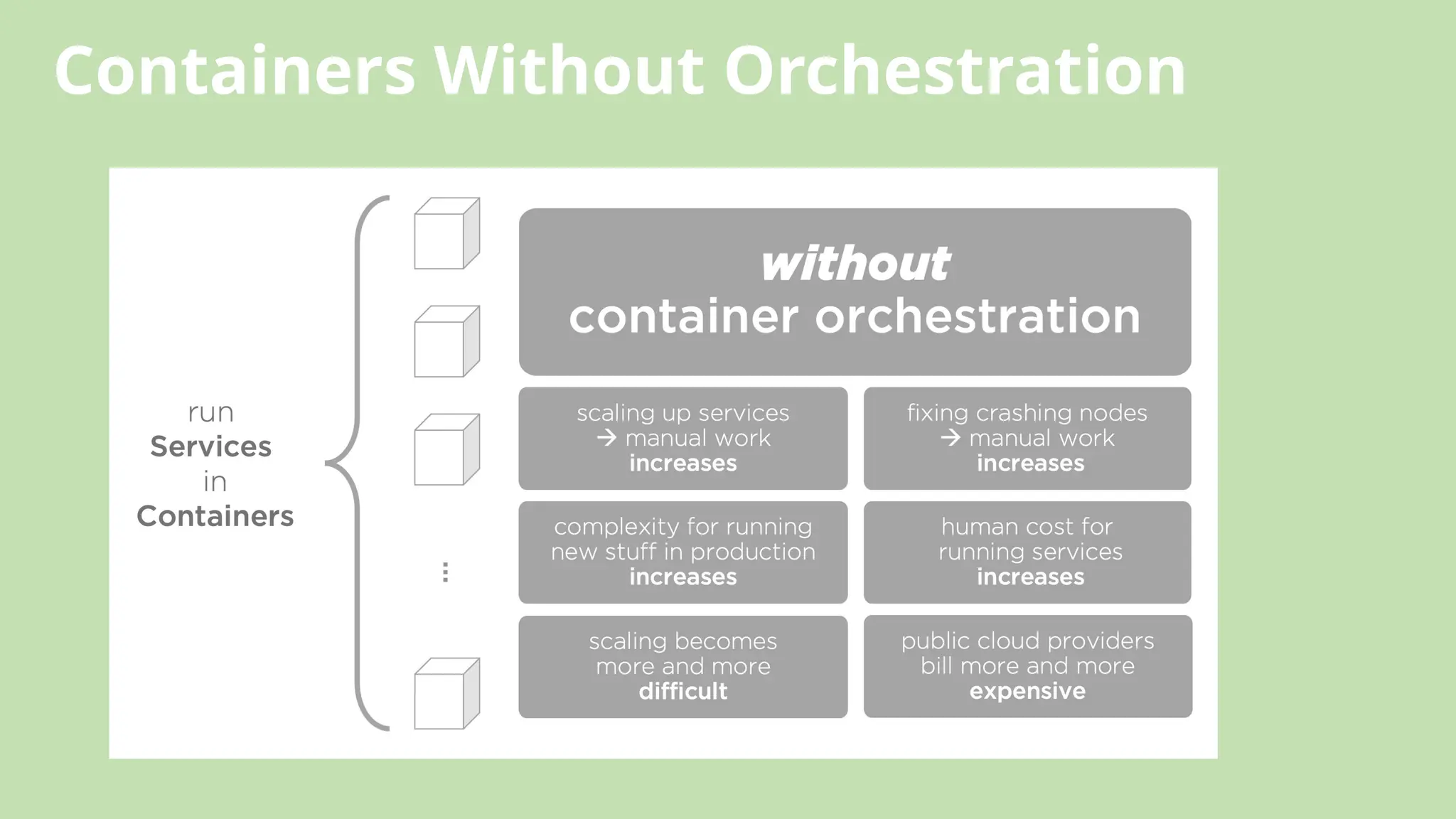

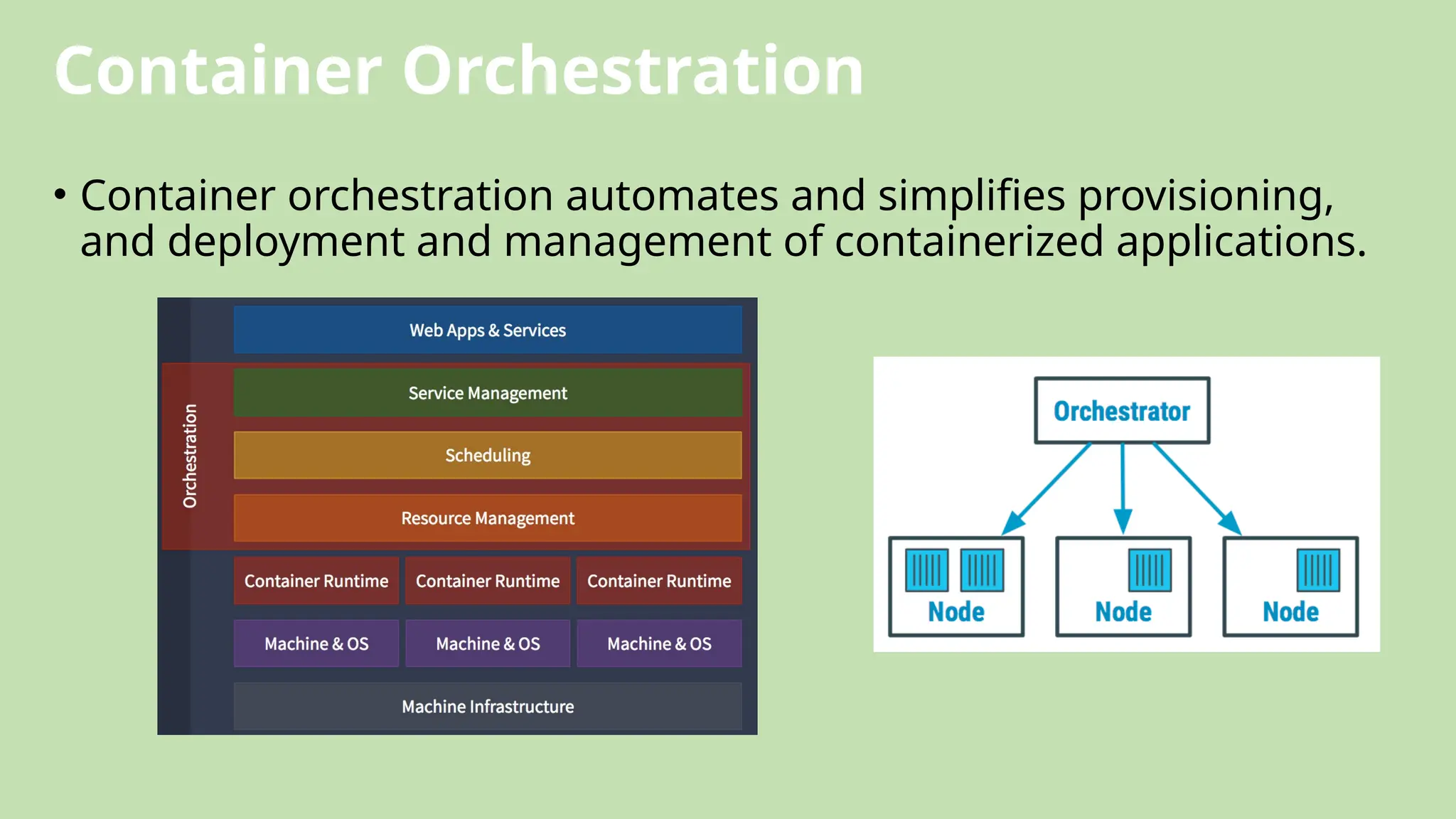

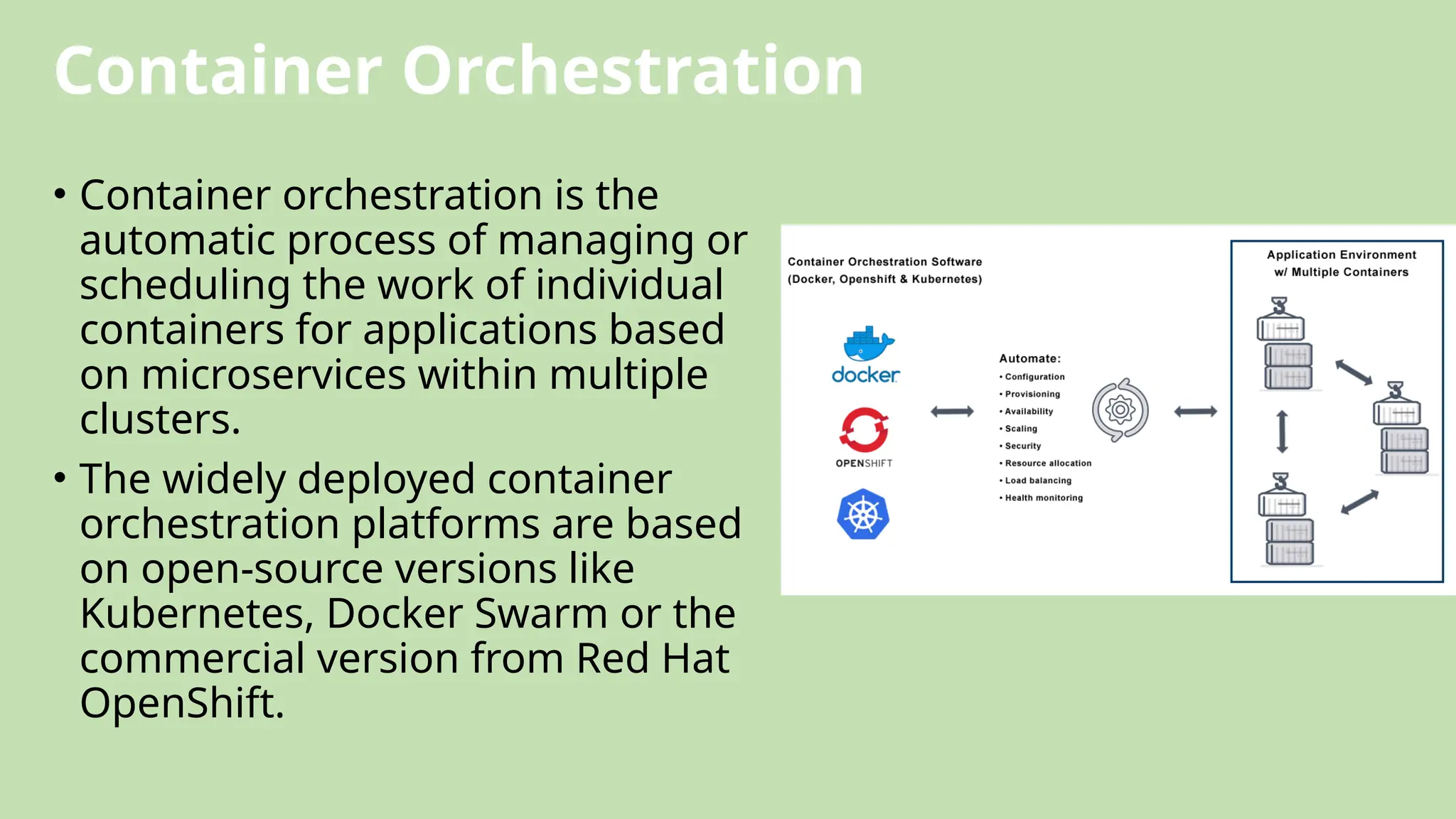

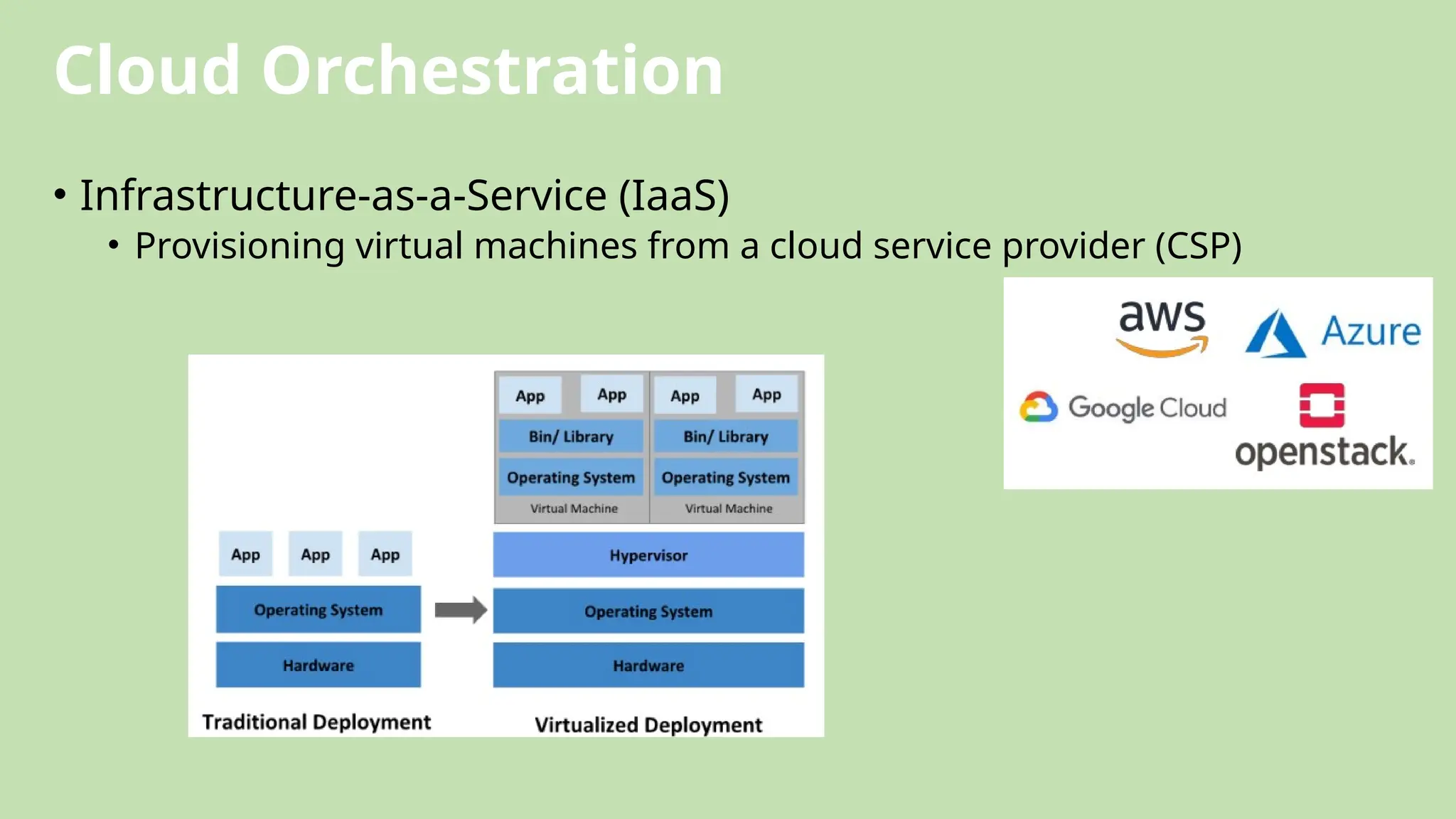

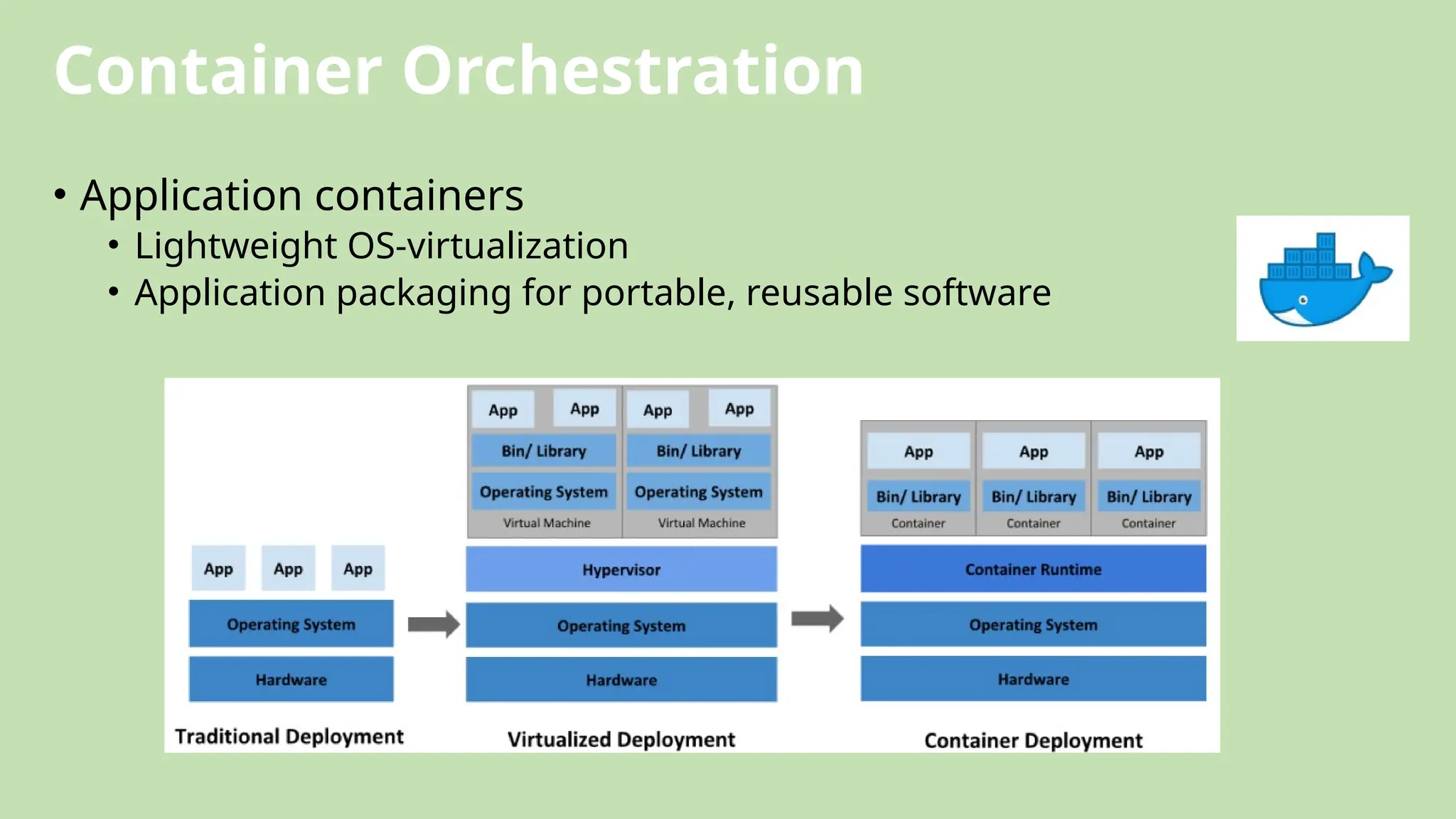

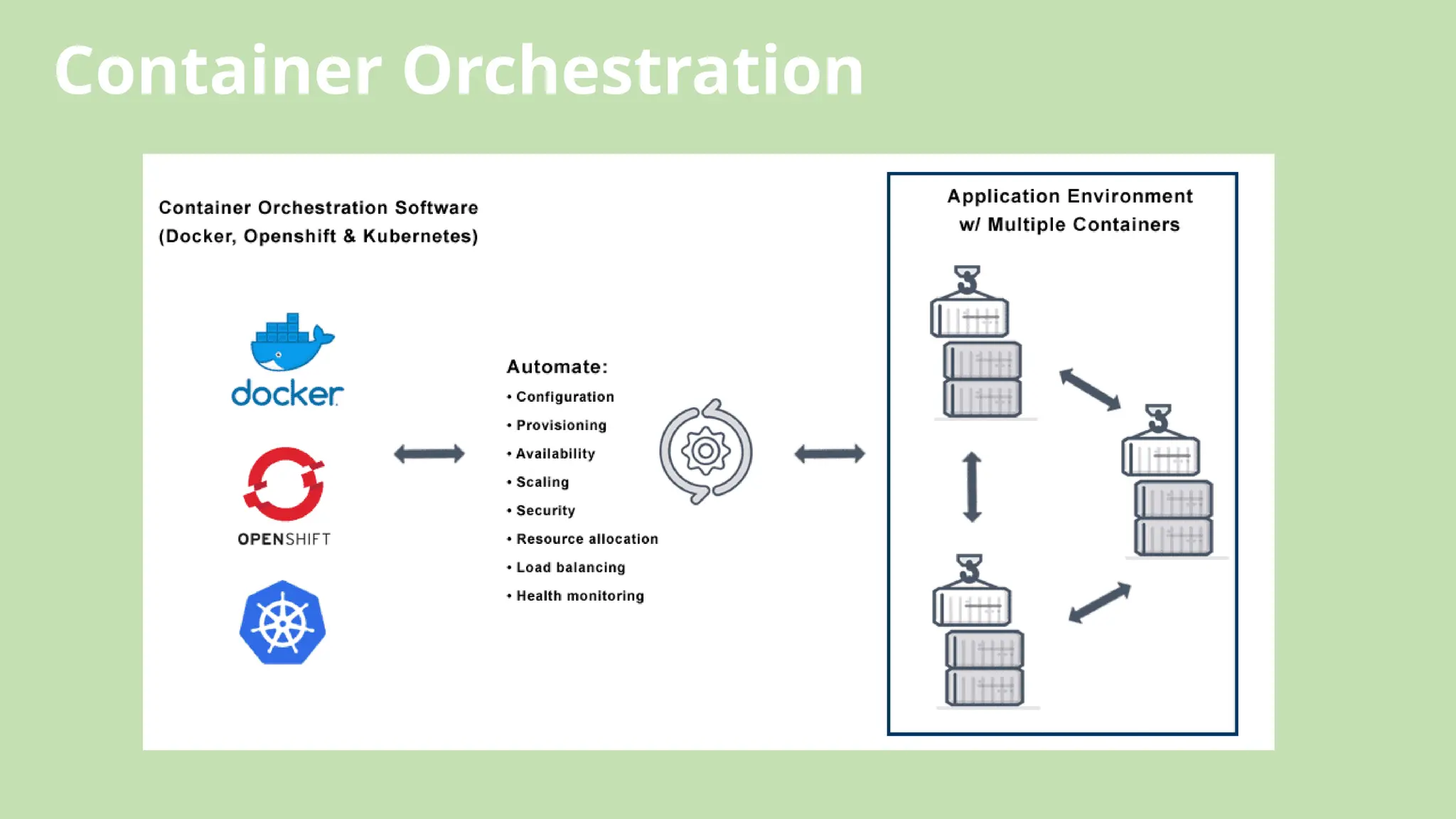

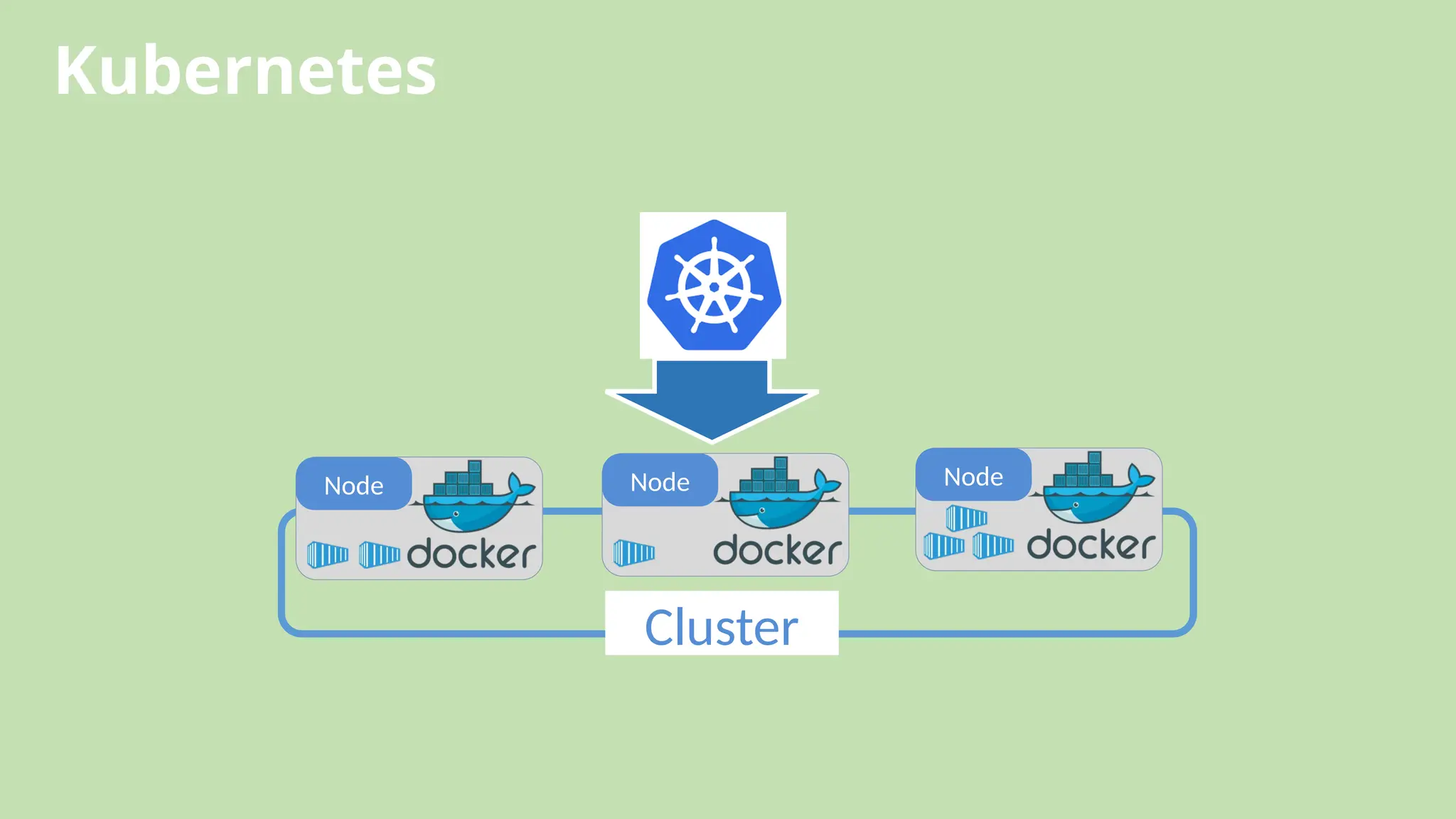

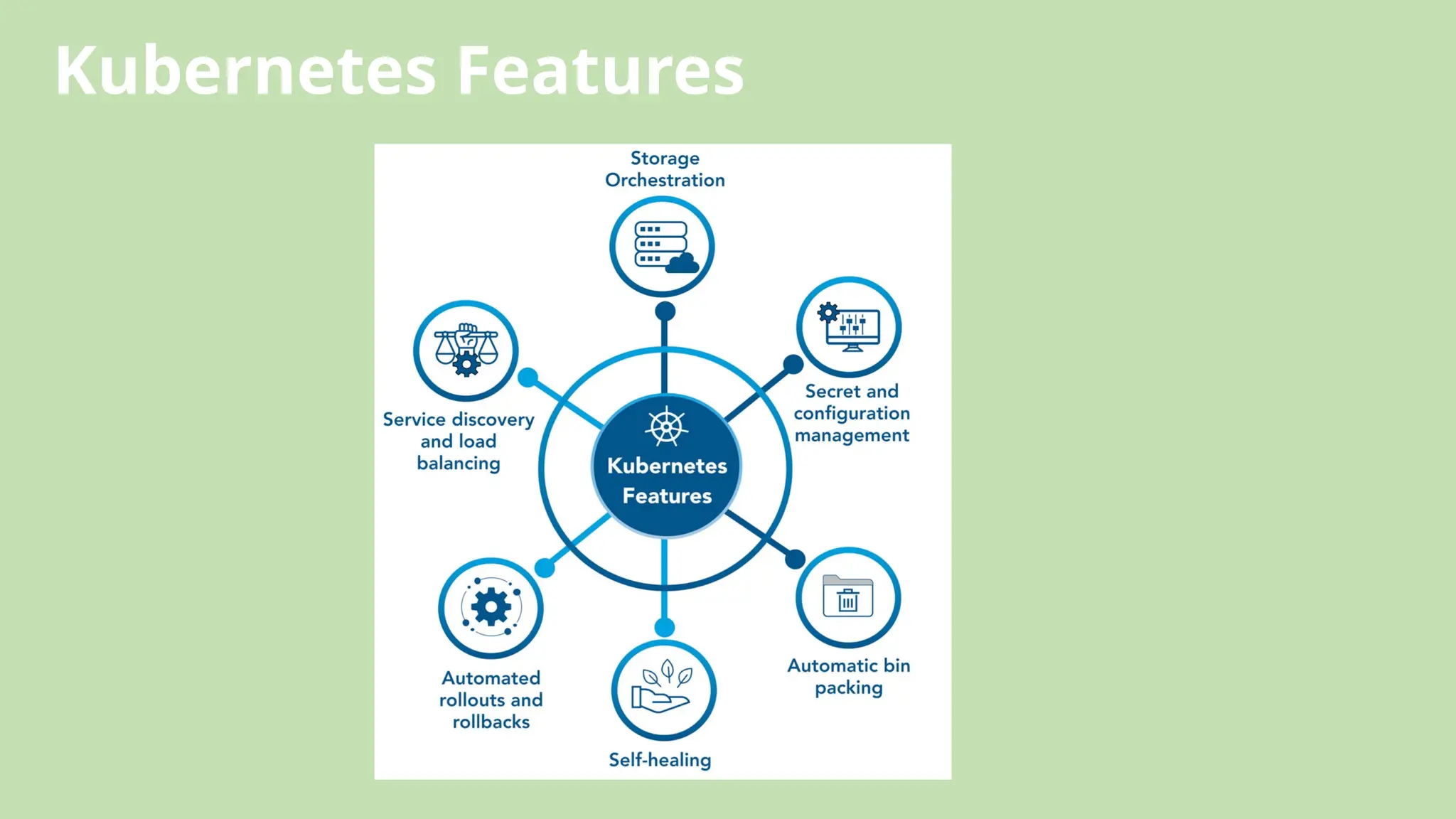

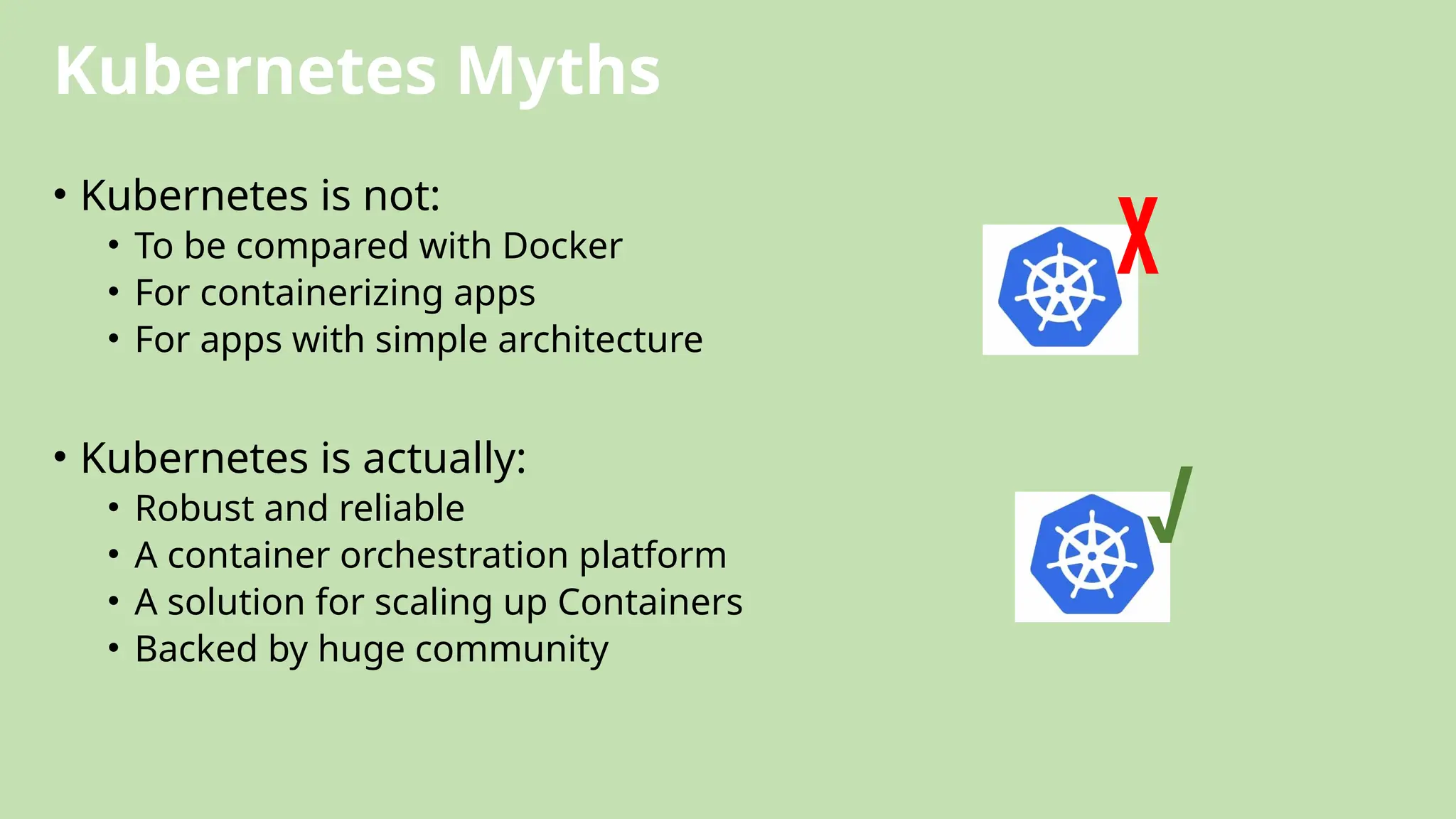

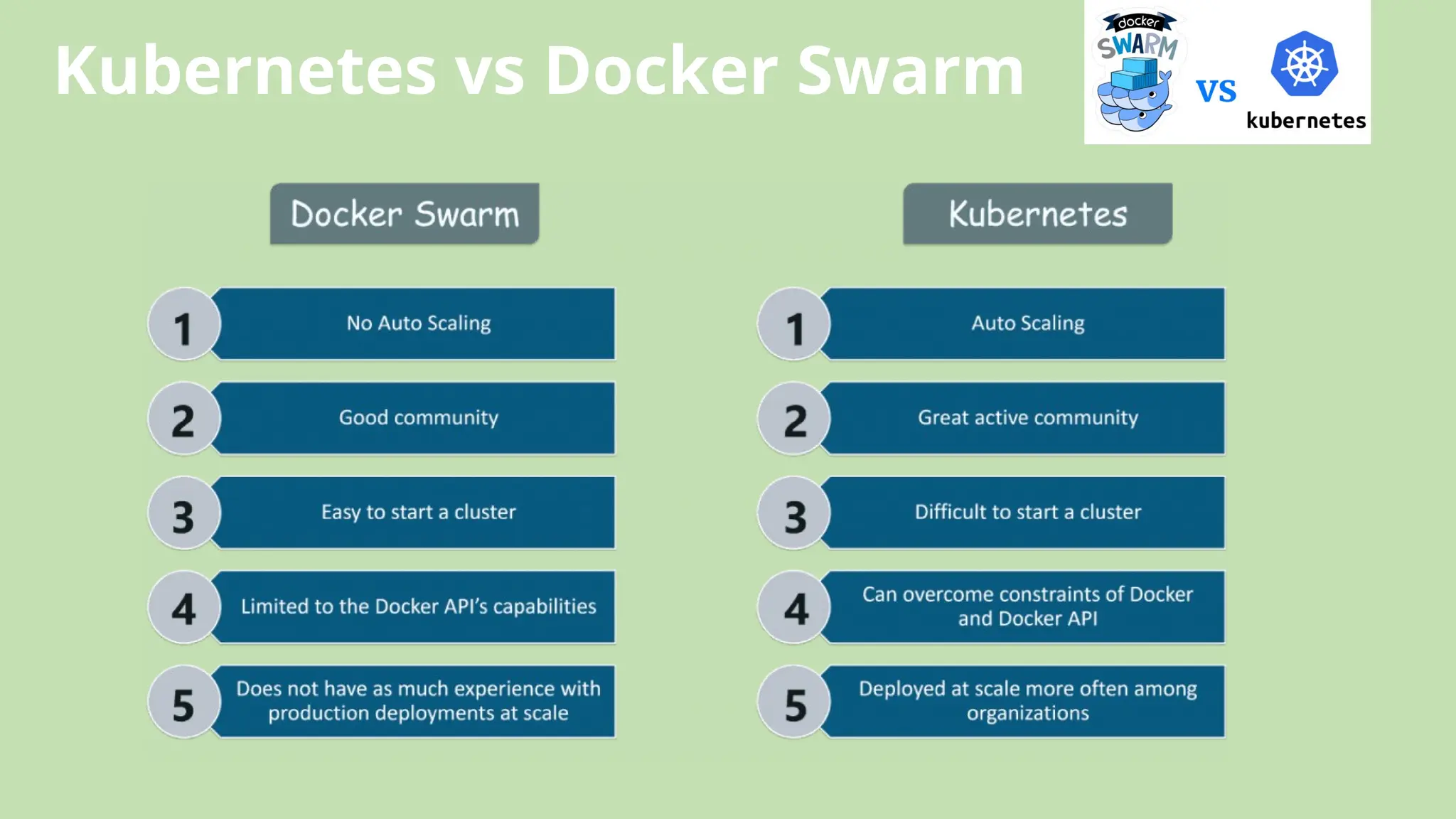

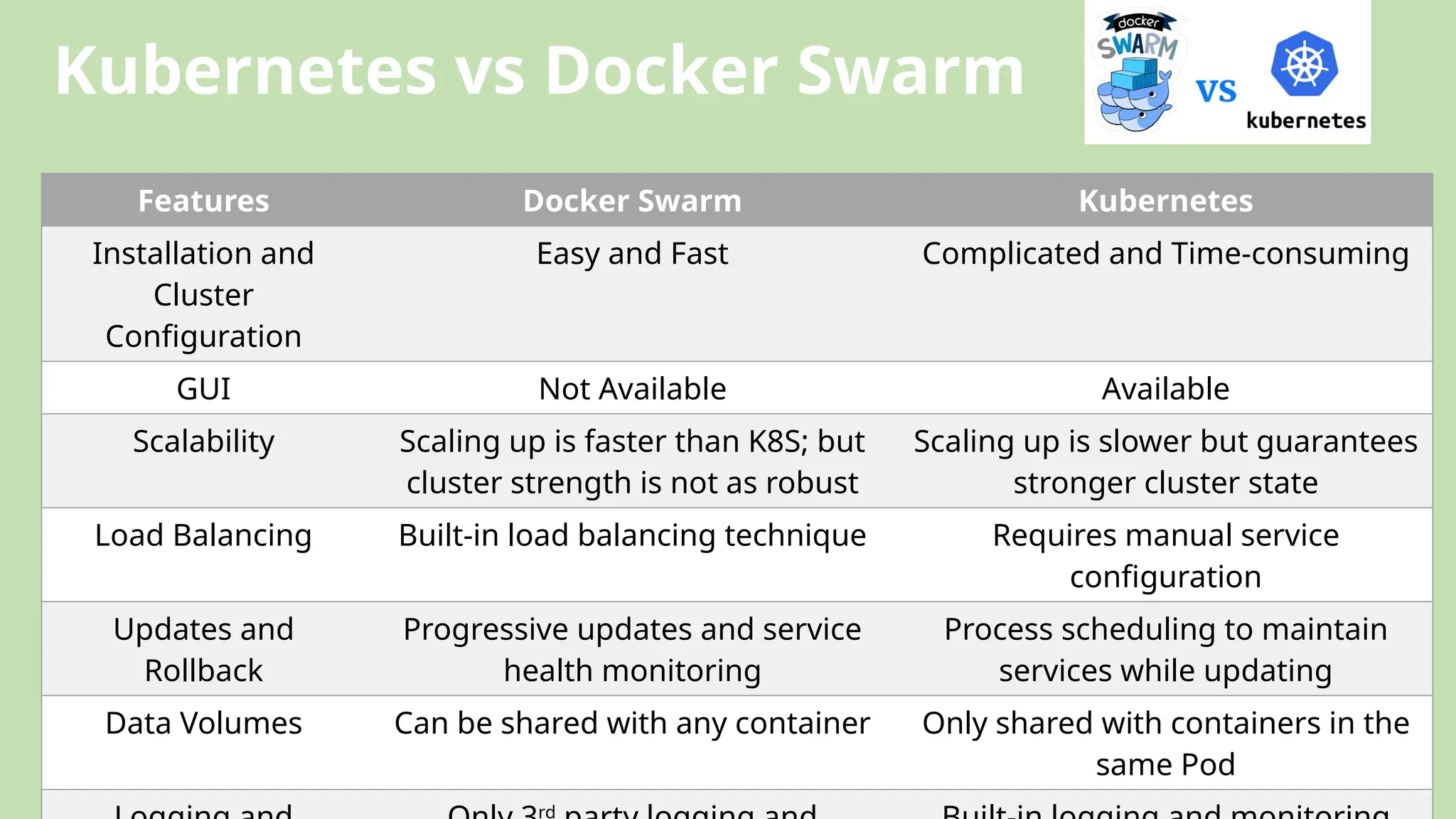

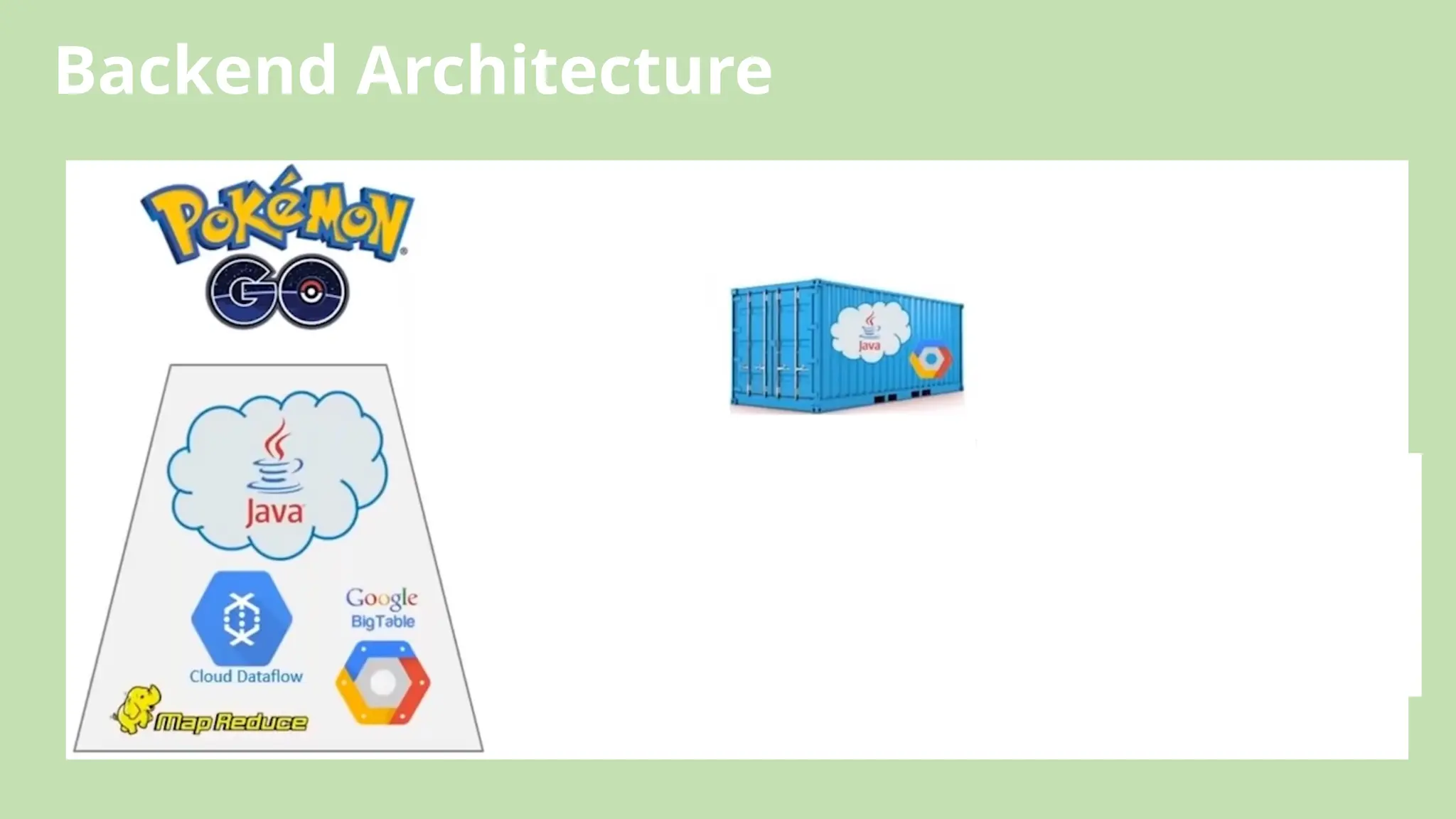

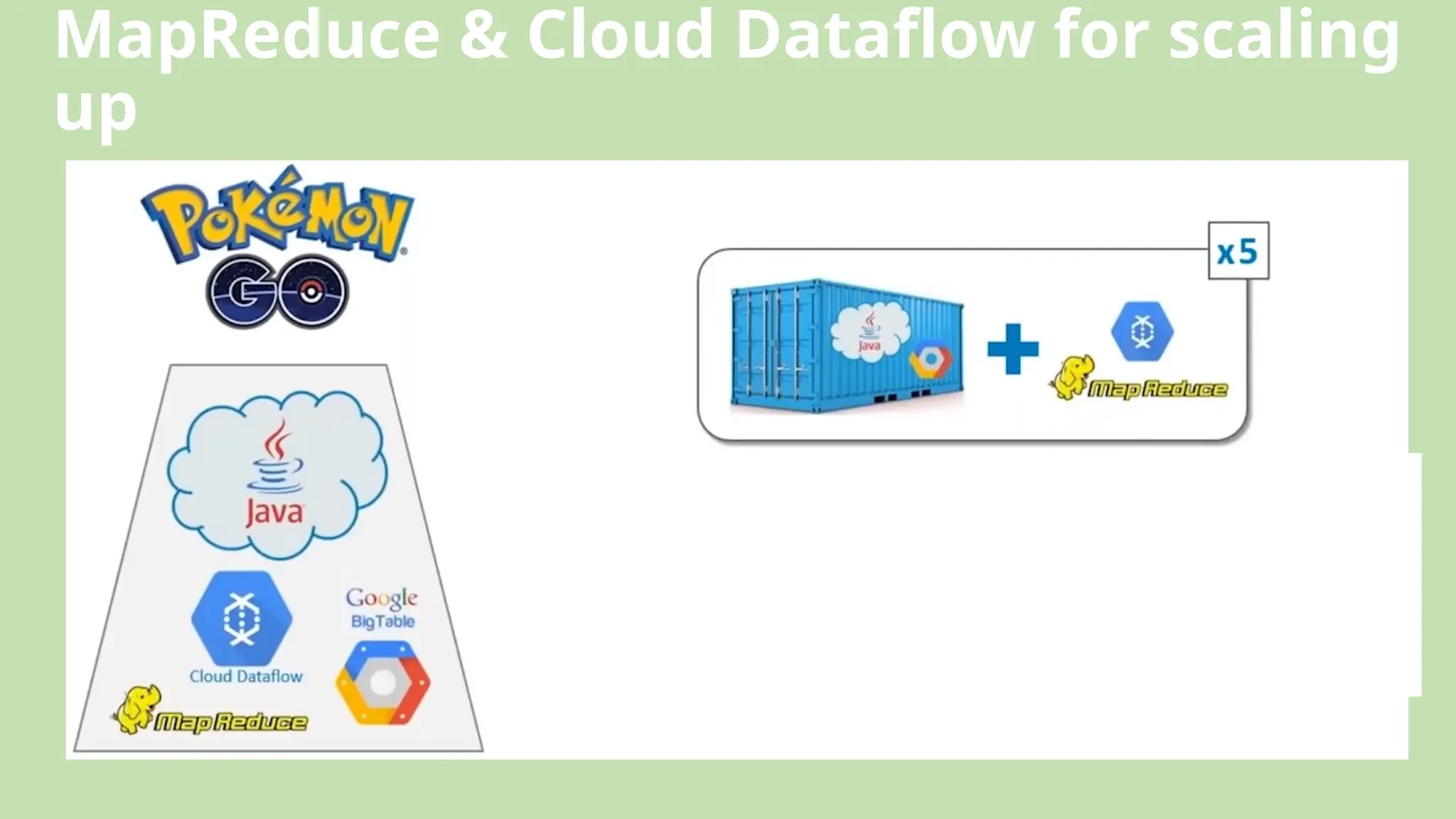

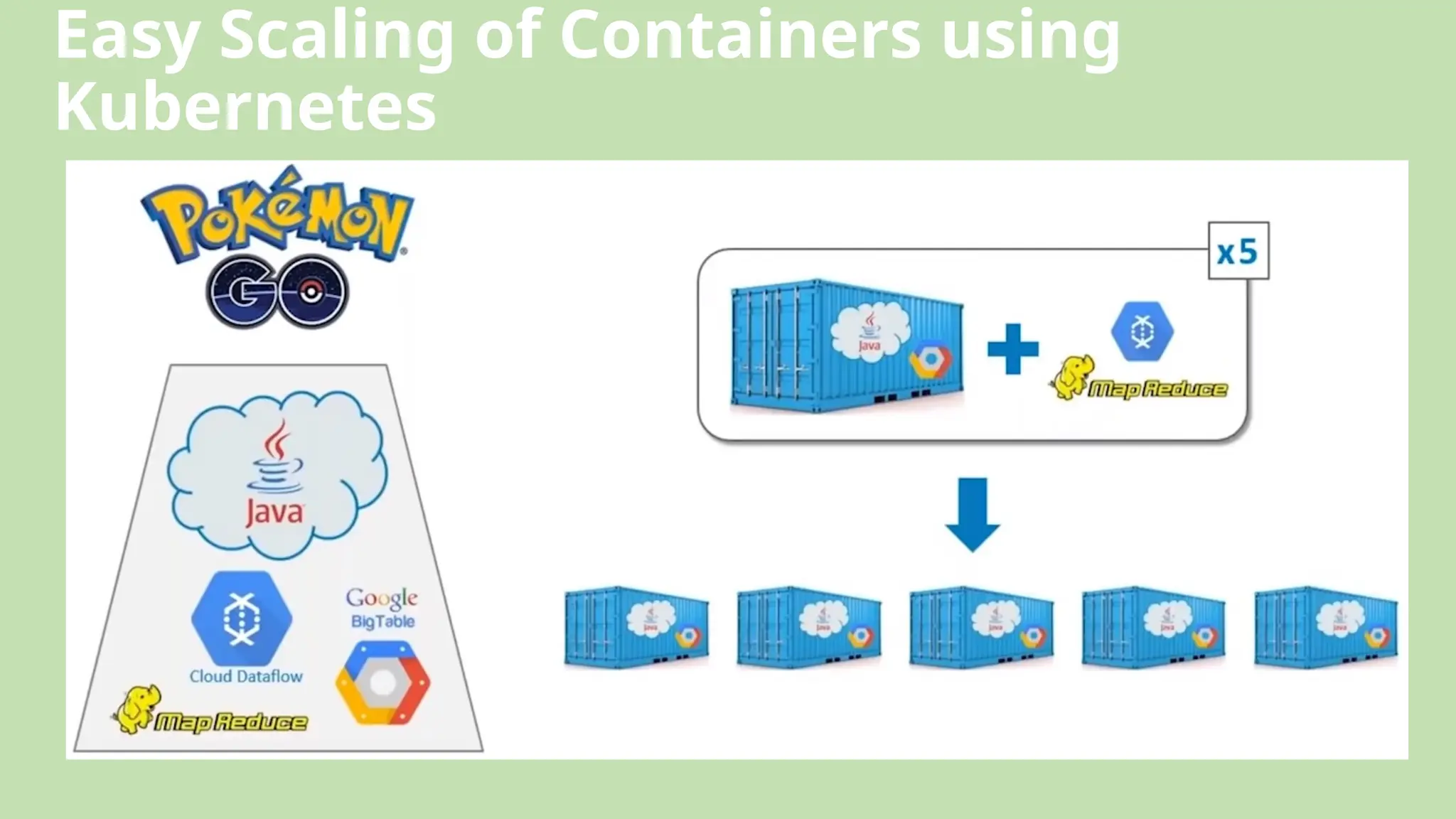

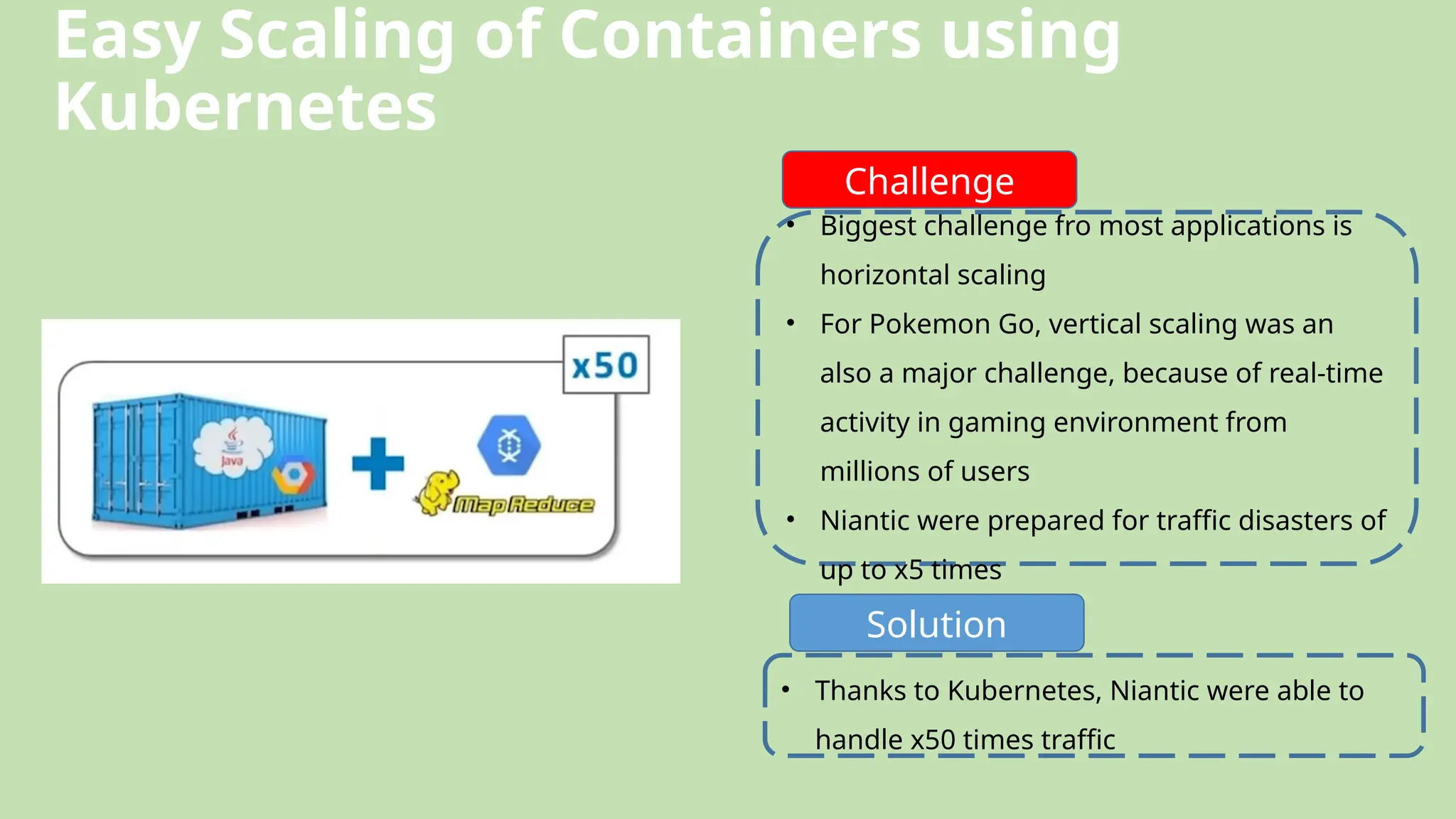

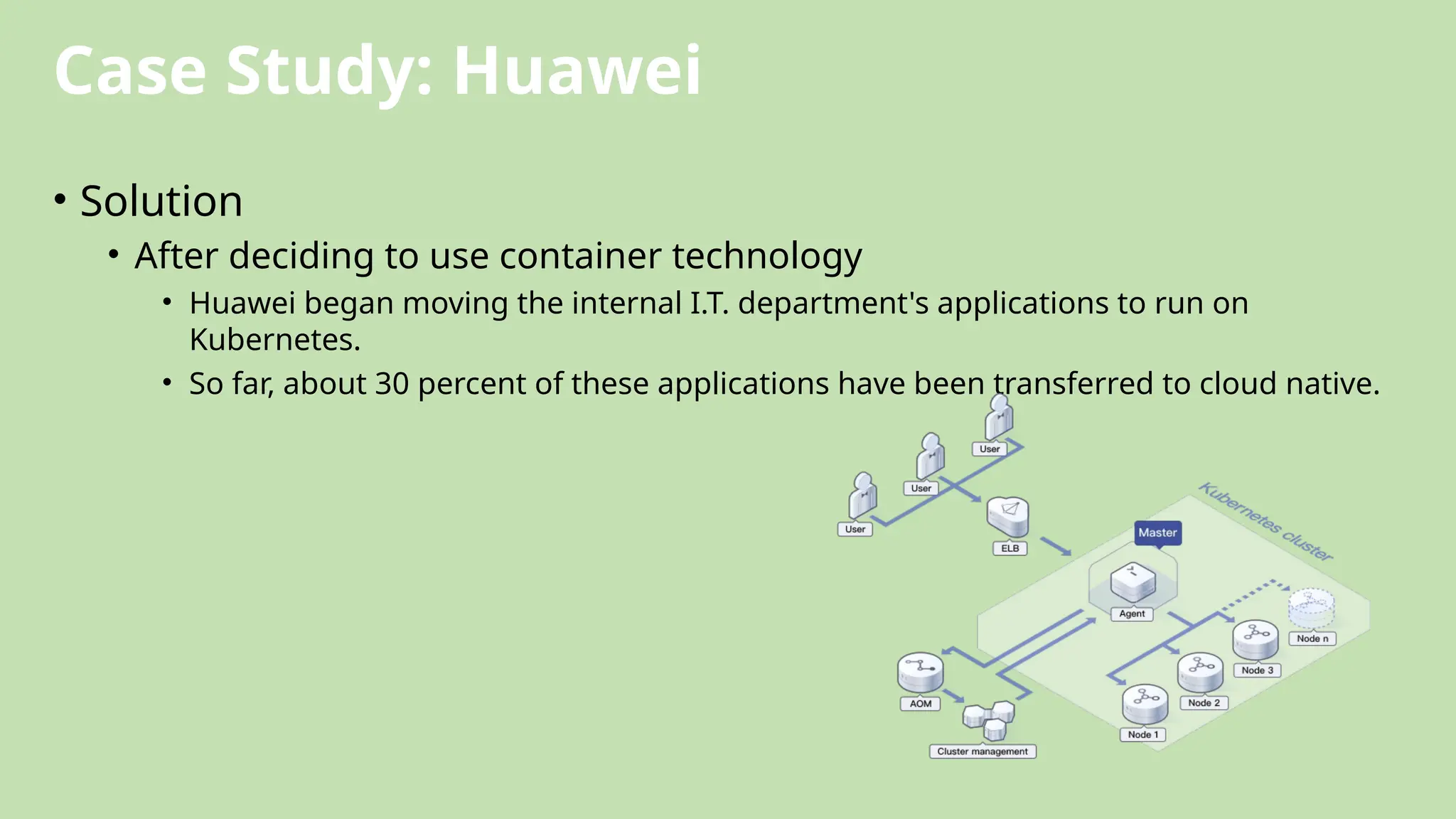

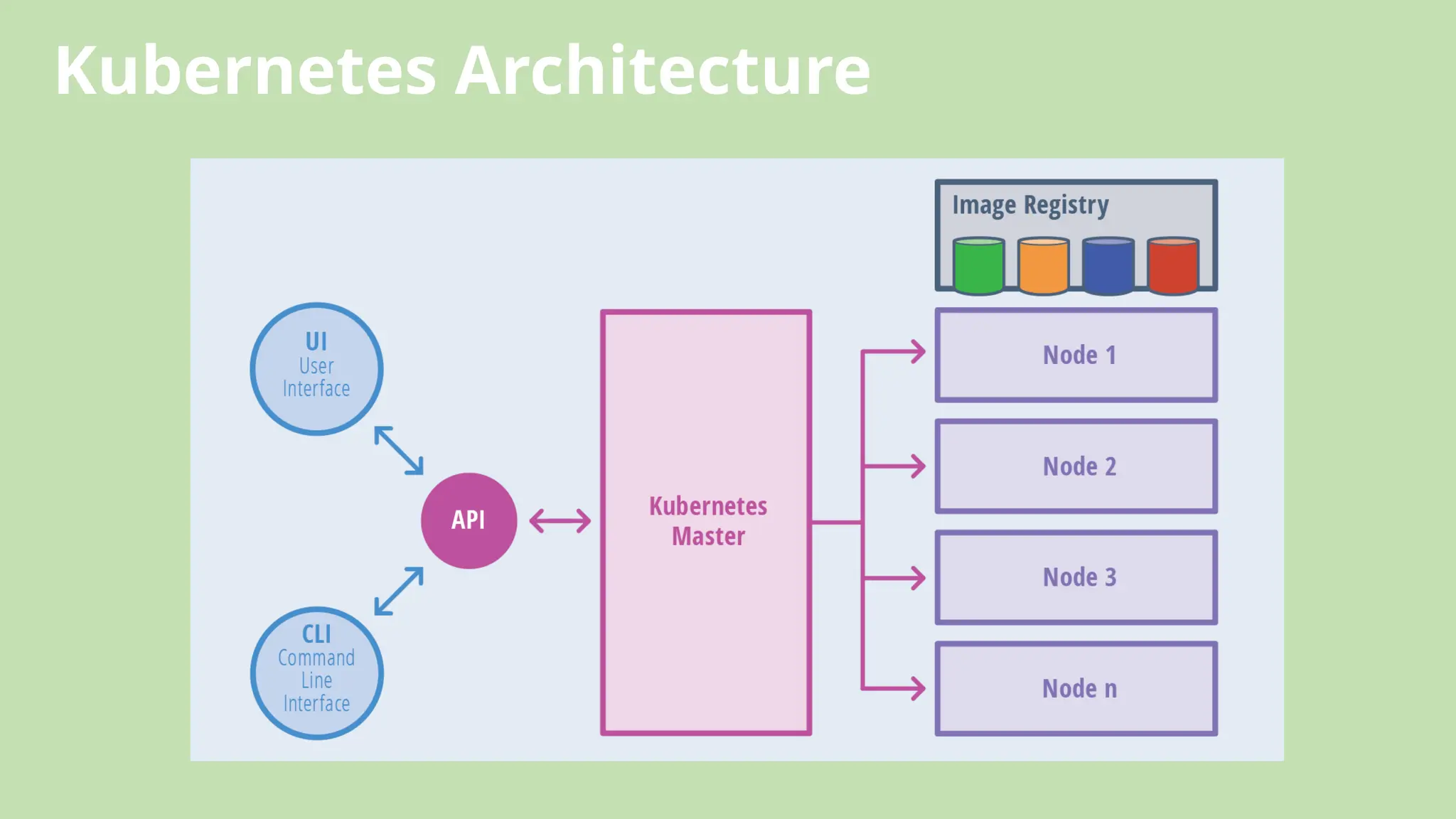

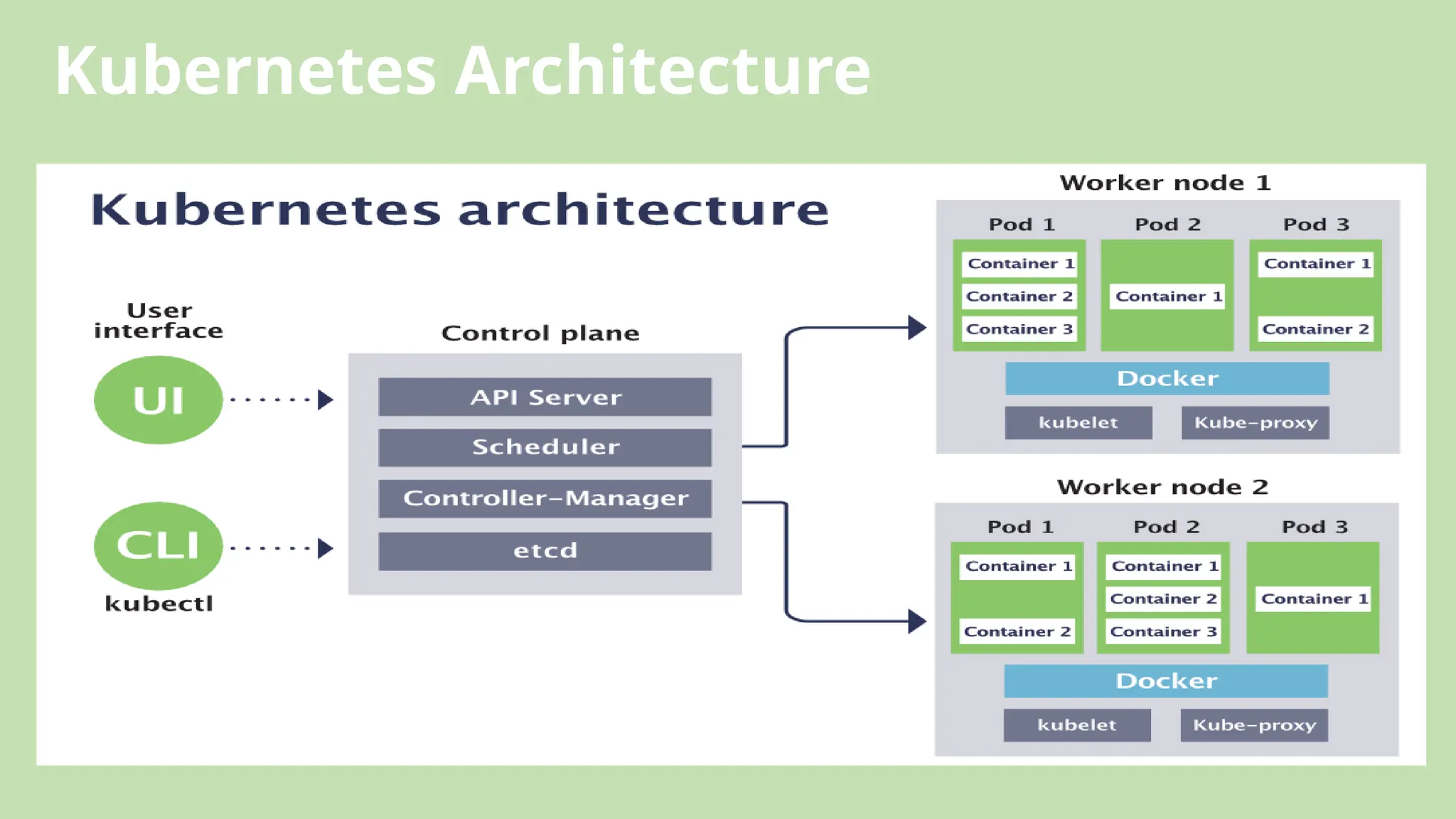

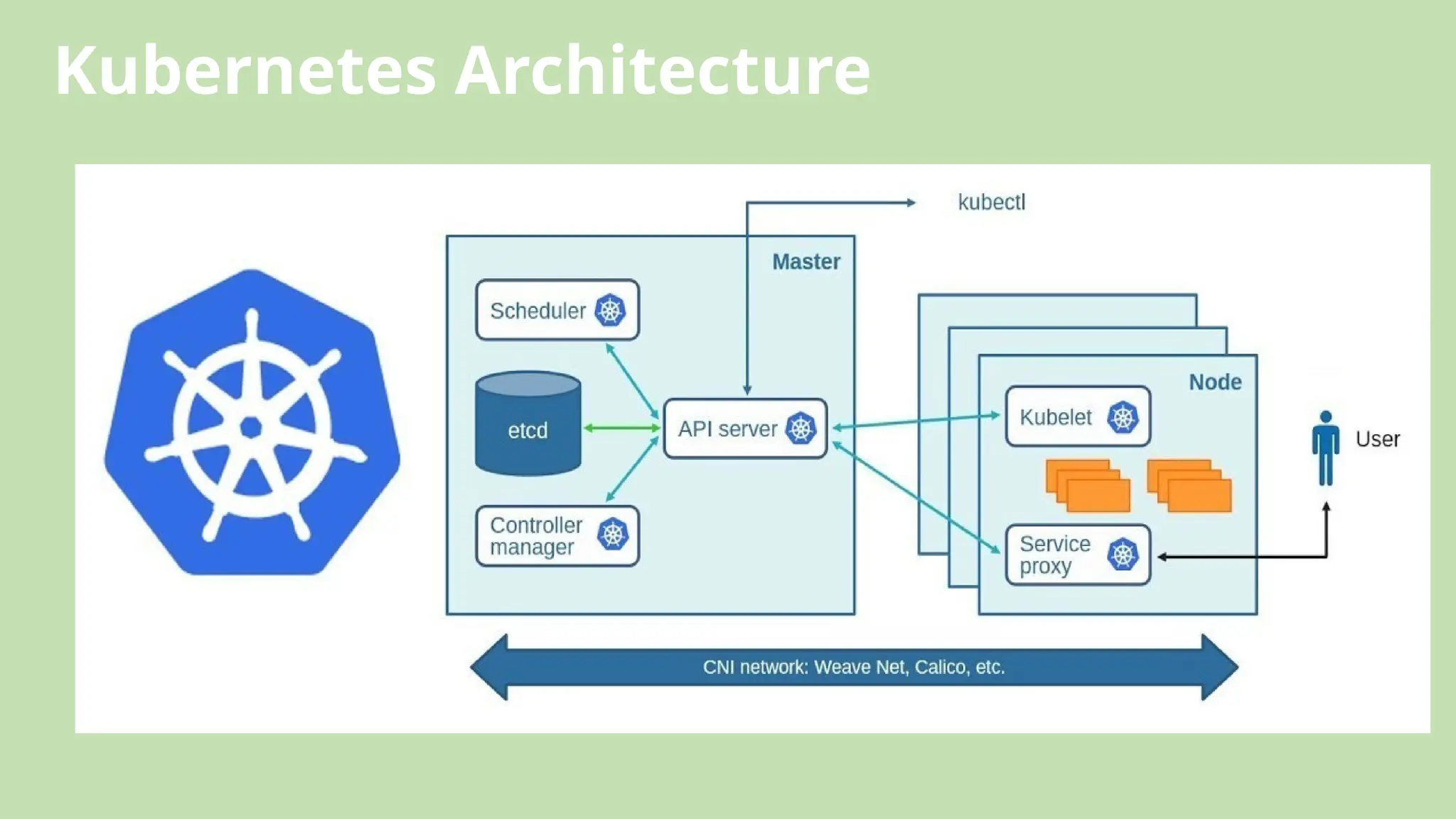

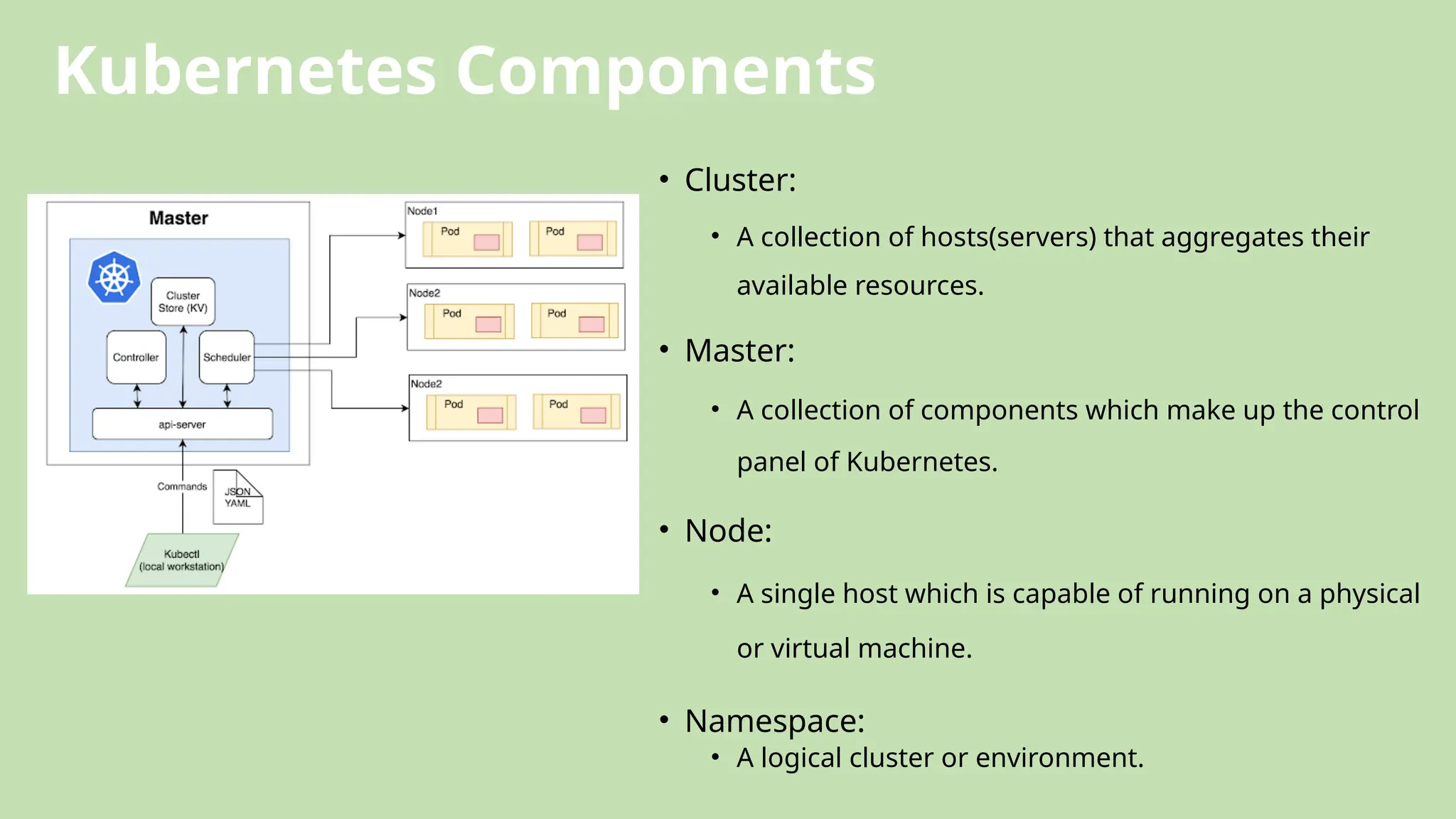

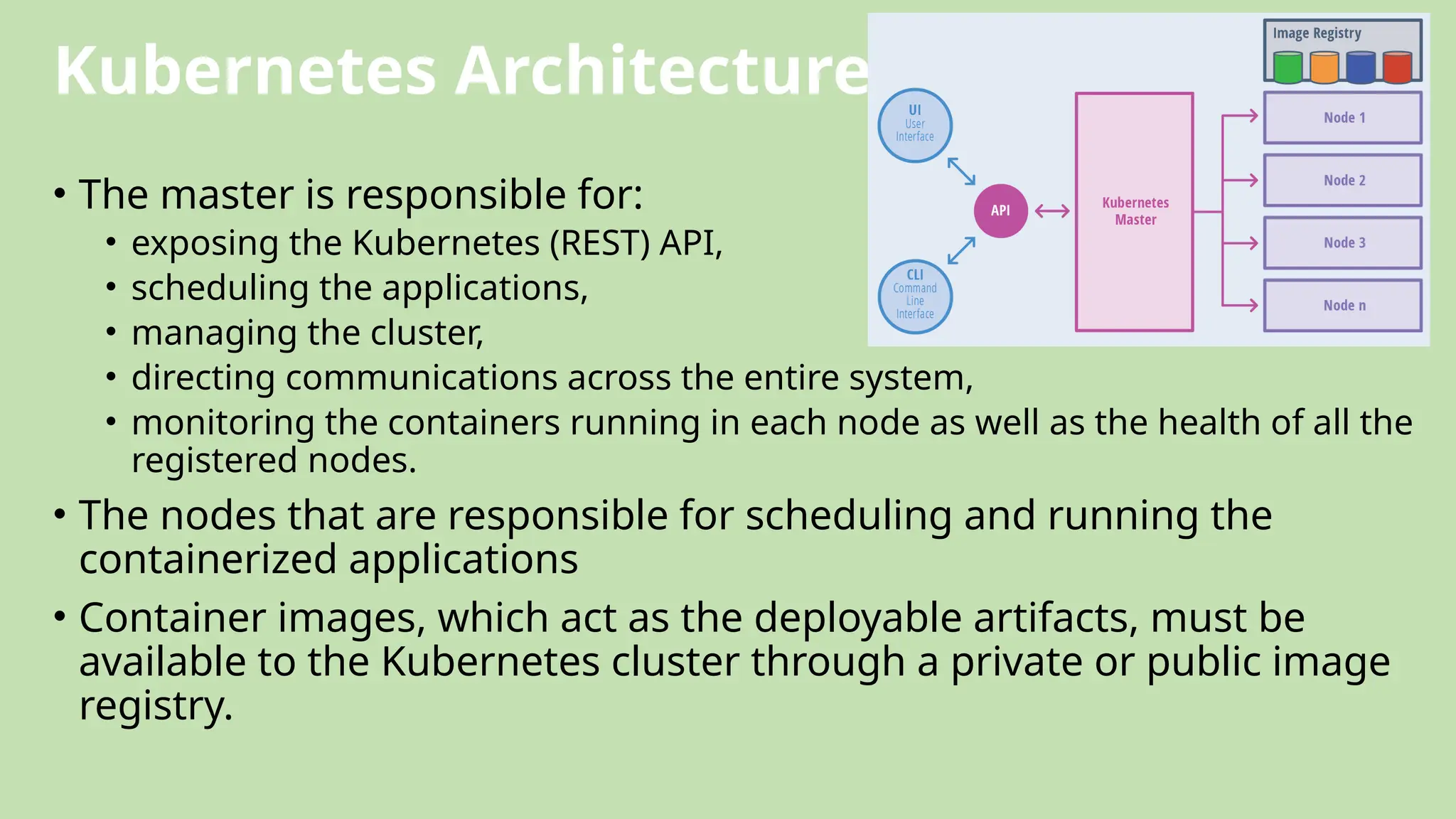

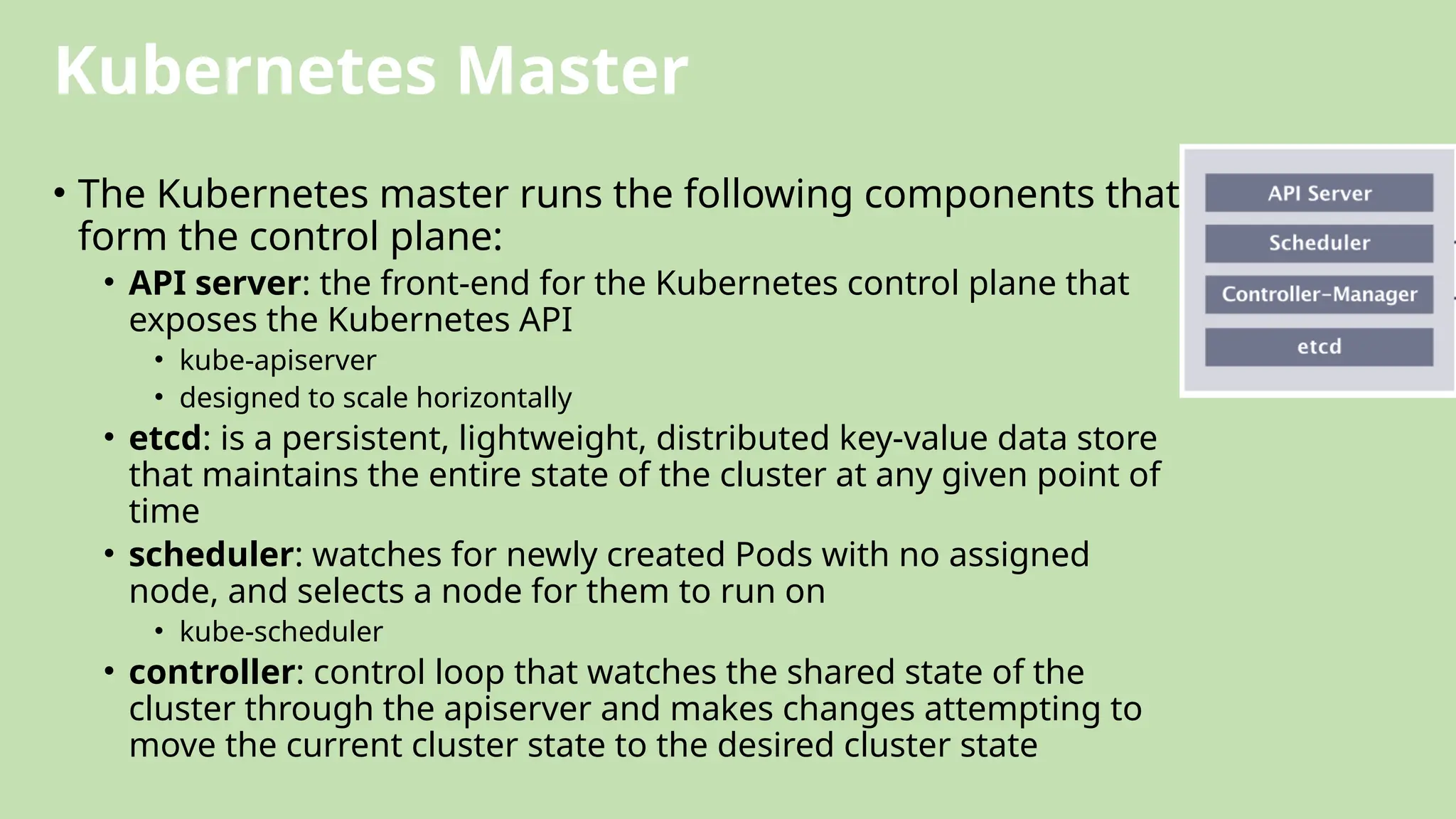

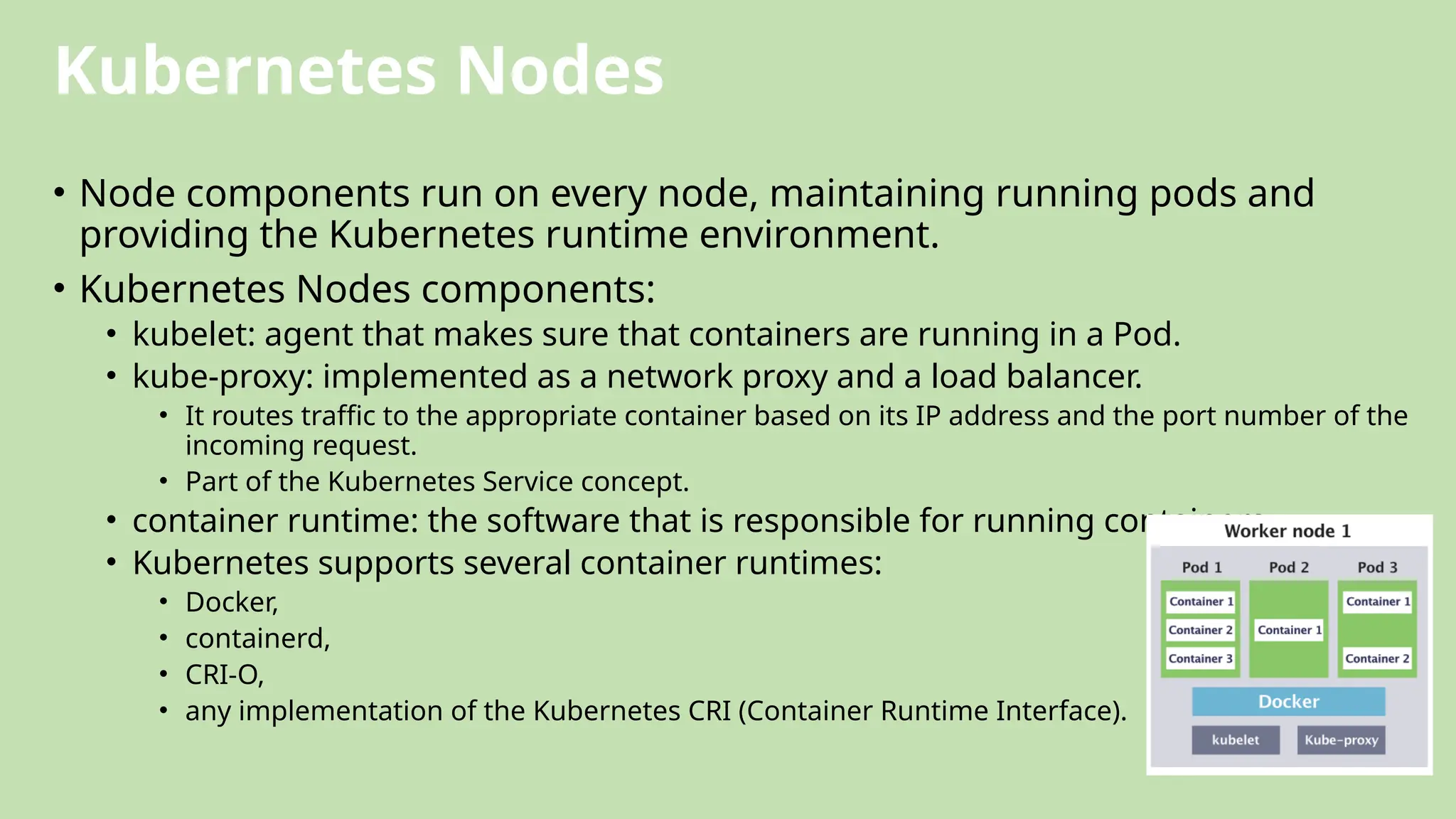

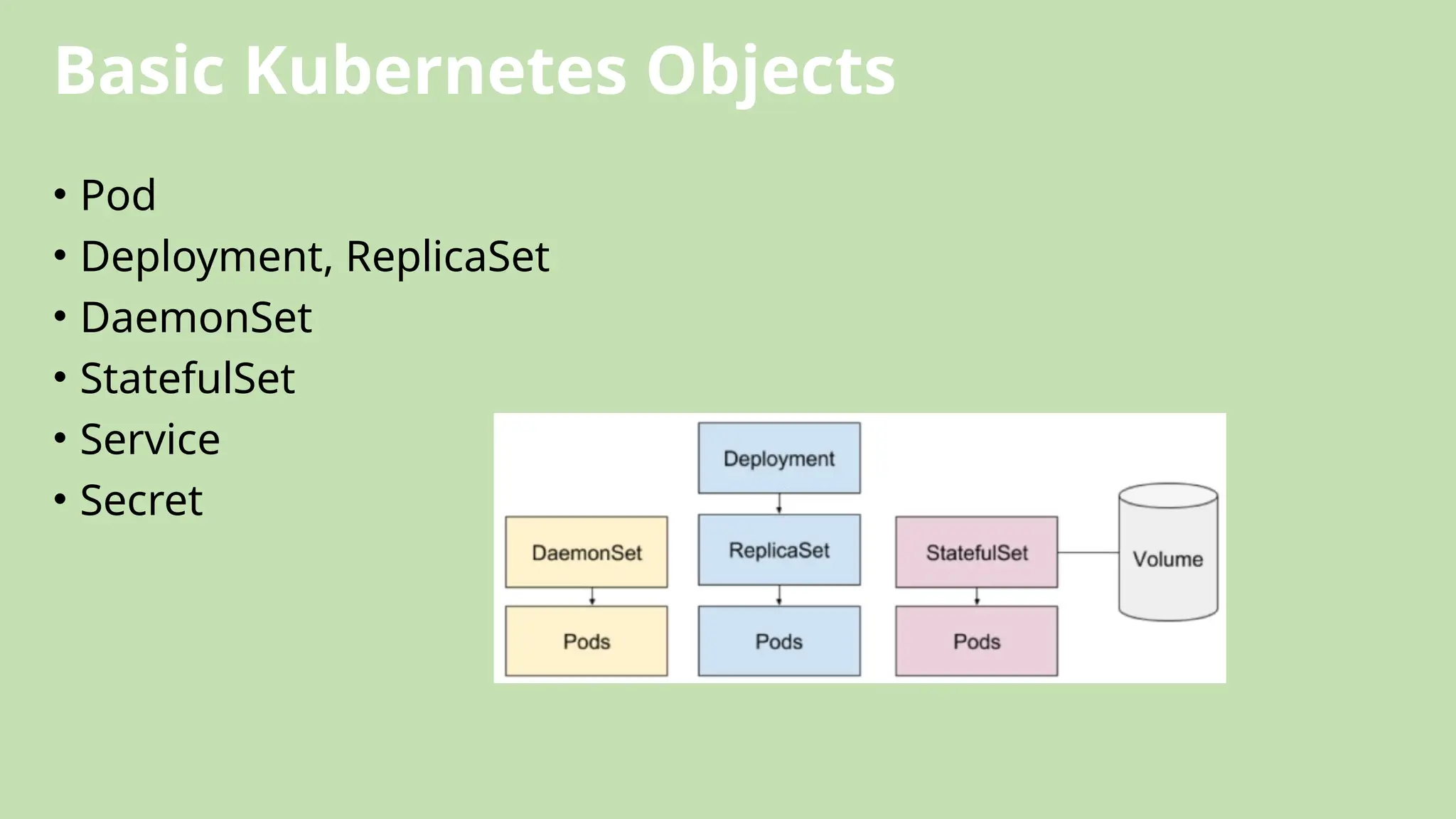

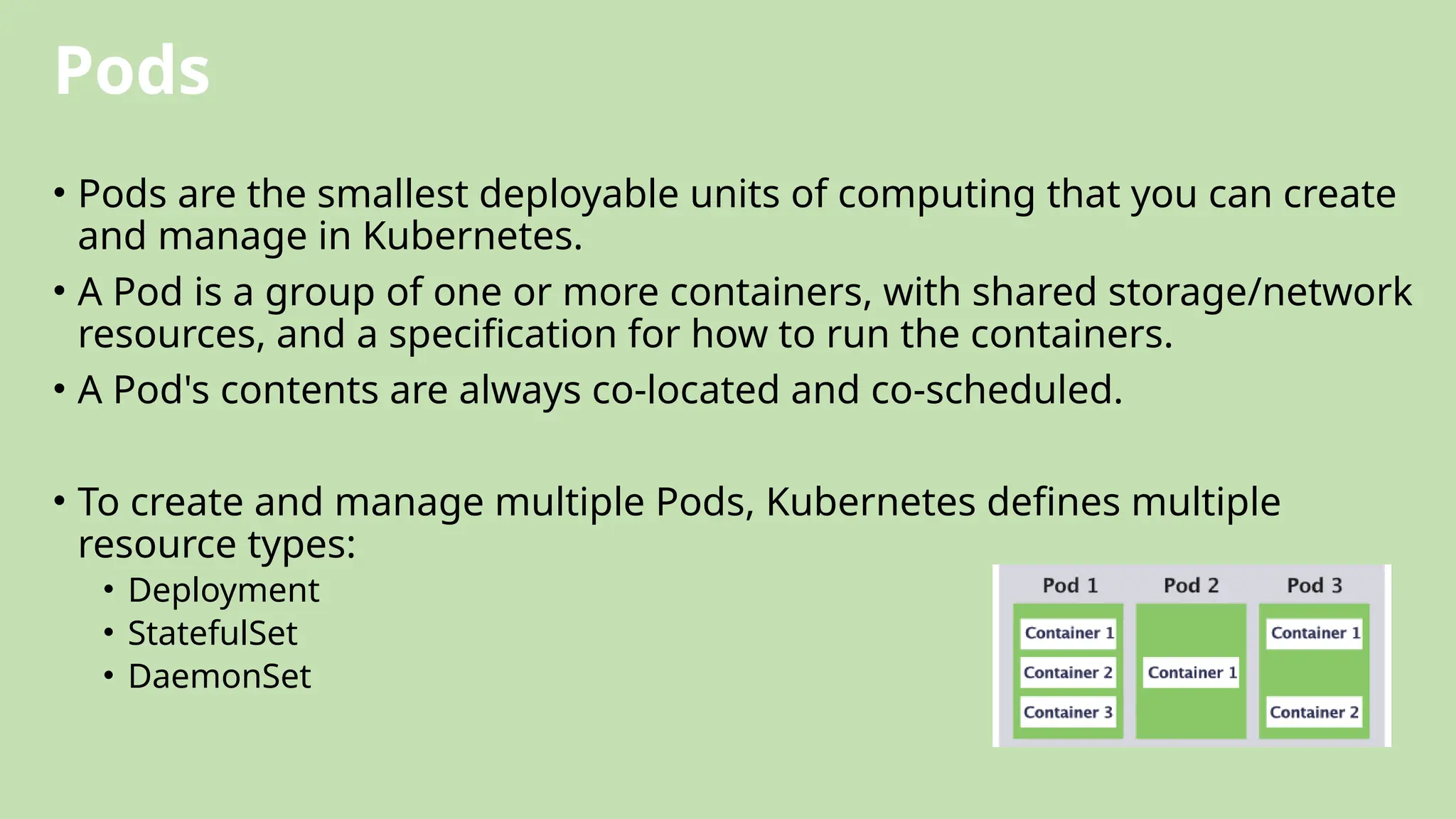

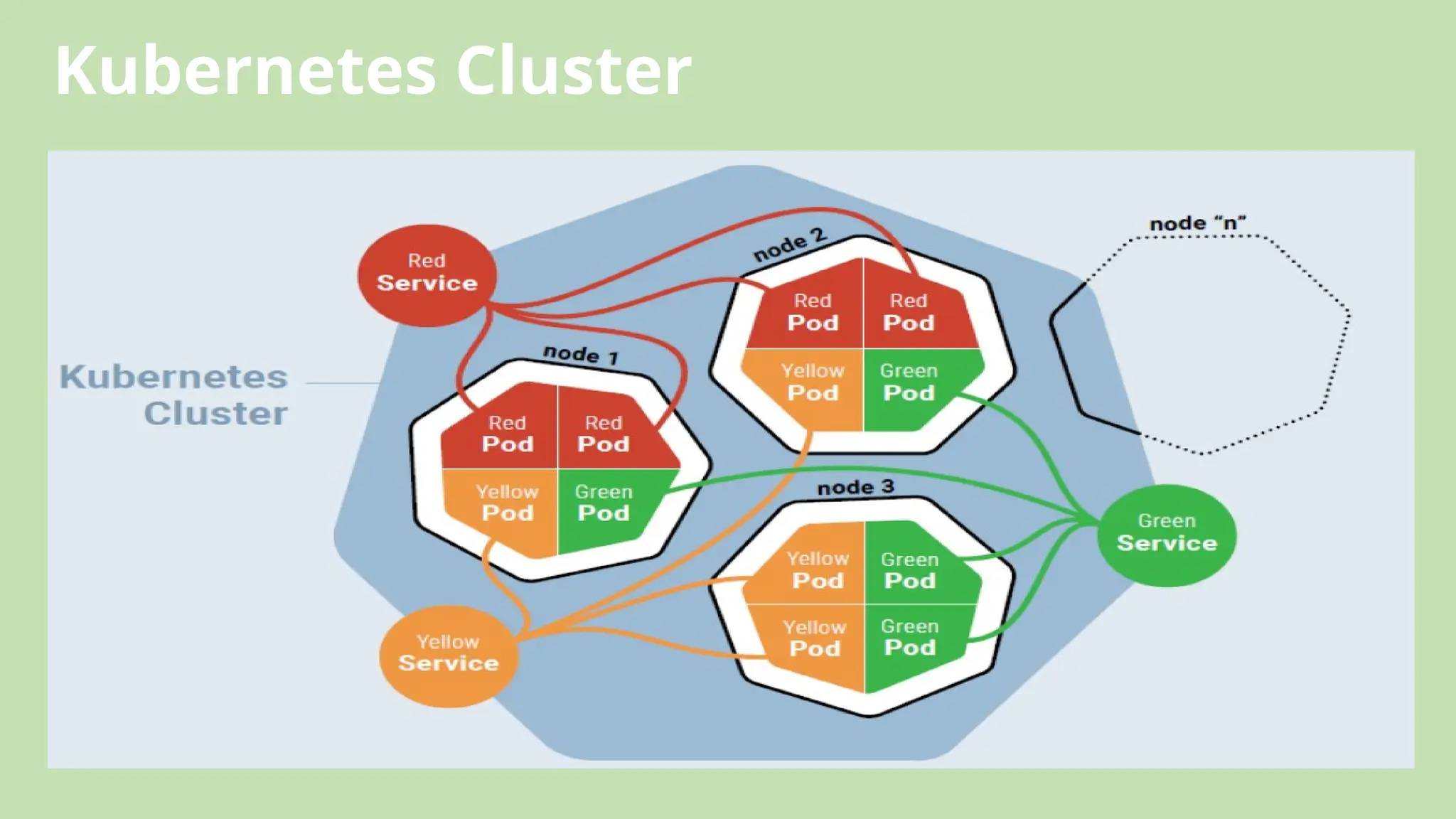

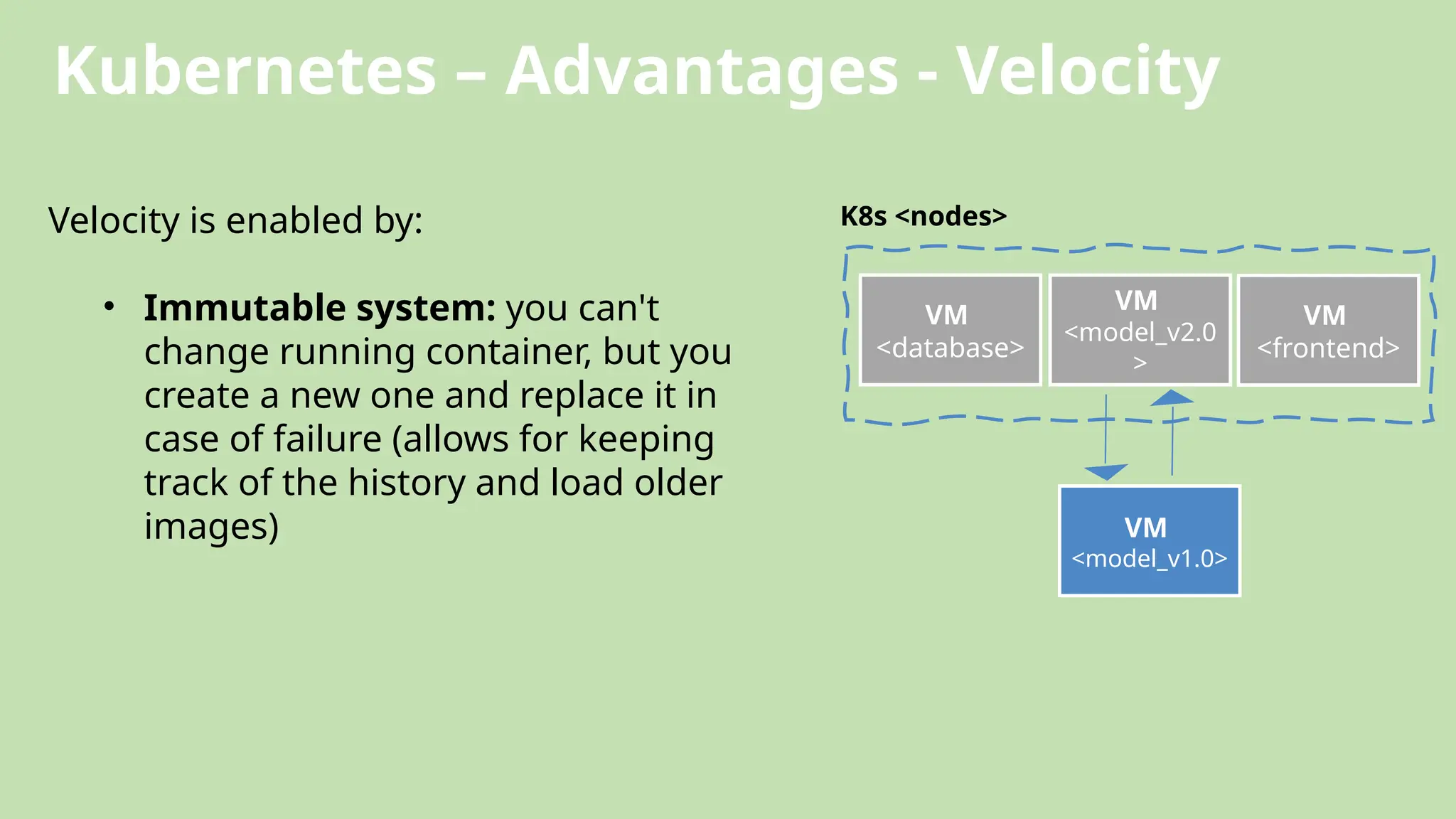

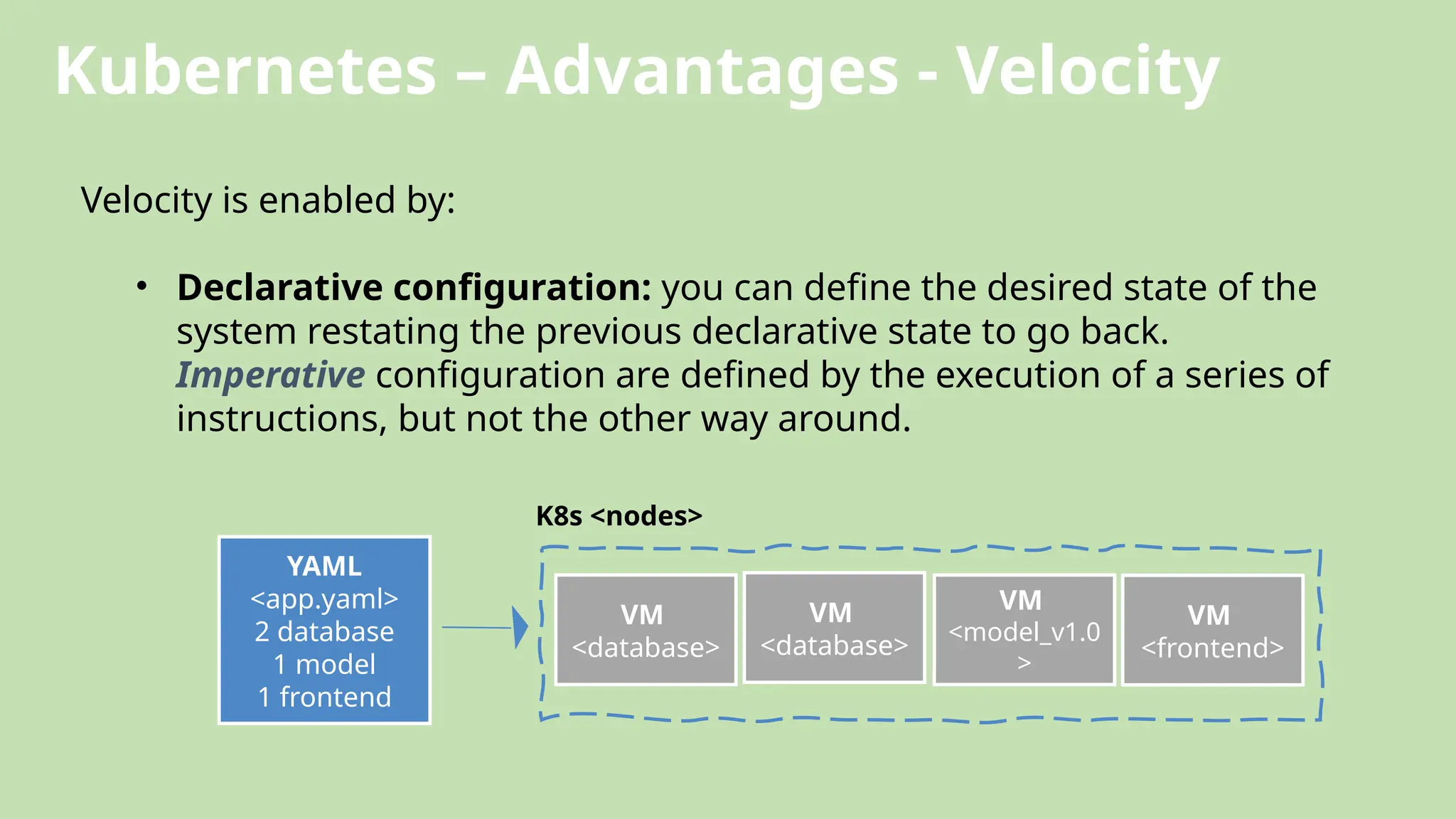

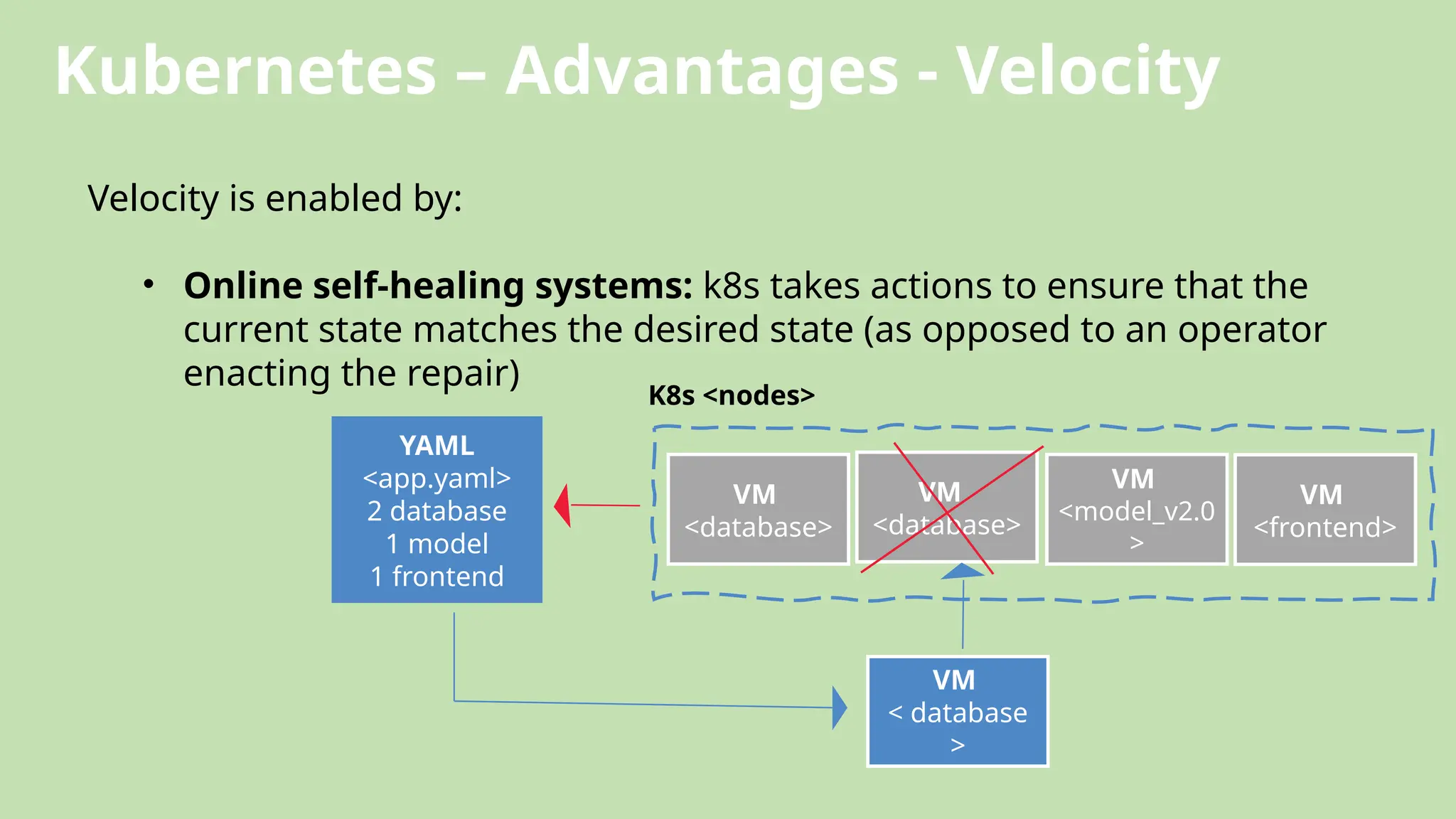

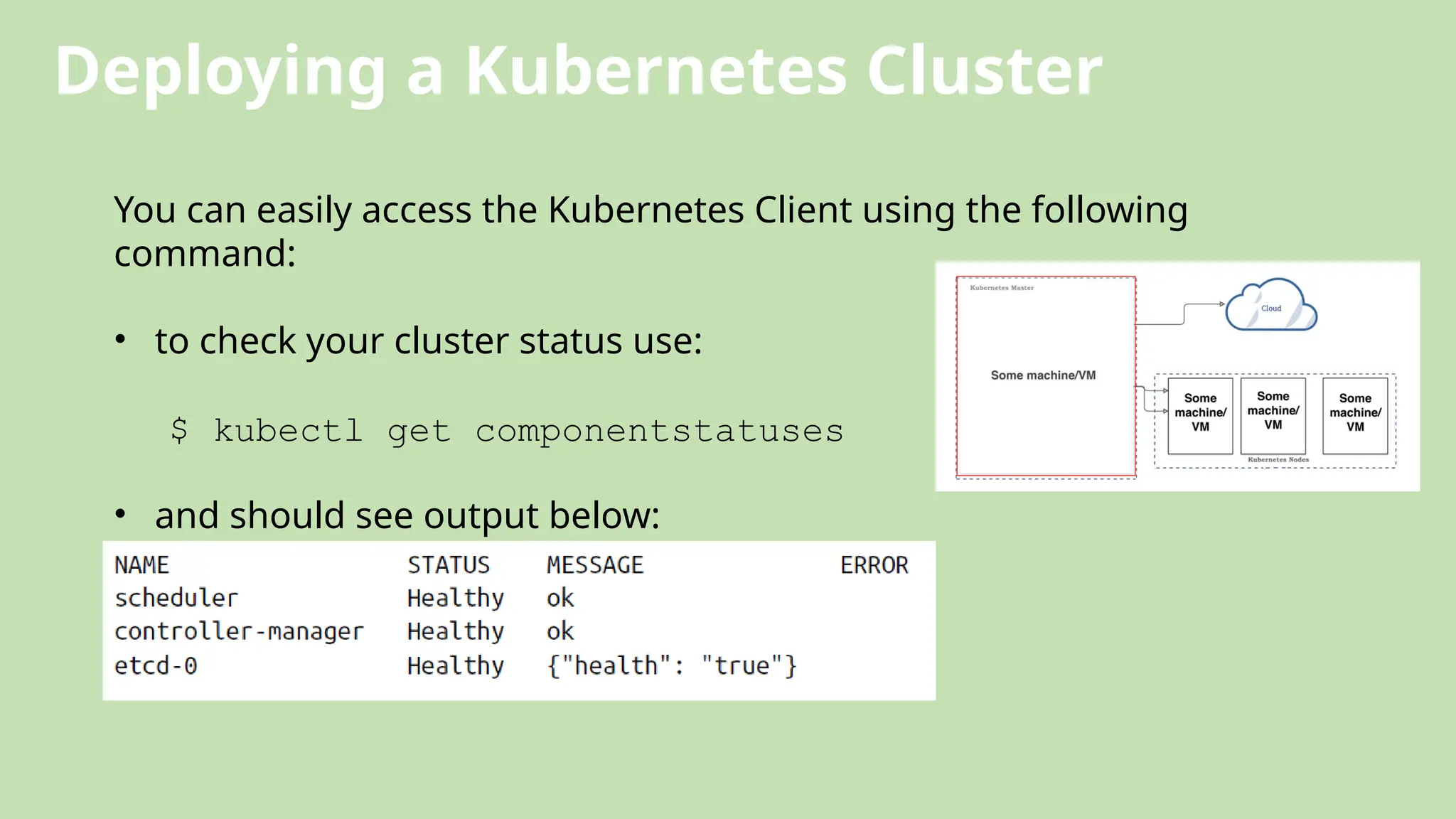

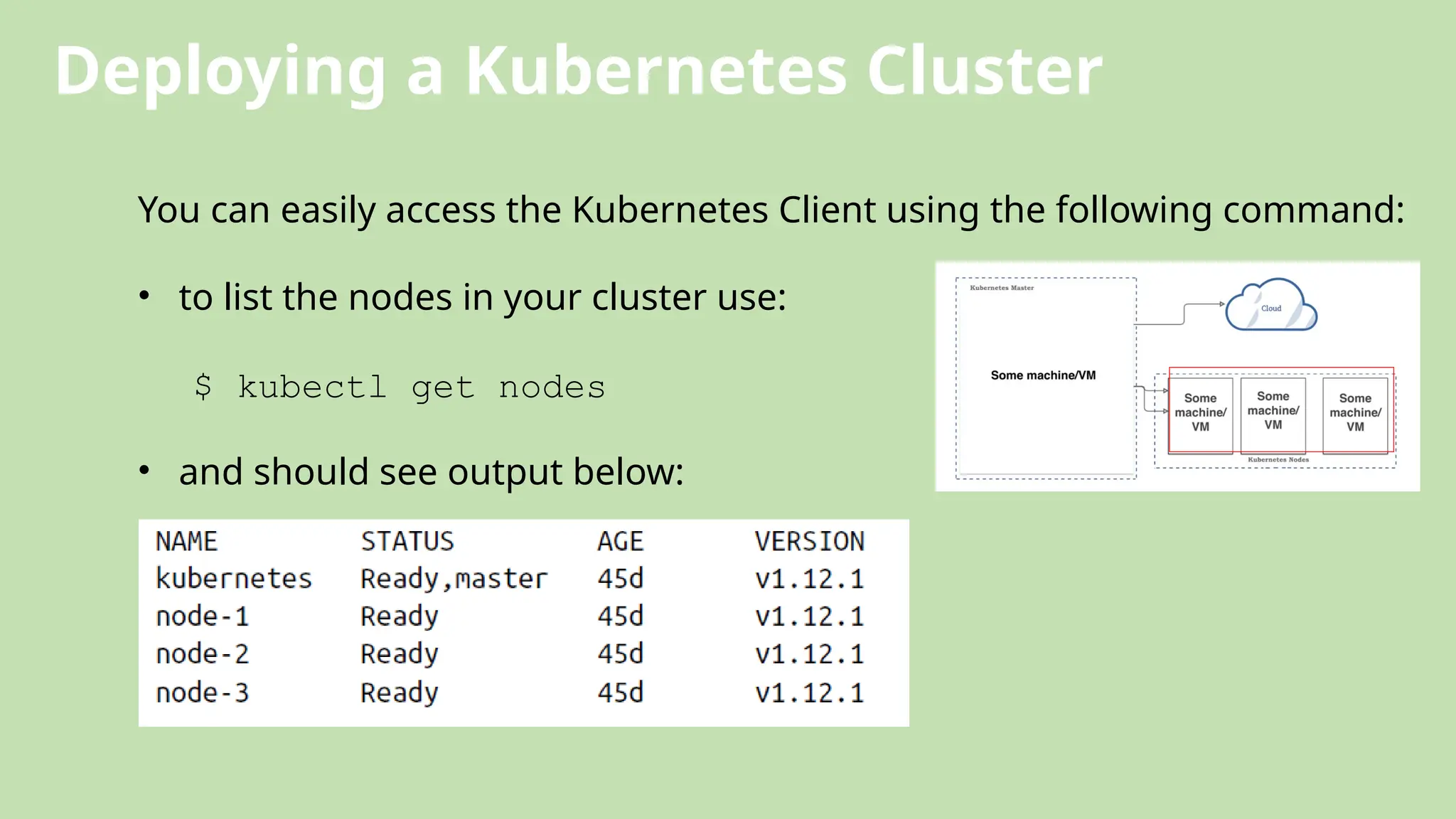

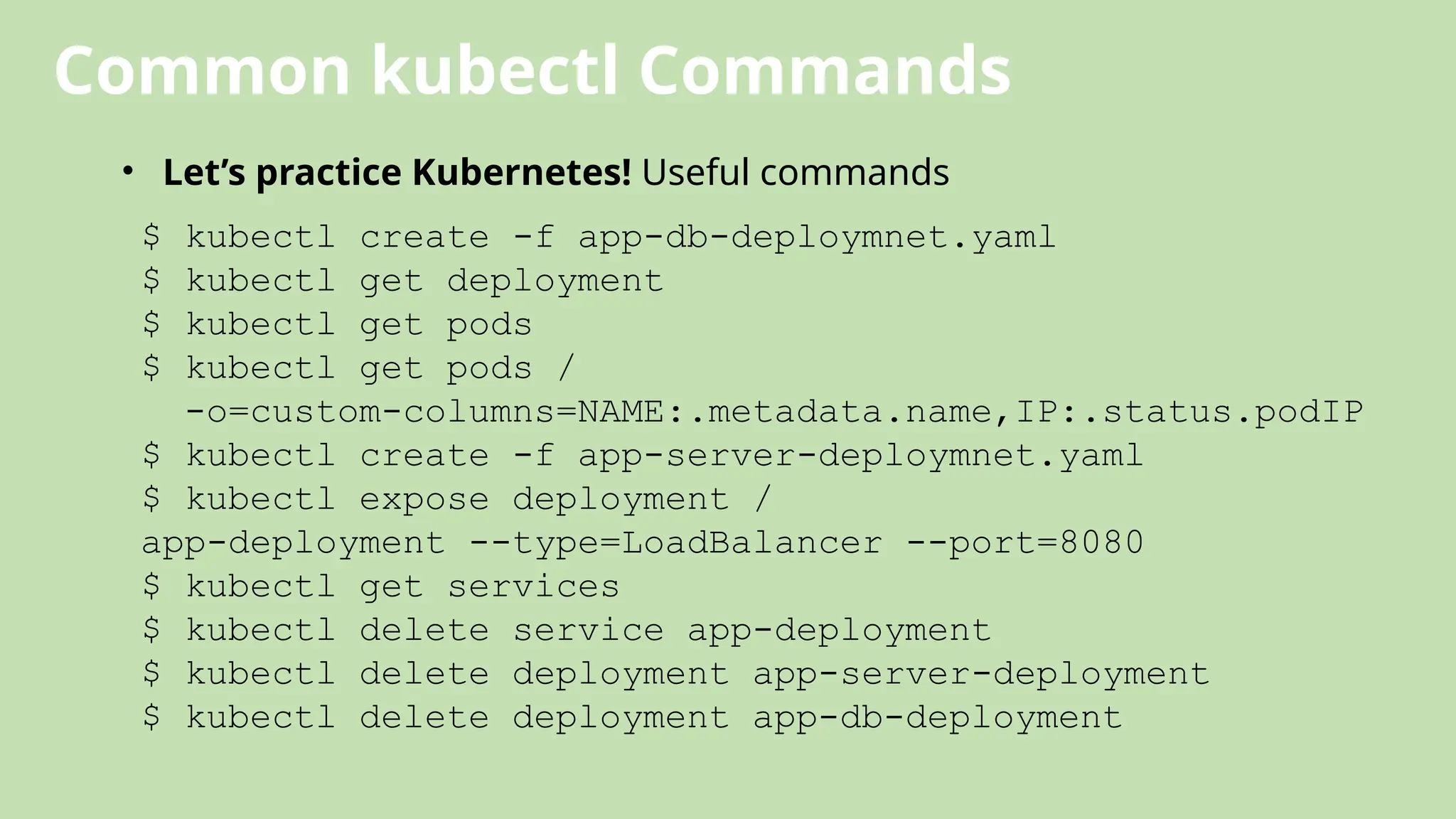

The document outlines the concept and necessity of container orchestration, emphasizing its role in managing the complexity of containerized applications, particularly when deploying microservices at scale. It discusses the advantages of using tools like Kubernetes to automate tasks such as provisioning, scaling, and networking, and presents case studies of successful Kubernetes adoption for Pokémon Go and at Booking.com. It also details the Kubernetes architecture, its components, and the features that improve operational efficiency and security.