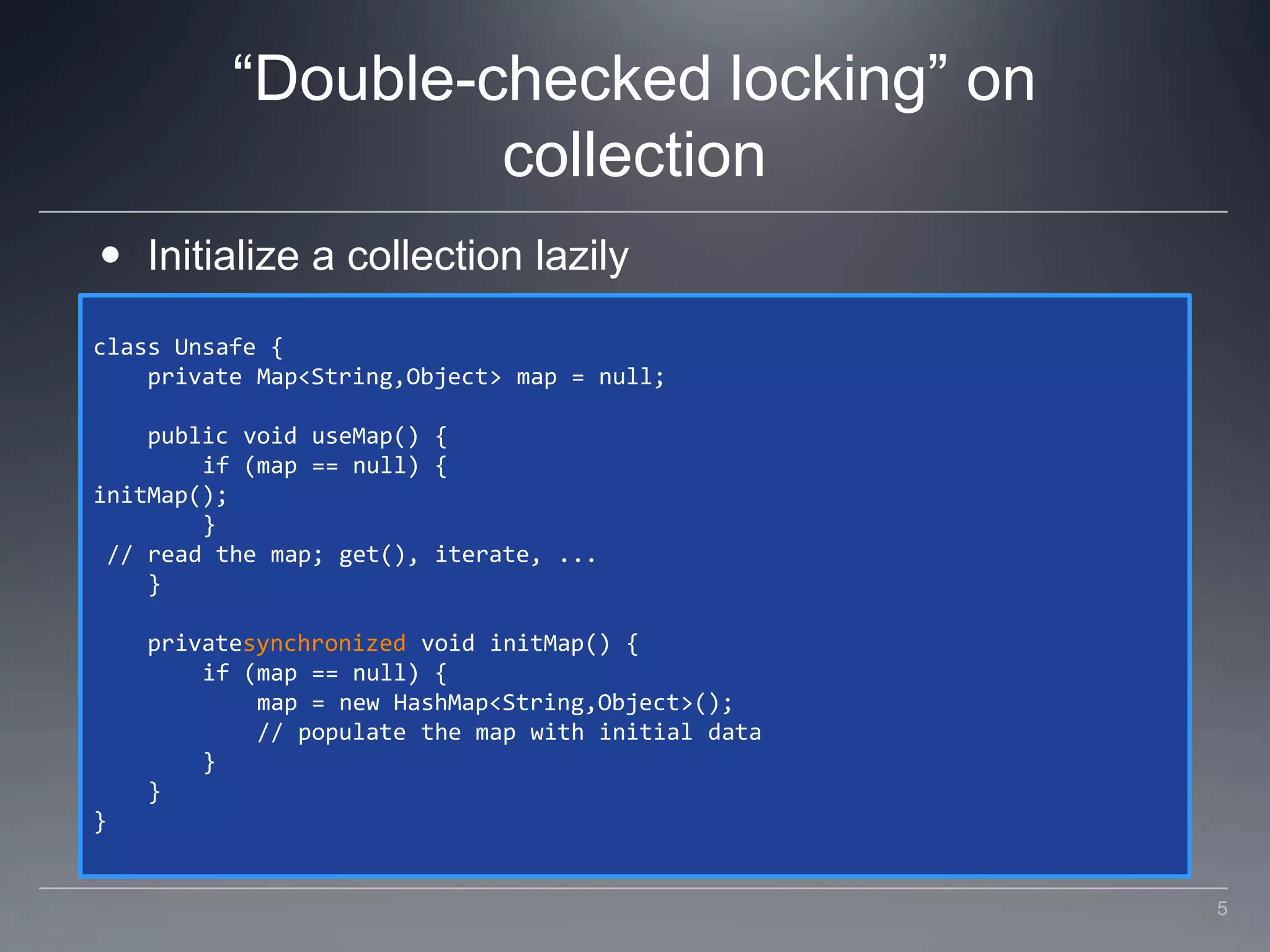

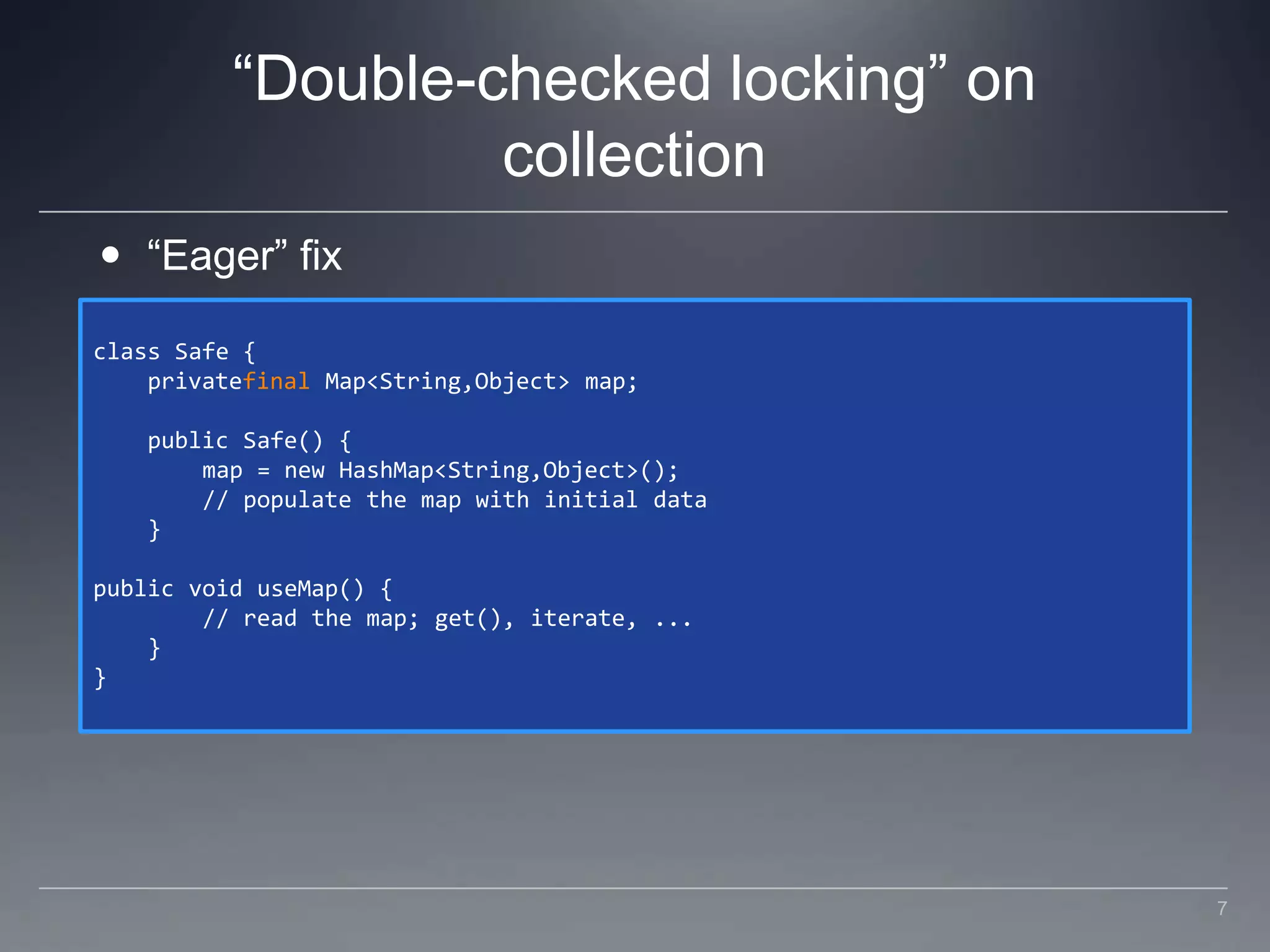

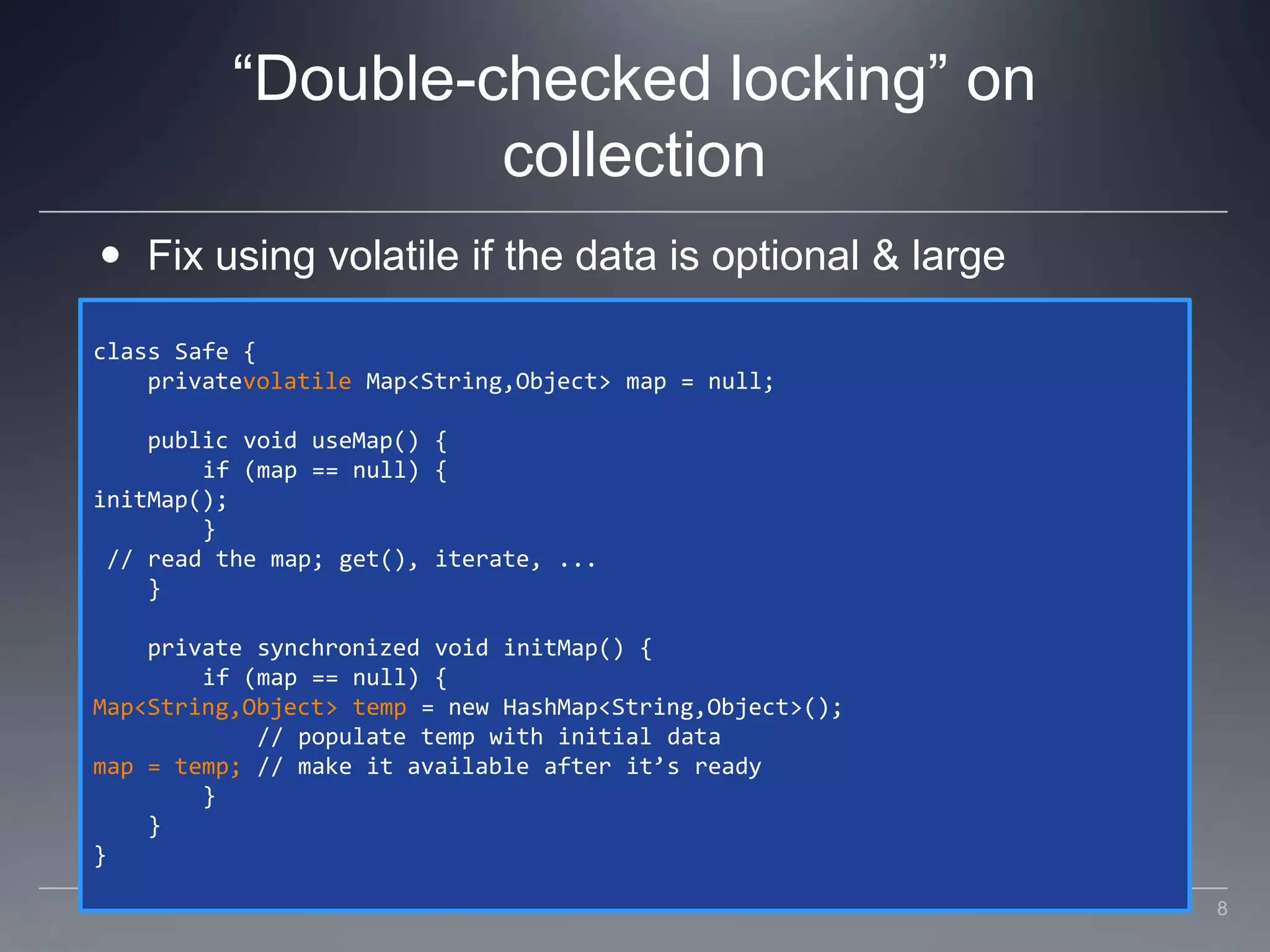

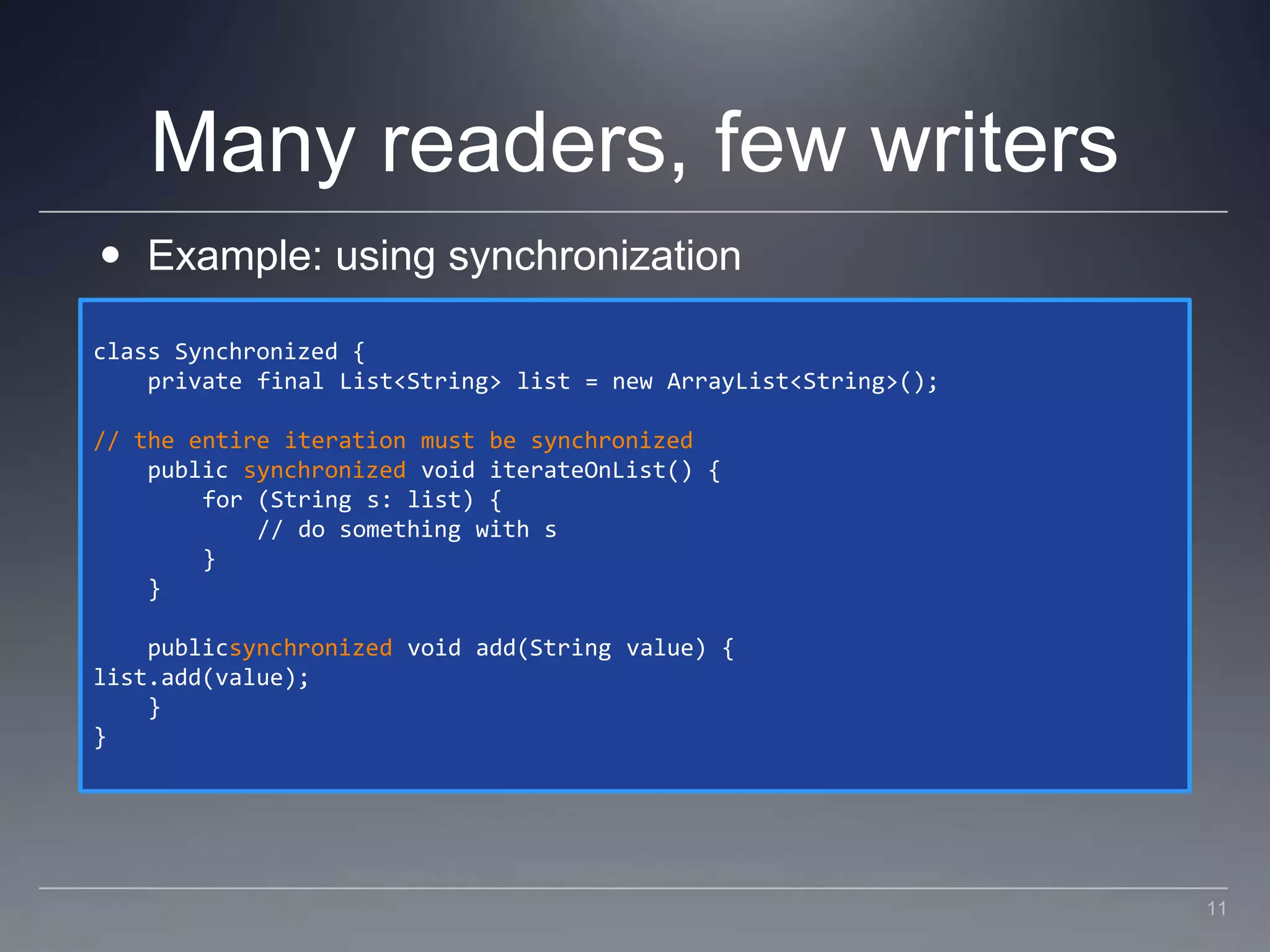

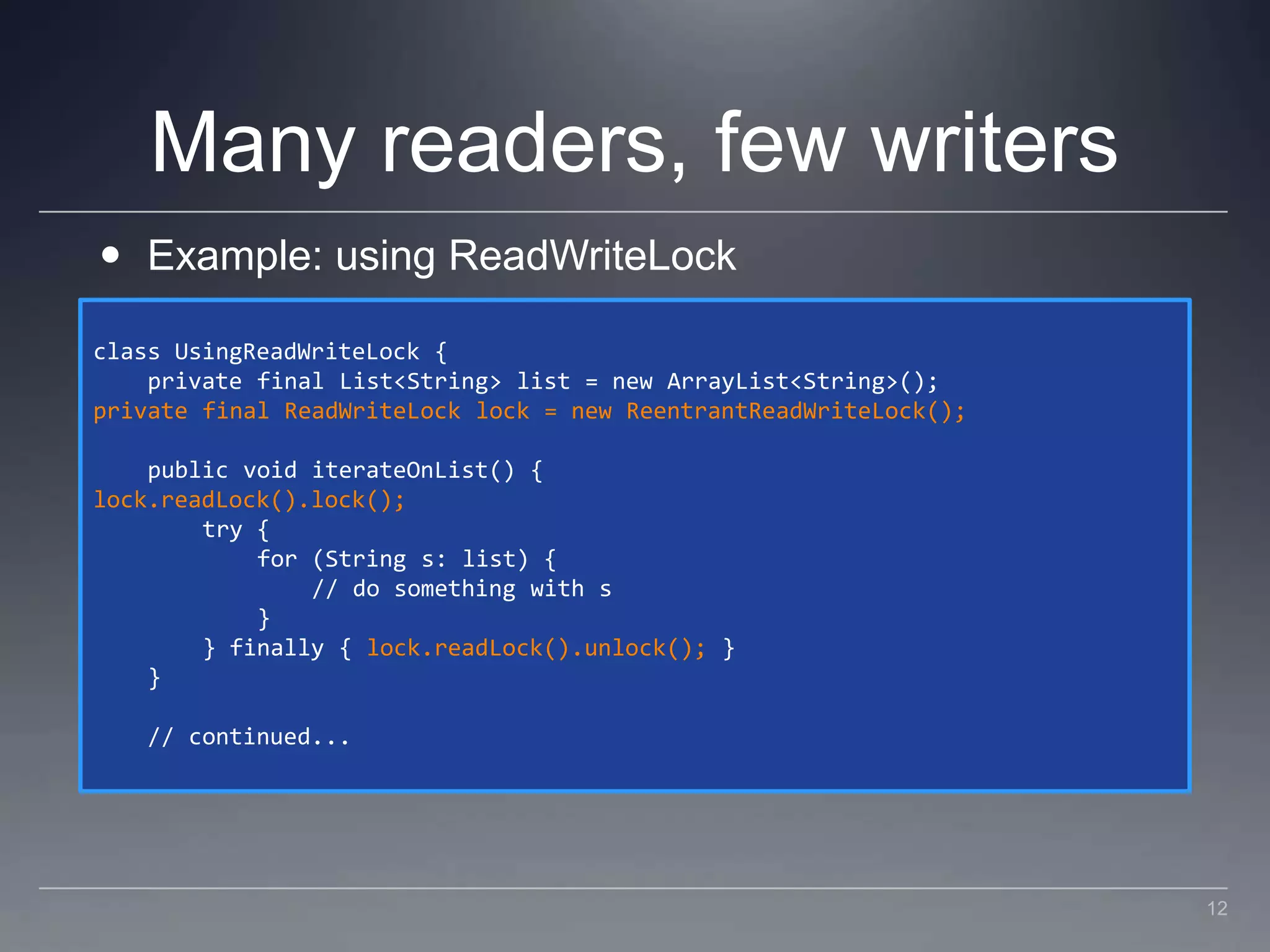

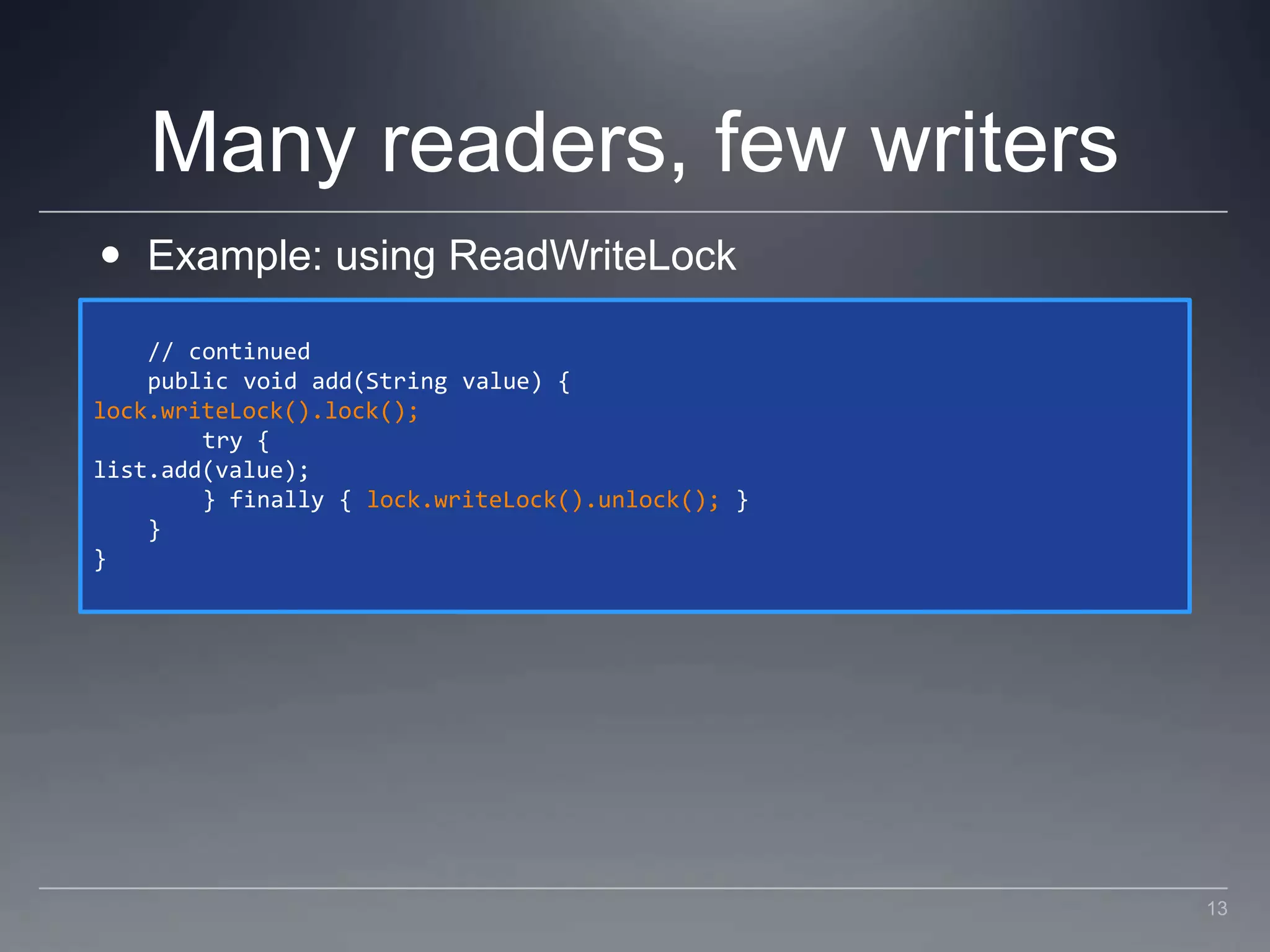

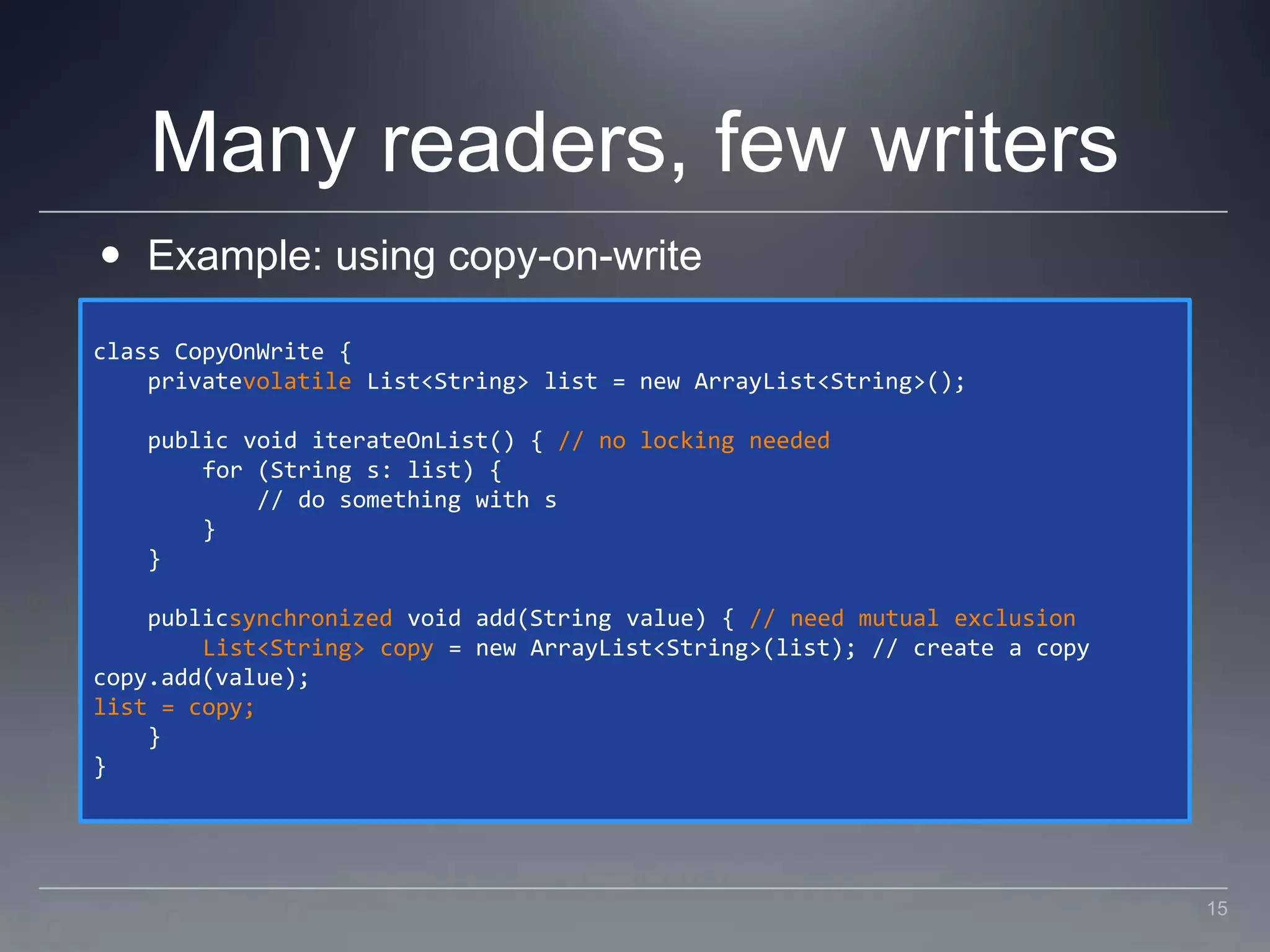

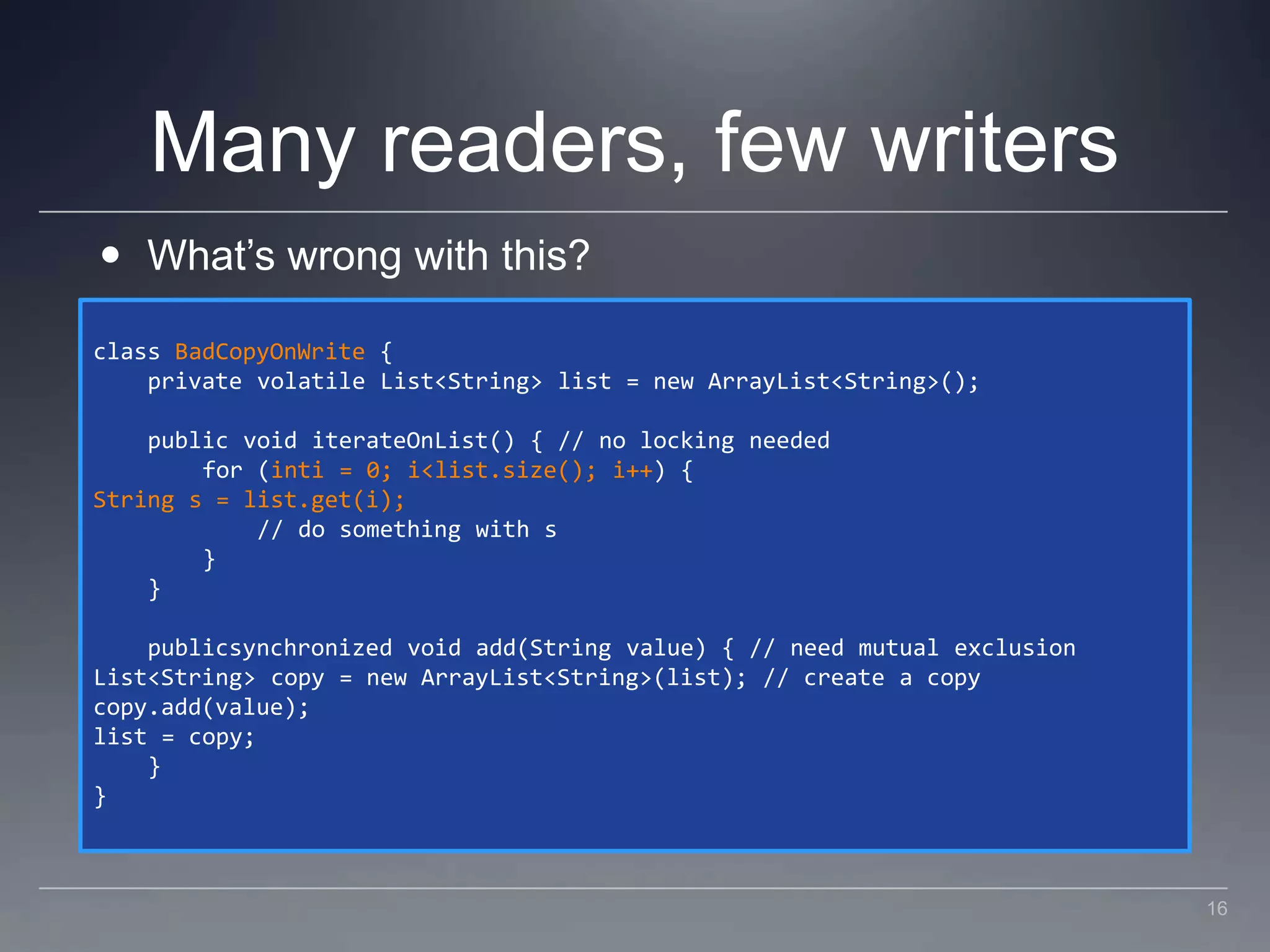

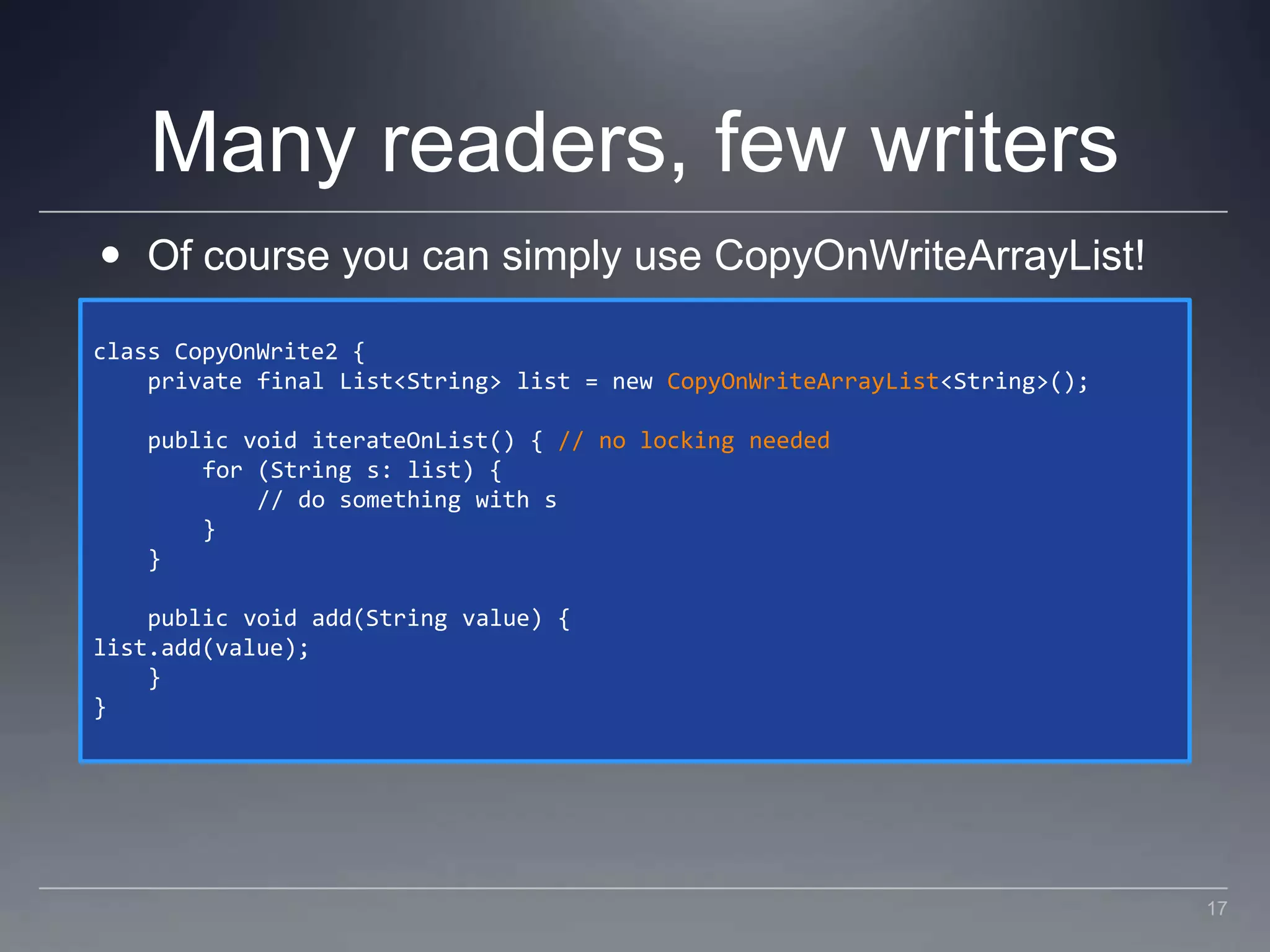

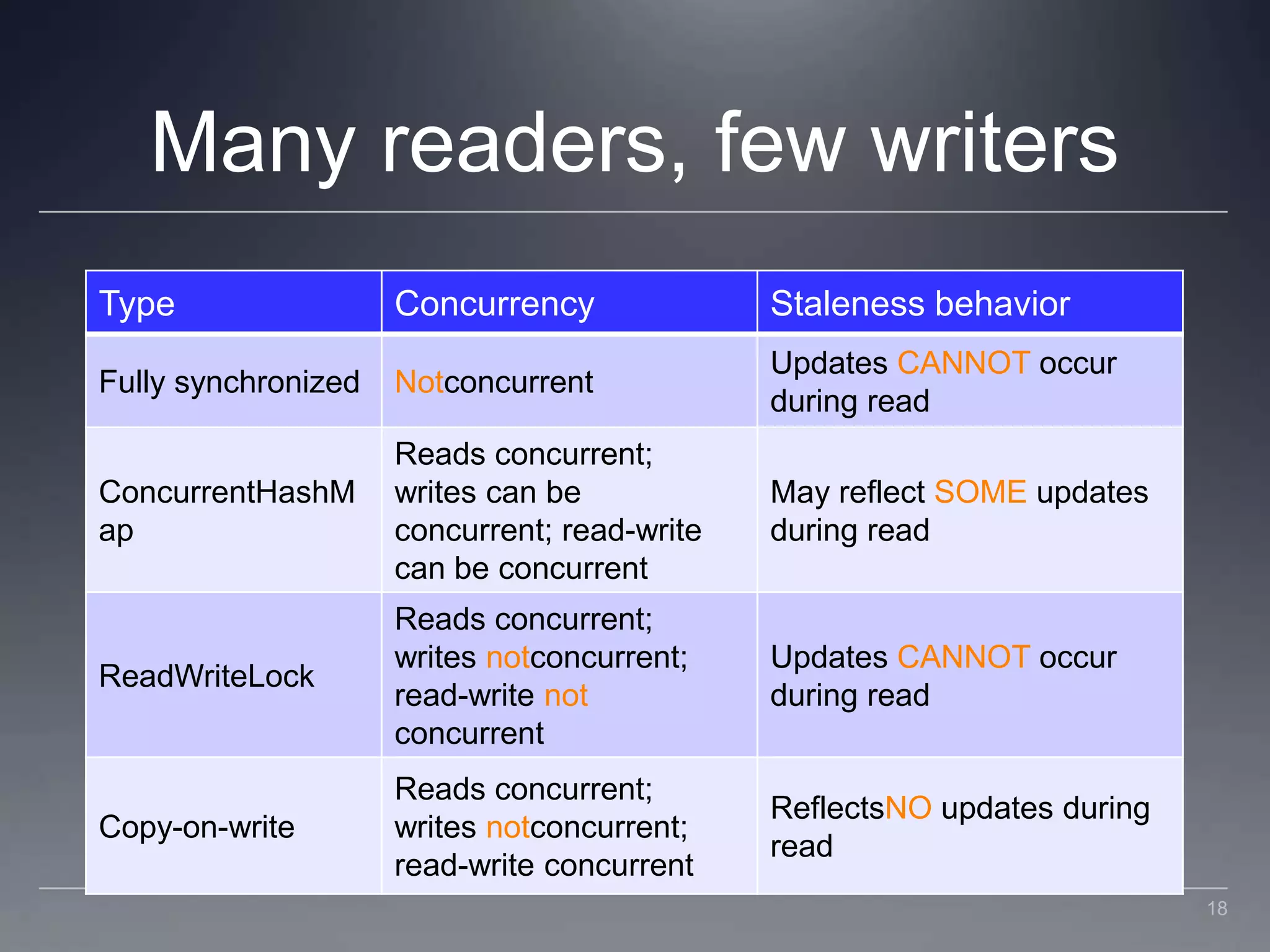

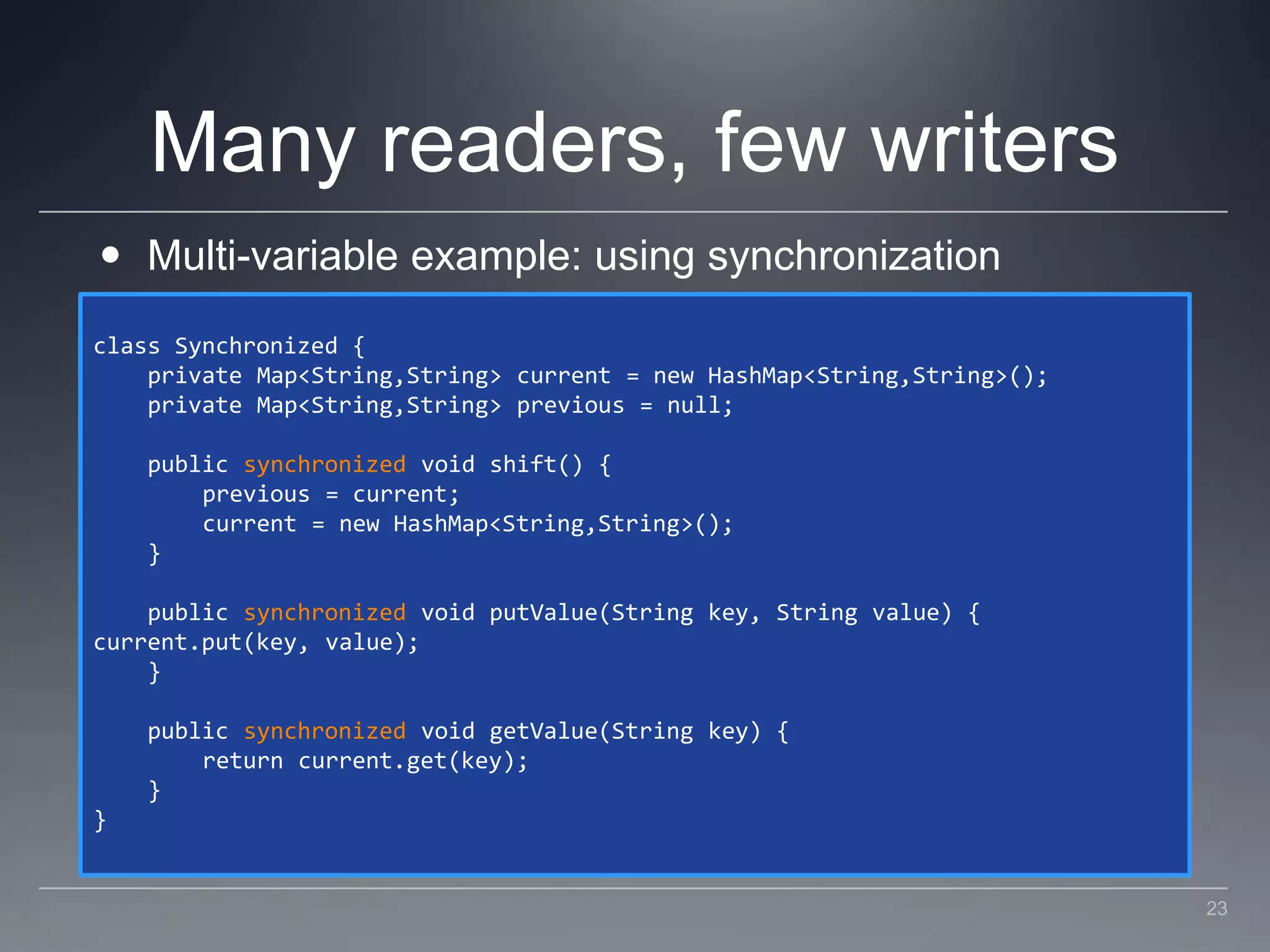

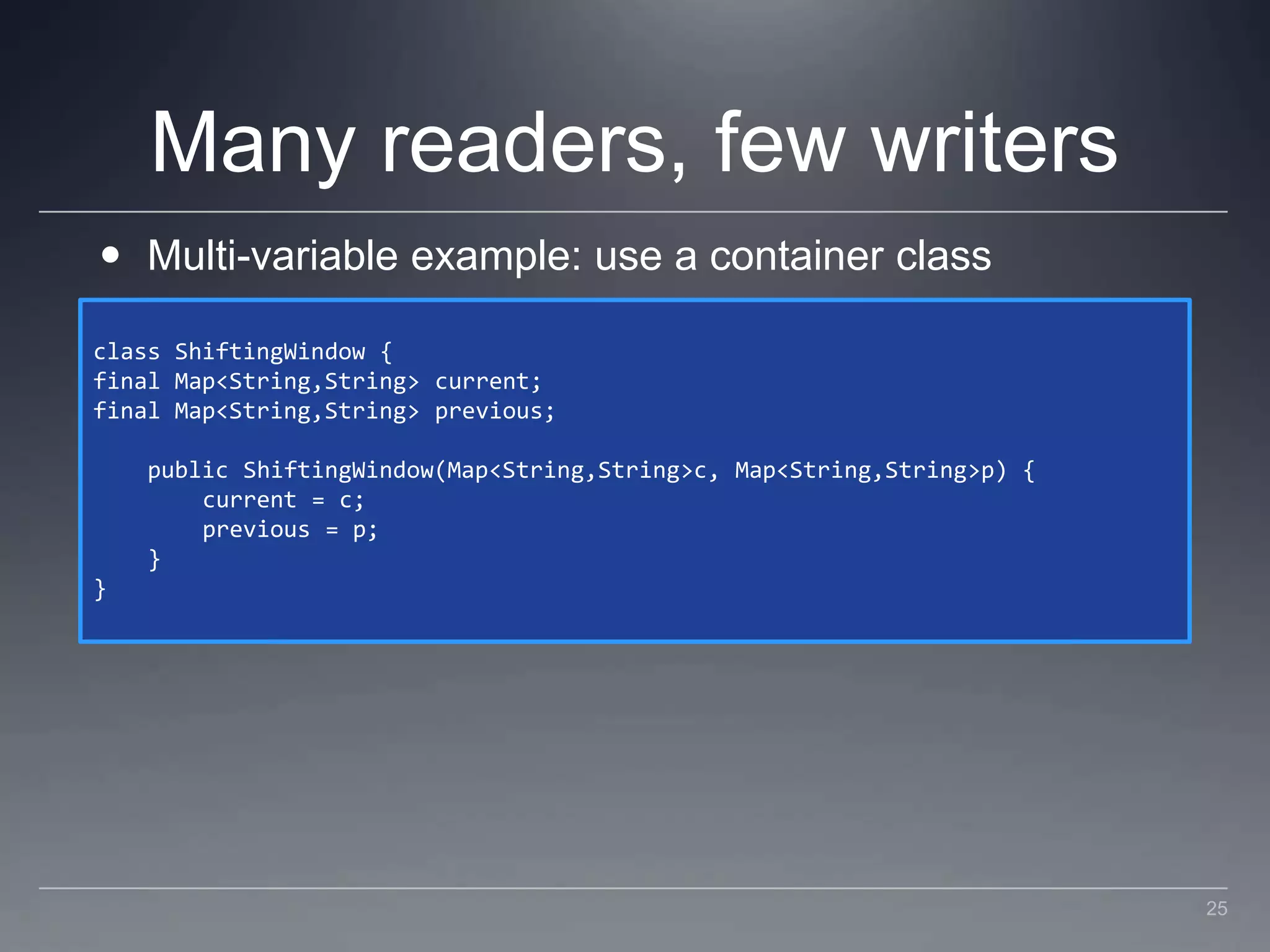

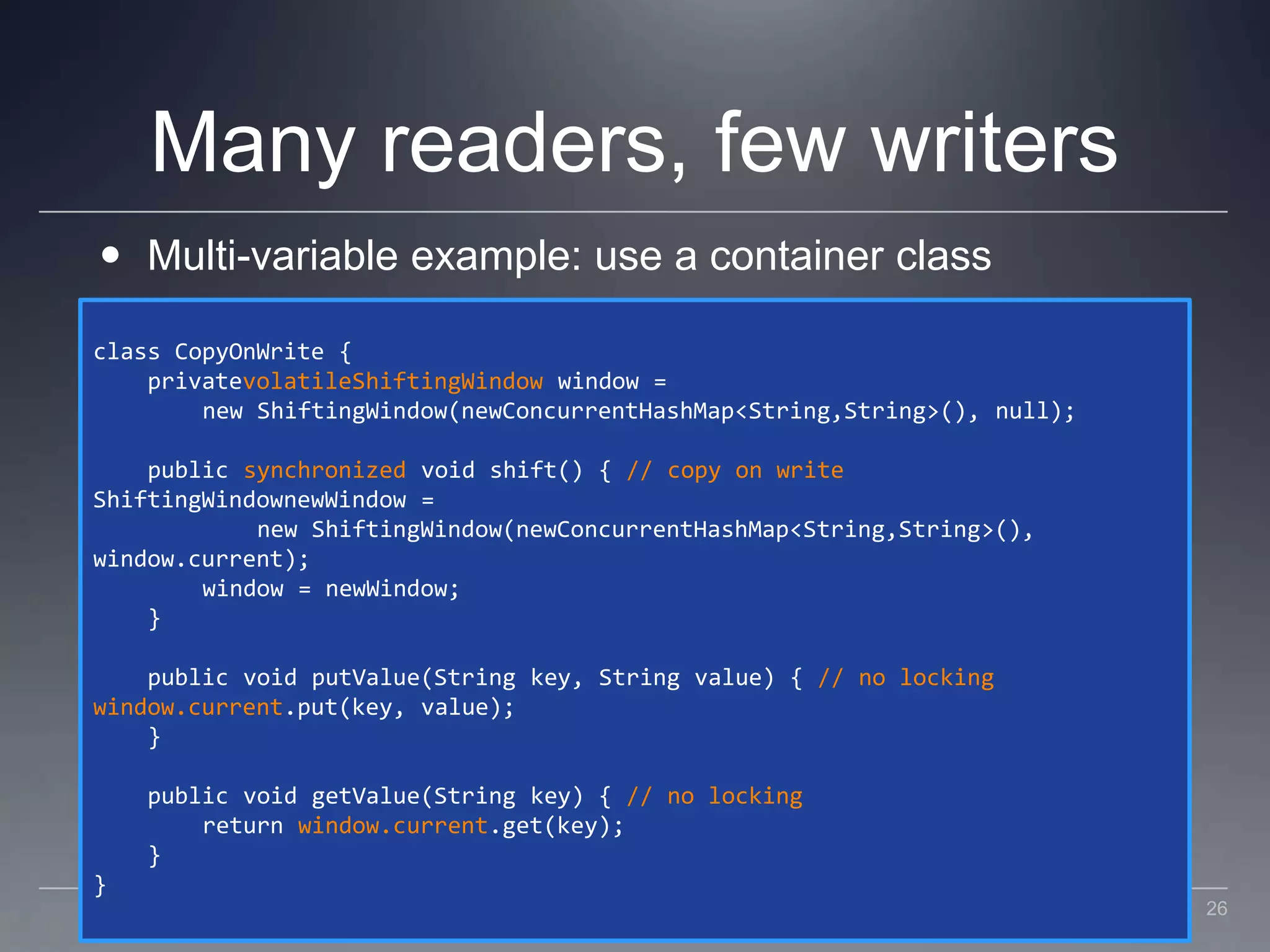

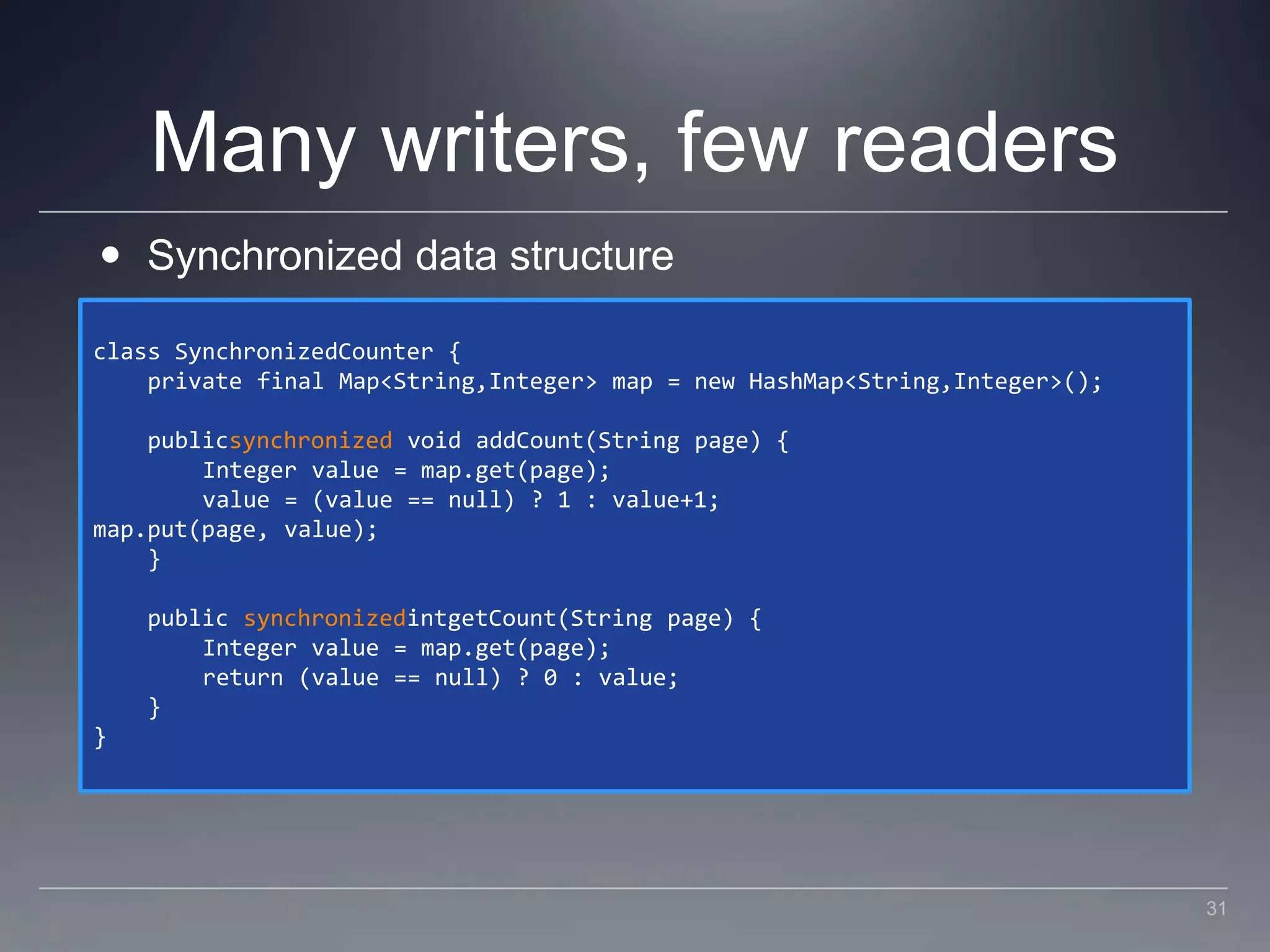

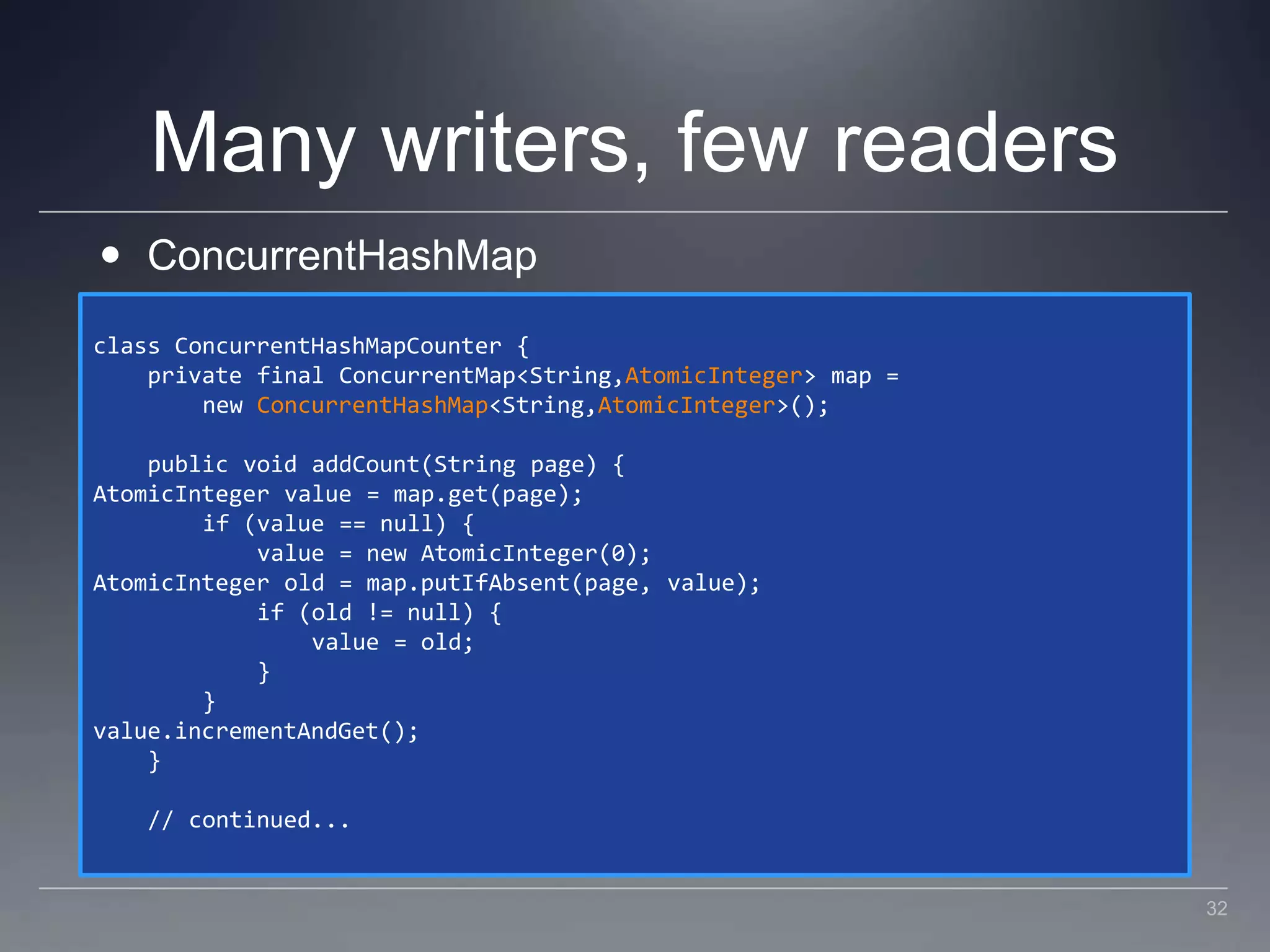

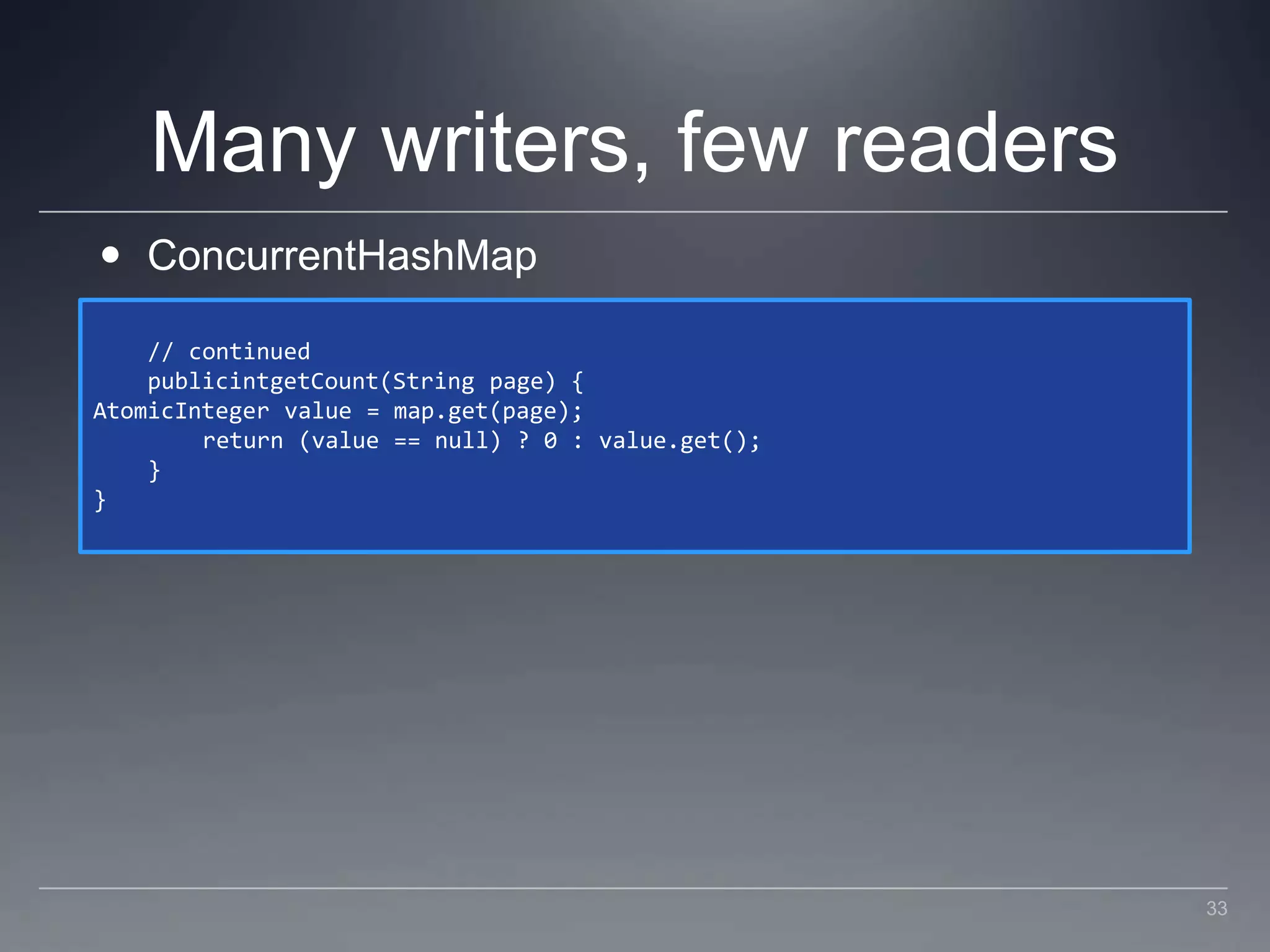

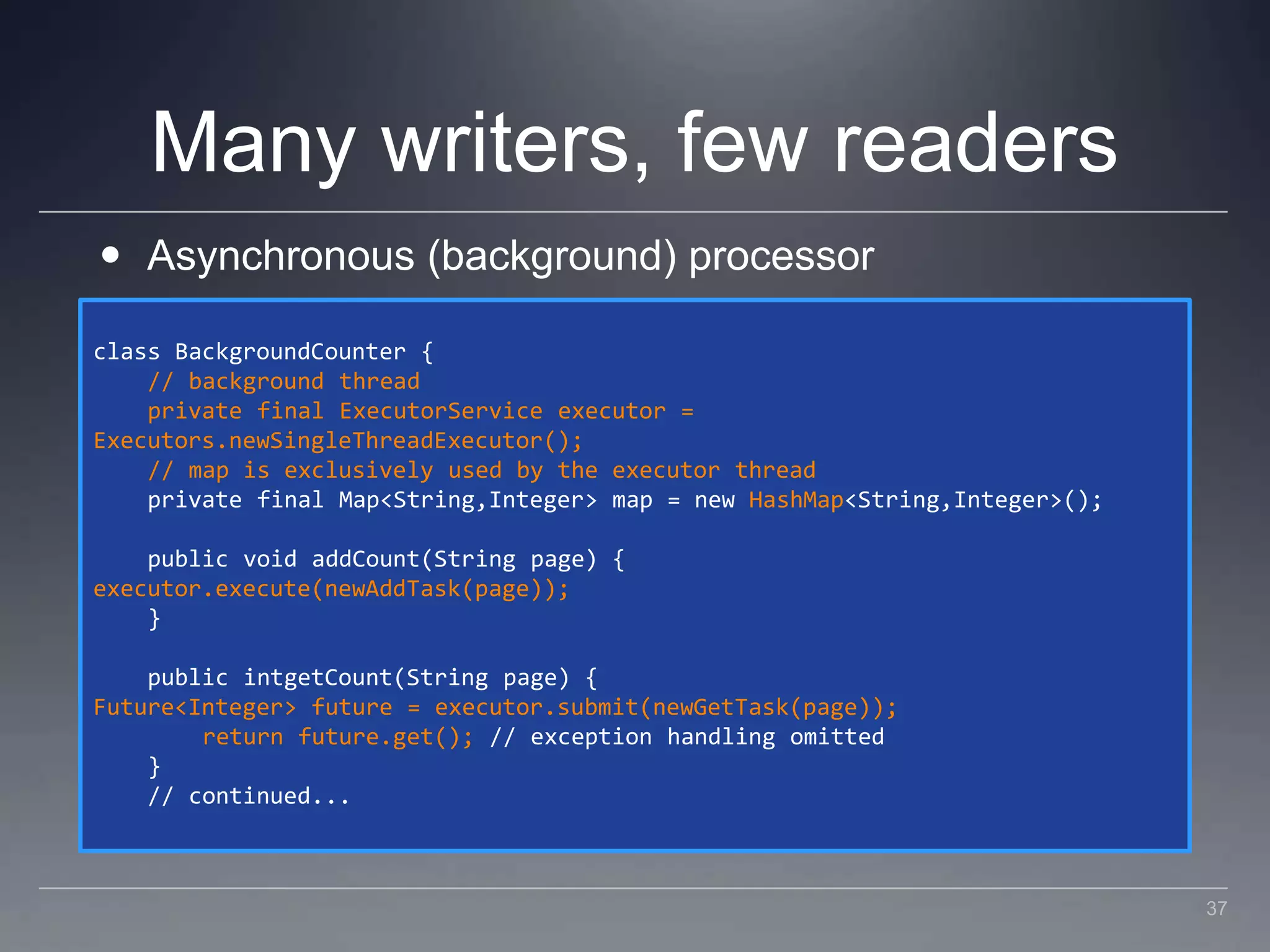

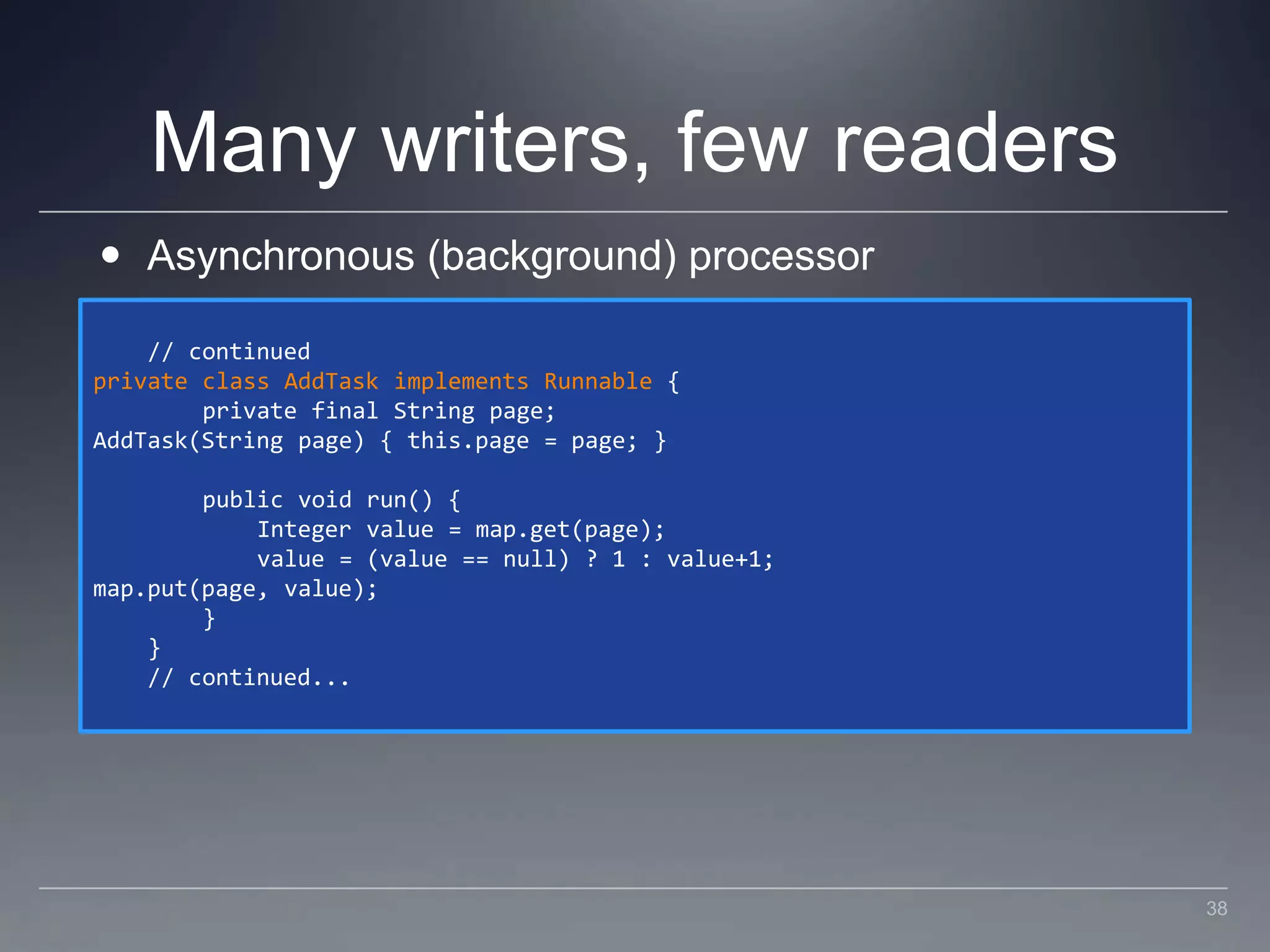

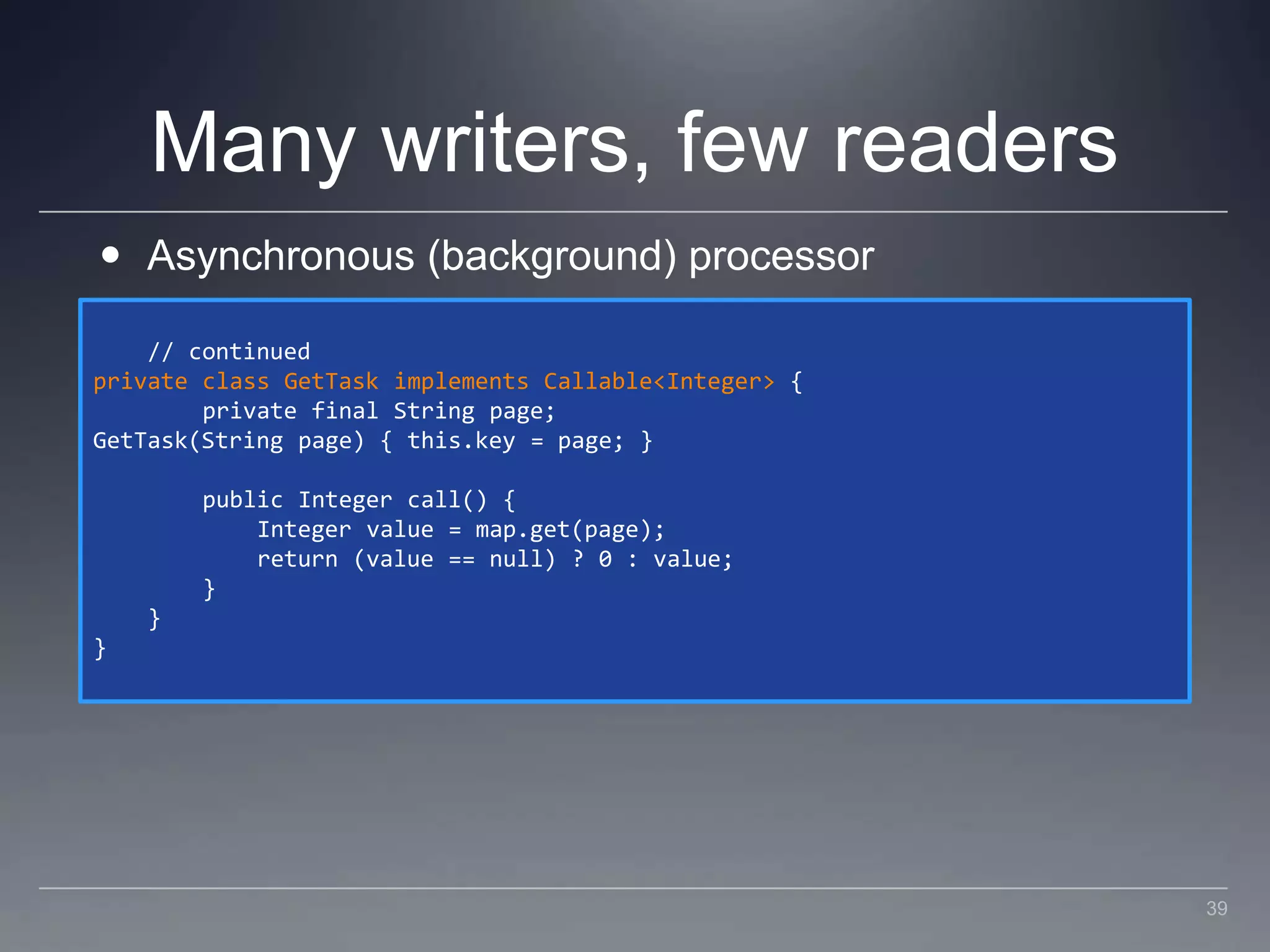

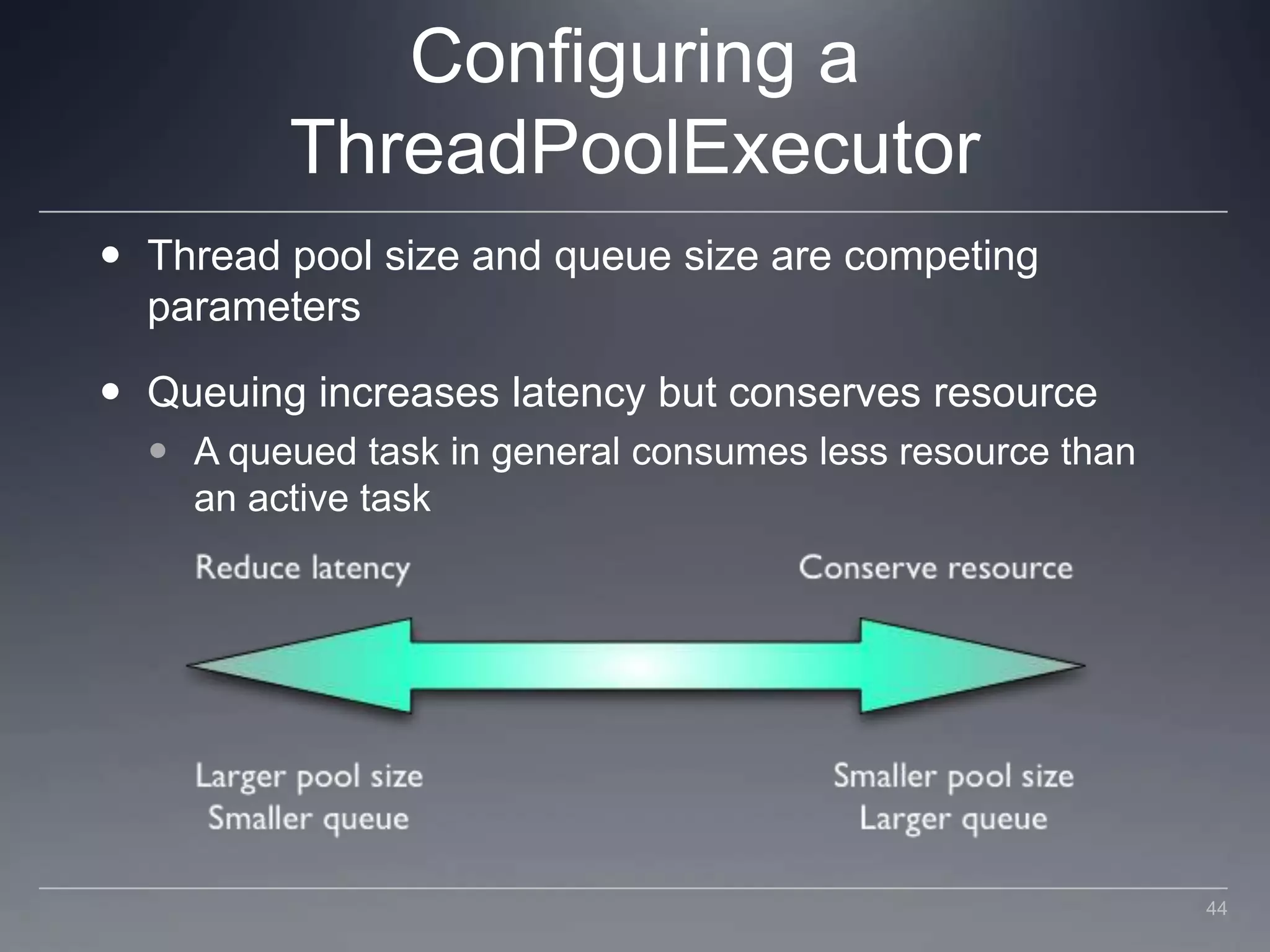

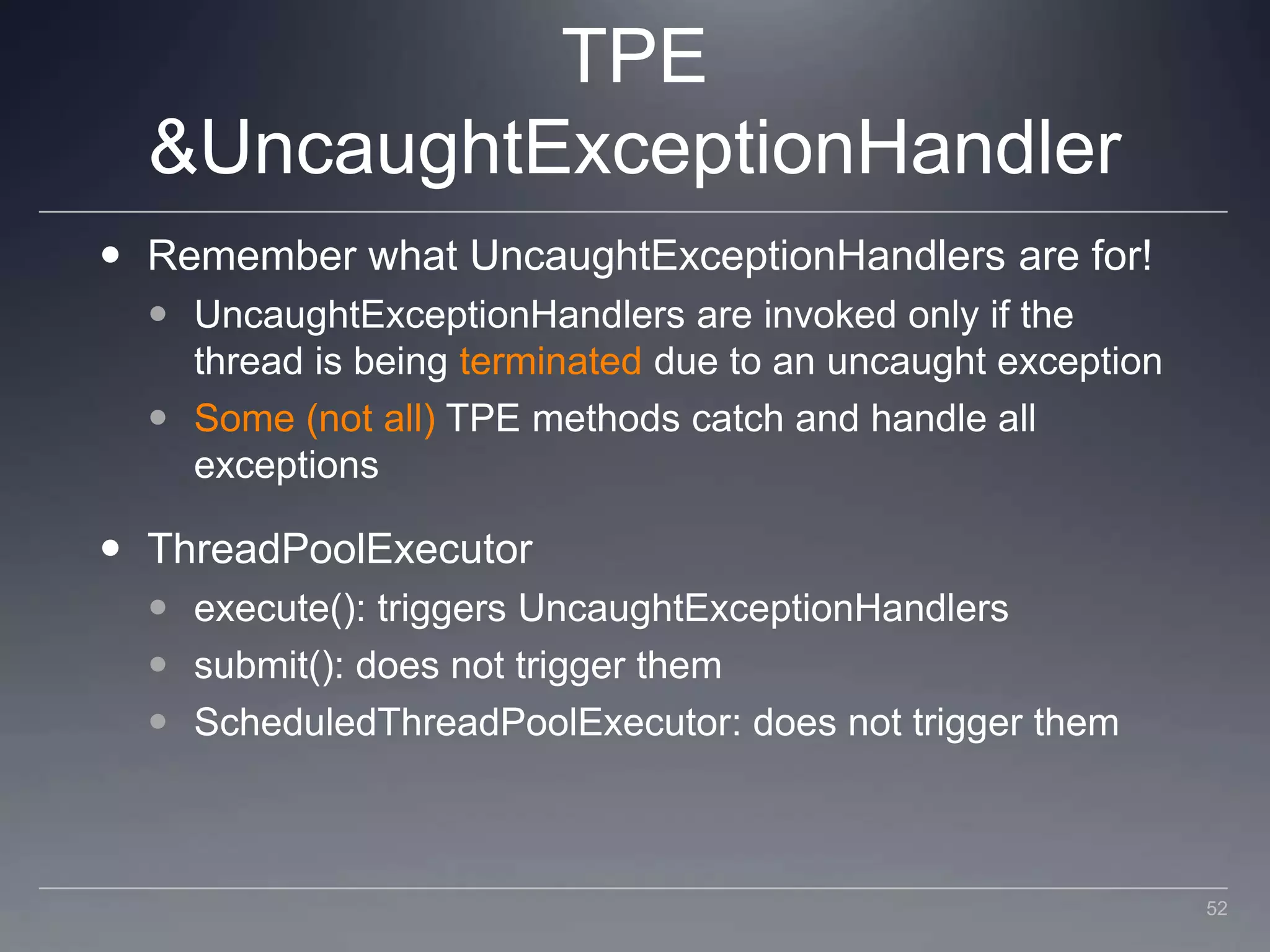

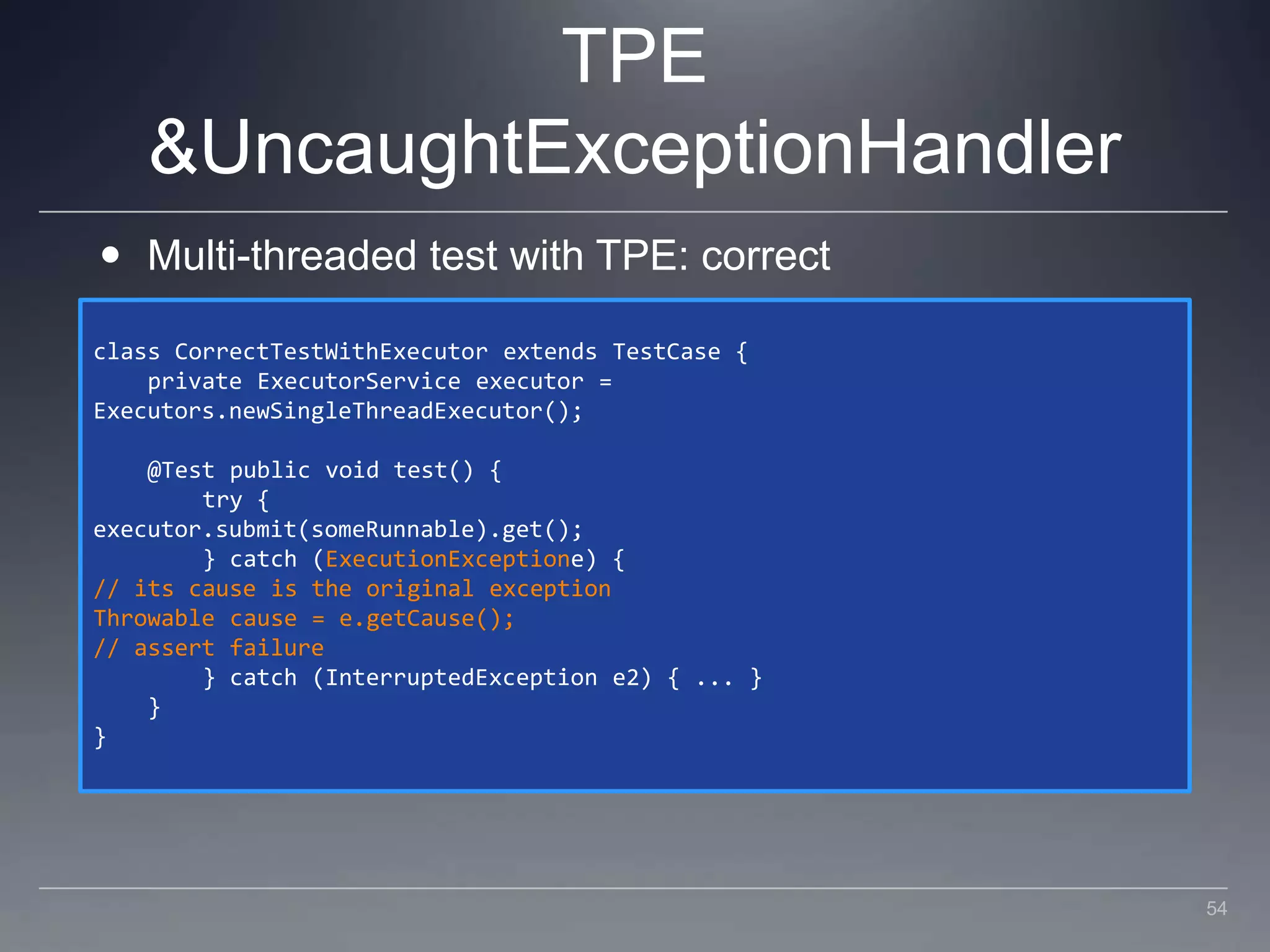

This document covers patterns and anti-patterns for practical concurrency. After an introduction and agenda, it examines the "double-checked locking" anti-pattern as applied to collections. It then presents patterns for workloads with many readers and few writers (synchronized data structures, concurrent collections, read-write locks, and copy-on-write) and for workloads with many writers and few readers (concurrent hash maps and asynchronous processing). It closes with guidance on configuring thread pool executors.
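As a rough illustration of a few of the patterns named in this summary, the Java sketch below shows one way they might look in practice. The slides' own code is not reproduced here, so this assumes Java as the language, and every name (`ConcurrencySketch`, `lookup`, `recordHit`, `addWhileIterating`, `newBoundedPool`, `runDemo`) as well as the pool sizes and queue capacity are hypothetical choices made for the example, not the presenter's.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.concurrent.atomic.LongAdder;

// Hypothetical sketch; names and sizes are illustrative assumptions.
class ConcurrencySketch {

    // Alternative to "check, lock, check again, put" on a plain HashMap:
    // computeIfAbsent applies the loader at most once per key, atomically.
    private static final Map<String, String> CACHE = new ConcurrentHashMap<>();
    static final AtomicInteger LOADS = new AtomicInteger();

    static String lookup(String key) {
        return CACHE.computeIfAbsent(key, k -> {
            LOADS.incrementAndGet();      // counts how often the loader runs
            return "value-for-" + k;
        });
    }

    // Many writers, few readers: one LongAdder per key keeps contention low.
    static final ConcurrentHashMap<String, LongAdder> HITS = new ConcurrentHashMap<>();

    static void recordHit(String page) {
        HITS.computeIfAbsent(page, p -> new LongAdder()).increment();
    }

    // Many readers, few writers: copy-on-write iteration works on a snapshot,
    // so adding during iteration does not throw ConcurrentModificationException.
    static int addWhileIterating() {
        List<String> listeners = new CopyOnWriteArrayList<>(Arrays.asList("a", "b"));
        int seen = 0;
        for (String name : listeners) {
            listeners.add(name + "-copy"); // each write copies the backing array
            seen++;
        }
        return seen;                       // only the snapshot's elements were visited
    }

    // Explicitly configured pool: bounded queue plus back-pressure on overflow.
    static ThreadPoolExecutor newBoundedPool() {
        return new ThreadPoolExecutor(
                2, 4,                          // core and maximum pool size
                30, TimeUnit.SECONDS,          // idle timeout for extra threads
                new ArrayBlockingQueue<>(100), // bounded work queue
                new ThreadPoolExecutor.CallerRunsPolicy()); // submitter runs overflow
    }

    // Submits `tasks` jobs that each hit the cache and bump the counter for `page`.
    static long runDemo(String page, int tasks) {
        ThreadPoolExecutor pool = newBoundedPool();
        for (int i = 0; i < tasks; i++) {
            pool.execute(() -> {
                lookup("config");
                recordHit(page);
            });
        }
        pool.shutdown();
        try {
            if (!pool.awaitTermination(10, TimeUnit.SECONDS)) {
                throw new IllegalStateException("pool did not drain in time");
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        LongAdder adder = HITS.get(page);
        return adder == null ? 0 : adder.sum();
    }

    public static void main(String[] args) {
        System.out.println("hits recorded: " + runDemo("/home", 1000));
        System.out.println("loader runs:   " + LOADS.get());
        System.out.println("elements seen: " + addWhileIterating());
    }
}
```

The bounded queue with `CallerRunsPolicy` is only one possible configuration; it trades throughput for back-pressure by making the submitting thread run tasks once the queue and pool are full. Where read-write locks fit better than copy-on-write depends on how large the collection is and how often it changes, which this sketch does not try to measure.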