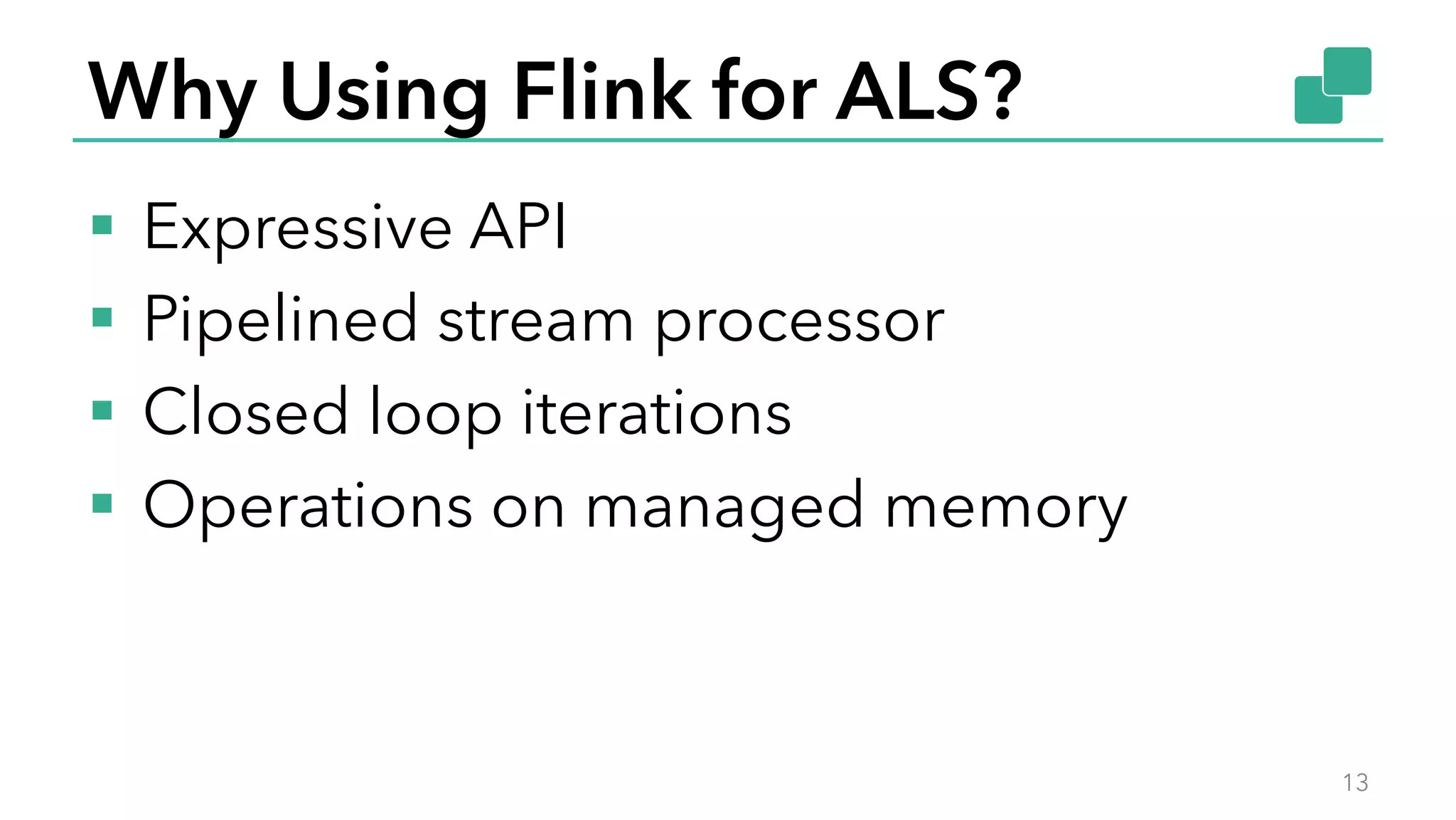

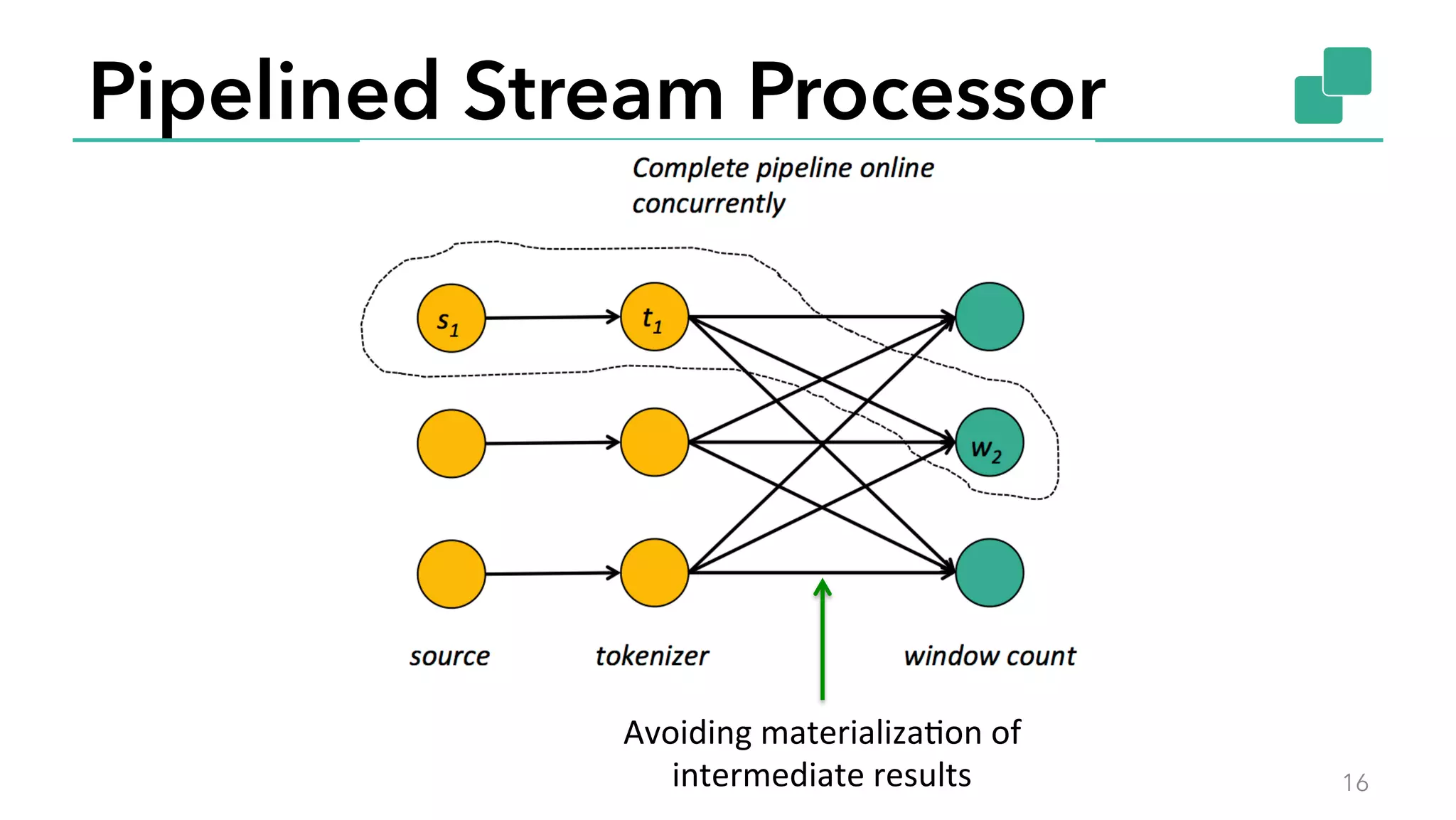

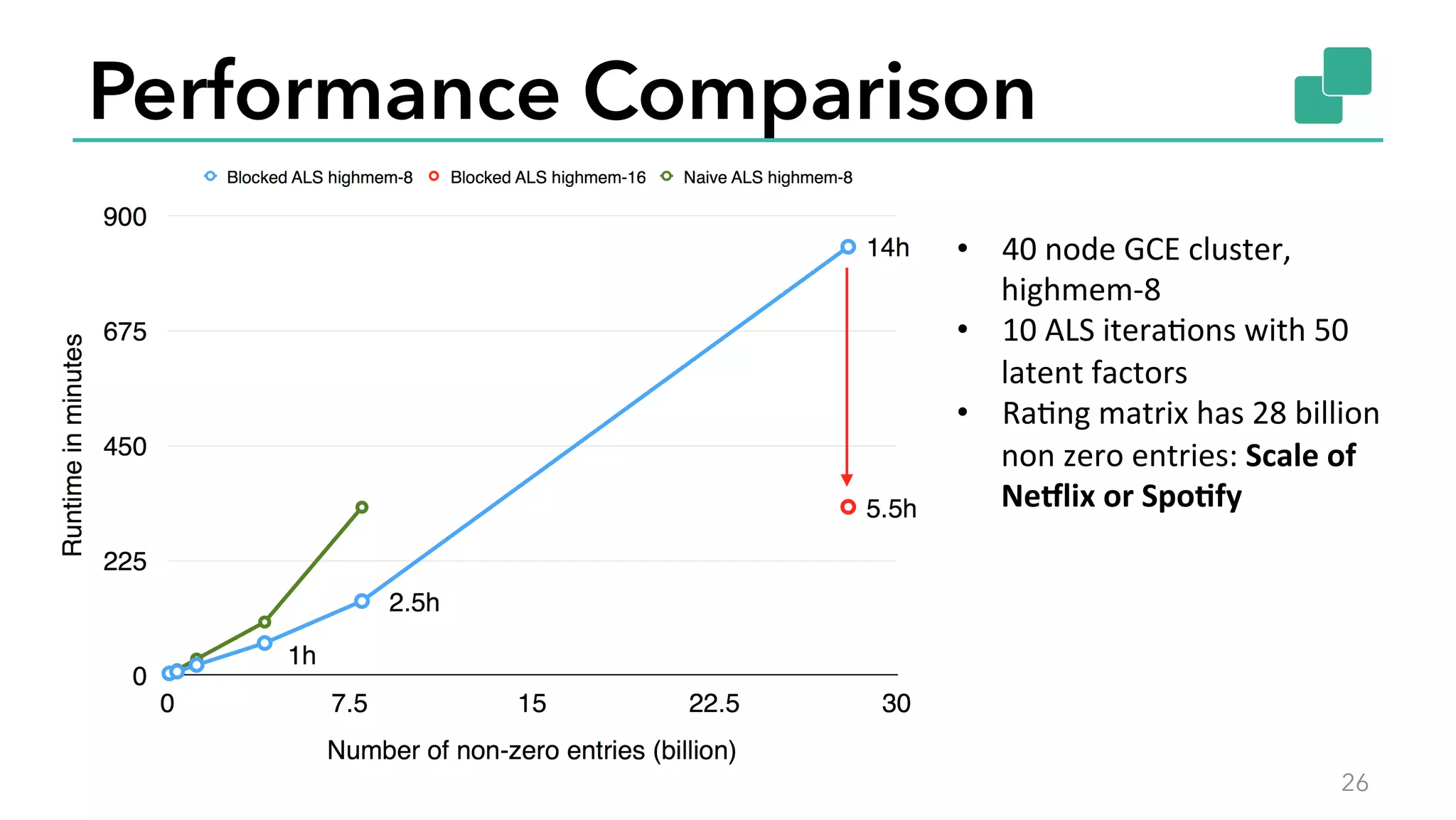

The document discusses the use of Apache Flink for collaborative filtering in recommendation systems, focusing on the Alternating Least Squares (ALS) method for matrix factorization. It outlines the advantages of Flink's expressive APIs and data processing capabilities, and it compares the performance of different ALS implementations. The session aims to show how to use Flink effectively for large-scale recommendation systems.

![Expressive APIs. DataSet: abstraction for distributed data. Computation specified as a sequence of lazily evaluated transformations. Example: `case class Word(word: String, frequency: Int)` `val lines: DataSet[String] = env.readTextFile(…)` `lines.flatMap(line => line.split(" ").map(word => Word(word, 1))).groupBy("word").sum("frequency").print()`](https://image.slidesharecdn.com/computingrecommendationsatextremescalewithapacheflink-buzzwords2015-150602083140-lva1-app6891/75/Computing-recommendations-at-extreme-scale-with-Apache-Flink-Buzzwords-2015-15-2048.jpg)
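To make "lazily evaluated transformations" concrete, here is a minimal local sketch that uses only the Scala standard library (2.13 or later), not Flink. It mirrors the slide's word count with a collection view: building the pipeline records the transformation, and nothing runs until a result is requested. The object name `LazyWordCount`, the helper names, and the `linesProcessed` counter are assumptions made for illustration; a Flink `DataSet` program is distributed and optimized before execution, which this analogue does not model.

```scala
import scala.collection.View

object LazyWordCount {
  // Same record shape as the slide's Word case class.
  case class Word(word: String, frequency: Int)

  // Instrumentation for this sketch only: how many lines flatMap has touched.
  var linesProcessed = 0

  // Describes the transformation; a view records it without running it.
  def pipeline(lines: Seq[String]): View[Word] =
    lines.view.flatMap { line =>
      linesProcessed += 1
      line.split(" ").toSeq.filter(_.nonEmpty).map(word => Word(word, 1))
    }

  // Forces the pipeline: group by word and sum the frequencies,
  // the local counterpart of groupBy("word").sum("frequency").
  def count(lines: Seq[String]): Map[String, Int] =
    pipeline(lines).groupMapReduce(_.word)(_.frequency)(_ + _)
}
```

Calling `LazyWordCount.pipeline(...)` alone leaves `linesProcessed` unchanged; only `count` traverses the input. In the slide's program the pipeline likewise ends in `.print()`, the point at which output is requested.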

![Program Execution. Example: `case class Path(from: Long, to: Long)` `val tc = edges.iterate(10) { paths: DataSet[Path] => val next = paths.join(edges).where("to").equalTo("from") { (path, edge) => Path(path.from, edge.to) }.union(paths).distinct(); next }` Diagram labels: Program, Type extraction stack, Optimizer, Dataflow Graph, Pre-flight (Client), Master, Task scheduling, Dataflow metadata, Workers, deploy operators, track intermediate results. Example plan labels: Data Source orders.tbl, Filter, Map, DataSource lineitem.tbl, Join, Hybrid Hash, build HT, probe, hash-part [0], hash-part [0], GroupRed, sort, forward.](https://image.slidesharecdn.com/computingrecommendationsatextremescalewithapacheflink-buzzwords2015-150602083140-lva1-app6891/75/Computing-recommendations-at-extreme-scale-with-Apache-Flink-Buzzwords-2015-16-2048.jpg)
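The iteration on this slide computes a transitive closure: each round joins the known paths with the edges, then unions and deduplicates. The following sketch reproduces that logic on local Scala sets so the data flow is easy to follow. It is an assumption-laden analogue, not Flink code: the object name `TransitiveClosure` and the `step` and `closure` helpers are hypothetical, and `Set` semantics stand in for `.union(paths).distinct()`.

```scala
object TransitiveClosure {
  // Same record shape as the slide's Path case class.
  case class Path(from: Long, to: Long)

  // One round: extend every known path by one edge, then keep old and new paths.
  def step(paths: Set[Path], edges: Set[Path]): Set[Path] = {
    // Index edges by source vertex; this plays the role of the join key "from".
    val byFrom: Map[Long, Set[Path]] = edges.groupBy(_.from)
    val extended = for {
      path <- paths
      edge <- byFrom.getOrElse(path.to, Set.empty[Path]) // where("to").equalTo("from")
    } yield Path(path.from, edge.to)
    extended ++ paths // a Set merges and deduplicates in one operation
  }

  // Fixed number of rounds, like edges.iterate(10) on the slide.
  def closure(edges: Set[Path], iterations: Int = 10): Set[Path] =
    (1 to iterations).foldLeft(edges)((paths, _) => step(paths, edges))
}
```

Because each round extends paths by a single edge, ten rounds starting from the edge set cover paths of up to eleven edges; longer chains would need more iterations or a convergence check.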

![`val parameters = ParameterMap().add(ALS.Iterations, 10).add(ALS.NumFactors, 50).add(ALS.Lambda, 1.5)` `als.fit(ratingDS, parameters)` `val testingDS = env.readCsvFile[(Int, Int)](testingData)` `val predictions = als.predict(testingDS)`](https://image.slidesharecdn.com/computingrecommendationsatextremescalewithapacheflink-buzzwords2015-150602083140-lva1-app6891/75/Computing-recommendations-at-extreme-scale-with-Apache-Flink-Buzzwords-2015-28-2048.jpg)
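For orientation, the three parameters set on this slide can be read against the textbook ALS objective. The formulation below is the standard regularized matrix factorization, given as a sketch rather than as the exact objective of the library; the symbols $\Omega$ (set of observed ratings), $\Omega_i$ (items rated by user $i$), $u_i$, $v_j$, and $k$ are notation introduced here. Under this reading, `ALS.NumFactors` would correspond to the latent dimension $k$, `ALS.Lambda` to the regularization weight $\lambda$, and `ALS.Iterations` to the number of alternating sweeps. Some implementations additionally scale the regularizer by the number of ratings per user or item.

```latex
% Objective over user factors u_i and item factors v_j, both in R^k
\min_{U,V} \sum_{(i,j) \in \Omega} \left( r_{ij} - u_i^\top v_j \right)^2
  + \lambda \left( \sum_i \lVert u_i \rVert^2 + \sum_j \lVert v_j \rVert^2 \right)

% With V held fixed, setting the gradient with respect to u_i to zero gives
% an independent k-by-k linear system per user:
u_i = \Bigl( \sum_{j \in \Omega_i} v_j v_j^\top + \lambda I_k \Bigr)^{-1}
      \sum_{j \in \Omega_i} r_{ij} \, v_j
```

The update for $v_j$ with $U$ held fixed is symmetric. Each sweep alternates between the two, and because every user (or item) system is independent, the solves can run in parallel.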