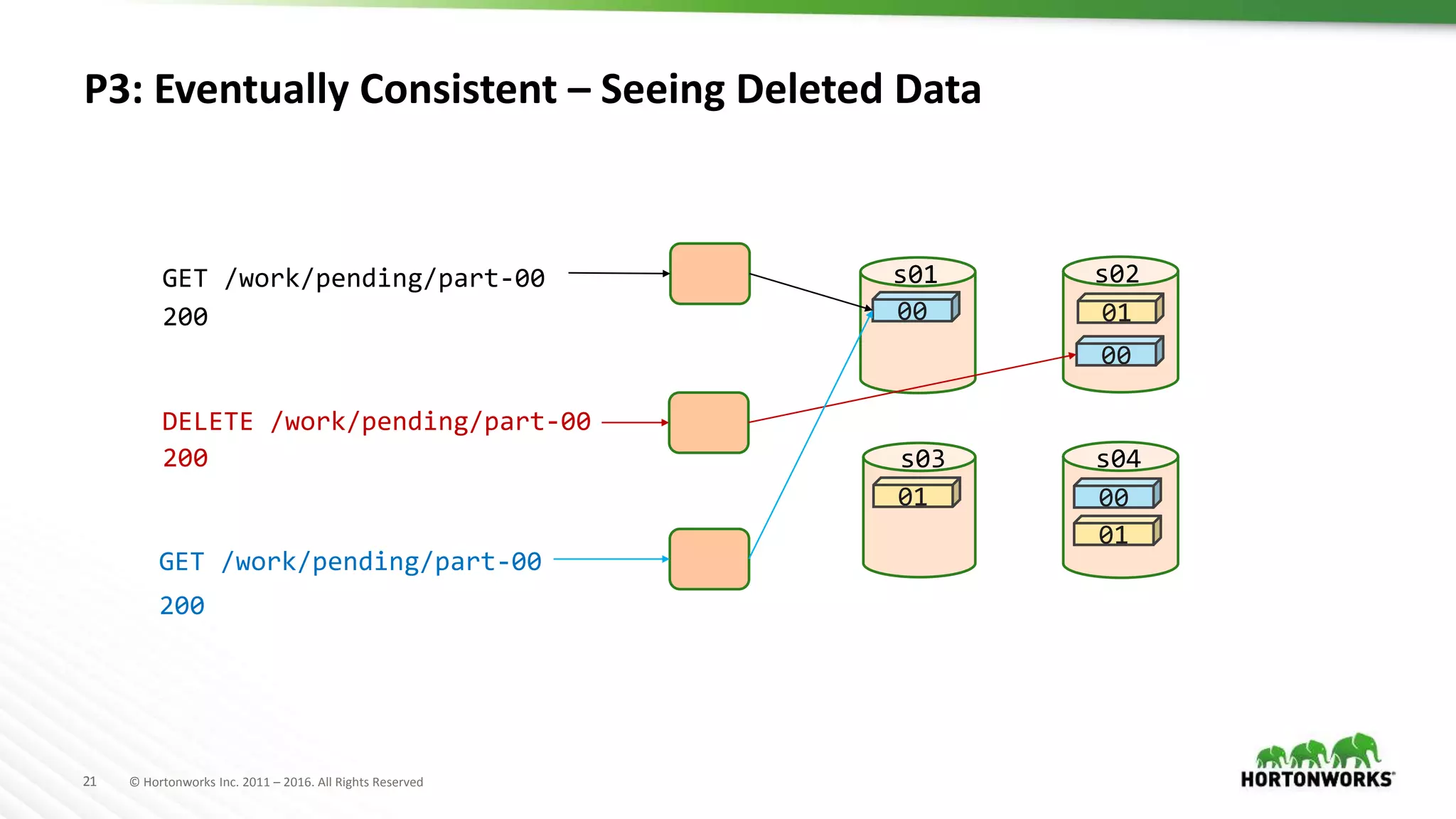

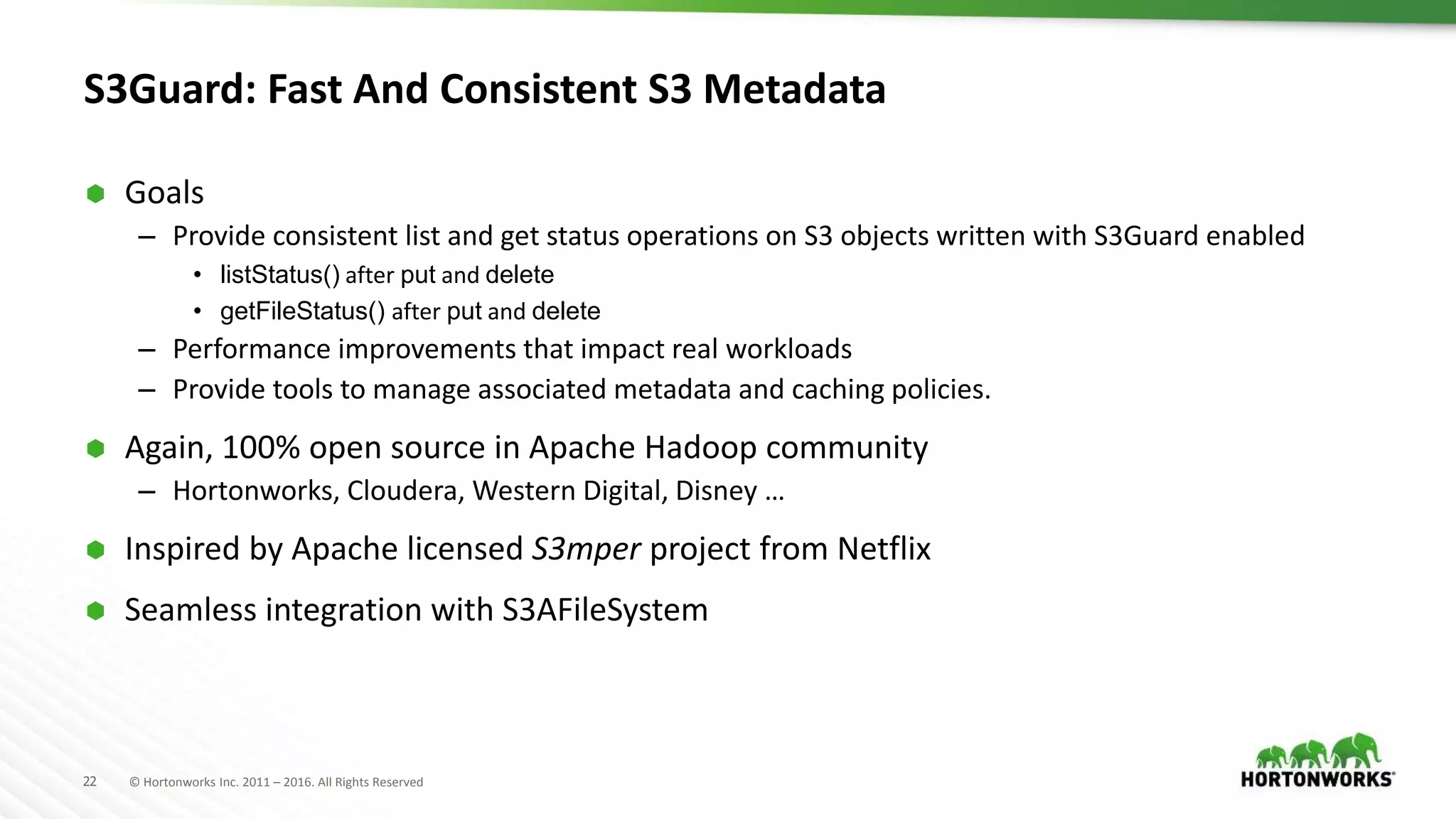

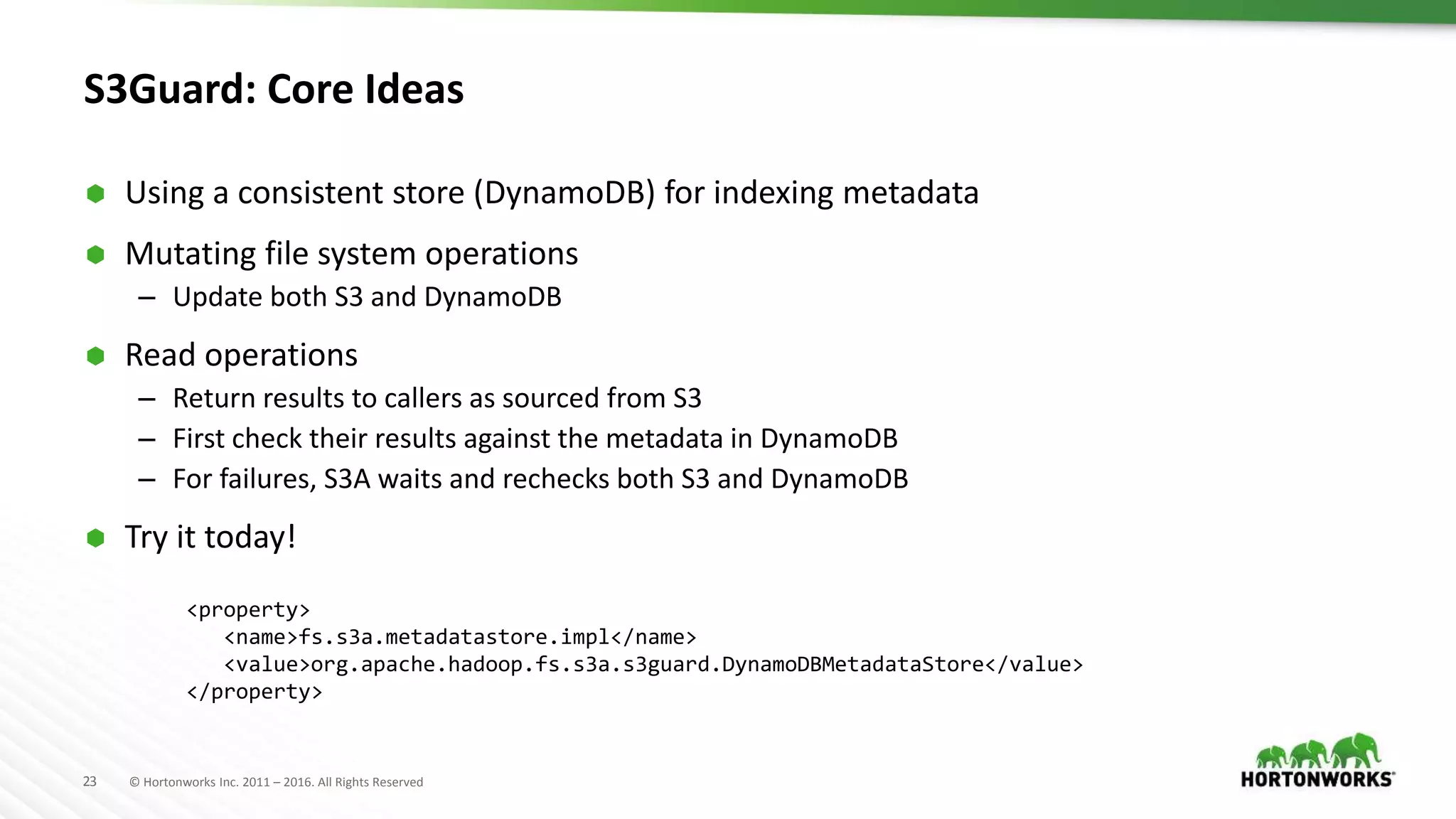

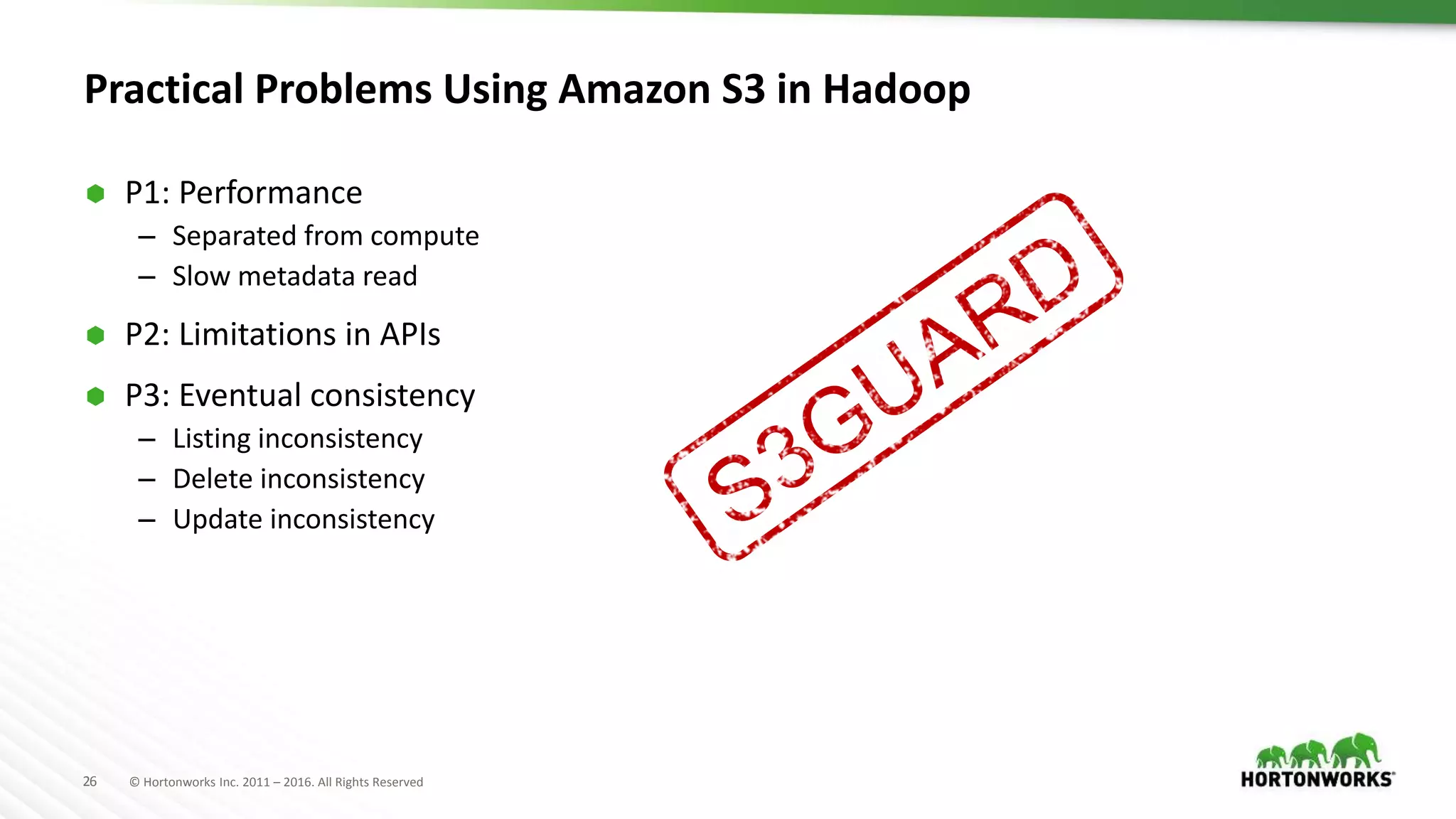

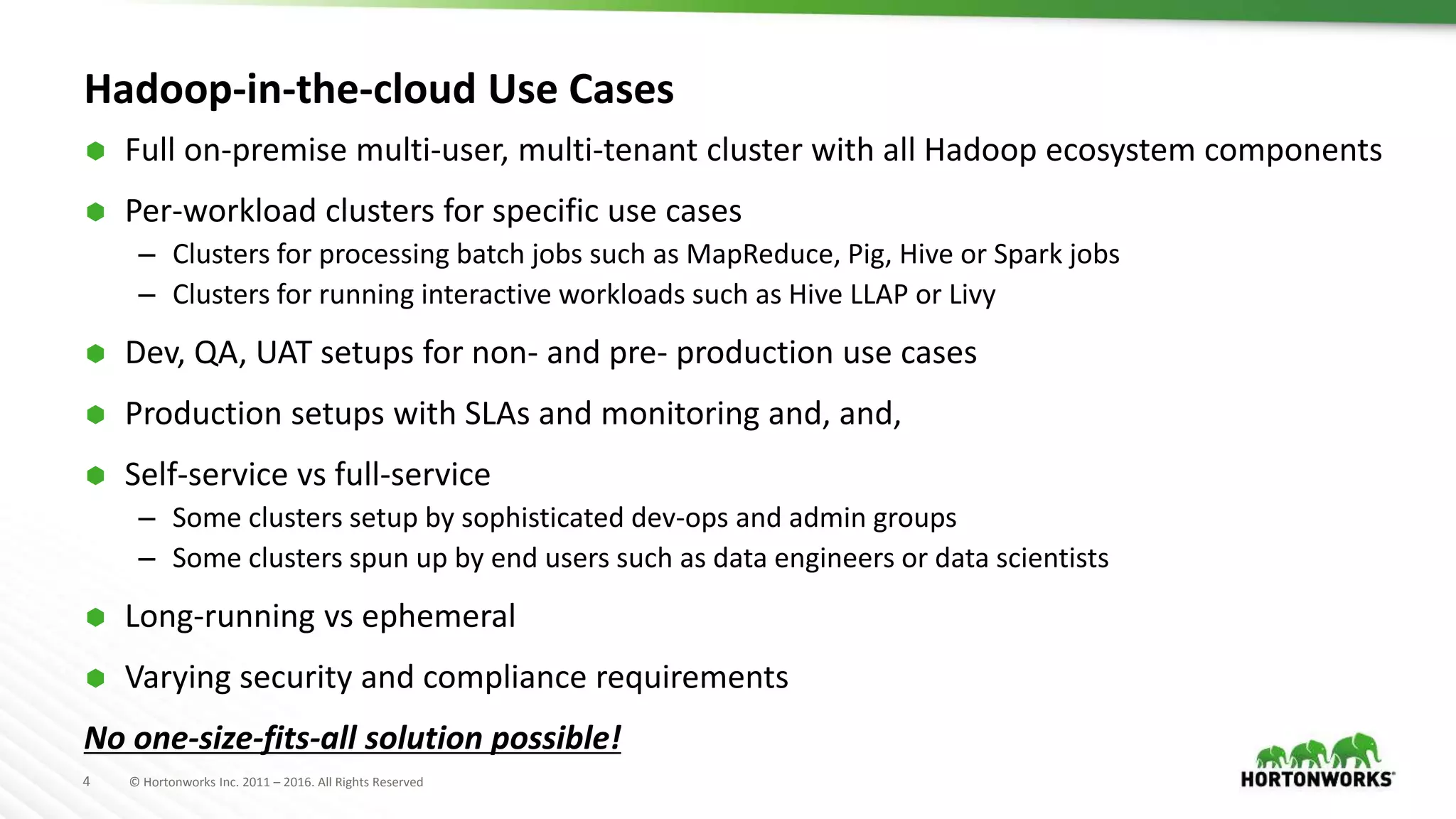

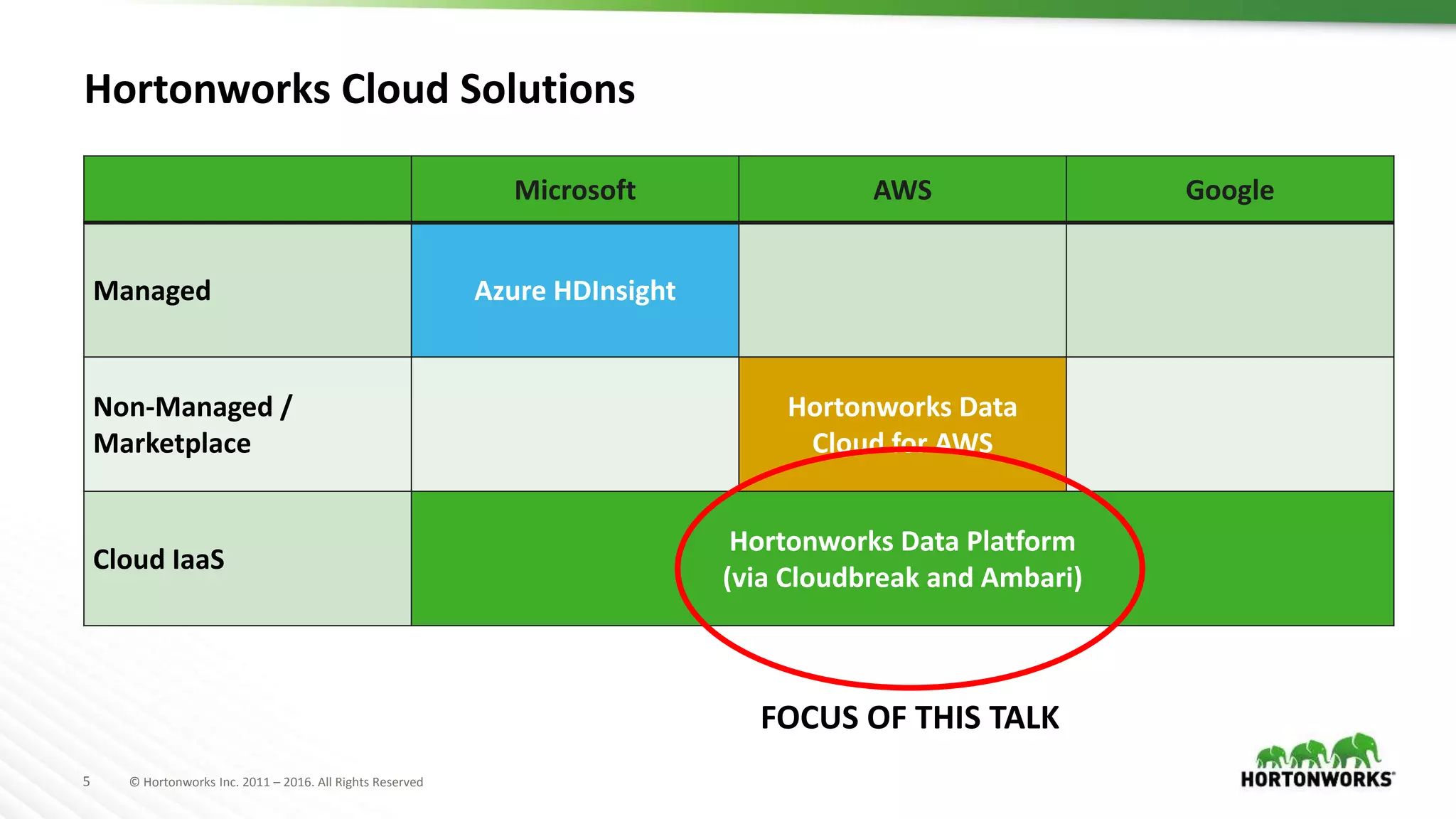

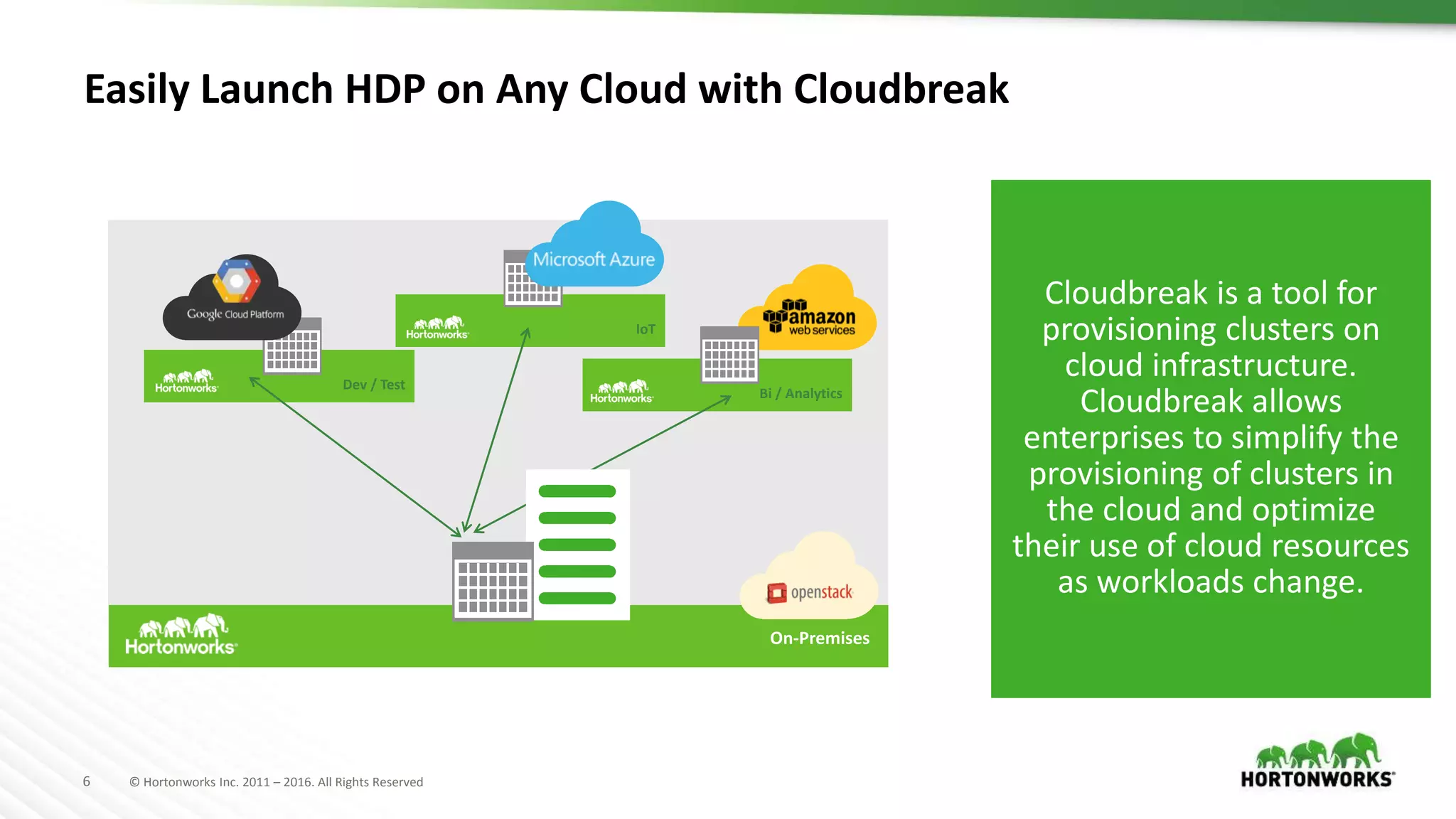

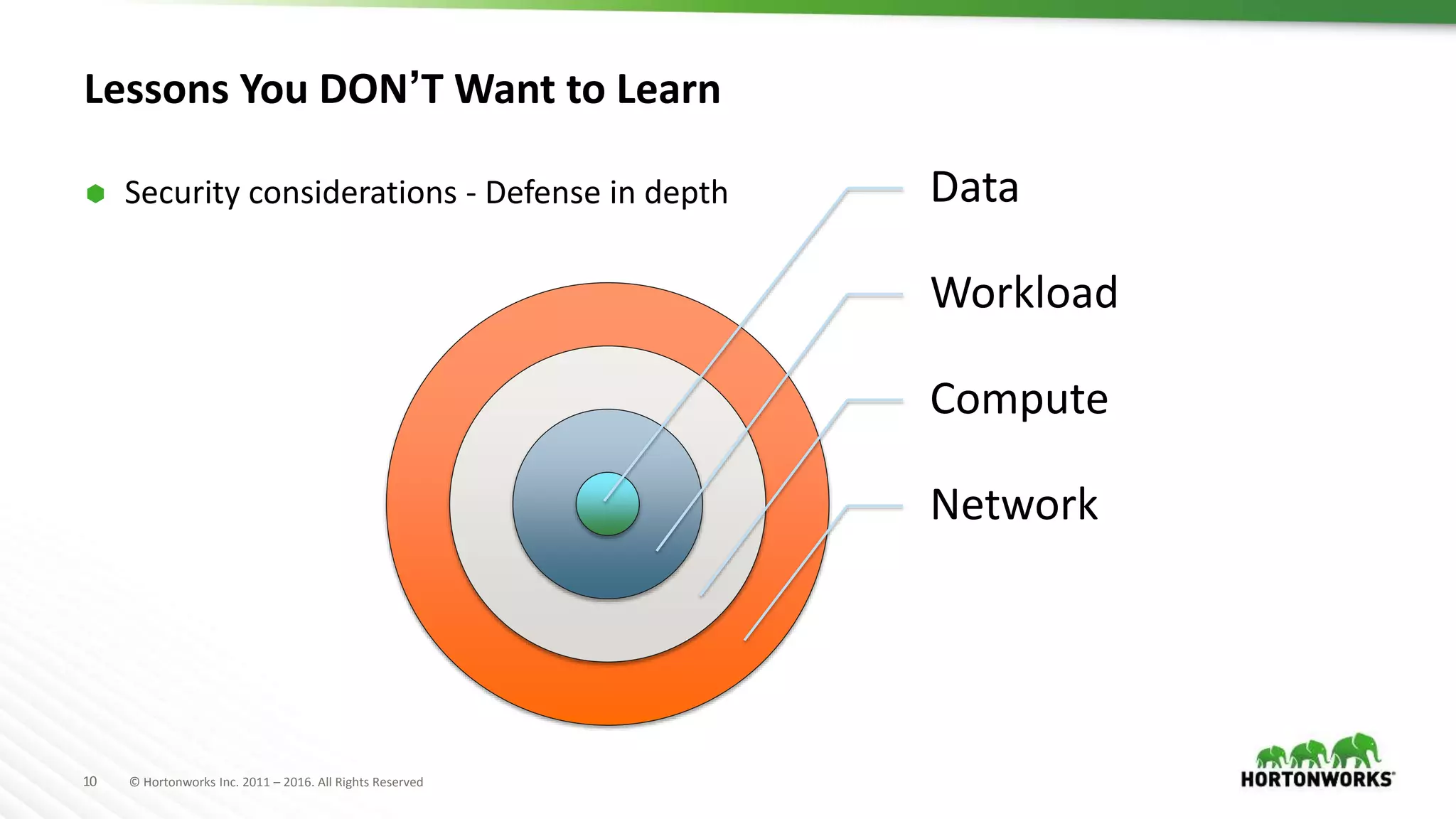

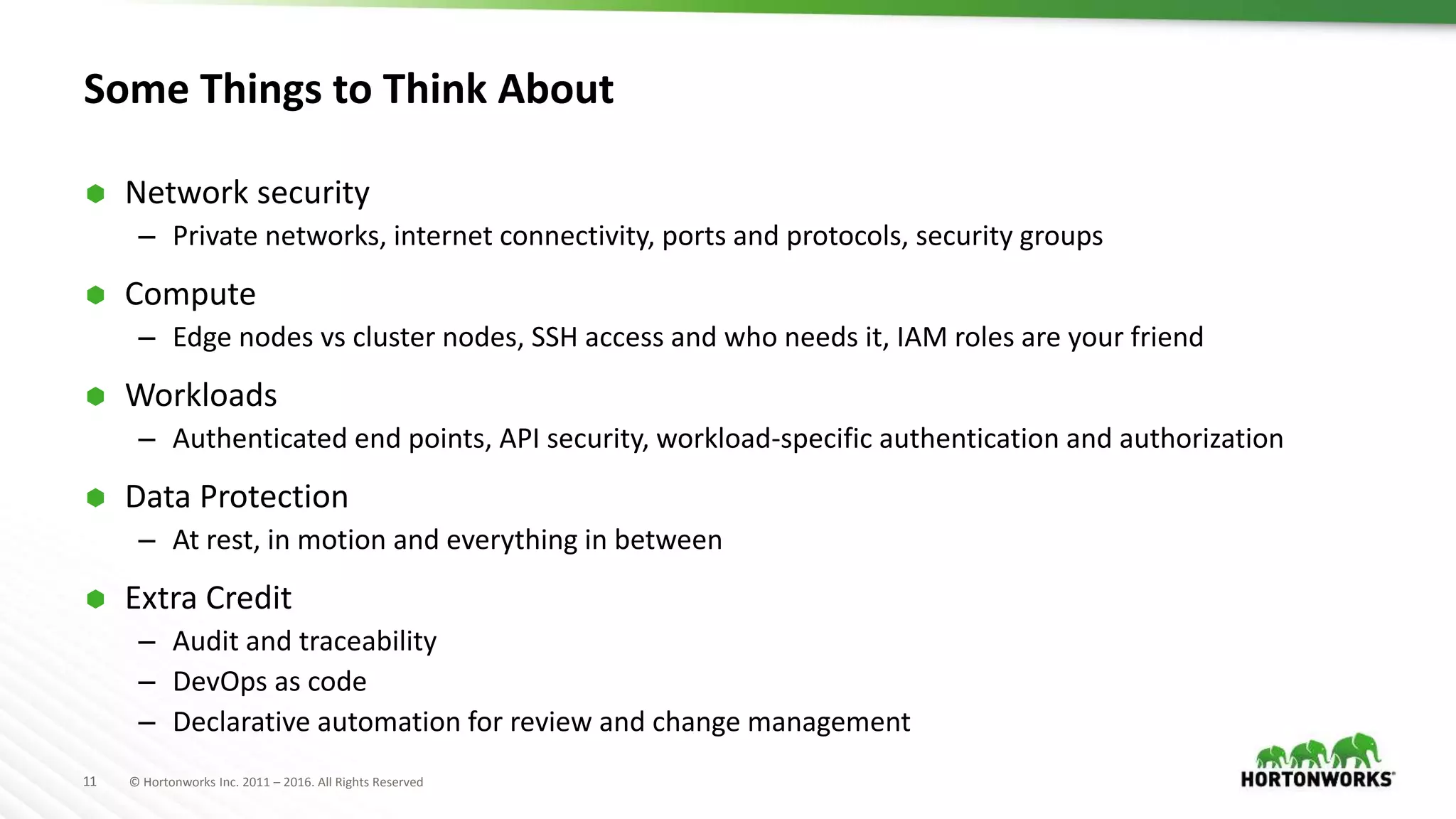

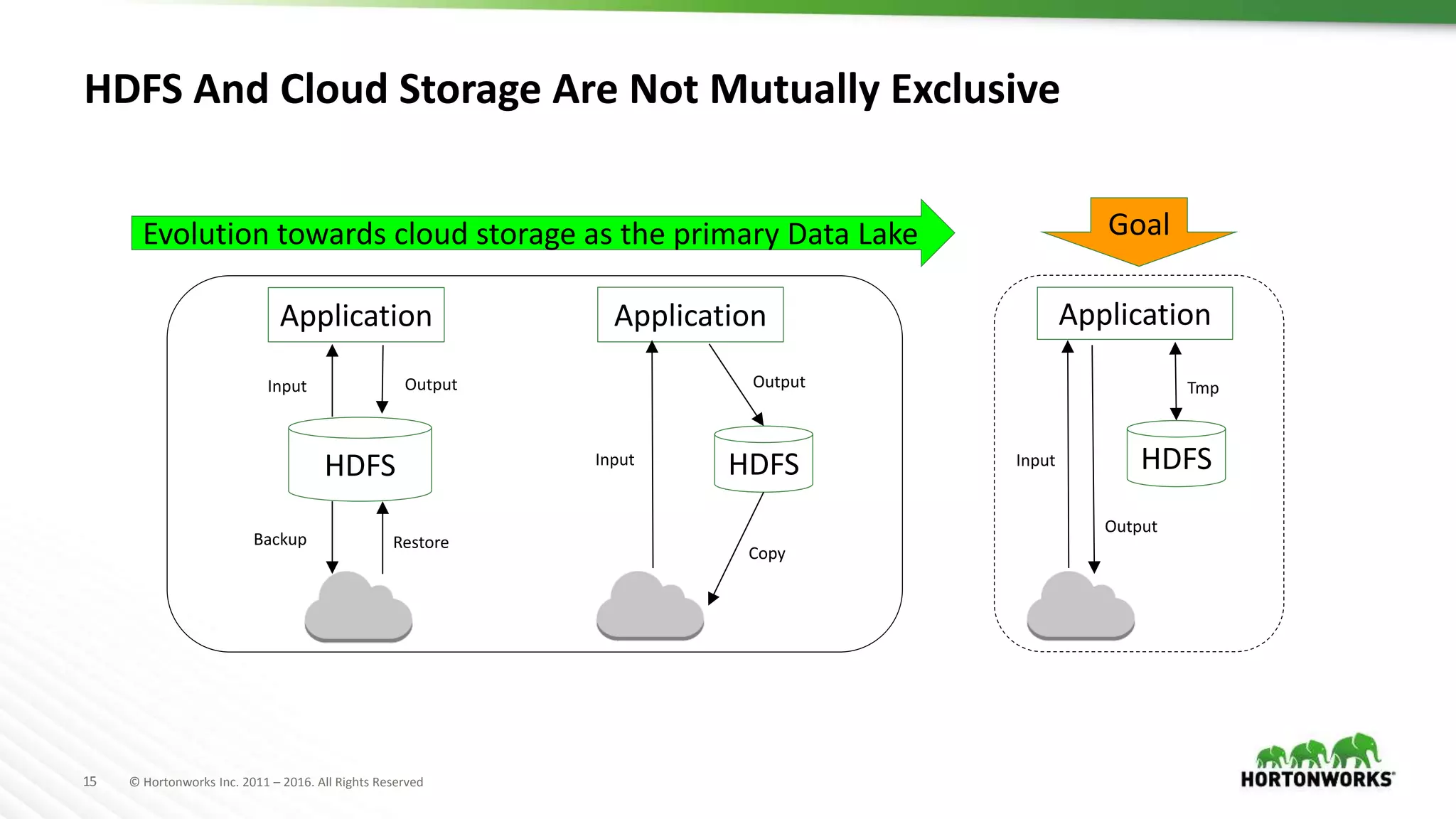

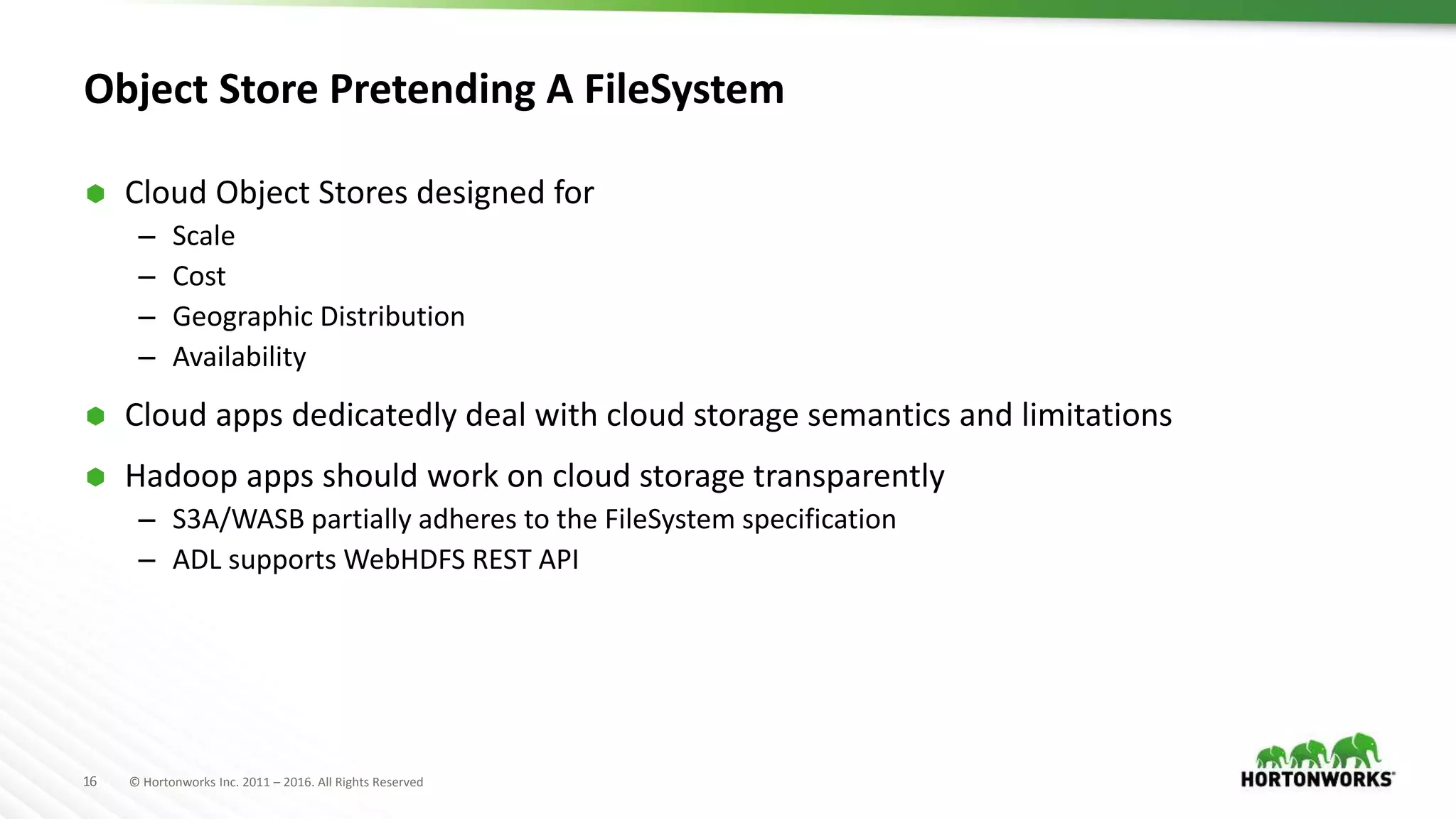

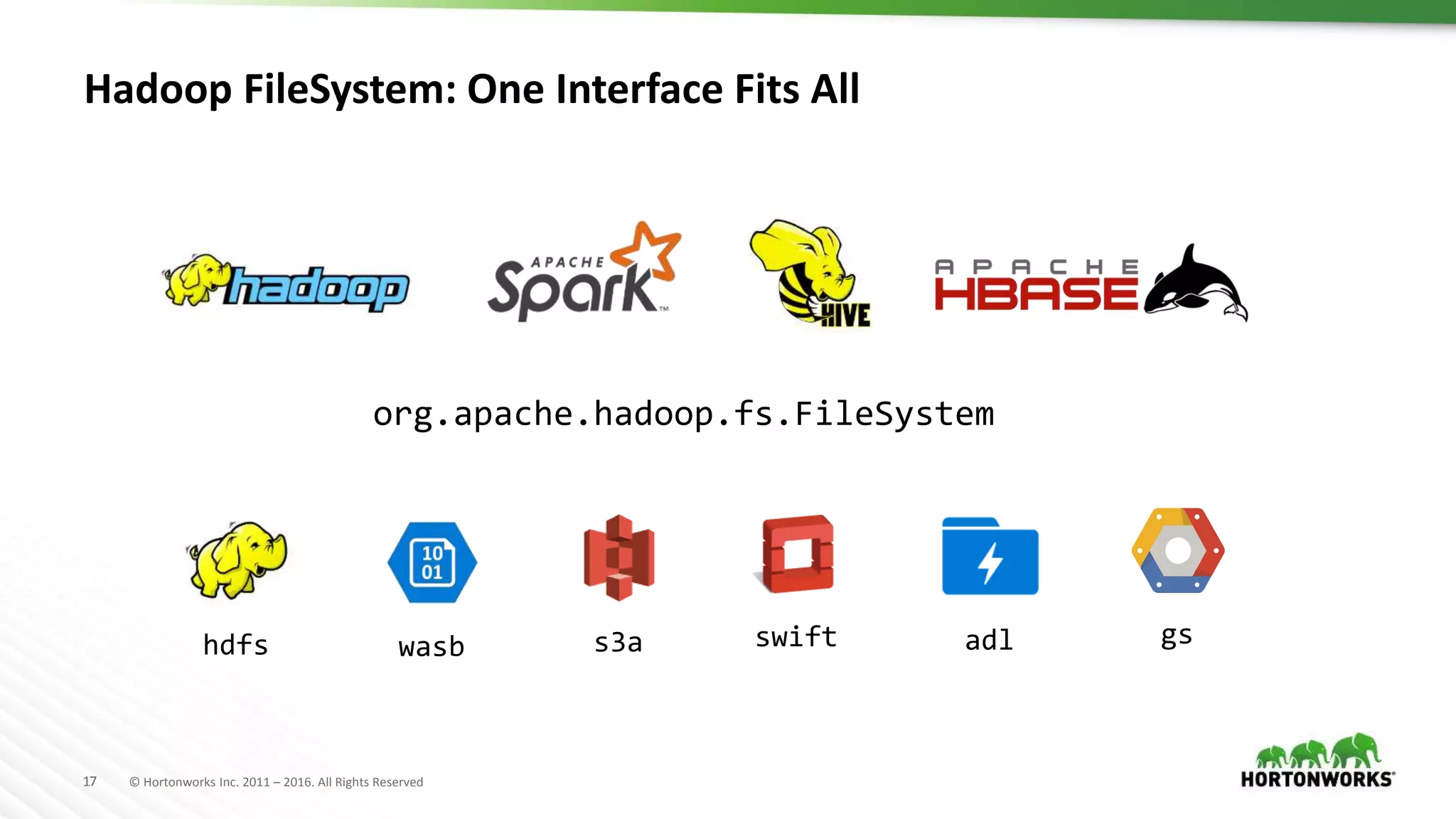

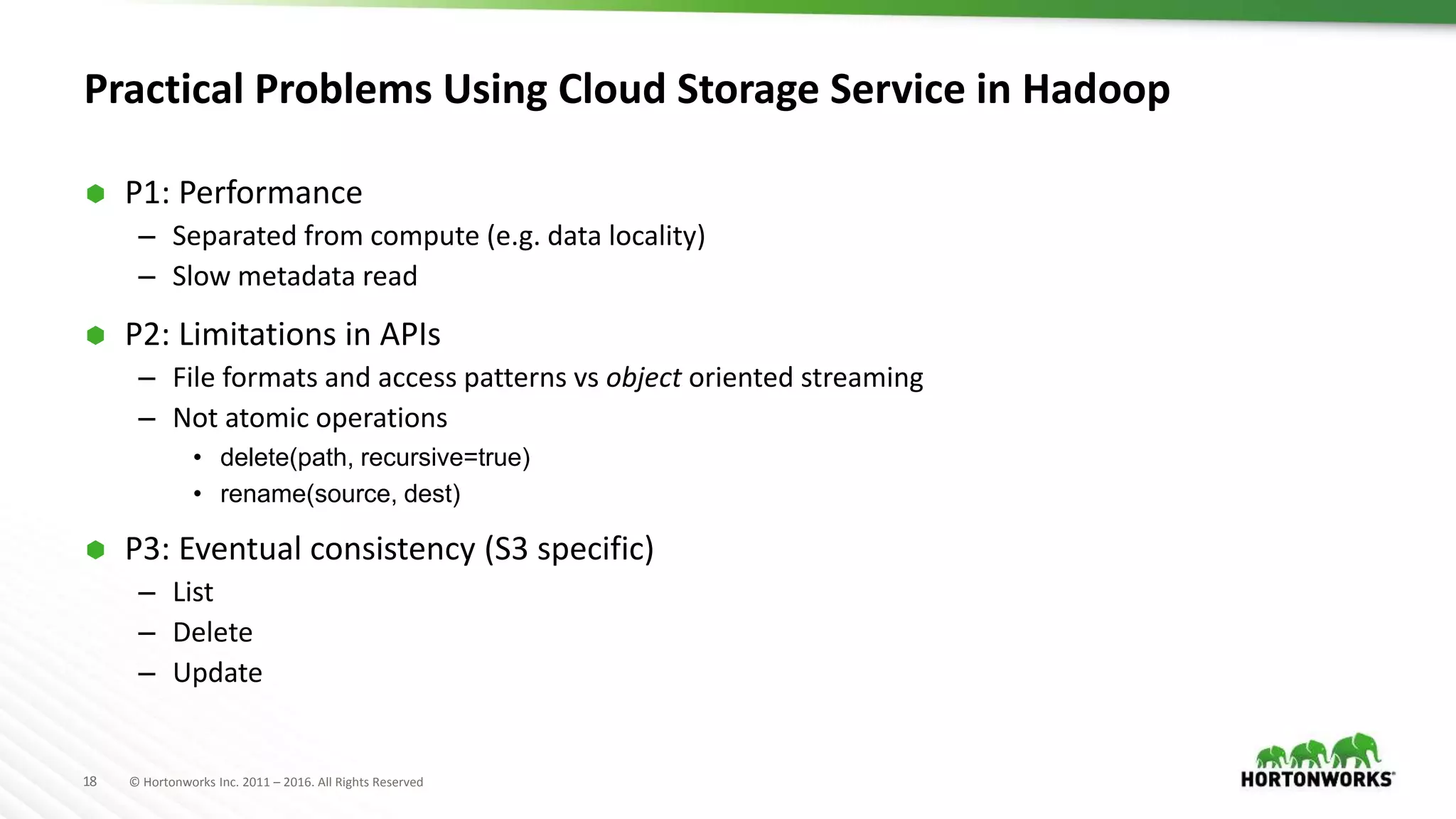

This presentation covers running Hadoop in cloud environments: the use cases and scenarios it serves, and the challenges encountered during deployment. It introduces tools such as Cloudbreak for managing Hadoop clusters across different cloud platforms and shares lessons learned about performance and storage options. It also addresses cloud storage consistency problems that arise when Hadoop applications are integrated with object stores.

![Slide 19: P2: Not Atomic API: rename(). © Hortonworks Inc. 2011 – 2016. All Rights Reserved](https://image.slidesharecdn.com/1493venkateshcloudywithachanceofhadooprealworldconsiderations-170626210949/75/Cloudy-with-a-chance-of-Hadoop-real-world-considerations-19-2048.jpg)

Slide 19, "P2: Not Atomic API: rename()", shows a series of operations on the client against shards s01 to s04: `hash("/work/pending/part-01")` with `["s02", "s03", "s04"]`, `copy("/work/pending/part-01", "/work/complete/part01")`, `delete("/work/pending/part-01")`, and `hash("/work/pending/part-00")` with `["s01", "s02", "s04"]`.
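The sequence on slide 19, where the client performs a rename as a copy followed by a delete, can be illustrated with a small in-memory model. This is only a sketch under stated assumptions: `ToyObjectStore`, `shards_for`, and `client_rename` are hypothetical names, the hash-based placement is invented for illustration, and none of it reflects the actual API of any cloud store or of Hadoop's connectors. It shows why the operation is not atomic: between the copy and the delete, a reader can see both paths, and a failure in that window leaves both behind.

```python
import hashlib

SHARDS = ["s01", "s02", "s03", "s04"]  # shard names taken from the slide


class ToyObjectStore:
    """Hypothetical in-memory stand-in for a sharded object store."""

    def __init__(self):
        self.objects = {}

    def shards_for(self, key, replicas=3):
        # Assumed placement rule: shards are derived from the key's hash,
        # so a different key generally means a different placement.
        start = int(hashlib.md5(key.encode()).hexdigest(), 16) % len(SHARDS)
        return [SHARDS[(start + i) % len(SHARDS)] for i in range(replicas)]

    def put(self, key, data):
        self.objects[key] = data

    def copy(self, src, dst):
        self.objects[dst] = self.objects[src]

    def delete(self, key):
        del self.objects[key]

    def list_keys(self, prefix):
        return sorted(k for k in self.objects if k.startswith(prefix))


def client_rename(store, src, dst, observer=None):
    """Rename as two separate client-side steps: copy, then delete."""
    store.copy(src, dst)
    if observer is not None:
        observer(store)  # stands in for a concurrent reader or a crash
    store.delete(src)


if __name__ == "__main__":
    store = ToyObjectStore()
    store.put("/work/pending/part-01", b"data")

    def snapshot(s):
        print("mid-rename listing:", s.list_keys("/work/"))

    client_rename(store, "/work/pending/part-01",
                  "/work/complete/part01", observer=snapshot)
    print("final listing:", store.list_keys("/work/"))
```

On a file system with an atomic `rename()`, a job can commit output by moving it from a pending directory to a complete one in a single step. In this model there is no such step, so a reader that lists `/work/` at the wrong moment sees the file under both directories.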