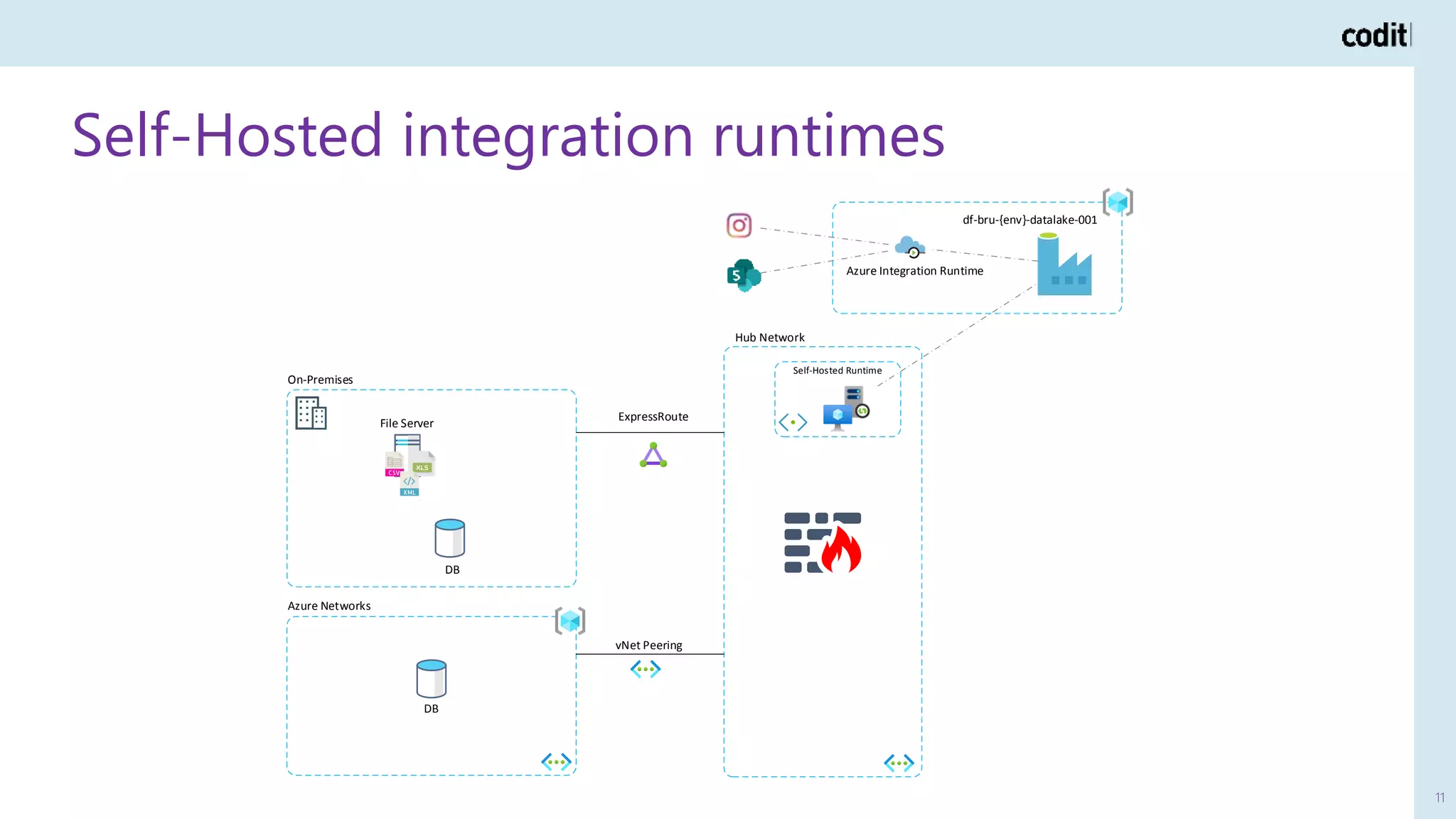

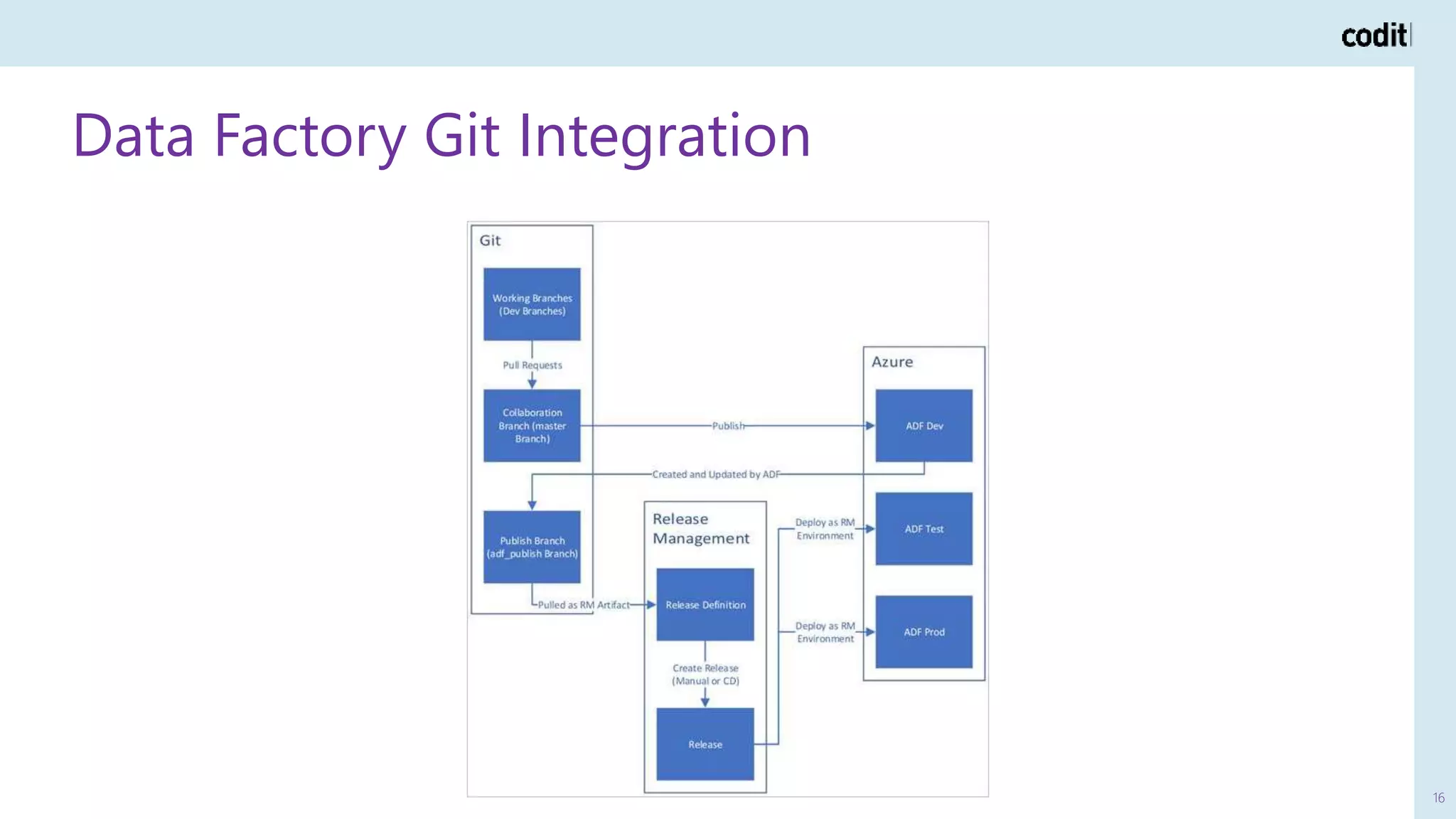

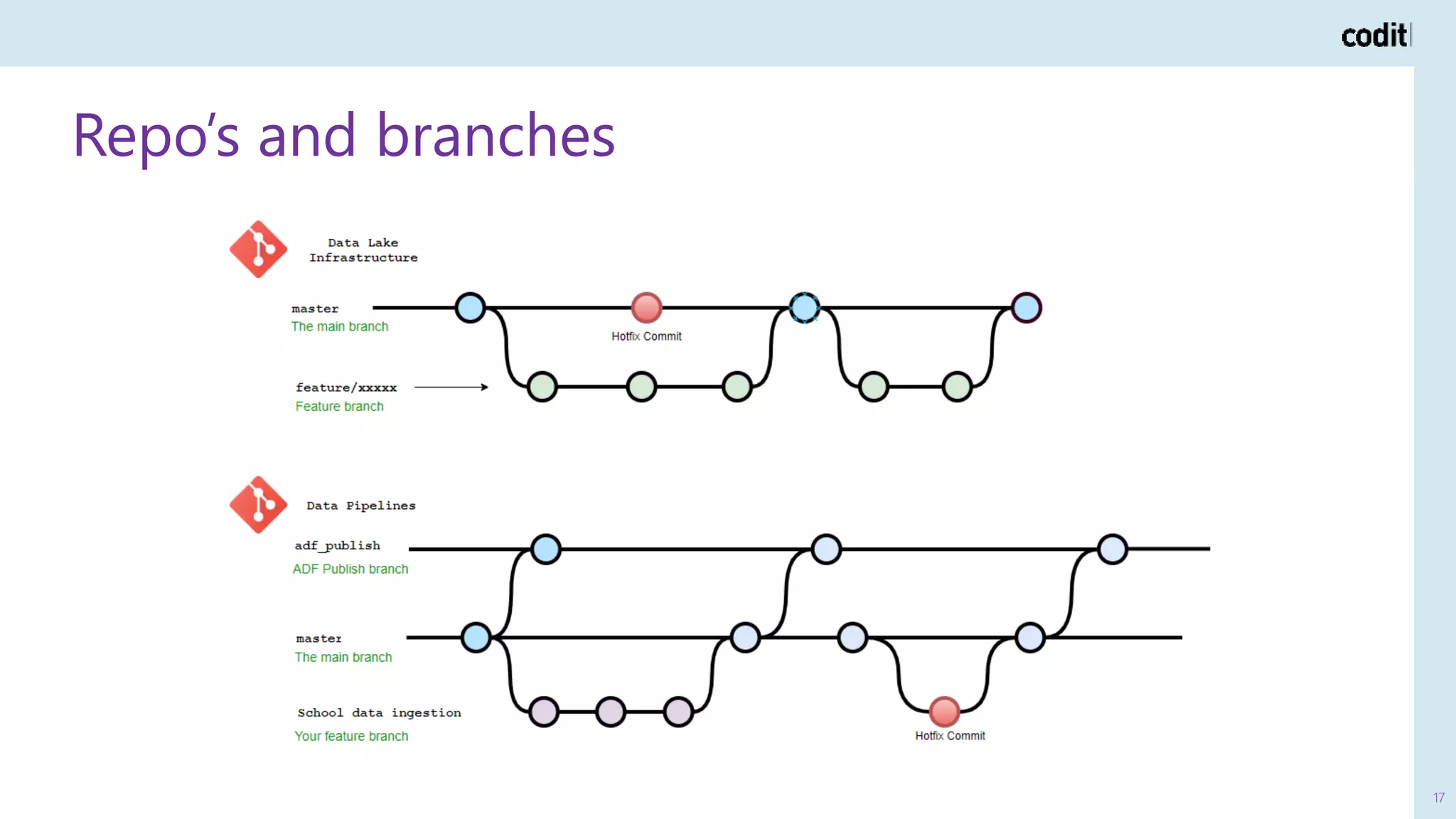

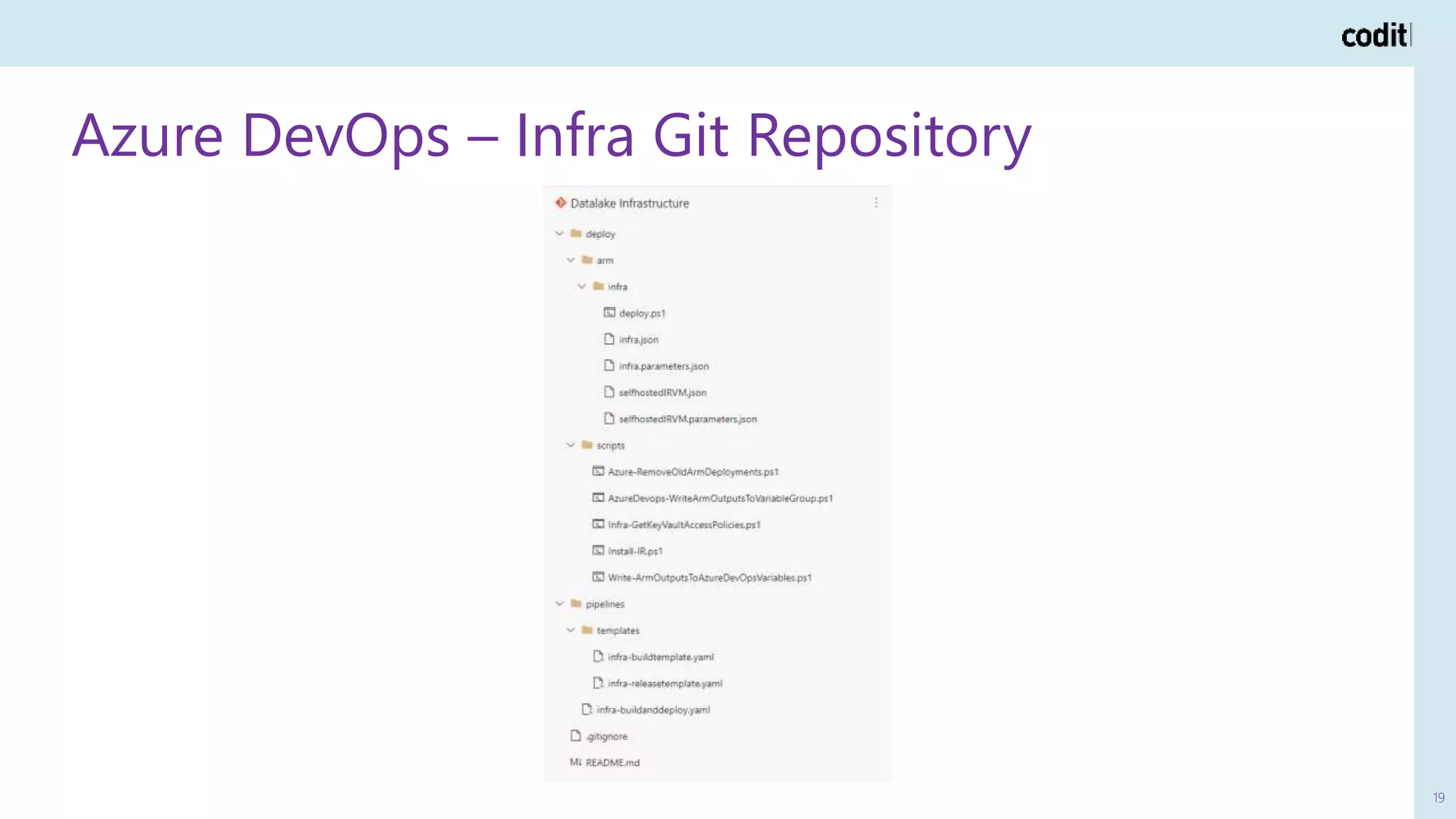

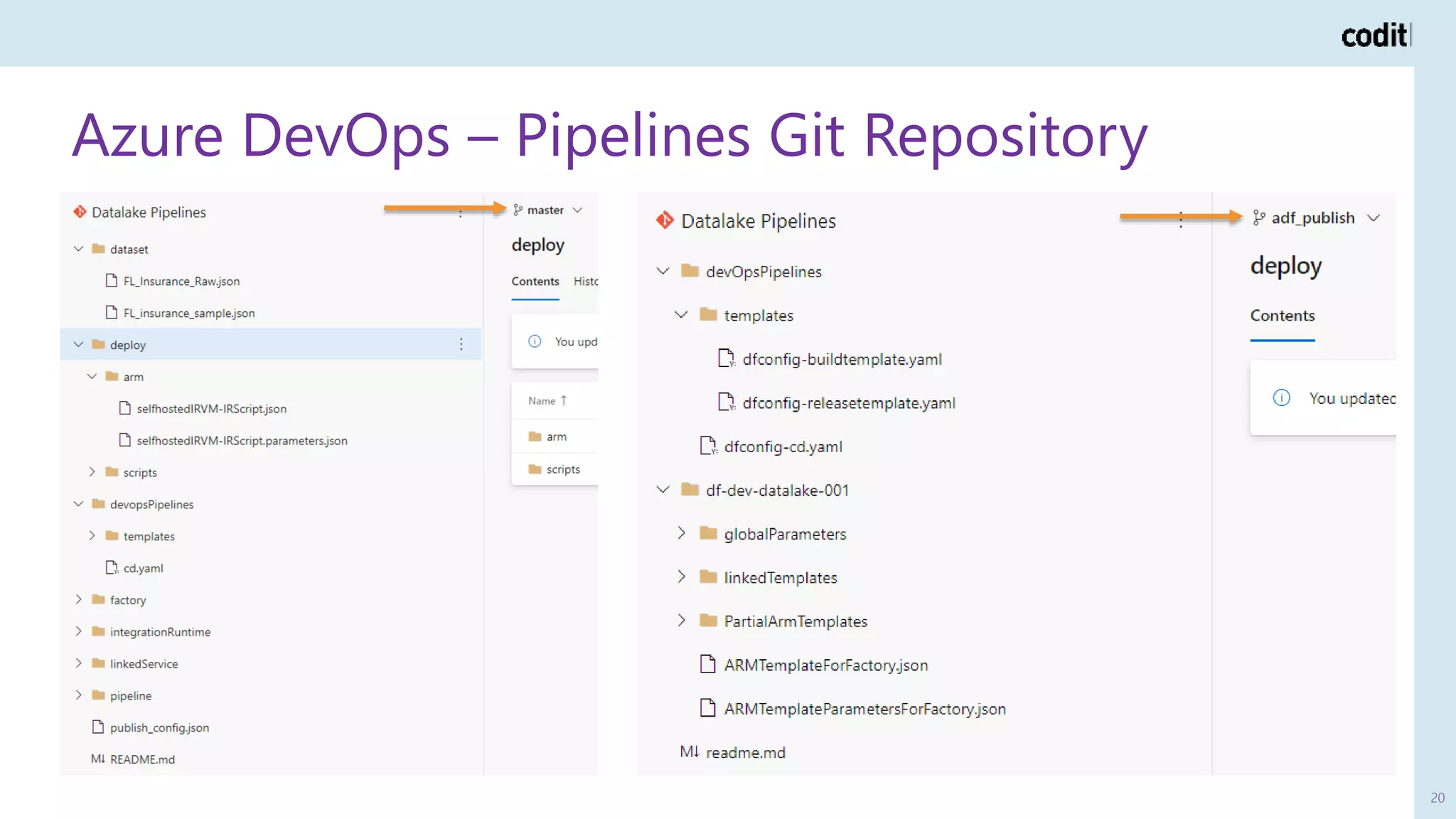

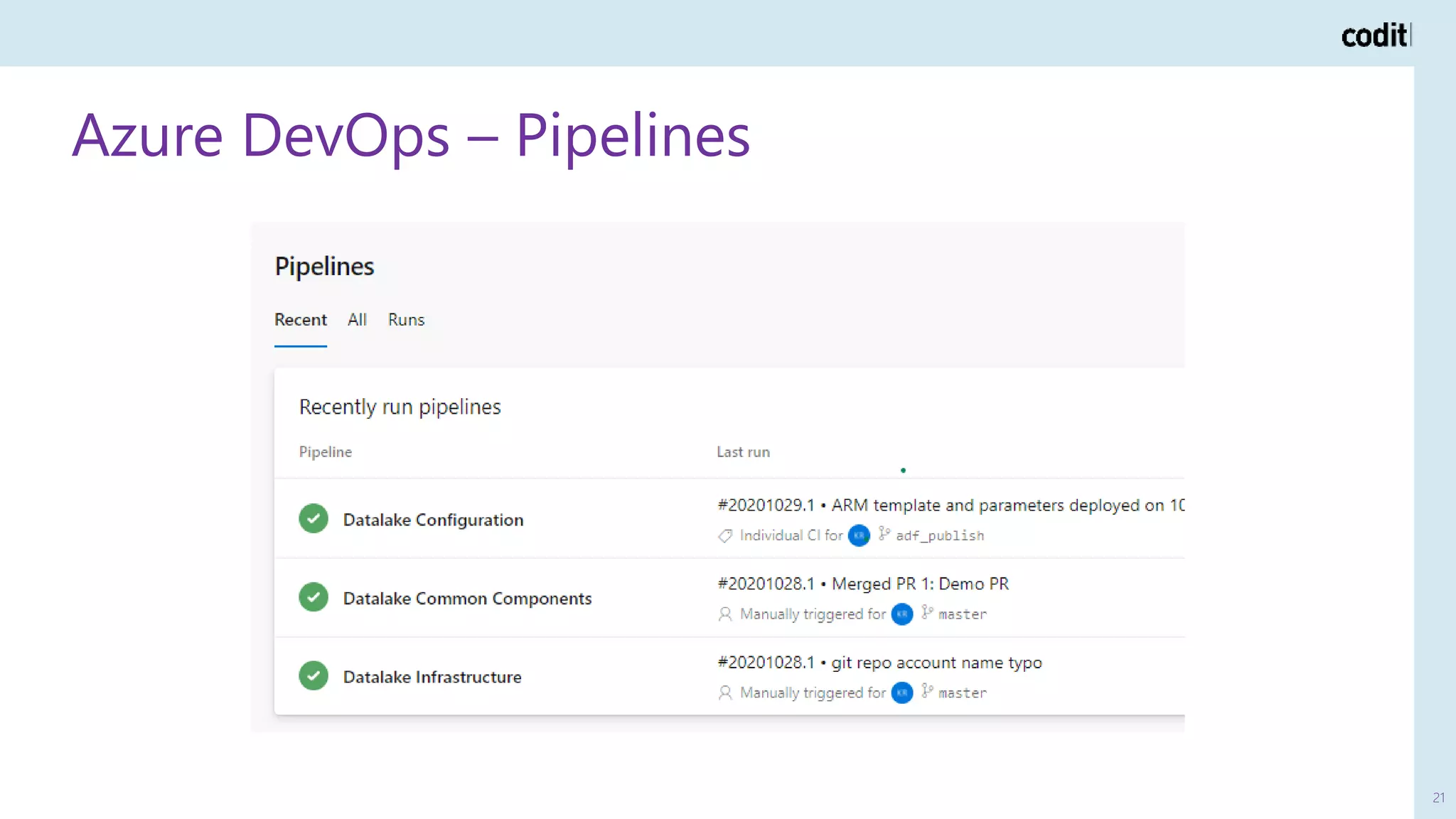

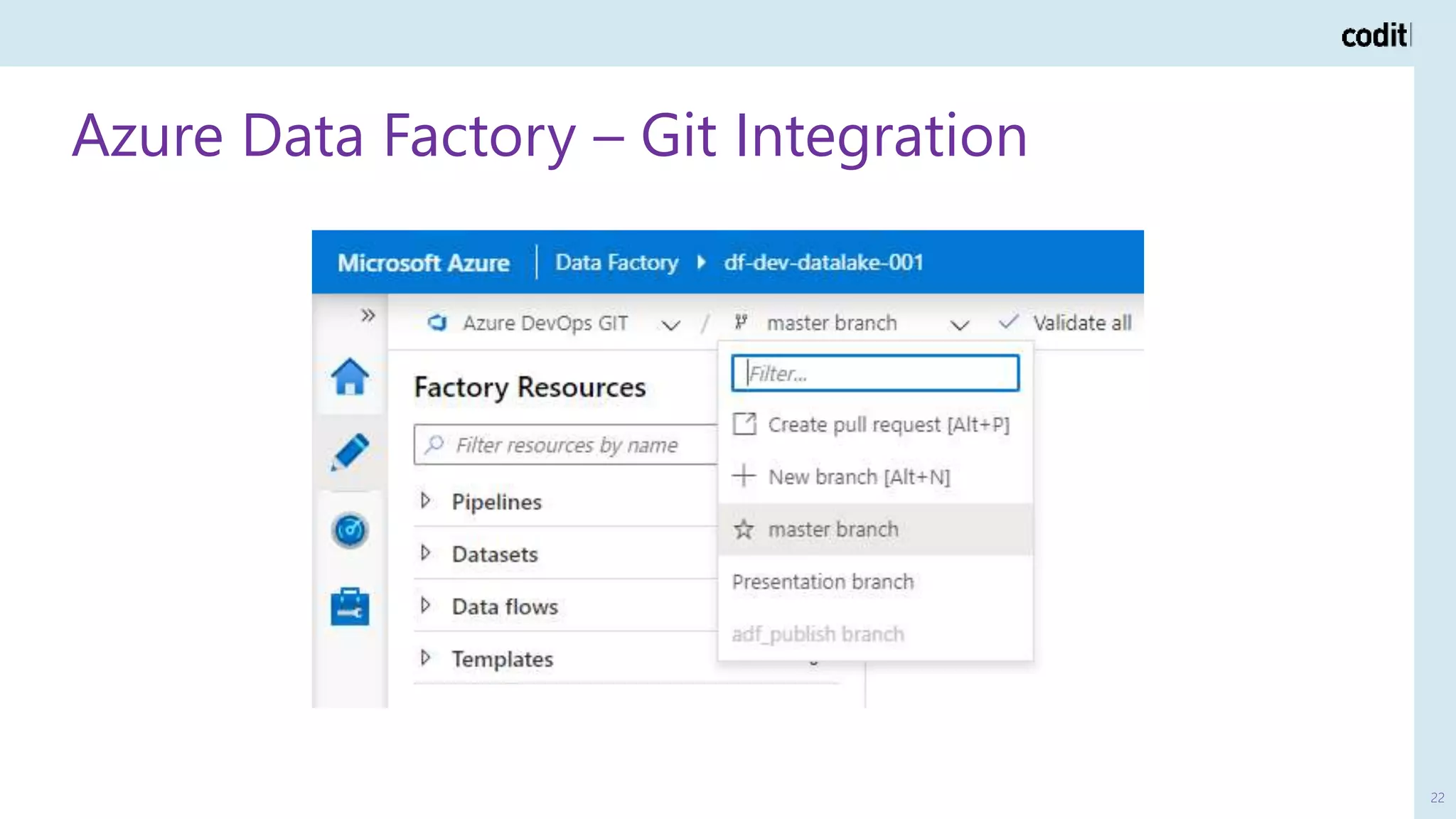

This document discusses implementing CI/CD practices for data pipelines built with Azure Data Factory on a data platform. It outlines the architecture of the data lake, the roles and responsibilities involved, and the benefits of Git integration for consistent deployment and collaboration. It emphasizes enabling agile processes, tracking quality, and improving data security in data management.