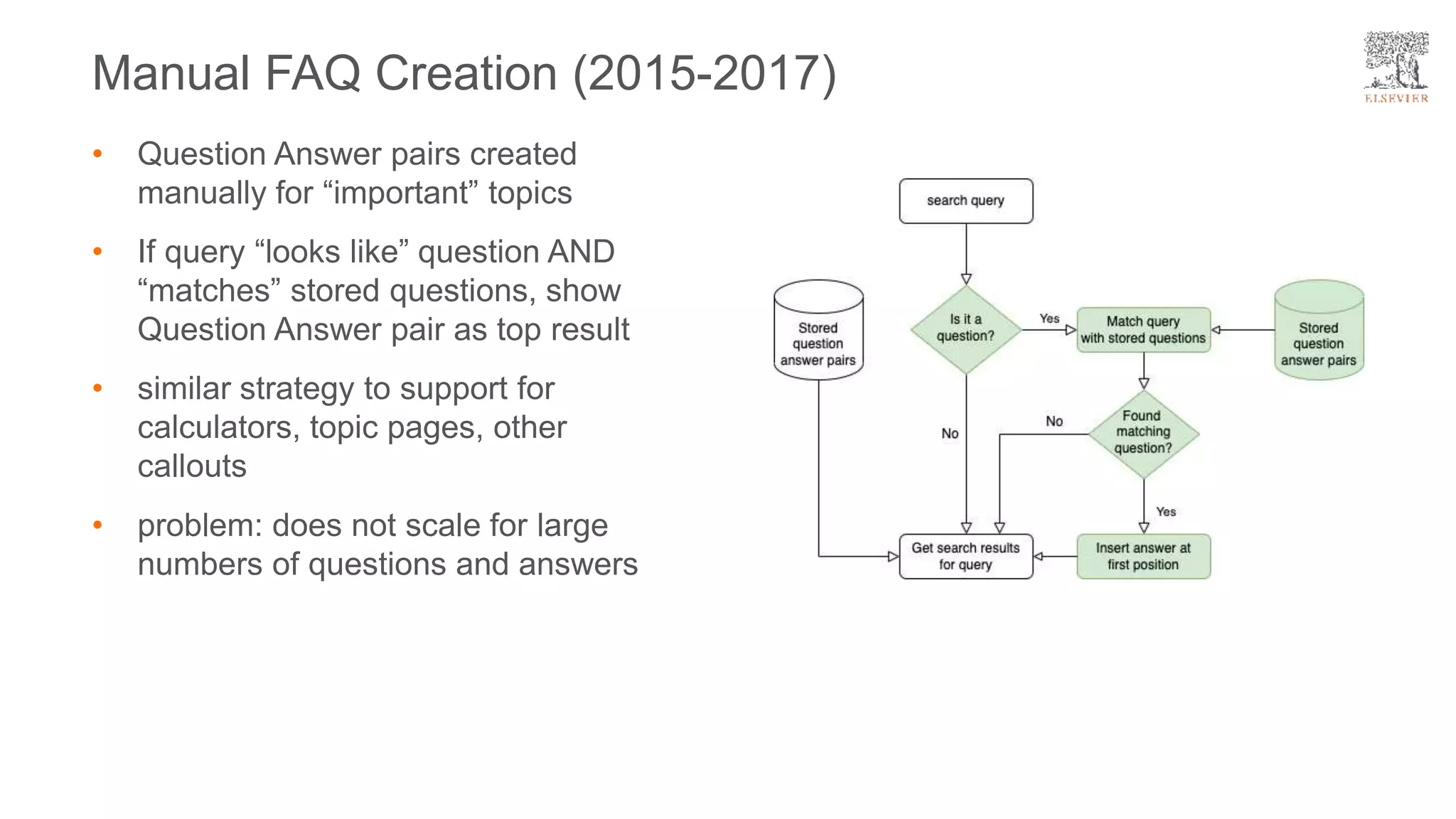

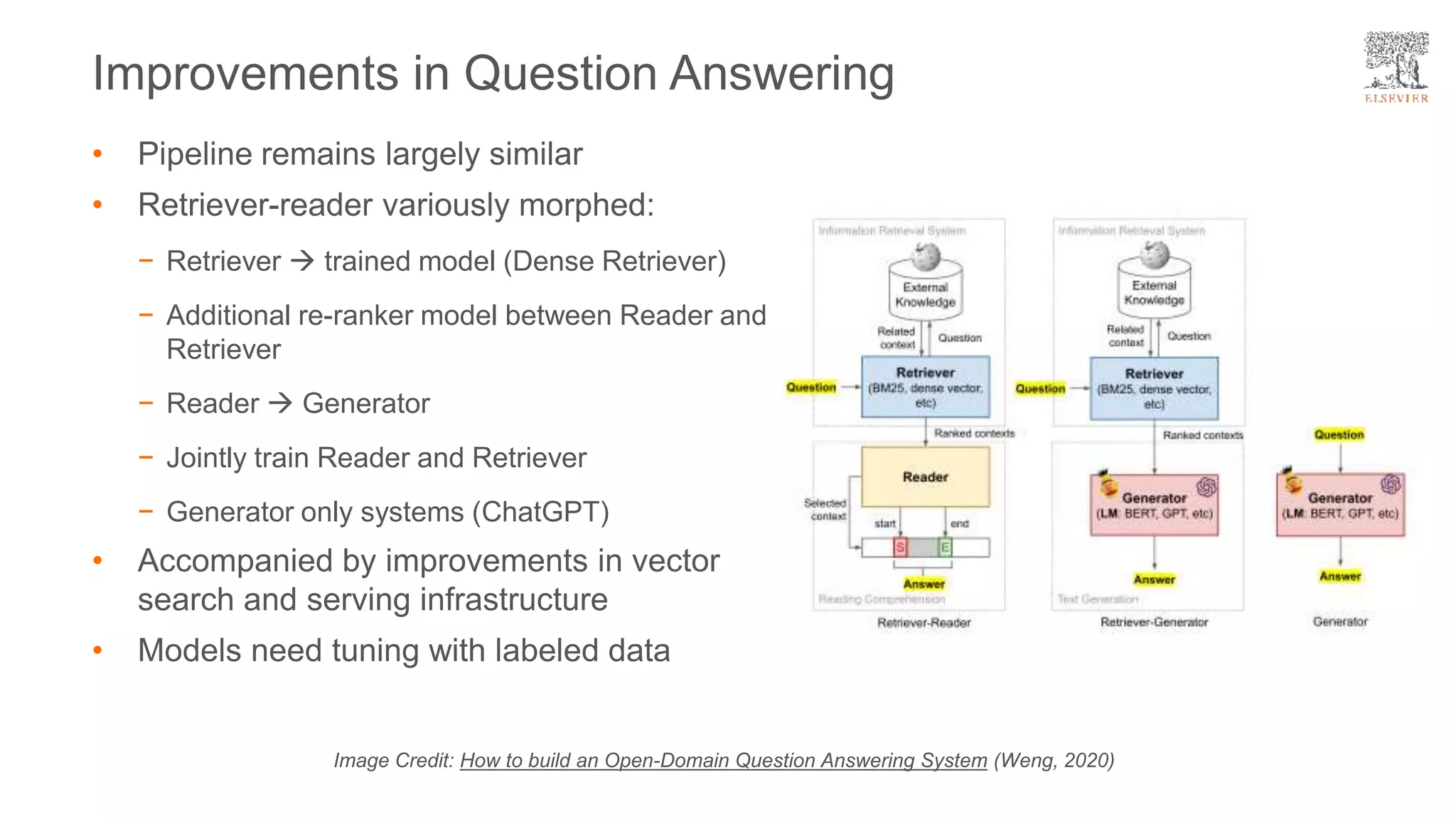

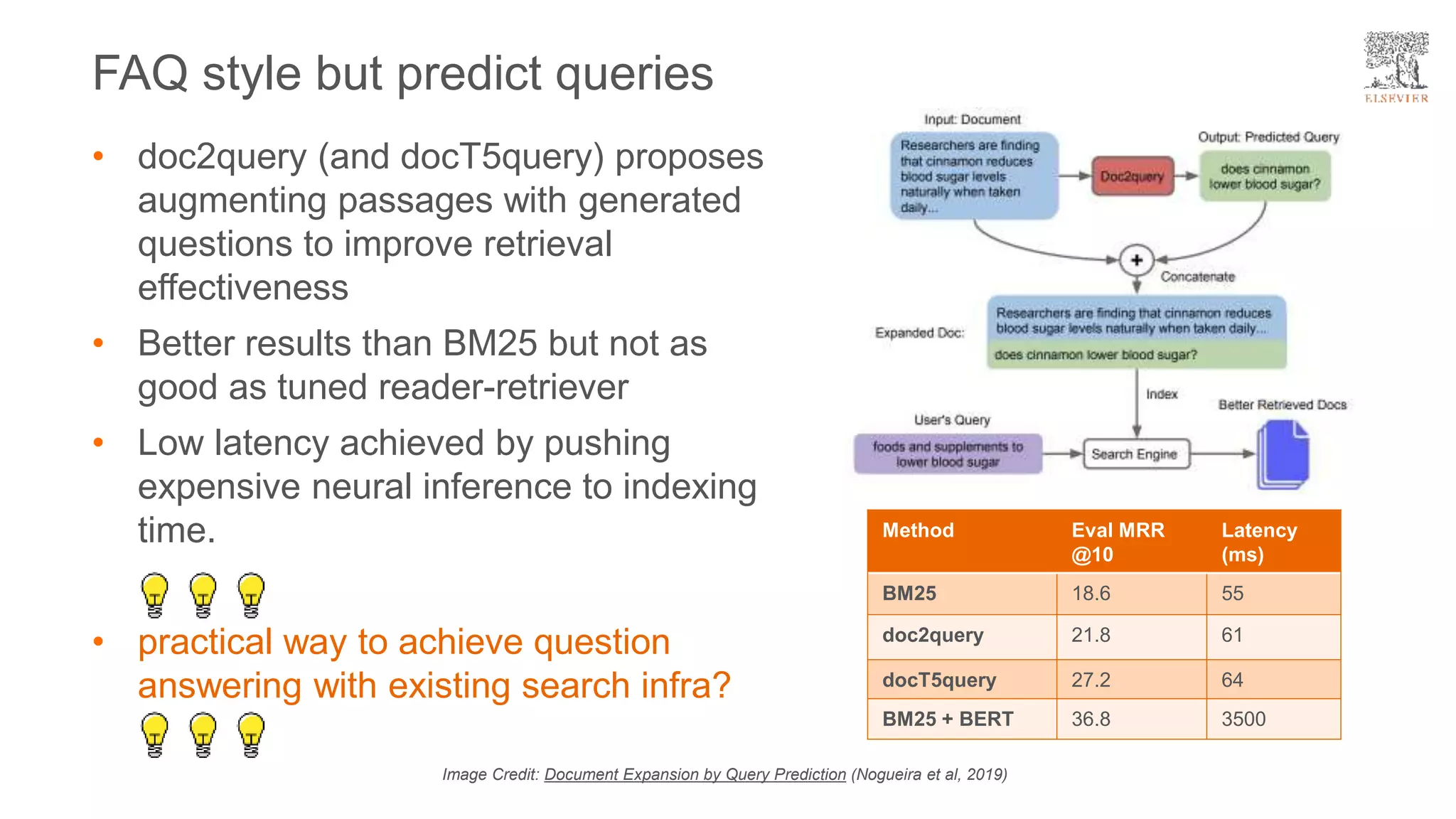

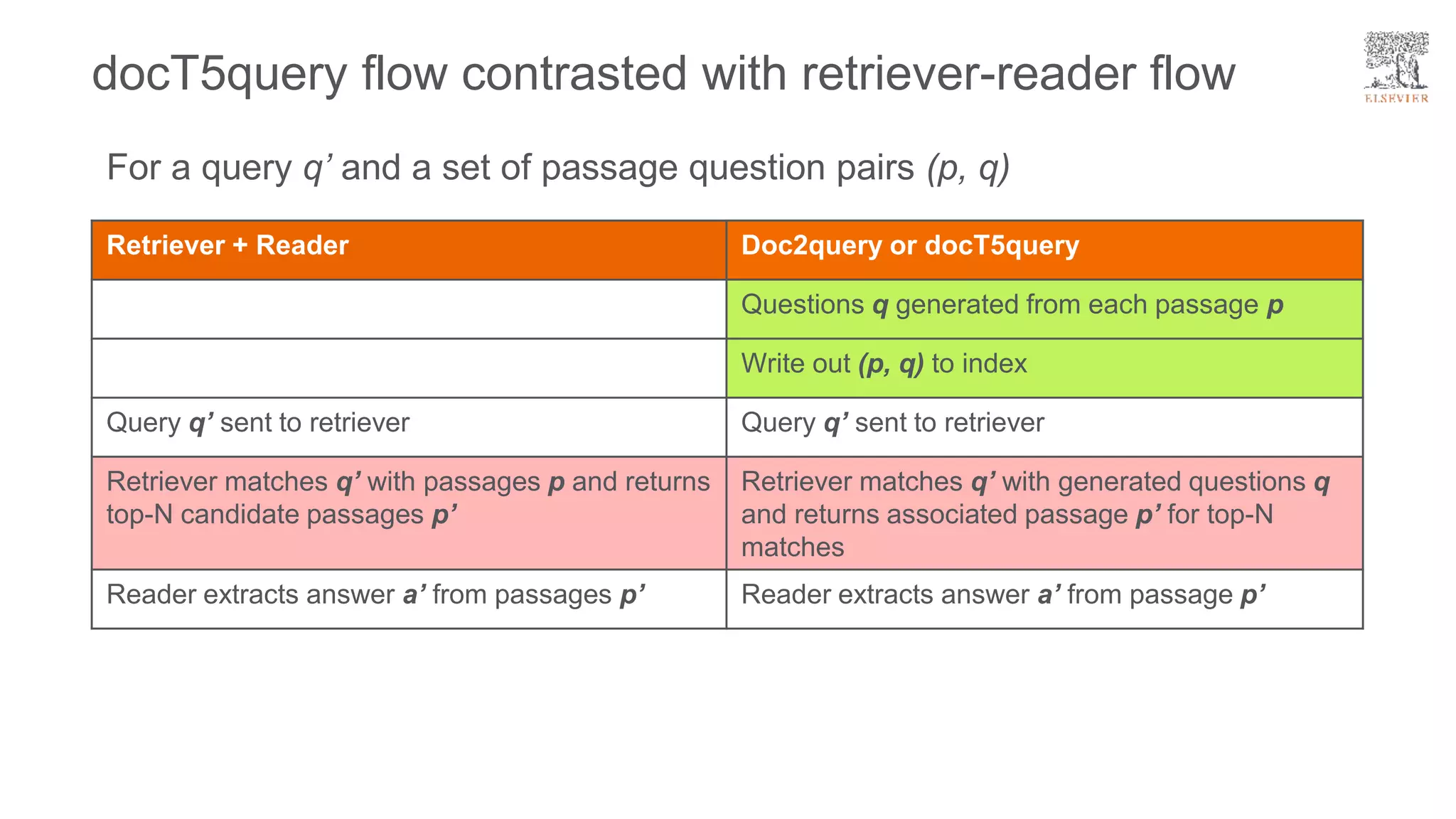

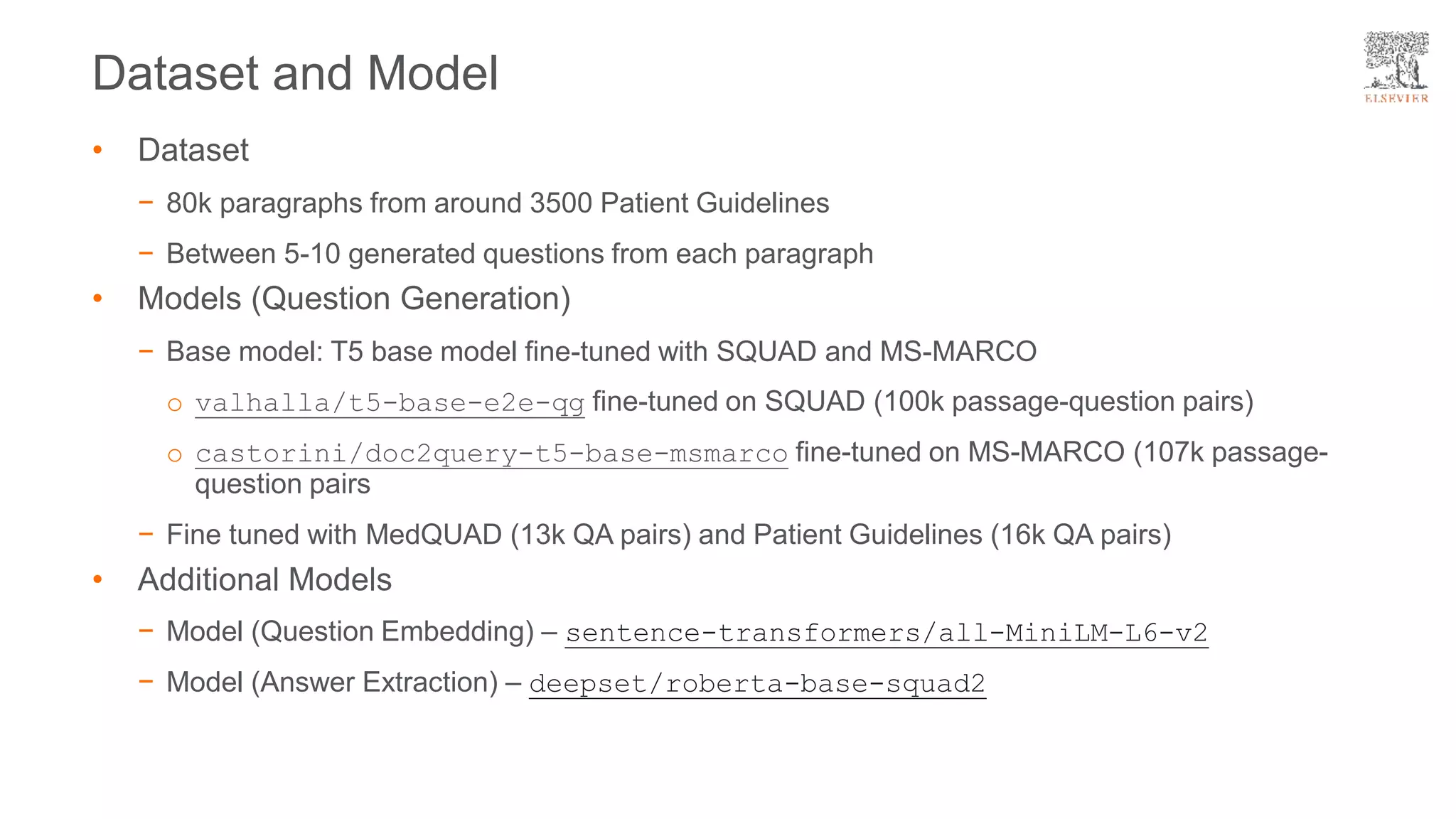

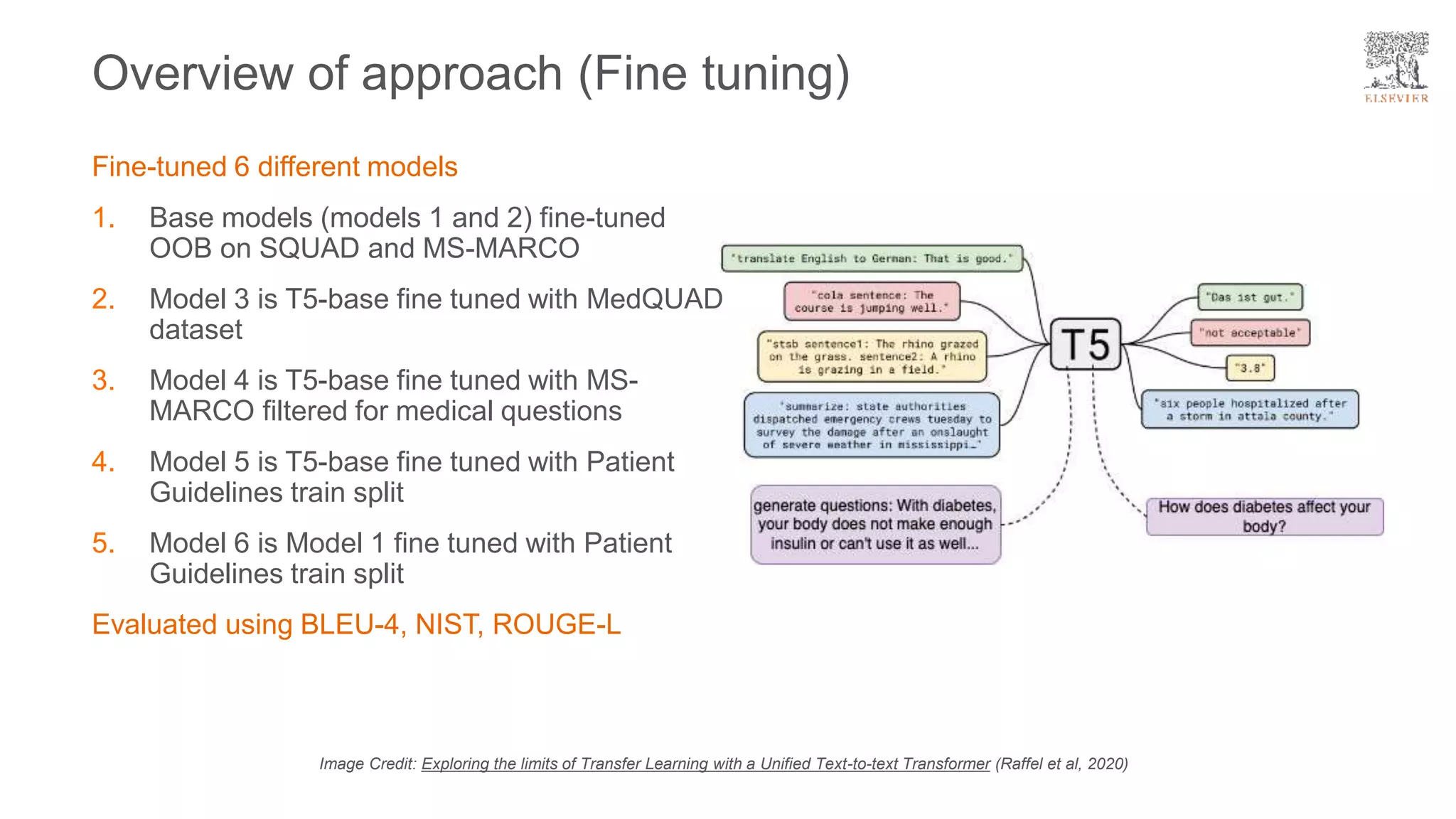

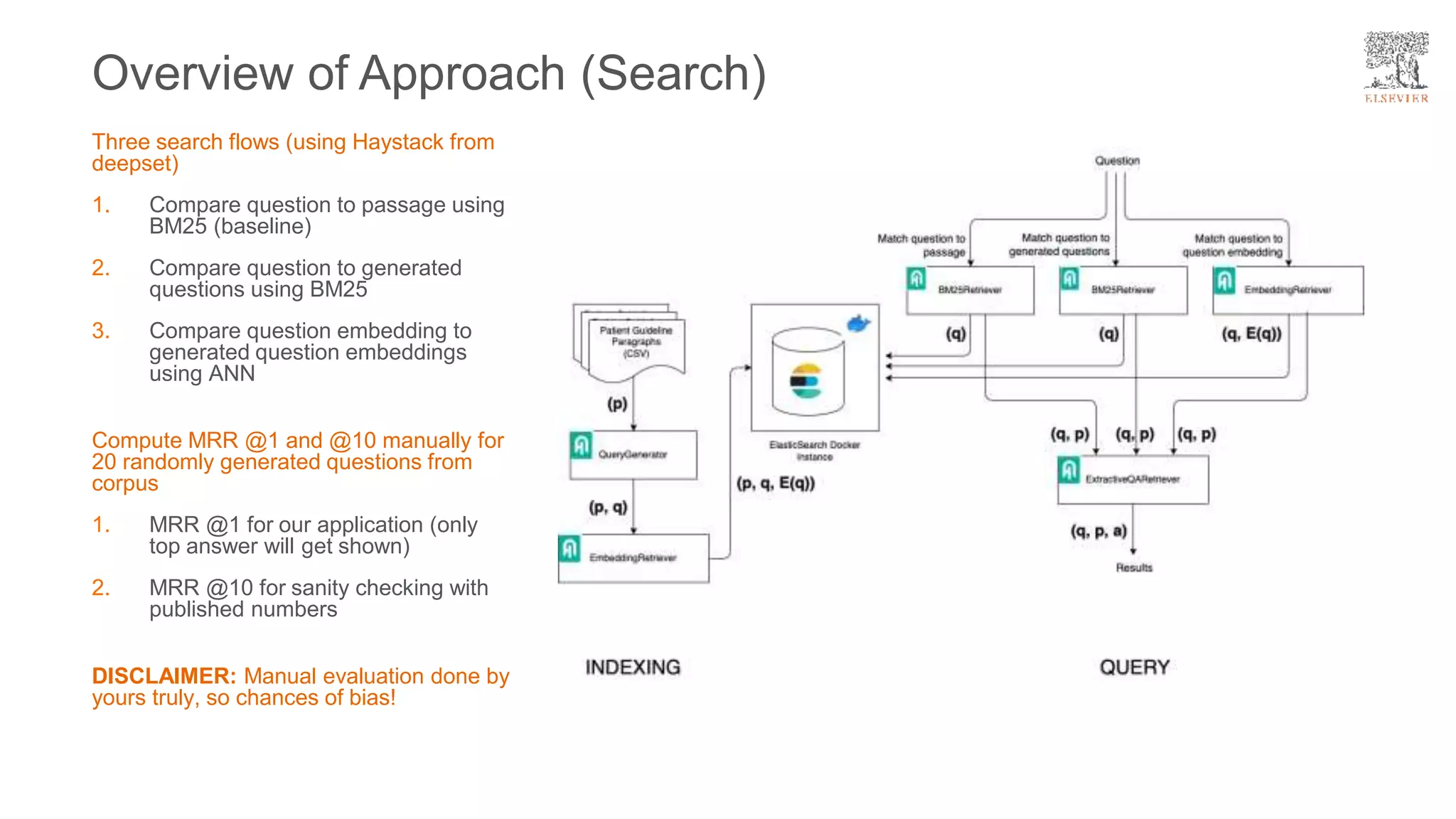

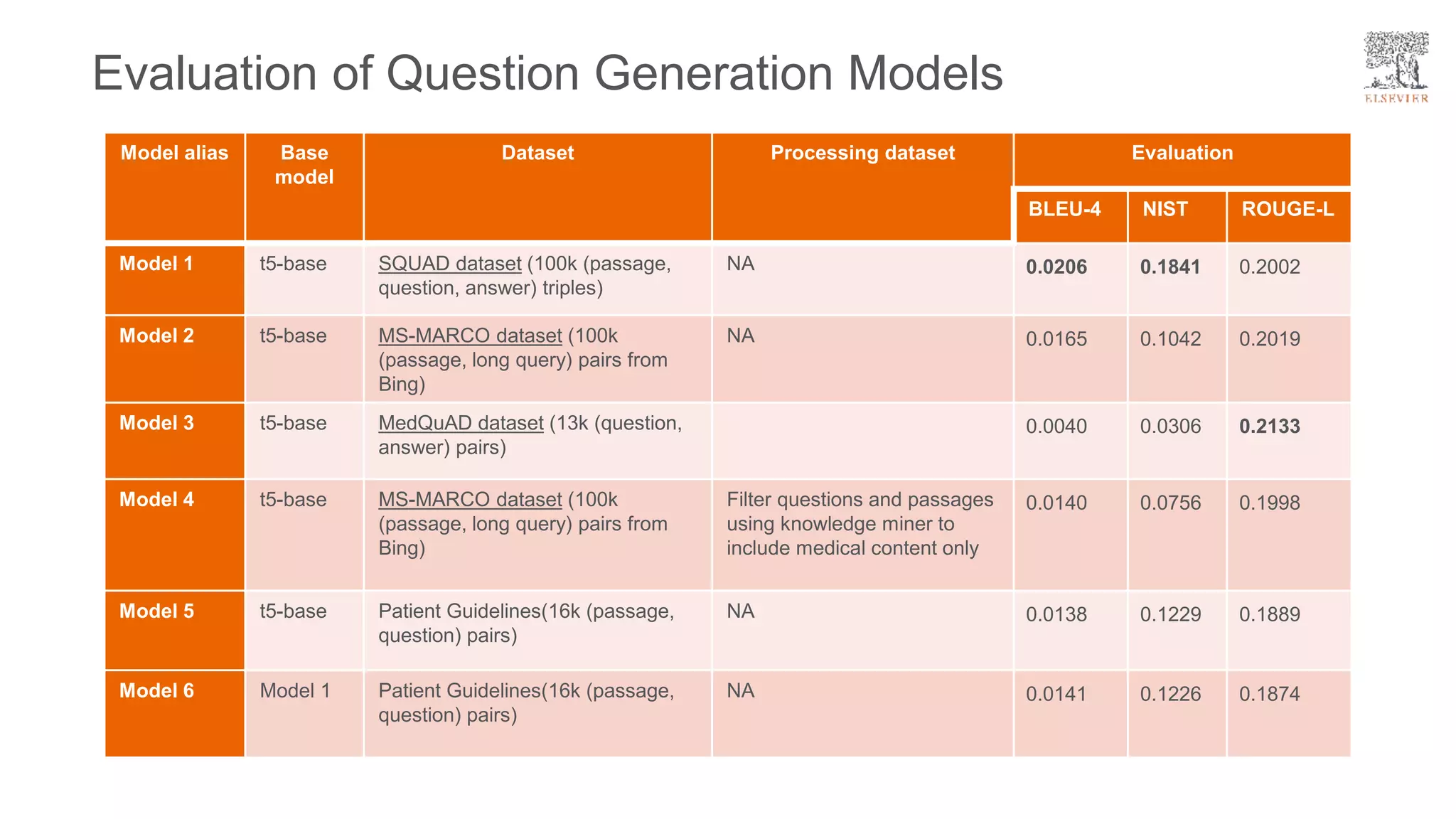

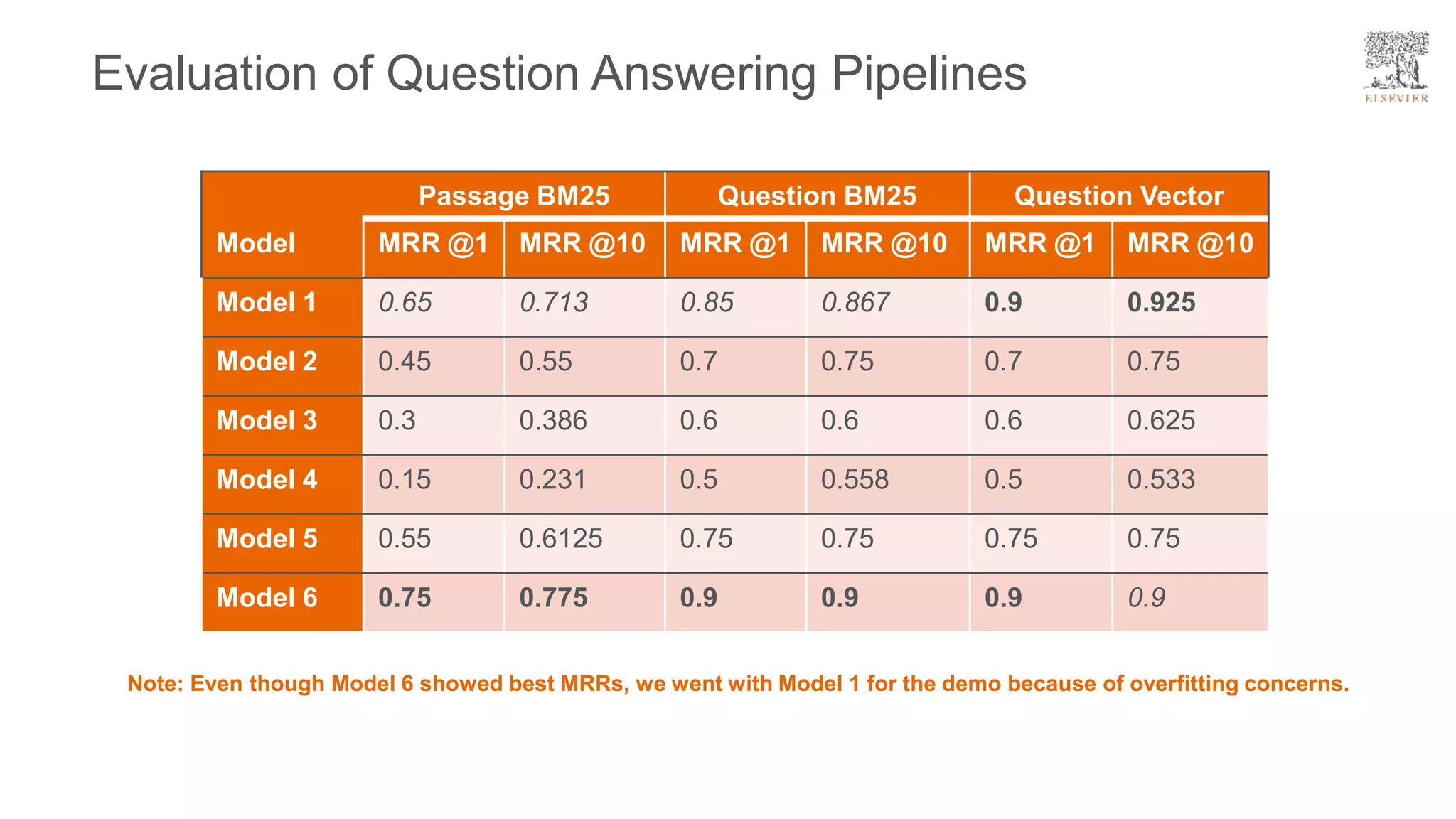

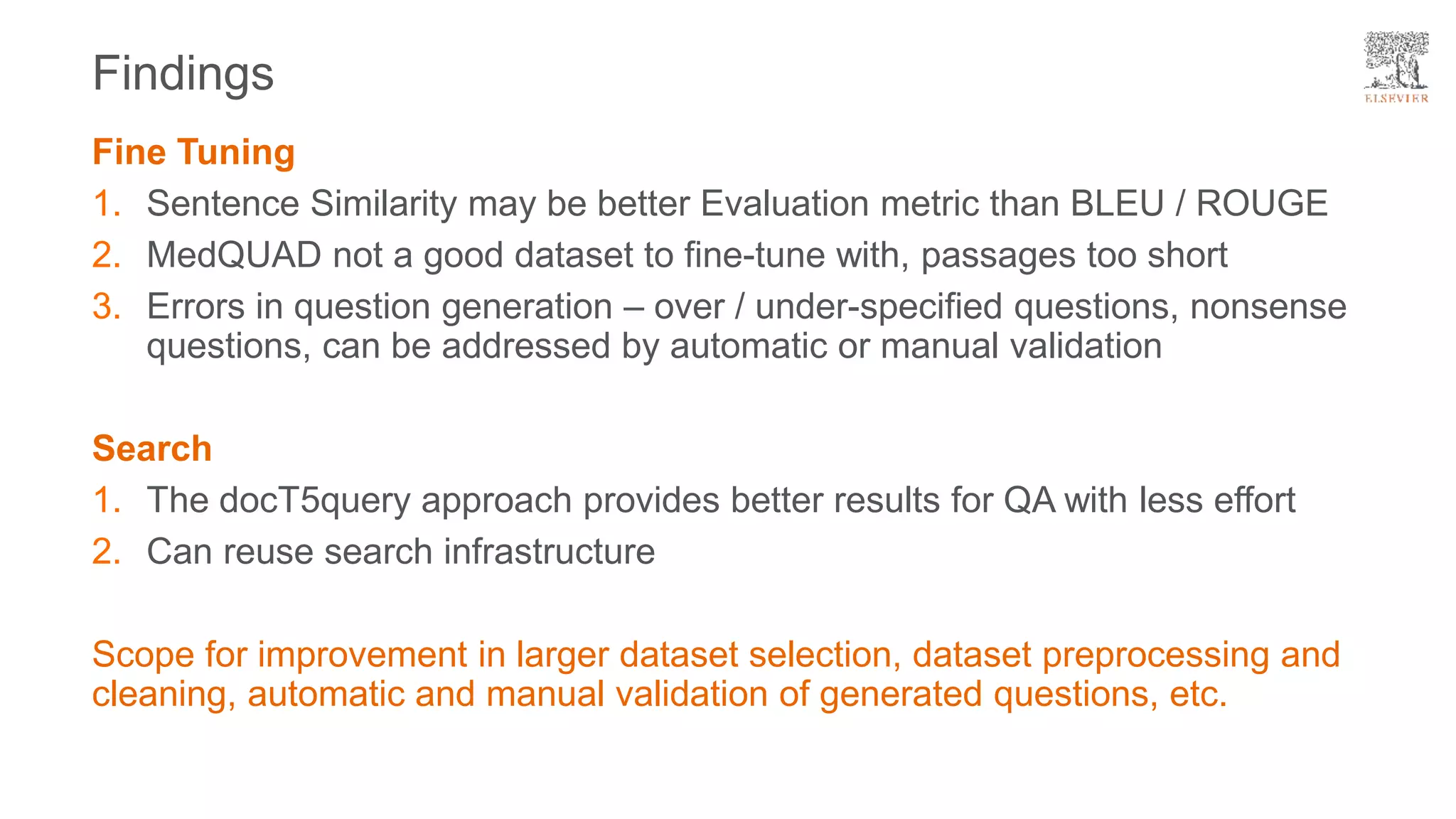

The document presents a framework for improving question answering systems by generating questions from medical documents, while addressing the scalability limits of traditional approaches. It details the models and methods applied and evaluates their performance. These include the docT5query approach, which expands each document with generated questions to improve retrieval effectiveness and reduce the need for labeled data. The key findings are that the models need to be fine-tuned and that the approach could support applications in clinical and educational settings.
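The document-expansion idea behind docT5query can be illustrated with a small sketch. It makes several assumptions. The generated questions are hardcoded in a hypothetical `GENERATED` table, standing in for the output of a fine-tuned T5 question generator. The documents, query, and IDs are invented for illustration. Plain BM25 is used as the retriever. Appending predicted questions to a document adds vocabulary that users tend to type, such as "high blood sugar", so a lexical retriever can match the document even when its original text never uses those words.

```python
import math
import re
from collections import Counter

# Hypothetical toy corpus (illustrative only, not data from the document).
DOCS = {
    "d1": "Metformin is a first-line oral therapy for type 2 diabetes.",
    "d2": "Amoxicillin is a penicillin antibiotic used for bacterial infections.",
}

# Hypothetical stand-in for questions a fine-tuned T5 model might generate.
GENERATED = {
    "d1": ["what drug treats high blood sugar", "what is metformin used for"],
    "d2": ["what antibiotic treats strep throat"],
}

QUERY = "drug for high blood sugar"


def tokenize(text: str) -> list[str]:
    # Lowercase and split on any non-alphanumeric character.
    return re.findall(r"[a-z0-9]+", text.lower())


def expand(doc: str, questions: list[str]) -> str:
    # Document expansion: append the generated questions to the original text.
    return doc + " " + " ".join(questions)


def bm25_scores(query: str, docs: list[str], k1: float = 1.2, b: float = 0.75) -> list[float]:
    # Standard Okapi BM25 over the given documents.
    tokenized = [tokenize(d) for d in docs]
    n = len(tokenized)
    avgdl = sum(len(t) for t in tokenized) / n
    df = Counter()
    for toks in tokenized:
        df.update(set(toks))  # document frequency: count each term once per doc
    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        dl = len(toks)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * dl / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


def rank(query: str, corpus: dict[str, str]) -> list[str]:
    # Return document IDs sorted by descending BM25 score.
    ids = list(corpus)
    scores = bm25_scores(query, [corpus[i] for i in ids])
    return [i for _, i in sorted(zip(scores, ids), reverse=True)]


original_ranking = rank(QUERY, DOCS)
expanded_corpus = {d: expand(text, GENERATED[d]) for d, text in DOCS.items()}
expanded_ranking = rank(QUERY, expanded_corpus)

if __name__ == "__main__":
    print("original:", original_ranking)
    print("expanded:", expanded_ranking)
```

In this toy setup, the unexpanded corpus shares only the word "for" with the query, so the shorter, unrelated `d2` ranks first. After expansion, `d1` picks up "drug", "high", "blood", and "sugar" from its generated questions and moves to the top. Expansion happens once at indexing time, so query-time cost is unchanged. This is one commonly cited reason the approach scales well.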