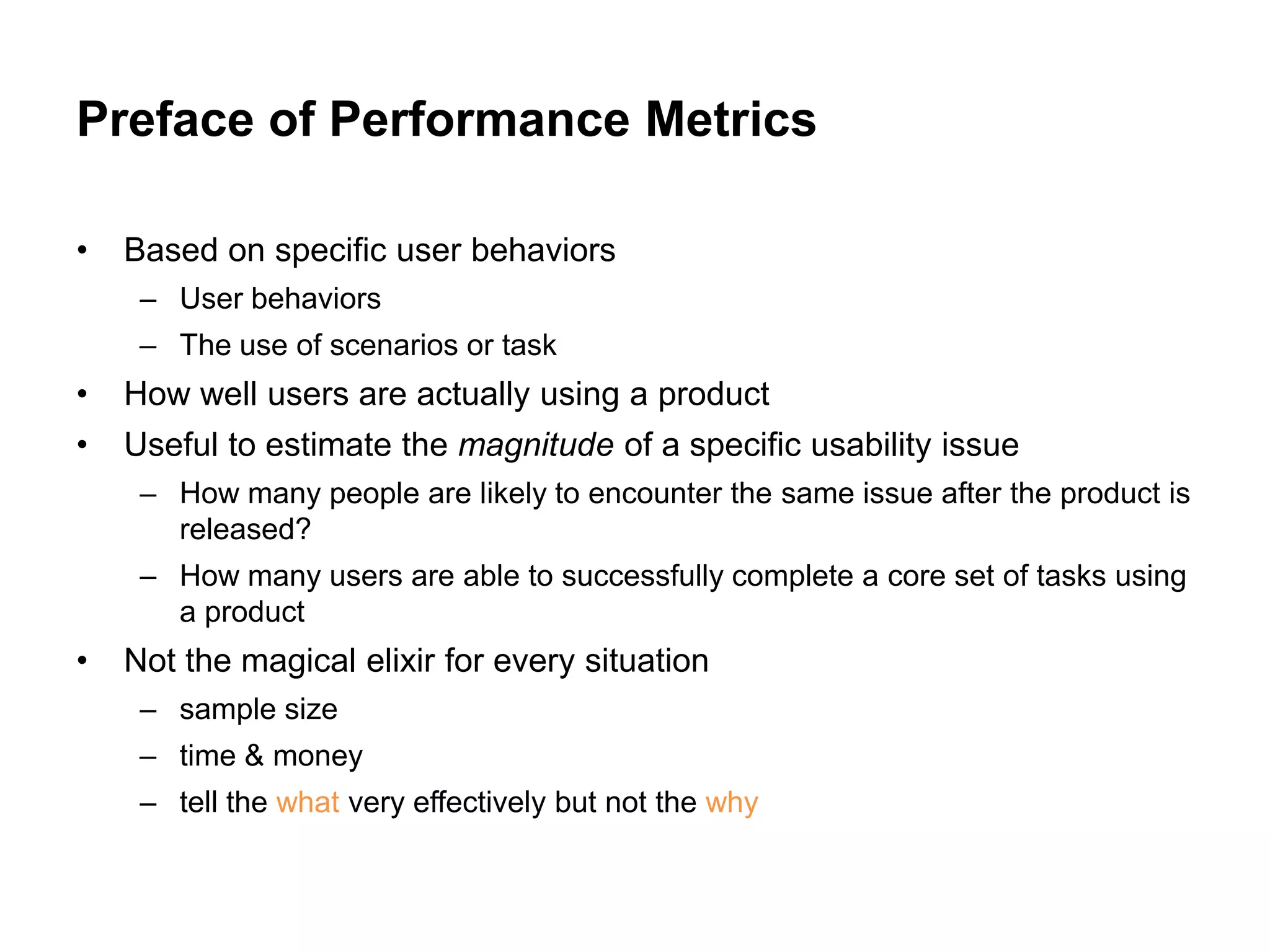

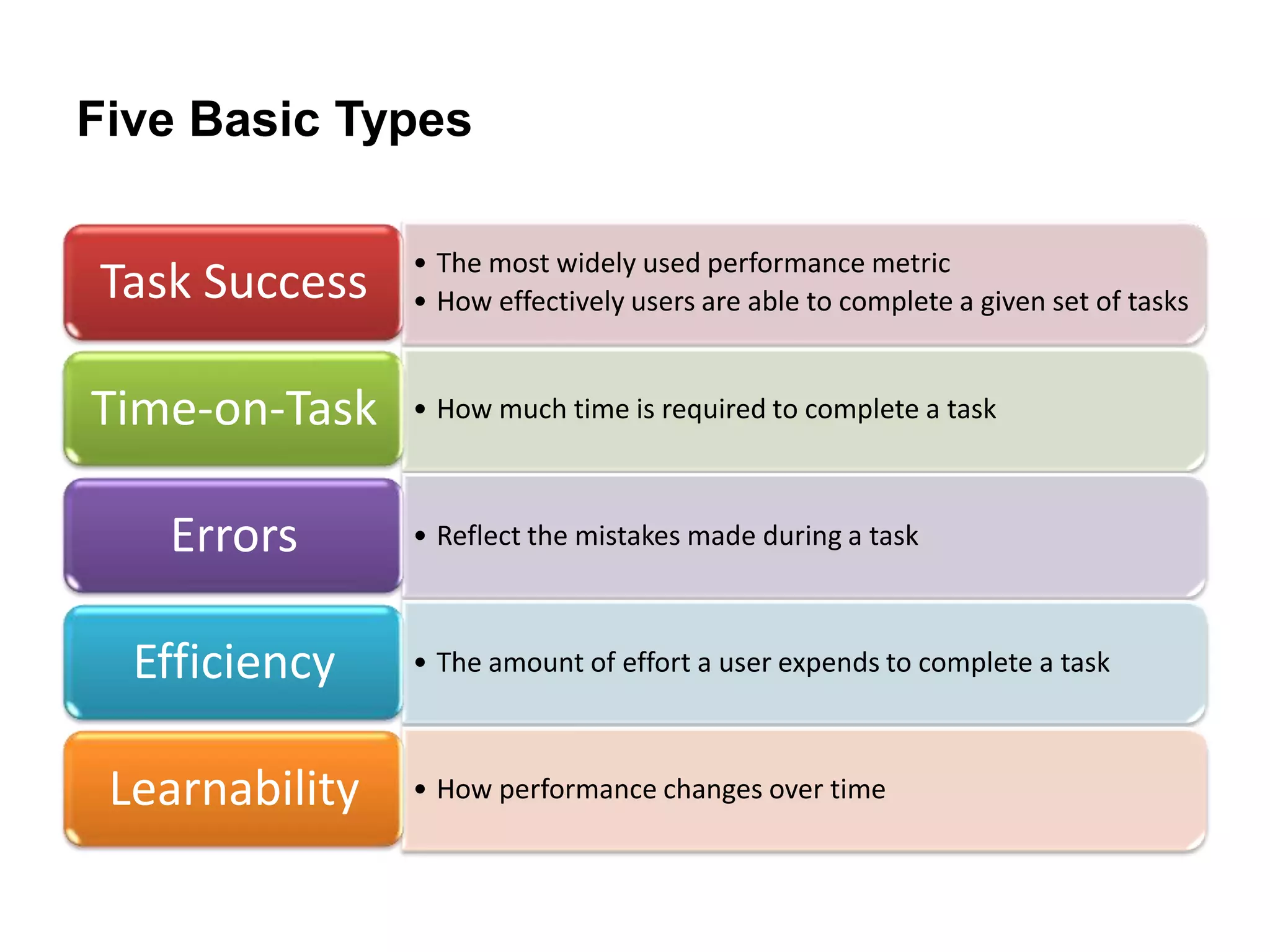

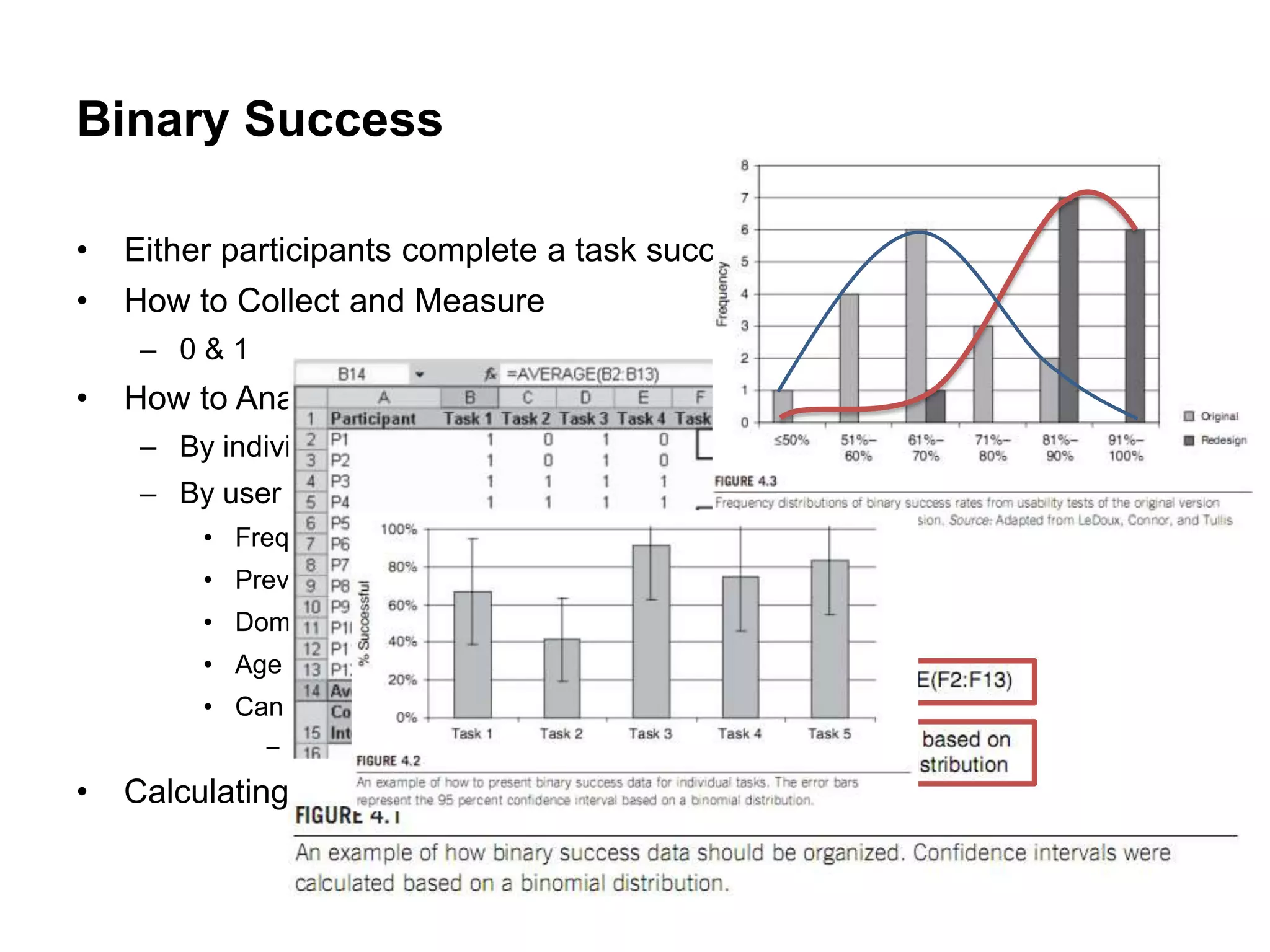

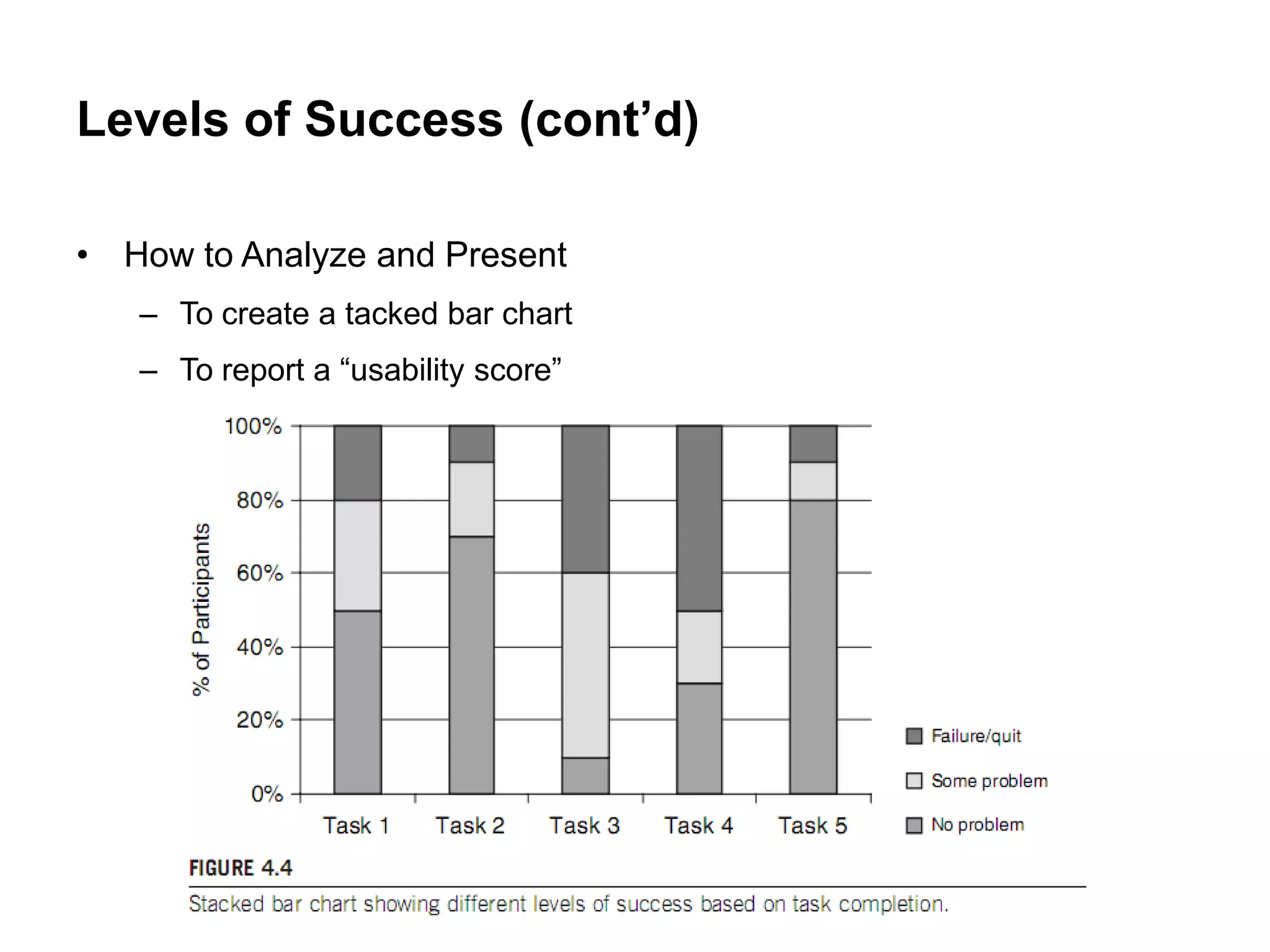

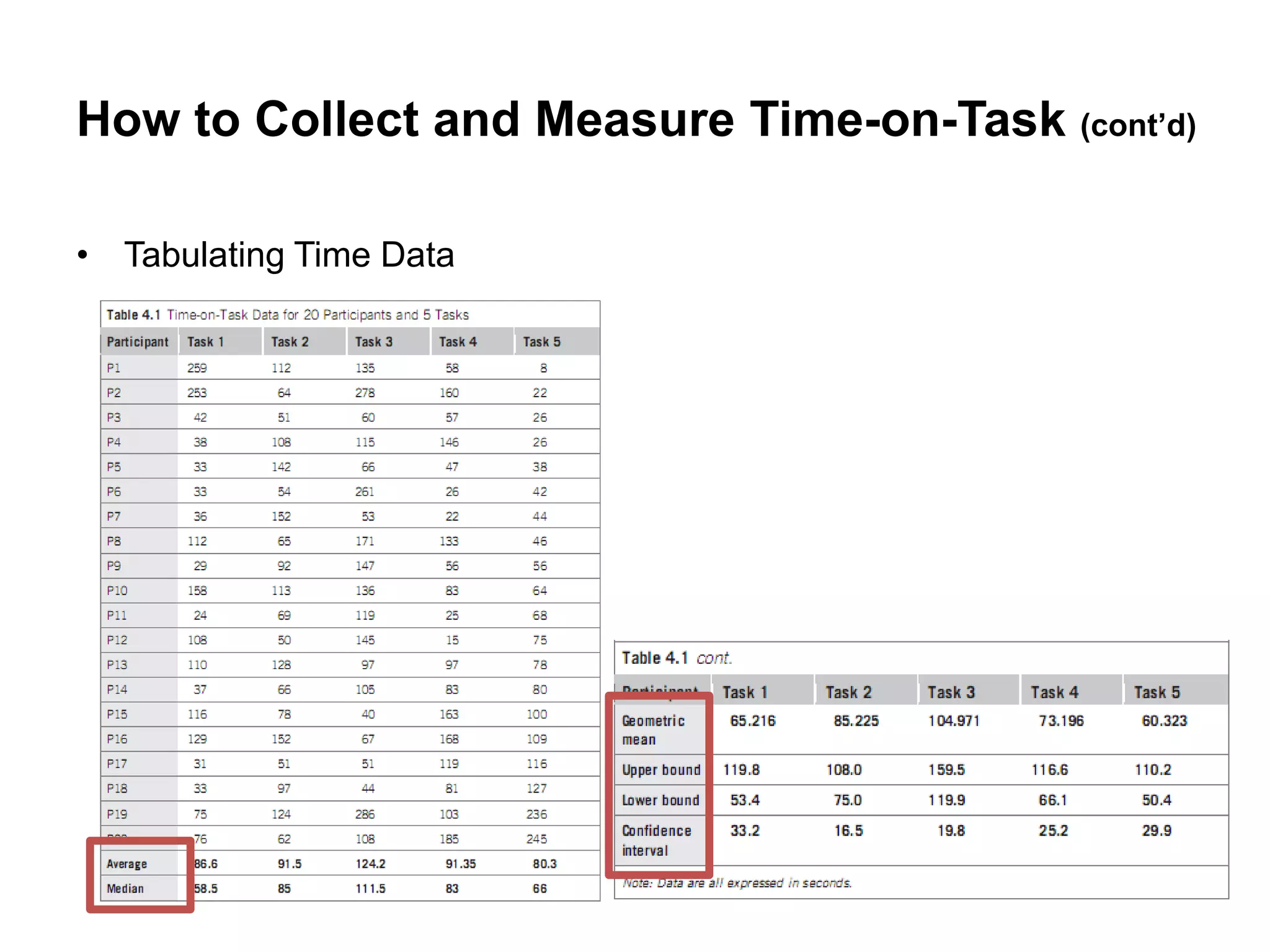

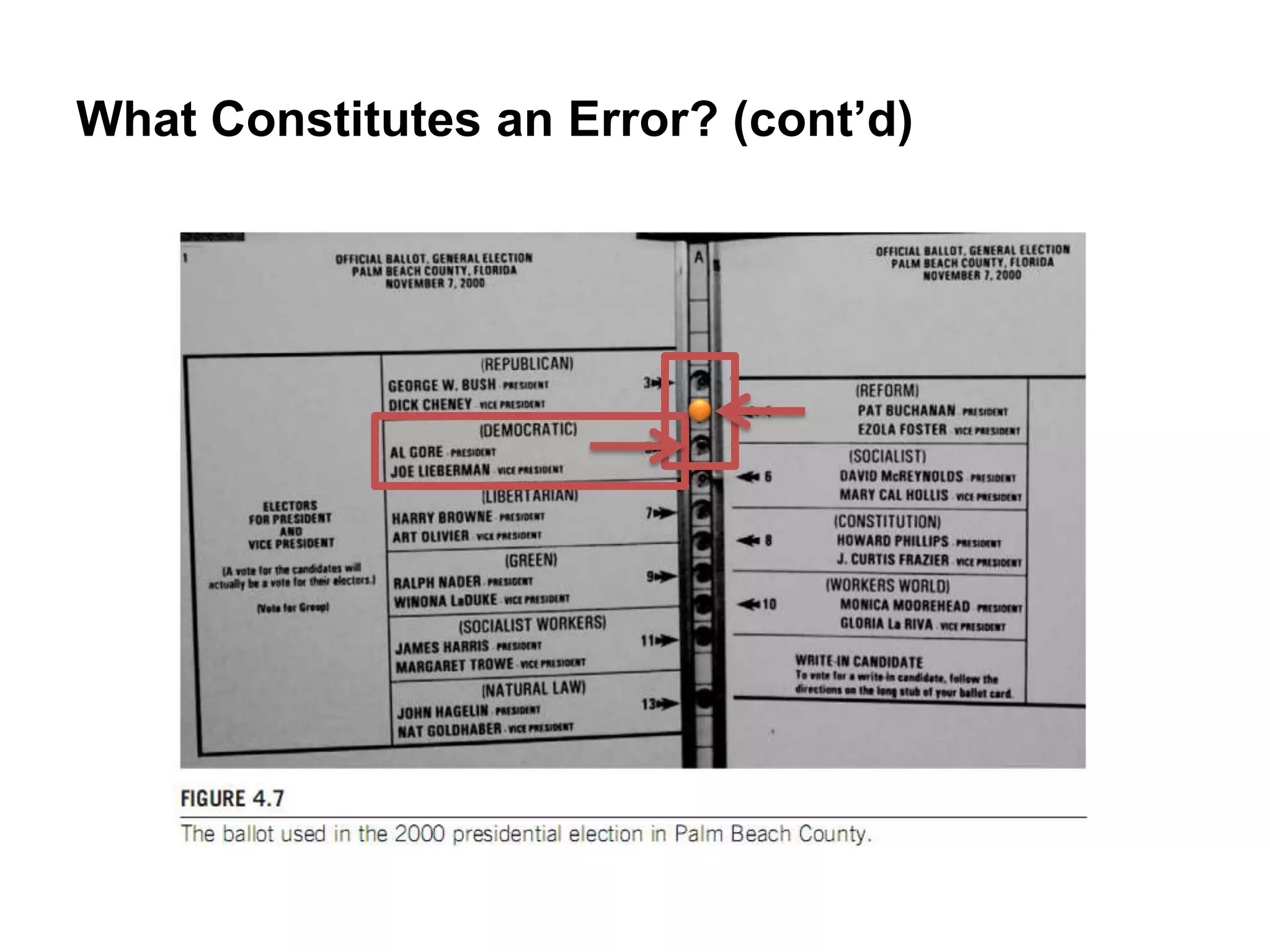

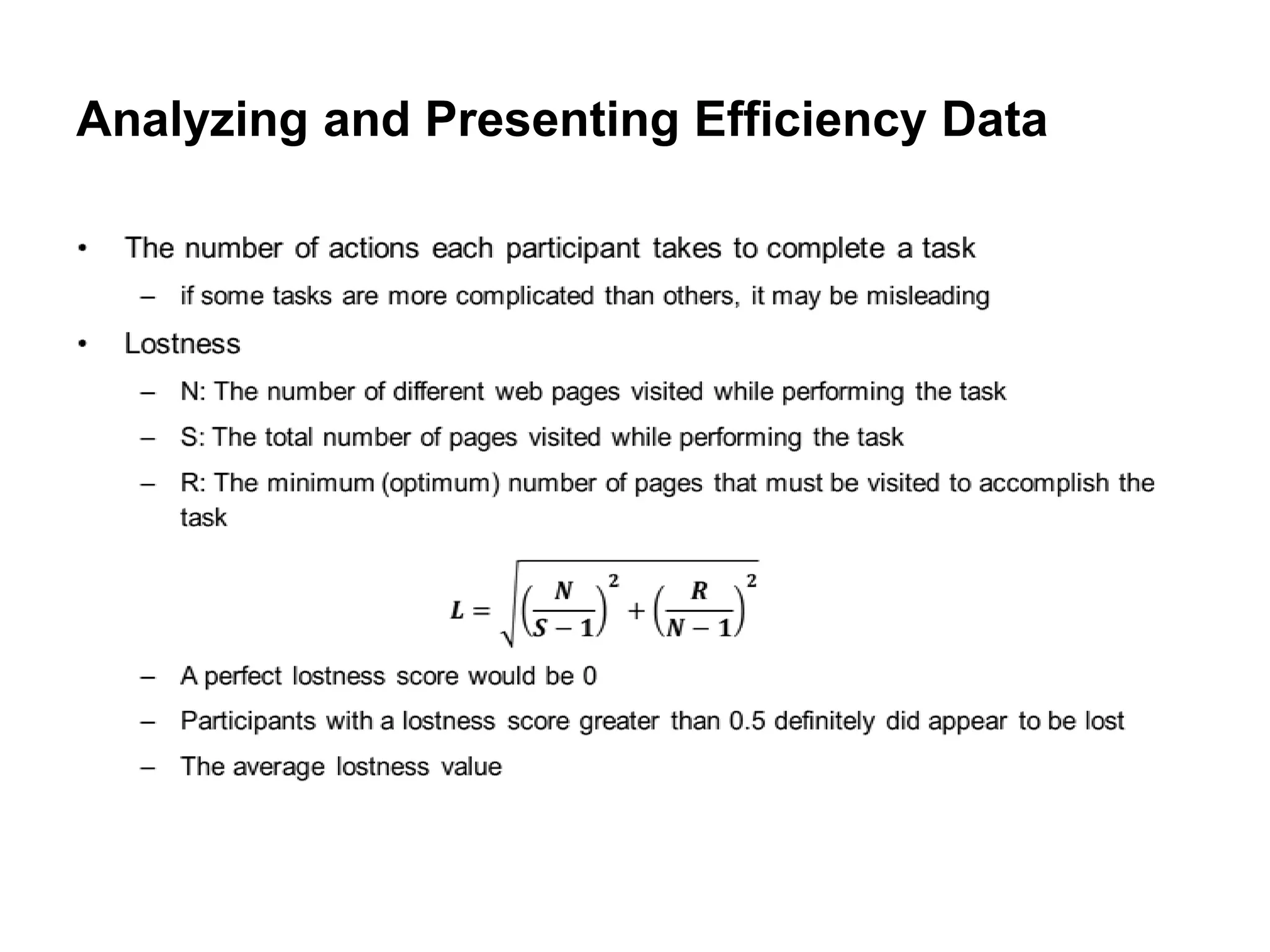

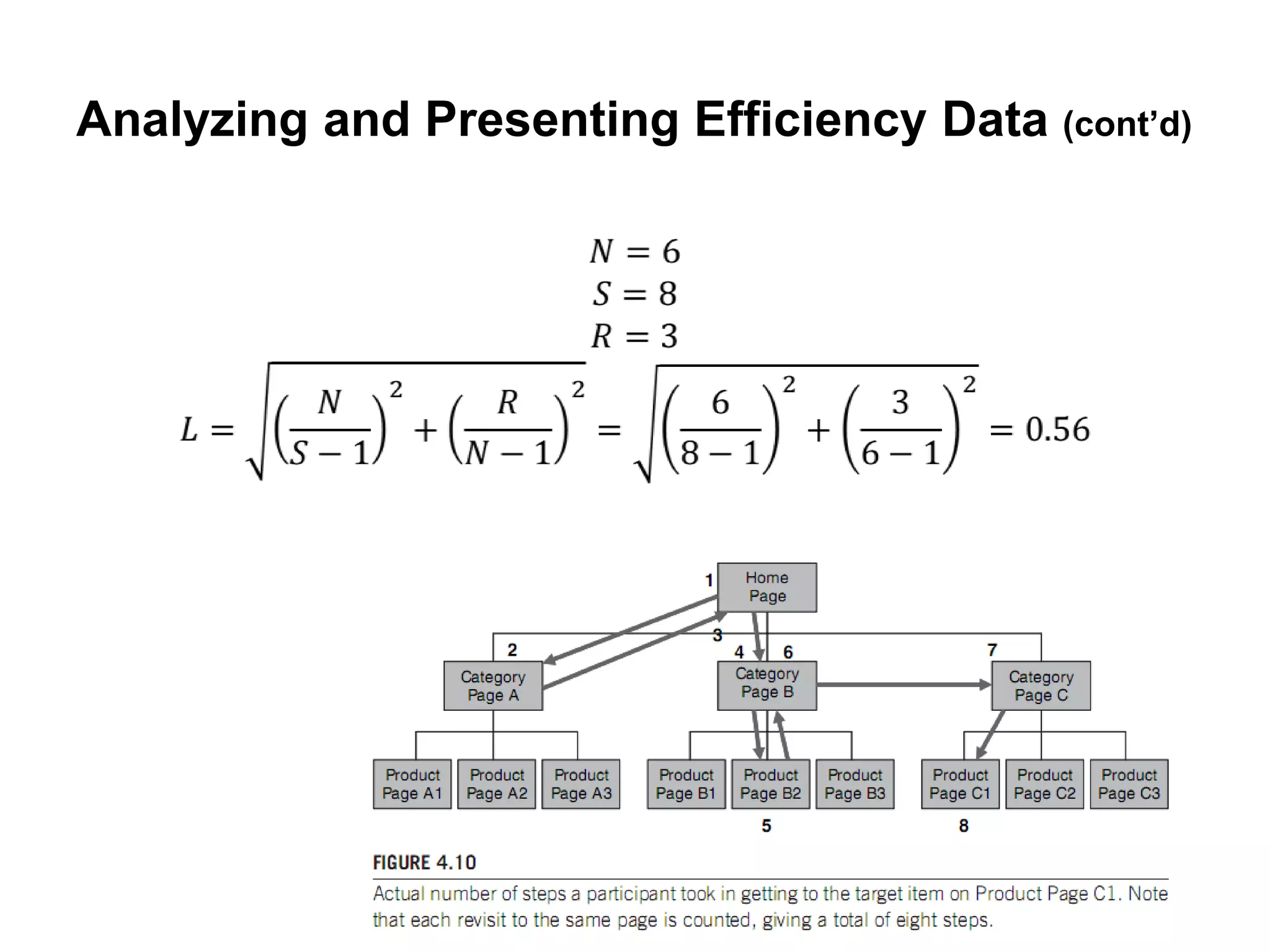

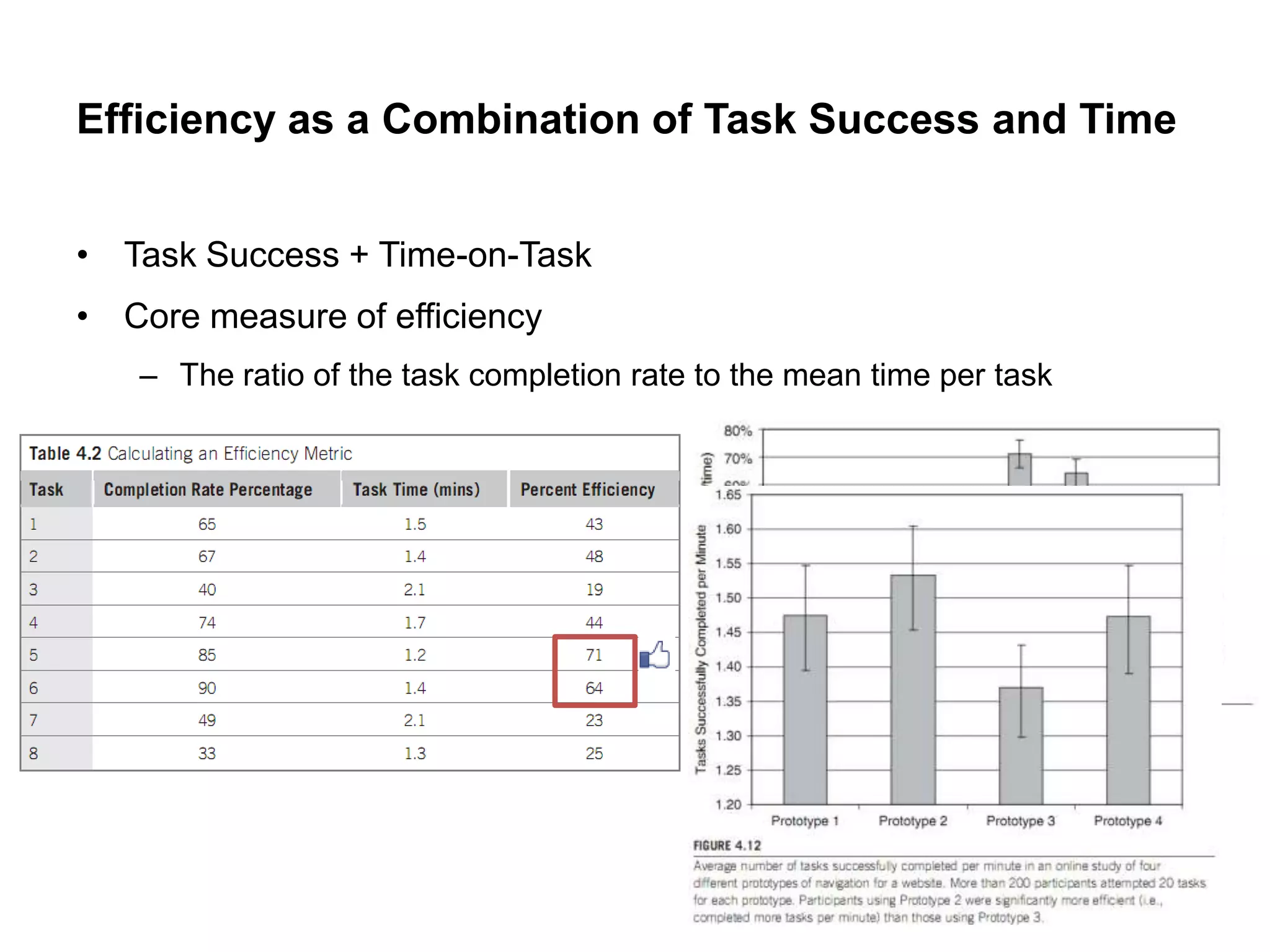

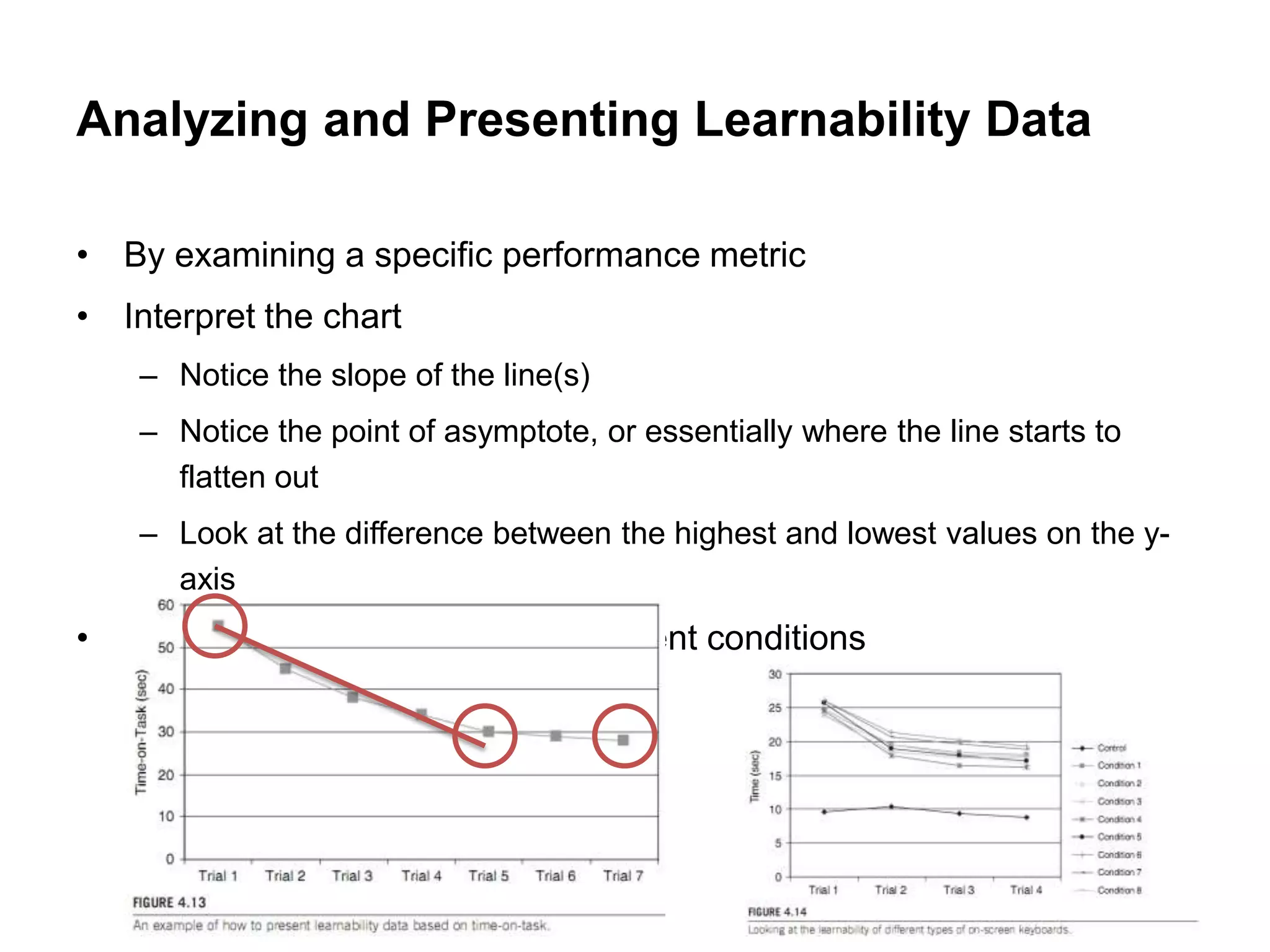

The document summarizes five performance metrics for evaluating usability: task success, time-on-task, errors, efficiency, and learnability. For each metric, it explains how to collect, measure, analyze, and present the data. Task success is measured either as binary success (the task was completed or it was not) or as levels of success. For time-on-task, the document outlines how to record and analyze timing data. For errors, it describes what constitutes an error and how to organize error data. Efficiency takes both time and effort into account. Learnability examines how performance changes over repeated trials.
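As a rough illustration of how these metrics might be computed from raw session data, here is a minimal Python sketch. The `Observation` record, the function names, and the sample values are all hypothetical and do not come from the document. In particular, expressing efficiency as successes per minute and learnability as mean time per trial are assumptions chosen for simplicity; the document may define them differently.

```python
from dataclasses import dataclass
from statistics import mean, median


@dataclass
class Observation:
    """One participant's attempt at a task (hypothetical record layout)."""
    participant: str
    trial: int        # 1 for the first attempt, 2 for the second, ...
    success: bool     # binary task success
    seconds: float    # time-on-task
    errors: int       # number of errors observed


def success_rate(obs):
    """Share of attempts that were completed successfully."""
    return sum(o.success for o in obs) / len(obs)


def median_time(obs):
    """Median time-on-task; the median is less sensitive to slow outliers."""
    return median(o.seconds for o in obs)


def mean_errors(obs):
    """Average number of errors per attempt."""
    return mean(o.errors for o in obs)


def successes_per_minute(obs):
    """Assumed efficiency measure: successful attempts per minute spent."""
    total_minutes = sum(o.seconds for o in obs) / 60
    return sum(o.success for o in obs) / total_minutes


def mean_time_by_trial(obs):
    """Assumed learnability view: mean time-on-task for each trial number."""
    by_trial = {}
    for o in obs:
        by_trial.setdefault(o.trial, []).append(o.seconds)
    return {trial: mean(times) for trial, times in sorted(by_trial.items())}


# Made-up sample data, for illustration only.
SAMPLE = [
    Observation("P1", 1, True, 90.0, 2),
    Observation("P2", 1, False, 150.0, 4),
    Observation("P1", 2, True, 60.0, 1),
    Observation("P2", 2, True, 60.0, 1),
]

if __name__ == "__main__":
    print("success rate:", success_rate(SAMPLE))
    print("median time (s):", median_time(SAMPLE))
    print("mean errors:", mean_errors(SAMPLE))
    print("successes per minute:", successes_per_minute(SAMPLE))
    print("mean time by trial:", mean_time_by_trial(SAMPLE))
```

A falling mean time from one trial to the next, as in the sample data, is the kind of pattern a learnability analysis looks for. A real study would also need a measure of effort (for example, clicks or steps) to cover the effort side of efficiency.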