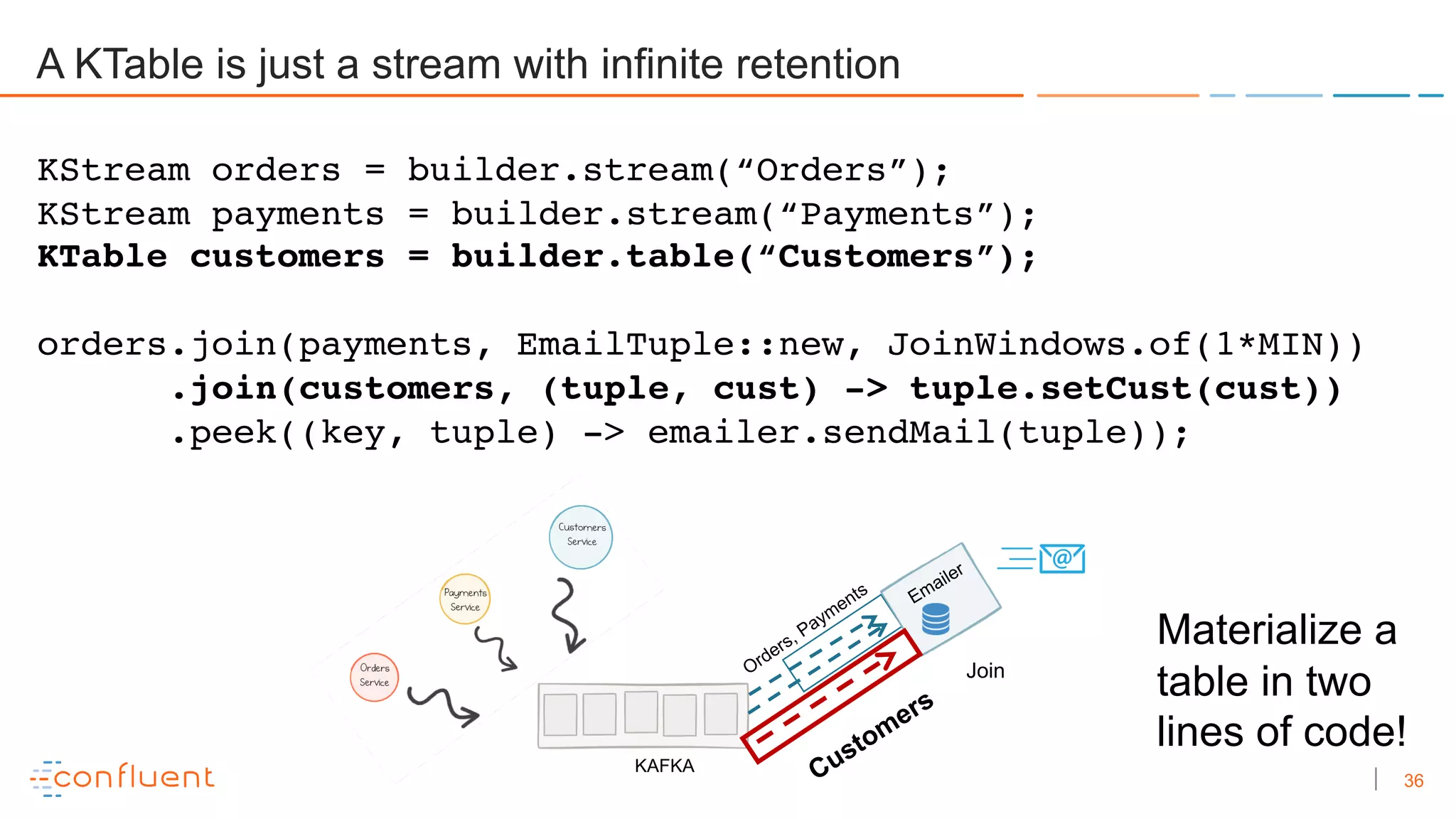

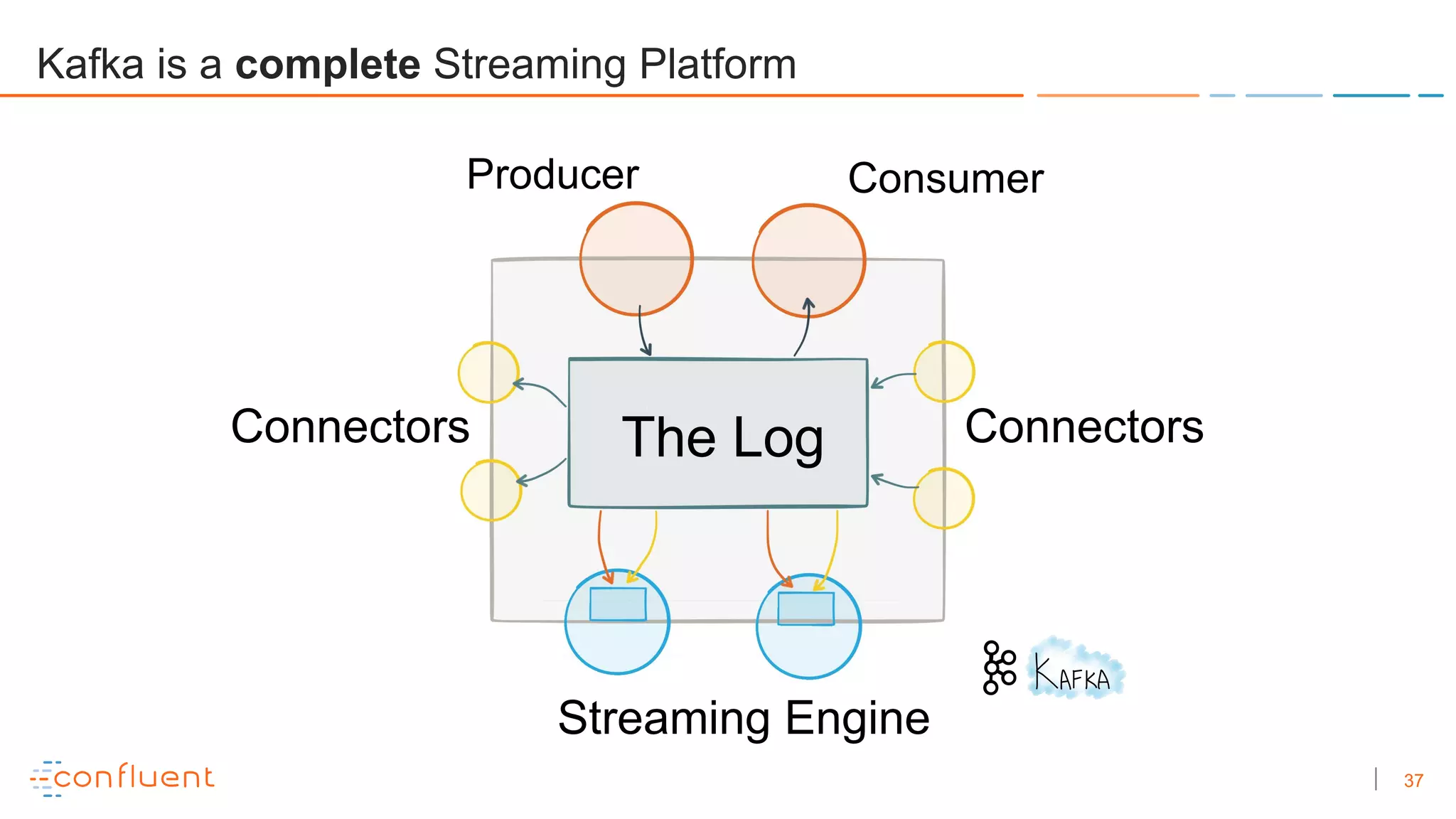

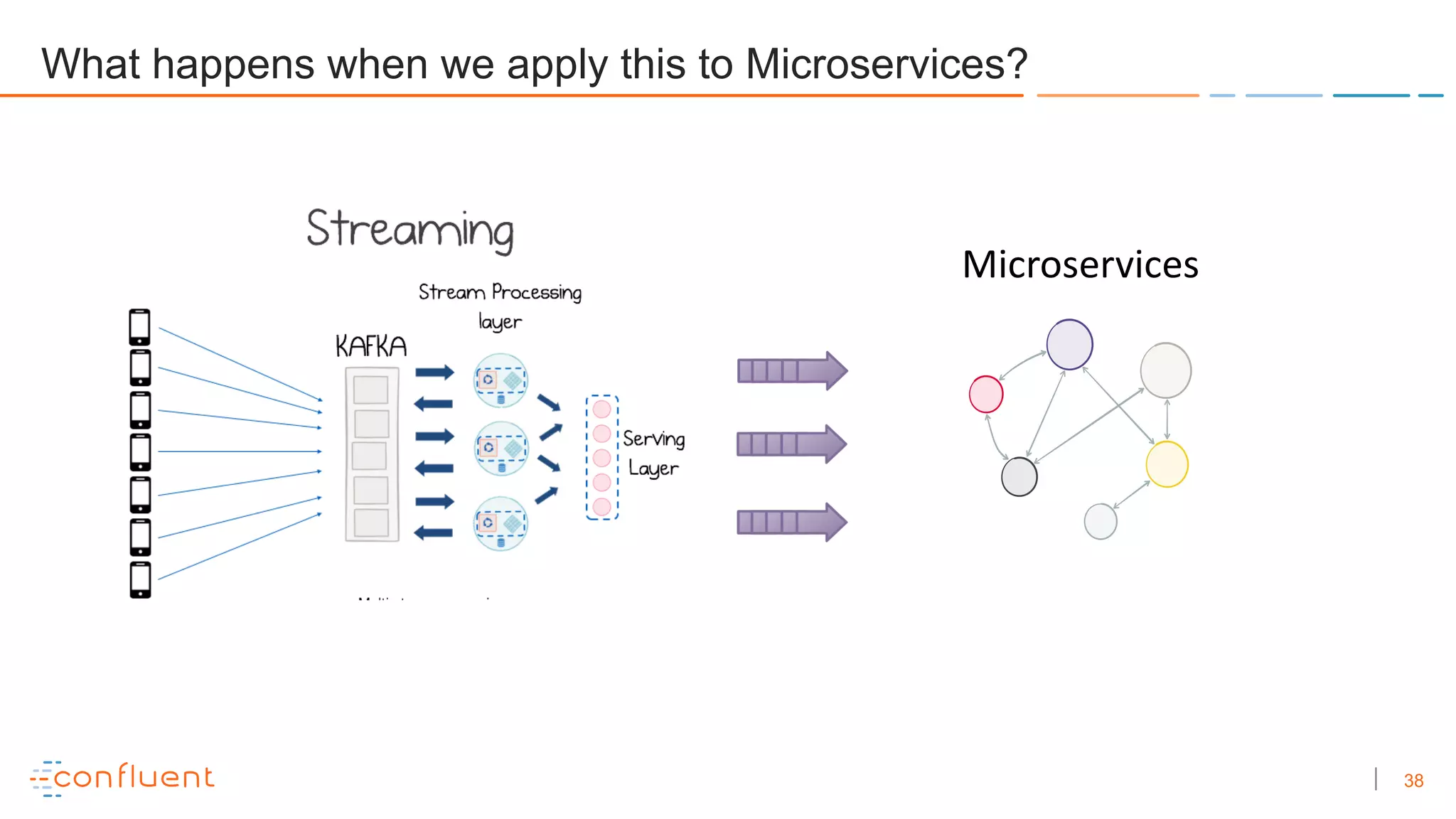

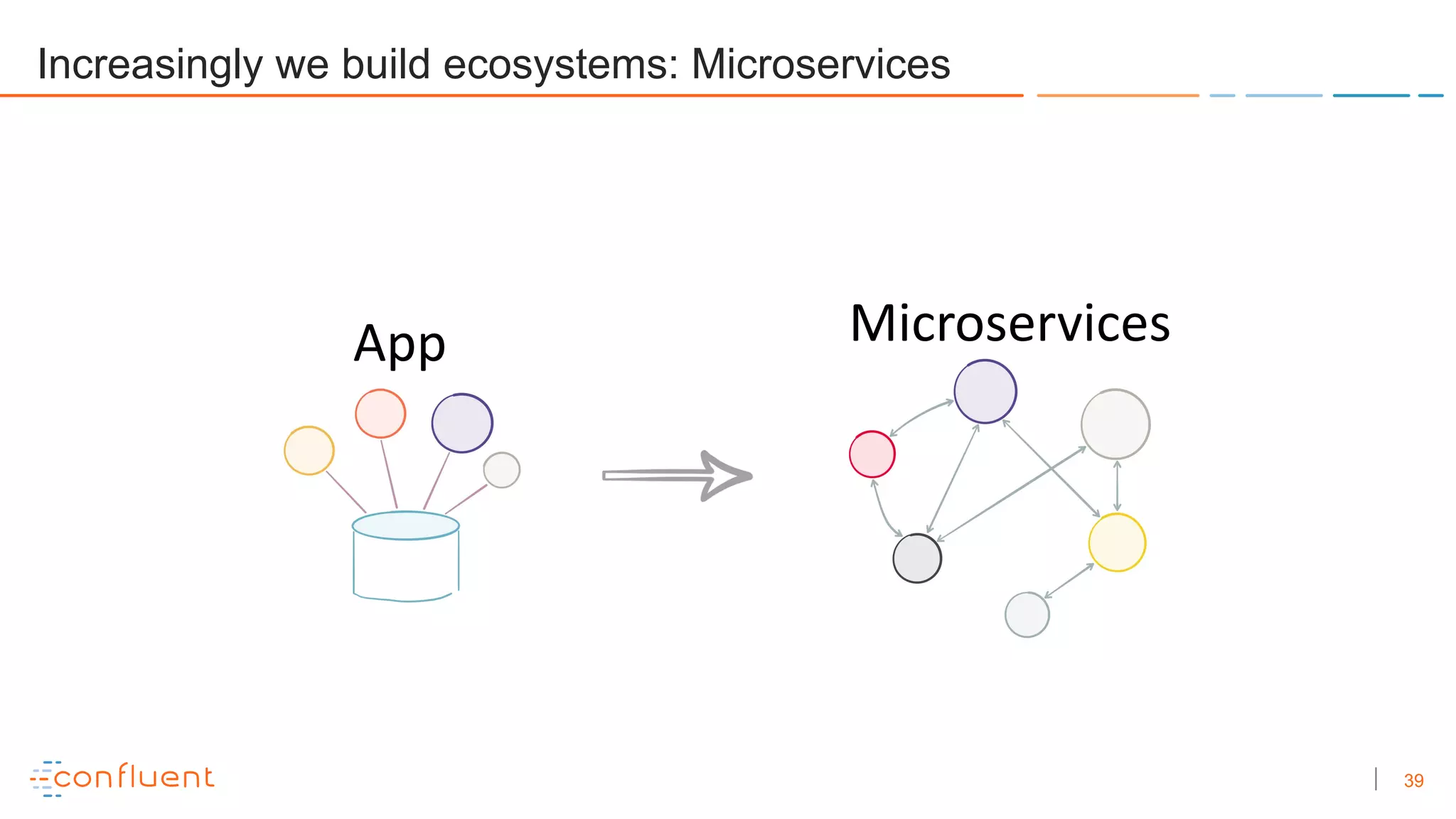

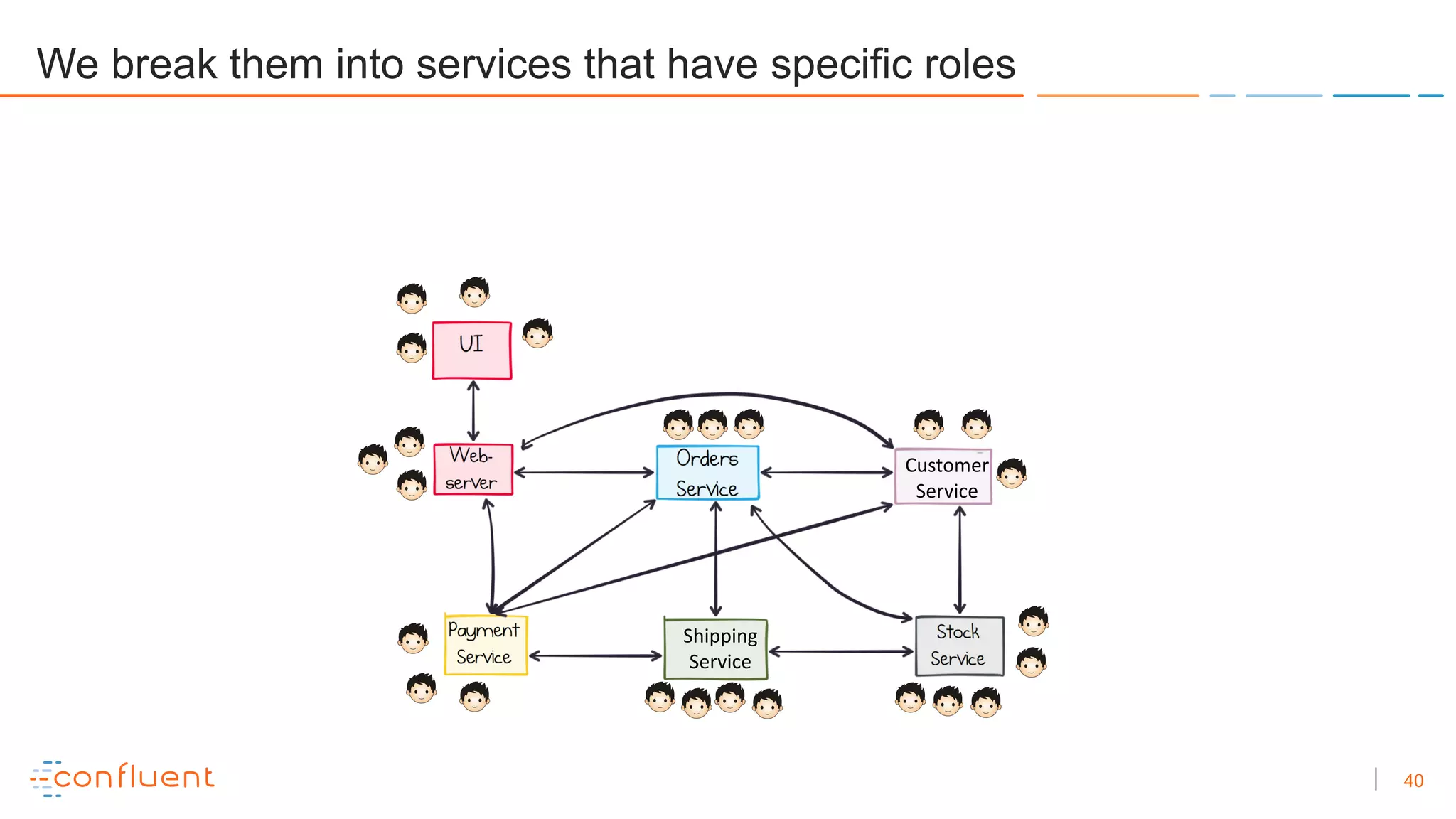

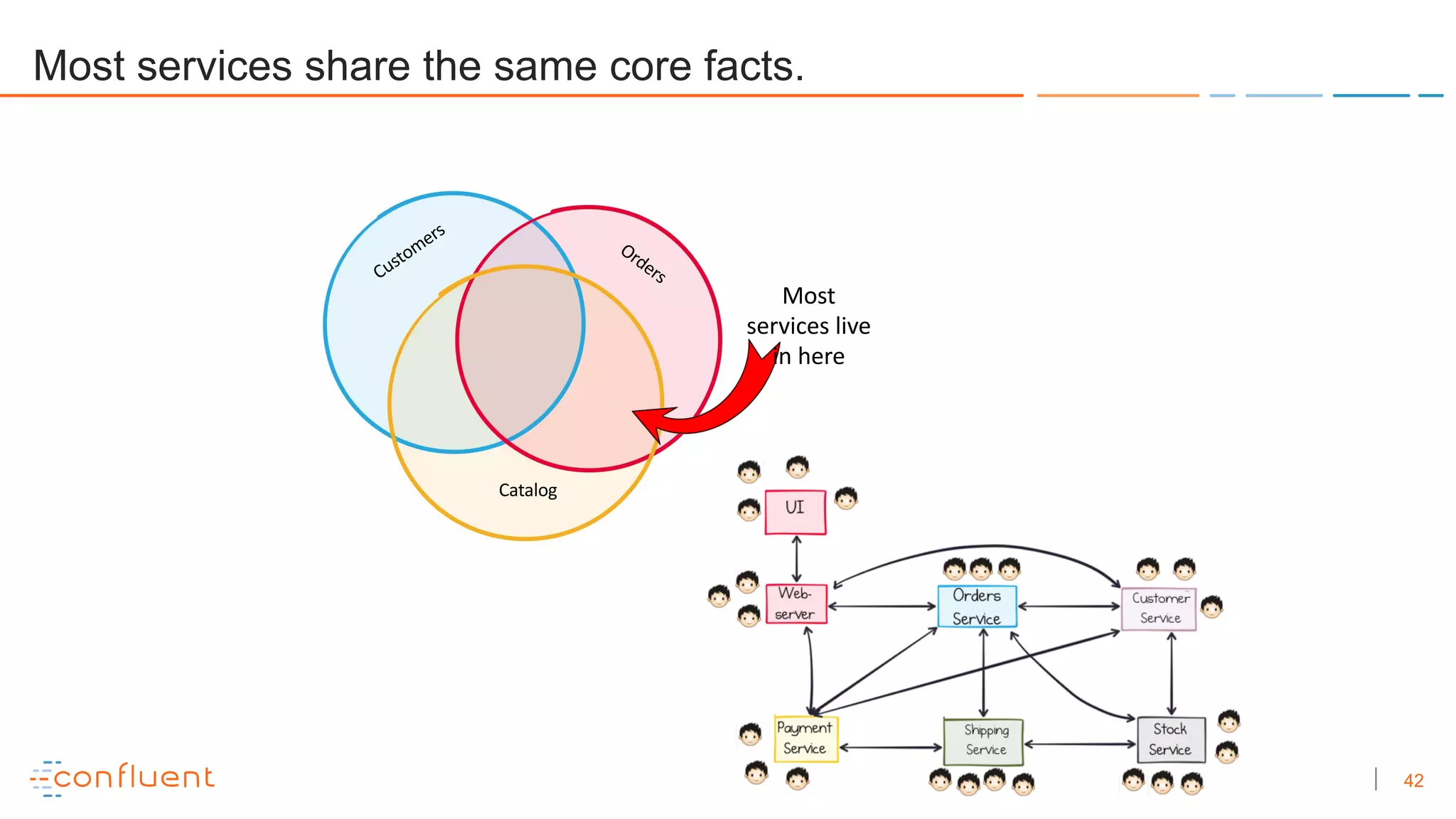

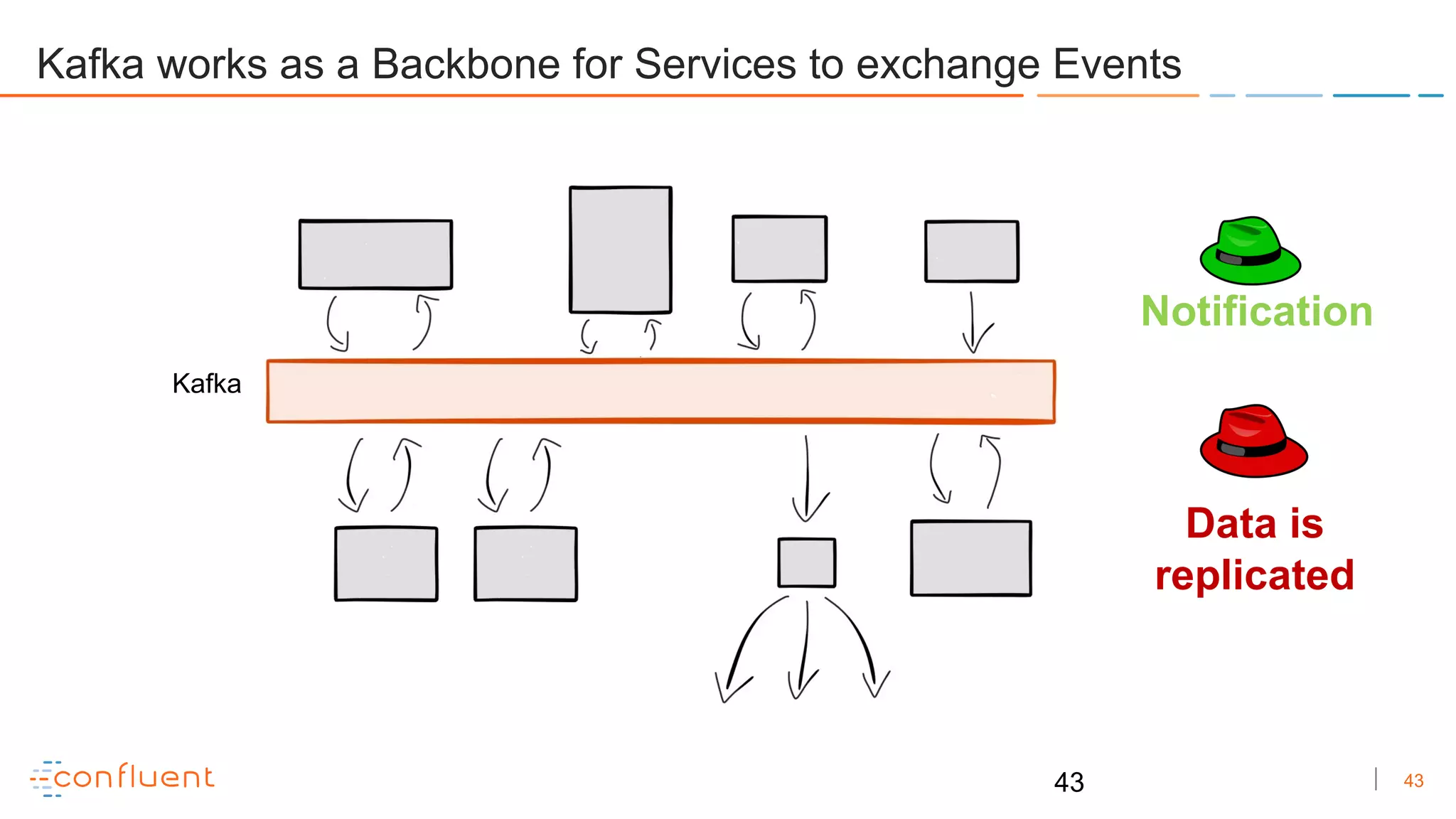

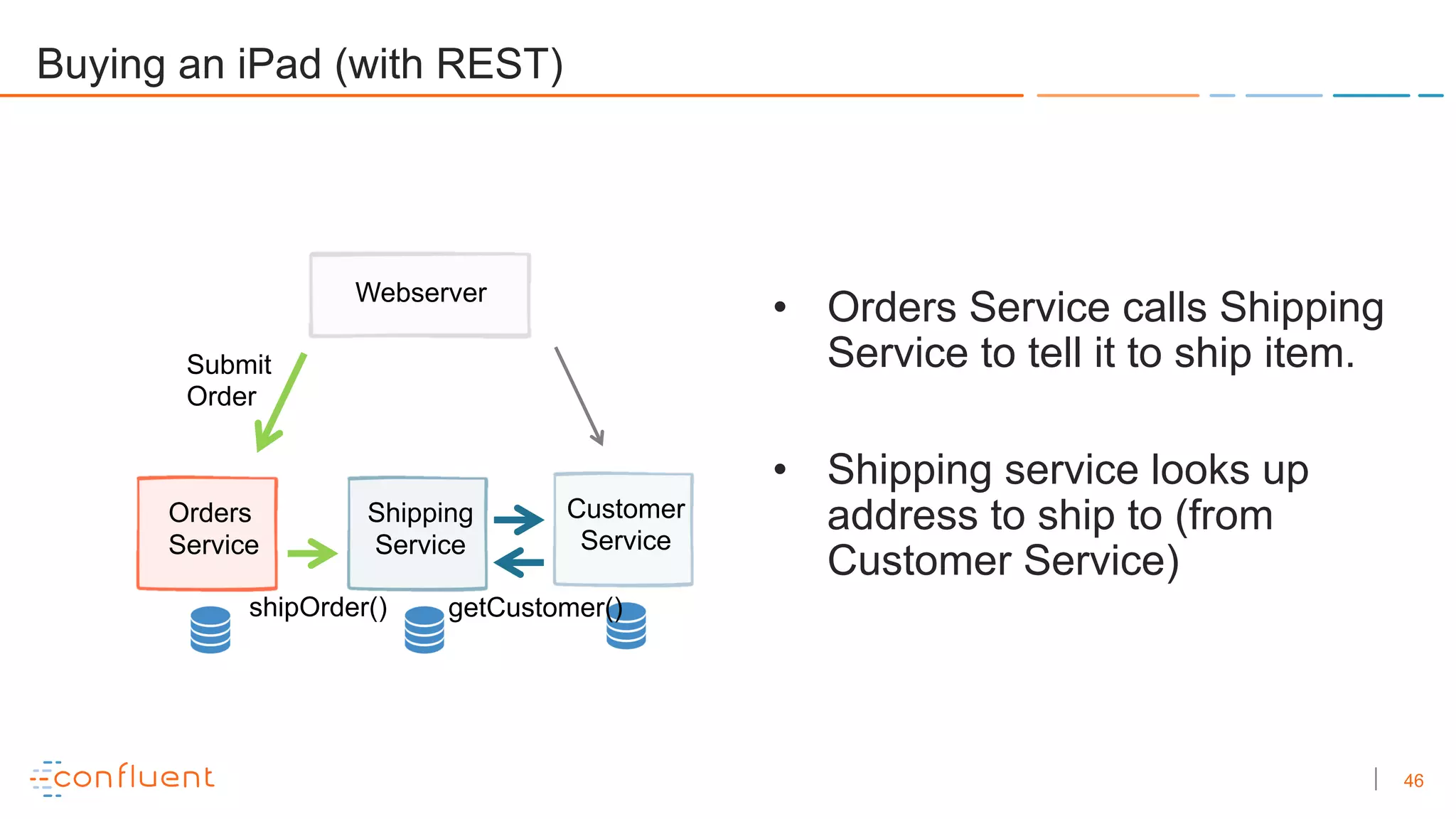

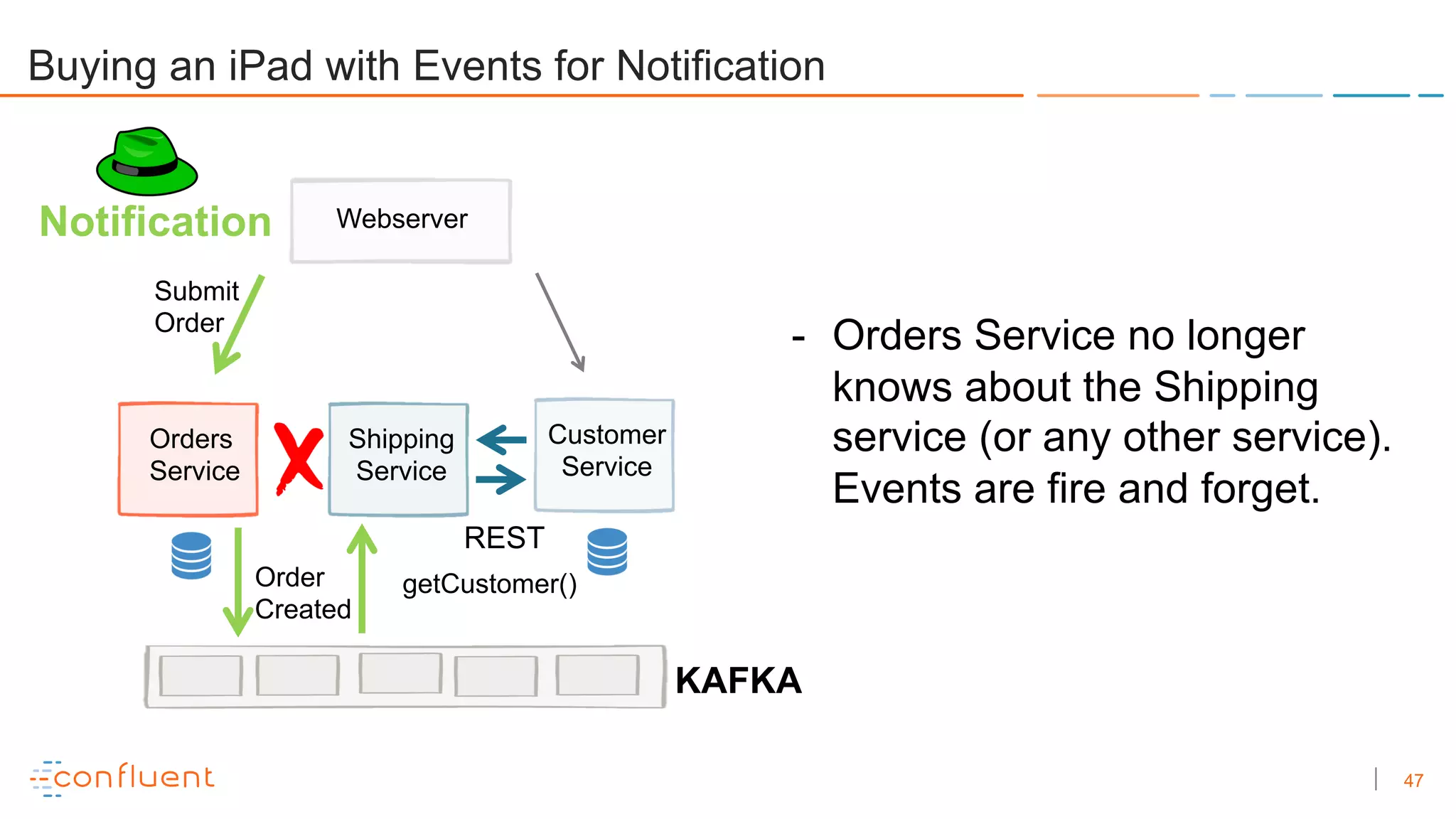

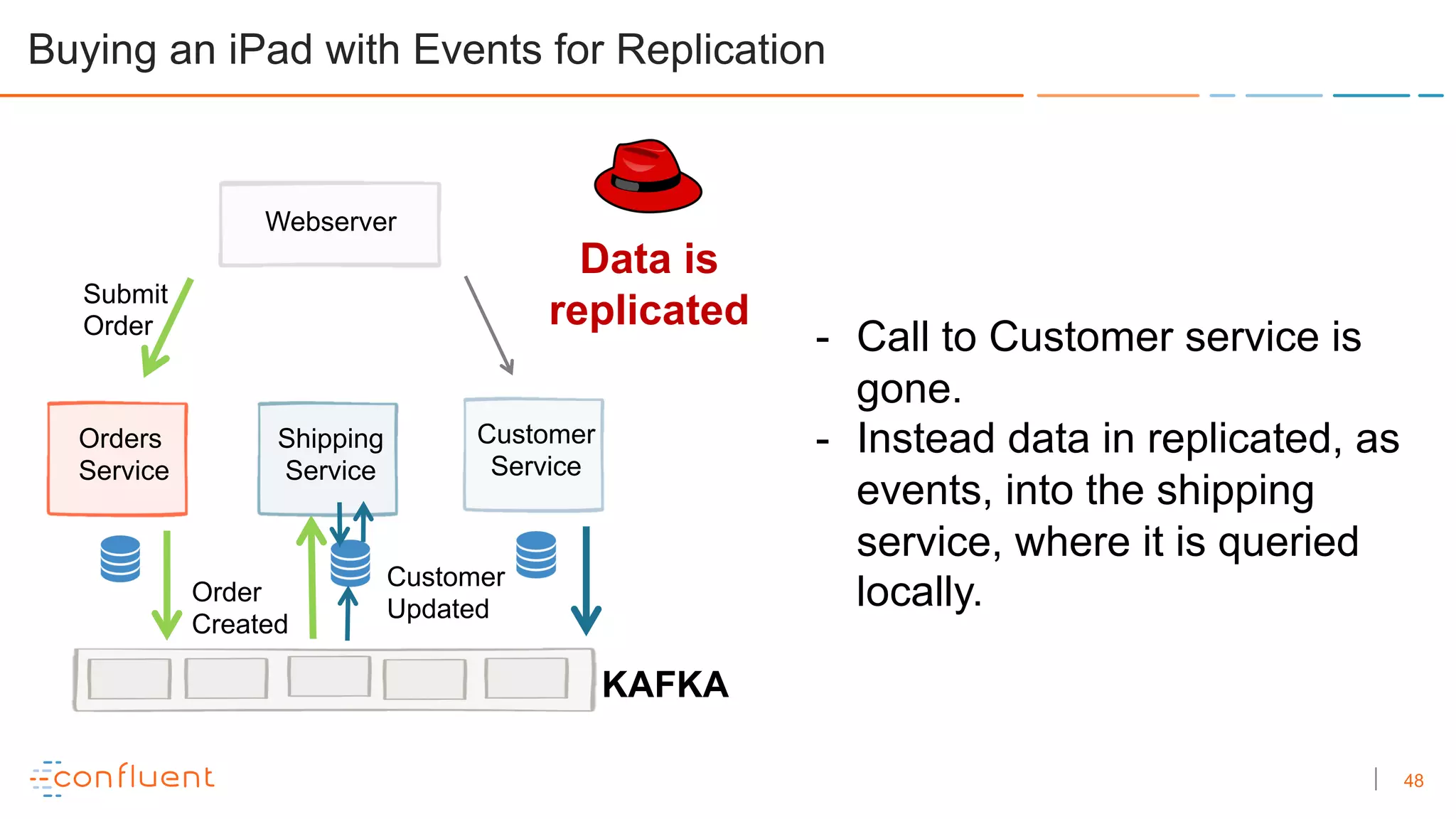

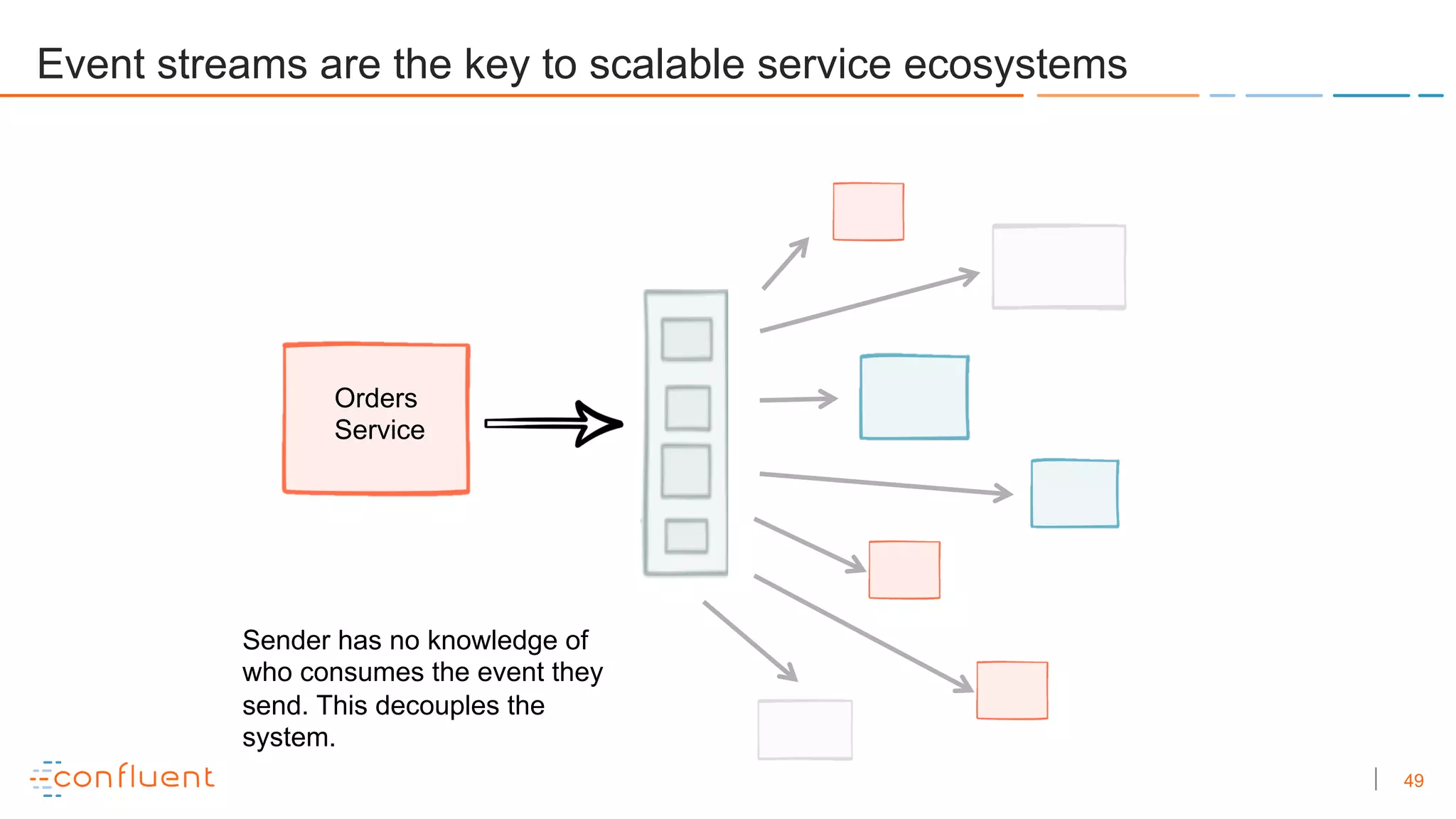

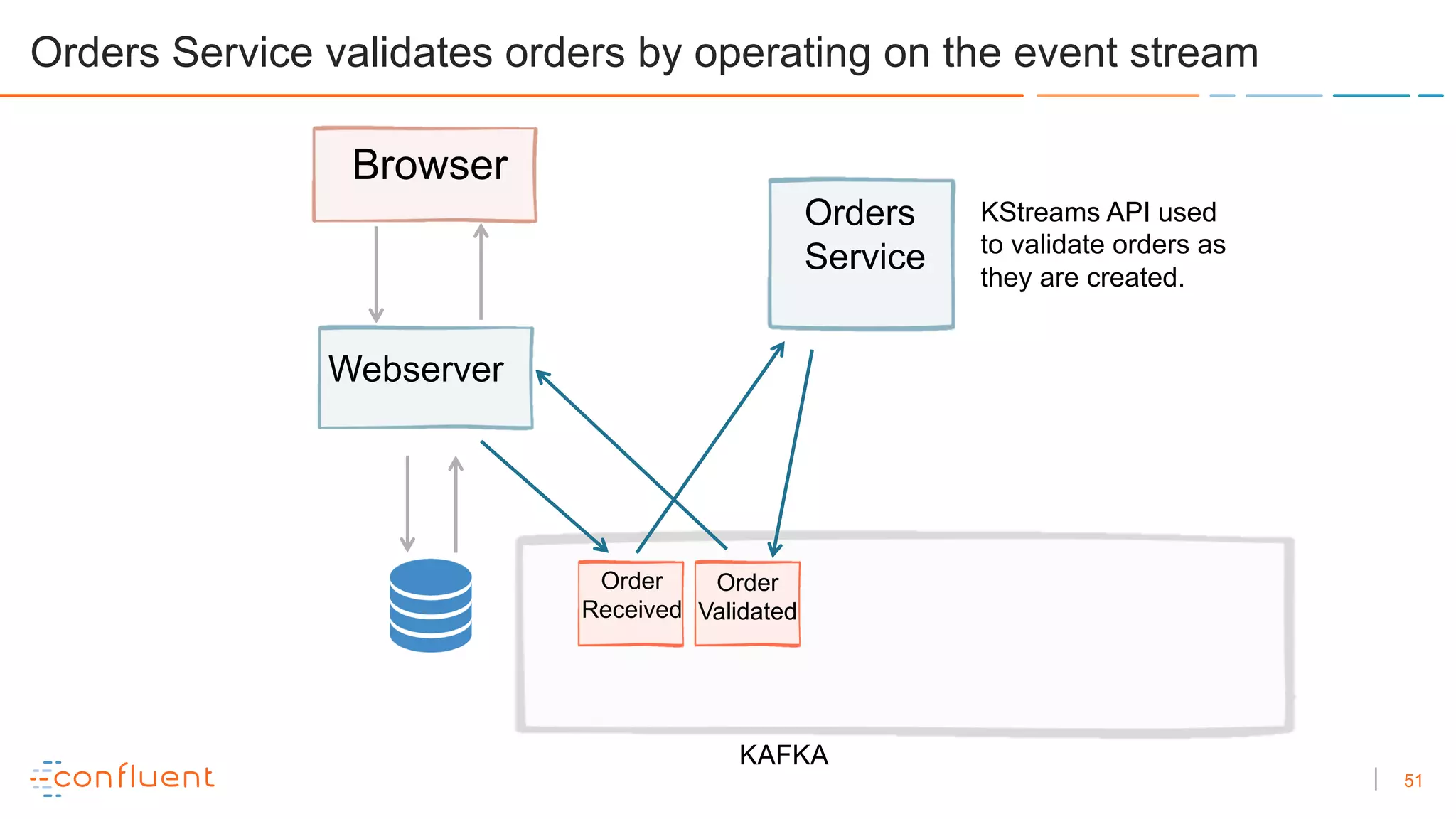

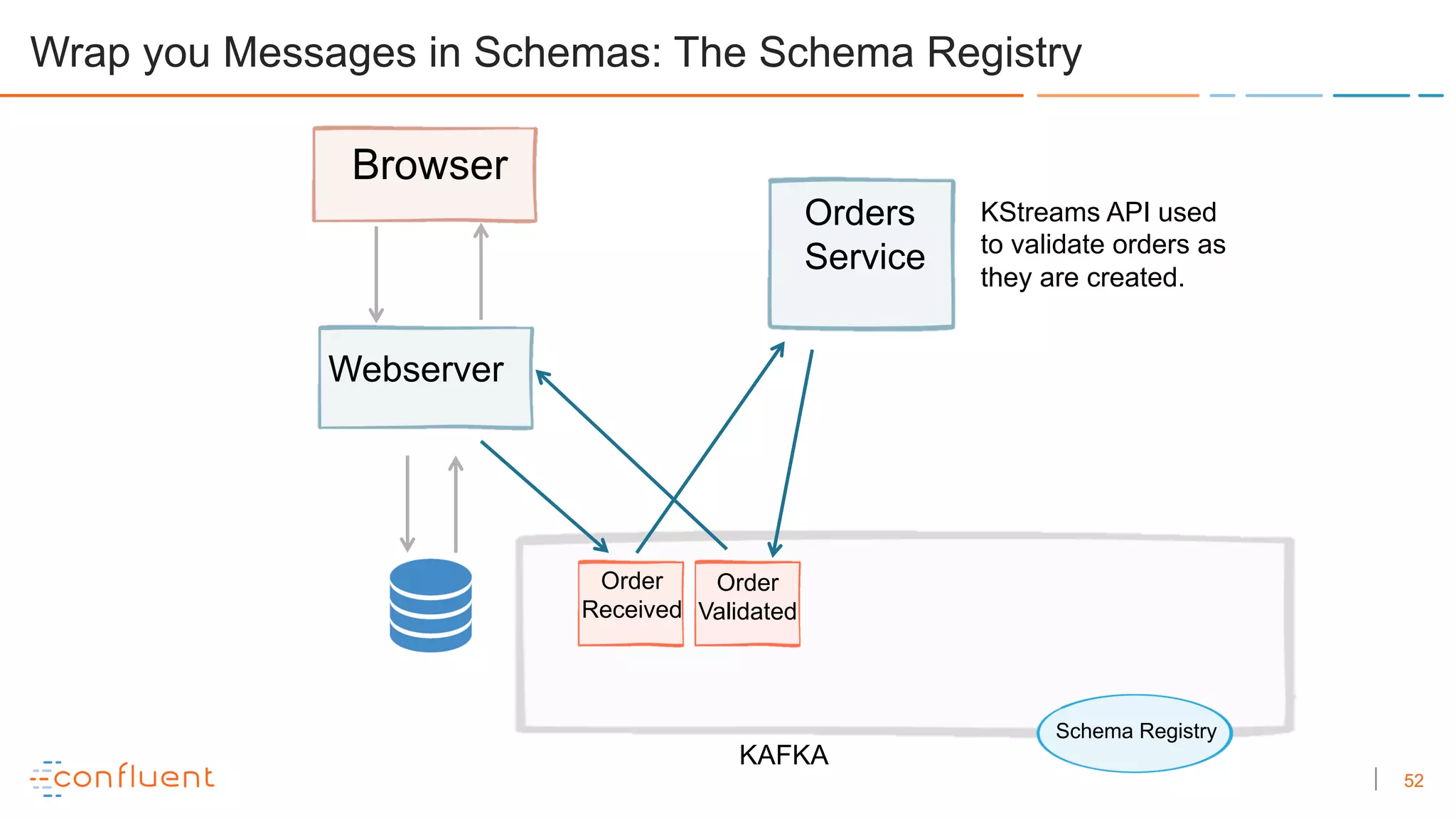

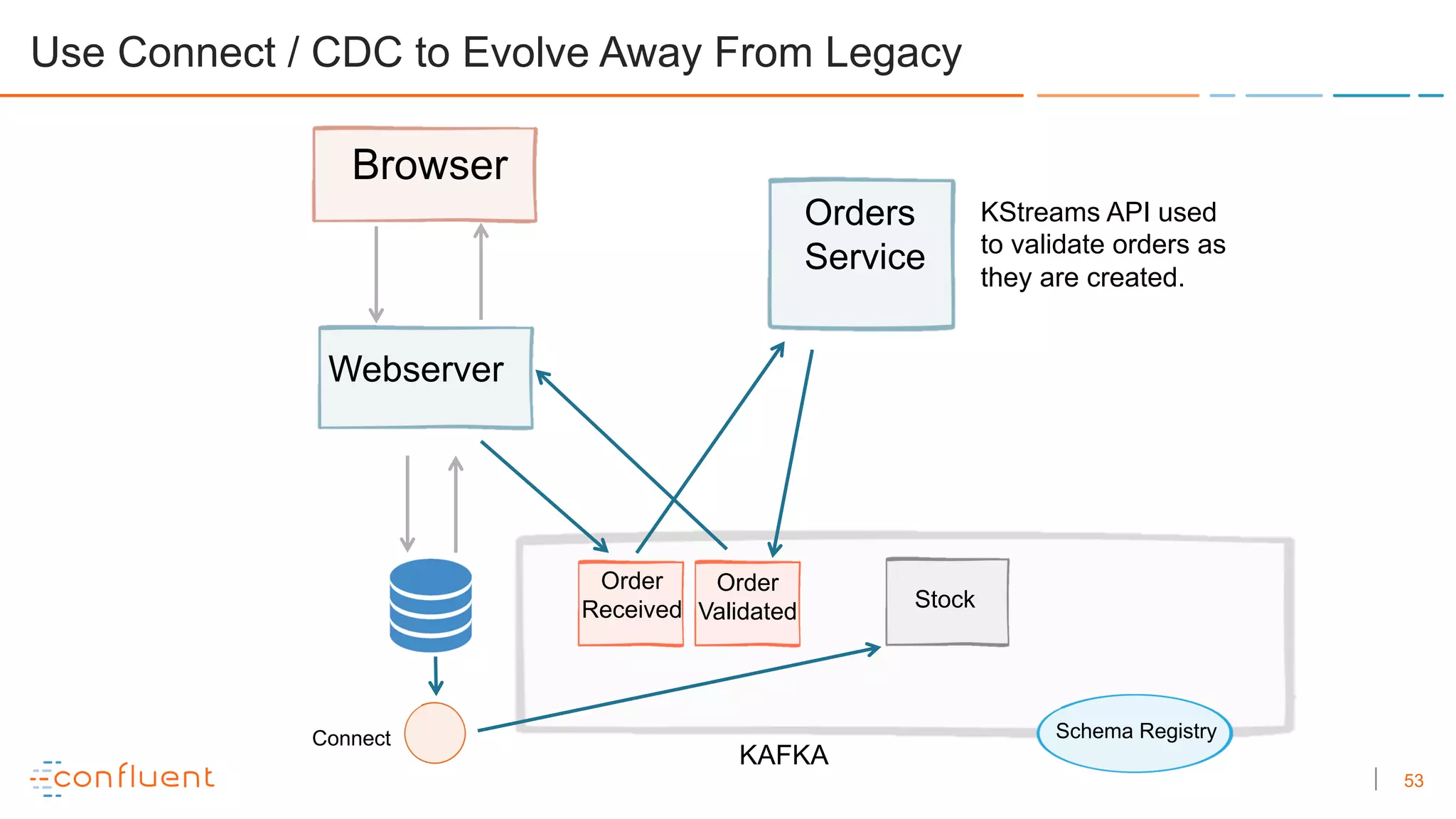

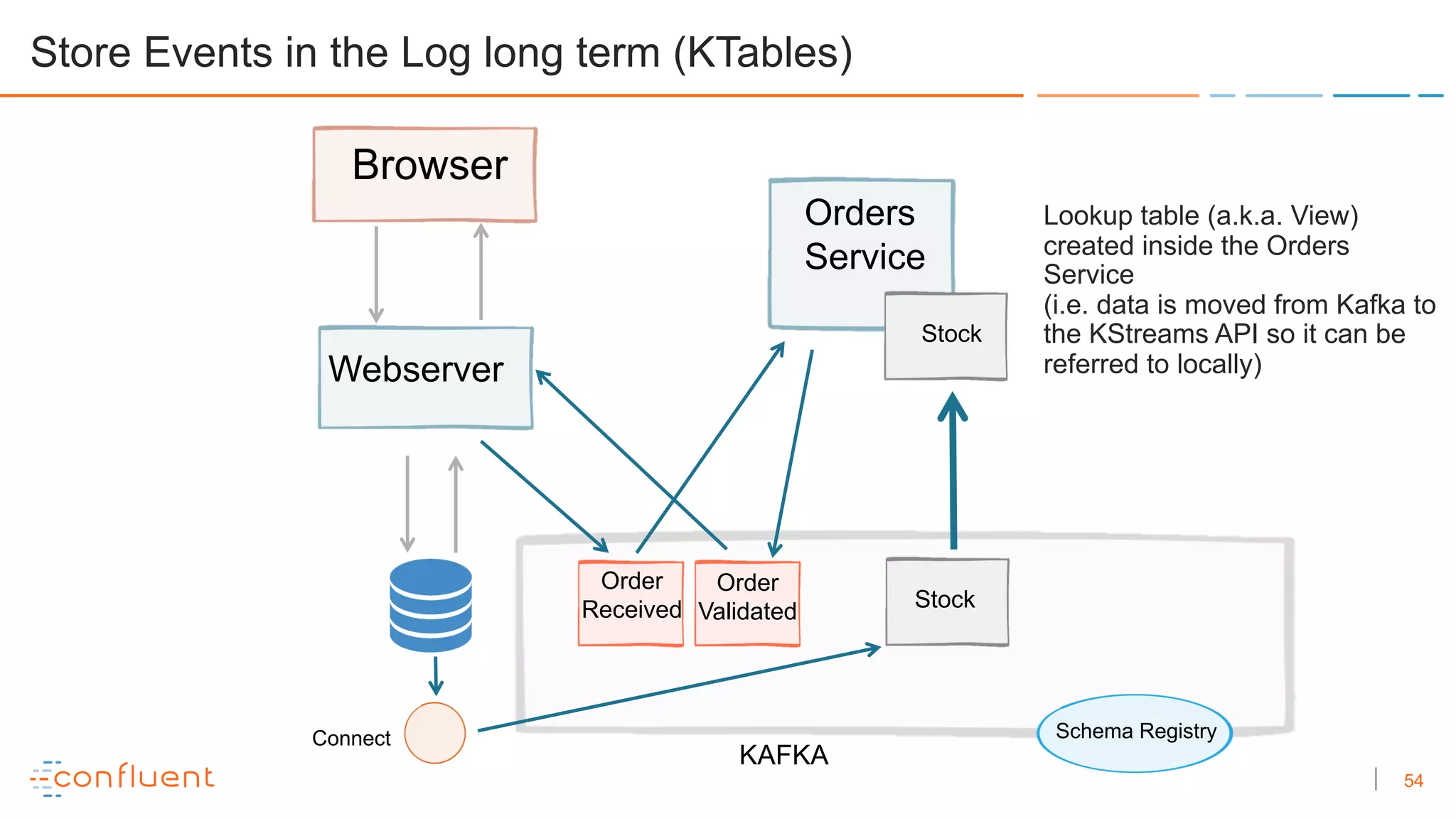

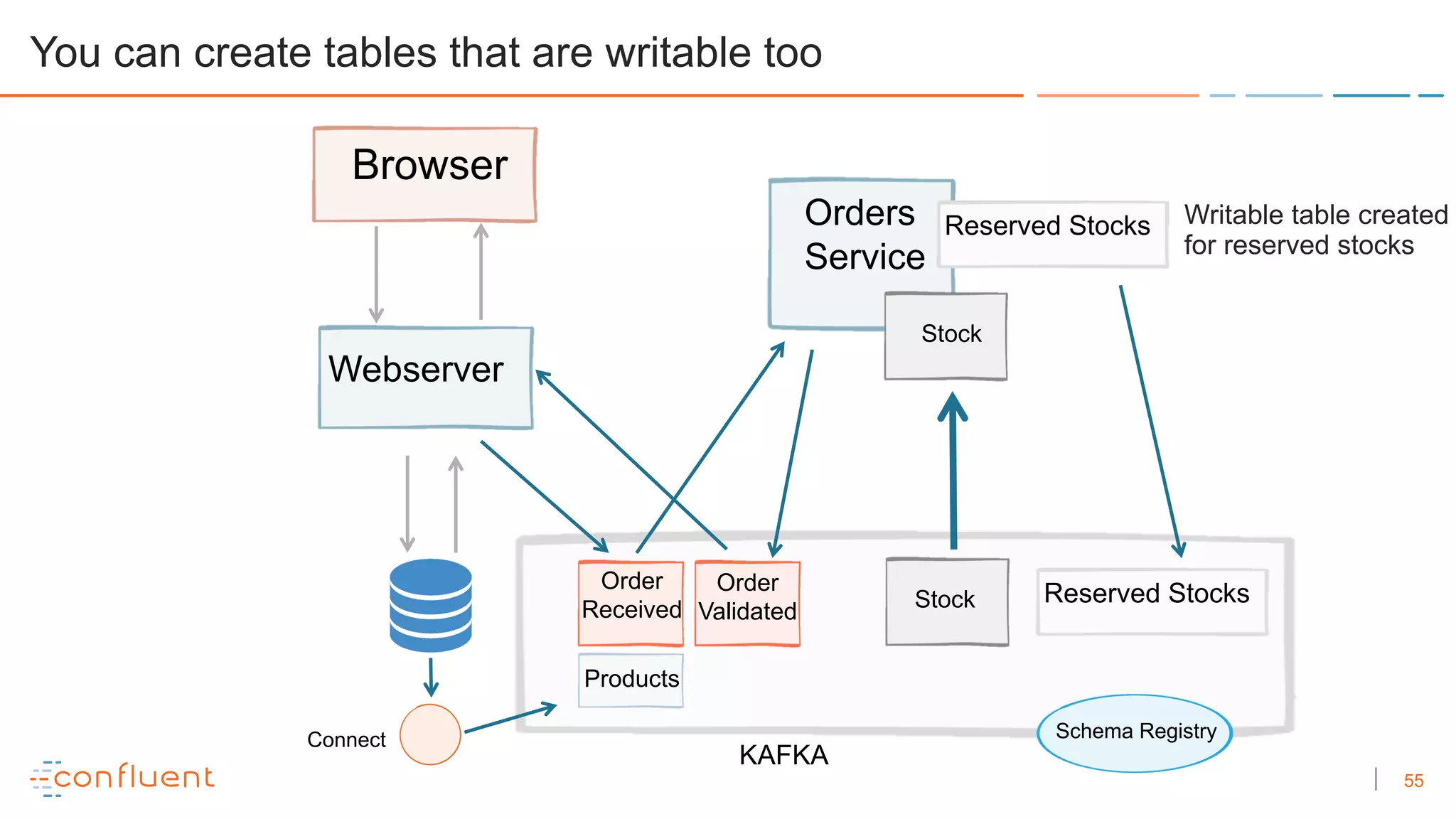

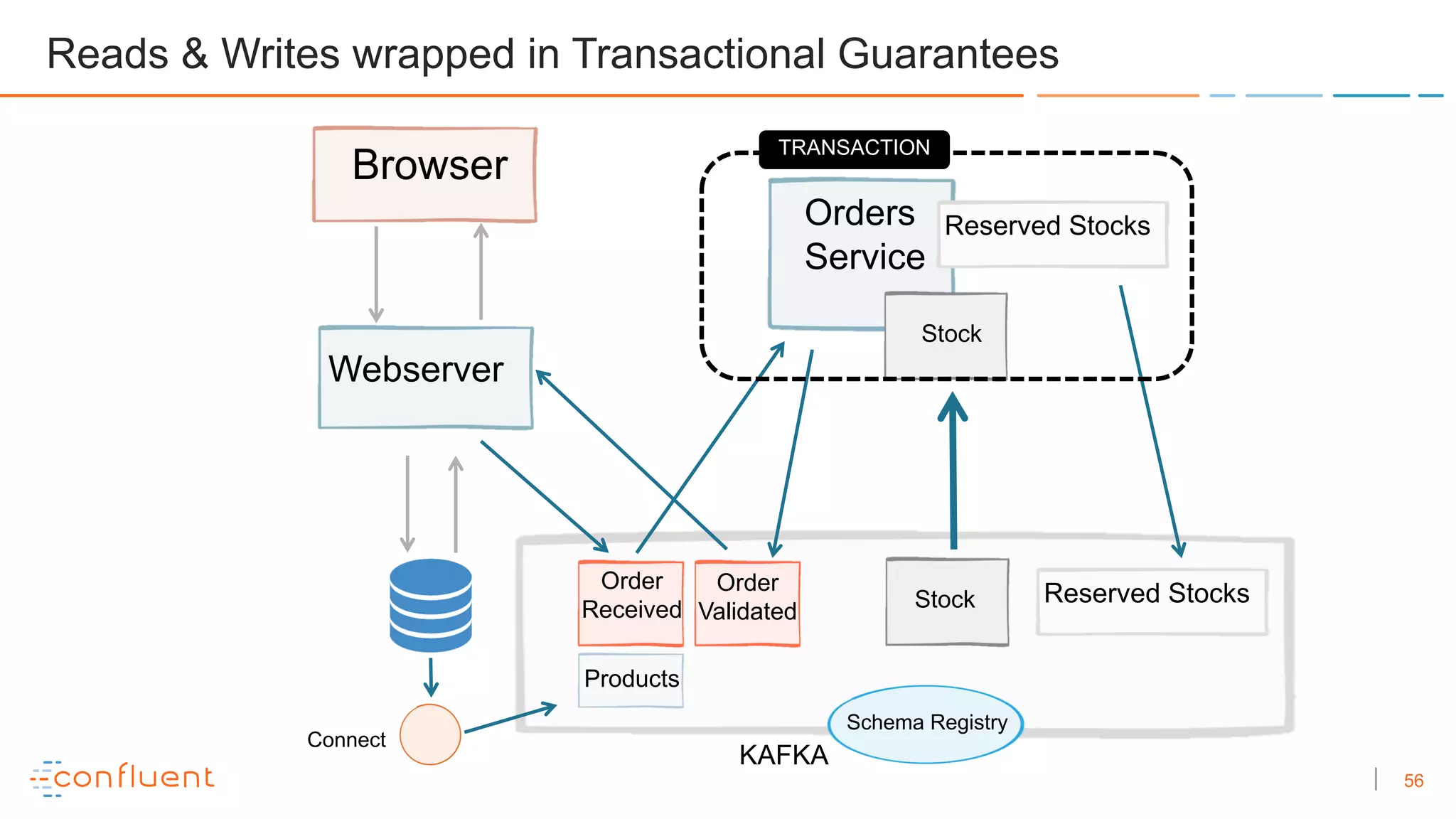

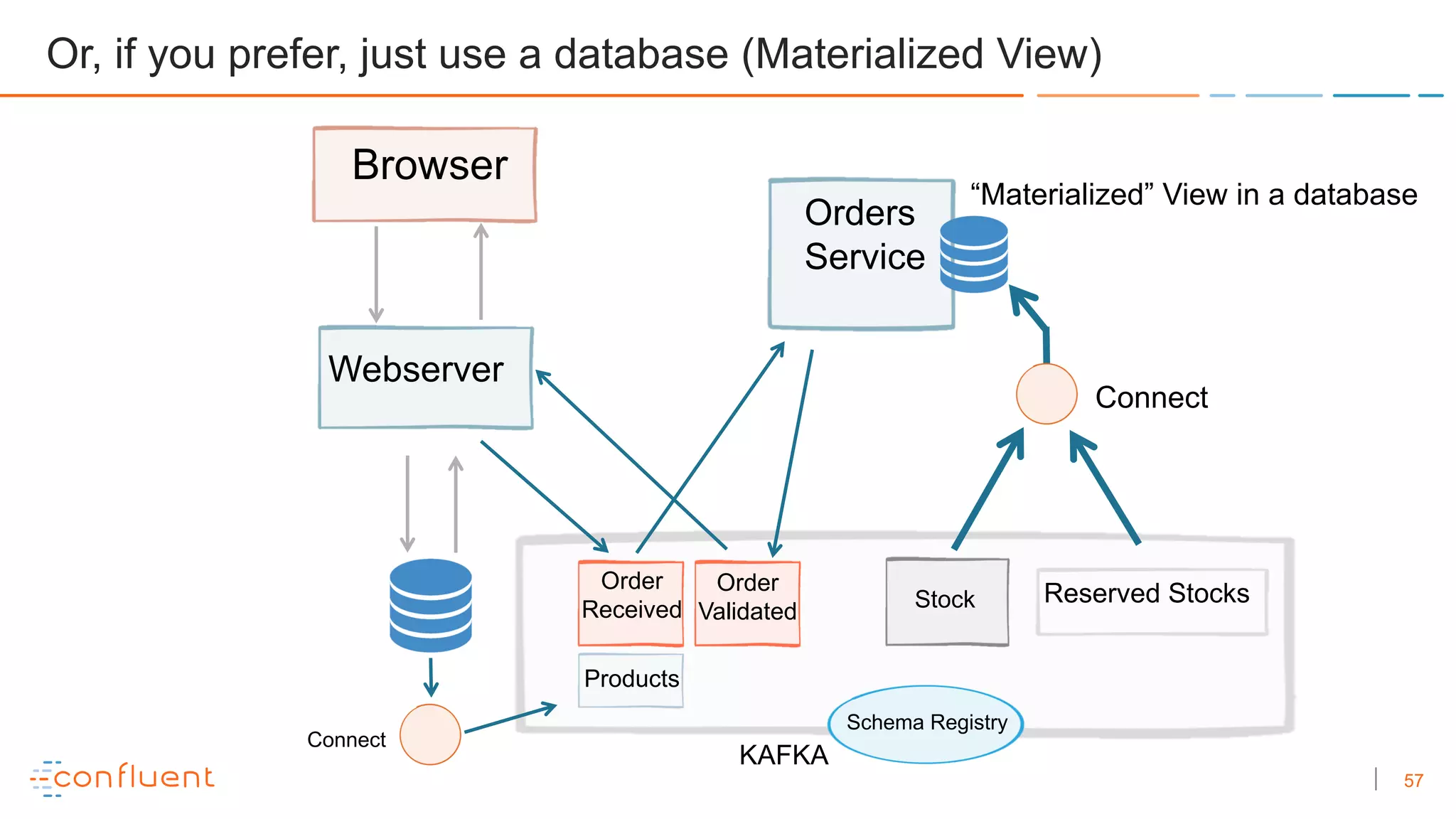

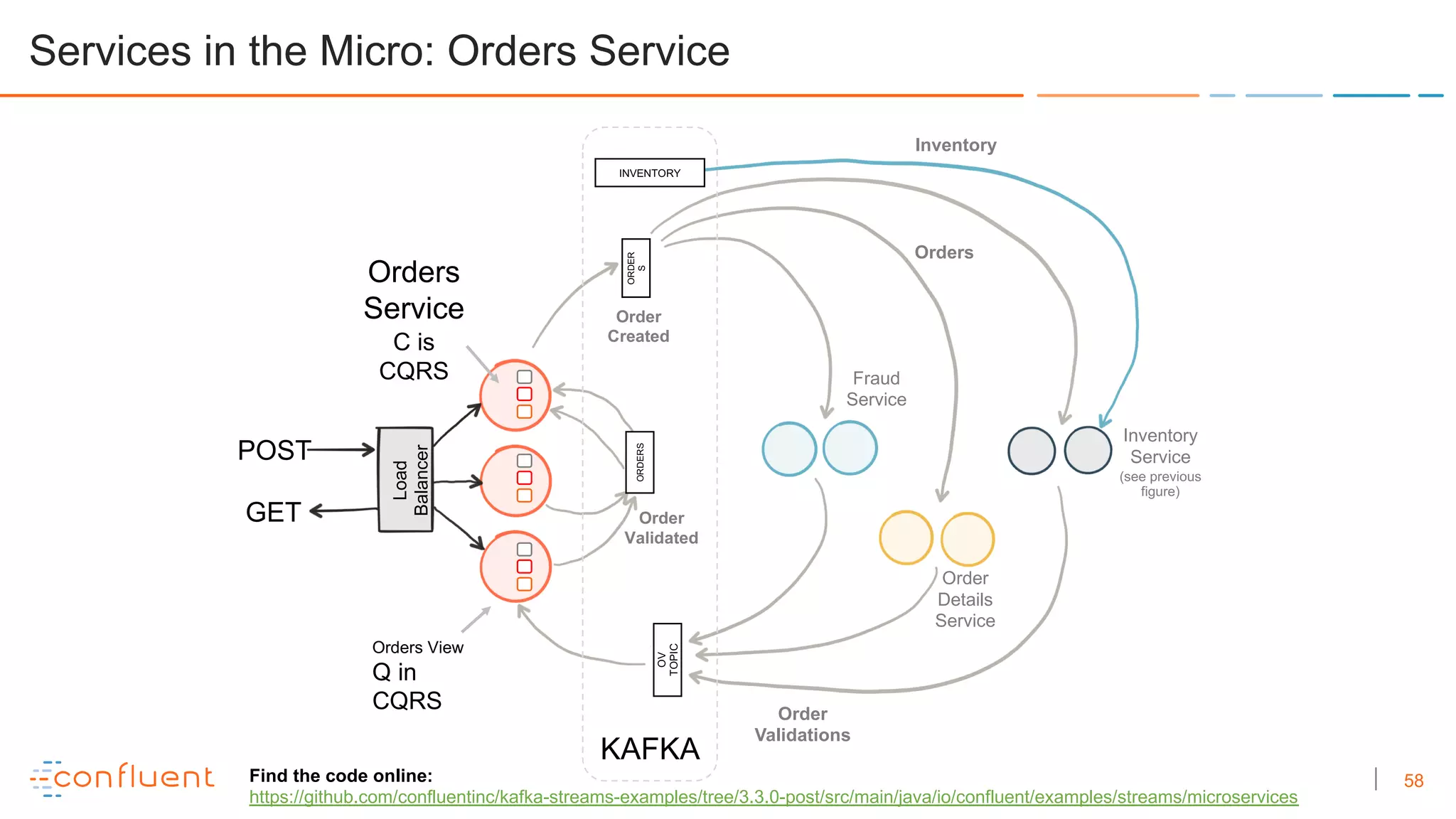

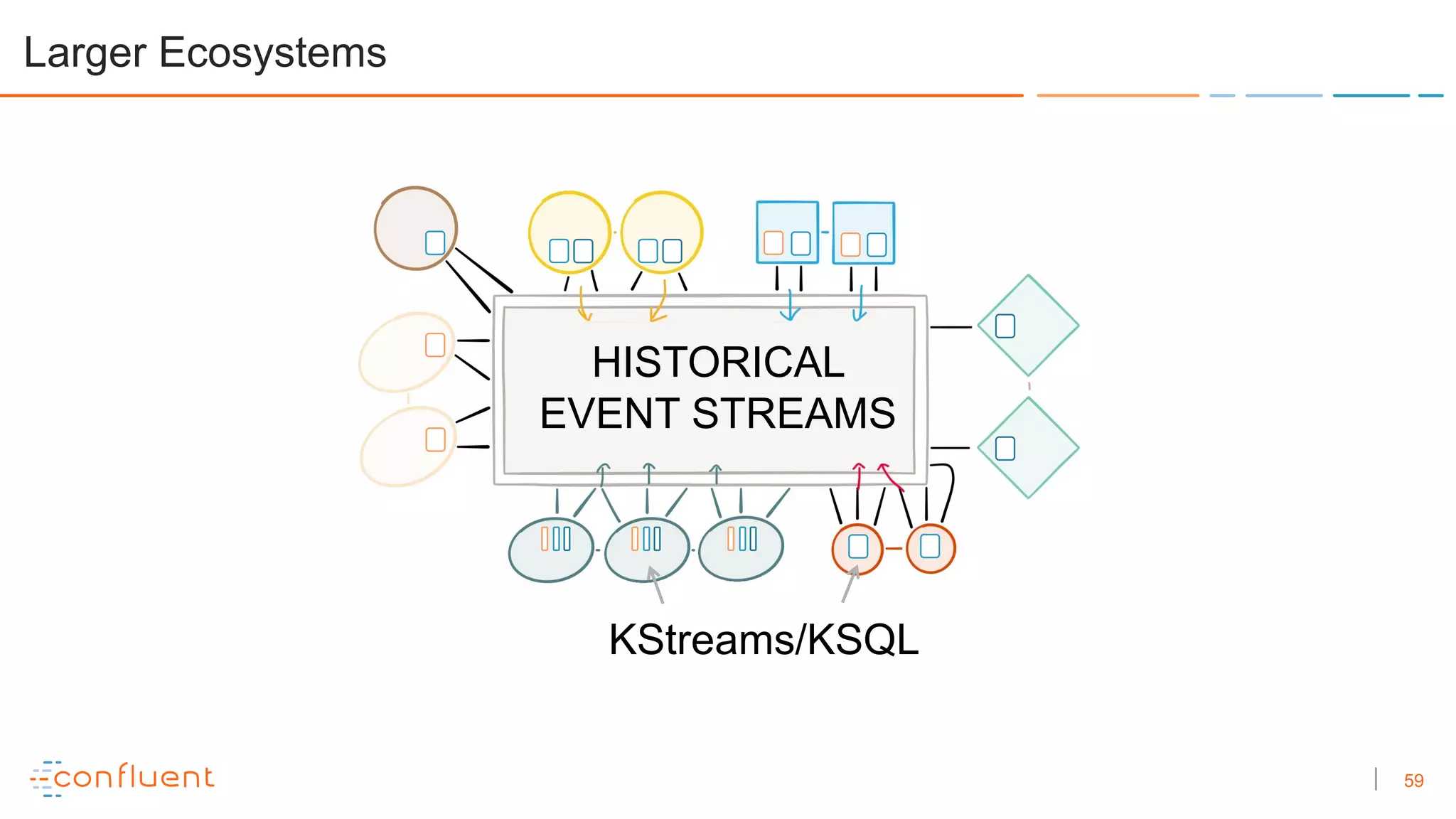

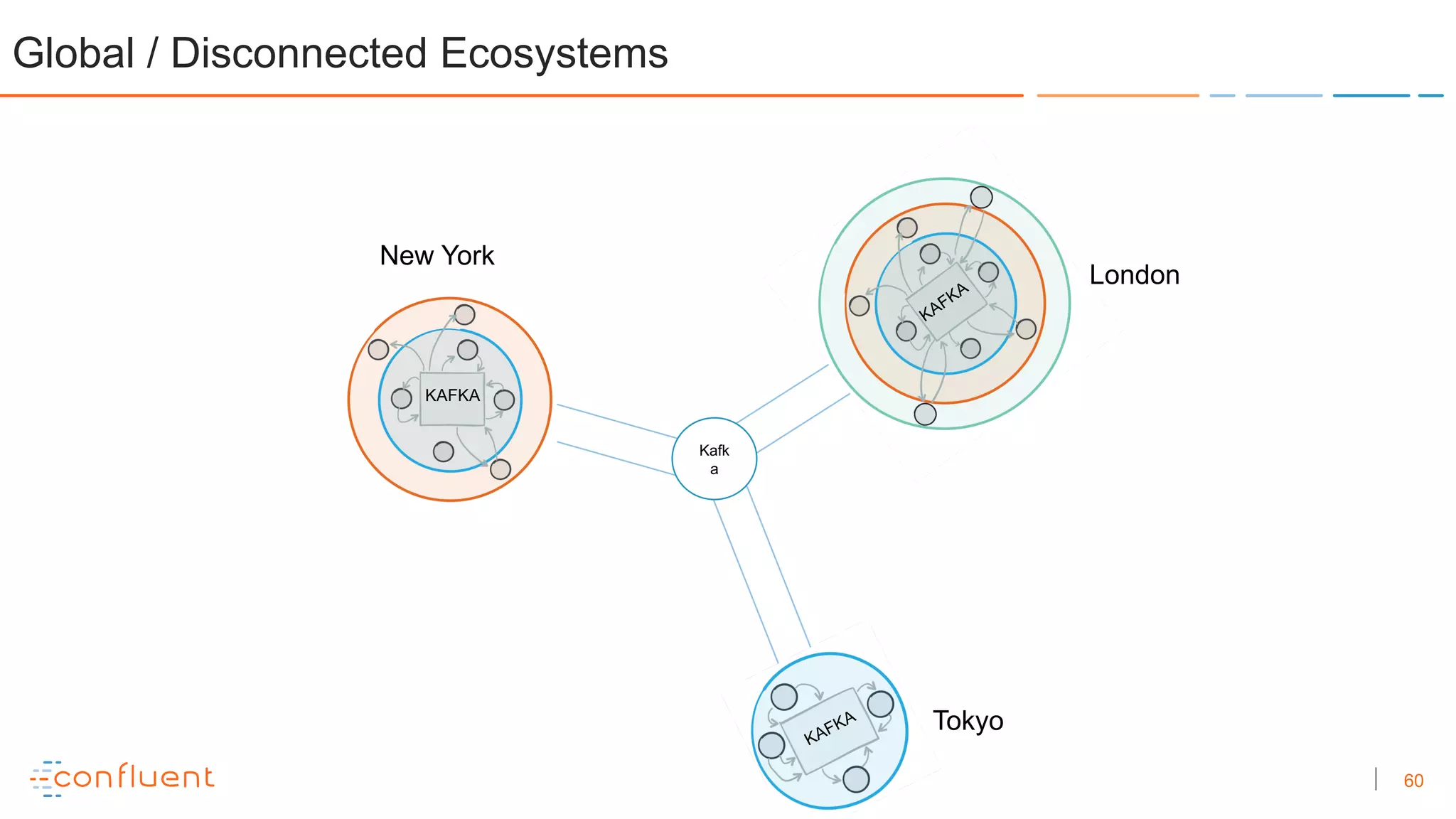

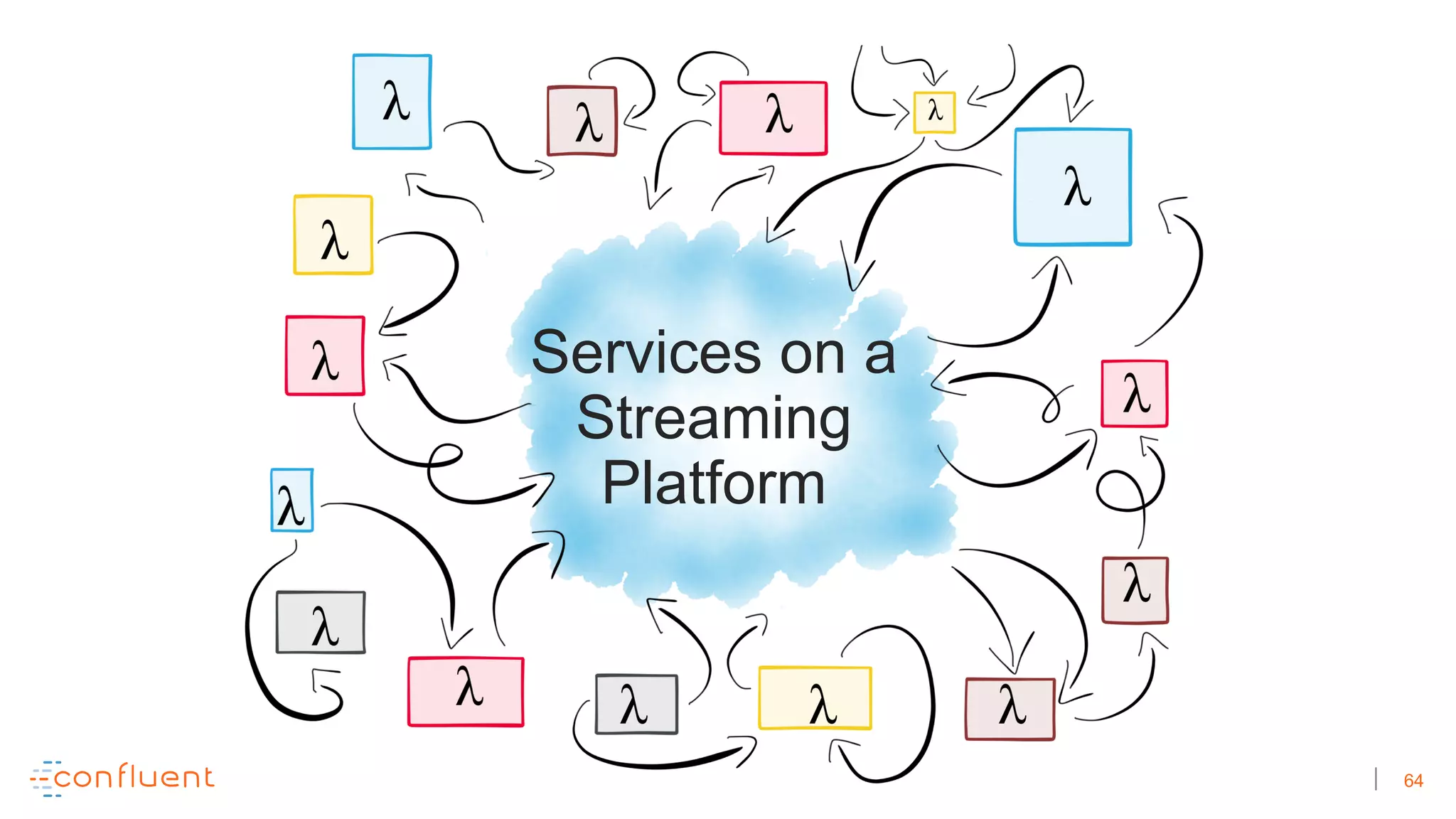

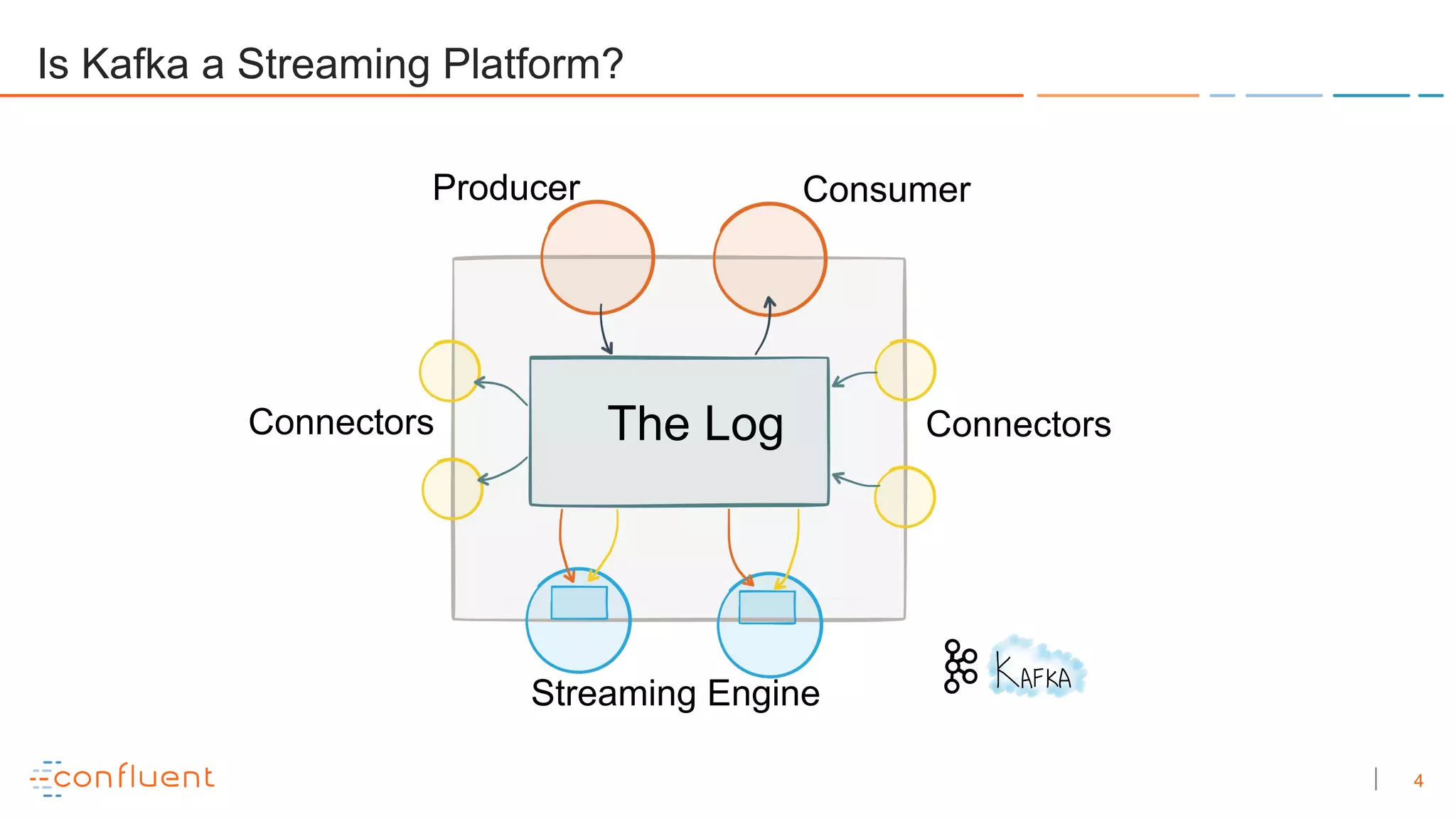

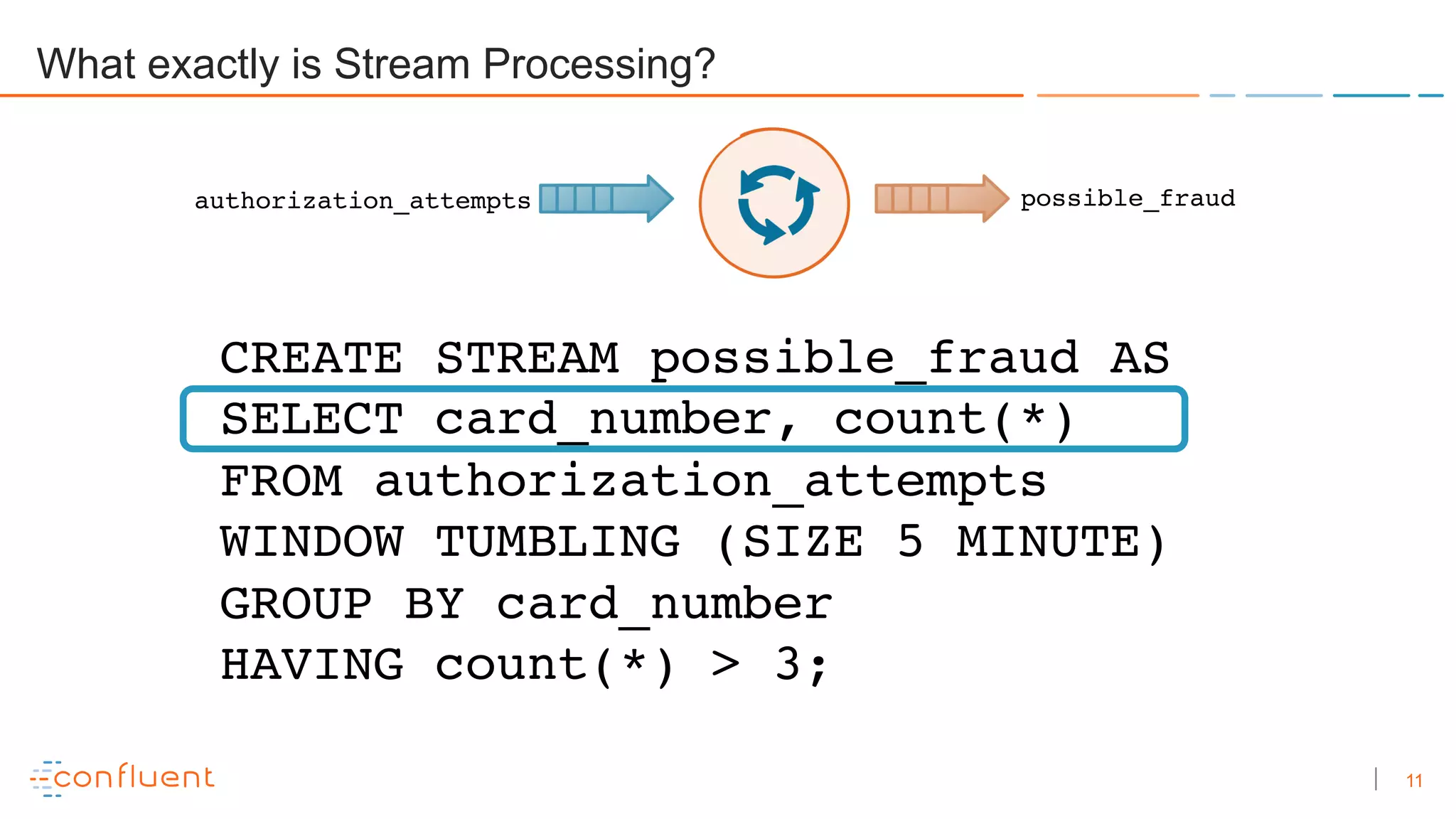

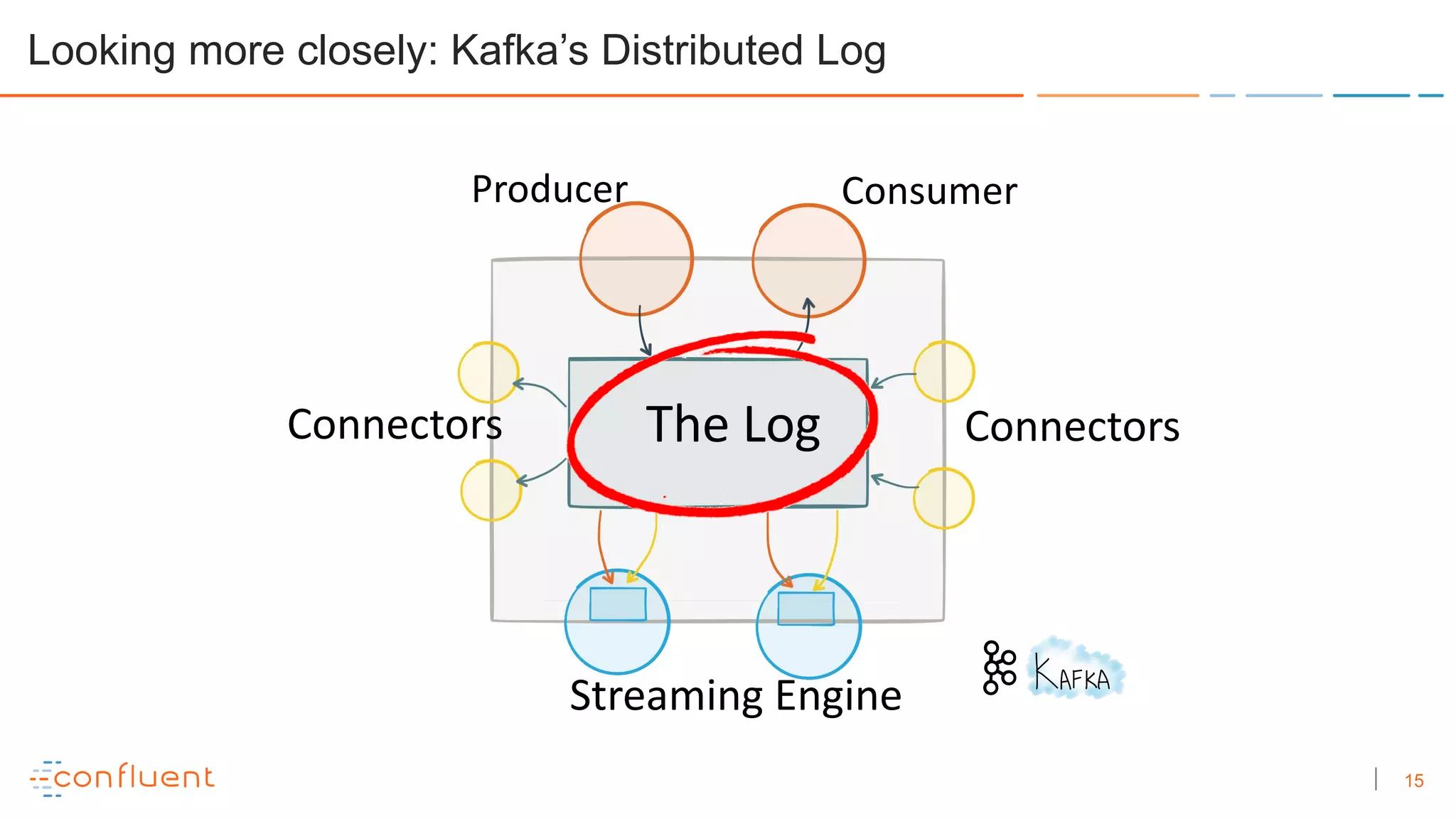

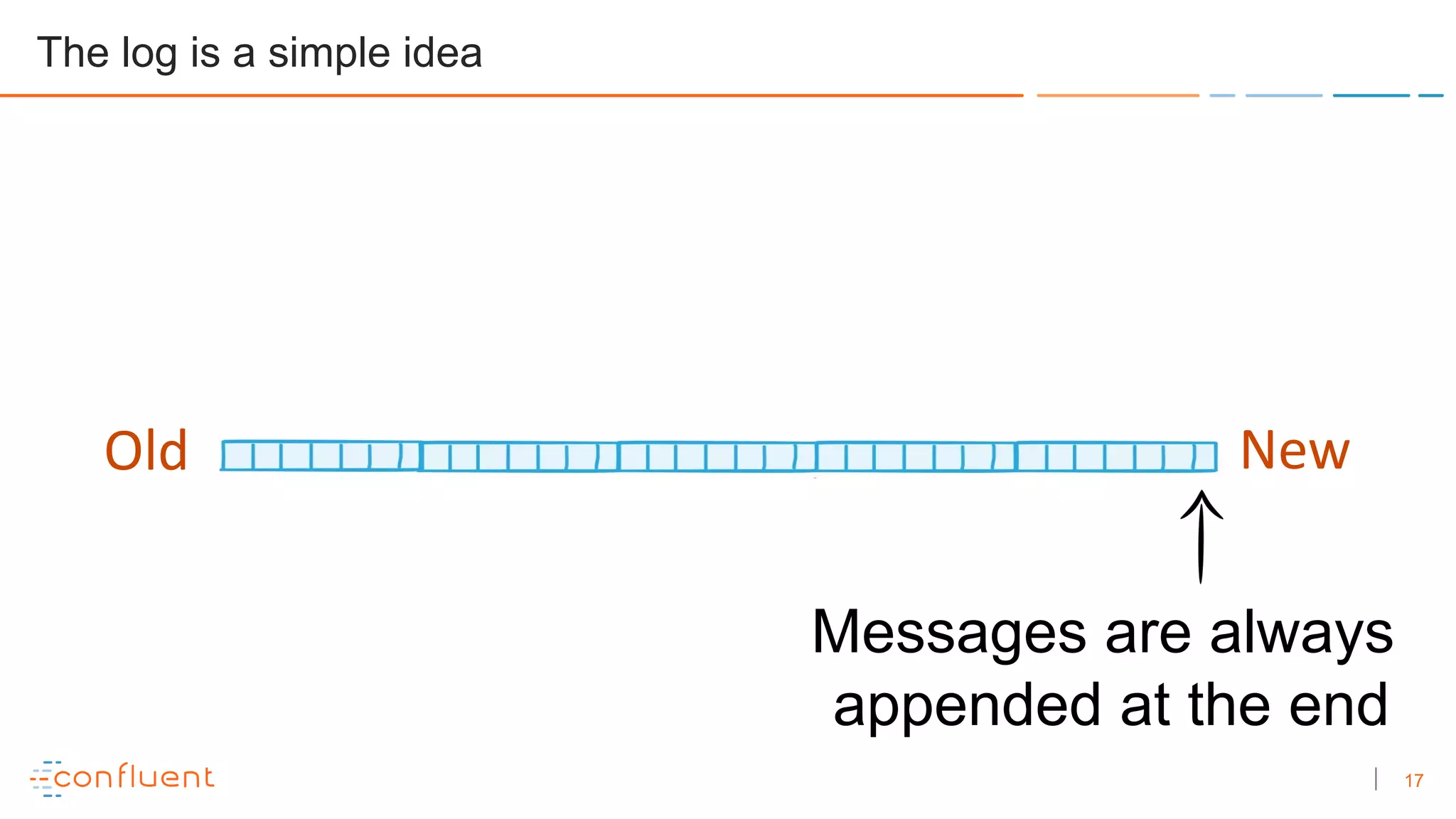

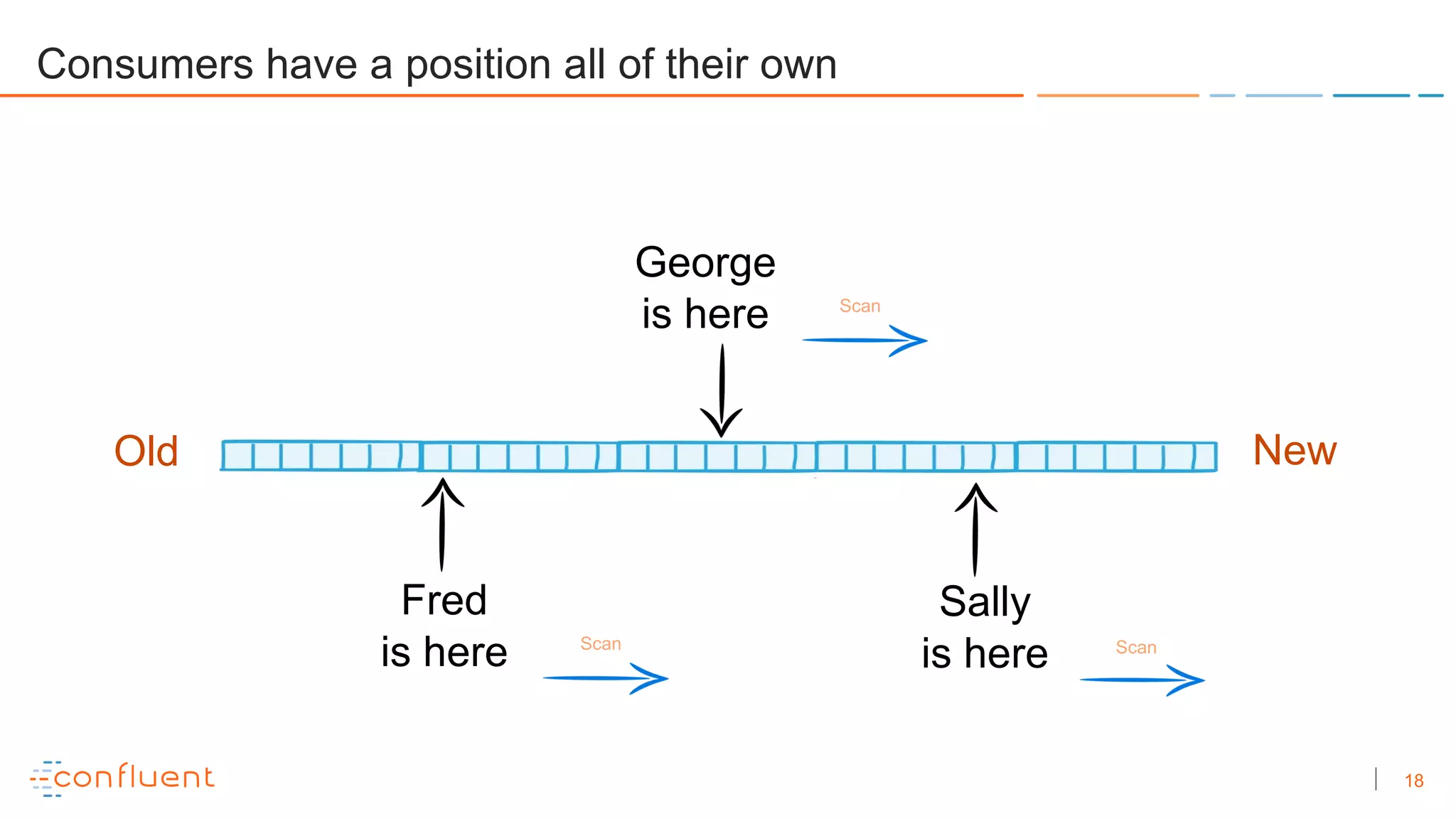

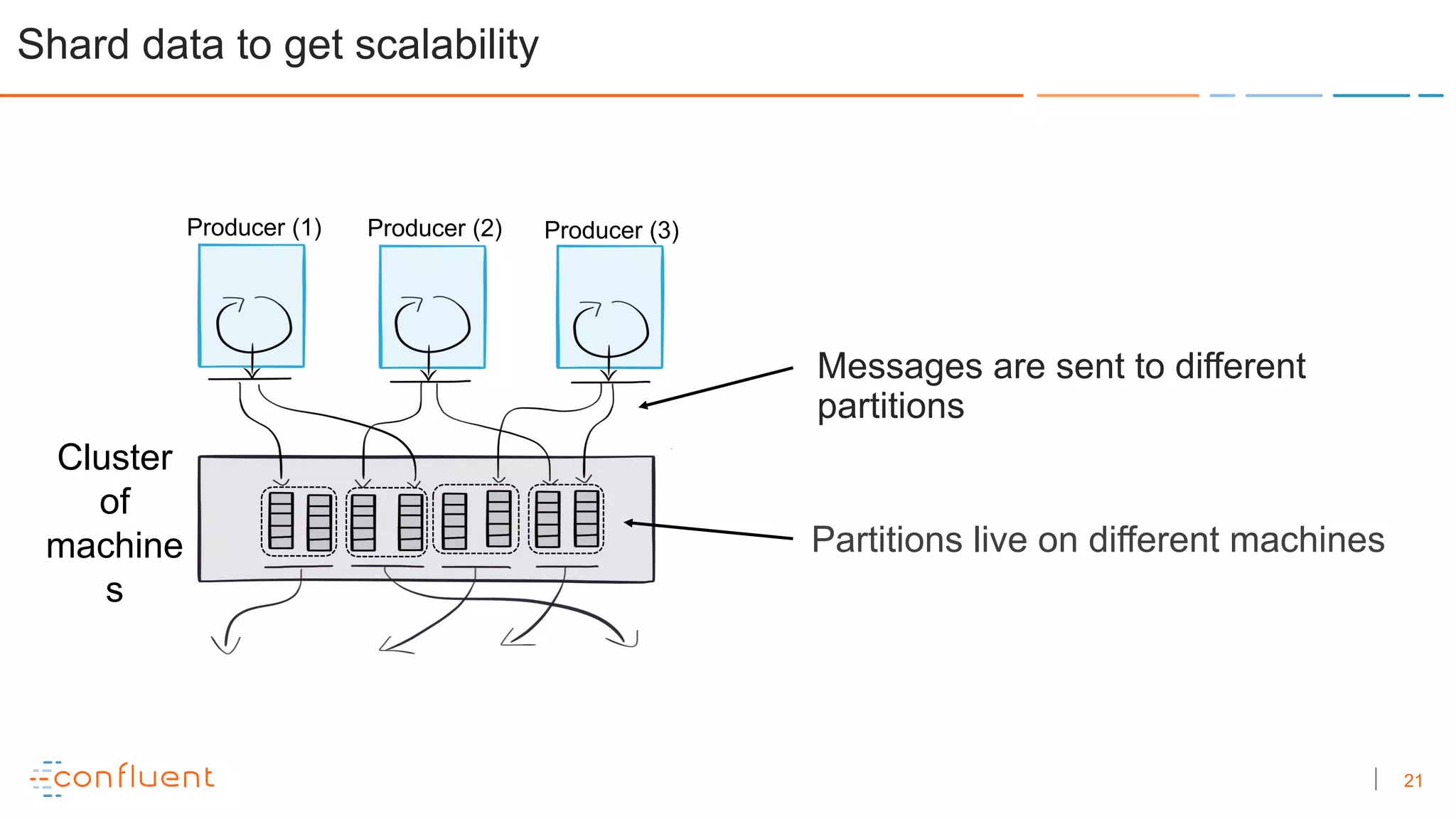

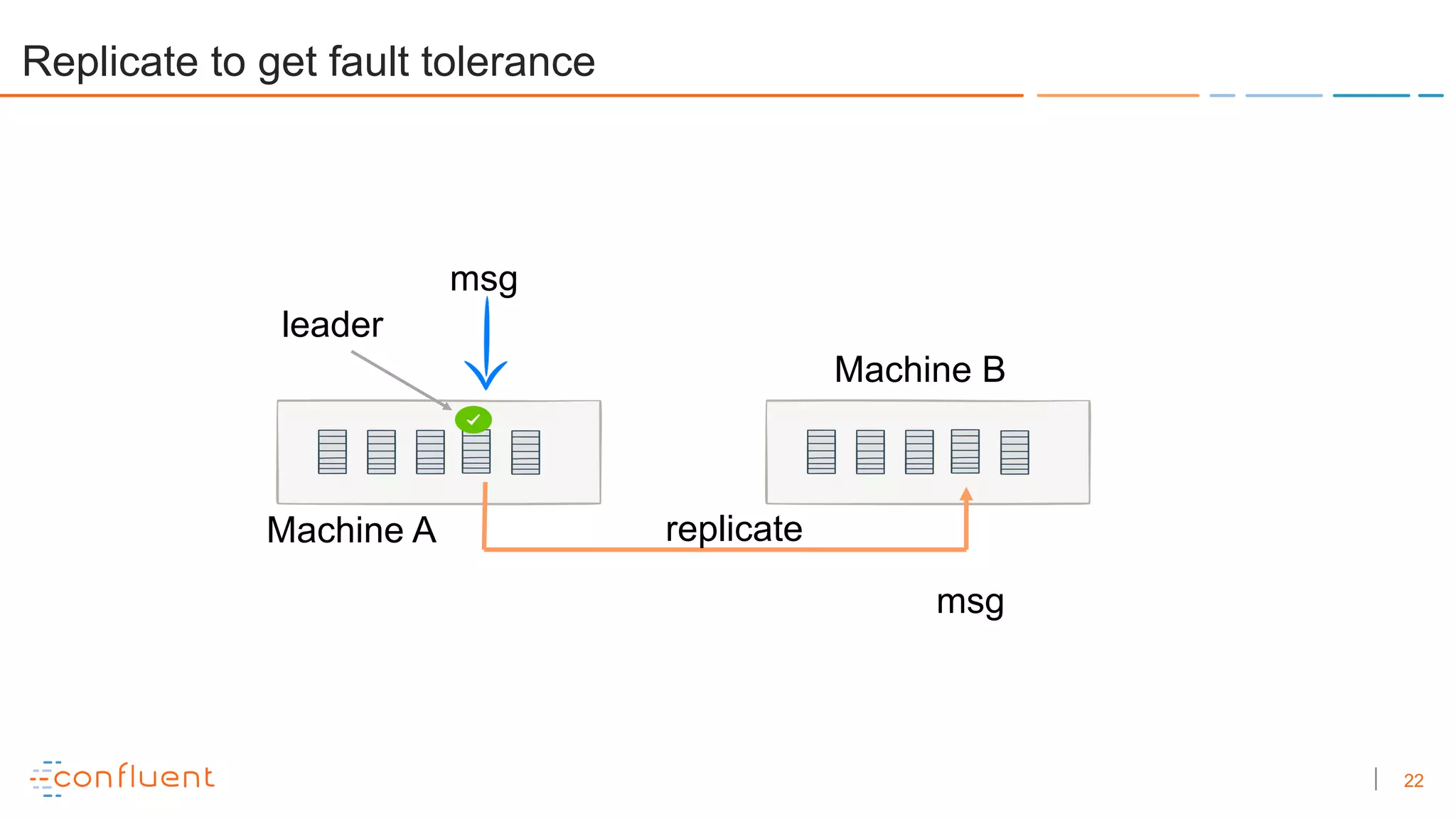

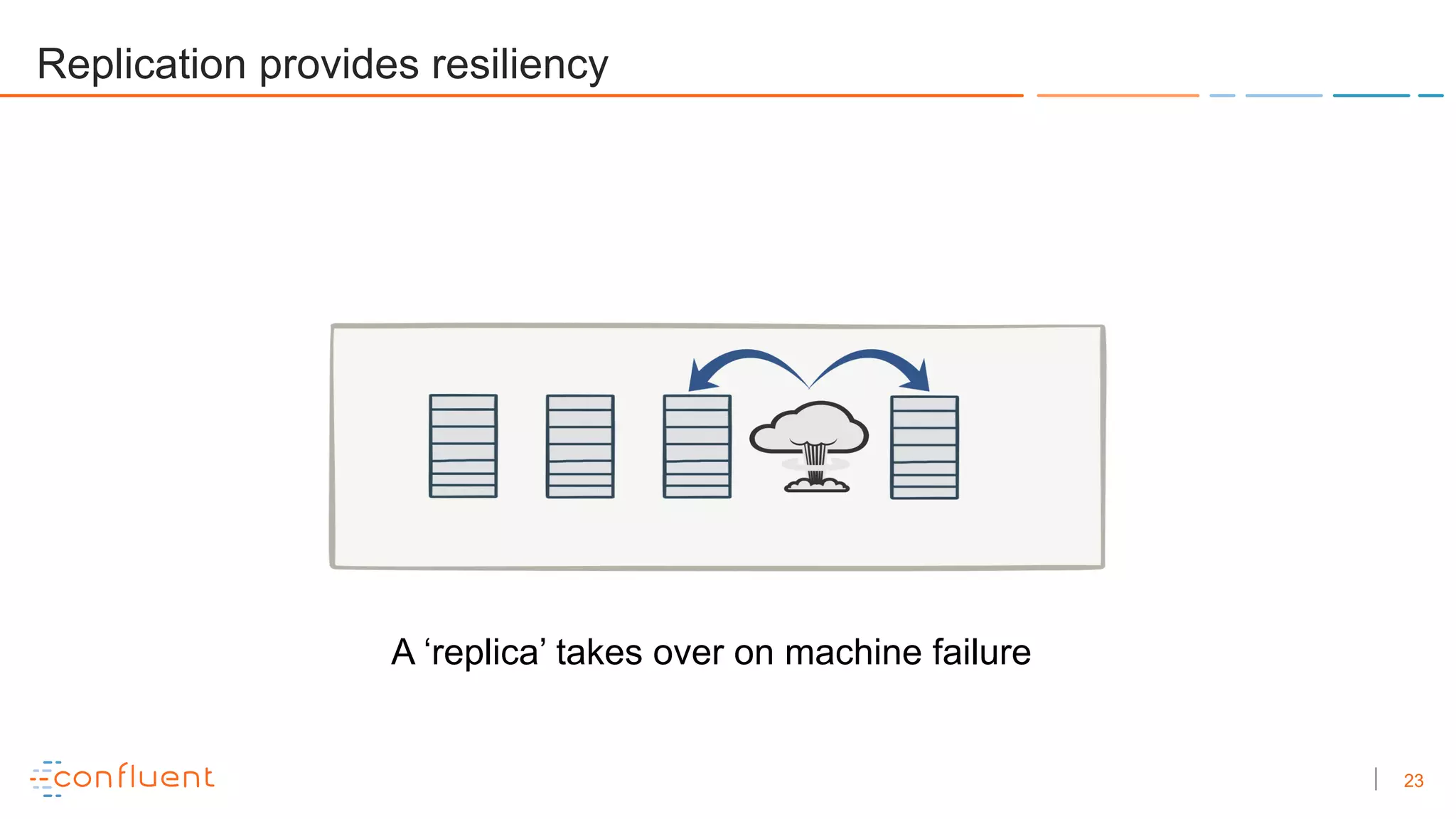

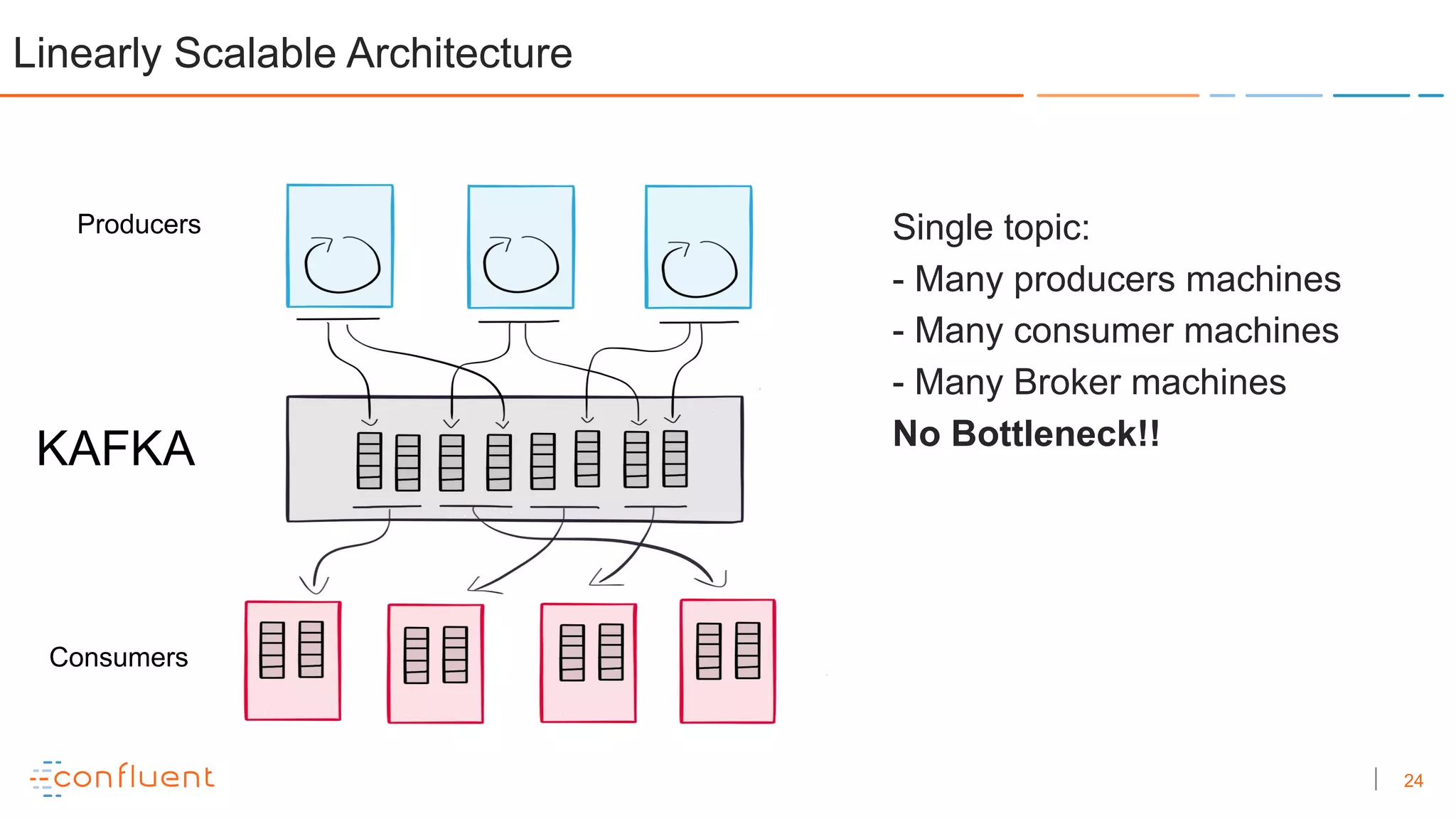

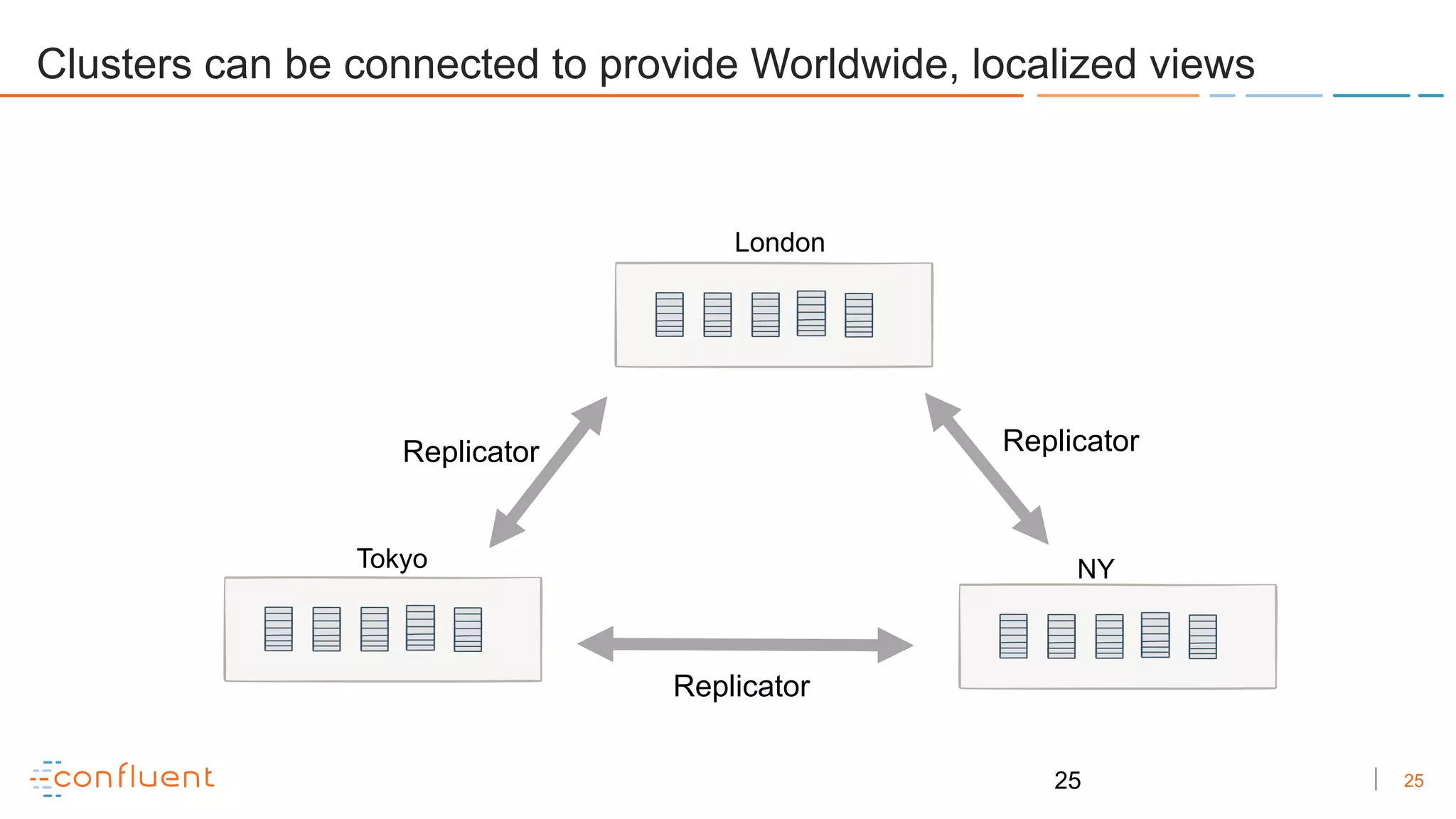

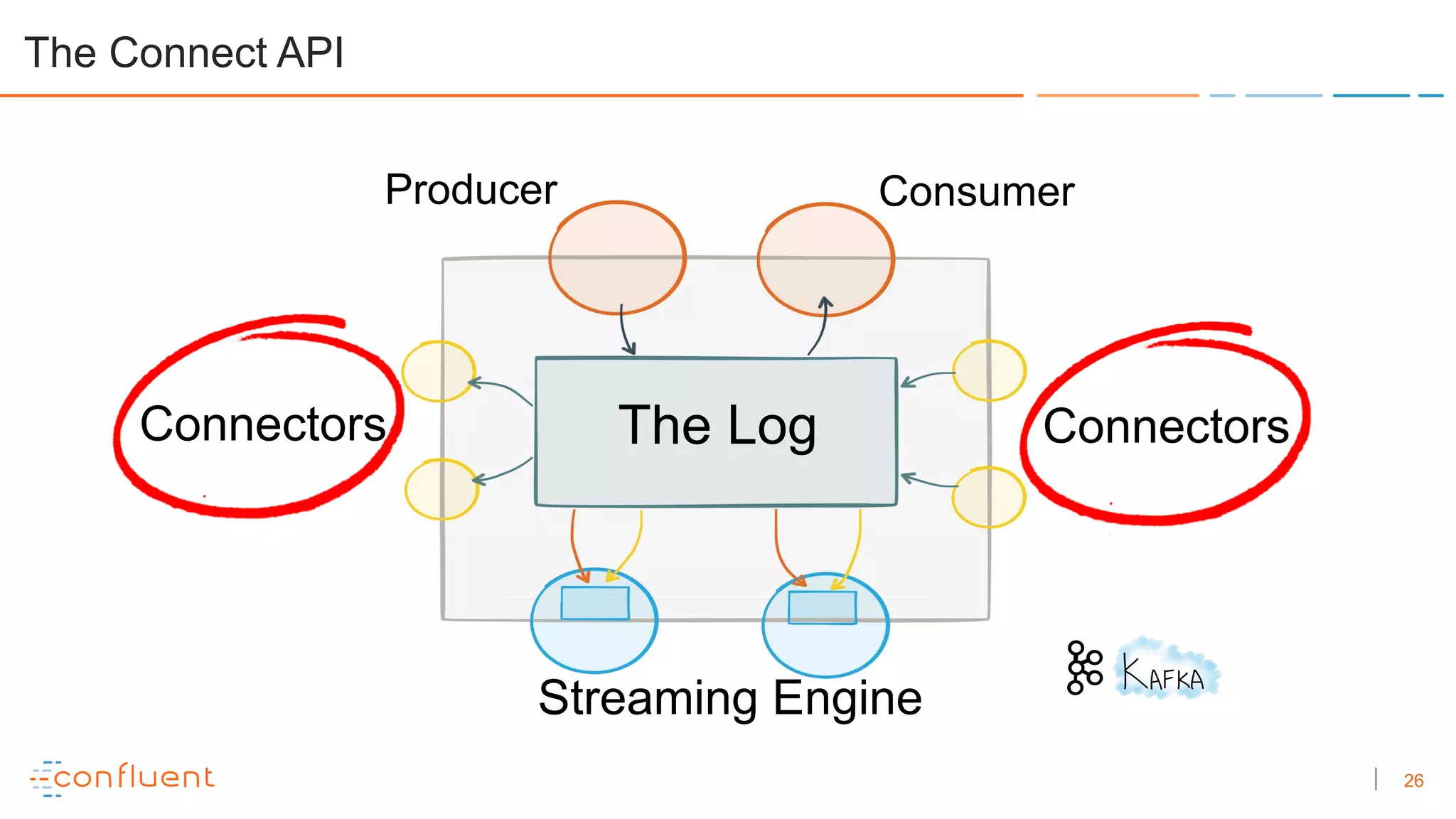

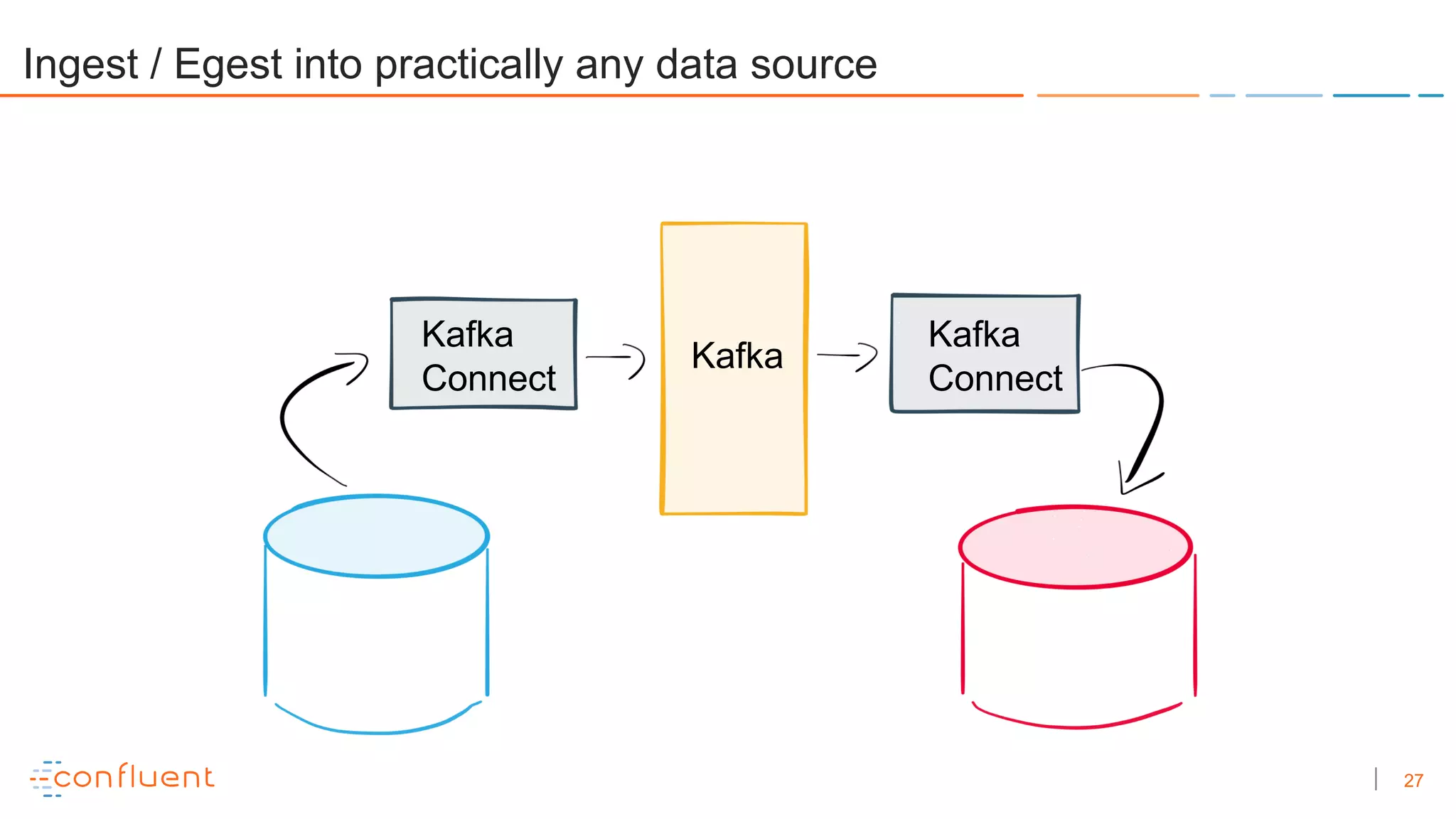

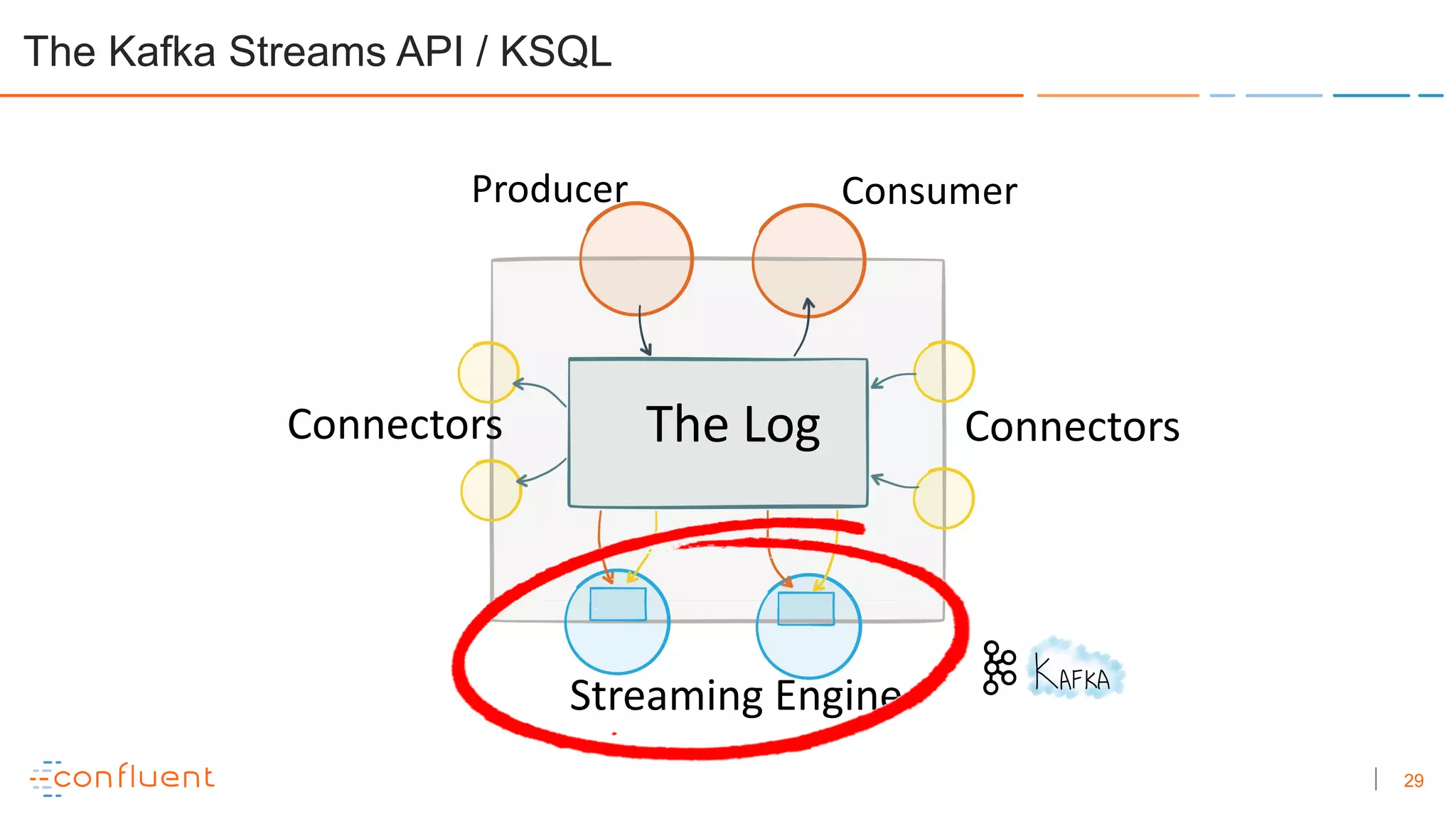

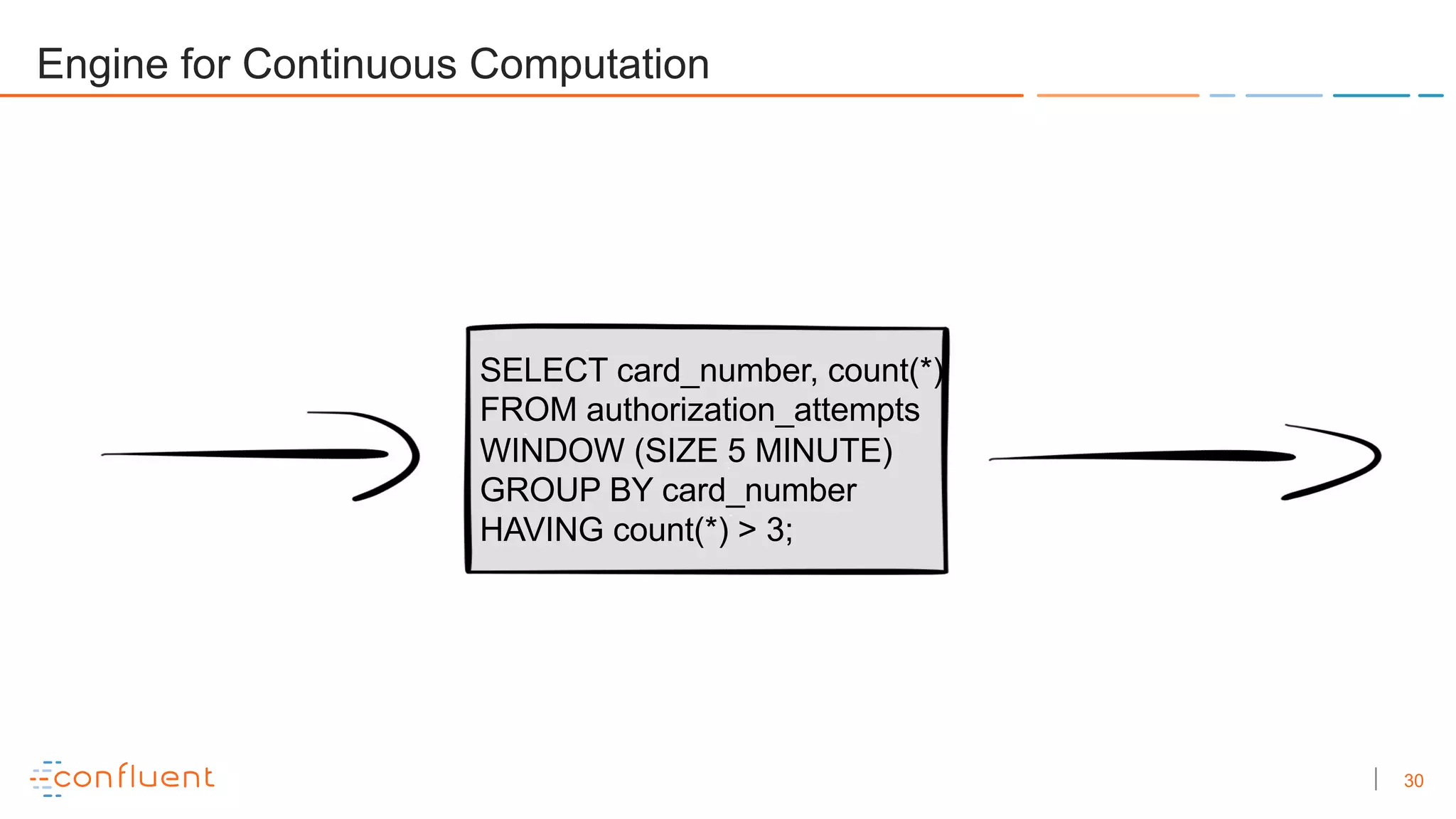

The document outlines how to build a streaming platform with Kafka, covering stream processing, data ingestion, and microservices architecture. It discusses Kafka's benefits, including scalability, fault tolerance, and the ability to decouple services through event-driven design. It also gives examples of event streams and of the Kafka Streams API for building applications on these principles.
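The decoupling idea can be illustrated with a minimal sketch, assuming a single-partition topic modeled in memory. Producers append events to an append-only log without knowing who will read them. Each consumer tracks its own offset, so consumers progress independently. `Event`, `InMemoryTopic`, and `OffsetTrackingConsumer` are hypothetical names used only for illustration. This is not Kafka's client API, which adds partitions, replication, retention, and consumer groups on top of the same log-and-offset model.

```java
import java.util.ArrayList;
import java.util.List;

// One entry in the log: its position (offset), key, and value.
record Event(long offset, String key, String value) {}

// Hypothetical in-memory stand-in for a single-partition topic.
// NOT the Kafka client API; it only models the append-only log idea.
final class InMemoryTopic {
    private final List<Event> log = new ArrayList<>();

    // Producers append and get back the offset; they never learn who reads the event.
    long append(String key, String value) {
        long offset = log.size();
        log.add(new Event(offset, key, value));
        return offset;
    }

    // Return a copy of every event at or after the given offset.
    // Reading does not remove anything from the log.
    List<Event> readFrom(long offset) {
        if (offset >= log.size()) {
            return new ArrayList<>();
        }
        return new ArrayList<>(log.subList((int) offset, log.size()));
    }
}

// Hypothetical consumer that keeps its own position in the log,
// so several consumers can read the same topic at different speeds.
final class OffsetTrackingConsumer {
    private final InMemoryTopic topic;
    private long nextOffset = 0;

    OffsetTrackingConsumer(InMemoryTopic topic) {
        this.topic = topic;
    }

    // Fetch everything appended since the last poll and advance the offset.
    List<Event> poll() {
        List<Event> batch = topic.readFrom(nextOffset);
        nextOffset += batch.size();
        return batch;
    }
}
```

Because events stay in the log after being read, a consumer added later (say, a hypothetical analytics service) can start at offset 0 and replay the full history, so new services can join without any change to the producer.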

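![Slide 31](https://image.slidesharecdn.com/urbonbayesperemetupberlinoct18kafkastreamingplatform-181029171240/75/Building-a-Streaming-Platform-with-Kafka-30-2048.jpg)

But it's just an API

```java
import org.apache.kafka.streams.KafkaStreams;
import org.apache.kafka.streams.StreamsBuilder;

public static void main(String[] args) {
    StreamsBuilder builder = new StreamsBuilder();
    // Read each record from "caterpillars", transform it, write it to "butterflies".
    builder.stream("caterpillars")
        .map((k, v) -> coolTransformation(k, v))
        .to("butterflies");
    // props() (the Streams config) and coolTransformation() (must return a KeyValue) are not shown on the slide.
    new KafkaStreams(builder.build(), props()).start();
}
```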
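To make the slide's record-at-a-time semantics concrete without a running cluster, here is a stdlib-only Java model of the same `stream -> map -> to` pipeline. The slide never defines `coolTransformation`, so the version below (keep the key, upper-case the value) is a hypothetical stand-in, as are the names `MapTopologyModel` and `run`. Real Kafka Streams also handles partitioning, state, and fault tolerance, which this model ignores.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.function.BiFunction;

// Hypothetical stdlib-only model of the slide's topology:
// stream("caterpillars") -> map(coolTransformation) -> to("butterflies").
// It mimics record-at-a-time processing; it is not Kafka Streams itself.
final class MapTopologyModel {

    // Stand-in for the slide's undefined coolTransformation:
    // keeps the key and upper-cases the value.
    static Map.Entry<String, String> coolTransformation(String key, String value) {
        return Map.entry(key, value.toUpperCase());
    }

    // Apply the key/value mapper to each input record in order and
    // append each result to the output list (the "butterflies" topic).
    static List<Map.Entry<String, String>> run(
            List<Map.Entry<String, String>> caterpillars,
            BiFunction<String, String, Map.Entry<String, String>> mapper) {
        List<Map.Entry<String, String>> butterflies = new ArrayList<>();
        for (Map.Entry<String, String> record : caterpillars) {
            butterflies.add(mapper.apply(record.getKey(), record.getValue()));
        }
        return butterflies;
    }
}
```

In the real API, `map` must return a `KeyValue` and may change the key, which marks the stream for repartitioning before a later key-based operation such as a grouping or join. When only the value changes, `mapValues` avoids that.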