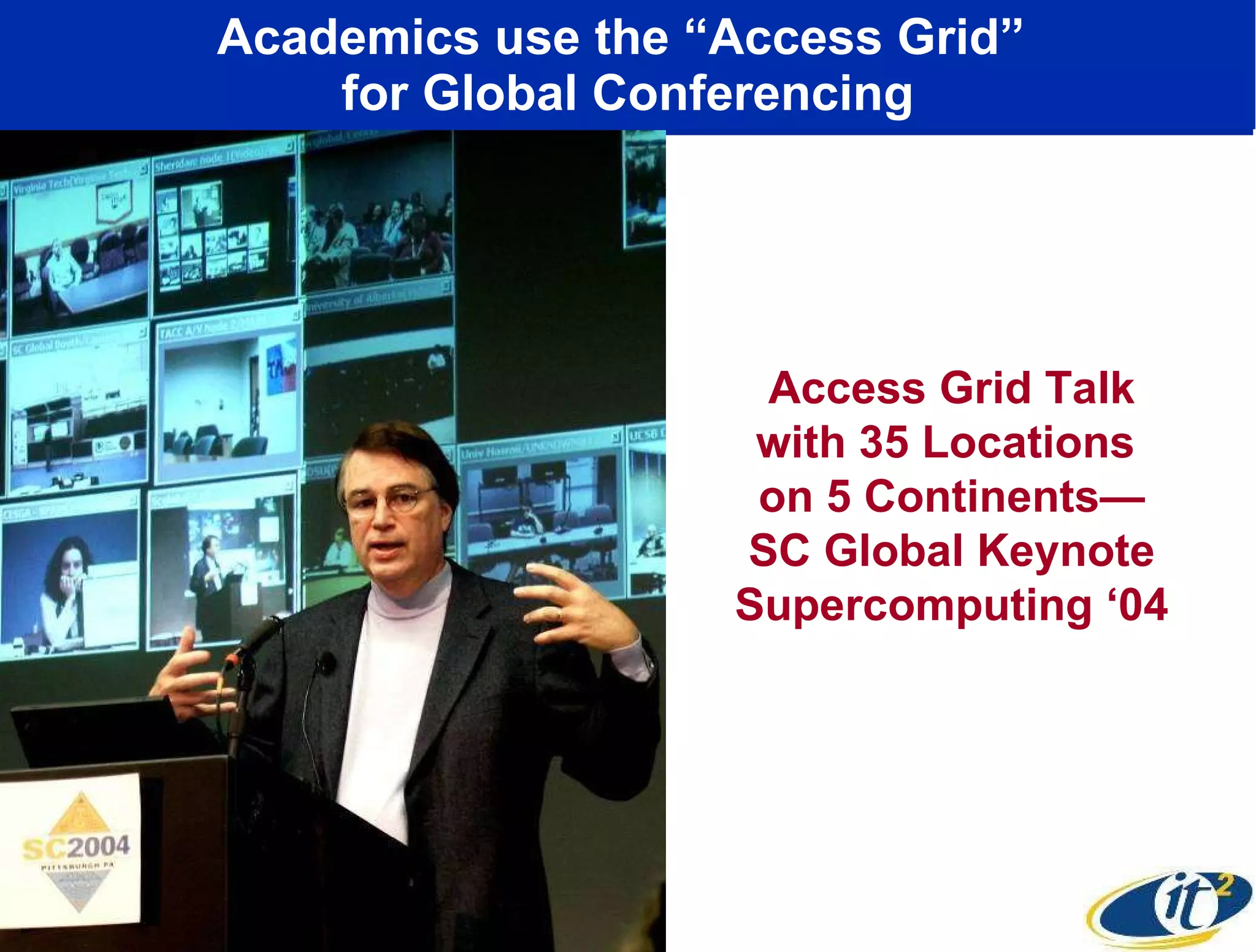

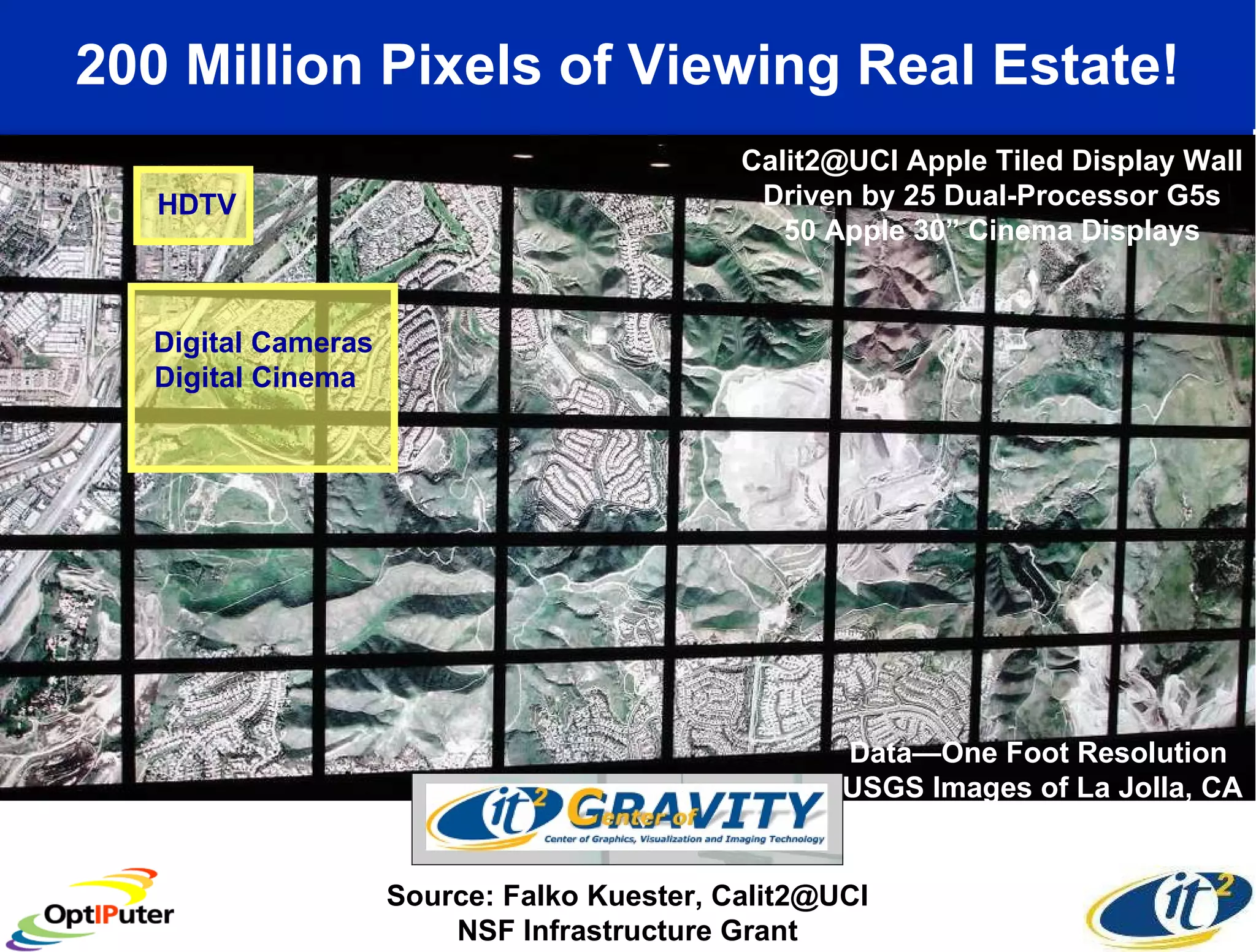

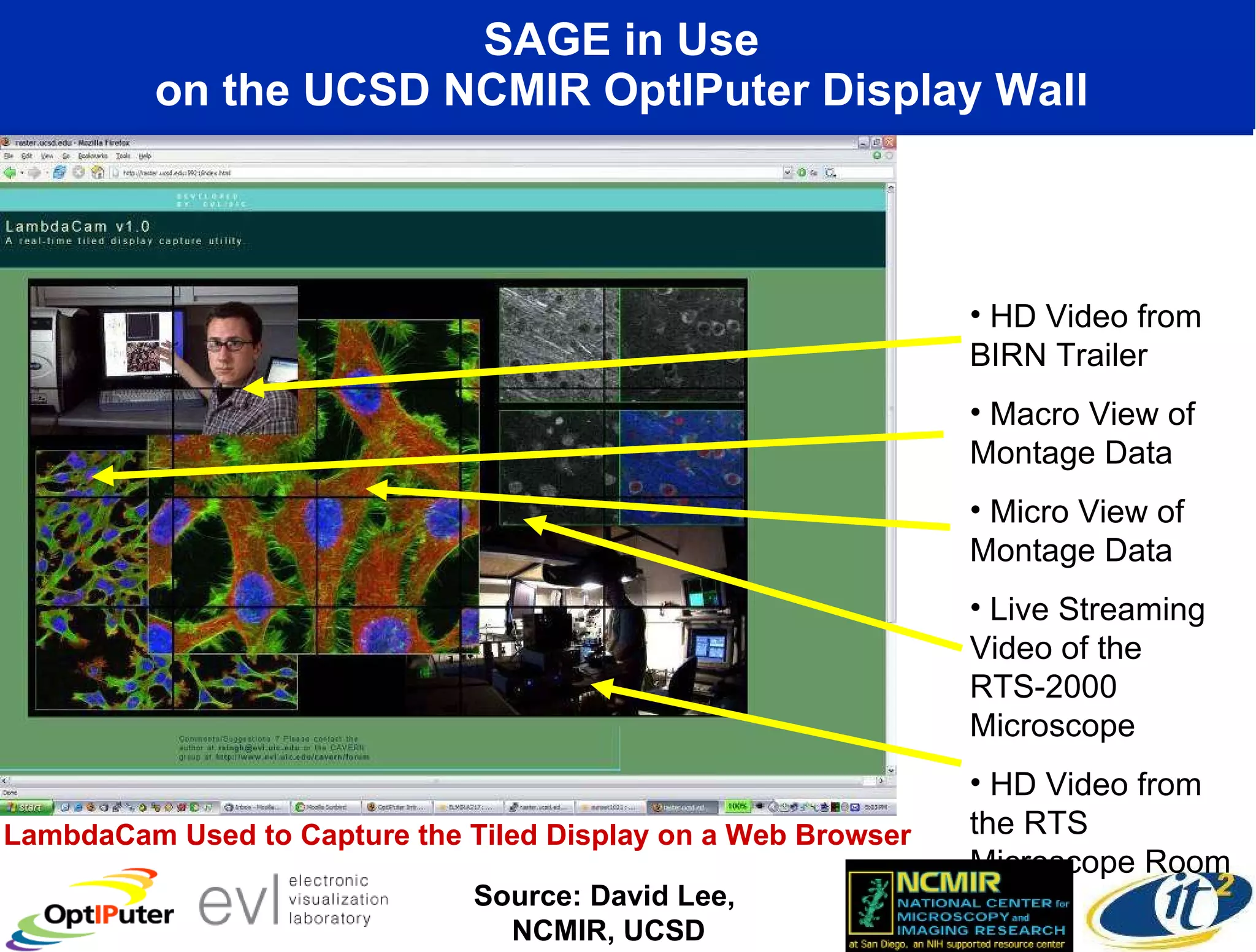

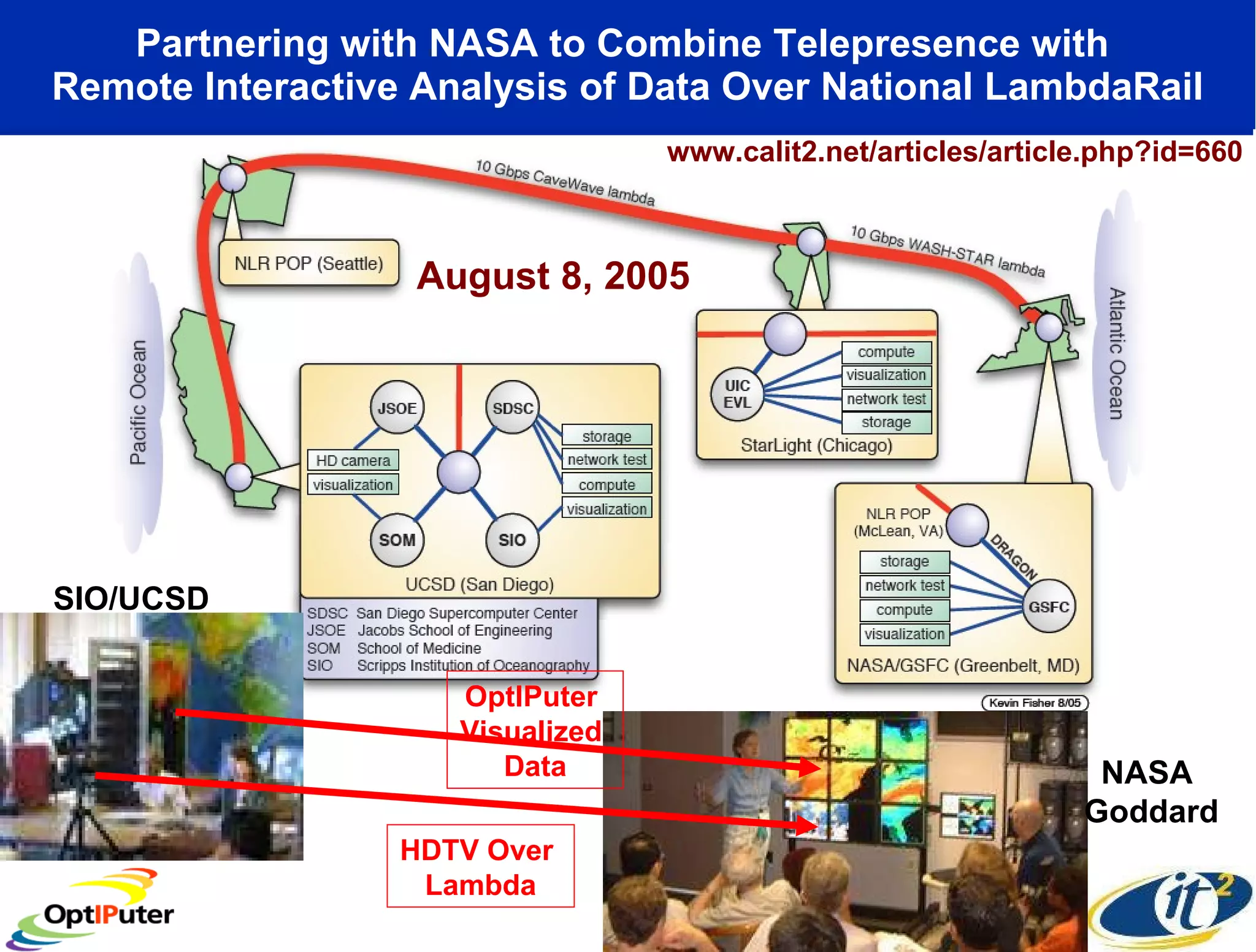

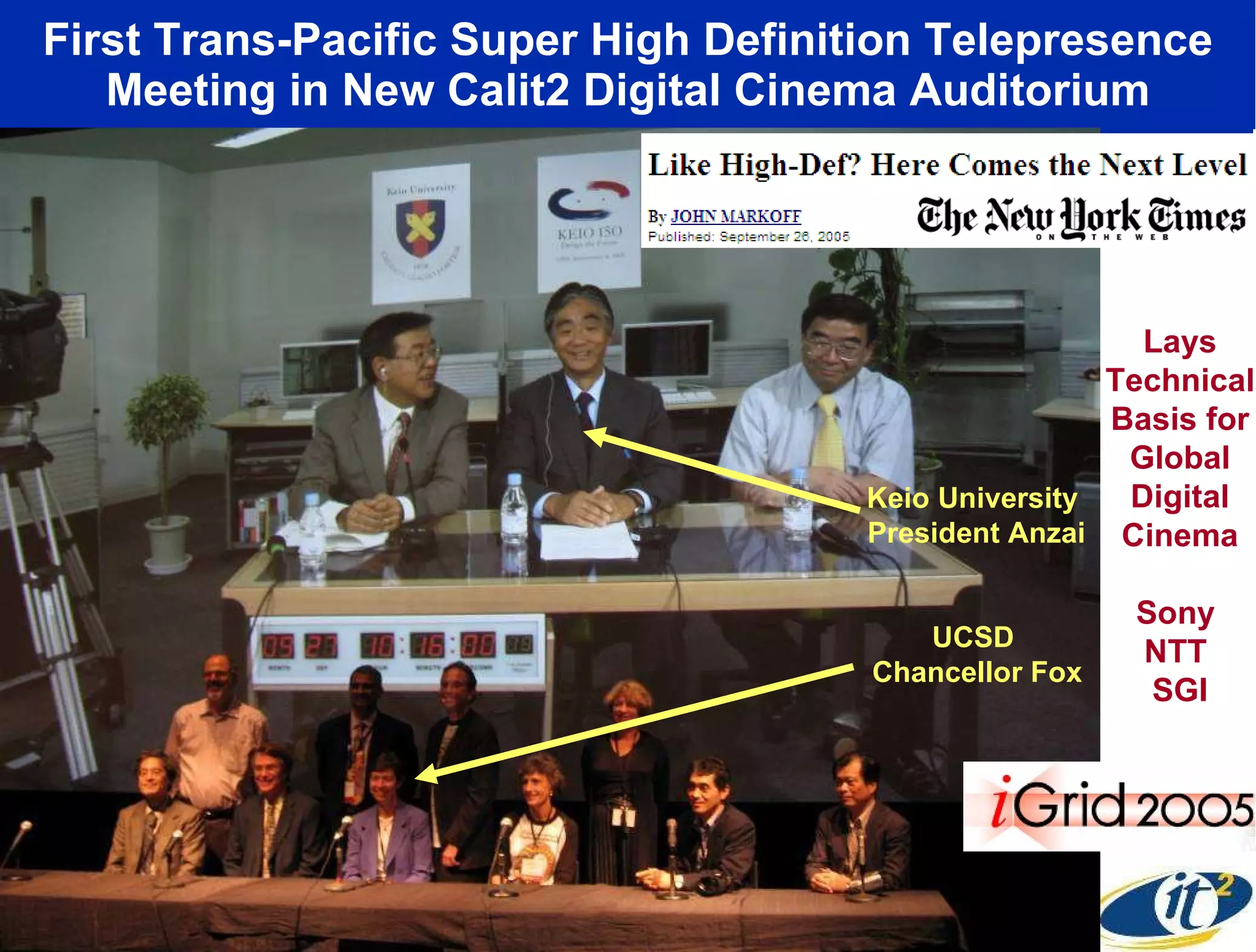

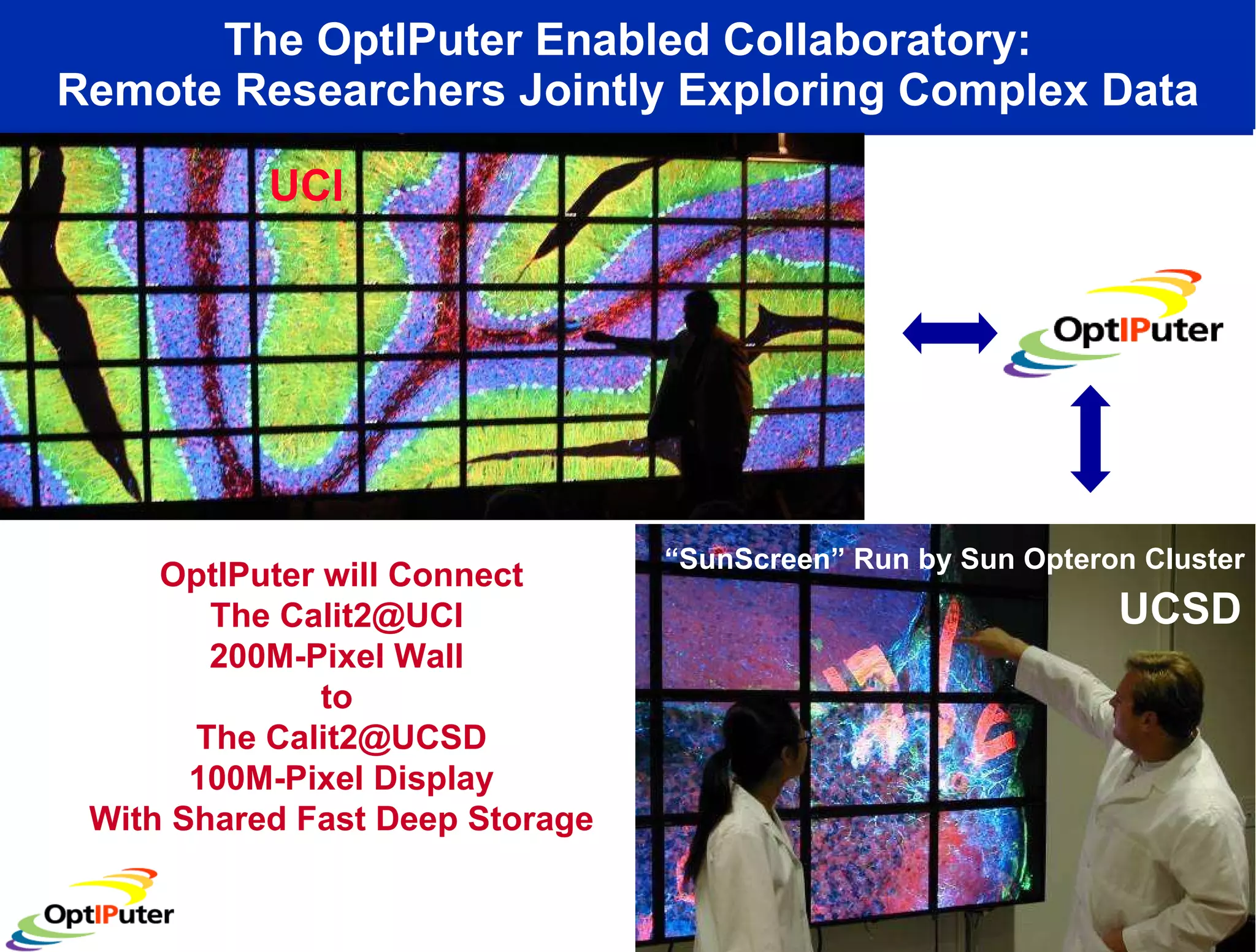

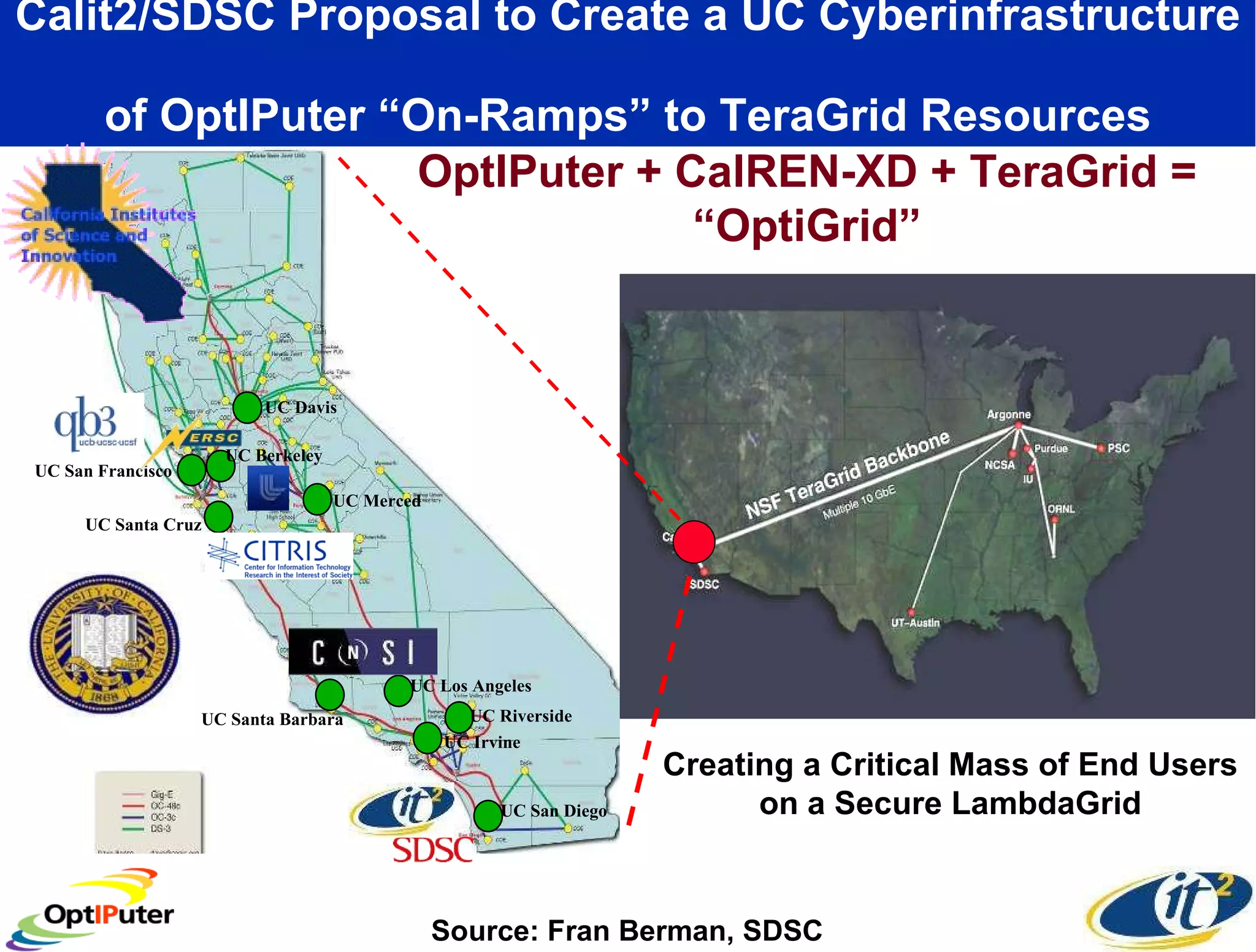

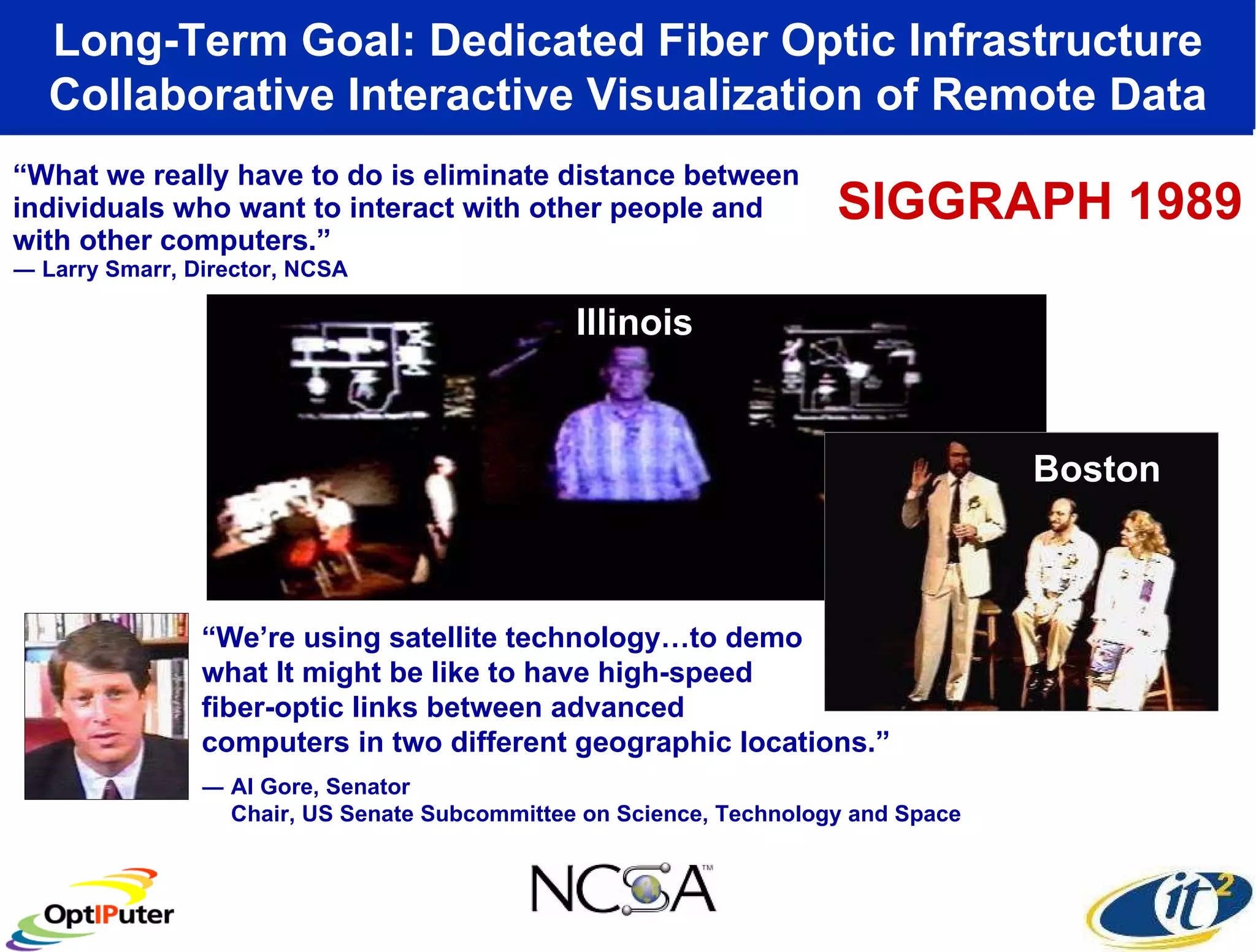

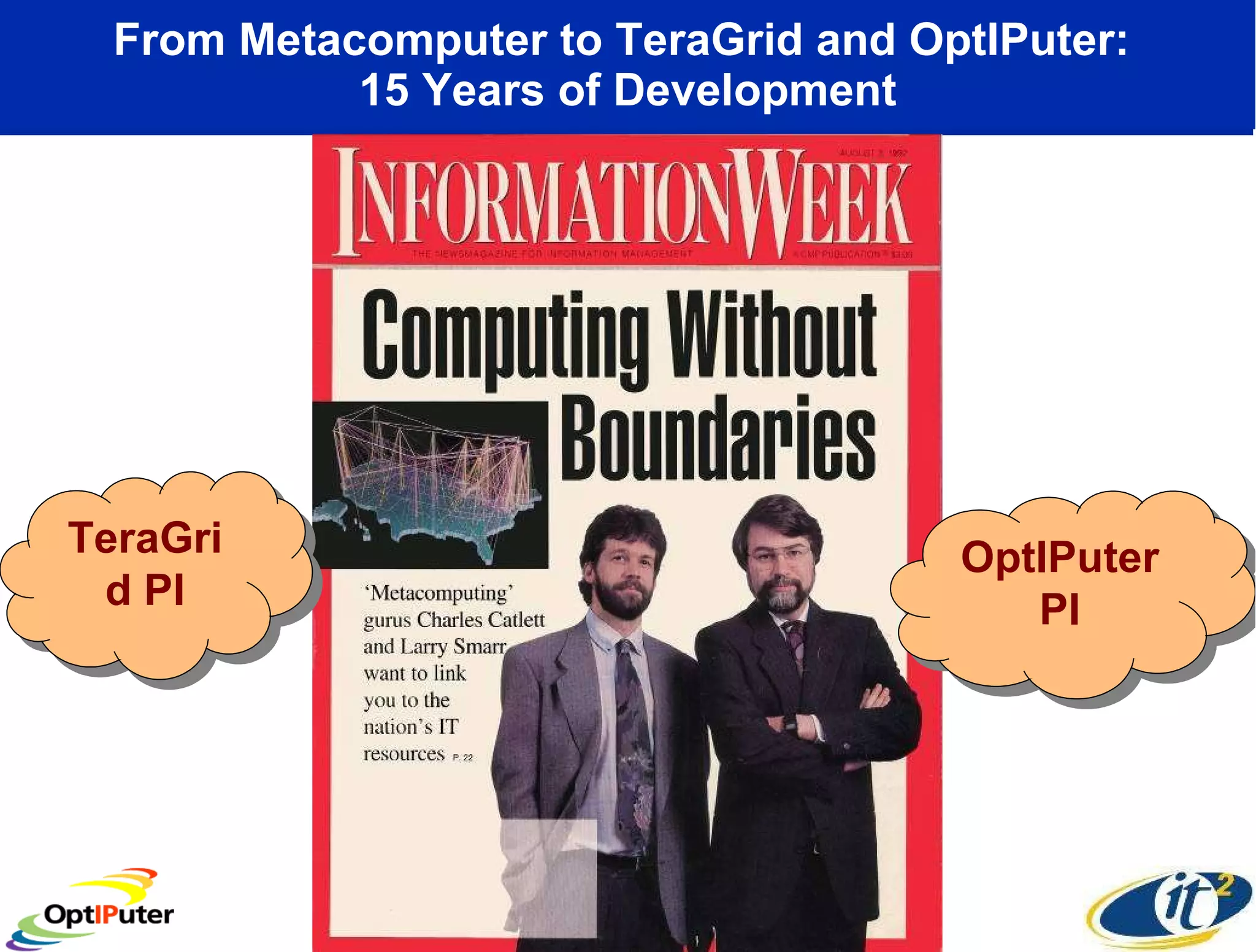

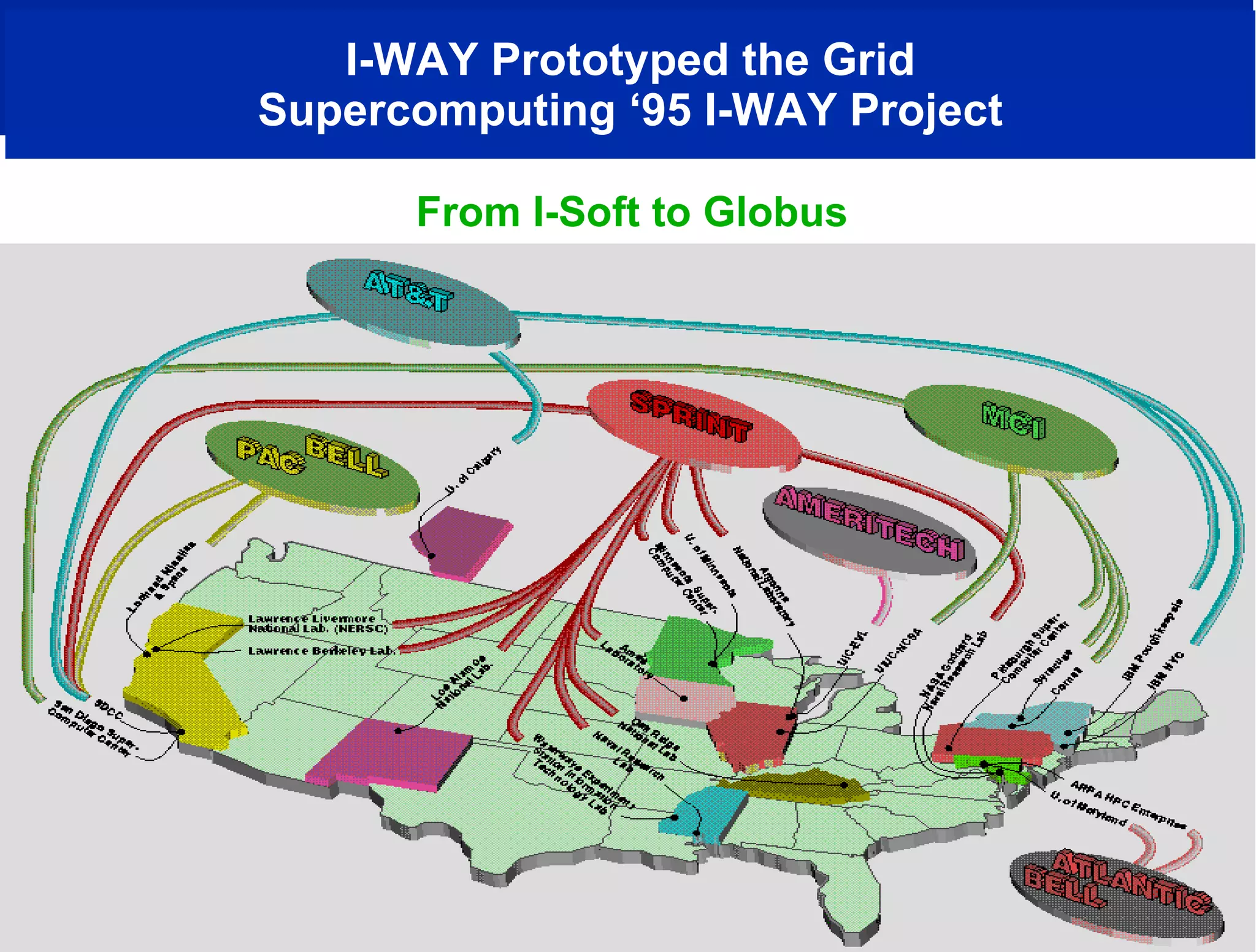

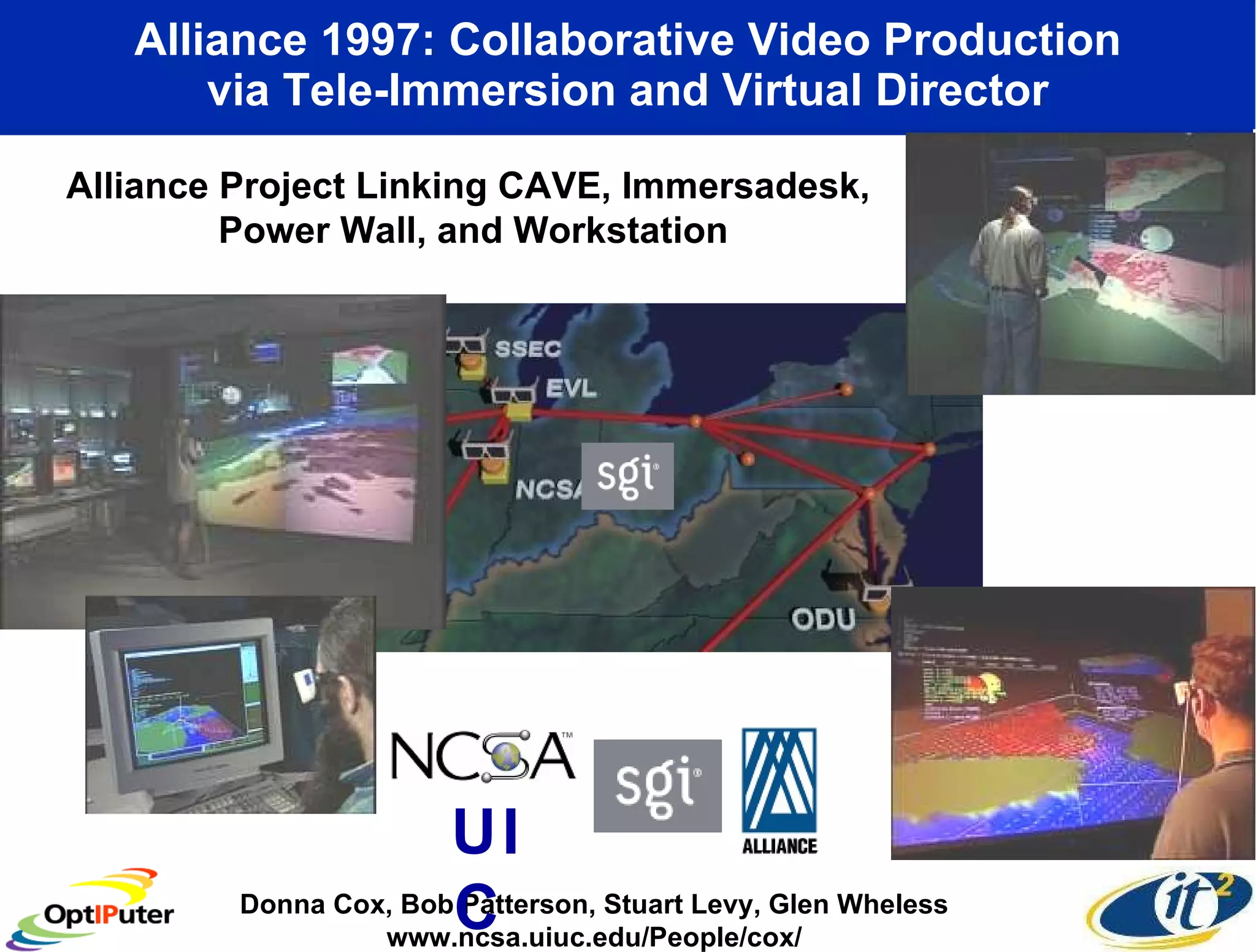

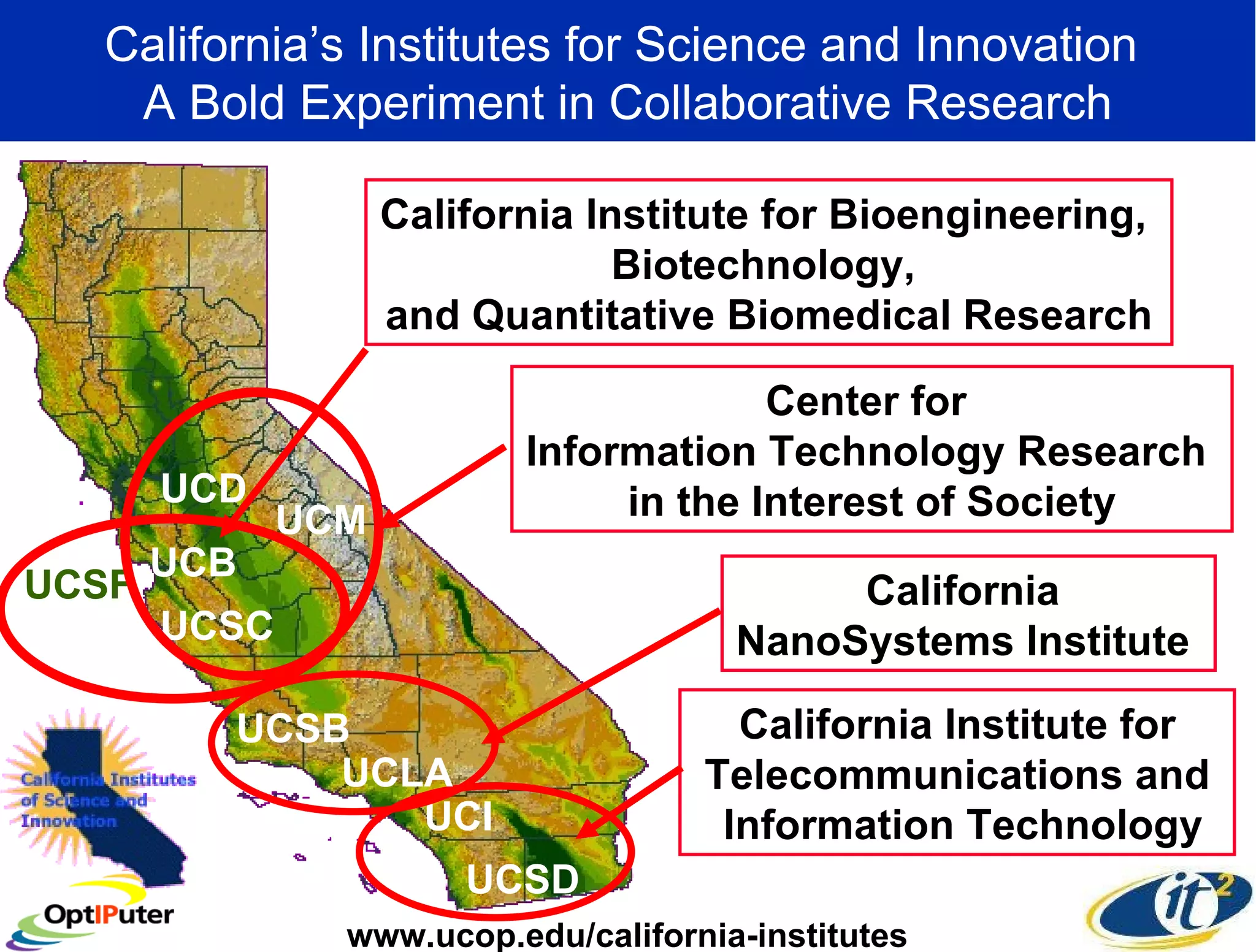

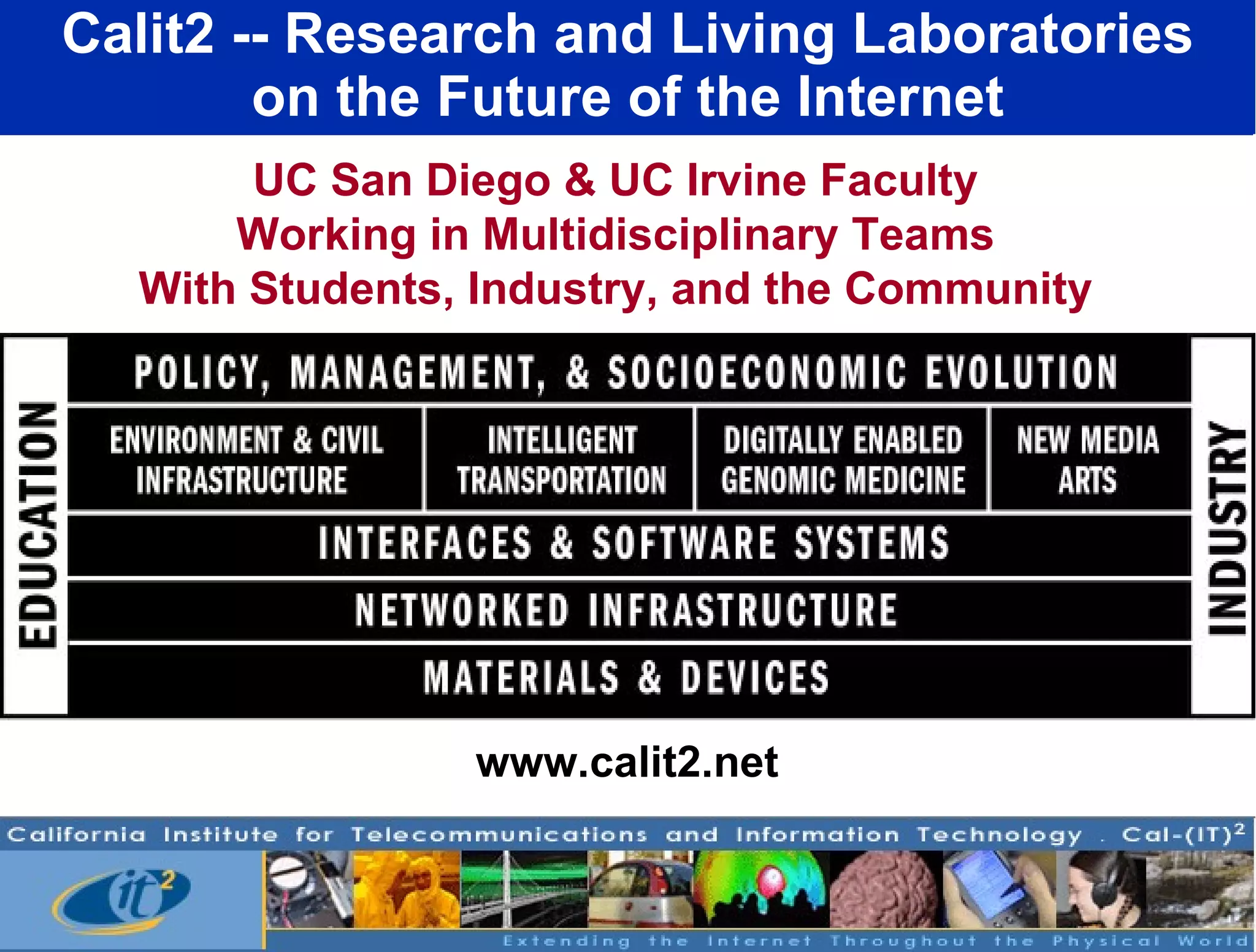

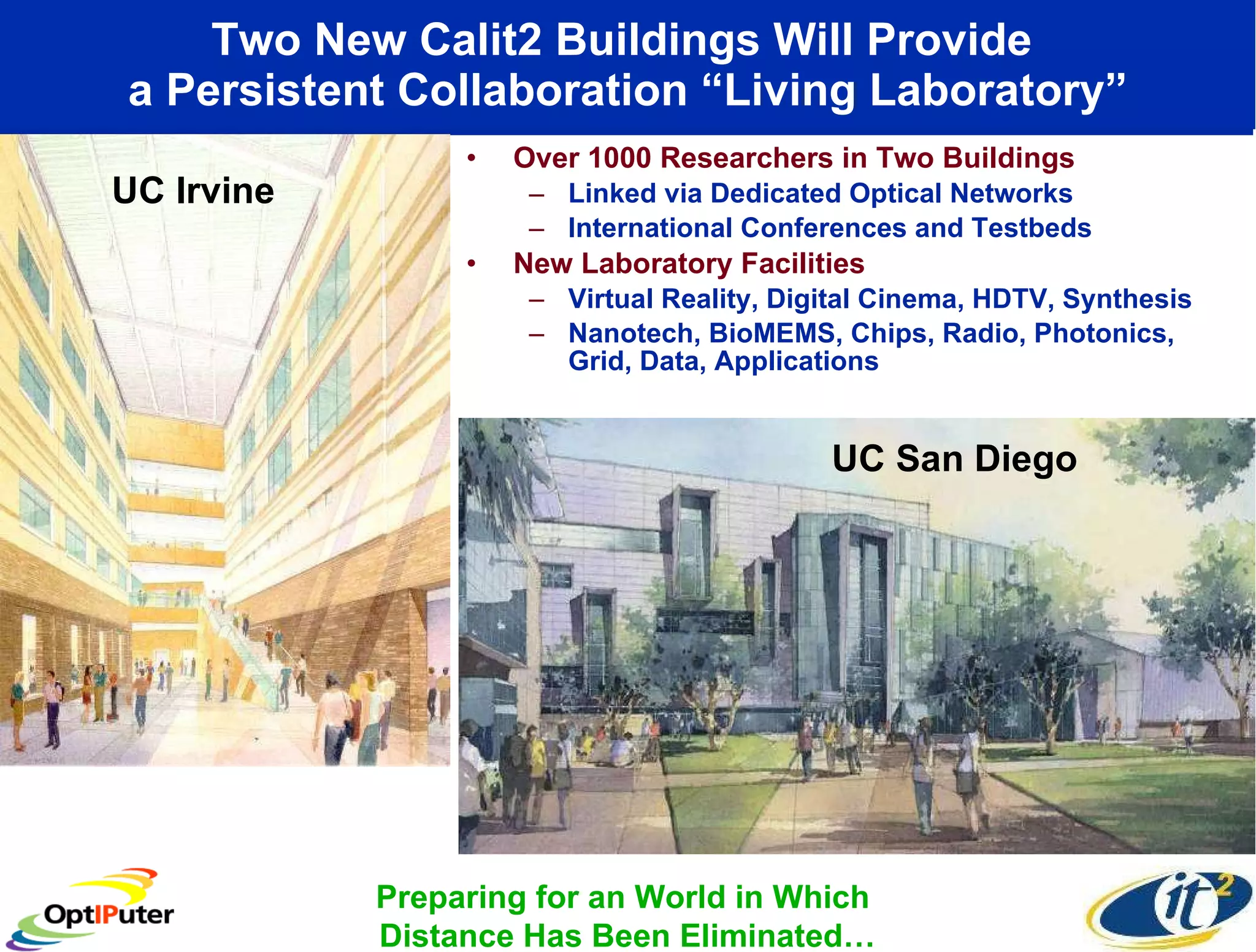

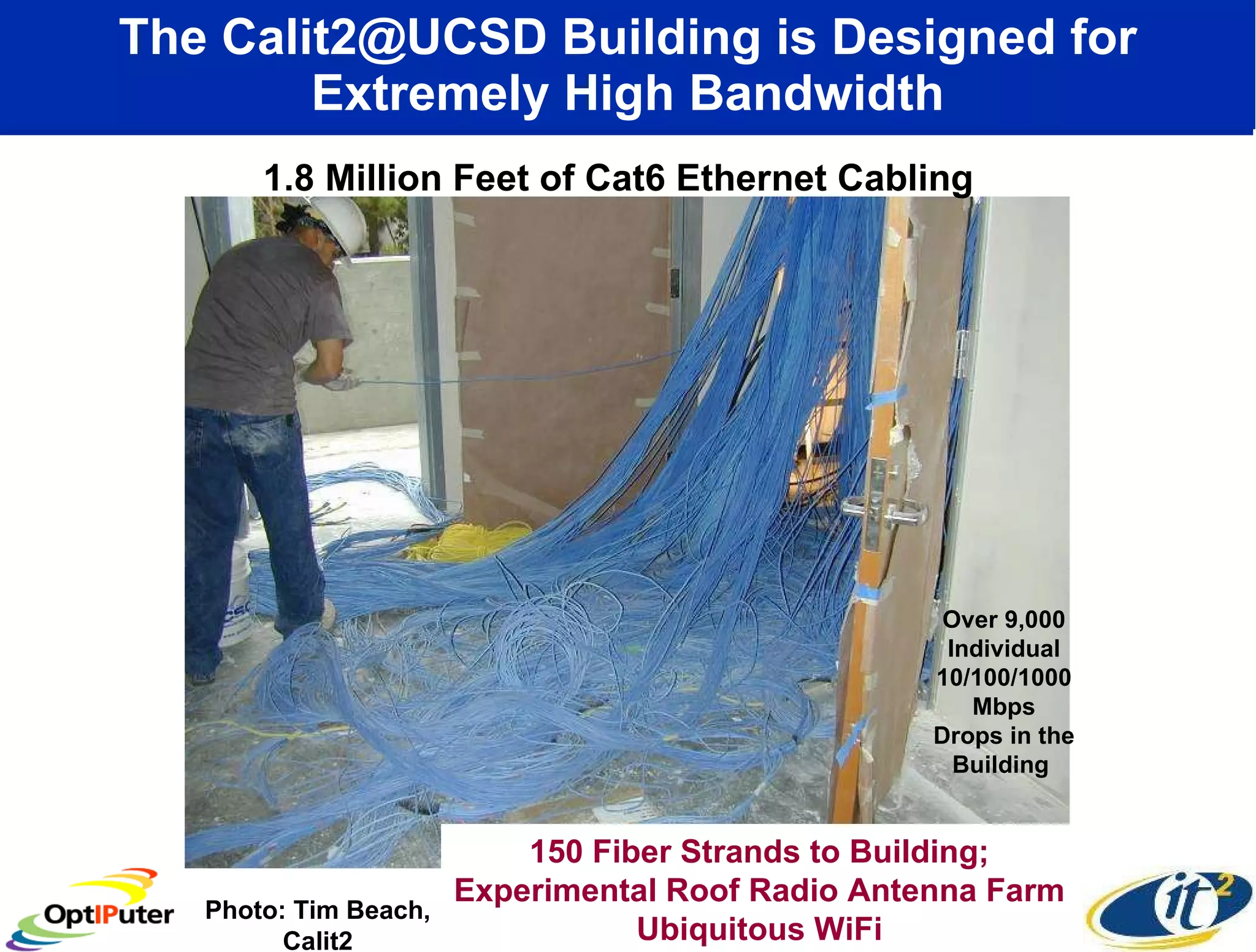

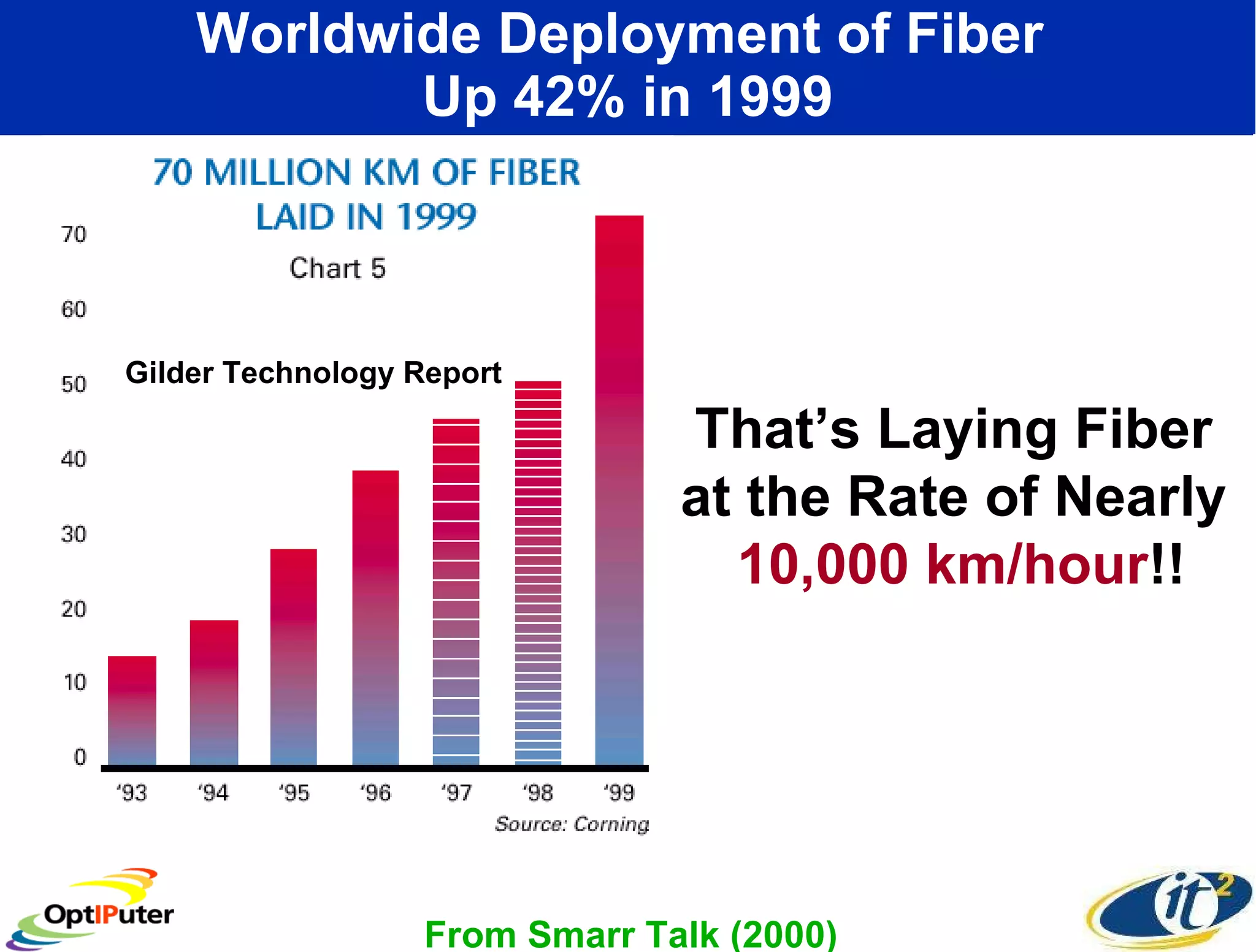

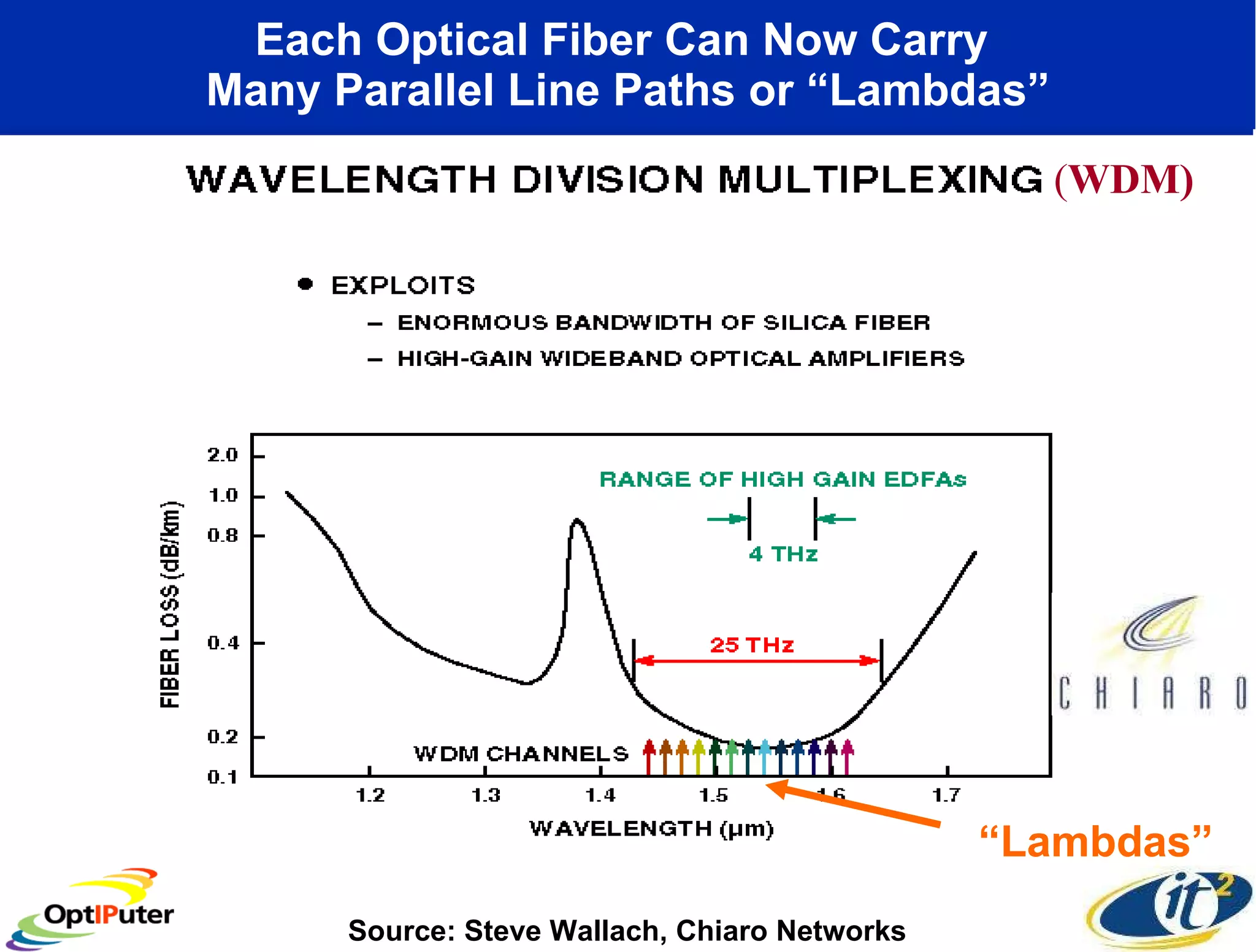

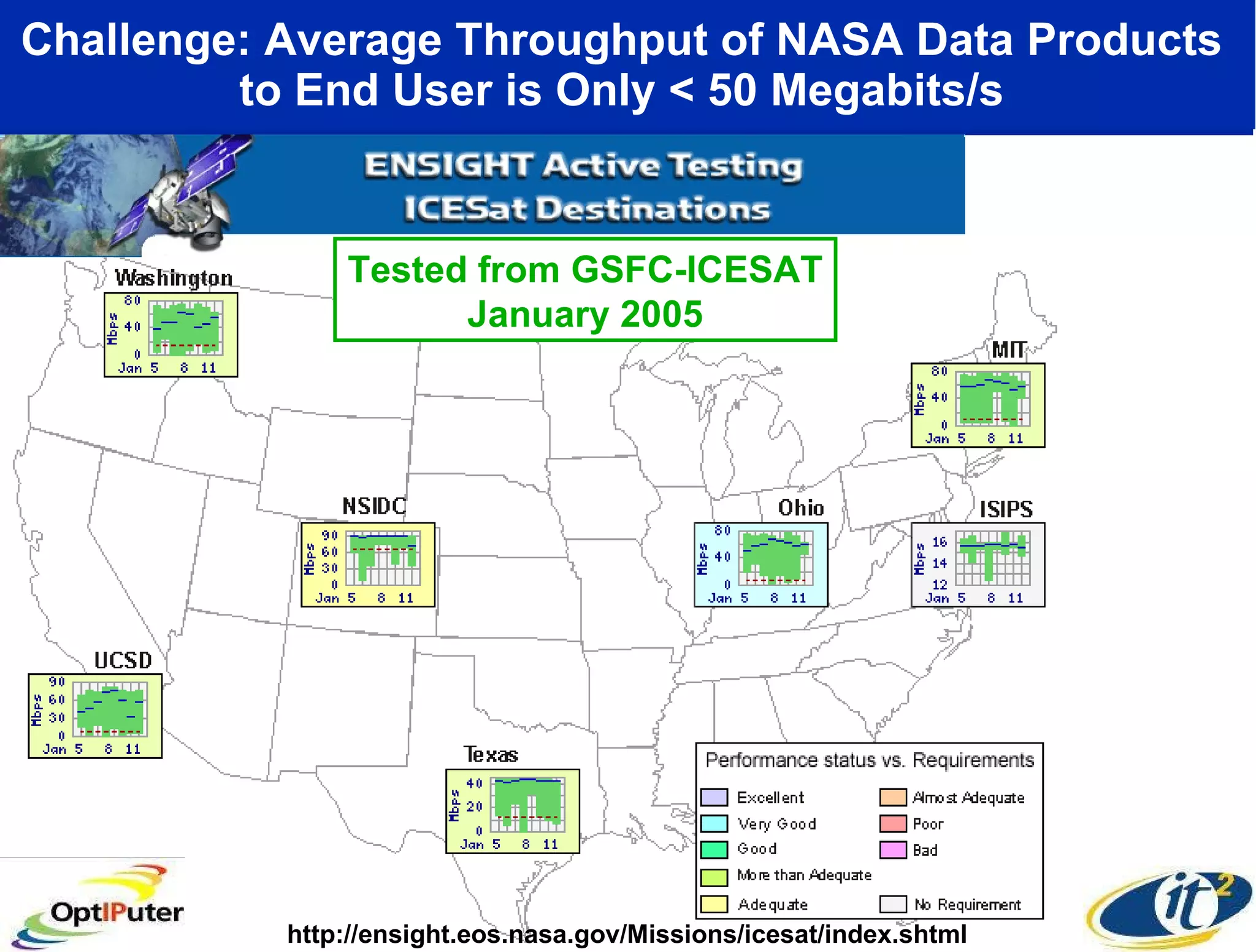

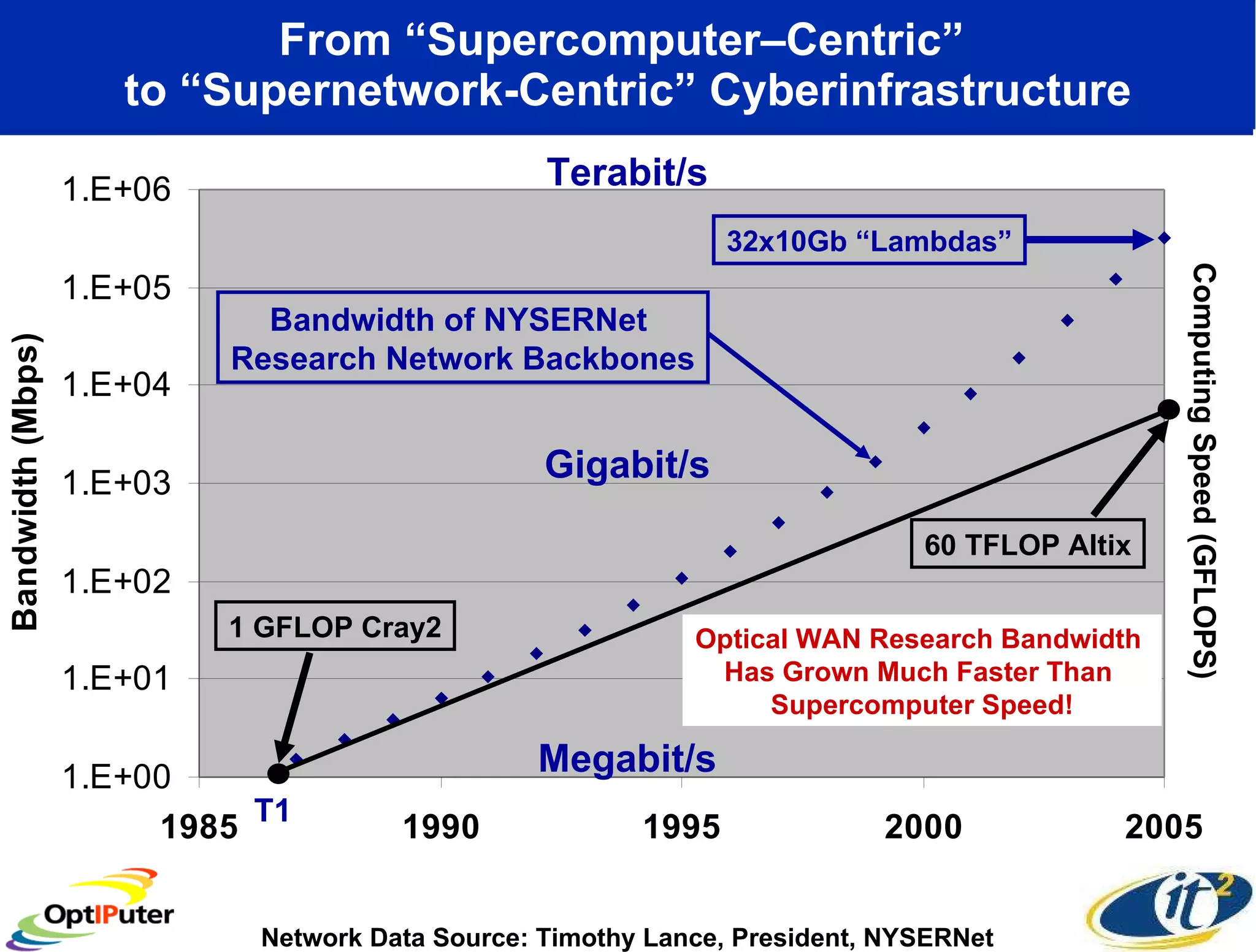

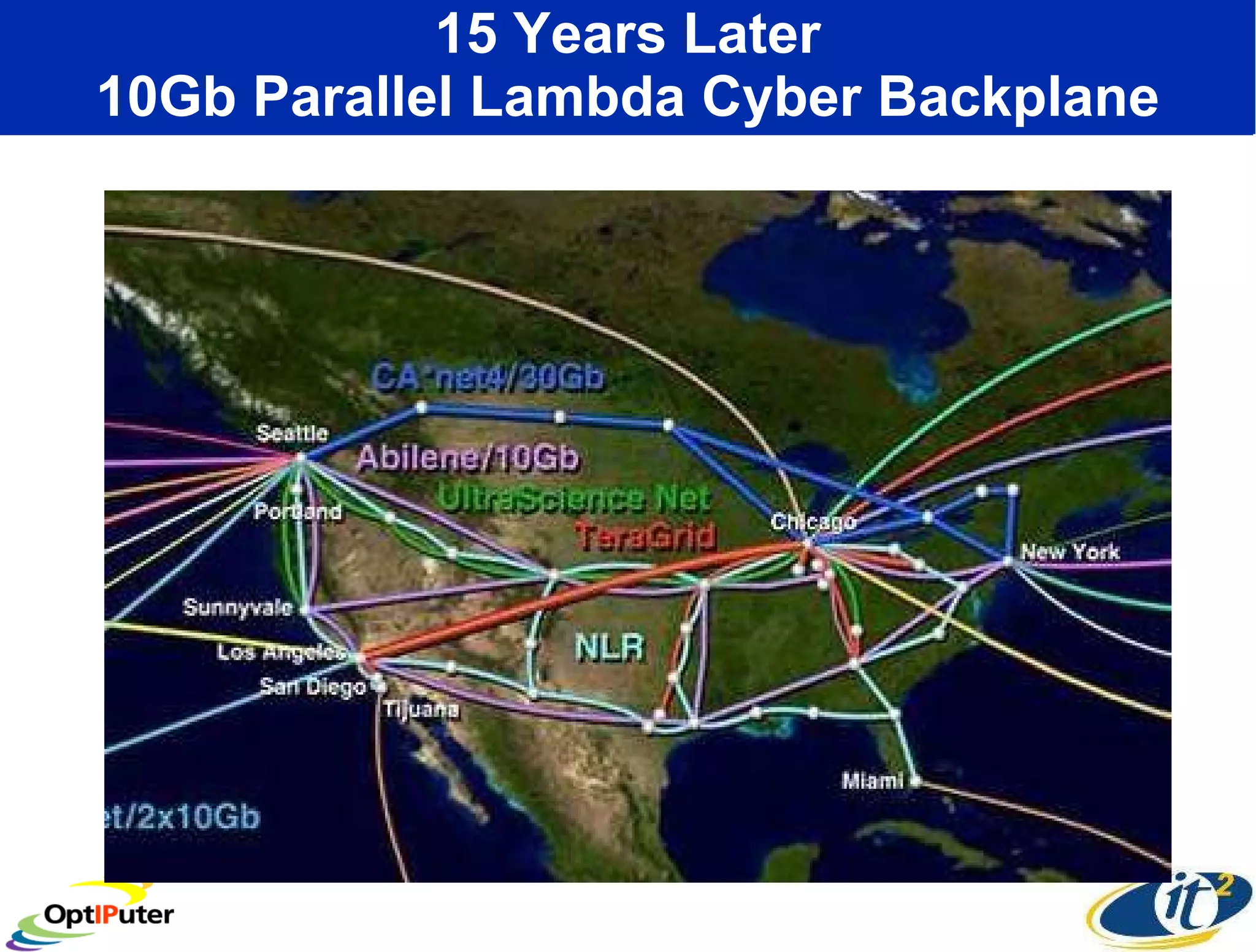

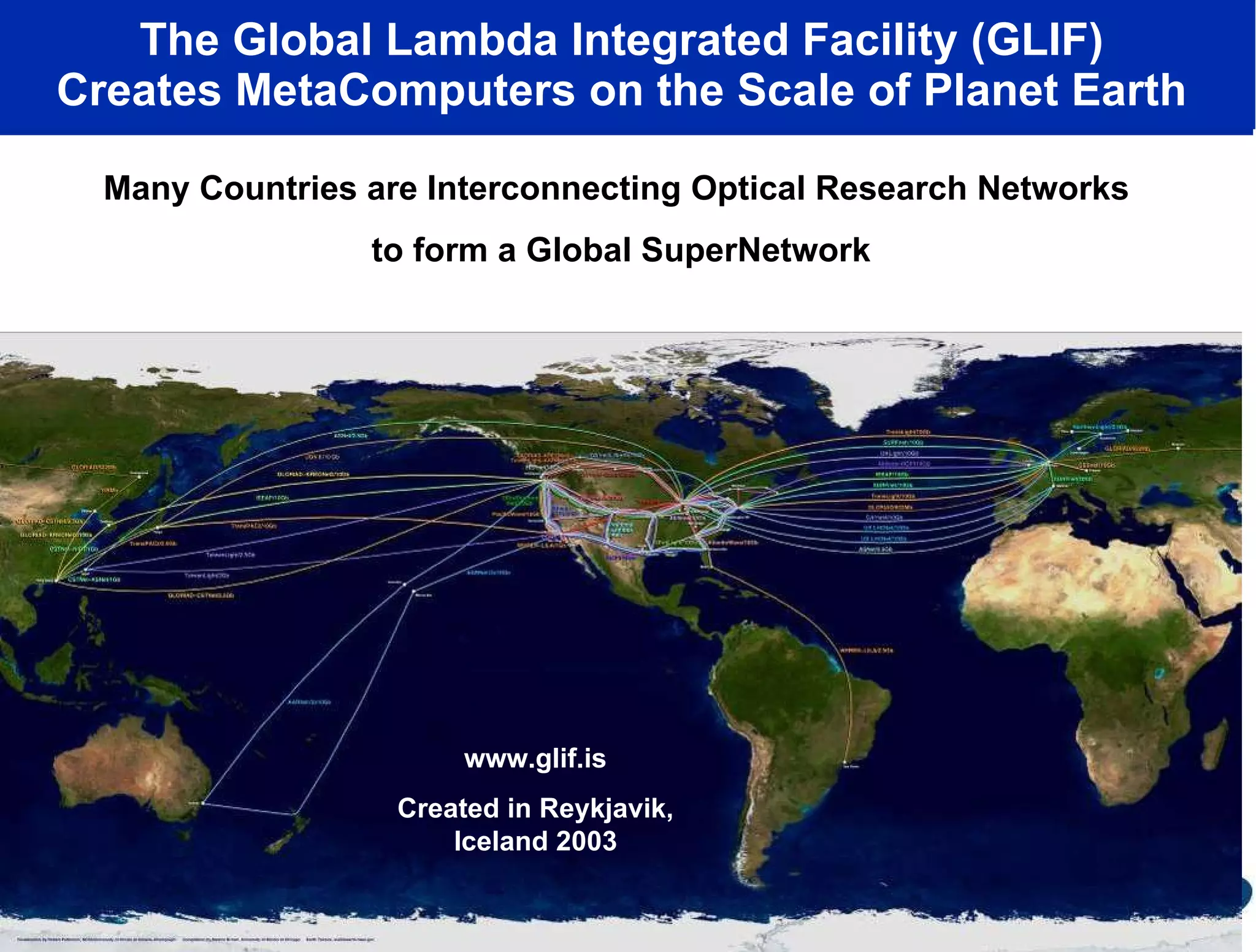

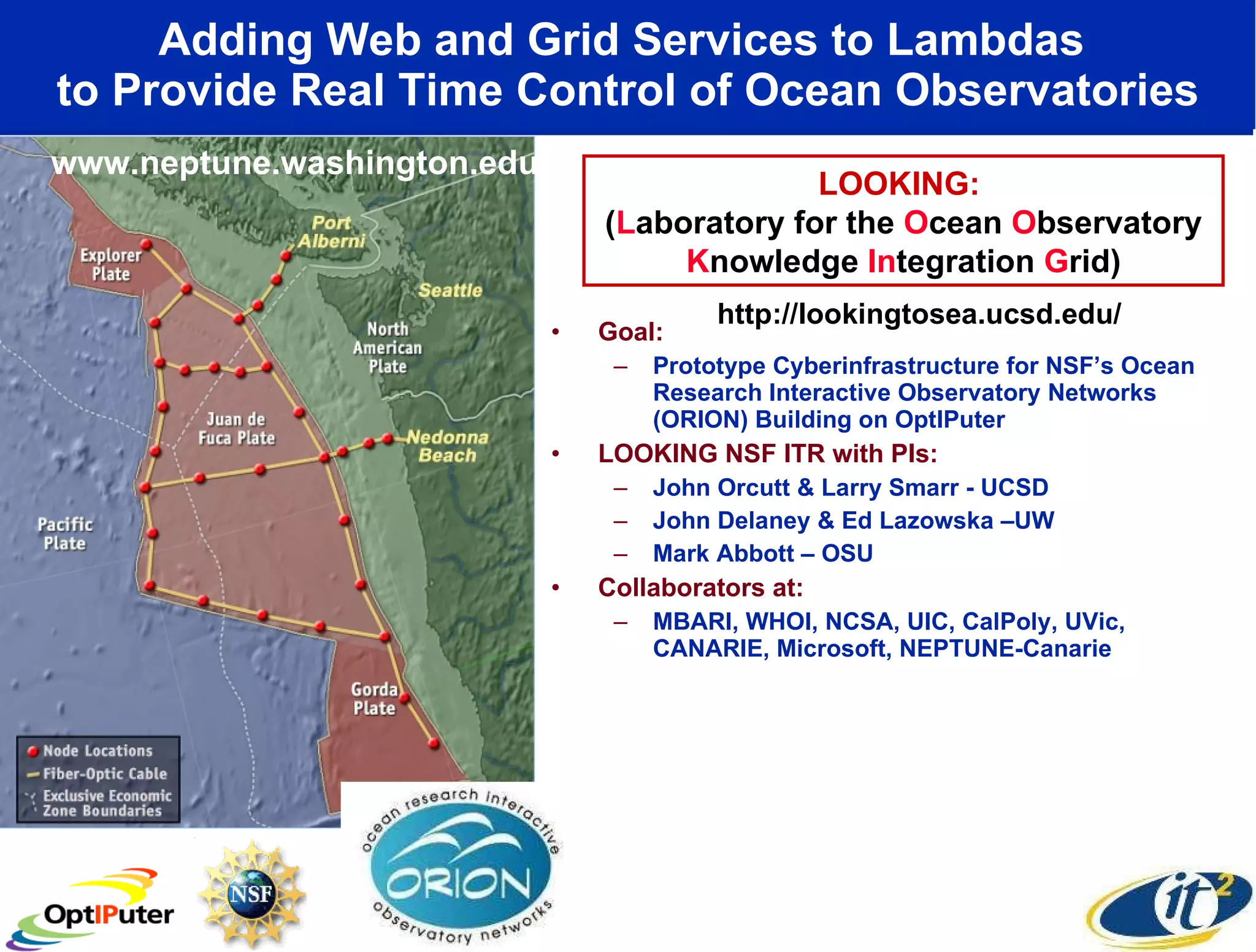

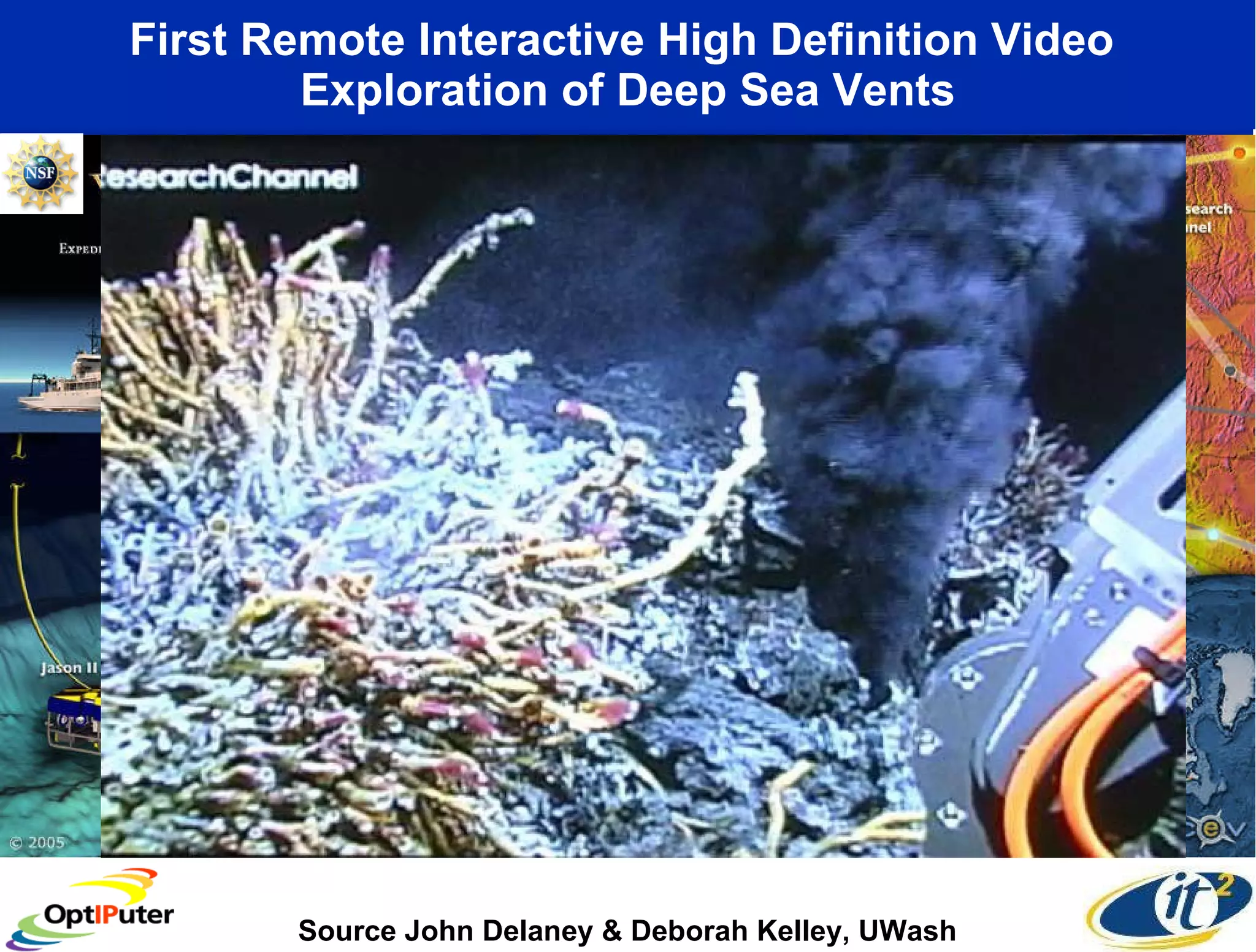

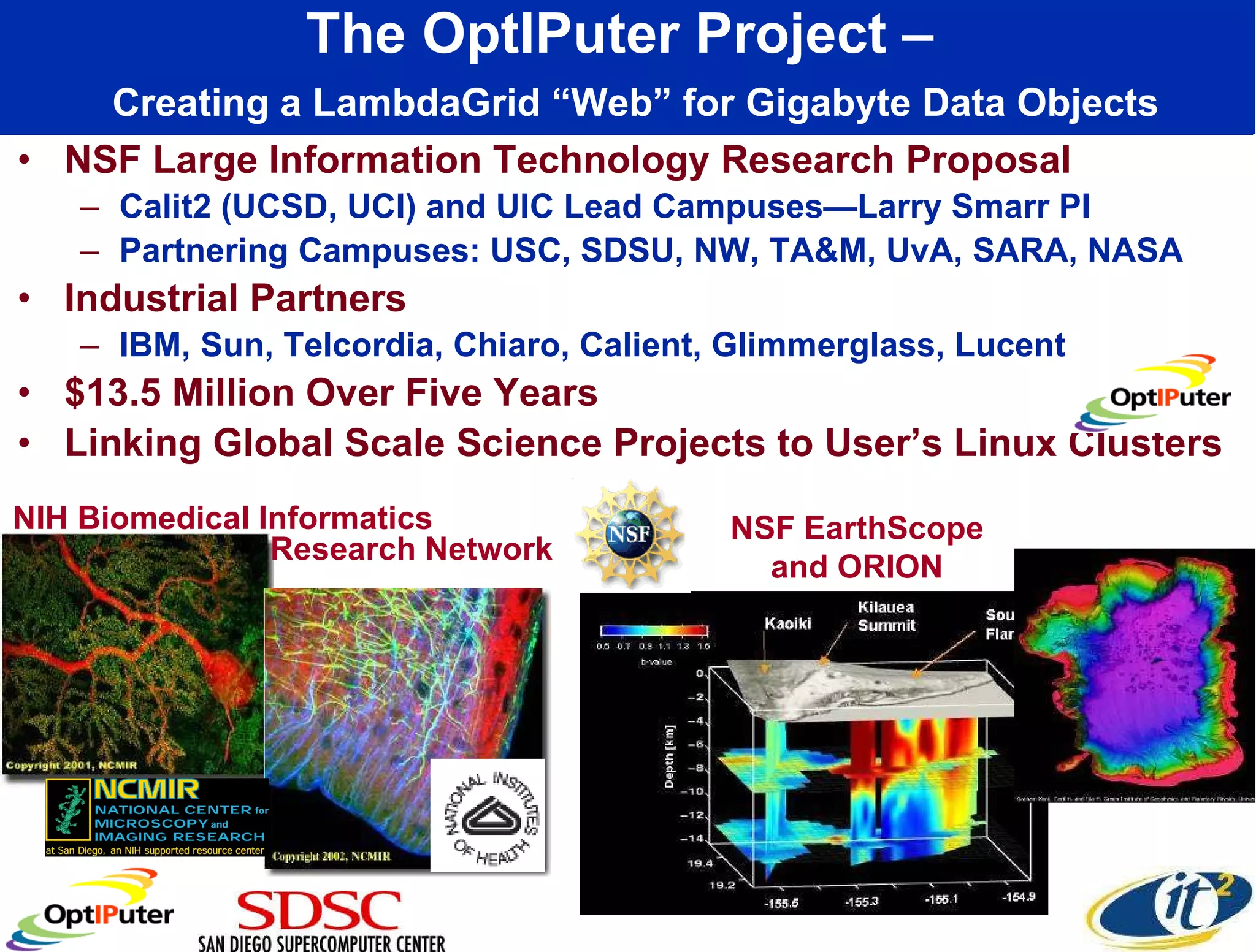

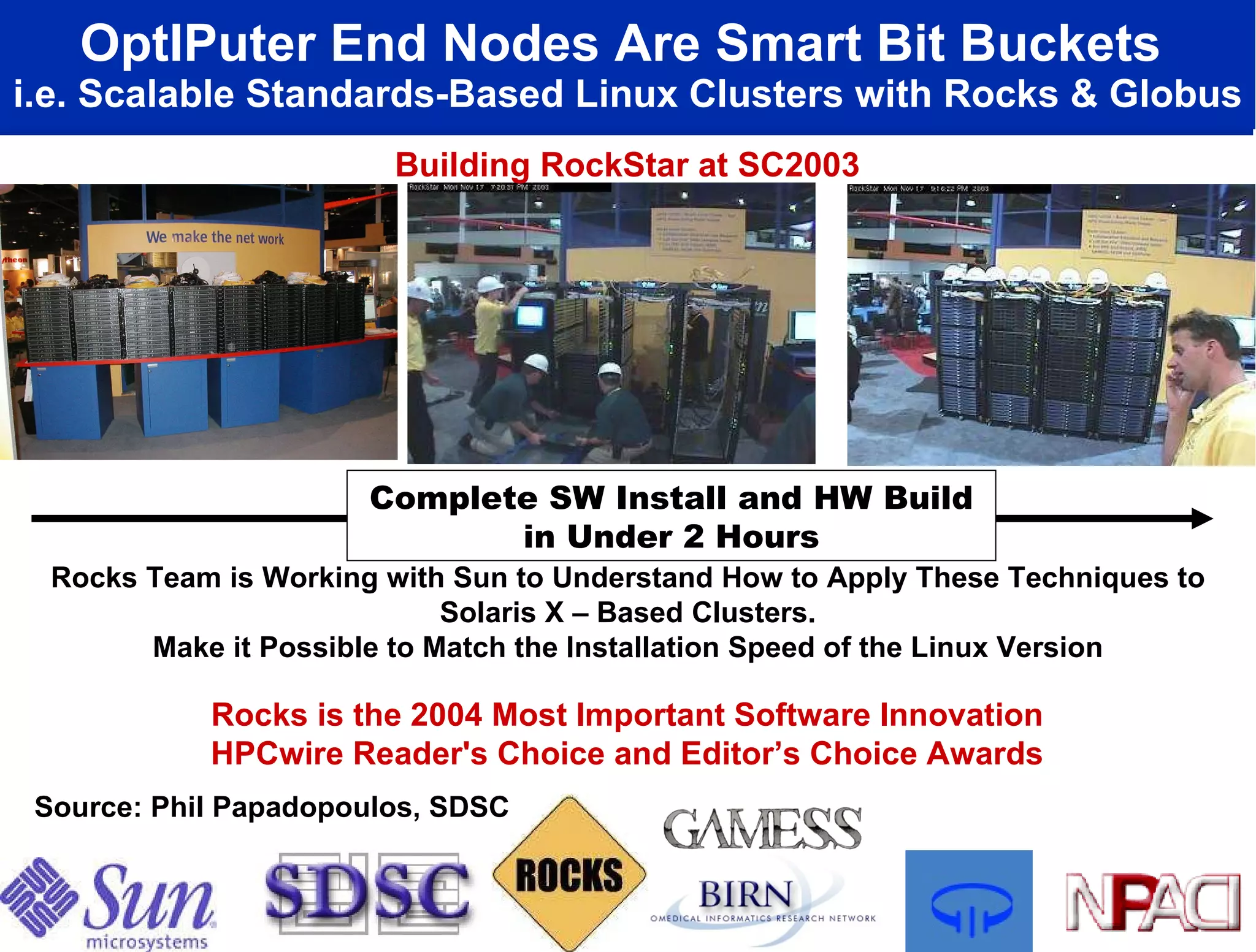

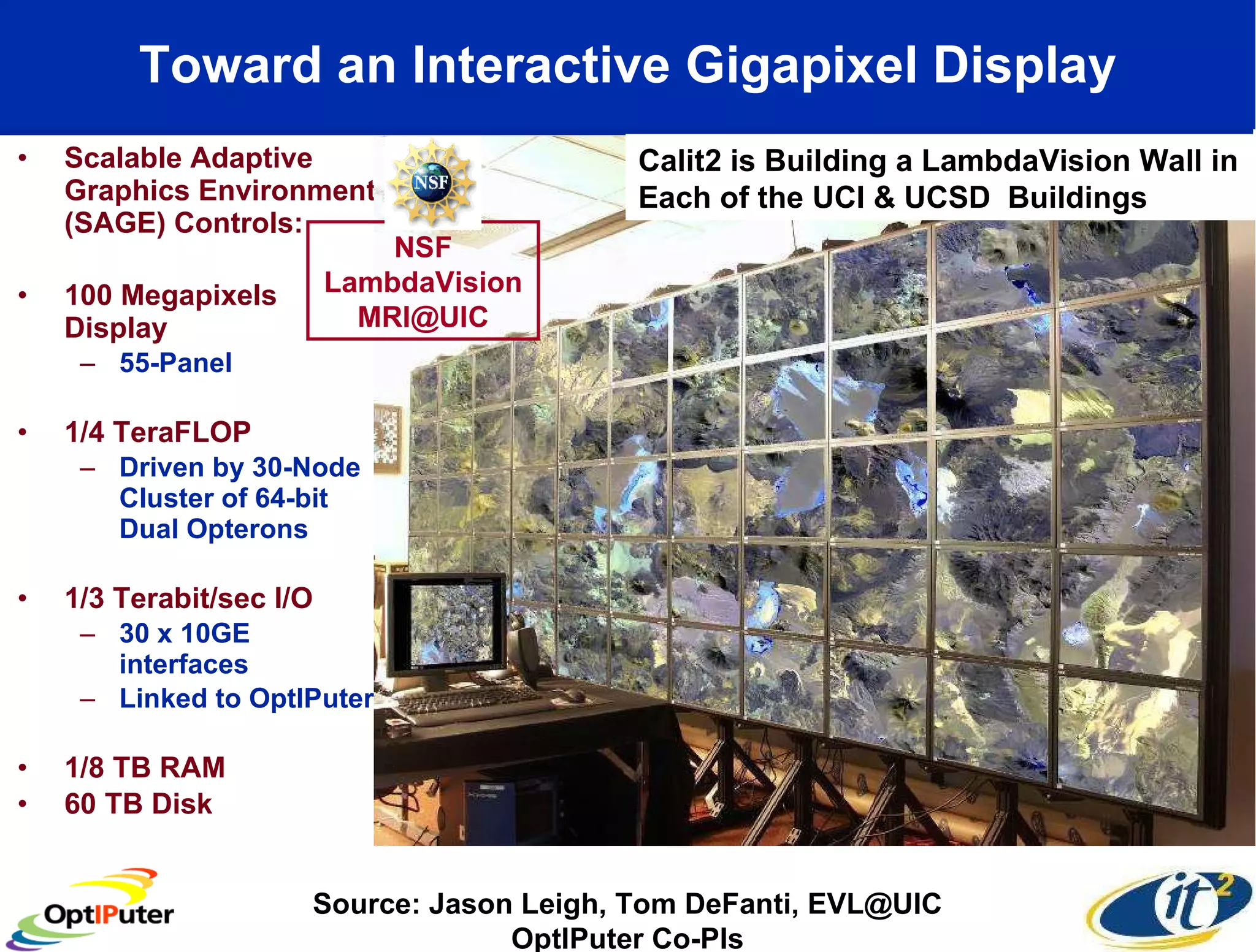

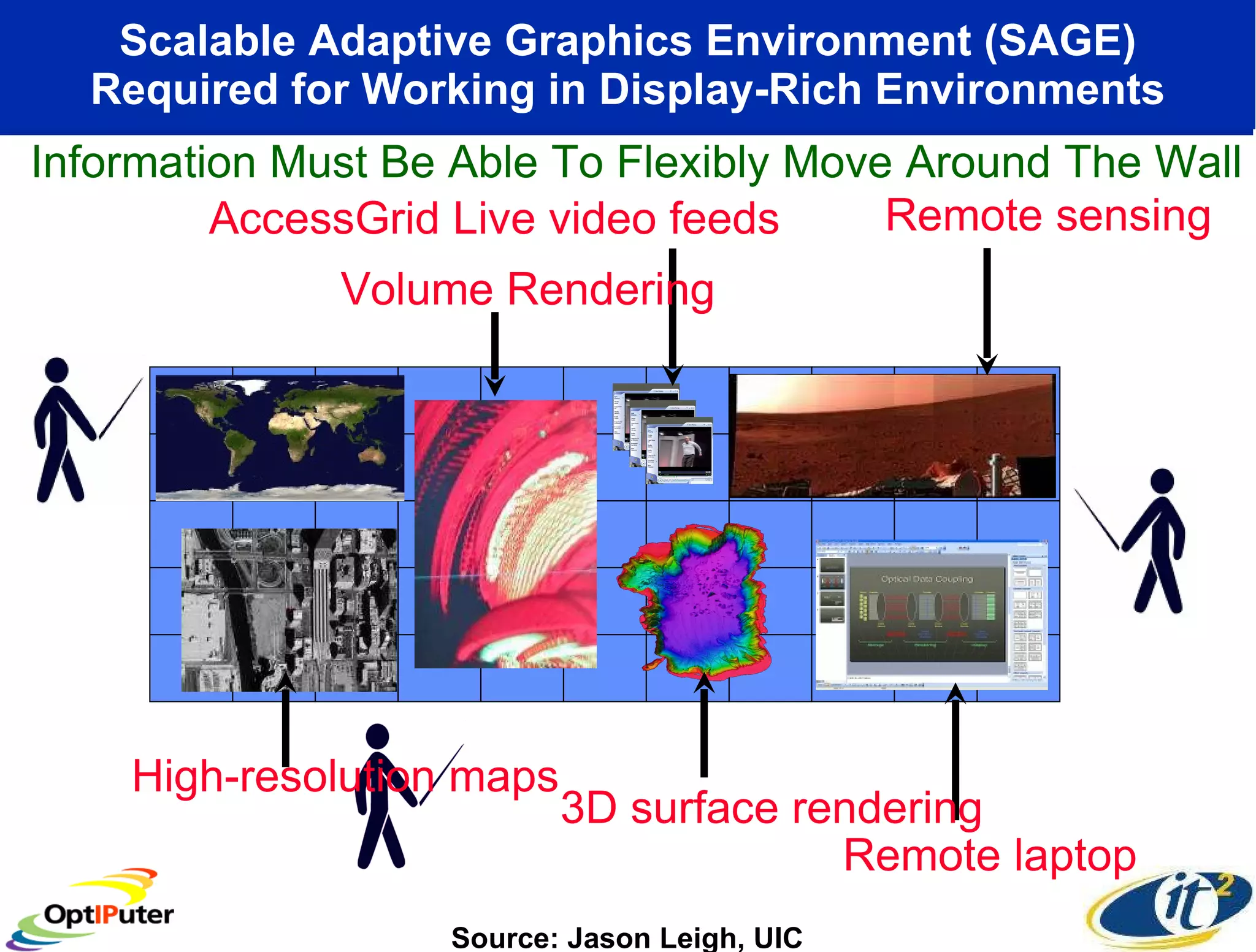

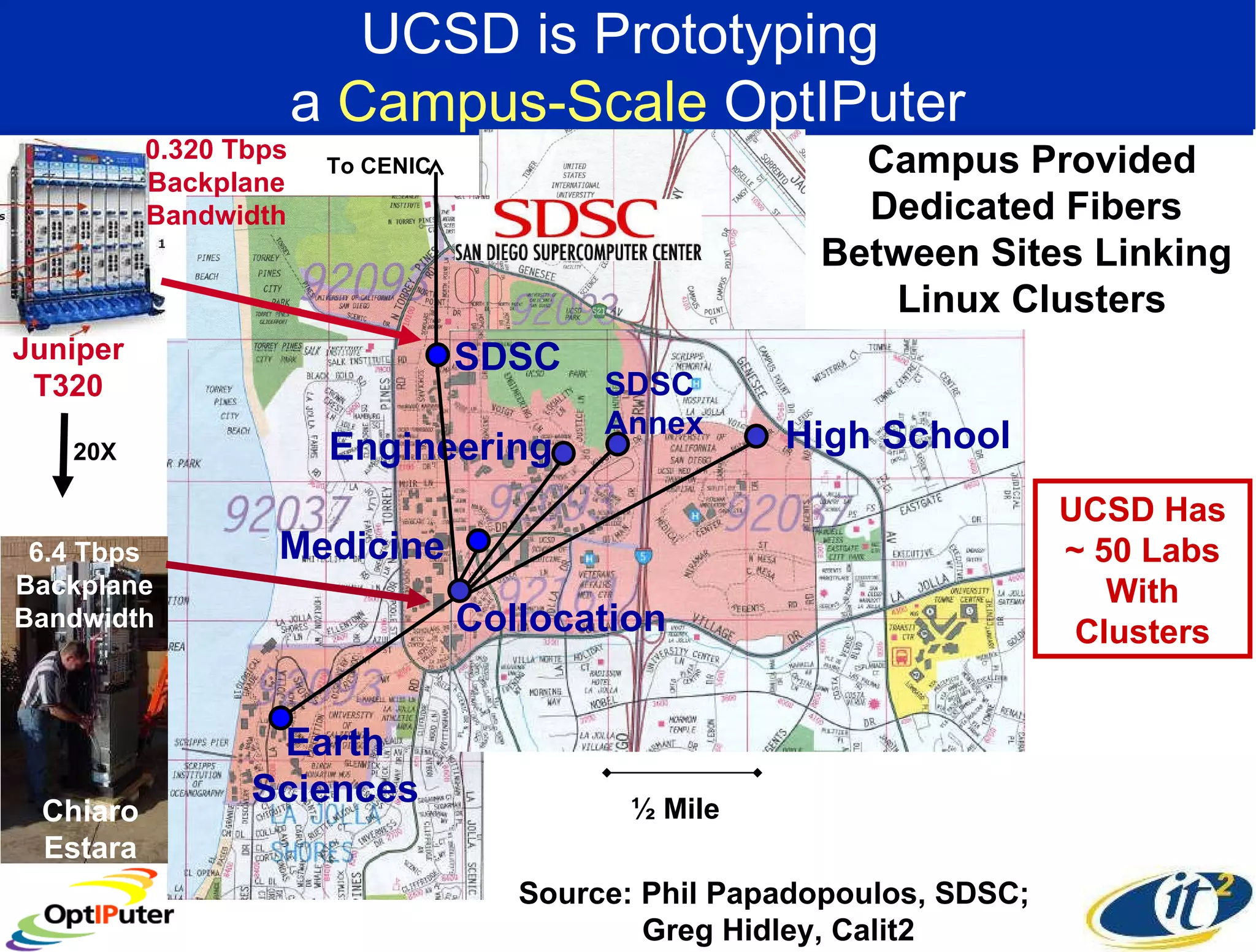

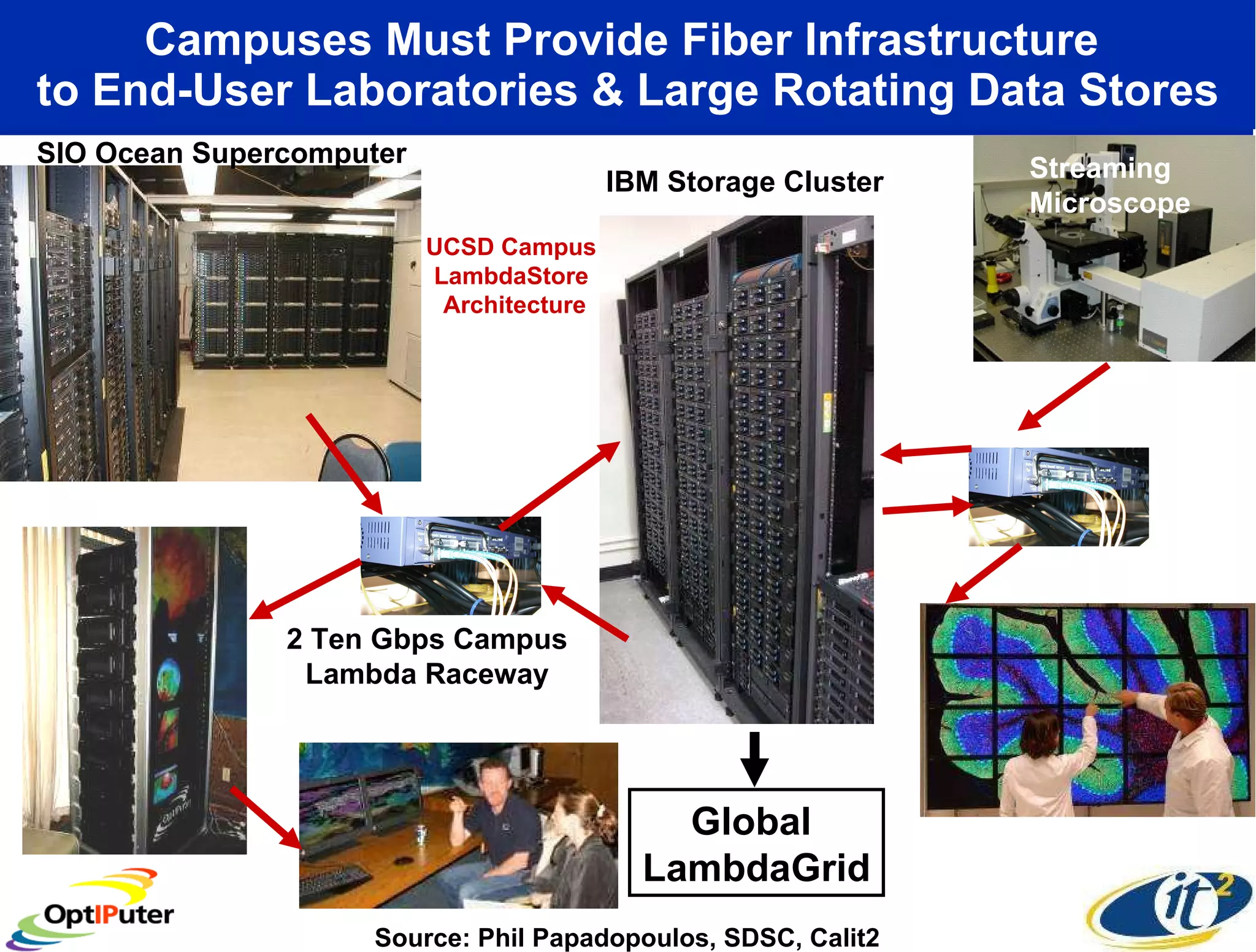

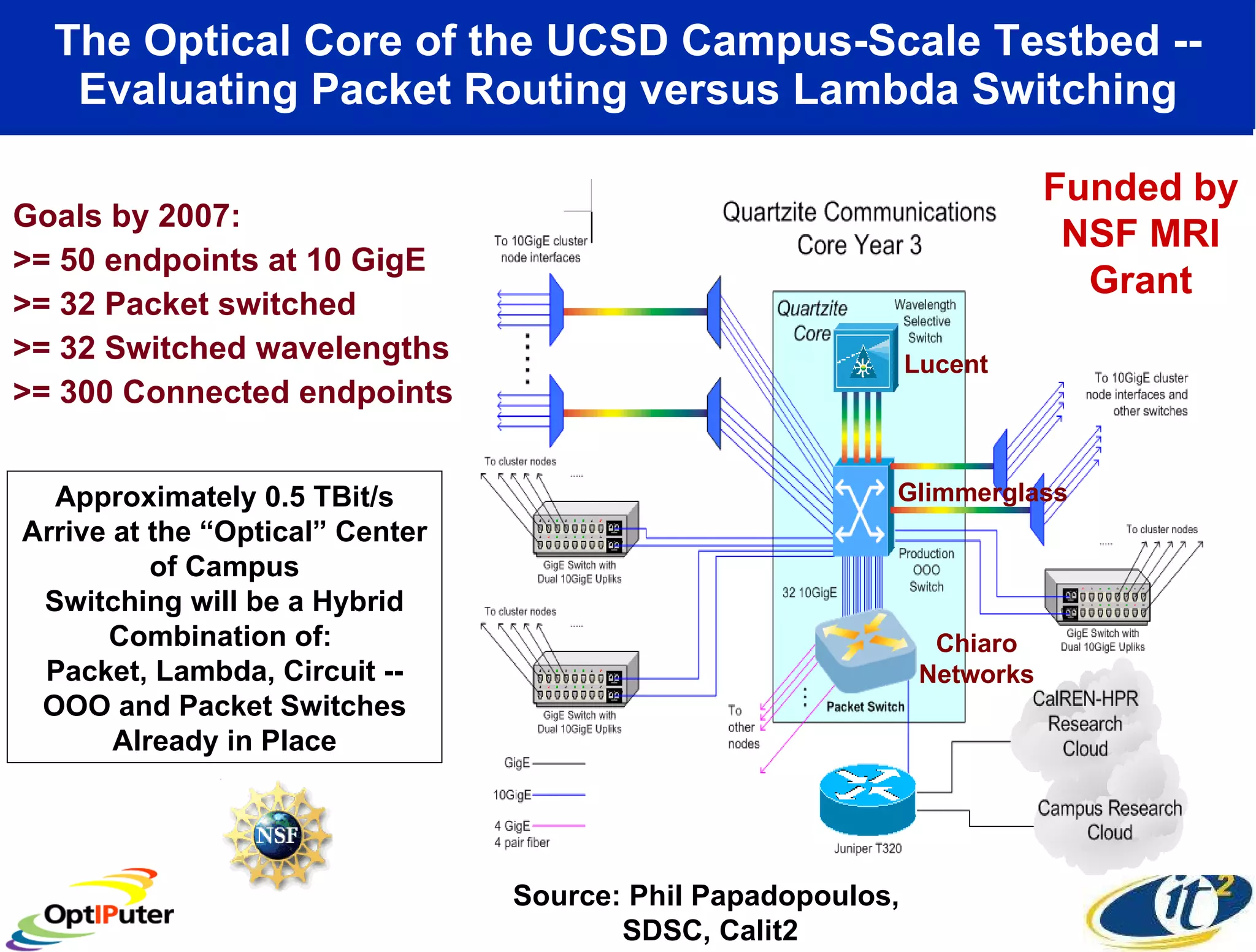

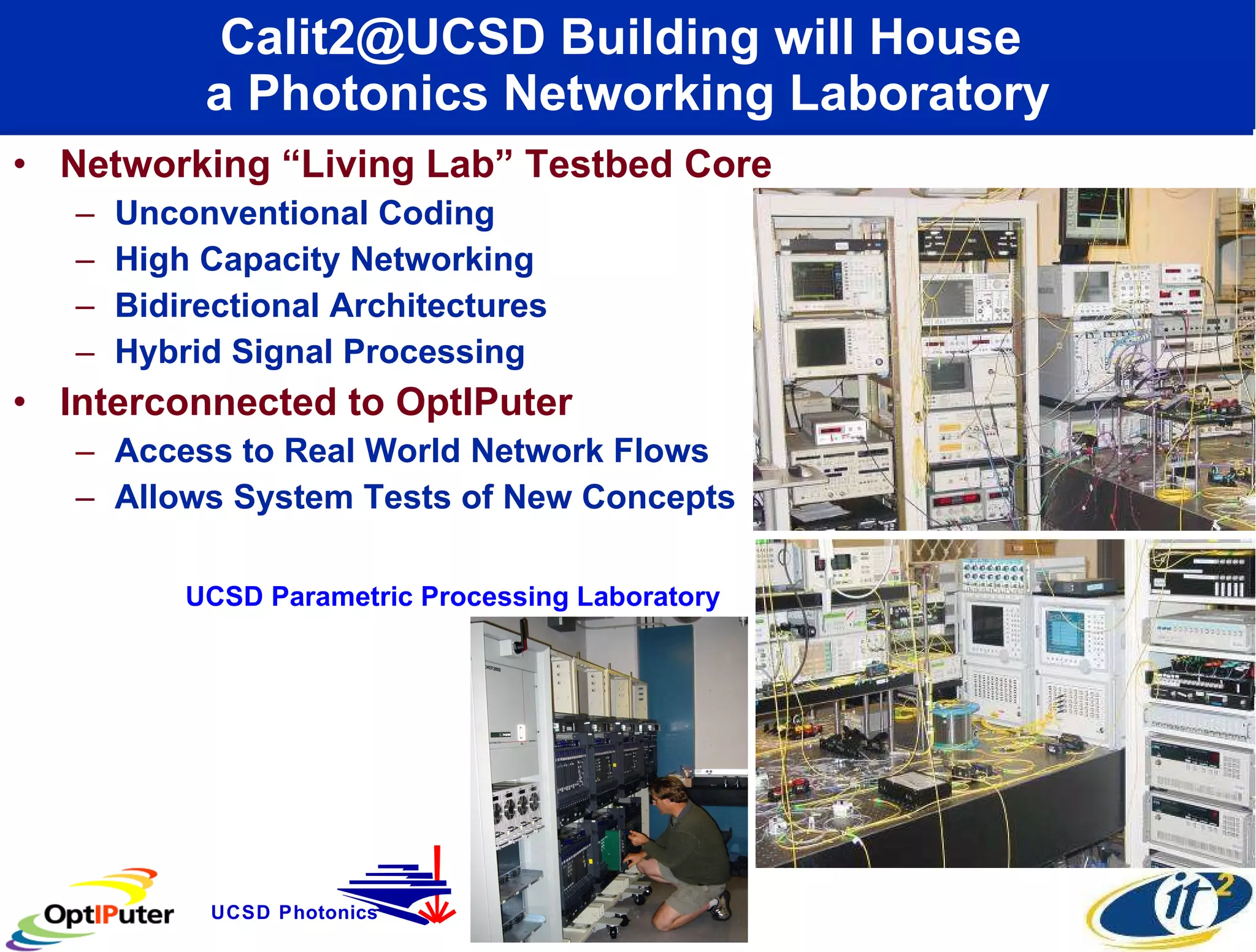

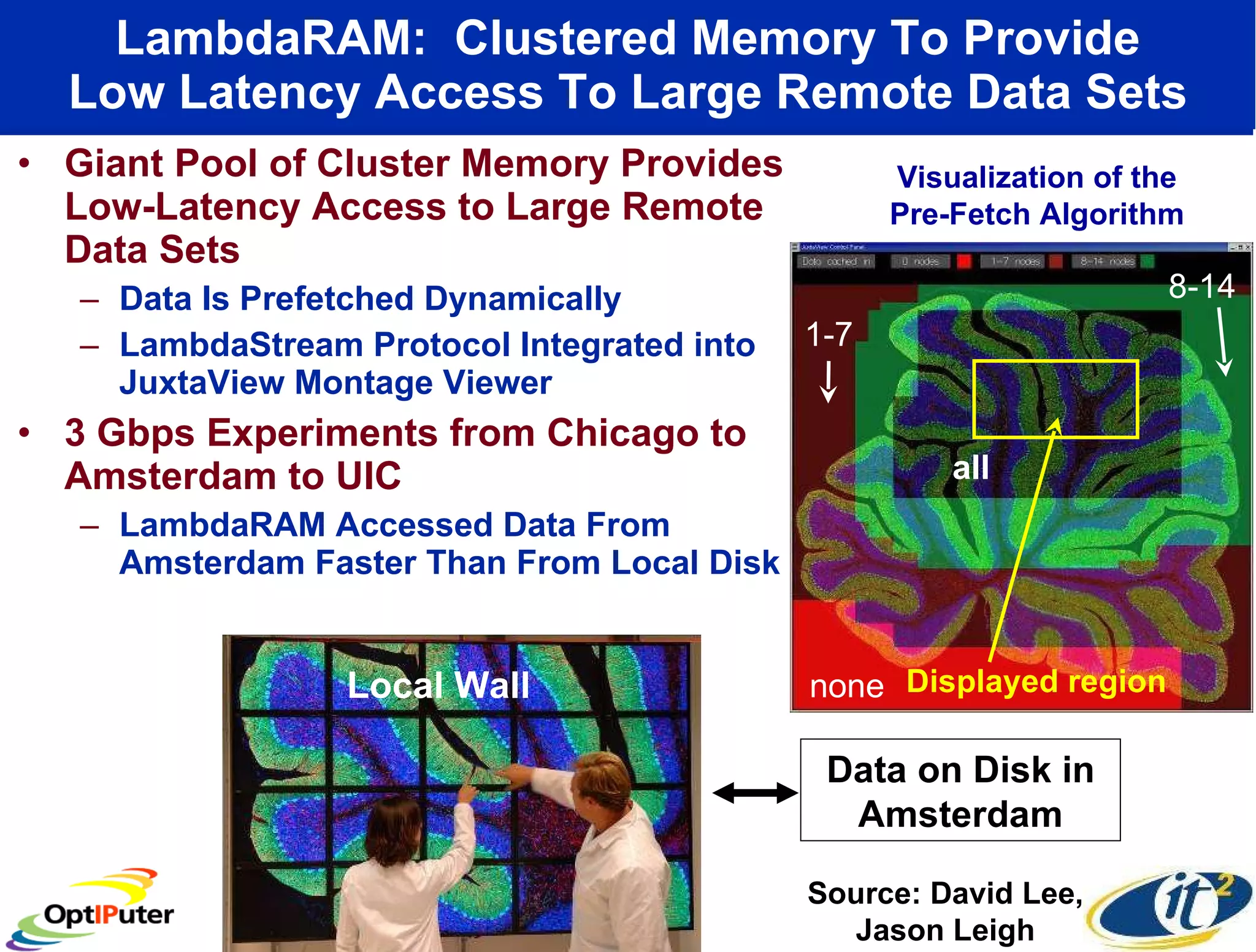

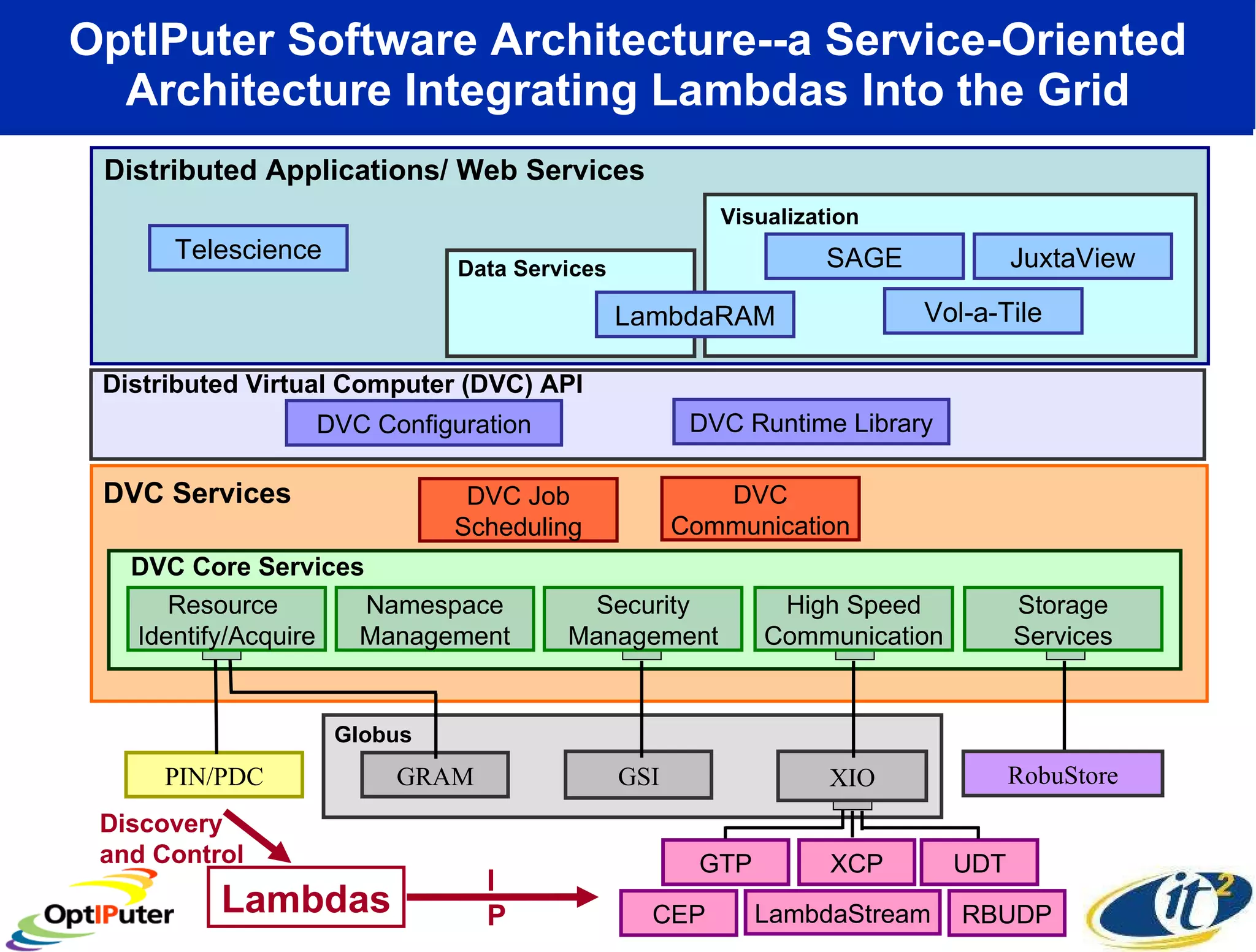

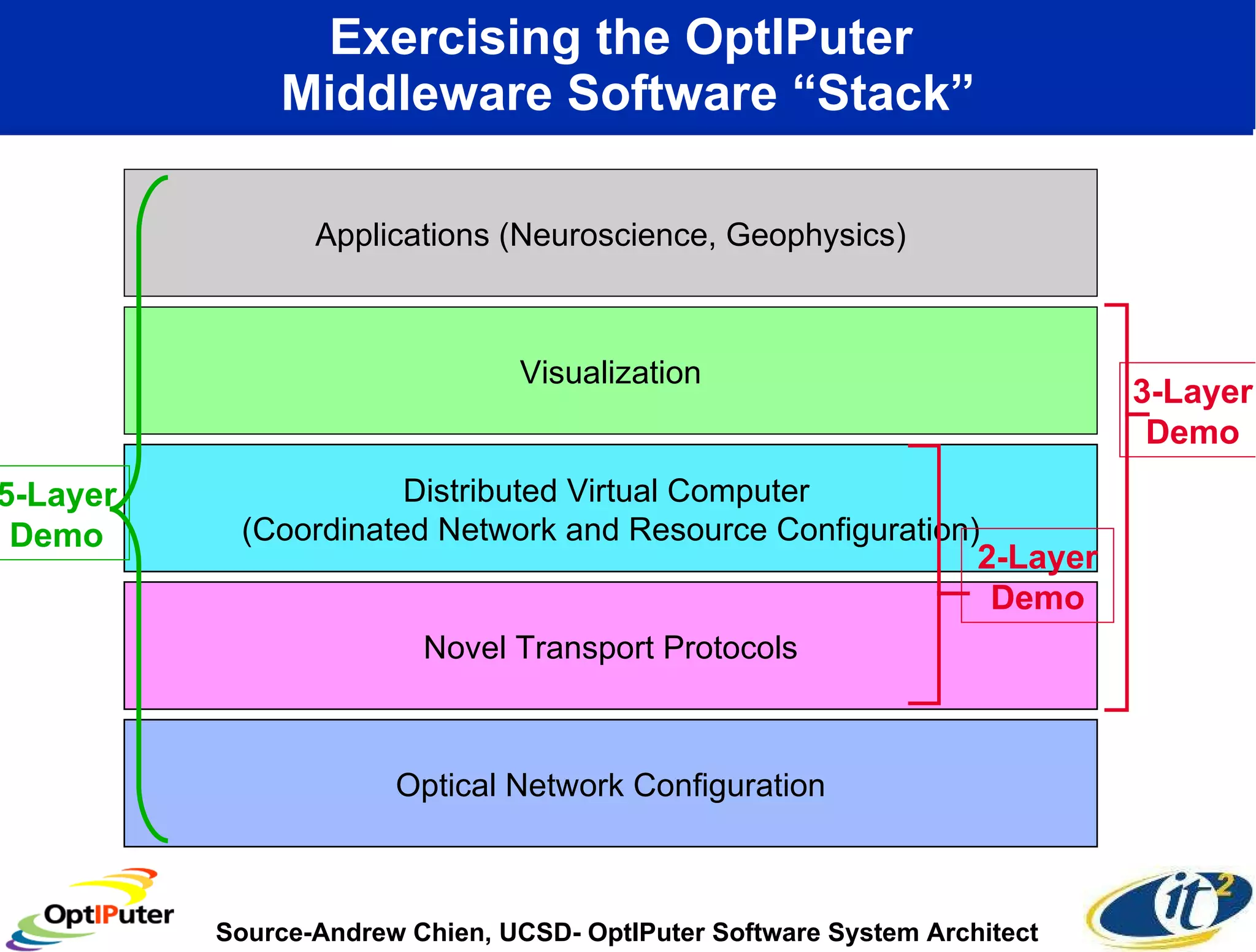

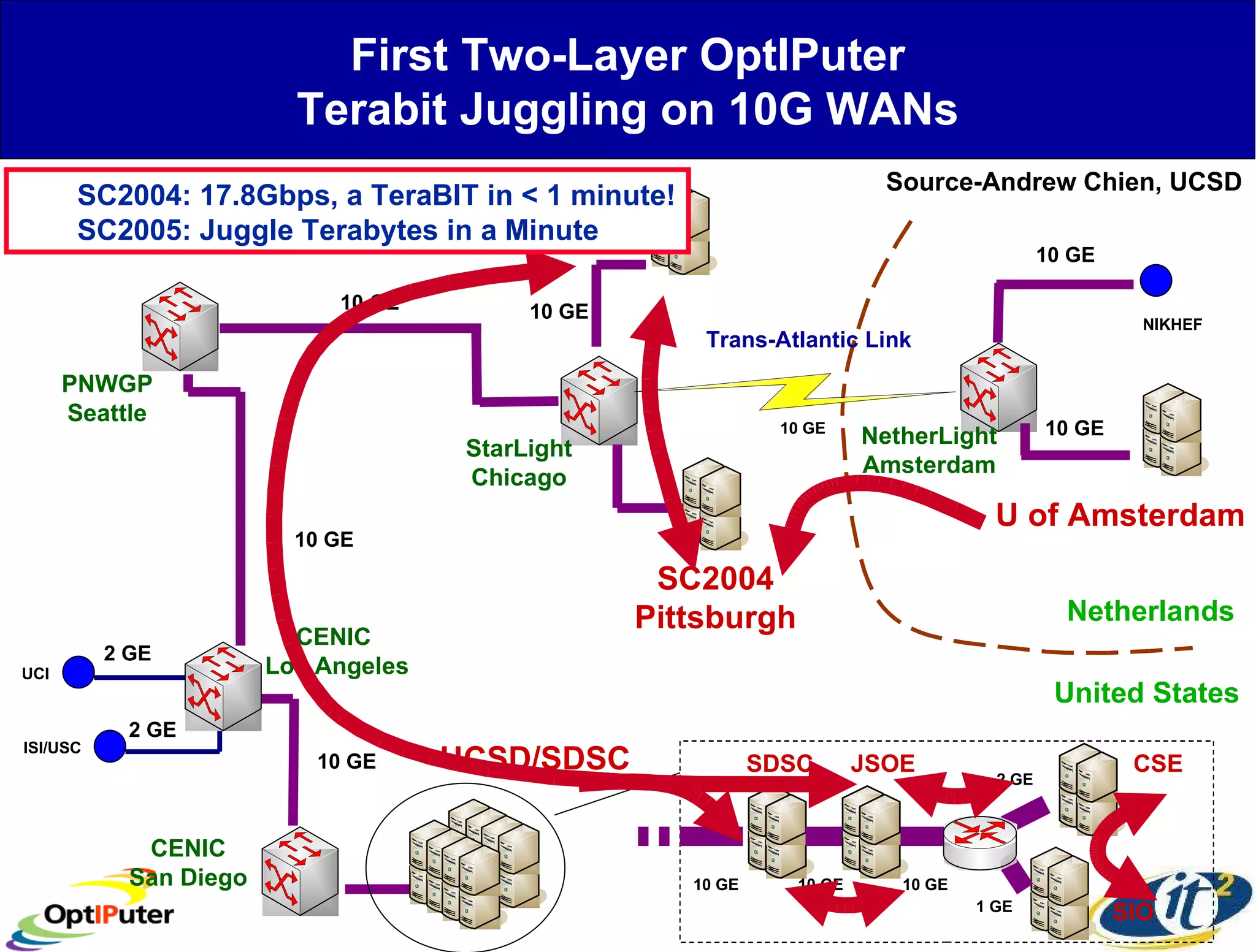

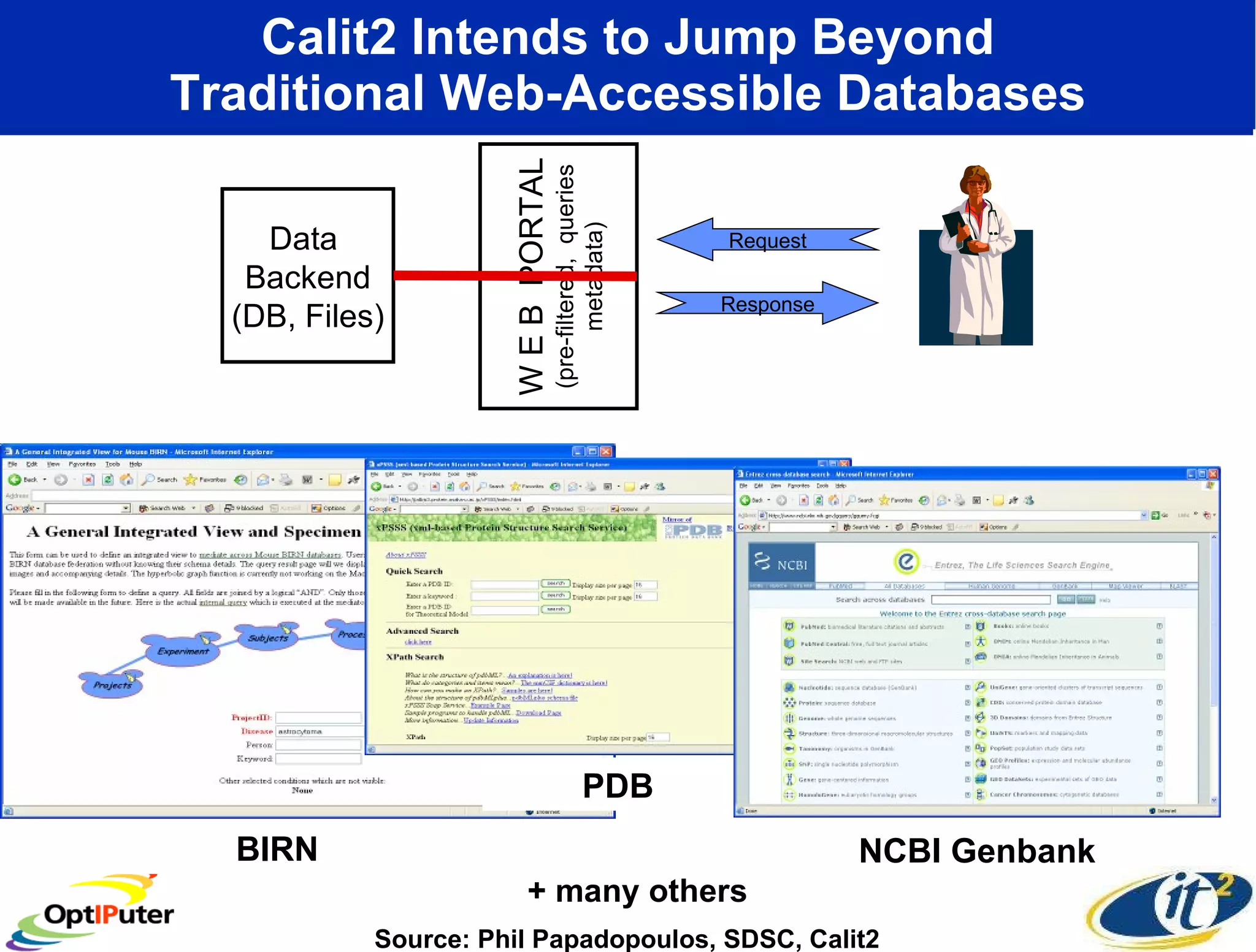

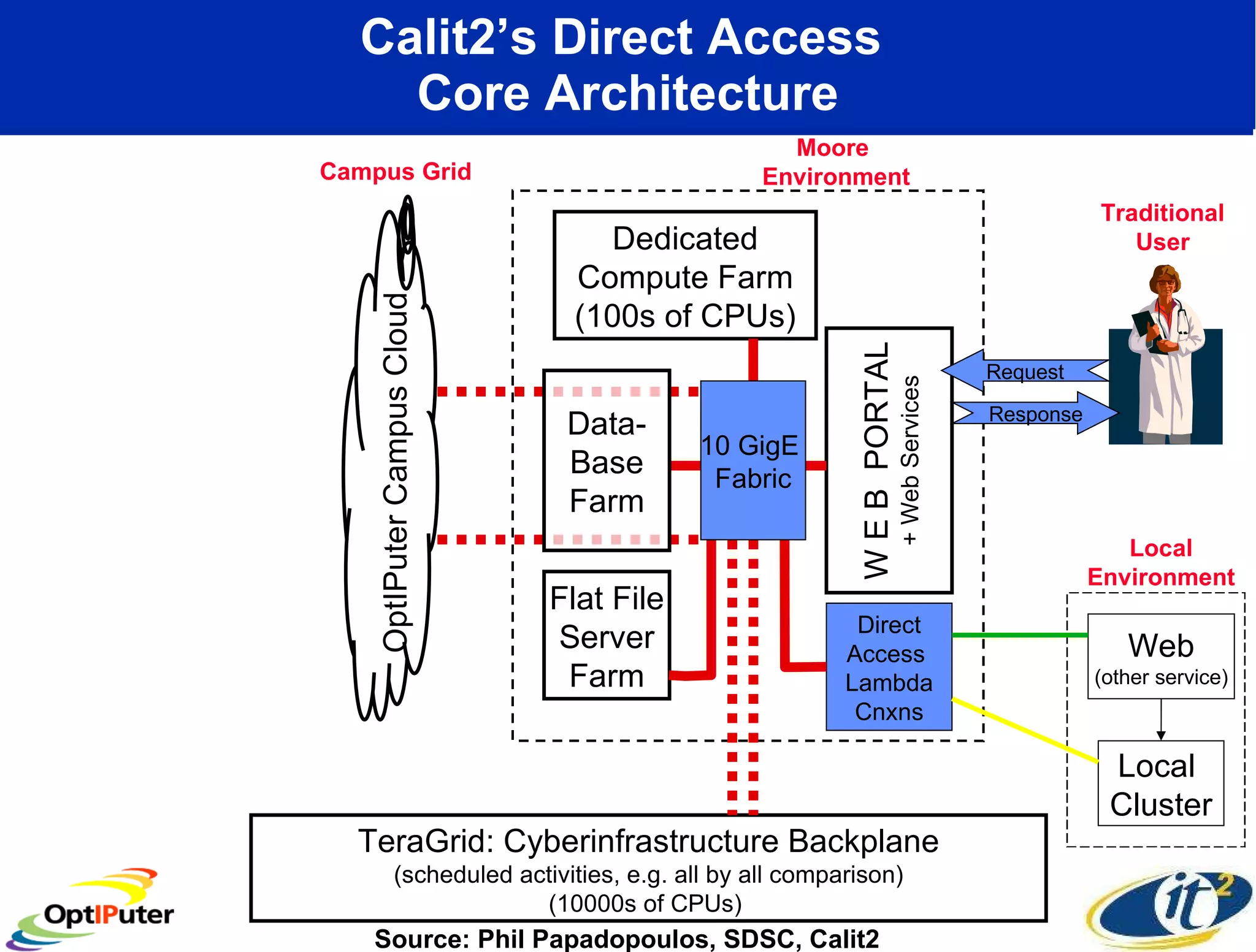

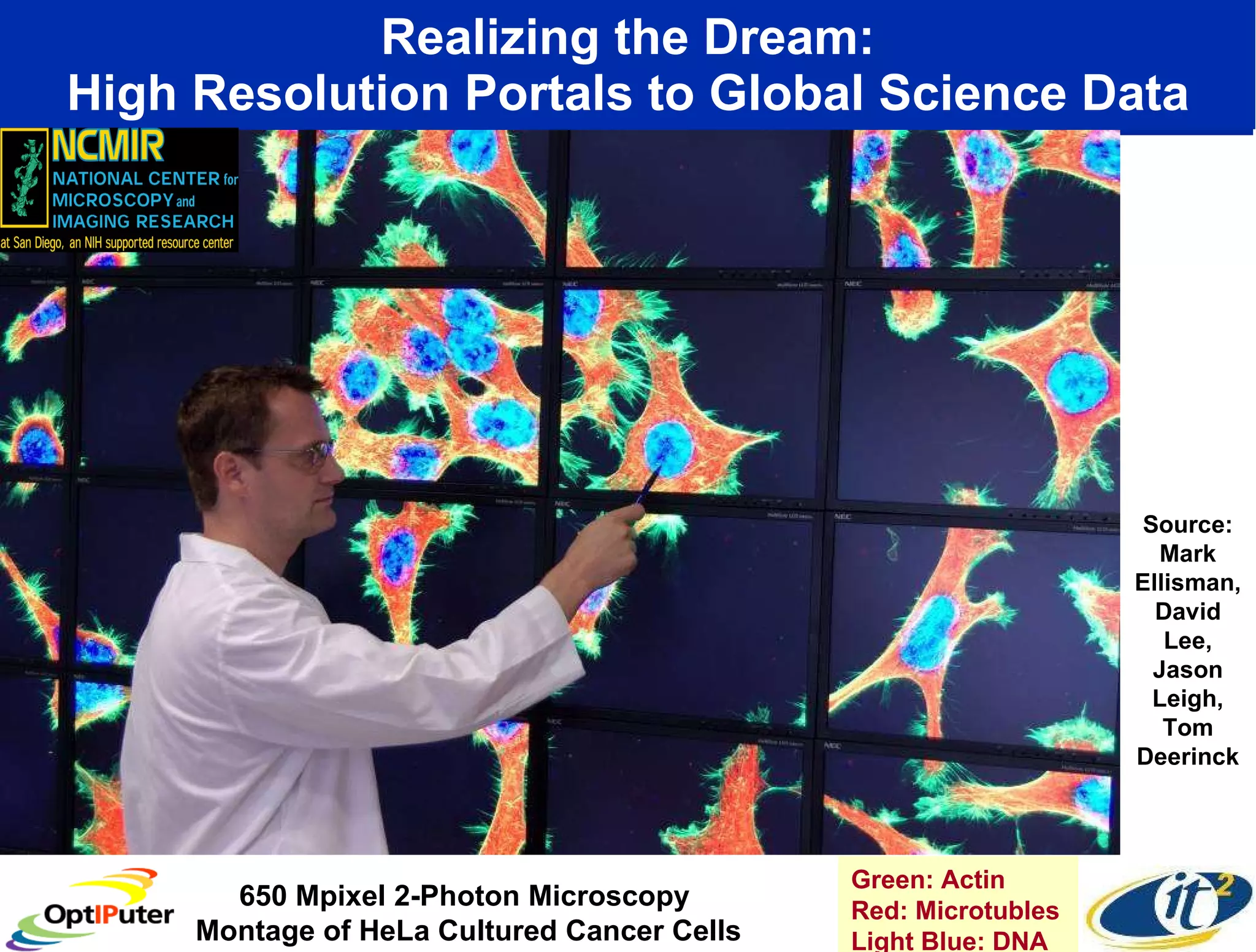

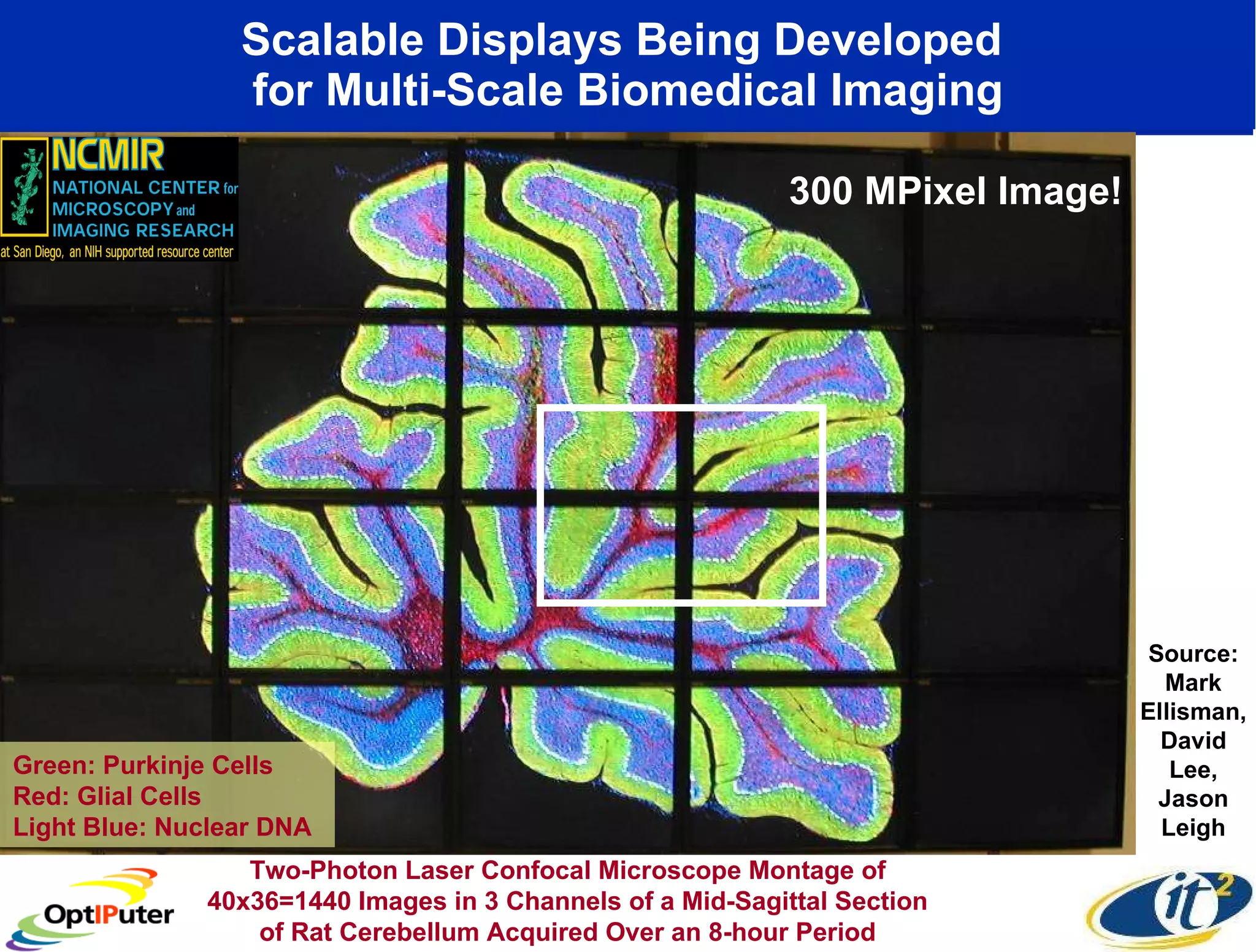

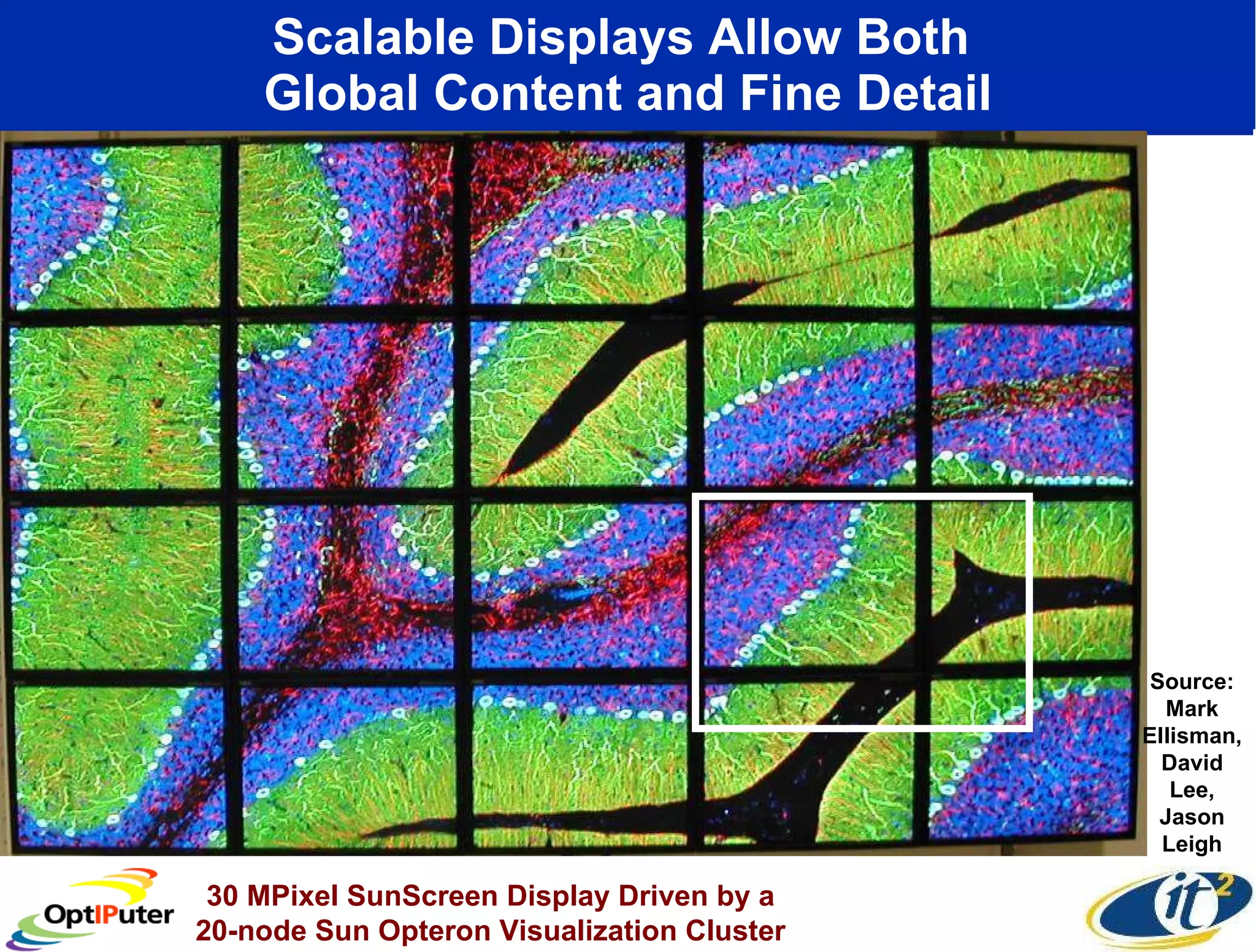

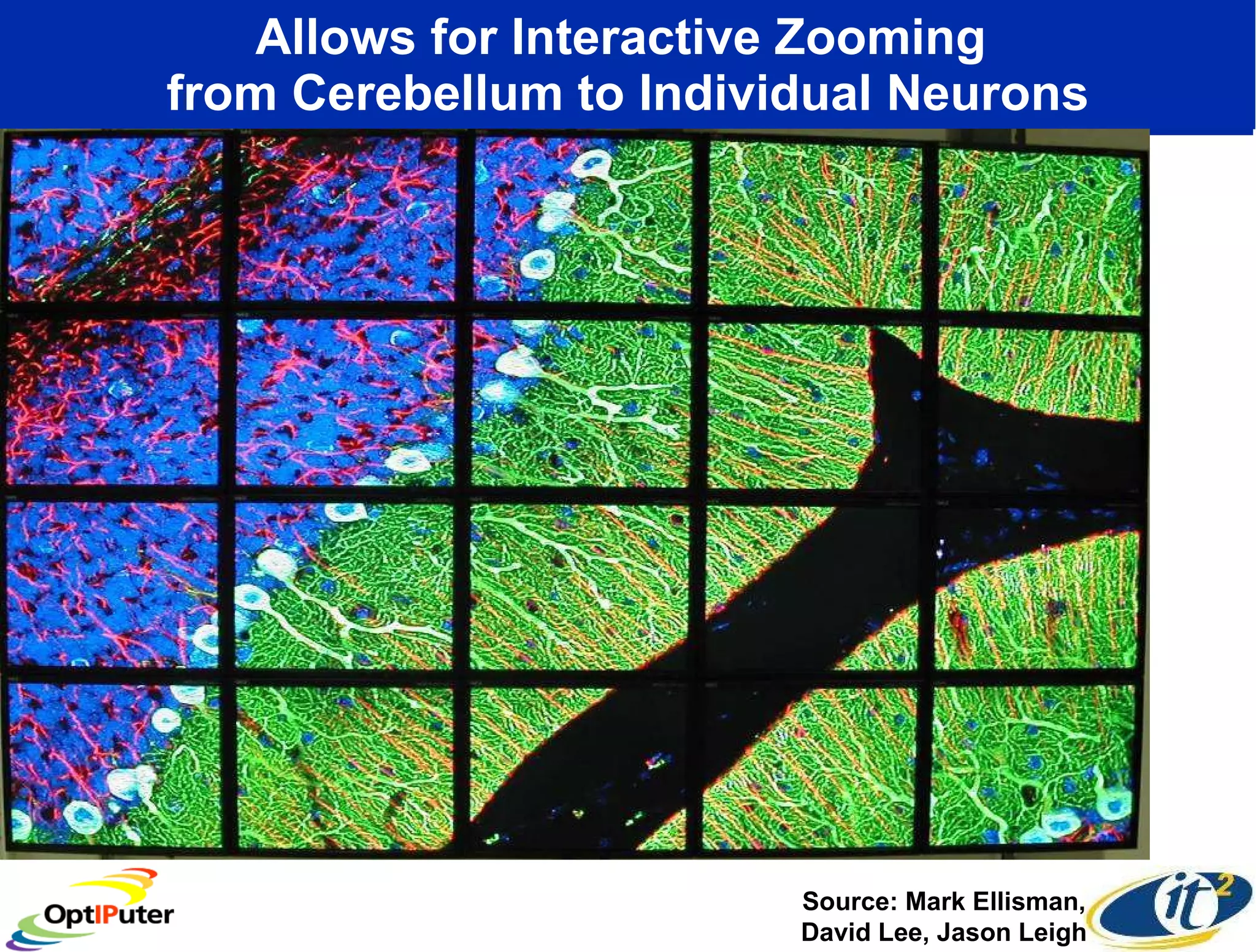

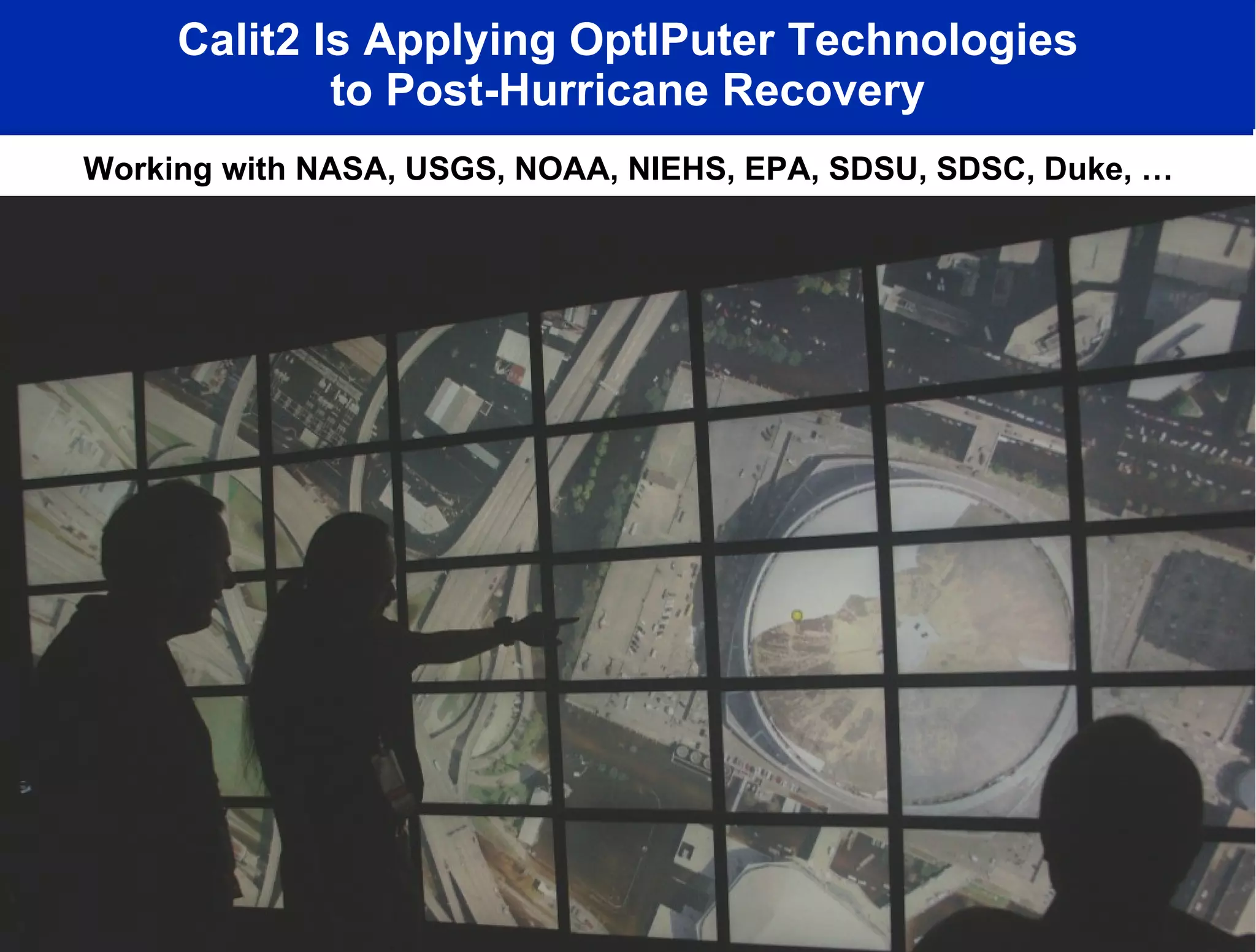

The document discusses the development of, and vision for, high-speed fiber-optic networks and collaborative computing, highlighting work at institutions such as the California Institute for Telecommunications and Information Technology. Key figures such as Dr. Larry Smarr and Nandan Nilekani emphasize eliminating distance as a barrier to remote interaction and building a global infrastructure that can support advanced computing needs. The document also details projects, technological advances, and collaborations that use these networks for scientific research and digital collaboration.

![We Are Living Through A Fundamental Global Change: How Can We Glimpse the Future? “[The Internet] has created a [global] platform where intellectual work, intellectual capital, could be delivered from anywhere. It could be disaggregated, delivered, distributed, produced, and put back together again… The playing field is being leveled.” Nandan Nilekani, CEO Infosys (Bangalore, India)](https://image.slidesharecdn.com/ornloct2005-final-small-100112171005-phpapp02/75/Blowing-up-the-Box-the-Emergence-of-the-Planetary-Computer-7-2048.jpg)

![“Infosys’s Global Conferencing Center: Ground Zero for the Indian Outsourcing Industry.” “So this is our conference room, probably the largest screen in Asia: this is forty digital screens [put together]. We could be sitting here [in Bangalore] with somebody from New York, London, Boston, San Francisco, all live. …That’s globalization.” Nandan Nilekani, CEO Infosys](https://image.slidesharecdn.com/ornloct2005-final-small-100112171005-phpapp02/75/Blowing-up-the-Box-the-Emergence-of-the-Planetary-Computer-43-2048.jpg)