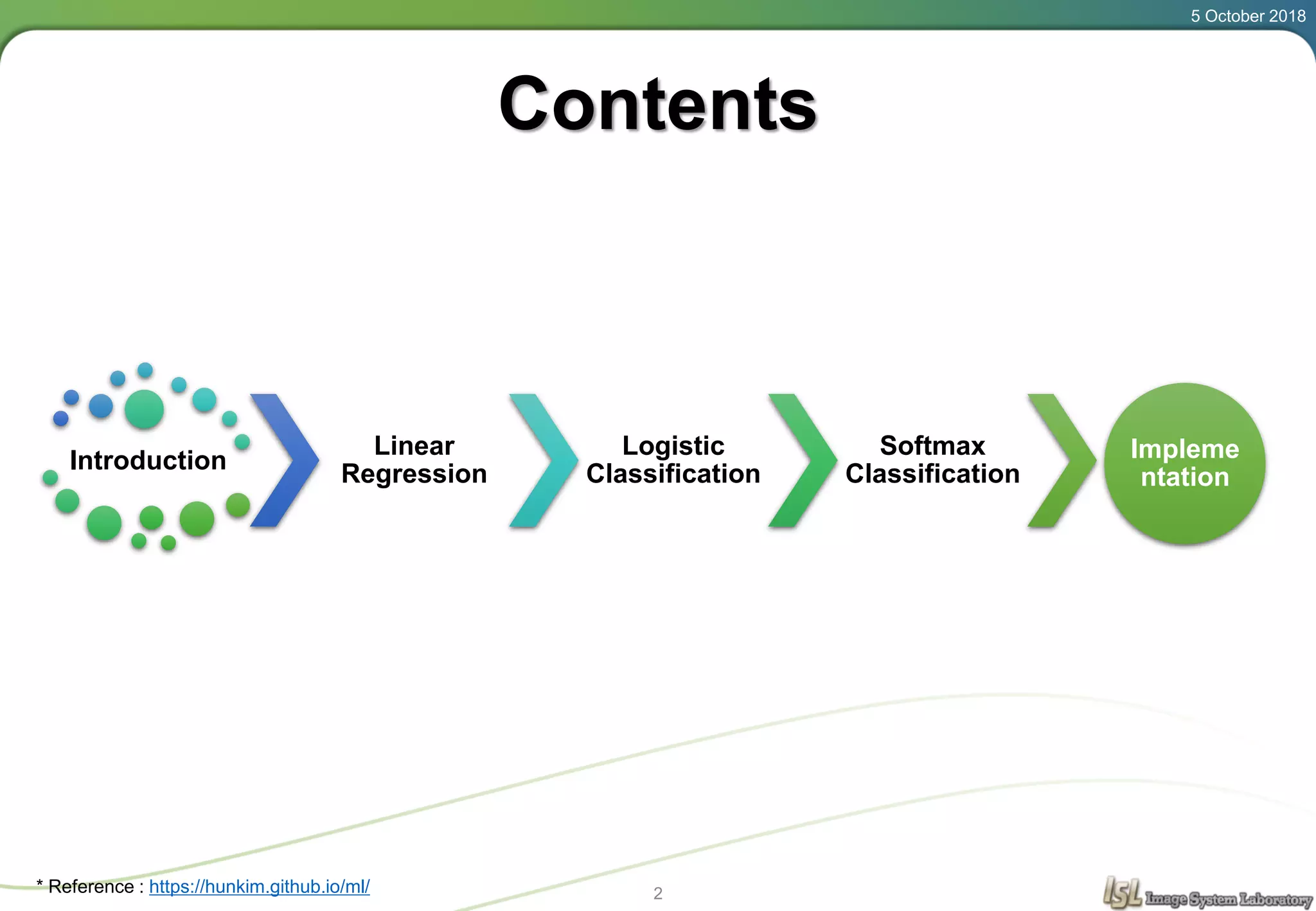

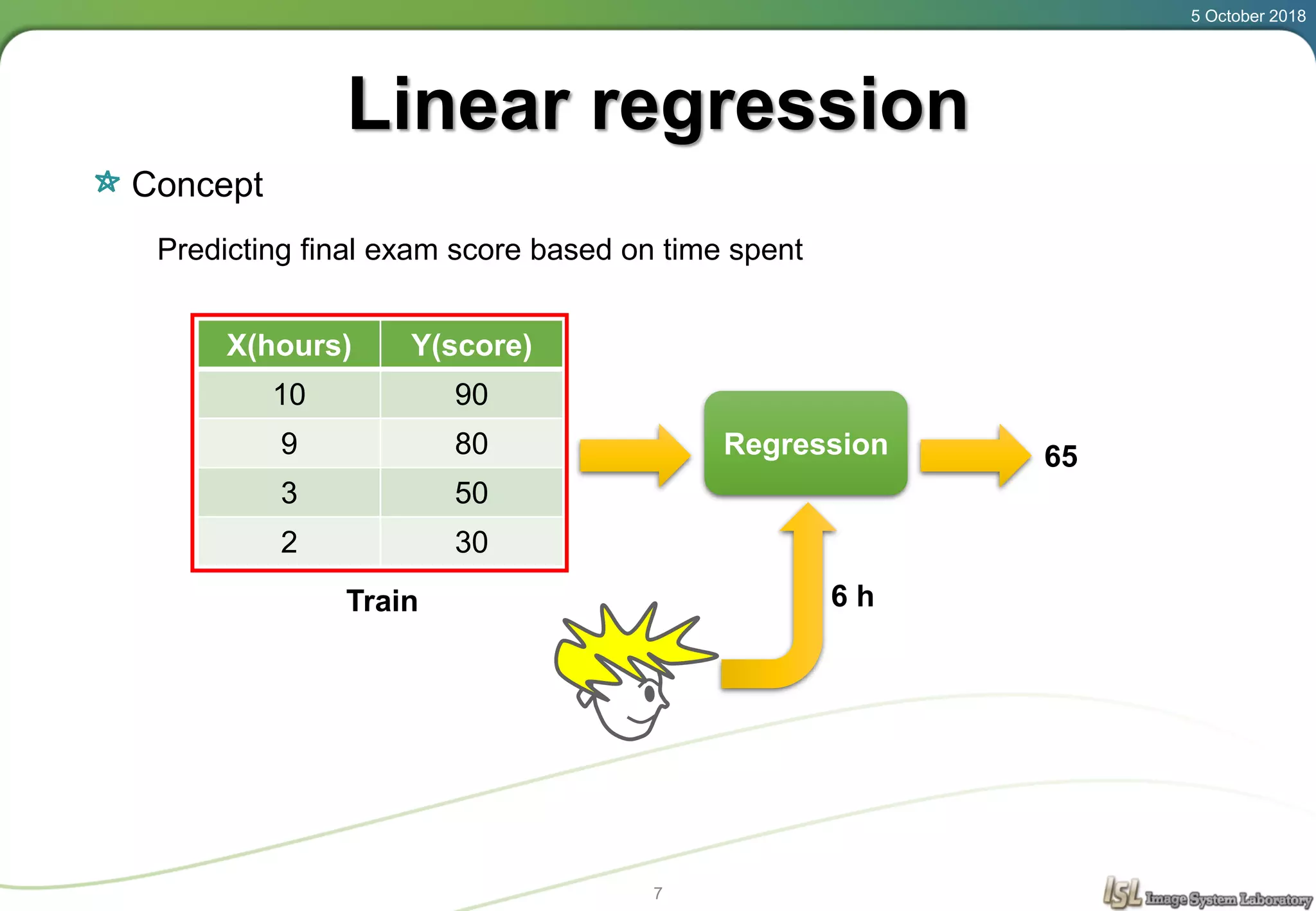

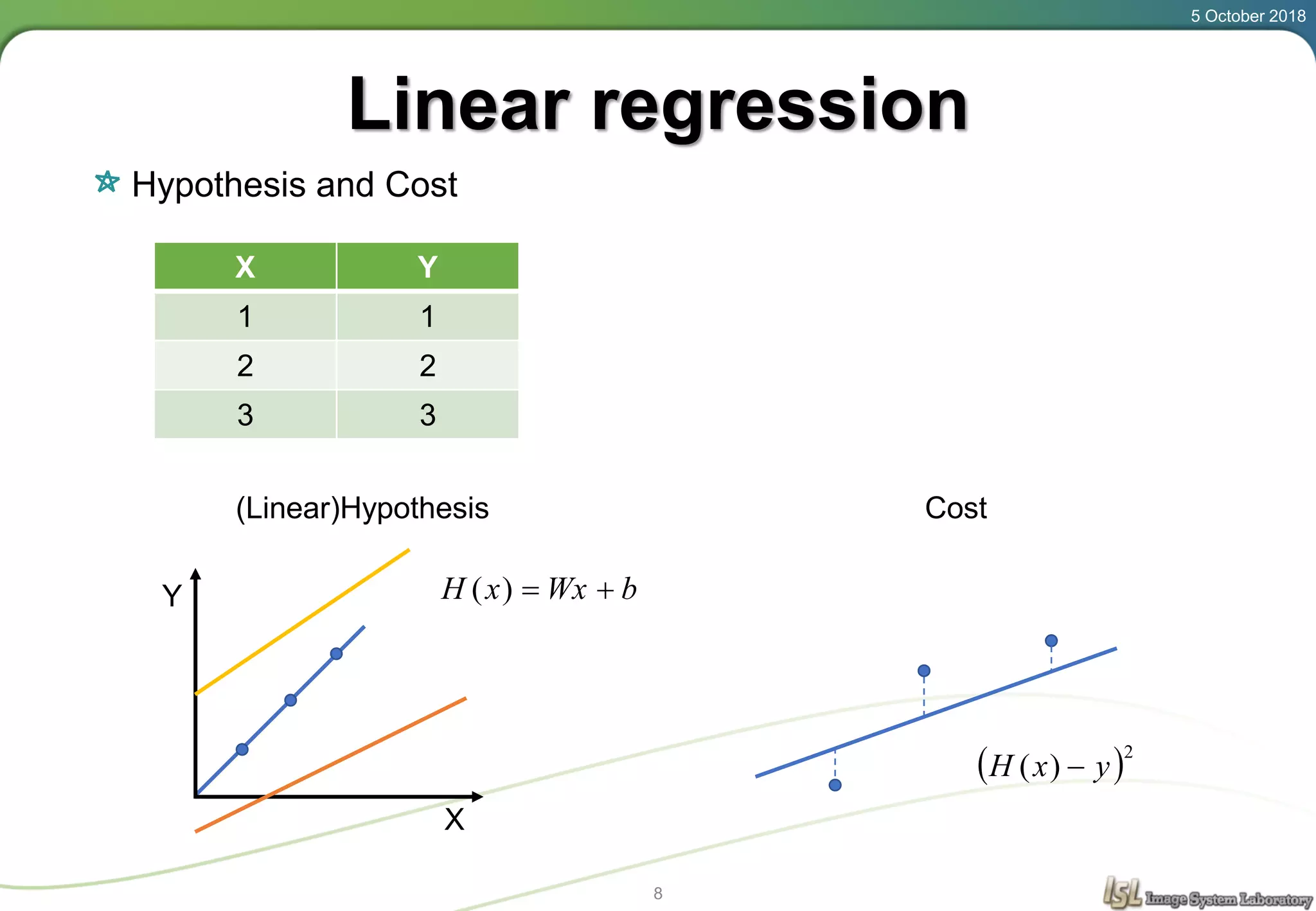

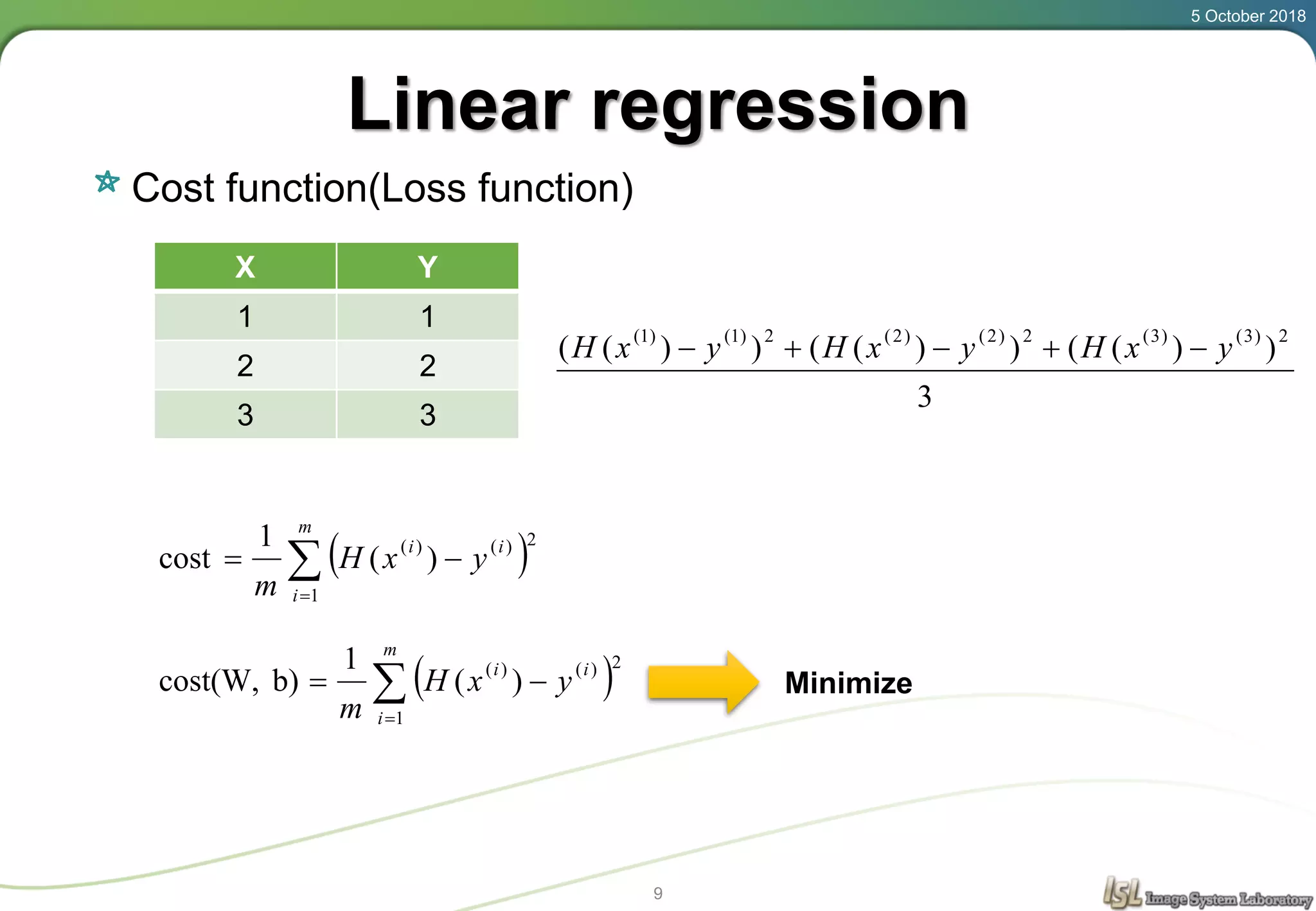

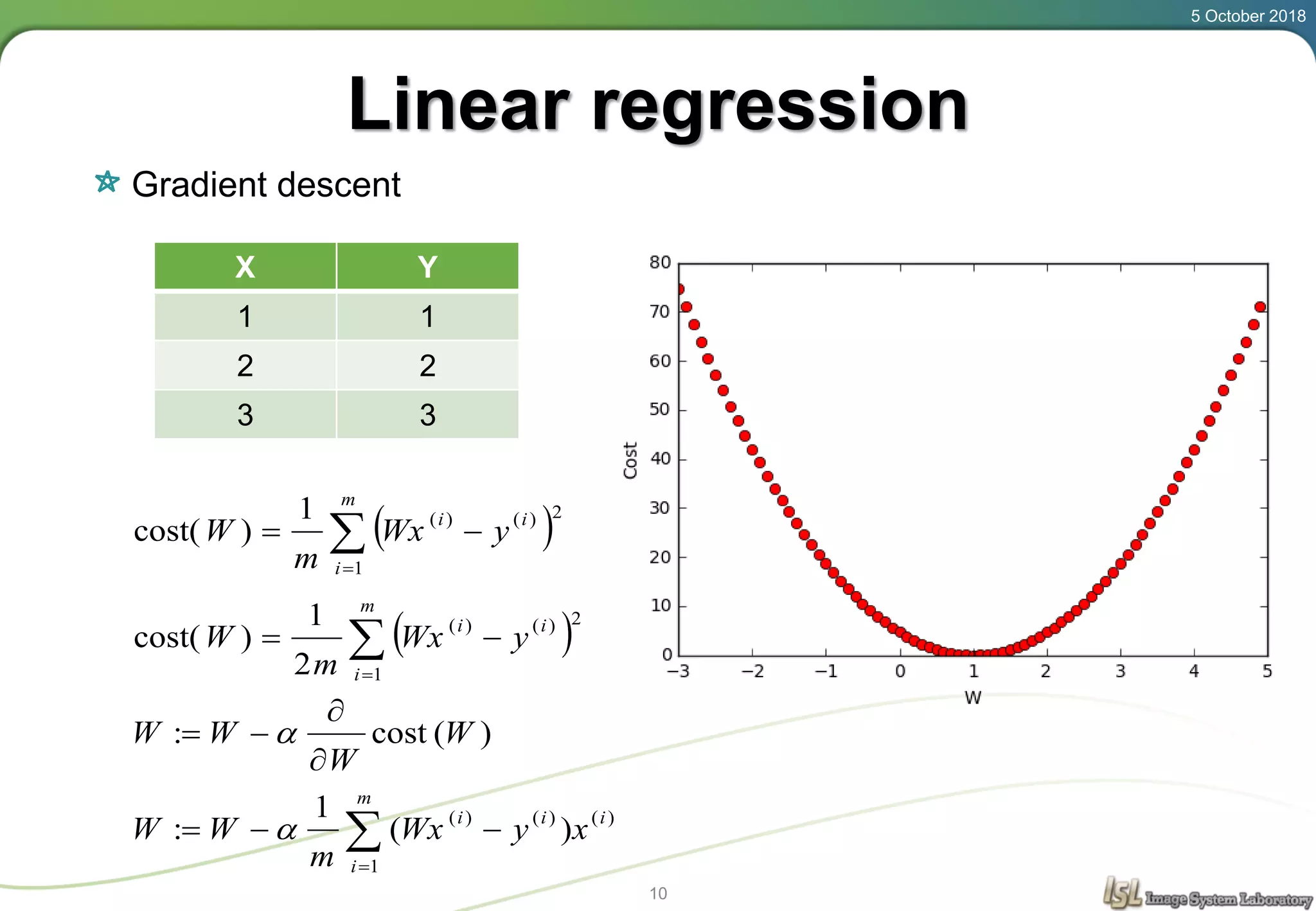

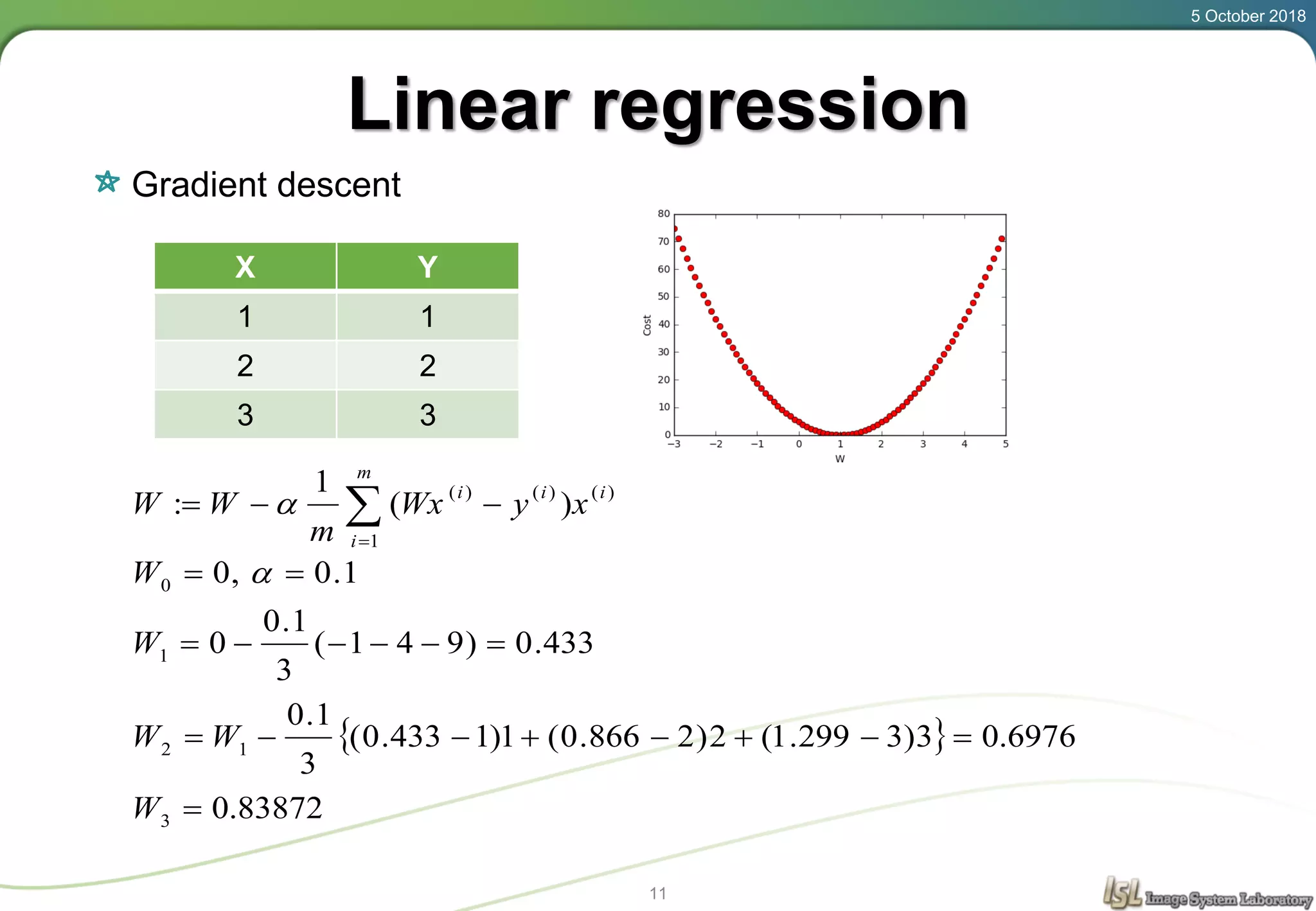

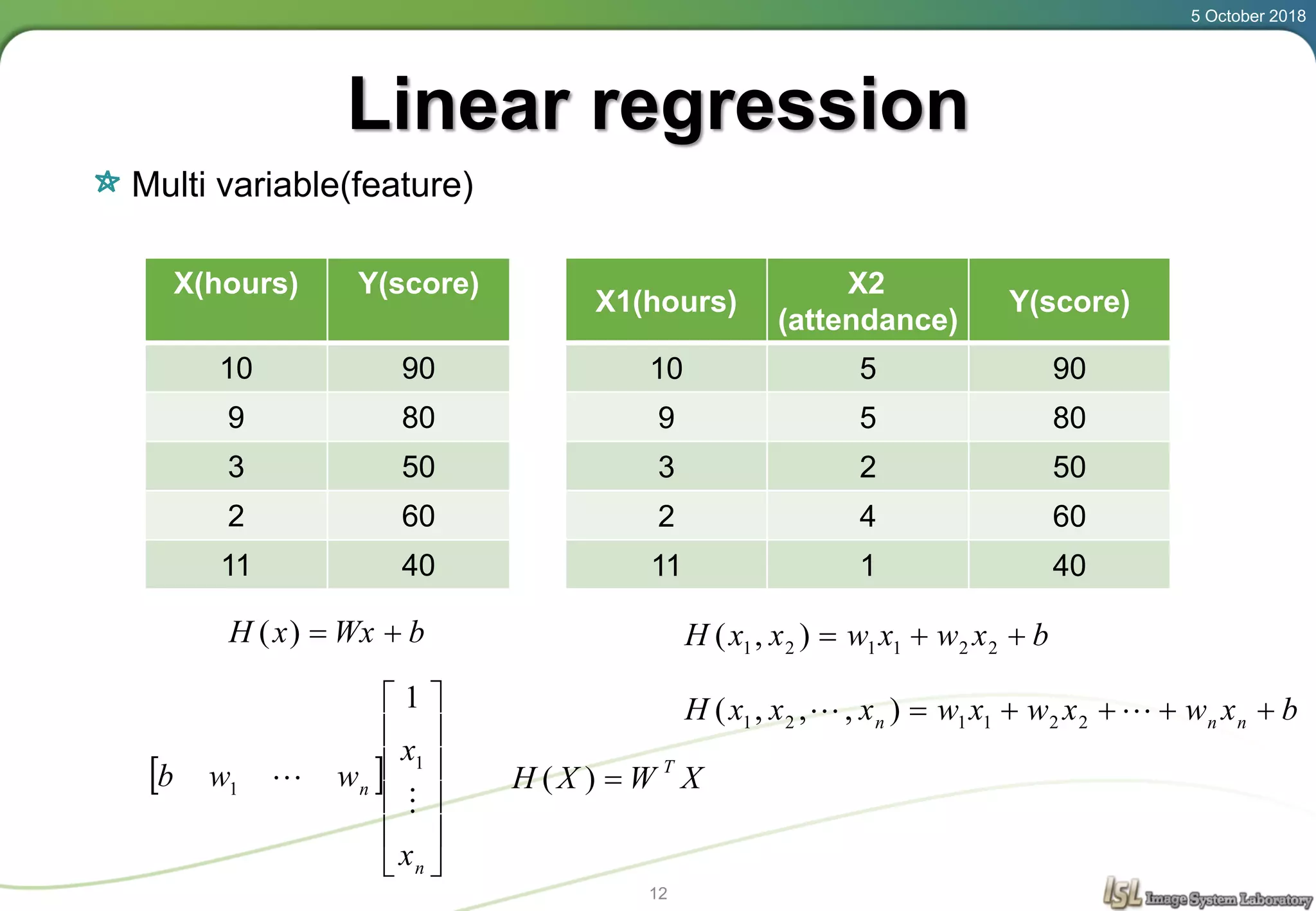

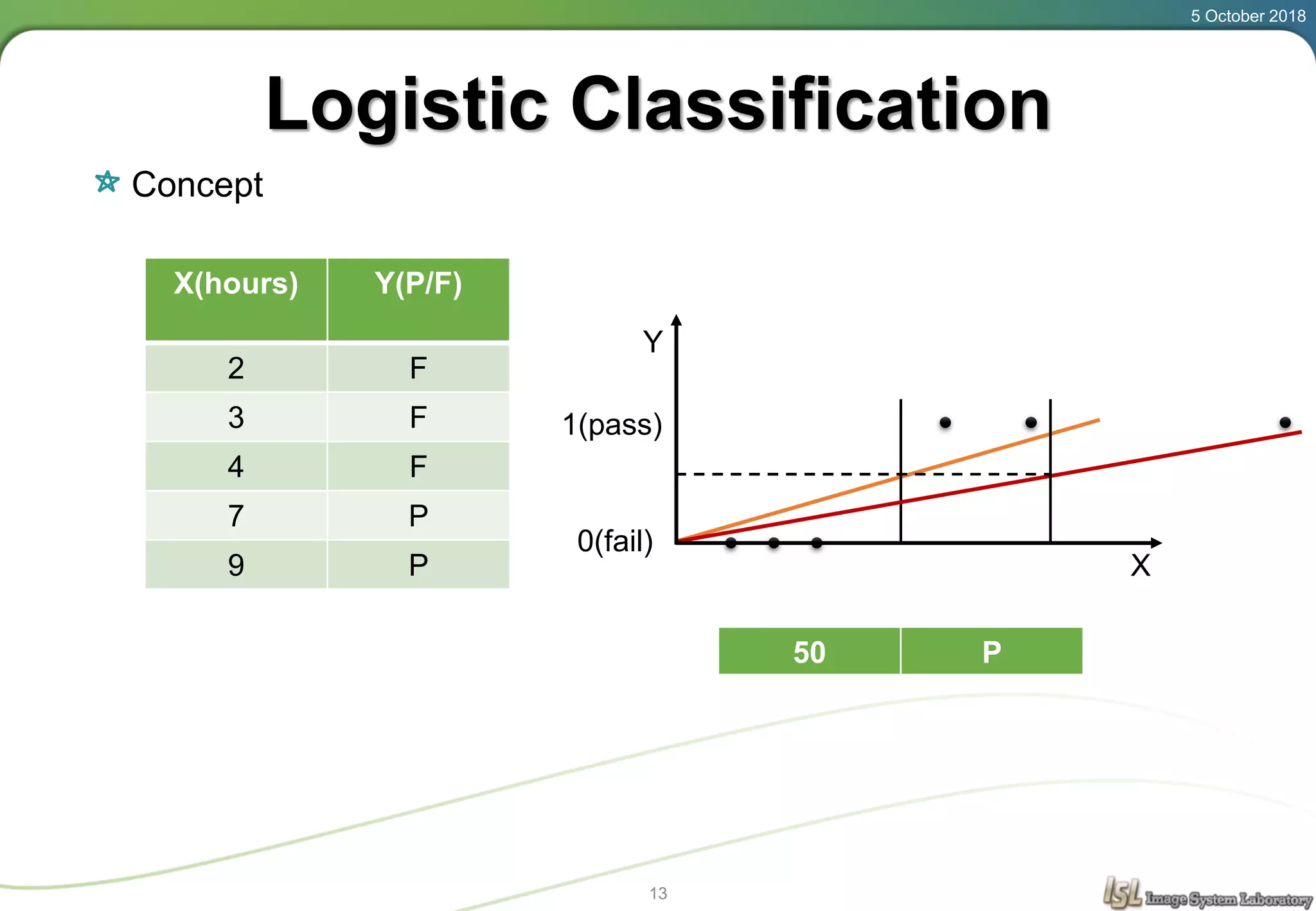

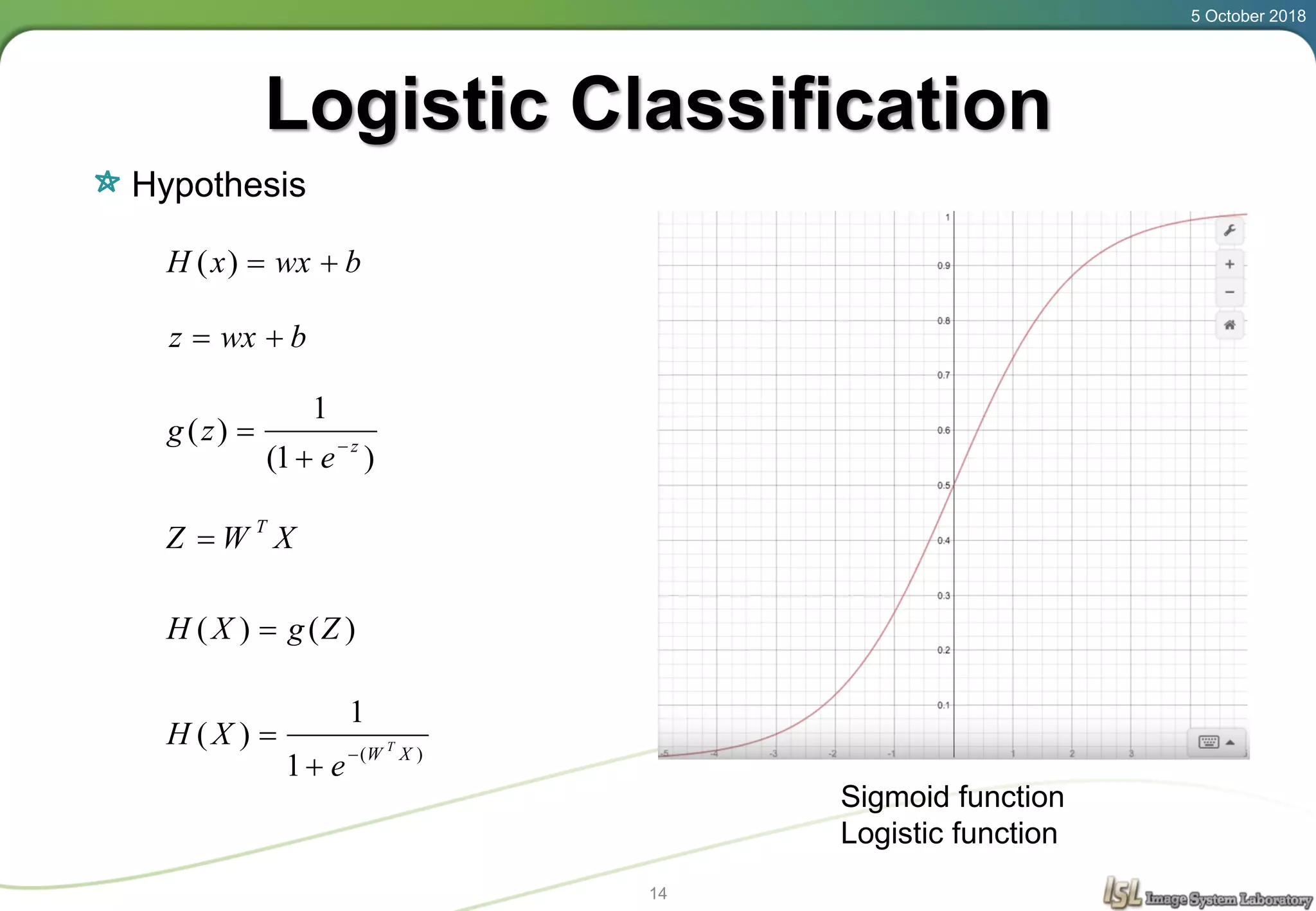

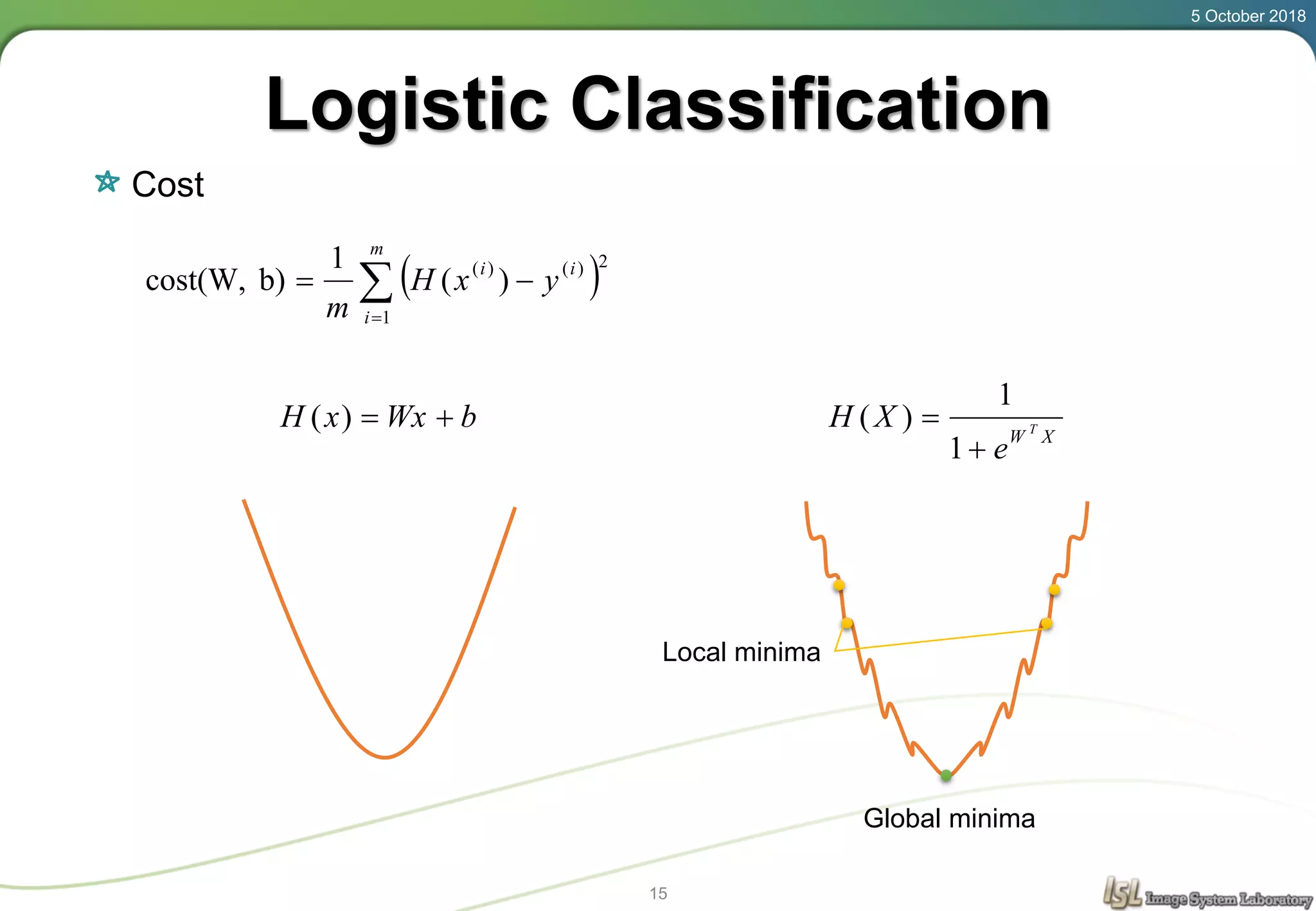

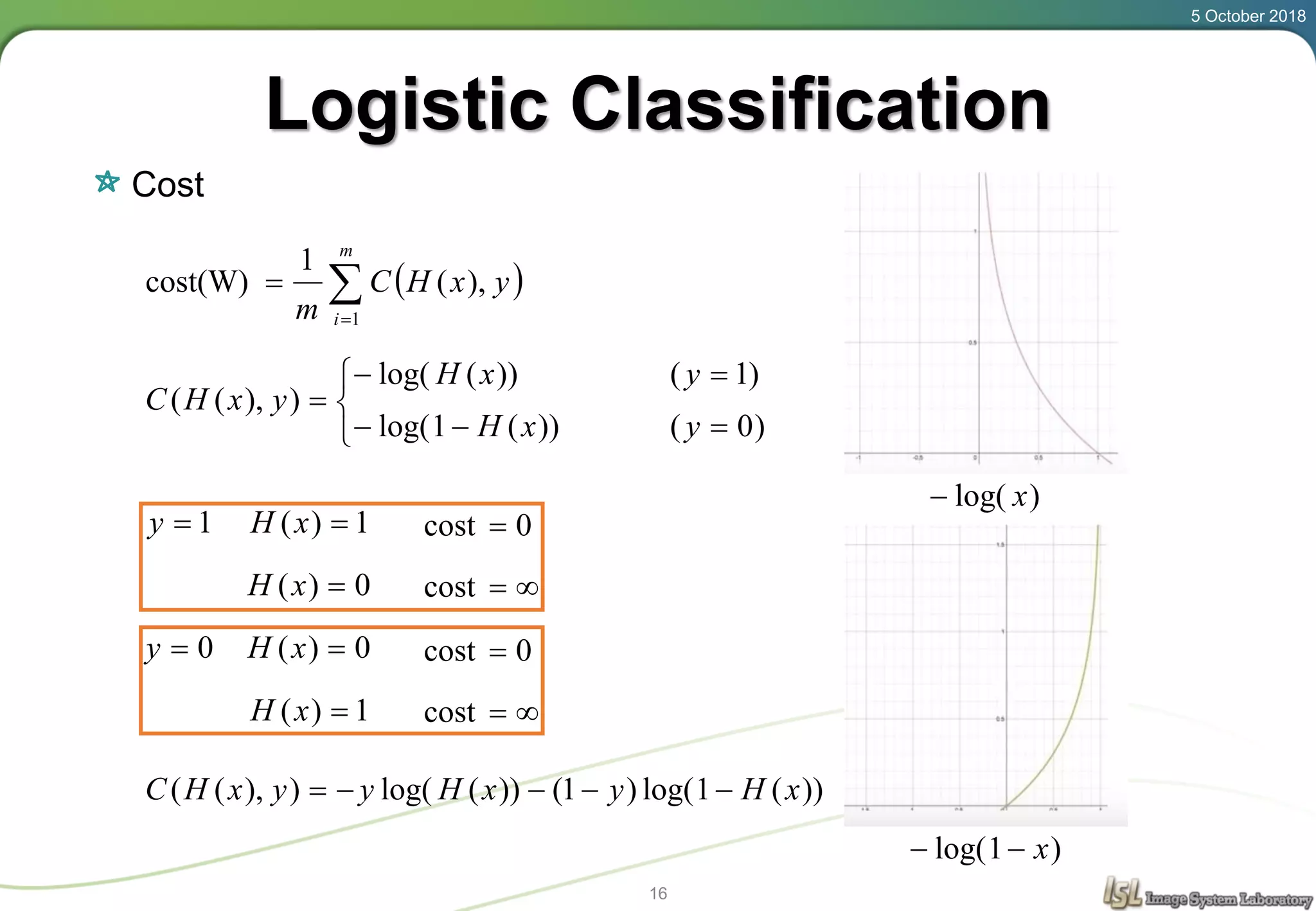

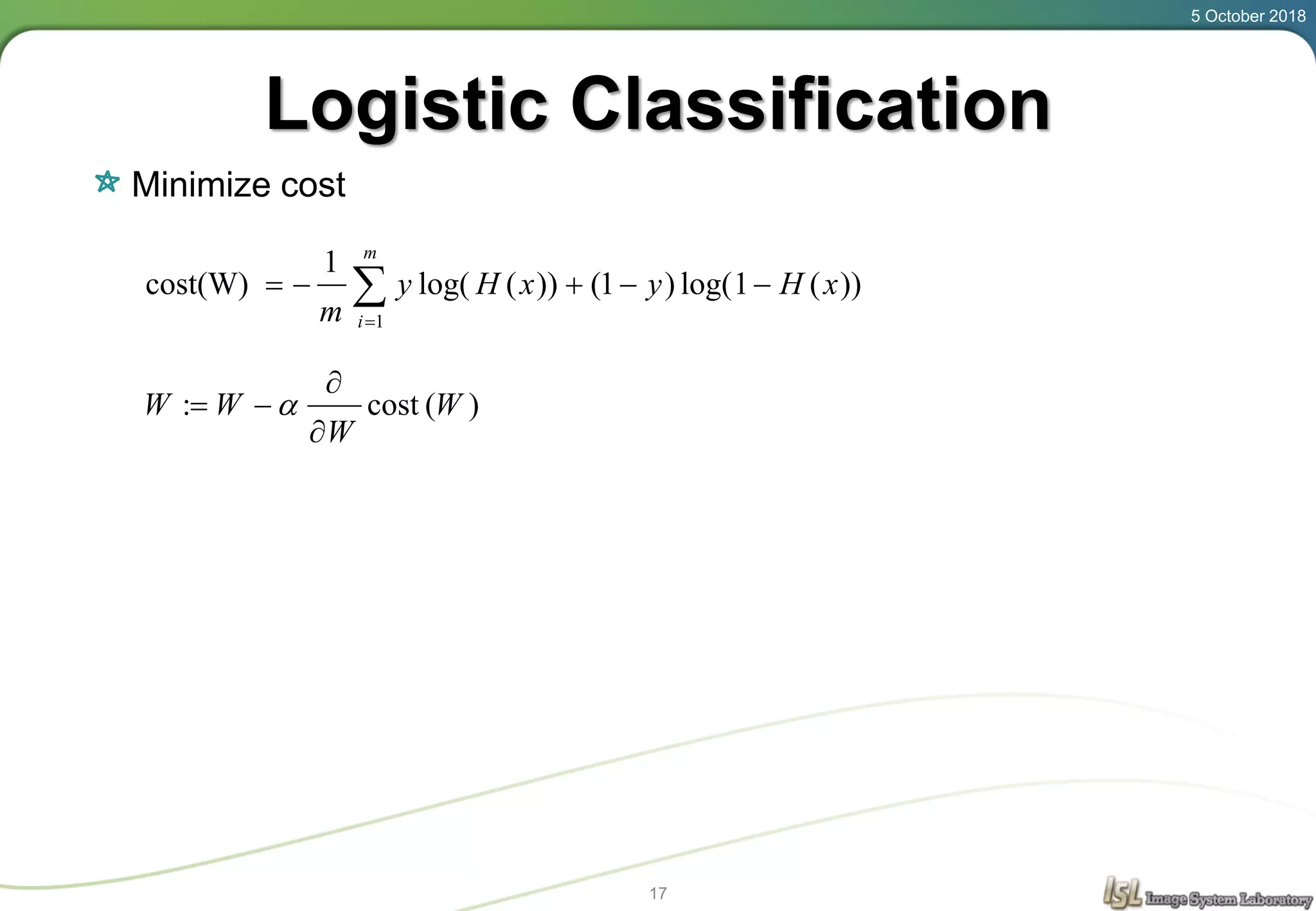

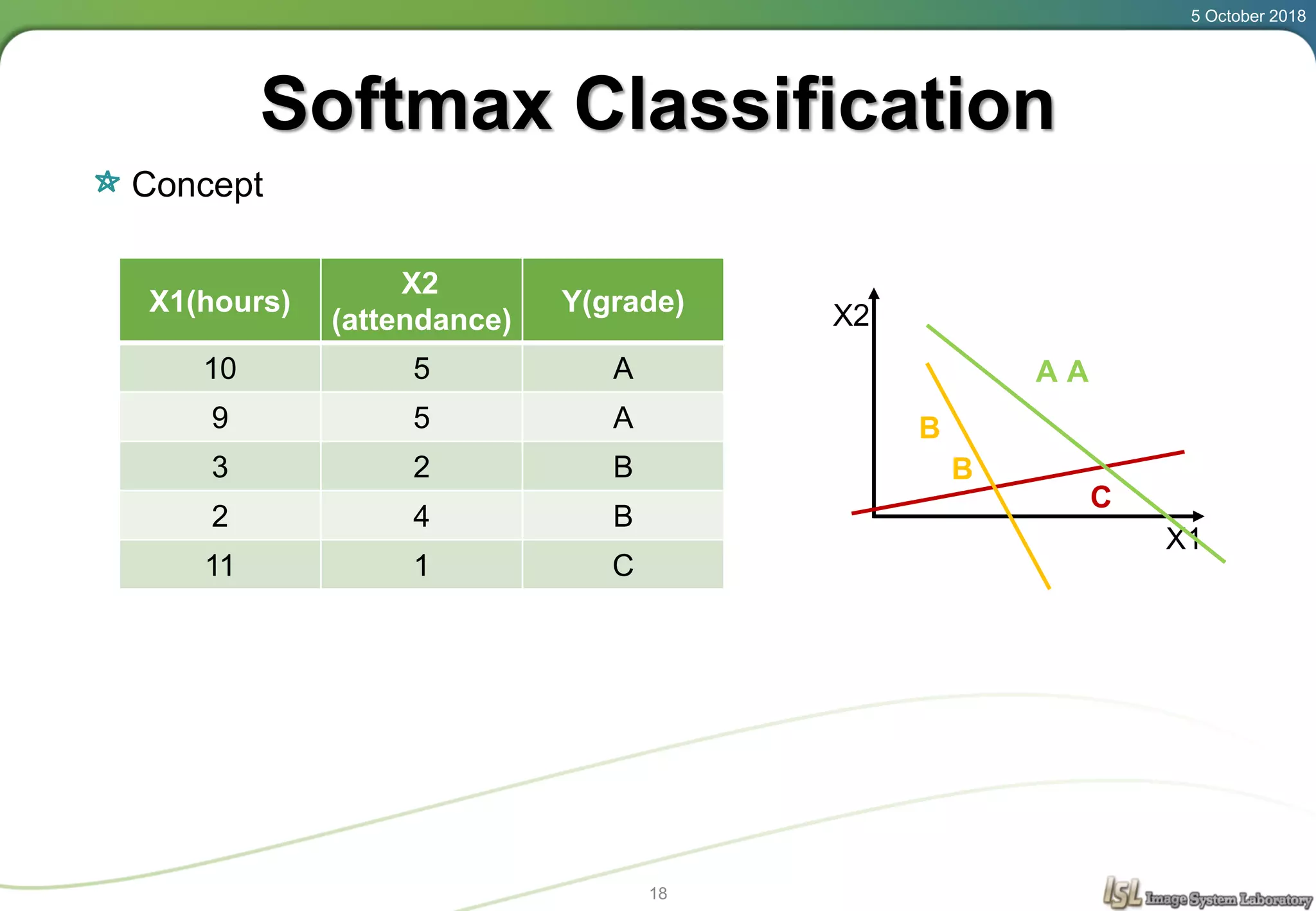

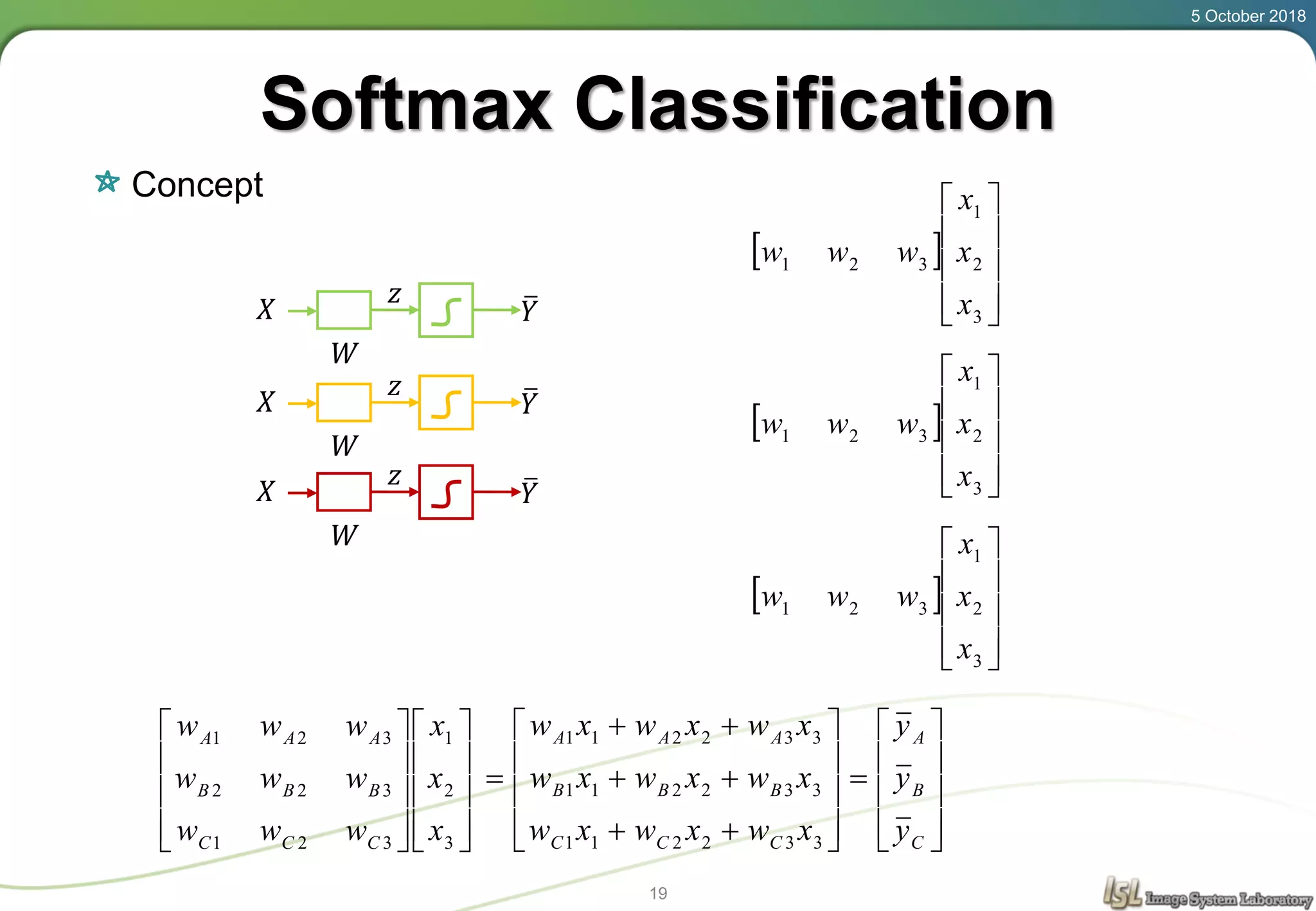

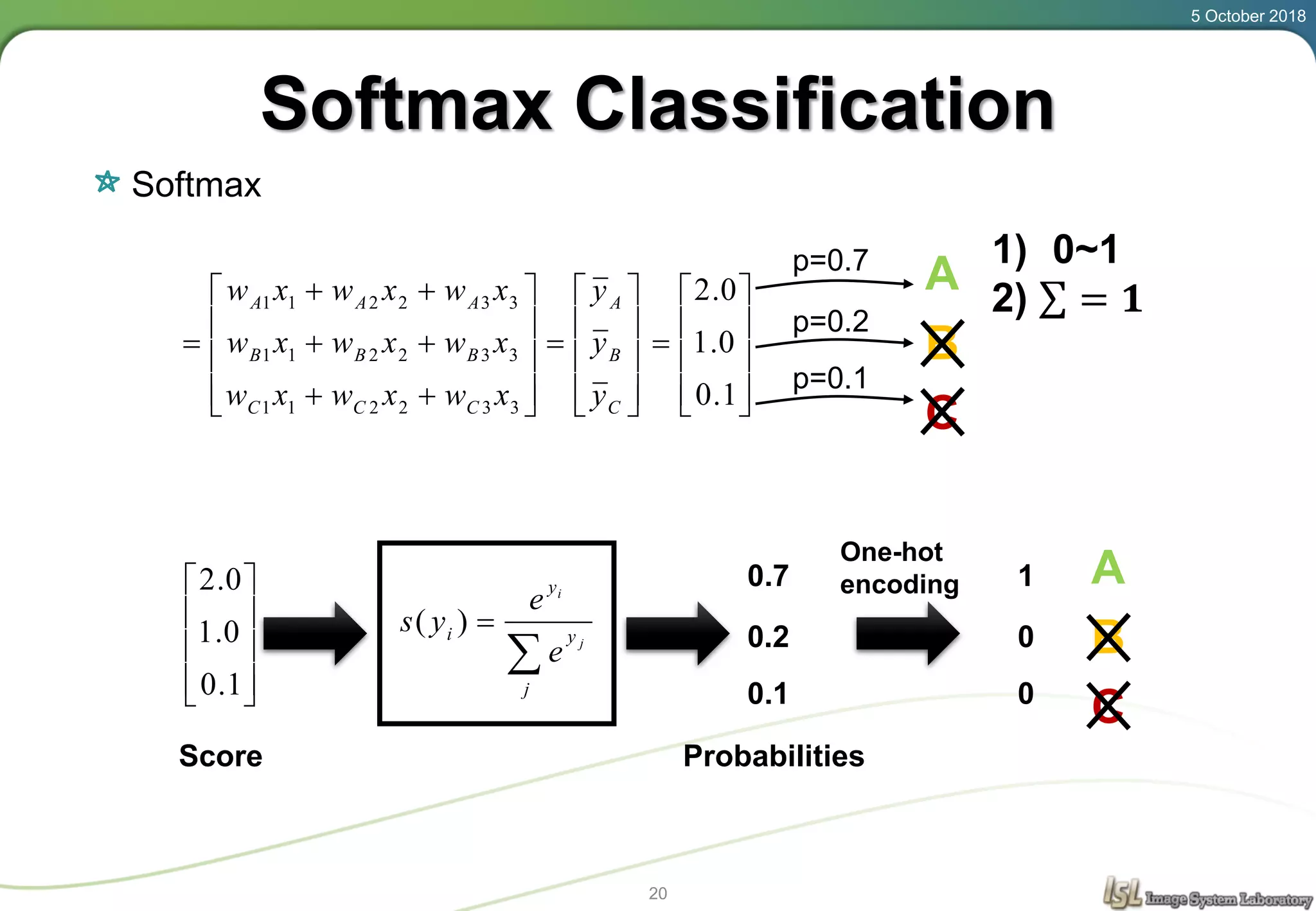

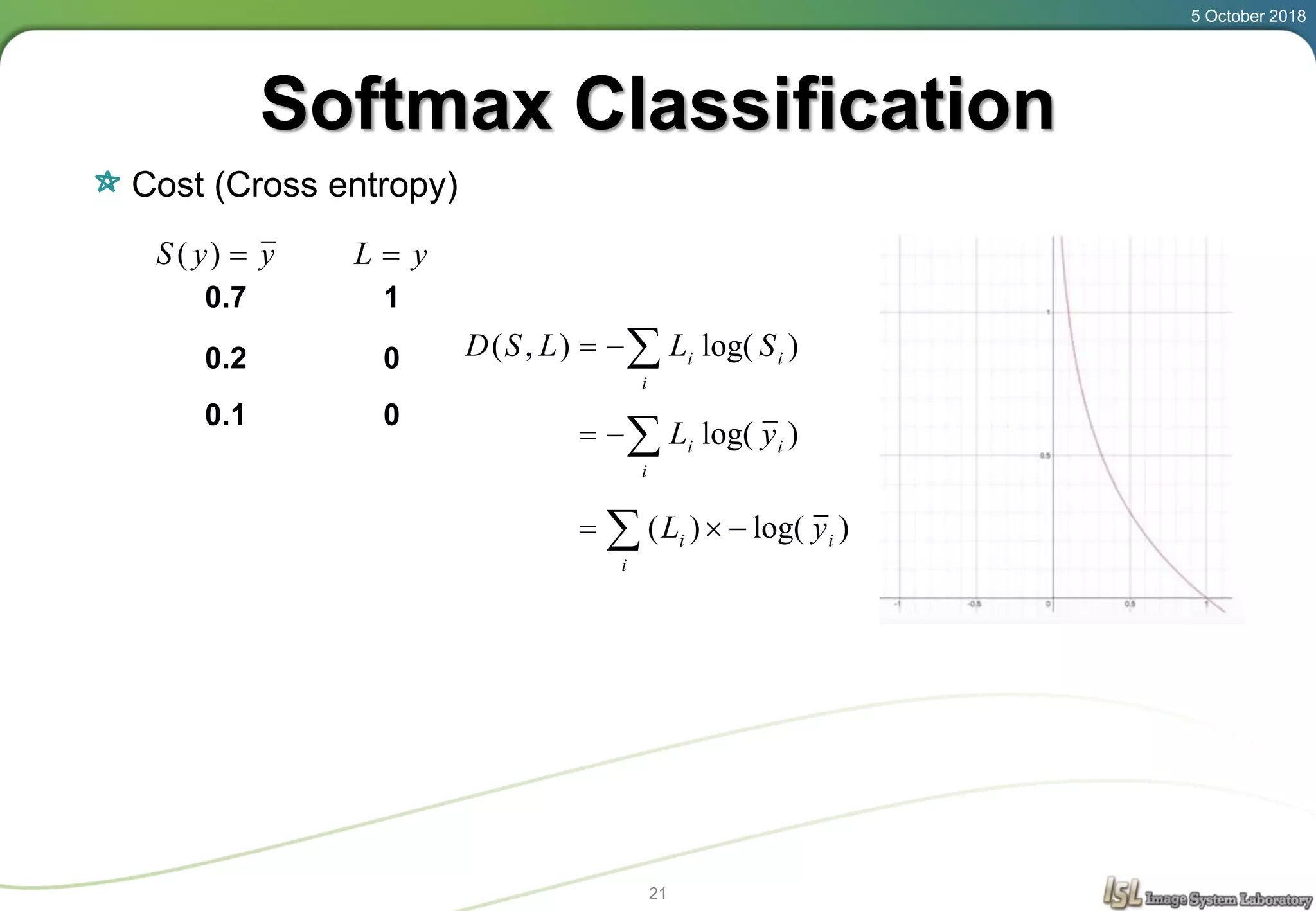

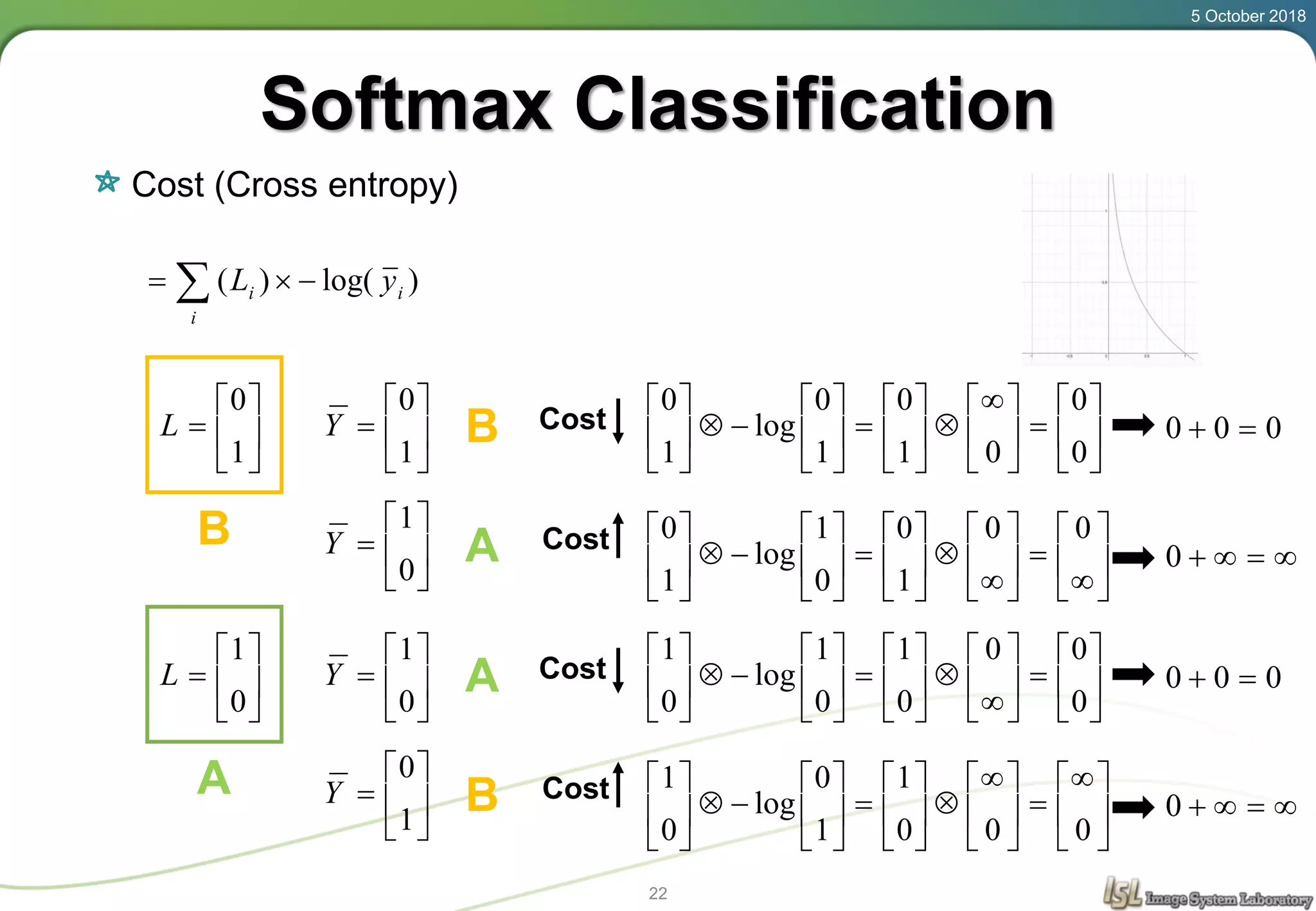

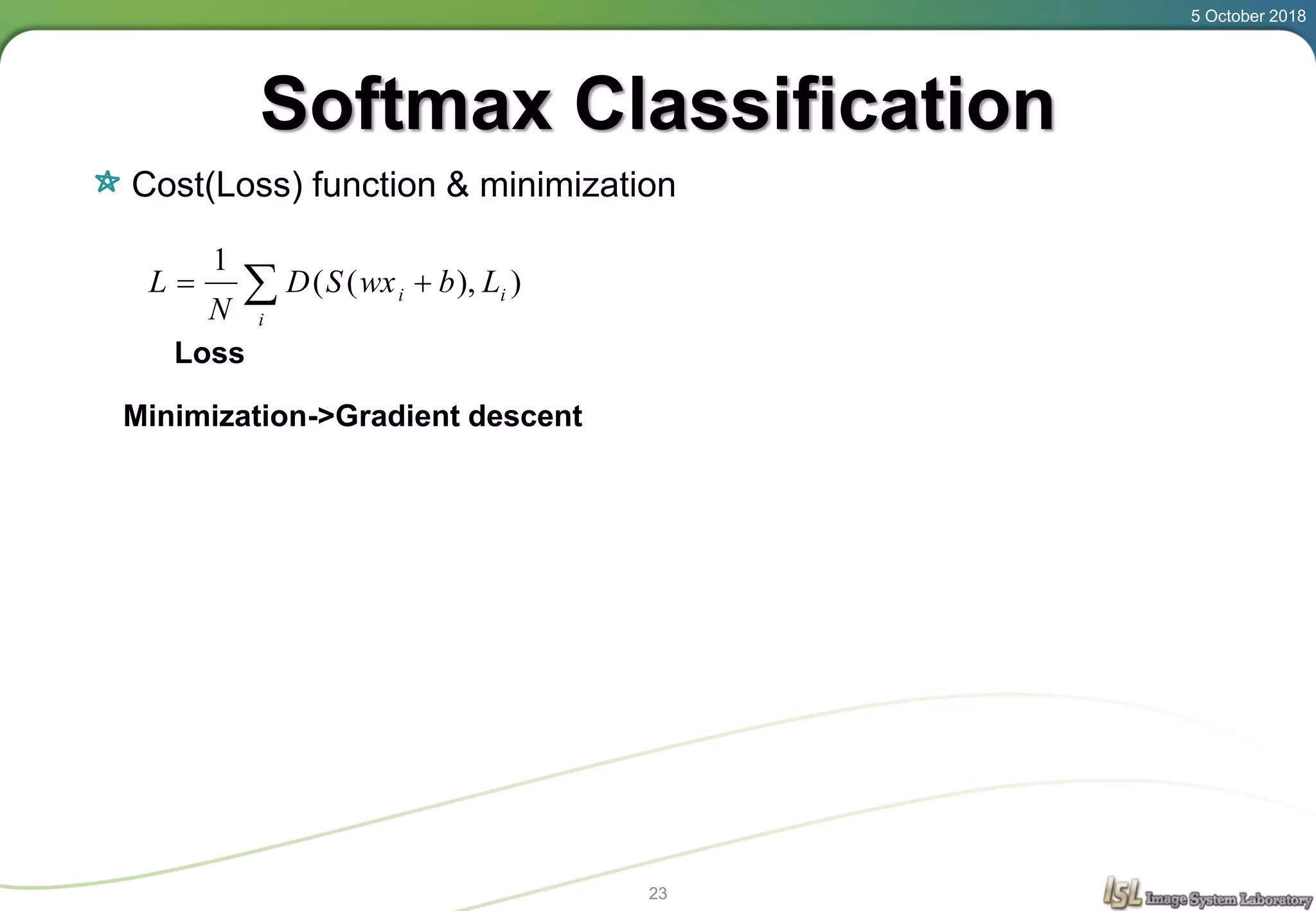

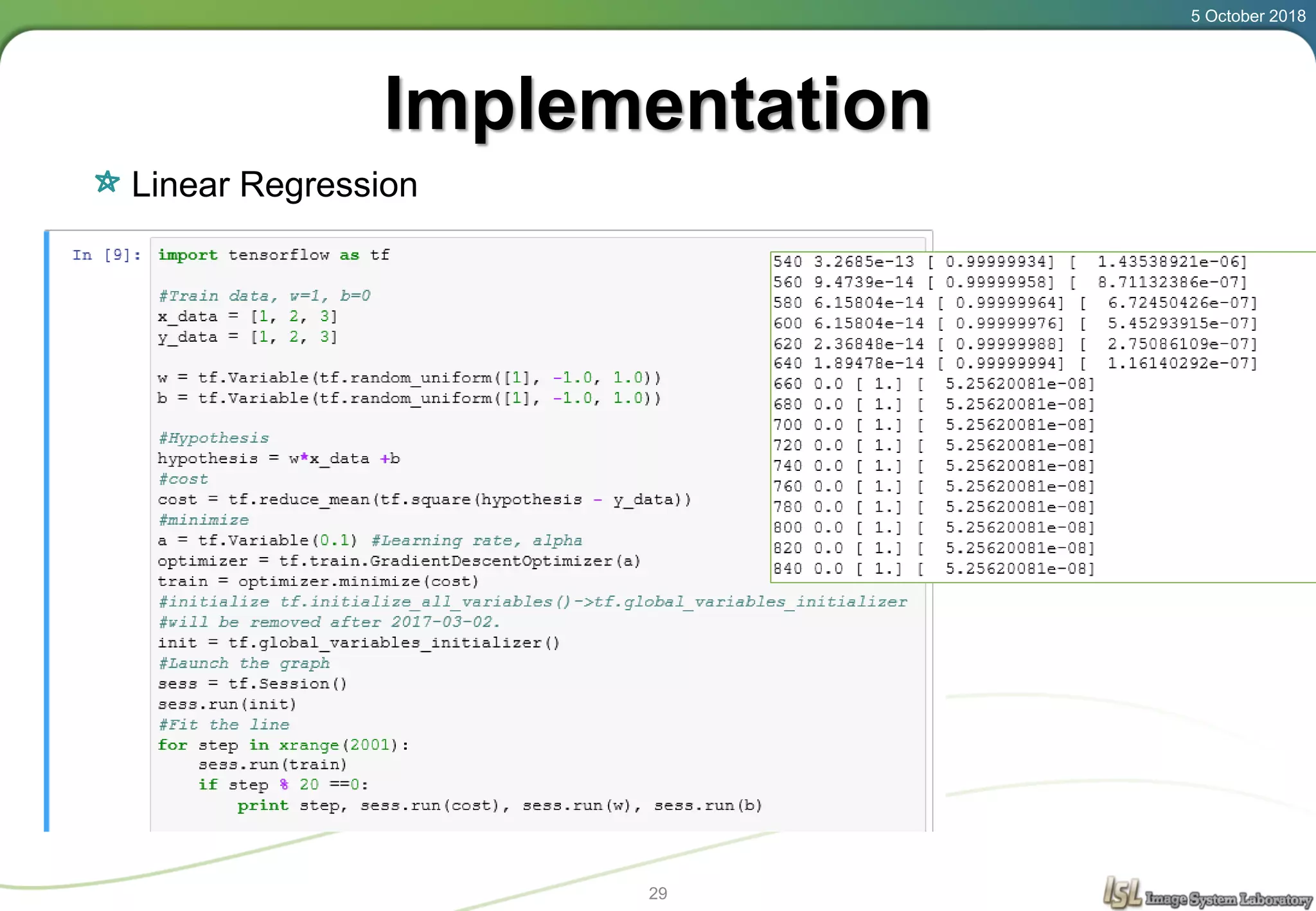

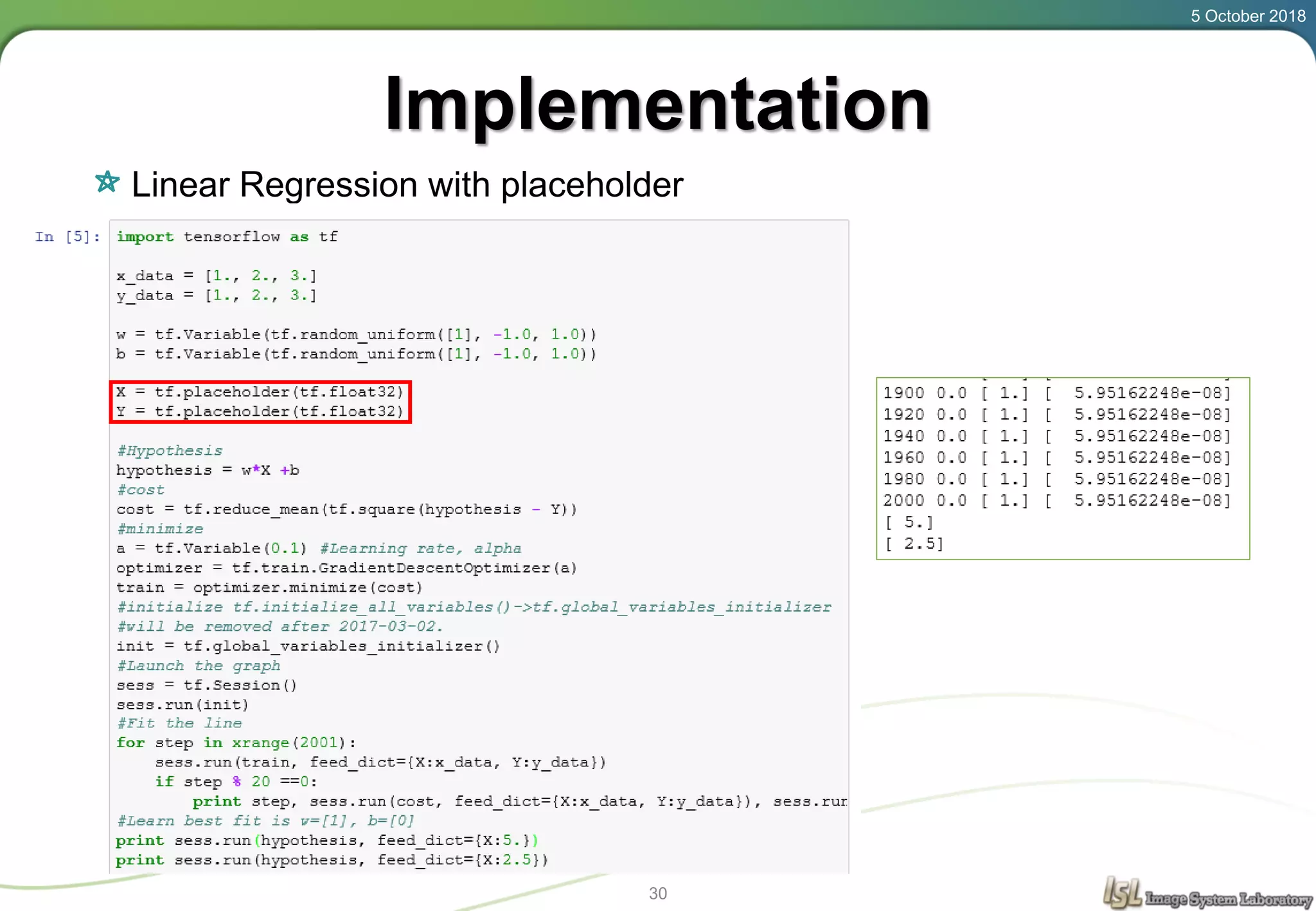

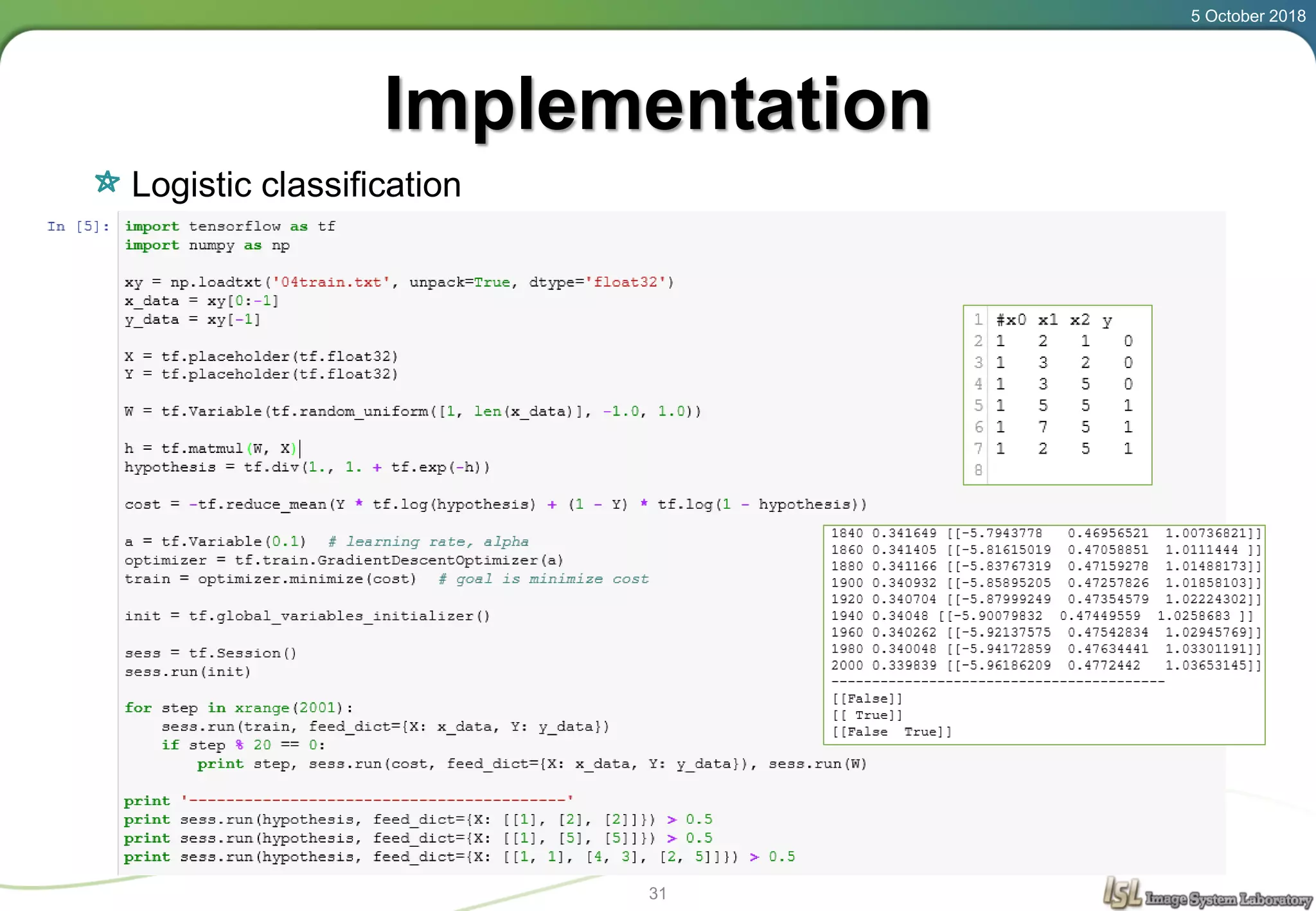

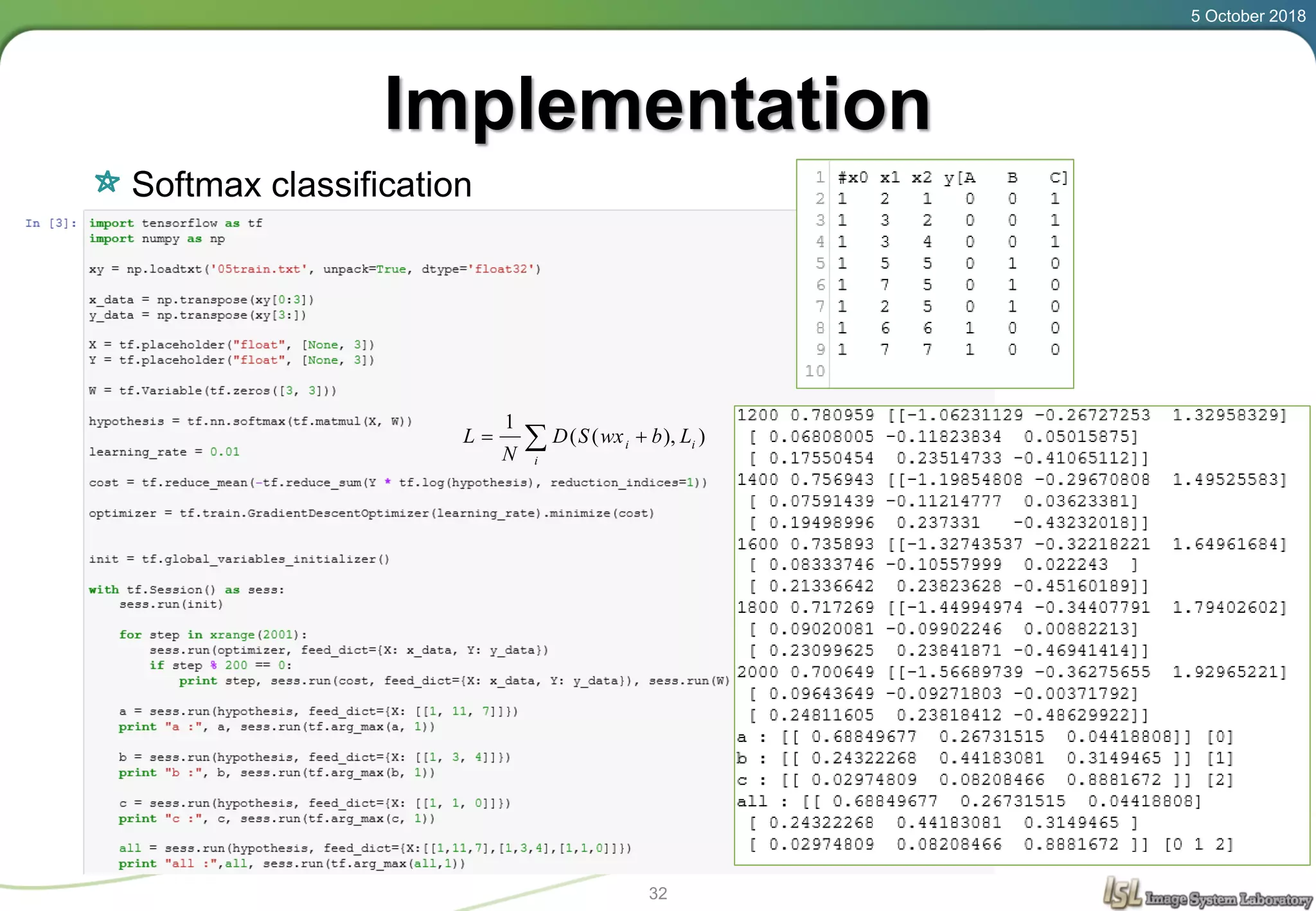

This document provides an overview of three basic machine learning methods: linear regression, logistic classification, and softmax classification. Linear regression predicts a continuous output variable, logistic regression handles binary classification problems, and softmax classification handles multi-class classification problems. For each algorithm, the document covers the hypothesis, the cost function, and the use of gradient descent to minimize that cost function.
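
The hypothesis, cost function, and gradient descent step named above can be sketched in a few lines of plain Python. The slides' exact formulas are not reproduced in this text, so the block below assumes the standard textbook forms: a linear hypothesis with mean squared error, a sigmoid with binary cross-entropy, and a softmax with cross-entropy. All function names, the toy data, and the learning rate are illustrative choices, not taken from the slides.

```python
import math

# --- Linear regression: hypothesis h(x) = w*x + b, cost = mean squared error ---
def linear_hypothesis(w, b, x):
    return w * x + b

def mse_cost(w, b, xs, ys):
    m = len(xs)
    return sum((linear_hypothesis(w, b, x) - y) ** 2 for x, y in zip(xs, ys)) / m

def fit_linear(xs, ys, lr=0.1, steps=2000):
    """Minimize the MSE cost with batch gradient descent (assumed settings)."""
    w, b = 0.0, 0.0
    m = len(xs)
    for _ in range(steps):
        errors = [linear_hypothesis(w, b, x) - y for x, y in zip(xs, ys)]
        grad_w = 2.0 / m * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / m * sum(errors)
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# --- Logistic classification: hypothesis = sigmoid(score), cost = cross-entropy ---
def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def binary_cross_entropy(p, y):
    # p is the predicted probability of class 1, y is the true label (0 or 1)
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))

# --- Softmax classification: hypothesis = softmax(scores), cost = cross-entropy ---
def softmax(scores):
    shift = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(probs, label):
    # label is the index of the true class
    return -math.log(probs[label])

# Toy data generated from y = 2x, so gradient descent should approach w = 2, b = 0
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]
w, b = fit_linear(xs, ys)
```

The same gradient descent loop applies to the logistic and softmax cases once the hypothesis and cost are swapped in; only the linear case is trained here to keep the sketch short.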