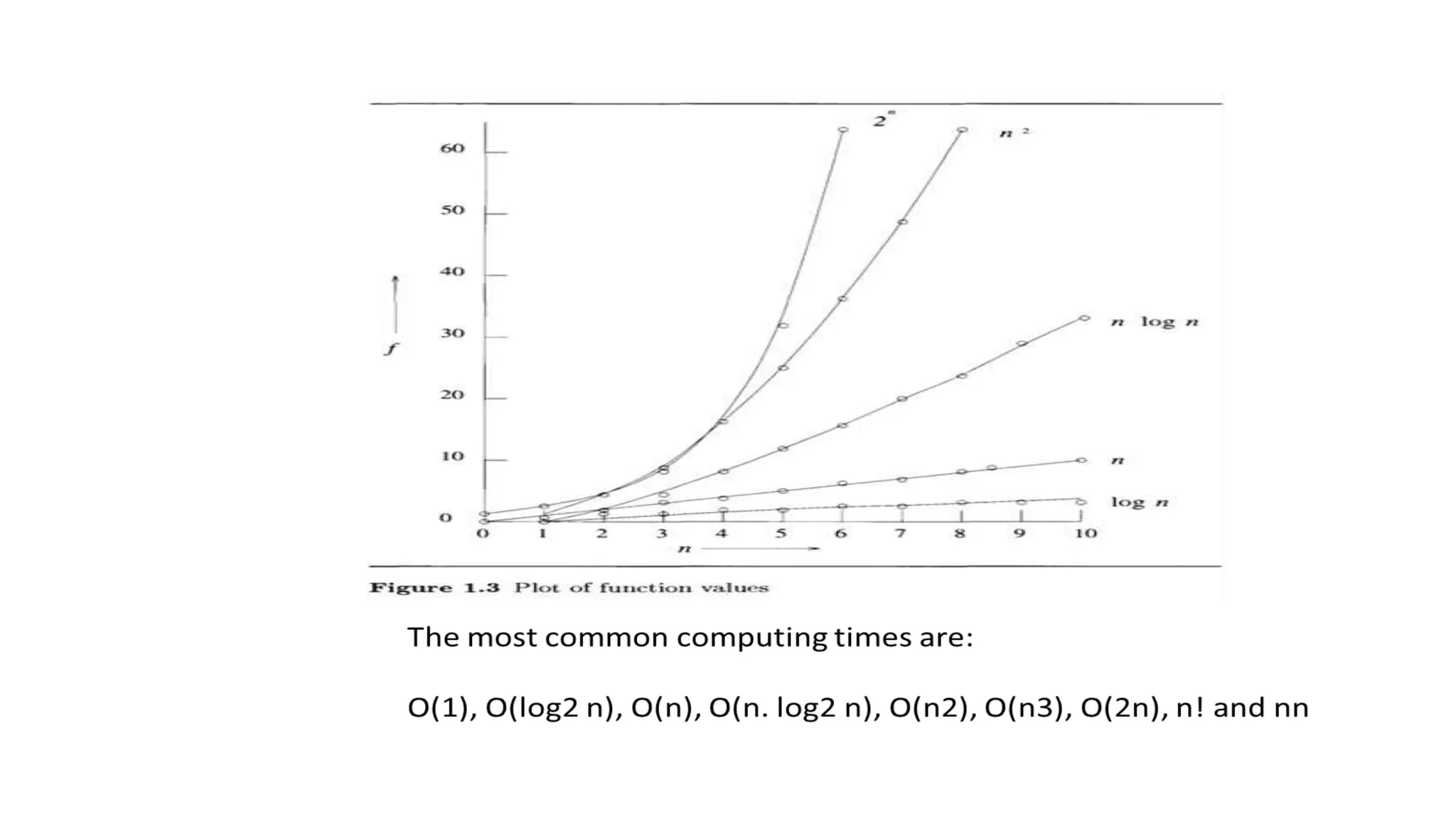

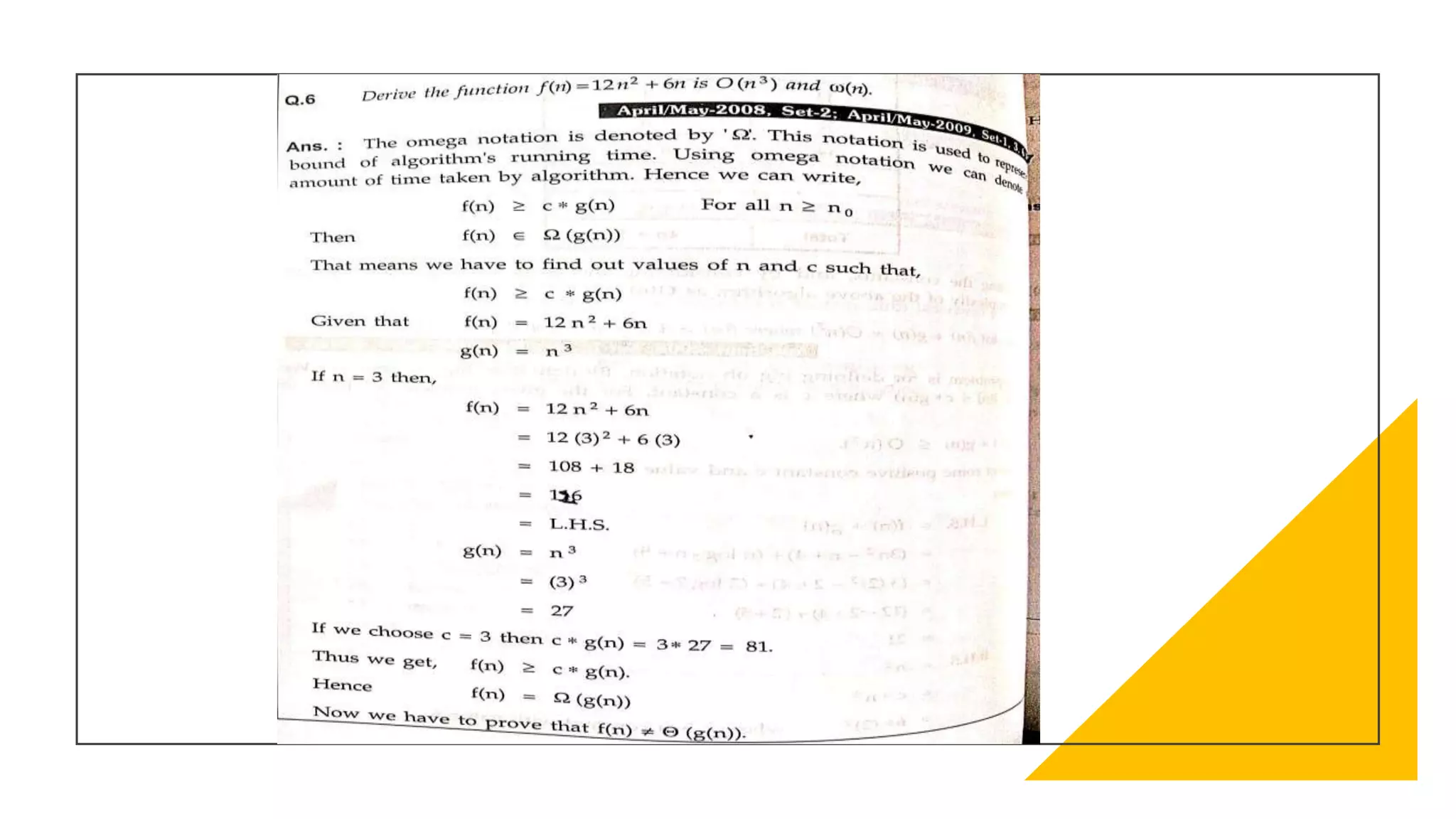

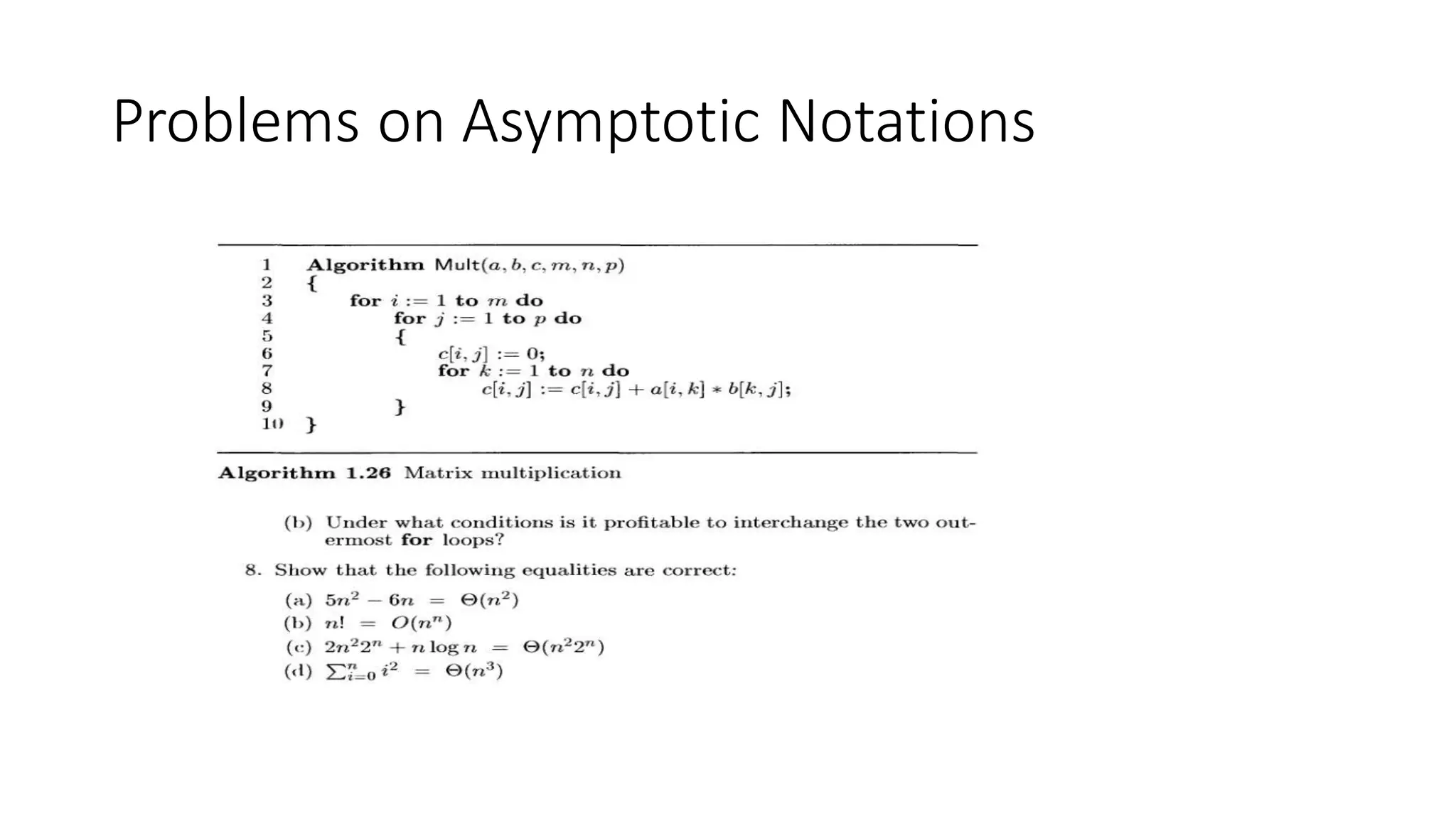

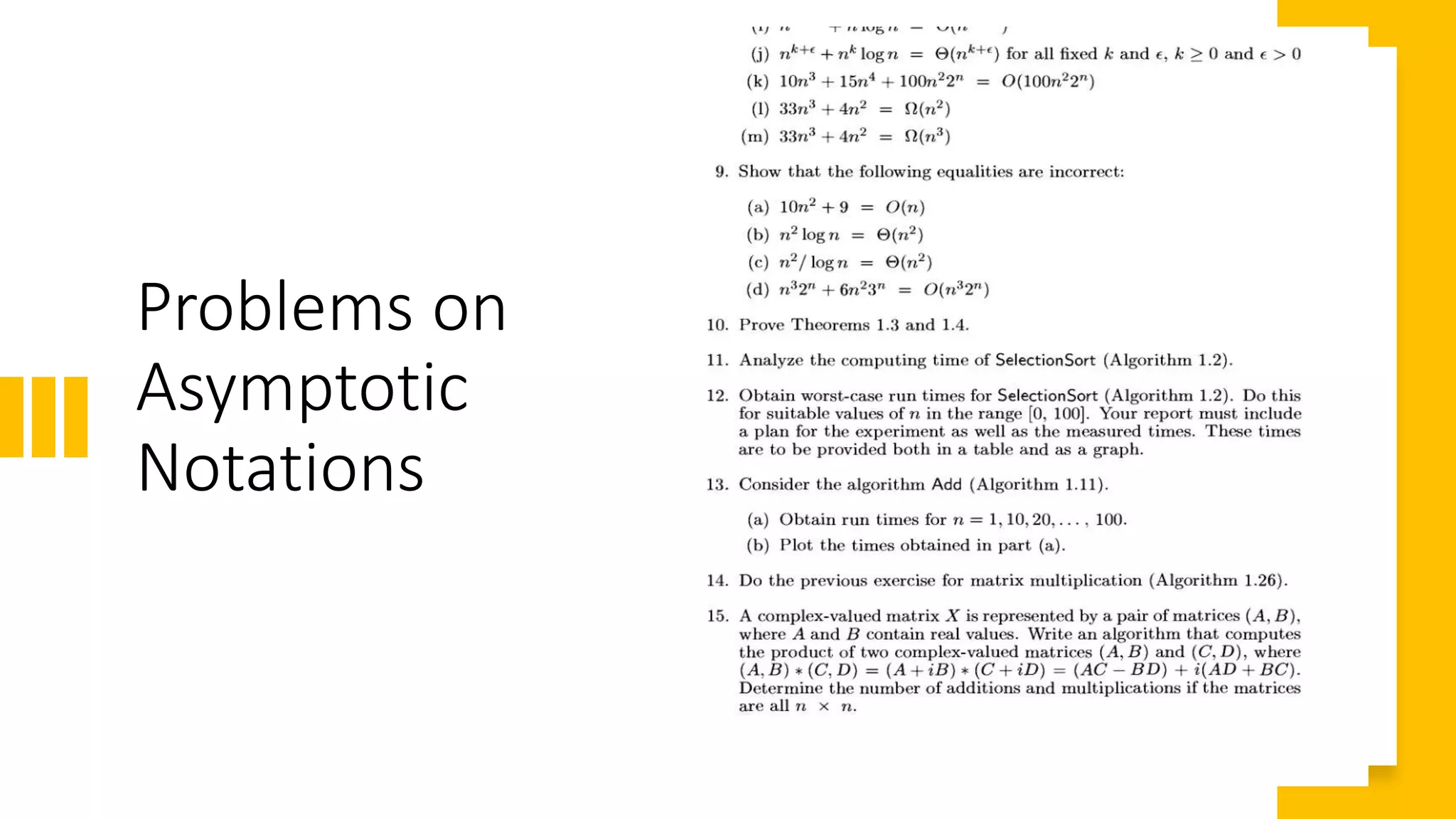

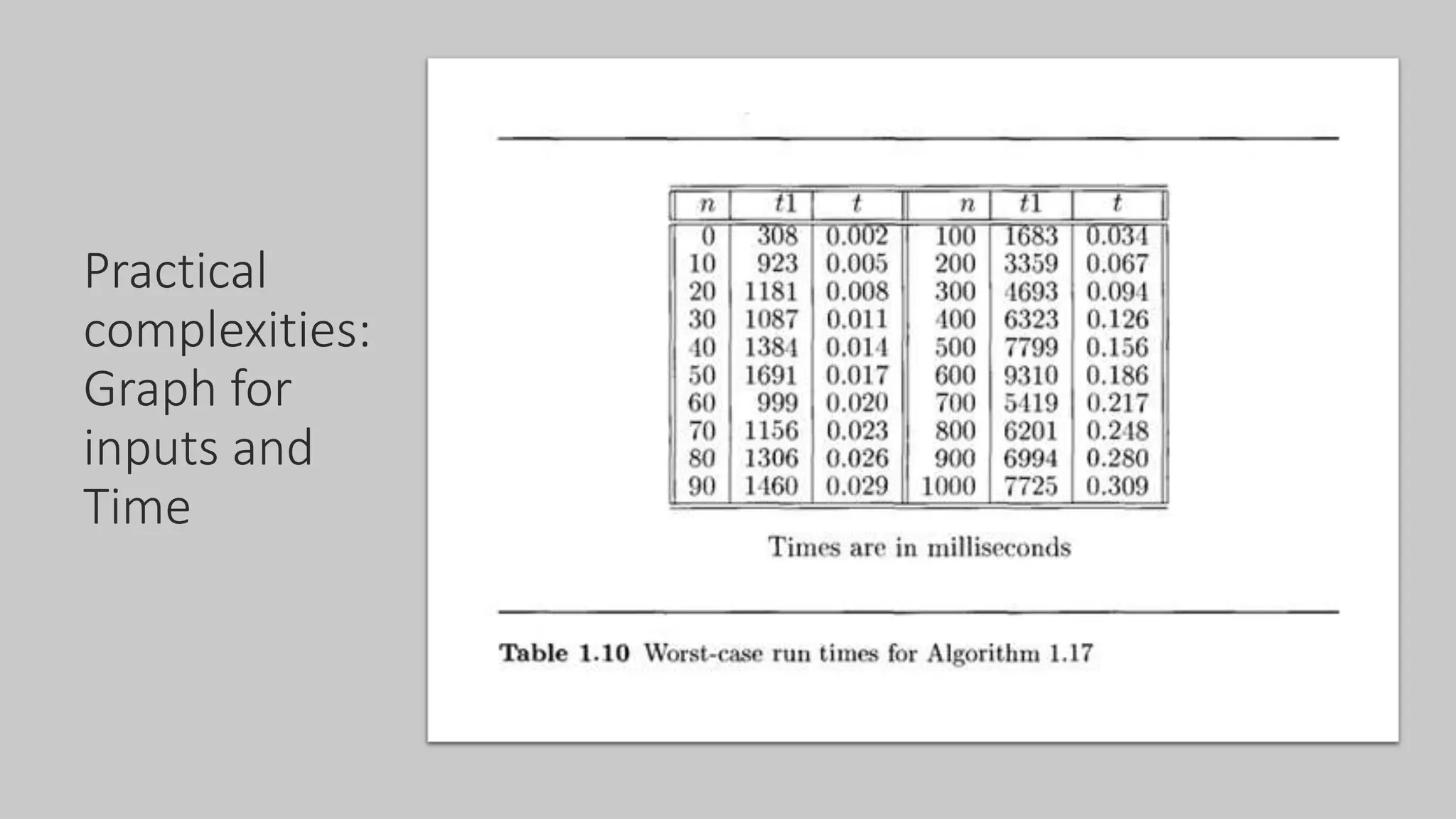

This document discusses the asymptotic notations used to analyze algorithms. It defines best-case, average-case, and worst-case time complexity, along with the commonly used notations Big-O, Big-Omega, and Big-Theta. It lists common time complexities, such as constant, logarithmic, linear, quadratic, and exponential, and works through the time complexity of nested loops, matrix multiplication, and bubble sort. It also outlines general rules for algorithm analysis: ignore lower-order terms and constant factors, sum the running times of consecutive segments, and select the worst-case complexity.

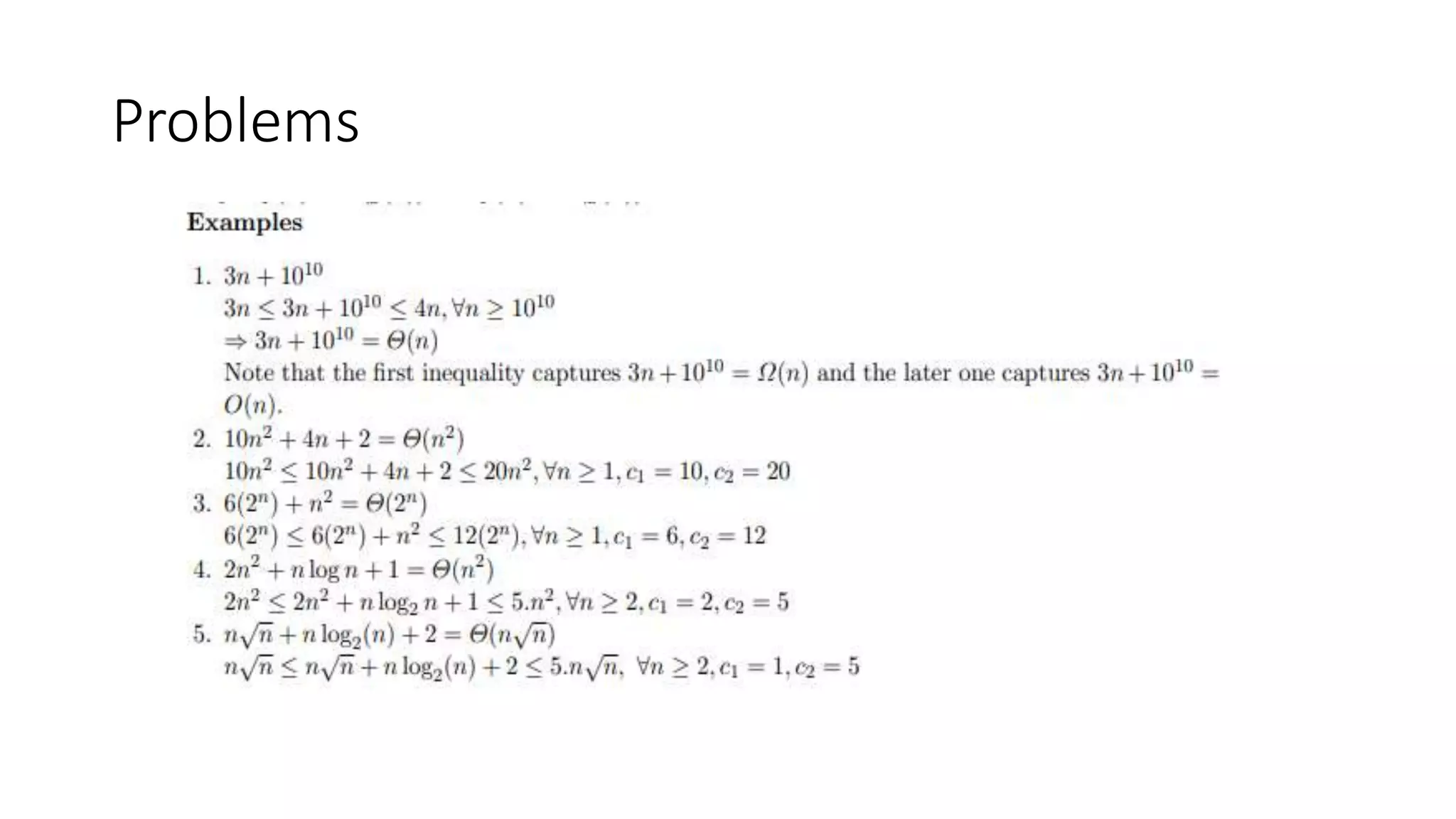

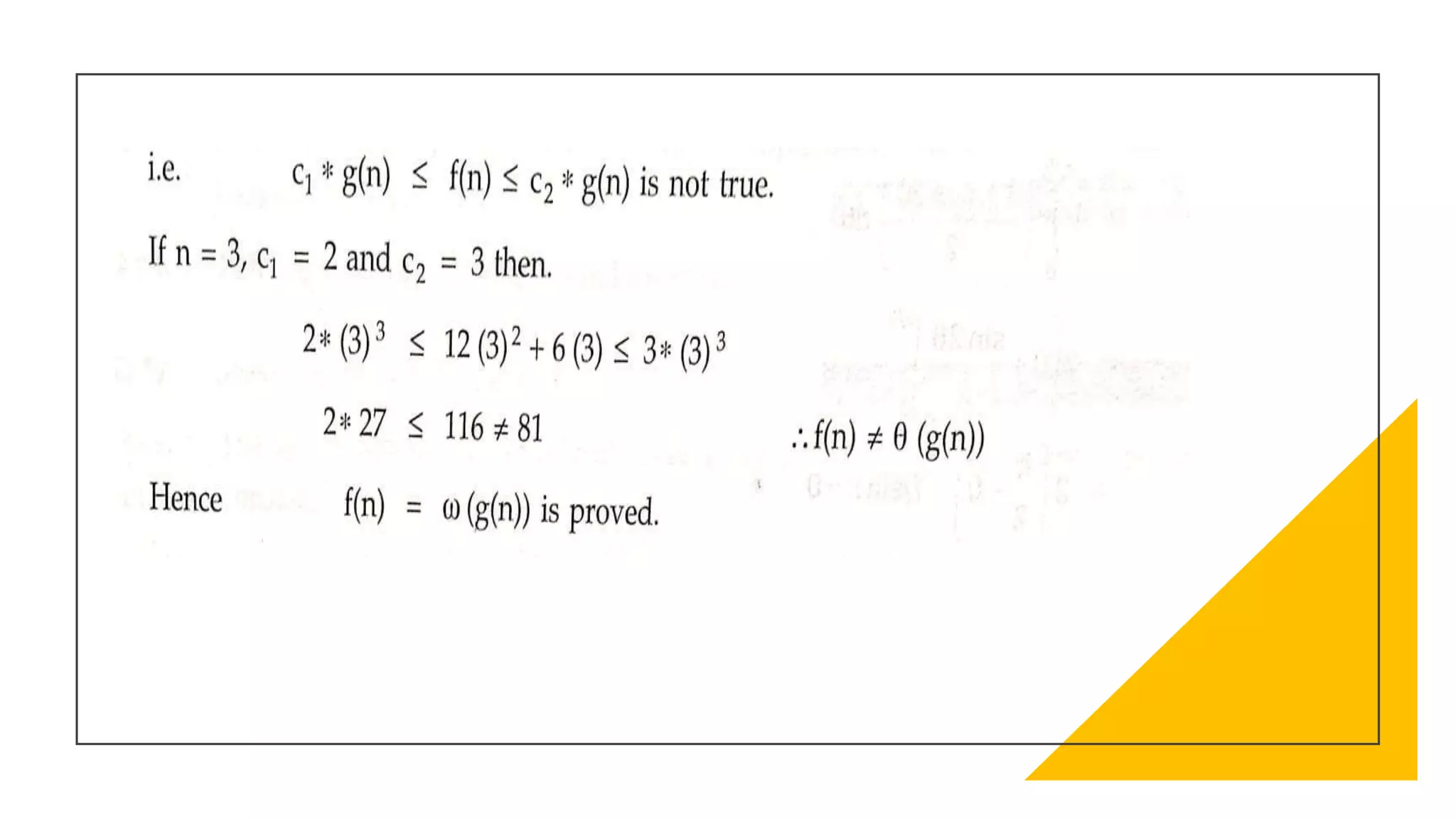

![• Example 1:

•

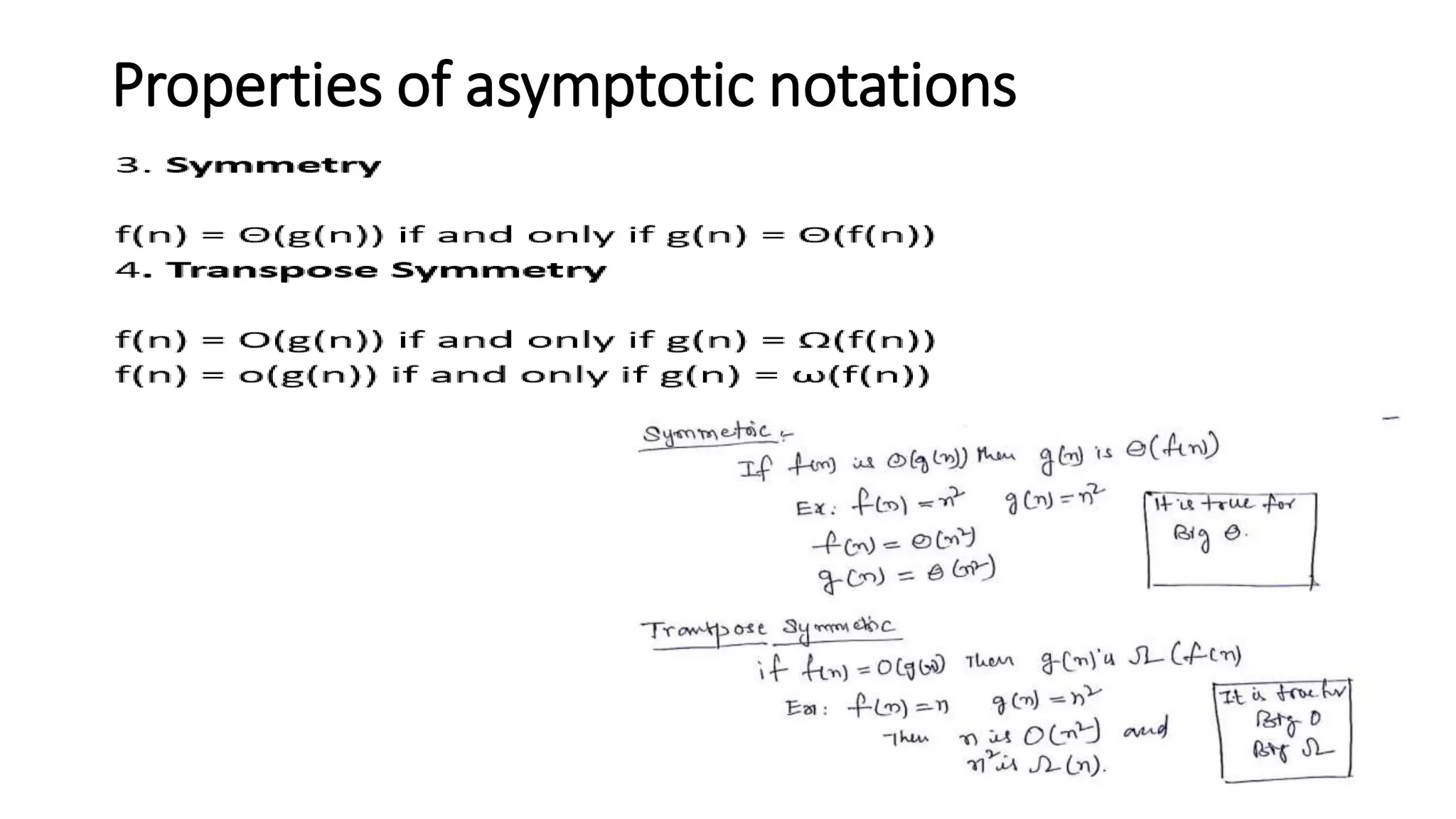

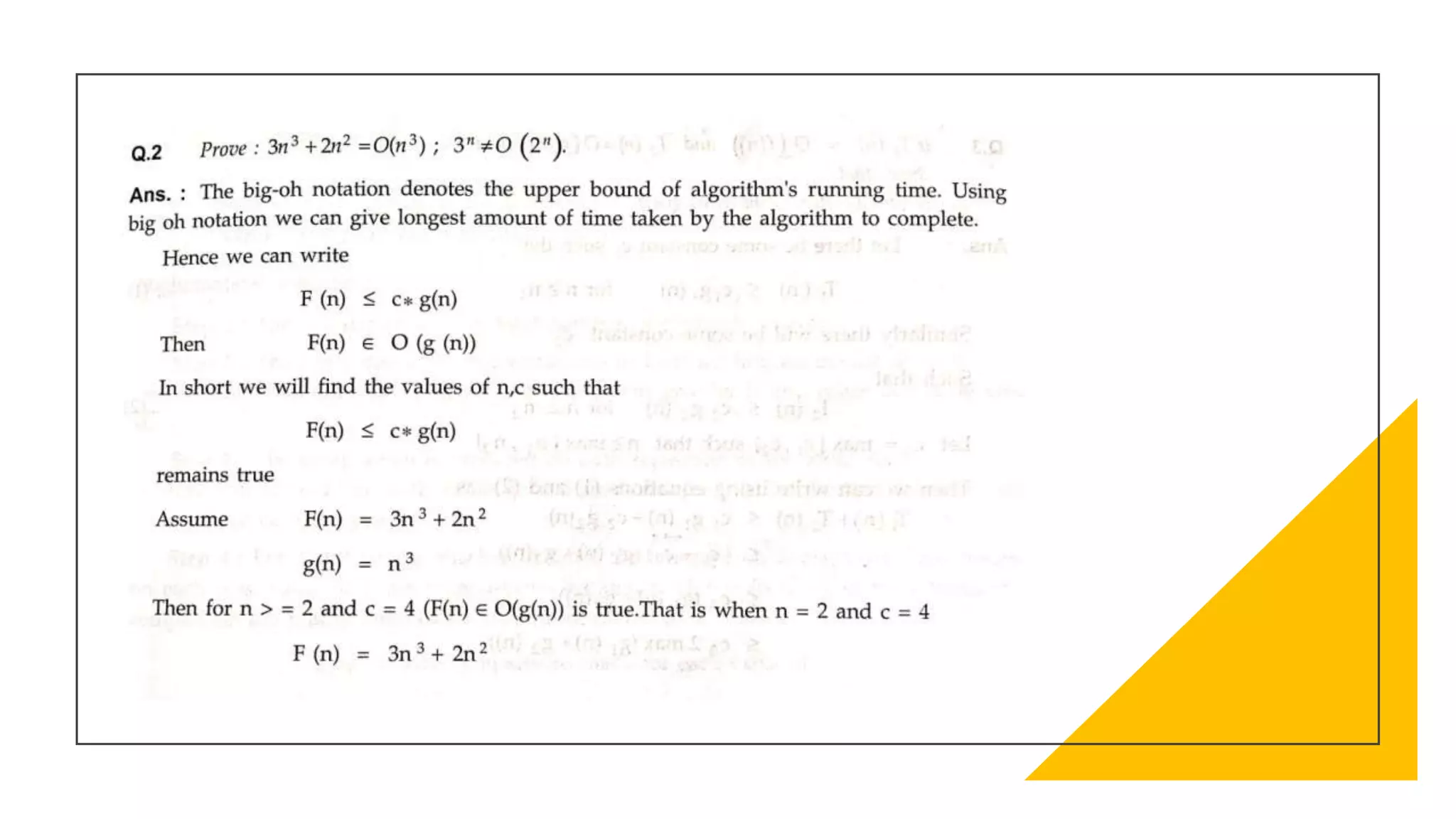

• Analysis for nested for loop

• Now let’s look at a more complicated

example, a nested for loop:

• for (i = 1; i<=n; i++)

• for (j = 1; j<=n; j++)

• a[i,j] = b[i,j] * x;](https://image.slidesharecdn.com/asymptoticnotations-200715064555/75/Asymptotic-notations-35-2048.jpg)
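The nested loop can be made concrete with a small C sketch. It is only an illustration under a few assumptions that are not in the slides: the matrices are stored row-major in flat arrays, indexing is 0-based rather than 1-based, and the function name `scale_matrix` and the `steps` counter are hypothetical additions that make the operation count visible.

```c
#include <assert.h>
#include <stddef.h>

/* Scales the n-by-n matrix b by x into a (both row-major flat arrays).
   Returns how many times the inner assignment ran; the counter is an
   illustrative addition, not part of the original loop. */
size_t scale_matrix(size_t n, const double *b, double x, double *a)
{
    assert(a != NULL && b != NULL);
    size_t steps = 0;
    for (size_t i = 0; i < n; i++) {        /* outer loop: n iterations */
        for (size_t j = 0; j < n; j++) {    /* inner loop: n iterations per i */
            a[i * n + j] = b[i * n + j] * x;
            steps++;
        }
    }
    return steps;                           /* n * n for every input */
}
```

Because the inner assignment runs once for each of the n values of `j`, for each of the n values of `i`, the returned count is n * n, which is why a loop of this shape is usually classified as O(n^2).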

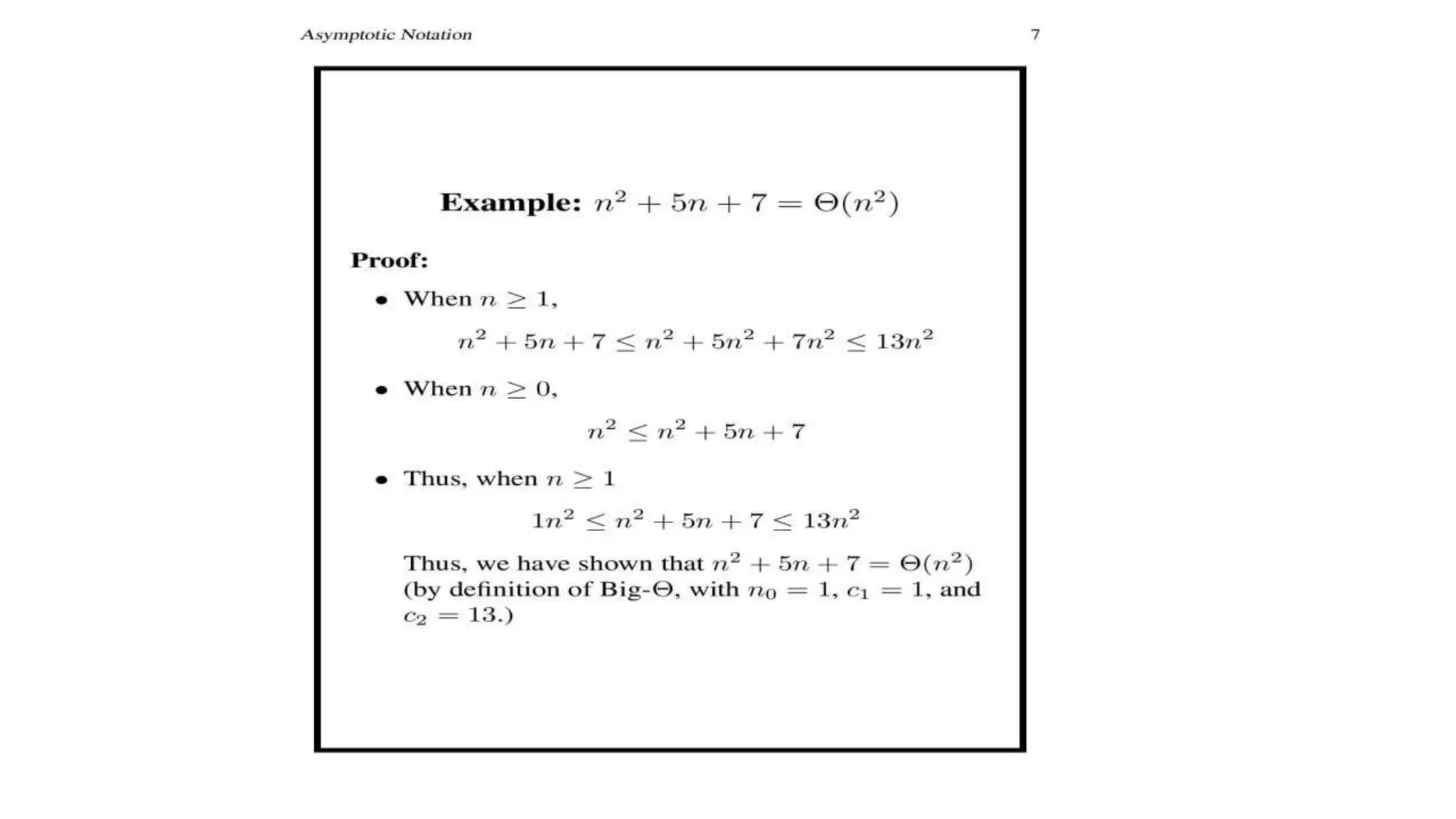

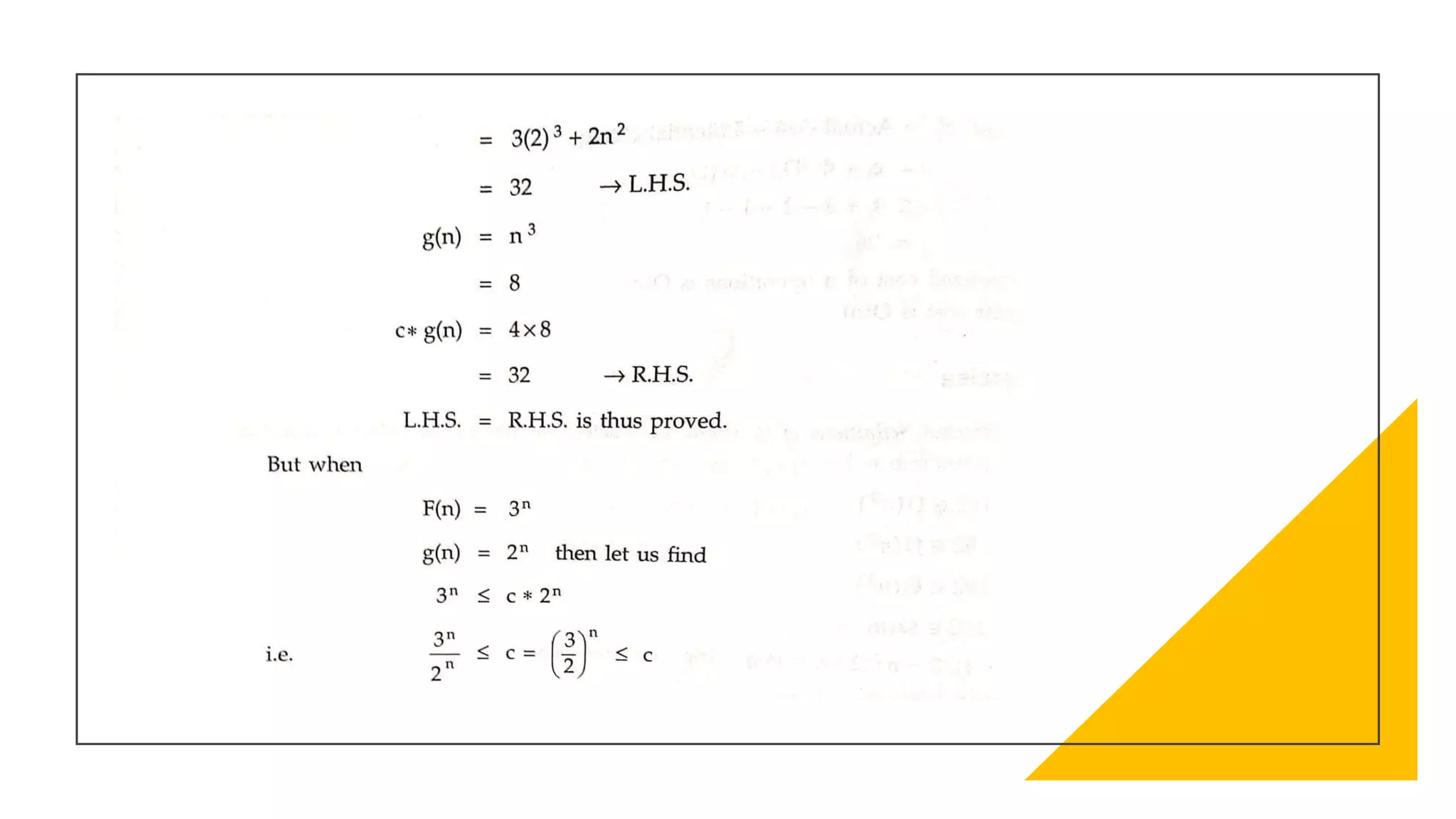

![Slide: Analysis of matrix multiply](https://image.slidesharecdn.com/asymptoticnotations-200715064555/75/Asymptotic-notations-36-2048.jpg)

Analysis of matrix multiply

Let's start with an easy case: multiplying two n × n matrices. The code to compute the matrix product C = A * B is given below.

    for (i = 1; i <= n; i++)
        for (j = 1; j <= n; j++) {
            C[i, j] = 0;
            for (k = 1; k <= n; k++)
                C[i, j] = C[i, j] + A[i, k] * B[k, j];
        }
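As a rough C sketch of the matrix product, the version below counts the multiply-add steps. Several things here are assumptions for illustration and are not in the slides: row-major flat arrays, 0-based indexing, the hypothetical function name `matmul`, and the `mults` counter.

```c
#include <assert.h>
#include <stddef.h>

/* Computes C = A * B for n-by-n matrices stored row-major in flat arrays.
   Returns the number of multiply-add steps performed; the counter exists
   only to expose the growth rate. */
size_t matmul(size_t n, const double *A, const double *B, double *C)
{
    assert(A != NULL && B != NULL && C != NULL);
    size_t mults = 0;
    for (size_t i = 0; i < n; i++) {
        for (size_t j = 0; j < n; j++) {
            double sum = 0.0;               /* plays the role of C[i, j] = 0 */
            for (size_t k = 0; k < n; k++) {
                sum += A[i * n + k] * B[k * n + j];
                mults++;
            }
            C[i * n + j] = sum;
        }
    }
    return mults;                           /* n * n * n for every input */
}
```

Three nested loops of n iterations each give n * n * n multiply-add steps, so this straightforward method is O(n^3). The initialization of each entry runs only n * n times, a lower-order term that the analysis ignores.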

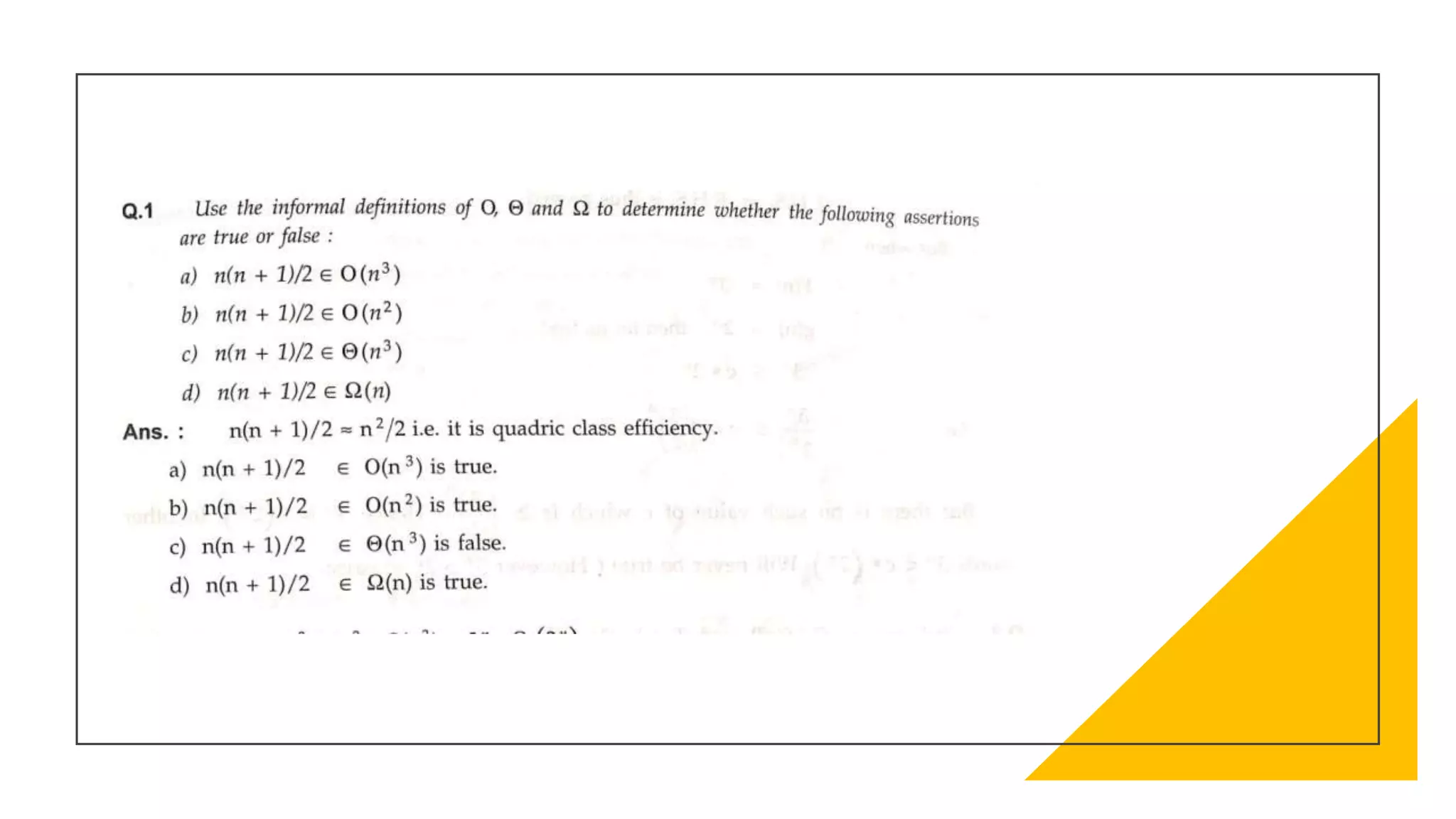

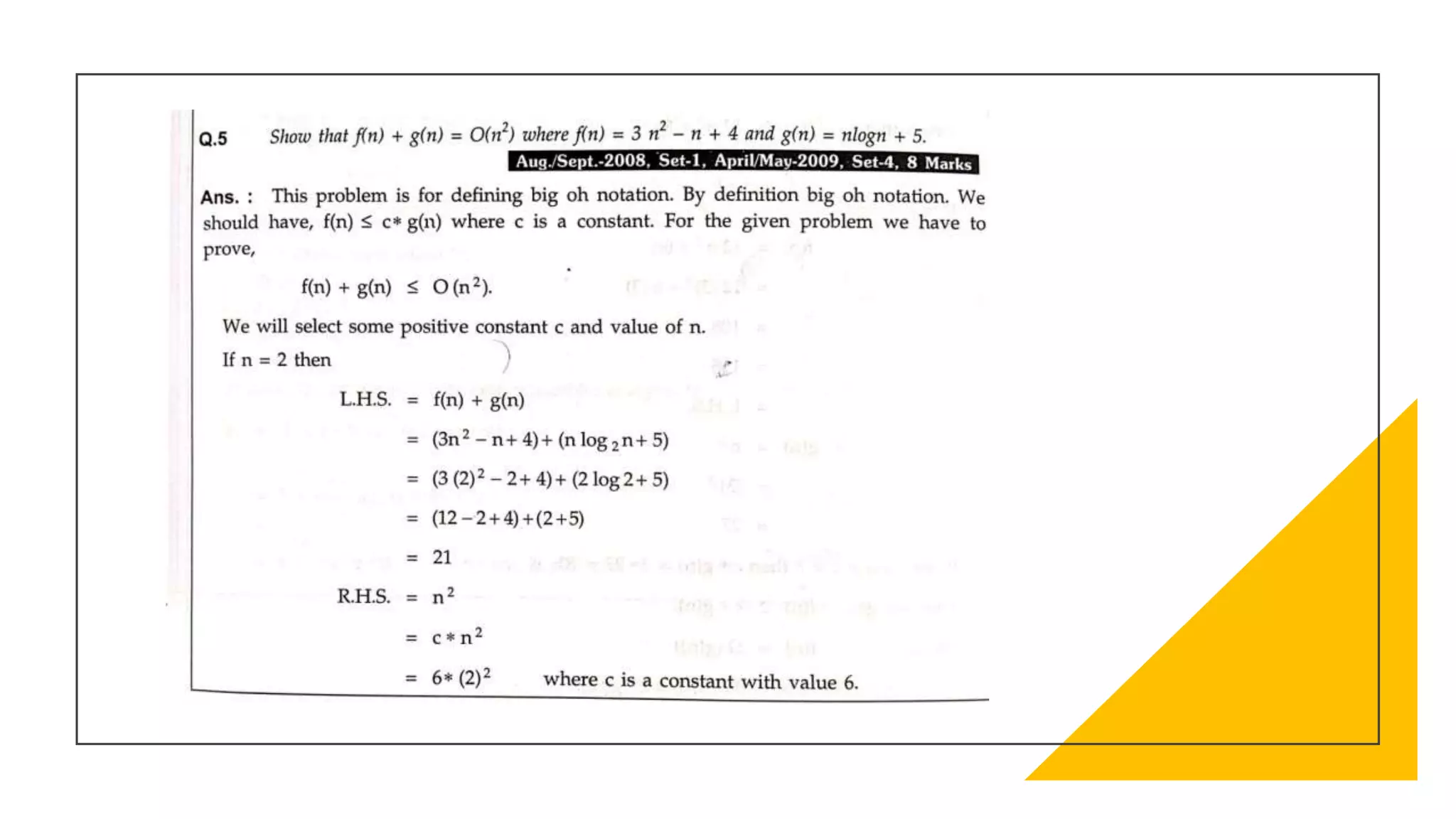

![Slide: Analysis of bubble sort](https://image.slidesharecdn.com/asymptoticnotations-200715064555/75/Asymptotic-notations-37-2048.jpg)

Analysis of bubble sort

The main body of the code for bubble sort looks something like this:

    for (i = n-1; i >= 1; i--)
        for (j = 1; j <= i; j++)
            if (a[j] > a[j+1])
                swap a[j] and a[j+1];
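A minimal C sketch of bubble sort follows, assuming a 0-based `int` array. The function name `bubble_sort` and the `comparisons` counter are hypothetical additions for illustration. Note that the outer loop has to keep running while `i >= 1`; a condition of `i < 1` would be false on the first check for any n >= 2, so the loop body would never execute.

```c
#include <assert.h>
#include <stddef.h>

/* Sorts a[0..n-1] in ascending order with bubble sort.
   Returns the number of element comparisons made. */
size_t bubble_sort(int *a, size_t n)
{
    assert(a != NULL);
    size_t comparisons = 0;
    if (n < 2) {
        return 0;                           /* nothing to sort */
    }
    for (size_t i = n - 1; i >= 1; i--) {   /* a[i+1..n-1] is already in place */
        for (size_t j = 0; j < i; j++) {
            comparisons++;
            if (a[j] > a[j + 1]) {          /* swap an out-of-order neighbour pair */
                int tmp = a[j];
                a[j] = a[j + 1];
                a[j + 1] = tmp;
            }
        }
    }
    return comparisons;                     /* (n-1) + (n-2) + ... + 1 */
}
```

The inner loop runs i times for i = n-1 down to 1, so the comparison count is (n-1) + (n-2) + ... + 1 = n(n-1)/2. Dropping the constant factor and the lower-order term leaves O(n^2).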