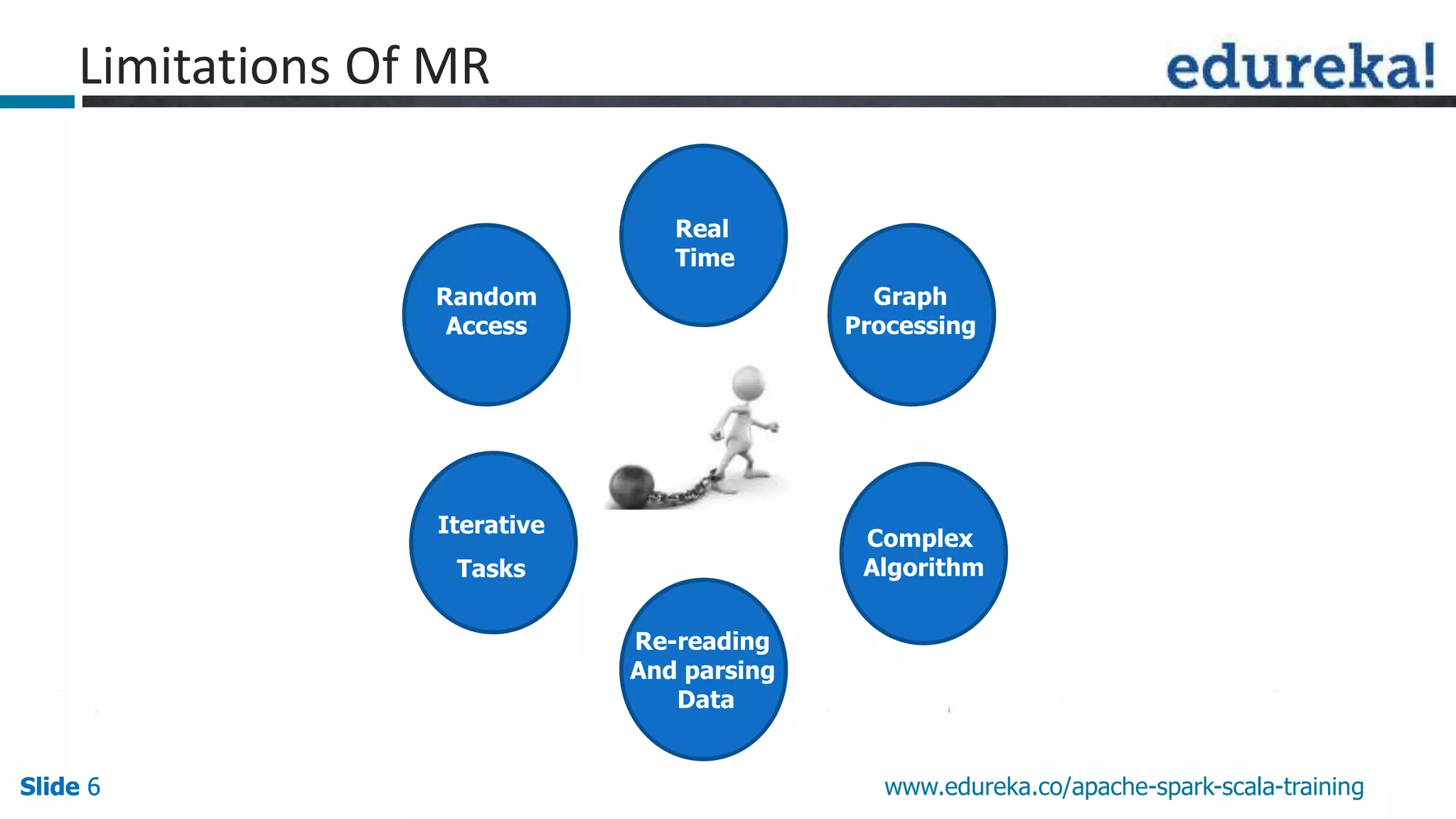

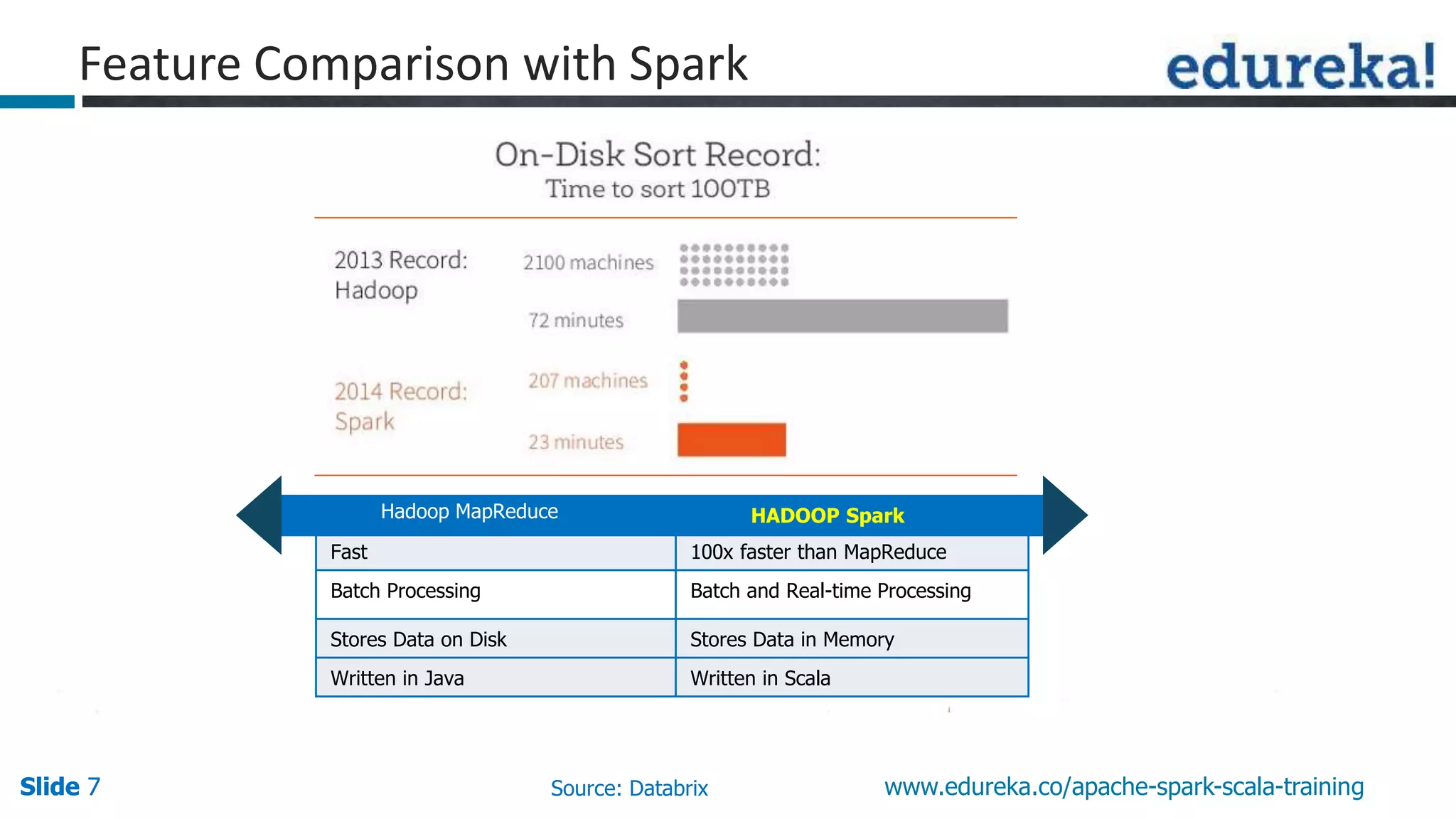

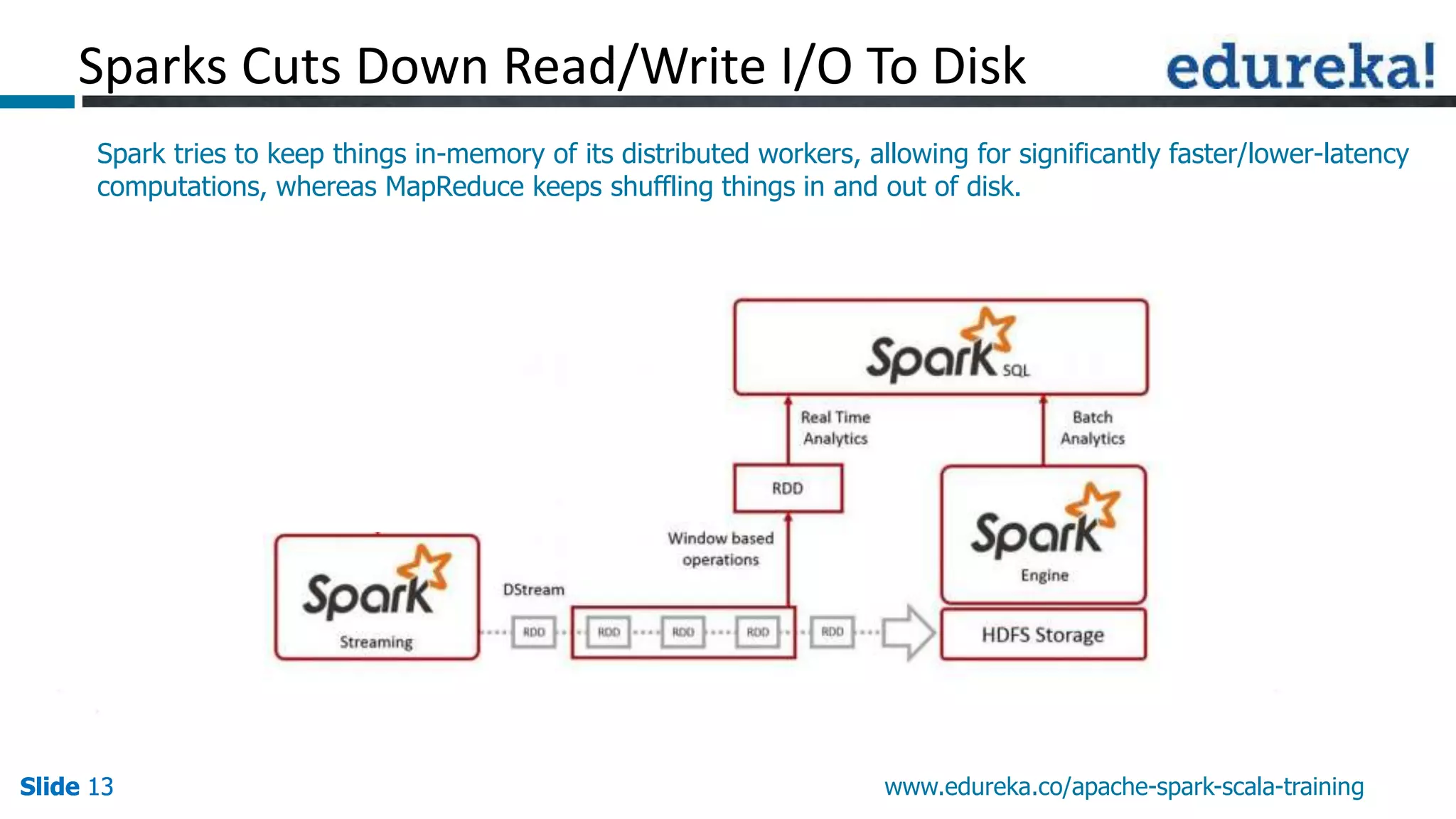

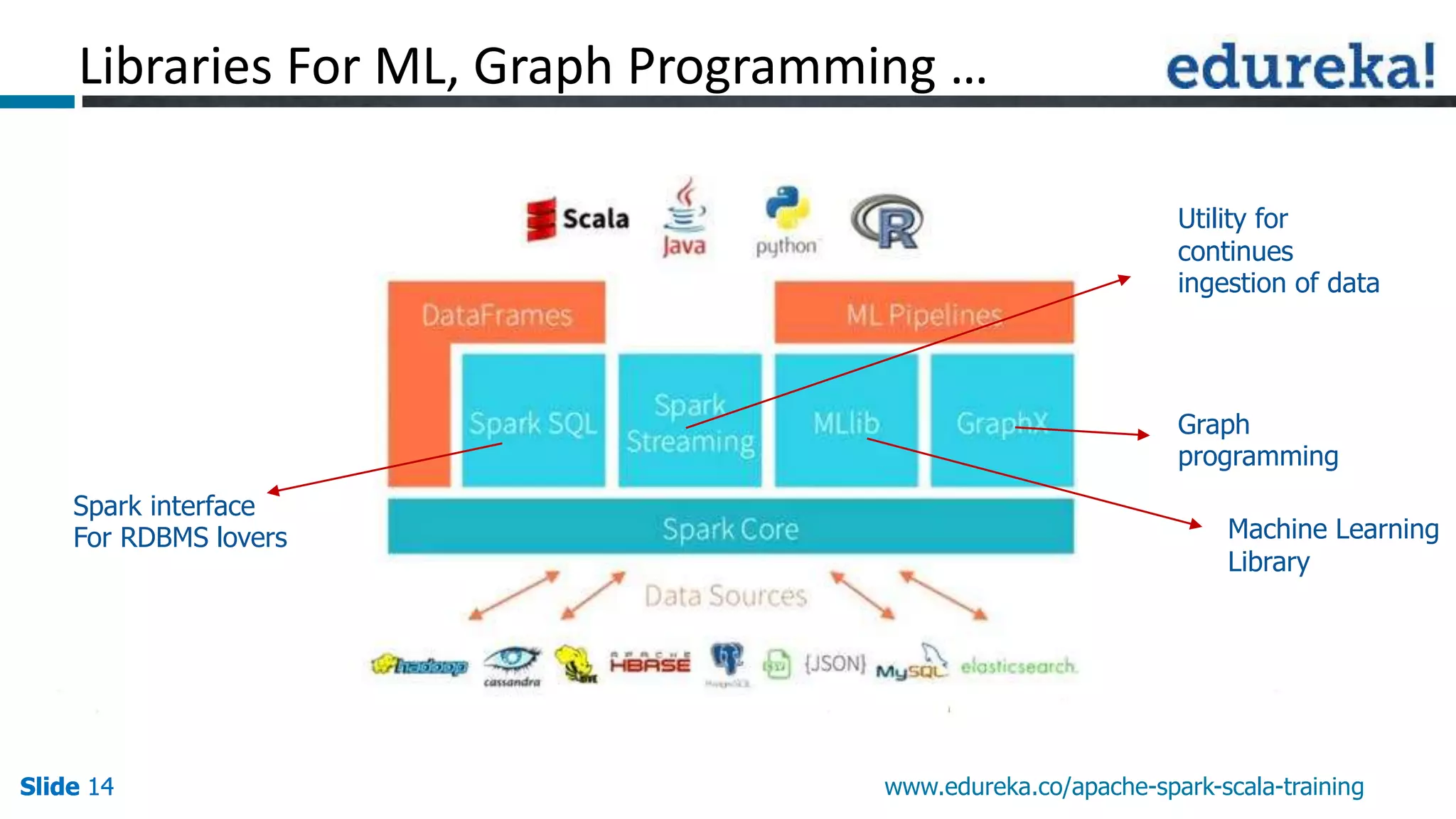

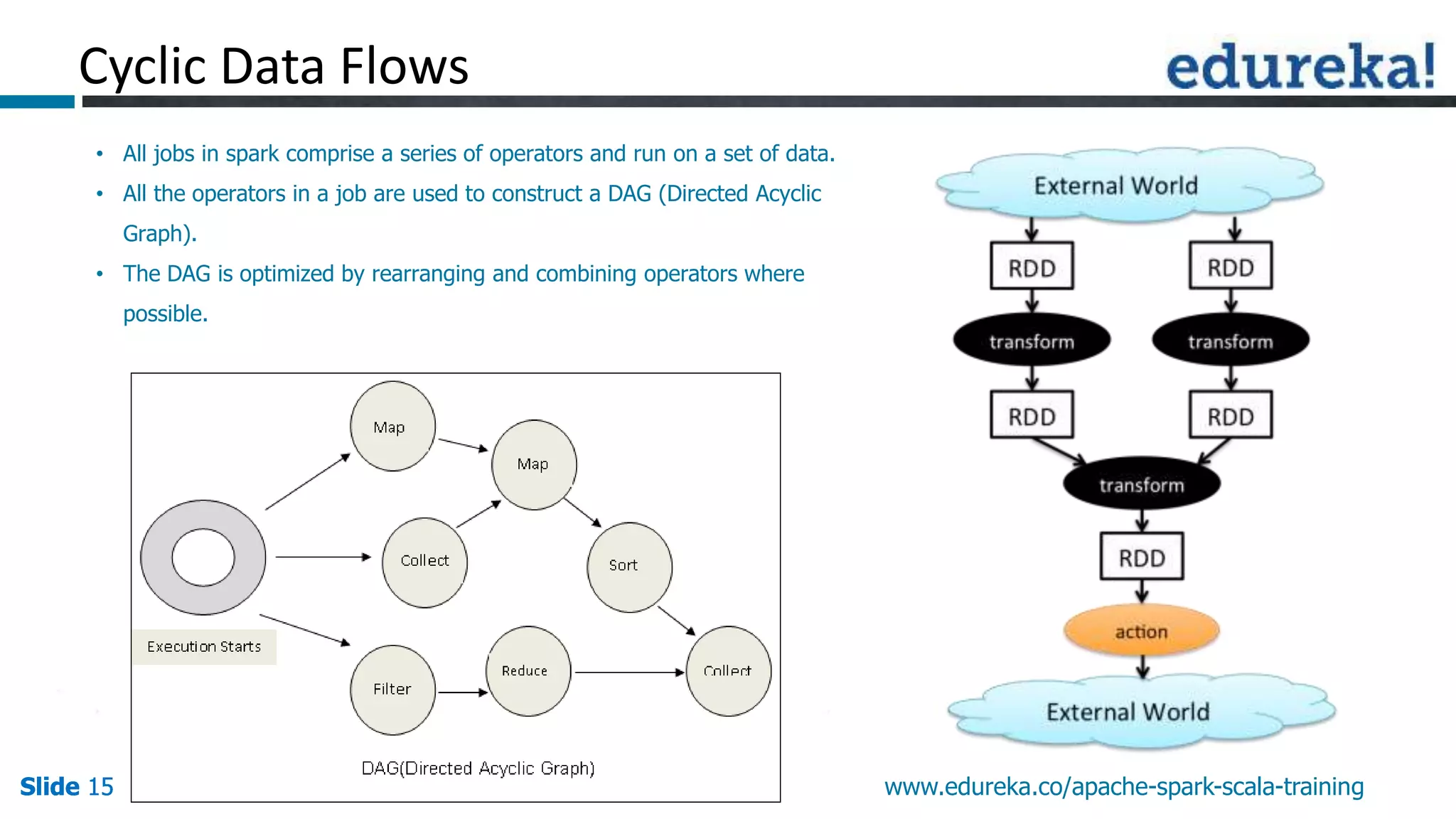

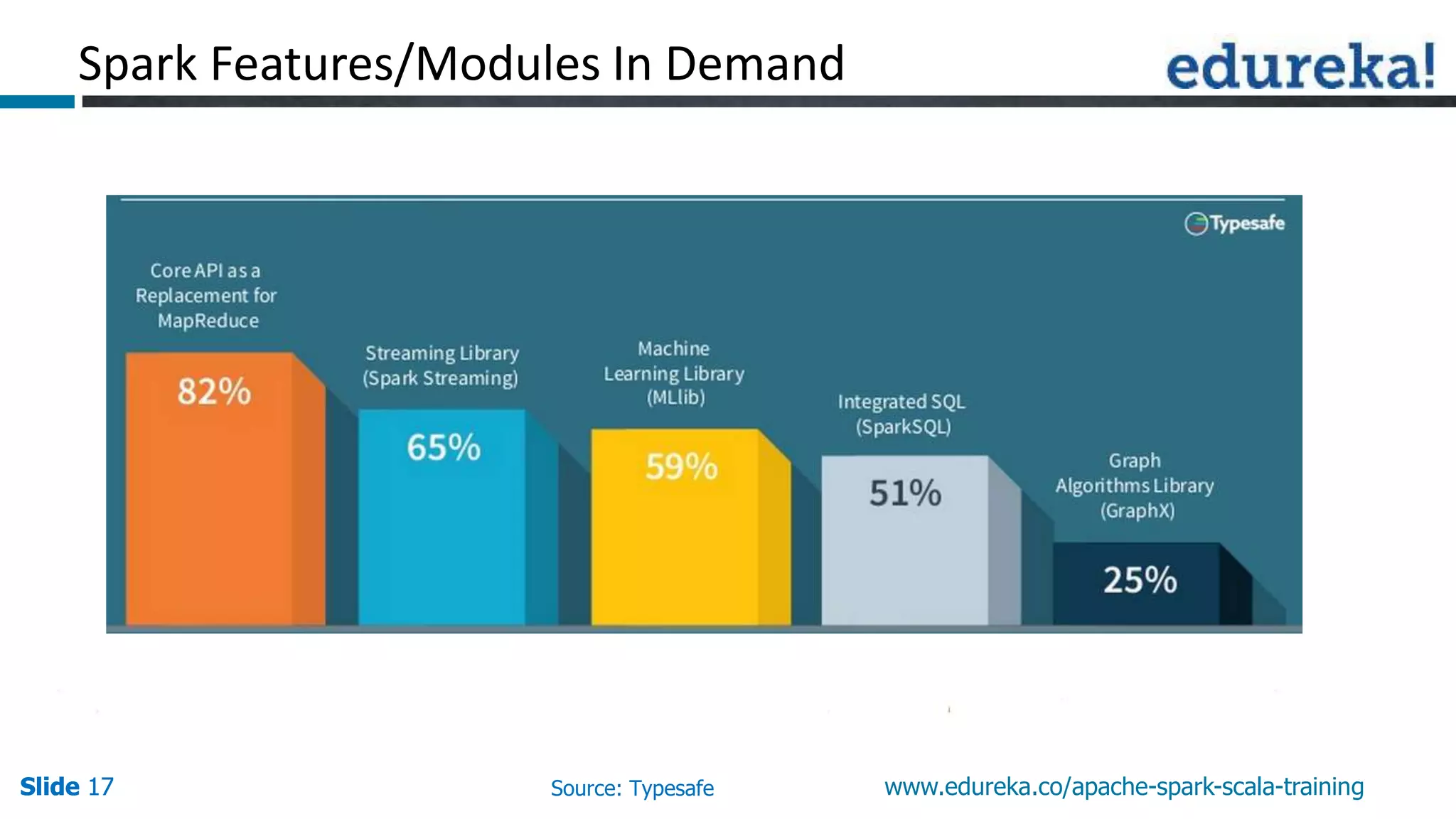

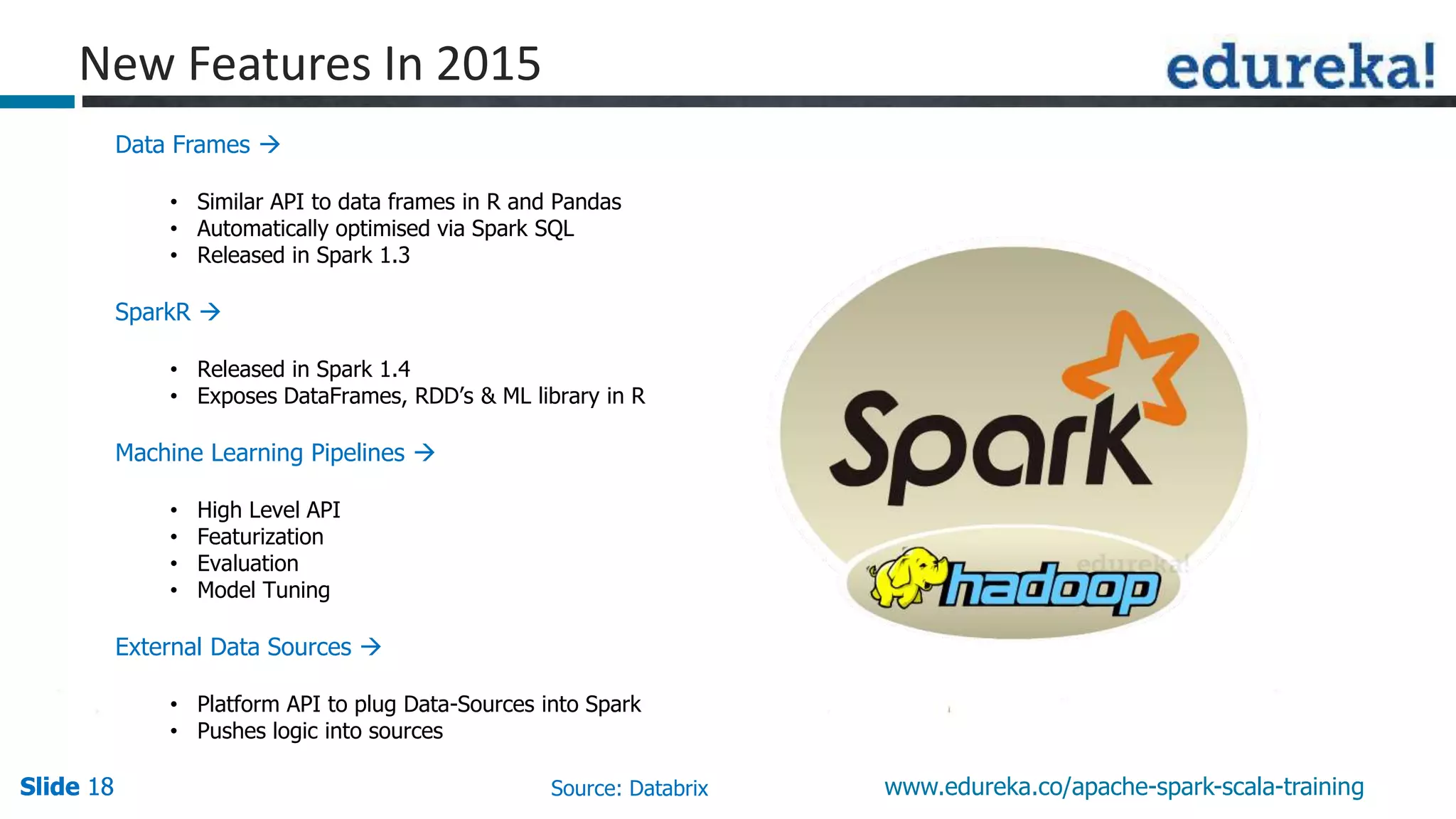

This document discusses how Apache Spark overcomes the limitations of Hadoop MapReduce. It explains that Spark can run up to 100 times faster than MapReduce because it keeps intermediate data in memory between jobs rather than writing it to disk. Spark also goes beyond batch processing: its libraries support machine learning (MLlib), streaming (Spark Streaming), and graph processing (GraphX). Spark constructs each job as a directed acyclic graph (DAG) of operators, which it can rearrange and optimize to reduce reads from and writes to disk.
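The effect of keeping data in memory can be illustrated with a small toy model. The sketch below is plain Python, not Spark's API: `LazyDataset`, `CountingSource`, and `run` are hypothetical names invented for illustration. It assumes a lazily evaluated chain of operators and counts how often the underlying source is read, with and without caching an intermediate result. It does not model operator reordering or any other optimization Spark performs.

```python
from typing import Callable, List, Optional, Tuple


class CountingSource:
    """Hypothetical data source that counts how often it is read."""

    def __init__(self, data: List[int]) -> None:
        self.data = list(data)
        self.reads = 0

    def read(self) -> List[int]:
        self.reads += 1  # stands in for an expensive disk read
        return list(self.data)


class LazyDataset:
    """Toy lazily evaluated dataset; an illustration, not Spark's API."""

    def __init__(self, compute: Callable[[], List[int]]) -> None:
        self._compute = compute
        self._cache_enabled = False
        self._cached: Optional[List[int]] = None

    def map(self, fn: Callable[[int], int]) -> "LazyDataset":
        # Builds a new node in the operator graph; nothing runs yet.
        return LazyDataset(lambda: [fn(x) for x in self.collect()])

    def filter(self, pred: Callable[[int], bool]) -> "LazyDataset":
        return LazyDataset(lambda: [x for x in self.collect() if pred(x)])

    def cache(self) -> "LazyDataset":
        # Ask this node to keep its result in memory once computed.
        self._cache_enabled = True
        return self

    def collect(self) -> List[int]:
        # Evaluation happens only here, walking back through parent nodes.
        if self._cached is not None:
            return self._cached
        result = self._compute()
        if self._cache_enabled:
            self._cached = result
        return result


def run(use_cache: bool) -> Tuple[int, List[int], int]:
    source = CountingSource(list(range(10)))
    squares = LazyDataset(source.read).map(lambda x: x * x)
    if use_cache:
        squares.cache()
    # Two separate computations reuse the same intermediate dataset.
    evens = squares.filter(lambda x: x % 2 == 0).collect()
    total = sum(squares.collect())
    return source.reads, evens, total


if __name__ == "__main__":
    print(run(use_cache=False))
    print(run(use_cache=True))
```

In this toy model the uncached run reads the source once per downstream computation, while the cached run reads it once and reuses the in-memory result. That is the same trade-off the summary describes, at a much smaller scale.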