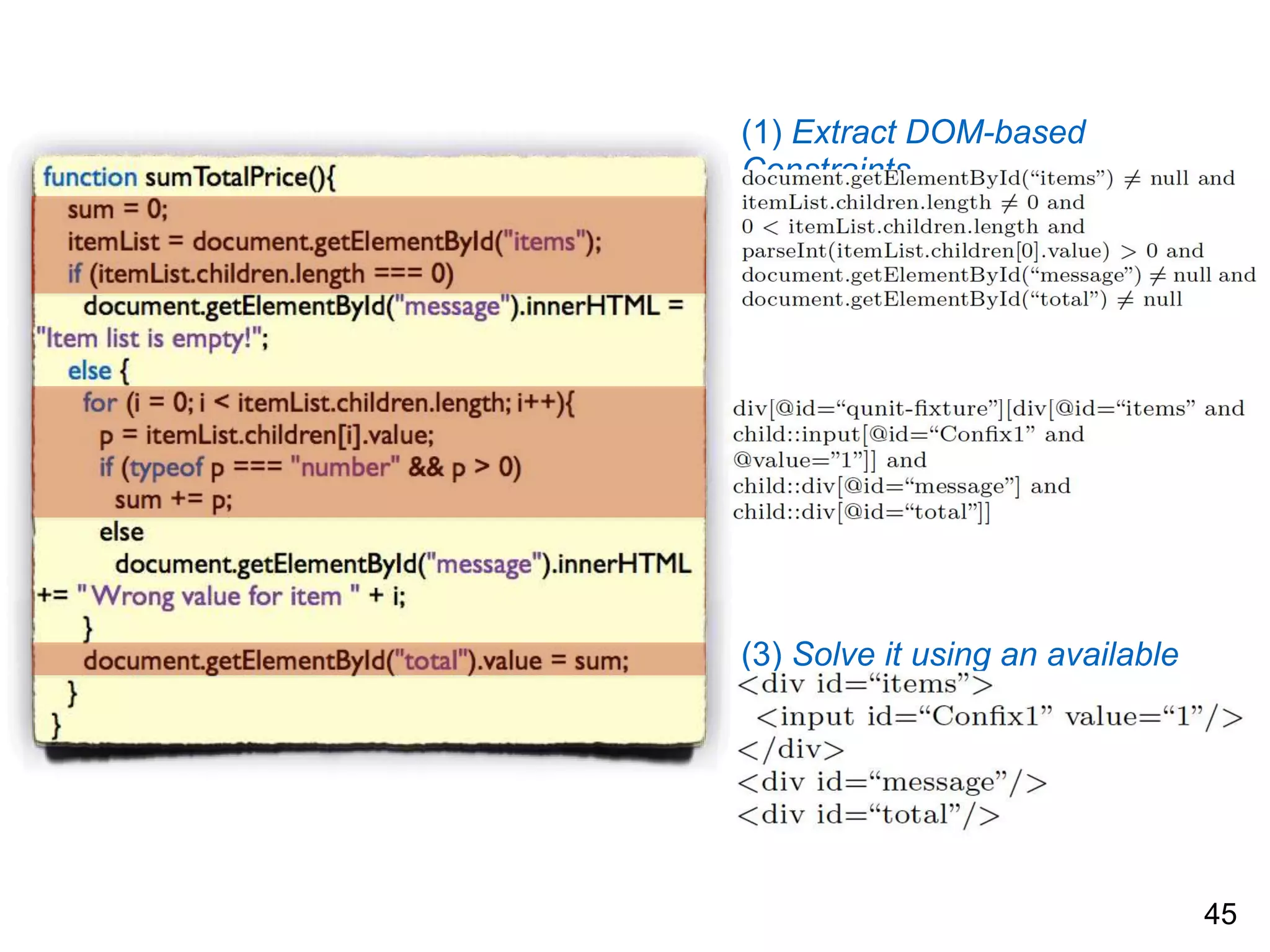

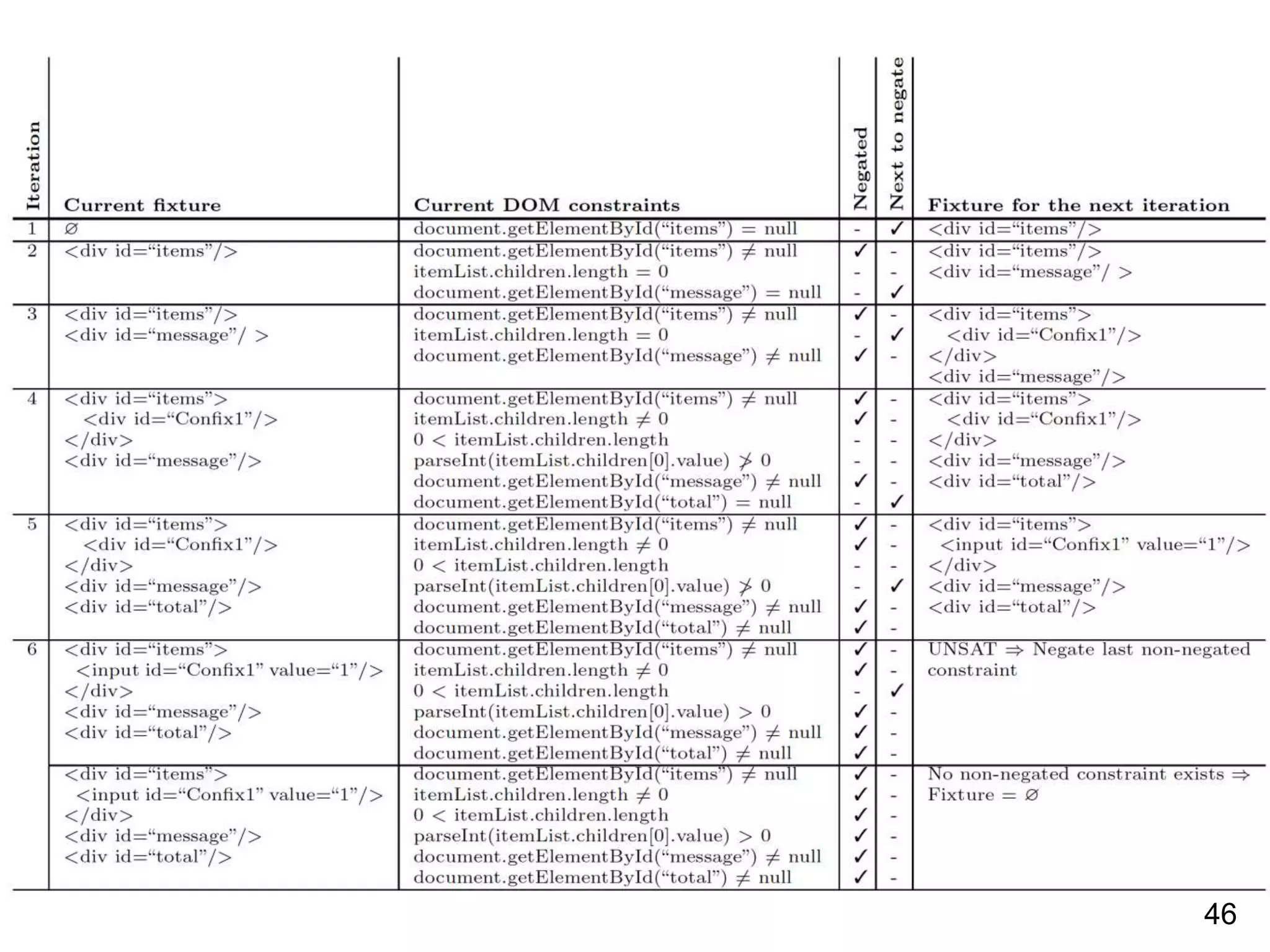

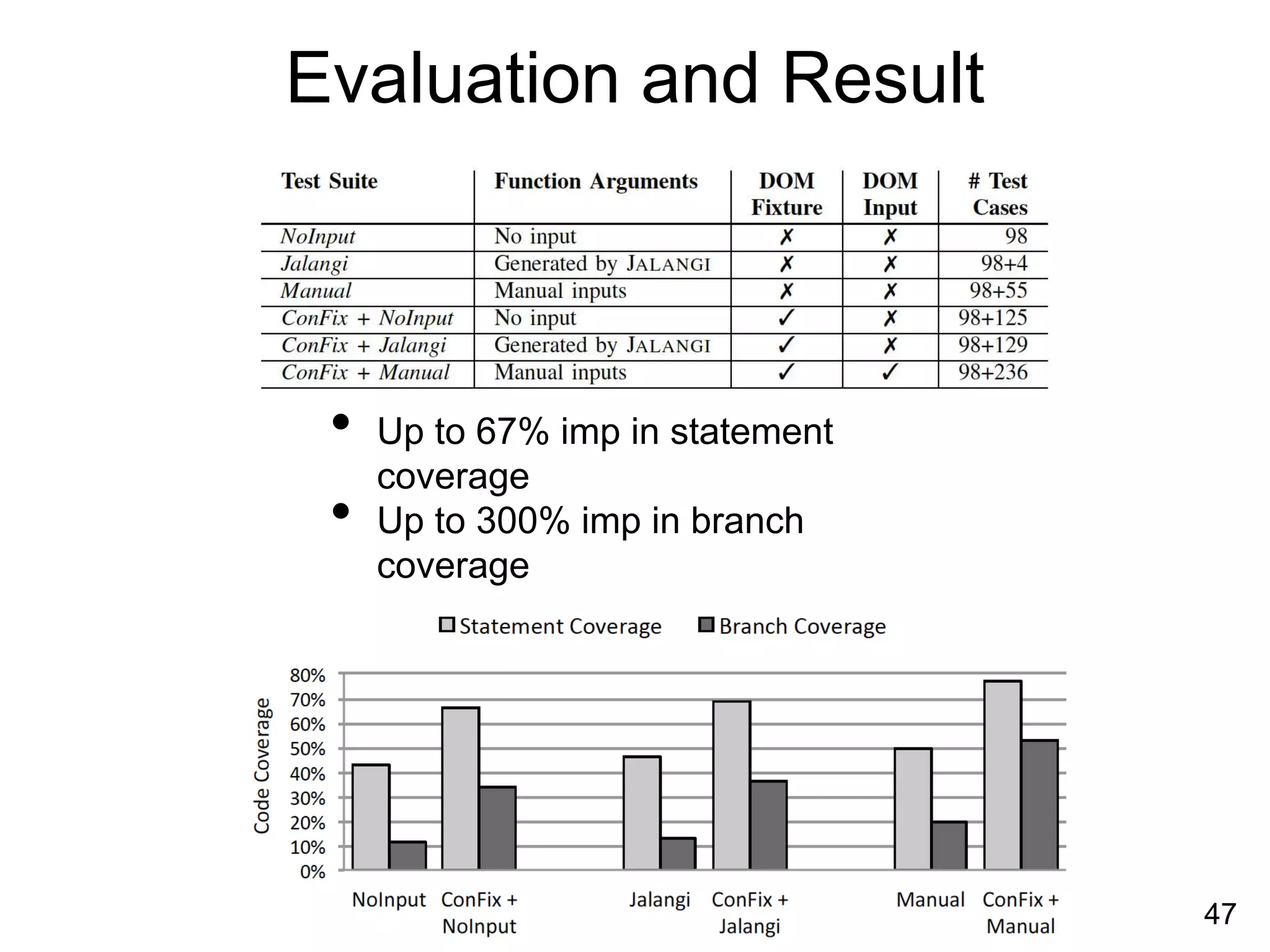

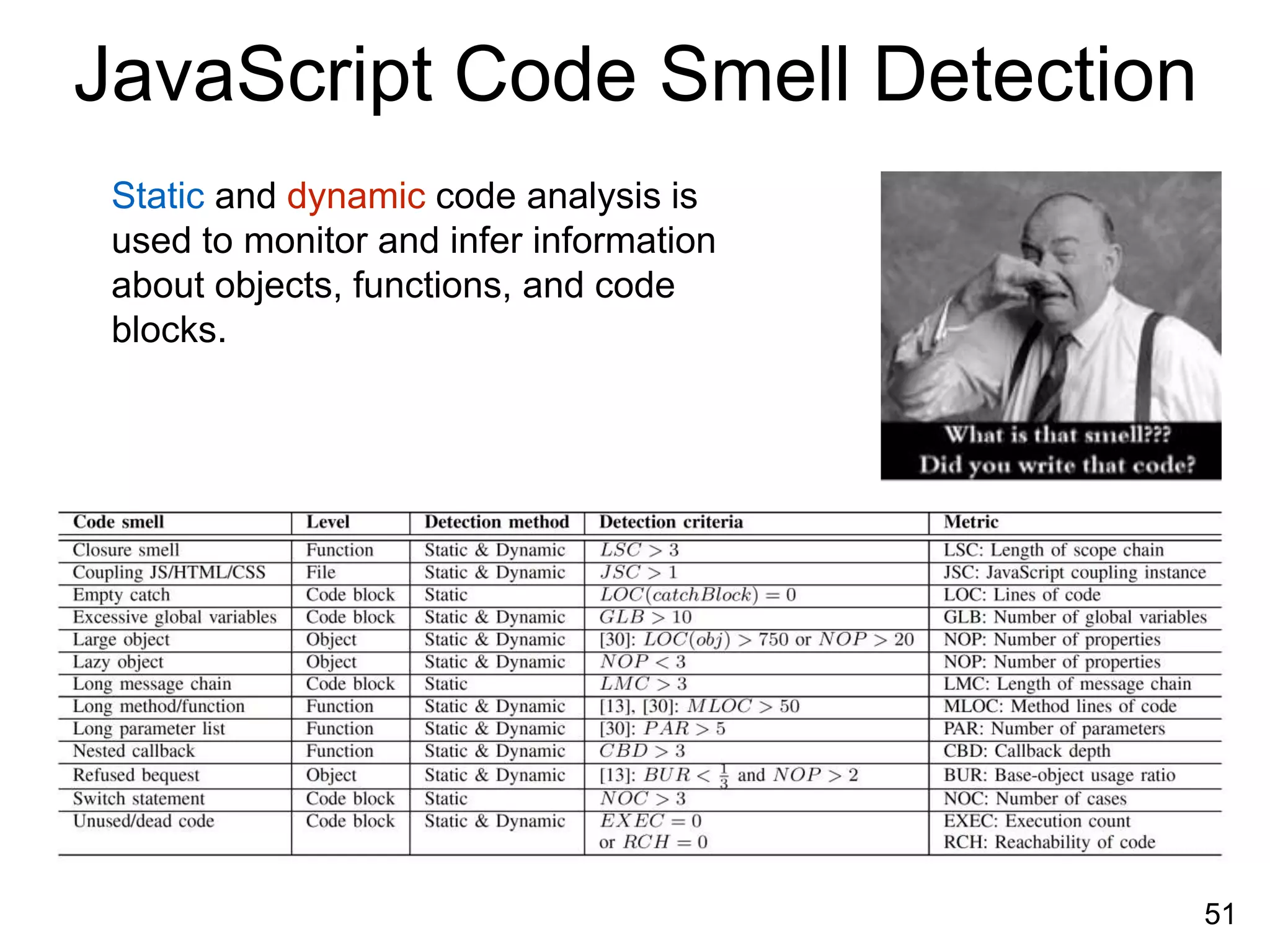

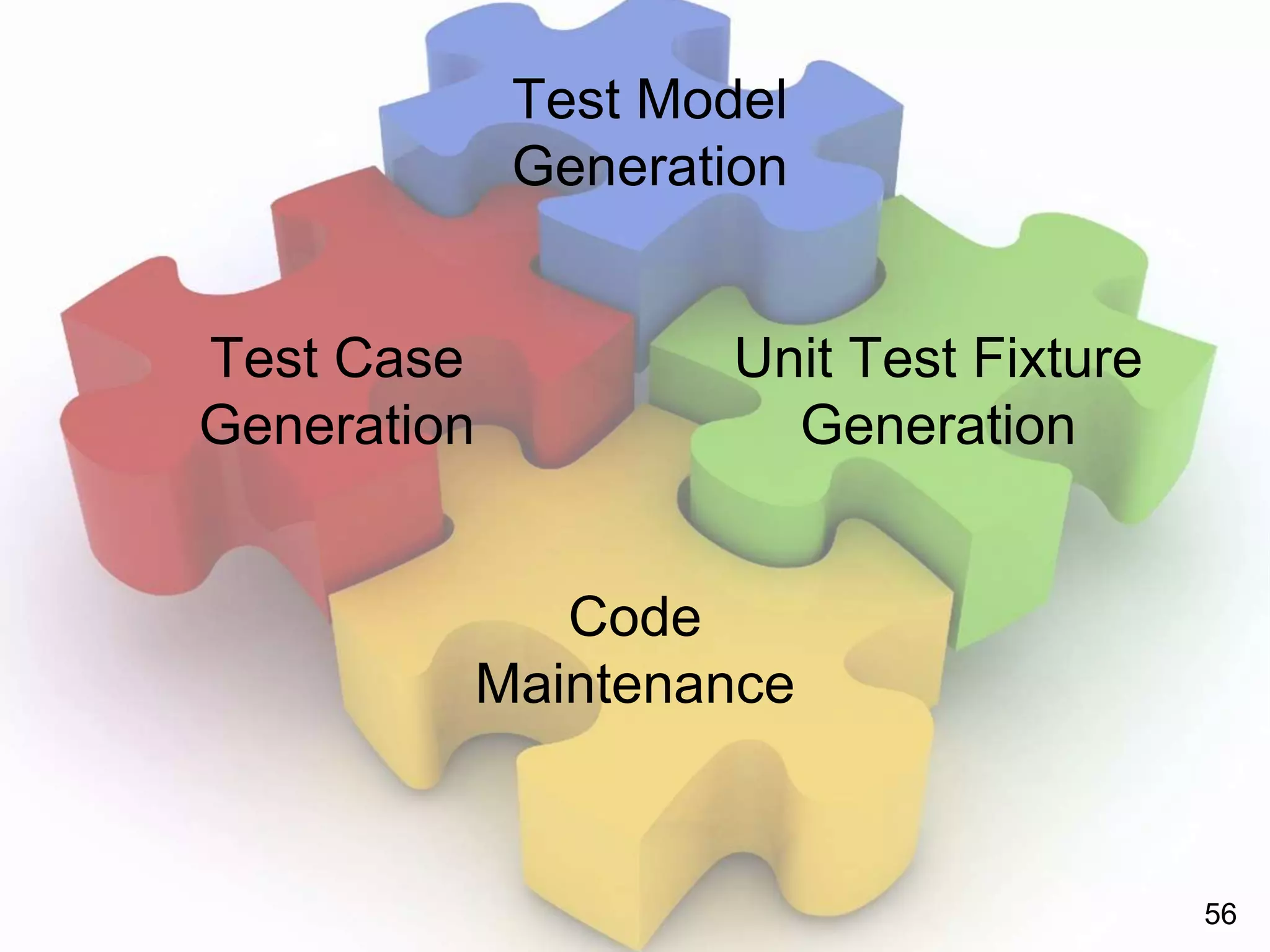

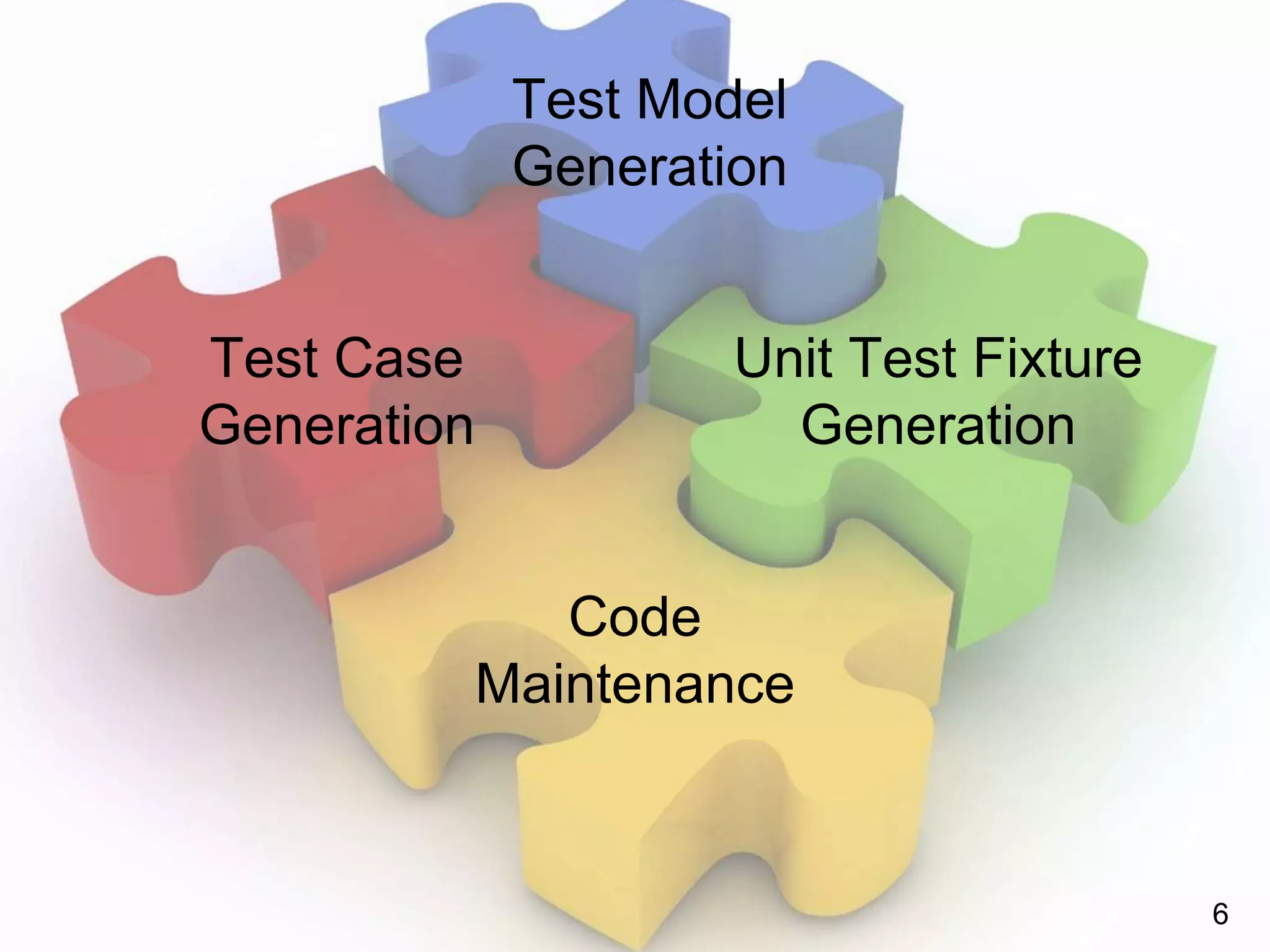

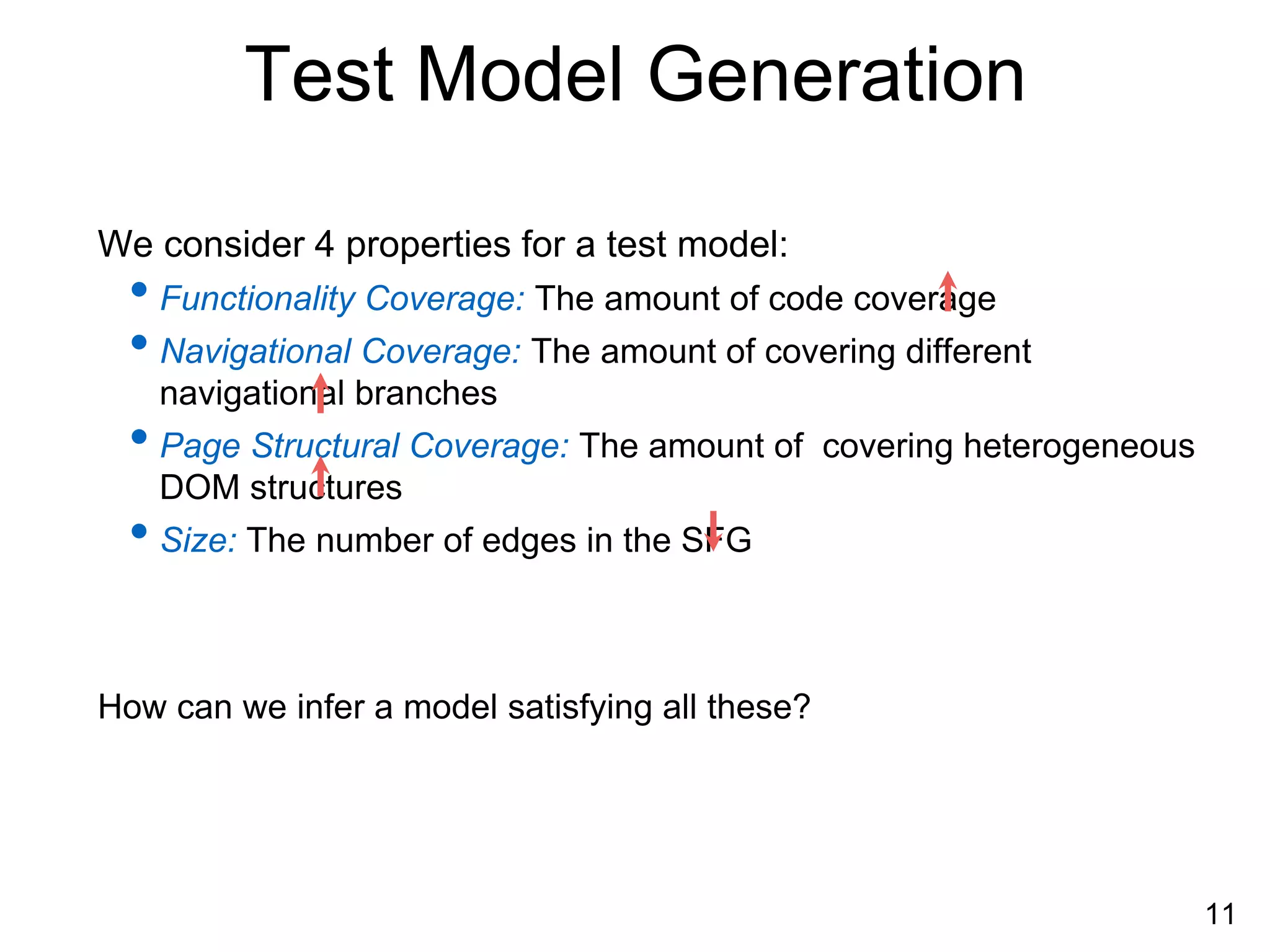

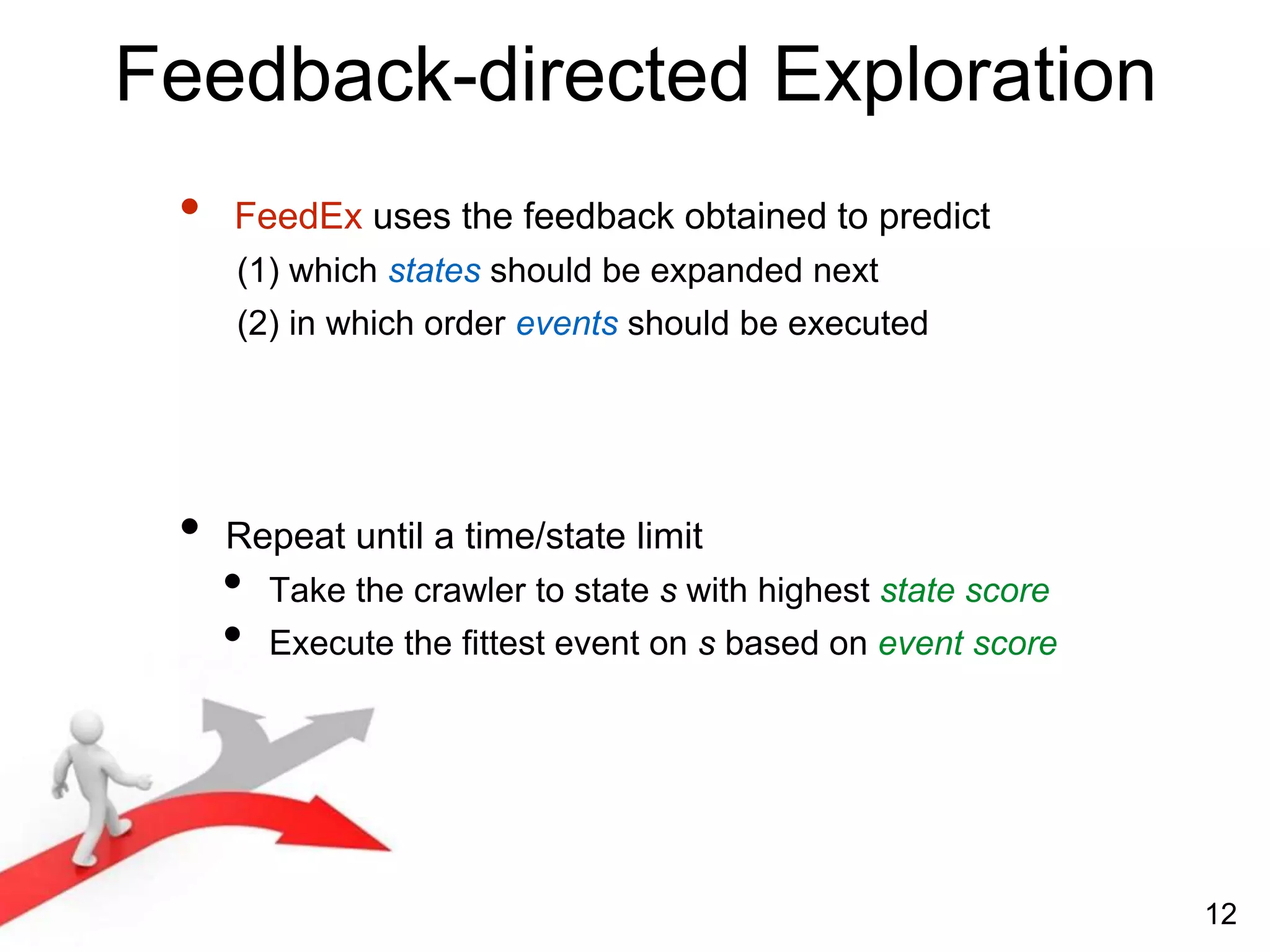

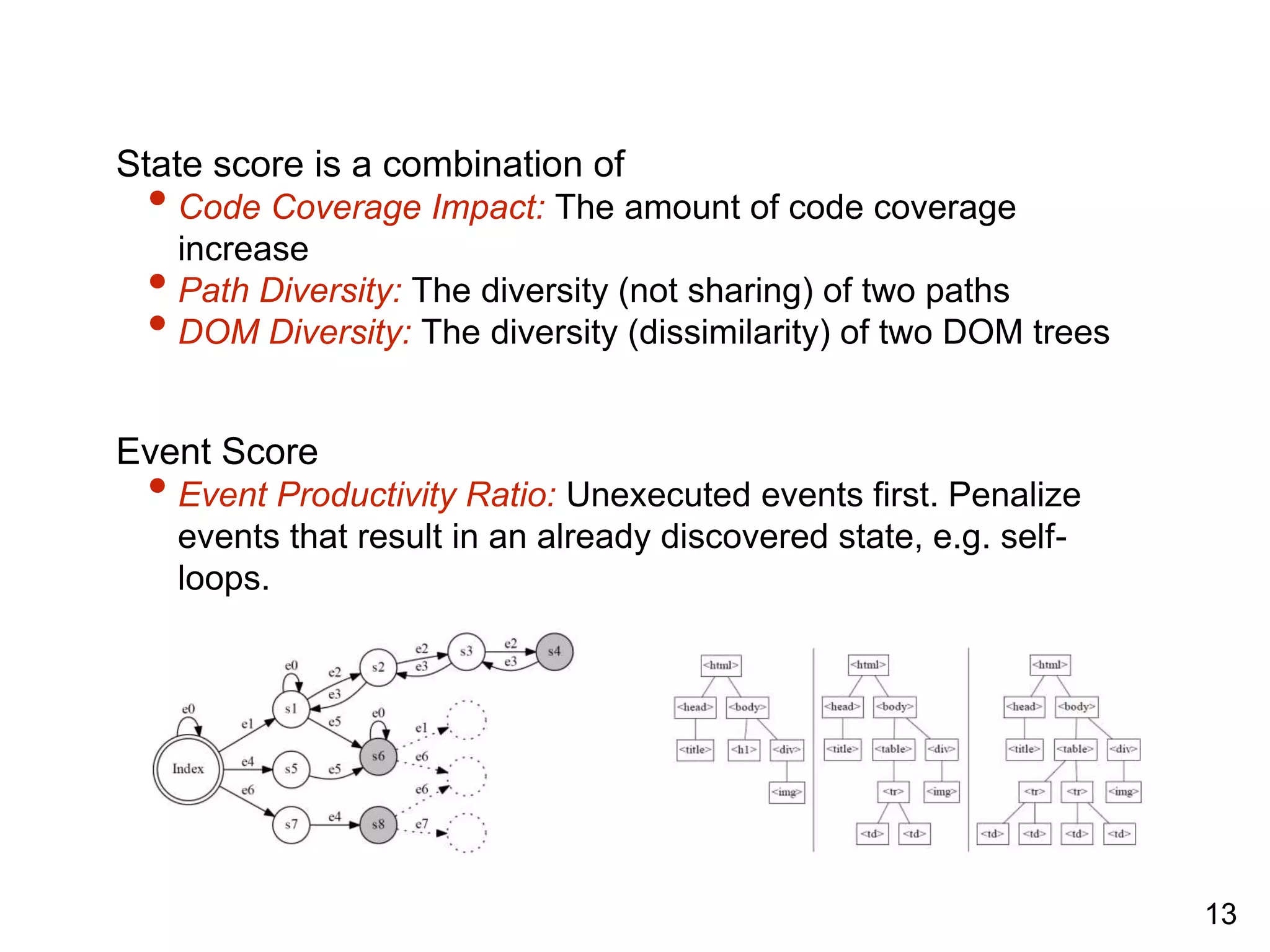

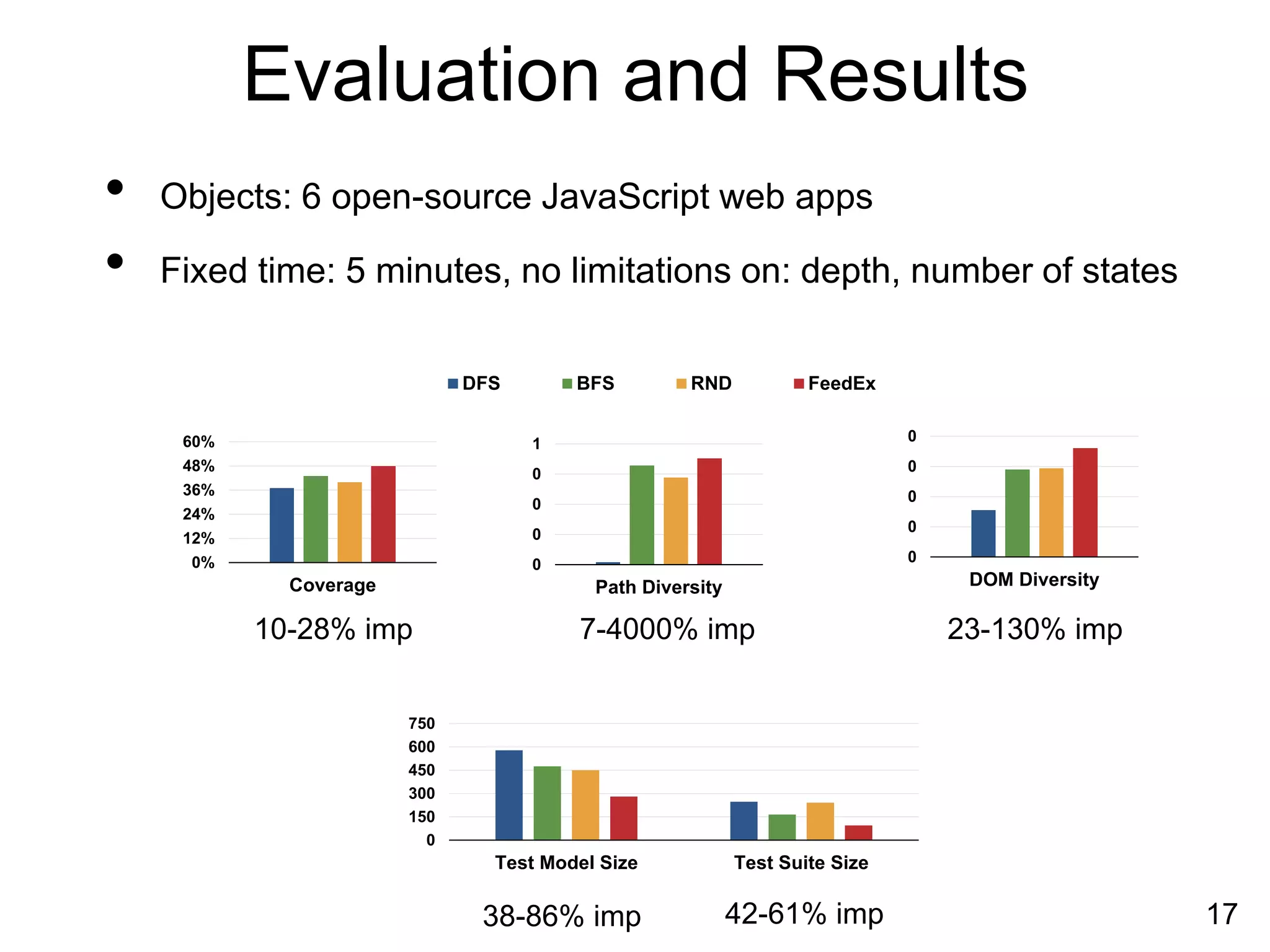

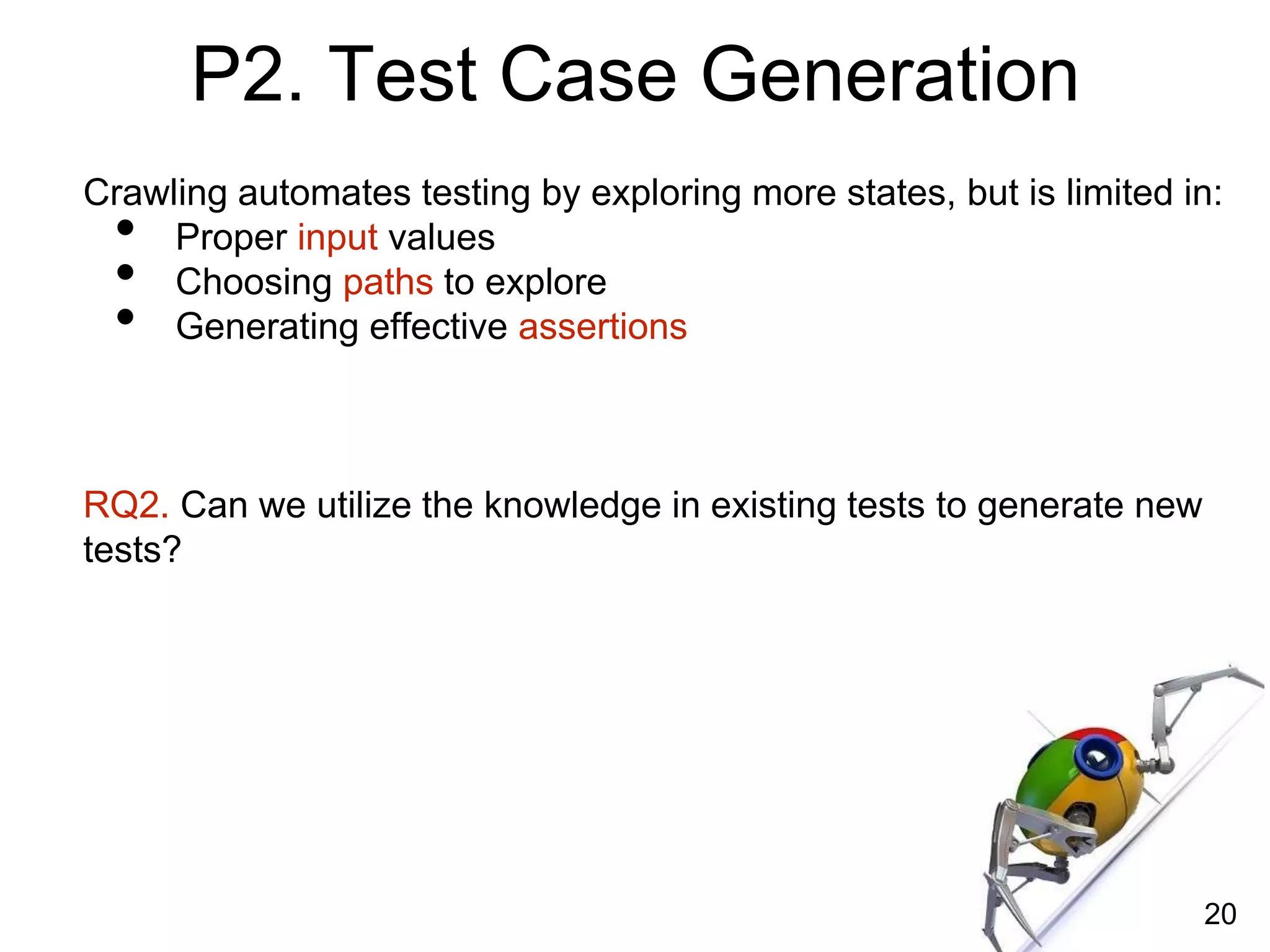

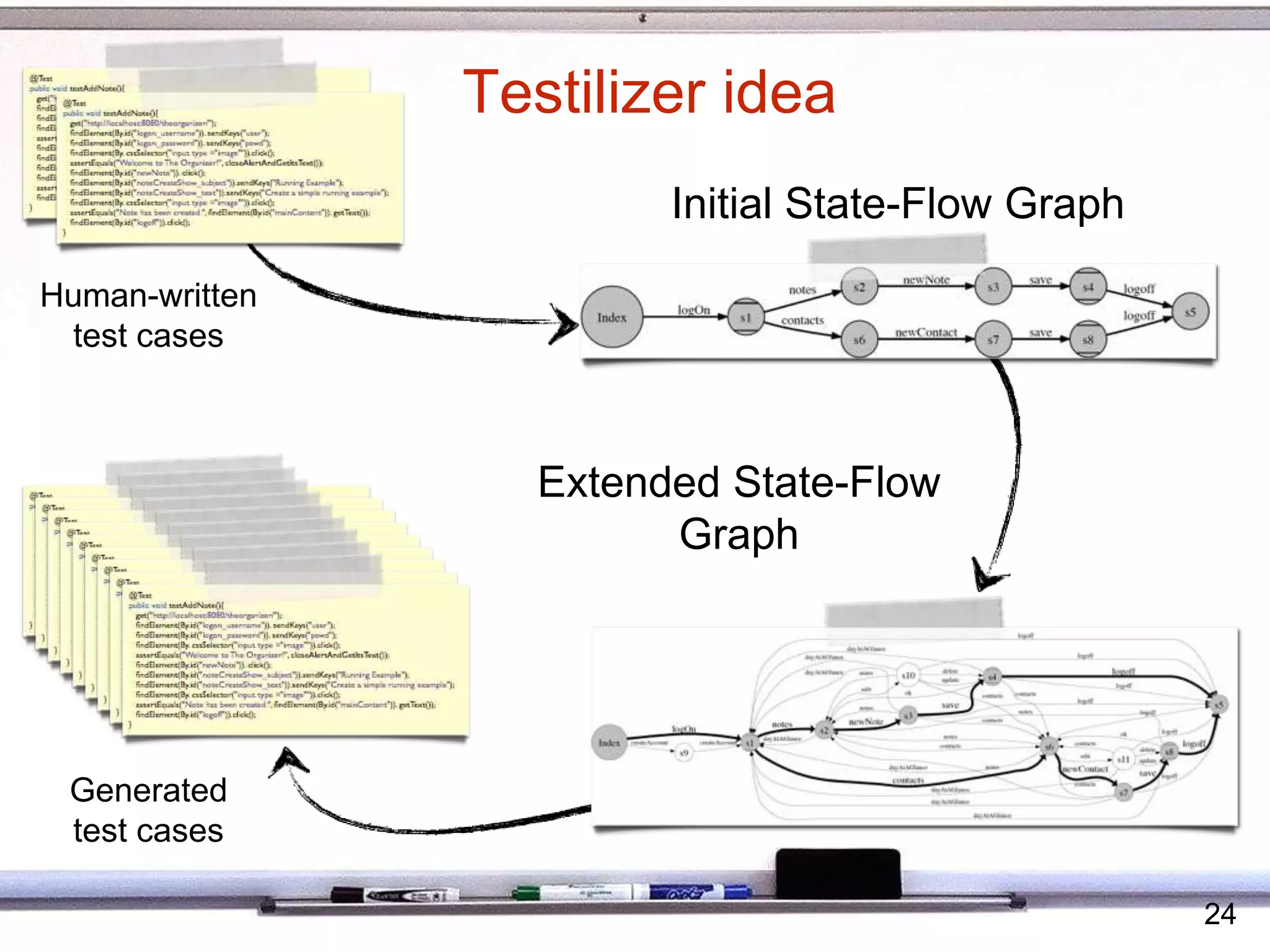

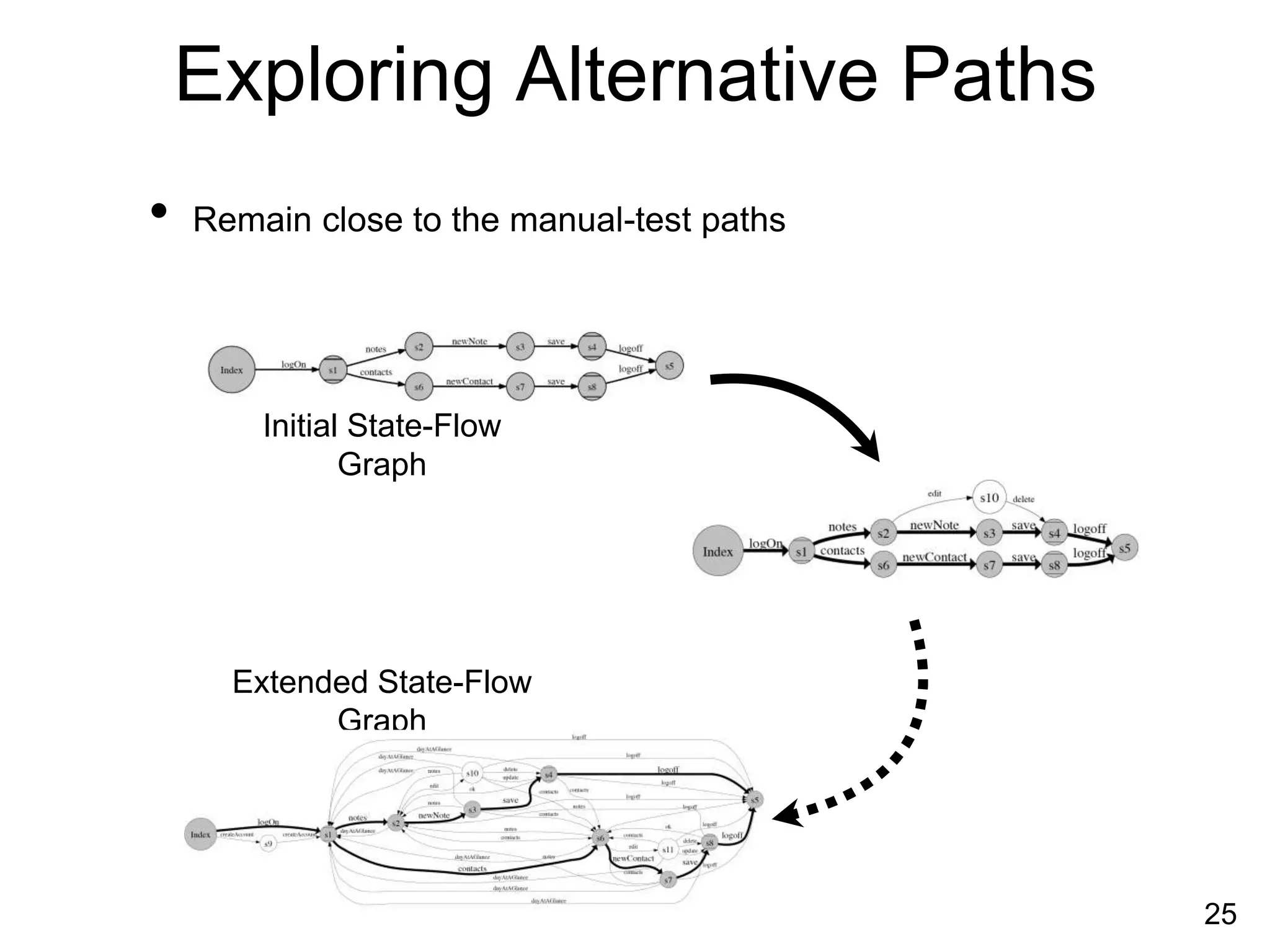

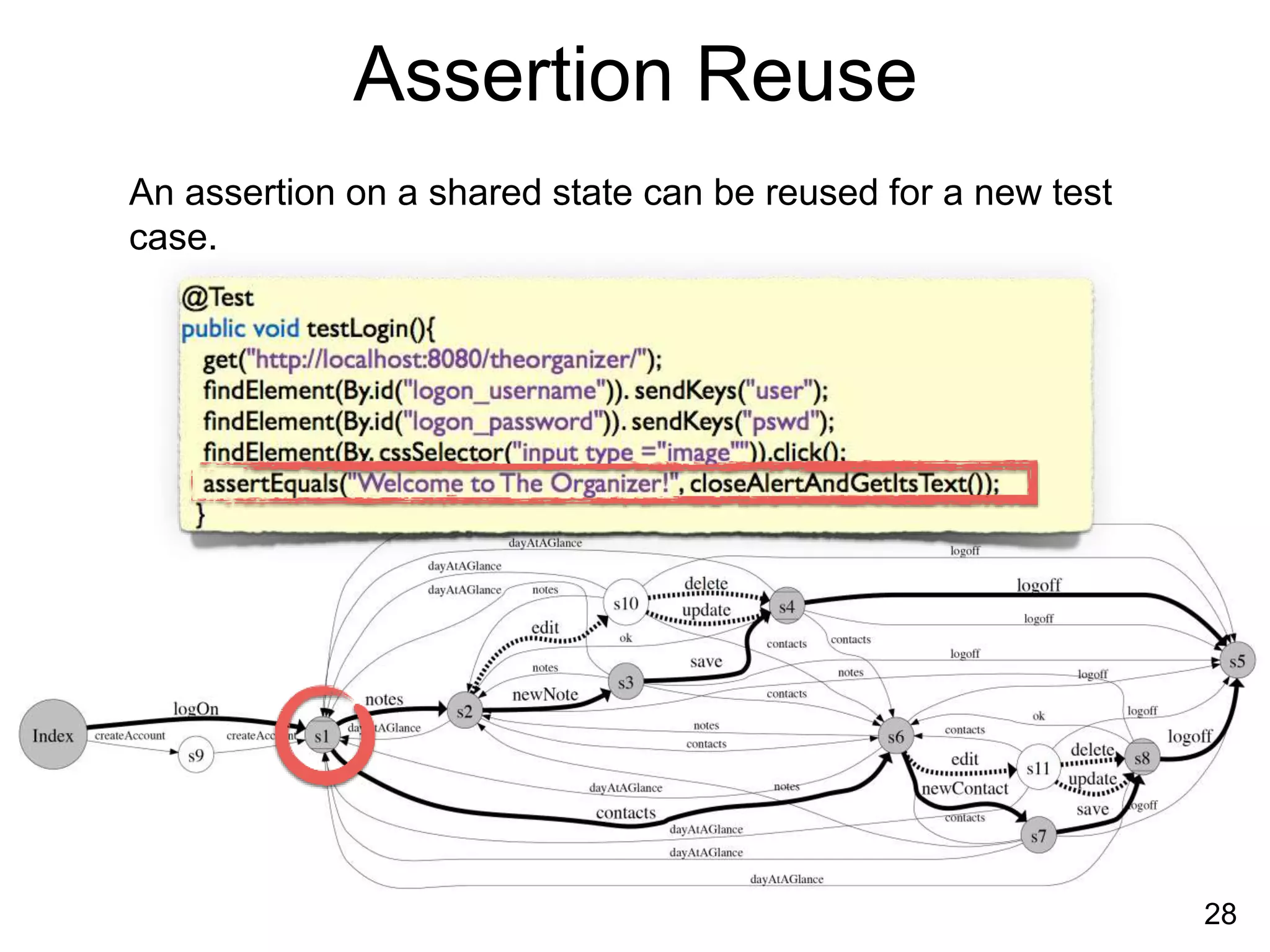

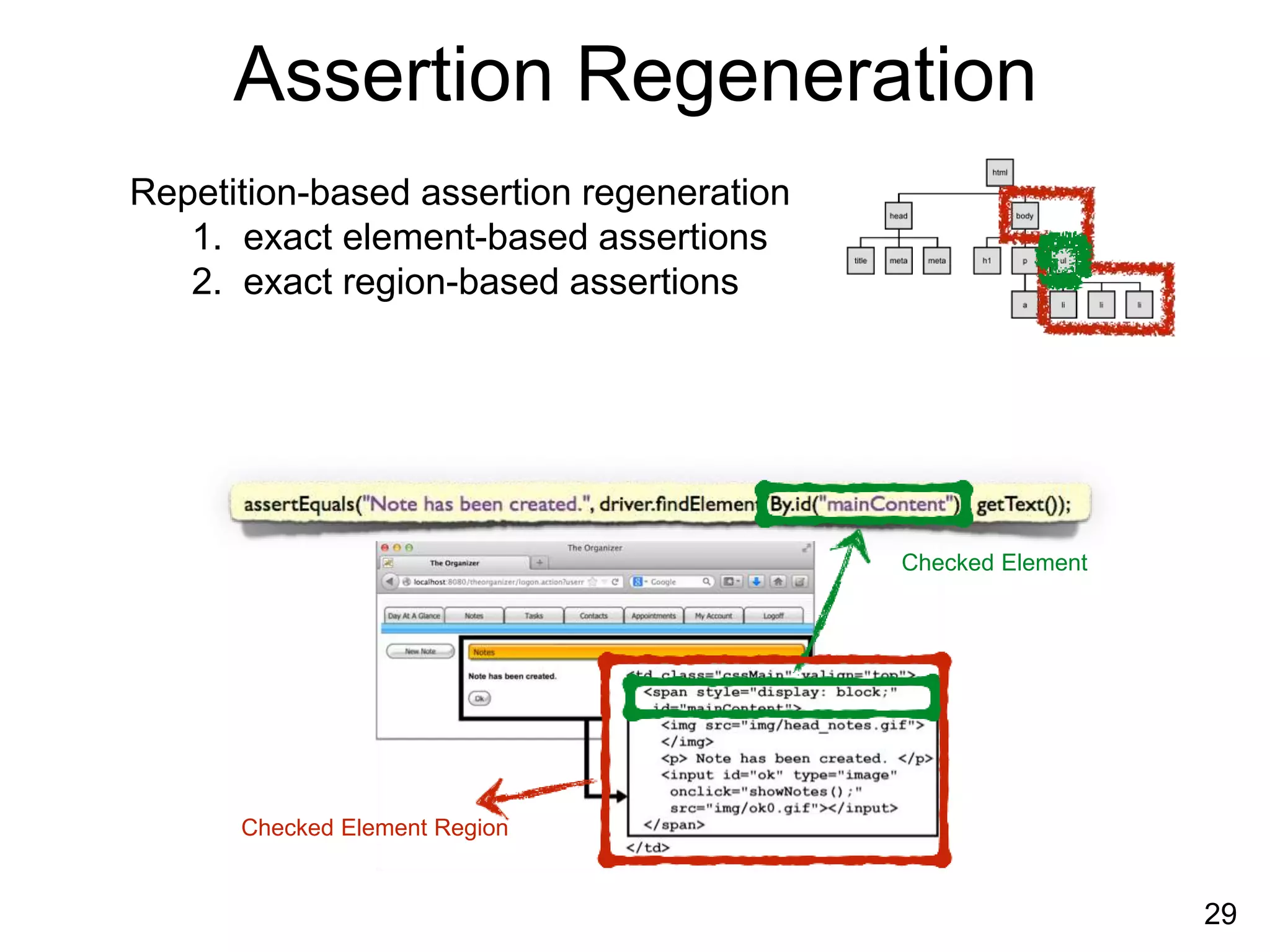

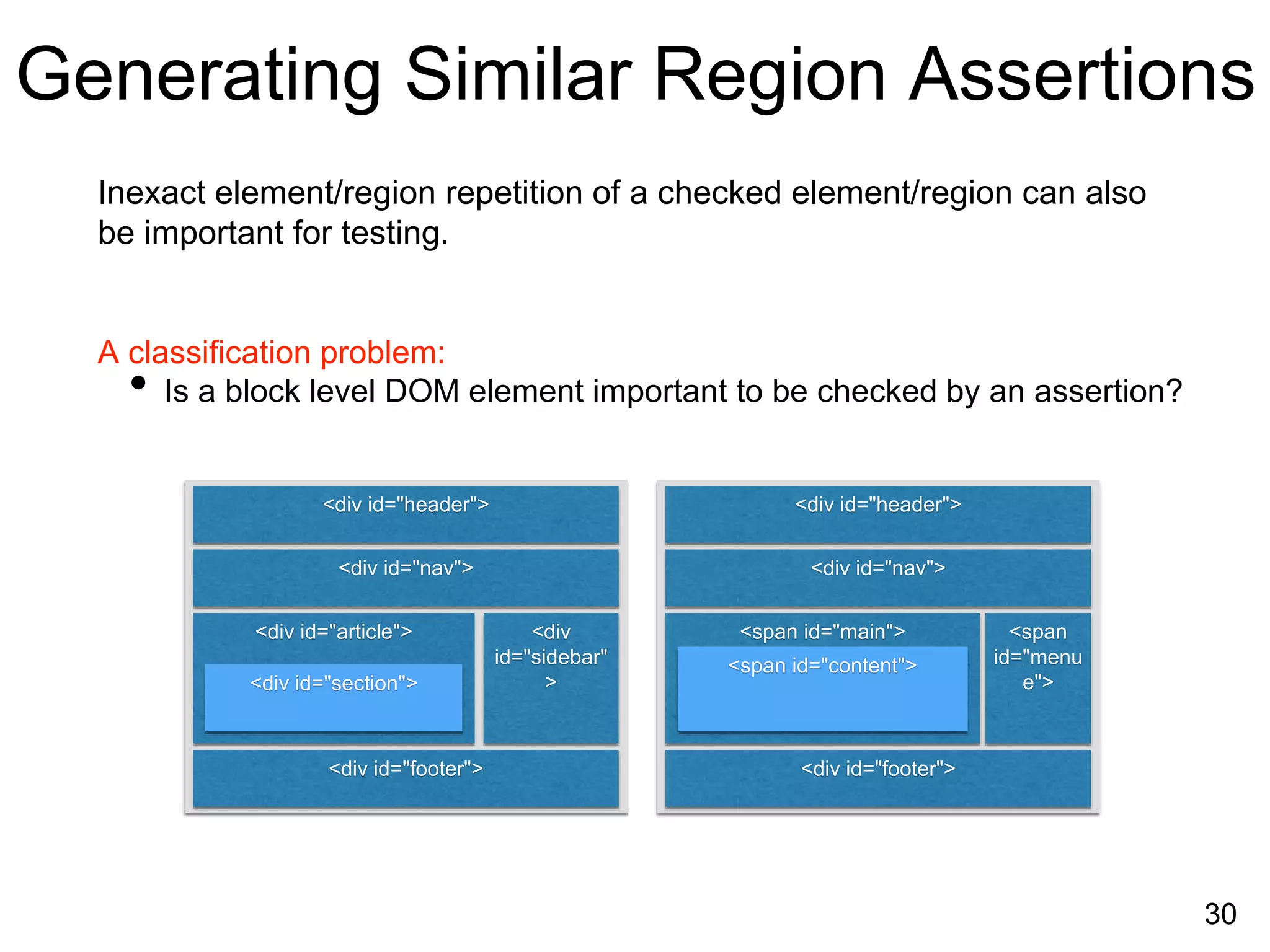

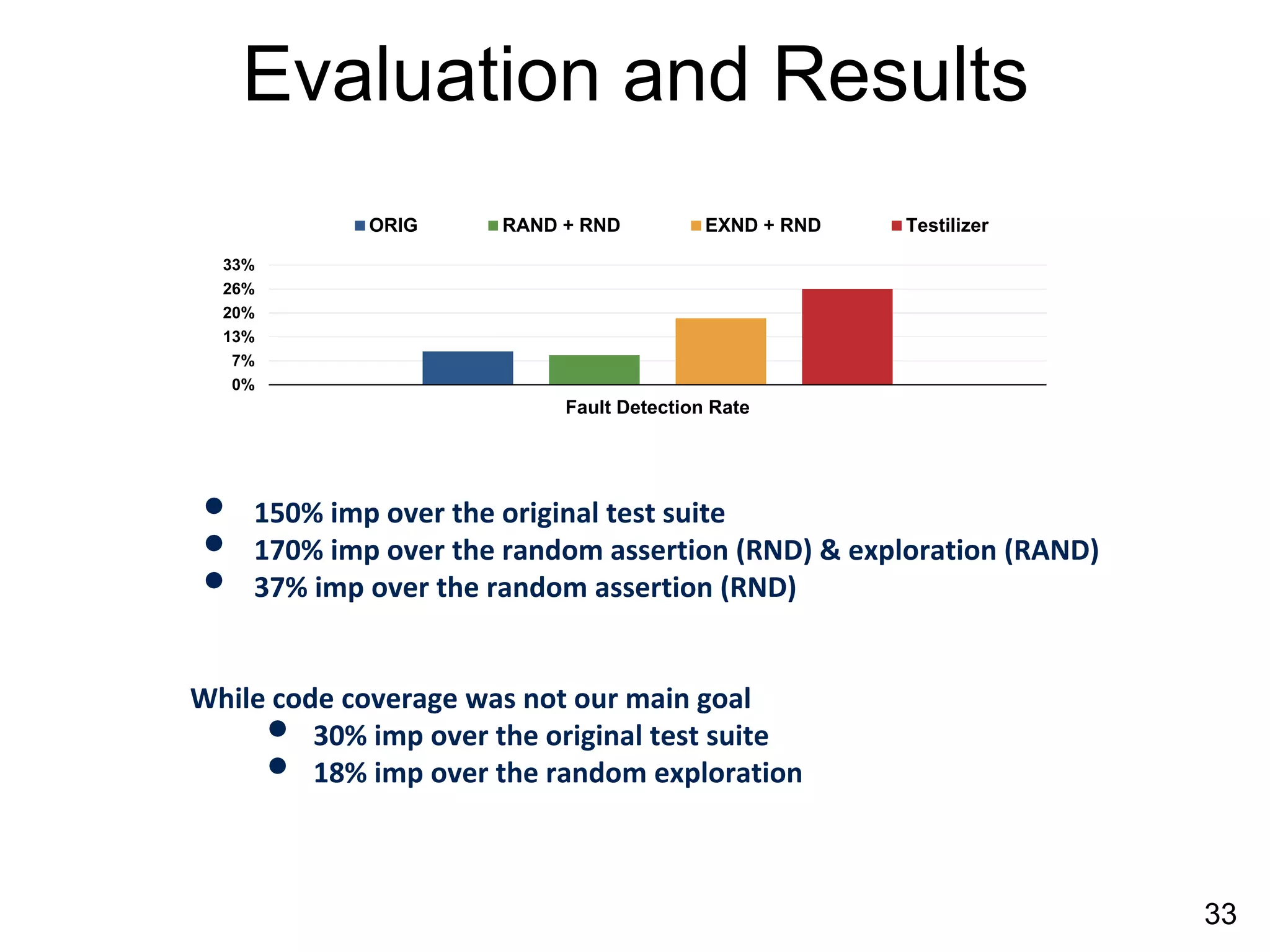

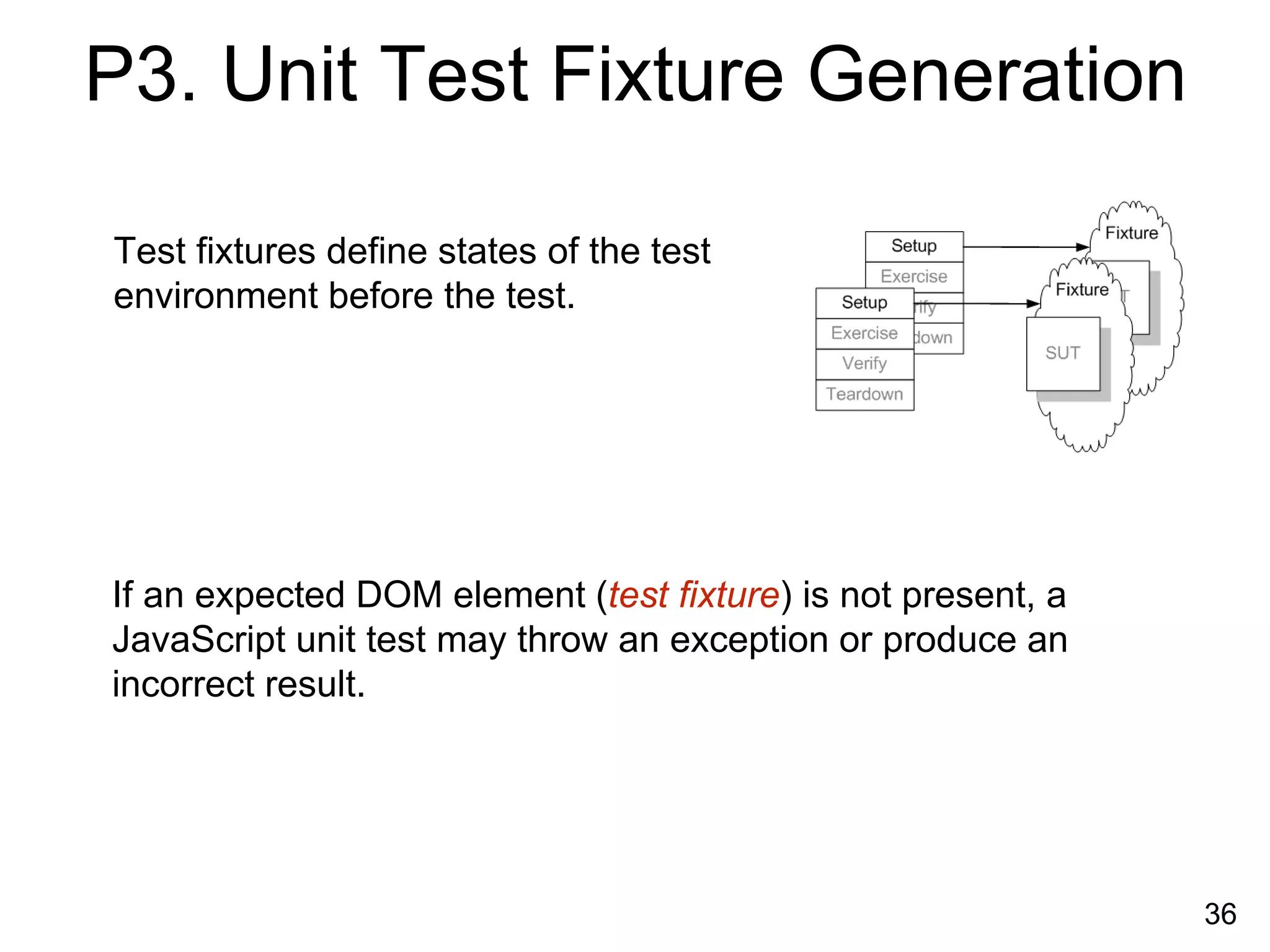

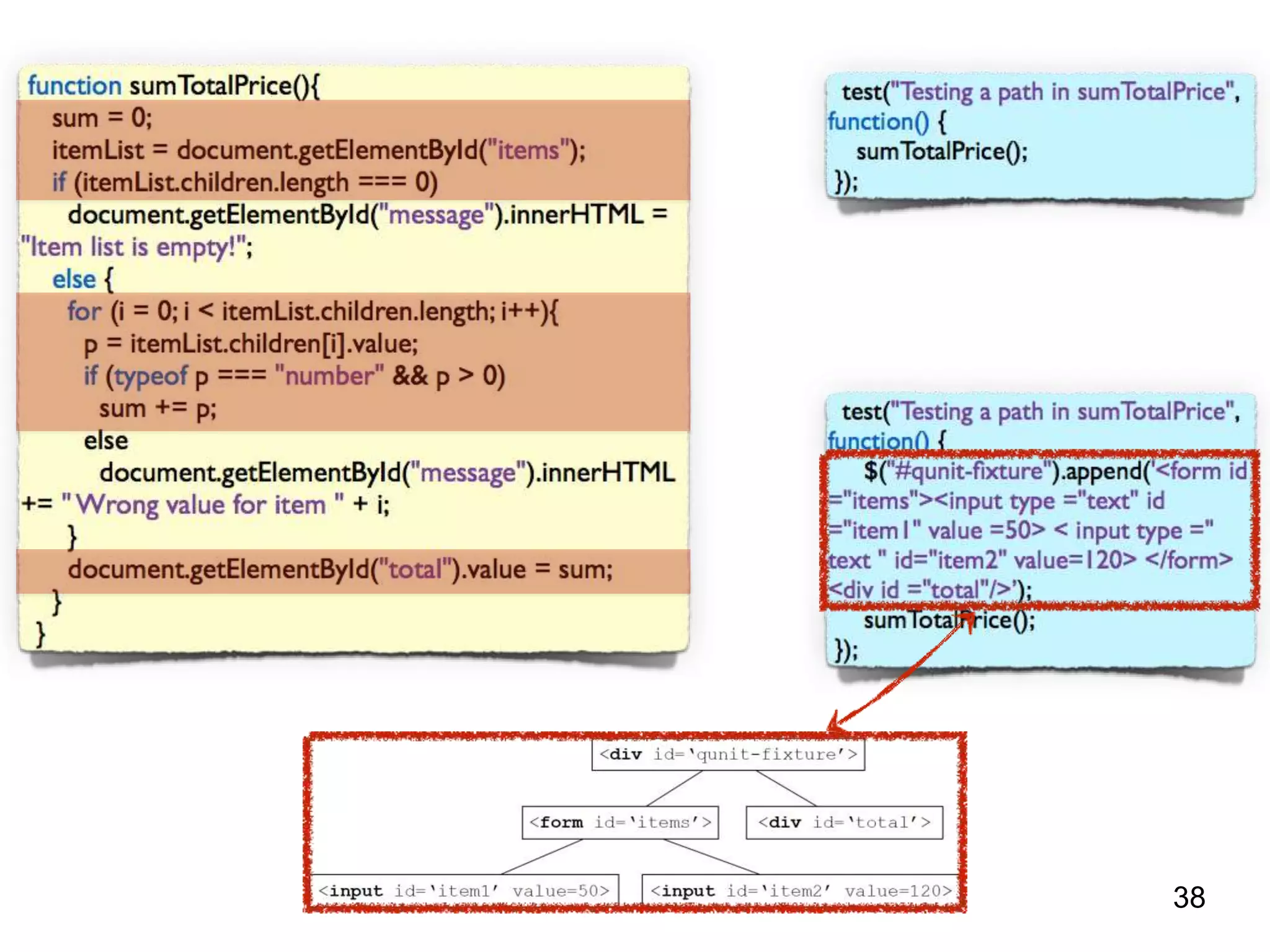

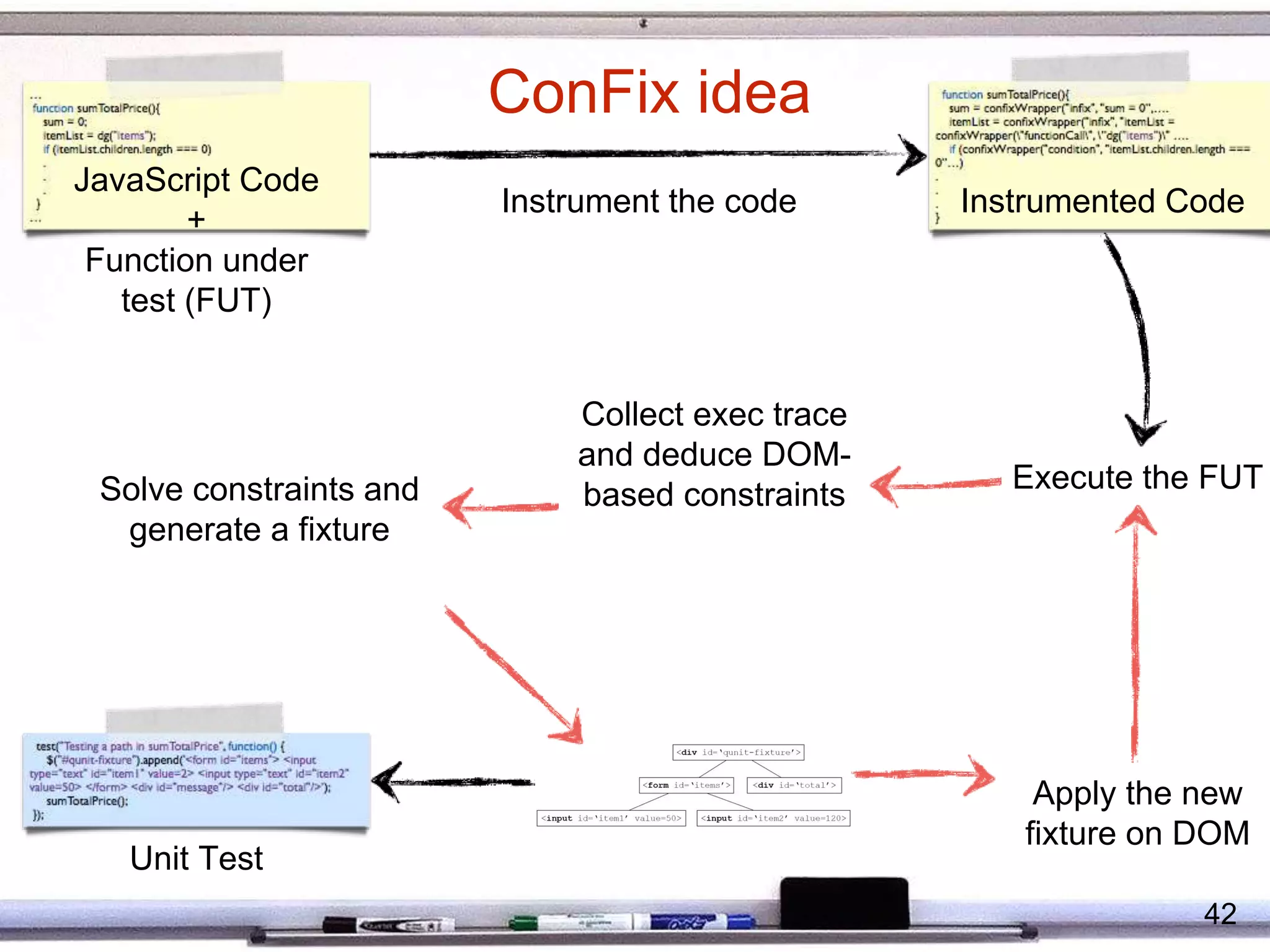

The document discusses automated testing and analysis techniques for web applications. It proposes feedback-directed exploration as a way to derive test models more effectively than exhaustive crawling. It also leverages existing manual tests to generate new automated tests, reusing their inputs and assertions and exploring alternative paths. A technique called ConFix automatically generates DOM-based fixtures for unit tests from constraints collected through code instrumentation. Finally, the document discusses detecting prevalent JavaScript code smells, such as lazy objects, to support automated refactoring.
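As a small illustration of the last point, a smell such as a lazy object can be approximated by counting an object's own properties and flagging objects that fall below a threshold. This is only a sketch: the helper name `isLazyObject`, the threshold of 3, and the sample objects are assumptions made for illustration, not details taken from the slides.

```javascript
// Assumed threshold: objects with fewer own properties than this are flagged.
const MIN_PROPERTIES = 3;

// Hypothetical helper: true when the object has too few own enumerable
// properties (data fields and methods together) to justify standing alone.
function isLazyObject(obj, minProperties = MIN_PROPERTIES) {
  return Object.keys(obj).length < minProperties;
}

// Sample objects, invented for this example.
const point = { x: 1 };
const cart = {
  items: [],
  total: 0,
  add(item) { this.items.push(item); },
  clear() { this.items = []; },
};

// point has 1 property and is flagged; cart has 4 and is not.
const report = { point: isLazyObject(point), cart: isLazyObject(cart) };
console.log(report);
```

A real detector would more likely work from static analysis or runtime monitoring of the whole application rather than from hand-picked objects; the property count here only shows the kind of metric such a check can rest on.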

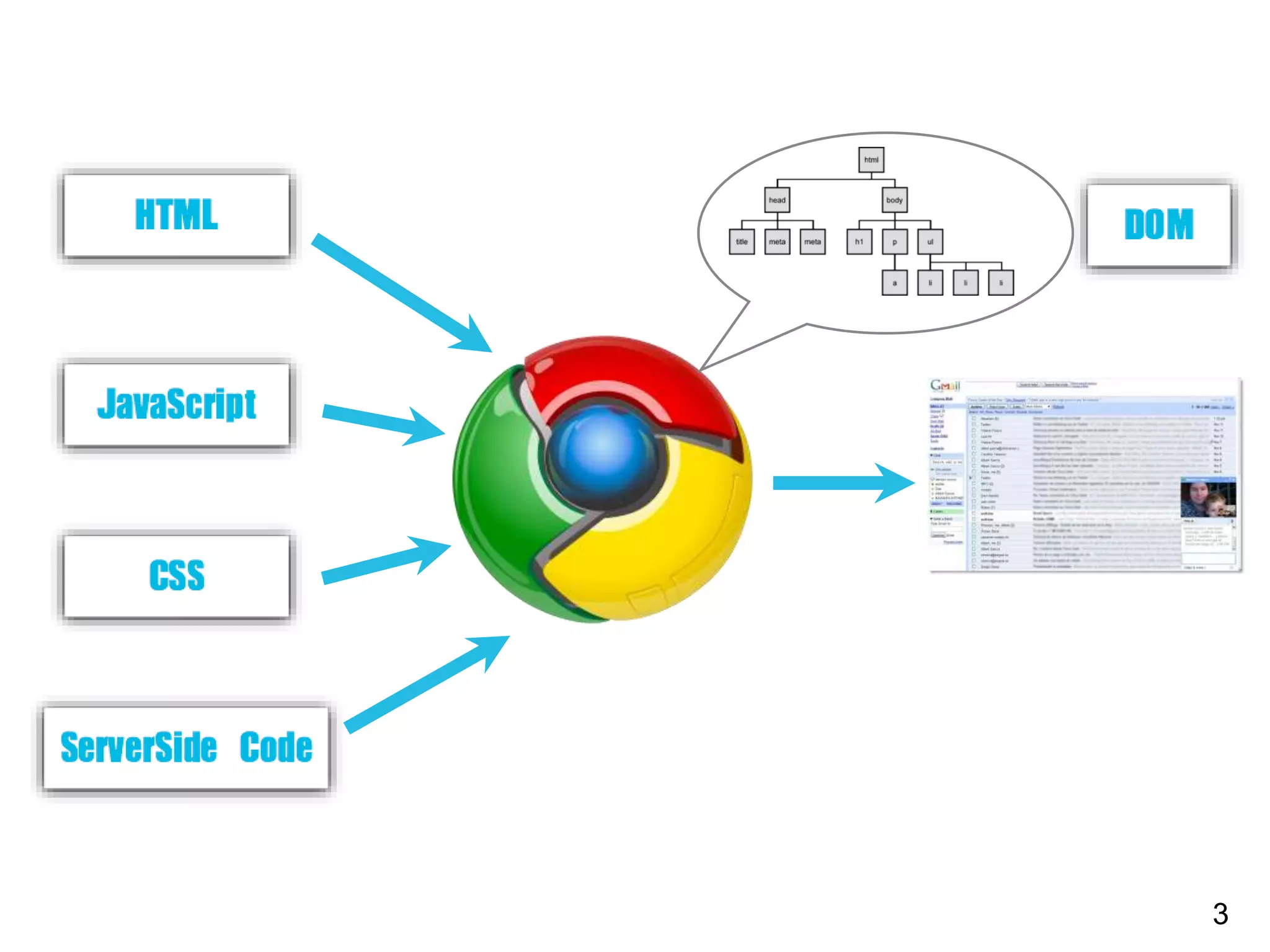

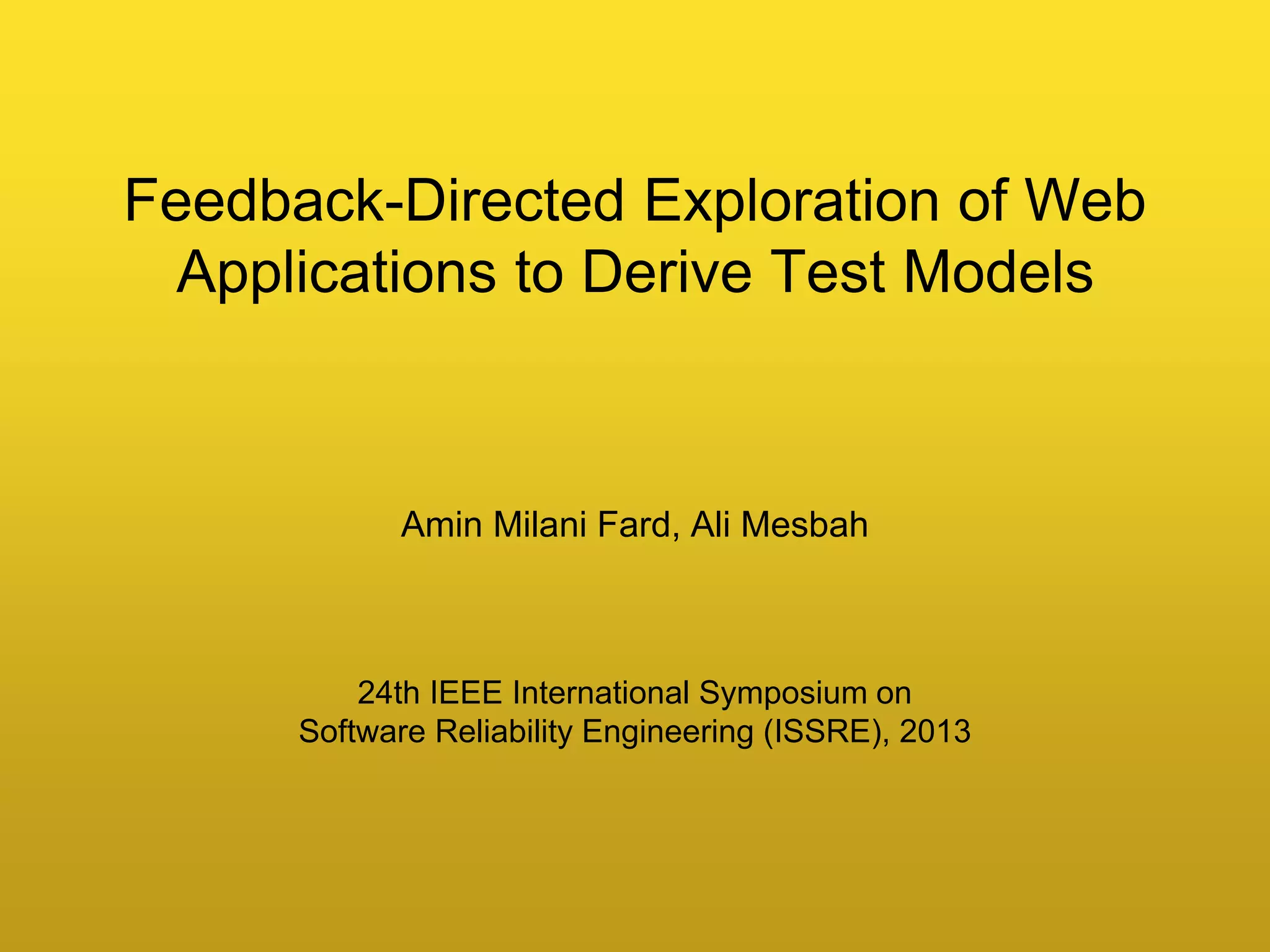

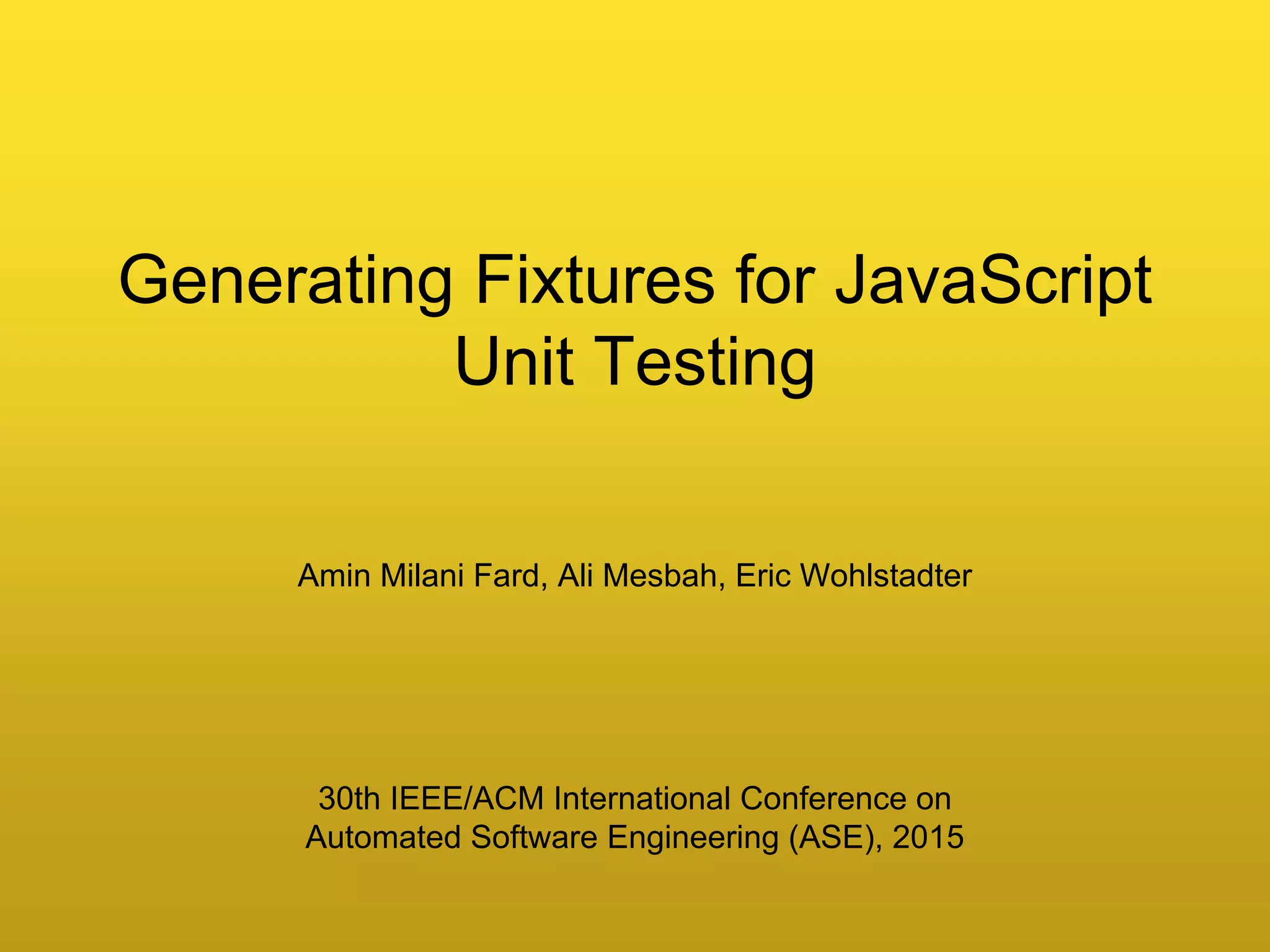

![Instrumentation](https://image.slidesharecdn.com/fum2015-151123211035-lva1-app6892/75/Amin-Milani-Fard-Directed-Model-Inference-for-Testing-and-Analysis-of-Web-Applications-32-2048.jpg)

Instrumentation:

```javascript
// Execution trace shared by all instrumented statements.
var trace = [];

// Records one executed statement and passes its runtime value through unchanged.
function confixWrapper(statementType, statement, varList, varValueList, enclosingFunction, actualStatement) {
  trace.push({
    statementType: statementType,
    statement: statement,
    varList: varList,
    varValueList: varValueList,
    enclosingFunction: enclosingFunction,
    actualStatement: actualStatement
  });
  return actualStatement;
}

function getConfixTrace() {
  return trace;
}

// Statement texts that themselves contain quotes are written as template
// literals so that the nested quotes do not end the string early.
function dg(x) {
  return confixWrapper("return",
    `return confixWrapper("functionCall", "document.getElementById(x)", ["x"], [x], "dg", document.getElementById(x));`,
    [""], [], "dg",
    confixWrapper("functionCall", "document.getElementById(x)", ["x"], [x], "dg", document.getElementById(x)));
}

// The assignments to sum, itemList, i and p are left undeclared, as on the slide.
function sumTotalPrice() {
  sum = confixWrapper("infix", "sum = 0", [""], [], "sumTotalPrice", 0);
  itemList = confixWrapper("infix",
    `itemList = confixWrapper("functionCall", "dg('items')", ["items"], ['items'], "sumTotalPrice", dg('items'))`,
    [""], [], "sumTotalPrice",
    confixWrapper("functionCall", "dg('items')", ["items"], ['items'], "sumTotalPrice", dg('items')));
  if (confixWrapper("condition", "itemList.children.length === 0", [""], [], "sumTotalPrice", itemList.children.length === 0))
    confixWrapper("functionCall", "dg('message')", ["message"], ['message'], "sumTotalPrice", dg('message')).innerHTML =
      confixWrapper("infix",
        `confixWrapper("functionCall", "dg('message')", ["message"], ['message'], "sumTotalPrice", dg('message')).innerHTML = "Item list is empty!"`,
        [""], [], "sumTotalPrice", "Item list is empty!");
  else {
    for (i = confixWrapper("infix", "i = 0", [""], [], "sumTotalPrice", 0);
         confixWrapper("loopCondition", "i < itemList.children.length", ["i", "itemList"], [i, itemList], "sumTotalPrice", i < itemList.children.length);
         i++) {
      p = confixWrapper("infix", "p = itemList.children[i].value", ["itemList.children[i]"], [itemList.children[i]], "sumTotalPrice", itemList.children[i].value);
      if (confixWrapper("condition", "p > 0", [""], [], "sumTotalPrice", p > 0))
        sum += p;
      else
        confixWrapper("functionCall", "dg('message')", ["message"], ['message'], "sumTotalPrice", dg('message')).innerHTML += " Wrong value for item " + i;
    }
    confixWrapper("functionCall", "dg('total')", ["total"], ['total'], "sumTotalPrice", dg('total')).value =
      confixWrapper("infix",
        `confixWrapper("functionCall", "dg('total')", ["total"], ['total'], "sumTotalPrice", dg('total')).value = sum`,
        [""], [], "sumTotalPrice", sum);
  }
}
```
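The trace collected by `confixWrapper` is the raw material from which DOM requirements can be derived. As a rough sketch (not ConFix's actual constraint collection or solving), the snippet below runs the wrapper against a hypothetical stand-in for `document` and lists the element ids that the code under test looked up but did not find. The names `fakeDocument` and `requiredIds`, the simplified single-wrapper `dg`, and the sample calls are assumptions made for illustration.

```javascript
// Same tracing idea as on the slide: record the statement, return its value.
const trace = [];
function confixWrapper(statementType, statement, varList, varValueList, enclosingFunction, actualStatement) {
  trace.push({ statementType, statement, varList, varValueList, enclosingFunction, actualStatement });
  return actualStatement;
}

// Hypothetical stand-in for the browser's `document`, so the sketch runs
// without a DOM. It returns null for every id, as an empty page would.
const fakeDocument = { getElementById: (id) => null };

// Instrumented lookup helper, simplified to a single wrapper call.
function dg(x) {
  return confixWrapper("functionCall", "document.getElementById(x)", ["x"], [x], "dg",
    fakeDocument.getElementById(x));
}

// Assumed helper: collect the distinct ids passed to getElementById whose
// lookup returned null. Each one is an element a fixture would have to supply.
function requiredIds(entries) {
  const ids = entries
    .filter((e) => e.statement === "document.getElementById(x)" && e.actualStatement === null)
    .map((e) => e.varValueList[0]);
  return [...new Set(ids)];
}

// Simulate the lookups a function under test might perform.
dg("items");
dg("message");
dg("items");

const missing = requiredIds(trace);
console.log(missing); // the two distinct ids: items and message
```

A fixture built from this list would only guarantee that the elements exist. Conditions such as `itemList.children.length === 0` or `p > 0` place further constraints on the structure and values of those elements, which this sketch does not attempt to capture.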