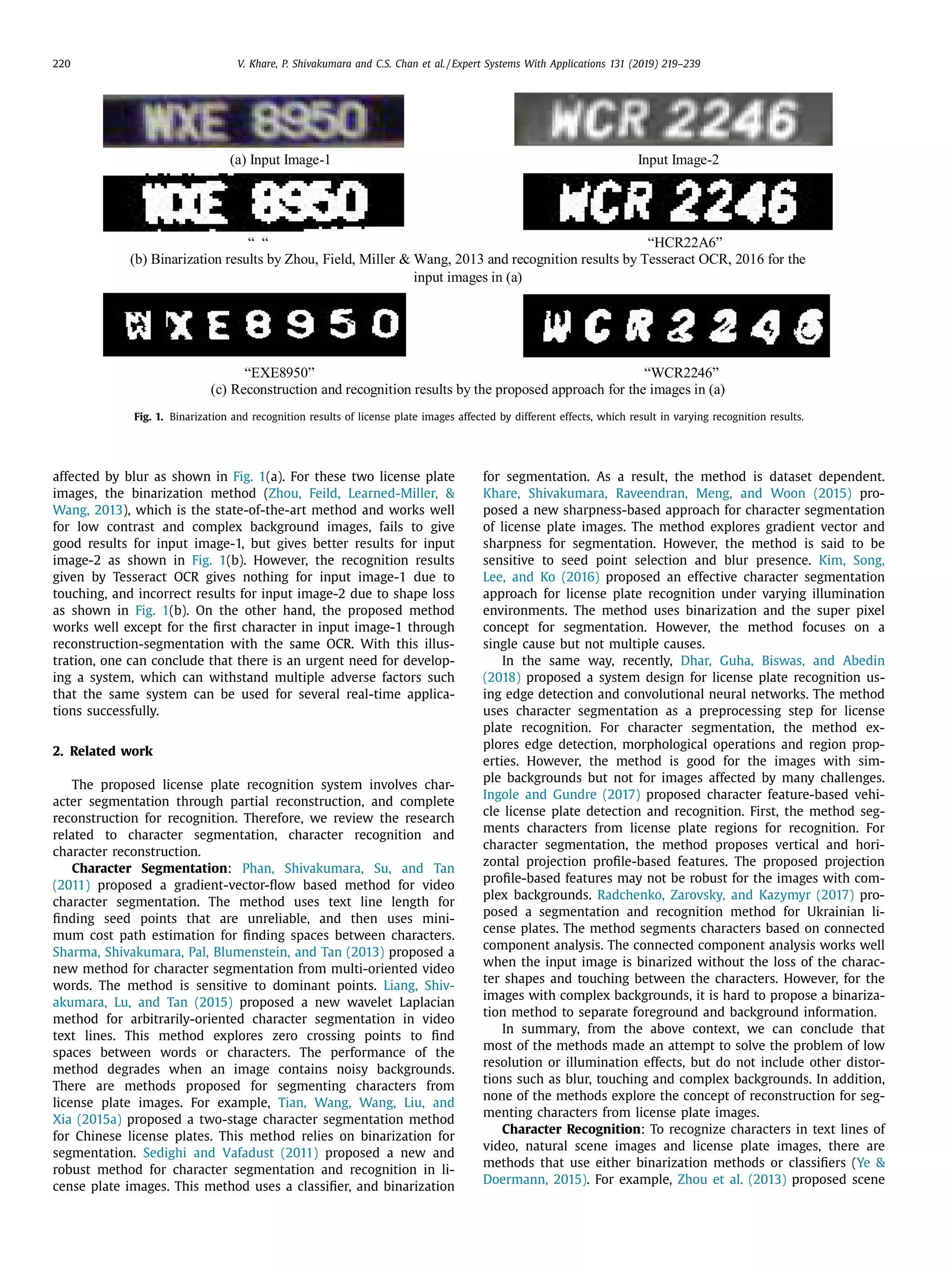

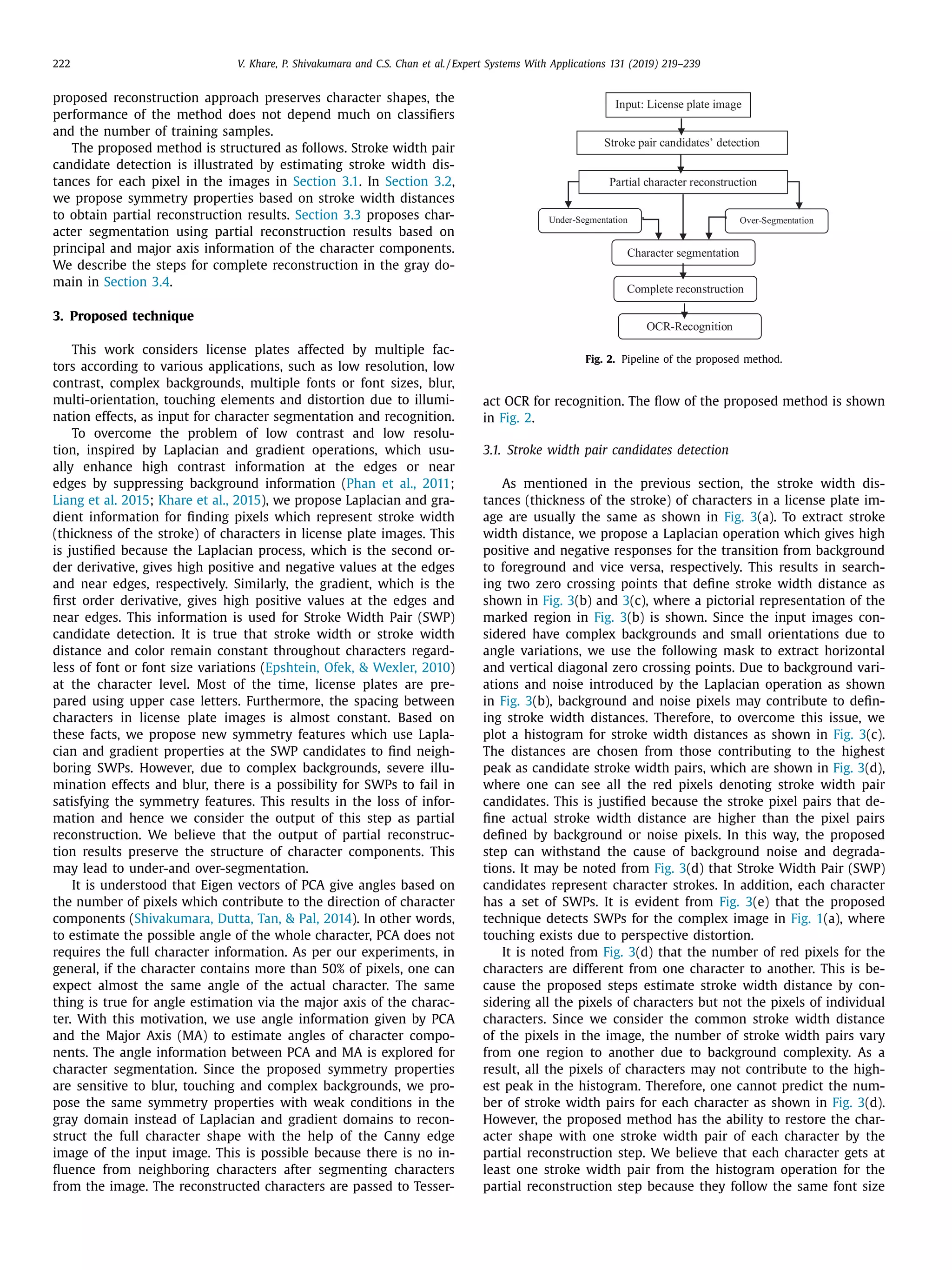

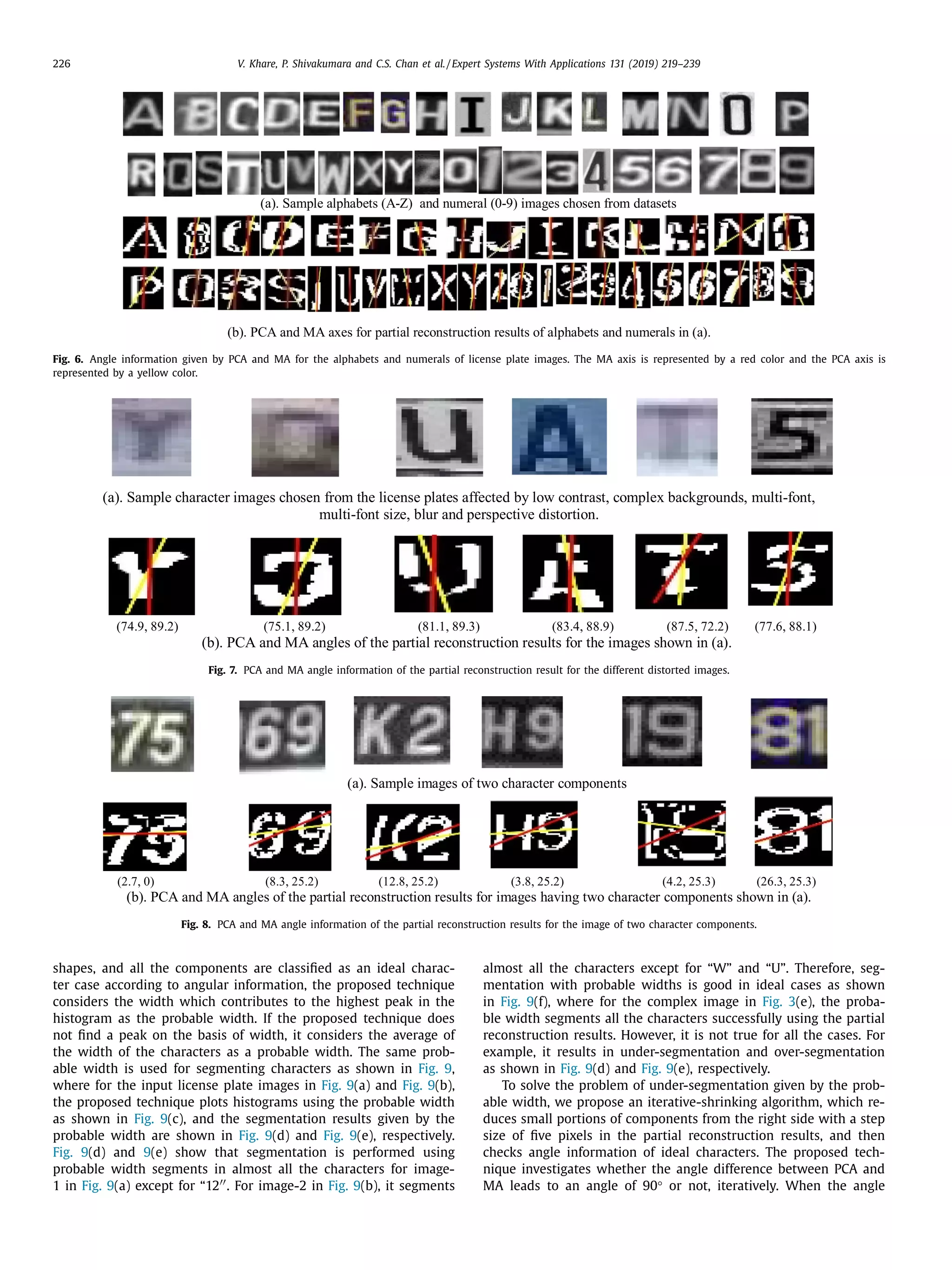

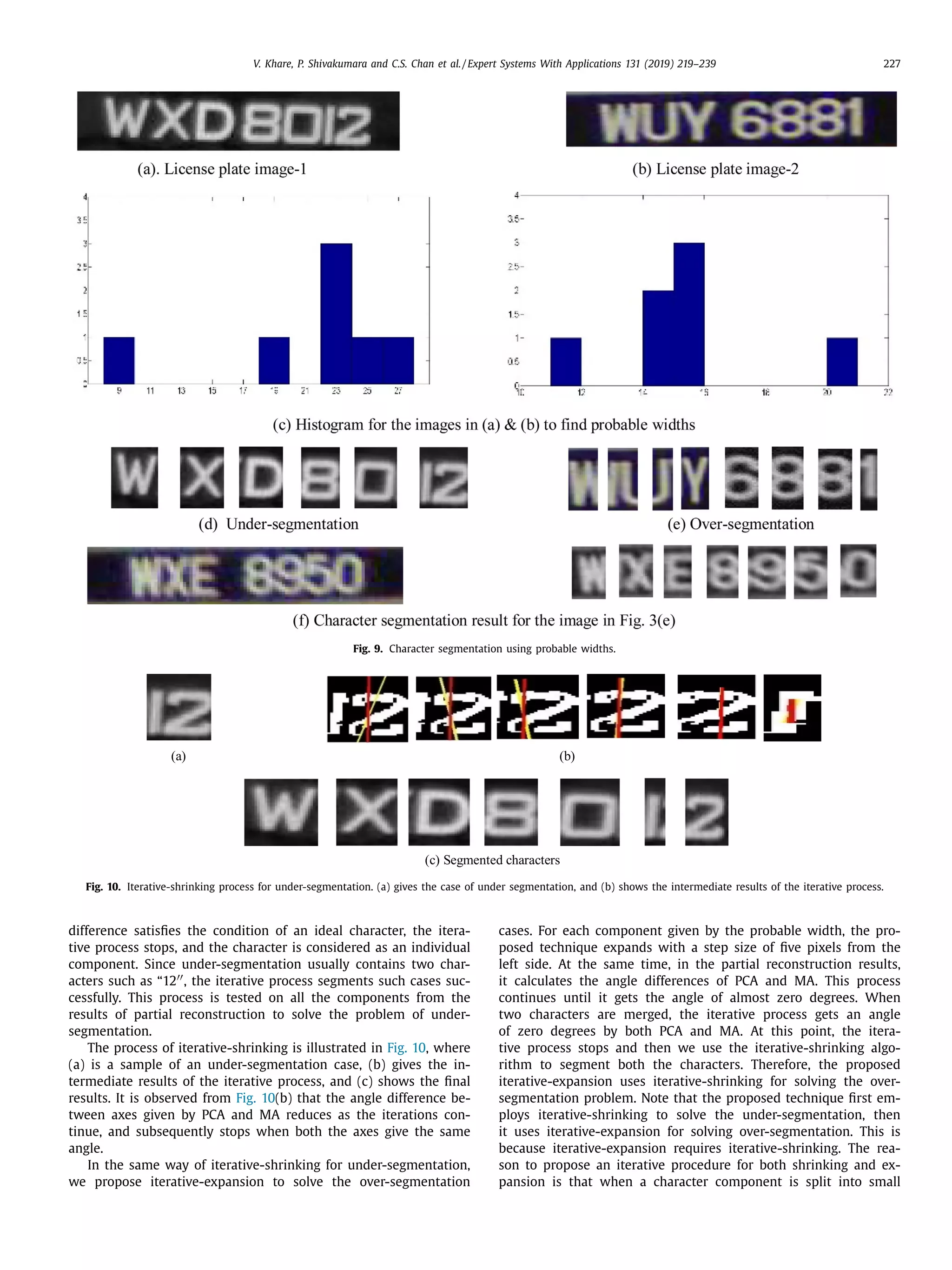

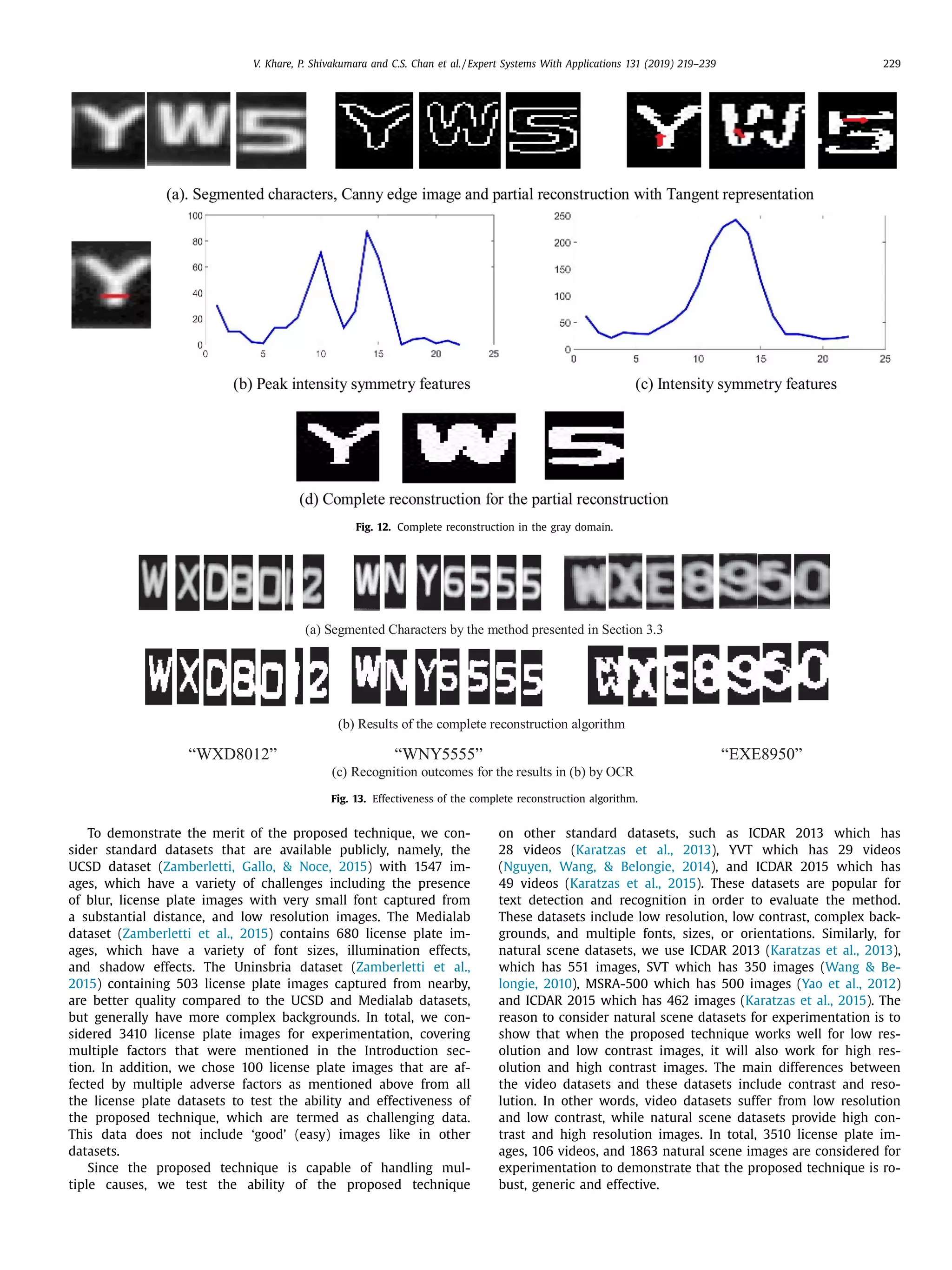

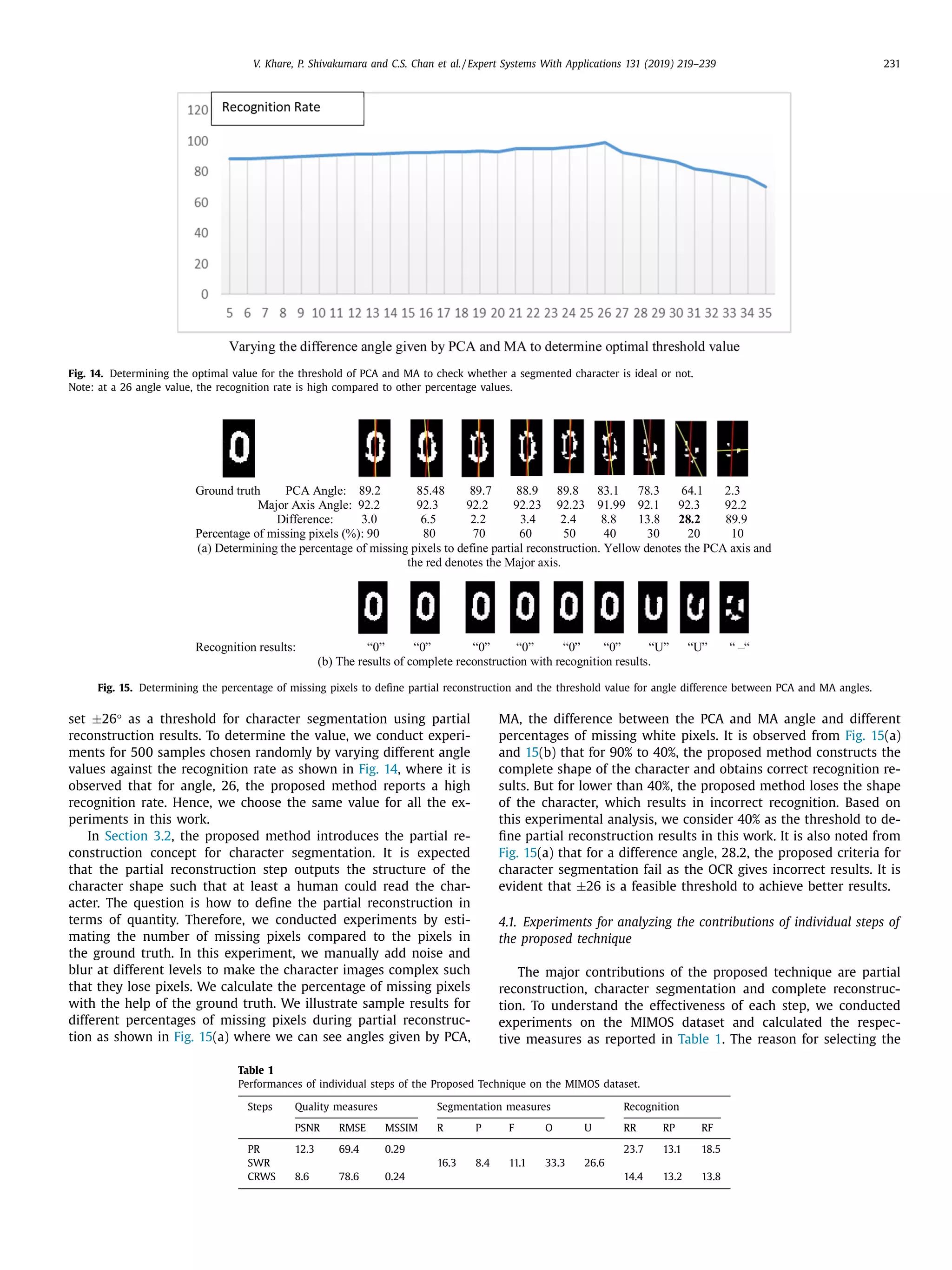

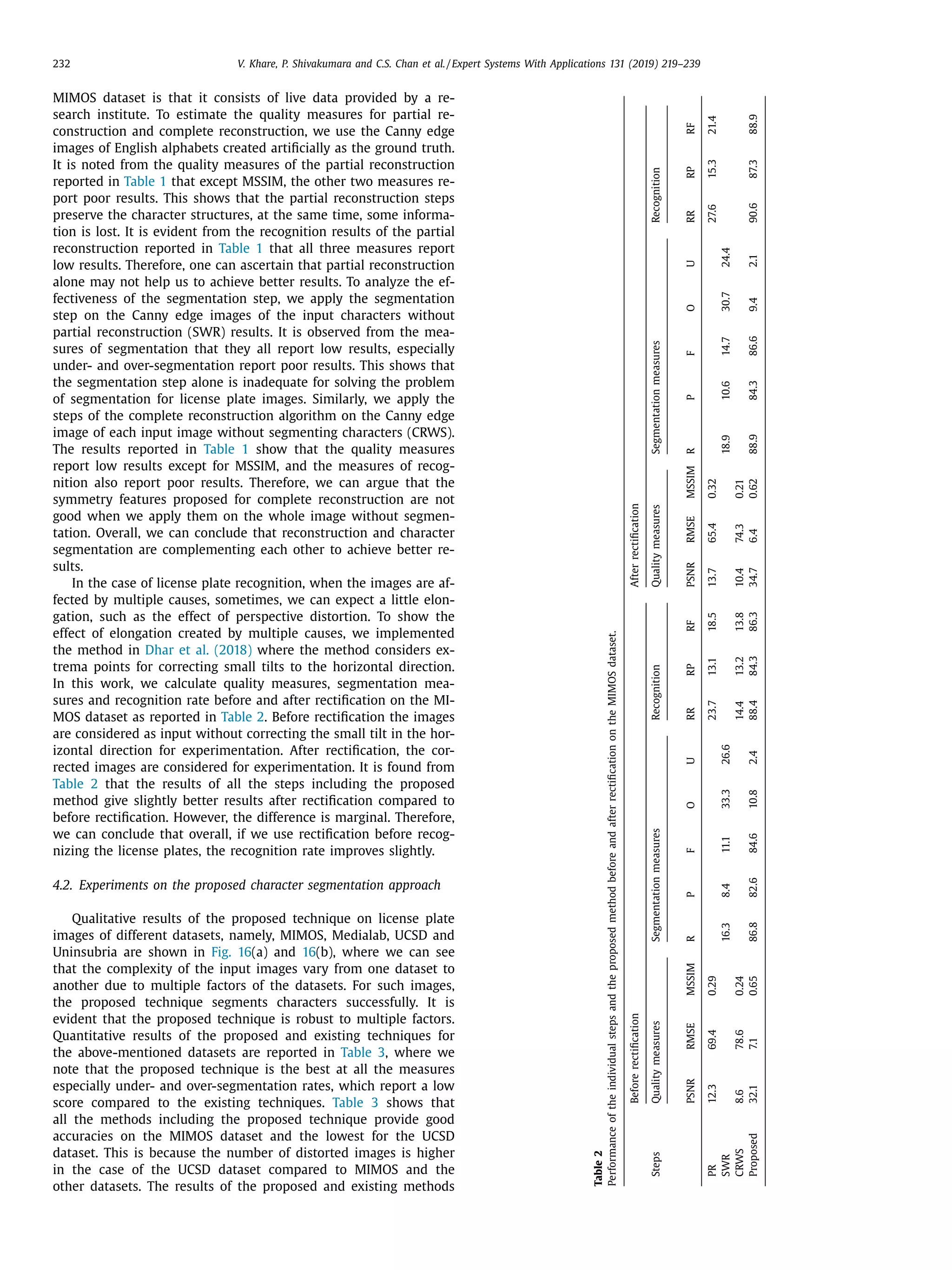

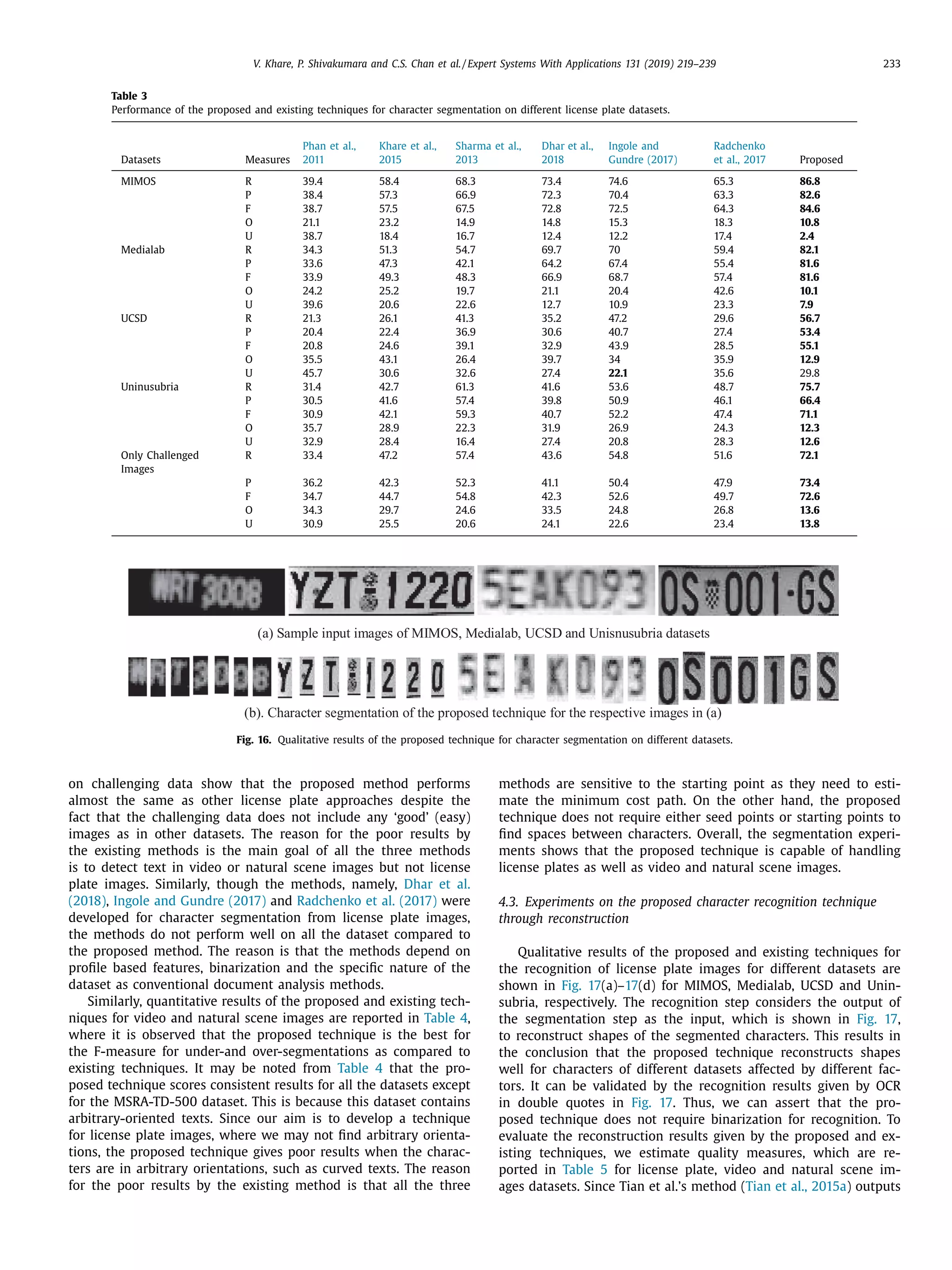

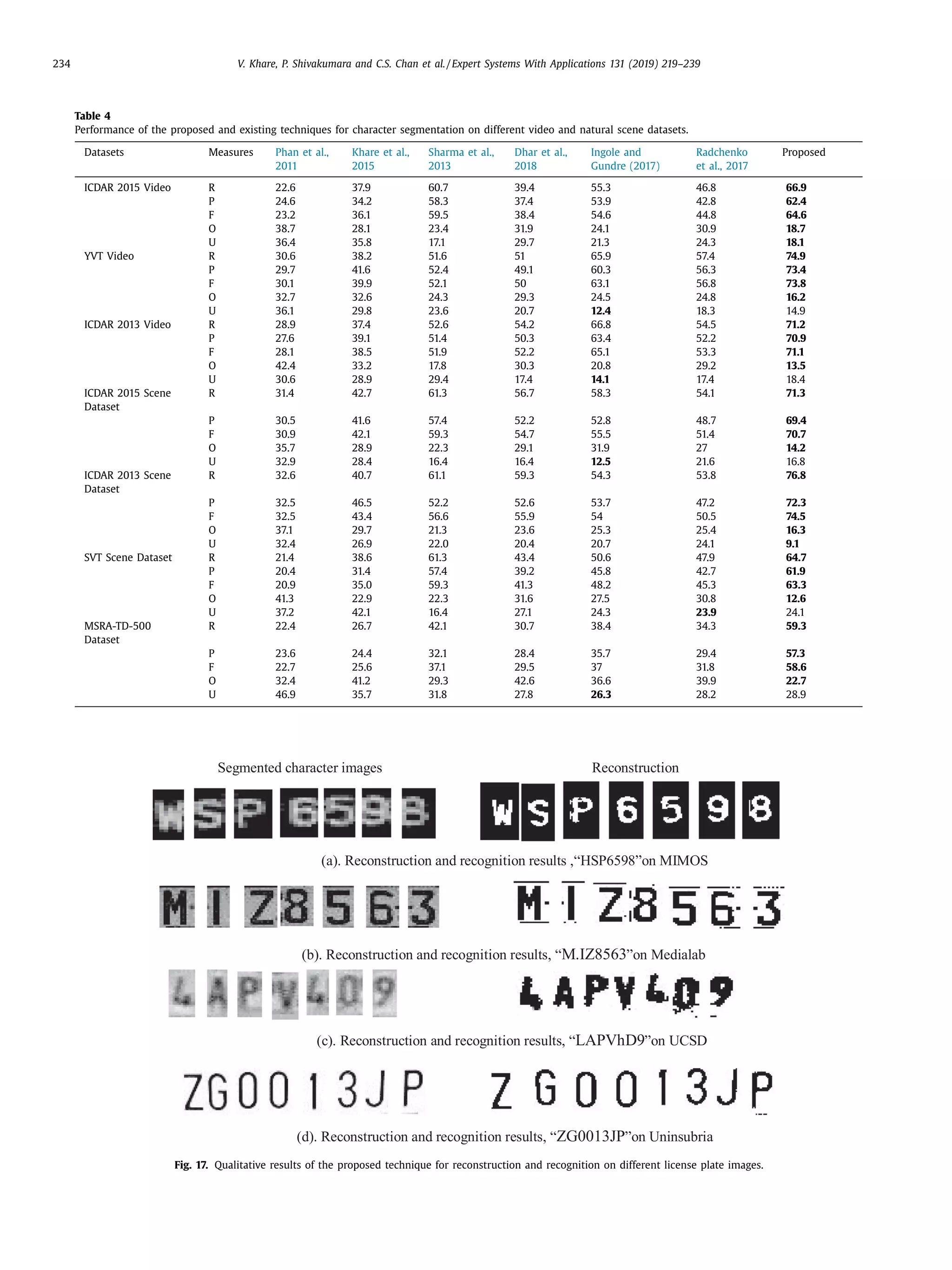

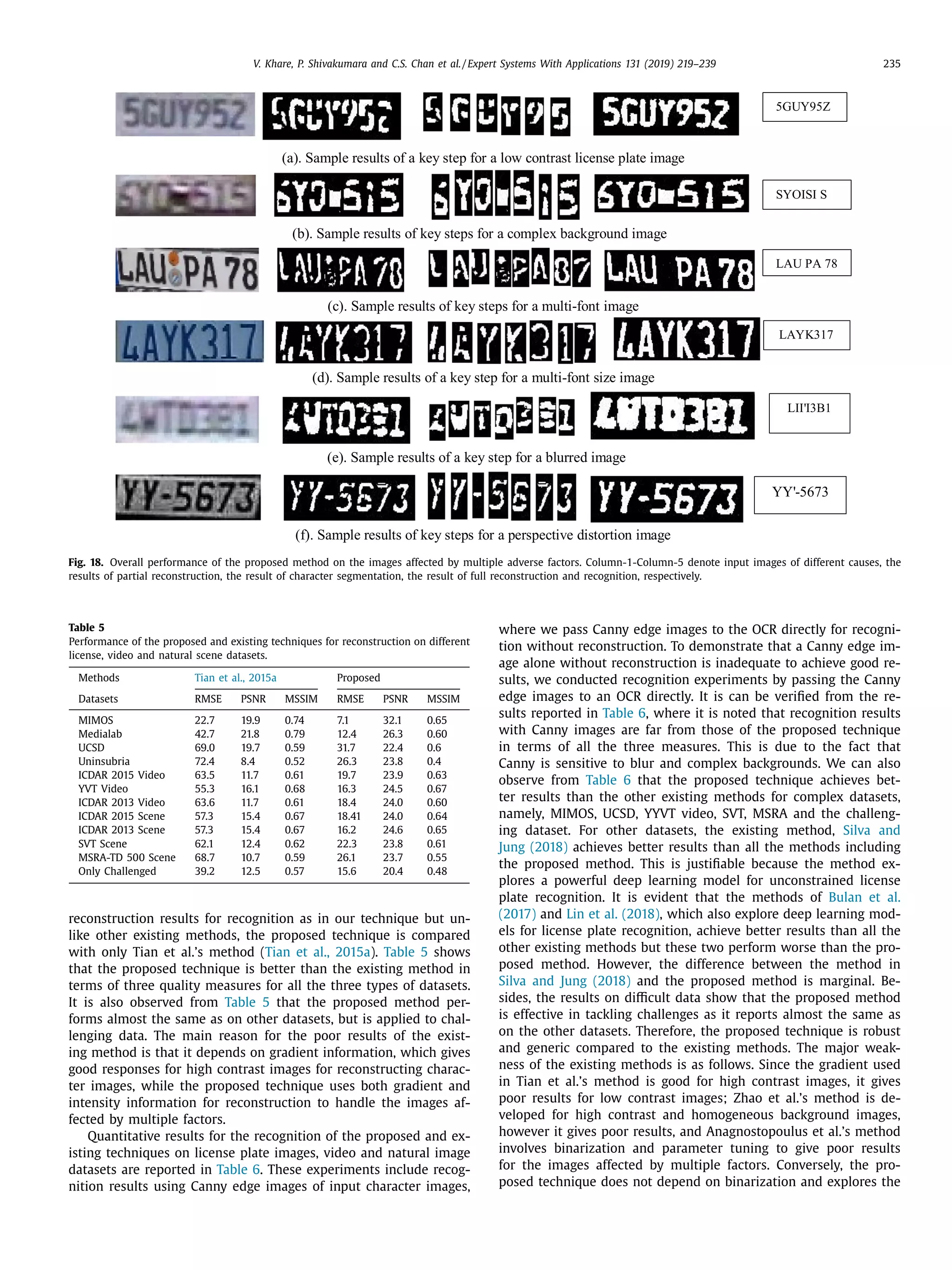

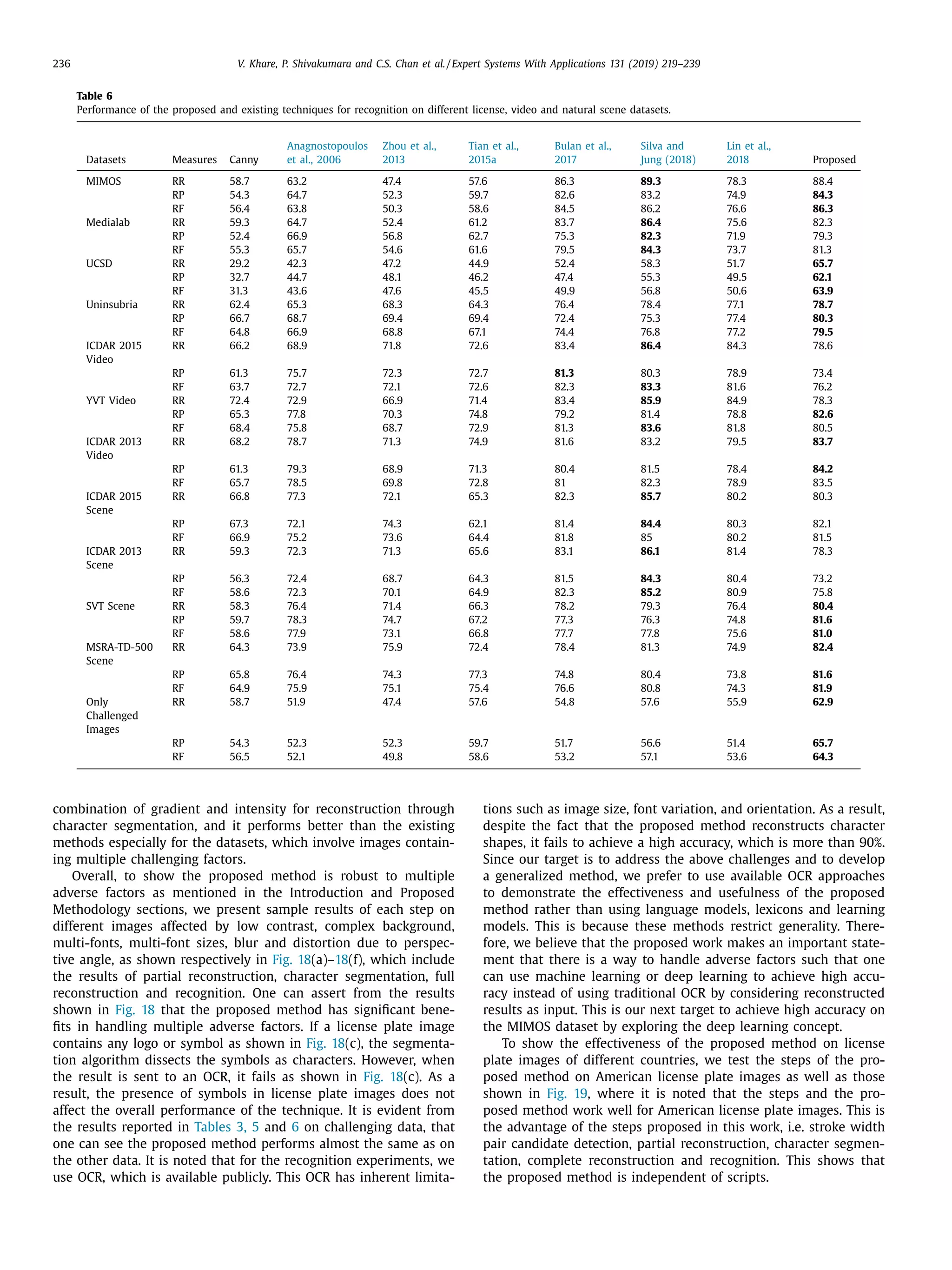

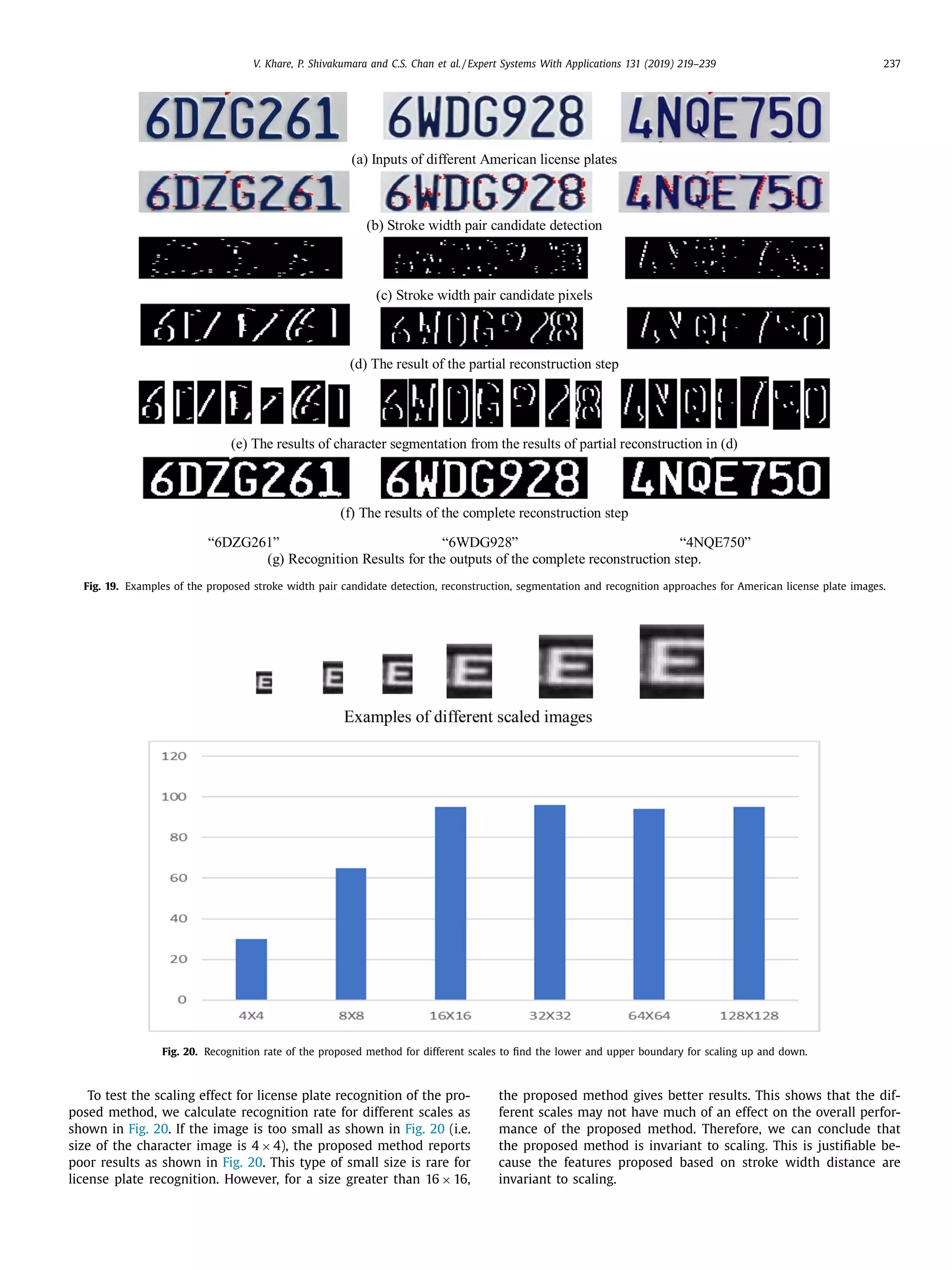

The document presents an approach to license plate recognition that segments characters through partial reconstruction and then reconstructs each character completely for recognition. The goal is a system that copes with several adverse factors at once, such as low resolution, blurring, and complex backgrounds, all of which degrade license plate images. The proposed approach uses stroke width characteristics in the Laplacian and gradient domains to segment character components whose shapes are incomplete. It then examines the angular information and aspect ratios of those components to separate individual characters. Finally, it applies the same stroke width properties to reconstruct the complete shape of each character, which improves recognition rates. Experimental results on several benchmark and real-world databases demonstrate the effectiveness of the technique.
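The summary names the ingredients (Laplacian and gradient responses, stroke width, aspect ratio) but gives no formulas, so the following is only a rough sketch of what such quantities look like in code, not the authors' method. It assumes a grayscale image held in a NumPy array. The function names, the horizontal run-length definition of stroke width, and the nominal aspect ratio of 0.5 are all hypothetical choices made for illustration.

```python
import numpy as np

def laplacian(img):
    """4-neighbour discrete Laplacian with edge-replicated borders."""
    p = np.pad(img.astype(float), 1, mode="edge")
    return (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
            - 4.0 * p[1:-1, 1:-1])

def gradient_magnitude(img):
    """Gradient magnitude from central differences."""
    gy, gx = np.gradient(img.astype(float))
    return np.hypot(gx, gy)

def row_stroke_widths(row_mask):
    """Lengths of consecutive foreground runs in a 1-D boolean row.

    Assumption: horizontal run length stands in for stroke width here.
    """
    padded = np.concatenate(([0], row_mask.astype(int), [0]))
    d = np.diff(padded)
    starts = np.flatnonzero(d == 1)   # index where each run begins
    ends = np.flatnonzero(d == -1)    # index just past each run's end
    return ends - starts

def estimated_char_count(width, height, nominal_aspect=0.5):
    """Guess how many characters a component spans from its bounding box.

    Assumption: nominal_aspect (width / height of one character) is a
    hypothetical value, not one taken from the source.
    """
    return max(1, round(width / (nominal_aspect * height)))
```

On a synthetic image containing one vertical bar, the Laplacian changes sign across each edge of the bar while the gradient magnitude peaks there, and the run length between the two edges gives the bar's width. A component whose bounding box is much wider than one nominal character, as reported by `estimated_char_count`, would be a candidate for further splitting.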