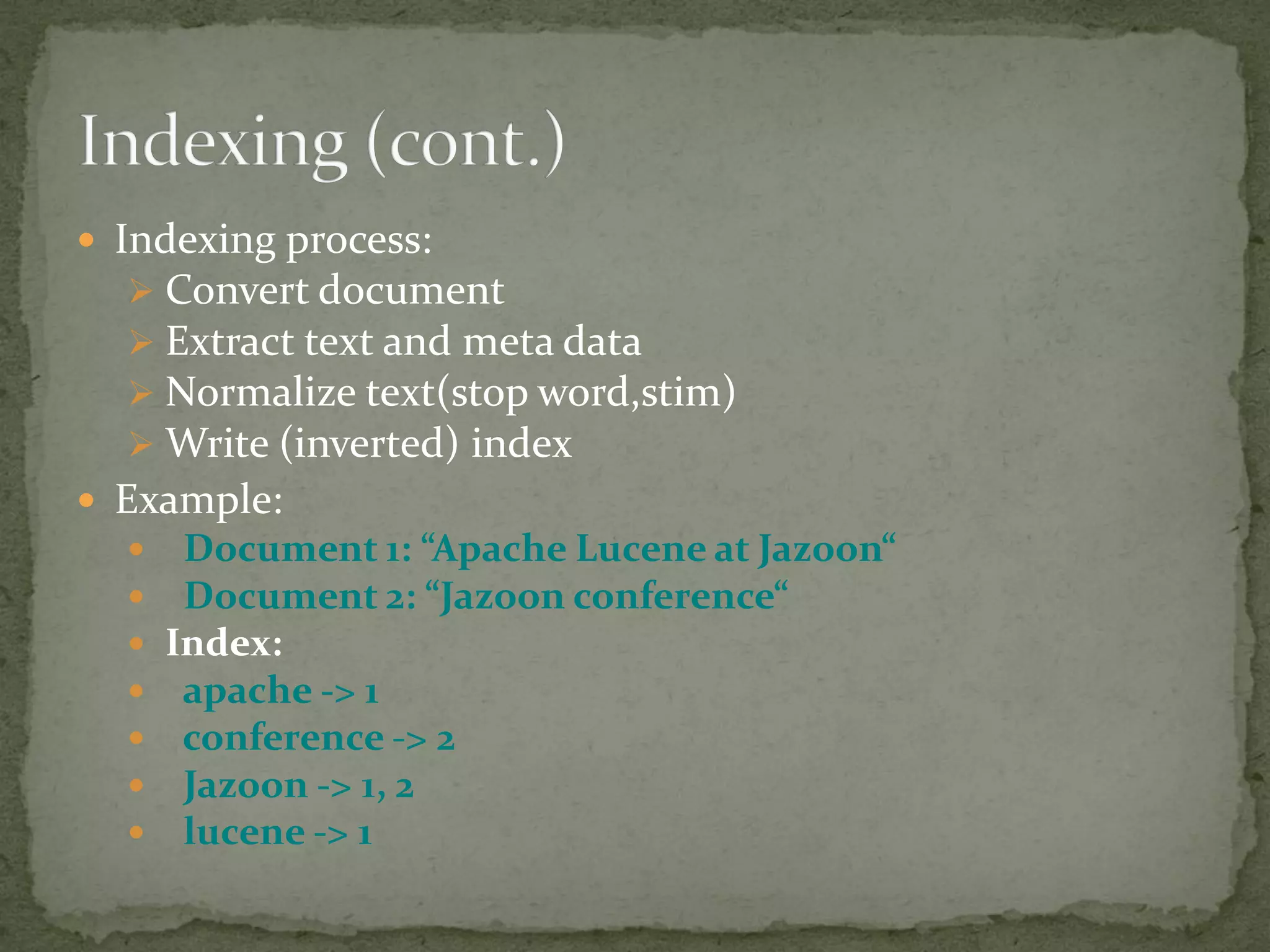

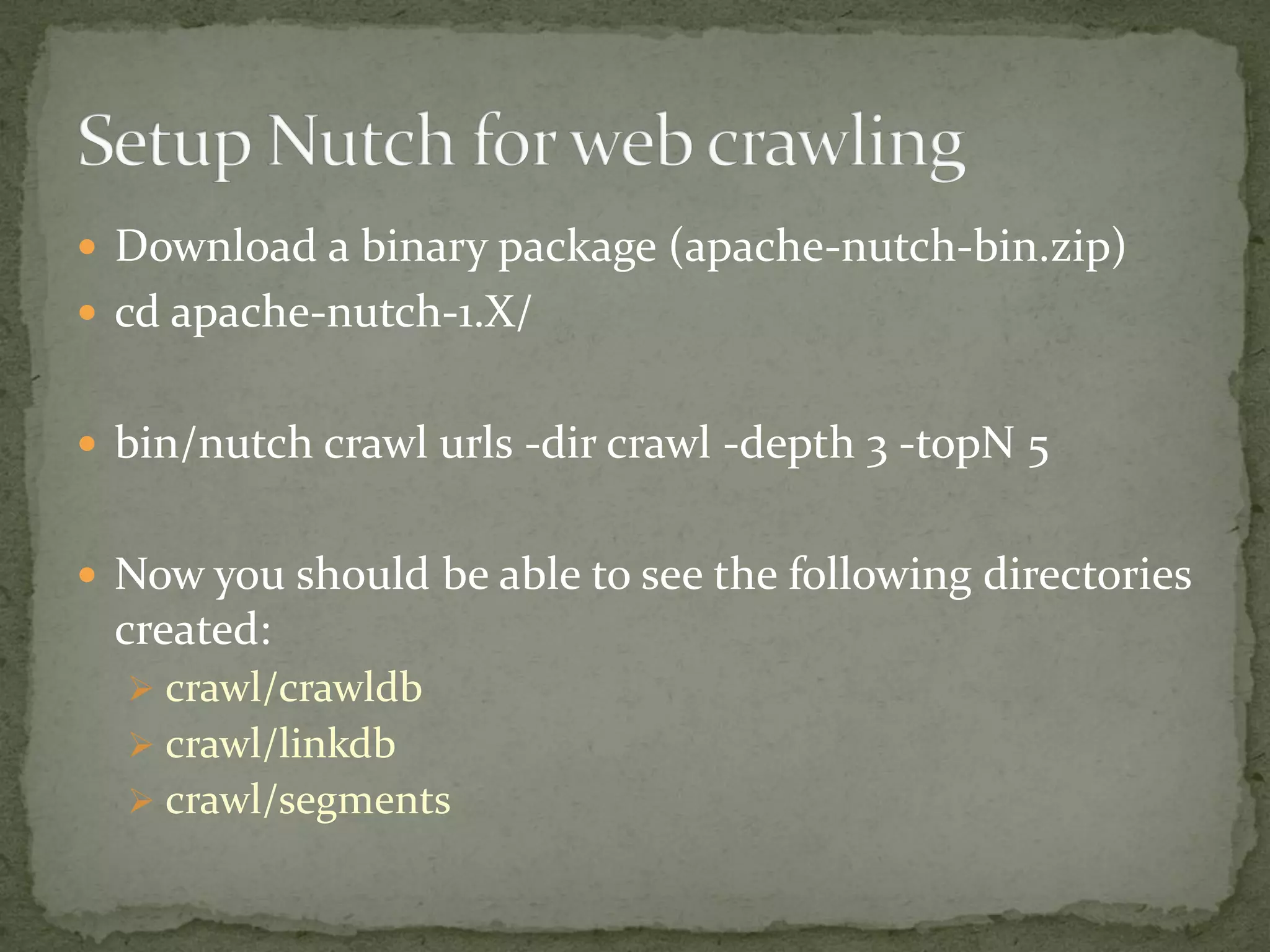

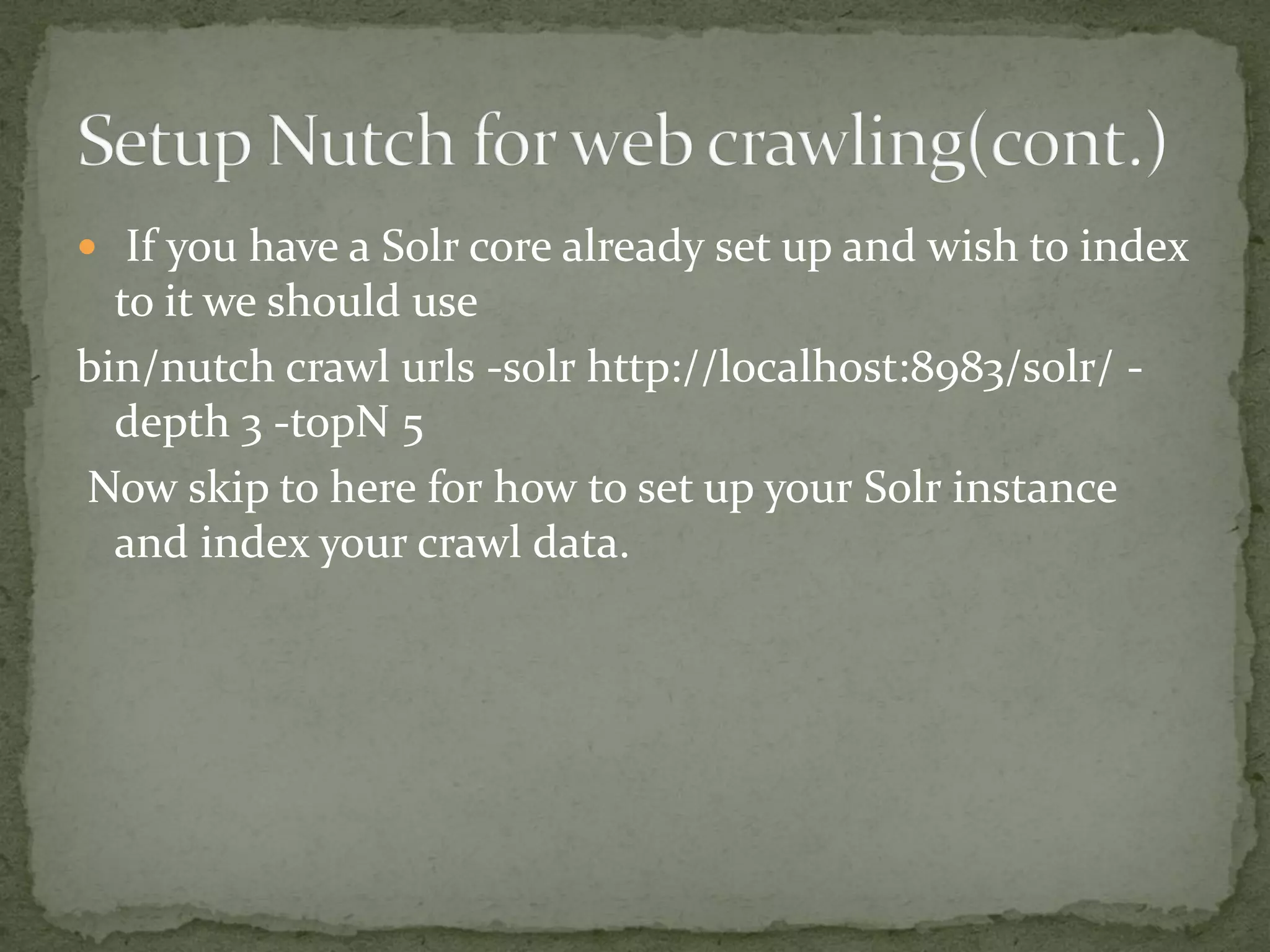

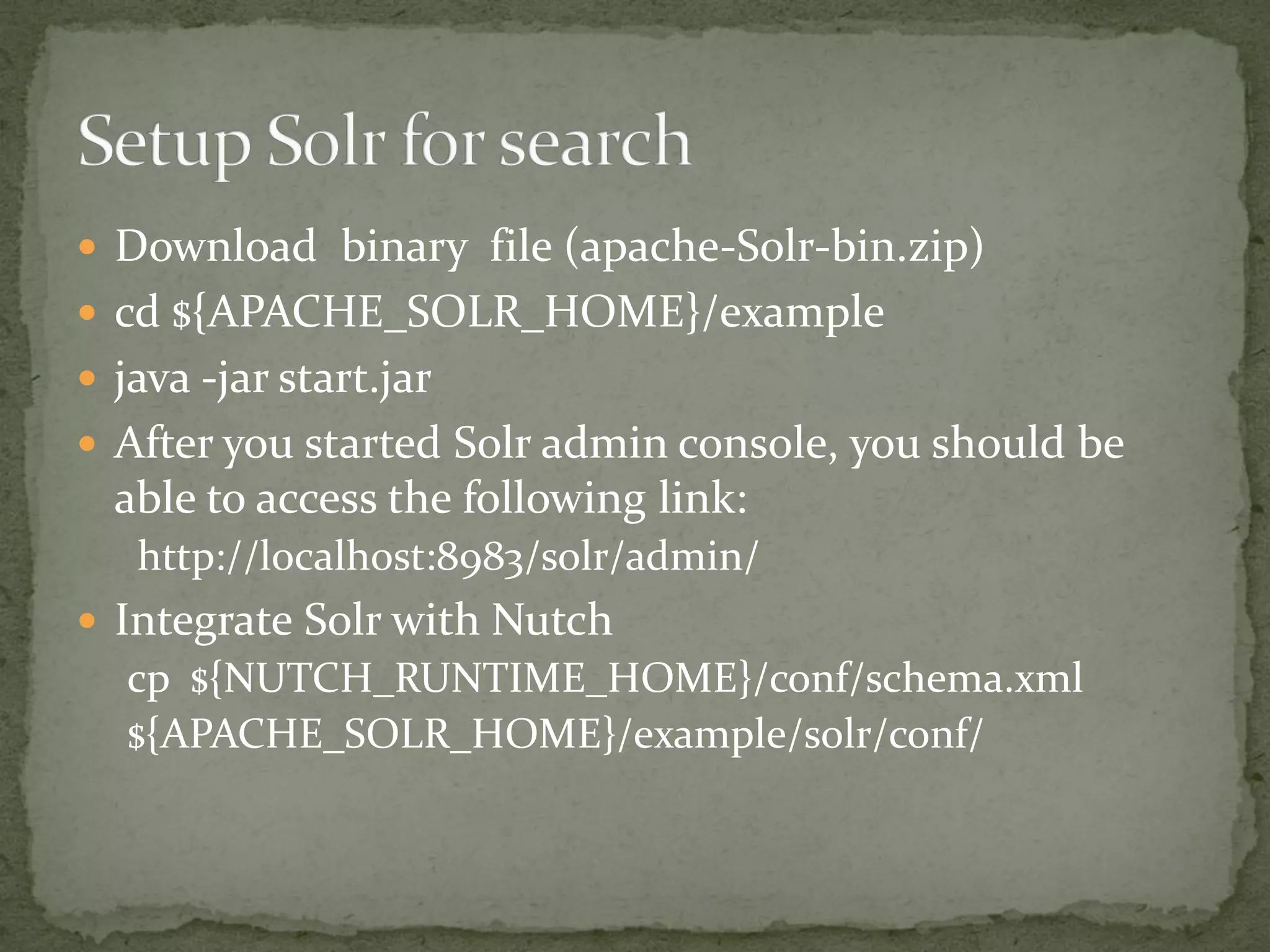

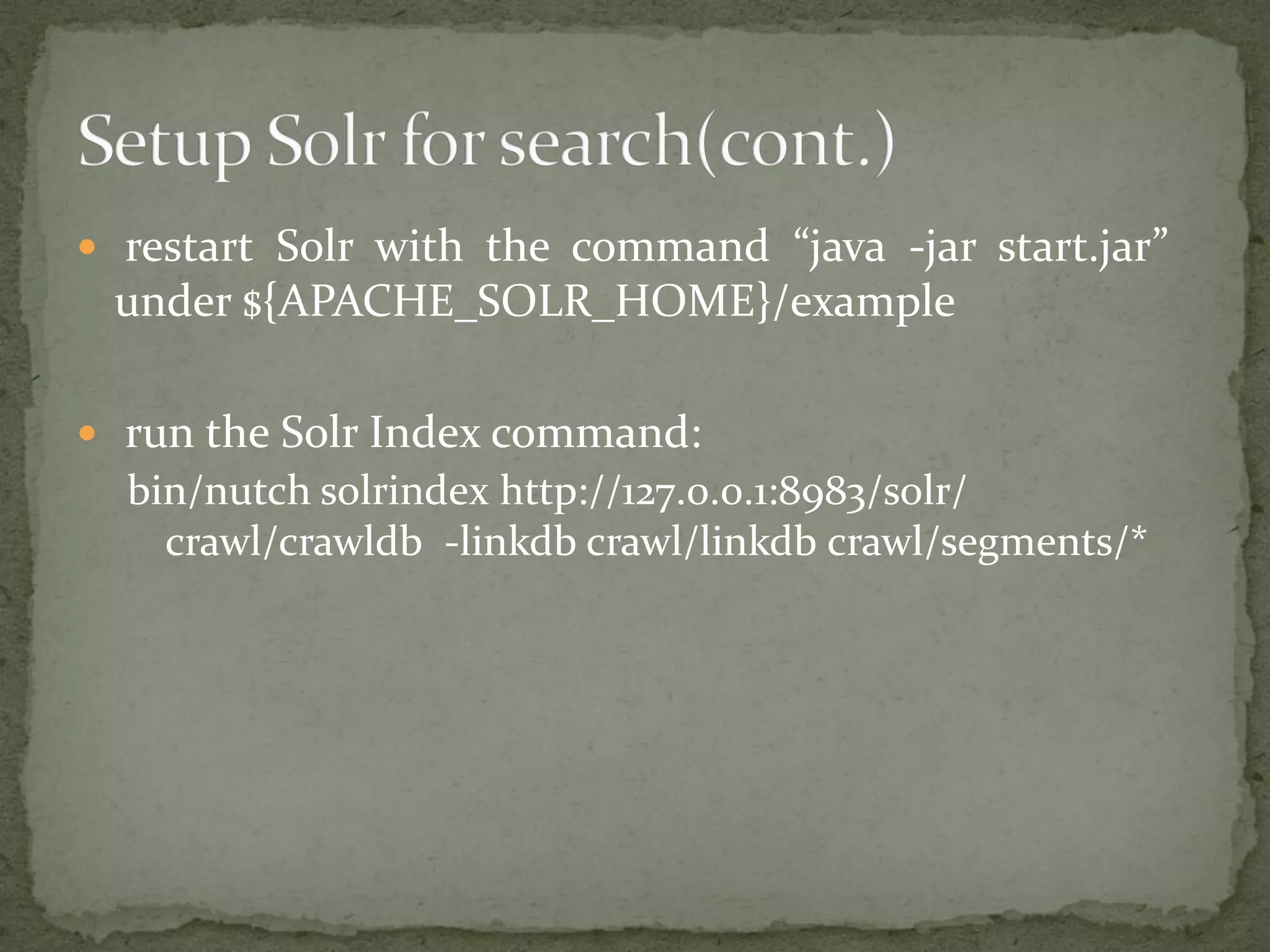

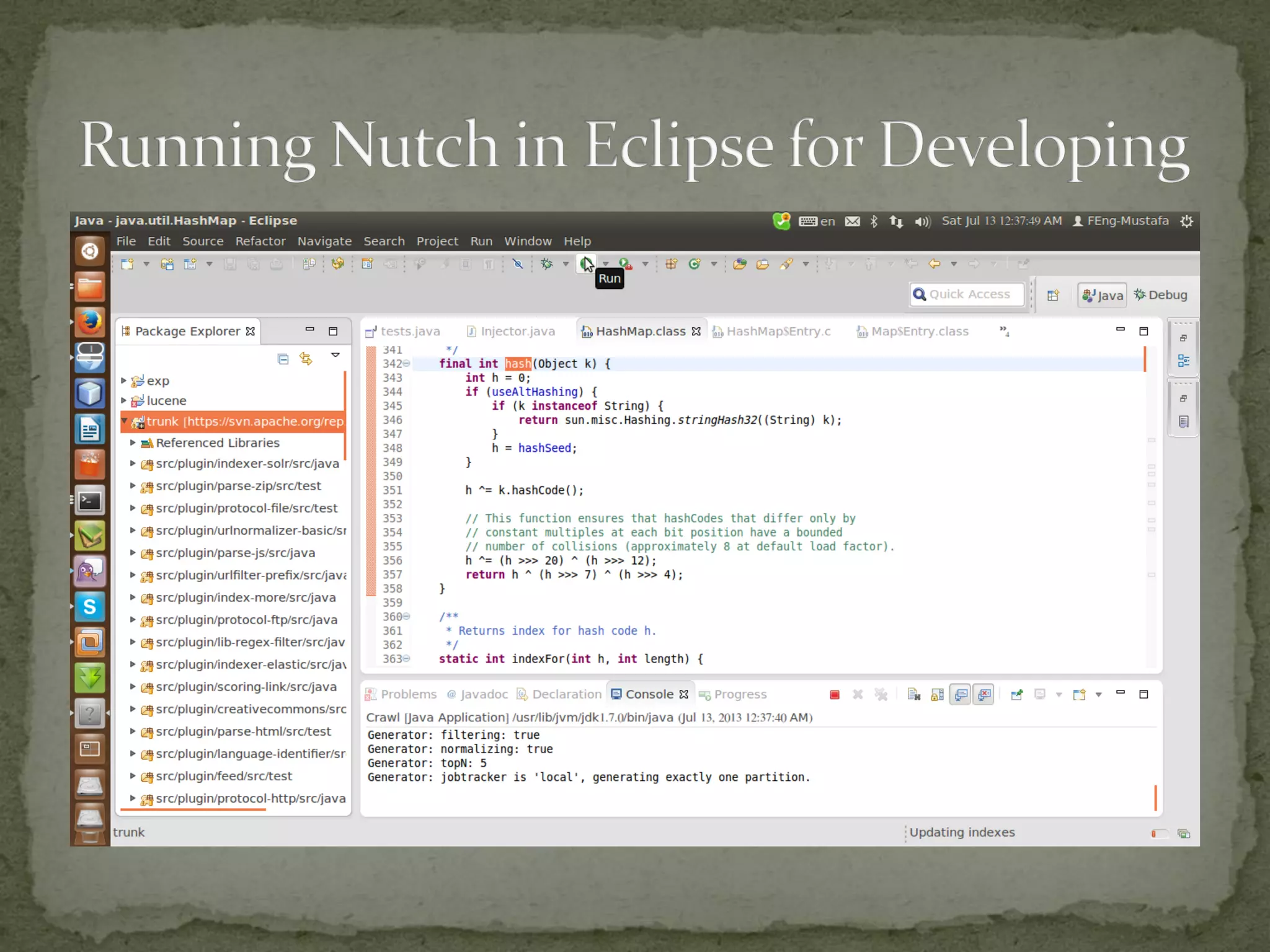

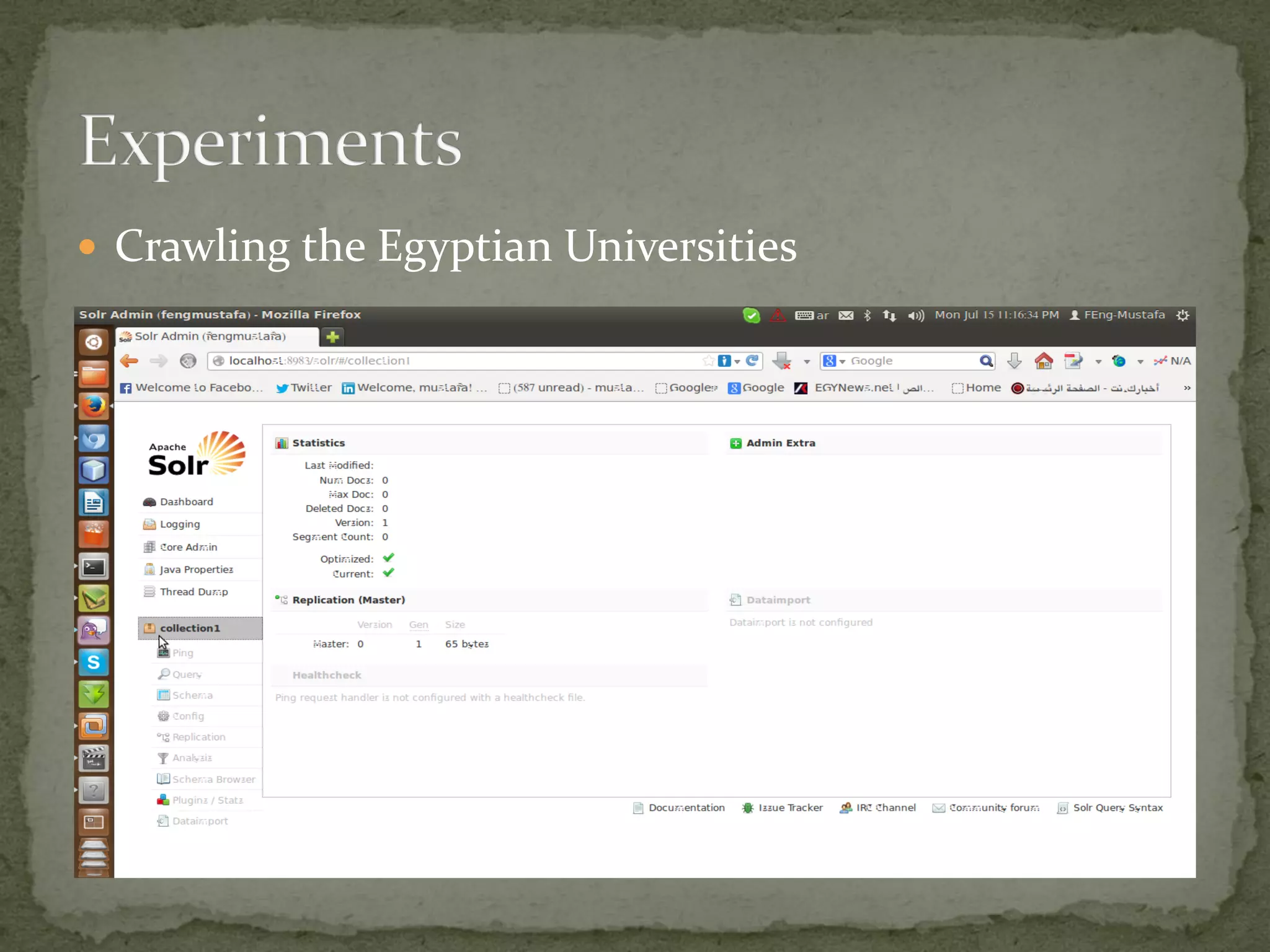

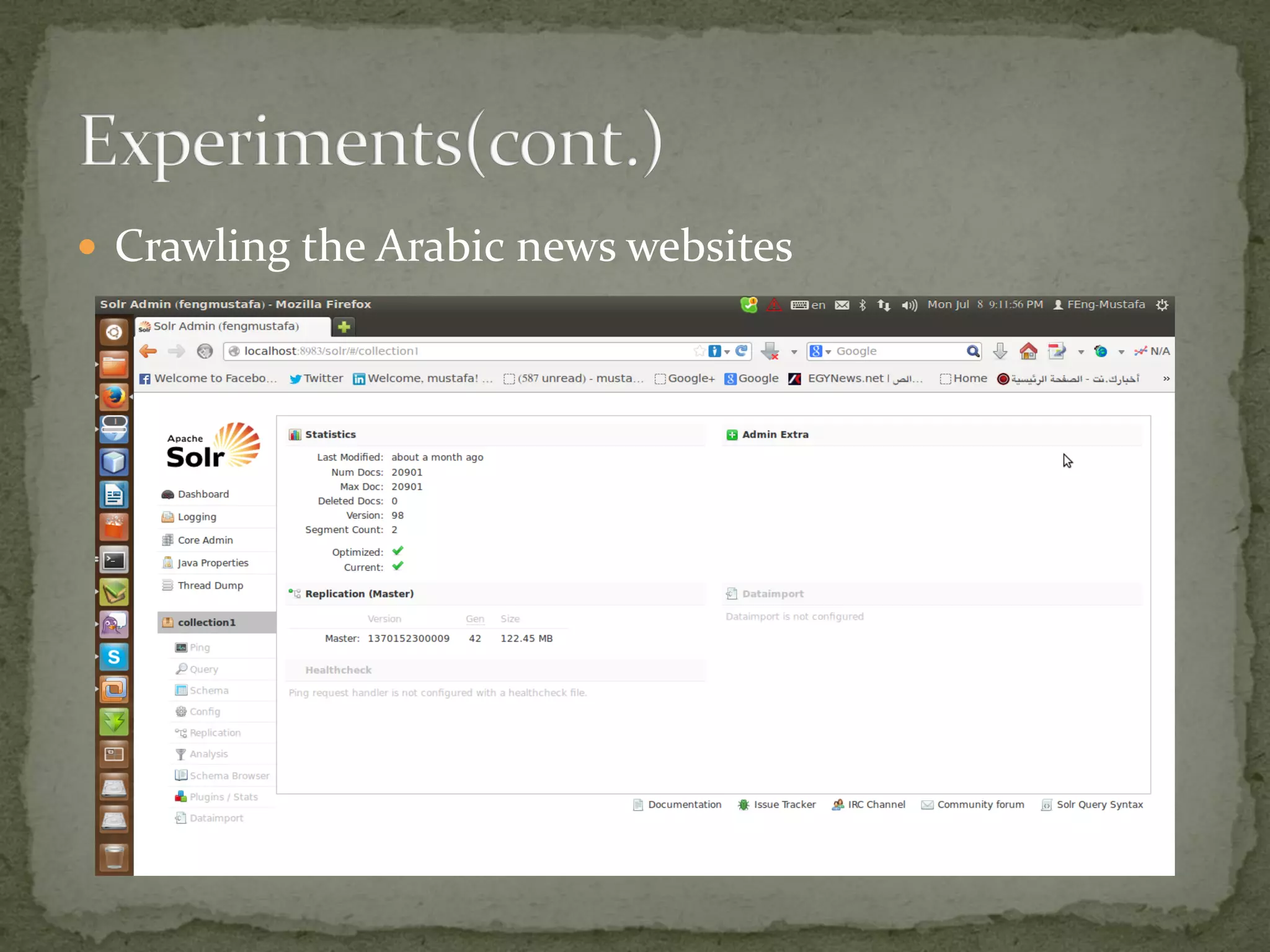

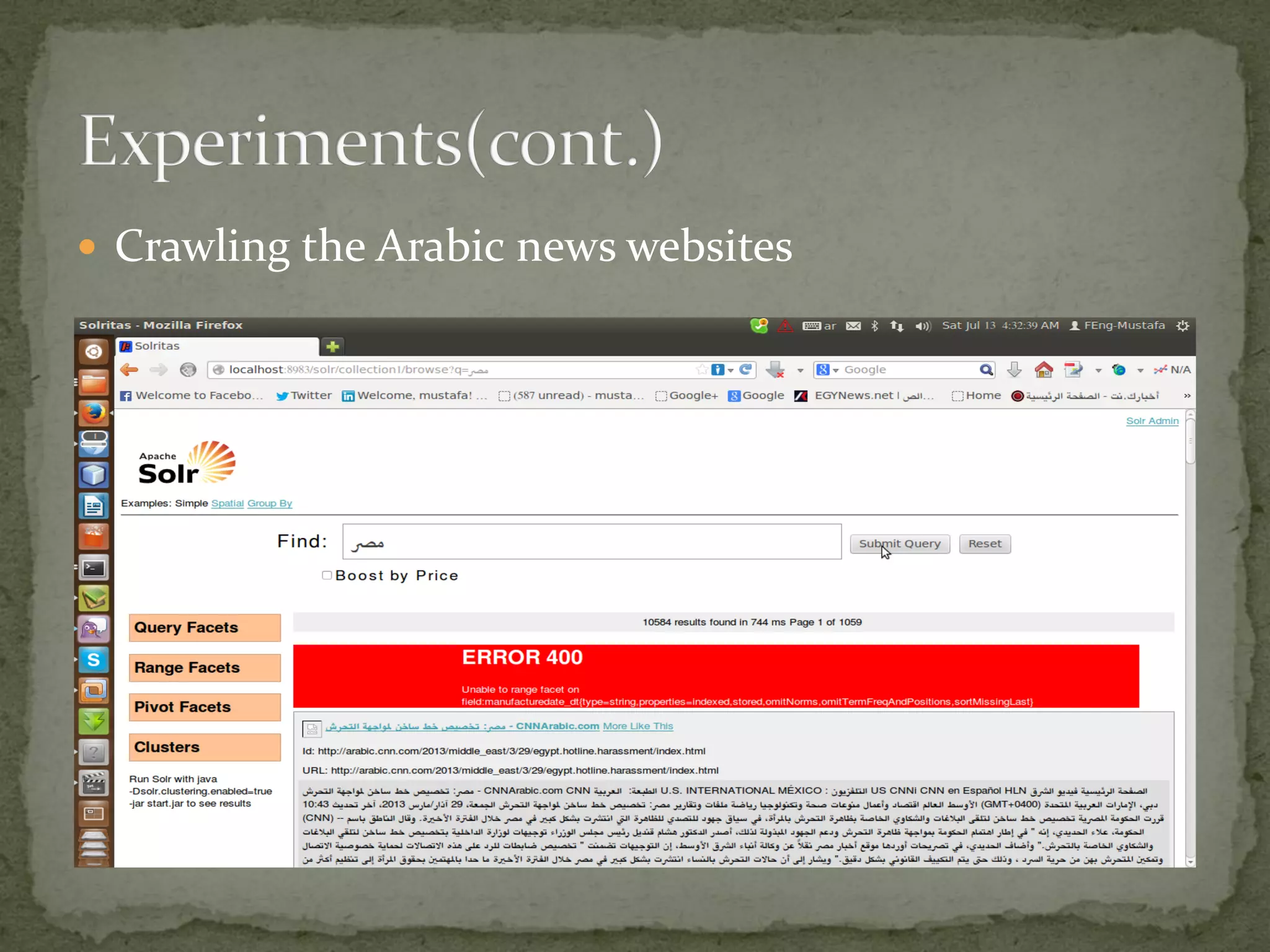

The project aims to develop a customized search engine for local and international news, supervised by Dr. Mohamed A. El-Rashidy and Eng. Ahmed Ghozia at Menoufiya University. It covers web crawling, indexing, and ranking with Lucene, Nutch, and Solr, with a focus on scalability and relevance. The document gives a detailed overview of how the search engine is built and set up, along with practical implementation steps.
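To make the indexing and ranking stages mentioned above more concrete, here is a minimal sketch in plain Python. It is not the project's implementation and does not use Lucene, Nutch, or Solr. It only illustrates the general idea of an inverted index with a simple TF-IDF style score. The sample documents, the document IDs, and the function names `tokenize`, `build_index`, and `search` are all assumptions made for illustration.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(docs):
    """Build an inverted index: term -> {doc_id: term frequency}."""
    index = defaultdict(dict)
    for doc_id, text in docs.items():
        for term, tf in Counter(tokenize(text)).items():
            index[term][doc_id] = tf
    return index


def search(index, n_docs, query):
    """Return doc IDs ordered by a simple TF-IDF style score (ties broken by ID)."""
    scores = defaultdict(float)
    for term in tokenize(query):
        postings = index.get(term, {})
        if not postings:
            continue  # term does not occur in any document
        # Rarer terms get a higher weight; this is a simplified IDF variant.
        idf = math.log(1 + n_docs / len(postings))
        for doc_id, tf in postings.items():
            scores[doc_id] += tf * idf
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical sample "crawled" news pages, invented for this sketch.
docs = {
    "local-1": "Cairo city council approves new metro line",
    "world-1": "World leaders meet for climate summit",
    "local-2": "Metro line delays frustrate Cairo commuters",
}
index = build_index(docs)

if __name__ == "__main__":
    print(search(index, len(docs), "climate summit"))
    print(search(index, len(docs), "cairo metro"))
```

In a real deployment of the kind the summary describes, a crawler such as Nutch would supply the documents, and Lucene or Solr would handle indexing and scoring with far more sophisticated analysis and ranking than this toy example.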

![A customized web search engine [autosaved]](https://image.slidesharecdn.com/acustomizedwebsearchengineautosaved-130724201343-phpapp02/75/A-customized-web-search-engine-autosaved-25-2048.jpg)