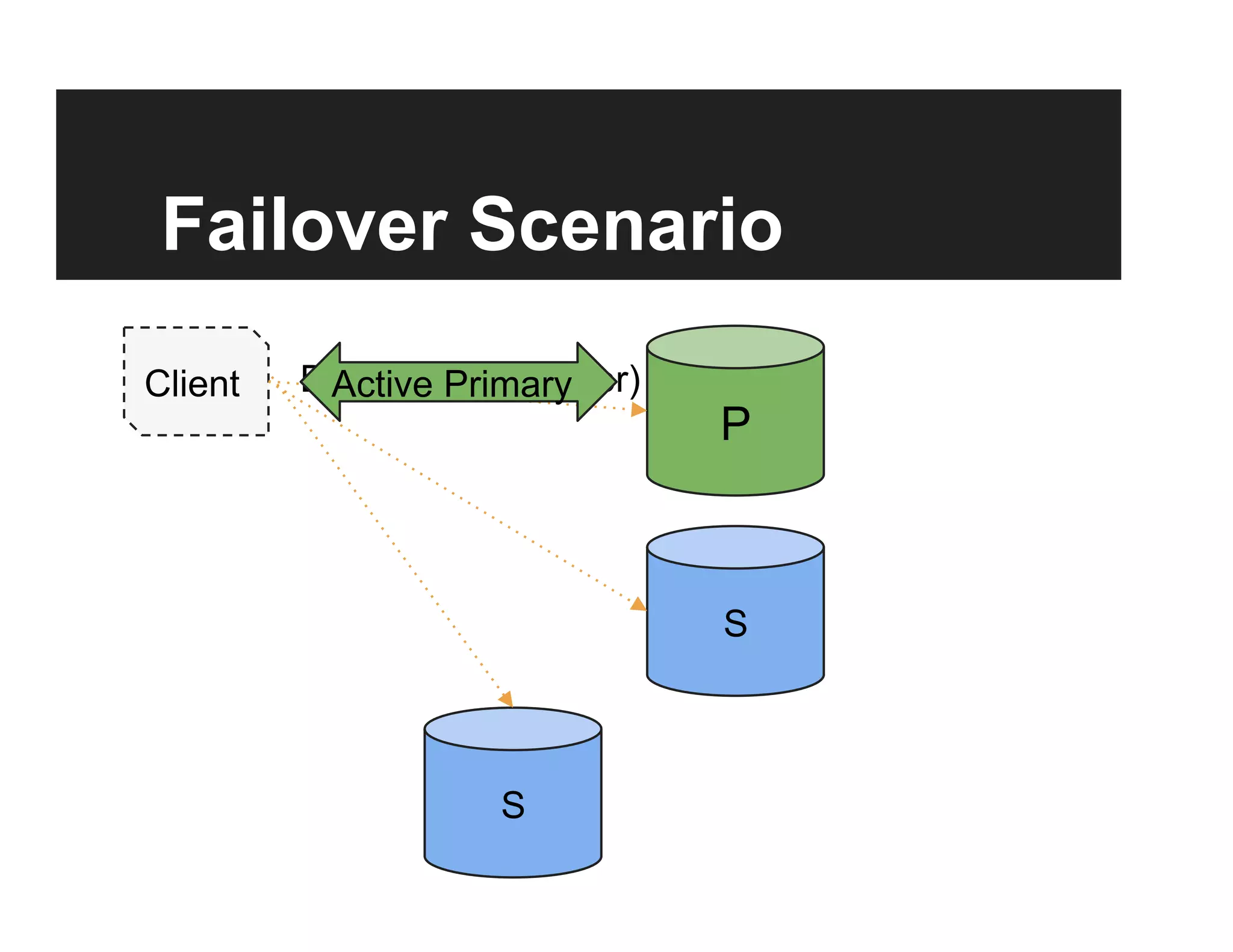

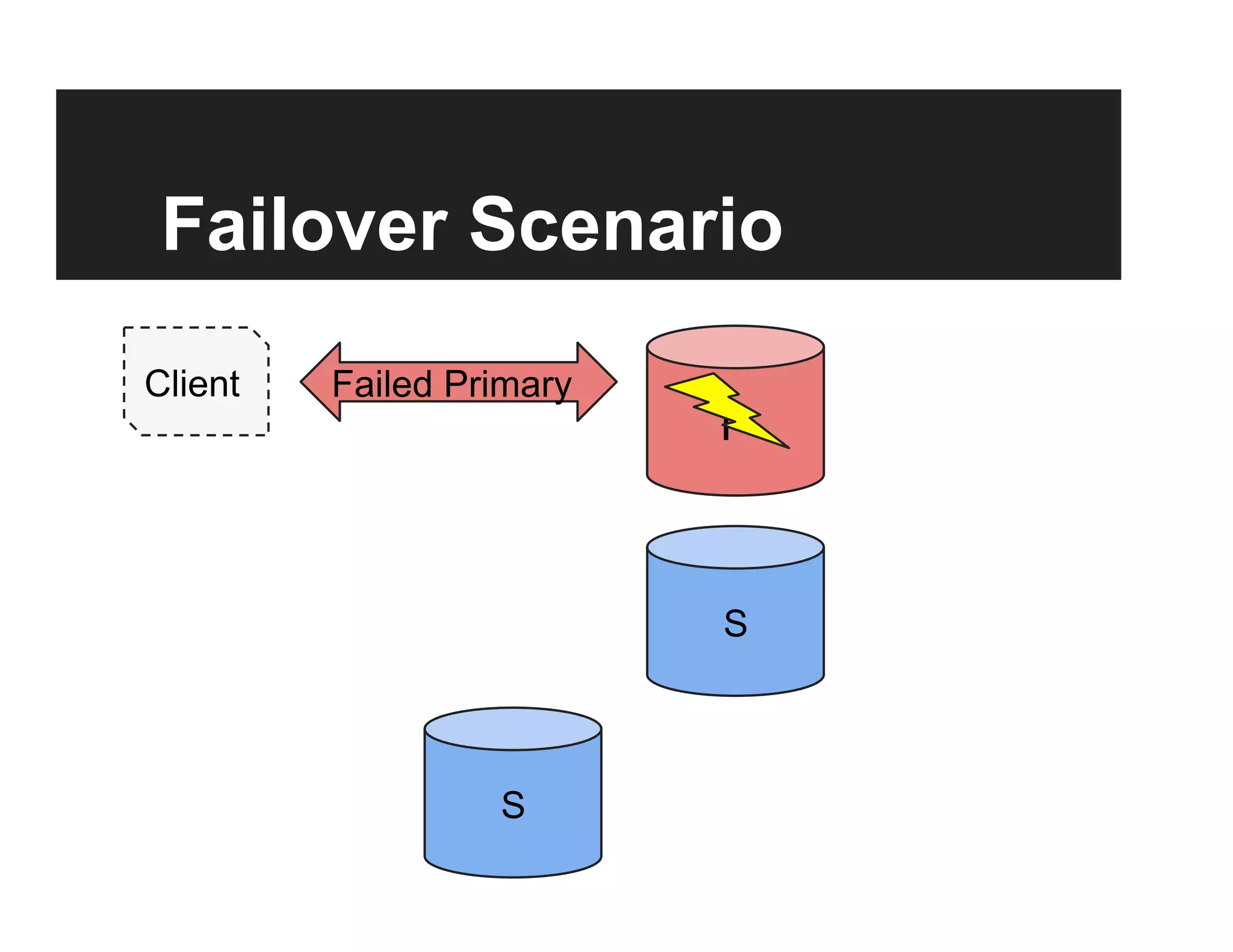

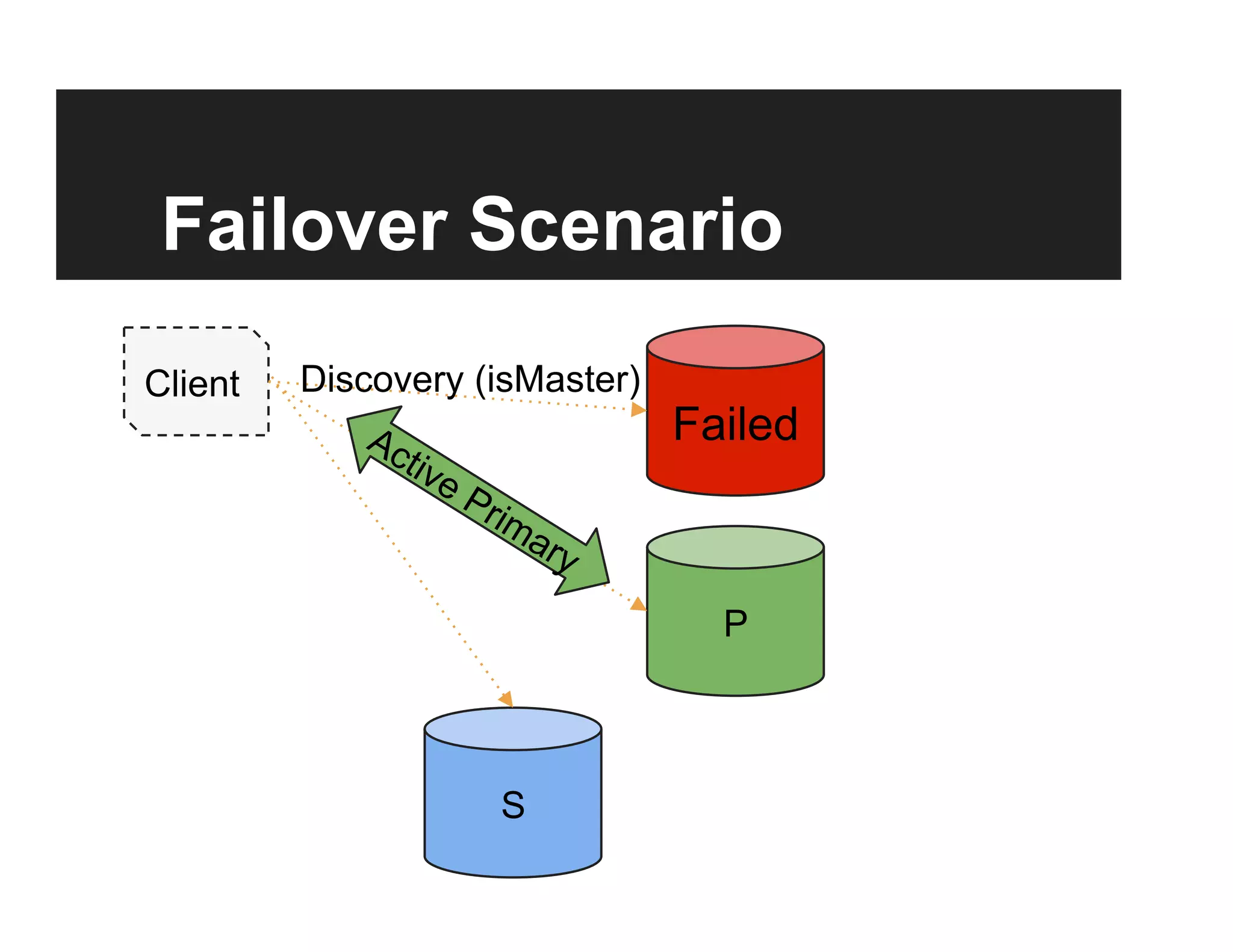

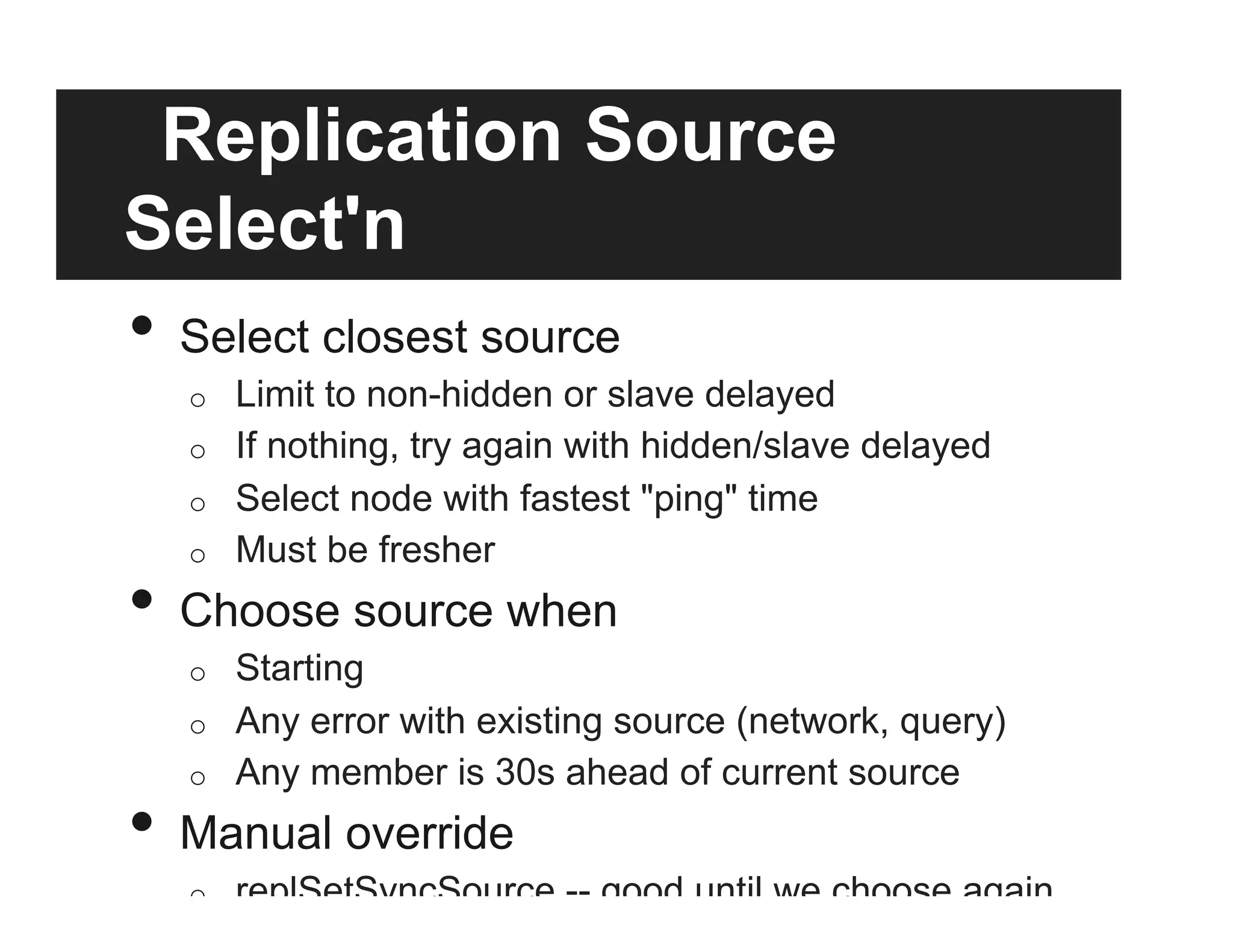

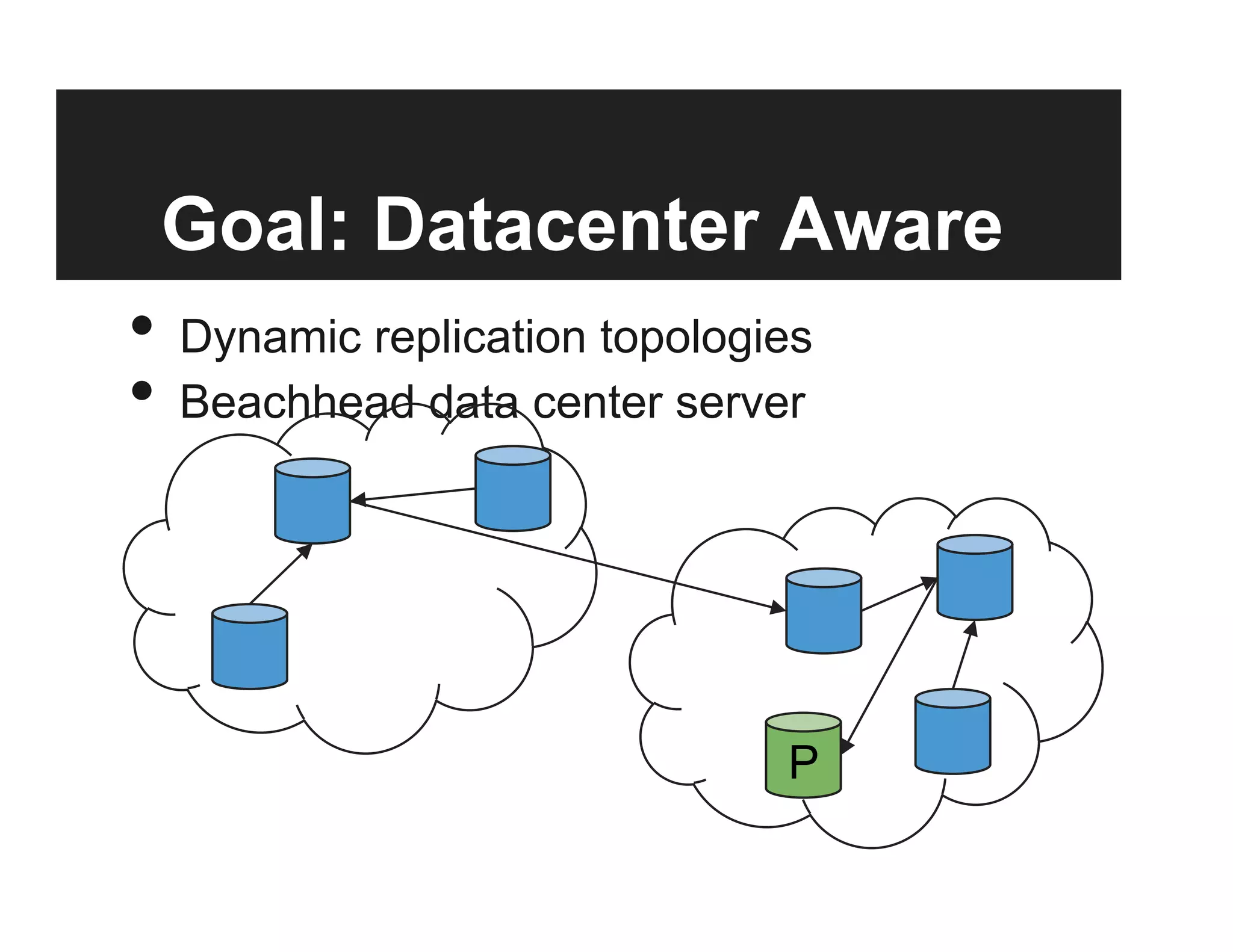

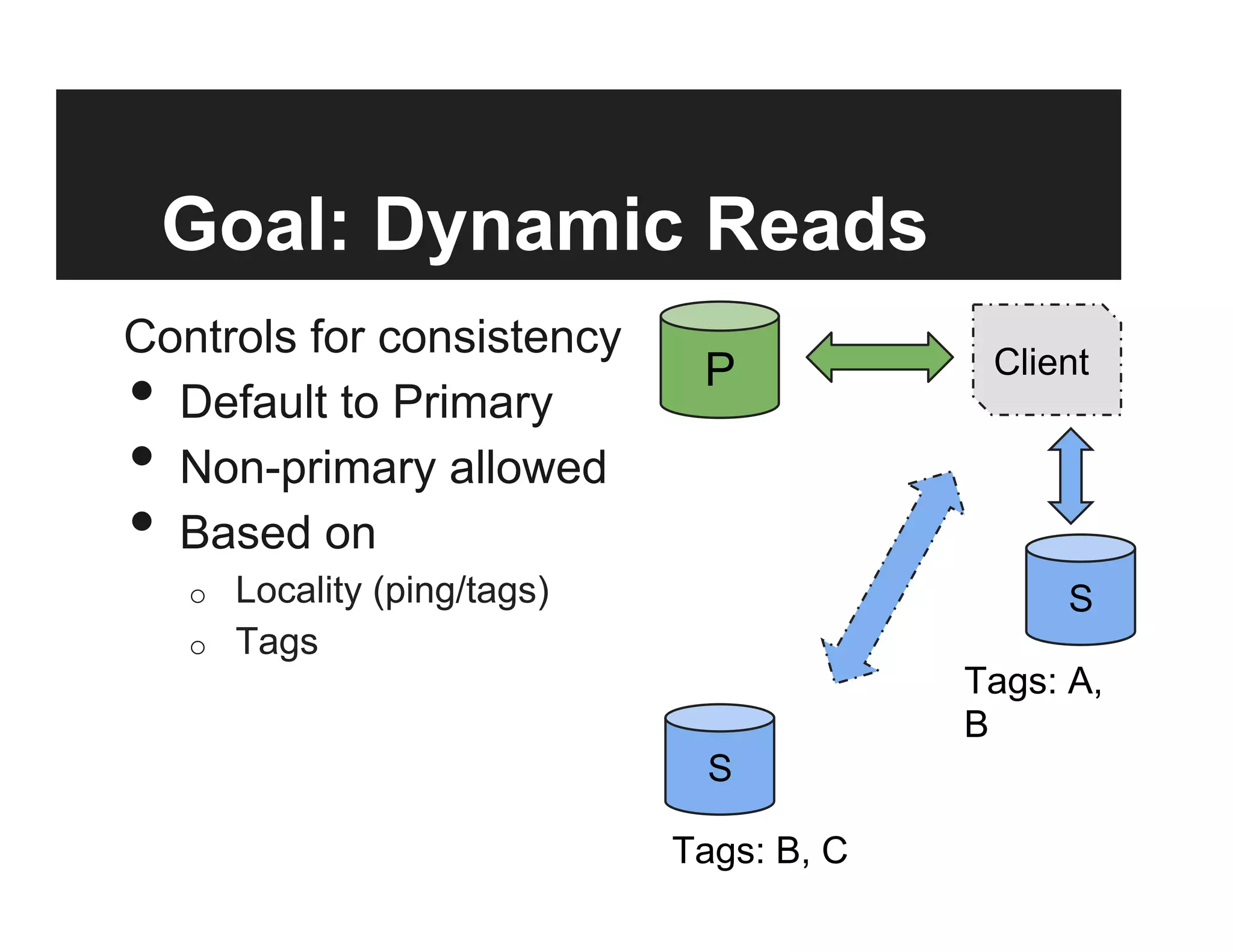

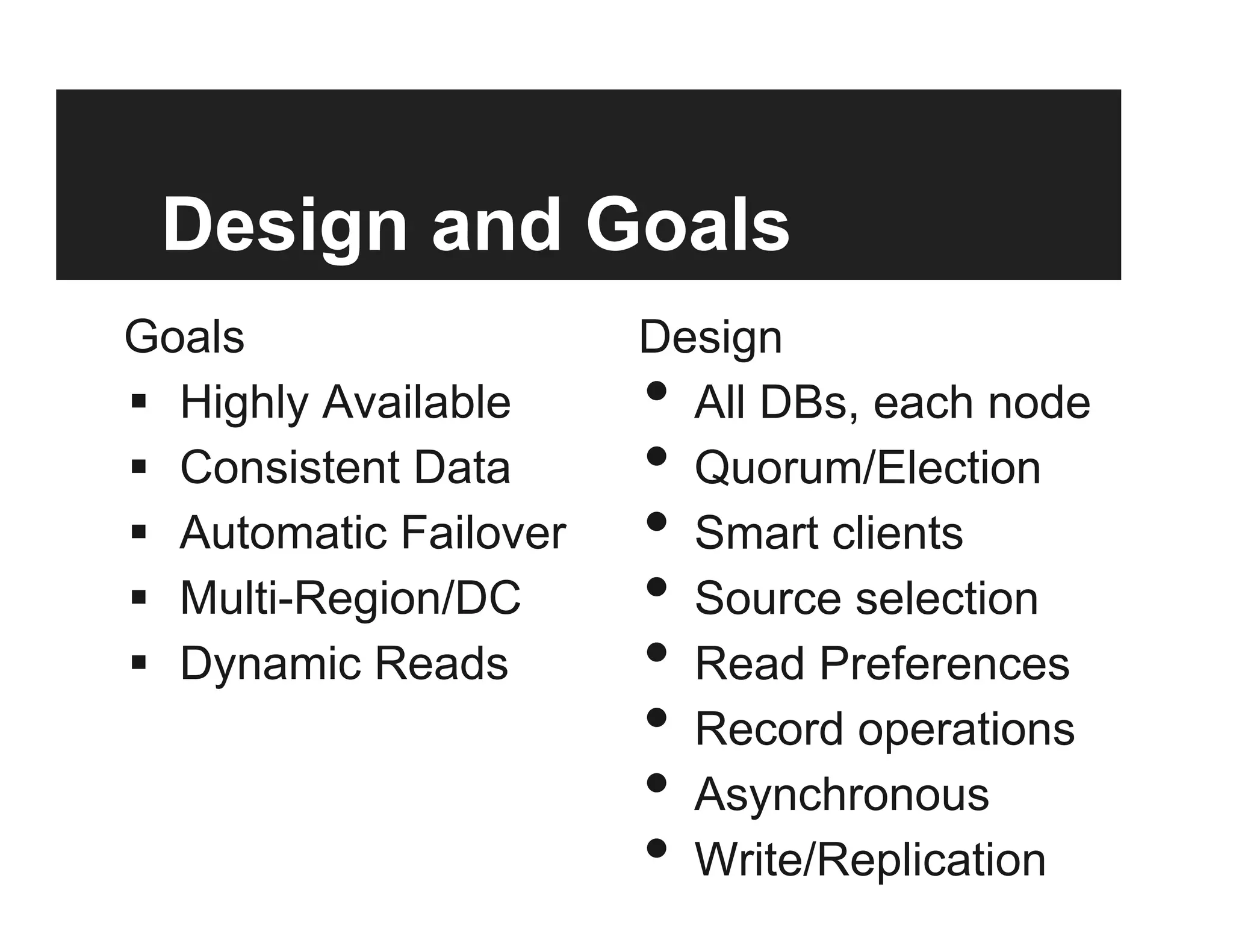

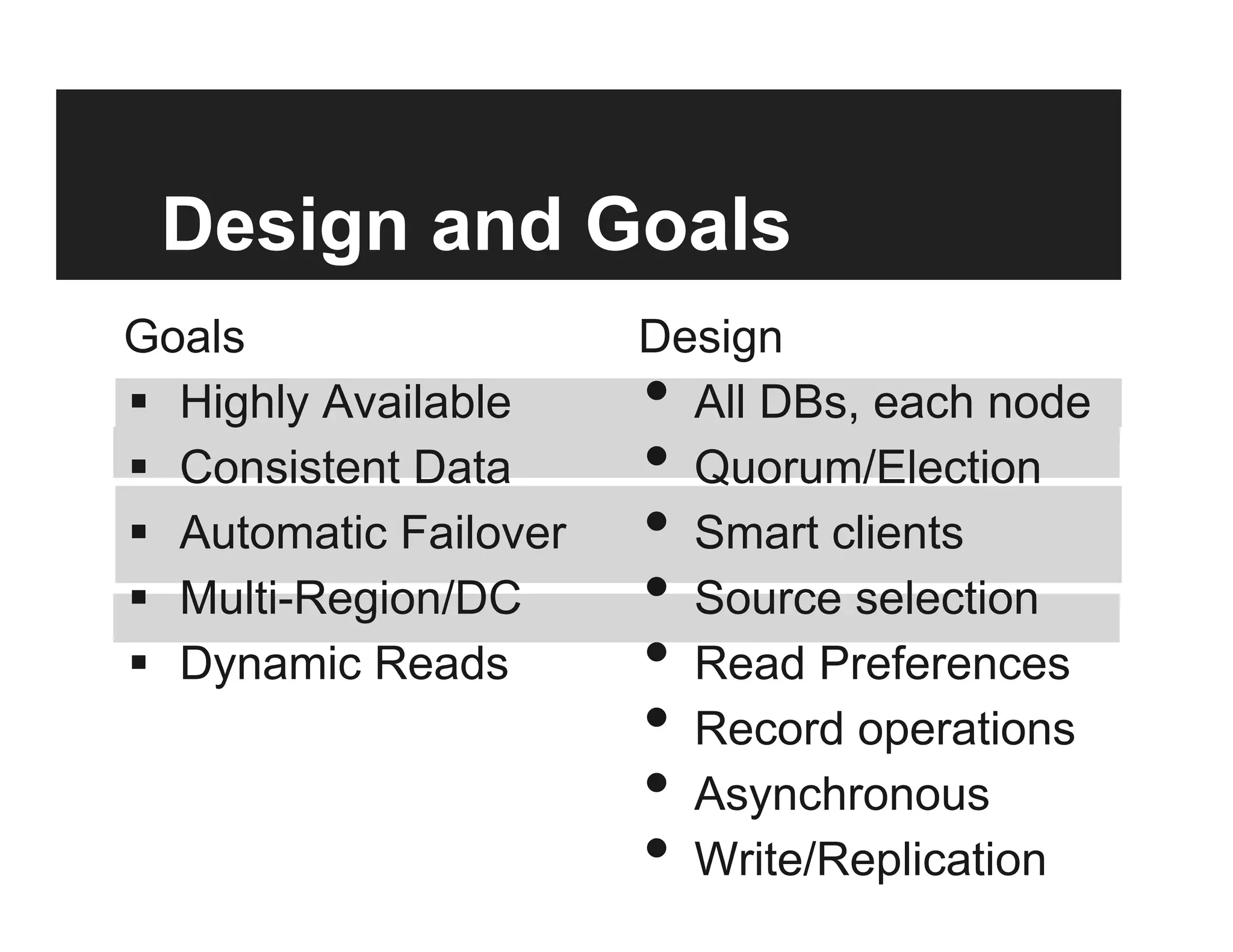

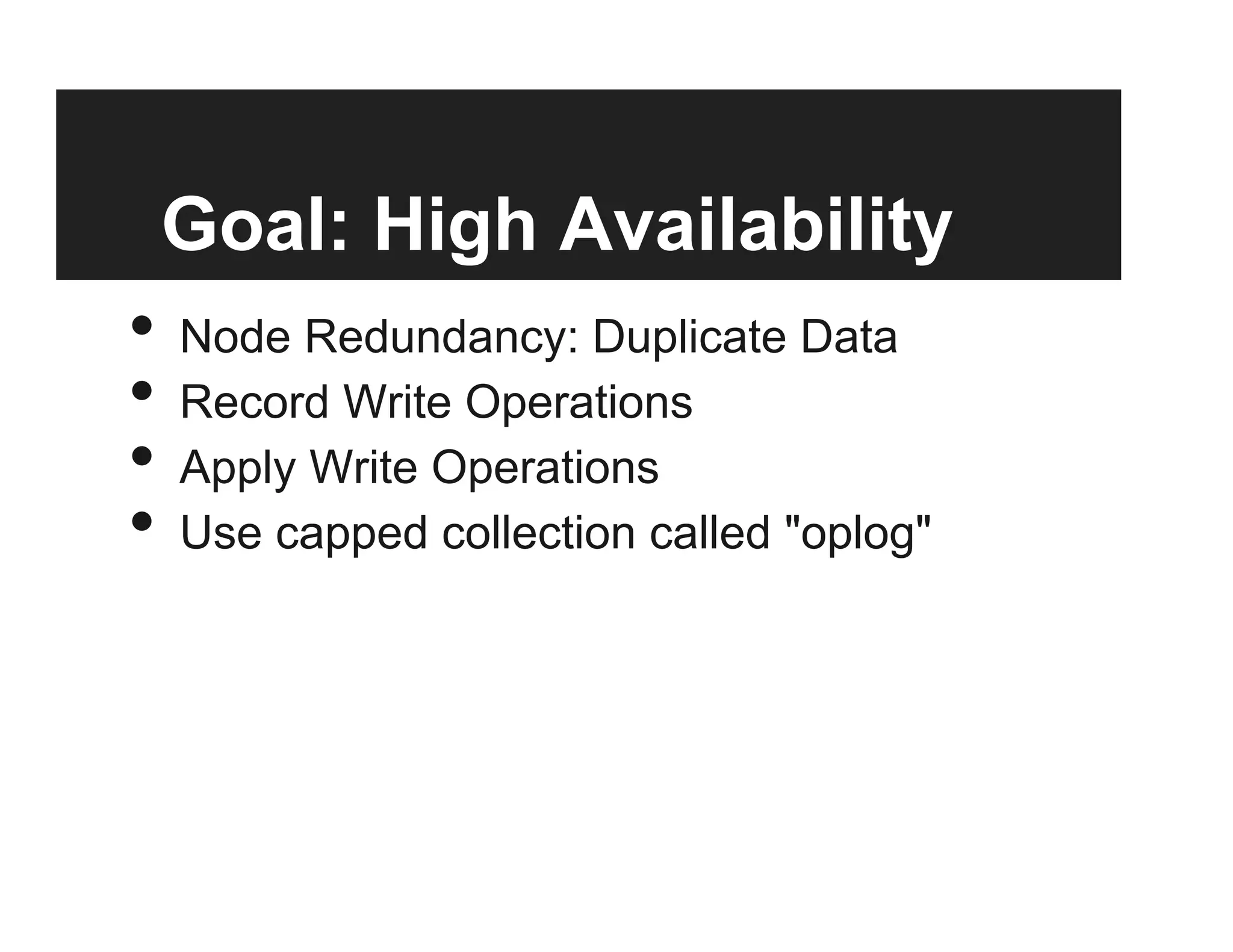

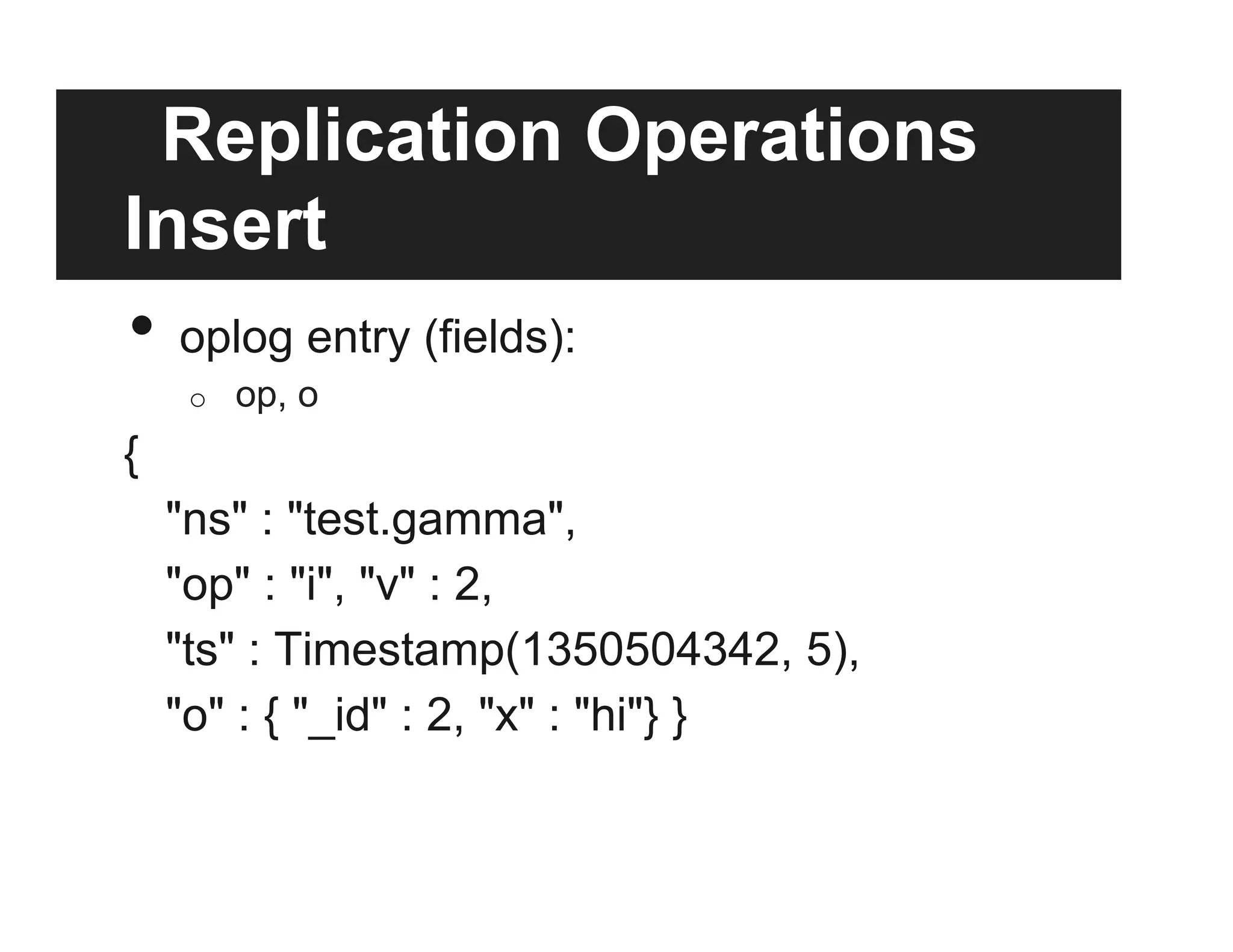

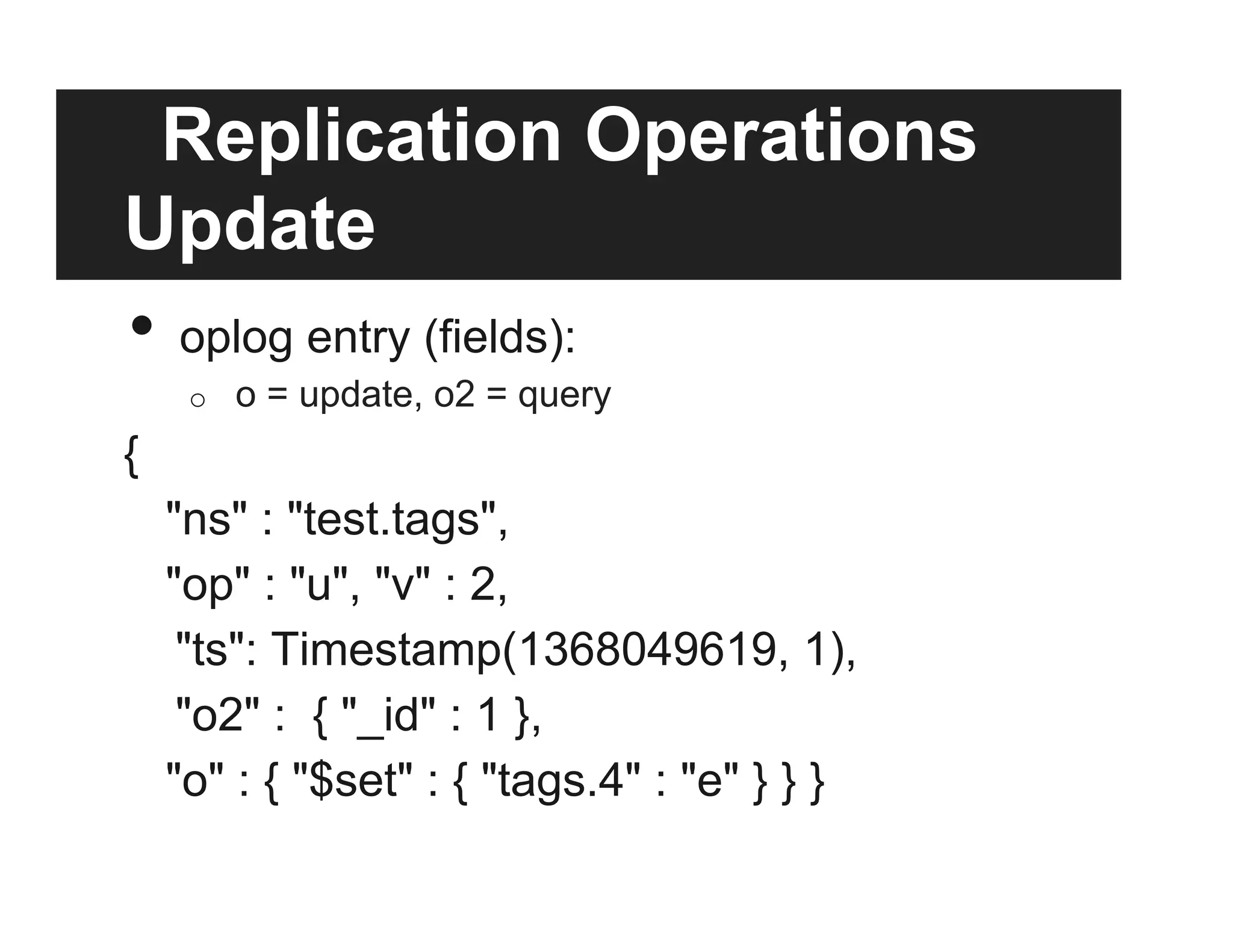

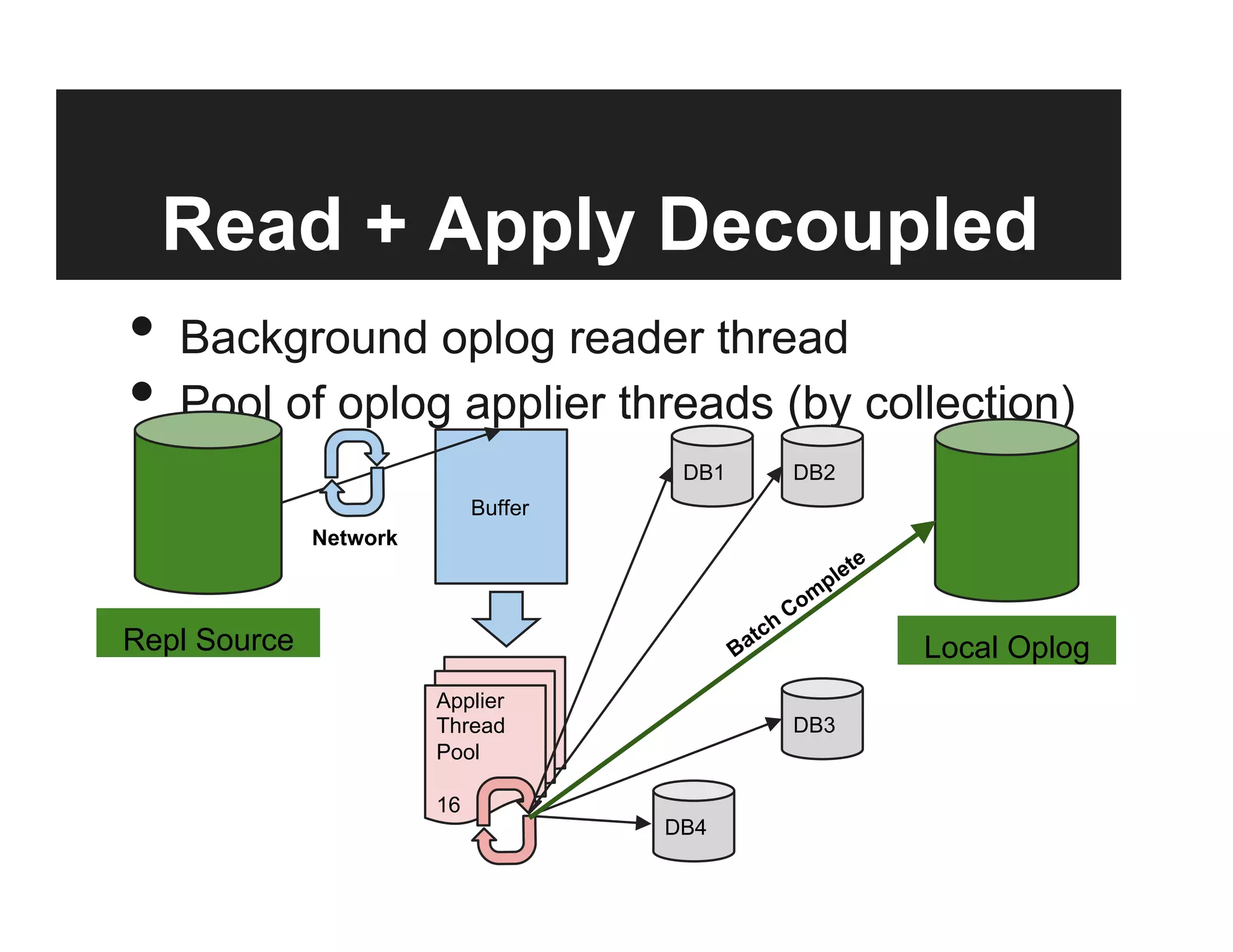

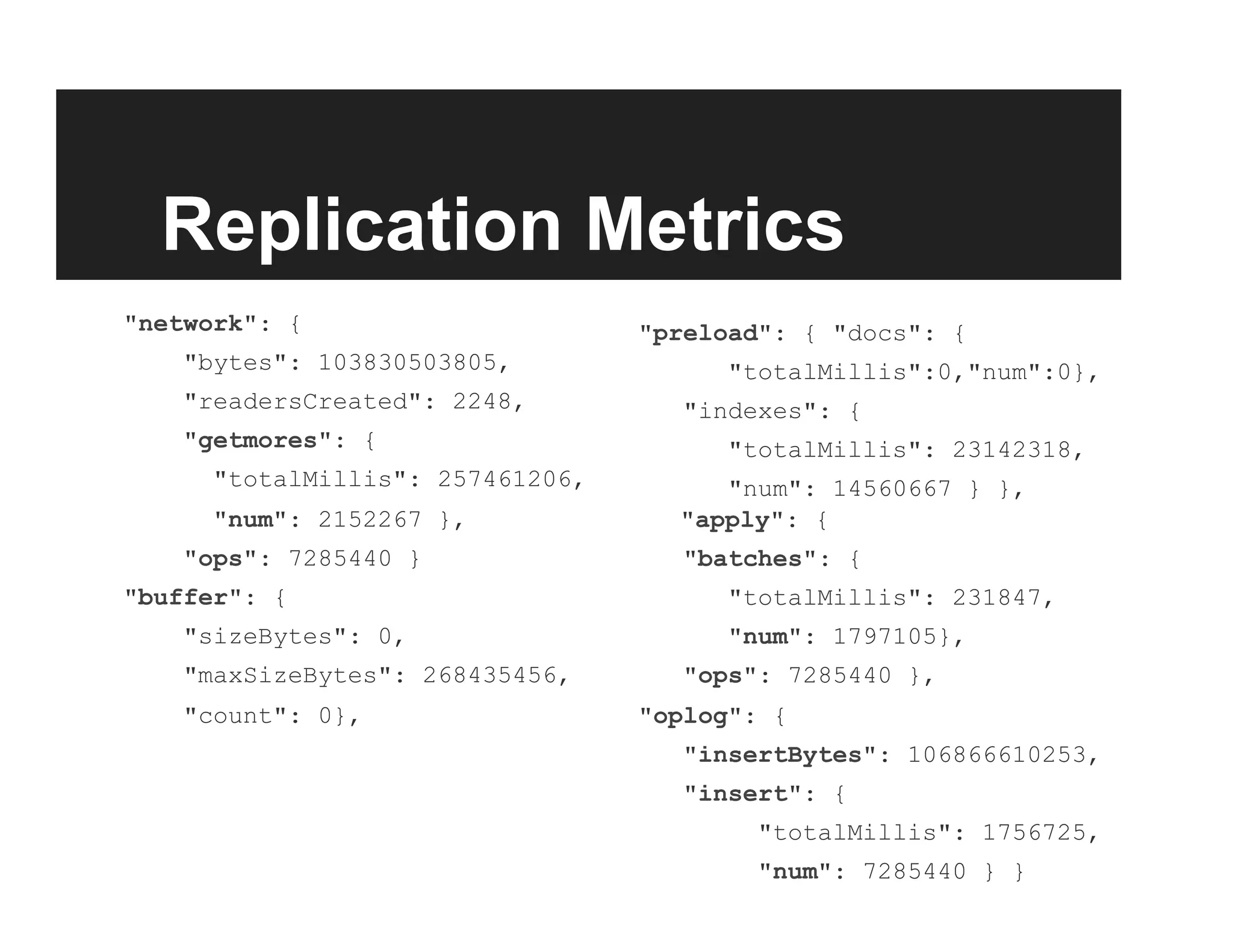

This document discusses the design and goals of advanced replication in MongoDB. The goals are high availability, consistent data, automatic failover across multiple regions or data centers, and dynamic reads. The design replicates all databases to every node, uses quorum and elections to keep data consistent, relies on smart clients and awareness of source selection, records operations in an oplog, and replicates asynchronously with write acknowledgements.
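The quorum idea mentioned above can be illustrated with a little arithmetic. The following is a minimal sketch, assuming an election succeeds only when a strict majority of voting members is reachable; the function names are hypothetical and are not part of any MongoDB API.

```python
def majority(voting_members: int) -> int:
    """Smallest vote count that is a strict majority of the voting members."""
    if voting_members < 1:
        raise ValueError("a replica set needs at least one voting member")
    return voting_members // 2 + 1


def can_elect_primary(voting_members: int, reachable: int) -> bool:
    """True if the reachable members form a majority (assumed election rule)."""
    return reachable >= majority(voting_members)


def fault_tolerance(voting_members: int) -> int:
    """How many voting members can be lost while a majority still remains."""
    return voting_members - majority(voting_members)


if __name__ == "__main__":
    for n in (3, 4, 5):
        print(n, majority(n), fault_tolerance(n))
```

Under this assumption an even-sized set tolerates no more failures than the odd-sized set one member smaller, which is one reason odd member counts are commonly preferred.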

![Discovery: isMaster command](https://image.slidesharecdn.com/7-130708161907-phpapp02/75/Advanced-Replication-Internals-18-2048.jpg)

Discovery

isMaster command:

- `setName: <name>,`
- `ismaster: true, secondary: false, arbiterOnly:`
- `hosts: [ <visible nodes> ],`
- `passives: [ <prio:0 nodes> ],`
- `arbiters: [ <nodes> ],`
- `primary: <active primary>,`
- `tags: {<tags>},`
- `me: <me>`
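To make the discovery step concrete, here is a minimal sketch of how a client might interpret a reply with the fields shown on the slide. It works on a plain dictionary rather than a live server. The helper names, host addresses, and tag values are hypothetical, and the role logic is an assumption based only on the `ismaster`, `secondary`, and `arbiterOnly` flags.

```python
from typing import Any, Dict, List


def node_role(reply: Dict[str, Any]) -> str:
    """Classify the responding node from the boolean flags in the reply."""
    if reply.get("ismaster"):
        return "primary"
    if reply.get("secondary"):
        return "secondary"
    if reply.get("arbiterOnly"):
        return "arbiter"
    return "other"


def known_members(reply: Dict[str, Any]) -> List[str]:
    """Collect every address the node reports, without duplicates, in order."""
    seen: List[str] = []
    for field in ("hosts", "passives", "arbiters"):
        for address in reply.get(field, []):
            if address not in seen:
                seen.append(address)
    return seen


# Hypothetical reply, shaped like the fields listed on the slide.
sample_reply = {
    "setName": "rs0",
    "ismaster": True,
    "secondary": False,
    "hosts": ["a.example.net:27017", "b.example.net:27017"],
    "passives": ["c.example.net:27017"],
    "arbiters": ["d.example.net:27017"],
    "primary": "a.example.net:27017",
    "tags": {"dc": "east"},
    "me": "a.example.net:27017",
}

if __name__ == "__main__":
    print(node_role(sample_reply))
    print(known_members(sample_reply))
```

A smart client could use the combined member list to decide which other nodes to probe, and compare `primary` with `me` to check whether the responding node is itself the active primary.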