This document provides 7 tips for using Apache Spark efficiently:

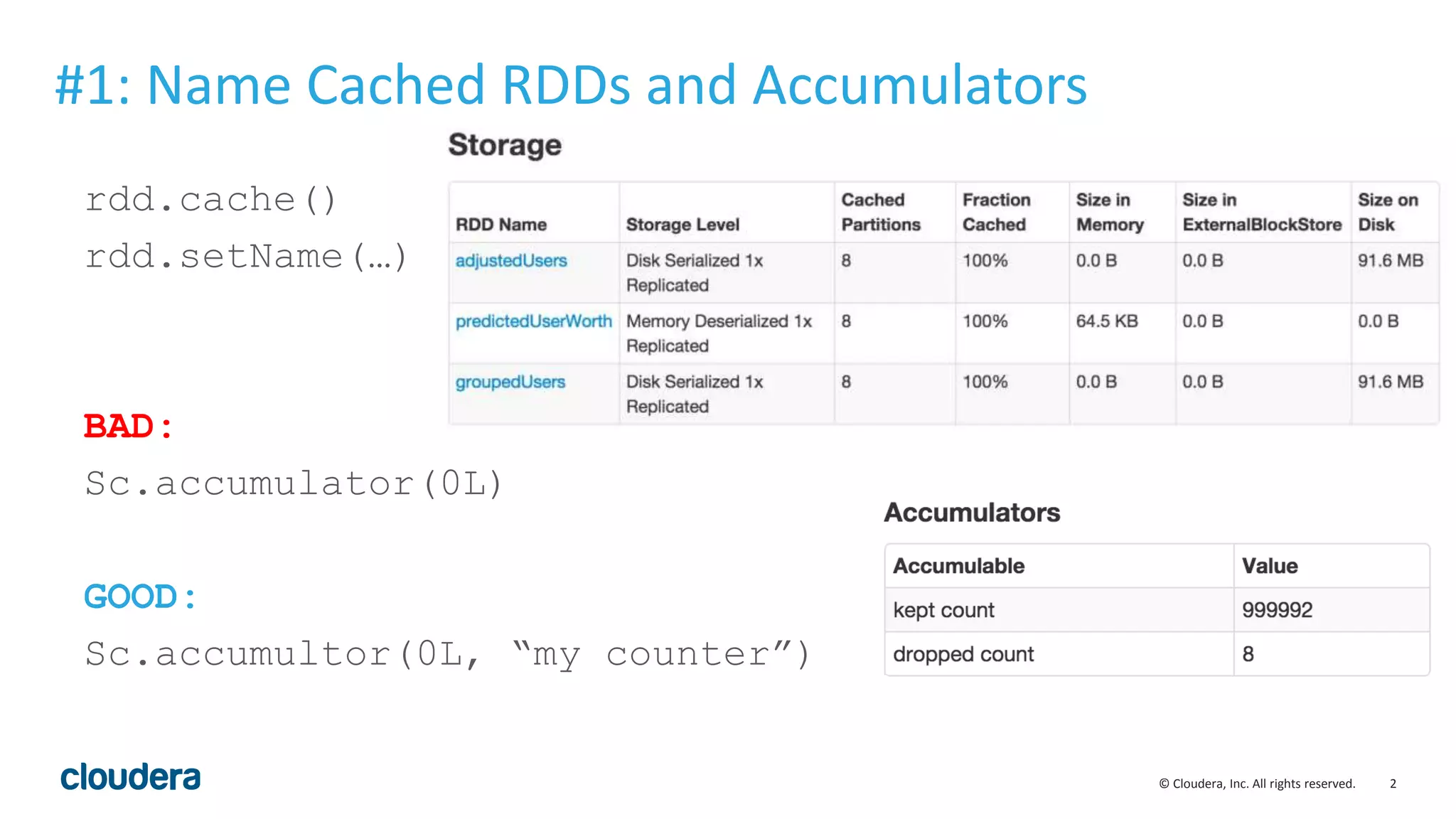

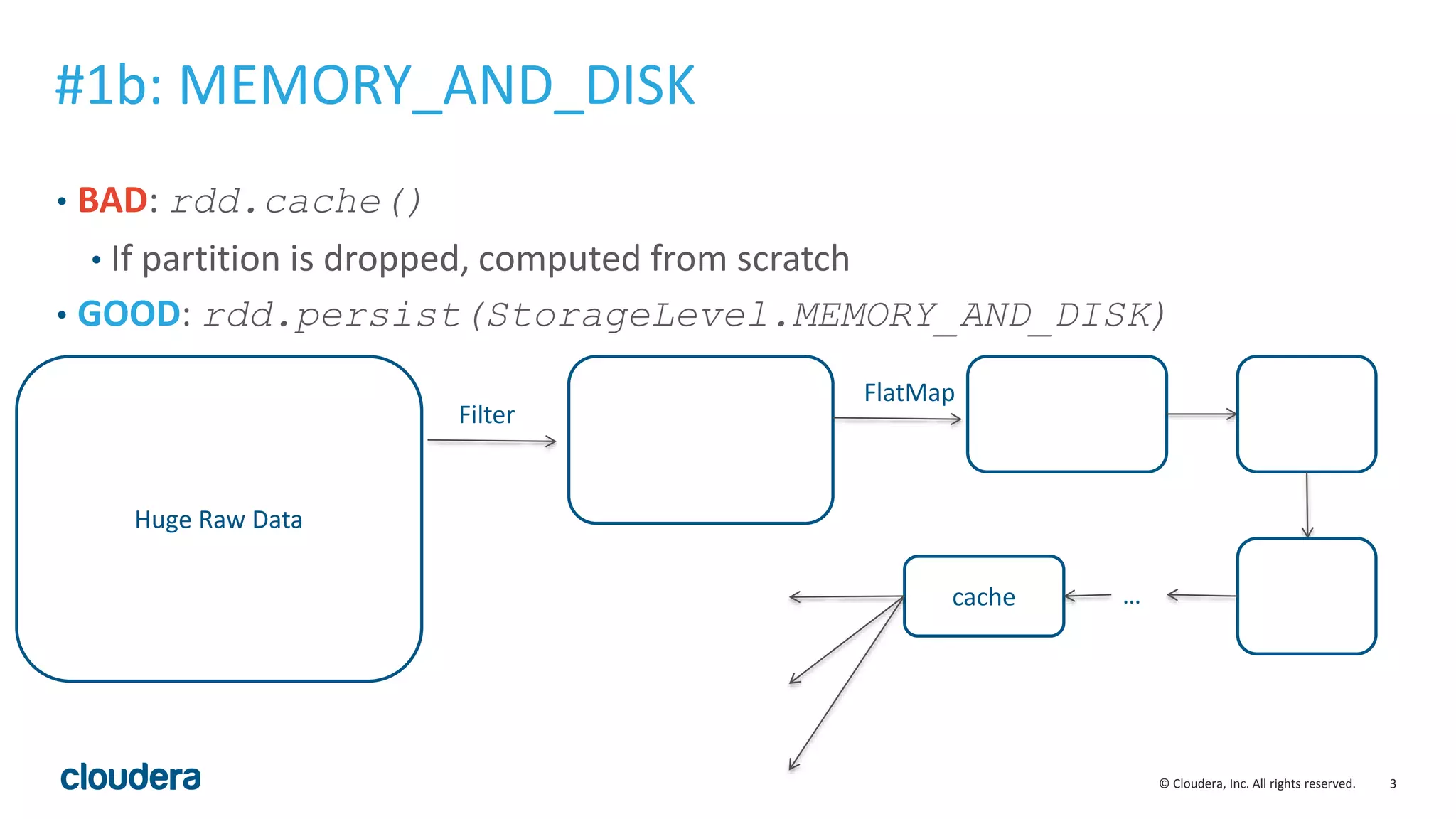

1. Name cached RDDs and accumulators for debugging.

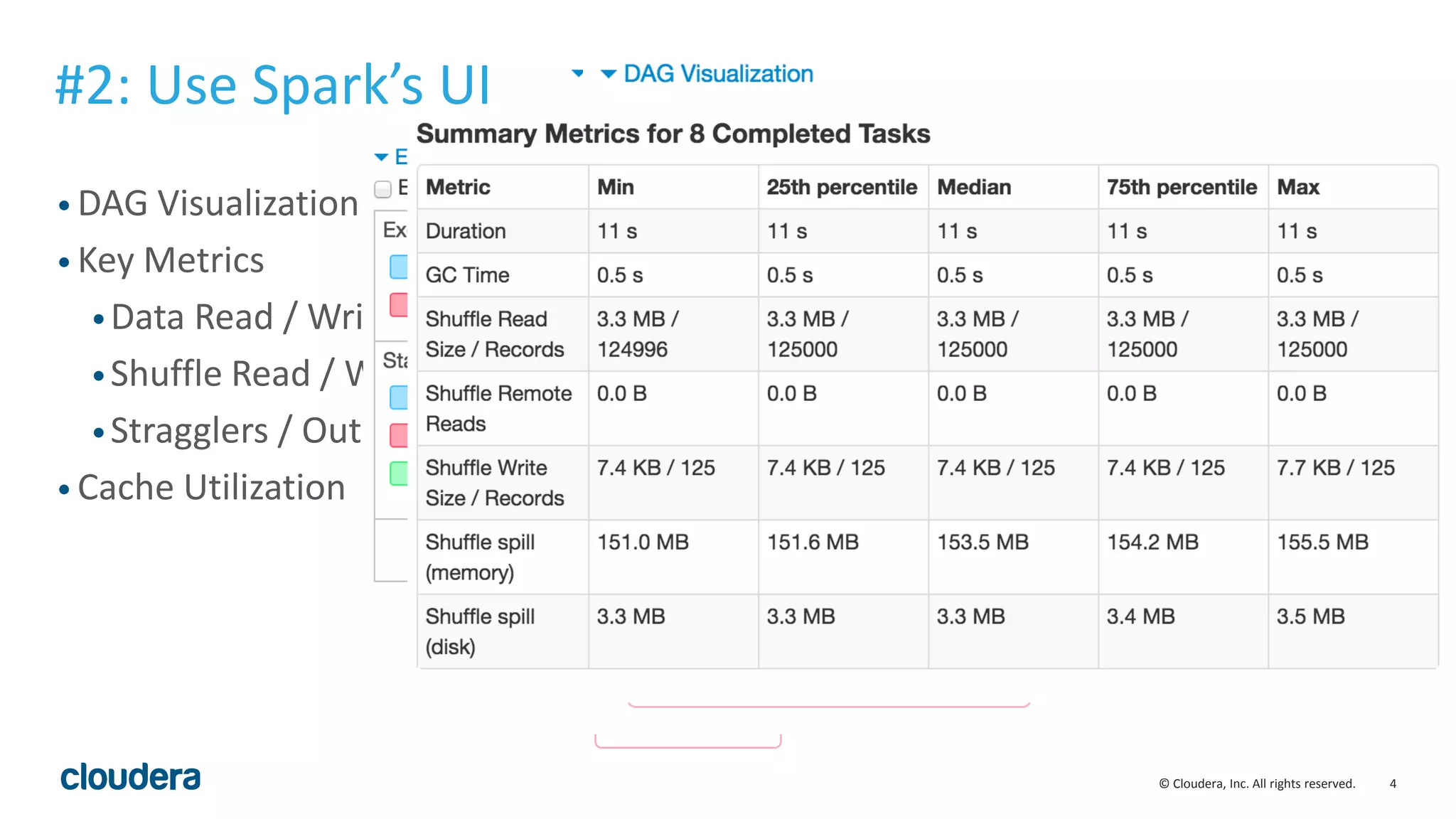

2. Use Spark's UI to visualize jobs and monitor metrics like data read/written and shuffle operations.

3. Add counters to debug jobs and sample errors.

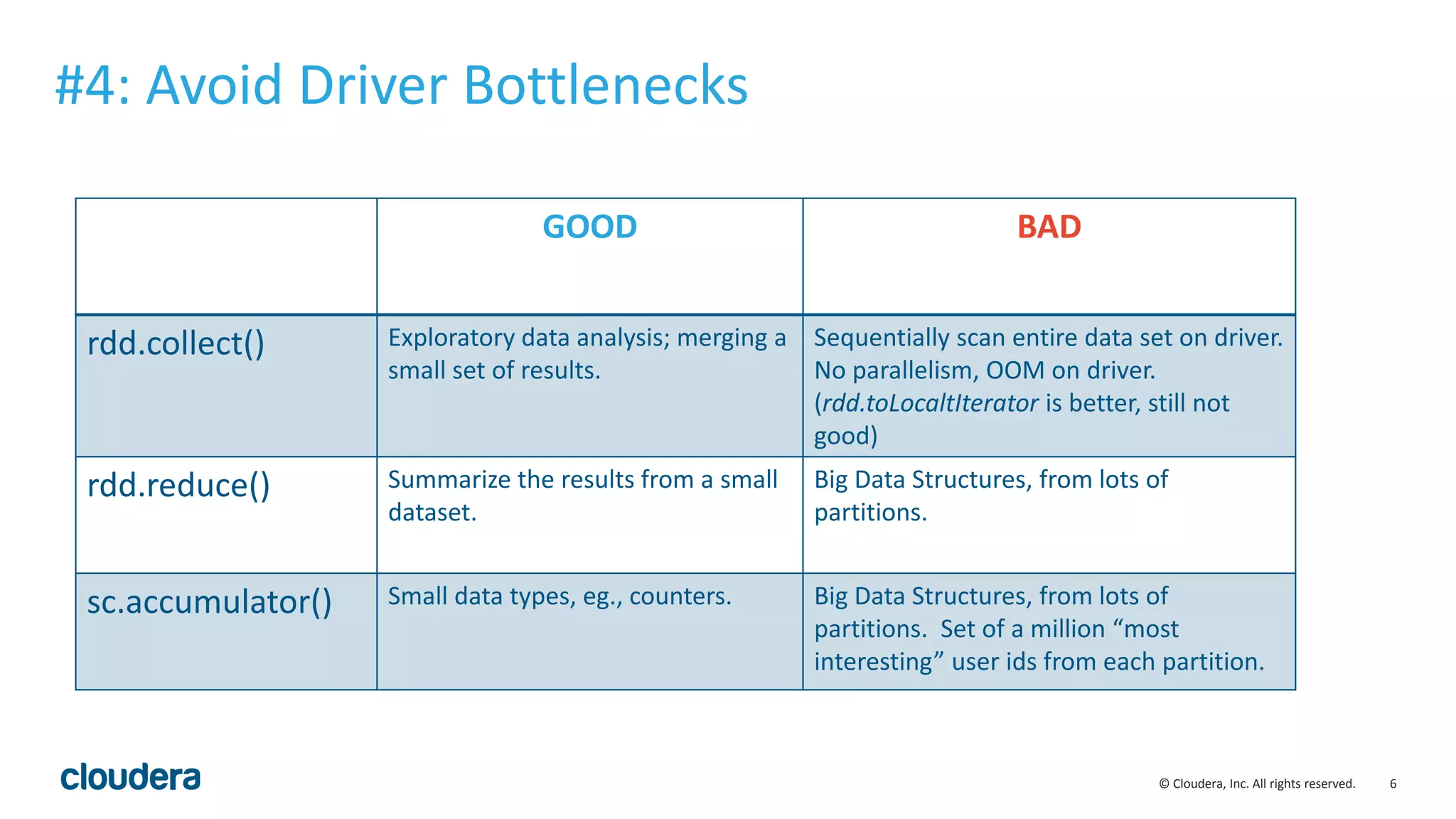

4. Avoid driver bottlenecks by using distributed operations instead of collecting data to the driver.

5. Develop Spark applications in Scala for simpler code.

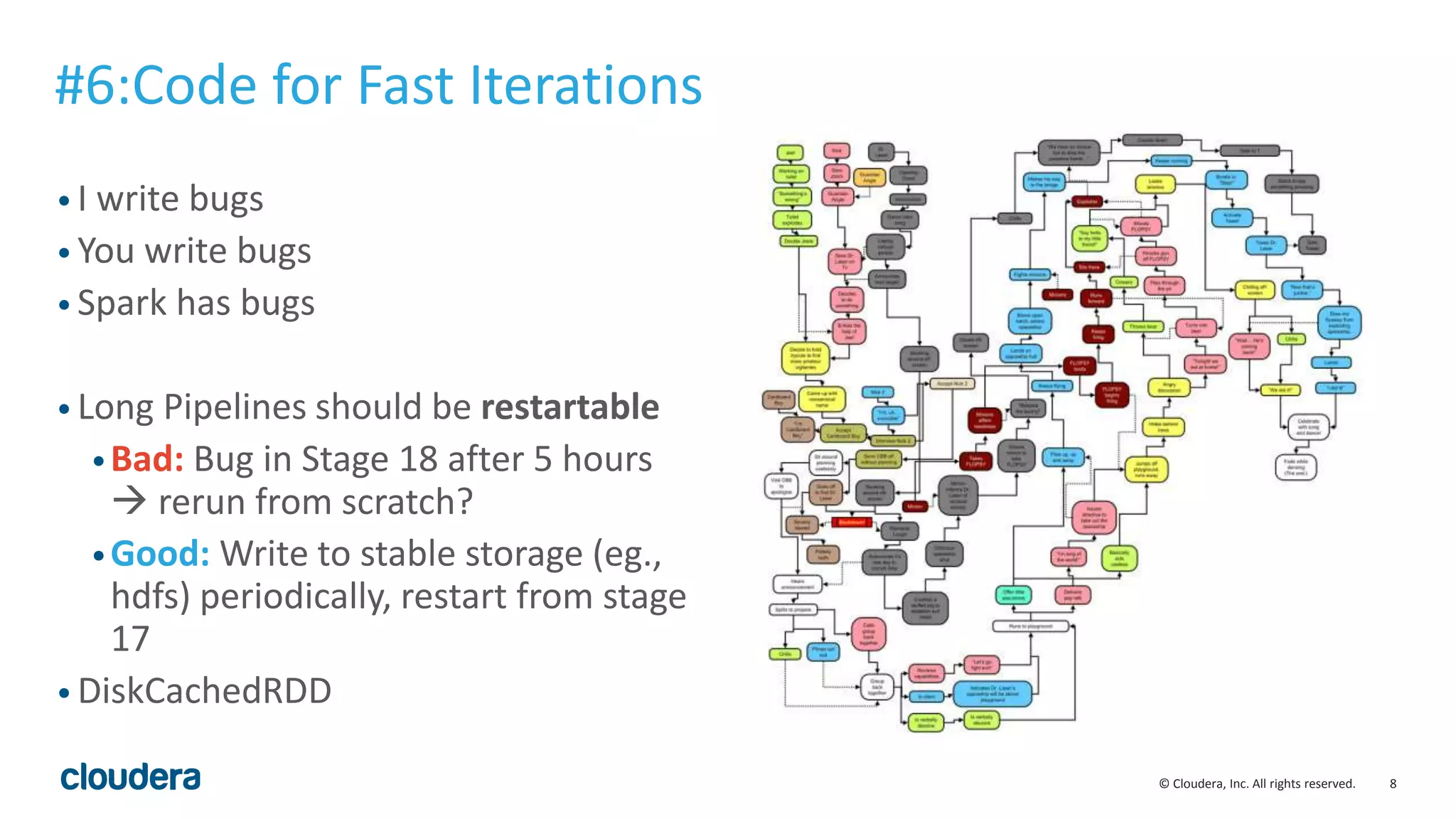

6. Write data periodically to storage for faster iteration if jobs fail.

7. Perform narrow joins when possible to improve performance.

![5© Cloudera, Inc. All rights reserved.

• Use Sample Code

•Count Errors

•Sample Errors

• SparkListener to output updates

• https://gist.github.com/squito/2f7cc0

2c313e4c9e7df4

#3: Debug Counters

val parseErrors = ErrorTracker(

“parsing errors", sc)

val allParsed: RDD[T] =

sc.textFile(inputFile).flatMap { line =>

try {

val r = Some(parser(line))

parseErrors.localValue.ok()

r

} catch {

case NonFatal(ex) =>

parseErrors.localValue.error(line)

None

}

}](https://image.slidesharecdn.com/5apachesparktips-151006174503-lva1-app6892/75/5-Apache-Spark-Tips-in-5-Minutes-5-2048.jpg)