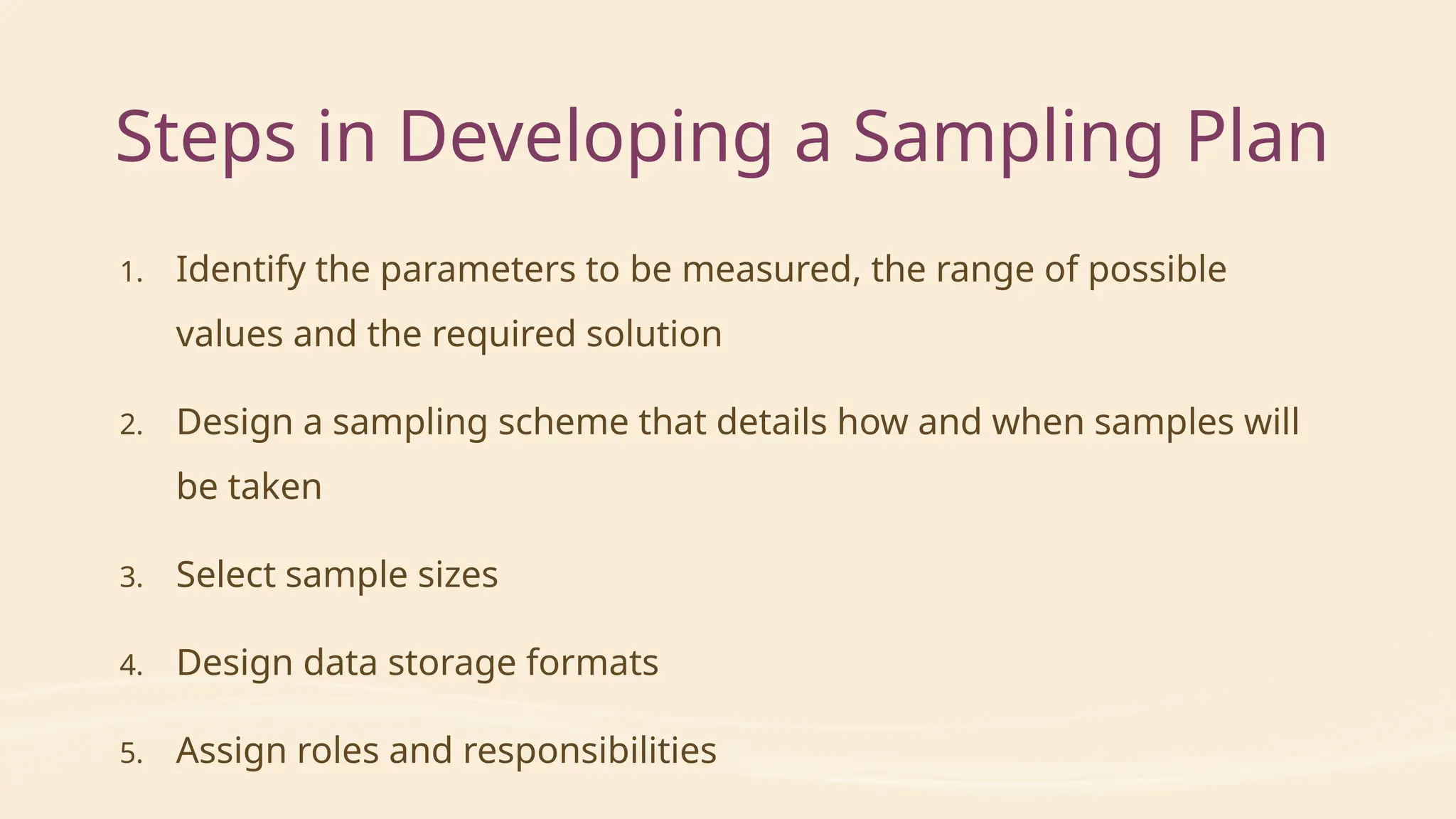

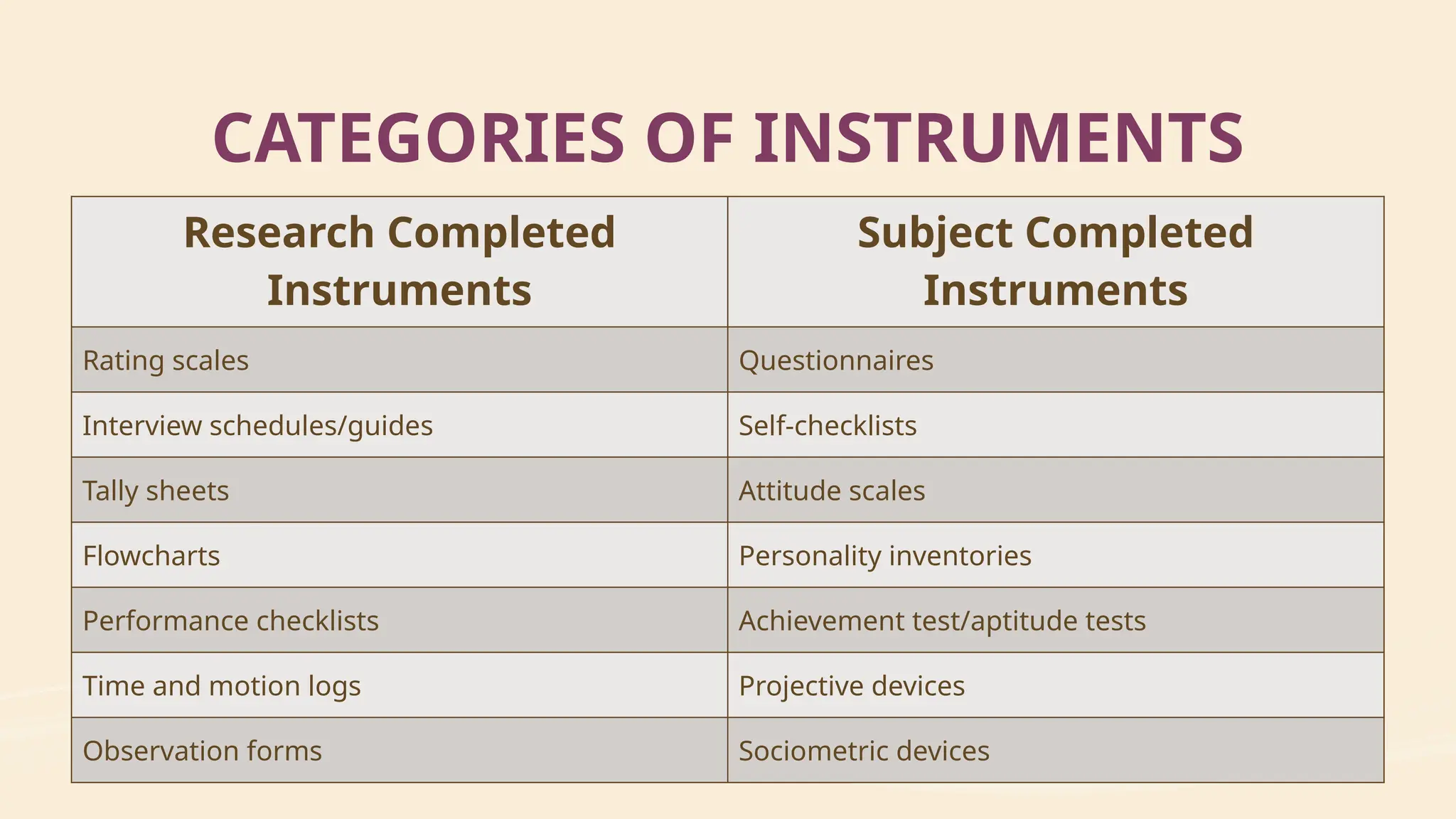

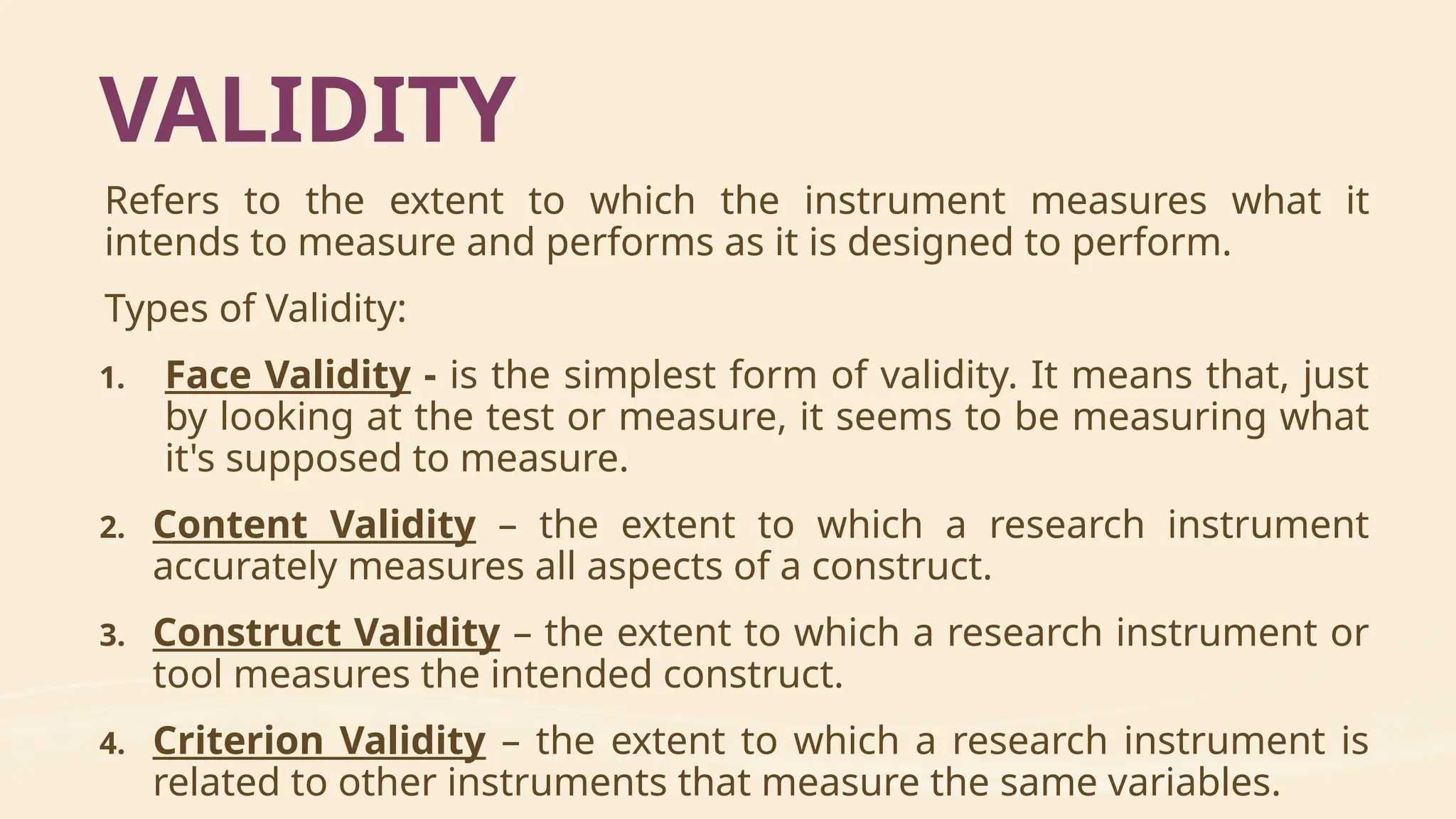

The document outlines the purpose and methods of descriptive research design, including how to collect and analyze data systematically through techniques such as sampling, interviews, and observation. It emphasizes the validity and reliability of research instruments and gives detailed guidance on writing the research report and structuring the methodology section. Its overall aim is to help researchers describe the characteristics of a population accurately while ensuring that the data collected supports the research objectives.