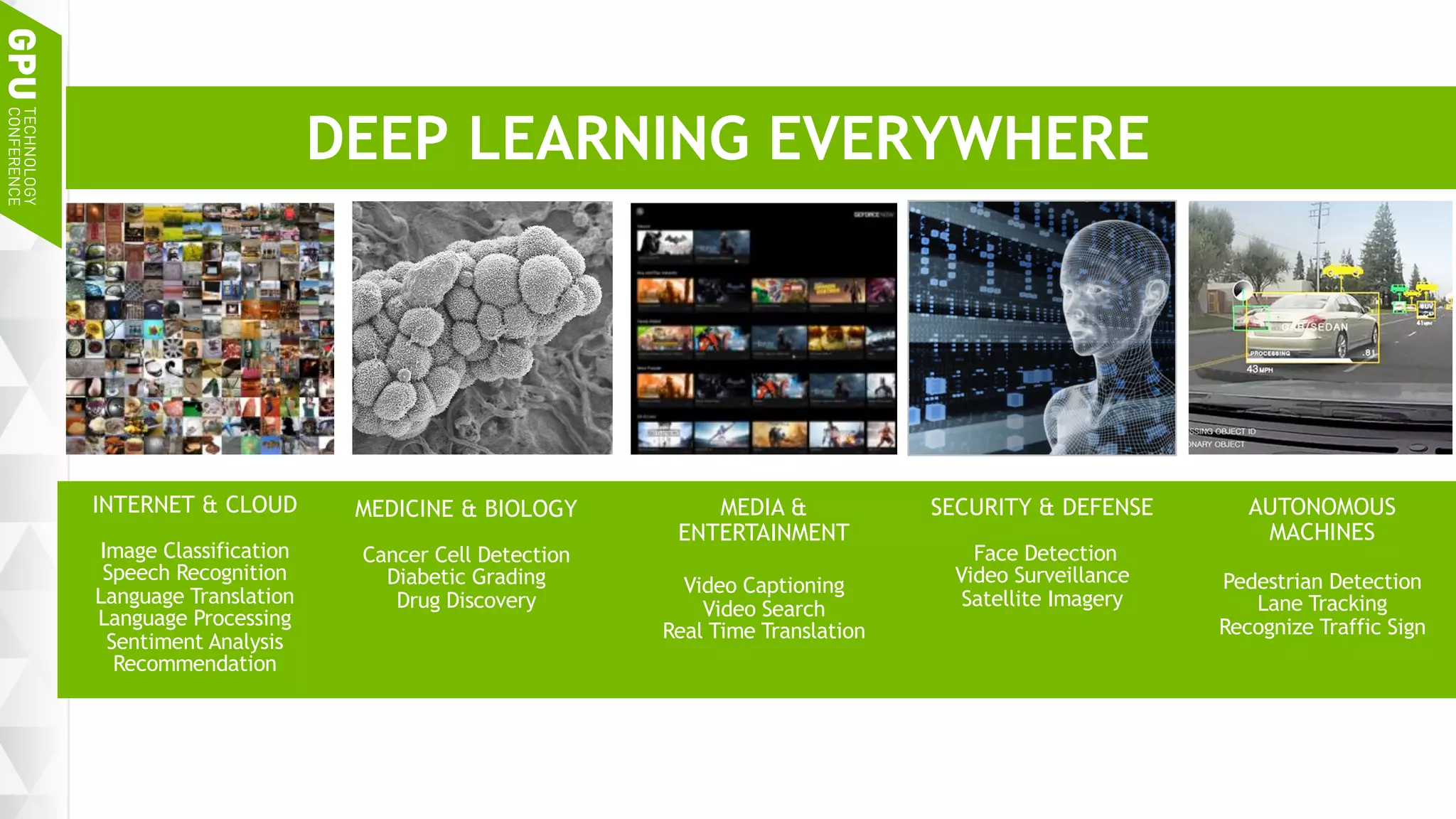

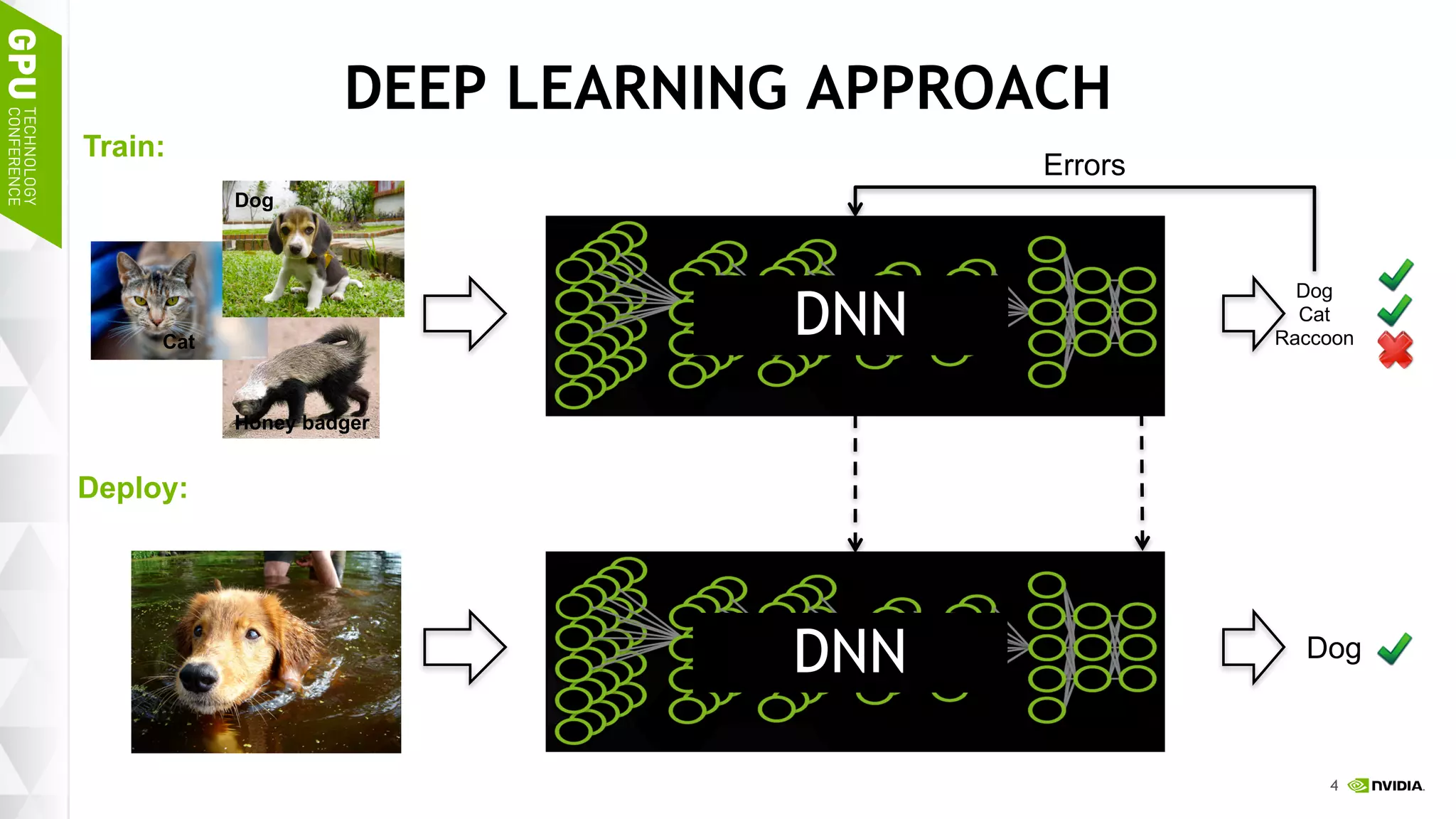

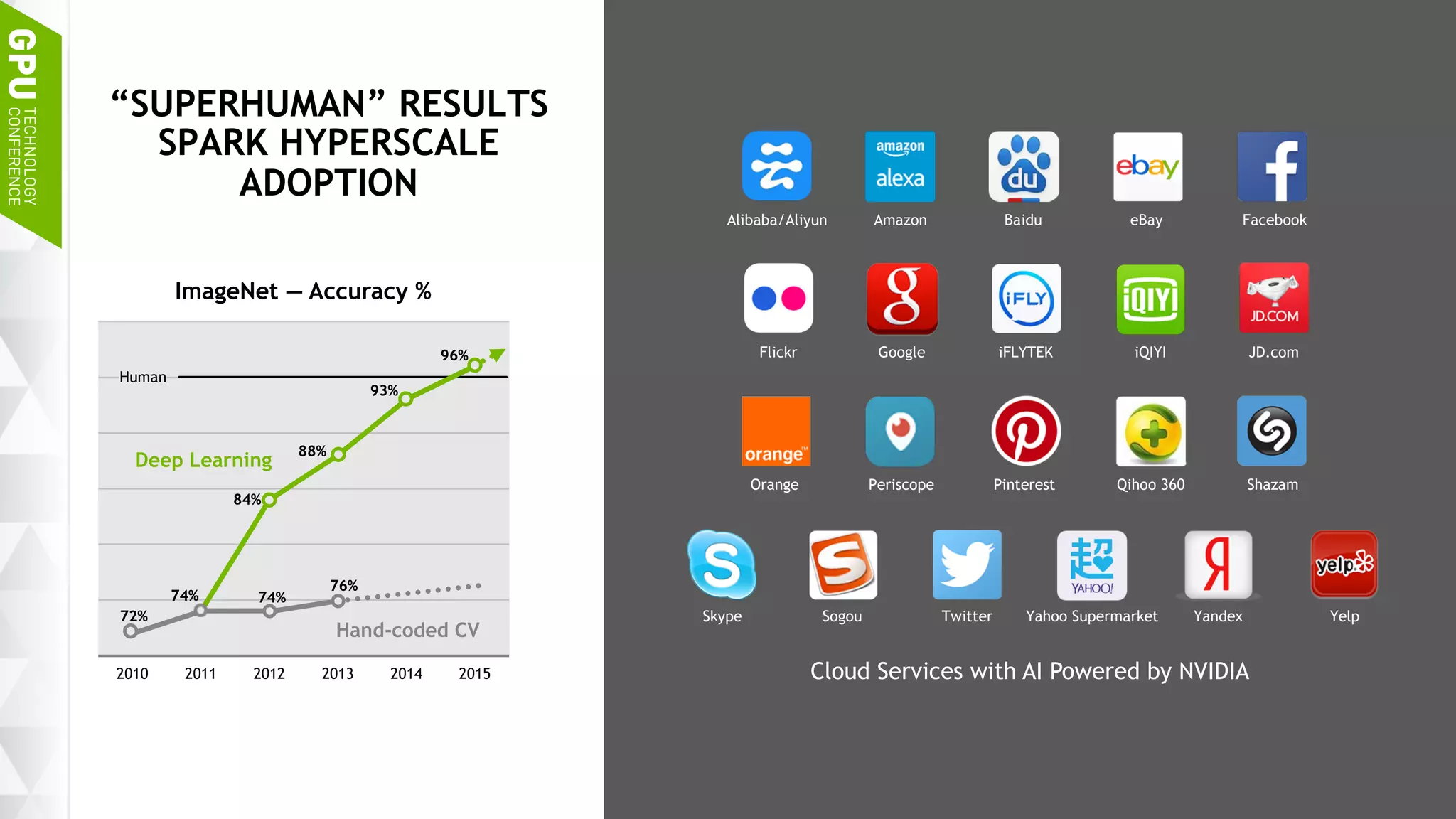

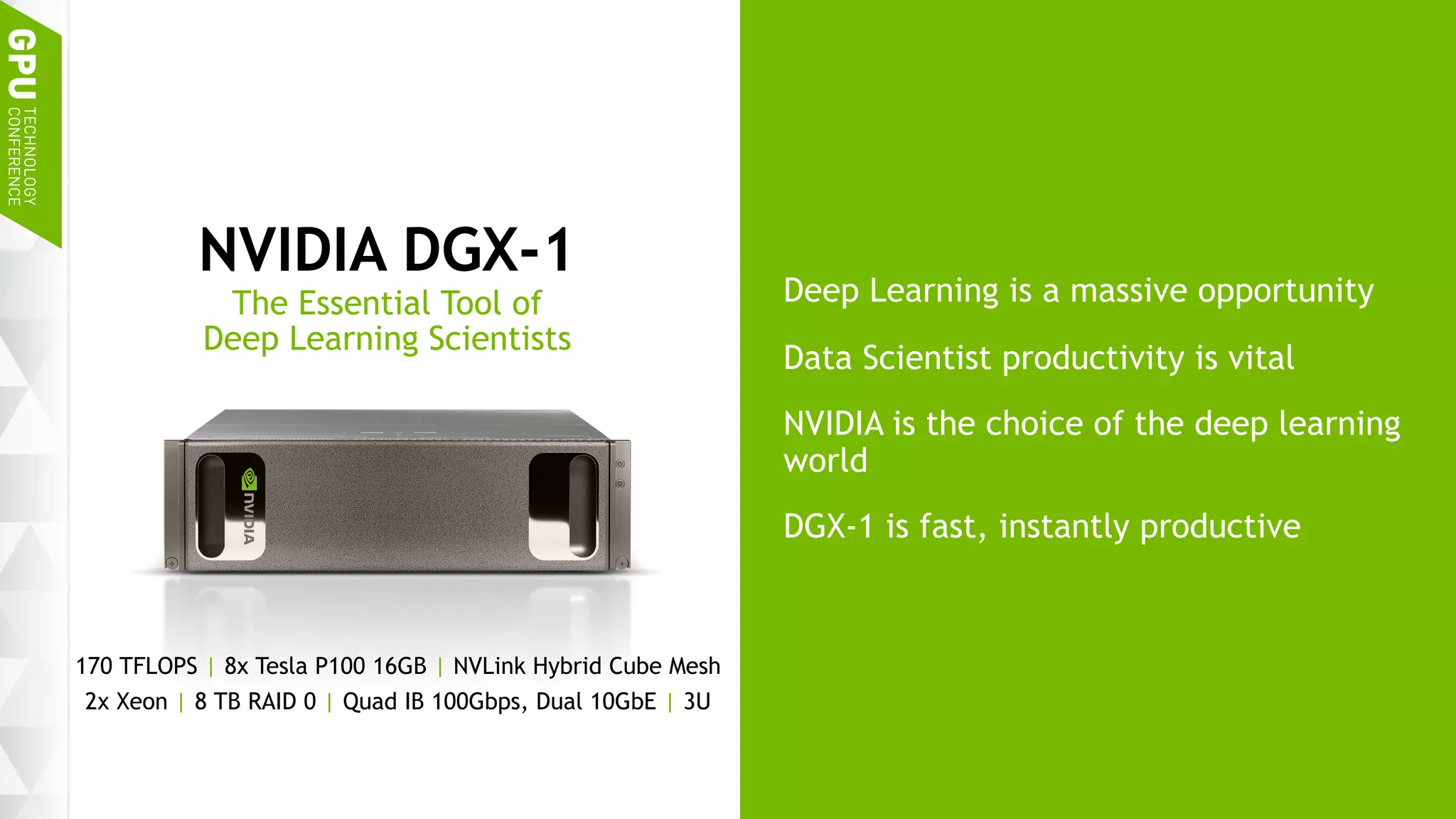

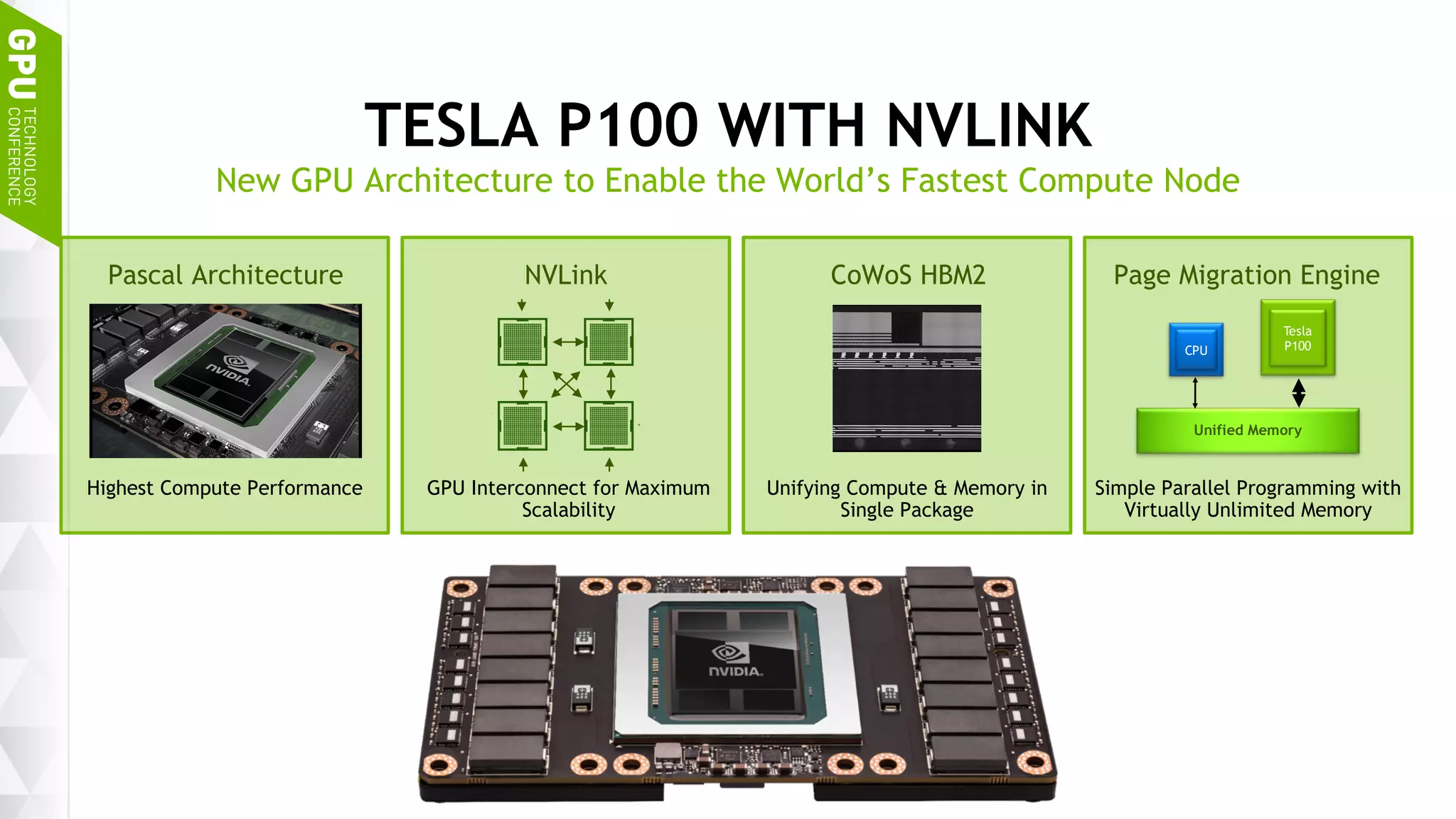

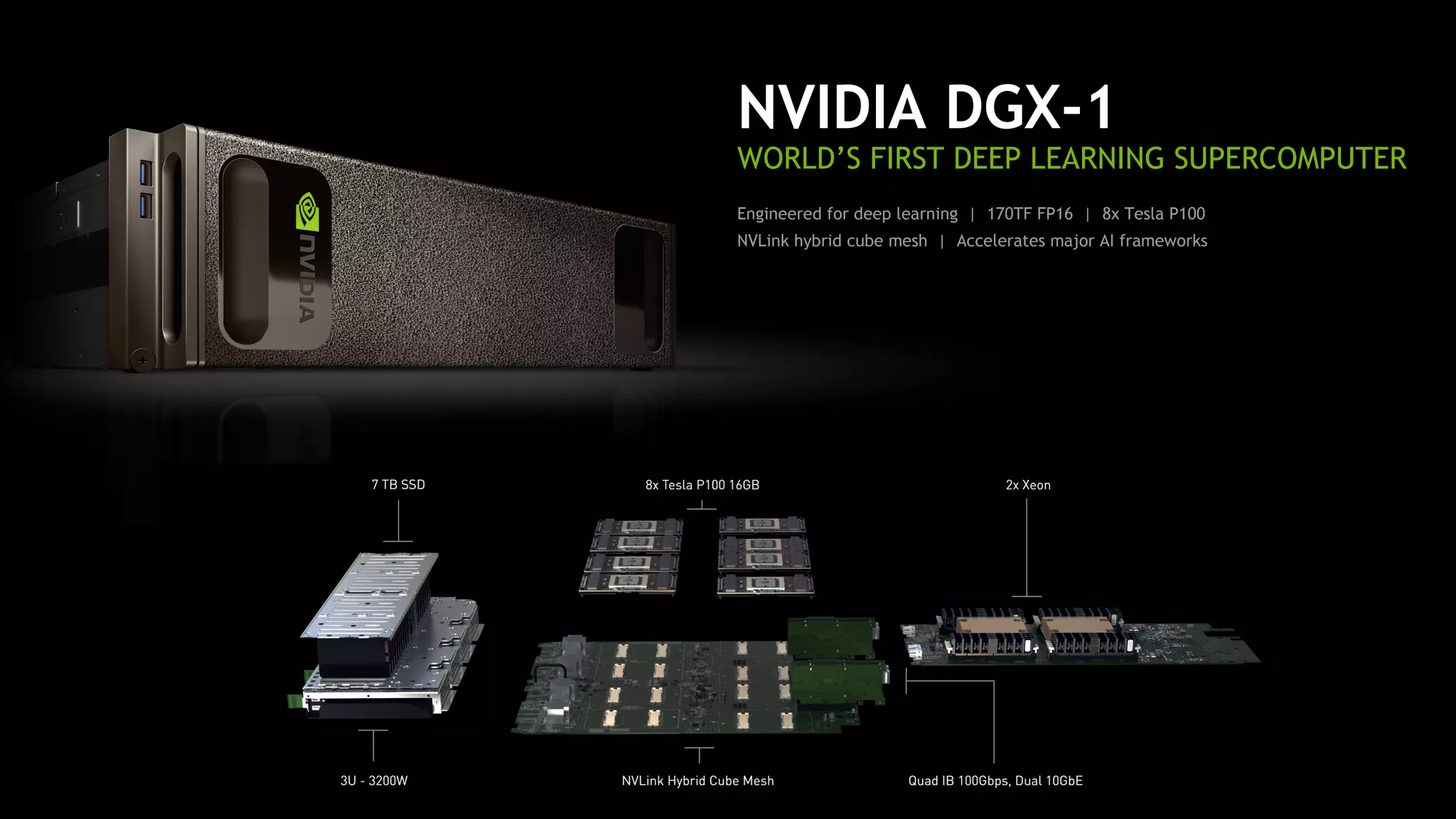

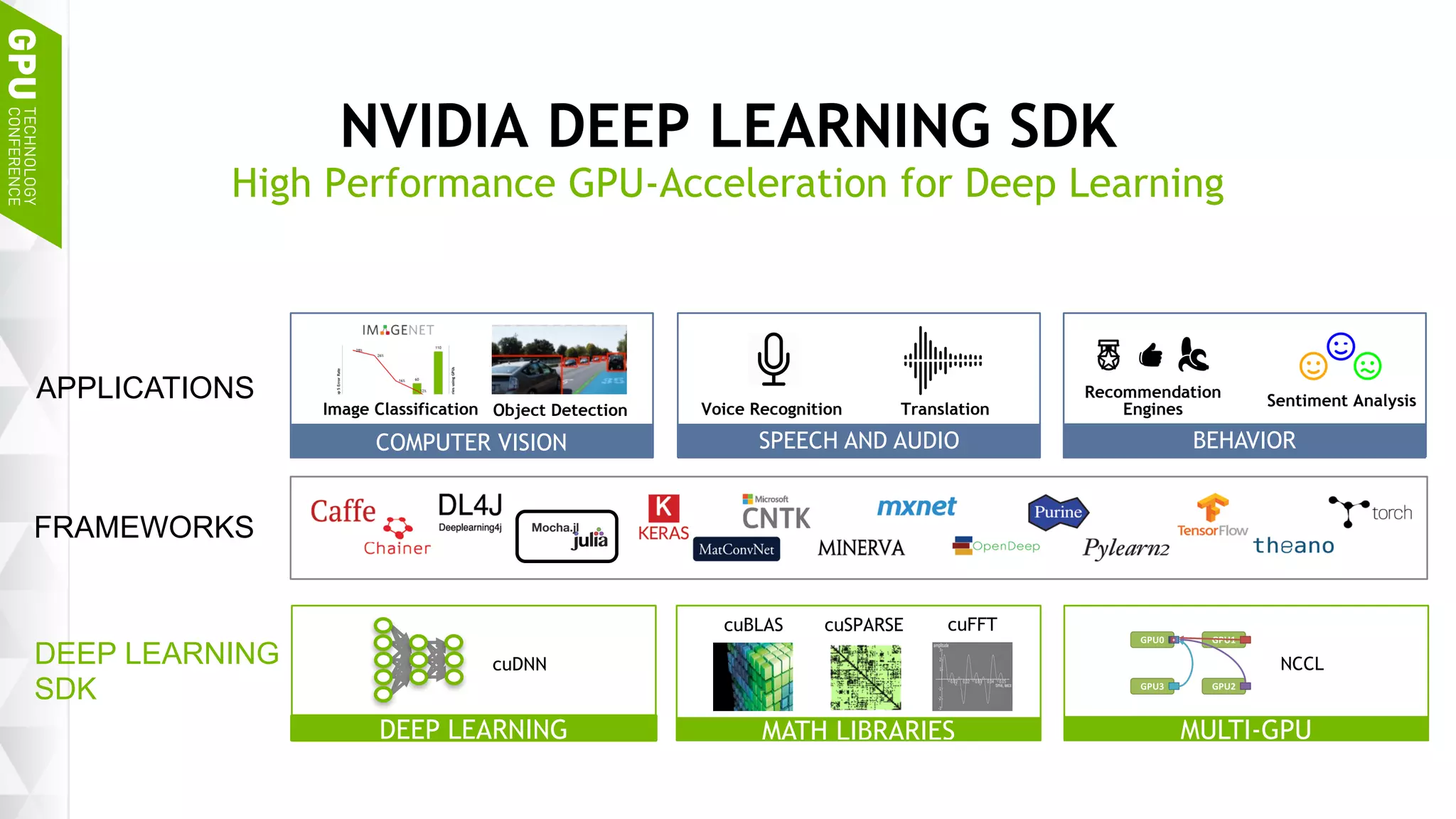

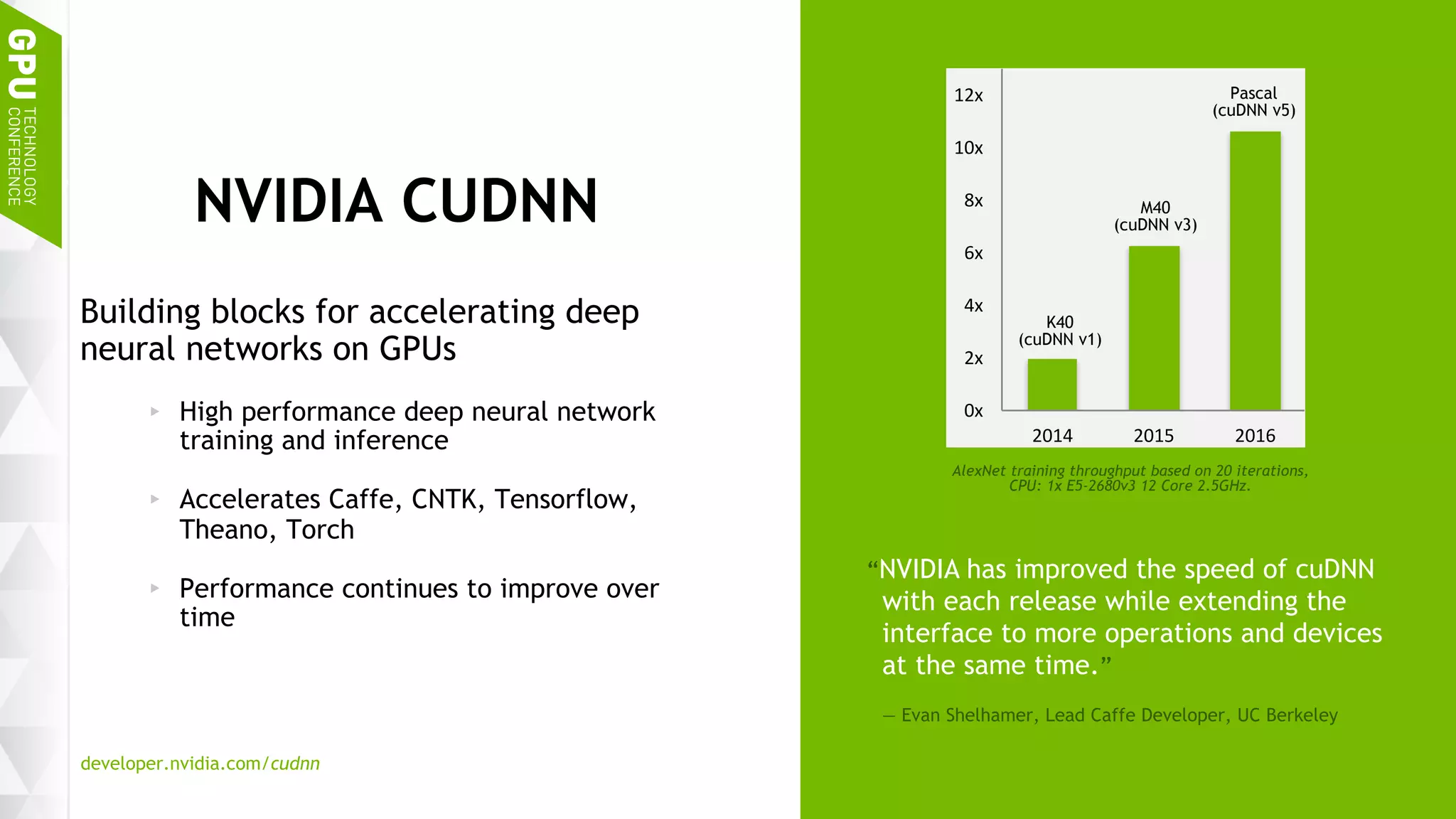

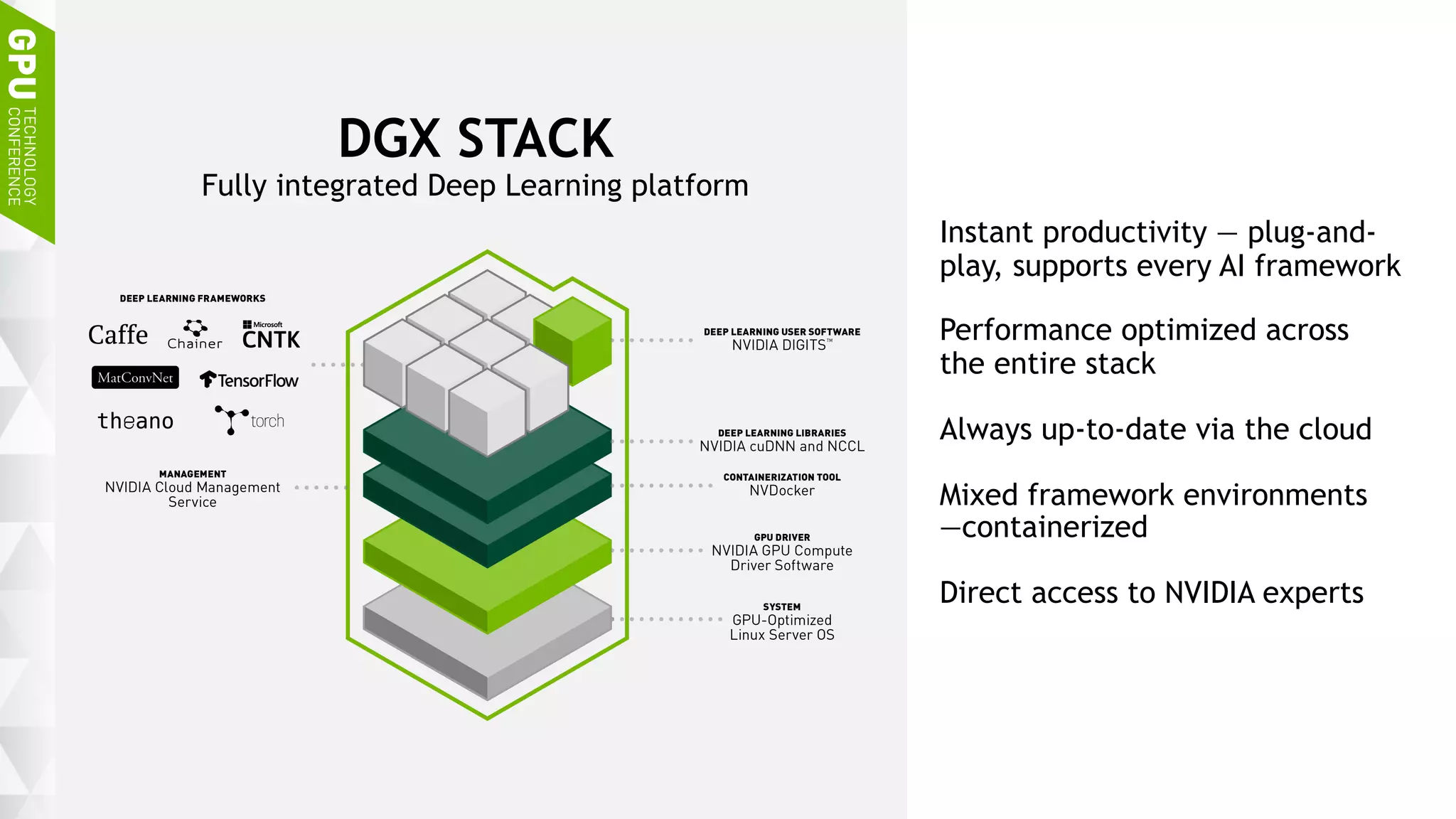

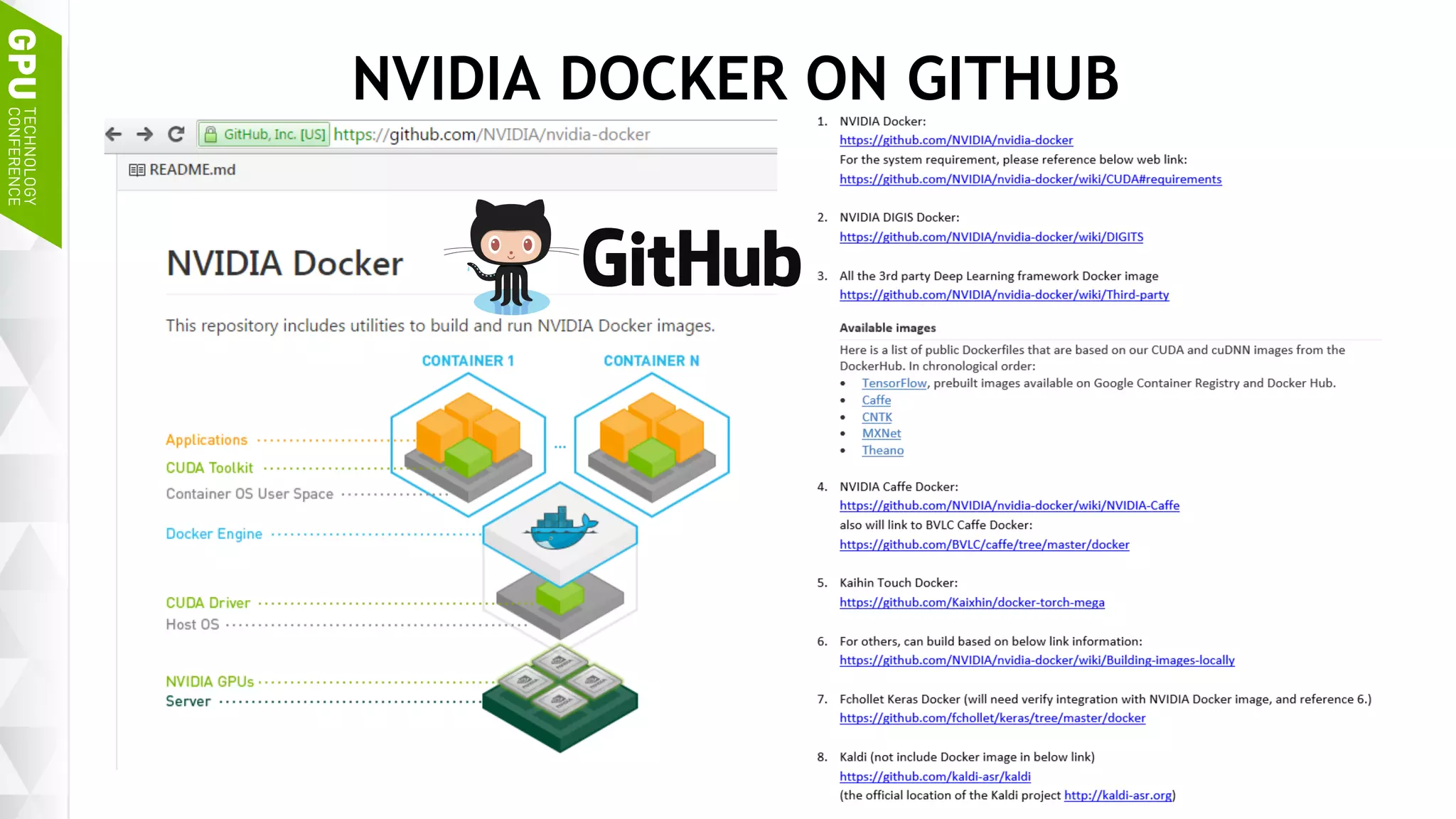

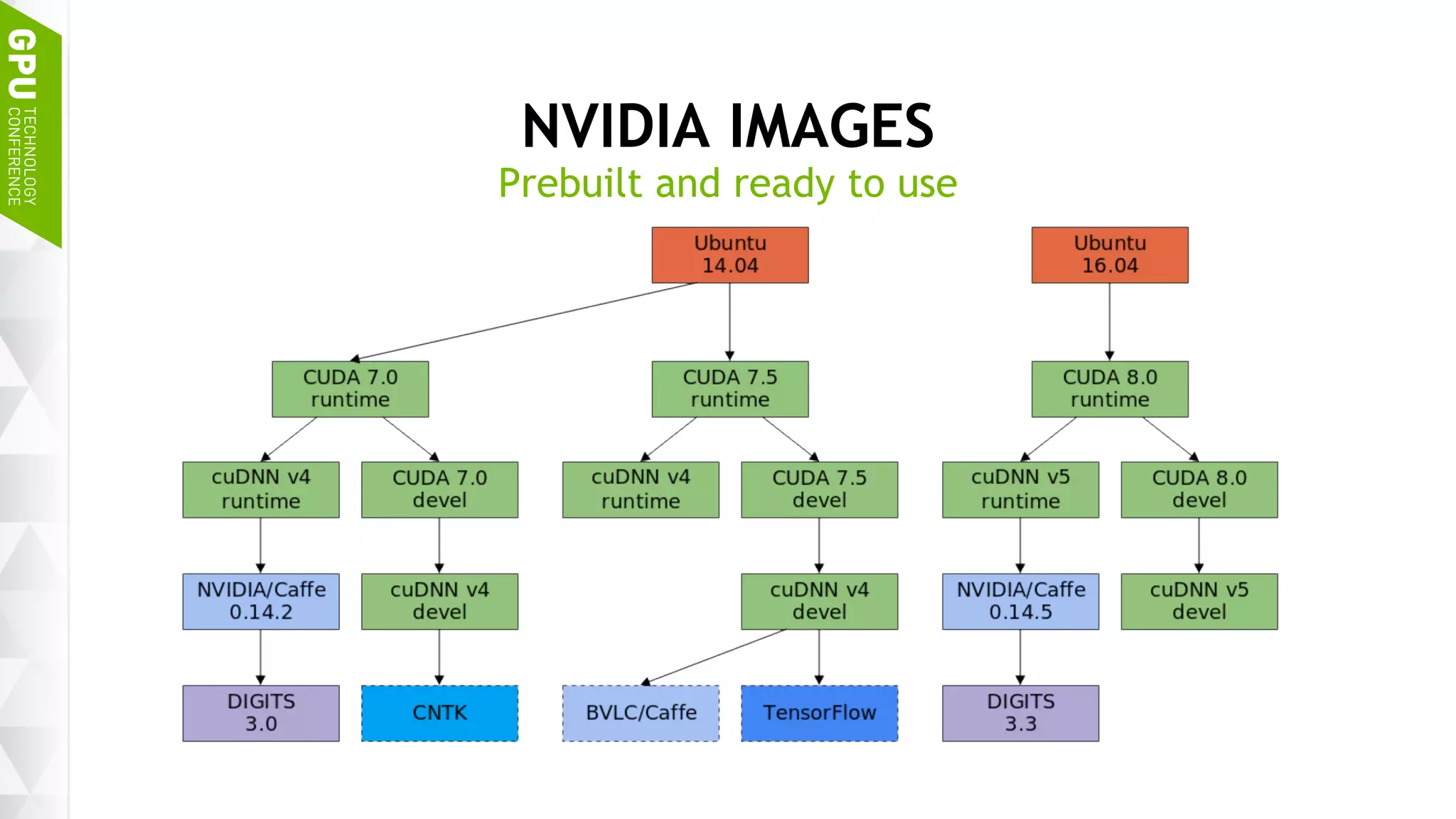

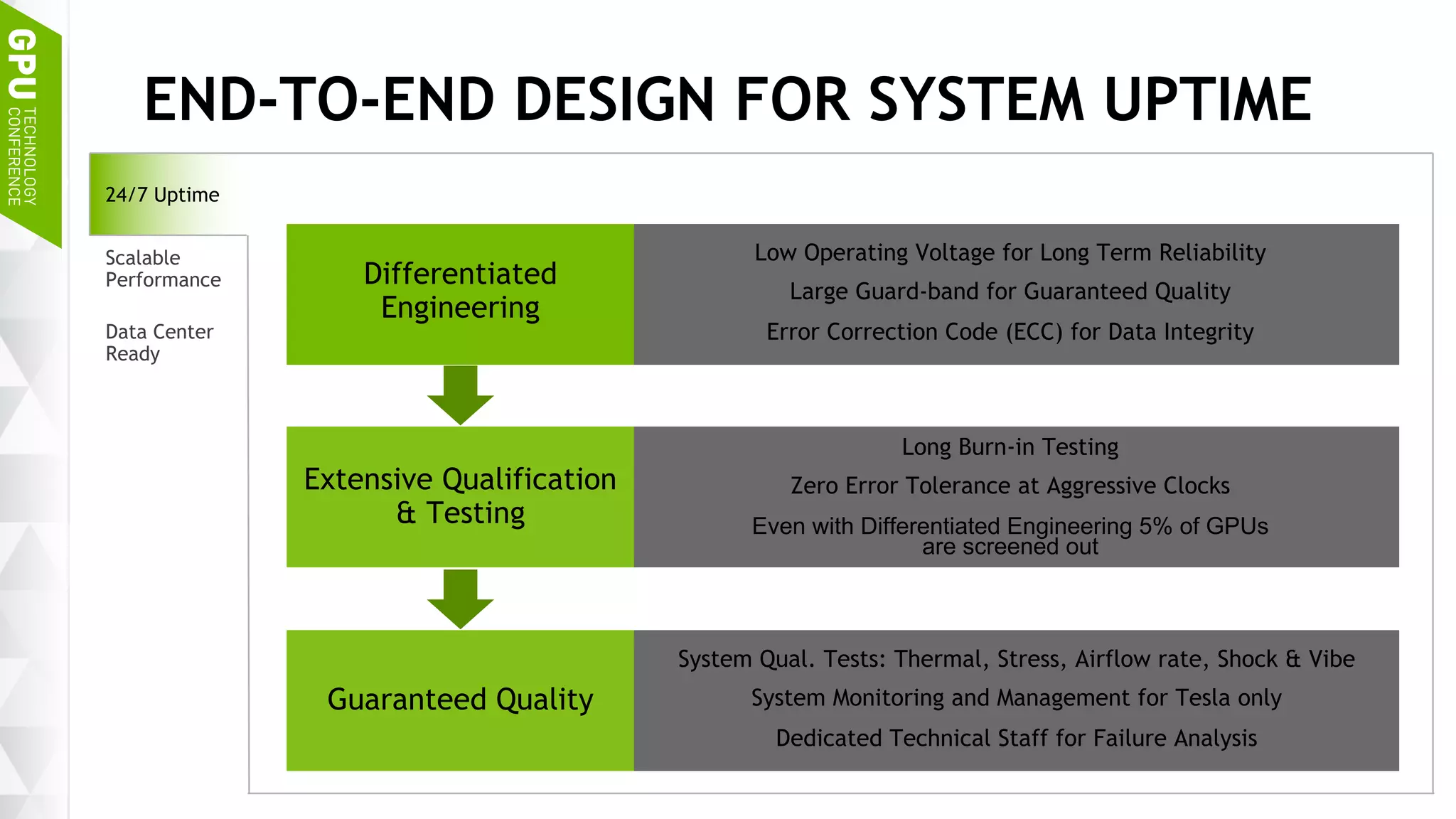

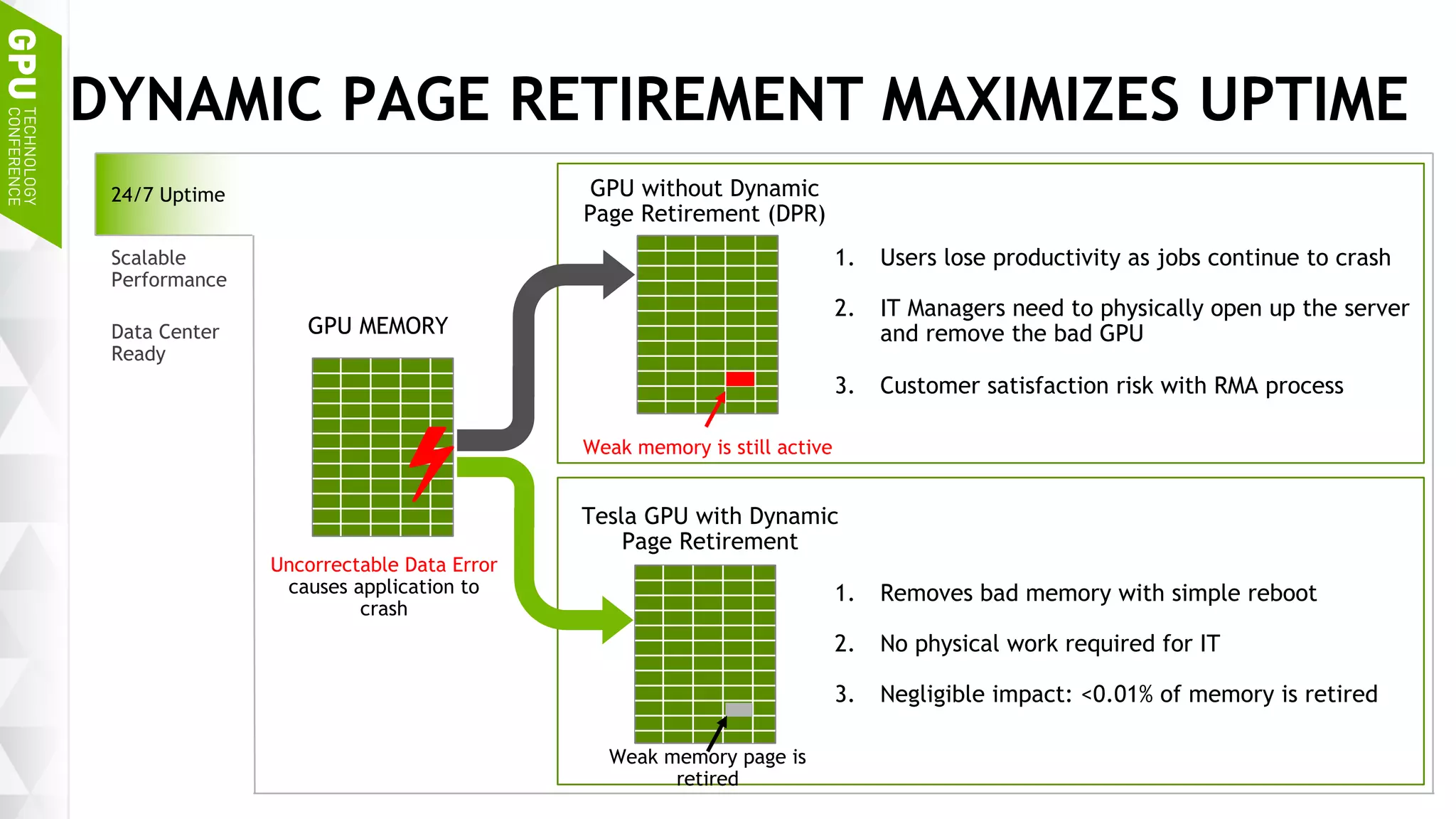

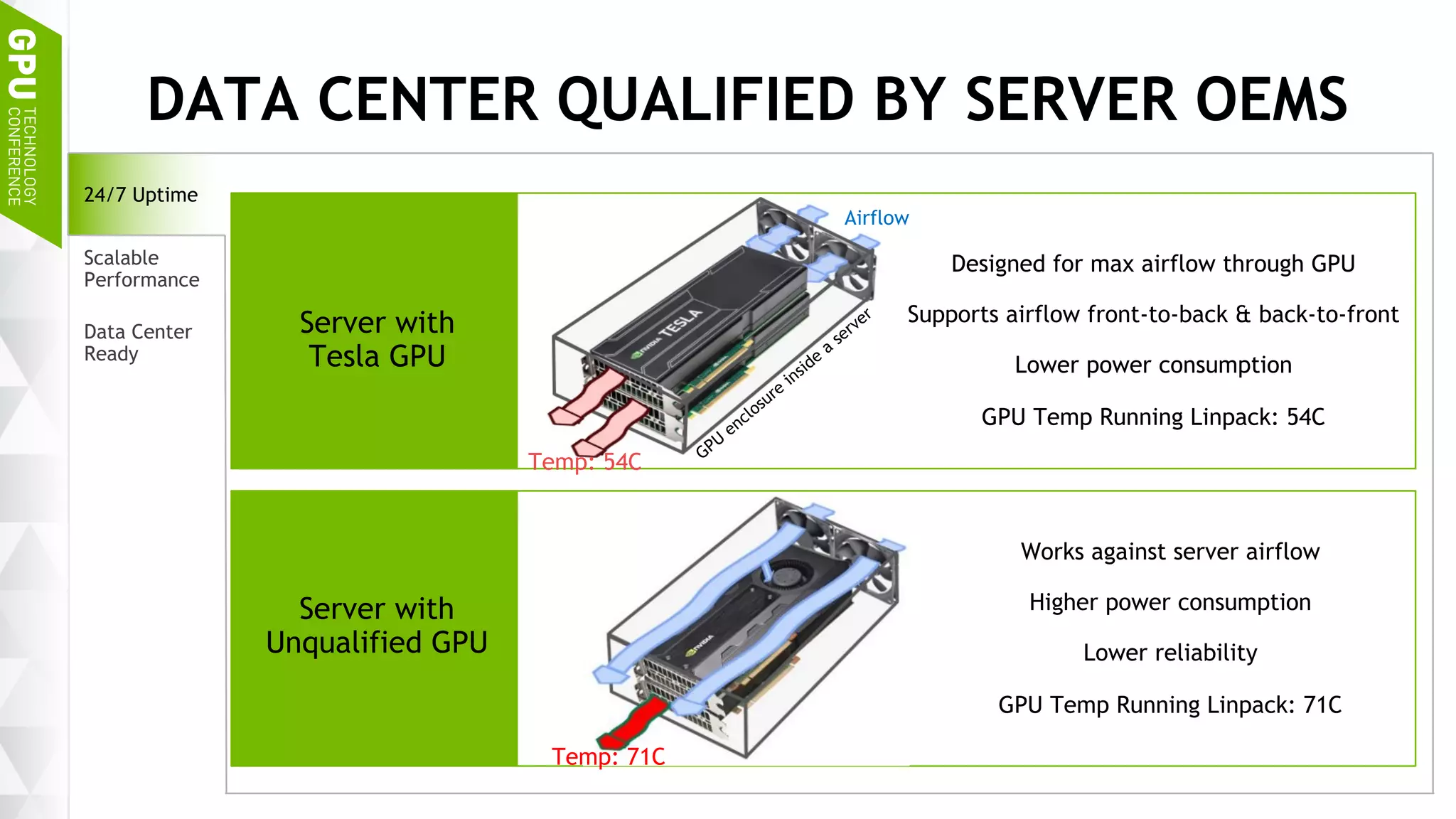

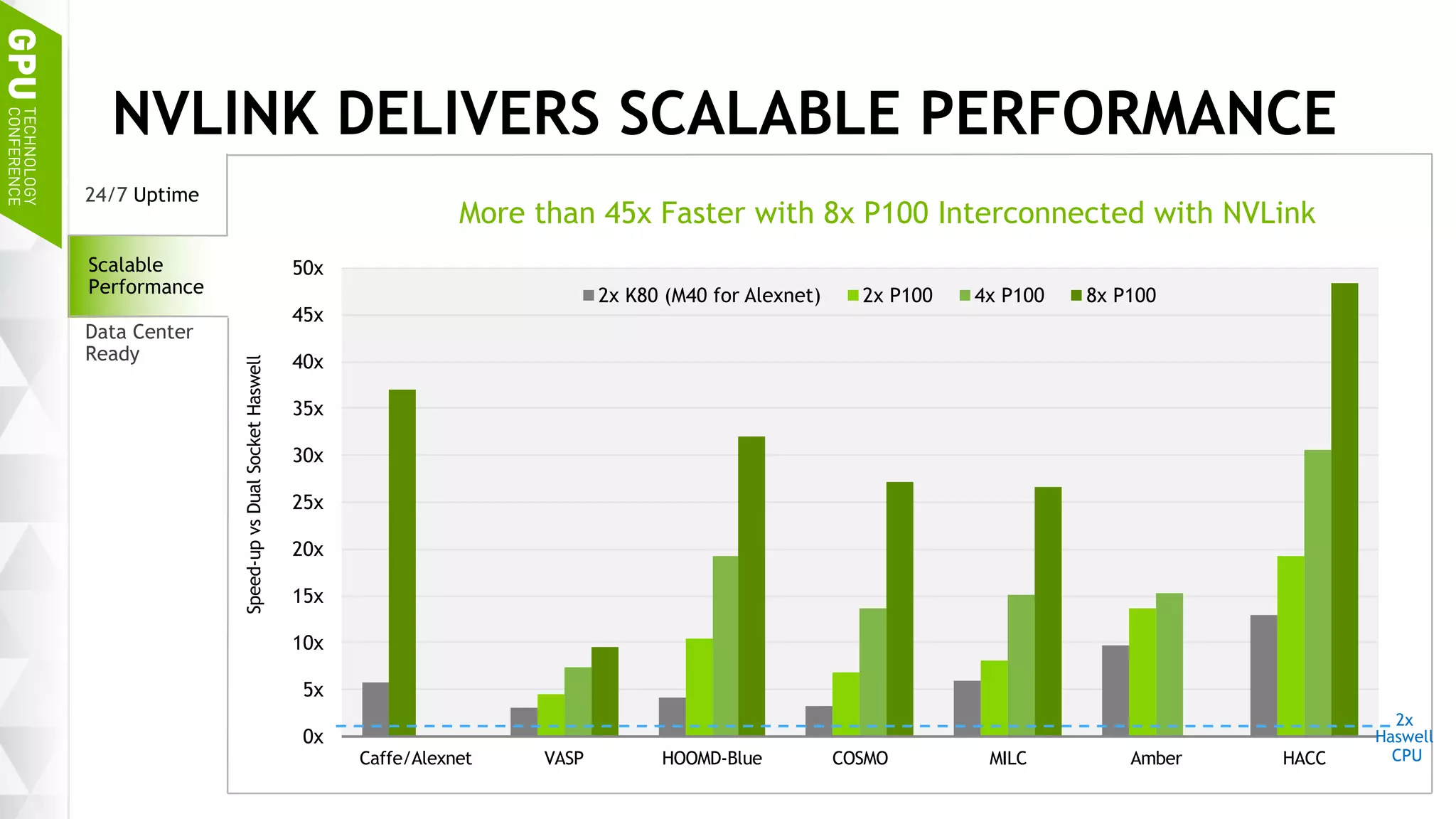

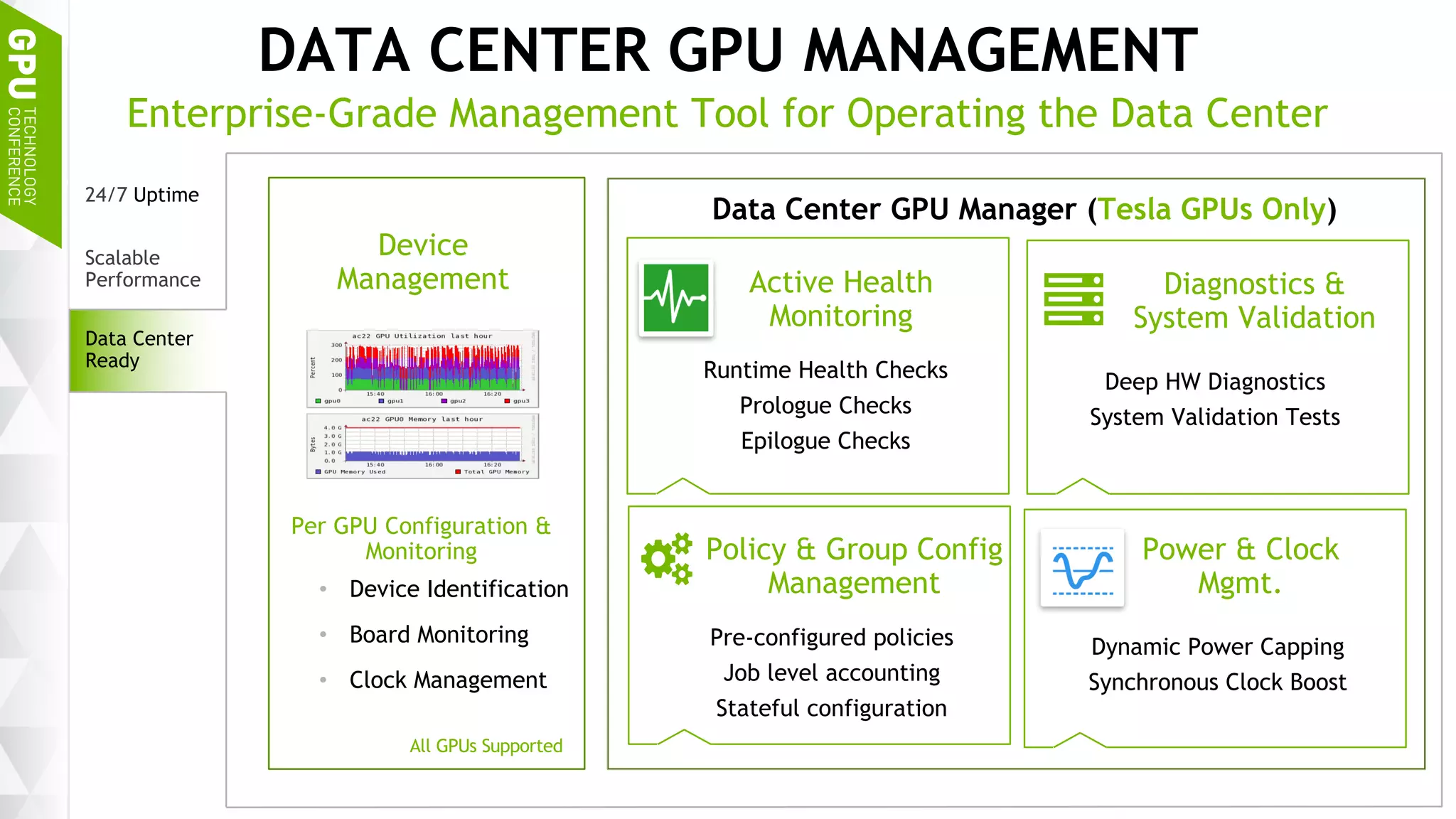

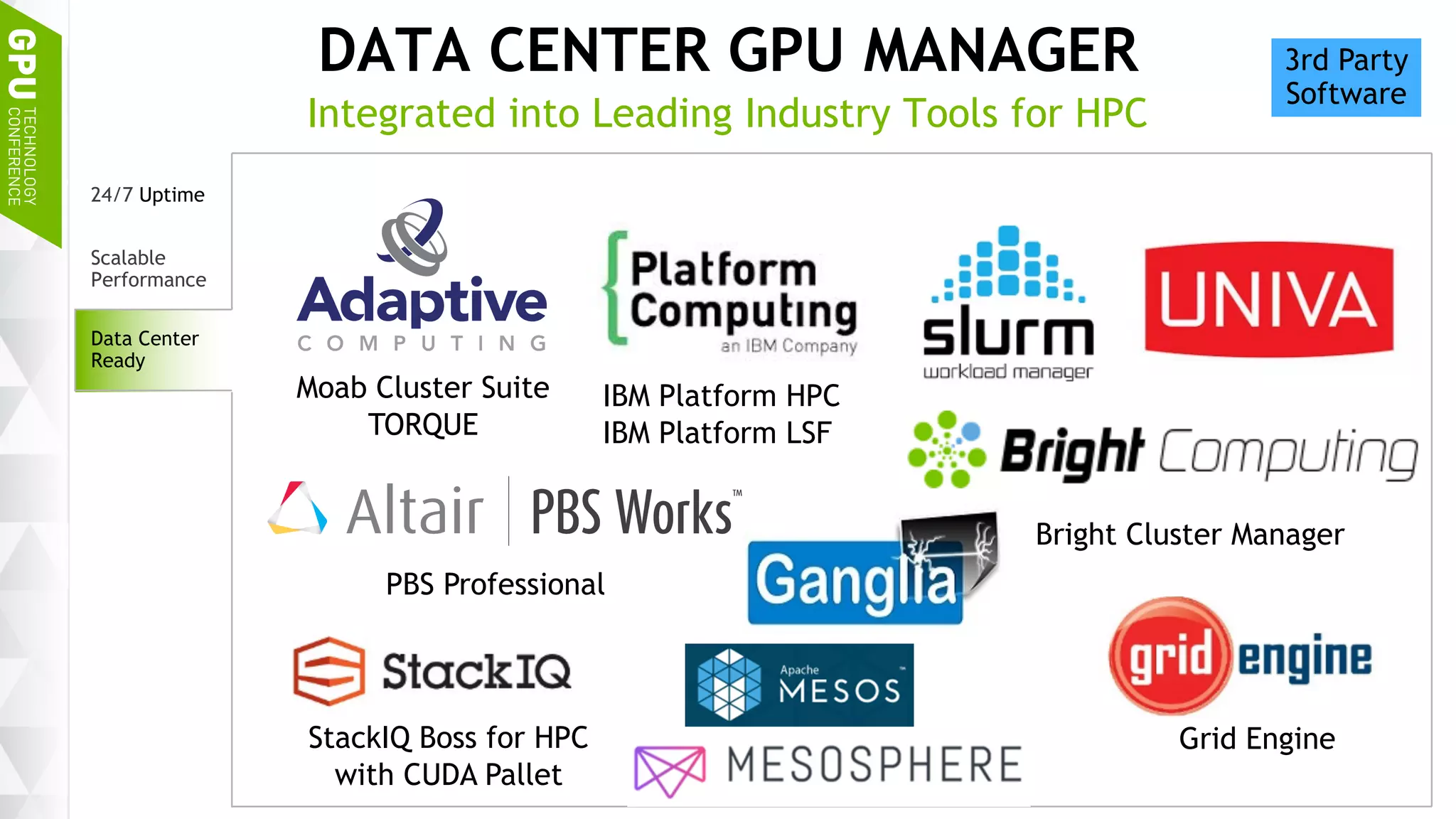

This document covers NVIDIA's DGX-1 supercomputer and its use for artificial intelligence and deep learning. It describes how the DGX-1 pairs NVIDIA's Tesla P100 GPUs with NVLink interconnects to accelerate deep learning workloads. It also covers NVIDIA's deep learning software stack, including cuDNN, DIGITS, and Docker containers, which gives developers tools for training and deploying neural networks. Finally, it emphasizes that the DGX-1 and NVIDIA's GPUs are built for data center use, citing reliability, scalability, and management tools.