Team Disinformation - 2022 Technology, Innovation & Great Power Competition

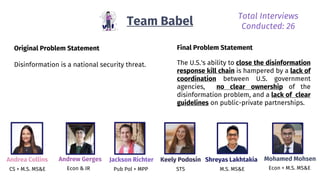

- 1. Original Problem Statement: Disinformation is a national security threat. Team Babel: Andrea Collins (CS + M.S. MS&E), Andrew Gerges (Econ & IR), Jackson Richter (Pub Pol + MPP), Keely Podosin (STS), Shreyas Lakhtakia (M.S. MS&E), Mohamed Mohsen (Econ + M.S. MS&E). Total Interviews Conducted: 26. Final Problem Statement: The U.S.'s ability to close the disinformation response kill chain is hampered by a lack of coordination between U.S. government agencies, no clear ownership of the disinformation problem, and a lack of clear guidelines on public-private partnerships.

- 2. OUR TEAM: Andrea Collins (CS + M.S. MS&E), Keely Podosin (STS), Jackson Richter (Pub Pol + MPP), Mohamed Mohsen (Econ + M.S. MS&E), Andrew Gerges (Econ & IR), Shreyas Lakhtakia (M.S. MS&E)

- 3. We walked in with a tech hammer, and all we could think was… where are the nails? 1. Disinformation is a tech problem. 2. The U.S. government can’t do much to monitor disinformation because of slow tech adoption. 3. Tech companies and algorithms are the best hope for solving this disinformation problem.

- 4. 26 Conversations with leaders changed our minds Total Interviews: 26 Total Interviews Requested: 63

- 5. In 10 weeks, we narrowed the problem, structured it, pivoted twice, and got to the root. WEEK: 0-2, 3-4, 5-6, 7-8, 9-10. Tech can solve “disinformation”; Tech platforms are the problem; This is a Russian doll of a problem; The US solved this problem before; Our poor response results from a lack of ownership and coordination. Pivot #1: Need for US govt. involvement. Pivot #2: Need for clear ownership in US govt. + better coordination. EMOTIONAL STATE: 😎 😏 😳 😶‍🌫️ 😫

- 6. Early project time was spent boiling the ocean. Work with Private Companies: What are they doing? Why? Disinformation: Misinformation? What type of disinformation? National Security Threat: What makes it a threat? Domestic vs. Foreign Disinformation: If foreign, which countries specifically? Weeks 1-2 Mental state: 😶‍🌫️

- 7. Foreign actors have different approaches to disinformation, but they all rely on tech platforms

  | | China | Russia | Other |
  | --- | --- | --- | --- |
  | Actors | CCP, Chinese diplomats | Russian gov’t + citizens {bots} | |
  | Messaging | “Golden China” message, propaganda + COVID-19 disinfo | “Broken, divided America” message, election disinfo | |
  | Targets | Chinese-Americans, Chinese expats | Political activists | |
  | Platforms | Social media and the web: Twitter, WeChat, TikTok | Social media and the web: Twitter, Facebook, YouTube | |

- 8. BIG IDEA #1 Tech platforms are the common thread across various foreign powers enabling spread of disinformation

- 9. We assumed platforms didn’t care about disinformation and didn’t make it a priority BUT 1. Platforms actually care about addressing disinformation 2. Platforms don't collaborate on disinformation strategies 3. Platforms don’t know what’s expected of them Weeks 3-5 Mental state: ️

- 10. BIG IDEA: Tech companies are working in the dark without collaboration with the U.S. government or other tech companies. “We don't have any communication with other tech platforms, even though we're all part of the disinformation supply chain.” - Google PM

- 11. Spread of disinformation can be broken down: 1. Lie created 2. Lie placed on platform X 3. Algorithms amplify reach 4. Users exposed to disinfo 5. Users buy into lies

- 12. Different factors drive each stage of the disinformation supply chain. Lies are created with specific intent, fabricated to play into psychology. E.g., China spreads disinformation with the goal of increasing acceptance of Chinese cultural and economic norms in the Asian diaspora. Stages: 1. Lie created 2. Lie placed on platform X 3. Algorithms amplify reach 4. Users exposed to disinfo 5. Users buy into lies

- 13. The spread of disinformation can be amplified by black-box algorithms in service of clickbait. Political economy of clickbait: it can be lucrative to post disinformation because of ad revenue. Tech companies create their own individual standards. Ranking of disinformation through black-box algorithms. Stages: 1. Lie created 2. Lie placed on platform X 3. Algorithms amplify reach 4. Users exposed to disinfo 5. Users buy into lies

- 14. Disinformation targets existing social divides, making lies easy to buy into. Disinformation is created to hack into people’s psychology and prey on vulnerability to spread. Americans are susceptible; we are not taught media literacy (compare, e.g., Finnish education). Stages: 1. Lie created 2. Lie placed on platform X 3. Algorithms amplify reach 4. Users exposed to disinfo 5. Users buy into lies

- 15. BIG IDEA There’s no single entity looking across each layer of the problem, and coordinating a response strategy for maximum effectiveness

- 16. PIVOT! Collaboration between tech companies and the U.S. government will be key to combating foreign disinformation. Weeks 3-5 Mental state: 😯

- 17. KEY LEARNING: The US beat back disinformation before Weeks 6-7 Mental state: ️

- 18. Stage 3 Precursor: we have fought this war before. Parallels between today’s disinformation and KGB/Cold War era disinformation: ● The Nature of Lies ● Personalities of Leaders ● The Goals of Disinformation ● Roles and Risks to Partners, Allies … but there are a few key differences in both the problem and response: 1. Social media a. Cheap and fast spread of speech 2. The lack of response coordination a. Active Measures Working Grp, comprehensive response

- 19. No one agency leads disinformation efforts, which are scattered and rarely collaborative. Department of State: Global Engagement Center, Active Measures Working Group (terminated). Department of Defense: Cyber Command, DARPA. Department of Homeland Security: Governance Board (terminated). CIA, NSA and others: covert. FCC: limited to broadcast. Tech Companies: Google, Meta, Twitter, Reddit, Microsoft.

- 20. BIG IDEA: US response is fragmented; agencies collaborate ad hoc on disinformation with no formal patterns of collaboration. "There’s no clear flowchart of where insights, issues, or findings should go, so agency staffers end up just reaching out to 'buddies' or contacts in other agencies." - Former DHS staffer Week 7 Mental state:

- 21. PIVOT! NEW PROBLEM STATEMENT A lack of interagency linkages and clear problem-level ownership within the U.S. government's disinformation response hinders it from closing the kill chain. Week 8 Mental state: ️

- 22. Solution: A national strategy with a clear owner and mandate to align U.S. government agencies and private sector actors Week 9 Mental state: ️

- 23. The national strategy should have an owner, formal inter-agency collaboration, and public-private collaboration 1. Designate an owner for the problem a. Draw inspiration from the Active Measures Working Group b. Give it a mandate that explicitly focuses on foreign disinformation 2. Build formal interagency linkages so that collaboration is easier a. Create a clear organizational flow diagram of who oversees a given response b. Create an interagency group to triage different trends and scenarios 3. Coordinate this response with social media companies a. A cohesive and consistent response minimizes the risk of being seen as partisan b. Global Engagement Center can build strong relationships with tech companies to coordinate responses across platforms

- 24. We propose a Disinformation Response Org Chart with a clear owner and more formal links DHS, CDC, other individual agencies Department of Education Intelligence community Diplomats Active Measures Working Group Identify Triage Act Global Engagement Center + tech companies Cyber Command + tech companies

- 25. We have open questions about our proposal 1. What are the tradeoffs of housing the Active Measures Working Group 2.0 in State vs Defense? 2. The US currently responds/retaliates to a very small proportion of disinformation. What does proportional response even mean? 3. How can we include a long-term plan for dealing with this problem by reforming education policy? Week 10 Mental state: 😌

- 27. Fin

Editor's Notes

- Andrew introduce the story behind the Team Babel name: humanity collaborated to build the largest tower; everyone could understand each other and spoke the same language. To punish the hubris of humanity, they were given different languages and had no shared basis of what truth was. Read over problem statements. Read over total interviews.

- Everyone!! Andrew clockwise Jackson

- Jackson our assumption was that the solution would be driven by technology. We came up with 3 specific hypotheses (1, 2, 3 on slide). Long story short, tech was the problem and the solution to this problem.

- Jackson the 26 conversations with leaders across different domains addressing the problem changed our minds

- Jackson **emphasize that there are layers to the problem** over the course of the 10 weeks, our emotional state ran the gamut from boundless optimism to utter dismay and despair we discovered that there were problems behind the problem - and pivoting our attention from tech platforms to the role of the US govt to the structure of the agencies tackling the problem helped us address the root of the issue. now, we’re going to take you on this journey step by step!

- Andrew *Gesture* Our initial problem statement was much too broad: “Disinformation is a national security threat.” We worked to define disinformation, focusing on the different aspects of disinformation and the difference between mis- and disinformation. Then we tried to understand why disinformation has the potential to be a national security threat, and who/where it could target. We looked at how working with private companies would be possible, and what companies were doing individually to lower the threat of disinformation. Finally, we chose to narrow our project down to foreign disinformation in the spirit of great power competition and the themes of this course. We had to pinpoint these definitions before we proceeded with our investigation!

- Andrew In studying foreign actors and disinformation, we learned that they often have different perpetrators, separate messaging aims, and different targets. The common thread we found was foreign actors’ reliance on using tech platforms to spread their malign information. This brought us to our first big idea:

- Andrew bueno! read it

- Andrew After speaking with experts from across tech platforms, such as Meta, Google, YouTube, and Jigsaw, what we discovered about tech platforms wasn’t quite what we expected. We found that: 1) It is in platforms' long-term interest to minimize disinformation. (Disinformation makes for a negative user experience; if people stop seeing the platform as trustworthy, usage decreases over the long run, which affects advertisers and brings negative press. NOT good for the brand image.) 2) Platforms don't collaborate on disinformation strategies, so people can follow disinformation from one platform to another. 3) Platforms don’t know what is expected of them: there are no clear U.S. standards or regulations that they are expected to comply with.

- Andrea ** google/reddit example, emphasize supply chain** But the biggest takeaway was that even though every tech company faced these problems, they were all addressing them in isolation. In other words, each company understood that it was part of the disinformation supply chain, but saw only a portion of it. An example of this is a user searching for something on Google and being led to a disinformation post on Reddit. Google and Reddit here have no communication with each other. This helped us realize not only that tech companies are working in the dark, but also that there are distinct stages to the spread of disinformation.

- Andrea Read it

- Mohamed - the supply chain *Prac paraphrasing* convey: once we had broken problem down, our interviews involved understanding the factors that drove each stage.

- Mohamed lie: could be a lie placed by foreign actor, or an individual party looking to earn money

- Mohamed

- Shreyas While understanding each stage of the supply chain and its corresponding drivers was helpful, our main finding was

- Shreyas This finding led us to a pivot. We initially thought the problem would be centered on private tech companies, but then discovered that disinformation goes beyond an algorithm-only problem. The U.S. government has an important role to play in coordinating collaboration between tech companies and the way disinformation is handled across platforms.

- Shreyas In making this pivot, one key learning was that the US beat back disinformation before, most recently during the Cold War. For example, during the Cold War, the USSR tried to spread disinfo in Africa and the US that AIDS was created in a US lab. Operation INFEKTION! The USSR was trying to get at existing societal divides.

- shreyas Parallels between today’s disinformation and KGB/Cold War era disinformation: Nature of lies: based on exacerbating divisions that already exist in society. How lies spread: start a story, then slowly legitimize and amplify it as it picks up. Personalities of leaders: Putin graduated from the KGB school named after a Cold War KGB chief. Goals: the 4 D’s (disorient, divide, deceive, and disguise). Role and risks to partners / allies / developing countries: was true then and remains true today. … but there are a few key differences in both the problem and response: The lack of response coordination: no “Active Measures Working Group”; there is a lack of comprehensive response and a lack of willingness to own the problem. Social media: social media is a prevalent source of news, where there is minimal collaboration to respond to disinformation. The speed/scale at which information spreads is higher than ever. Digital literacy: in the past, the AMWG used “RAP” (Report, Analyze, Publish). This is still necessary, but it needs to be accompanied by a push for digital literacy.

- shreyas Let’s dive into the US response today a little more. A lot of agencies have to tackle disinformation, but their groups and teams focused on it are spread across many different agencies: a fragmented rather than centralized approach!! (FCC = Federal Communications Commission)

- Shreyas What was particularly surprising was

- keely We discovered that the U.S. has the tools, manpower, and technology, and is quite aware of the problem. We have what we need to triage and address the problem, but the lack of a clear strategy hurts our efforts to close the kill chain and actually respond to foreign disinfo threats.

- keely getting to a text-heavy slide next but don't worry!!!!

- keely

- andrea bring back something similar to the Active Measures Working Group, drawing inspiration from the original. Separate into stages of the disinfo kill chain. Intelligence community: experience in assessing threats to national security. Diplomats: coordinate intelligence sharing with allies, bring in a broader view of the problem to better triage. Cyber Command: boots-on-the-ground experience implementing tech solutions. Department of Education: more long-term action, increasing American digital media literacy.

- andrea- here are a few important open questions, we'd love to discuss them with you

- Andrea- and we'll also take your questions at this time.