Scalding by Adform Research, Alex Gryzlov


- 2. Cascading
  - Tap / Pipe / Sink abstraction over MapReduce, in Java

- 3. Cascading

- 5. Scalding
  - Scala wrapper for Cascading
  - Just like working with in-memory collections (map/filter/sort…)
  - Built-in parsers for {T|C}SV, date annotations, etc.
  - Helper algorithms, e.g. approximations (Algebird library), and a matrix API

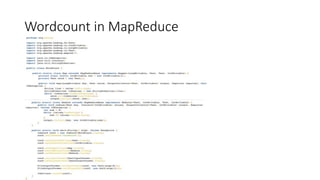

- 7. Run the WordCountJob in local mode with the given input and output
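The code of the WordCountJob is not spelled out in the transcript. A minimal sketch of what such a job might look like, modeled on the word count example in the Scalding README and assuming the typed API with scalding-core on the classpath. The tokenization rule and the `input`/`output` argument names are assumptions for illustration, not taken from the slides.

```scala
import com.twitter.scalding._

object WordCountJob {
  // Lowercase the line, drop punctuation, split on whitespace.
  // This particular tokenization is an assumption.
  def tokenize(line: String): List[String] =
    line.toLowerCase
      .replaceAll("[^a-z0-9\\s]", "")
      .split("\\s+")
      .filter(_.nonEmpty)
      .toList
}

class WordCountJob(args: Args) extends Job(args) {
  TypedPipe.from(TextLine(args("input")))           // one String per input line
    .flatMap(line => WordCountJob.tokenize(line))   // one element per word
    .groupBy(word => word)                          // key each word by itself
    .size                                           // count occurrences per key
    .write(TypedTsv[(String, Long)](args("output")))
}
```

In local mode the job would be started through com.twitter.scalding.Tool with the job class name followed by --local --input <file> --output <file>; the exact classpath setup depends on how the jar was built.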

- 8. Building and Deploying
  - Get sbt
  - sbt assembly produces a jar file in target/scala-2.10
  - sbt s3-upload produces the jar and uploads it to S3

- 9. Running on EMR
  - hadoop fs -get s3://dev-adform-test/madeup-job.jar job.jar
  - hadoop jar job.jar com.twitter.scalding.Tool com.adform.dspr.MadeupJob --hdfs --logs s3://dev-adform-test/logs --meta s3://dev-adform-test/metadata --output s3://dev-adform-test/output
    - com.twitter.scalding.Tool: entry class
    - com.adform.dspr.MadeupJob: Scalding job class
    - --hdfs: run in HDFS mode
    - --logs, --meta, --output: job parameters
  - For more complicated workflows you would have to use applications like Oozie or Pentaho, or write a custom runner app; see https://gitz.adform.com/dco/dco-amazon-runner

- 10. Development
  - Two APIs:
    - Fields: everything is a string
    - Typed: working with classes, e.g. Request/Transaction

- 11. Development
  - Fields:
    - No need to parse columns
    - Redundancy
    - No IDE support like auto-completion
  - Typed:
    - All benefits of types, esp. compile-time checking
    - More manual work with parsing
    - Sometimes the API can be confusing (TypedPipe/Grouped/Cogrouped…)
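The "more manual work with parsing" trade-off of the typed API can be sketched in plain Scala. The `Request` fields below and the tab-separated layout are hypothetical, since the slides do not show the real record format; the point is only that each raw line has to be turned into a class by hand before the compiler can check anything.

```scala
import scala.util.Try

// Hypothetical record type; the field names are assumptions for illustration.
final case class Request(userId: String, timestamp: Long, url: String)

object Request {
  // Parse one tab-separated line; malformed rows yield None instead of throwing.
  def parse(line: String): Option[Request] =
    line.split("\t", -1) match {
      case Array(userId, ts, url) =>
        Try(ts.toLong).toOption.map(t => Request(userId, t, url))
      case _ => None
    }
}
```

In a typed pipeline a parser like this would typically sit in a flatMap right after the text source, for example `.flatMap(line => Request.parse(line).toList)`, so that bad rows are dropped and later steps work with `Request` values rather than strings.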

- 12. Downsides
  - A lot of configuring and googling random issues
  - Scarce documentation; you have to read the source code and Stack Overflow
  - IntelliJ is slow
  - Boilerplate code for parsing data

- 13. Some tips
  - In local mode you specify files as input/output; in HDFS mode, folders
  - You can use the Hadoop API to read files from HDFS directly, but only on the submitting node, not in the pipeline
  - As a workaround for the previous problem, you can use the distributed cache mechanism, but as far as I know that only works on Hadoop 1
  - The default memory limit per mapper/reducer is ~200 MB; it can be raised by overriding Job.config and adding "mapred.child.java.opts" -> "-Xmx<NUMBER>m"
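The last tip can be sketched as follows, assuming a Scalding version in which `Job.config` is a no-argument method returning `Map[AnyRef, AnyRef]` (older releases used a different signature). The class name `HeapTunedJob`, the 2048 MB value and the pass-through pipeline are placeholders for illustration.

```scala
import com.twitter.scalding._

object HeapTunedJob {
  // Build the Hadoop option that sets the child JVM heap size in megabytes.
  def childHeapOpts(megabytes: Int): Map[String, String] =
    Map("mapred.child.java.opts" -> s"-Xmx${megabytes}m")
}

class HeapTunedJob(args: Args) extends Job(args) {
  // Keep the default configuration and add (or replace) the heap setting.
  override def config: Map[AnyRef, AnyRef] =
    super.config ++ HeapTunedJob.childHeapOpts(2048)

  // Placeholder pipeline: copy input lines to the output unchanged.
  TypedPipe.from(TextLine(args("input")))
    .write(TypedTsv[String](args("output")))
}
```

On Hadoop 2 clusters this property is deprecated in favor of separate mapper and reducer settings (mapreduce.map.java.opts and mapreduce.reduce.java.opts), so the key may need adjusting there.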

- 14. Resources
  - https://github.com/twitter/scalding/wiki (wiki)
  - https://github.com/twitter/scalding/tree/develop/tutorial (basic stuff)
  - https://github.com/twitter/scalding/tree/develop/scalding-core/src/main/scala/com/twitter/scalding/examples (advanced examples, e.g. iterative jobs)
  - http://www.slideshare.net/AntwnisChalkiopoulos/scalding-presentation
  - http://polyglotprogramming.com/papers/ScaldingForHadoop.pdf
  - http://www.slideshare.net/ktoso/scalding-the-notsobasics-scaladays-2014