Pedersen semeval-2013-poster-may24

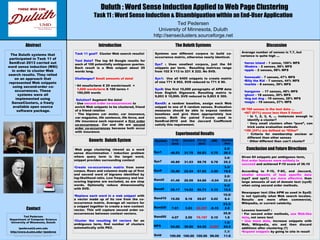

- 1. Poster Design & Printing by Genigraphics® - 800.790.4001 Ted Pedersen Department of Computer Science University of Minnesota, Duluth tpederse@d.umn.edu http://www.d.umn.edu/~tpederse Ted Pedersen University of Minnesota, Duluth http://senseclusters.sourceforge.net Systems use different corpora to build co- occurrence matrix, otherwise nearly identical. Sys7 : Uses smallest corpora, just the 64 snippets per term. Resulting matrices range from 102 X 113 to 221 X 222. No SVD. Sys1: Use all 6400 snippets to create matrix of size 771 X 952. SVD reduced to 771 X 90. Sys9: Use first 10,000 paragraphs of APW data from English Gigaword. Resulting matrix is 9,853 X 10,995. SVD reduced to 9,853 X 300. RandX: a random baseline, assign each Web snippet to one of X random senses. Evaluation measures should be able to expose random baselines and give them appropriately low scores. Both the paired F-score used in SemEval-2010 and the Jaccard Coefficient satisfy this requirement. Given 64 snippets per ambiguous term, first order features were unlikely to succeed and achieved F-10 score of 36.10 According to F-10, F-SC, and Jaccard, smaller amounts of task specific data (sys7 and sys1) are more effective than large amounts of out of domain text (sys9) when using second order methods. Newspaper text (like APW as used in Sys9) is not typically what Web search locates. Results are more often commercial, Wikipedia, or current celebrity. Lessons learned? : ● For second order methods, use Web-like data, not news text ● Use more data, increase snippets with Web, Wikipedia, etc and then discard additions after clustering (?) ● Expand snippets by going to site in result • Task 11 goal? Cluster Web search results! Test Data? The top 64 Google results for each of 100 potentially ambiguous queries. Each result is a Web snippet about 25 words long. Challenges? Small amounts of data! • 64 results/term X 25 words/result = 1,600 words/term X 100 terms = 160,000 words Solution? Augment the data! • Use second order co-occurrences to enrich Web snippets to be clustered, friend of a friend relation • The bigrams car motor, car insurance, car magazine, life sentence, life force, and life insurance each represent a first order co-occurrence. Car and life are second order co-occurrences because both occur with insurance. Introduction Generic Duluth System Conclusion and Future Directions DiscussionThe Duluth SystemsAbstract Contact Duluth : Word Sense Induction Applied to Web Page Clustering Task 11 : Word Sense Induction & Disambiguation within an End-User Application Experimental Results System F-10 2010 Jaccard F1-13 2014 ARI Clusters /Size Sys1 46.53 31.79 56.83 5.75 2.5/ 26.5 Sys7 45.89 31.03 58.78 6.78 3.0/ 25.2 Sys9 35.56 22.24 57.02 2.59 3.3/ 19.8 Rand2 41.49 26.99 54.89 –0.04 2.0/ 32.0 Rand5 25.17 14.52 56.73 0.12 5.0/ 12.8 Rand10 15.05 8.18 59.67 0.02 10.0/ 6.4 Rand25 7.01 3.63 66.89? -0.15 23.2/ 2.8 Rand50 4.07 2.00 76.19? 0.10 35.9/ 1.8 MFS 54.06 39.90 54.42 0.00? 1.0/ 64.0 Gold 100.00 100.00 100.00 99.00 7.7/ 11.6 The Duluth systems that participated in Task 11 of SemEval–2013 carried out word sense induction (WSI) in order to cluster Web search results. They relied on an approach that represented Web snippets using second-order co- occurrences. These systems were all implemented using SenseClusters, a freely available open source software package. Web page clustering viewed as a word sense discrimination / induction problem where query term is the target word, snippet provides surrounding context •Create co-occurrence matrix from some corpus. Rows and columns made up of first and second word of bigrams identified by log-likelihood ratio. Low frequency and low scoring bigrams are excluded, as are stop words. Optionally reduce dimensionality with SVD. •Replace each word in a web snippet with a vector made up of its row from the co- occurrence matrix. Average all vectors for a snippet together to create a new context vector. This will capture second order co- occurrences between context vectors. •Cluster the resulting 64 vectors for an ambiguous term, find number of clusters automatically with PK2. Average number of senses is 7.7, but variance is quite high ... •heron island – 1 sense, 100% MFS •Shakira – 2 senses, 98% MFS •apple – 2 senses, 98% MFS •kawasaki – 7 senses, 47% MFS •Billy the Kid – 7 senses, 44% MFS •marble – 8 senses, 39% MFS •kangaroo – 17 senses, 48% MFS •ghost – 18 senses, 30% MFS •dog eat dog – 19 senses, 28% MFS •magic – 19 senses, 27% MFS Of 769 senses in the test data ... •467 (61%) occur less than 5 times!! • Is 1, 2, 3, 4, … instances enough to identify a cluster? • Very small clusters often “pure”, can trick some evaluation methods •186 (24%) are defined as “Other” • Criteria for membership unclear or different than other senses • Other different than can't cluster?