Systran Augmented Translator

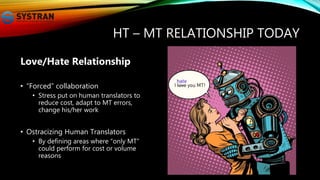

- 1. HT – MT RELATIONSHIP TODAY: Love/Hate Relationship
  - “Forced” collaboration
    - Stress put on human translators to reduce cost, adapt to MT errors, and change their work
  - Ostracizing human translators
    - By defining areas where “only MT” could perform for cost or volume reasons

- 2. WHY? AN INVERSE PARADIGM FORCING USE-CASE TO ADAPT TO TECHNOLOGY
  - Use human translators for MT post-editing
    - Adapting human time and competence to correcting MT output
  - Introduce “good enough” quality
    - Excluding a priori human supervision and competence

- 3. BACK TO THE FUNDAMENTALS - WHAT DOES THE USER NEED?
  - High-quality translation: no compromise on quality
    - Also true for ... Human Translation
  - But often users do not need translation
    - Multilingual information retrieval
    - Summarization
    - Multilingual authoring help
    - Language learning assistant

- 4. THE NEURAL TECHNOLOGIES
  - Neural technology features:
    - Generalization: extract rules from observation
    - Understanding: build an inner representation of the sentence
    - Global overview: possibility to combine long-term strategy and short-term optimization

- 5. NEW NEURAL APPLICATIONS
  - Beyond the impressive core performance improvement, let us imagine what can be achieved rather than try to fit new technology into the existing framework
  - Disruptive technology needed!

- 6. THE AUGMENTED TRANSLATOR: DISRUPTIVE NEW USE-CASE TO IMAGINE
  - Massive translation monitoring
  - Translation checker
  - Multilingual authoring
  - Unique multilingual assistant

Editor's Notes

- 1/ HT – MT relationship today. Today there is a love/hate relationship between human translators and MT, for two main reasons: 1) MT is presented as a way to make humans more productive, but it does so by changing their workflow, removing the creative part of translation and leaving them to correct machine errors; 2) MT is presented as the only way to operate in some areas (for instance technical support) for cost and volume reasons. It has become something of a rule: 99% of the volume does not pass through professionals. This is simply wrong: high volume does not mean we don’t need humans, only that they need a different role.

- 2/ Why is it wrong? It is fundamentally wrong because we adapted the use case to the technology instead of adapting the technology to the use cases. For instance, we introduced the notion of “good enough” quality, which means cheap, bad translation, and we asked humans to correct machines. Today we see new approaches such as Adaptive MT that tackle the problem differently, but so far we have treated localization as technologists and not as users.

- 3/ If we go back to the users and listen to what they need, no user says they want “good enough” translation. Either they want high-quality translation (human or machine), or they need something else; nobody can claim that what they fundamentally want is “good enough” quality. There are also many use cases where users did not ask for MT: it is simply the only thing available, and many other solutions would satisfy them more.

- 4/ What is different with neural technologies is that they are not just algorithms: they bring a set of high-level features (generalization, understanding, and global overview) that make the technology stand out.

- 5/ So we need to be careful not to jump on the technology as just the next “big thing” to replace our current engines, or we will end up building incredible devices that simply reproduce the same errors and limitations of the existing approach.

- 6/ So where is the real innovation? It will come when we introduce the technology as a way to augment translators and, more generally, users in need of cross-lingual tools. There are several use cases:
  1) Massive translation monitoring: as in many other industries where automation was introduced to process more volume, we need to bring humans back into the loop of massive translation.
  2) Translation checker: a tool able to correct a given translation exactly like a grammar checker, so that the machine provides feedback on human translation. This is the total opposite of having humans correct machine output.
  3) Multilingual authoring: with this new technology we can now build wonderful tools to assist humans in producing multilingual content.
     - For instance, we can imagine writing a text in a foreign language with the ability to fall back on one’s native language when terminology is missing. Suppose I want to write in Korean and I am missing some terminology: I should be able to write in French the one word whose equivalent I am missing, and the NN will adapt and propose choices. This is called “multilingual authoring”. Note that today, if you want to write in Korean, the only thing you can do is translate first, then try to understand what was proposed and adapt it with your own words. The machine is not helping multilingual authoring; it is constraining the user to structures or words they do not know.
     - Or we can imagine an NN translation model adapting to each single sentence and providing not only translation but also translation retrieval, etc.