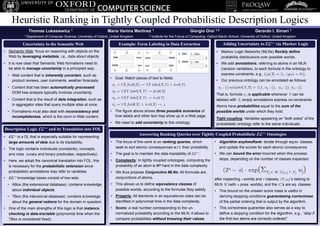

Heuristic Ranking in Tightly Coupled Probabilistic Description Logics

Thomas Lukasiewicz¹, Maria Vanina Martinez¹, Giorgio Orsi¹,², Gerardo I. Simari¹
¹ Department of Computer Science, University of Oxford, United Kingdom
² Institute for the Future of Computing, Oxford Martin School, University of Oxford, United Kingdom

Uncertainty in the Semantic Web

• Semantic Web: focus on reasoning with objects on the Web by leveraging metadata, i.e., data about objects.
• It is now clear that Semantic Web formalisms need to be able to manage uncertainty in a principled way:
  − Web content that is inherently uncertain, such as product reviews, user comments, weather forecasts.
  − Content that has been automatically processed: DOM tree analysis typically involves uncertainty.
  − Content that is the result of data integration, such as in aggregator sites that query multiple sites at once.
  − Formalisms must also deal with inconsistency and incompleteness, which are the norm in Web content.

Description Logic EL++ and its Translation into FOL

• EL++ is a DL that is especially suitable for representing large amounts of data due to its tractability.
• The logic contains individuals (constants), concepts, and roles (unary and binary predicates, respectively).
• Here, we adopt the canonical translation into FOL; this is necessary for the probabilistic extension since probabilistic annotations may refer to variables.
• EL++ knowledge bases consist of two sets:
  − ABox (the extensional database): contains knowledge about individual objects.
  − TBox (the intensional database): contains knowledge about the general notions for the domain in question.
• One of the main strengths of this logic is that instance checking is data-tractable (polynomial time when the TBox is considered fixed).

Example: Form Labeling in Data Extraction

• Goal: Match pieces of text to fields:
  (1) $\forall X\; \mathit{field}(X) \rightarrow \exists Y\; \mathit{label}(X,Y) \wedge \mathit{text}(Y)$
  (2) $\forall X \forall Y\; \mathit{label}(X,Y) \rightarrow \mathit{field}(X)$
  (3) $\forall X \forall Y\; \mathit{label}(X,Y) \rightarrow \mathit{text}(Y)$
  (4) $\forall X\; \mathit{field}(X) \wedge \mathit{text}(X) \rightarrow \bot$
• The figure above shows three possible scenarios of how labels and other text may show up in a Web page.
• We need to add uncertainty to this ontology.

Adding Uncertainty to EL++ via Markov Logic

• Markov Logic Networks (MLNs) flexibly define probability distributions over possible worlds.
• We add annotations, referring to atoms in an MLN (random variables), to each formula in the ontology to express constraints, e.g., {p(X) = 1, q(a) = 0}.
• Our previous ontology can be annotated as follows:
  (1) : {canLabel(Y,X) = 1}, (2) : {}, (3) : {}, (4) : {}
  That is, formula (1) is applicable whenever Y can be labeled with X; empty annotations express no constraints.
• Atoms have probabilities equal to the sum of the probabilities of the possible worlds in which they hold.
• Tight coupling: Variables appearing on "both sides" of the probabilistic ontology refer to the same individuals.

Answering Ranking Queries over Tightly Coupled Probabilistic EL++ Ontologies

• The focus of this work is on ranking queries, which seek to sort atomic consequences w.r.t. their probability.
• The goal is to maintain the data-tractability of EL++.
• Complexity: In tightly coupled ontologies, computing the probability of an atom is #P-hard in the data complexity.
• We thus propose Conjunctive MLNs: All formulas are conjunctions of atoms.
• This allows us to define equivalence classes of possible worlds, according to the formulas they satisfy.
• Property: All elements in an equivalence class can be identified in polynomial time in the data complexity.
• Score: a real number corresponding to the unnormalized probability according to the MLN; it allows probabilities to be compared without knowing their values.
• Algorithm anytimeRank: iterate through the equivalence classes and update the scores for each atomic consequence.
• We can bound the error incurred when this process stops, depending on the number of classes inspected:

  $$(2^n - s) \cdot \exp\Big(\sum_{F_j \in M,\; C_{t+1} \models F_j} w_j\Big)$$

  after inspecting s worlds and t classes; the $(F_i, w_i)$ belong to the MLN M (with n possible worlds), and the $C_i$ are the equivalence classes.
• This bound on the unseen score mass is useful in deriving stopping conditions guaranteeing correctness of the partial ordering that is output by the algorithm.
• This correctness guarantee also serves as a way to define a stopping condition for the algorithm, e.g., "stop if the first two atoms are correctly ordered".
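The ranking idea above (equivalence classes of worlds, unnormalized scores, and an anytime bound on the unseen score mass) can be illustrated with a small Python sketch. This is a toy under stated assumptions, not the authors' anytimeRank: the atoms, formulas, and weights are hypothetical, worlds are enumerated by brute force rather than identified in polynomial time, and the sketch ranks the MLN's own ground atoms instead of ontology consequences. The names `anytime_rank` and `top_two_certified` are illustrative only. The bound is computed as the number of uninspected worlds times the score of the next (heaviest remaining) class.

```python
import math
from itertools import product

# Hypothetical toy conjunctive MLN: every formula is a conjunction of ground
# atoms (given as a set) paired with a weight. Not taken from the poster.
ATOMS = ["a", "b", "c"]
FORMULAS = [({"a"}, 1.5), ({"a", "b"}, 0.7), ({"c"}, -0.4)]


def satisfied(world):
    """Indices of the formulas whose conjuncts are all true in `world`."""
    return frozenset(i for i, (conj, _) in enumerate(FORMULAS) if conj <= world)


def class_weight(signature):
    """Sum of the weights of the formulas satisfied by a class of worlds."""
    return sum(FORMULAS[i][1] for i in signature)


def equivalence_classes():
    """Group worlds by the set of formulas they satisfy, heaviest class first.

    Brute-force enumeration, exponential in the number of atoms (toy only).
    """
    classes = {}
    for bits in product([False, True], repeat=len(ATOMS)):
        world = frozenset(a for a, bit in zip(ATOMS, bits) if bit)
        classes.setdefault(satisfied(world), []).append(world)
    return sorted(classes.items(), key=lambda kv: class_weight(kv[0]), reverse=True)


def anytime_rank(max_classes):
    """Inspect the `max_classes` heaviest classes; return (ranking, scores, bound)."""
    classes = equivalence_classes()
    total_worlds = 2 ** len(ATOMS)
    scores = {a: 0.0 for a in ATOMS}
    seen = 0
    for signature, worlds in classes[:max_classes]:
        world_score = math.exp(class_weight(signature))  # unnormalized probability
        for world in worlds:
            for atom in world:
                scores[atom] += world_score
        seen += len(worlds)
    if max_classes < len(classes):
        # Every uninspected world scores at most as much as the next class.
        next_signature = classes[max_classes][0]
        bound = (total_worlds - seen) * math.exp(class_weight(next_signature))
    else:
        bound = 0.0
    ranking = sorted(ATOMS, key=lambda a: scores[a], reverse=True)
    return ranking, scores, bound


def top_two_certified(ranking, scores, bound):
    """True if the unseen mass cannot swap the two top-ranked atoms."""
    return scores[ranking[0]] - scores[ranking[1]] > bound
```

Calling `anytime_rank(k)` for increasing `k` yields scores that only grow and a bound that only shrinks; `top_two_certified(*anytime_rank(k))` is one way to phrase the "stop if the first two atoms are correctly ordered" condition, since the lower-ranked atom can gain at most the bound.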