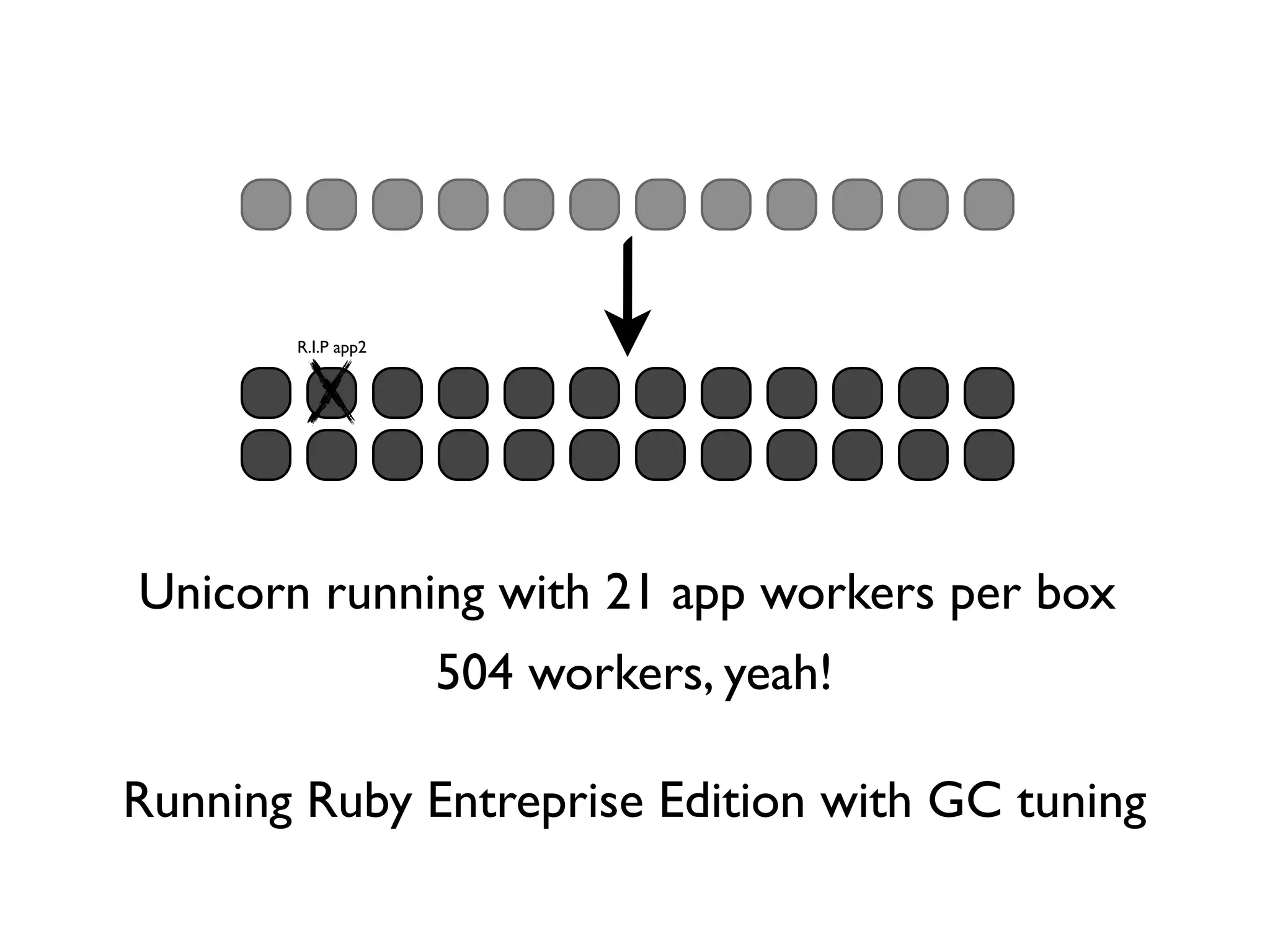

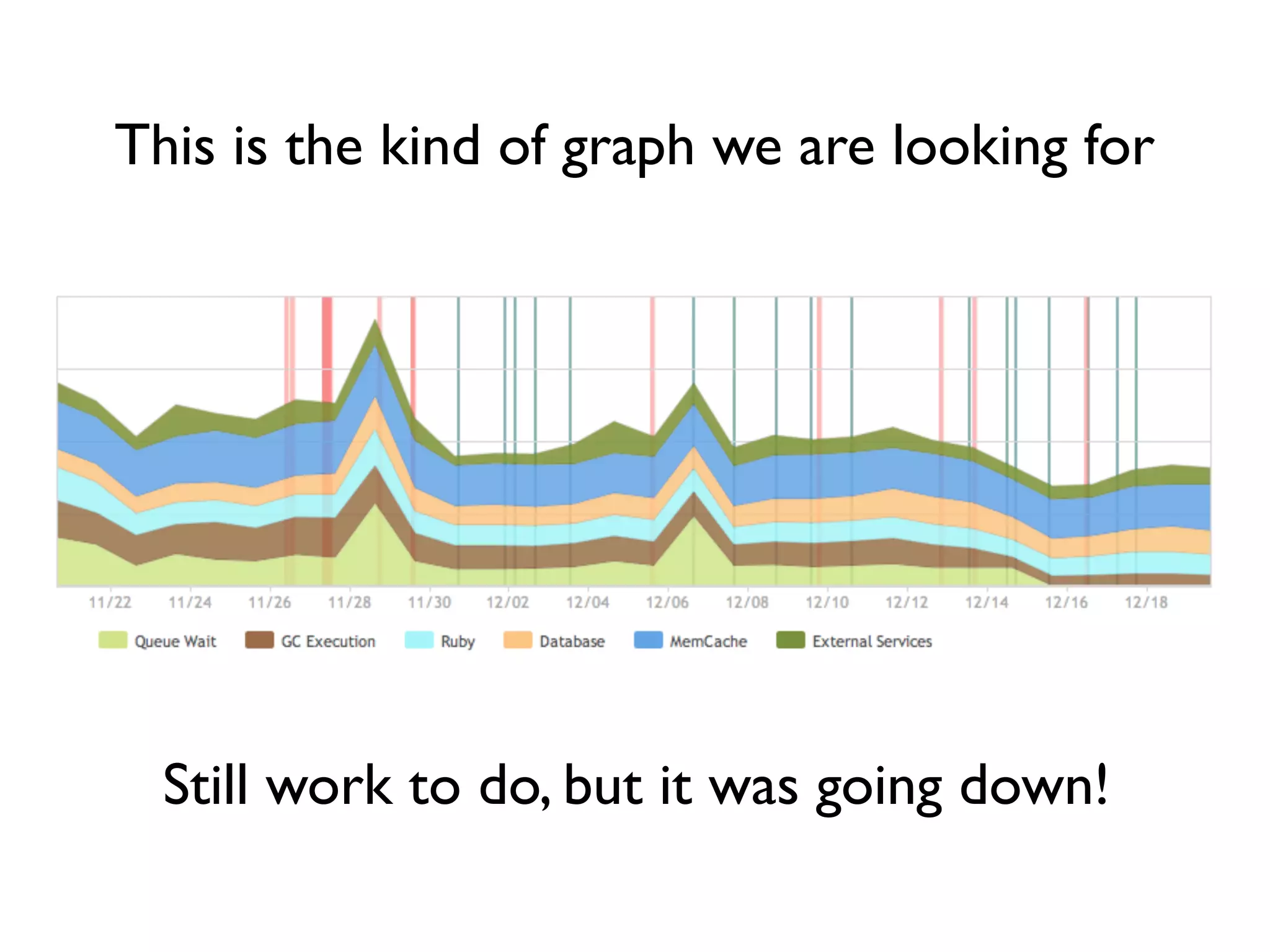

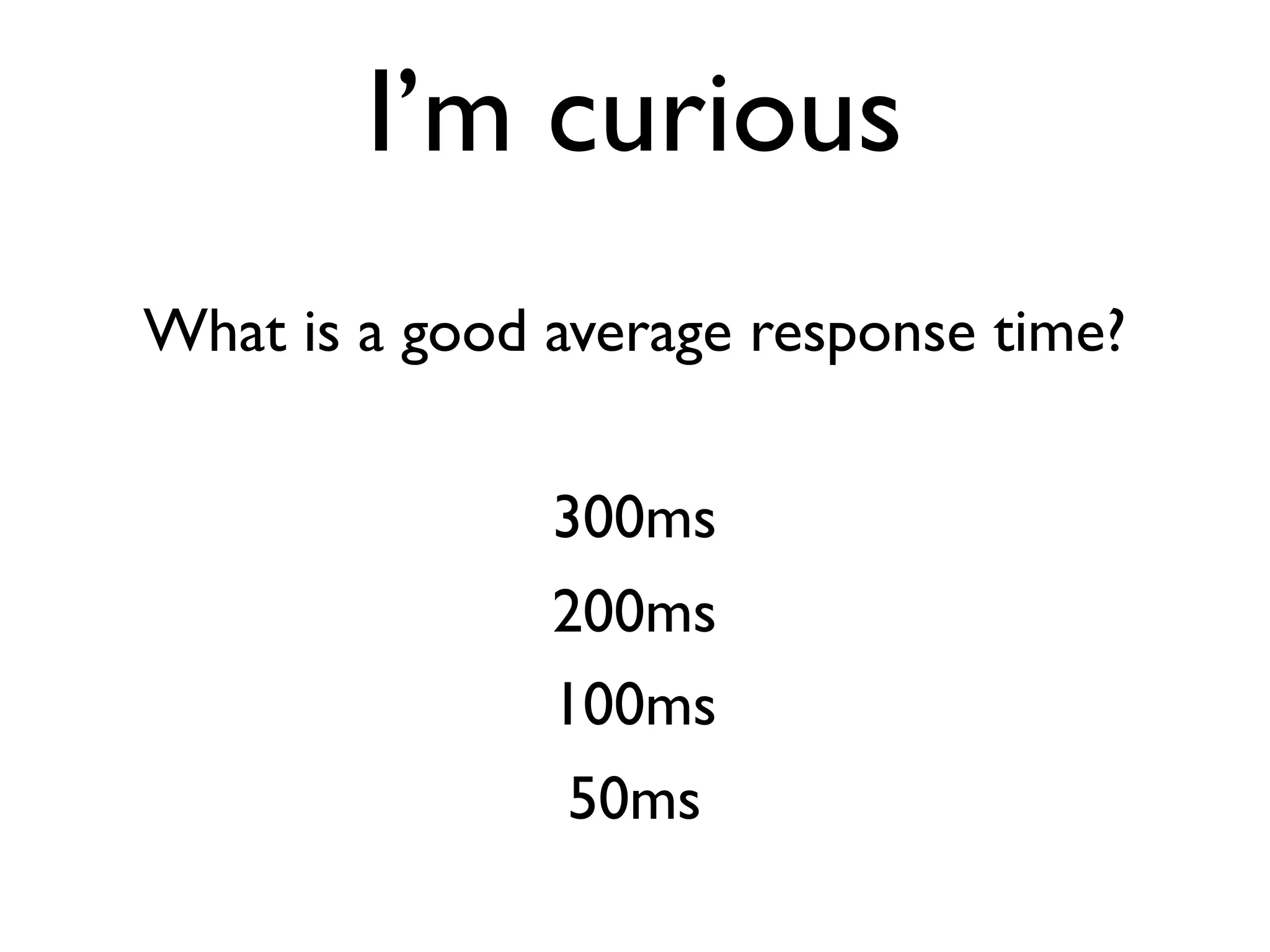

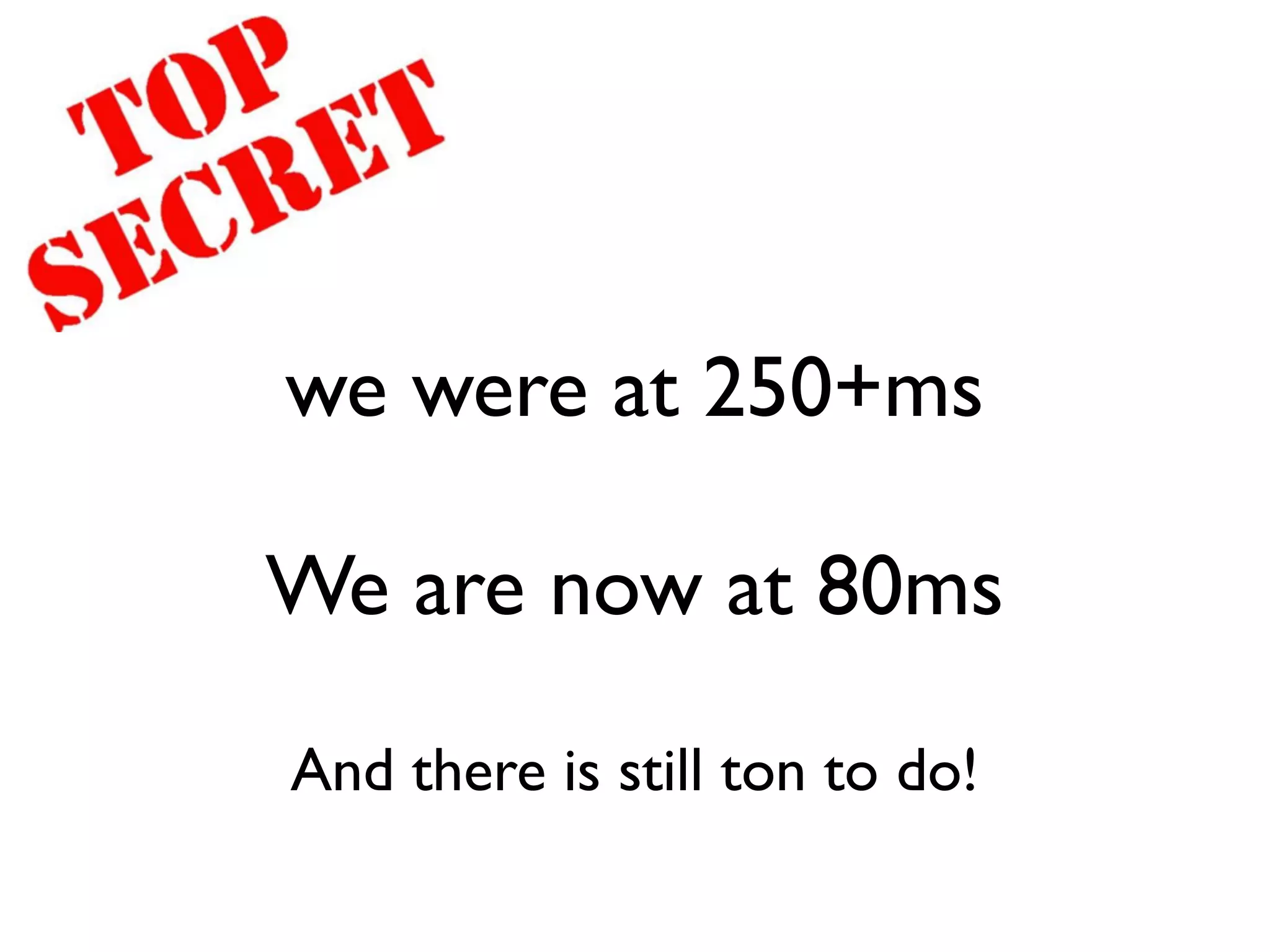

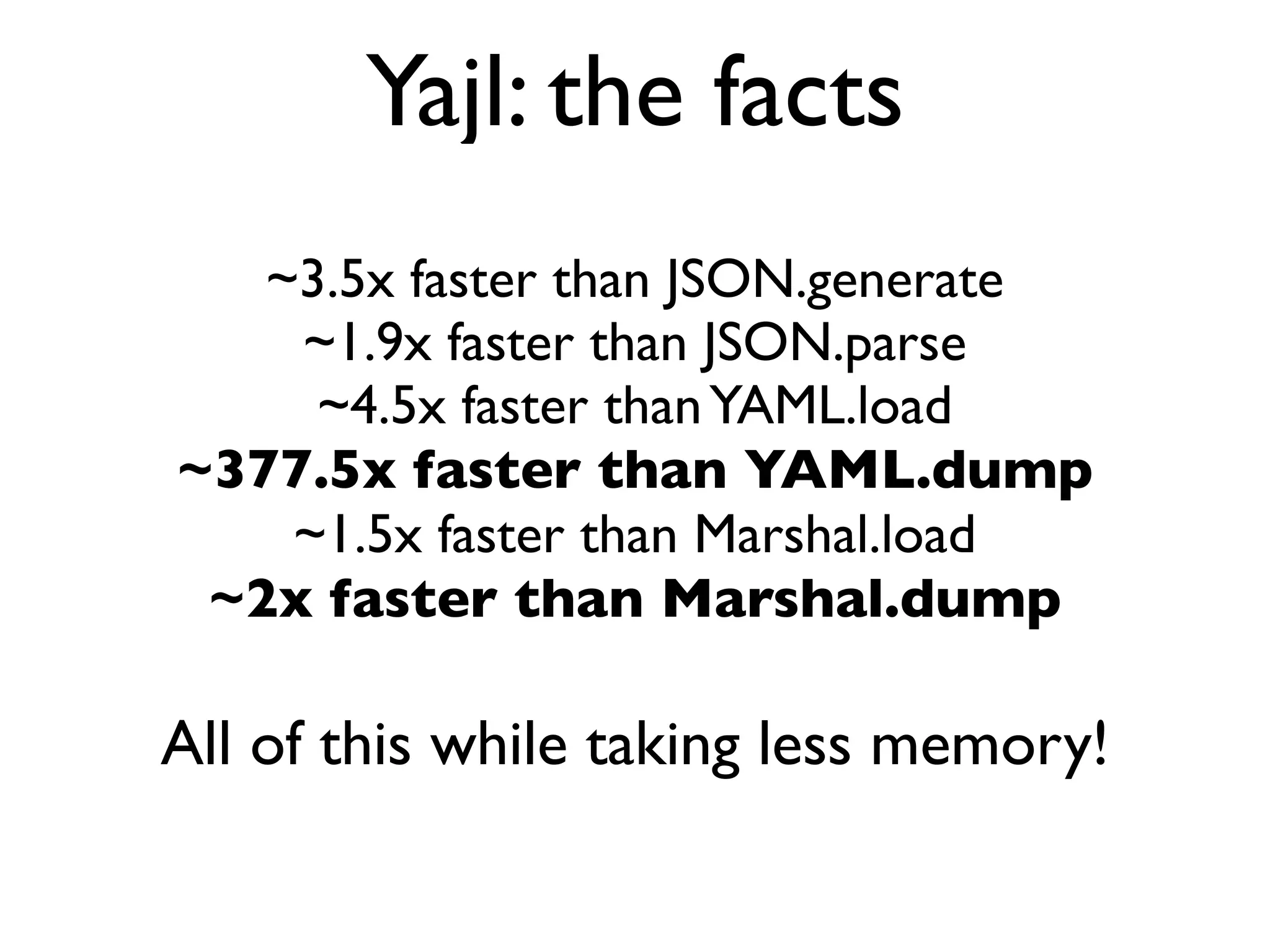

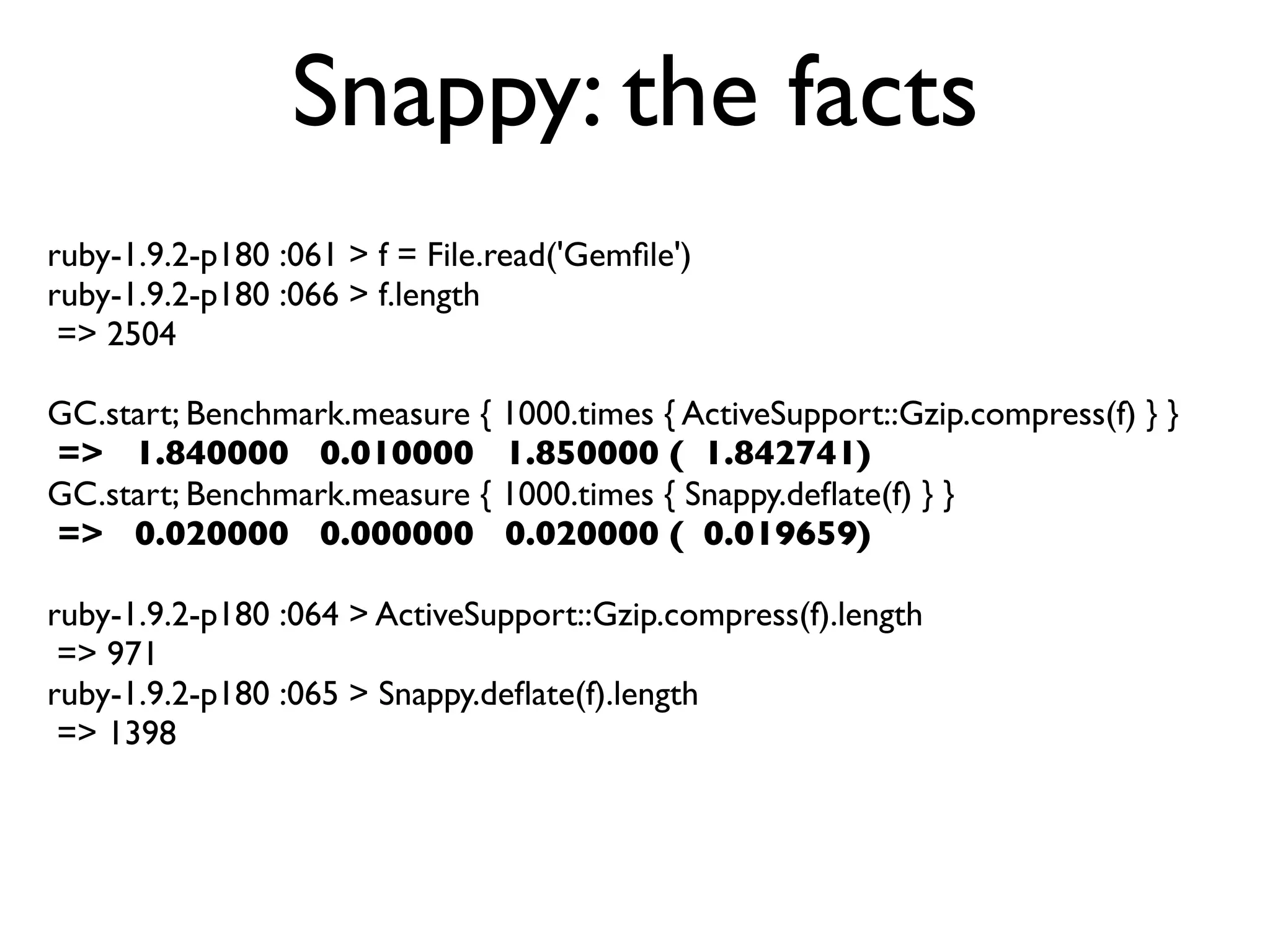

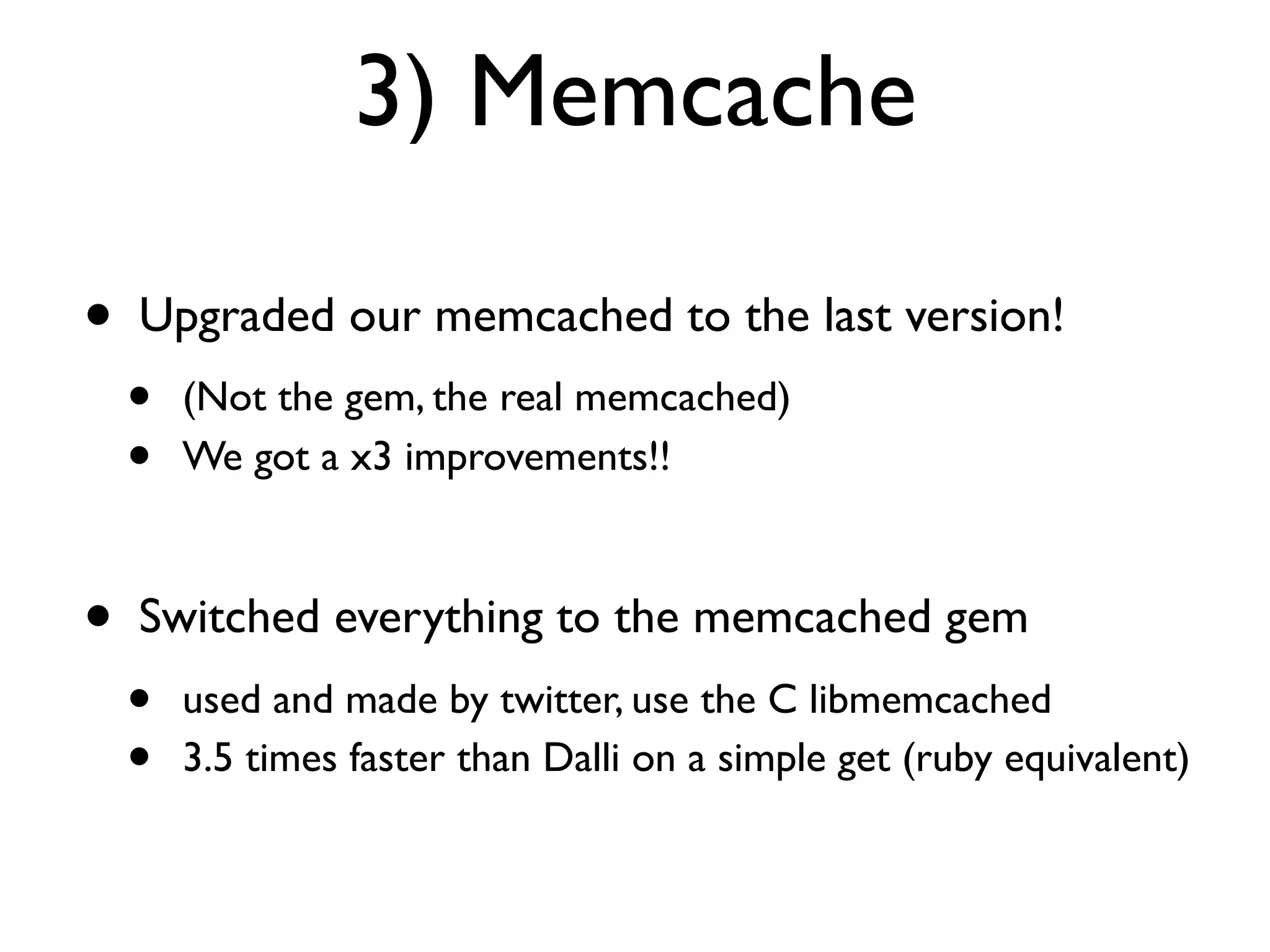

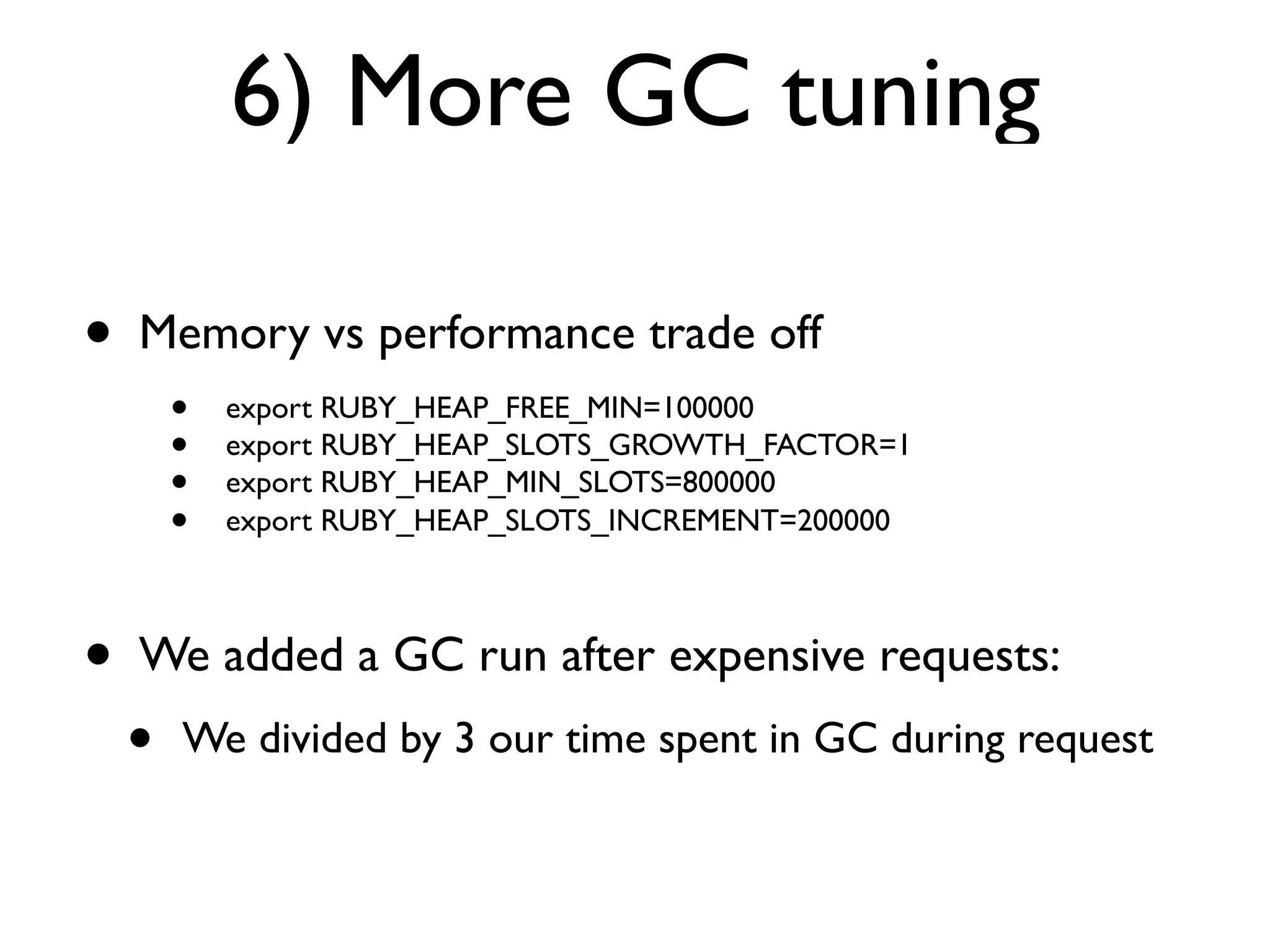

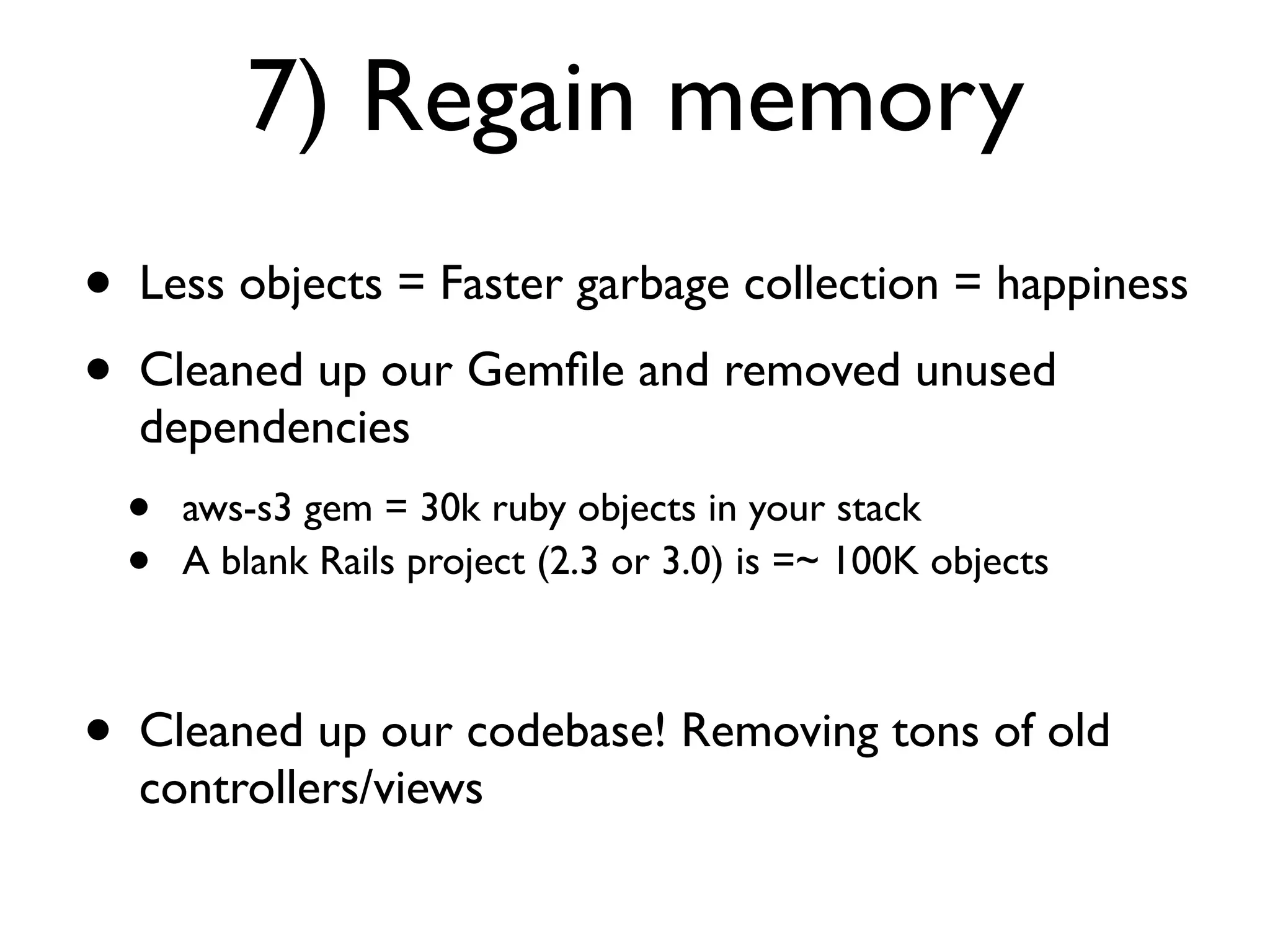

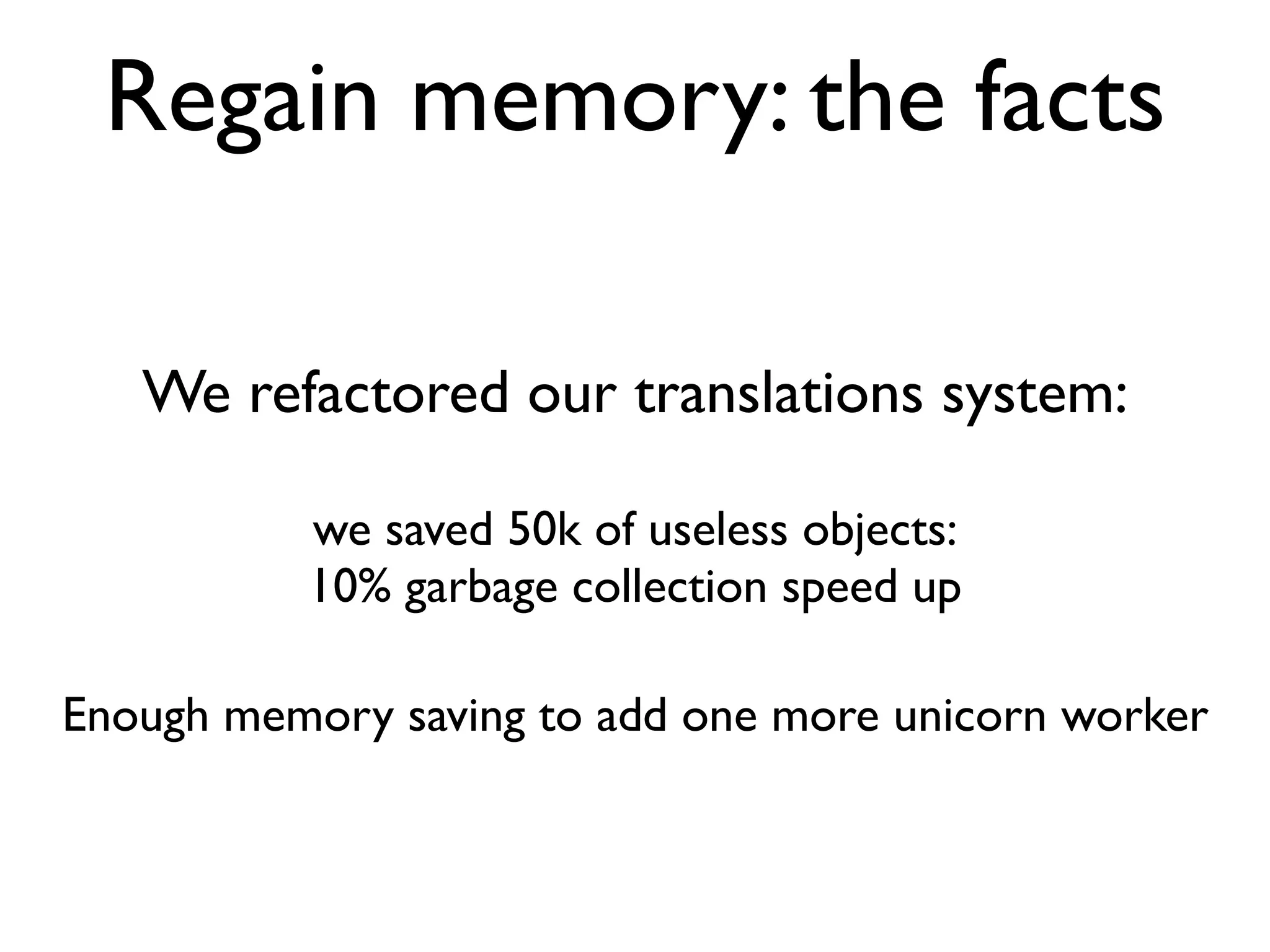

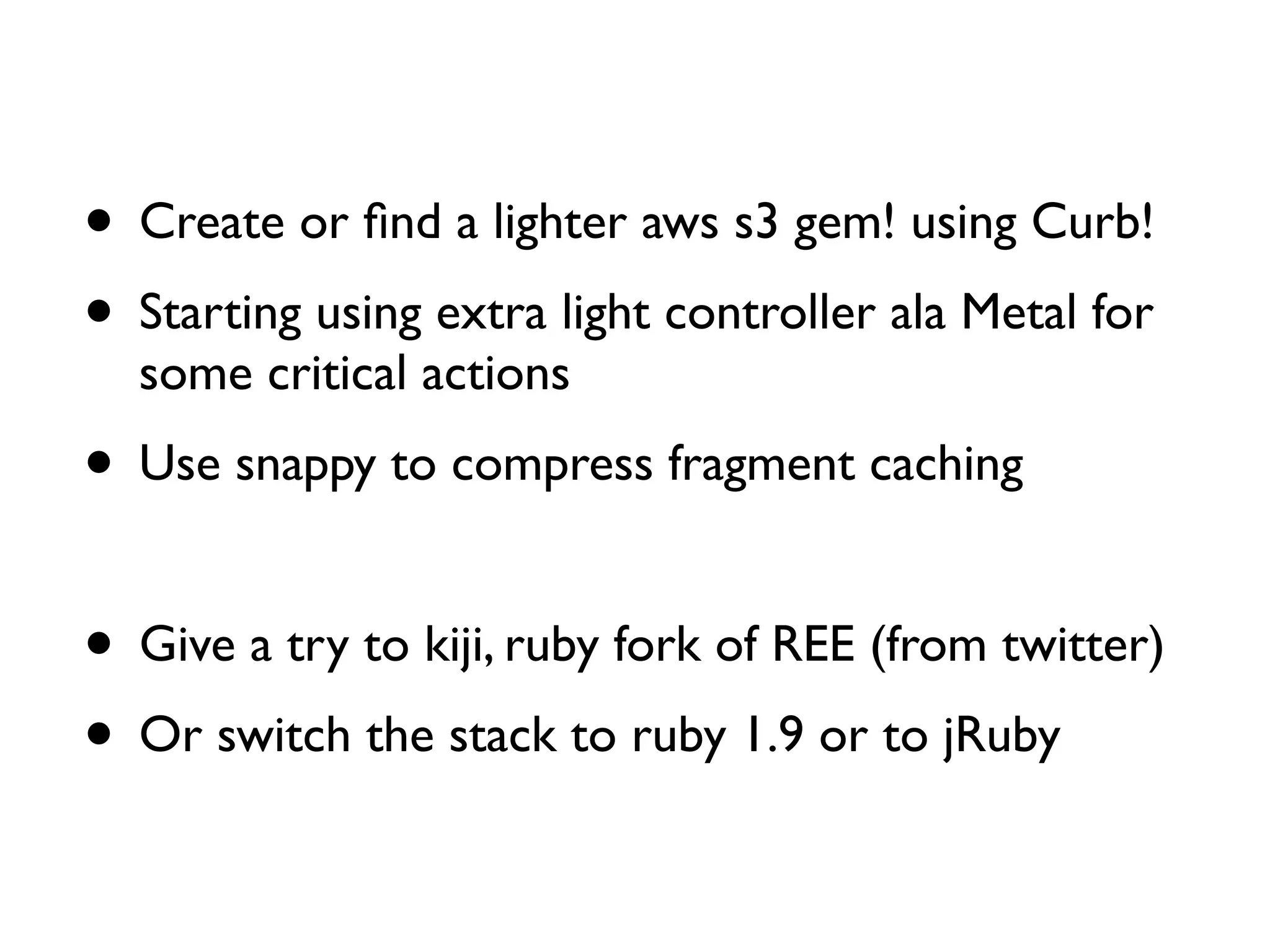

Guillaume Luccisano describes how Justin.tv improved the performance of its Rails stack. He reports that Rails 3 is slower than Rails 2 and identifies three main problems: slow SQL queries, external dependencies, and garbage collection. The team addressed these through SQL optimization, C libraries for faster processing, Memcached upgrades, and garbage collection tuning, which together reduced response times from 250ms to 80ms. The presentation also stresses the importance of monitoring tools and outlines further optimizations.

![Clean up your before_filters. We created a speed_up! method to skip all before_filters on critical actions: speed_up! :only => ['critical', 'action']](https://image.slidesharecdn.com/railsperformanceatjustintvguillaumeluccisano-110701014143-phpapp01/75/Rails-performance-at-Justin-tv-Guillaume-Luccisano-54-2048.jpg)
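
The slide shows only how `speed_up!` is called, not how it is implemented. The following is a minimal plain-Ruby sketch of the idea, assuming a simplified filter chain; it is not Justin.tv's code and does not use Rails. `MiniController`, `ChannelsController`, `fast_actions`, `process`, and the filter names are all hypothetical and exist only for illustration.

```ruby
# Plain-Ruby stand-in for a controller base class; no Rails required.
# Everything except the speed_up! call shape is an illustrative assumption.
class MiniController
  class << self
    # Filters registered on this class, in declaration order.
    def filters
      @filters ||= []
    end

    # Actions for which every before_filter is skipped.
    def fast_actions
      @fast_actions ||= []
    end

    def before_filter(method_name)
      filters << method_name
    end

    # Mirrors the slide's call shape: speed_up! :only => ['critical', 'action']
    def speed_up!(options = {})
      fast_actions.concat(Array(options[:only]).map(&:to_s))
    end
  end

  attr_reader :log

  def initialize
    @log = []
  end

  # Run the filters (unless the action is marked fast), then the action.
  def process(action)
    unless self.class.fast_actions.include?(action.to_s)
      self.class.filters.each { |f| send(f) }
    end
    send(action)
    log
  end
end

# Hypothetical controller with two filters and one critical action.
class ChannelsController < MiniController
  before_filter :load_session
  before_filter :check_locale
  speed_up! :only => ['critical']

  def critical; log << :critical; end
  def regular;  log << :regular;  end

  private

  def load_session; log << :load_session; end
  def check_locale; log << :check_locale; end
end
```

Here `ChannelsController.new.process(:critical)` runs only the action, while `process(:regular)` runs both filters first. In a real Rails 2 or 3 application, the built-in `skip_before_filter` with an `:only` option skips a named filter for selected actions; a `speed_up!` helper would presumably wrap something similar for the whole chain, though the slide does not say.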