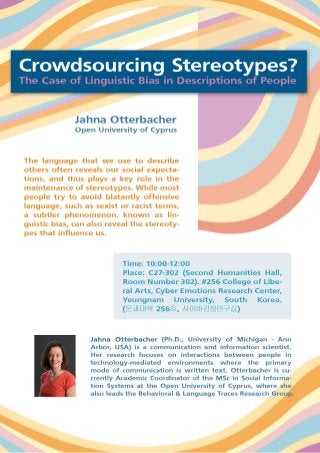

YU CERC's Brown Bag Seminar: Crowdsourcing Stereotypes? The Case of Linguistic Bias in Descriptions of People (Dr. Jahna Otterbacher)

Speaker: Dr. Jahna Otterbacher, Open University of Cyprus

The guest lecture will be held on Friday, 17 April 2015, 10:00-11:30, in C27-302 (Second Humanities Hall, Room 302). The CERC office is located in Room 256, College of Liberal Arts (Cyber Emotions Research Center), Yeungnam University, 280 Daehak-Ro, Gyeongsan, Gyeongbuk 712-749, Republic of Korea.

The language that we use to describe others often reveals our social expectations, and thus plays a key role in the maintenance of stereotypes. While most people try to avoid blatantly offensive language, such as sexist or racist terms, a subtler phenomenon known as linguistic bias can also reveal the stereotypes that influence us. Linguistic bias is a systematic asymmetry in the way we use language, as a function of the social group of the person being described.

Suppose someone shows me a picture of a man helping an older woman cross a busy street. Theory predicts that if the man is from my in-group (i.e., is congruent with stereotypes that I hold), I will describe him with more abstract, interpretive language (e.g., “He is helpful.”). In contrast, if the man is from my out-group, I am more likely to describe him with concrete language that describes the specific scenario (e.g., “He helped the woman cross the street.”). Because message recipients tend to interpret abstract descriptions as enduring qualities of the target person, and concrete descriptions as transient, such linguistic biases are believed to play a key role in the maintenance of stereotypes.

I consider two social computing settings in which participants describe other people: (1) a game in which players are shown an image and must guess which descriptive labels their partner will assign, and (2) collaboratively authored biographies of famous actors on the Internet Movie Database (IMDb). In both studies, I found evidence of linguistic biases.
In the first study, images of women were more likely than images of men to be described using subjective adjectives (e.g., good, bad, beautiful, ugly). In the second study, both gender and race were correlated with participants’ language patterns, with white male actors being described more abstractly and subjectively than any other social group.

Because linguistic biases are widespread in social technologies and stand to reinforce stereotypes, further work should consider both the technical features and the social cues built into sharing platforms that might influence the extent to which linguistic biases are observed. I will discuss these directions for future work, along with some methodological considerations.

The talk is based on the following publications, which can be downloaded from http://www.jahna-otterbacher.net/publications/:

Otterbacher, J. 2015. Crowdsourcing Stereotypes: Linguistic Bias in Metadata Gene